The Supreme Court tossed out a billion-dollar verdict against an internet service provider (ISP) on Wednesday, in a closely watched case that could have severely damaged many Americans’ access to the internet if it had gone the other way.

Tech

This tree search framework hits 98.7% on documents where vector search fails

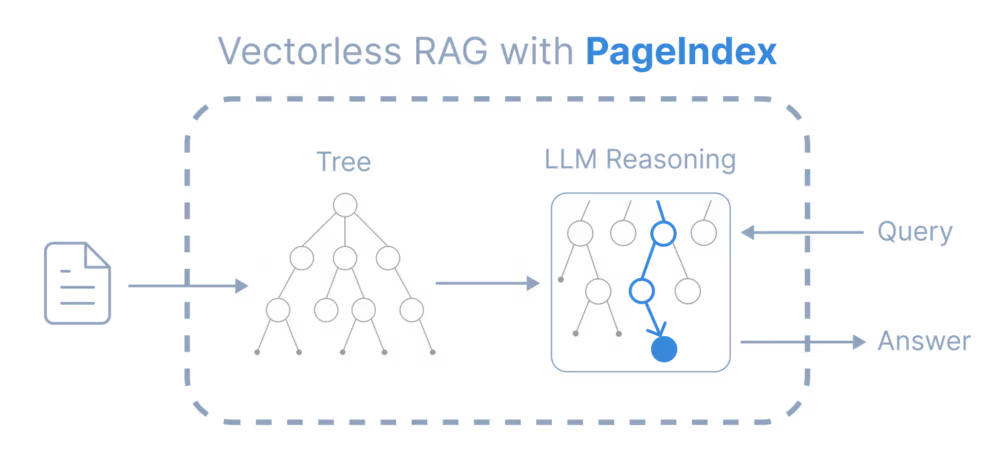

A new open-source framework called PageIndex solves one of the old problems of retrieval-augmented generation (RAG): handling very long documents.

The classic RAG workflow (chunk documents, calculate embeddings, store them in a vector database, and retrieve the top matches based on semantic similarity) works well for basic tasks such as Q&A over small documents.

But as enterprises try to move RAG into high-stakes workflows such as auditing financial statements, analyzing legal contracts, and navigating pharmaceutical protocols, they’re hitting an accuracy barrier that chunk optimization can’t solve.

PageIndex abandons the standard “chunk-and-embed” method entirely and treats document retrieval not as a search problem, but as a navigation problem.

AlphaGo for documents

PageIndex addresses these limitations by borrowing a concept from game-playing AI rather than search engines: tree search.

When humans need to find specific information in a dense textbook or a long annual report, they do not scan every paragraph linearly. They consult the table of contents to identify the relevant chapter, then the section, and finally the specific page. PageIndex forces the LLM to replicate this human behavior.

Instead of pre-calculating vectors, the framework builds a “Global Index” of the document’s structure, creating a tree where nodes represent chapters, sections, and subsections. When a query arrives, the LLM performs a tree search, explicitly classifying each node as relevant or irrelevant based on the full context of the user’s request.
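The navigation idea can be sketched in a few lines of Python. This is a minimal illustration, not PageIndex's actual code: the `Node` class, the `tree_search` function, and the sample report are all hypothetical, and `keyword_judge` is a crude word-overlap placeholder for the relevance decision that the framework delegates to an LLM.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, List, Set


@dataclass
class Node:
    """One entry in the document's table-of-contents tree."""
    title: str
    summary: str = ""
    children: List["Node"] = field(default_factory=list)


def _words(text: str) -> Set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))


def keyword_judge(query: str, node: Node) -> bool:
    """Placeholder for the LLM's relevance call: any shared word counts."""
    return bool(_words(query) & _words(f"{node.title} {node.summary}"))


def tree_search(node: Node, query: str,
                is_relevant: Callable[[str, Node], bool]) -> List[Node]:
    """Follow only the children judged relevant and return the leaves reached."""
    if not node.children:
        return [node]
    hits: List[Node] = []
    for child in node.children:
        if is_relevant(query, child):
            hits.extend(tree_search(child, query, is_relevant))
    return hits


# Hypothetical index for an annual report.
report = Node("Annual Report", children=[
    Node("Financial Statements", "balance sheet, income, ebitda", [
        Node("Income Statement", "revenue and net income"),
        Node("Non-GAAP Measures", "ebitda definition and adjustments"),
    ]),
    Node("Risk Factors", "market and legal risks"),
])

hits = tree_search(report, "ebitda adjustments", keyword_judge)
print([n.title for n in hits])  # prints ['Non-GAAP Measures']
```

Because the judge is passed in as a function, swapping the keyword placeholder for a model call would not change the traversal itself. The "Risk Factors" branch is never opened, which is the point of navigating rather than scanning.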

“In computer science terms, a table of contents is a tree-structured representation of a document, and navigating it corresponds to tree search,” said Mingtian Zhang, co-founder of PageIndex. “PageIndex applies the same core idea, tree search, to document retrieval, and can be thought of as an AlphaGo-style system for retrieval rather than for games.”

This shifts the architectural paradigm from passive retrieval, where the system simply fetches matching text, to active navigation, where an agentic model decides where to look.

The limits of semantic similarity

There is a fundamental flaw in how traditional RAG handles complex data. Vector retrieval assumes that the text most semantically similar to a user’s query is also the most relevant. In professional domains, this assumption frequently breaks down.

Mingtian Zhang, co-founder of PageIndex, points to financial reporting as a prime example of this failure mode. If a financial analyst asks an AI about “EBITDA” (earnings before interest, taxes, depreciation, and amortization), a standard vector database will retrieve every chunk where that acronym or a similar term appears.

“Multiple sections may mention EBITDA with similar wording, yet only one section defines the precise calculation, adjustments, or reporting scope relevant to the question,” Zhang told VentureBeat. “A similarity-based retriever struggles to distinguish these cases because the semantic signals are nearly indistinguishable.”

This is the “intent vs. content” gap. The user does not want to find the word “EBITDA”; they want to understand the “logic” behind it for that specific quarter.
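A toy retriever makes the tie concrete. The sketch below uses bag-of-words cosine similarity as a stand-in for a learned embedding model (an assumption made so the example runs without dependencies), and the three section texts are invented for illustration.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase word counts, standing in for a real model."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


# Hypothetical report sections; only "definition" explains the calculation.
sections = {
    "highlights": "adjusted ebitda rose in the quarter",
    "definition": "adjusted ebitda excludes certain restructuring costs",
    "outlook": "adjusted ebitda guidance for next quarter",
}

query = "how is adjusted ebitda calculated"
scores = {name: cosine(embed(query), embed(text))
          for name, text in sections.items()}

# Every section shares exactly the words "adjusted" and "ebitda" with the
# query, so all three receive the same score and the ranking is arbitrary.
for name, score in scores.items():
    print(f"{name}: {score:.3f}")
```

A learned embedding would separate these sections somewhat better than word counts do, but the underlying problem is the same: the score measures overlap with the query's wording, not whether the section answers it.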

Furthermore, traditional embeddings strip the query of its context. Because embedding models have strict input-length limits, the retrieval system usually only sees the specific question being asked, ignoring the previous turns of the conversation. This detaches the retrieval step from the user’s reasoning process. The system matches documents against a short, decontextualized query rather than the full history of the problem the user is trying to solve.

Solving the multi-hop reasoning problem

The real-world impact of this structural approach is most visible in “multi-hop” queries that require the AI to follow a trail of breadcrumbs across different parts of a document.

In a recent benchmark test known as FinanceBench, a system built on PageIndex called “Mafin 2.5” achieved a state-of-the-art accuracy score of 98.7%. The performance gap between this approach and vector-based systems becomes clear when analyzing how they handle internal references.

Zhang offers the example of a query regarding the total value of deferred assets in a Federal Reserve annual report. The main section of the report describes the “change” in value but does not list the total. However, the text contains a footnote: “See Appendix G of this report … for more detailed information.”

A vector-based system typically fails here. The text in Appendix G looks nothing like the user’s query about deferred assets; it is likely just a table of numbers. Because there is no semantic match, the vector database ignores it.

The reasoning-based retriever, however, reads the cue in the main text, follows the structural link to Appendix G, locates the correct table, and returns the accurate figure.
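The hop from the main text to the appendix can be sketched as follows. Here a regular expression stands in for the model reading the cue, and the section texts are invented (only the "See Appendix G of this report" wording comes from Zhang's example). The function name and the flat `sections` mapping are hypothetical.

```python
import re
from typing import Dict, List

# Hypothetical flattened index: section title -> section text.
sections: Dict[str, str] = {
    "Deferred Assets": (
        "The change in value was modest. "
        "See Appendix G of this report for more detailed information."
    ),
    "Appendix G": "Table G.1: total deferred assets by year.",
    "Appendix H": "Table H.1: staffing levels.",
}

# Matches cues such as "See Appendix G" or "see Section 4.1".
REFERENCE = re.compile(r"\b[Ss]ee (Appendix [A-Z]|Section \d+(?:\.\d+)*)")


def follow_references(start: str, max_hops: int = 3) -> List[str]:
    """Return the sections visited by following 'See ...' cues from `start`."""
    path = [start]
    current = start
    for _ in range(max_hops):
        match = REFERENCE.search(sections[current])
        if not match:
            break
        target = match.group(1)
        # Stop on unknown targets and on cycles.
        if target not in sections or target in path:
            break
        path.append(target)
        current = target
    return path


print(follow_references("Deferred Assets"))
# prints ['Deferred Assets', 'Appendix G']
```

The returned path doubles as an audit trail: it records which section led to which, something a top-k similarity lookup does not provide.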

The latency trade-off and infrastructure shift

For enterprise architects, the immediate concern with an LLM-driven search process is latency. Vector lookups occur in milliseconds; having an LLM “read” a table of contents implies a significantly slower user experience.

However, Zhang explains that the perceived latency for the end-user may be negligible due to how the retrieval is integrated into the generation process. In a classic RAG setup, retrieval is a blocking step: the system must search the database before it can begin generating an answer. With PageIndex, retrieval happens inline, during the model’s reasoning process.

“The system can start streaming immediately, and retrieve as it generates,” Zhang said. “That means PageIndex does not add an extra ‘retrieval gate’ before the first token, and Time to First Token (TTFT) is comparable to a normal LLM call.”

This architectural shift also simplifies the data infrastructure. By removing reliance on embeddings, enterprises no longer need to maintain a dedicated vector database. The tree-structured index is lightweight enough to sit in a traditional relational database like PostgreSQL.
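One way such an index could live in a relational database is an adjacency-list table queried with a recursive common table expression. The sketch below is an assumption about layout, not PageIndex's schema: the table name, columns, and page ranges are hypothetical, and Python's built-in `sqlite3` stands in for PostgreSQL so the example runs without a server.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE nodes (
        id INTEGER PRIMARY KEY,
        parent_id INTEGER REFERENCES nodes(id),
        title TEXT NOT NULL,
        start_page INTEGER,
        end_page INTEGER
    )
    """
)
conn.executemany(
    "INSERT INTO nodes VALUES (?, ?, ?, ?, ?)",
    [
        (1, None, "Annual Report", 1, 120),
        (2, 1, "Financial Statements", 40, 90),
        (3, 2, "Notes to the Statements", 60, 90),
        (4, 1, "Appendix G", 110, 120),
    ],
)

# Recursive CTE: fetch a node and everything beneath it.
SUBTREE = """
WITH RECURSIVE subtree(id, title, depth) AS (
    SELECT id, title, 0 FROM nodes WHERE id = ?
    UNION ALL
    SELECT n.id, n.title, s.depth + 1
    FROM nodes n JOIN subtree s ON n.parent_id = s.id
)
SELECT title, depth FROM subtree ORDER BY depth, id
"""
rows = conn.execute(SUBTREE, (2,)).fetchall()
print(rows)
# prints [('Financial Statements', 0), ('Notes to the Statements', 1)]
```

PostgreSQL also supports `WITH RECURSIVE`, so the query shape carries over, though the parameter placeholder style depends on the driver.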

This addresses a growing pain point in LLM systems with retrieval components: the complexity of keeping vector stores in sync with living documents. PageIndex separates structure indexing from text extraction. If a contract is amended or a policy updated, the system can handle small edits by re-indexing only the affected subtree rather than reprocessing the entire document corpus.
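The article does not say how PageIndex detects which subtree changed. One common technique, shown here purely as an assumption, is to hash each node together with its children's hashes, then compare the old and new trees. The `Section` class and the contract text are hypothetical.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Section:
    node_id: str
    text: str
    children: List["Section"] = field(default_factory=list)


def subtree_hashes(node: Section, out: Dict[str, str]) -> str:
    """Hash each node from its own text plus its children's hashes."""
    h = hashlib.sha256(node.text.encode("utf-8"))
    for child in node.children:
        h.update(subtree_hashes(child, out).encode("ascii"))
    out[node.node_id] = h.hexdigest()
    return out[node.node_id]


def changed_nodes(old: Section, new: Section) -> List[str]:
    """IDs of nodes whose subtree hash differs between the two versions."""
    old_h: Dict[str, str] = {}
    new_h: Dict[str, str] = {}
    subtree_hashes(old, old_h)
    subtree_hashes(new, new_h)
    return sorted(i for i in new_h if old_h.get(i) != new_h[i])


def contract(payment_terms: str) -> Section:
    """Build a small hypothetical contract with one editable clause."""
    return Section("contract", "Master agreement", [
        Section("1", "Definitions"),
        Section("2", "Payment", [Section("2.1", payment_terms)]),
    ])


print(changed_nodes(contract("Net 30 days"), contract("Net 45 days")))
# prints ['2', '2.1', 'contract']
```

Only the edited clause and its ancestors are flagged; clause 1 keeps its hash, so an indexer built this way could leave that branch untouched.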

A decision matrix for the enterprise

While the accuracy gains are compelling, tree-search retrieval is not a universal replacement for vector search. The technology is best viewed as a specialized tool for “deep work” rather than a catch-all for every retrieval task.

For short documents, such as emails or chat logs, the entire context often fits within a modern LLM’s context window, making any retrieval system unnecessary. Conversely, for tasks purely based on semantic discovery, such as recommending similar products or finding content with a similar “vibe,” vector embeddings remain the superior choice because the goal is proximity, not reasoning.

PageIndex fits squarely in the middle: long, highly structured documents where the cost of error is high. This includes technical manuals, FDA filings, and merger agreements. In these scenarios, the requirement is auditability. An enterprise system needs to be able to explain not just the answer, but the path it took to find it (e.g., confirming that it checked Section 4.1, followed the reference to Appendix B, and synthesized the data found there).

The future of agentic retrieval

The rise of frameworks like PageIndex signals a broader trend in the AI stack: the move toward “Agentic RAG.” As models become more capable of planning and reasoning, the responsibility for finding data is moving from the database layer to the model layer.

We are already seeing this in the coding space, where agents like Claude Code and Cursor are moving away from simple vector lookups in favor of active codebase exploration. Zhang believes generic document retrieval will follow the same trajectory.

“Vector databases still have suitable use cases,” Zhang said. “But their historical role as the default database for LLMs and AI will become less clear over time.”

UK warns 2G shutdown could leave older devices offline by 2033

In newly issued guidance, UK officials outlined the timeline for shutting down legacy mobile infrastructure. Operators have already switched off 3G services, and 2G is set to follow between 2029 and 2033. Users are being urged to prepare ahead of time, as not all devices will make the transition intact.

You Can Skip a Lot of Amazon’s Spring Sale, but Don’t Skip This Travel Upgrade

The WIRED Reviews Team has been covering Amazon’s Big Spring Sale since it began on Wednesday, and the overall deals have been … not great, honestly. So far, we’ve found decent markdowns on vacuums, smart bird feeders, and even an air fryer we love, but I just saw that Cadence Capsules, those colorful magnetic containers you may have seen on your social media pages, are 20 percent off. (For reference, the last time I saw them on sale, they were a measly 9 percent off.)

If you’re not familiar, they let you decant the full-sized personal care products you use at home (from shampoo and sunscreen to serums and pills) into a labeled, modular system of hexagonal containers that are leak-proof, dishwasher safe, and stick together magnetically in your bag or on a countertop. No more jumbled, travel-sized toiletries and leaky, mismatched bottles and tubes.

Cadence Capsules have garnered some grumbling online for being overly heavy or leaking, but I’ve been using them regularly for about a year—I discuss decanting your daily-use products in my guide to How to Pack Your Beauty Routine for Travel—and haven’t experienced any leaks. They do add weight if you’re trying to travel super-light, and because they’re magnetic, they will also stick to other metal items in your toiletry bag, like bobby pins or other hair accessories. This can be annoying, especially if you’re already feeling chaotic or in a hurry.

Otherwise, Capsules are modular, convenient, and make you feel supremely organized—magnetic, interchangeable inserts for the lids come with permanent labels like “shampoo,” “conditioner,” “cleanser,” and “moisturizer.” Maybe you love this; maybe you don’t. But at least if you buy on Amazon, you can choose which label genre you get (Haircare, Bodycare, Skincare, Daily Routine). If this just isn’t your jam, the Cadence website offers a set of seven that allows you to customize the color and lid label of each Capsule, but that set is not currently on sale.

The Supreme Court is scared it’s going to break the internet

Wednesday’s decision in Cox Communications v. Sony Music Entertainment is part of a broader pattern. It is one of a handful of recent Supreme Court cases that threatened to break the internet — or, at least, to fundamentally harm its ability to function as it has for decades. In each case, the justices took a cautious and libertarian approach. And they’ve often done so by lopsided margins. All nine justices joined the result in Cox, although Justices Sonia Sotomayor and Ketanji Brown Jackson criticized some of the nuances of Justice Clarence Thomas’s majority opinion.

Some members of the Court have said explicitly that this wary approach stems from a fear that they do not understand the internet well enough to oversee it. As Justice Elena Kagan said in a 2022 oral argument, “we really don’t know about these things. You know, these are not like the nine greatest experts on the internet.”

Thomas’s opinion in Cox does a fine job of articulating why this case could have upended millions of Americans’ ability to get online. The plaintiffs were major music companies who, in Thomas’s words, have “struggled to protect their copyrights in the age of online music sharing.” It is very easy to pirate copyrighted music online. And the music industry has fought online piracy with mixed success since the Napster Wars of the late 1990s.

Before bringing the Cox lawsuit, the music company plaintiffs used software that allowed them to “detect when copyrighted works are illegally uploaded or downloaded and trace the infringing activity to a particular IP address,” an identification number assigned to online devices. The software informed ISPs when a user at a particular IP address was potentially violating copyright law. After the music companies decided that Cox Communications, the primary defendant in Cox, was not doing enough to cut off these users’ internet access, they sued.

Two practical problems arose from this lawsuit. One is that, as Thomas writes, “many users can share a particular IP address” — such as in a household, coffee shop, hospital, or college dorm. Thus, if Cox had cut off a customer’s internet access whenever someone using that client’s IP address downloaded something illegally, it would also wind up shutting off internet access for dozens or even thousands of innocent people.

Imagine, for example, a high-rise college dormitory where just one student illegally downloads the latest Taylor Swift album. That student might share an IP address with everyone else in that building.

The other reason the Cox case could have fundamentally changed how people get online is that the monetary penalties for violating federal copyright law are often astronomical. Again, the plaintiffs in Cox won a billion-dollar verdict in the trial court. If these plaintiffs had prevailed in front of the Supreme Court, ISPs would likely have been forced into draconian crackdowns on any customer that allowed any internet users to pirate music online — because the costs of failing to do so would be catastrophic.

But that won’t happen. After Cox, college students, hospital patients, and hotel guests across the country can rest assured that they will not lose internet access just because someone down the hall illegally downloads “The Fate of Ophelia.” Thomas’s decision does not simply reject the music industry’s suit against Cox; it nukes it from orbit.

Cox, moreover, is the most recent of at least three decisions where the Court showed similarly broad skepticism of lawsuits or statutes seeking to regulate the internet.

The Supreme Court is an internet-based company’s best friend

The most striking thing about Thomas’s majority opinion in Cox is its breadth. Cox does not simply reject this one lawsuit; it cuts off a wide swath of copyright suits against internet service providers.

Thomas argues that, in order to prevail in Cox, the music industry plaintiffs would have needed to show that Cox “intended” for its customers to use its service for copyright infringement. To overcome this hurdle, the plaintiffs would have needed to show either that internet service providers “promoted and marketed their [service] as a tool to infringe copyrights” or that the only viable use of the internet is to illegally download copyrighted music.

Thomas also adds that the mere fact that Cox may have known that some of its users were pirating copyrighted material is not enough to hold it liable for that activity.

As a legal matter, this very broad holding is dubious. As Sotomayor argues in a separate opinion, Congress enacted a law in 1998 that creates a safe harbor for some ISPs sued over copyright infringement committed by their customers. Under that 1998 law, the lawsuit fails if the ISP “adopted and reasonably implemented” a system to terminate the accounts of customers who repeatedly violate federal copyright law.

The fact that this safe harbor exists suggests that Congress believed that ISPs which do not comply with its terms may be sued. But Thomas’s opinion cuts off many lawsuits against defendants who do not comply with the safe harbor provision.

Still, while lawyers can quibble about whether Thomas or Sotomayor has the better reading of federal law, Thomas’s opinion was joined by a total of seven justices. And it is consistent with the Court’s previous decisions seeking to protect the internet from lawsuits and statutes that could undermine its ability to function.

In Twitter v. Taamneh (2023), a unanimous Supreme Court rejected a lawsuit seeking to hold social media companies liable for overseas terrorist activity. Twitter arose out of a federal law permitting suits against anyone “who aids and abets, by knowingly providing substantial assistance” to certain acts of “international terrorism.” The plaintiffs in Twitter claimed that social media companies were liable for an ISIS attack that killed 39 people in Istanbul, because ISIS used those companies’ platforms to post recruitment videos and other content.

Thomas also wrote the majority opinion in Twitter, and his opinion in that case mirrors the Cox decision’s view that internet companies generally should not be held responsible for bad actors who use their products. “Ordinary merchants,” Thomas wrote in Twitter, typically should not “become liable for any misuse of their goods and services, no matter how attenuated their relationship with the wrongdoer.”

Indeed, several key justices are so protective of the internet — or, at least, so cautious about interfering with it — that they’ve taken a libertarian approach to internet companies even when their own political party wants to control online discourse.

In Moody v. NetChoice (2024), the Court considered two state laws, one from Texas and one from Florida, that sought to force social media companies to publish conservative and Republican voices that those companies had allegedly banned or otherwise suppressed. As Texas’s Republican Gov. Greg Abbott said of his state’s law, it was enacted to stop a supposedly “dangerous movement by social media companies to silence conservative viewpoints and ideas.”

Both laws were blatantly unconstitutional. The First Amendment does not permit the government to force Twitter or Facebook to unban someone for the same reason the government cannot force a newspaper to publish op-eds disagreeing with its regular columnists. As the Court held in Miami Herald Publishing Co. v. Tornillo (1974), media outlets have an absolute right to determine “the choice of material” that they publish.

After Moody reached the Supreme Court, however, the justices uncovered a procedural flaw in the plaintiffs’ case that should have required them to send the case back down to the lower courts without weighing in on whether the two state laws are constitutional. Yet, while the Court did send the case back down, it did so with a very pointed warning that the US Court of Appeals for the Fifth Circuit, which had backed Texas’s law, “was wrong.”

Six justices, including three Republicans, joined a majority opinion leaving no doubt that the Texas and Florida laws violate the First Amendment. They protected the sanctity of the internet, even when it was procedurally improper for them to do so.

This Supreme Court isn’t normally so protective of institutions

One reason why the Court’s hands-off-the-internet approach in Cox, Twitter, and Moody is so remarkable is that the Supreme Court’s current majority rarely shows such restraint in other cases, at least when those cases have high partisan or ideological stakes.

In two recent decisions — Mahmoud v. Taylor (2025) and Mirabelli v. Bonta (2026) — for example, the Court’s Republican majority imposed onerous new burdens on public schools, which appear to be designed to prevent those schools from teaching a pro-LGBTQ viewpoint to students whose parents find gay or trans people objectionable. I’ve previously explained why public schools will struggle to comply with Mahmoud and Mirabelli, and why many might find compliance impossible. Neither opinion showed even a hint of the caution that the Court displayed in Cox and similar cases.

Similarly, in Medina v. Planned Parenthood (2025), the Court handed down a decision that is likely to render much of federal Medicaid law unenforceable. If taken seriously, Medina overrules decades of Supreme Court decisions shaping the rights of about 76 million Medicaid patients, including a decision the Court handed down as recently as 2023 — though it remains to be seen if the Court’s Republican majority will apply Medina’s new rule in a case that doesn’t involve an abortion provider.

The Court’s Republican majority, in other words, is rarely cautious. And it is often willing to throw important American institutions such as the public school system or the US health care system into turmoil, especially in highly ideological cases.

But this Court does appear to hold the internet in the same high regard that it holds religious conservatives and opponents of abortion. And that means that the internet is one institution that these justices will protect.

Saturn’s Rings and Storms Stand Out in Combined Webb and Hubble Telescope Views

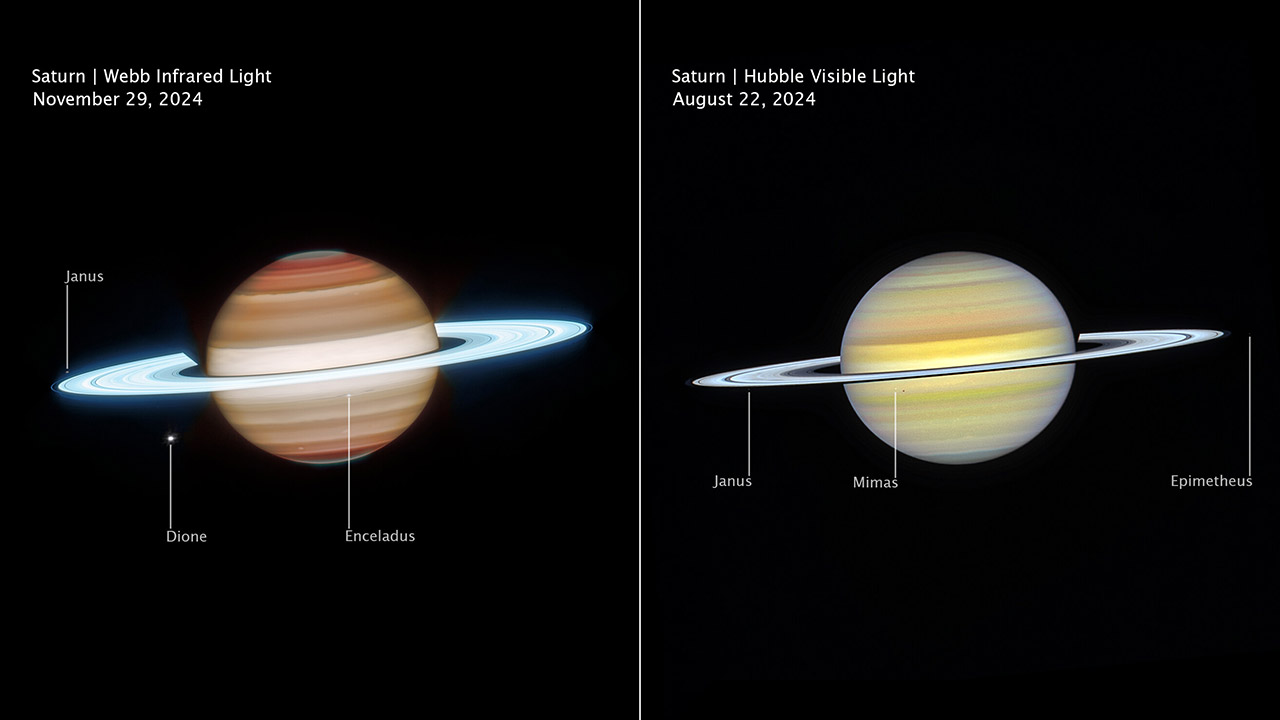

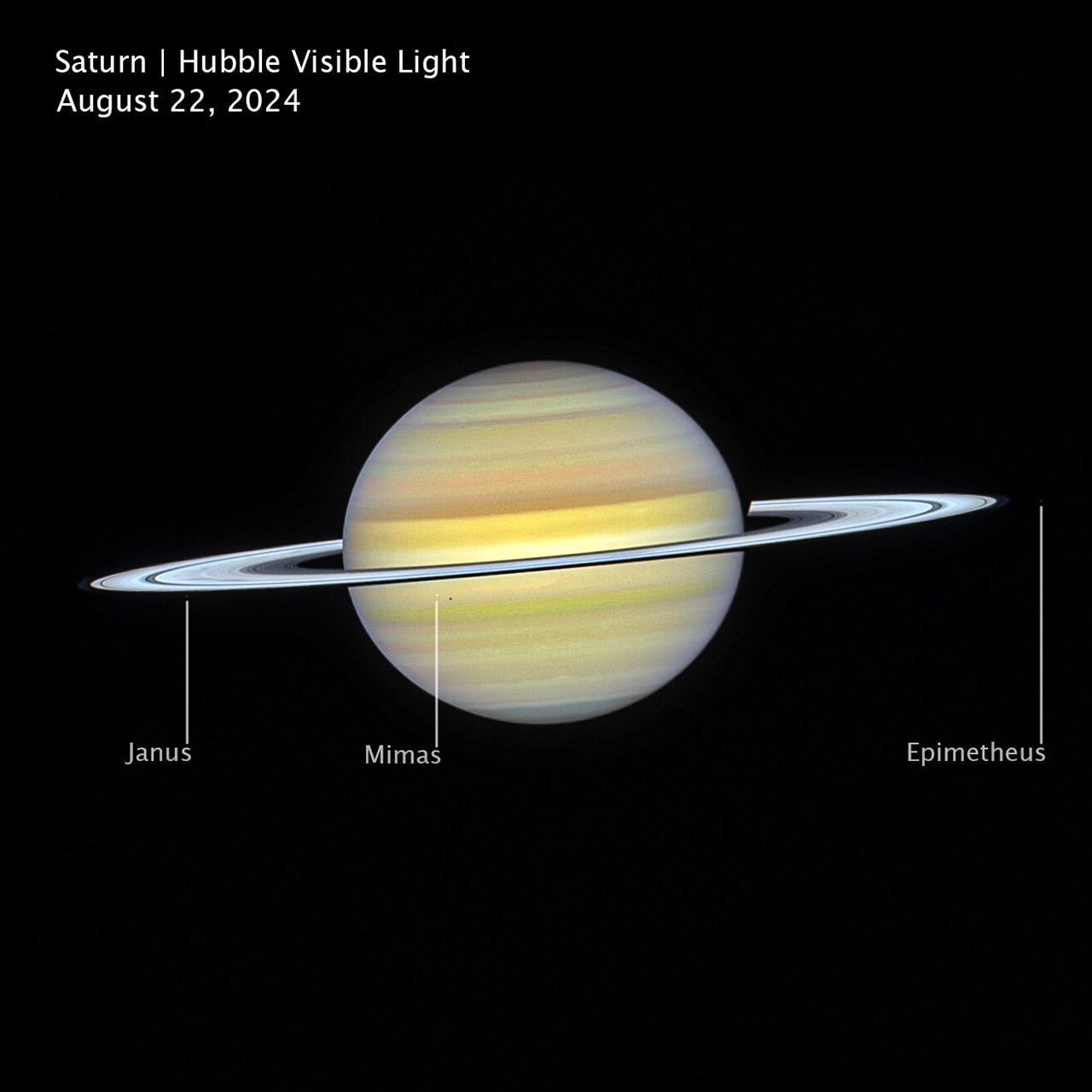

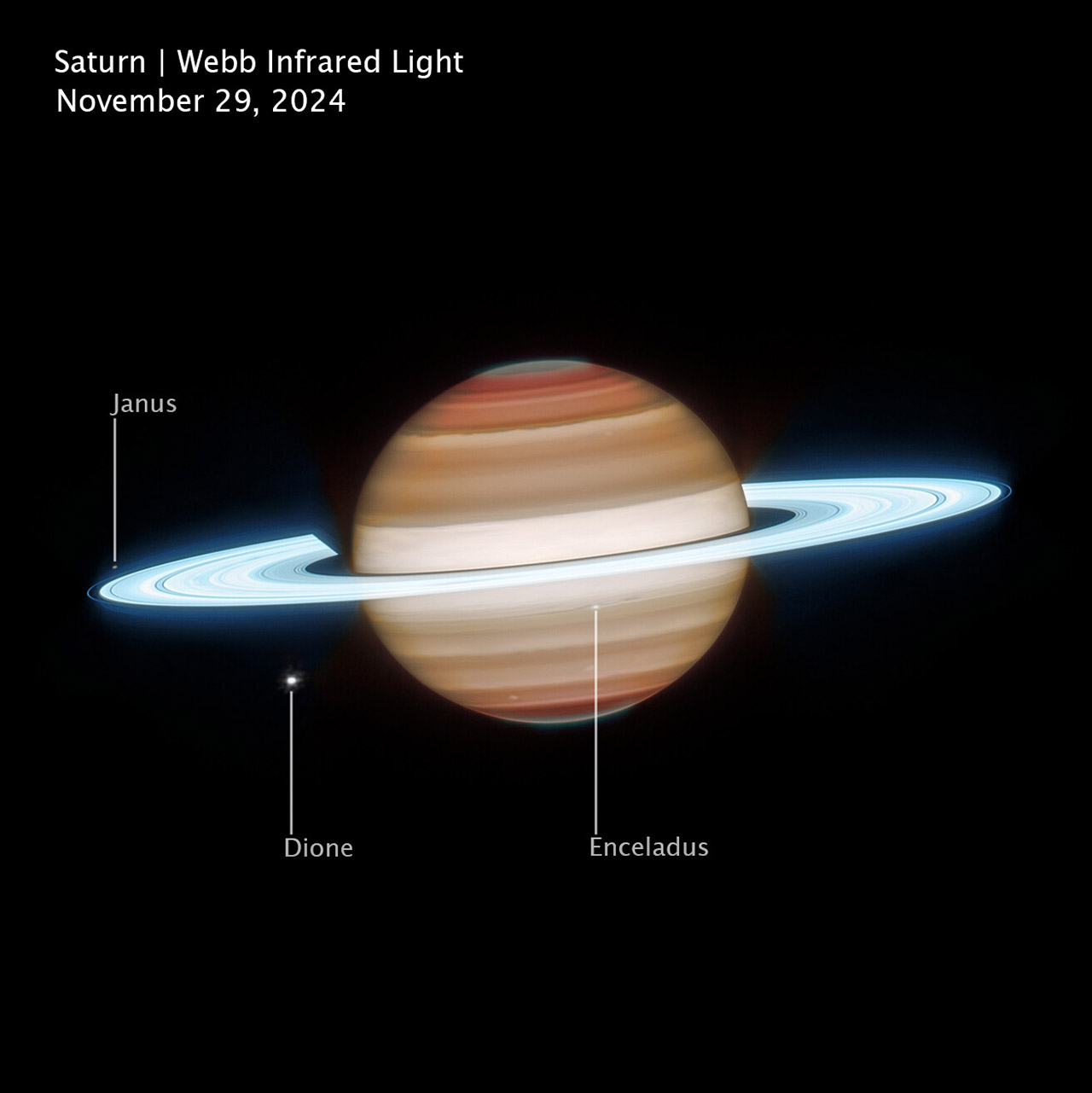

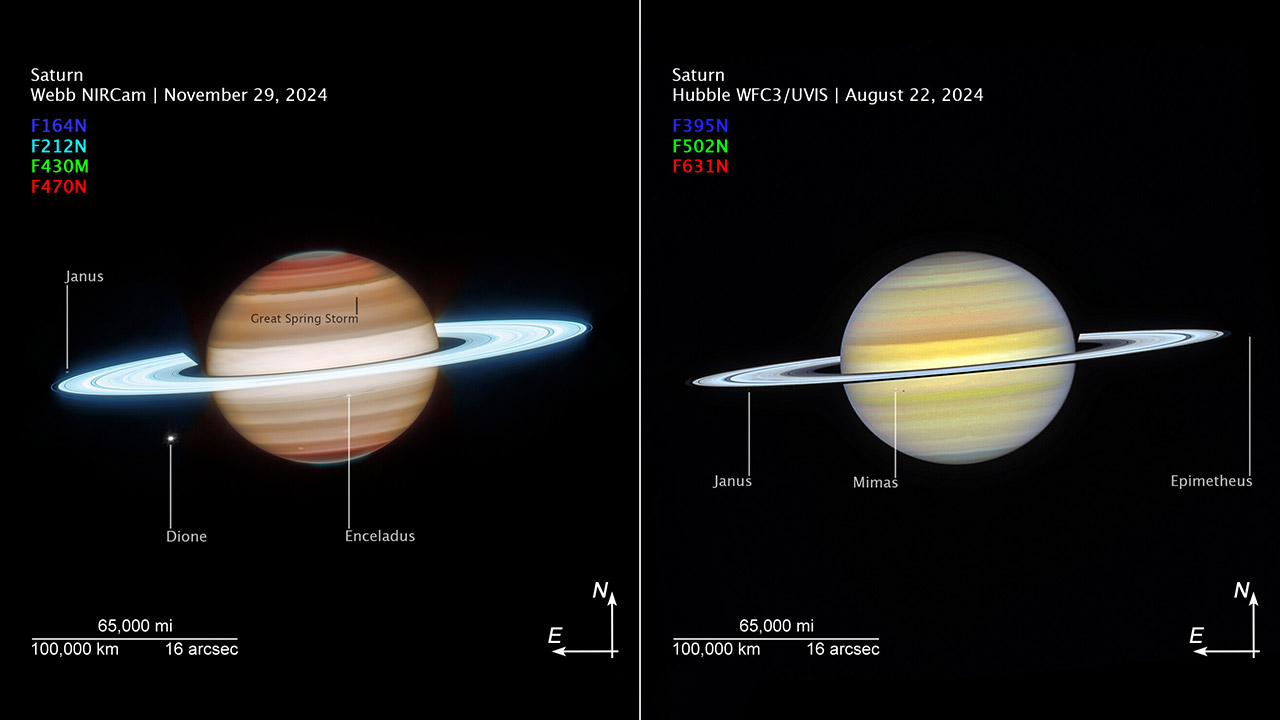

Astronomers have just released what may be the sharpest views of Saturn ever captured, courtesy of the Hubble and James Webb space telescopes working in tandem. One image was taken in visible light and is breathtaking on its own, while the other, captured in infrared, pulls back the curtain on an entirely different layer of detail across the planet’s clouds, rings, and poles.

Hubble captured its image on August 22nd during a routine weather monitoring sweep of the outer planets. Bands of clouds wrap around the globe with subtle shifts in tone where sunlight catches the upper atmosphere, and the rings cast long shadows across the planet’s face at that particular angle. Three of Saturn’s smaller moons, Janus, Mimas, and Epimetheus, sit quietly at the edges of the frame, adding a sense of scale to an already striking image.

The James Webb Space Telescope returned to the same spot a few months later on November 29th, this time with its near-infrared camera. The rings respond brilliantly to infrared light, the water ice within them practically glowing in the exposure. The narrow outer F ring shows up with crisp definition alongside the broader B ring, which carries subtle spoke-like structures that are easy to miss at first glance. The wider field of view also reveals six of Saturn’s larger moons, including Titan off to one side and Dione and Enceladus sitting remarkably close together.

The two images were taken 14 weeks apart, during a period when Saturn was slowly approaching its 2025 equinox. The northern hemisphere is easing out of summer while the south is just beginning its transition into spring, and that gradual seasonal shift gives astronomers a rare window to track how the planet’s clouds, rings, and atmospheric features evolve over the coming decade.

Hubble’s visible light image captures Saturn’s surface and the cloud formations that scientists have been studying for decades, but Webb’s infrared view goes considerably deeper, revealing cloud structures and atmospheric compounds at multiple levels, from the dense lower layers all the way up to the thin air at the top. Together the two images give researchers something far more powerful than either could provide alone, allowing them to study the atmosphere in layers rather than as a single flat snapshot.

The Webb image reveals a wavy jet stream cutting across the northern mid-latitudes, bent by atmospheric waves churning beneath it. Further south, a handful of small storms dot the lower hemisphere, one of which appears to be the final remnant of the enormous storm system that raged for years after it first appeared in 2010. Over in the Hubble image, the famous north pole hexagon is faintly visible: the six-sided wind pattern that has persisted since the 1980s and shows no signs of fading yet, though it will eventually disappear as Saturn’s north pole descends into a 15-year winter by the 2040s.

The poles in the infrared image take on a grey-green tint that scientists believe could be caused by high-altitude aerosols or charged particles connected to auroral activity around Saturn’s magnetic field, details that are simply invisible in visible light. The rings tell their own story across both images as well. Visible light shows their structure and the shadows they cast across the planet’s surface, while infrared highlights just how reflective the ice particles within them are, making the entire ring system pop against the darkness of space. Subtle differences between the two images also reflect the different viewing angles and wavelengths each telescope works with, adding another layer of information for researchers to work through.

Intercom’s new post-trained Fin Apex 1.0 beats GPT-5.4 and Claude Sonnet 4.6 at customer service resolutions

Intercom is taking an unusual gamble for a legacy software company: building its own AI model.

The 15-year-old company, a large customer service platform based in Dublin, Ireland, announced Fin Apex 1.0 on Thursday. It is a small, purpose-built AI model that the company claims outperforms leading frontier models from OpenAI and Anthropic on the metrics that matter most for customer support.

The model powers Intercom’s existing Fin AI agent, which already handles over one million customer conversations weekly.

According to benchmarks shared with VentureBeat, Fin Apex 1.0 achieves a 73.1% resolution rate—the percentage of customer issues fully resolved without human intervention—compared to 71.1% for both GPT-5.4 and Claude Opus 4.5, and 69.6% for Claude Sonnet 4.6. That roughly 2 percentage point margin may sound modest, but it’s wider than the typical gap between successive generations of frontier models.

“If you’re running large service operations at scale and you’ve got 10 million customers or a billion dollars in revenue, a delta of 2% or 3% is a really large amount of customers and interactions and revenue,” Intercom CEO Eoghan McCabe told VentureBeat in a video call interview earlier this week.
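McCabe's point about scale is simple arithmetic. The sketch below applies the reported resolution rates to the roughly one million weekly conversations cited above. It assumes, for illustration only, that the benchmark rates would hold uniformly across that volume, and the function name is hypothetical.

```python
def extra_resolutions(conversations: int, rate_a_pct: float, rate_b_pct: float) -> int:
    """Additional conversations resolved at rate A versus rate B (rates in percent)."""
    # Work in tenths of a percentage point to avoid floating-point noise.
    delta_tenths = round(rate_a_pct * 10) - round(rate_b_pct * 10)
    return conversations * delta_tenths // 1000


weekly = 1_000_000  # "over one million customer conversations weekly"

print(extra_resolutions(weekly, 73.1, 71.1))  # prints 20000 (vs. GPT-5.4 and Claude Opus 4.5)
print(extra_resolutions(weekly, 73.1, 69.6))  # prints 35000 (vs. Claude Sonnet 4.6)
```

Under those assumptions, a 2.0-point edge means about 20,000 more conversations per week that never reach a human agent.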

The model also shows significant improvements in speed and accuracy. Fin Apex delivers responses in 3.7 seconds—0.6 seconds faster than the next-fastest competitor—and demonstrates a 65% reduction in hallucinations compared to Claude Sonnet 4.6.

Perhaps most striking for enterprise buyers: it runs at roughly one-fifth the cost of using frontier models directly, and it is included in Intercom’s existing “per-outcome” pricing for current customer plans.

What’s the base model? Does it even matter?

But there’s a catch. When asked to specify which base model Apex was built on—and its parameter size—Intercom declined.

“We’re not sharing the base model we used for Apex 1.0—for competitive reasons and also because we plan to switch base models over time,” a company spokesperson told VentureBeat. The company would only confirm that the model is “in the size of hundreds of millions of parameters.”

That’s a notably small model. For comparison, Meta’s Llama 3.1 ranges from 8 billion to 405 billion parameters; even efficient open-weights models like Mistral 7B dwarf the sub-billion scale Intercom describes.

Whether Apex’s performance claims hold up against that context—or whether the benchmarks reflect optimizations possible only in narrow, domain-specific applications—remains an open question.

Intercom says it learned from the backlash AI coding startup Cursor faced when critics accused the coding assistant of burying the fact that its Composer 2 model was built on fine-tuned open-weights models rather than proprietary technology. But the lesson Intercom drew may not satisfy skeptics: the company is transparent that it used an open-weights base, just not which one.

“We are very transparent that we have” used an open-weights model, the spokesperson said. Yet declining to name the model while claiming transparency is a contradiction that will likely draw scrutiny—particularly as more companies tout “proprietary” AI that amounts to post-trained open-source foundations.

Post-training as the new frontier

Intercom’s argument is that the base model simply doesn’t matter much anymore.

“Pre-training is kind of a commodity now,” McCabe said. “The frontier, if you will, is actually in post-training. Post-training is the hard part. You need proprietary data. You need proprietary sources of truth.”

The company post-trained its chosen foundation using years of proprietary customer service data accumulated through Fin, which now resolves 2 million customer queries per week. That process involved more than just feeding transcripts into a model. Intercom built reinforcement learning systems grounded in real resolution outcomes, teaching the model what successful customer service actually looks like—the appropriate tone, judgment calls, conversational structure, and critically, how to recognize when an issue is truly resolved versus when a customer is still frustrated.

“The generic models are trained on generic data on the internet. The specific models are trained on hyper-specific domain data,” McCabe explained. “It stands to reason therefore that the intelligence of the generic models is generic, and the intelligence of the specific models is domain-specific and therefore operates in a far superior way for that use case.”

If McCabe is right that the magic is entirely in post-training, the reluctance to name the base becomes harder to justify. If the foundation is truly interchangeable, what competitive advantage does secrecy protect?

A $100 million bet paying off

The announcement comes as Intercom’s AI-first pivot appears to be working. Fin is approaching $100 million in annual recurring revenue and growing at 3.5x, making it the fastest-growing segment of the company’s $400 million ARR business. Fin is projected to represent half of Intercom’s total revenue early next year.

That trajectory represents a remarkable turnaround. When Fin launched, its resolution rate was just 23%. Today it averages 67% across customers, with some large enterprise deployments seeing rates as high as 75%.

To make this happen, Intercom grew its AI team from roughly 6 researchers to 60 over the past three years—a significant investment for a company that McCabe admits was “in a really bad place” before its AI pivot. The average growth rate for public software companies sits around 11%; Intercom expects to hit 37% growth this year.

“We’re by far the first in the category to train our own model,” McCabe said. “There’s no one else that’s going to have this for a year or more.”

The speciation and specialization of AI

McCabe’s thesis aligns with a broader trend that Andrej Karpathy, former AI leader at Tesla and OpenAI, recently described as the “speciation” of AI models—a proliferation of specialized systems optimized for narrow tasks rather than general intelligence.

Customer service, McCabe argues, is uniquely suited for this approach. It’s one of only two or three enterprise AI use cases that have found genuine economic traction so far, alongside coding assistants and potentially legal AI. That’s attracted over a billion dollars in venture funding to competitors like Decagon and Sierra—and made the space, in McCabe’s words, “ruthlessly competitive.”

The question is whether domain-specific models represent a durable advantage or a temporary arbitrage that frontier labs will eventually close. McCabe believes the labs face structural limitations.

“Maybe the future is that Anthropic has a big offering of many different specialized models. Maybe that’s what it looks like,” he said. “But the reality is that I don’t think the generic models are going to be able to keep up with the domain-specific models right now.”

Beyond efficiency to experience

Early enterprise AI adoption focused heavily on cost reduction—replacing expensive human agents with cheaper automated ones. But McCabe sees the conversation shifting toward experience quality.

“Originally it was like, ‘Holy shit, we can actually do this for so much cheaper.’ And now they’re thinking, ‘Wait, no, we can give customers a far better experience,’” he said.

The vision extends beyond simple query resolution. McCabe imagines AI agents that function as consultants—a shoe retailer’s bot that doesn’t just answer shipping questions but offers styling advice and shows customers how different options might look on them.

“Customer service has always been pretty shit,” McCabe said bluntly. “Even the very best brands, you’re left waiting on a call, you’re bounced around different departments. There’s an opportunity now to provide truly perfect customer experience.”

Pricing and availability

For existing Fin customers, the upgrade to Apex comes at no additional cost. Intercom confirmed that customer pricing remains unchanged—users continue to pay per outcome as before, at $0.99 per resolved interaction, and automatically benefit from the new model.
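To make the per-outcome model concrete, here is a rough back-of-the-envelope sketch. The $0.99 price and the 67% average resolution rate come from the figures reported above; the conversation volume and the function name are purely hypothetical, and Intercom's actual billing logic may differ.

```python
# Illustrative arithmetic only; not Intercom's billing implementation.
PRICE_PER_RESOLUTION_CENTS = 99   # $0.99 per resolved interaction (reported price)
AVERAGE_RESOLUTION_PERCENT = 67   # Fin's reported average resolution rate


def fin_cost_cents(conversations: int, resolution_percent: int) -> int:
    """Return the charge in cents when only resolved conversations are billed."""
    resolved = conversations * resolution_percent // 100
    return resolved * PRICE_PER_RESOLUTION_CENTS


# Hypothetical volume, chosen only to make the numbers easy to follow.
hypothetical_conversations = 10_000
cost_cents = fin_cost_cents(hypothetical_conversations, AVERAGE_RESOLUTION_PERCENT)
print(f"${cost_cents / 100:,.2f}")  # prints $6,633.00
```

Under these assumptions, a customer pays only for the 6,700 conversations Fin resolves, so a higher resolution rate raises the bill even as it cuts the number of conversations left for human agents.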

Apex is not available as a standalone model or through an external API. It is accessible only through Fin, meaning businesses cannot license the model independently or integrate it into their own products. That constraint may limit Intercom’s ability to monetize the model beyond its existing customer base—but it also keeps the technology proprietary in a practical sense, regardless of what the underlying base model turns out to be.

What’s next

Intercom plans to expand Fin beyond customer service into sales and marketing—positioning it as a direct competitor to Salesforce’s Agentforce vision, which aims to provide AI agents across the customer lifecycle.

For the broader SaaS industry, Intercom’s move raises uncomfortable questions. If a 15-year-old customer service company can build a model that outperforms OpenAI and Anthropic in its domain, what does that mean for vendors still relying on generic API calls? And if “post-training is the new frontier,” as McCabe insists, will companies claiming breakthroughs face pressure to show their work—or continue hiding behind competitive secrecy while touting transparency?

McCabe’s answer to the first question, laid out in a recent LinkedIn post, is stark: “If you can’t become an agent company, your CRUD app business has a diminishing future.”

The answer to the second remains to be seen.

Tech

Ring's new doorbells bring 2K and 4K video to battery models

The most advanced of the new models, the Ring Battery Video Doorbell Pro 2nd Gen, is priced at $249.99 and features 4K video and a 10x digital zoom. The Battery Video Doorbell Plus 2nd Gen, at $179.99, offers 2K video resolution and 6x zoom. A third model, the $99.99 Battery…


Tech

5 Car Names That Have Been Used By Multiple Manufacturers

Car manufacturers often strive to give their models unique names so that they can stand out in a crowded market. These could be as simple as a string of letters and numbers to indicate a model’s position in a brand’s lineup, as at Audi, BMW, and Mercedes-Benz, or they could follow a company’s traditional naming structure, like the many Lamborghini models that reference bullfighting. There are also quite a few model names that have a special meaning attached to them.

But despite their efforts to create unique names, there are a few model names that have been used by multiple manufacturers. Note that these aren’t cars that have simply been rebadged to have a different logo on their grille but have kept their model name, like the Buick/Opel Cascada. Instead, we’re looking at car names that have been used by multiple car makers that aren’t related to each other at all.

There are multiple reasons for this — one infamous example is the Pontiac GTO, which was specifically named to evoke the performance of the legendary Ferrari GTO. Another explanation for the same model names is that they’re inspired by a place or a body style, or it could be that it’s been years, if not decades, since a particular name was last used, so current buyers are unlikely to mistake it for another vehicle. But whatever the case, these are a few car names that have been used by multiple brands.

California

If you’re a fan of sports cars and you hear the “California” model name, Ferrari would likely be the first brand that comes to mind. The prancing horse first used the name in 1957 on the 250 California, which stayed in production until 1963. Ferrari released the 365 California in 1966, but it produced only 14 examples and built the car for just a year. The Italian carmaker named this convertible after the Golden State because it wanted buyers to imagine California and its open roads, hoping to sell more examples to the American market.

Ferrari revived the California model name in 2008, this time dropping the numbers and simply calling it “California.” This model still followed the 2+2 convertible formula used by the cars that inspired it but featured a hard-top roof. The carmaker also said that this was its first V8 road car to feature a mid-front layout that delivered excellent handling and performance.

On the other side of the spectrum, Volkswagen also released its own California model in 2005. But instead of a top-down sports car, this one is a campervan based on the VW Transporter. Ironically, despite being named after a U.S. state, you can’t buy this vehicle in the United States. The closest that you can get is the VW ID. Buzz — and even though it’s a rather nice passenger van, it still doesn’t give you everything you need for camping, including a kitchen sink.

Century

The Century name is most often associated with Toyota, whose Century, introduced in 1967, is the company’s most premium offering. Despite being continuously sold since then, this model has had only three generations and one SUV model, a nod to its timeless elegance, with the latest model still resembling the first one produced nearly 60 years ago. Unfortunately, you cannot get the Toyota Century in the U.S. There is another Century that you can buy, though, but it’s not as luxurious as the one from Japan.

The American model that bears the Century name comes from Buick, and it’s even older than the one from Toyota. The Buick Century arrived in 1936, mating the small body of the Buick Special with a 120-horsepower straight-eight engine from the larger Roadmaster. This gave it an excellent power-to-weight ratio, with some people calling it “the banker’s hot rod.” The American car company released the second-generation Century from 1954 to 1958, and it used the same formula as the original model. In 1973, the third-generation Century arrived, with the car company continuously producing the model until 2005 through the sixth generation.

The Buick and Toyota Century catered to completely different markets. The Toyota Century focuses on comfort and craftsmanship, with the Japanese emperor using one as his official state car. The Buick Century, on the other hand, was more focused on performance, with the brand stuffing the biggest engine it could find into the smallest chassis in its lineup. Unfortunately, the styling of the last-generation Century was rather bland, and it also had some engine problems, landing it in our list of Buick models you should steer clear of.

GT

GT stands for Gran Turismo in Italian, or Grand Touring in English, and, in theory, GT cars are designed to travel long distances at high speeds and offer all the luxuries and creature comforts that any driver would want while behind the wheel. Because of this, many carmakers add the GT moniker to their cars to indicate a sportier or performance-focused trim of an existing model. However, a few carmakers have decided to use “GT” as an actual model name instead of using it to denote a premium variant.

One of the most popular GT models is the Ford GT, which the company first introduced in 2004 to celebrate its 100th anniversary and pay homage to the Le Mans-winning GT40. The American carmaker eventually introduced a second generation in 2017, before ending production in 2022. But even though the Ford GT technically fits the description of a grand tourer, it’s more of a supercar than a GT. A better example would be the Mercedes-AMG GT — although the 2025 model is one of the fastest AMG models ever made, it’s still quite comfortable and luxurious.

We’ve also seen other European manufacturers use the GT badge, with the Opel GT being one of the most underrated German cars that deserves more attention. This two-door coupe, made from 1968 to 1973, somewhat resembles a Chevrolet Corvette, and it also received a second-generation model from 2007 to 2010 as a rebadged Saturn Sky/Pontiac Solstice. Italian manufacturer Alfa Romeo also had a GT model from 2003 to 2010, but because it was based on a compact hatchback, it lacked the space buyers wanted from a true GT.

Monza

The Ferrari Monza is one of the most interesting models to come out of the prancing horse’s stable. This vehicle comes with several cool features that any car enthusiast and collector would love, like its “virtual windscreen” and its single carbon-fiber seat for the SP1 model. The Ferrari Monza is inspired by the brand’s racing cars from the 1950s, with its retro styling and an 809 hp 6.5-liter V12 engine designed to make the car go from 0 to 62 mph in under 3 seconds.

On the flip side, Chevrolet produced a subcompact two-door muscle car from 1975 to 1980 bearing the same name. Although this isn’t some exotic sports car that cost millions of dollars, it’s still one of the most underappreciated Chevy muscle cars you can find. Even though it shared the same platform as the Vega, the American carmaker used the profile of the Ferrari 365 GTC/4 as an inspiration for this vehicle and even named it after an Italian racetrack.

The Chevrolet Monza had quite a good run in motorsport. Drag racers favored it for its small size and good aerodynamics, and it also won a couple of IMSA Camel GT titles, presumably with custom or tuned engines. But because it arrived in the mid-1970s, it was caught at the height of the Malaise Era. Factory models came with anemic engine options, with tests showing that the Monza took more than 13 seconds to hit 60 mph from a standstill and nearly 20 seconds to finish the quarter mile.

Sebring

Although it’s not as popular as the 24 Hours of Le Mans, the 12 Hours of Sebring, first run in 1952, is still one of America’s most iconic endurance races. It’s for this reason that we see models from two different carmakers sport this name: Chrysler and Maserati. The Maserati Sebring is one of the classic cars from the 1960s that no one talks about today, although it still fetches six-digit bids at auctions. The Italian carmaker released this model in 1962 to commemorate its success at the Sebring endurance race, and it was in production until 1968. Despite that relatively long period, Maserati made fewer than 600 Sebrings, making it quite a rare vehicle.

Chrysler also made its own Sebring from 1995 until 2010. But unlike the Italian Sebring, which had a limited production run, this midsize model is a mass-market car designed to compete against the likes of the Honda Accord and Toyota Camry. The Chrysler vehicle is available either as a sedan or a coupe, and you can also get the latter as a convertible if you like feeling the wind in your hair. In 2011, the American carmaker discontinued the Sebring name and replaced it with the 200, although the 200 is a heavily revised Sebring and not an all-new model.

Tech

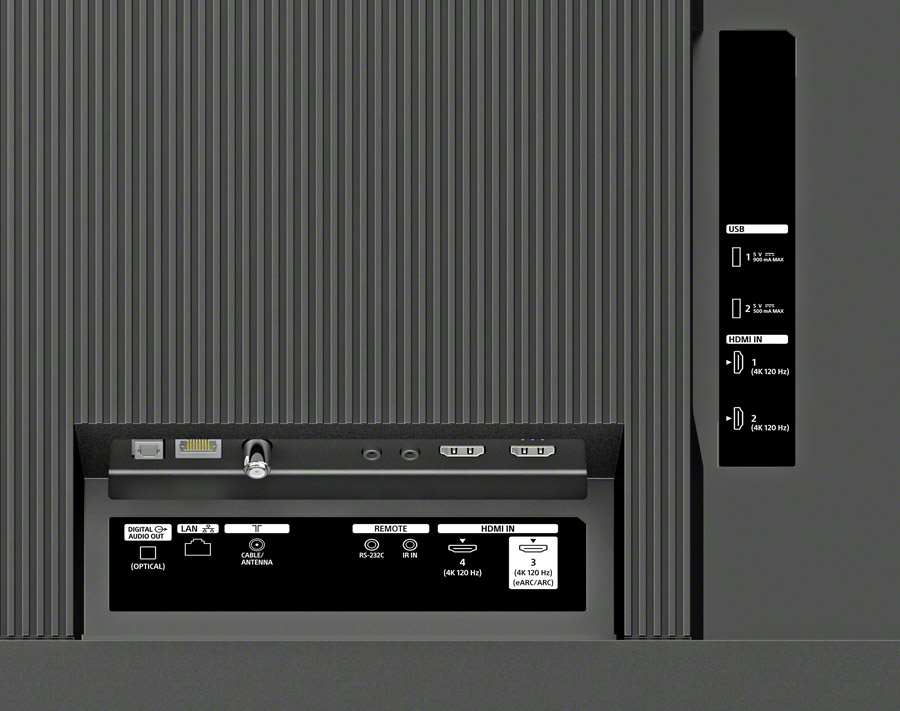

Sony Just Put Their Best Video Processor Inside a Budget-Friendly TV: Meet the BRAVIA 3, Mark II

Along with a slew of new home theater audio products, Sony just dropped some pretty big TV news. The company’s top BRAVIA XR video processor, including XR Triluminos Pro color processing, is trickling down to a new price point in the budget-friendly “foundation series” BRAVIA 3, Mark II LED/LCD TV.

Sony is well known for its video processing prowess, turning low-quality streams and low-definition video into something quite watchable, even on the largest screens. Seeing that processing in a more affordable model is encouraging for those who want a high-quality big-screen TV that doesn’t break the bank.

The BRAVIA 3, Mark II will be available in screen sizes from 43 inches up to a whopping 100 inches diagonal. And the prices are surprisingly affordable, starting at $599.99 (MSRP) for the 43-inch model and rising to $3,099.99 (MSRP) for the 100-incher.

The BRAVIA 3, Mark II is said to offer a “wide color gamut” and is built on the Google TV platform for access to thousands of streaming apps. It supports the Dolby Vision and HDR10 high dynamic range formats as well as both Dolby Atmos and DTS:X surround sound. It even supports the IMAX Enhanced DTS:X soundtracks on Disney+ and the Sony Pictures Core app.

The TV includes Google Gemini AI on-board for intuitive natural language-based search. All four of the HDMI ports include HDMI 2.1 with support for sources up to 4K/120 Hz. The BRAVIA 3, Mark II also features a simpler redesigned remote control designed with accessibility in mind.

The Bottom Line

Frankly, we don’t have a lot of information on the new set yet, and haven’t seen one in person, but with Sony’s best processor on board, the BRAVIA 3, Mark II should provide a nice upgrade over the budget-priced models from the popular Chinese and Korean brands. We’ll share more when we know more.

Pricing & Availability

The BRAVIA 3, Mark II will be available this Spring at Best Buy, Amazon, the Sony store and other Sony-authorized retailers at the following prices:

- 43-inch BRAVIA 3, Mark II: $599.99 USD / $849.99 CAD

- 50-inch BRAVIA 3, Mark II: $699.99 USD / $979.99 CAD

- 55-inch BRAVIA 3, Mark II: $799.99 USD / $1,099.99 CAD

- 65-inch BRAVIA 3, Mark II: $899.99 USD / $1,299.99 CAD

- 75-inch BRAVIA 3, Mark II: $1,199.99 USD / $1,699.99 CAD

- 85-inch BRAVIA 3, Mark II: $1,599.99 USD / $2,299.99 CAD

- 100-inch BRAVIA 3, Mark II: $3,099.99 USD / $4,399.99 CAD


Tech

Want to watch the Seattle Mariners? Here are the TV and streaming options as Opening Day arrives

The Seattle Mariners open the 2026 season tonight at T-Mobile Park against the Cleveland Guardians. And perhaps the biggest question on the minds of fans, apart from whether the team will make another playoff run, is how in the heck to watch the games on TV or online.

It hasn’t been easy to figure out. But for fans who can’t be there in person, there are finally some answers to where and how you can see the team.

The Mariners announced more details on Wednesday for traditional cable and streaming viewers in the Seattle area and across the Pacific Northwest, including:

- Comcast/Xfinity subscribers can watch games on channel 1261.

- Charter/Spectrum subscribers in the Seattle/Tacoma market can watch on channel 414.

- DirecTV watchers can turn to channel 687.

The Mariners listed other cities and providers in the team’s home territory (Washington, Oregon, Idaho, Montana and Alaska) in a Wednesday post on X.

“Fans who are already subscribed to providers carrying Mariners TV will automatically see the channel populate into their channel lineup,” the team said.

The Mariners also enabled a “channel finder” on their website that lets fans search by zip code to find available providers and channel information in their area.

I hopelessly searched for YouTube TV, the service I use, on Thursday morning, and got this message: “Sorry, but the Seattle Mariners are not being offered by YouTube TV at this time. Please call them directly or click below to let them know you want the Seattle Mariners added to your channel lineup.”

Those without a broadcast provider in the mix can turn to Mariners.TV, the direct-to-consumer, standalone streaming option. Fans can stream games with no local blackouts via web or the MLB App on mobile or smart TV devices for $99.99/season or $19.99/month.

Fans who live outside the Mariners home market will be able to watch games with the standard MLB.TV package.

The team’s TV guide also lists games this season that will be shown exclusively on Apple TV, Peacock, NBC and elsewhere. For instance, this weekend’s series against Cleveland features two games on Mariners TV (Thursday, Saturday), one on Apple TV (Friday) and one on Peacock (Sunday).

The release of the details comes just hours before the first pitch of the new season, and some fans commenting on the team’s social media post appeared to have run out of patience. Those who purchased Mariners.TV only to find out that it’s now included in their cable, satellite or internet TV subscription can request a refund, the team said.

The Mariners shut down Root Sports Northwest at the end of the 2025 season and announced that games would be produced and distributed by Major League Baseball for streaming, cable and satellite TV.

Tech

Bad news, skeptics – GitHub says it will use user data to train its AI after all

- GitHub rolls out on-by-default AI user data training, with optional opt-out

- Business, Enterprise and some other platforms are excluded from the change

- The company explains that users’ real-time, live data is crucial for good training

GitHub Chief Product Officer Mario Rodriguez has announced that the platform will use user data to train its AI models, operating on an opt-out basis that automatically enrolls users in the data collection system.

The change affects not only Free users but also Pro and Pro+ subscribers. Copilot Business, Enterprise, student and teacher accounts are exempt from the new user data training change.

The company blog post adds that AI-generated content, as well as user feedback and interactions, will all go into training the AI models.


GitHub will use your data to train its AI models, it confirms

Some of the elements that will go into training GitHub’s AI include: inputs, like prompts and snippets of code; outputs, including accepted content and edited suggestions; code context; comments and documentation; file names and repo structures; Copilot interactions and even feedback like thumbs up/down.

Beyond the account types mentioned above and users who opt out, one further category is exempt from the training change: “Content from your issues, discussions, or private repositories at rest,” Rodriguez writes, carefully pointing out that even private repos can be used while a user is actively using Copilot.

The company is keen to point out that real-world interaction data vastly improves model training, thanking users who choose to share their data.

“We believe the future of AI-assisted development depends on real-world interaction data from developers like you,” the CPO added.

GitHub publicly stating its position on user data training is an important step, but while users are given the option to opt out, many are still unhappy about the on-by-default setting.

Follow TechRadar on Google News and add us as a preferred source to get our expert news, reviews, and opinion in your feeds. Make sure to click the Follow button!

And of course you can also follow TechRadar on TikTok for news, reviews, unboxings in video form, and get regular updates from us on WhatsApp too.

-

Crypto World6 days ago

NIO (NIO) Stock Plunges 6.5% as Shelf Registration Sparks Dilution Worries

-

Fashion6 days ago

Fashion6 days agoWeekend Open Thread: Adidas – Corporette.com

-

NewsBeat1 day ago

NewsBeat1 day agoManchester United reach agreement with Casemiro over contract clause amid transfer speculation

-

Politics6 days ago

Politics6 days agoJenni Murray, Long-Serving Woman’s Hour Presenter, Dies Aged 75

-

Crypto World5 days ago

Crypto World5 days agoBest Crypto to Buy Now: Strategy Just Spent $1.57 Billion on Bitcoin During Fear While Early Investors Quietly Enter Pepeto for 150x Potential

-

Crypto World5 days ago

Crypto World5 days agoBitcoin Price News: Bhutan Sells $72 Million in BTC Under Fiscal Pressure, but the Smart Money Entering Pepeto Sees What the Market Does Not

-

Tech7 days ago

Tech7 days agoinKONBINI Lets You Spend Summer Days Behind the Register

-

News Videos17 hours ago

News Videos17 hours agoParliament publishes latest register of MPs’ financial interests

-

Sports3 days ago

Sports3 days agoRemo Stars and Kano Pillars Strengthen Survival Hopes in NPFL

-

Politics7 days ago

Politics7 days agoGender equality discussions at UN face pushbacks and US resistance

-

Business4 days ago

Business4 days agoNo Winner in March 21 Drawing as Prize Rolls to $133 Million for Next

-

Sports3 days ago

Sports3 days agoGary Kirsten Accuses Pakistan Cricket Board Of ‘Interference’, Mohsin Naqvi Responds

-

Tech4 days ago

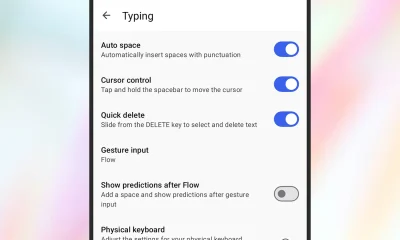

Tech4 days agoGive Your Phone a Huge (and Free) Upgrade by Switching to Another Keyboard

-

Sports6 days ago

Sports6 days ago2026 Kentucky Derby horses, odds, futures, preview, date: Expert who nailed 12 Derby-Oaks Doubles enters picks

-

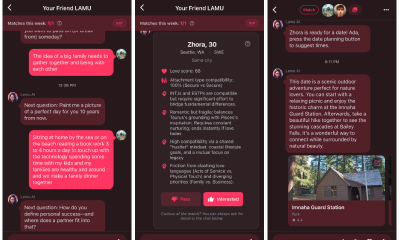

Tech4 days ago

Tech4 days agoAI enters the chat: New Seattle dating app relies on tech to facilitate meaningful human connections

-

Business7 days ago

Business7 days agoDLocal: Entering 2026 At Escape Velocity

-

Business6 days ago

Columbia Sportswear enters $500 million credit agreement with JPMorgan Chase

-

Tech5 days ago

Tech5 days agoToday’s NYT Connections Hints, Answers for March 22 #1015

-

News Videos3 days ago

News Videos3 days agoCh 9 Financial Management Part 1 | Detailed One Shot | Class 12 Business Studies Boards 2026

-

Business4 days ago

Business4 days agoWill Duke Basketball Win It All? Duke Basketball Enters Second Round as Third Favorite to Claim NCAA Title

You must be logged in to post a comment Login