Just over a year ago, OpenAI co-founder Andrej Karpathy coined the term “vibe coding” and it’s exactly what it sounds like. In a post on X, he wrote that it’s where “you fully give in to the vibes, embrace exponentials, and forget that the code even exists.”

Tech

What is vibe coding? AI coding with Claude, Codex, and Gemini, explained

Since then, coders from all backgrounds — and folks with zero experience — have tapped into their vibes to make apps and websites. Vibe coding platforms, powered by AI models like Claude, Codex, and Gemini, have gained traction as a way to give normies a toolset to code whatever they want, without writing a single line of script.

Tech behemoths like Amazon and bustling Silicon Valley startups even have their coders using it. It’s doing the grunt work for now, but they say it’s opening up a whole new world of possibilities. One possibility: It takes their job. But it’s a trade-off that some of them are willing to make.

Clive Thompson wrote a book about this and spent time with over 70 vibe coders to understand how the technology is upending the industry and if this is the end of computer programming as we know it. On Today, Explained, co-host Sean Rameswaram dug into these questions and even vibe coded a simple website while doing it.

Below is an excerpt of the conversation, edited for length and clarity. There’s much more in the full podcast, so listen to Today, Explained wherever you get podcasts, including Apple Podcasts, Pandora, and Spotify.

You spent a lot of time hanging out with coders who were vibe coding. And what I could tell from reading your piece in the New York Times Magazine is that they’re not vibe coding the same way that I was vibe coding.

No, they’re doing something that’s a lot more aggressive and ambitious. What they’re doing is they are using multiple agents, kind of swarms of agents at the same time. If they’re using Claude Code or Codex or Gemini, they will have it wired into their laptops. Those agents can create files, destroy files. They can take code that’s been written and push it live into production in the world.

And they will also work in little teams. So when they want to create a piece of software, sometimes they’ll write, like, a spec, like a page saying, “Here’s what I want to do.” Or sometimes they’ll just talk to the agent. But they’ll be kind of talking to the lead agent that’s going to be the head of the team and they’ll talk to it and say, “Here’s what I want you to do. What do you think? Give me your ideas.” And they’ll sort of go back and forth generating a plan. And when they’re confident that this top agent understands what is to be done, they’ll say, “All right. Go do it.”

And that one will spawn off several subagents. It will have one agent that’s writing code, another one that is testing the code. It’s quite wild to watch them do this. And sometimes if it does something wrong, they’ll have to yell at it. They’ll be like, “This is unacceptable.” Or they’ll say things like, you know, “This is embarrassing. You’re humiliating me.”

And I said to one of them, “What’s up with that? Does that language improve the sort of output of these agents?” And he was like, “I couldn’t prove it. But generally we find that when we sort of reprimand them a little bit, they become a little more reliable.”

Can you help us understand just how much time, money, human labor is being saved by vibe coding at the level that you observed?

Yeah, it can be really significant. The gains are most significant when someone is building something new from scratch. The startup founders, one- or two-person, three-person shops, they’re like, “I need to get to market fast. There might be 10 other people with this idea. I got to beat them.” It’s dizzying. Some of those people were telling me that they were working 20 times faster than they would on their own. Stuff that would normally have taken them a day now takes half an hour.

But at a very large and mature company like Amazon or Google, you’ve got billions of lines of existing code and if one little part of it stops working, that could cascade through everything. So those folks are definitely using the agents, but they are less likely to be pushing stuff rapidly out. They’re more likely to be looking carefully at it and putting it through what’s known as code review, where multiple humans look at it and go, “Oh, okay, does that work?” So for them, basically it’s like a 10 percent improvement in terms of the velocity of productivity of the engineers, how fast they go from having an idea to making it happen.

And what’s really interesting, and you may have discovered this too, in your vibe coding: a lot of engineers told me that it was even less about speed than about the ability to experiment with a bunch of ideas and see which one might really work.

In the before times, you’d have an idea for a feature. Are you really going to spend six weeks developing it just to discover that it’s not really what you thought it was going to be?

Now, well, let’s just do 10 different versions of that over the next week and let’s look at all of them and then we can pick the one we want. You might not necessarily have gone faster, but the feature that you’ve got is exactly the one you wanted and you know because you held it in your hands.

There have been a lot of tech layoffs in the past few years, and now we’re talking about how vibe coding has dramatically overturned the norms in engineering. How are developers feeling about that?

Well, here’s the thing. So there is definitely a civil war insofar as there is the majority of people that I spoke to, and I reached out to a very wide array — I talked to 75 developers.

And I actively wanted to talk to ones that didn’t like AI because I wanted to know their feelings. It’s a minority of people that are really hotly opposed, but they’re very, very strongly opposed. They don’t like the fact that these are trained on stolen materials. They don’t like the fact that it uses tons of energy. They don’t like the fact that they think it’s going to de-skill [people].

Why do you think they’re not the majority, when this is so clearly going to replace so many of them and bypass all of their ethical, moral concerns and objections?

I think it’s because for a lot of developers it’s just such a delightful experience in the short term of going from everything being a slow slog to it being like, “Oh my God, all these ideas and things I wanted to do, I can now try them and do them.”

Because it’s fun, basically.

It’s enormously fun. The pleasure of coding used to be that there were a lot of these little wins when you got something working. Those little wins have gone away because you’re not doing that bug fixing, you’re not doing that line writing.

So the big wins are just coming in avalanches and it’s very intoxicating. Also, there are ones who essentially don’t think that those bad labor things are going to obtain. They think there’s a potential that more [jobs] will get created in areas that they have previously been unable to be created.

Give it five years for us. Does this herald the end of computer programming as we know it?

No, I would not go so far as to say that it ends in five years. I do think it becomes something very different potentially. I still think — everyone told me, and I believe — that you still need some understanding of the way a code base works to do the complicated things.

Weirdly, what you might see is something a little different, which is the explosion of code in areas where there is currently none. There’s a bazillion people out there that are code-adjacent. You work in accounting, you are a wizard at Excel, and you can import data if you’re given the ability now to have an agent say, “Okay, could you bring more data in?”

There is going to be this really weird world where there’s a lot of customized software for an audience of two, three people. We have thought of software historically as something that only exists if 10,000 people or a million people want it because it costs a lot of money to make it.

But if you can now start making it for next to nothing, you can start using it the way that we use Post-it notes. Put it all over the place. I need to jot this idea down. I’m going to make this happen. And maybe this software solves one problem for this afternoon and we never use it again. Software starts becoming almost disposable.

Anthropic says it hit a $30 billion revenue run rate after ‘crazy’ 80x growth

Dario Amodei is not the kind of CEO who talks loosely about numbers. The Anthropic co-founder and chief executive, a former VP of research at OpenAI with a PhD in computational neuroscience from Princeton, has built a reputation for measured public statements — particularly around the financial performance of a company that, until recently, disclosed almost nothing about its business.

So when Amodei took the stage at Anthropic’s Code with Claude developer conference on Wednesday and offered a genuinely striking piece of financial candor, the room paid attention.

“We tried to plan very well for a world of 10x growth per year,” Amodei said during a fireside chat with Anthropic’s chief product officer, Ami Vora. “And yet we saw 80x. And so that is the reason we have had difficulties with compute.”

Anthropic had planned for tenfold growth. But revenue and usage increased 80-fold in the first quarter on an annualized basis, a rate Amodei described as “just crazy” and “too hard to handle.”

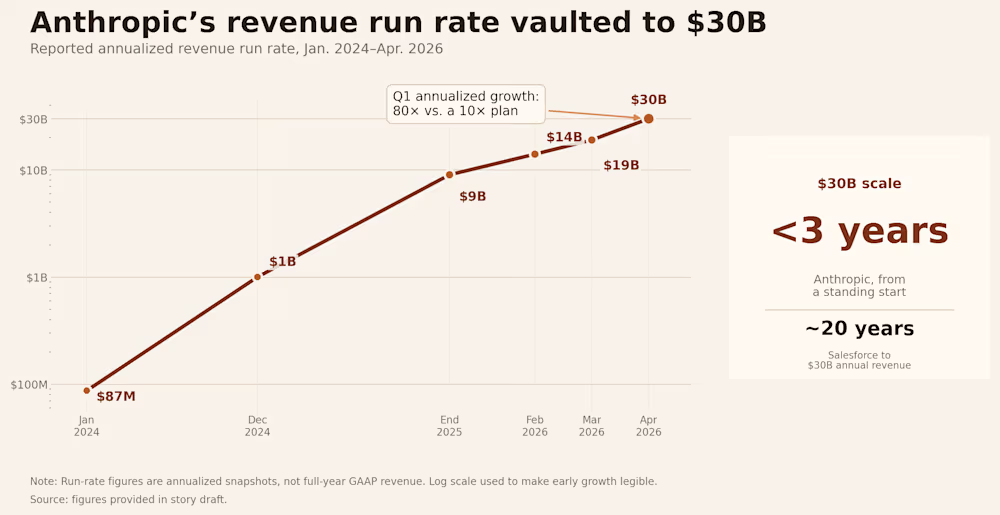

The number demands context. Annualized growth rates can overstate sustained performance — a single strong quarter, extrapolated across a full year, can paint a picture that doesn’t hold. Amodei knows this. But the underlying trajectory is not a mirage. Anthropic has crossed a $30 billion annualized revenue run rate, up sharply from roughly $9 billion at the end of 2025, and that growth is being driven largely by enterprise demand. The company’s revenue trajectory has been relentless: $87 million run rate in January 2024, $1 billion by December 2024, $9 billion by end of 2025, $14 billion in February 2026, $19 billion in March, and $30 billion in April.
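The arithmetic behind an annualized figure is easy to check. The sketch below assumes "annualized" means compounding one quarter's growth factor over four quarters; the article does not say how Anthropic computed its number, so the function names and the compounding method are illustrative assumptions, not the company's methodology.

```python
# Assumption: annualizing means compounding a per-quarter growth
# factor over four quarters. The article does not specify the method.

def annualize(quarterly_factor: float, periods_per_year: int = 4) -> float:
    """Extrapolate one period's growth factor across a full year."""
    return quarterly_factor ** periods_per_year


def implied_quarterly_factor(annualized_factor: float, periods_per_year: int = 4) -> float:
    """Invert the extrapolation: the per-quarter factor behind an annual one."""
    return annualized_factor ** (1 / periods_per_year)


# An 80x annualized rate corresponds to roughly a tripling in one quarter.
print(round(implied_quarterly_factor(80), 2))  # 2.99

# Conversely, a clean 3x quarter extrapolates to 81x for the year.
print(annualize(3.0))  # 81.0

# Run-rate figures quoted in the article: about $9B at the end of 2025
# and $30B in April 2026. That span is roughly four months, so the ratio
# is only loosely comparable to a single-quarter factor.
print(round(30 / 9, 2))  # 3.33
```

Under that assumption, the headline 80x describes a single quarter in which revenue roughly tripled, which is why one strong quarter can produce such a large extrapolated number.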

For context: Salesforce took about 20 years to reach $30 billion in annual revenue. Anthropic did it in under three years from a standing start.

Claude Code became the fastest-growing product in enterprise software history

The growth story at Anthropic is, to a remarkable degree, a single-product story. Claude Code, the company’s agentic AI coding tool launched publicly in mid-2025, has become the fastest-growing product in the company’s history — and, by several measures, one of the fastest-growing software products ever built.

Claude Code hit $1 billion in annualized revenue within six months of launch, and the growth hasn’t slowed down. By February 2026, the product was generating over $2.5 billion in run-rate revenue. The company also said Claude Code’s weekly active users had doubled since January 1 and that business subscriptions had quadrupled since the start of 2026.

The mechanics of the product are straightforward. Claude Code is not a chatbot that suggests snippets. It reads a codebase, plans a sequence of actions, executes them using real development tools, evaluates the result, and adjusts its approach. The developer sets the objective and retains control over what gets committed, but the execution loop runs independently. The average developer using Claude Code now spends 20 hours per week working with the tool.
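The loop described above (plan, execute, evaluate, adjust) can be illustrated with a toy sketch. Nothing here reflects Claude Code's actual implementation or API: `run_agent_loop`, `LoopResult`, and the three stand-in functions are hypothetical names invented for illustration, and the "work" is simulated with simple values.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class LoopResult:
    succeeded: bool
    attempts: int
    log: list[str] = field(default_factory=list)


def run_agent_loop(
    objective: str,
    plan: Callable[[str, str], list[str]],
    execute: Callable[[list[str]], int],
    evaluate: Callable[[int], tuple[bool, str]],
    max_attempts: int = 3,
) -> LoopResult:
    """Hypothetical plan/execute/evaluate/adjust loop.

    Feedback from a failed evaluation is passed into the next plan.
    Committing the result is deliberately left to the caller, mirroring
    the article's point that the developer keeps control of commits.
    """
    log: list[str] = []
    feedback = ""
    for attempt in range(1, max_attempts + 1):
        steps = plan(objective, feedback)   # plan a sequence of actions
        output = execute(steps)             # run them
        ok, feedback = evaluate(output)     # check the result
        log.append(f"attempt {attempt}: {'ok' if ok else feedback}")
        if ok:
            return LoopResult(True, attempt, log)
    return LoopResult(False, max_attempts, log)


# Toy stand-ins: the first plan is too thin, so evaluation fails once
# and the second plan incorporates the feedback.
def toy_plan(objective: str, feedback: str) -> list[str]:
    return [objective] if not feedback else [objective, f"address: {feedback}"]


def toy_execute(steps: list[str]) -> int:
    return len(steps)  # pretend more steps means more complete work


def toy_evaluate(output: int) -> tuple[bool, str]:
    return (True, "") if output >= 2 else (False, "tests failed")


result = run_agent_loop("add a login page", toy_plan, toy_execute, toy_evaluate)
print(result.succeeded, result.attempts)  # True 2
```

The point of the sketch is the shape of the control flow: the human supplies only the objective, and each failed check feeds into the next plan rather than going back to the developer.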

At Anthropic itself, the majority of code is now written by Claude Code. Engineers focus on architecture, product thinking, and continuous orchestration: managing multiple agents in parallel, giving direction, and making the decisions that shape what gets built.

That last point may be the most revealing detail Amodei disclosed at the conference: this is the first year Anthropic’s own internal pull requests have inflected upward due to Claude’s work on the company’s own codebase. The tool that Anthropic sells to developers is now a material contributor to Anthropic’s own engineering output. That creates a feedback loop that is almost impossible for competitors without a comparable product to replicate — the company is using its own product to build the next version of its own product.

The enterprise numbers tell the same story. The company now counts over 1,000 enterprise customers spending more than $1 million per year on Claude services, a figure that has doubled since February. Much of this increase has been fueled by a wave of corporate customers including Uber and Netflix.

Amodei framed the adoption curve in economic terms. “Software engineers are the ones who are fastest to adopt new technology,” he said on stage. “It’s a foreshadowing of how things are going to work across the economy, and how the economy is going to be transformed by AI.”

Anthropic’s 80x growth created a compute crisis it couldn’t solve alone

Hypergrowth creates its own category of problem. When demand outstrips supply by an order of magnitude, the constraint is not go-to-market strategy or product-market fit. The constraint is physics.

The company is growing so fast that its infrastructure has struggled to keep up, forcing Anthropic into what may be the most unexpected partnership in the current AI cycle. Amodei’s comments came hours after Anthropic announced a deal with Elon Musk’s SpaceX to use all of the compute capacity at his company’s Colossus 1 data center in Memphis, Tennessee. As part of the agreement, Anthropic will get access to more than 300 megawatts of capacity — over 220,000 Nvidia GPUs, including dense deployments of H100, H200, and next-generation GB200 accelerators.

The deal is remarkable for several reasons. Musk has been, until very recently, one of Anthropic’s most vocal critics. He has said Anthropic is “doomed to become the opposite of its name” and wrote in February that “Anthropic hates Western Civilization.” But on Wednesday, Musk changed his tune, saying he spent a lot of time with senior members of the Anthropic team over the past week and that he was “impressed.” “Everyone I met was highly competent and cared a great deal about doing the right thing. No one set off my evil detector,” Musk wrote.

The strategic logic on both sides is clear. xAI’s Colossus 1 ended up with capacity that Grok’s user base never grew into, while Anthropic needs compute immediately. Anthropic has been signing deals with Amazon, Google, Nvidia, and Microsoft for more compute capacity, but most of that isn’t expected to come online until late 2026 or early 2027. The SpaceX deal gives Anthropic a significant boost now — the key word being “now.”

As one industry watcher summarized the alignment: “Elon’s enemy is Sam. Dario’s enemy is Sam. Enemy of my enemy is a compute partner.”

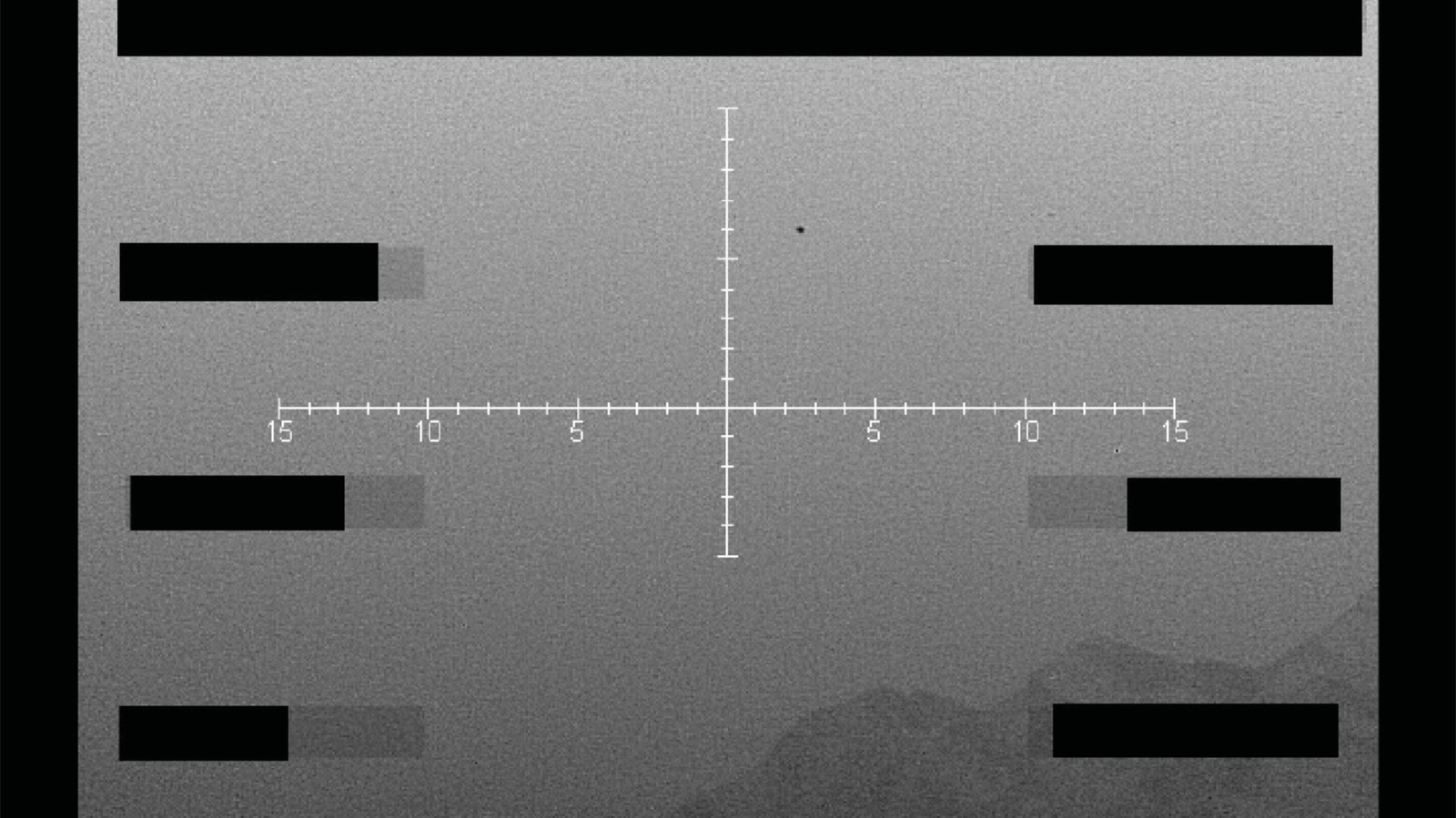

Last month, Anthropic said demand for Claude has led to “inevitable strain on our infrastructure,” which has impacted “reliability and performance” for its users, particularly during peak hours. The company admitted in a postmortem from late April that three bugs had affected Claude Code since March 4, and that internal tests hadn’t caught them, leading to several weeks of degraded performance. Amodei said at the Code with Claude conference that the company is “working as quickly as possible to provide more” capacity and will “pass that compute on to you as soon as we can.”

A near-trillion-dollar valuation makes Anthropic’s IPO the most anticipated debut in years

The growth figures arrive at a moment when Anthropic’s valuation is itself becoming one of the defining financial stories of the AI era.

Anthropic has begun weighing a fresh funding round that would value the company at more than $900 billion, according to people familiar with the matter, potentially leapfrogging its longtime rival OpenAI as the world’s most valuable AI startup. The velocity of the escalation is difficult to overstate. From $61.5 billion in March 2025, to $183 billion by its Series F in September, to $380 billion in February, to, if the current discussions proceed, more than $900 billion in May. Anthropic’s shares were already trading at an implied $1 trillion valuation on secondary markets earlier this month.

Instead of cashing out, many existing investors are waiting to potentially exit during Anthropic’s anticipated IPO later this year. The company is raising what is likely to be its last private round before going public to fund its massive computing needs. Bloomberg has reported that the company is weighing an IPO as early as October 2026, with Goldman Sachs, JPMorgan, and Morgan Stanley already in early discussions.

Anthropic is also building out infrastructure on longer time horizons. Amazon has agreed to invest up to $25 billion in Anthropic, securing up to 5 gigawatts of compute capacity for training and deploying Claude models. Anthropic also secured 5 gigawatts of computing capacity as part of a separate deal with Google and Broadcom that will start to come online next year. The total commitment is staggering — tens of gigawatts of compute across three separate hardware ecosystems: Amazon’s Trainium chips, Google’s TPUs via Broadcom, and Nvidia GPUs through SpaceX and Microsoft Azure.

For perspective: Anthropic’s $30 billion run rate exceeds the trailing twelve-month revenues of all but approximately 130 S&P 500 companies. A company that was essentially pre-revenue in early 2024 now out-earns most of the Fortune 500.

That comparison comes with caveats. Private-market revenue run rate is not the same thing as audited GAAP revenue, gross margin, free cash flow, or public float. OpenAI has internally argued that Anthropic’s $30 billion figure is overstated by roughly $8 billion, pointing to questions about whether revenues from AWS and Google Cloud should be reported at gross value or net of the partner’s cut. The accounting question will ultimately be resolved when both companies file IPO prospectuses — but even on a net basis, Anthropic’s growth rate is unlike anything in enterprise software history.

Dario Amodei’s vision for AI extends far beyond coding — and he’s given himself a deadline

The financial story — 80x growth, a near-trillion-dollar valuation, a scramble to secure enough GPUs to meet demand — is dramatic on its own terms. But Amodei used his time on stage to place it inside a larger thesis about where AI is headed.

He described a progression from single agents to multiple agents to what he called whole organizational intelligence — from “a team of smart people in a room” to “a country of geniuses in the data center.” The framing is deliberately expansive. What Anthropic is selling today is a coding tool. What Amodei is describing is a future in which entire categories of knowledge work are performed by fleets of AI agents operating in parallel, supervised by humans who define objectives and review outputs.

He reiterated a prediction he made roughly a year ago: that 2026 would see the first billion-dollar company run entirely by a single person. “Hasn’t quite happened yet,” he said. “But we’ve got seven more months.”

The company has also been navigating political headwinds. The Pentagon declared Anthropic a supply chain risk in March, blacklisting it from work with the military. The company has warned the designation could result in billions in lost revenue, with over one hundred enterprise customers reportedly expressing doubts about continuing their relationships.

And yet — as that scuffle makes its way through the legal system, Anthropic is only getting more popular. Amodei said this week he’s eventually hoping for “more normal” expansion.

There is a temptation, when covering a company growing at this rate, to let the numbers speak for themselves. They shouldn’t. Growth at 80x annualized is not a business plan — it’s an emergency. It means demand has outrun infrastructure, that customers want something the company cannot yet reliably deliver at scale, and that every week of constrained capacity is a week during which competitors can close the gap.

The investors funding Anthropic — including SoftBank, Amazon, Nvidia, Google, a16z, Lightspeed, and ICONIQ — are making a specific bet: that compute costs continue to fall per unit of intelligence, that revenue keeps compounding faster than burn, and that whoever owns the AI infrastructure layer in 2029 will generate returns that make the interim losses irrelevant.

Amodei’s candor at Code with Claude was not a victory lap. It was a diagnostic — an admission that his company is running faster than it can steer. He planned for a world of 10x growth and got 80x instead. Now he has seven months to prove that the infrastructure, the organization, and the vision can catch up to the demand. The country of geniuses in the data center is getting crowded. The question is whether anyone remembered to build enough rooms.

Microsoft is adding a feature that briefly maxes out your CPU to make Windows feel snappier

According to insider sources, Microsoft engineers are working on a new feature called “Low Latency Profile” (LLP) aimed at improving Windows 11’s performance in certain critical, system-wide tasks. The change is already present in recent preview builds distributed to Windows Insider participants, meaning enthusiast users can enable and test it…

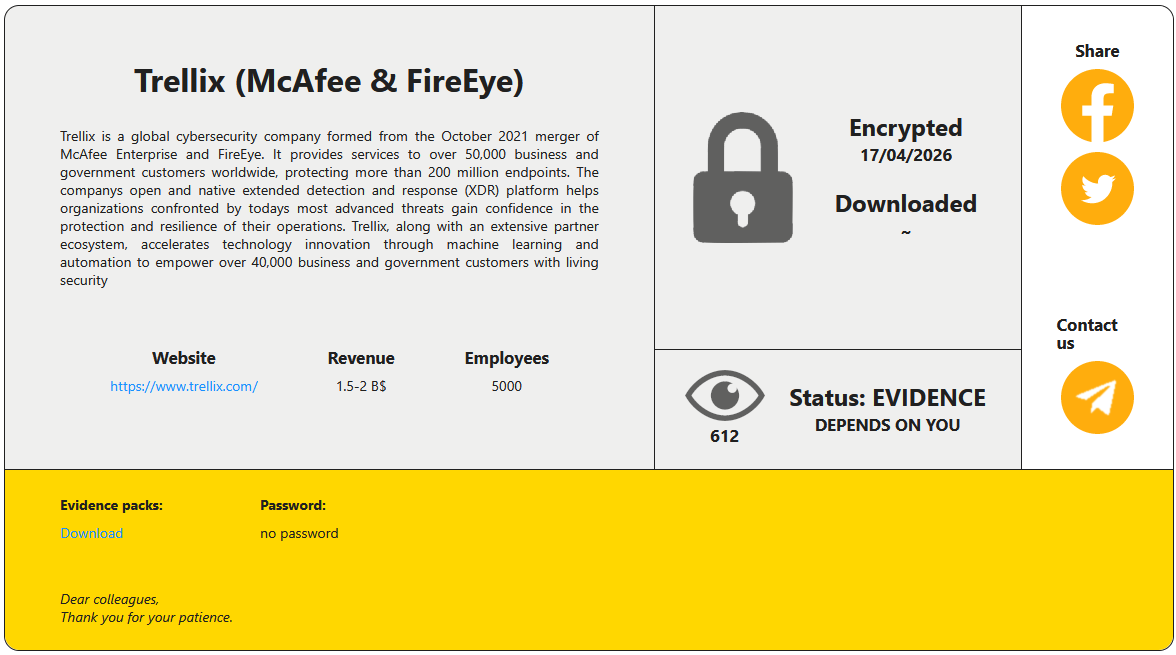

Trellix source code breach claimed by RansomHouse hackers

The attack on the Trellix source code repository disclosed last week has been claimed by the RansomHouse threat group, which leaked a small set of images as proof of the intrusion.

Yesterday, the threat actor published on their data leak site screenshots indicating access to the cybersecurity company’s appliance management system. However, BleepingComputer could not confirm the authenticity of the data.

Trellix is an international cybersecurity firm with global Fortune 100 customers. In 2025, the company had more than 53,000 customers in 185 countries and 3,500 employees.

The company confirmed the breach in a statement on May 1st and said that it was investigating the incident. “Trellix recently identified unauthorized access to a portion of our source code repository. Upon learning of this matter, we immediately began working with leading forensic experts to resolve it,” stated Trellix.

“We have also notified law enforcement. Based on our investigation to date, we have found no evidence that our source code release or distribution process was affected, or that our source code has been exploited.”

At the time, BleepingComputer’s request for details went unanswered, and the company did not disclose any information about the perpetrators.

Following a new request for comments after RansomHouse’s disclosure, Trellix told BleepingComputer that it was “aware of claims of responsibility for the attack and are looking into it.”

According to the threat actor, the intrusion occurred on April 17 and resulted in data encryption.

Source: BleepingComputer

RansomHouse is a cybercrime group that launched in 2022 as a data-extortion operation, listing victims on a darkweb portal and leaking or selling data stolen from their corporate networks.

Over time, the threat actor added more advanced encryption utilities to their toolkit, such as ‘Mario,’ which performs a dual-encryption pass with two keys on target files, and ‘MrAgent,’ which automates the deployment of encryptors on VMware ESXi hypervisors.

A recent high-profile case involving RansomHouse was that of Japanese e-commerce giant Askul Corporation, from which the threat group stole 740,000 customer records, among other sensitive information.

Trellix’s investigation is still underway, and the company previously promised to share more details once they become available.

Helion makes big bet on ‘Tiny Merge’ fusion testbed to meet aggressive Microsoft timeline

EVERETT, Wash. — With just three years left on a hard deadline to prove its fusion approach works, Helion Energy is still wrestling with fundamental questions — and it’s building a new, smaller machine to help find answers faster.

Since launching more than a decade ago, Helion has built increasingly larger prototype devices to test and refine its fusion technology as it races to deliver a source of nearly limitless clean energy. But by 2028, Helion is contractually obligated to have a commercial facility producing energy from fusion reactions, essentially replicating the physics that power the sun.

So now it’s going small.

The company is building a downsized testbed device called “Tiny Merge,” a machine less than one-eighth the size of Polaris, its seventh-generation and final prototype. The decision reflects the reality that key issues remain that Helion’s larger, more expensive prototypes haven’t fully resolved. These concerns must be addressed before final designs for a power plant can be locked in.

“With this agile testbed, we will be able to test new ideas with much less energy and far fewer resource requirements, meaning we can iterate faster than we can on full-scale machines such as Polaris,” said Michael Hua, Helion’s senior director of radiation safety and nuclear science.

GeekWire got a sneak peek at Tiny Merge during a recent tour of the company’s sprawling R&D facility north of Seattle. Behind massive curtains in a cordoned-off section of the building sits the gleaming, tubular fusion device measuring roughly 8 feet long.

Running parallel to the machine are two rows of tall shelving — heavy-duty versions of what you’d find at a home improvement store — that will eventually hold hundreds of mini-fridge-sized capacitors to store power flowing into and out of the device. Helion plans to have Tiny Merge up and running by the end of the summer, leaving roughly two years to incorporate what it learns into final designs.

The stakes couldn’t be higher. Over in Eastern Washington, Helion has broken ground on Orion, a facility that it hopes will be the first to produce fusion energy at a commercial scale. It’s a feat no one has yet accomplished, though more than 45 companies are trying.

Helion has made the sector’s most aggressive timeline commitment through a deal with Microsoft to supply electricity from Orion for a data center development starting in 2028. Miss that deadline, and Helion faces financial penalties from Microsoft and partner Constellation.

The company is counting on Tiny Merge to help make that big bet pay off.

Fusion works by heating matter and compressing it into a plasma, a superheated state in which atoms are stripped of their electrons. In those extreme conditions, atomic nuclei collide, fuse and release energy. The process holds enormous promise for abundant clean power, but achieving it at scale remains a formidable scientific challenge.

The team’s first tests with Tiny Merge will focus on the formation and merging of plasma rings, said Manav Singh, Helion’s director of electrical engineering. The company has researched this with previous prototypes, Singh said, but new results have prompted further questions. “There’s a few much more deep investigations we want to do,” he added.

Helion and the broader fusion industry have made measurable progress in recent years, with devices hitting new records in temperature and pressure. Companies have poured significant funding into the pursuit, with Helion alone raising more than $1 billion from investors including OpenAI CEO Sam Altman.

But plenty of skeptics remain, arguing that grid-scale fusion energy is still many years away — if it ever arrives.

Google’s Gemini Intelligence leak has me excited, but please not that name

While Google is helping Apple upgrade its AI, the search giant may have taken a little too much liking to the Apple Intelligence name. A new leak shared by Mysticleaks on Telegram seems to show “Gemini Intelligence” inside Google’s software running on what looks like a Pixel smartphone.

For now, it is best to take the leak with a grain of salt until there is something more concrete. But if the video is accurate, Google could be preparing the feature for the Pixel 11 series, which is expected to launch around August 2026.

Is Google really calling it Gemini Intelligence?

The irony is almost too rich. Apple Intelligence is Apple’s big bet on making Siri smarter, more personal, and actually useful in the AI age. And yet Apple has signed a multi-year partnership with Google to power next-gen Siri with Gemini models. So Google may simultaneously be fueling Apple Intelligence and launching Gemini Intelligence. That is either very efficient or very silly branding.

Google has already started expanding Gemini’s Personal Intelligence features. These allow Gemini to connect with apps like Gmail, Google Photos, YouTube, and Search to answer questions with a user’s own context. Instead of asking a generic chatbot for help, users can ask for information tied to their emails, photos, saved details, and activity across Google services.

Why would Pixel 11 make sense?

Pixel phones have long been Google’s test bed for AI features, including call screening and AI-powered photo editing tools. If “Gemini Intelligence” is real, Pixel 11 would be the natural place to introduce it as a deeply integrated, phone-level AI layer. We just hope that the name gets a second pass. Assuming, of course, that there’s a name to pass on at all.

Western Digital Promo Code: 15% Off

Founded more than 50 years ago, data storage company Western Digital is one of the world’s largest manufacturers of computer hard disk drives, and it also produces solid-state drives (SSDs) and flash memory devices. Western Digital makes all the essentials for home office and business digital storage. Whether you want to back up via cloud storage, easily take your presentation on a USB flash drive to your next important meeting, or upgrade the storage for your home security surveillance system, Western Digital has what you need, and we have promo codes to help you save.

Recycle and Save 15% Off With Western Digital Promo

One of the biggest issues of our modern life is how to responsibly recycle e-waste. That’s why Western Digital makes it easier to recycle your old, broken, or defunct electronics. With Western Digital’s Easy Recycle program, you can safely dispose of NAS systems and internal or external HDDs and SSDs. Plus, they recycle devices from any manufacturer—not just Western Digital products. And when you go green and recycle through their program, you’ll get a 15% off Western Digital promo code that counts towards your next purchase of $50 or more when you shop online at Western Digital.

Get 10% Off With a Western Digital Coupon Code

Right now, you can save 10% on your first order when you sign up to receive emails from Western Digital. All you need to do is head to Western Digital’s promo page, where you’ll input your email to sign up for special offers, promotions, and that Western Digital promo code for 10% off. The code will be sent to your inbox where you can use it to save on tech essentials.

Does Western Digital Have Free Shipping?

Western Digital has even more ways to save, with free standard shipping on eligible orders of $50 or more for non-members. Western Digital members receive free standard shipping on all eligible orders in the lower 48.

Additional Western Digital Deals

Western Digital has education discounts, where students and teachers can get up to 15% off purchases after verifying their status with Youth Discount. Once their identity is verified, they’ll get a voucher code sent to their inbox to use at checkout. Western Digital also has a 15% discount for seniors 55 years or older. Seniors just need to verify their status with Senior Discount. Once age is verified, folks will get a Western Digital promo code sent to their email to save.

In a commitment to sustainability, Western Digital has a program with Easy Recycle, where you can safely dispose of NAS systems and internal or external HDDs and SSDs. (They’ll also recycle devices from any manufacturer, not just Western Digital). As a token of appreciation for participating in their initiative for a greener future, participants can get 15% off their next purchase of $50 or more.

Choosing the Right Western Digital Product

It’s hard to know which digital storage system is right for you. In fact, we made a handy guide on How to Back Up Your Digital Life, and have a whole roundup of some of our favorite WIRED-tested external hard drives. In a similar vein, Western Digital created a FAQ webpage on how to choose the right storage drive for your needs, such as budget and data. A Western Digital hard drive is a budget-friendly option that delivers the capacity needed to store years of photos, videos, backups, workloads, and archives, while a Western Digital SSD offers fast and reliable responsiveness for larger-scale operating systems and active projects.

Tech

FlexiSpot C7 Morpher office chair review

Why you can trust TechRadar

We spend hours testing every product or service we review, so you can be sure you’re buying the best. Find out more about how we test.

FlexiSpot continues to shine with great options across a wide range of pricing tiers, making it a bit difficult to pin down a higher-priced offering or a budget option.

The C7 Morpher leans towards the higher end of mid-tier – expect to pay around $800 / £800 when not on sale. It’s a nice enough ergonomic office chair, but it blends in a bit more than some of the best office chairs I’ve reviewed at this price, looking not too dissimilar from other ‘serious’ and ‘professional’ seats.

Depending on what you want and your own design and styling preferences, this may be preferred. I know I prefer simple black or dark grey chairs, unless it’s an accent or statement piece, but that usually comes with elegance. Some people prefer fun colors to liven up their workspace, while others prefer a specific color to match what they already have (or to avoid clashing).

The C7 Morpher can fit that niche of looking nice and simple without looking cheap, but it’s still not going to be an elegant statement piece.

Flexispot C7 Morpher: Price and availability

The FlexiSpot C7 Morpher is available for $800 from FlexiSpot.com and £800 from FlexiSpot.co.uk. However, at the time of review, it’s discounted in the US to $650, and FlexiSpot generally runs sales on all its office chairs – if you can wait a bit and watch the price, I’d suggest doing so.

Cost-wise, this is akin to the excellent Steelcase Series 2, sitting at the upper end of mid-tier (arguably, it’s broaching the premium price-point). What it lacks in design style, it makes up for in comfort features.

Flexispot C7 Morpher: Unboxing and First Impressions

The C7 Morpher arrived in a single, unassuming box that weighed just under 80 lbs. Once we started unboxing, we noticed that every piece was individually wrapped in foam to help the chair arrive in good condition. I appreciate that, as some chair companies skimp on packaging and their chairs can arrive damaged.

With a single person, setup took about 30 minutes from unboxing to fully assembled utilizing the included T-handle Allen wrench — though we didn’t utilize the included gloves this time.

Upon first inspection, my team and I agree that the materials for this chair are on the nicer quality side of the spectrum, especially for this price range. The wheel base and the arms are made from aluminum, while the chair frame is a durable plastic material. The chair seat and back are covered in a comfortable yet durable fabric mesh material that seems like it’ll be able to last quite a while without any signs of wear and tear.

As I’ve mentioned in the past for other chairs, I’m a big fan of mesh backs due to the increased airflow circulation and because I naturally run a bit warmer than the average individual, so I appreciated seeing that on this chair.

Flexispot C7 Morpher: Design & Build Quality

After years of leg rests being available on chairs and becoming more and more common, I have noticed that I rarely end up actually using them.

However, that could very well just be a personal thing, as I don’t usually use these chairs for anything but work. I’m usually trying to be really intentional with my posture when sitting, but if you’re the kind of person who would utilize it, this is another one of the chairs that has a built-in one that slides underneath the seat when not in use.

Beyond the leg rest and materials, the other adjustability points are pretty standard for FlexiSpot chairs, which are still usually on the more adjustable side when it comes to ergonomic offerings for these office thrones.

Flexispot C7 Morpher: In use

I’ve put this chair to the test with a handful of my team members, some friends, some family, and several others who have walked past and been interested in my ever-growing chair collection.

While I haven’t tested the capacity up to 380 lb, I have tested the height range with individuals ranging from 5’7″ to 6’2″, and there seems to be wiggle room on both ends for comfortable seating in this chair.

If you plan to use this on a low-pile carpet or a hard floor, the standard casters will be good enough. However, if you want a smoother ride, or if you are on a rougher surface or longer carpet, you will want to upgrade the casters. If you’re interested, FlexiSpot offers this at an additional cost, or you can pick some up on Amazon.

Flexispot C7 Morpher: Final verdict

If you’re looking for a simple chair that will still provide ergonomic comfort for all-day work, but you don’t want to spend an arm and a leg, then this is a chair that’s worth considering. But if you’re the kind of person who wants a more luxurious or elegant-looking chair that perhaps stands out a little bit more – especially at this price, then the C7 Morpher may not be the option for you.

For more office furniture, we’ve tested the best standing desks.

Tech

Department Of War Sets Up UFO Website, But There Isn’t Much To See

The Department of War has announced that it’s published “never-before-seen files” of unidentified anomalous phenomena (UAP) on a new government webpage, and plans to add material on a “rolling basis.” Some Pentagon UAP footage was declassified during President Donald Trump’s first term, but this new page appears to be the result of a February Truth Social post from Trump calling on the DOW and related agencies “to begin the process of identifying and releasing Government files related to alien and extraterrestrial life, unidentified aerial phenomena (UAP), and unidentified flying objects (UFOs).”

The webpage — war.gov/UFO — includes a carousel of images and files from the DOW, FBI, NASA and more, presented in a way that seems to intentionally lean into the conspiratorial nature of UFO fandom in general. You don’t have to spend long clicking through images and downloading PDFs to realize that there’s not much in the way of actual evidence of aliens, though. Whether or not the files are supposed to direct attention away from the other flailing projects of the second Trump administration — a disastrous war with Iran, for example — they’re much more interesting as an example of how a bureaucracy processes and catalogs unexplained phenomena than as a smoking gun that proves extraterrestrials have visited Earth.

Suspicion that the US government knows more about unidentified anomalous phenomena (the term that replaced unidentified aerial phenomena and UFOs) than it’s letting on has been around for decades, but confirmation of formal research into the subject wasn’t made official until the Advanced Aerospace Threat Identification Program (AATIP) was revealed in 2017. AATIP was formed in 2007 to study UAP and later disbanded in 2012, but its work has been carried on by other government groups and task forces, most recently the All-domain Anomaly Resolution Office, an organization currently working inside the DOW that contributed to this new release of files.

The videos of UAP shared during the first Trump administration were unexplained, but a government report ruled that they did not show alien spacecraft. It’s not clear the new files released by the DOW will change anything, but they do make for an interesting curio at the very least.

Tech

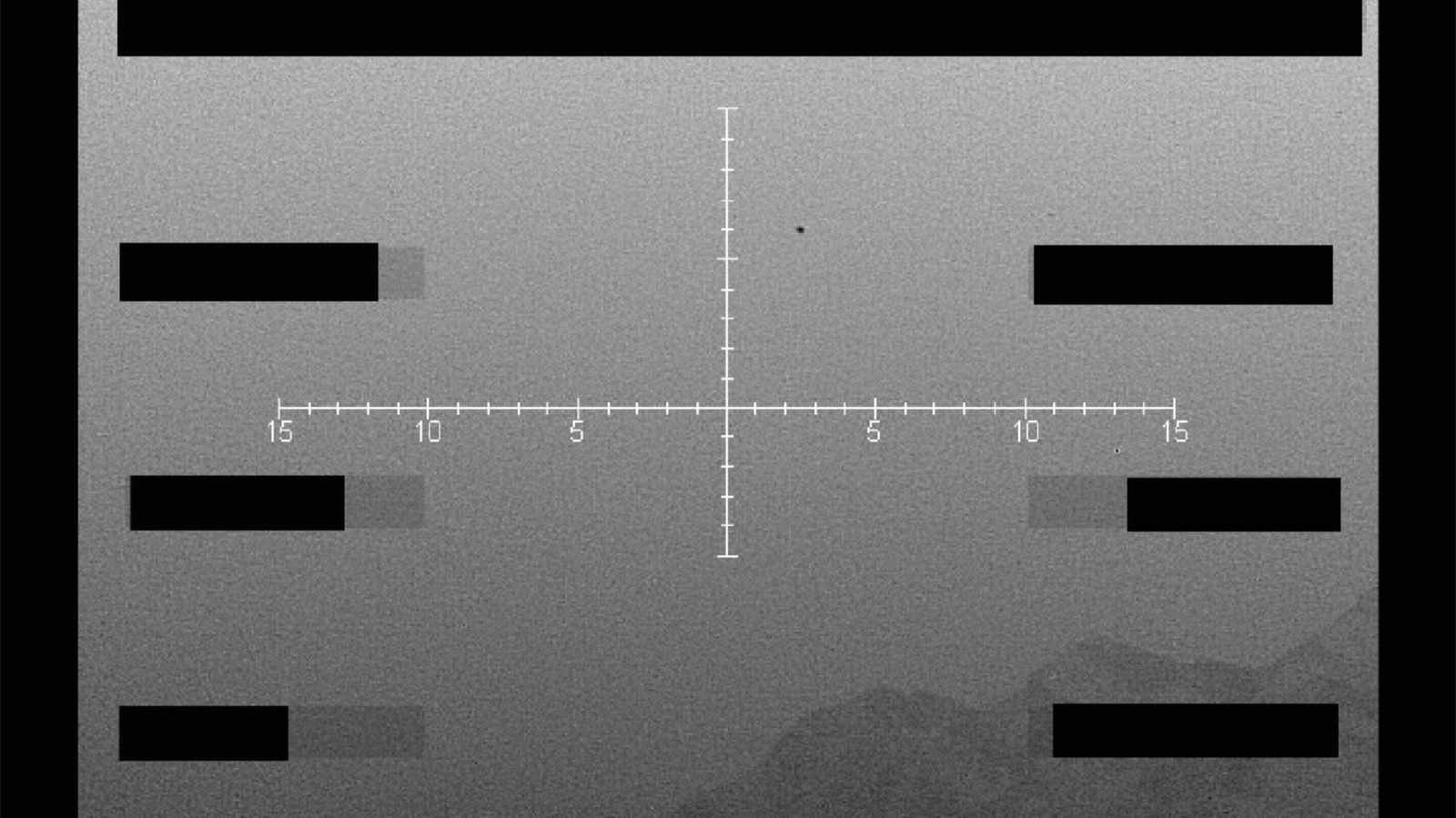

Apple has brought Trump-backed Intel on board for future chips

Intel could soon be making Apple’s chips. Image credit: Intel

Apple has reportedly reached a preliminary agreement that will see Intel become a chipmaking partner, helping reduce the company’s reliance on TSMC for Mac chips and more.

Reports had earlier suggested that the two companies were discussing a deal that would help Apple diversify its supply chain. Now, the pair are closer than ever to manufacturing some of Apple’s chips in the United States.

According to a Wall Street Journal report on Friday, the two companies have been in discussions about the project for more than a year. Significant progress has been made in recent months, however.

It’s currently unclear which Apple devices Intel will produce chips for, however. A report from late 2025 suggested that Apple intended for Intel to produce M-series chips destined for the Mac and iPad lineups.

Trump steps in

The WSJ report notes that Apple’s decision to use Intel comes after President Trump personally advocated for the move. Trump has pushed for more U.S.-based manufacturing of Apple components, and the company has pledged to spend $400 million to make that happen.

The U.S. government previously signed a deal to effectively buy a $9 billion, 10% stake in Intel. Since then, it’s been keen for companies to use Intel wherever possible.

Apple joins Nvidia in giving Intel new business. Nvidia invested $5 billion in Intel in September 2025, with the latter building custom data center CPUs.

Apple currently relies heavily on TSMC to produce the chips for its iPhones, iPads, Macs, Apple Watches, and more. However, limited manufacturing capacity and Apple’s reliance on a single chipmaker have caused issues of late.

Apple was caught flat-footed by the popularity of the MacBook Neo. A recent boom in Mac mini and Mac Studio popularity has also seen both products become increasingly difficult to buy.

As demand for high-performance chips continues to grow, deals like the one Apple appears to be signing with Intel become increasingly important. As the AI boom requires more silicon than ever, having two companies producing your chips is surely better than one.

Even before Trump’s push to bring manufacturing to the U.S., Apple was aware of its need to diversify its supply chain. As far back as the COVID pandemic, Apple found it relied too heavily on Chinese plants.

Since then, Apple has moved more and more manufacturing to other countries, reducing its reliance on China. Both India and Vietnam have been beneficiaries to date, with the U.S. following suit.

Tech

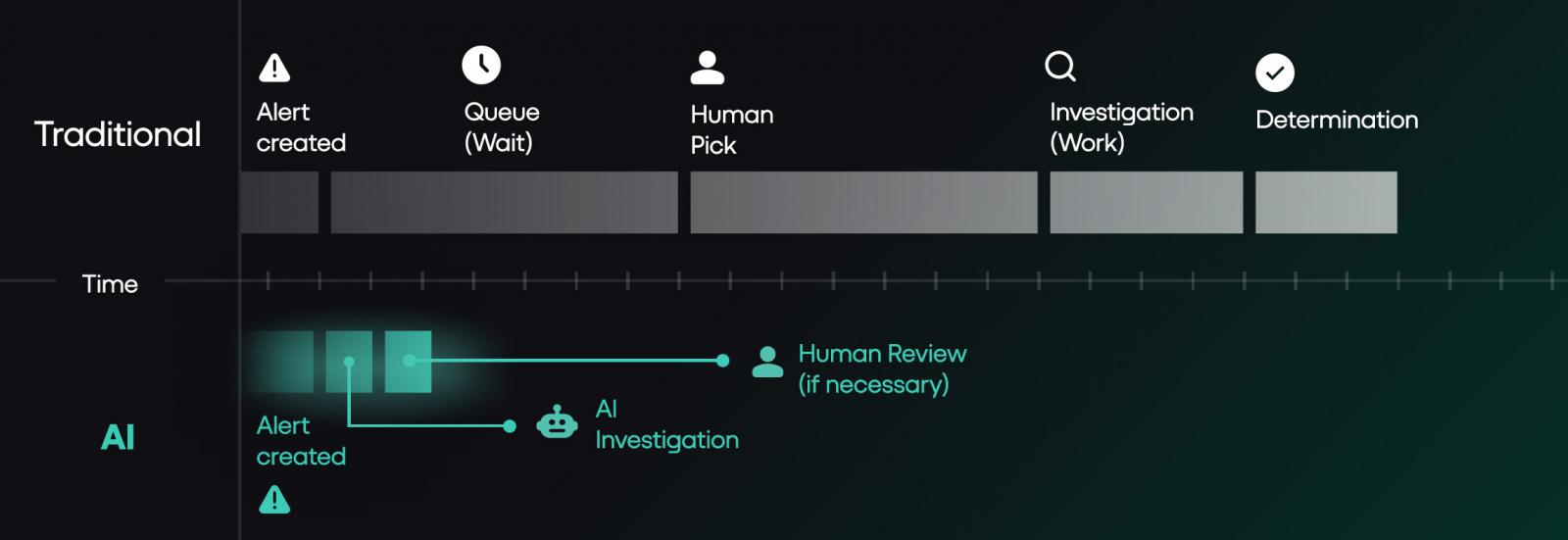

Why More Analysts Won’t Solve Your SOC’s Alert Problem

By Rich Perkins, Principal Sales Engineer, Prophet Security

Your security spend has roughly doubled in six years. Your time-to-investigate and respond hasn’t moved. Your CFO is asking why the security headcount keeps growing while the metrics that matter to the business don’t.

The architecture under your SOC is the reason. Not your team. Not your tooling investment. Not your hiring funnel. The operating model your program inherited assumed human-driven alert triage at the volume the business was producing five years ago, and the business stopped producing alerts at that volume a long time ago.

This is a piece about why hiring more analysts won’t close the gap, what changes when you fix the model instead, and the specific limitations and questions that should shape any AI SOC evaluation. It includes a four-question diagnostic you can run on your own program in the time it takes to finish a coffee.

The math the industry doesn’t want to admit

Google Mandiant’s recent M-Trends reporting puts global median dwell time at 14 days. The same report found that in 2025 the “hand-off” window between initial access and subsequent transfer to a secondary threat group collapsed to just 22 seconds, a drop of more than 99% from the 8 hours seen in 2022. CrowdStrike’s 2026 Global Threat Report uncovered similar trends, with the average breakout time, from initial access to exfiltration, falling to 29 minutes.

IBM’s most recent Cost of a Data Breach research puts the average time to identify and contain a breach in 2025 at 241 days, with an average cost of $4.88 million. That’s a drop of about 14% from 2020, when the time to identify and contain a breach stood at 281 days. Those numbers have not improved at the pace security spending would suggest, despite that spending having roughly doubled in five years, nor have they kept up with the shorter “breakout” or “hand-off” window.

This isn’t framed to scare defenders into chasing the next hype. It’s the operating reality. Money in, complexity in, but the curve from detection to investigation and containment barely moves.

SOC teams have already done the obvious efficiency moves. They tier severity. They auto-close known-benign alert classes. They suppress noisy detection rules. They tune. They route. That’s not the problem.

The problem is that even after all of that work, the volume that lands on humans for actual investigation still exceeds what humans can investigate at the depth required. We’ve written an entire ebook on how the SOC queue is the breach, which you can download here.

In the deployments I’ve worked across, the post-tiering volume that hits human triage typically lands in the 120 to 150 alerts per day range. At 20 minutes per investigation including documentation, that’s 40 to 50 analyst-hours daily. SOC teams of 5 to 10 analysts can cover the top of that range during business hours, leaving the rest of the queue for the next shift, the next day, or never.
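The arithmetic behind that estimate is easy to rerun against your own queue. What follows is a minimal sketch, assuming the flat 20 minutes per investigation quoted above. The function names and the figure of 6 productive triage hours per analyst per day are illustrative assumptions, not numbers from any deployment.

```python
import math


def daily_triage_hours(alerts_per_day: int, minutes_per_investigation: float = 20.0) -> float:
    """Analyst-hours needed to investigate every alert that reaches human triage in one day."""
    return alerts_per_day * minutes_per_investigation / 60.0


def analysts_needed(
    alerts_per_day: int,
    minutes_per_investigation: float = 20.0,
    productive_hours_per_analyst: float = 6.0,  # assumption: hours per shift actually spent on triage
) -> int:
    """Smallest whole number of analysts that can clear the daily queue."""
    hours = daily_triage_hours(alerts_per_day, minutes_per_investigation)
    return math.ceil(hours / productive_hours_per_analyst)


if __name__ == "__main__":
    for volume in (120, 150):
        print(volume, daily_triage_hours(volume), analysts_needed(volume))
```

Swapping in your own alert volume and time per investigation shows how quickly the required hours outgrow a fixed team.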

That’s the gap that doesn’t close with more headcount. You can’t hire enough analysts to investigate 100% of post-tiering volume at the depth the work requires. You can hire your way to better coverage at the margins. You cannot hire your way to the model change.

Most breaches don’t trigger a high severity alert. Instead the first signs appear in a low severity alert that gets buried in a queue no human can clear.

This ebook from Prophet Security breaks down why the alert backlog is the actual attack surface, and what changes when AI investigates every alert.

A diagnostic you can run on your own SOC

Before going further, run these four questions on your program. Honestly. The answers map your SOC capacity blind spots more reliably than any vendor pitch will.

1. What percentage of alerts above your defined investigation threshold did your team actually investigate last quarter? If less than 90%, you have a coverage gap that’s hiding real risk. The gap exists because of how the work flows, not because anyone is dropping the ball. More headcount won’t close it.

2. How many detection rules has your team suppressed in the last 12 months without an engineering ticket to replace the coverage? Suppressing noisy rules is healthy tuning. Suppressing them without follow-up engineering to replace what they were watching is debt. Each undocumented suppression is an attack surface you’ve stopped watching, and the threats those rules were designed to catch don’t go away because you disabled them.

3. What was your senior analyst turnover last year, and how long did each replacement take to reach productive contribution? If turnover exceeds 15% or ramp exceeds 6 months, your bench is fragile. You’re one resignation away from operational impact. Tribal knowledge walking out the door is a single point of failure most programs don’t have a remediation plan for.

4. If alert volume doubled tomorrow, what’s the first thing your team would stop doing? The honest answer is the part of your program that’s already underwater. Whatever you’d cut first is what’s currently holding on by a thread. That’s where to focus the operating model conversation.

If three or more of these answers concern you, the productive conversation moves past hiring and into a different question: whether the architecture under your team can carry the program you actually want to run.
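For teams that want to track the diagnostic over time, the four questions can be reduced to a small scoring routine. This is only a sketch. The thresholds (90% coverage, 15% turnover, 6-month ramp, three or more concerns) come from the questions above, while the class, the field names, and the treatment of question 4 as a yes/no judgment call are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class DiagnosticAnswers:
    investigated_pct: float        # Q1: % of above-threshold alerts investigated last quarter
    untracked_suppressions: int    # Q2: rules suppressed without a replacement engineering ticket
    senior_turnover_pct: float     # Q3: senior analyst turnover last year
    ramp_months: float             # Q3: months for a replacement to become productive
    would_cut_core_work: bool      # Q4: qualitative; the reviewer's own judgment


def concerns(a: DiagnosticAnswers) -> list:
    """Return the list of questions whose answers cross the thresholds in the text."""
    found = []
    if a.investigated_pct < 90:
        found.append("coverage gap")
    if a.untracked_suppressions > 0:
        found.append("suppression debt")
    if a.senior_turnover_pct > 15 or a.ramp_months > 6:
        found.append("fragile bench")
    if a.would_cut_core_work:
        found.append("work already underwater")
    return found


def needs_operating_model_conversation(a: DiagnosticAnswers) -> bool:
    """Three or more concerning answers means the conversation moves past hiring."""
    return len(concerns(a)) >= 3


if __name__ == "__main__":
    example = DiagnosticAnswers(72.0, 4, 10.0, 4.0, True)  # hypothetical program
    print(concerns(example), needs_operating_model_conversation(example))
```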

What changes when you fix the model

The teams making real progress aren’t the ones hiring more analysts. They’re the ones changing what work humans are required to do at all.

JB Poindexter & Co, an 8,500-employee diversified manufacturer, deployed Prophet AI in 2025. In the first 60 days, they ran 4,407 investigations through the platform with a mean time to investigate under 4 minutes.

That’s 73 investigations per day at depth, against a Mandiant industry median dwell time measured in days. The deployment returned roughly 1,469 hours of analyst time to their team, equivalent to 6.3 analyst-years of investigation capacity at full annualization.
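Two of those figures can be reproduced from the numbers given. The sketch below assumes the hours returned are measured against the 20-minute manual investigation used earlier in this piece, which is an inference rather than something the case study states. The analyst-year figure is left out because it depends on an hours-per-analyst-year value that isn’t given.

```python
def investigations_per_day(total_investigations: int, days: int) -> float:
    """Average number of investigations completed per calendar day."""
    return total_investigations / days


def analyst_hours_returned(total_investigations: int, manual_minutes_each: float = 20.0) -> float:
    """Hours a human team would have spent at the assumed manual pace."""
    return total_investigations * manual_minutes_each / 60.0


if __name__ == "__main__":
    print(round(investigations_per_day(4407, 60)))  # prints 73
    print(round(analyst_hours_returned(4407)))      # prints 1469
```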

Their CISO, John Barrow, framed the outcome as “faster, more focused, and able to scale without adding immediate headcount.”

The operating model shift in that sentence is what matters. Not “we hired more people.” Not “we worked our existing people harder.” The work no longer required the same number of people.

Cabinetworks ran 3,200 alerts through Prophet AI in 33 days. Six escalated to a human. The unexpected outcome was a 90% reduction in SIEM costs, primarily from no longer needing to ingest and store raw EDR and identity telemetry that had been pulled into the SIEM purely for analyst pivot queries.

When the AI handles those pivots directly against source systems, that ingest tier becomes optional. The line item that gets cut isn’t the obvious one, and most teams don’t model that secondary saving when they evaluate AI SOC tools. They should. For programs running enterprise SIEM contracts in the seven-figure range, the secondary savings often exceed the cost of the AI platform itself.

A second outcome worth noting: when the queue clears, teams stop having to ignore low and medium severity alerts. Most SOCs quietly stop investigating those classes under capacity pressure, even when their security leadership knows the coverage gap matters. A medium-severity alert isn’t risky because it’s medium.

It’s risky because that’s where real attackers hide while your team is buried in critical-severity noise. Bringing the medium and low tiers back into investigation scope is the coverage shift most teams want and very few can resource.

Every deployment requires two to four weeks of focused tuning before reaching steady state.

How CISOs are funding this

The piece a CISO is mentally writing while reading vendor content is the budget request. Where does this money come from?

Three patterns I’ve seen work, in order of CISO political difficulty.

Path one: Unfilled headcount budget. The cleanest funding path. The team has approved or pending headcount the program hasn’t filled, and the AI platform replaces the need to hire that role. Fully loaded cost for a Tier 2 analyst typically runs $180K to $300K depending on market and seniority, which sets the floor for what the AI platform needs to displace to make the math work.

The JB Poindexter pattern fits here. The “scaling without adding immediate headcount” framing is procurement language for “this is what we’re doing instead of approving the next hire.”

Path two: SIEM cost reduction. If your team is using the SIEM as an investigation pivot workspace (raw EDR telemetry, identity logs, network data), and the AI platform takes over those pivots, the SIEM ingest and storage tier becomes optional.

The Cabinetworks pattern. SIEM ingest savings depend heavily on volume but commonly run 30 to 60 percent of total SIEM spend when investigation telemetry is the main driver.

For programs running mid-six-figure or seven-figure SIEM contracts, this funding path can fully cover the AI platform with savings left over. Get your SIEM renewal cycle date before you start the evaluation, because the timing matters.

Path three: Tool displacement. The hardest political fight. Replacing an existing SOAR, an existing case management workflow, or an existing managed service. The savings vary too widely to generalize, but the displacement creates internal opposition from whoever owns the displaced tool. Plan for it as a 6-month change management project, not a procurement decision.

Most programs end up funding through a combination of paths one and two. Path three is a year-two conversation, not a year-one one.
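A rough way to pressure-test a budget request that combines paths one and two is to bracket the available funding. The sketch below uses the ranges quoted above: $180K to $300K per avoided Tier 2 hire, and 30 to 60 percent of SIEM spend, which applies only when investigation telemetry is the main driver. The function names and the platform cost in the example are hypothetical.

```python
def funding_range(annual_siem_spend: int, avoided_hires: int = 1) -> tuple:
    """Return (low, high) annual funding in dollars from paths one and two combined."""
    siem_low = annual_siem_spend * 30 // 100    # 30% of SIEM spend
    siem_high = annual_siem_spend * 60 // 100   # 60% of SIEM spend
    hire_low = avoided_hires * 180_000          # low end of fully loaded Tier 2 cost
    hire_high = avoided_hires * 300_000         # high end of fully loaded Tier 2 cost
    return siem_low + hire_low, siem_high + hire_high


def conservatively_funded(platform_cost: int, annual_siem_spend: int, avoided_hires: int = 1) -> bool:
    """True if even the low end of the range covers the (hypothetical) platform cost."""
    low, _high = funding_range(annual_siem_spend, avoided_hires)
    return low >= platform_cost


if __name__ == "__main__":
    print(funding_range(1_000_000, avoided_hires=1))   # prints (480000, 900000)
    print(conservatively_funded(400_000, 1_000_000))   # prints True
```

Testing against the low end of the range keeps the request defensible if the SIEM savings come in below expectations.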

Where humans still need to lead

I’m pro AI SOC. I work for one. So when I tell you where it isn’t the right tool, take it seriously. Three categories where I’d recommend keeping humans in the lead.

Insider threat investigations where the signal lives in human context, not logs. AI does fine on the DLP-shaped insider threat work where the signal is in telemetry: unusual file movement, exfil to personal cloud, after-hours pulls of sensitive repos. Where it struggles is the harder subset where the deciding signal isn’t in any log.

The PIP that started Monday. The conversation a manager had two weeks ago. The contractor whose contract ends Friday. AI doesn’t have that context. Your humans do.

The right design splits the work cleanly: AI handles the telemetry layer, your team handles the human-context layer. Asking one tool to do both is where these investigations break down.

Novel TTPs with no analog in training data. AI investigation is fundamentally pattern-matching over historical examples. By definition, that’s weakest on attacks that don’t look like anything you’ve seen. Your senior threat hunters earn their keep on the alerts that don’t match anything in the catalog. Don’t outsource that work.

Highly regulated environments where data residency rules dictate where alert telemetry can live. If your compliance posture won’t let metadata leave a specific cloud or country, most AI SOC platforms (Prophet AI included) require real architecture work to fit. Some can’t fit at all. Don’t let any vendor wave that concern away with a slide.

If you’re evaluating an AI SOC tool, ask the vendor exactly where their tool fails. If they don’t have an answer ready, that’s the answer.

Three questions buyers always ask

Three questions come up in almost every evaluation, and they deserve direct answers.

What happens when the AI gets it wrong? Prophet AI documents every step of every investigation. Every question asked, every query run, every piece of evidence pulled, the reasoning that led to the verdict. When a verdict is wrong, the chain of reasoning shows exactly where it went wrong, and your team can encode the correction back into Guidance so the same mistake doesn’t repeat.

That’s a different audit trail than the three-sentence case notes most analysts write under queue pressure today, and it matters more than vendor content typically acknowledges.

Regulators are starting to ask about AI-driven security decisions. Boards are asking about defensible documentation of what the SOC investigated and why. Post-incident reviews are easier to run when the evidence chain is complete by default. The audit trail isn’t a feature. It’s how you keep your seat at the table when the auditor or the board comes asking.

What happens to detection engineering? This is the question senior practitioners ask first, and it’s the right question. You might worry that if AI handles investigation, your team loses the natural feedback loop where analysts catch and tune noisy detections. The honest answer: that work moves explicitly upstream.

Instead of relying on manual triage to spot noise, detection engineering now uses the AI’s comprehensive investigation data as a massive feedback loop, shifting the focus from suppressing alerts to equipping the AI with better context.

To make that upstream work happen, detection engineering shifts from an emergent activity squeezed between alerts to a scheduled discipline owned by the senior analysts whose triage time the AI has freed up. Teams that fail to operationalize that shift see detection quality drift over time. Teams that operationalize it well see detection quality improve, because the engineering happens with intention and dedicated focus.

What does the buying committee look like? AI SOC platforms touch security operations, but the procurement conversation often pulls in IT (for integrations and identity), compliance (for data handling and audit posture), legal (for the data processing agreement and AI-specific contractual terms), and procurement (for vendor risk review).

Plan for that early. Programs that try to push AI SOC through as a security-team decision often hit a six-week delay when compliance discovers the data flow questions in week four. Programs that bring compliance and legal in at the start of the evaluation typically close in half the time.

The vendor-risk question worth asking

One question vendor content almost never addresses directly, and CISOs care about it more than vendors realize: what happens to your program if the AI SOC vendor gets acquired, pivots, or fails? Three-year procurement cycles outlast a lot of vendor strategies.

Three things worth confirming with any AI SOC vendor before signing.

First, data portability: can you export your investigation history, Guidance configurations, and detection logic in a format that survives a vendor change?

Second, runbook independence: are the human-readable Guidance rules you encoded specific to this vendor, or do they document SOC logic your team could rebuild elsewhere?

Third, contractual continuity: what happens to service obligations, data handling, and support during an acquisition or wind-down event?

The third tends to separate the serious vendors from the rest. Most can answer the first two. Few have a clean answer to the third without significant pre-work, which is itself a signal worth noting during evaluation.

Closing thought

Prophet Security’s agentic AI SOC platform operationalizes expert analyst techniques at machine speed across all alert volumes, regardless of severity, to ensure a consistently clear triage queue and preemptively neutralize threats.

If your honest answers to the four diagnostic questions earlier in this piece concerned you, the next conversation isn’t whether AI SOC is the answer. It’s what your senior analysts would actually do with their Tuesday mornings if the triage queue weren’t running them.

That’s the operating model question. Whether you solve it with Prophet Security or someone else, the architecture is what needs to change. Hiring more analysts to triage at machine-generated volume is a strategy that worked in 2018. The math hasn’t worked since 2022.

The teams that change the architecture will get a different conversation with their board next year. The teams that don’t will get the same one they had last year, with a slightly higher number on the spend line and the same number on the time-to-detect line.

Pick the conversation you want to be having.

If your SOC is dealing with alert overload or long investigation times, we’d be happy to show you what Prophet AI looks like in practice. Request a demo or reach out directly to learn more.

Rich Perkins is a Principal Sales Engineer at Prophet Security. Reach him at rich.perkins@prophetsecurity.ai or connect on LinkedIn.

Sponsored and written by Prophet Security.

-

Crypto World1 day ago

Crypto World1 day agoHarrisX Poll Found 52% of Registered Voters Support the CLARITY Act

-

NewsBeat6 days ago

NewsBeat6 days agoChannel 5 – All Creatures Great and Small series 7 new post

-

Crypto World2 days ago

Crypto World2 days agoUpbit adds B3 Korean won pair as Base token gains Korea access

-

Tech5 days ago

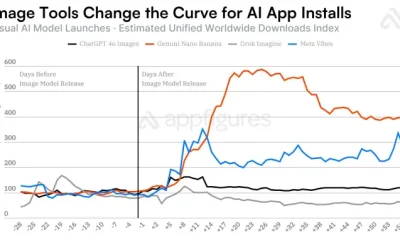

Tech5 days agoImage AI models now drive app growth, beating chatbot upgrades

-

NewsBeat2 days ago

NewsBeat2 days agoNCP car park operator enters administration putting 340 UK sites at risk of closure

-

News Videos6 days ago

News Videos6 days agoAP Dhillon – Old Money (Official Audio)

-

Fashion16 hours ago

Fashion16 hours agoWeekend Open Thread: Marianne Dress

-

Sports6 days ago

Sports6 days agoFive killed in Texas plane crash identified as Amarillo pickleball players

-

Entertainment6 days ago

New Netflix Movies in May 2026 — My Top 3 Picks to Stream

-

Crypto World7 days ago

Crypto World7 days agoPi Network Mandates Protocol 23 Upgrade for All Mainnet Nodes Before May 15 Deadline

-

Entertainment6 days ago

Entertainment6 days agoMelissa Joan Hart and More Stars Attend 2026 Kentucky Derby

-

Entertainment6 days ago

Anna Nicole Smith’s Daughter Attends 2026 Kentucky Derby

-

Crypto World6 days ago

Crypto World6 days agoBitcoin mining equities rise in 2026 as BTC lags behind

-

Business6 days ago

Business6 days agoLuka Doncic Injury Update: Doncic’s Hamstring Recovery Slows Lakers’ Hopes Against Thunder: Can He Run Yet?

-

Entertainment6 days ago

Entertainment6 days agoVenus Williams’ Best Met Gala Looks Over the Years

-

Entertainment6 days ago

“Storage Wars” star Darrell Sheets' ex-wife breaks silence on his death

-

Business7 days ago

Business7 days agoKuwait International Airport Resumes Operations After Closure as Regional Tensions Ease in 2026

-

Sports6 days ago

Sports6 days agoIs Man United v Liverpool on TV? Channel, streaming and how to watch Premier League fixture

-

Entertainment7 days ago

Entertainment7 days agoAfter 2 Years, This Taylor Sheridan Neo-Western Doesn’t Have a Single Bad Episode

-

Crypto World6 days ago

Crypto World6 days agoQ1 2026 Tech Layoffs AI Wave Hits 81,747 as Firms Shift to AI Infrastructure

You must be logged in to post a comment Login