Passwords were once the standard of online security. Then came two-factor authentication, followed by security questions, CAPTCHAs, and device fingerprinting. Each layer was introduced in response to a new threat, and each was eventually defeated by more sophisticated fraud.

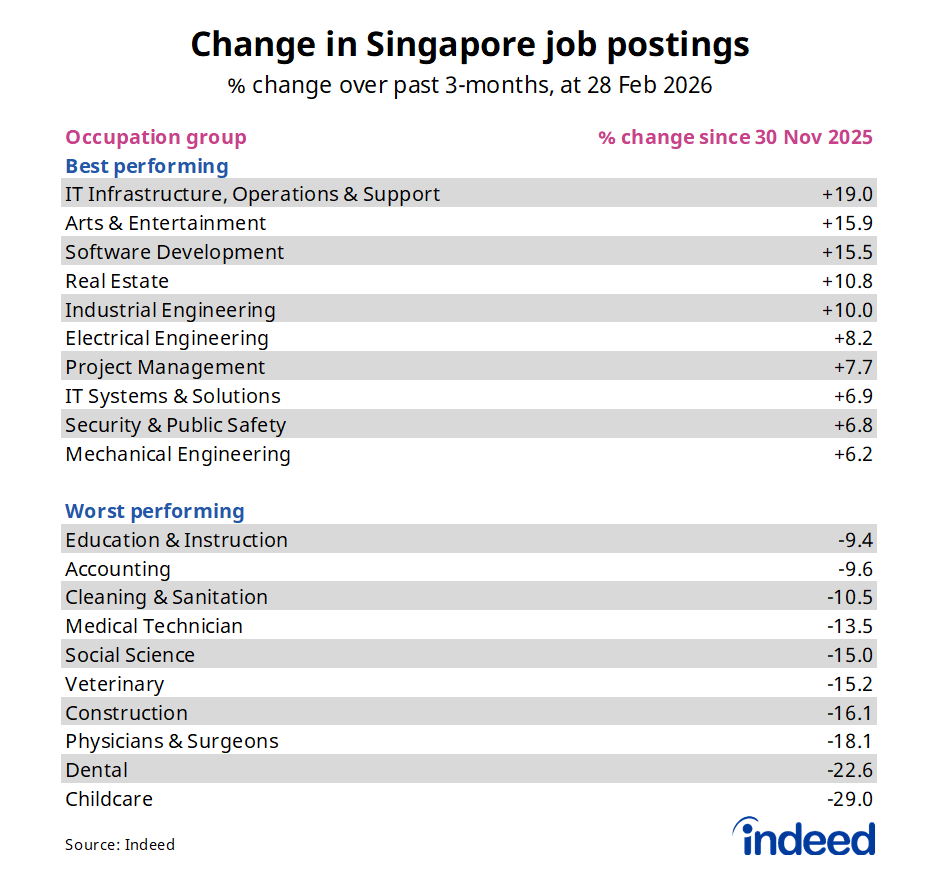

The pattern is clear: any security system that relies on what a person knows or possesses is vulnerable to theft, copying, or social engineering. The one verification factor that is genuinely hard to counterfeit is who someone is, and that is exactly where artificial intelligence has changed the game.

Identity verification using AI is no longer a niche technology reserved for banks and government agencies. In 2026 it is becoming the baseline security requirement for any business that onboards customers digitally, handles high-value transactions, or operates in a regulated sector. Understanding how it works, why it matters, and how to implement it is now a business competency rather than an IT concern.

The Problem Traditional Security Cannot Solve

Before looking at how AI-driven identity verification works, it is worth understanding what it is designed to address, because the threat landscape has changed drastically.

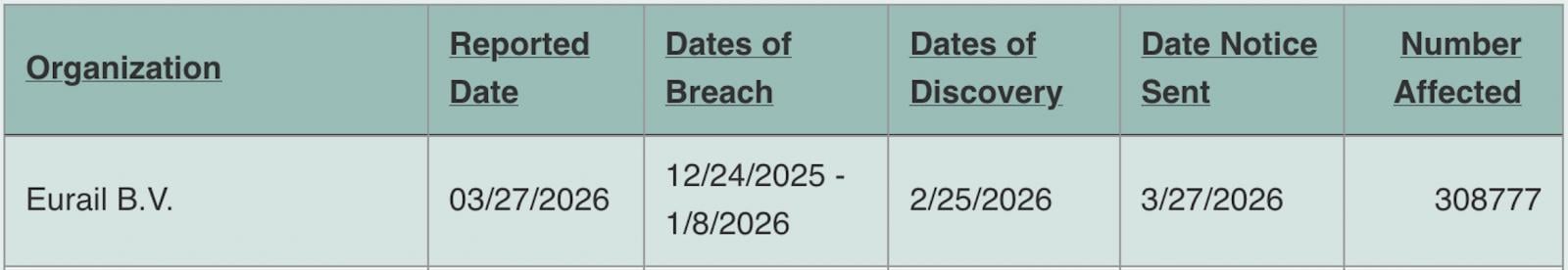

Mass data breaches have made credentials an unreliable security signal. IBM's Cost of a Data Breach Report indicated that in 2024 the average breach involved more than 25,000 records. Across the thousands of breaches worldwide over the past decade, billions of usernames and passwords, Social Security numbers, dates of birth, and security-question answers have ended up for sale on the dark web. With access to such data, a fraudster can easily pass traditional credential checks, because the credentials are authentic; they simply belong to someone else.

Synthetic identity fraud has created a new breed of criminal. Rather than stealing an existing identity, sophisticated fraudsters build new ones, combining a real Social Security number (usually that of a child or an elderly person with no credit history) with invented names, addresses, and biographical details. Because some of the information is genuine, these synthetic identities can pass basic verification checks, and they largely go unnoticed by traditional rules-based fraud detection systems.

AI-generated deepfakes have defeated selfie-based authentication. Rapid advances in generative AI have produced tools that can create photorealistic images, videos, and even real-time video feeds of nonexistent people within minutes. A system that verifies identity from a single selfie photo is no longer sufficient when a fraudster can upload a deepfake that is visually indistinguishable from a real photo.

Credential theft, synthetic identity fraud, and AI-generated deepfakes are the three converging threats that next-generation AI-powered identity verification is designed to deal with.

What AI-Powered Identity Verification Actually Does

AI-powered identity verification is not a single technology. It is a multi-layered system in which several AI models work together to establish, with high confidence, that a person is who they claim to be.

Document Authentication

The first layer is document checking. The user submits a government-issued identity document (a passport, driver's license, or national ID card), and an AI model compares it against thousands of known document templates from around the world.

The analysis goes far deeper than a simple real-or-fake judgment. Machine learning models trained on millions of genuine and forged documents examine microprint quality, UV patterns, holographic elements, font authenticity, the integrity of the MRZ (Machine Readable Zone) data, and pixel-level anomalies that signal editing or manipulation. Even subtle digital manipulations are detected within seconds.
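Most of these checks rely on trained models, but one part of MRZ integrity checking is fully deterministic: ICAO Doc 9303 protects key MRZ fields (document number, date of birth, expiry date) with check digits computed using repeating 7-3-1 weights. The minimal sketch below covers only that check-digit step, not the image-forensics models described above; the function names are illustrative, and the sample values are taken from the ICAO specimen passport.

```python
def mrz_char_value(ch: str) -> int:
    """Map an MRZ character to its numeric value per ICAO Doc 9303."""
    if ch in "0123456789":
        return int(ch)
    if "A" <= ch <= "Z":
        return ord(ch) - ord("A") + 10  # A=10 ... Z=35
    if ch == "<":
        return 0  # filler character counts as zero
    raise ValueError(f"invalid MRZ character: {ch!r}")


def mrz_check_digit(field: str) -> int:
    """Weighted sum using repeating weights 7, 3, 1, taken modulo 10."""
    weights = (7, 3, 1)
    total = sum(mrz_char_value(c) * weights[i % 3] for i, c in enumerate(field))
    return total % 10


def field_is_consistent(field: str, check_char: str) -> bool:
    """True if the printed check digit matches the one recomputed from the field."""
    return (
        len(check_char) == 1
        and check_char in "0123456789"
        and mrz_check_digit(field) == int(check_char)
    )


# Document number and birth date from the ICAO specimen passport.
print(mrz_check_digit("L898902C3"))        # 6
print(mrz_check_digit("740812"))           # 2
print(field_is_consistent("750812", "2"))  # False: an edited birth date fails
```

A check digit only catches inconsistent edits; a forger who recomputes it will pass this step, which is why it complements rather than replaces the learned visual checks.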

Modern document verification systems can verify more than 14,000 document types from more than 190 countries, a breadth that would be impractical to cover with manual review.

Biometric Face Matching

Once the document has been verified, the system compares the face on the document with a live selfie or video submitted by the person claiming to be the document holder. AI facial recognition models measure the geometric relationships between facial features, such as the distance between the eyes, the shape of the nose, and the angle of the jaw, and compute a confidence score for the match.
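The paragraph above describes the score in terms of facial geometry; many current systems instead reduce each face to a learned embedding vector and score the pair by vector similarity. The sketch below assumes both vectors were already produced by some face-recognition model (not shown) and uses cosine similarity with a hypothetical 0.80 threshold; it illustrates only the scoring and decision step.

```python
import math
from typing import Sequence, Tuple

# Hypothetical decision threshold; real systems calibrate this on labeled data.
MATCH_THRESHOLD = 0.80


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two feature vectors (1.0 = same direction)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("zero-length vector")
    return dot / (norm_a * norm_b)


def face_match(document_vec: Sequence[float], selfie_vec: Sequence[float],
               threshold: float = MATCH_THRESHOLD) -> Tuple[float, bool]:
    """Return (confidence score, match decision) for two precomputed face vectors."""
    score = cosine_similarity(document_vec, selfie_vec)
    return score, score >= threshold


# Toy 4-dimensional vectors; real face embeddings usually have hundreds of dimensions.
score, is_match = face_match([0.1, 0.8, 0.3, 0.5], [0.12, 0.79, 0.28, 0.52])
print(is_match)  # True
```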

This is faster, more precise, and far more dependable than visual inspection by a person. Research by the National Institute of Standards and Technology (NIST) has reported that the best facial recognition algorithms outperform human examiners in face-matching tasks, especially under variations in lighting, pose, and age.

Liveness Detection

This is the layer that specifically addresses deepfake fraud, and it is where AI has made its most important progress.

Liveness detection determines whether the face presented belongs to a real, physically present person or is a photograph, printed mask, video recording, or AI-generated deepfake. Passive liveness detection examines a single image for subtle signs of non-liveness: texture anomalies, unnatural light reflections, absence of micro-movements, or compression artifacts that suggest a screen capture. Active liveness detection asks the user to perform randomized actions, such as blinking, moving their head, or smiling, which a still image cannot reproduce and which are very difficult for a real-time deepfake to spoof.
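The strength of active liveness comes from unpredictability: because the sequence of requested actions is chosen at random when the session starts, a pre-recorded video cannot anticipate it. Below is a minimal sketch of the challenge-and-verify bookkeeping only, assuming a separate (not shown) video-analysis model reports which actions it detected; the action names and 30-second response window are hypothetical.

```python
import secrets
import time
from dataclasses import dataclass
from typing import List, Optional, Sequence

# Hypothetical action vocabulary; a separate video model (not shown) would
# detect which of these actions the user actually performed.
ACTIONS = ("blink", "turn_left", "turn_right", "smile", "nod")


@dataclass
class LivenessChallenge:
    actions: List[str]
    issued_at: float
    ttl_seconds: float = 30.0  # hypothetical response window


def issue_challenge(n: int = 3, now: Optional[float] = None) -> LivenessChallenge:
    """Pick n distinct actions in an unpredictable order using the OS CSPRNG."""
    rng = secrets.SystemRandom()
    actions = rng.sample(ACTIONS, k=n)
    issued = time.time() if now is None else now
    return LivenessChallenge(actions=actions, issued_at=issued)


def verify_response(challenge: LivenessChallenge,
                    detected_actions: Sequence[str],
                    now: Optional[float] = None) -> bool:
    """Pass only if the detected actions match the challenge, in order and in time."""
    current = time.time() if now is None else now
    if current - challenge.issued_at > challenge.ttl_seconds:
        return False  # stale response: possible replay
    return list(detected_actions) == challenge.actions
```

Checking order as well as content matters: a recording that happens to contain a blink, a smile, and a head turn still fails if they appear in the wrong sequence.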

Combining passive and active liveness detection raises the bar for deepfake attacks so high that the cost of mounting a successful attack usually exceeds the value that could be gained, which makes AI-generated identity fraud uneconomical for most criminal operations.

Data Cross-Referencing and AML Screening

Beyond the biometric layer, AI-powered identity verification systems cross-check the verified identity data against external databases in real time. These include global sanctions lists, Politically Exposed Persons (PEP) databases, adverse media sources, and watchlists maintained by bodies such as OFAC, the UN, and the EU.
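Screening against these lists cannot rely on exact string comparison, because the same name can appear with different word order, diacritics, or punctuation. The sketch below shows one simple fuzzy-matching approach using Python's standard `difflib`; the watchlist entries are fictional, the 0.85 threshold is hypothetical, and production screening would use the official list data plus richer matching (aliases, transliteration, date of birth).

```python
import difflib
import unicodedata
from typing import Iterable, List, Tuple


def normalize_name(name: str) -> str:
    """Strip diacritics and punctuation, lowercase, and sort tokens (handles word order)."""
    decomposed = unicodedata.normalize("NFKD", name)
    no_marks = "".join(c for c in decomposed if not unicodedata.combining(c))
    cleaned = "".join(c.lower() if c.isalnum() else " " for c in no_marks)
    return " ".join(sorted(cleaned.split()))


def screen_name(name: str, watchlist: Iterable[str],
                threshold: float = 0.85) -> List[Tuple[str, float]]:
    """Return watchlist entries whose similarity to `name` meets the threshold, best first."""
    target = normalize_name(name)
    hits = []
    for entry in watchlist:
        score = difflib.SequenceMatcher(None, target, normalize_name(entry)).ratio()
        if score >= threshold:
            hits.append((entry, round(score, 3)))
    return sorted(hits, key=lambda hit: hit[1], reverse=True)


# Fictional entries for illustration only.
WATCHLIST = ["Ivan Example-Person", "Jane Q. Sample"]
print(screen_name("Person Ivan Example", WATCHLIST))  # [('Ivan Example-Person', 1.0)]
```

A hit here would typically send the case to a compliance analyst rather than trigger an automatic rejection, since fuzzy matching produces false positives on common names.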

This AML screening layer turns identity verification into a compliance tool as well as a security tool, allowing businesses to meet their Know Your Customer (KYC) and Anti-Money Laundering (AML) obligations as part of the verification check rather than as a separate downstream process.

Why It Matters for Business Security, Not Just Compliance

The compliance case for AI-based identity verification is well established. In practically every jurisdiction, financial services companies, fintechs, and other regulated businesses are required to perform KYC and AML checks. Failing to do so carries substantial financial penalties and reputational risk.

However, the business security case is far bigger than regulatory compliance. For most businesses, the risks of non-compliance pale in comparison with the direct fraud losses that weak identity verification makes easy.

Account takeover fraud hits businesses directly. Once a fraudster successfully impersonates a genuine customer during onboarding or account recovery, they gain access to that customer's accounts, payment methods, and stored credit. The resulting chargebacks, fraud losses, and dispute costs fall mostly on the business rather than the card network. For e-commerce companies and fintech applications, account takeover fraud is a major and growing direct operating expense.

New account fraud creates unrecoverable debt. Synthetic identity fraud often culminates in so-called bust-out schemes, in which a fraudster builds up credit exposure across several products and then defaults on all of them at once. For lenders, credit providers, and buy-now-pay-later platforms, losses from a single synthetic identity cultivated over months can reach tens of thousands of dollars.

Fraud incidents compound financial loss with reputational damage. When a business's customers fall victim to fraud through a security failure on its platform, the reputational cost exceeds the direct financial loss. Customer churn, media attention, and regulatory investigations after a fraud incident can cost more than the fraud itself, especially for businesses where trust is the product.

AI-based identity checks at onboarding block most of these attack vectors before a fraudulent account is ever created. Avoiding compliance costs is only part of the payback; the larger return is avoiding downstream fraud losses that would otherwise grow as the business expands.

Real-World Implementation: What Companies Should Know

When business leaders evaluate AI-powered identity verification, the practical considerations extend beyond the technology itself.

Deployment Should Be API-First

Modern identity verification systems are deployed through API integration, which connects your onboarding flow to the verification service without the customer ever leaving your site. This preserves the customer experience and enables near-instant verification decisions. Look for providers that offer SDKs for mobile applications and webhook-based delivery of decisions to reduce onboarding latency.
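Because webhook decisions arrive as inbound HTTP requests, the receiving endpoint should confirm that they really came from the provider before acting on them. A common pattern, assumed here rather than taken from any particular provider, is an HMAC-SHA256 signature of the raw request body, computed with a shared secret and sent in a request header. The sketch shows only the verification logic; the payload fields `reference` and `decision` are hypothetical, and a real integration should follow the provider's own webhook documentation.

```python
import hashlib
import hmac
import json


def verify_webhook(raw_body: bytes, signature_hex: str, secret: bytes) -> bool:
    """Recompute the HMAC-SHA256 of the raw body and compare in constant time."""
    expected = hmac.new(secret, raw_body, hashlib.sha256).hexdigest()
    received = signature_hex.strip().lower().encode("utf-8")
    return hmac.compare_digest(expected.encode("ascii"), received)


def handle_webhook(raw_body: bytes, signature_hex: str, secret: bytes) -> dict:
    """Reject unsigned or tampered payloads; otherwise extract the decision."""
    if not verify_webhook(raw_body, signature_hex, secret):
        raise PermissionError("invalid webhook signature")
    event = json.loads(raw_body)
    # Hypothetical payload fields; real field names depend on the provider.
    return {"reference": event["reference"], "decision": event["decision"]}
```

Verifying against the raw bytes, before any JSON parsing, matters: re-serializing a parsed payload can change whitespace or key order and break the signature.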

Verification Should Be Configurable by Risk Level

Not all customers pose the same fraud risk, and not all transactions need the same level of verification. An effective AI-based solution lets companies configure verification flows according to risk indicators: lightweight document verification for low-risk transactions, and full biometric verification with liveness detection for high-value or high-risk onboarding. This risk-based model preserves conversion rates among legitimate customers and concentrates verification effort where fraud risk is greatest.
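A risk-based policy like this often reduces to a small, auditable decision function. The sketch below assumes three hypothetical risk signals and a hypothetical 1,000-unit value threshold; real deployments would draw on many more signals and tune thresholds against their own fraud data.

```python
from dataclasses import dataclass
from enum import Enum


class VerificationLevel(Enum):
    DOCUMENT_ONLY = "document_only"  # lightweight check for low-risk cases
    FULL_BIOMETRIC = "document_biometric_liveness"  # document + face match + liveness


@dataclass
class RiskSignals:
    # Hypothetical signals chosen for illustration.
    transaction_value: float
    high_risk_jurisdiction: bool
    new_device: bool


def choose_level(signals: RiskSignals,
                 high_value_threshold: float = 1000.0) -> VerificationLevel:
    """Escalate to full biometric verification when any high-risk signal fires."""
    high_risk = (
        signals.transaction_value >= high_value_threshold
        or signals.high_risk_jurisdiction
        or signals.new_device
    )
    return VerificationLevel.FULL_BIOMETRIC if high_risk else VerificationLevel.DOCUMENT_ONLY


print(choose_level(RiskSignals(50.0, False, False)))    # VerificationLevel.DOCUMENT_ONLY
print(choose_level(RiskSignals(2500.0, False, False)))  # VerificationLevel.FULL_BIOMETRIC
```

Keeping the policy in one function makes it easy to log which rule triggered escalation, which also feeds the audit trail.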

Audit Trails Are Non-Negotiable

Every verification decision, whether approval, rejection, or escalation to manual review, should be recorded with a timestamp, the specific verification methods used, the confidence score each method returned, and the supporting documentation. This audit trail is essential for regulatory examinations, chargeback disputes, and internal fraud investigations. Firms subject to FINTRAC, GDPR, or other regulations must produce such records on request, often within 30 days or less.
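The fields listed above map naturally onto a structured record written to an append-only log. The sketch below is one possible shape, assuming JSON Lines storage and hypothetical field names; a production system would also need retention rules, access controls, and tamper protection appropriate to its regulator.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Dict, List

ALLOWED_DECISIONS = {"approved", "rejected", "manual_review"}


def _utc_now() -> str:
    return datetime.now(timezone.utc).isoformat()


@dataclass
class AuditRecord:
    verification_id: str
    decision: str                    # one of ALLOWED_DECISIONS
    method_scores: Dict[str, float]  # verification method -> confidence score
    document_refs: List[str]         # hypothetical pointers to stored evidence
    timestamp: str = field(default_factory=_utc_now)  # ISO 8601, UTC

    def __post_init__(self) -> None:
        if self.decision not in ALLOWED_DECISIONS:
            raise ValueError(f"unknown decision: {self.decision!r}")


def to_json_line(record: AuditRecord) -> str:
    """Serialize one record as a single JSON line with stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


def append_record(path: str, record: AuditRecord) -> None:
    """Open in append mode, so this function never rewrites earlier lines."""
    with open(path, "a", encoding="utf-8") as log:
        log.write(to_json_line(record) + "\n")


record = AuditRecord(
    verification_id="v-001",
    decision="approved",
    method_scores={"document": 0.97, "face_match": 0.91, "liveness": 0.99},
    document_refs=["evidence/v-001/document"],
)
print(to_json_line(record))
```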

Human Review Escalation Should Be Built In

AI verification systems are highly accurate, but no automated system can be 100 percent confident in every case. Good implementations route cases whose AI confidence falls below a set threshold, often around 5-10% of all verifications, to a manual review queue. Human reviewers examining these flagged cases catch the edge cases the automated system misses, and their verdicts feed back into further model improvement.
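The routing rule itself is simple; the threshold is the tuning knob that determines what share of traffic reaches reviewers. The sketch below uses a hypothetical 0.90 confidence threshold and hypothetical result fields, and includes a helper for monitoring the review rate against a target band such as the 5-10% mentioned above. The reviewer feedback loop into model retraining is not shown.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class AiResult:
    verification_id: str
    ai_decision: str   # "approve" or "reject" (hypothetical labels)
    confidence: float  # model confidence in its decision, 0.0 to 1.0


def route(result: AiResult, review_threshold: float = 0.90) -> str:
    """Send low-confidence cases to humans; otherwise accept the AI decision."""
    if not 0.0 <= result.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if result.confidence < review_threshold:
        return "manual_review"
    return result.ai_decision


def review_rate(results: Sequence[AiResult], review_threshold: float = 0.90) -> float:
    """Fraction of cases routed to manual review at the given threshold."""
    if not results:
        return 0.0
    flagged = sum(1 for r in results if route(r, review_threshold) == "manual_review")
    return flagged / len(results)
```

Raising the threshold sends more cases to reviewers (higher cost, fewer automated errors); lowering it does the opposite, so teams typically adjust it while watching the resulting review rate.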

Choose a Partner with Worldwide Document Coverage

If your customers are spread across many countries, your identity verification provider must support the document types issued in those countries. A system optimized for North American documents will produce an unacceptably high false-rejection rate for customers holding Southeast Asian, Middle Eastern, or African identity documents. Solutions such as Shufti Pro's document verification support documents issued in 190+ countries, an essential capability for businesses with an international clientele.

The Competitive Advantage of Getting This Right

The divide between companies that have invested in solid identity verification infrastructure and those that have not is widening, and the consequences extend beyond fraud losses.

Payment processor relationships depend on fraud metrics. Card networks and payment processors closely monitor chargeback and fraud rates. Companies whose identity checks keep fraud low receive better processing rates, higher transaction limits, and preferred merchant status. Companies with higher fraud rates face higher fees, processing delays, and, in the worst case, closure of their merchant accounts.

Winning enterprise clients also depends on security posture. Large enterprises, especially in financial services, healthcare, and government contracting, conduct vendor security assessments before signing contracts. A documented, auditable identity verification and fraud prevention program is becoming a prerequisite for winning enterprise business rather than a differentiator.

Investors scrutinize fraud infrastructure during due diligence. For growth-stage businesses seeking investment, fraud prevention infrastructure is part of the review. Fintech, e-commerce, and SaaS investors want to see security fundamentals that can scale, because fraud losses that are manageable at an early stage can become existential at the growth stage if that infrastructure is missing.

The Future of AI Identity Verification

The technology is not standing still. Several trends are reshaping AI-driven identity verification in 2026 and beyond.

Continuous authentication extends beyond onboarding. Rather than verifying identity only at account creation, AI systems are beginning to monitor behavioral signals such as typing patterns, mouse movements, and transaction activity in real time throughout a user session, flagging anomalies that may indicate account takeover.
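As a deliberately simplified illustration of behavioral monitoring, the sketch below compares a session's average inter-keystroke interval with a user's historical baseline and flags large deviations using a z-score. The 3.0 threshold and the sample timings are hypothetical, and real behavioral-biometrics systems model many more features (per-key timings, mouse dynamics, transaction context) with learned models rather than a single statistic.

```python
import statistics
from typing import Sequence


def keystroke_anomaly_score(baseline_ms: Sequence[float], session_ms: Sequence[float]) -> float:
    """How many baseline standard deviations the session's mean interval lies from the baseline mean."""
    if len(baseline_ms) < 2 or not session_ms:
        raise ValueError("need at least two baseline intervals and one session interval")
    mu = statistics.mean(baseline_ms)
    sigma = statistics.stdev(baseline_ms)
    if sigma == 0:
        raise ValueError("baseline has no variation")
    return abs(statistics.mean(session_ms) - mu) / sigma


def is_anomalous(baseline_ms: Sequence[float], session_ms: Sequence[float],
                 z_threshold: float = 3.0) -> bool:
    """Flag the session for step-up verification if the deviation exceeds the threshold."""
    return keystroke_anomaly_score(baseline_ms, session_ms) > z_threshold


# Hypothetical inter-key intervals in milliseconds.
baseline = [100, 110, 95, 105, 90, 100, 110, 90]
print(is_anomalous(baseline, [98, 102, 101]))   # False: typing rhythm matches
print(is_anomalous(baseline, [180, 190, 175]))  # True: much slower than usual
```

A flag from a check like this would more plausibly trigger step-up verification (for example, a fresh liveness check) than an outright account lock.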

Decentralized identity frameworks are gaining regulatory momentum in both the EU and Canada. In these frameworks, verified credentials are held by the user rather than by individual businesses, which reduces the data liability businesses currently bear for storing identity documents and biometric data.

Regulatory requirements continue to expand worldwide. Canada's FINTRAC, the EU's AML package, and regulatory regimes across Asia-Pacific are raising identity verification standards, which means that today's best practice will become tomorrow's legal minimum.

Conclusion

The paradigm shift that AI-enabled identity verification represents is a move from reactive to proactive security. Conventional methods detected fraud only after it had occurred, through chargebacks, account reviews, and forensic audits. AI-based verification detects fraud at the moment it is attempted: before a fraudulent account is created, before a stolen identity is used, before a deepfake passes an onboarding check.

For businesses that are scaling, this shift is not merely a security upgrade. It is a foundation. Fraud losses that are survivable at small scale become devastating at the growth stage. The businesses that build strong identity verification infrastructure early are the ones that grow without the compounding drag of fraud costs, compliance failures, and reputational incidents.

As the cost of impersonation in the digital economy falls toward zero, the cost of failing to verify identity rises year after year.
