For the past year, early adopters of autonomous AI agents have been forced to play a murky game of chance: keep the agent in a useless sandbox or give it the keys to the kingdom and hope it doesn’t hallucinate a catastrophic “delete all” command.

To unlock the true utility of an agent, such as scheduling meetings, triaging emails, or managing cloud infrastructure, users have had to grant these models raw API keys and broad permissions, raising the risk that a single agent mistake disrupts their systems.

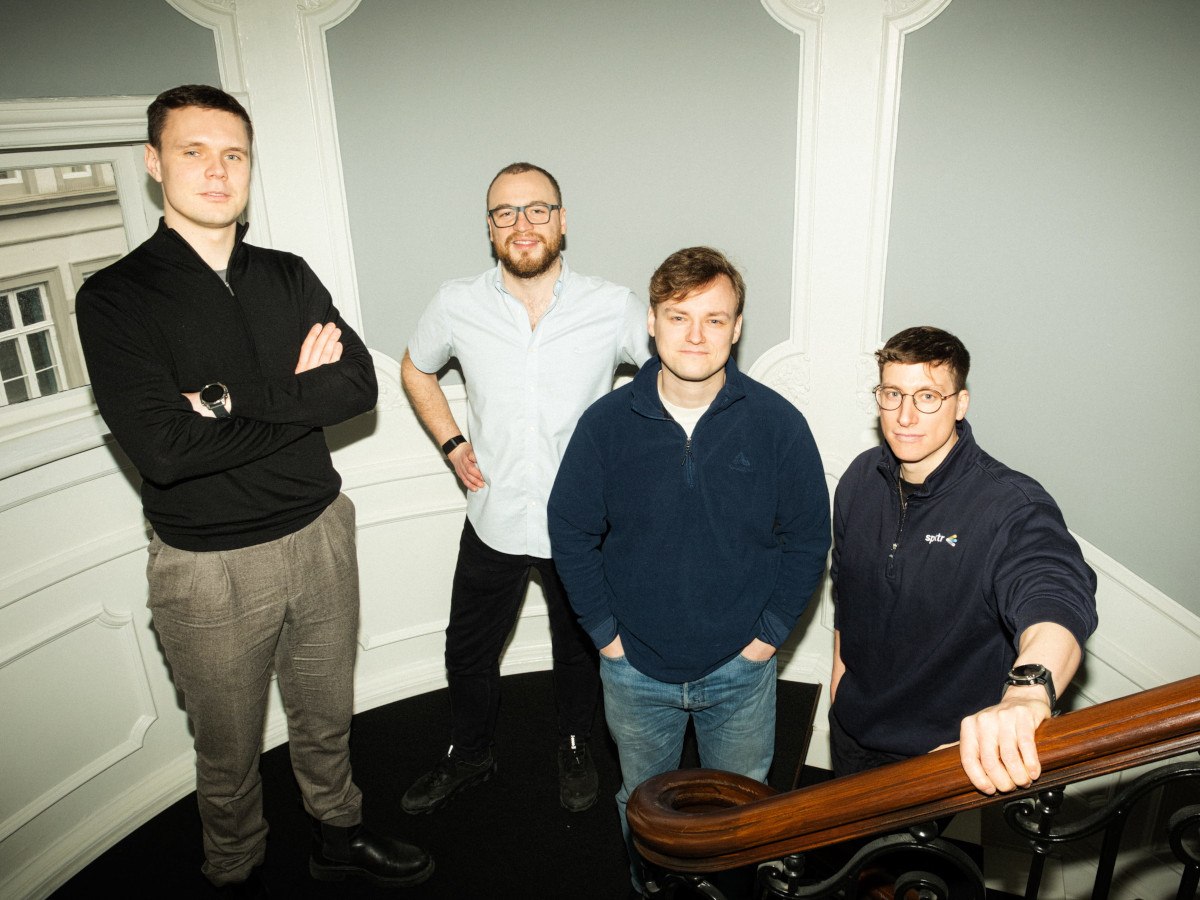

That tradeoff ends today. The creators of the open source, sandboxed NanoClaw agent framework, who now operate as the private startup NanoCo, have announced a landmark partnership with Vercel and OneCLI to introduce a standardized, infrastructure-level approval system.

By integrating Vercel’s Chat SDK and OneCLI’s open source credentials vault, NanoClaw 2.0 ensures that no sensitive action occurs without explicit human consent, delivered natively through the messaging apps where users already live.

The use cases that stand to benefit most are those involving high-consequence “write” actions. In DevOps, for example, an agent could propose a cloud infrastructure change that goes live only after a senior engineer taps “Approve” in Slack.

For finance teams, an agent could prepare batch payments or triage invoices, with the final disbursement requiring a human signature via a WhatsApp card.

Technology: security by isolation

The fundamental shift in NanoClaw 2.0 is the move away from “application-level” security to “infrastructure-level” enforcement. In traditional agent frameworks, the model itself is often responsible for asking for permission—a flow that Gavriel Cohen, co-founder of NanoCo, describes as inherently flawed.

“The agent could potentially be malicious or compromised,” Cohen noted in a recent interview. “If the agent is generating the UI for the approval request, it could trick you by swapping the ‘Accept’ and ‘Reject’ buttons.”

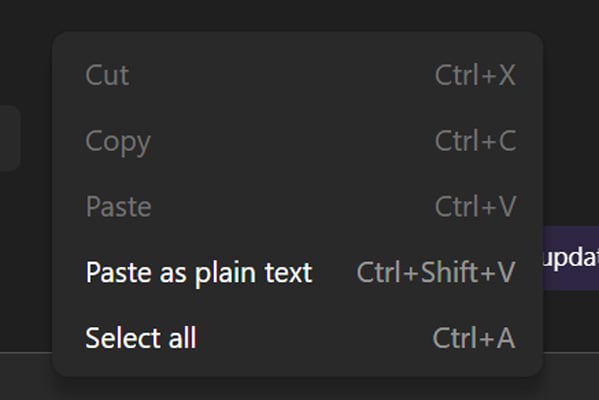

NanoClaw solves this by running agents in strictly isolated Docker or Apple Containers. The agent never sees a real API key; instead, it uses “placeholder” keys. When the agent attempts an outbound request, the request is intercepted by the OneCLI Rust Gateway. The gateway checks a set of user-defined policies (e.g., “Read-only access is okay, but sending an email requires approval”).
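To make the policy check concrete, here is a minimal sketch of how a gateway might classify an outbound request against user-defined rules. The rule shape, the `evaluatePolicy` function, and the `mail.example.com` host are hypothetical illustrations, not OneCLI's actual configuration format. The sketch encodes the example policy quoted above: reads pass, while sending (or deleting) mail requires approval, and anything unmatched is denied.

```typescript
// Hypothetical policy model; NOT OneCLI's real configuration format.
type Decision = "allow" | "require_approval" | "deny";

interface PolicyRule {
  host: string;       // exact host the rule applies to
  methods: string[];  // HTTP methods the rule covers (upper case)
  pathPrefix: string; // request path must start with this prefix
  decision: Decision;
}

interface OutboundRequest {
  method: string;
  host: string;
  path: string;
}

// First matching rule wins; anything no rule matches is denied (default-deny).
function evaluatePolicy(req: OutboundRequest, rules: PolicyRule[]): Decision {
  const method = req.method.toUpperCase();
  for (const rule of rules) {
    if (
      rule.host === req.host &&
      rule.methods.includes(method) &&
      req.path.startsWith(rule.pathPrefix)
    ) {
      return rule.decision;
    }
  }
  return "deny";
}

// "Read-only access is okay, but sending an email requires approval."
// (hypothetical mail host and paths)
const mailRules: PolicyRule[] = [
  { host: "mail.example.com", methods: ["GET", "HEAD"], pathPrefix: "/", decision: "allow" },
  { host: "mail.example.com", methods: ["POST"], pathPrefix: "/messages/send", decision: "require_approval" },
  { host: "mail.example.com", methods: ["DELETE"], pathPrefix: "/messages/", decision: "require_approval" },
];

const readDecision = evaluatePolicy({ method: "GET", host: "mail.example.com", path: "/messages/42" }, mailRules); // "allow"
const sendDecision = evaluatePolicy({ method: "POST", host: "mail.example.com", path: "/messages/send" }, mailRules); // "require_approval"
```

Defaulting to "deny" for unmatched requests is a deliberate choice in this sketch: a new endpoint the agent discovers is blocked until someone writes a rule for it.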

If the action is sensitive, the gateway pauses the request and triggers a notification to the user. Only after the user approves does the gateway inject the real, encrypted credential and allow the request to reach the service.
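The pause-then-inject step can be sketched as follows, under stated assumptions: the names `releaseRequest`, `AskHuman`, and `PLACEHOLDER_KEY` are hypothetical, the "vault" is a plain in-memory map standing in for an encrypted store, and the credential travels in an `authorization` header. This illustrates the ordering described above (ask first, inject the real secret only afterward); it is not OneCLI's implementation.

```typescript
// Hypothetical sketch of a gateway's "pause, ask, then inject" step.
// Names, the placeholder value, and the vault shape are illustrative assumptions.
const PLACEHOLDER_KEY = "PLACEHOLDER_KEY"; // the only key the agent ever sees

interface HeldRequest {
  method: string;
  host: string;
  path: string;
  headers: Record<string, string>;
}

// Asks a human (e.g. via a chat card) and resolves to true only on approval.
type AskHuman = (summary: string) => Promise<boolean>;

// Stand-in for the credential vault: host -> real secret, kept outside the container.
type Vault = Map<string, string>;

async function releaseRequest(
  req: HeldRequest,
  sensitive: boolean,
  askHuman: AskHuman,
  vault: Vault,
): Promise<HeldRequest> {
  if (sensitive) {
    // The request waits here until the human answers.
    const approved = await askHuman(`${req.method} ${req.host}${req.path}`);
    if (!approved) throw new Error("Denied by user");
  }
  const secret = vault.get(req.host);
  if (secret === undefined) throw new Error(`No credential stored for ${req.host}`);

  // Copy headers so the agent's original request object is never mutated,
  // then swap the placeholder for the real credential.
  const headers = { ...req.headers };
  if (headers["authorization"] === `Bearer ${PLACEHOLDER_KEY}`) {
    headers["authorization"] = `Bearer ${secret}`;
  }
  return { ...req, headers };
}
```

Because the real secret is looked up only after the approval check, a denied request never has a credential attached, and the agent's own copy of the request keeps carrying only the placeholder.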

Product: bringing the ‘human’ into the loop

While security is the engine, Vercel’s Chat SDK is the dashboard. Integrating with different messaging platforms is notoriously difficult because every app—Slack, Teams, WhatsApp, Telegram—uses different APIs for interactive elements like buttons and cards.

By leveraging Vercel’s unified SDK, NanoClaw can now deploy to 15 different channels from a single TypeScript codebase. When an agent wants to perform a protected action, the user receives a rich interactive card on their phone. “The approval shows up as a rich, native card right inside Slack or WhatsApp or Teams, and the user taps once to approve or deny,” said Cohen. This “seamless UX” is what makes human-in-the-loop oversight practical rather than a productivity bottleneck.

The full list of 15 supported messaging apps and channels, many of them favored by enterprise knowledge workers, is:

- Slack
- WhatsApp
- Telegram
- Microsoft Teams
- Discord
- Google Chat
- iMessage
- Facebook Messenger
- Instagram
- X (Twitter)
- GitHub
- Linear
- Matrix
- Email
- Webex
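Delivering one approval card to many platforms from a single codebase can be pictured as an adapter layer: trusted code builds one platform-neutral approval request, and a small renderer per platform turns it into that platform's interactive format. The sketch below is hypothetical and is NOT the Vercel Chat SDK's API; `ChannelAdapter`, `broadcastApproval`, and `parseCallback` are illustrative names, and the two payloads are only loosely modeled on Slack Block Kit buttons and Telegram inline keyboards. It also reflects Cohen's point about button swapping: the labels and action ids are fixed by the gateway side, never by the agent.

```typescript
// Hypothetical adapter layer; NOT the Vercel Chat SDK API.
interface ApprovalRequest {
  id: string;      // issued by the gateway, never chosen by the agent
  summary: string; // e.g. "POST mail.example.com/messages/send"
}

interface ChannelAdapter {
  name: string;
  renderApprovalCard(req: ApprovalRequest): unknown;
}

// Button labels and their action ids are fixed here, in trusted code,
// so the agent cannot swap "Approve" and "Deny".
const BUTTONS = [
  { label: "Approve", action: "approve" },
  { label: "Deny", action: "deny" },
] as const;

// Payload loosely modeled on Slack Block Kit (simplified).
const slackLike: ChannelAdapter = {
  name: "slack",
  renderApprovalCard: (req) => ({
    blocks: [
      { type: "section", text: { type: "mrkdwn", text: req.summary } },
      {
        type: "actions",
        elements: BUTTONS.map((b) => ({
          type: "button",
          text: { type: "plain_text", text: b.label },
          action_id: `${b.action}:${req.id}`,
        })),
      },
    ],
  }),
};

// Payload loosely modeled on a Telegram inline keyboard (simplified).
const telegramLike: ChannelAdapter = {
  name: "telegram",
  renderApprovalCard: (req) => ({
    text: req.summary,
    reply_markup: {
      inline_keyboard: [BUTTONS.map((b) => ({ text: b.label, callback_data: `${b.action}:${req.id}` }))],
    },
  }),
};

// One call site fans the same request out to every configured channel.
function broadcastApproval(req: ApprovalRequest, adapters: ChannelAdapter[]): Map<string, unknown> {
  const cards = new Map<string, unknown>();
  for (const adapter of adapters) cards.set(adapter.name, adapter.renderApprovalCard(req));
  return cards;
}

// Turn a button callback back into a decision, rejecting anything unexpected.
function parseCallback(data: string): { action: "approve" | "deny"; id: string } | null {
  const [action, id] = data.split(":");
  if ((action === "approve" || action === "deny") && id) return { action, id };
  return null;
}
```

Adding a platform in this design means writing one more renderer; the policy and approval logic never change.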

Background on NanoClaw

NanoClaw launched on January 31, 2026, as a minimalist and security-focused response to the “security nightmare” inherent in complex, non-sandboxed agent frameworks.

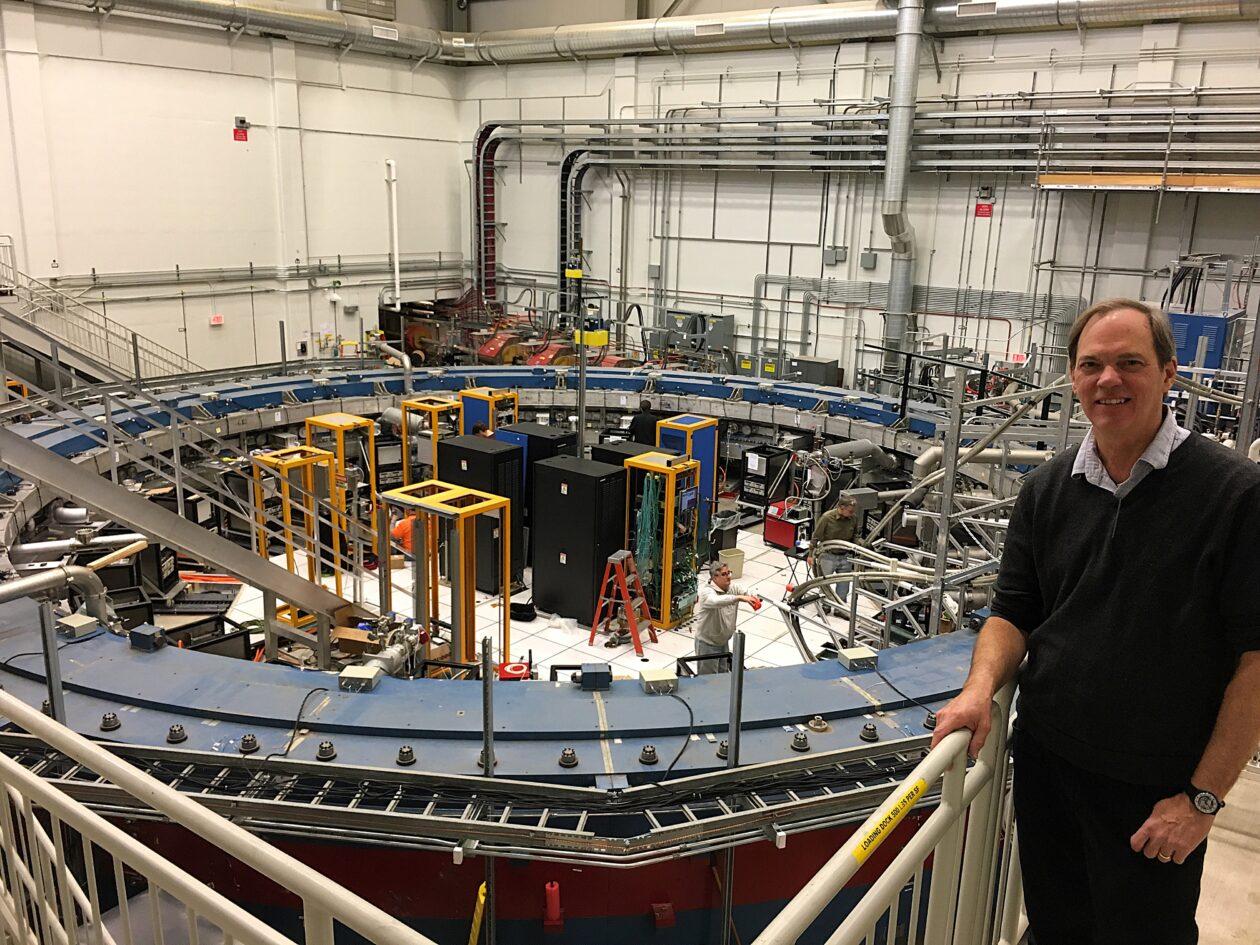

Created by Cohen, a former Wix.com engineer, and marketed by his brother Lazer, CEO of B2B tech public relations firm Concrete Media, the project was designed to solve the auditability crisis found in competing platforms like OpenClaw, which had grown to nearly 400,000 lines of code.

By contrast, NanoClaw condensed its core logic into roughly 500 lines of TypeScript—a size that, according to VentureBeat, allows the entire system to be audited by a human or a secondary AI in approximately eight minutes.

The platform’s primary technical defense is its use of operating system-level isolation. Every agent is placed inside an isolated Linux container—utilizing Apple Containers for high performance on macOS or Docker for Linux—to ensure that the AI only interacts with directories explicitly mounted by the user.
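The "only explicitly mounted directories" rule can be illustrated with a sketch that builds `docker run` arguments from a user-approved mount list. The `dockerRunArgs` and `isSafeHostPath` helpers, the image name, and the paths are hypothetical, and NanoClaw's real launcher may work differently; Apple Containers use a separate CLI that is not shown. The `--rm` flag and the `-v host:container[:ro]` syntax are standard Docker options.

```typescript
// Hypothetical sketch: build `docker run` arguments that mount only
// directories the user explicitly chose. Image name and paths are illustrative.
interface Mount {
  hostPath: string;      // a directory the user explicitly chose to share
  containerPath: string; // where it appears inside the container
  readOnly: boolean;
}

// Accept only absolute POSIX paths with no ".." segments and no ":"
// (":" would be misread as a separator by Docker's -v syntax).
function isSafeHostPath(p: string): boolean {
  return p.startsWith("/") && !p.split("/").includes("..") && !p.includes(":");
}

function dockerRunArgs(image: string, mounts: Mount[]): string[] {
  const args = ["run", "--rm"]; // --rm: discard the container when the agent exits
  for (const m of mounts) {
    if (!isSafeHostPath(m.hostPath) || !isSafeHostPath(m.containerPath)) {
      throw new Error(`Refusing mount: ${m.hostPath} -> ${m.containerPath}`);
    }
    // Each approved directory becomes one -v flag; no other host directory is mounted.
    args.push("-v", `${m.hostPath}:${m.containerPath}${m.readOnly ? ":ro" : ""}`);
  }
  args.push(image);
  return args;
}
```

The resulting array could be passed to a process launcher such as Node's `child_process.spawn("docker", args)`. Marking shared folders read-only by default keeps the blast radius smaller still.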

As detailed in VentureBeat’s reporting on the project’s infrastructure, this approach confines the “blast radius” of potential prompt injections strictly to the container and its specific communication channel.

In March 2026, NanoClaw further matured this security posture through an official partnership with the software container firm Docker to run agents inside “Docker Sandboxes”.

This integration utilizes MicroVM-based isolation to provide an enterprise-ready environment for agents that, by their nature, must mutate their environments by installing packages, modifying files, and launching processes—actions that typically break traditional container immutability assumptions.

Operationally, NanoClaw rejects the traditional “feature-rich” software model in favor of a “Skills over Features” philosophy. Instead of maintaining a bloated main branch with dozens of unused modules, the project encourages users to contribute “Skills”—modular instructions that teach a local AI assistant how to transform and customize the codebase for specific needs, such as adding Telegram or Gmail support.

This methodology, as described on NanoClaw’s website and in VentureBeat interviews, ensures that users only maintain the exact code required for their specific implementation.

Furthermore, the framework natively supports “Agent Swarms” via the Anthropic Agent SDK, allowing specialized agents to collaborate in parallel while maintaining isolated memory contexts for different business functions.
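The idea of specialized agents working in parallel with separate memories can be sketched in plain TypeScript. This is a swapped-in illustration that does NOT use the Anthropic Agent SDK: `IsolatedAgent` and `runSwarm` are hypothetical names, and each agent's `work` function stands in for a model call. Each agent keeps its own memory, and the coordinator receives only final results.

```typescript
// Hypothetical sketch of parallel agents with isolated memory.
// It does not use the Anthropic Agent SDK; `work` stands in for a model call.
interface MemoryEntry {
  role: "task" | "result";
  text: string;
}

class IsolatedAgent {
  readonly name: string;
  private readonly work: (task: string) => Promise<string>;
  // Not exposed outside this agent; the coordinator never receives it.
  private readonly memory: MemoryEntry[] = [];

  constructor(name: string, work: (task: string) => Promise<string>) {
    this.name = name;
    this.work = work;
  }

  async run(task: string): Promise<string> {
    this.memory.push({ role: "task", text: task });
    const result = await this.work(task);
    this.memory.push({ role: "result", text: result });
    return result;
  }

  memorySize(): number {
    return this.memory.length;
  }
}

// Runs every assignment concurrently; the coordinator only sees final results.
async function runSwarm(assignments: Array<[IsolatedAgent, string]>): Promise<Map<string, string>> {
  const pairs = await Promise.all(
    assignments.map(async ([agent, task]): Promise<[string, string]> => [agent.name, await agent.run(task)]),
  );
  return new Map(pairs);
}
```

Keeping memory per agent means, for example, that a billing agent's context never leaks into a support agent's prompts, even when both run at the same time.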

Licensing and open source strategy

NanoClaw remains firmly committed to the open source MIT License, encouraging users to fork the project and customize it for their own needs. This stands in stark contrast to “monolithic” frameworks.

NanoClaw’s codebase is remarkably lean, consisting of only 15 source files and roughly 3,900 lines of code, compared to the hundreds of thousands of lines found in competitors like OpenClaw.

The partnership also highlights the strength of the “Open Source Avengers” coalition.

By combining NanoClaw (agent orchestration), Vercel Chat SDK (UI/UX), and OneCLI (security/secrets), the project demonstrates that modular, open-source tools can outpace proprietary labs in building the application layer for AI.

Community reactions

As shown on the NanoClaw website, the project has amassed more than 27,400 stars on GitHub and maintains an active Discord community.

A core claim on the NanoClaw site is that the codebase is small enough to understand in “8 minutes,” a feature targeted at security-conscious users who want to audit their assistant.

In an interview, Cohen noted that iMessage support via Vercel’s Photon project addresses a common community hurdle: previously, users often had to maintain a separate Mac Mini to connect agents to an iMessage account.

The enterprise perspective: should you adopt?

For enterprises, NanoClaw 2.0 represents a shift from speculative experimentation to safe operationalization.

Historically, IT departments have blocked agent usage due to the “all-or-nothing” nature of credential access. By decoupling the agent from the secret, NanoClaw provides a middle ground that mirrors existing corporate security protocols—specifically the principle of least privilege.

Enterprises should consider this framework if they require high auditability and have strict compliance needs regarding data exfiltration. According to Cohen, many businesses have not been ready to grant agents access to calendars or emails because of security concerns. This framework addresses that by ensuring the agent structurally cannot act without permission.

Enterprises stand to benefit specifically in use cases involving “high-stakes” actions. As illustrated in the OneCLI dashboard, a user can set a policy where an agent can read emails freely but must trigger a manual approval dialog to “delete” or “send” one.

Because NanoClaw runs as a single Node.js process with isolated containers, enterprise security teams can verify that the gateway is the only path for outbound traffic.
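One way to make "the gateway is the only path out" checkable is sketched below, assuming Docker networking: the agent container joins only a network created with `docker network create --internal`, which has no external route, while the gateway container is attached to both that network and an external one. The `egressArgs` and `routesOnlyThroughGateway` helpers, the network name, and the gateway URL are hypothetical; the source does not describe how NanoClaw or OneCLI actually wire this up.

```typescript
// Hypothetical sketch: confine an agent container's outbound traffic to the gateway.
// Network name, gateway URL, and env-var usage are illustrative assumptions.
interface EgressConfig {
  internalNetwork: string; // created beforehand with `docker network create --internal <name>`
  gatewayUrl: string;      // proxy endpoint of the gateway container on that network
}

function egressArgs(cfg: EgressConfig): string[] {
  return [
    // An --internal network has no route to the outside world; the gateway
    // container, attached to this network and an external one, is the only way out.
    "--network", cfg.internalNetwork,
    // Point proxy-aware HTTP clients at the gateway.
    "-e", `HTTPS_PROXY=${cfg.gatewayUrl}`,
    "-e", `HTTP_PROXY=${cfg.gatewayUrl}`,
  ];
}

// A check a security team might run over a container's launch arguments:
// exactly one network, and it must be the internal one. This simplified check
// only understands the two-token `--network <name>` form; a real audit would
// also need to handle `--network=<name>` and the `--net` alias.
function routesOnlyThroughGateway(args: string[], internalNetwork: string): boolean {
  const networks = args.filter((_, i) => i > 0 && args[i - 1] === "--network");
  return networks.length === 1 && networks[0] === internalNetwork;
}
```

The proxy variables only guide well-behaved clients; the internal network is what actually enforces the rule, since a client that ignores the proxy setting simply has nowhere else to connect.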

Ultimately, NanoClaw is a recommendation for organizations that want the productivity of autonomous agents without the “black box” risk of traditional LLM wrappers. It turns the AI from a potentially rogue operator into a highly capable junior staffer who always asks for permission before hitting the “send” or “buy” button.

As AI-native setups become the standard, this partnership establishes the blueprint for how trust will be managed in the age of the autonomous workforce.
