At the start of this year, it seemed like everybody was reminiscing about 2016. In January alone, Spotify saw a 790 percent increase in 2016-themed playlists. People were declaring that 2026 would recapture the feel-good vibes of 2016.

Tech

Gen Z is obsessing over 2016 songs, fashion and more. Why???

The only problem is that the experience of living through 2016 was far different from what Gen Z in particular remembers.

Daysia Tolentino is the journalist behind the newsletter Yap Year, where she’s been chronicling online affinity for the 2010s for almost a year now. Gen Z tends to blend those years together, which leads them to hype up the fun cultural parts and ignore the international and political turmoil that marked 2016. Tolentino says 2016 nostalgia might actually be a sign that young people are ready to break out of these cycles of nostalgia and reach for something new.

Tolentino spoke with Today, Explained host Astead Herndon about how 2016 has stuck with us and what our nostalgia for that time might reveal.

There’s much more in the full podcast, so listen to Today, Explained wherever you get your podcasts, including Apple Podcasts, Pandora, and Spotify.

Where did this 2016 trend start?

It’s been building up since last year, especially on TikTok. People have been slowly bringing back 2016 trends, whether that’s the mannequin challenge set to “Black Beatles,” pink wall aesthetics, or those really warm, hazy Instagram filters. When we entered 2026, there were a lot of TikToks saying that 2026 was going to be like 2016.

I was curious about that. What does that even mean? I don’t actually think people know what that means at all. Then, a couple of weeks ago, you saw a lot of people on Instagram, especially influencers from Instagram’s peak era, posting photos of themselves at their peak in 2016, which inspired everybody to post their own 2016 photos.

In your newsletter, you’ve tried to define what the 2016 mood board is. Can you explain that for me? When we’re thinking 2016 vibes, what do we mean?

When I look at 2016, I see makeup gurus blowing up on YouTube, and the makeup at the time is extremely maximalist: very full glam, full beat, very matte, very colorful, with some neon wigs. You have the King Kylie of it all.

2016 was such a pivotal moment in internet culture. I think that is when we really entered the influencer era in full force. Before that, we had creators, but we didn’t have as much of the monetization infrastructure that essentially turns everything online into an ad. People were posting whatever they wanted to post.

It was the year that social media companies started shifting your news feed from a friends-only chronological feed to an engagement-based algorithm. In 2016, you see this flip toward influencer culture and a more put-together, easily consumable image and vibe to everything, and that trickles down into the culture of Instagram, so people start posting as if they’re influencers themselves.

Even if you were a teenager, like I was at the time, you can see it: when I look at my own Instagram, I see my posts mimicking influencers, becoming more polished and more aesthetic. I think people have missed that a lot, although I also think people romanticize 2016 and forget a lot about what that year was actually like.

What do you think this says about 2026?

Throughout the 2020s so far, people on TikTok, especially young people, have been romanticizing the 2010s. I think, in general, people associate the 2010s with a sense of optimism, especially post-2012. Young people have grown up in such a tumultuous time with the pandemic, the economy, with politics and the world in general. It feels really hopeless at times, so people are looking back to that time that literally looked so sunny, and positive, and wonderful, and low stakes. I think it’s really easy for people to become really fixated on this time period, even if that wasn’t the actual reality, right?

Why do you think people are only cherry picking the good parts of 2016?

It was one of the last years in which we engaged in a monoculture together, and we had shared pieces of culture that we could remember. We could all remember “Closer” being on the radio like 24/7 at the time. I think a lot of people romanticized 2016, because it is the last time they remember unification in any way. It feels like the last kind of moment of normalcy before this decade of turmoil.

As much as there was so much change and disruption happening in 2016, whether that’s Donald Trump, whether that’s Brexit, or even the rise of Bernie Sanders, there were so many people who were so excited about that. I think there was a feeling of disruption that could be mistaken for general optimism. Then, this hope for something different that began in 2016 did not materialize in the ways that people wanted it to. But I think a lot of people can remember that feeling and the shared culture that we all had, which nobody is really able to share these days.

I’m 32. I can’t imagine me 10 years ago thinking that the best years were behind me and not in front of me. Am I just being old, or does some of this feel like a generation that’s been raised on remakes and sequels looking back instead of looking forward?

Yeah, that is something I’m concerned about frequently. I’m 27; I shouldn’t be saying, “Being 17 was the best time of my life.” Being that obsessed with looking back means you are unable to imagine a better future. That is always really concerning. That is always an indication that there’s a loss of hope.

But I think that this year, the energy from people online seems to be about creating something new, introducing friction, and moving forward from the constant need for escapism that the internet has provided us for the past 10 years. I have seen that rise alongside the nostalgia that has been so widely publicized and talked about.

I think people are ready for new things. I think people are ready to move on from constant escapism that the internet and social media brings, including constant nostalgia.

ShinyHunters claim responsibility for European Commission breach

The crime group reportedly accessed more than 350GB of data, including dumps from mail servers, databases, confidential documents, contracts and other sensitive material.

The extortion group ShinyHunters has been linked to the 24 March breach of the European Commission’s Europa.eu platform, in which a reported 350GB of data across multiple databases was accessed and stolen.

In a statement issued on 27 March, the European Commission said its early findings suggest that private data has been accessed and that Union entities affected by the attack will be contacted. The Commission’s internal systems are not believed to have been affected.

The Commission said it will continue to monitor the situation, take the necessary precautions to ensure the security of its systems and data, and analyse what happened so it can use the results to improve its cybersecurity capabilities.

While the Commission has not shared further details on the incident, alleged data dumps uploaded to ShinyHunters’ Tor data leak site are said to include content from mail servers, internal communications systems, databases, confidential documents, contracts and additional sensitive material. Of the information allegedly stolen from the European Commission’s compromised cloud network, 90GB has already been shared.

ShinyHunters is an extortion group, established around 2020, that has carried out a number of high-profile, financially motivated attacks on organisations such as Salesforce, Allianz Life, SoundCloud and Ticketmaster. The criminal organisation also claimed responsibility for an attack on Match Group, which owns Tinder, Hinge, Meetic, Match.com and OkCupid.

In July 2024, AT&T paid a member of the ShinyHunters hacking group $370,000 to delete the data of millions of customers following a massive breach of its systems. The stolen data reportedly exposed call and text records for nearly all of the carrier’s 110m cellular customers, after ShinyHunters took the information from AT&T’s workspace on the cloud data platform Snowflake.

Don’t miss out on the knowledge you need to succeed. Sign up for the Daily Brief, Silicon Republic’s digest of need-to-know sci-tech news.

This Is How Trump Is Already Threatening the Midterms

The White House did not respond to a request for comment about the meetings, but an official who was not authorized to speak on the record told WIRED at the time: “The White House does not comment on mysterious meetings with unnamed staffers.”

Trump has also sought to absolve officials of any wrongdoing in the wake of the 2020 election. Last year, Trump gave “full, complete and unconditional” pardons to a slate of people who had tried, and failed, to help him overturn the 2020 election results. In recent months, Trump has pressured Colorado governor Jared Polis to release Tina Peters, the former county clerk in Mesa County, Colorado, who became a hero for the right’s election deniers when she facilitated a security breach during a software update of her county’s election management system.

Peters was found guilty of four felonies, but Trump has spent recent months campaigning for her release, going so far as to say he “pardoned” her, even though he has no power to do so because she was convicted on state charges.

Election Day Interference

While Trump has not announced specific plans to deploy troops to polling locations or seize voting machines, he and his administration have certainly been suggesting that such action is not off the table.

In January, Trump lamented not having the National Guard seize certain voting machines after the 2020 election. In early February, White House press secretary Karoline Leavitt told reporters that while she hasn’t specifically heard Trump discussing the possibility, she couldn’t “guarantee that an ICE agent won’t be around a polling location in November.” (The question was in response to former White House adviser Steve Bannon stating: “We’re going to have ICE surround the polls come November. We’re not going to sit here and allow you to steal the country again … We will never again allow an election to be stolen.”)

Earlier this month, during his confirmation hearing to head up the Department of Homeland Security, Senator Markwayne Mullin said he would be willing to deploy ICE to polling locations to address “a specific threat.”

The result of the Trump administration’s drip feed of threats and dog whistles is that those who are running elections in states across the country are already war-gaming what happens if ICE or the National Guard shows up at their voting locations.

Michael McNulty, the policy director at Issue One, a nonprofit that tracks the impact of money in politics, also points out that the Department of Justice sent monitors to oversee elections in New Jersey and California in November, even though no federal elections were being held. “The concern is that this could become a massive deployment of, quote unquote, observers by the DOJ in 2026 who might do something more, whether it’s intimidation, whether it’s interfering with local election officials, to get data to confirm conspiracy theories,” McNulty tells WIRED.

FBI Raids

On January 28, the FBI raided the election office in Fulton County, Georgia, executing a search warrant that allowed it to seize ballots, ballot images, tabulator tapes, and the voter rolls related to the 2020 election. The search warrant affidavit, unsealed a few weeks ago, shows that the FBI relied on the work of Kurt Olsen, a lawyer who was appointed by the administration in October to investigate election security and who has a long history of working with some of the country’s biggest election deniers, including Patrick Byrne, Mike Lindell, and Kari Lake. Olsen’s claims are based on debunked and previously investigated conspiracy theories about the 2020 election.

The raid was also notable for the presence of Tulsi Gabbard, the director of national intelligence, who is, according to The Guardian, running a parallel investigation into the 2020 election with the apparent tacit approval of Trump.

Fiber HDMI cables enable full-bandwidth 8K over runs up to 990 feet

The product is an active optical cable (AOC) for HDMI. Instead of relying solely on copper, it carries most of its signal over fiber-optic strands. Inside the cable, HDMI electrical signals are converted into optical signals for the journey between the two ends, then converted back to electrical signals at…


In with a bang, out in silence — the end of the Mac Pro

For almost two decades, the Mac Pro swung from coveted and beloved to derided and forgotten. Now, it’s finally over.

Apple is reportedly pressing the off switch on the Mac Pro

All political careers end in failure, and all devices fade out as they are eventually superseded. Yet this time it’s more that the Mac Pro has been usurped, and possibly even stabbed in the back.

If you’re a Mac Pro fan, you knew this day was coming, and you probably don’t want to believe it. It’s true that the Mac Pro long ago lost its crown as the most powerful Mac, but it is still the legendary Mac Pro.

Continue reading on AppleInsider.

Is It Time For Open Source to Start Charging For Access?

“It’s time to charge for access,” argues a new opinion piece at The Register. Begging billion-dollar companies to fund open source projects just isn’t enough, writes long-time tech reporter Steven J. Vaughan-Nichols:

Screw fair. Screw asking for dimes. You can’t live off one-off charity donations… Depending on what people put in a tip jar is no way to fund anything of value… [A]ccording to a 2024 Tidelift maintainer report, 60 percent of open source maintainers are unpaid, and 60 percent have quit or considered quitting, largely due to burnout and lack of compensation. Oh, and of those getting paid, only 26 percent earn more than $1,000 a year for their work. They’d be better paid asking “Would you like fries with that?” at your local McDonald’s…

Some organizations do support maintainers. For example, there’s HeroDevs and its $20 million Open Source Sustainability Fund. Its mission is to pay maintainers of critical, often end-of-life open source components so they can keep shipping patches without burning out. Sentry’s Open Source Pledge/Fund has given hundreds of thousands of dollars per year directly to maintainers of the packages Sentry depends on. Sentry is one of the few vendors that systematically maps its dependency tree and then actually cuts checks to the people maintaining that stack, as opposed to just talking about “giving back.”

Sentry is on to something. We have the Linux Foundation to manage commercial open source projects, the Apache Foundation to oversee its various open source programs, the Open Source Initiative (OSI) to coordinate open source licenses, and many more for various specific projects. It’s time we had an organization with the mission of ensuring that the top programmers and maintainers of valuable open source projects get a cut of the tech billionaire pie.

We must realign how businesses work with open source so that payment is no longer an optional charitable gift but a cost of doing business. To do that, we need an organization to create a viable, supportable path from big business to individual programmer. It’s time for someone to step up and make this happen. Businesses, open source software, and maintainers will all be better off for it.

One possible future… Bruce Perens, who wrote the original Open Source Definition in 1997, now proposes a not-for-profit corporation that would develop “the Post Open Collection” of software and distribute its licensing fees to developers, while providing services like user support, documentation, hardware-based authentication for developers, and even help with government compliance and lobbying.

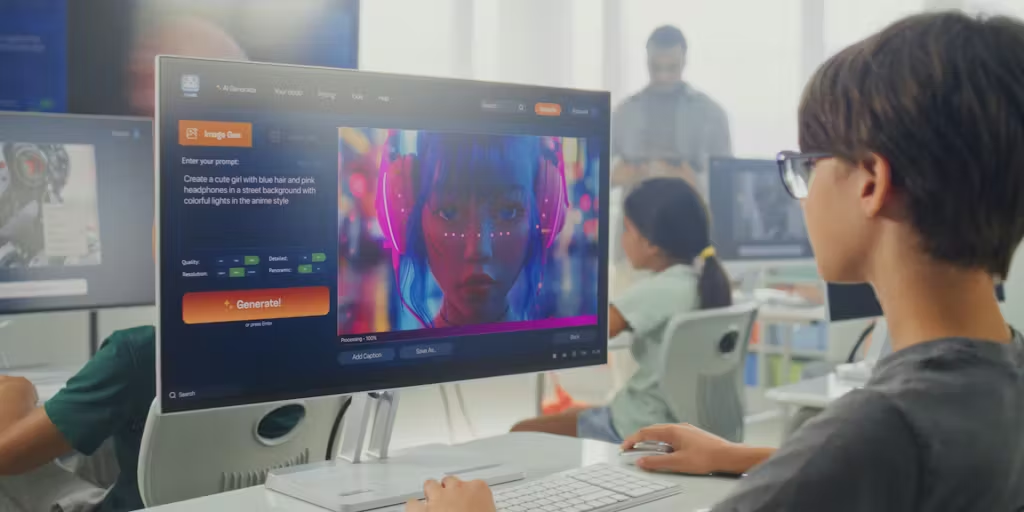

From “Hello, World!” to AI: What Skills Actually Prepare Students for the Future?

This article is part of the collection: Teaching Tech: Navigating Learning and AI in the Industrial Revolution.

A little over a decade ago, schools were swept into what many described as a movement to prepare students for the future of work. That work was coding, and it started with “Hello, world!”

Districts introduced new courses, nonprofits expanded access to computer science education and a growing ecosystem of programs promised to teach students the skills needed to enter the tech workforce. For many, it felt like a necessary correction to a rapidly digitizing world. But over time, a more complicated picture emerged.

While access to computer science education expanded, the relationship between early coding exposure and long-term workforce outcomes became uneven. The “learn to code” movement raised an important question that still lingers today: Which skills actually endure when technologies change? That question has resurfaced in a new form.

Today, generative AI is driving a similar wave of urgency. Schools are once again being encouraged to adapt quickly, often with the same underlying rationale that teachers must prepare students for a future shaped by emerging technologies.

But if the instructional role of AI remains unclear, and if the tools themselves are likely to evolve rapidly, the more persistent challenge may lie elsewhere.

In a two-year research project conducted alongside teachers who are adapting to AI and open to integrating it, we found that uptake is still minimal. Most of our participants, including engineering and computer science teachers, still struggle to identify a clear or universal instructional use case for widespread AI integration.

So, what should students learn to help them adapt to whatever comes next?

A growing body of research suggests that the answer may lie not in teaching students how to use a particular AI system, but in helping them understand the computational ideas that make those systems possible.

The Limits of Teaching the Tool

In recent years, many discussions about AI education have centered on teaching students how to use generative tools effectively. Prompt engineering, for example, has become a common topic in professional development workshops and online tutorials.

Yet focusing heavily on tool-specific skills can create a familiar educational problem: technology changes faster than curricula.

Teaching students how to interact with a specific interface risks becoming the equivalent of teaching to standardized tests, rather than teaching students important lessons that don’t appear on state exams.

The history of computing education offers a useful example. In the early 2010s, a wave of coding initiatives encouraged schools to teach programming skills broadly. While many of those programs expanded access to computer science education, subsequent analysis showed that workforce pipelines in technology remained uneven, and many students learned tool-specific skills without developing deeper computational reasoning abilities.

That experience offers a cautionary lesson for the current AI moment. If the goal of integrating AI into education is long-term preparation for technological change, focusing narrowly on how to use today’s tools may not be the most durable strategy.

The Skill That Outlasts the Tool

Research increasingly points to computational thinking as a more durable educational objective.

Computational thinking refers to a set of problem-solving practices used in computer science and other analytical disciplines. These include:

- breaking complex problems into smaller components

- recognizing patterns

- designing step-by-step processes

- evaluating the outputs of automated systems
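To make the four practices concrete, here is a minimal Python sketch of a hypothetical classroom exercise. The task, the step-count data and the "chatbot" answer are invented for illustration and are not taken from the study. Each function maps to one practice in the list, and the final check treats an automated system's output as something to verify rather than accept.

```python
# Hypothetical exercise: report weekly averages of daily step counts,
# then check an (invented) automated answer against our own calculation.

def split_into_weeks(days):
    """Decomposition: break the full list into 7-day chunks."""
    return [days[i:i + 7] for i in range(0, len(days), 7)]

def average(values):
    """Step-by-step process: add the values, then divide by how many there are."""
    return sum(values) / len(values)

def weekly_averages(days):
    """Pattern recognition: the same averaging step repeats for every week."""
    return [average(week) for week in split_into_weeks(days)]

def check_answer(claimed, expected, tolerance=0.01):
    """Evaluating outputs: compare an automated system's claim to our result."""
    return abs(claimed - expected) <= tolerance

steps = [4000, 5200, 6100, 3900, 7000, 8200, 5600,
         6000, 6400, 5800, 7100, 6900, 4300, 5000]
averages = weekly_averages(steps)
print([round(a, 2) for a in averages])  # → [5714.29, 5928.57]

claimed_first_week = 6000.0  # hypothetical chatbot answer for week one
print(check_answer(claimed_first_week, averages[0]))  # → False
```

The point of the exercise is the last line: students only know the automated answer is wrong because they broke the problem down and computed it themselves.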

These skills apply not only to programming but also to fields ranging from engineering to public policy.

Importantly, they also help students understand how algorithmic systems operate.

When students learn computational thinking, they gain the ability to analyze how technologies like AI produce results rather than simply accepting those results as authoritative.

In this sense, computational thinking provides a conceptual bridge between traditional academic skills and emerging digital systems.

What Teachers Are Already Doing

Many teachers in our study were already moving in this direction, often without using the term computational thinking.

When teachers asked students to analyze chatbot errors, they were encouraging students to examine how algorithmic systems produce outputs. When they designed exercises comparing training data and algorithms to everyday processes, they were helping students reason about how automated systems work.

These approaches do not require students to rely heavily on AI tools themselves. Instead, they position AI as a case study for examining how technology shapes information.

That framing aligns with longstanding educational goals around critical thinking, media literacy and problem-solving.

Implications for Educators

If the instructional use case for generative AI remains uncertain, educators may benefit from focusing on skills that remain valuable regardless of which tools dominate in the future.

Several practical approaches are already emerging in classrooms. Teachers can use AI systems as objects of analysis, asking students to evaluate outputs, identify errors and investigate how models generate responses.

Lessons can connect AI to broader topics such as data quality, algorithmic bias and information reliability.

Assignments that emphasize reasoning, structured problem solving and evidence evaluation continue to support the kinds of cognitive work that remain central to learning.

These approaches allow students to engage with AI without allowing the technology to replace the thinking process itself.

Implications for EdTech Developers

The experiences teachers described also highlight an opportunity for edtech companies.

Many current AI tools were developed as general-purpose language systems and later introduced into education contexts. As a result, teachers are often left to determine whether and how those tools align with classroom learning goals. Future products may benefit from deeper collaboration with educators during the design process.

Teachers in our conversations were already experimenting with small classroom applications, designing AI literacy lessons and building course-specific chatbots.

These experiments resemble early-stage product development.

Partnerships between educators, edtech developers and product managers could help identify instructional problems that AI systems could realistically address.

The Next Phase of the Research

The conversations described in this series represent an early attempt to document how teachers are navigating the arrival of generative AI.

As schools continue experimenting with these tools, the next challenge will be to develop governance frameworks that help educators evaluate when and how AI should be used in learning environments.

Our research team is beginning the next phase of this work by partnering with school districts to develop guidance for AI governance and by inviting interested edtech companies to explore these questions with us.

Rather than assuming that AI will inevitably transform classrooms, this phase of the project will focus on identifying the conditions under which AI tools actually support teaching and learning and how to reduce harm when they don’t.

The fourth grade teacher’s question remains a useful guide: What can I actually use this for in math?

Until the answer becomes clearer, many teachers will likely continue doing what professionals in any field do when new technologies appear: experimenting cautiously, adopting what works and relying on their judgment to decide whether, and where, the tool belongs.

If your school, district, organization, or edtech company is interested in learning more about joining our next project on AI governance, contact our research team at research@edsurge.com.

French AI start-up Mistral raises $830m in debt

The Paris-based company is building out ‘cutting-edge’ European data centres with a total capacity ambition of 200MW by 2027.

French AI start-up Mistral has raised $830m in its first debt financing to fund its data centre near Paris.

The company said the deal, supported by a consortium of seven “top-tier” global banks, would pay for Nvidia Grace Blackwell infrastructure with 13,800 Nvidia GB300 GPUs at the “cutting-edge” centre, bringing powered capacity to 44MW.

The data centre at Bruyères-le-Châtel, scheduled to be operational in the first half of this year, was previously earmarked to train AI models belonging to Mistral and its customers, while also “delivering high-performance inference services”, according to the company.

Last month, Mistral said it would spend over $1.4bn in Sweden on digital infrastructure, including a data centre, building towards its stated goal of 200MW of capacity across Europe by 2027.

“Scaling our infrastructure in Europe is critical to empower our customers and to ensure AI innovation and autonomy remain at the heart of Europe,” said Arthur Mensch, CEO of Mistral AI.

“We will continue to invest in this area, given the surging and sustained demand from governments, enterprises and research institutions seeking to build their own customised AI environment, rather than depend on third-party cloud providers.”

Mistral said it is building a “vertically integrated AI company” comprising “frontier open-weight models, deep enterprise integration, production deployments and its own compute infrastructure”.

It counts organisations in the tech, retail, logistics and public sectors among its customers, and has already partnered with the likes of ASML, Ericsson and the European Space Agency to train models on their proprietary data.

Earlier this month, Mistral launched two products: ‘Small 4’, the newest model in its fully open-source ‘Small’ series, which aims to consolidate the capabilities of its flagship models; and ‘Forge’, a platform that lets enterprises build custom models trained on their own data.

Last September, the 2023-founded French AI darling announced a Series C raise of around $2bn at a post-money valuation of more than $13bn, led by Dutch chipmaker ASML. Existing investors DST Global, Andreessen Horowitz, Bpifrance, General Catalyst, Index Ventures, Lightspeed and Nvidia took part.

Although a frontrunner in the European AI space, Mistral is far behind US competitors such as OpenAI and Anthropic in terms of funding levels and valuations.

Mistral is a founding member of the Nvidia Nemotron Coalition. As part of the initiative, Mistral and Nvidia plan to co-develop frontier open-source AI models.

NASA Picks Intuitive Machines for a 2030 Artemis Moon Delivery Loaded with Science Tools and a Human Time Capsule

NASA has awarded Intuitive Machines a $180.4 million contract to deliver seven science payloads to a carefully chosen site near the lunar south pole. The Houston-based company will use one of its larger lander configurations for the mission, designated IM-5, with a target landing date of around 2030 at Mons Malapert. The location was selected for good reason: the ridge maintains fairly consistent line of sight with Earth, receives relatively steady sunlight, and sits close to permanently shadowed regions that may hold water ice, a resource that could prove critical to sustaining long-term human operations on the Moon.

The lander arrives loaded with instruments ready to start collecting data from the moment it touches down. A stereo camera package developed at NASA’s Langley Research Center, called the Stereo Cameras for Lunar Plume Surface Studies, will capture how the descent engines disturb the fine lunar soil, information that will help engineers design landing systems that cause less disruption to the surface. A near-infrared spectrometer mounted on a small rover from Honeybee Robotics, led by NASA’s Ames Research Center, will then scan for minerals and potential ice deposits while also measuring surface temperatures and mapping how the soil composition varies across the landing area.

A mass spectrometer called MSolo, built at NASA’s Kennedy Space Center, will analyze gases present at the landing site immediately after touchdown, focusing on lightweight molecules that could prove useful for future lunar explorers. Radiation monitoring is handled by a set of four detectors developed by the Korea Astronomy and Space Science Institute, measuring surface exposure levels to assess risks for both equipment and future crew while also providing insight into the geological history of the surrounding area.

A set of small sensors aboard the Australian Space Agency’s Roo-ver, part of NASA Goddard Space Flight Center’s Multifunctional Nanosensor Platform, will track how landing plumes interact with surface materials across varying distances over time. The Roo-ver will also demonstrate its ability to navigate and move independently across uneven lunar terrain. A Laser Retroreflector Array, also from Goddard, adds a compact set of mirrors designed to bounce laser signals back to orbiting spacecraft, improving navigation accuracy for future missions passing overhead or coming in to land nearby and helping establish reliable reference points across the lunar surface.

Rounding out the cargo is Sanctuary on the Moon, a time capsule developed in France containing information about human civilization, science, technology, culture, and the human genome, etched onto 24 durable synthetic sapphire discs. It is built to last, and designed to be found.

Google’s new compression drastically shrinks AI memory use while quietly speeding up performance across demanding workloads and modern hardware environments

- Google TurboQuant reduces memory strain while maintaining accuracy across demanding workloads

- Vector compression reaches new efficiency levels without additional training requirements

- Key-value cache bottlenecks remain central to AI system performance limits

Large language models (LLMs) depend heavily on internal memory structures that store intermediate data for rapid reuse during processing.

One of the most critical components is the key-value cache, described as a “high-speed digital cheat sheet” that avoids repeated computation.

This mechanism improves responsiveness, but it also creates a major bottleneck because high-dimensional vectors consume substantial memory resources.
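As a rough illustration of why the cache gets so large, a common back-of-the-envelope estimate multiplies two vectors per token (one key, one value) by the number of layers, key-value heads and the head dimension. The sketch below assumes that standard layout and 16-bit storage by default. The model shape in the usage line is hypothetical and not tied to any particular LLM.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   batch_size=1, bits_per_value=16):
    """Estimate key-value cache size in bytes.

    The factor of 2 counts one key vector and one value vector
    per head, per layer, per token.
    """
    values = 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size
    return values * bits_per_value / 8

# Hypothetical model shape with a 128K-token context.
size = kv_cache_bytes(num_layers=32, num_kv_heads=8, head_dim=128,
                      seq_len=131_072)
print(size / 2**30, "GiB")  # → 16.0 GiB
```

The estimate grows linearly with context length and batch size, which is why long-context workloads hit the memory wall first, and why cutting `bits_per_value` is the lever compression methods pull.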

Memory bottlenecks and scaling pressure

As models scale, this memory demand becomes increasingly difficult to manage without compromising speed or accessibility in modern LLM deployments.

Traditional approaches attempt to reduce this burden through quantization, a method that compresses data by lowering its numerical precision.

However, these techniques often introduce trade-offs, particularly reduced output quality or additional memory overhead from stored constants.
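The "stored constants" overhead is easiest to see in a conventional scheme. The sketch below assumes simple symmetric per-block quantization, one common approach and not necessarily what any specific system uses. Each block of values becomes small integers plus one full-precision scale. With a hypothetical 16-bit scale shared by 32 values, a nominal 4-bit format actually costs 4.5 bits per value.

```python
import math

def quantize_blocks(values, bits=4, block_size=32):
    """Symmetric per-block quantization to signed integer codes.

    Each block keeps its codes plus one floating-point scale; those
    per-block scales are the stored constants that add memory overhead.
    """
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed codes
    blocks = []
    for start in range(0, len(values), block_size):
        block = values[start:start + block_size]
        peak = max(abs(v) for v in block)
        scale = peak / qmax if peak > 0 else 1.0  # avoid dividing by zero
        codes = [round(v / scale) for v in block]
        blocks.append((scale, codes))
    return blocks

def dequantize_blocks(blocks):
    """Rebuild approximate values by multiplying each code by its block scale."""
    return [scale * code for scale, codes in blocks for code in codes]

def effective_bits(bits, block_size, scale_bits=16):
    """Average storage per value once the per-block scale is counted."""
    return bits + scale_bits / block_size

data = [math.sin(i) for i in range(64)]  # toy stand-in for cached vectors
blocks = quantize_blocks(data)
restored = dequantize_blocks(blocks)
max_err = max(abs(a - b) for a, b in zip(data, restored))
print(f"max error {max_err:.4f}, effective bits {effective_bits(4, 32)}")
```

Shrinking `block_size` lowers the rounding error but raises the per-value cost of the scales, which is the trade-off the paragraph above describes.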

This tension between efficiency and accuracy remains unresolved in many existing systems that run AI models at scale.

Google’s TurboQuant introduces a two-stage process intended to address these long-standing limitations.

The first stage relies on PolarQuant, which transforms vectors from standard Cartesian coordinates into polar representations.

Instead of storing each directional component separately, the system condenses the information into radius and angle values. This compact shorthand reduces the need for repeated normalization steps and limits the overhead that typically accompanies conventional quantization methods.
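Swapping Cartesian components for a radius and an angle can be illustrated with a simplified, single-level sketch that pairs up coordinates. This is an illustration under stated assumptions rather than PolarQuant itself, which is not described here in detail: it skips any preprocessing the real method may apply, keeps radii at full precision, and quantizes only angles. Because an angle always lies in a fixed range, its quantizer needs no per-block scale or offset, which is one way a polar representation can trim normalization overhead. All function names are hypothetical.

```python
import math


def to_polar_pairs(vec: list[float]) -> list[tuple[float, float]]:
    """Turn consecutive coordinate pairs (x, y) into (radius, angle)."""
    if len(vec) % 2:
        raise ValueError("vector length must be even")
    return [(math.hypot(x, y), math.atan2(y, x)) for x, y in zip(vec[0::2], vec[1::2])]


def quantize_angle(theta: float, bits: int) -> int:
    """Map an angle in [-pi, pi] to one of 2**bits evenly spaced codes.

    The range is fixed, so no per-block scale or offset has to be stored.
    The modulo wraps +pi onto the same code as -pi (the same direction).
    """
    levels = 1 << bits
    step = 2 * math.pi / levels
    return round((theta + math.pi) / step) % levels


def dequantize_angle(code: int, bits: int) -> float:
    step = 2 * math.pi / (1 << bits)
    return code * step - math.pi


def from_polar_pairs(pairs) -> list[float]:
    """Rebuild Cartesian coordinates from (radius, angle) pairs."""
    out: list[float] = []
    for r, theta in pairs:
        out.extend((r * math.cos(theta), r * math.sin(theta)))
    return out


if __name__ == "__main__":
    vec = [0.6, 0.8, -1.0, 0.0]
    pairs = to_polar_pairs(vec)
    approx = from_polar_pairs(
        (r, dequantize_angle(quantize_angle(t, 4), 4)) for r, t in pairs
    )
    print(approx)  # close to vec, with 4-bit angles
```

With b-bit angles, each reconstructed pair lies within r·π/2^b of the original point, so accuracy is controlled by a single bit-width choice rather than by stored constants.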

The second stage applies Quantized Johnson-Lindenstrauss, or QJL, which functions as a corrective layer.

PolarQuant handles most of the compression but can leave small residual errors. QJL addresses these by reducing each vector element to a single bit, either positive or negative, while preserving the essential relationships between data points.
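The Johnson-Lindenstrauss part of the name refers to random projection, which approximately preserves inner products between vectors. A minimal sketch, assuming Gaussian projections and a sign-only encoding of the key, shows how an attention-style dot product can still be estimated from one bit per projected coordinate. It applies the idea to a whole key rather than to PolarQuant's residual, and the projection size, seed, and function names are hypothetical.

```python
import math
import random


def make_projection(m: int, d: int, seed: int = 0) -> list[list[float]]:
    """m x d matrix of i.i.d. standard Gaussian entries (the JL projection)."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(m)]


def dot(a, b) -> float:
    return sum(x * y for x, y in zip(a, b))


def qjl_encode_key(key, proj) -> tuple[list[int], float]:
    """Keep only the sign (+1/-1) of each projected coordinate, plus the key's norm."""
    bits = [1 if dot(row, key) >= 0 else -1 for row in proj]
    return bits, math.sqrt(dot(key, key))


def qjl_inner_product(query, bits, key_norm, proj) -> float:
    """Estimate <query, key> from the 1-bit key sketch; the query stays unquantized.

    For Gaussian g, E[sign(<g,k>) * <g,q>] = sqrt(2/pi) * <k,q> / ||k||,
    so rescaling the sample mean by sqrt(pi/2) * ||k|| gives an unbiased estimate.
    """
    m = len(proj)
    total = sum(b * dot(row, query) for b, row in zip(bits, proj))
    return math.sqrt(math.pi / 2) * key_norm * total / m


if __name__ == "__main__":
    q = [0.5, -0.2, 0.1, 0.7, 0.0, 0.3, -0.4, 0.2]
    k = [0.4, 0.1, -0.3, 0.6, 0.2, 0.0, -0.1, 0.5]
    proj = make_projection(m=4096, d=len(k), seed=42)
    bits, norm = qjl_encode_key(k, proj)
    print("exact:", dot(q, k), "estimate:", qjl_inner_product(q, bits, norm, proj))
```

The estimate's error shrinks roughly as 1/√m as the number of projections m grows. A practical implementation would pack the signs into actual bits rather than storing Python integers.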

This additional step refines attention scores, which determine how models prioritize information during processing.

According to reported testing, TurboQuant achieves efficiency gains across several long-context benchmarks using open models.

The system reportedly reduces key-value cache memory usage by a factor of six while maintaining consistent downstream results.

It also enables quantization to as little as three bits without requiring retraining, which suggests compatibility with existing model architectures.
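Storing values at three bits only saves memory if the codes are packed tightly rather than padded to a full byte each. The generic packing routine below is a sketch, not TurboQuant's actual storage layout, and its function names are hypothetical. Going from 16-bit to 3-bit values cuts storage by 16/3, about 5.3 times, before counting any extra metadata; the baseline behind the reported six-fold figure is not given here, so this arithmetic is only illustrative.

```python
def pack_codes(codes: list[int], bits: int) -> bytes:
    """Pack unsigned integer codes of width `bits` into bytes, least significant bit first."""
    acc = 0    # bit accumulator
    nbits = 0  # number of valid bits currently in acc
    mask = (1 << bits) - 1
    out = bytearray()
    for c in codes:
        if not 0 <= c <= mask:
            raise ValueError(f"code {c} does not fit in {bits} bits")
        acc |= c << nbits
        nbits += bits
        while nbits >= 8:  # flush every complete byte
            out.append(acc & 0xFF)
            acc >>= 8
            nbits -= 8
    if nbits:  # final partial byte, zero-padded
        out.append(acc & 0xFF)
    return bytes(out)


def unpack_codes(data: bytes, bits: int, count: int) -> list[int]:
    """Inverse of pack_codes: read `count` codes of width `bits`."""
    acc = 0
    nbits = 0
    mask = (1 << bits) - 1
    codes: list[int] = []
    stream = iter(data)
    while len(codes) < count:
        while nbits < bits:  # pull in bytes until a full code is available
            acc |= next(stream) << nbits
            nbits += 8
        codes.append(acc & mask)
        acc >>= bits
        nbits -= bits
    return codes
```

For example, 128 three-bit codes pack into 48 bytes, versus 256 bytes for the same number of 16-bit values.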

The reported results also include gains in processing speed, with attention computations running up to eight times faster than standard 32-bit operations on high-end hardware.

These results indicate that compression does not necessarily degrade performance under controlled conditions, although such outcomes depend on benchmark design and evaluation scope.

The system could also lower operating costs by reducing memory demands and make it easier to deploy models on constrained devices with limited processing resources.

At the same time, freed resources may instead be redirected toward running more complex models, rather than reducing infrastructure demands.

While the reported results appear consistent across multiple tests, they remain tied to specific experimental conditions.

The broader impact will depend on real-world implementation, where variability in workloads and architectures may produce different outcomes.


Tech

Apple’s Early Days: Massive Oral History Shares Stories About Young Wozniak and Jobs

Apple’s 50th anniversary is this week — and Fast Company’s Harry McCracken just published an 11,000-word oral history with some fun stories from Apple’s earliest days and the long and winding road to its very first home computers:

Steve Wozniak, cofounder, Apple: I told my dad when I was in high school, “I’m going to own a computer someday.” My dad said, “It costs as much as a house.” And I sat there at the table — I remember right where we were sitting — and I said, “I’ll live in an apartment.” I was going to have a computer if it was ever possible. I didn’t need a house.

Woz even remembers trying to build a home computer early on with a teenaged Steve Jobs and Bill Fernandez from rejected parts procured from local electronics companies. Woz designed it — “not from anybody else’s design or from a manual. And Fernandez was one of those kids that could use a soldering iron.”

Bill Fernandez: The computer was very basic. It was working, and we were starting to talk about how we could hook a teletype up to it. Mrs. Wozniak called a reporter from the San Jose Mercury, and he came over with a photographer. We set up the computer on the floor of Steve Wozniak’s bedroom.

Well, the core integrated circuit that ran the power supply that I built was an old reject part. We turned on the computer, and the power supply smoked and burnt out the circuitry. So we didn’t get our photos in the paper with an article about the boy geniuses.

But within a few years Jobs and Wozniak both wound up with jobs at local tech companies. Atari cofounder Nolan Bushnell remembers that Steve Jobs “wasn’t a good engineer, but he was a great technician. He was pristine in his ability to solder, which was actually important in those days.” Meanwhile Allen Baum had shared Wozniak’s high school interest in computers, and later got Woz a job working at Hewlett-Packard — where employees were allowed to use stockroom parts for private projects. (“When he needed some parts, even if we didn’t have them, I could order them.”) Baum helped with the Apple I and II, and joined Apple a decade later.

Wozniak remembers being inspired to build that first Apple I by the local Homebrew Computer Club, people “talking about great things that would happen to society, that we would be able to communicate like we never did [before] and educate in new ways. And being a geek would be important and have value.” And once he’d built his first computer, “I wanted these people to help create the revolution. And so I passed out my designs with no copyright notices — public domain, open source, everything. A couple of other people in the club did build it.”

But Woz and Jobs had even tried pitching the computer as a Hewlett-Packard product, Woz remembers:

Steve Wozniak: I showed them what it would cost and how it would work and what it could do with my little demos. They had all the engineering people and the marketing people, and they turned me down. That was the first of five turndowns from Hewlett-Packard. Steve Jobs and I had to go into business on our own.

In the end, Randy Wigginton, Apple employee No. 6, remembers witnessing Jobs, Wozniak, and Ronald Wayne sign Apple’s founding contract, “which is pretty funny, because I was 15 at the time.” And it was Allen Baum’s father who gave Wozniak and Jobs the bridge loan to buy the parts they’d need for their first 500 computers.

After all the memories, the article concludes that “Trying to connect every dot between Apple, the tiny, dirt-poor 1970s startup, and Apple, the $3.7 trillion 21st-century global colossus, is impossible.”

But this much is clear: The company has always been at its best when its original quirky humanity and willingness to be an outlier shine through.

Mark Johnson, Apple employee No. 13: I was in Cupertino just yesterday. It’s totally different. They own Cupertino now.

Jonathan Rotenberg, who cofounded the Boston Computer Society in 1977 at age 13: People want to hate Apple, because it is big and powerful. But Apple has an underlying moral purpose that is immensely deep and expansive…

Mike Markkula, the early retiree from Intel whose guidance and money turned the garage startup into a company: The culture mattered. People were there for the right reasons — to build something transformative — not just to make money. That alignment produced extraordinary results…

Steve Wozniak: Everything you do in life should have some element of joy in it. Even your work should have an element of joy… When you’re about to die, you have certain memories. And for me, it’s not going to be Apple going public or Apple being huge and all that. It’s really going to be stories from the period when humble people spotted something that was interesting and followed it.

I’ll be thinking of that when I die, along with a lot of pranks I played. The important things.
