TL;DR

A People Analytics study of 205 tech professionals found that early employee attrition is driven more by stalled career momentum than by workplace culture. Promotions, internal mobility, and visible growth opportunities were the strongest predictors of retention, while team socialisation had little measurable impact.

I went into this research convinced I already knew the answer.

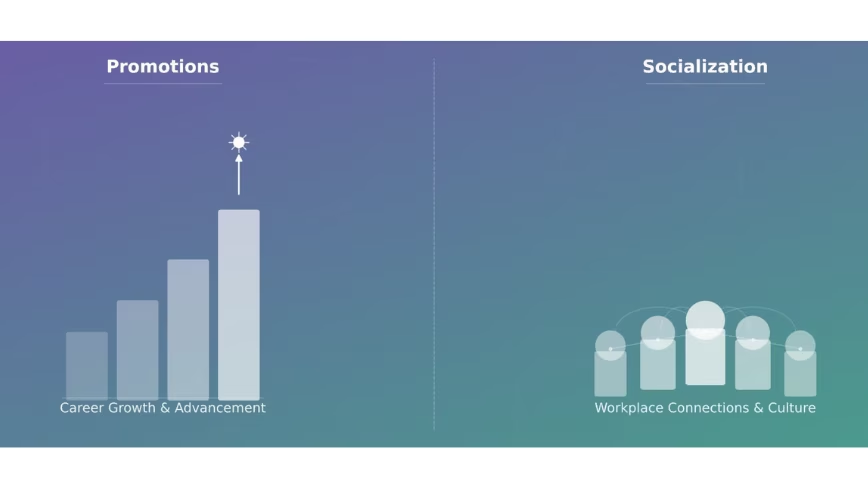

After more than a decade in People Analytics, the last few years at Meta, I had a working theory about why tech employees leave their jobs within the first year. Two things, I believed, were doing most of the damage: whether someone was getting promoted, and how often they were socialising with their immediate team outside of work. The first felt obvious. The second felt like the kind of human factor the industry consistently underweights.

I was half right.

When I surveyed 205 tech professionals globally and trained a machine learning model to predict early attrition, promotions came out as the single strongest signal in the dataset. But socialisation? It barely registered. And the factors that did matter alongside promotions pointed somewhere I hadn’t fully anticipated. Early attrition in tech isn’t primarily a culture problem. It’s a career momentum problem.

That finding changed how I think about retention. I suspect it might do the same for you.

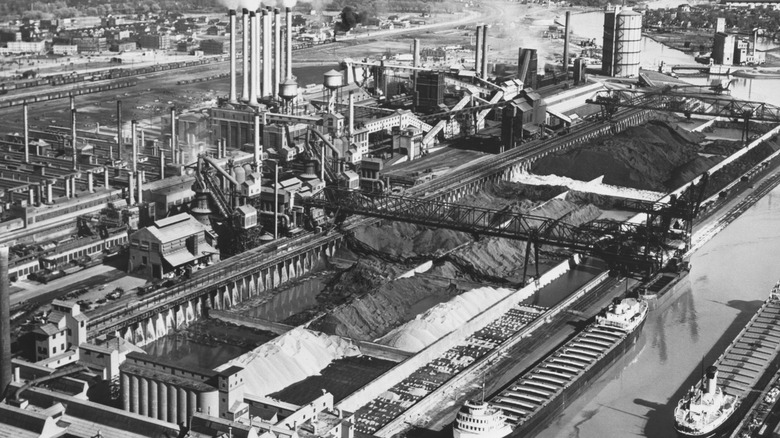

Tech has always had an attrition problem

The technology industry has one of the highest attrition rates of any sector. Median tenure at many tech companies sits at around one year, regardless of company size. This isn’t a post-pandemic hangover or a hot job market anomaly. It’s been the structural baseline for as long as the industry has existed, and the industry has never really solved it.

The costs are well documented. Replacing an employee can run up to 2.5 times their salary once you factor in recruiting, onboarding, lost productivity and the institutional knowledge that walks out the door with them. Research suggests that a single standard deviation increase in attrition rate correlates with an 8.9% drop in profits. In an era where tech companies are simultaneously pouring billions into AI infrastructure and scrutinising every other line of their cost base, haemorrhaging money on preventable attrition is a harder position to defend than it used to be.

What’s less well understood is why the problem persists despite enormous investment in trying to fix it. Tech companies spend heavily on perks, engagement programmes, culture initiatives and manager training. Some of it works at the margins. None of it has bent the curve in any meaningful way.

Part of the reason, I’d argue, is that most retention efforts are reactive. Someone signals they’re unhappy, or worse, hands in their notice, and the response kicks in. By then it’s usually too late. The question that has always interested me professionally isn’t how to respond to attrition once it’s happening. It’s whether you can see it coming early enough to do something about it.

There was no dataset for this, so I made one

The first problem I ran into was data. There’s no shortage of public datasets on employee attrition, but almost none of them specify industry. The most widely used one is a fictional HR dataset created by IBM data scientists, which has been recycled across dozens of academic studies. It’s clean, it’s accessible, and it tells you nothing specific about the technology sector.

So I built my own. I designed a 24-question survey and distributed it globally to professionals in the tech industry, with one hard requirement: both their current and previous employers had to be technology companies. After removing duplicates and incomplete responses, I had 205 usable records. Not a massive dataset by industry standards, but clean, specific, and purpose-built for the question I was trying to answer.

I defined “early attrition” as leaving a job within the first year. Every respondent was then classified as either an early leaver or not, and that classification became the target the model was trained to predict.

From there, I trained five machine learning algorithms on the data and tested each one across multiple configurations. I used the F1 score rather than simple accuracy to measure performance, and the reason matters. A model predicting whether someone left within a year could technically achieve high accuracy just by labelling everyone “stayed longer”, since that’s the more common outcome. The F1 score, the harmonic mean of precision and recall, exposes that shortcut and gives a more honest picture of how well the model is actually working. The best-performing setup combined an algorithm called Extra Trees Classifier with a technique called SMOTE, which addressed the imbalance in the dataset by generating synthetic examples of the minority class. That combination achieved an F1 score of 0.97 out of a possible 1.
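To make the accuracy trap concrete, here is a minimal sketch in plain Python. The 20/80 split between early leavers and everyone else is invented for illustration and is not the survey's actual class balance; the function names are likewise hypothetical helpers, not the code used in the study.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Count true positives, false positives and false negatives."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p == positive)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t != positive and p == positive)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p != positive)
    return tp, fp, fn


def accuracy(y_true, y_pred):
    """Share of predictions that match the true label."""
    return sum(1 for t, p in zip(y_true, y_pred) if t == p) / len(y_true)


def f1_score(y_true, y_pred, positive=1):
    """Harmonic mean of precision and recall for the positive class."""
    tp, fp, fn = confusion_counts(y_true, y_pred, positive)
    if tp == 0:
        # No positives found: recall is zero, so F1 is zero as well.
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Hypothetical labels: 1 = left within the first year, 0 = stayed longer.
y_true = [1] * 20 + [0] * 80

# A "model" that labels everyone as having stayed longer.
always_stayed = [0] * 100

baseline_accuracy = accuracy(y_true, always_stayed)
baseline_f1 = f1_score(y_true, always_stayed)
print(f"accuracy={baseline_accuracy:.2f}, F1={baseline_f1:.2f}")
# prints: accuracy=0.80, F1=0.00
```

The do-nothing baseline looks respectable on accuracy and scores zero on F1, because it never identifies a single early leaver.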

The model worked. The more interesting question was what it had learned.

Promotion was the loudest signal in the room

Of all the variables in the dataset, the number of times someone had been promoted in their previous job was the single strongest predictor of whether they left within the first year. The correlation was -0.54, which in plain terms means this: the fewer promotions someone had received, the more likely they were to be an early attrition. Not marginally more likely. Significantly more likely.
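For readers who want to see where a figure like -0.54 comes from, the sketch below computes a correlation between promotion count and a binary early-attrition flag. It assumes a Pearson coefficient, which is an assumption rather than a stated detail of the study, and the eight records are invented toy data, not survey responses.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Invented toy records (not the survey data).
# promotions: number of promotions in the previous job
# left_early: 1 = left within the first year, 0 = stayed longer
promotions = [0, 0, 0, 1, 1, 2, 2, 3]
left_early = [1, 1, 1, 1, 0, 0, 0, 0]

r = pearson(promotions, left_early)
print(f"r = {r:.2f}")  # negative: fewer promotions go with more early exits
```

A negative value means the two variables move in opposite directions; the closer it sits to -1, the tighter that relationship is in the sample.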

This confirmed half of my original hypothesis, and it shouldn’t surprise anyone who has worked in tech. Promotion isn’t just a title change or a pay increase. For most people, especially earlier in their careers, it’s the primary signal that the company sees them and is investing in their future. When that signal doesn’t come, people start looking for it elsewhere.

Nearly half of the respondents in my survey, 49%, had never been promoted in their previous job. That number sat with me. In an industry that prides itself on meritocracy and moving fast, nearly half the sample had never received a single formal recognition of progression. The model was picking up on something that was hiding in plain sight.

Alongside promotions, three other factors emerged as meaningful predictors. Each one is worth unpacking individually because the directionality isn’t always what you’d expect.

Age. Younger workers were significantly more likely to be early attritions. The correlation between age and early departure was -0.49, meaning the older the respondent, the less likely they were to have left within the first year. This makes intuitive sense when you think about it from a career psychology perspective. Earlier-career employees carry less sunk cost, face more aggressive recruiting from competitors, and tend to have higher expectations of rapid progression. When those expectations aren’t met quickly, they move. For HR leaders, this means early-career and new-graduate hires deserve disproportionate attention in the first twelve months. Visible career pathing and early promotion signals aren’t a nice-to-have for this cohort. They’re retention infrastructure.

Internal role changes. This one cuts against a common assumption. Employees who had experienced more role changes within their previous company were actually less likely to have been early attritions, with a correlation of -0.49. The instinct is often to treat internal mobility as a sign of restlessness. The data suggests the opposite. Movement inside a company appears to be a marker of engagement and investment, not instability. People who get moved around, who change teams or functions, are people who have been given reasons to stay invested. Rotational programmes and internal transfers aren’t just good for skill development. They’re retention tools.

Manager changes. The most counterintuitive finding in the dataset. Employees who had experienced more manager changes in their previous company were less likely to have left within the first year, with a correlation of -0.44. The assumption most people make is that manager instability drives attrition, and there is plenty of research supporting that at a general level. But within this dataset, the relationship ran the other way.

One thing worth being transparent about across both of these findings: someone who left within the first year simply had less time to accumulate role changes or manager changes than someone who stayed longer. That tenure effect is real and worth acknowledging. But the directional signal still holds. Employees who had weathered multiple manager changes or moved across teams had, by definition, found reasons to stay through organisational disruption. They had built enough roots that a change in reporting line or a shift in scope wasn’t enough to push them out. The dependency on any single manager or single role appears to be highest in the early months, before an employee has built broader organisational depth.

Taken together, these four factors point toward a consistent underlying pattern. Early attrition in tech tends to cluster around employees who are younger, less promoted, less mobile internally, and less embedded in the organisation. They haven’t been stagnant necessarily, but they haven’t been invested in either. The model wasn’t identifying people who were inherently likely to leave. It was identifying people who hadn’t yet been given enough reasons to stay.

The socialisation hypothesis didn’t survive contact with the data

I want to be honest about the part of my hypothesis that was wrong, because I think it’s actually the more instructive finding.

My original assumption was that how frequently employees socialised with their immediate teammates outside of work would be a meaningful predictor of early attrition. The logic felt sound. A sense of belonging, of actually liking the people you work with enough to spend time with them voluntarily, seemed like exactly the kind of human glue that keeps people in their seats during the first year when everything else is still uncertain.

The data didn’t support it. Socialisation frequency came out as one of the weakest signals in the entire model, with a near-zero correlation to early attrition after balancing the dataset.

I’ve thought about why that might be. One possibility is that socialisation outside work is a symptom of a good job rather than a cause of staying in one. People socialise with their teams when things are going well, when they feel settled, when they’re not spending their evenings on LinkedIn. It may be more of a trailing indicator than a leading one. Another possibility is that within the specific context of the first year, career momentum simply carries more weight than social connection. You can like your team and still leave if you’re not being promoted, not being moved, not being invested in.

What this tells me, practically, is that companies leaning heavily on culture and social programming as a retention strategy may be solving for the wrong thing, at least in the early tenure window. Those investments aren’t wasted. But if promotion cadence and internal mobility are broken underneath, no amount of team offsites is going to close the gap.

The signal is already in your data

Here is what I find most striking about these findings. None of the factors the model identified as predictive of early attrition are hidden. They’re not buried in sentiment data or detectable only through expensive listening programmes. Promotion history, age, internal mobility, manager changes. Every one of those data points exists in your HRIS right now. Most companies are sitting on the signal and not reading it.

The pattern the model learned to recognise looks something like this. An employee who is earlier in their career, has been in role for several months without a promotion conversation on the horizon, has never moved teams or changed scope internally, and whose entire organisational identity is still tied to a single manager they may or may not have a strong relationship with. That person is not necessarily unhappy yet. They may not have even consciously decided to leave. But the conditions for early attrition are already in place.

The traditional response to that situation, if it gets noticed at all, is reactive. A skip-level conversation after someone flags dissatisfaction. A retention offer after a competing offer has already landed. A manager coaching conversation after the engagement survey comes back low. By that point the decision is usually already made, or close to it.

What the data suggests is that the intervention window is much earlier than most organisations treat it. The first six months of someone’s tenure is when the pattern is being set. Are they getting feedback that signals a future at this company? Are they being considered for stretch opportunities or cross-functional projects? Is someone actively managing their career trajectory, or are they simply being left to onboard and get on with it?

This doesn’t require a machine learning model to act on. It requires People Analytics teams and HR business partners to start treating early tenure as a risk period that deserves structured attention, not just a probationary formality. Simple cohort analysis on your existing workforce data can surface who is sitting in the high-risk pattern right now. Who is under 30, has been in their role for more than eight months, has never changed teams, and has not had a promotion discussion documented? That list exists in your data today.
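The four-condition question above translates almost directly into a filter. What follows is a minimal sketch, assuming an HRIS export with one record per employee; the `Employee` fields, the IDs and the six records are all hypothetical, and a real export would need its own column mapping.

```python
from dataclasses import dataclass


@dataclass
class Employee:
    # Hypothetical field names standing in for HRIS columns.
    employee_id: str
    age: int
    months_in_role: int
    team_changes: int
    promotion_discussion_documented: bool


def in_high_risk_pattern(e):
    """True when a record meets all four conditions named in the text."""
    return (
        e.age < 30
        and e.months_in_role > 8
        and e.team_changes == 0
        and not e.promotion_discussion_documented
    )


# Invented records for illustration only.
workforce = [
    Employee("E001", 26, 10, 0, False),
    Employee("E002", 27, 10, 1, False),  # has changed teams
    Employee("E003", 41, 14, 0, False),  # not under 30
    Employee("E004", 24, 5, 0, False),   # fewer than eight months in role
    Employee("E005", 29, 9, 0, True),    # promotion discussion on file
    Employee("E006", 23, 12, 0, False),
]

at_risk_ids = [e.employee_id for e in workforce if in_high_risk_pattern(e)]
print(at_risk_ids)  # prints: ['E001', 'E006']
```

The thresholds are the ones quoted in the paragraph, not tuned values. Any organisation running this would want to adjust them to its own promotion cadence and treat the output as a prompt for a conversation rather than a prediction.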

The AI investment angle matters here too. At a moment when technology companies are making significant bets on artificial intelligence and scrutinising headcount and operational costs more carefully than they have in years, the economics of preventable attrition look different than they did in a looser environment. Losing an early-career employee within the first year and absorbing the cost of replacing them, which can reach up to 2.5 times their salary, is not just a talent problem. It is a financial inefficiency that sits alongside every other cost a leadership team is being asked to justify.

Retention, viewed through that lens, stops being a soft HR metric and starts looking like an operational priority.

The harder question isn’t who’s leaving. It’s who you’ve given a reason to stay

Predicting attrition is the easier half of the problem. I want to be clear about that. A model can learn to recognise the pattern of someone who hasn’t been invested in. What it can’t do is tell you why your organisation keeps producing that pattern, or what it would actually take to change it.

That’s the question I’d leave with every HR and People Analytics leader reading this. Not “how do we build a model like this” but “what would we find if we ran this kind of analysis on our own workforce today?” Because the data is there. The pattern is legible. The gap is almost always in whether anyone is looking for it with enough time to act.

Tech companies are currently navigating one of the more complicated cost environments the industry has seen in a while. AI infrastructure spending is accelerating at a pace that is putting real pressure on every other budget line. Headcount decisions are being made with more scrutiny. The tolerance for inefficiency, financial or operational, is lower than it has been in years. In that context, the cost of losing an employee within the first year and absorbing the full weight of replacing them sits in uncomfortable tension with the AI investment conversation happening in the same leadership meeting.

You cannot cut your way to efficiency while quietly haemorrhaging talent at the bottom of the tenure curve. The two conversations need to be in the same room.

The research I did was a starting point, not a solution. A survey of 205 professionals, a machine learning model, a set of findings that confirmed some assumptions and challenged others. What it pointed toward, more than anything, is that early attrition in tech is not mysterious. It follows a pattern. That pattern is detectable. And in most organisations, the data needed to detect it already exists.

The question is whether anyone is looking.
