Cerebras Systems has done what many chip startups aspire to but few ever achieve. On Thursday, the long-time Nvidia rival raised $5.55 billion in an initial public offering (IPO), valuing the company at more than $66 billion on its first day of trading.

The milestone didn’t happen overnight. It took more than a decade, a radically different approach to chipmaking, and two separate attempts at an IPO to pull off.

Cerebras Systems was founded in 2015 by former SeaMicro head Andrew Feldman, and its first chips looked nothing like the GPUs or AI accelerators of the time.

The bet that put Cerebras on the map

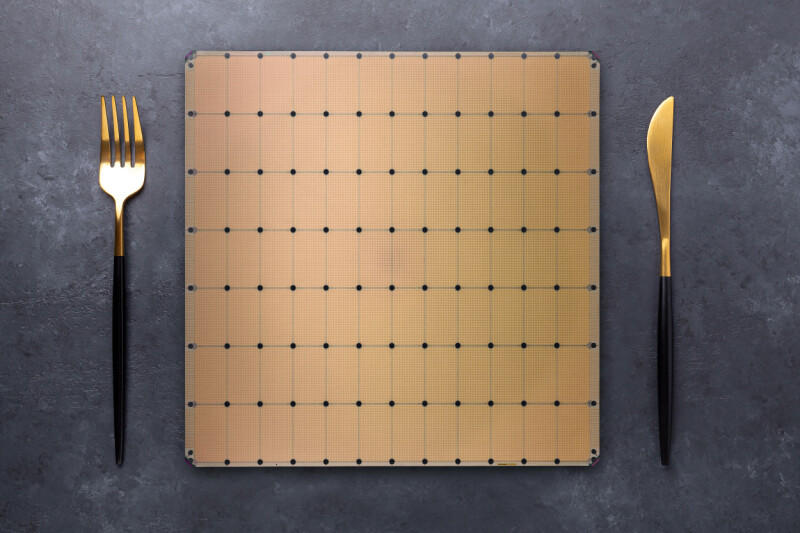

At the time, most high-end GPUs used dies measuring roughly 800 square mm that’d been cut from a larger wafer. Eight or more of these GPUs would typically be stitched together by high-speed interconnects, like NVLink, which allowed them to pool their resources and behave like one big accelerator.

Rather than cutting up a wafer into smaller chips just to reconnect them again, Cerebras figured why not etch all that compute into a wafer-sized chip? And so the Wafer-Scale Engine (WSE), a giant chip measuring 46,225 square mm — about the size of a dinner plate — was born.

Cerebras’ first chips weren’t just bigger; they were purpose-built for AI training and sported a novel compute engine designed to speed up the highly sparse matrix multiply-accumulate operations common in deep learning.

This hardware sparsity took advantage of the fact that large portions of a neural network’s parameters ultimately end up being zeros, allowing Cerebras to boost the effective computational output of its first-gen WSE accelerators from 2.65 16-bit petaFLOPS to 26.5.

Nvidia added support for sparsity in its Ampere generation a year later, but it only worked for a specific ratio (2:4), limiting its effectiveness to select use cases.
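To make the distinction concrete, here is a minimal Python sketch of the two ideas. It is our own illustration, not how either vendor's hardware is implemented: the function names and example values are hypothetical. The first function skips any zero weight wherever it appears, while the second forces the 2:4 pattern by keeping only the two largest-magnitude values in every group of four.

```python
def sparse_dot(weights, activations):
    """Dot product that skips zero weights; returns (result, MACs performed)."""
    total, macs = 0.0, 0
    for w, a in zip(weights, activations):
        if w == 0.0:
            continue  # a zero weight contributes nothing, so no work is issued
        total += w * a
        macs += 1
    return total, macs


def prune_2_of_4(weights):
    """Keep the 2 largest-magnitude values in each group of 4, zero the rest."""
    if len(weights) % 4 != 0:
        raise ValueError("length must be a multiple of 4")
    pruned = []
    for i in range(0, len(weights), 4):
        group = weights[i:i + 4]
        # indices of the two largest magnitudes within this group
        keep = sorted(range(4), key=lambda j: abs(group[j]), reverse=True)[:2]
        pruned.extend(v if j in keep else 0.0 for j, v in enumerate(group))
    return pruned


# Hypothetical weight vector that is 90 percent zeros
weights = [0.0] * 20
weights[3], weights[17] = 0.5, -1.0
acts = [1.0] * 20

result, macs = sparse_dot(weights, acts)
print(f"unstructured: {macs} MACs instead of {len(weights)}")

# A fully dense vector pruned to 2:4 still needs half of its MACs
dense = [0.1, -0.9, 0.3, 0.05, 0.7, 0.2, -0.4, 0.6]
_, macs_24 = sparse_dot(prune_2_of_4(dense), [1.0] * 8)
print(f"2:4 structured: {macs_24} MACs instead of {len(dense)}")
```

In this toy case, skipping zeros cuts the work tenfold, the same ratio as the 2.65 to 26.5 petaFLOPS jump quoted above, whereas the fixed 2:4 pattern can never save more than half the multiply-accumulates.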

To train a model, up to 16 of these chips could be ganged together over a high-speed interconnect. This was kind of important too, because unlike GPUs, which stored model weights in HBM or GDDR memory, Cerebras’ chips were almost entirely reliant on on-chip SRAM. Although SRAM is insanely fast, which is why it’s used for caches in basically every modern processor, it’s not particularly space efficient.

While Cerebras’ first wafer-scale accelerator could theoretically reach 9 petabytes per second of memory bandwidth, it was limited to just 18 GB of capacity at a time when Nvidia was already at 32 GB per GPU and about to make the leap to 40 GB or even 80 GB per chip.

Still, the approach was performant enough that for its second-generation wafer-scale accelerator, launched in 2021, Cerebras doubled down on the architecture.

While the WSE-2 wasn’t physically larger, the move to TSMC’s 7nm process tech allowed the company to more than double the transistor count, compute density, SRAM capacity, and bandwidth.

The chips also supported larger clusters, scaling up to 192 systems, though in practice these clusters were usually smaller, at between 16 and 32 systems per site.

It was also around this time that Cerebras caught the attention of United Arab Emirates-based cloud provider G42, which quickly became its largest financier. By mid-2023, the chip startup had secured orders worth $900 million for nine supercomputing sites with a combined 36 exaFLOPS of super sparse AI compute between them.

A year later, Cerebras made the jump to TSMC’s 5nm process with the WSE-3. While memory and bandwidth saw only modest gains, compute once again doubled, now topping 125 petaFLOPS of sparse (12.5 petaFLOPS dense) compute at 16-bit precision.

Cerebras’ CS-3 systems have since seen the company’s largest deployment, and now power the majority of the Condor Galaxy cluster it built for G42, as well as several new sites across North America and Europe.

Cerebras’ inference inflection

Up to mid-2024, Cerebras’ primary focus had been on training, but then the company announced a boutique inference-as-a-service offering to rival those from competing chip startups like Groq and SambaNova.

It turns out the massive SRAM capacity of Cerebras’ latest AI accelerators not only made them potent training accelerators but also particularly well suited to high-speed LLM inference.

In its third iteration, Cerebras’ wafer-scale accelerators boasted more memory bandwidth than they could realistically use. At 21 PB/s, the chip’s memory is nearly 1,000x faster than Nvidia’s new Rubin GPUs.

This, along with a dash of speculative decoding, allowed Cerebras to generate tokens far faster than any GPU-based system of the time. Even today, Cerebras routinely ranks among the fastest inference providers in the world.
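Why memory bandwidth matters so much for token generation can be shown with a back-of-the-envelope bound. The sketch below is ours and rests on loud assumptions: a single request stream, a dense model whose every weight is read once per generated token, and no allowance for KV cache traffic, capacity limits, or speculative decoding. The 20-billion-parameter model is hypothetical, and the GPU bandwidth is simply the 21 PB/s figure divided by 1,000.

```python
def max_decode_tokens_per_s(bandwidth_bytes_per_s, n_params, bytes_per_param):
    """Upper bound on single-stream decode speed when the chip is
    bandwidth-bound and reads every weight once per generated token."""
    bytes_per_token = n_params * bytes_per_param
    return bandwidth_bytes_per_s / bytes_per_token


WSE3_BW = 21e15           # 21 PB/s, the figure quoted above
GPU_BW = WSE3_BW / 1000   # assumption: "nearly 1,000x" slower, about 21 TB/s
N_PARAMS = 20e9           # hypothetical dense 20B-parameter model
BYTES_PER_PARAM = 2       # 16-bit weights

wafer = max_decode_tokens_per_s(WSE3_BW, N_PARAMS, BYTES_PER_PARAM)
gpu = max_decode_tokens_per_s(GPU_BW, N_PARAMS, BYTES_PER_PARAM)
print(f"wafer bound: {wafer:,.0f} tokens/s, GPU bound: {gpu:,.0f} tokens/s")
```

Real systems land well below either ceiling, and GPUs claw back throughput by batching many requests together, but the gap illustrates why an SRAM-based design has an edge on per-user generation speed.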

According to Artificial Analysis, Cerebras’ kit can churn out more than 2,200 tokens a second when running GPT-OSS 120B High, 2.8x faster than the next closest GPU cloud, Fireworks.

Cerebras didn’t know it at the time, but its inference platform would become a much bigger business than anyone had expected. In September 2024, the company filed its S-1 with the SEC to go public. Almost exactly a year later, Feldman quietly withdrew the filing, delaying the IPO.

His reasons? The company’s initial S-1 filing was rather concerning, as it showed G42 was responsible for 87 percent of its revenues. But in the year since launching its inference platform, Cerebras had racked up several high-profile customer wins from big names like Alphasense, AWS, Cognition, Meta, Mistral AI, Notion, and Perplexity. Feldman explained that the initial S-1 didn’t yet show the financial results of this growth. The company believed it would have a better story to tell investors later down the road.

Cerebras’ inference platform has only grown since then. The company has steadily expanded its footprint while announcing deeper relationships with AWS and adding OpenAI as a customer.

On Thursday, the startup officially joined the NASDAQ under the ticker CBRS, having raised $5.5 billion in the process. Shares skyrocketed nearly 70 percent on the first day of trading, as investors poured their money into a new way to play the AI boom.

An IPO is something many startups aspire to but few, especially in the cutthroat world of semiconductors, ever accomplish.

What happens now

From a technical perspective, Cerebras is overdue for a refresh.

The WSE-3 accelerators that pushed it over the IPO finish line are getting rather long in the tooth, and the architectural lead afforded by its SRAM-heavy design is shrinking.

Nvidia’s acquihire of Groq gave Feldman’s long-time rival an SRAM-packed inference platform of its own, while others are racing to catch up.

From here, we can only speculate, but we’ll hazard a guess that Cerebras’ new shareholders are going to want to see new silicon sooner rather than later.

Based on its existing roadmap, we expect WSE-4 will offer a sizable leap in floating point performance, though not necessarily at 16-bit precision. Much of the industry has aligned around lower precision data types like FP8 and FP4. An exaFLOP of ultra-sparse FP4 compute wouldn’t shock us in the least.

How useful sparsity would actually be for LLM inference is another matter. LLM inference hasn’t historically benefited much from sparsity, but that’s never stopped chipmakers from advertising sparse FLOPS anyway.

We also expect to see Cerebras pack more SRAM into its next wafer-scale compute platform, possibly using TSMC’s 3D chip stacking tech to do it. The WSE-3’s 44 GB of SRAM capacity remains a limiting factor for what models it can and can’t serve efficiently.

A trillion-parameter model like Kimi K2 would require somewhere between 12 and 48 of Cerebras’ WSE-3 accelerators, depending on how the model weights are stored and how many parameters have been pruned. Any increase in SRAM capacity would go a long way toward improving the efficiency of its accelerators.
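The arithmetic behind that range can be sketched in a few lines of Python. This is our own rough estimate, assuming exactly one trillion parameters and counting the weights alone, with no room for KV cache, activations, or other overhead. The `wafers_needed` helper and its `kept_fraction` pruning knob are hypothetical.

```python
import math

SRAM_PER_WSE3_BYTES = 44e9   # 44 GB per WSE-3, as stated above
N_PARAMS = 1e12              # assumption: exactly one trillion parameters


def wafers_needed(n_params, bits_per_param, kept_fraction=1.0):
    """Wafers required to hold the weights alone in on-chip SRAM.

    kept_fraction is the share of parameters left after pruning (1.0 = none).
    """
    weight_bytes = n_params * kept_fraction * bits_per_param / 8
    return math.ceil(weight_bytes / SRAM_PER_WSE3_BYTES)


for bits in (16, 8, 4):
    print(f"{bits}-bit weights: {wafers_needed(N_PARAMS, bits)} wafers")
```

Under these assumptions, 4-bit weights land on the low end of the range at 12 wafers, while 16-bit weights need 46. The headroom a real deployment needs for everything besides weights would plausibly account for the rest of the gap to 48.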

More collaborations

Alongside new silicon, we can also expect to see more collaborations akin to Cerebras’ tie-up with AWS.

Earlier this year, AWS announced it would combine its Trainium3 AI accelerators with Cerebras’ WSE-3-based systems to speed up its inference platform in much the same way Nvidia is doing with Groq’s accelerators.

Cerebras could certainly do something similar with AMD or any other chipmaker. In this sense, Cerebras is in a position to offer its chips as a decode accelerator, taking on the bandwidth-intensive parts of the inference pipeline while other chips handle the compute-heavy prompt processing side of the equation.
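The reason the two phases suit different silicon comes down to how much math is done per byte of weights fetched. The following is a simplified sketch of our own for a single dense layer, ignoring activation and KV cache traffic. The function name and the 4,096-token prompt length are hypothetical.

```python
def flops_per_weight_byte(tokens_in_flight, bytes_per_param=2):
    """FLOPs performed per byte of weights read for one dense layer.

    Applying a d x d weight matrix to T tokens costs 2*T*d*d FLOPs and
    reads d*d*bytes_per_param bytes of weights, so d cancels out.
    """
    return 2 * tokens_in_flight / bytes_per_param


prefill = flops_per_weight_byte(4096)  # whole prompt processed in one pass
decode = flops_per_weight_byte(1)      # one new token per step, single stream
print(f"prefill: {prefill:.0f} FLOPs/byte, decode: {decode:.0f} FLOPs/byte")
```

Prompt processing reuses each weight thousands of times and is limited by raw compute, while single-stream decode touches each weight once per token and is limited by how fast memory can deliver it. That split is what makes pairing a compute-dense chip with a bandwidth-rich one attractive.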

However Cerebras frames its next collab, its shareholders are going to expect growth. And as the saying goes, the enemy of my enemy is my friend. ®
