Business

Home working, long leases and rise of parking apps – what went wrong for NCP

Business

ITM Power Plc (ITMPF) Pre Recorded Shareholder/Analyst Call – Slideshow

Business

Melania Trump denies any Epstein connection, seeks end to ‘lies’

Business

Chevron's local profit slides $1.4b

US-based Chevron Corporation’s Australian business unit made $1.4 billion less year-on-year in 2025, according to its annual report.

Business

NVIDIA Dominates AI Chip Race as Market Surges Toward $500 Billion Milestone

SAN FRANCISCO — NVIDIA Corp. solidified its commanding lead in the exploding artificial intelligence chip sector in early 2026, capturing roughly 80-85% of the AI accelerator market while the broader AI semiconductor industry hurtled toward half a trillion dollars in annual revenue amid insatiable demand for training and inference horsepower.

The Santa Clara, California-based company’s Blackwell platform, including the high-performance B100 and B200 GPUs, continued to sell out rapidly, powering the vast majority of the world’s largest AI data centers. Analysts project generative AI chips alone could approach $500 billion in revenue this year, representing nearly half of the global semiconductor market’s explosive growth toward $1.3 trillion overall.

NVIDIA’s dominance stems from its full-stack approach: not just raw silicon but the CUDA software ecosystem that has become the de facto standard for AI developers worldwide. CEO Jensen Huang has repeatedly described the shift as entering an “AI factory” era, with hyperscalers and enterprises racing to deploy massive GPU clusters for everything from large language models to scientific simulations.

Yet the race is far from over. A diverse field of challengers — from traditional semiconductor giants to hyperscale cloud providers designing custom silicon — is chipping away at NVIDIA’s near-monopoly, particularly in cost-sensitive inference workloads and specialized training tasks. Here are the 10 leading AI chip manufacturers shaping the industry in 2026, ranked by a blend of market share, technological impact, revenue contribution and innovation momentum.

1. NVIDIA Corp.

No company defines the AI chip boom like NVIDIA. Its data center revenue exploded past $100 billion in 2025, fueled by the Hopper and now Blackwell architectures. The Blackwell Ultra series promises 2.5 times the speed and up to 25 times better energy efficiency compared to prior generations, making it the go-to choice for flagship models from OpenAI, Anthropic and others.

NVIDIA’s strength lies in ecosystem lock-in. Developers trained on CUDA find switching costly, giving the company pricing power even as supply constraints ease. The upcoming Rubin architecture, slated for late 2026, is already generating buzz as the next leap forward. Despite growing competition, analysts expect NVIDIA to maintain 70-85% share in high-end AI accelerators through the year.

2. Advanced Micro Devices Inc. (AMD)

AMD has emerged as the most credible GPU alternative to NVIDIA, with its Instinct MI300X and newer MI355X accelerators gaining traction. The MI355X is touted as four times faster than the MI300X in key workloads, positioning it as a direct rival to Blackwell for data center deployments.

Microsoft has become one of AMD’s largest customers, deploying MI300X chips alongside NVIDIA GPUs to diversify supply. AMD’s advantage lies in price-performance ratios that appeal to cloud providers seeking to lower total cost of ownership. CEO Lisa Su has raised her estimate of the long-term addressable market for AI accelerators to $1 trillion by 2030, and the company’s Zen 5 CPU architecture further bolsters hybrid AI systems.

3. Taiwan Semiconductor Manufacturing Co. (TSMC)

While not a designer of AI chips, TSMC is the indispensable manufacturer behind nearly all advanced AI silicon. The foundry produces cutting-edge 3-nanometer and 5-nanometer wafers for NVIDIA, AMD, Broadcom and hyperscalers’ custom designs, holding over 60% of the global foundry market and nearly 90% for leading-edge nodes.

TSMC’s Q1 2026 revenue surged 35% year-over-year to record levels, driven overwhelmingly by AI demand. The company is quadrupling advanced packaging capacity, particularly CoWoS for high-bandwidth memory integration critical to AI GPUs. Expansions in Arizona, Japan and Taiwan underscore its role as the backbone of the AI supply chain, even as geopolitical risks loom.

4. Broadcom Inc.

Broadcom has carved out a powerful niche in custom AI accelerators and high-speed networking silicon that glues AI clusters together. The company partners with Google on TPUs and is reportedly co-designing chips for Meta and potentially OpenAI, delivering energy-efficient ASICs tailored to specific workloads.

Its Ethernet switching and custom silicon expertise help hyperscalers reduce reliance on off-the-shelf GPUs. Broadcom’s backlog remains robust, and analysts see it benefiting from the shift toward inference-optimized and domain-specific chips as AI deployment scales beyond initial training phases.

5. Alphabet Inc. (Google)

Google pioneered custom AI silicon with its Tensor Processing Units (TPUs), now in their seventh generation with the Ironwood TPU v7. Released in late 2025, Ironwood scales to massive pods and is described by some analysts as technically on par with or superior to NVIDIA’s Blackwell in certain training and inference efficiency metrics.

TPUs power much of Google Cloud’s AI offerings and internal workloads for Gemini models. Google’s vertical integration — designing chips, owning the data centers and developing the models — gives it cost and performance advantages that are pressuring pure-play GPU vendors.

6. Amazon.com Inc. (AWS)

Amazon Web Services has aggressively expanded its Trainium and Inferentia lines. The Trainium3 UltraServer, unveiled in late 2025, packs 144 chips and delivers over four times the performance of prior generations while improving energy efficiency by 40%. AWS claims significant cost savings — up to 50% lower training expenses versus GPUs for many workloads.

Hundreds of thousands of Trainium chips are already deployed, including large clusters for Anthropic. As the world’s largest cloud provider, AWS uses its own silicon to control costs and offer competitive pricing to enterprise customers seeking alternatives to NVIDIA-dominated infrastructure.

7. Microsoft Corp.

Microsoft’s Maia 100 and follow-on Maia 200 accelerators are gaining deployment in Azure data centers, with claims of substantial performance edges in FP4 precision over competitors. The company continues blending in-house silicon with NVIDIA and AMD GPUs to optimize for OpenAI workloads and general cloud AI services.

Maia’s development reflects Microsoft’s massive AI infrastructure spend. While early generations faced delays, the strategy aims to reduce long-term dependency on external suppliers and tailor hardware to the specific needs of Copilot and enterprise AI applications.

8. Intel Corp.

Intel is fighting to regain relevance in AI with its Gaudi accelerators and Xeon processors featuring built-in AI enhancements. Under new leadership, the company is emphasizing total cost of ownership advantages and pushing into AI PCs with Core Ultra chips that bring neural processing units to laptops and desktops.

Intel’s foundry ambitions could eventually position it as a U.S.-based alternative to TSMC for AI chip production. While trailing in high-end data center GPUs, Intel sees opportunities in inference, edge AI and hybrid CPU-GPU systems.

9. Cerebras Systems

Among startups, Cerebras stands out with its wafer-scale engine (WSE-3), a dinner-plate-sized chip packing 900,000 AI cores and delivering extreme memory bandwidth. The system claims up to 75 times faster inference on large models compared to GPU clusters, with massive gains in scientific computing.

Cerebras targets hyperscale users needing ultra-fast throughput for reasoning and simulation tasks. Its full-wafer approach minimizes data movement bottlenecks that plague traditional multi-chip designs.

10. Qualcomm Technologies Inc.

Qualcomm leads in edge and mobile AI with its Snapdragon platforms and dedicated neural processing units. As on-device AI grows — powering features in smartphones, laptops and IoT devices — Qualcomm’s power-efficient designs are critical for battery-constrained applications and privacy-focused inference.

The company is expanding into automotive and data center edge use cases, positioning itself for the next wave of distributed AI where not every computation requires massive cloud clusters.

Outlook: Fragmentation and Opportunity

The AI chip landscape in 2026 reflects both NVIDIA’s enduring supremacy and a healthy push toward diversification. Hyperscalers’ custom ASICs are maturing, promising lower costs and better efficiency for specific workloads, while memory leaders like Micron and SK Hynix ride the high-bandwidth memory wave essential for all advanced AI systems.

Challenges remain: supply chain bottlenecks, enormous capital requirements for new fabs, and geopolitical tensions around Taiwan. Yet the momentum is unmistakable. Global semiconductor revenue is forecast to top $1.3 trillion this year, with AI as the primary catalyst.

For enterprises and investors, the message is clear: the AI chip race is accelerating, rewarding those who can deliver not just raw performance but sustainable, scalable and cost-effective intelligence at every layer of the stack. As models grow more capable and AI permeates every industry, the companies on this list — and nimble newcomers — will determine how fast and how far the technology revolution can run.

Business

EU and US near critical minerals deal to combat Chinese control, Bloomberg News reports

Business

Short Waits Across Terminals on April 10

LOS ANGELES — Travelers at Los Angeles International Airport enjoyed relatively smooth security screenings Friday, with TSA wait times averaging under 15 minutes at most checkpoints as passenger volume remained moderate and staffing held steady on April 10, 2026.

Official data from the LAX website and real-time trackers showed the Tom Bradley International Terminal (TBIT) reporting general boarding waits of 8 to 11 minutes and TSA PreCheck lanes clearing in 3 to 5 minutes as of late Thursday into early Friday. Other terminals posted similarly light lines during off-peak morning hours, a welcome contrast to peak-period backups that can stretch to 30-45 minutes.

LAX, one of the world’s busiest airports handling more than 80 million passengers annually, operates nine terminals with multiple security checkpoints. Friday’s lighter traffic aligned with typical mid-spring patterns outside major holidays, allowing many travelers to move quickly from curbside to gates.

Airport officials noted that while conditions can change rapidly, current staffing levels and programs like TSA PreCheck, CLEAR and the now-concluded Fast Lane have helped stabilize flow. Travelers are still advised to arrive with ample buffer time, especially for international flights departing from TBIT.

Historical patterns at LAX show clear peaks and valleys. Mornings from 7-9 a.m. and afternoons from 3-6 p.m. traditionally see the longest lines, sometimes exceeding 30 minutes. Off-peak windows — early mornings before 7 a.m. and evenings after 8 p.m. — frequently deliver waits of 10-15 minutes or less, matching Friday’s favorable reports.

Recent passenger feedback on social media and forums echoed the positive trend. Several travelers reported clearing security in under 10 minutes at various terminals, praising efficient staffing and improved layouts following years of modernization projects. “LAX TSA was shockingly quick today — under 8 minutes door to door,” one recent post noted.

The airport’s ongoing infrastructure upgrades have played a key role. Terminal renovations, expanded checkpoint space and better digital signage have reduced bottlenecks that once plagued LAX. Real-time wait time displays on the official flylax.com website and the MyTSA app help passengers choose optimal checkpoints.

TSA PreCheck continues delivering major time savings. Eligible passengers typically breeze through dedicated lanes in 3-5 minutes, while standard screening varies more widely. CLEAR biometric enrollment, available at multiple terminals, further accelerates the process for subscribers by handling identity verification upfront.

For international travelers, TBIT remains the primary hub with its own security area. Friday’s data showed manageable lines there despite the terminal’s high volume of long-haul flights. Domestic Terminals 1 through 8 generally posted comparable or shorter waits.

Broader national TSA trends influence LAX operations. The agency has worked to address staffing challenges through recruitment and seasonal adjustments. While occasional surges still occur — particularly around holidays or major events — Friday represented a smoother day amid spring travel season.

Experts recommend several strategies for navigating LAX security efficiently. Download the MyTSA app for live updates. Enroll in TSA PreCheck or CLEAR if flying frequently. Pack liquids in compliant quart-size bags to avoid secondary screening. Use the airport’s website or flight apps for gate information and estimated walk times.

LAX’s reputation has improved significantly in recent years. Once notorious for long lines and outdated facilities, targeted investments have enhanced passenger experience. Efficient security contributes heavily to that progress, especially on days like Friday when operations align favorably.

Looking ahead, travelers should monitor conditions closely. Weekends, spring break remnants and summer peaks can quickly increase volumes. Weather disruptions or flight delays often create cascading effects on security lines. Checking real-time data remains the best practice.

For those departing Friday, the shorter lines translated to less stress and more time to enjoy LAX’s improved amenities — from dining options and shopping to relaxation areas and art installations. Families, business travelers and tourists alike benefited from the efficient start to their journeys.

As operations continue throughout the day, officials will adjust staffing to match demand. The consistent message from LAX remains: verify wait times in real time, build in a safety buffer and prepare for variability even on seemingly ideal days.

Friday’s light security footprint served as a reminder that when passenger flow, staffing and timing align, LAX can deliver one of the more manageable experiences among major U.S. hubs. Travelers passing through today likely appreciated the rare gift of time saved at security before heading to their gates.

Business

Sodexo S.A. 2026 Q2 – Results – Earnings Call Presentation (OTCMKTS:SDXAY) 2026-04-10

Business

RBI’s move to scrap investment buffer could lift banks’ capital

One basis point is a hundredth of a percentage point.

Analysts said ₹40,000 crore to ₹60,000 crore of accumulated reserves would be transferred to Tier 1 capital, although the gains may be reflected on banks’ books only in FY27. Yields had crossed 7% for the benchmark 10-year paper toward the latter half of the March quarter, widening the spread over the policy rate.

More money to lend: Ending the practice of keeping aside funds for mark-to-market losses may unlock up to ₹60,000 crore of capital for banks.

The Investment Fluctuation Reserve (IFR) is an additional buffer of 2% of outstanding investments that banks must maintain daily to cushion against fluctuations in bond prices. The RBI has now proposed to discontinue the IFR requirement and permit banks to treat this buffer as Tier 1 capital. Effectively, the balance in the IFR may be transferred to the statutory reserve, the general reserve, or the balance of the profit and loss account. Public comments on the draft have been invited by April 29.

Karthik Srinivasan, group head, financial sector ratings, Icra, said the rating company estimates that MTM losses for banks in the quarter ended March could be about ₹15,000 crore to ₹20,000 crore.

“But assuming that these new IFR norms are effective from the first quarter of this fiscal, the gains to Tier 1 capital could be at least ₹40,000 crore which in a way is available for banks to lend. So net-net one can assume that banks are benefitting from this measure and increase in capital can potentially lead to higher lending opportunities,” Srinivasan said.

The IFR is being scrapped just when banks are likely to show MTM losses in their treasury books, with the yield on the benchmark 10-year government security hardening 45 basis points to 7.04% at the end of March 2026 from 6.59% at the end of December 2025.

Business

This coat cost $248 in illegal tariffs. Will he ever get the money back?

Importers are in line for tariff refunds. But whether everyone who paid for the tariffs will get money back is a trickier question.

Business

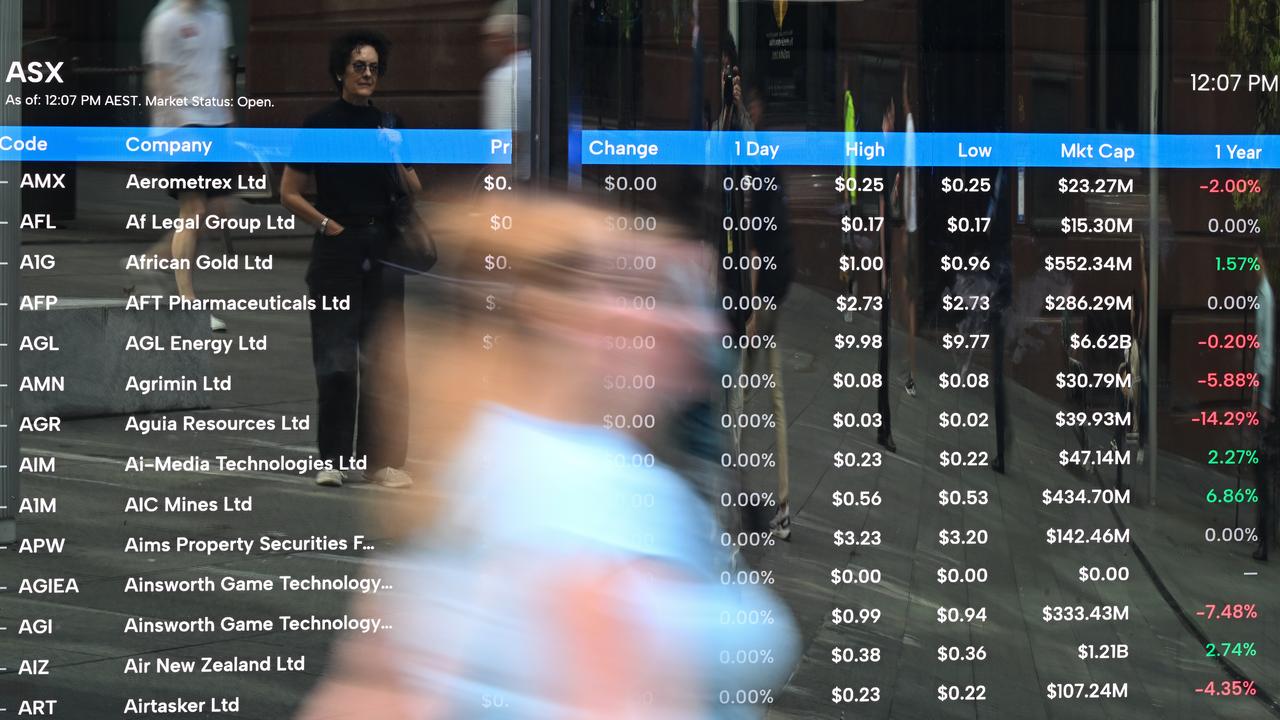

ASX has best week since 2022 despite ceasefire strain

Australia’s share market has notched its best week since October 2022, despite slipping ahead of key US-Iran ceasefire talks and with little sign Iran’s Hormuz Strait blockade is easing.
