Crypto World

Key initiatives aimed at quantum-proofing the world’s largest blockchain

Quantum computers capable of breaking the Bitcoin blockchain do not exist today. Developers, however, are already considering a wave of upgrades to build defenses against the potential threat, and rightly so, as the threat looks less hypothetical than it once did.

This week, Google published research suggesting that a sufficiently powerful quantum computer could crack Bitcoin’s core cryptography in under nine minutes, about one minute less than Bitcoin’s average 10-minute block interval. Some analysts believe such a threat could become a reality by 2029.

The stakes are high: about 6.5 million bitcoin tokens, worth hundreds of billions of dollars, sit in addresses a quantum computer could directly target. Some of these coins belong to Bitcoin’s pseudonymous creator, Satoshi Nakamoto. A compromise would also damage Bitcoin’s core tenets: “trust the code” and “sound money.”

Here’s what the threat looks like, along with proposals under consideration to mitigate it.

Two ways a quantum machine could attack Bitcoin

Let’s first understand the vulnerability before discussing the proposals.

Bitcoin’s security is built on a one-way mathematical relationship. When you create a wallet, a private key (a secret number) is generated, and a public key is derived from it.

Spending bitcoin tokens requires proving ownership of a private key, not by revealing it, but by using it to generate a cryptographic signature that the network can verify.

This system is considered secure because classical computers would need billions of years to break the elliptic curve cryptography behind it, specifically the Elliptic Curve Digital Signature Algorithm (ECDSA), and reverse-engineer the private key from the public key. Compromising the blockchain this way is therefore said to be computationally infeasible.
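The one-way relationship can be illustrated with a minimal Python sketch. It uses the published secp256k1 curve parameters and plain double-and-add scalar multiplication; the function names are illustrative, and the code is neither constant-time nor suitable for real wallets. Going forward (private key to public key) takes a few hundred point operations, while going backward means solving a discrete logarithm.

```python
# Toy illustration only: derive a public key from a private key on secp256k1.
# Not constant-time and not suitable for real wallets.

P = 2**256 - 2**32 - 977  # field prime
N = 0xFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFEBAAEDCE6AF48A03BBFD25E8CD0364141  # group order
G = (
    0x79BE667EF9DCBBAC55A06295CE870B07029BFCDB2DCE28D959F2815B16F81798,
    0x483ADA7726A3C4655DA4FBFC0E1108A8FD17B448A68554199C47D08FFB10D4B8,
)  # generator point


def point_add(a, b):
    """Add two curve points; None stands for the point at infinity."""
    if a is None:
        return b
    if b is None:
        return a
    (x1, y1), (x2, y2) = a, b
    if x1 == x2 and (y1 + y2) % P == 0:
        return None  # a and b are inverses of each other
    if a == b:
        slope = (3 * x1 * x1) * pow((2 * y1) % P, -1, P) % P
    else:
        slope = (y2 - y1) * pow((x2 - x1) % P, -1, P) % P
    x3 = (slope * slope - x1 - x2) % P
    y3 = (slope * (x1 - x3) - y1) % P
    return (x3, y3)


def derive_public_key(private_key):
    """Double-and-add scalar multiplication: public = private * G."""
    result, addend = None, G
    k = private_key % N
    while k:
        if k & 1:
            result = point_add(result, addend)
        addend = point_add(addend, addend)
        k >>= 1
    return result


def on_curve(point):
    """Check that the point satisfies y^2 = x^3 + 7 over the field."""
    x, y = point
    return (y * y - x * x * x - 7) % P == 0


public_key = derive_public_key(123456789)  # made-up private key for the demo
print(on_curve(public_key))
```

Recovering `123456789` from `public_key` alone is the step that is out of reach for classical machines and that Shor’s algorithm would make tractable on a large enough quantum computer.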

But a future quantum computer can change this one-way street into a two-way street by deriving your private key from the public key and draining your coins.

The public key can be exposed in two ways: through coins sitting idle onchain (the long-exposure attack) or through coins in motion, meaning transactions waiting in the memory pool (the short-exposure attack).

Pay-to-public-key (P2PK) addresses (used by Satoshi and early miners) and Taproot (P2TR), the current address format activated in 2021, are vulnerable to the long-exposure attack. Coins in these addresses do not need to move to reveal their public keys; the exposure has already happened and is readable by anyone on earth, including a future quantum attacker. Roughly 1.7 million BTC sits in old P2PK addresses, including Satoshi’s coins.

The short exposure is tied to the mempool — the waiting room of unconfirmed transactions. While transactions sit there awaiting inclusion in a block, your public key and signature are visible to the entire network.

A quantum computer could access that data, but it would have only a brief window — before the transaction is confirmed and buried under additional blocks — to derive the corresponding private key and act on it.
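To get a rough feel for how tight that window is, here is a back-of-the-envelope sketch. It assumes block arrivals follow the usual exponential model with a 10-minute mean and takes the nine-minute figure quoted above as a fixed attack time; both are simplifications, and the function name is illustrative.

```python
import math


def chance_attack_beats_next_block(attack_minutes, mean_block_minutes=10.0):
    """Probability that no block is found before the attack finishes,
    assuming exponentially distributed block intervals."""
    return math.exp(-attack_minutes / mean_block_minutes)


p = chance_attack_beats_next_block(9.0)
print(f"{p:.1%}")  # 40.7%
```

Under these assumptions the next block arrives too late to pre-empt the attacker about 40% of the time. The real odds would also depend on fees, mempool congestion and whether the victim's transaction is included in that next block at all.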

Initiatives

BIP 360: Removing public key

As noted earlier, every new Bitcoin address created using Taproot today permanently exposes a public key onchain, giving a future quantum computer a target that never goes away.

Bitcoin Improvement Proposal (BIP) 360 introduces a new output type called Pay-to-Merkle-Root (P2MR), which removes the public key that would otherwise be permanently embedded on-chain and visible to everyone.

Recall that a quantum computer studies the public key, reverse-engineers the exact shape of the private key and forges a working copy. If we remove the public key, the attack has nothing to work from. Meanwhile, everything else, including Lightning payments, multi-signature setups and other Bitcoin features, remains the same.
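The idea of committing to a Merkle root rather than a public key can be sketched as follows. This is a toy binary Merkle tree over SHA-256 with made-up leaf contents; it does not reproduce the exact hashing rules or leaf format that BIP 360 specifies, and the function names are illustrative.

```python
import hashlib


def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root(leaves):
    """Hash each leaf, then hash pairs upward until one 32-byte root remains."""
    if not leaves:
        raise ValueError("at least one leaf is required")
    level = [sha256(leaf) for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # duplicate the last node on odd levels
        level = [sha256(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


# Hypothetical spending conditions; only the root would appear in the output.
spending_paths = [b"spend path A", b"spend path B", b"spend path C"]
print(merkle_root(spending_paths).hex())
```

The output stored on-chain is only a hash, so an observer has no elliptic curve public key to attack until a spending path is revealed.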

However, if implemented, this proposal protects only new coins going forward. The 1.7 million BTC already sitting in old exposed addresses is a separate problem, addressed by other proposals below.

SPHINCS+ / SLH-DSA: Hash-based post-quantum signatures

SPHINCS+ is a post-quantum signature scheme built on hash functions, avoiding the quantum risks facing elliptic curve cryptography used by Bitcoin. While Shor’s algorithm threatens ECDSA, hash-based designs like SPHINCS+ are not seen as similarly vulnerable.

The scheme was standardized by the National Institute of Standards and Technology (NIST) in August 2024 as FIPS 205 (SLH-DSA) after years of public review.

The tradeoff for security is size. While current bitcoin signatures are 64 bytes, SLH-DSA signatures are 8 kilobytes (KB) or more. As such, adopting SLH-DSA would sharply increase block space demand and raise transaction fees.
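The size gap is easy to quantify. The sketch below compares the 64-byte figure above with the signature sizes FIPS 205 lists for its two smallest SHA-2 parameter sets (7,856 bytes for the "small" variant, roughly the 8 KB quoted above, and 17,088 bytes for the "fast" variant). Which parameter set Bitcoin might adopt, if any, is an open question, so treat the choice here as an assumption.

```python
CURRENT_SIG_BYTES = 64  # figure quoted in the article

# Signature sizes in bytes from FIPS 205 for two parameter sets.
SLH_DSA_SIG_BYTES = {
    "SLH-DSA-SHA2-128s": 7_856,
    "SLH-DSA-SHA2-128f": 17_088,
}


def size_multiplier(sig_bytes, baseline=CURRENT_SIG_BYTES):
    """How many times larger a signature is than today's 64-byte baseline."""
    return sig_bytes / baseline


for name, size in SLH_DSA_SIG_BYTES.items():
    print(f"{name}: {size} bytes, {size_multiplier(size):.2f}x")
```

Even the smaller parameter set is more than 120 times the size of a current signature, which is why block space and fees dominate the discussion.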

As a result, proposals such as SHRIMPS (another hash-based post-quantum signature scheme) and SHRINCS have been introduced to reduce signature sizes without sacrificing post-quantum security. Both build on SPHINCS+ while aiming to retain its security guarantees in a more practical, space-efficient form suitable for blockchain use.

Tadge Dryja’s Commit/Reveal Scheme: An Emergency Brake for the Mempool

This proposal, a soft fork suggested by Lightning Network co-creator Tadge Dryja, aims to protect transactions in the mempool from a future quantum attacker. It does so by separating transaction execution into two phases: Commit and Reveal.

Imagine informing a counterparty that you will email them, then actually sending an email. The former is the commit phase, and the latter is the reveal.

On the blockchain, this means you first publish a sealed fingerprint of your intention — just a hash, which reveals nothing about the transaction. The blockchain timestamps that fingerprint permanently. Later, when you broadcast the actual transaction, your public key becomes visible — and yes, a quantum computer watching the network could derive your private key from it and forge a competing transaction to steal your funds.

But that forged transaction is immediately rejected. The network checks: does this spend have a prior commitment registered on-chain? Yours does. The attacker’s does not — they created it moments ago. Your pre-registered fingerprint is your alibi.
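The commit-then-reveal check can be modeled in a few lines. This is a toy ledger written for illustration, not Dryja's actual design: the class name, the use of a bare SHA-256 hash as the commitment and the one-block minimum age are all assumptions.

```python
import hashlib


class ToyCommitRevealLedger:
    """Toy chain state: remembers which hashes were committed and when."""

    def __init__(self, min_age_blocks: int = 1):
        self.height = 0
        self.min_age_blocks = min_age_blocks
        self.commitments = {}  # commitment hash -> block height it was recorded at

    def mine_block(self) -> None:
        self.height += 1

    def commit(self, tx: bytes) -> bytes:
        """Publish only the hash of a transaction; the first commitment wins."""
        digest = hashlib.sha256(tx).digest()
        self.commitments.setdefault(digest, self.height)
        return digest

    def accept_reveal(self, tx: bytes) -> bool:
        """Accept a spend only if its hash was committed in an earlier block."""
        committed_at = self.commitments.get(hashlib.sha256(tx).digest())
        if committed_at is None:
            return False
        return self.height - committed_at >= self.min_age_blocks


ledger = ToyCommitRevealLedger()
honest_tx = b"pay 1 BTC from A to B"
forged_tx = b"pay 1 BTC from A to attacker"

ledger.commit(honest_tx)  # phase 1: only the hash is published
ledger.mine_block()       # the commitment is now buried in the chain

print(ledger.accept_reveal(honest_tx))  # True
print(ledger.accept_reveal(forged_tx))  # False
```

The forged transaction fails because nothing matching its hash was committed before the public key became visible.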

The issue, however, is the increased cost due to the transaction being broken into two phases. So, it’s described as an interim bridge, practical to deploy while the community works on building quantum defences.

Hourglass V2: Slowing the spending of old coins

Proposed by developer Hunter Beast, Hourglass V2 targets the quantum vulnerability tied to roughly 1.7 million BTC held in older, already-exposed addresses.

The proposal accepts that these coins could be stolen in a future quantum attack and seeks to slow the bleeding by limiting sales to one bitcoin per block, to avoid a catastrophic overnight mass liquidation that could crater the market.
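A quick calculation shows how slow that drip would be. The sketch assumes the 1.7 million BTC figure above, a cap of exactly one bitcoin per block and a steady 10-minute block interval; the function name is illustrative.

```python
MINUTES_PER_YEAR = 60 * 24 * 365.25


def years_to_drain(coins, coins_per_block=1.0, minutes_per_block=10.0):
    """Years needed to move `coins` under a fixed per-block spending cap."""
    blocks_needed = coins / coins_per_block
    return blocks_needed * minutes_per_block / MINUTES_PER_YEAR


print(f"{years_to_drain(1_700_000):.1f} years")  # 32.3 years
```

Under these assumptions, emptying the exposed addresses would take more than three decades, which is the point of the cap.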

The analogy is a bank run: you cannot stop people from withdrawing, but you can limit the pace of withdrawals to prevent the system from collapsing overnight. The proposal is controversial because even this limited restriction is seen by some in the Bitcoin community as a violation of the principle that no external party can ever interfere with your right to spend your coins.

Conclusion

These proposals are not yet activated, and Bitcoin’s decentralized governance, spanning developers, miners and node operators, means any upgrade is likely to take time to materialize.

Still, the steady flow of proposals predating this week’s Google report suggests the issue has long been on developers’ radar, which may help temper market concerns.

Crypto policy stakes rise as Anthropic launches PAC amid AI policy rift

Anthropic, the AI safety-focused lab behind several widely used language models, has moved to formalize its political engagement by launching an employee-funded political action committee named AnthroPAC. A filing with the Federal Election Commission shows the organization as a connected entity to Anthropic, organized as a separate segregated fund and aimed at receiving voluntary contributions from employees. The filing outlines the PAC’s intent to participate in federal elections while remaining aligned with the company’s stated interest in AI policy and safety considerations.

Under U.S. campaign finance rules, individual contributions to a federal candidate are capped at $5,000 per election, with disclosures required through public filings. AnthroPAC’s organizers say the fund is designed to support candidates from both major parties. However, observers and industry watchers are already raising questions about how closely the effort will stay within bipartisan lines, given broader debates over AI regulation, safety standards, and the strategic direction of AI policy in Washington.

The AnthroPAC move lands as Anthropic navigates a fraught relationship with the U.S. government over how its technology should be employed. Separately, the Defense Department in February designated Anthropic as a supply chain risk—an action tied to the company’s stance against the use of its AI in fully autonomous weapons and mass surveillance. Anthropic has challenged that designation in court, contending it constitutes retaliation for a protected position. A federal judge in California has temporarily blocked the measure and paused further restrictions while the dispute unfolds.

Beyond governance and defense concerns, Anthropic has already been active politically this cycle. Notably, the company contributed $20 million to Public First Action, a political committee focused on AI safety and related policy advocacy, underscoring the firm’s broader strategy to influence AI-related regulation and public safety standards.

Meanwhile, Anthropic’s broader ecosystem is drawing capital and infrastructure support that could accelerate its technology roadmap. In a related development, Google is preparing to back a multibillion-dollar data-center project in Texas that would be leased to Anthropic via Nexus Data Centers. The project’s initial phase could exceed $5 billion, with Google expected to provide construction loans and be joined by banks arranging additional financing. The arrangement highlights the growing demand for AI infrastructure capable of supporting expansion in model training, inference, and data storage.

Key takeaways

- Anthropic formed AnthroPAC, an employee-funded political action committee registered as a separate segregated fund under the company’s umbrella.

- The PAC is intended to support candidates from both parties, with strict contribution limits and mandatory disclosures under U.S. election law.

- The move occurs amid fraught relations with the Pentagon over AI use, including a supply chain risk designation that Anthropic is challenging in court.

- Anthropic has a track record of political giving in this cycle, including a $20 million contribution to Public First Action focused on AI safety.

- Google’s backing of a Texas data-center project for Anthropic signals strong infrastructure demand and potential financing mechanisms that could accelerate AI deployment.

Anthropic’s political engagement and the policy context

The formation of AnthroPAC marks a notable step in how AI firms engage with lawmakers and regulators. By coordinating staff contributions through a dedicated PAC, Anthropic signals a structured approach to influencing elections and policy debates that shape the development and governance of artificial intelligence. The FEC filing describes AnthroPAC as a “connected organization” operating under a separate segregated fund, aligning with typical industry practices for corporate-employee political activity. While the stated aim is bipartisanship, the broader AI policy environment in the United States has become highly polarized, with differing views on liability, safety mandates, data privacy, and government access to AI systems.

Investors and builders watching the space can interpret this as part of a broader trend: major AI developers increasingly engage directly in policy conversations, seeking to frame the regulatory environment in ways that balance innovation with oversight. The implications extend beyond ethics and governance; policy direction can materially affect the regulatory runway for product development, procurement, and collaboration with public sector actors. The presence of a formal PAC also raises questions about how corporate political contributions could influence which AI-safety and governance proposals gain traction on Capitol Hill and in regulatory agencies.

Defense frictions and legal maneuvering

The tension between Anthropic and the Department of Defense centers on how the company’s models should be deployed in sensitive contexts. The Pentagon’s decision to label Anthropic as a supply chain risk stemmed from the company’s public stance against fully autonomous weapons and broad surveillance use. Anthropic has challenged that designation in court, arguing that it amounts to retaliation for a viewpoint it regards as legitimate and protected. A federal judge in California issued a temporary ruling to pause the measure and related restrictions while the case proceeds, illustrating the jurisdictional balance between corporate risk assessments and national-security considerations in AI technology usage.

For policymakers, the case underscores a core policy question: where should the line be drawn between compelling safety and preserving innovation? If courts narrow how procurement risk designations can be wielded, it could affect how similar technology providers are treated as the government expands its AI procurement and testing programs. Conversely, if the government can justify risk designations on safety grounds, it could strengthen leverage for tighter controls on how AI systems are used in defense contexts.

Political giving and AI-safety advocacy

Anthropic’s political activity isn’t limited to its new PAC. Earlier in the cycle, the company contributed a sizable $20 million to Public First Action, a political arm focused on AI safety and public-interest considerations tied to the development and governance of AI technologies. This level of funding signals a broader strategy to influence public discourse and regulatory design around AI, complementing the PAC’s electoral role with policy advocacy and education efforts. Observers are watching how such funding patterns translate into concrete policy outcomes, particularly in an environment where legislators are weighing landmark AI bills and safety standards that could shape model development, data usage, and transparency requirements.

Infrastructure bets amid AI acceleration

Infrastructure matters are increasingly central to AI strategy, and Google’s involvement in a Texas data-center project for Anthropic is a vivid illustration. The Nexus Data Centers-leased facility, if realized as outlined, could become a cornerstone asset to support large-scale model training and deployment. The project’s initial phase exceeding $5 billion underscores the capital intensity of modern AI initiatives and the financial orchestration that underpins them. Google’s expected role in providing construction loans, alongside competitive financing arrangements from banks, points to the consolidation of AI infrastructure finance as a distinct sub-market within the tech sector. For Anthropic and similar firms, such backing could shorten timelines to deploy more capable models and scale services that demand robust, energy-efficient, and highly reliable data-center capacity.

As policy debates progress, industry participants and investors should monitor both political and practical developments: how much traction new AI safety proposals gain in Congress, how procurement rules evolve in defense programs, and how infrastructure financing evolves to accommodate the next wave of AI workloads. Each of these strands will influence not only which AI products reach market first, but also how quickly the industry can translate research advances into real-world use cases across enterprise, healthcare, and public services.

Readers should stay attentive to any updates on Anthropic’s PAC activity and the Pentagon case outcomes, as both arenas will shape the company’s public-facing strategy and its broader partnerships. The balance between safety-driven governance and aggressive innovation remains a live tension set to define the next phase of AI adoption and investment.

Crypto Token Glut Is Diluting Value And Breaking Investor Returns

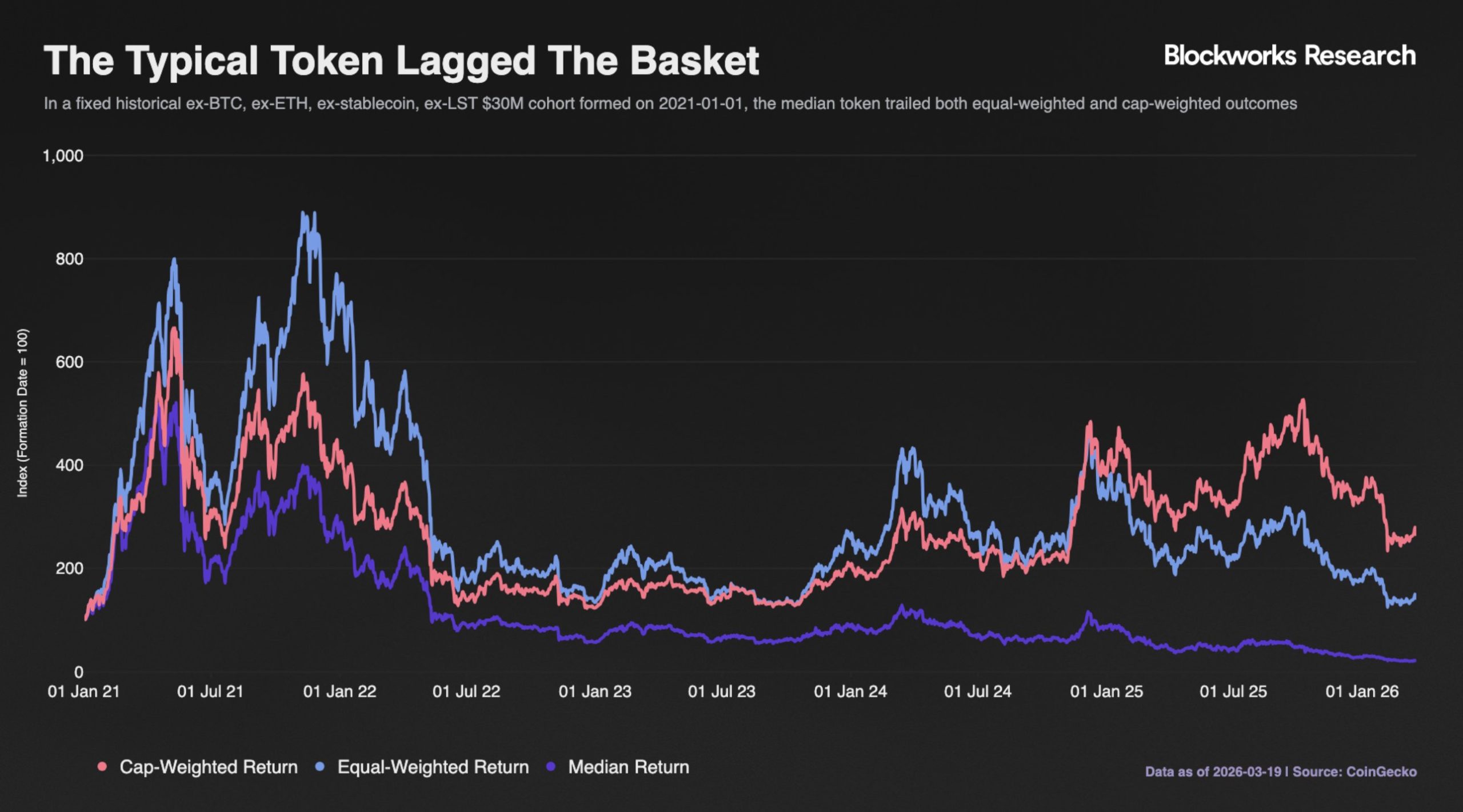

The rapid growth in the number of crypto tokens is outpacing the value they generate, creating an “existential” problem for the industry, according to Michael Ippolito, co-founder of Blockworks.

In a series of posts on X, Ippolito noted that while total crypto market capitalization remains relatively strong, the average value per token tells a different story. “The average coin is only slightly higher than where it was in 2020 (!) and down ~50% since 2021,” he wrote.

Median token returns have also deteriorated sharply. Most tokens are down roughly 80% from their highs, suggesting that gains have been concentrated in a narrow set of large-cap assets, while the broader market underperforms, Ippolito claimed.

He argued that the imbalance appears to be driven by a rapid expansion in token supply. “We created a TON of new assets and STILL total market cap is flat,” he wrote, adding that this dynamic effectively dilutes value across a growing pool of tokens.

Token prices break from fundamentals

Ippolito also claimed that the relationship between fundamentals and price has weakened. In 2021, token prices closely tracked onchain revenue. Recent data shows that despite a resurgence in protocol revenues, prices have not followed, pointing to a disconnect between usage and investor returns.

He argued that this signals a loss of confidence in tokens as vehicles for capturing value. “The token problem is existential for this industry,” he said, adding that without stronger alignment between fundamentals and price, the sector risks losing its core appeal.

In a post on X, Arthur Cheong, founder and CEO of DeFiance Capital, said he agrees “with the urgency to fix the current situation of tokens in the crypto industry,” warning that if the market continues to concentrate around a small set of assets like Bitcoin and Ether, the broader crypto ecosystem risks losing relevance.

Capital shifts from tokens to stocks

Investor demand is increasingly moving away from newly launched tokens toward publicly listed crypto firms, as most token launches fail to hold value, a February report from DWF Labs found. The report found that over 80% of projects trade below their token generation event (TGE) price, with typical losses of 50% to 70% within about three months.

The pattern appears structural rather than cyclical. According to DWF’s Andrei Grachev, most tokens peak within the first month before declining under sustained selling pressure. Factors such as airdrops and early investor unlocks add to the supply overhang, reinforcing downward price trends even for projects with active products or protocols.

Token supply surge leaves most crypto assets underwater

Crypto markets are facing renewed pressure as the number of tokens keeps rising faster than the value those assets create.

Summary

- Most crypto tokens trade far below prior highs as supply keeps rising across markets.

- New token launches keep growing, while value creation fails to support broader market pricing today.

- Investor demand is shifting toward crypto stocks as many token launches lose value quickly.

Market participants are now questioning whether token launches, supply schedules, and value capture models still support long-term investor interest across the wider sector.

Michael Ippolito, co-founder of Blockworks, said the crypto industry now faces an “existential” token problem as supply continues to expand. In a series of posts on X, he said total market capitalization has stayed relatively firm, but the average value of individual tokens has remained weak.

He wrote that “the average coin is only slightly higher than where it was in 2020” and is down about 50% from 2021 levels. He also said most tokens now trade roughly 80% below their peak prices, showing that gains have stayed concentrated in a small group of large-cap assets.

Ippolito also said token prices no longer move in line with protocol fundamentals as closely as they did in 2021. At that time, prices and onchain revenue often moved together. Recent data, however, shows that protocol revenues have recovered in many cases while token prices have not.

He said this gap points to weaker confidence in tokens as tools for capturing network value. According to him, “the token problem is existential for this industry,” as the market no longer rewards activity and revenue in the same way it did in earlier cycles.

Arthur Cheong, founder and chief executive of DeFiance Capital, agreed with the urgency of the issue. In a post on X, he said the market needs to address token structure problems before attention shifts even more toward a narrow group of assets such as Bitcoin and Ether.

That view adds to growing concern that smaller tokens may continue to lose relevance if investors keep focusing on a few dominant names. The trend has raised questions about whether the broader token market can still attract capital on a wide scale.

Investors Shift Focus to Crypto Stocks

A February report from DWF Labs said investor demand has increasingly moved from newly launched tokens to publicly listed crypto companies. The report found that more than 80% of projects trade below their token generation event price, with losses of 50% to 70% within about three months.

DWF Labs partner Andrei Grachev said most tokens reach their highest level within the first month after launch and then fall under steady selling pressure.

He said airdrops and early investor unlocks often add more supply to the market, making it harder for prices to hold even when projects remain active.

Rocket Lab (RKLB) vs AST SpaceMobile (ASTS): Top Space Stocks to Monitor in 2026

Quick Overview

- Rocket Lab achieved 38% revenue growth, reaching $601.8 million in 2025, backed by a $1.85 billion record backlog

- An $816 million Space Development Agency contract strengthened Rocket Lab’s position in government aerospace

- AST SpaceMobile generated $70.9 million in 2025 revenue as it continues early-stage commercial infrastructure development

- AST maintains over $3.9 billion in pro forma liquidity to support satellite constellation expansion

- Analyst consensus favors Rocket Lab with a Moderate Buy rating, while AST receives a Reduce rating

Among space sector equities, Rocket Lab and AST SpaceMobile stand out as two of the most discussed investment opportunities. However, these companies pursue fundamentally different strategies and carry distinct risk-reward profiles. One has established a diversified operational foundation. The other represents a transformative vision for global mobile connectivity.

Rocket Lab delivered impressive financial performance throughout 2025. The company recorded 38% year-over-year revenue expansion, totaling $601.8 million. Its fourth-quarter performance reached a milestone with $179.7 million in revenue. Perhaps most significantly, the firm concluded 2025 with a $1.85 billion backlog—a 73% increase from the previous year. This substantial order book provides greater revenue visibility than most competitors in the space industry can demonstrate.

The company’s revenue composition demonstrates meaningful diversification beyond launch services. Product sales generated $371.6 million during 2025, complemented by $230.2 million from service operations. Today’s Rocket Lab manufactures complete spacecraft, subsystems, and specialized components for defense and intelligence agencies.

Government Contract Wins Strengthen Rocket Lab’s Position

A significant milestone arrived when the company secured an $816 million agreement with the Space Development Agency. This substantial contract validates Rocket Lab’s capabilities for executing complex, multi-year government programs. Meanwhile, the Neutron medium-class launch vehicle represents management’s primary catalyst for the next phase of expansion.

Profitability remains elusive despite operational progress. Rocket Lab recorded a $198.2 million net loss for 2025. Leadership projected continued negative adjusted EBITDA for the opening quarter of 2026. Market valuations currently reflect anticipated future scale rather than present earnings performance.

AST SpaceMobile pursues an entirely different opportunity. The enterprise aims to deploy a satellite constellation providing cellular broadband connectivity directly to unmodified smartphones—eliminating requirements for specialized equipment. Successfully executing this vision at scale could unlock previously inaccessible market segments that conventional satellite providers cannot economically serve.

AST remains in the foundational stages of its mission. The company reported $70.9 million in total 2025 revenue. Fourth-quarter performance contributed $54.3 million, primarily from gateway equipment deliveries, mobile network operator partnerships, and government development milestones.

Strong Liquidity Position Supports AST SpaceMobile’s Deployment Plans

The company maintained $2.8 billion in cash and equivalents as of year-end 2025. Following additional capital raises completed in early 2026, pro forma liquidity exceeded $3.9 billion. This financial cushion enables continued satellite deployment without near-term funding pressures.

AST has secured more than $1.2 billion in contracted revenue commitments from strategic partners. For a company just beginning to recognize meaningful revenue, this represents substantial commercial validation. Nevertheless, significant losses continue, and ultimate success depends critically on deployment velocity and network performance metrics.

Analyst sentiment clearly distinguishes between the two opportunities. Rocket Lab earns a Moderate Buy consensus rating, comprising 2 Strong Buys, 7 Buys, 7 Holds, and 1 Sell recommendation. AST SpaceMobile receives a Reduce consensus, with 2 Buys, 6 Holds, and 3 Sells.

Final Thoughts

Investment community confidence runs higher for Rocket Lab’s proven business model. While acknowledging AST’s substantial upside potential, analysts find the opportunity more challenging to quantify at this development stage. Rocket Lab offers greater operational maturity, revenue diversification, and broader analytical support. AST represents a higher-risk proposition with correspondingly larger potential returns if its satellite broadband architecture achieves technical and commercial success.

Rocket Lab presents the more established investment thesis currently. AST SpaceMobile offers the more ambitious transformational opportunity. The appropriate choice depends entirely on individual investor risk tolerance and portfolio objectives.

UK Courts Anthropic After US Military Dispute Sparks Blacklist Concerns

TLDR

- The British government is actively pursuing Anthropic for expanded UK operations

- Offers include London headquarters expansion and dual stock exchange listing opportunities

- Prime Minister Keir Starmer’s administration is directly supporting the initiative

- Anthropic faced US blacklisting after declining to permit Claude for military surveillance or weaponized systems

- Federal courts have temporarily halted the blacklist enforcement, with additional legal challenges underway

British officials are making aggressive moves to attract Anthropic, the developer of the Claude AI assistant, as reported by the Financial Times. The UK sees a strategic opening to expand the company’s presence following escalating tensions between Anthropic and the Pentagon.

The British government’s pitch encompasses expanding Anthropic’s current London operations and facilitating a dual stock market listing. The UK’s Department of Science, Innovation and Technology is spearheading these initiatives.

Prime Minister Keir Starmer’s administration has thrown its weight behind the department’s outreach efforts. Officials plan to present these proposals directly to Anthropic’s Chief Executive Dario Amodei during his anticipated UK visit scheduled for late May.

Both Anthropic and the UK’s Department of Science, Innovation and Technology declined to provide statements when contacted by Reuters.

The Pentagon Dispute Explained

The Department of Defense labeled Anthropic as a national-security supply-chain threat. The designation stemmed from the company’s firm stance against permitting its Claude AI system to be deployed for US military surveillance operations or autonomous weaponry applications.

This classification resulted in Anthropic being added to a government blacklist. Such listings typically limit a company’s capacity to collaborate with federal agencies and approved contractors.

Anthropic mounted a swift legal response. A federal judge granted temporary relief, preventing the blacklist from becoming operational while litigation proceeds.

The AI company has simultaneously launched a separate legal challenge targeting the supply-chain threat classification itself. This additional lawsuit remains pending judicial review.

Britain’s Strategic Proposal

The UK’s aggressive courtship represents part of a wider strategy to capitalize on uncertainty surrounding American technology governance.

A dual stock listing arrangement would enable Anthropic shares to trade on British exchanges parallel to any potential US market debut. This structure would provide UK-based investors with immediate access to company equity.

Expanding the London facility would strengthen Anthropic’s European footprint significantly. Britain has cultivated a thriving AI ecosystem, with government officials making tech investment attraction a cornerstone policy objective.

The Financial Times report did not indicate whether Anthropic has shown interest in or rejected the British proposals.

Amodei’s late May UK visit is anticipated as the critical juncture when officials will formally present their complete package.

The temporary judicial stay on the blacklist designation leaves Anthropic’s regulatory status in flux. The resolution of both ongoing legal battles will probably determine the company’s strategic direction going forward.

Virgin Galactic (SPCE) Stock Faces Fresh Sell Rating Despite $750K Ticket Launch

Key Takeaways

- Wall Street Zen shifted its SPCE rating from “hold” to “sell” on April 4, 2026

- Shares currently trade near $2.43, while the analyst consensus price target stands at $3.45

- Virgin Galactic has resumed accepting reservations at $750,000 per seat for Delta Class flights

- First quarter earnings showed a loss per share of ($0.98), surpassing expectations, though revenue of $0.31 million fell short of projections

- Jefferies lowered its price objective from $8.00 to $5.00 while maintaining a “buy” stance, pointing to cash flow timing issues

Virgin Galactic (SPCE) stock began Friday’s session at $2.43, declining 1.4% during trading.

Wall Street Zen revised its outlook on SPCE from “hold” to “sell” on April 4, 2026. This shift reinforces a generally bearish analyst sentiment, with MarketBeat reporting a consensus rating of “Reduce” and a mean price target of $3.45.

Morgan Stanley maintains an “underweight” stance with a $2.30 price objective. Weiss Ratings similarly assigns a “sell” grade. Among six tracked analysts, one recommends buying, three suggest holding, and two advise selling.

Jefferies reduced its price forecast from $8.00 to $5.00 recently, while retaining its “buy” recommendation. The investment firm highlighted cash flow timing uncertainties within the developing space industry.

SPCE has fluctuated between $2.13 and $6.64 over the past 52 weeks. The stock’s 50-day moving average sits at $2.56, with the 200-day average at $3.25. A beta of 2.20 indicates significant volatility compared to broader market movements.

On March 30, Virgin Galactic announced Q1 earnings per share of ($0.98), outperforming the ($1.12) consensus forecast. Revenue reached $0.31 million, missing the anticipated $0.41 million.

Return on equity registers at negative 108.78%, while net margin sits at negative 18,063.93%. The company carries a debt-to-equity ratio of 1.87, though its current ratio of 2.87 indicates sufficient near-term liquidity.

Market capitalization currently stands around $177 million. Wall Street projects full-year earnings per share of ($16.05) for the ongoing fiscal period.

Fresh Reservations for Next-Generation Spacecraft

Coinciding with the rating downgrades, Virgin Galactic reopened its reservation system for flights aboard the upcoming Delta Class vehicle. Tickets now cost $750,000 per person — a $150,000 increase from the $600,000 price point in 2023.

The Delta Class accommodates six passengers, representing a two-seat capacity boost over previous models. Virgin Galactic plans to conduct test flights this summer, followed by commercial operations launching in the fall. Research missions will precede passenger journeys by six to eight weeks.

The initial offering includes 50 available seats before the company temporarily closes bookings. CEO Michael Colglazier indicated that future pricing rounds will feature higher rates, though specific amounts remain undisclosed.

Additionally, a queue of 675 “founding astronauts” — early customers who secured spots with deposits years earlier — will board flights at discounted rates compared to new purchasers.

Ambitious Monthly Flight Goals

Virgin Galactic’s most recent commercial mission, Galactic 07, took place on June 8, 2024. That flight marked the final journey of VSS Unity, the organization’s inaugural spacecraft.

Colglazier has established an ambitious goal of conducting 10 flights monthly by 2027, which would transport approximately 60 passengers each month. Achieving this frequency hinges on successful summer testing of the Delta Class vehicle.
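The capacity and pricing figures quoted above can be checked with a few lines of arithmetic. This is only a sketch using the numbers reported in the article; the function and variable names are illustrative, not anything Virgin Galactic publishes.

```python
# Figures as quoted in the article.
SEATS_PER_FLIGHT = 6          # Delta Class passenger capacity
FLIGHTS_PER_MONTH = 10        # cadence targeted for 2027
TICKET_PRICE_USD = 750_000    # current list price per seat
PRIOR_PRICE_USD = 600_000     # list price in 2023


def monthly_passengers(flights: int, seats: int) -> int:
    """Passengers carried per month if every seat on every flight is filled."""
    return flights * seats


def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100


passengers = monthly_passengers(FLIGHTS_PER_MONTH, SEATS_PER_FLIGHT)
price_increase = pct_change(PRIOR_PRICE_USD, TICKET_PRICE_USD)

print(passengers)       # 60
print(price_increase)   # 25.0
```

The 60-passenger figure assumes full flights, and the 25% figure compares list prices only; founding astronauts fly at discounted rates, so neither number says anything about actual revenue.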

Institutional investors control 46.62% of SPCE shares. Multiple funds expanded their holdings in recent quarters, with Truist Financial Corp boosting its position by 78.2% during Q4.

Susquehanna established a $3.50 price target in January 2026.

Crypto World

Micron (MU) Stock Plunges 20%: Is This a Dip Worth Buying or a Red Flag?

Key Takeaways

- Micron’s share price has tumbled approximately 20% following its second-quarter results released March 18, as investors worry about Google’s TurboQuant potentially cutting memory requirements

- Mizuho’s Vijay Rakesh continues to rate the stock as Outperform with a $530 target, characterizing the downturn as an attractive entry point

- The company’s DRAM average selling prices climbed in the mid-60% range during Q2, while NAND ASPs jumped in the high-70% range, demonstrating robust pricing strength

- Wall Street remains divided: certain analysts view the selloff as panic-driven, while others highlight risks from customer concentration and pricing sustainability

- Over the trailing twelve months, Micron shares have surged 324%, eclipsing gains from Nvidia, AMD, TSMC, and Broadcom

The past several weeks have proven turbulent for Micron. Following one of the most impressive rallies in the chip industry — climbing 324% year-over-year — the memory specialist encountered serious resistance. The trigger came from Google’s unveiling of TurboQuant, a lossless compression algorithm that sent jitters through the investment community about potential declines in DRAM and NAND requirements. Markets responded swiftly.

Following Micron’s fiscal second-quarter report on March 18, shares have declined approximately 20%. This represents a significant pullback for a business that recently stood as a poster child for the artificial intelligence boom.

The downturn revolves around one core concern: if Google’s TurboQuant technology enables superior data compression while preserving model precision, cloud giants may require substantially less physical memory for their AI operations. Reduced DRAM and NAND consumption translates to weakened pricing leverage for Micron. This narrative, though, faces pushback from multiple industry watchers.

Vijay Rakesh from Mizuho mounted a strong counterargument. He retained Outperform classifications for both Micron and Sandisk (SNDK), assigning price objectives of $530 and $710 respectively. Rakesh invoked the Jevons paradox — an economic principle suggesting efficiency gains frequently stimulate increased usage rather than decreased demand. His reference point: when DeepSeek emerged in 2025 and initially shook GPU equities, AI infrastructure investments ultimately intensified.

Rakesh further noted that Google’s TurboQuant documentation itself suggests possibilities for expanded models and accelerated inference capabilities, which would still necessitate considerable memory resources. He characterizes the present decline as excessive market pessimism.

Examining the Financial Performance

Micron’s second-quarter results painted an impressive picture. DRAM unit shipments increased mid-single digits on a sequential basis, while average selling prices surged in the mid-60% range. NAND unit volumes expanded low-single digits, accompanied by ASP growth in the high-70% territory. These represent exceptional pricing premiums, propelled by constrained availability rather than explosive volume expansion.
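To see why price rather than volume dominates these results, revenue growth can be decomposed as (1 + unit growth) × (1 + ASP growth) − 1. The sketch below plugs in 5% and 65% as stand-ins for "mid-single digits" and "mid-60%"; those point values are assumptions chosen for illustration, not figures Micron reported.

```python
def implied_revenue_growth(unit_growth: float, asp_growth: float) -> float:
    """Revenue growth implied by unit growth and average-selling-price growth.

    Revenue = units * price, so the growth factors multiply.
    """
    return (1 + unit_growth) * (1 + asp_growth) - 1


# ASSUMED point values standing in for the ranges quoted in the article.
DRAM_UNIT_GROWTH = 0.05   # "mid-single digits"
DRAM_ASP_GROWTH = 0.65    # "mid-60% range"

dram_growth = implied_revenue_growth(DRAM_UNIT_GROWTH, DRAM_ASP_GROWTH)
volume_only = implied_revenue_growth(DRAM_UNIT_GROWTH, 0.0)

print(f"Implied DRAM revenue growth: {dram_growth:.1%}")
print(f"Growth from volume alone:    {volume_only:.1%}")
```

Under these assumed inputs, implied sequential DRAM revenue growth comes out at roughly 73%, of which only about 5 points would come from shipping more units. That is the pattern the analysts below are debating.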

Seeking Alpha’s Oliver Rodzianko highlighted this pattern. He noted that Micron currently faces greater supply limitations than demand constraints, and that DRAM and NAND market tightness should persist past 2026 based on company guidance. His apprehension doesn’t center on technological factors — rather, he questions how much of Micron’s profitability stems from price inflation versus sustainable structural advantages.

Should pricing revert to historical norms, profit margins could face pressure. Rodzianko additionally emphasized customer concentration concerns: Micron maintains heavy exposure to hyperscaler capital expenditure, meaning any slowdown in that deployment cycle would deliver swift and substantial stock impact.

Optimistic Voices Emphasize AI Infrastructure Growth

Analyst Dmytro Lebid offered a decidedly positive perspective. He attributed the decline to “irrational investor behavior” and suggested the market is overstating deceleration threats. From his vantage point, cloud providers’ hunger for HBM3E memory remains undiminished, while Micron’s supply-limited status preserves margin health.

Nvidia’s ongoing requirements alone should sustain growth momentum, he contended, establishing a solid foundation beneath Micron’s pricing structure.

The company is simultaneously expanding production capabilities across Idaho, Tongluo, and Singapore facilities stretching into 2027–2028 — representing a strategic commitment that AI-powered memory consumption will maintain its upward trajectory.

As of early April 2026, Micron traded near $366 per share, commanding a market capitalization approaching $413 billion within a 52-week trading band of $61.54 to $471.34.

Crypto World

Binance USDT Reserves Surge as BWCI Hits One-Year High Amid Bitcoin Sell-Off

TLDR:

- Binance USDT inflows are nine times higher than levels recorded during Bitcoin’s all-time high of $123K in June 2025.

- The BWCI reached a one-year record of 74.58%, confirming that institutional players are driving the current liquidity surge.

- Binance Open Interest climbed 2.22% to $6.17B, with USDT reserves acting as direct collateral for derivatives expansion.

- Bitcoin’s $54K downside risk persists unless ETF flows confirm the reversal and global risk aversion sentiment fully fades.

Binance is registering USDT inflows nine times higher than those seen at Bitcoin’s all-time high of $123,000 in June 2025.

Bitcoin trades at $66,990 as of this writing amid a geopolitical risk-off environment. On-chain data reveals a massive buildup of institutional liquidity on the platform. The Binance Whale Concentration Indicator, or BWCI, has reached a one-year high of 74.58%. This places Binance at the center of global digital dollar liquidity.

BWCI Signals Institutional Takeover on Binance

The BWCI measures liquidity quality by crossing inflow data with capital retention on the exchange. At Bitcoin’s June 2025 all-time high, the indicator registered just 8.25%, pointing to a retail-driven top.

Today’s reading of 74.58% confirms that large players are now absorbing panic liquidity. This marks a one-year record for institutional-grade capital concentration on the platform.

On-chain analyst GugaOnChain shared the data on social media, noting the scale of this divergence. The post stated that USDT flow is serving as direct collateral for Open Interest expansion. Open Interest on Binance rose 2.22% throughout the day, reaching a total of $6.17 billion.

USDT Exchange Reserves on Binance reached $3.4993 billion within a 24-hour window. Whales are deploying this capital to establish support levels in spot markets.

At the same time, they are directing derivatives activity using the same reserves. The BWCI confirms this flow is the direct engine behind the observed Open Interest growth.

This activity surpasses the flow recorded during the “Trump Tariff Flush” of April 9, 2025, when the indicator stood at 20.11%. The current BWCI reading of 74.58% places Binance above every other venue for deployable digital liquidity. Large players are consolidating a degree of order book control not seen in recent months.

ETF Flows and Macro Sentiment Remain Key Variables for Bitcoin

Despite the strong liquidity buildup on Binance, downside risk for Bitcoin has not disappeared. A move toward $54,000 remains possible if ETF flows fail to confirm a trend reversal.

On-chain data strength alone cannot guarantee a new macro expansion. Broader geopolitical uncertainty continues to weigh on overall market direction.

ETF flows serve as a bridge between traditional finance and the crypto market. Without their confirmation, Binance’s accumulation may not translate into a sustained recovery. Global risk aversion sentiment must fully exhaust itself before the bullish case can materialize.

The current market structure on Binance differs from what was seen in prior downturns. Institutional players are not retreating; they are positioning with clear precision and scale.

This behavior reflects a degree of confidence in Bitcoin’s longer-term trajectory. Short-term risks tied to macro conditions, however, remain present.

The BWCI at its one-year high confirms this is not a retail-driven accumulation phase. Smart money is making deliberate moves while fear remains elevated in the broader market.

Macro headwinds will ultimately determine whether this positioning pays off. Binance, for now, stands as the clearest measure of institutional intent in the global crypto market.

Crypto World

Trump Coins Rally Following Rumors of the President’s Health

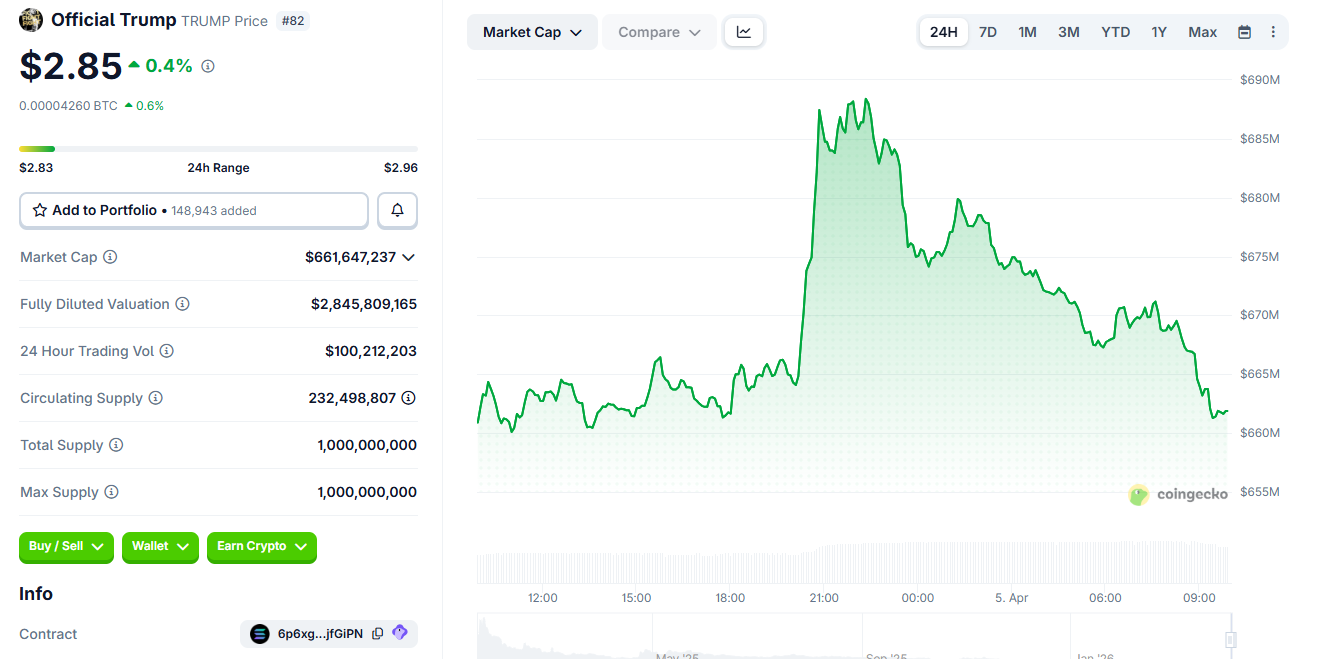

The meme coin Official TRUMP (TRUMP) surged on Saturday, April 4, 2026, amid rumors about President Donald Trump’s health.

An old video from 2024 (of the attempted attack in Butler, Pennsylvania) was shared again, and correspondents published images of a Marine sentry at the entrance to the West Wing.

The TRUMP Meme Coin Soars Amid Health Uncertainty

The rumors began after Donald Trump canceled his public appearances.

At the same time, rumors circulated that Trump had been rushed to Walter Reed National Military Medical Center following a medical emergency.

However, minutes later, presidential spokesman Steven Cheung slammed the news, indicating that Trump was still working.

“There has never been a president who has worked harder for the American people than President Trump. This Easter weekend, he worked nonstop at the White House and the Oval Office. God bless him,” Cheung said.

According to available information, Trump remained in Washington and was working at the White House and the Oval Office, without trips to his golf course or Walter Reed hospital.

Despite the denials, the TRUMP meme coin saw its price and trading volume rise over the past 24 hours.

After gaining nearly 5% in the immediate aftermath of the news, the TRUMP price was up by 0.5% on Sunday, trading at $2.85 as of this writing. Other Trump-themed meme coins also saw a sharp rally.

These types of speculative assets are highly sensitive to news related to the US president. The episode shows how rumors, even when debunked, can generate immediate movement in the political meme coin market.

Nevertheless, the TRUMP meme coin remains 96% below its all-time high of $73.43 recorded in January 2025.
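The drawdown figure can be reproduced from the two prices quoted in this article. The helper name below is illustrative.

```python
def drawdown_pct(current_price: float, peak_price: float) -> float:
    """Percentage decline of current_price from peak_price."""
    return (1 - current_price / peak_price) * 100


CURRENT_PRICE_USD = 2.85    # price quoted in the article
ALL_TIME_HIGH_USD = 73.43   # January 2025 peak quoted in the article

drawdown = drawdown_pct(CURRENT_PRICE_USD, ALL_TIME_HIGH_USD)
print(f"{drawdown:.1f}% below the all-time high")
```

This works out to just over 96%, in line with the figure cited above.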

The post Trump Coins Rally Following Rumors of the President’s Health appeared first on BeInCrypto.

Crypto World

Foxconn (2354.TW) Q1 Revenue Surges 30% Amid AI Server Boom and iPhone Sales

Key Highlights

- Hon Hai Precision’s first-quarter revenue climbed 29.7% annually to T$2.13 trillion (approximately $66.6 billion)

- Cloud and networking products division spearheaded expansion; smartphone manufacturing showed robust performance amid fresh Apple releases

- Monthly revenue for March reached an all-time high of T$803.7 billion, representing a 45.6% annual increase

- Management highlighted “volatile” geopolitical landscape, especially Middle East tensions, as a primary concern

- Shares have declined 16% since January, significantly trailing Taiwan’s benchmark index which gained 12%

Hon Hai Precision Industry — widely recognized as Foxconn — announced first-quarter revenue totaling T$2.13 trillion ($66.6 billion) this past Sunday, marking a 29.7% increase from the same period last year. The figure narrowly missed the LSEG SmartEstimate consensus of T$2.148 trillion.
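A few derived numbers help put the headline figure in context. The sketch below uses only the rounded values quoted above, so the results are approximate; the variable names are illustrative.

```python
# Rounded figures as quoted in the article.
REVENUE_TWD_TRILLION = 2.13       # reported Q1 revenue
YOY_GROWTH = 0.297                # 29.7% annual increase
CONSENSUS_TWD_TRILLION = 2.148    # LSEG SmartEstimate
REVENUE_USD_BILLION = 66.6        # US dollar equivalent

# Revenue in the same quarter a year earlier, implied by the growth rate.
prior_year_revenue = REVENUE_TWD_TRILLION / (1 + YOY_GROWTH)

# Size of the miss relative to the consensus estimate, in percent.
miss_pct = (REVENUE_TWD_TRILLION - CONSENSUS_TWD_TRILLION) / CONSENSUS_TWD_TRILLION * 100

# Exchange rate implied by the two revenue figures (T$ per US dollar).
implied_twd_per_usd = REVENUE_TWD_TRILLION * 1000 / REVENUE_USD_BILLION

print(f"Implied prior-year Q1 revenue: T${prior_year_revenue:.2f} trillion")
print(f"Miss versus consensus: {miss_pct:.2f}%")
print(f"Implied exchange rate: T${implied_twd_per_usd:.1f} per US$")
```

On these inputs the miss is under 1% of the estimate, which is why the article describes it as narrow.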

The primary catalyst behind this impressive performance was the cloud and networking products unit, which benefited from explosive growth in AI infrastructure requirements. As Nvidia’s principal server manufacturer, Foxconn has positioned itself at the center of the AI hardware revolution, and this strategic partnership continues to deliver substantial returns.

The smart consumer electronics division — home to iPhone assembly operations — similarly demonstrated robust expansion following the introduction of new Apple products. As one of Foxconn’s most critical clients, Apple’s product refresh cycles consistently generate significant revenue momentum for the manufacturing giant.

March delivered particularly impressive results. The company generated T$803.7 billion in revenue, setting a new record for the month and representing a 45.6% surge compared to the previous year. These are the types of figures that capture Wall Street’s attention.

AI Infrastructure Momentum Continues

Foxconn indicated that demand for AI rack systems should maintain its upward trajectory throughout the second quarter, with operational metrics expected to improve both sequentially and year-over-year. While the company refrained from issuing precise numerical targets — consistent with its typical practice — the overall tone remained decidedly optimistic.

Comprehensive first-quarter financial results are scheduled for release on May 14, which will provide investors with deeper insights into profit margins and overall profitability beyond the top-line revenue figures.

The ongoing expansion of AI infrastructure remains the fundamental growth driver. Hyperscale data center operators show no signs of reducing their capital expenditure, and Foxconn maintains a crucial position within this critical supply chain.

Geopolitical Concerns Shadow Outlook

Notwithstanding the impressive financial performance, company leadership adopted a measured approach regarding future prospects. Foxconn emphasized that it “remains necessary to monitor the impact of the volatile global political and economic situation,” though the statement lacked detailed elaboration.

Chairman Young Liu has previously singled out the Middle East conflict as the most significant external threat confronting the organization throughout 2025. Vulnerabilities in supply chain networks and international logistics present legitimate risks to sustained operations.

This cautious stance appears to be influencing investor sentiment. Hon Hai shares have tumbled 16% year-to-date, presenting a dramatic divergence from Taiwan’s primary stock index, which has appreciated 12% during the identical timeframe.

The stock finished Thursday’s trading session down 2% ahead of the revenue announcement, largely mirroring broader market movements. Taiwan’s financial markets were shuttered Friday and resume operations Tuesday.

Market participants will be watching whether the exceptional March performance, combined with persistent AI sector tailwinds, proves sufficient to reverse sentiment on a stock that has underperformed the broader market by nearly 30 percentage points so far this year.

Complete quarterly earnings arrive May 14.
