Crypto World

Stanford flags rising opacity at the frontier

The AI models at the frontier of performance are also the least transparent about how they are built and tested, according to Stanford HAI’s 2026 AI Index released Monday, which found that companies are sharing progressively less about training data and benchmark performance even as their models become more powerful and more widely deployed.

Summary

- Stanford’s report documents that AI companies are sharing less information about how their models are trained, and that independent testing sometimes contradicts what companies report; “a lot of companies are not releasing how their models do in certain benchmarks, particularly the responsible-AI benchmarks,” the report states, citing growing opacity at the exact moment when accountability matters most.

- The benchmarks designed to measure AI progress are themselves failing: some are poorly constructed, with a popular math benchmark carrying a 42 percent error rate, while others can be gamed by models trained on the benchmark test data itself, meaning strong scores do not reliably indicate stronger or safer models in real-world deployment.

- US trust in the government to regulate AI sits at just 31 percent, the lowest of any country surveyed in the index; globally, the EU is trusted more than either the US or China to regulate AI effectively, a finding that reflects both the EU AI Act’s full enforcement in January 2026 and the absence of a comparable federal framework in the US.

SiliconAngle reported that the 2026 index documents a world where AI adoption is accelerating at historic speed while “public trust in AI oversight and transparency hits new lows.” The two trends are directly related: as AI tools reach more than half the global population and generate $172 billion in annual consumer value in the US alone, the lack of visibility into how the most powerful models are built and evaluated creates a governance gap that neither regulators nor the public can easily close without the data to work from.

The benchmark problem is not abstract. If a model scores well because it was trained on test data, that score provides no meaningful signal about how the model will perform on novel tasks in deployment. For complex use cases like AI agents and robots, the report notes that benchmarks barely exist yet, meaning the most consequential AI applications are being deployed with almost no standardized external validation.

The opacity operates at multiple levels. At the training level, companies have reduced disclosure about the datasets, filtering methods, and human feedback processes used to build their models. At the evaluation level, they are choosing which benchmarks to publish results on, a selection that naturally favors the tests on which their models perform well. At the deployment level, independent researchers testing the same models sometimes find results that contradict what companies have publicly stated. The Stanford report does not name specific companies but documents the pattern as industry-wide.

Why This Matters More Now Than It Did Two Years Ago

Two years ago, frontier AI models were research tools used primarily by developers and researchers. Today they are integrated into customer service systems, hiring workflows, medical information delivery, financial advice, and legal research. The gap between benchmark performance and real-world performance is no longer an academic concern; it determines whether the systems that millions of people interact with daily are actually doing what their developers claim. The report finds that responsible-AI benchmarks are the category on which companies most often decline to publish results, and that is precisely the category that matters most for those real-world applications.

What Regulatory and Industry Standards Currently Exist

As crypto.news has reported, the AI infrastructure buildout is advancing faster than the governance structures designed to evaluate it, a tension that is visible in both investment markets and public policy debates. The outlet has also noted that competitive pressure among frontier AI labs to release capable models quickly creates structural incentives against transparency, because competitors can exploit published benchmark weaknesses or training methodology details. Stanford’s report frames that dynamic as the central accountability problem of the current AI era, with 47 countries now having introduced AI-specific legislation but only 23 having enacted laws with active enforcement mechanisms.


XRP slips below $1.40 on heavy volume, tightening range puts breakout in focus

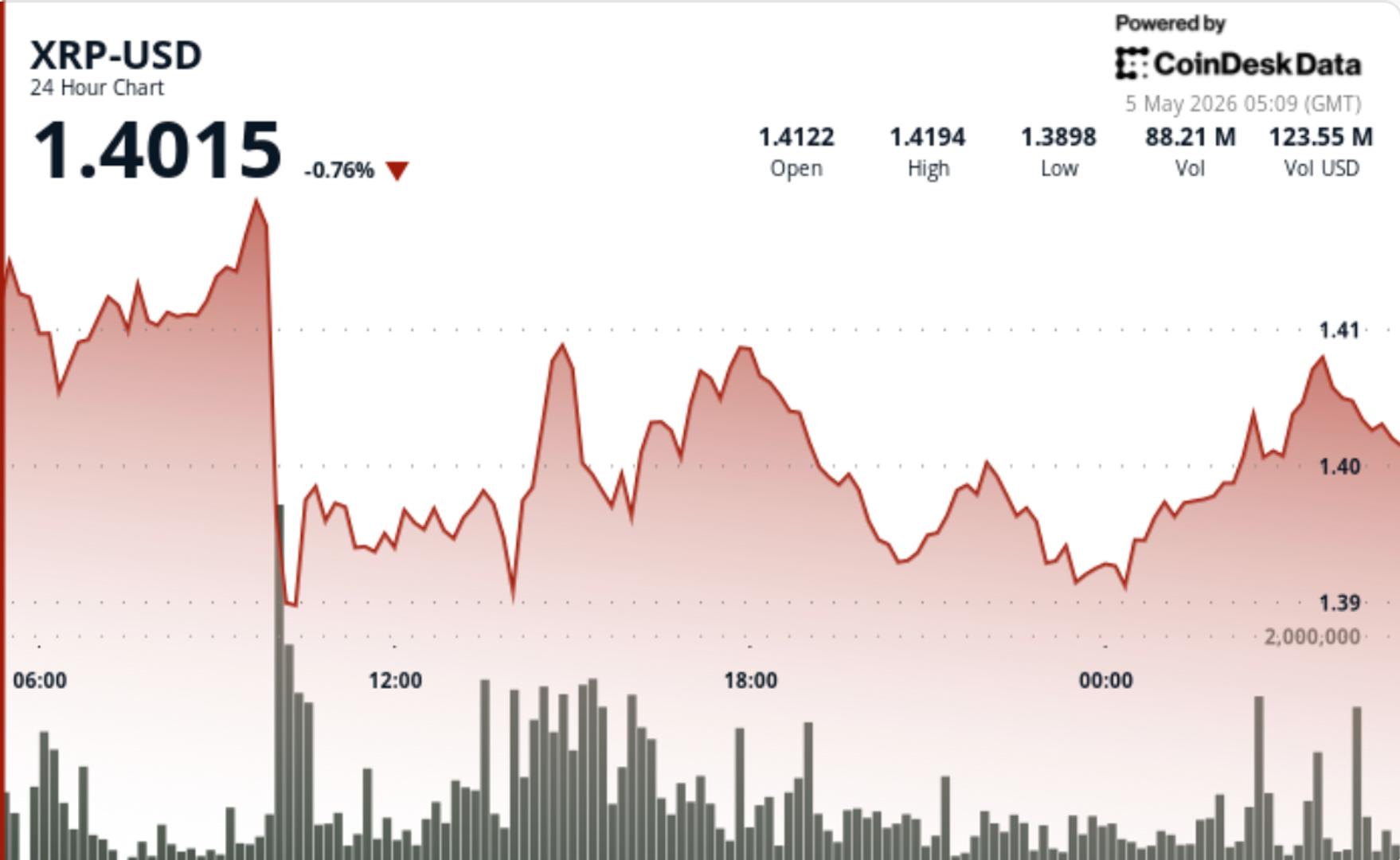

XRP slipped back under $1.40 after a high-volume break earlier in the session, but the lack of follow-through lower keeps price pinned in a tightening range where moves tend to build pressure rather than resolve it immediately.

News Background

• Broader crypto sentiment remained mixed, leaving XRP trading largely on technical structure rather than fresh catalysts.

• The market continues to rotate around key psychological levels, with $1.40 acting as a near-term pivot for positioning.

Price Action Summary

• XRP fell from $1.4109 to $1.3987, breaking below $1.40 on a 103M volume spike.

• Selling pushed price to $1.3865 before stabilizing into a narrow $1.3925–$1.4015 range.

• A late-hour push briefly reclaimed $1.40, but price failed to hold above the level into the close.

Technical Analysis

• The $1.40 level flipped from support to resistance after the breakdown, shifting short-term positioning.

• Volume was concentrated on the move lower, but faded during consolidation, suggesting selling pressure eased.

• Price is now compressing between $1.38 support and $1.41 resistance, with neither side in control.

• Momentum reset sharply during the recent drop, leaving room for expansion once direction resolves.

What traders should watch

• $1.40 remains the pivot. Reclaiming it shifts short-term bias back to upside.

• $1.41–$1.42 is the next resistance zone that needs to break for continuation.

• $1.38 is the floor. Losing it opens a move toward $1.34 and potentially $1.30.
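The levels in the list above can be written down as a small lookup, which makes the scenarios easier to compare. This is only a sketch in Python: the function name `short_term_read` and the returned labels are illustrative, and treating each level as a hard cutoff is a simplifying assumption, not a trading rule from the article.

```python
# Levels quoted in the article (USD).
FLOOR = 1.38
PIVOT = 1.40
RESISTANCE_TOP = 1.42


def short_term_read(price: float) -> str:
    """Map a price to the scenario the article attaches to that zone."""
    if price < FLOOR:
        return "floor lost: opens a move toward 1.34 and potentially 1.30"
    if price < PIVOT:
        return "below pivot: range-bound between floor and pivot"
    if price <= RESISTANCE_TOP:
        return "pivot reclaimed: bias shifts to upside, resistance zone not yet cleared"
    return "resistance cleared: continuation"


for p in (1.3987, 1.4050, 1.3700):
    print(p, "->", short_term_read(p))
```

With the session's closing area near $1.3987, the sketch lands in the "below pivot" case, which matches the article's description of a range with neither side in control.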


Palantir Shatters Records With 85% Q1 Revenue Surge, Raises FY26 Outlook

Palantir Technologies (PLTR) reported Q1 2026 revenue of $1.633 billion, up 85% year over year.

The result represents the company’s fastest growth rate and was accompanied by an upward revision of its full-year outlook.

Palantir Q1 Earnings: 85% Revenue Surge, FY26 Guide Hits $7.66 Billion

According to the firm’s Q1 2026 financial results, US revenue doubled to $1.282 billion, a 104% year-over-year increase. US commercial revenue exploded 133% year over year to $595 million.

The US government segment grew 84% to $687 million. GAAP net income hit $871 million on a 53% margin. Furthermore, total contract value reached $2.41 billion, up 61%, and the company closed 206 deals worth $1 million or more. The Rule of 40 score climbed to 145%.
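The reported figures can be cross-checked with a few lines of arithmetic. The Python sketch below uses only numbers quoted in this article; the variable names are illustrative. The Rule of 40 definition used here (revenue growth plus adjusted operating margin, both in percentage points) is the commonly cited one and is an assumption, since the article does not spell it out.

```python
# Figures quoted in the article (USD millions unless noted).
total_revenue = 1633.0
us_revenue = 1282.0
us_commercial = 595.0
us_government = 687.0
gaap_net_income = 871.0
revenue_growth_pct = 85.0
rule_of_40_pct = 145.0

# The two US segments should add up to total US revenue.
segment_sum = us_commercial + us_government

# GAAP net margin as a percentage of total revenue.
net_margin_pct = 100.0 * gaap_net_income / total_revenue

# ASSUMPTION: Rule of 40 = revenue growth % + adjusted operating margin %.
# Under that definition, the margin implied by the reported score is:
implied_adj_operating_margin_pct = rule_of_40_pct - revenue_growth_pct

print(f"US segments sum to ${segment_sum:,.0f}M (reported ${us_revenue:,.0f}M)")
print(f"GAAP net margin: {net_margin_pct:.1f}%")
print(f"Implied adjusted operating margin: {implied_adj_operating_margin_pct:.0f}%")
```

The segment totals reconcile with the reported US revenue, and the net margin rounds to the 53% quoted above.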


Chief Executive Alex Karp positioned Palantir alongside semiconductor giants powering the AI buildout.

“We have shattered the metric, a feat matched only by other fellow AI infrastructure companies: NVIDIA, Micron, and SK hynix,” Karp said.

Stock reaction stayed mixed. PLTR closed at $146.03, up 1.36%. However, shares slid 2.70% to $142.09 after hours. Overall, Palantir shares are down 17.8% in 2026.

The stock slump did not deter Karp from raising the bar. Citing accelerating US demand, the CEO lifted FY 2026 guidance to 71% growth, 10 points above the prior outlook.

“We are raising our revenue guidance to between $7.650 – $7.662 billion,” the press release read.

Palantir also lifted US commercial revenue guidance above $3.224 billion, implying annual growth of at least 120%. Adjusted operating income guidance moved to a range of $4.440 billion to $4.452 billion.

Adjusted free cash flow projections climbed to between $4.2 billion and $4.4 billion. In addition, the firm said it expects to deliver GAAP operating income and net income in every quarter of 2026.


The post Palantir Shatters Records With 85% Q1 Revenue Surge, Raises FY26 Outlook appeared first on BeInCrypto.


World Liberty Financial takes Justin Sun to court, what happened?

World Liberty Financial said it is filing a lawsuit against Tron founder Justin Sun for defamation. The project announced the case in a thread on X and accused Sun of running a media campaign against the WLFI token project.

Summary

- WLFI says Justin Sun defamed the project after tokens linked to his entities were frozen.

- Sun previously sued WLFI, claiming the project froze tokens and removed his governance rights unfairly.

- The dispute now includes competing lawsuits, blacklist claims, governance concerns, and public online defamation allegations.

WLFI claimed Sun spread false statements after the project froze tokens linked to his entities. The team said Sun refused to stop after it challenged his claims. It also alleged that his comments aimed to damage the project’s reputation and token value.

According to WLFI, Sun’s entity, Blue Anthem, bought WLFI tokens in November 2024. The project later said Sun-linked entities carried out prohibited transactions, including transfers of WLFI tokens to Binance.

WLFI said it used its right to freeze the tokens to protect the ecosystem. The project stated that the freeze function was allowed under its Terms of Sale and Sun’s own agreements. It also said the governance process remains transparent and community-based.

WLFI rejects claims over governance and controls

The project said Sun accused it of adding backdoors, harming governance, and treating holders unfairly. WLFI denied those claims and said Sun used public posts, influencers, and bot activity to spread his position.

WLFI wrote that Sun called its governance a “scam” and accused the project of treating the community as an “ATM.” The project said those claims were false and damaging. It also said the dispute raises wider questions about trust in decentralized finance.

Sun had already sued World Liberty

The new lawsuit follows earlier legal action from Sun against World Liberty Financial. As we reported in April, Sun said he filed a case in a California federal court after the project allegedly froze his WLFI tokens and blocked his governance voting rights.

Sun said the freeze removed his ability to vote and threatened his holdings. He stated, “They wrongfully froze all of my tokens, stripped me of my right to vote on governance proposals, and have threatened to permanently destroy my tokens by ‘burning’ them.”

The dispute also grew after Sun claimed WLFI contracts included an undisclosed blacklisting function. He alleged that the function could “freeze, restrict, and effectively confiscate” investor tokens. World Liberty rejected the claim and warned that legal action could follow.

Sun has said his lawsuit does not change his support for President Donald Trump or the administration’s crypto policy. He said his complaint targets individuals linked to the project, not Trump himself.


DTCC lines up 50 giants for tokenized securities launch

The Depository Trust & Clearing Corporation plans to start limited production trades of tokenized securities in July 2026.

Summary

- DTCC plans July tokenized securities pilots before targeting a full service launch in October 2026.

- Over 50 TradFi and DeFi firms joined DTCC’s working group, including BlackRock, Circle and Ondo.

- Initial tokenized assets may include major index ETFs, Russell 1000 stocks and U.S. Treasury securities.

The post-trade market infrastructure group aims to launch the full DTC tokenization service in October 2026.

DTCC said the service will cover real-world assets held in DTC custody. The firm said tokenized assets should carry the same rights, investor protections and ownership claims as securities held in traditional form. DTC currently provides custody and asset servicing for more than $114 trillion in securities.

Wall Street and DeFi firms join design work

The DTCC Industry Working Group includes more than 50 firms from traditional finance and crypto. The list includes BlackRock, Goldman Sachs, J.P. Morgan, Morgan Stanley, Bank of America, Circle, Fireblocks, Robinhood, Ondo Finance, Ripple Prime, NYSE Group, Nasdaq and Payward, Kraken’s parent company.

The group brings together asset managers, banks, trading venues, custodians, brokers and blockchain service providers. DTCC said it will use their feedback to test technical workflows, market readiness and the use of tokenized assets in a live production setting.

Additionally, DTC received a no-action letter from the U.S. Securities and Exchange Commission in December 2025. The letter allows DTC to offer a defined tokenization service for DTC participants and their clients for three years.

The approval covers a set of highly liquid assets. These include Russell 1000 constituents, ETFs that track major indexes, U.S. Treasury bills, Treasury notes and Treasury bonds. The July phase will remain limited as DTCC tests operations before the planned October launch.

Tokenization push moves closer to core markets

DTCC President and CEO Frank La Salla said, “Our vision is coming to fruition.” Brian Steele, DTCC managing director and president of clearing and securities services, said the service is “designed to provide systemic scale where deep liquidity already lives.”

The plan comes as tokenized real-world assets keep drawing attention from banks, asset managers and crypto firms. RWA.xyz data showed tokenized stocks rising from $375.4 million in May 2025 to about $1.21 billion in May 2026.

Ondo Finance’s role adds another crypto-focused participant to the working group. A crypto.news report said DTCC had selected Ondo alongside BlackRock, Goldman Sachs, J.P. Morgan, Circle and other firms to help shape how equities and Treasuries move on-chain.

DTCC is not building a separate crypto market. Its stated plan keeps custody, ownership rights and investor protections tied to existing securities infrastructure. The October target now gives banks, brokers and tokenization firms a clear schedule to test whether blockchain-based settlement can fit within U.S. market rules before the service moves beyond its trial stage.


Ripple Just Made It Harder for North Korea to Hide Inside Crypto Firms

Ripple is now contributing exclusive threat intelligence on DPRK (Democratic People’s Republic of Korea) cyber actors to Crypto ISAC, a nonprofit organization that helps crypto companies share security information and defend against cyber threats targeting digital assets.

The intelligence covers domains, wallets, and indicators of compromise from active DPRK hack campaigns. It also includes enriched profiles of suspected North Korean IT workers trying to embed themselves inside crypto firms.

Drift Hack Triggered Industry Reckoning

The Drift hack served as a wake-up call for the sector. Attackers spent months building trust with Drift contributors. They later deployed malicious software that compromised devices and bypassed traditional indicators of compromise.

The intruders manipulated individuals to seize control of multisig wallets and steal funds.

The same pattern has appeared at crypto and traditional financial firms. North Korean threat actors are operating from inside organizations rather than relying on smart contract exploits.

Crypto ISAC characterized the campaign as social engineering at a new level. The organization also raised the central question of how to detect an attacker who appears to be a trusted partner.

Inside the DPRK Threat Intelligence Feed

The contributed data ranges from fraudulent domains and wallets to indicators of compromise from active DPRK operations.

Each profile of a suspected DPRK worker includes a LinkedIn account, an email, a location, and a contact number. The data also captures signals tying that individual to a wider campaign.

Ripple, Coinbase, and other Founding Members are integrating the data through Crypto ISAC’s new API. The system normalizes indicators across Web2 and Web3 environments and feeds directly into member security operations.
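As a rough illustration of what normalizing indicators from Web2 and Web3 sources might involve, consider the Python sketch below. Everything in it is hypothetical: the `Indicator` record, its field names, the `normalize` helper and the normalization rules are assumptions made for this example, not Crypto ISAC's actual API or schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Indicator:
    kind: str          # e.g. "domain" or "wallet" (hypothetical categories)
    value: str         # canonical form of the indicator
    confidence: float  # 0.0 to 1.0, as assessed by the contributor
    context: str       # free-text note tying the indicator to a campaign


def normalize(kind: str, raw: str, confidence: float, context: str) -> Indicator:
    """Return a canonical Indicator so the same item from two members compares equal."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    value = raw.strip()
    if kind == "domain":
        # Domain names are case-insensitive; drop a trailing root dot.
        value = value.lower().rstrip(".")
    elif kind == "wallet" and value.lower().startswith("0x"):
        # Hex-style addresses: compare case-insensitively.
        value = value.lower()
    return Indicator(kind, value, confidence, context.strip())


# Placeholder values, not real indicators.
a = normalize("domain", " Example-Hiring.COM. ", 0.8, "fake recruiter site")
b = normalize("domain", "example-hiring.com", 0.8, "fake recruiter site")
print(a == b)
```

Carrying `confidence` and `context` alongside the value is one way a shared record could retain more than the bare indicator when it moves between member firms.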

“For too long, information sharing was seen as optional. Today, it is the gold standard for security,” said Justine Bone, Executive Director of Crypto ISAC.

Why Collective Defense Matters

A threat actor who fails one company’s background check often applies to three more firms the same week. Crypto ISAC says that without shared intelligence, every defender facing Lazarus tactics starts from zero.

Jeff Lunglhofer, Coinbase Chief Information Security Officer, said the data model preserves context and confidence rather than sharing only raw indicators.

The model still has to scale across more member firms. Whether it outpaces incidents like the Kraken infiltration attempt will depend on adoption.

Ripple’s contribution builds on the company’s broader security push. The move signals a shift toward shared defense in the digital asset industry. The coming months should reveal whether other major exchanges and protocols follow suit.

The post Ripple Just Made It Harder for North Korea to Hide Inside Crypto Firms appeared first on BeInCrypto.


SOL Strategies buys privacy-focused cross-chain aggregator HoudiniSwap for $18M

SOL Strategies is acquiring privacy-focused cross-chain aggregator HoudiniSwap for $18M in cash, notes, and stock as it builds an institutional Solana treasury and routing stack.

Summary

- Nasdaq-listed Solana treasury firm SOL Strategies has agreed to acquire non-custodial cross-chain aggregator HoudiniSwap in a deal valued at $18 million.

- The consideration includes $8.25 million in cash, $5.75 million in six-month notes, and $4 million in STKE stock, priced off a 90-day VWAP.

- HoudiniSwap, which focuses on privacy-preserving cross-chain swaps and routing across CEXs, DEXs, and bridges, generated about $13 million in revenue last year.

According to reporting from The Block, SOL Strategies has signed a definitive agreement to acquire HoudiniSwap for $18 million as it continues to build out its Solana-centric infrastructure and services stack.

Cash, notes, and stock fund HoudiniSwap takeover

Deal terms include $8.25 million in cash, $5.75 million in six‑month promissory notes, and $4 million in SOL Strategies’ own STKE shares, with the equity component calculated using the volume‑weighted average STKE price over the 90 trading days before closing.
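To make the equity component concrete, here is a minimal Python sketch of a volume-weighted average price (VWAP) and the share count it implies for a fixed dollar amount. The helper names and the three-day price and volume sample are hypothetical. The article does not say whether the 90-day figure is built from daily closes or from individual trades, so the per-day inputs here are an assumption.

```python
from typing import Sequence


def vwap(prices: Sequence[float], volumes: Sequence[float]) -> float:
    """Volume-weighted average price: sum(price * volume) / sum(volume)."""
    if len(prices) != len(volumes):
        raise ValueError("prices and volumes must have the same length")
    total_volume = sum(volumes)
    if total_volume <= 0:
        raise ValueError("total volume must be positive")
    return sum(p * v for p, v in zip(prices, volumes)) / total_volume


def shares_for_consideration(equity_value: float, reference_price: float) -> float:
    """Number of shares needed to deliver equity_value at reference_price."""
    return equity_value / reference_price


# Deal components from the article (USD millions); they should total 18.
deal_total = 8.25 + 5.75 + 4.0

# HYPOTHETICAL three-day sample; the actual deal uses 90 trading days.
sample_prices = [2.00, 2.50, 3.00]
sample_volumes = [100_000, 200_000, 100_000]

reference = vwap(sample_prices, sample_volumes)
shares = shares_for_consideration(4_000_000, reference)
print(f"Deal total: ${deal_total}M, VWAP: {reference:.2f}, shares issued: {shares:,.0f}")
```

Because the share count is the $4 million equity value divided by the VWAP, a lower average price before closing would mean more STKE shares issued, and a higher one fewer.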

SOL Strategies, which trades on Nasdaq under the ticker STKE and on the Canadian Securities Exchange as HODL, describes itself as an institutional Solana validator and treasury platform with roughly $94 million worth of SOL in its own holdings as of late 2025.

The company has previously used acquisitions and structured financing to expand its footprint, including buying Laine, one of Solana’s largest independent validators, and securing up to $500 million in capital commitments to purchase and stake SOL on behalf of institutional clients.

Privacy-focused cross-chain routing comes into a listed vehicle

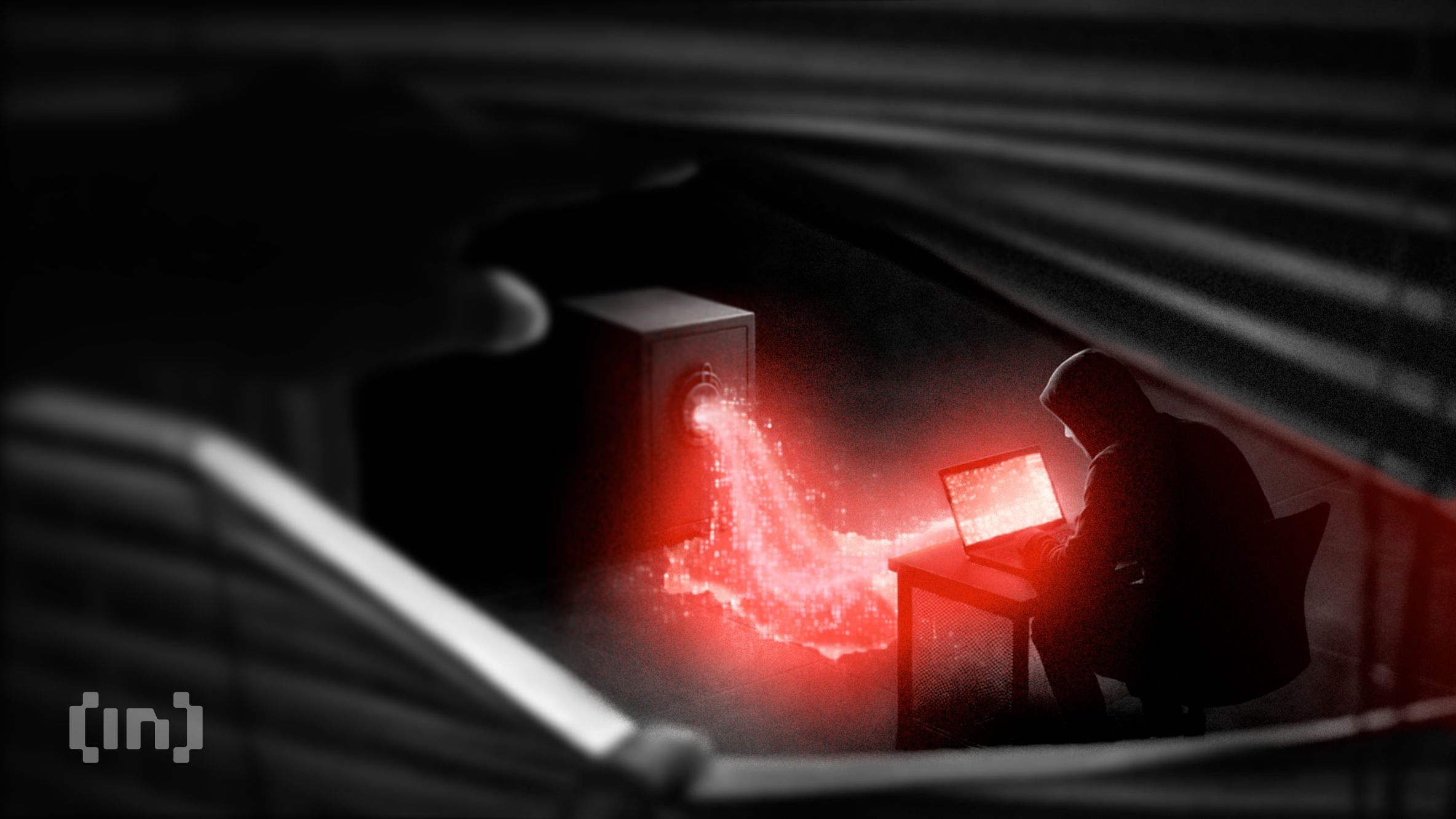

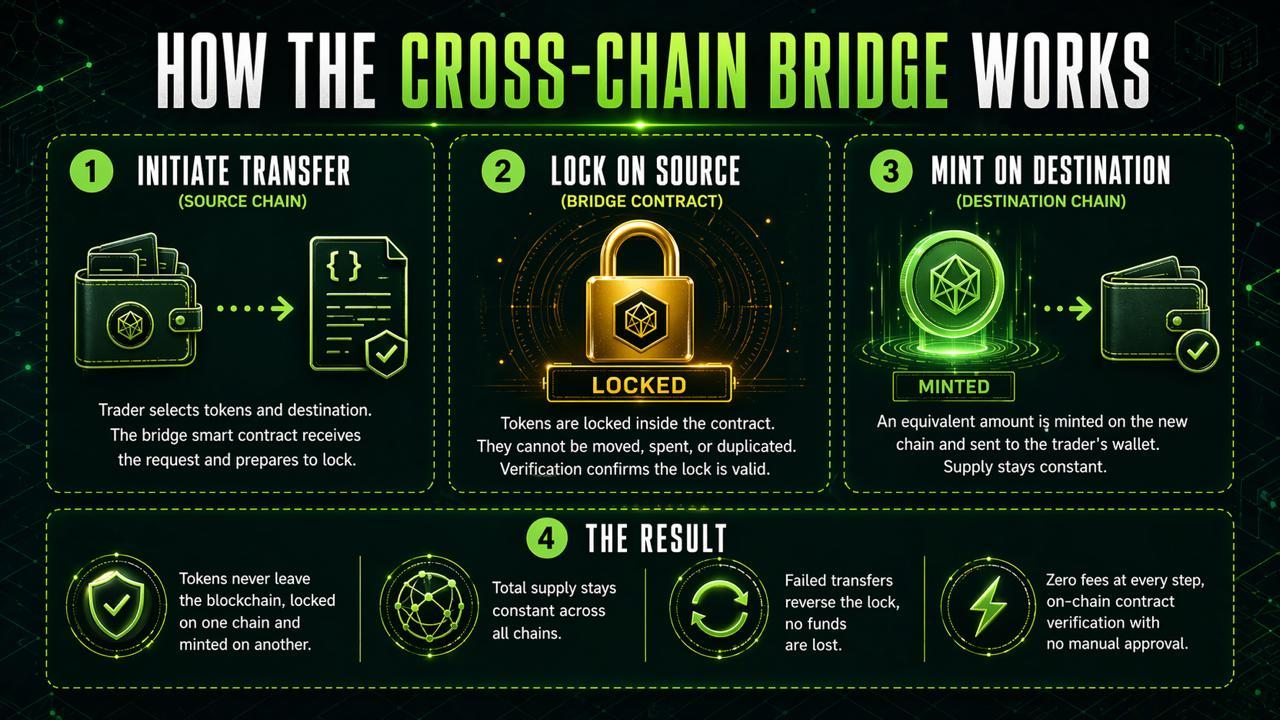

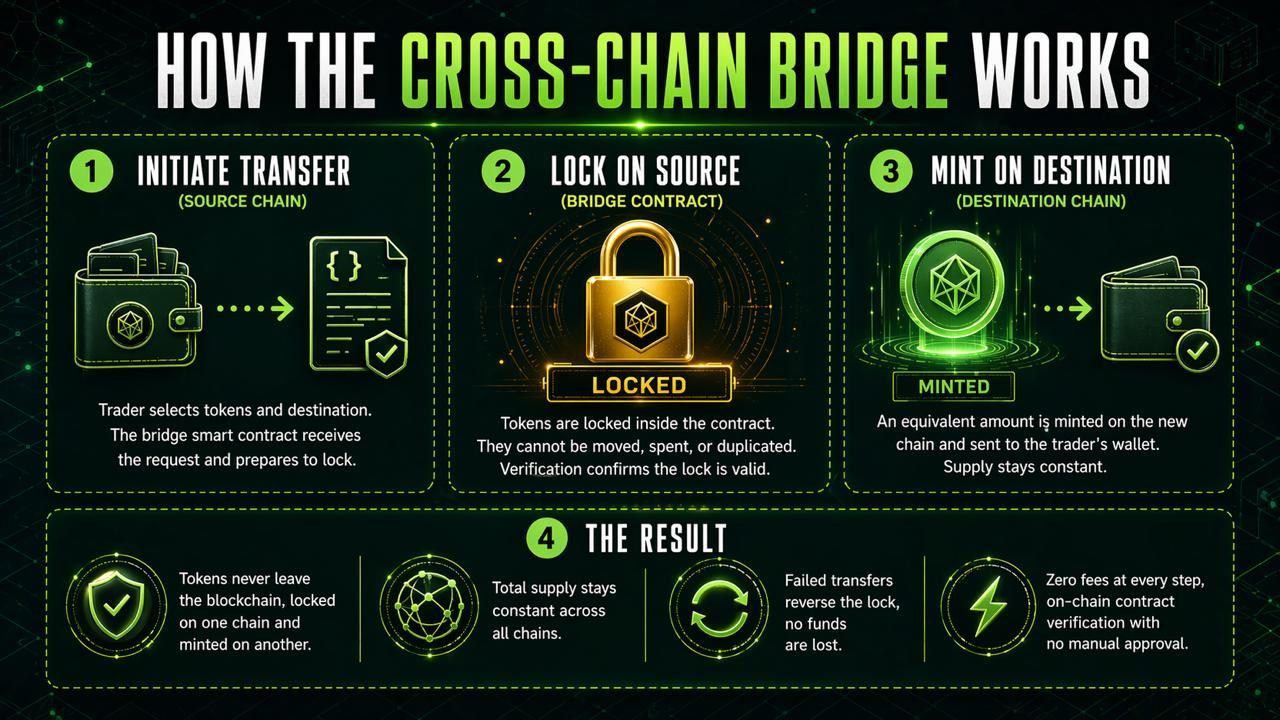

HoudiniSwap is a non‑custodial, privacy‑focused cross‑chain swap and aggregation platform that lets users route trades privately across centralized and decentralized exchanges as well as blockchain bridges.

The service uses Monero as a “tunnel” asset, breaking the visible on‑chain link between a sender wallet and a recipient wallet by moving funds into XMR and back out into a target asset, making it significantly harder for analytics firms to trace flows end‑to‑end.

Documentation and marketing materials stress that HoudiniSwap “does not take custody of, store, transmit, or route user funds” but instead acts as a liquidity aggregator and conduit between vetted exchanges and bridges, positioning the product as a compliant alternative to illicit mixers.

According to figures cited around the acquisition, HoudiniSwap generated roughly $13 million in revenue over the past year, off the back of rising demand for private, cross‑chain swaps across more than 100 supported networks and assets.

In a recent crypto.news overview, SOL Strategies’ public‑market strategy was described as aggregating Solana infrastructure, validators, and adjacent tooling into a single listed vehicle for institutions.

Another crypto.news analysis detailed how the firm’s $500 million staking facility is intended to turn SOL into “a yield‑bearing treasury reserve asset,” a plan that could now intersect with cross‑chain liquidity from HoudiniSwap.

A separate crypto.news feature on SOL Strategies’ validator and treasury platform noted that the company sees M&A as a “core growth lever,” with privacy‑preserving routing and cross‑chain tools identified as strategic gaps — niches this $18 million HoudiniSwap deal is now set to fill.


Top 3 Crypto Presales 2026: Whale Wallets Buy 270,000 BTC in 30 Days While Pepeto Targets 100x Before Listing

The top 3 crypto presales 2026 search keeps growing now that whale wallets bought 270,000 BTC in 30 days, the biggest monthly total since 2013 according to CoinDesk.

One well-timed position can change a whole financial future, and Bitcoin exchange reserves just dropped to a seven year low. While Bitcoin Hyper and IPO Genie draw early attention, Pepeto has passed $9.78 million with a working exchange and an approaching Binance listing where analysts project 100x.

Top 3 Crypto Presales 2026 Search Grows as Whale Accumulation Hits 13 Year Record

Whale wallets holding 1,000 or more BTC added 270,000 coins in April alone, beating every monthly total going back to 2013 per CoinDesk. Bitcoin exchange reserves fell to levels not seen since December 2017, meaning available supply on trading platforms is at a seven year low.

BTC holds $78,370 with the Fear and Greed Index at 39, a zone where large holders have historically started building positions before the next major move.

The search for the top 3 crypto presales 2026 grows during these conditions because capital flowing into presales during fear signals real commitment. The wallets entering now will collect when recovery arrives, and every prior cycle rewarded the positions built during exactly this stage of market sentiment.

Presale Leaders and the Tokens Building Recovery Returns

Pepeto: Leading the Top 3 Crypto Presales 2026

270,000 BTC bought by whale wallets in 30 days proves that large holders see something ahead, and the $9.78 million inside Pepeto proves smaller wallets see the same signal at the presale level. The exchange is not a promise on a roadmap. It is live. PepetoSwap handles trades at zero fees, so a $10,000 position stays at $10,000 from the moment it opens.

Fear brings scams, and manual research cannot keep up when hundreds of new tokens launch daily across every chain. The contract scanner reads each one on chain and catches drain traps before a buyer ever confirms. Meanwhile, 175% APY staking pulls tokens off the market daily, thinning the available supply that will face a wave of demand once the expected Binance listing brings a fresh audience. Fewer coins available and more buyers arriving is the force that separates the earliest positions from everyone who comes after.

The person behind the original Pepe built a token worth $11 billion without a single working product. Pepeto has a working exchange, a contract scanner, a cross chain bridge, and SolidProof verified contracts, all at a presale price of $0.0000001868. A former Binance infrastructure specialist handles the listing preparation.

That is why Pepeto leads the top 3 crypto presales 2026 search. The presale price is temporary. One day of trading on Binance replaces it, and the 100x analysts project is the return that early Pepe holders wish they had accessed with working tools behind it.

Bitcoin Hyper: Presale Active but Infrastructure Missing

Bitcoin Hyper calls itself a Bitcoin Layer 2 scaling solution, but nothing beyond the concept exists today. No live product, no exchange, no audit from a recognized firm, and no listing confirmed on any major platform.

The gap between a roadmap and a working product is where most presales fail, and Bitcoin Hyper has not crossed that gap. The timeline for delivery depends fully on future execution with no verifiable infrastructure running at present.

IPO Genie: Early Stage With No Exchange Layer

IPO Genie aims to tokenize access to pre-IPO deals, but the entire model depends on regulatory approvals that do not exist yet. No trading platform is live, no bridge connects external networks, and no audit from a firm like SolidProof backs the contracts.

Until working infrastructure arrives, IPO Genie is a bet on future delivery with no verified tools operating behind it today.

Conclusion:

Bitcoin Hyper has no product and IPO Genie has no exchange, but Pepeto has both plus a SolidProof audit and a Binance listing approaching, which is why the top 3 crypto presales 2026 search keeps returning to the same answer.

Pepe turned presale pricing into billions, and the same person now runs a project with working tools that the original never carried.

The Pepeto official website shows the presale entry that one day of Binance trading will remove from the table. Every cycle produces a handful of positions that define the returns for years, and the decision to enter during fear at $0.0000001868 or wait until after the listing is the only choice left.


FAQs

What are the top 3 crypto presales 2026?

Pepeto leads the top 3 crypto presales 2026 with $9.78 million raised, a live zero fee exchange, and an approaching Binance listing targeting 100x from presale pricing.

Why does the top 3 crypto presales 2026 search favor Pepeto over competitors?

Pepeto runs a live exchange with SolidProof verified contracts and the Pepe builder behind it. Bitcoin Hyper and IPO Genie lack working infrastructure and confirmed listing support.

Disclaimer: This is a Press Release provided by a third party who is responsible for the content. Please conduct your own research before taking any action based on the content.


HSBC first-quarter pre-tax profit misses estimates on wider-than-expected credit losses

Europe’s largest lender HSBC on Tuesday reported first-quarter pre-tax profit of $9.4 billion, marginally missing analysts’ estimates on the back of larger-than-expected credit losses and other impairment charges.

HSBC’s revenue gained 6%, year on year, exceeding estimates.

Here are HSBC’s first-quarter results compared with the consensus estimates compiled by the bank.

- Pre-tax profit: $9.4 billion vs. $9.59 billion

- Revenue: $18.6 billion vs. $18.49 billion

The lender’s first-quarter profit before tax fell to $9.4 billion, down from $9.5 billion a year earlier.

“We remain on track to have taken actions to deliver our $1.5bn annualised cost reduction by the end of June 2026,” the bank said in its statement. “Through the privatisation of Hang Seng Bank, we expect to realise $0.5bn in pre-tax revenue and cost synergies across both our brands in Hong Kong by the end of 2028.”

HSBC completed the privatization of Hang Seng Bank on Jan. 26, with the latter’s shares subsequently delisted from the Hong Kong Stock Exchange.

The bank highlighted risks due to the Middle East conflict, including higher oil prices, sharper inflation and a significant slowdown in GDP, warning that if those factors came into play there could be a “mid-to-high single digit percentage” negative impact on its profit before tax.

While HSBC maintained its targeted return on tangible equity — a measure of profitability — of 17%, it warned that should the adverse impact from the Middle East crisis materialize, it could bring RoTE, excluding notable items, below 17% in 2026.

The HSBC board approved its first interim dividend for 2026 of 10 cents per share.


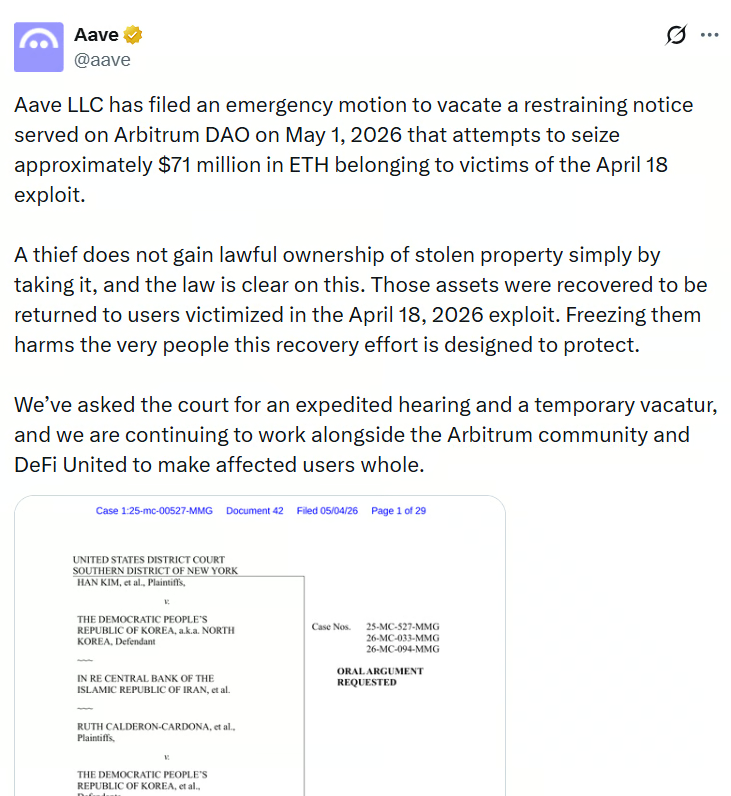

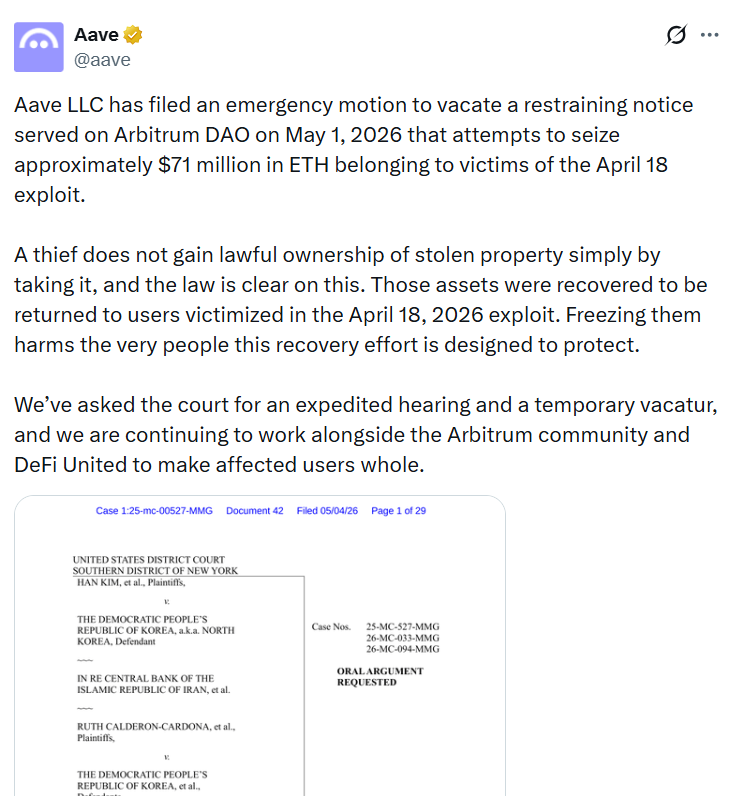

Aave Challenges Law Firm’s Freeze on Kelp Exploit Ether

Decentralized finance protocol Aave filed an emergency motion on Monday in New York to vacate a restraining notice from a US law firm aimed at blocking Arbitrum DAO from transferring 30,766 Ether to the victims of the Kelp exploit.

Gerstein Harrow LLP served Arbitrum DAO with a restraining notice on Friday, arguing its clients are owed over $877 million in default judgments against North Korea. The law firm claims the North Korean hacker group behind the Kelp exploit had possession of the tokens, giving its clients a legal claim over the Ether.

Aave filed the emergency motion in a New York district court, arguing that a thief doesn’t gain lawful ownership of property by stealing it. It also argued that North Korea is only suspected of being part of the theft, and that the law firm’s argument “defies logic, common sense and the law.”

The Arbitrum DAO has been voting on whether to release the Ether to assist DeFi United, an industrywide coordination effort to make rsETH holders whole and help restore rsETH’s backing following the $292 million Kelp DAO hack on April 18. Voting ends May 7.

Source: Aave

Delay will cause “irreparable harm” to Aave, crypto ecosystem

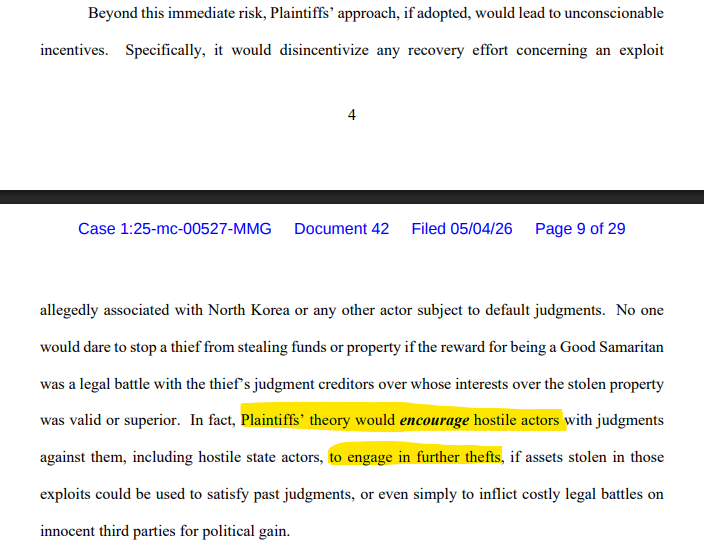

Aave argued that if the court upholds Gerstein Harrow’s notice, it could deter future recovery efforts for North Korea-related hacks because of the possibility of additional legal challenges to recover funds. It further argued that it could incentivize bad actors to target more crypto protocols.

Aave’s lawyers also warned that the delay is causing “irreparable harm” to the protocol, its users and the wider DeFi community, “none of which can be later cured by monetary damages.”

“If the immobilized assets remain subject to a freeze and are not made available to restore value to Aave protocol users, the entire DeFi ecosystem risks being destabilized,” Aave’s lawyers said.

“While Aave protocol users cannot retrieve their assets from the Aave protocol, if those assets were being used for collateral for other positions elsewhere then continued restraint on the immobilized assets may render those users unable to meet their related collateral obligations.”

Aave said that if a court upholds Gerstein Harrow’s notice, it could incentivize bad actors to target more crypto protocols. Source: CourtListener

They further argued against Gerstein Harrow’s claim that its clients have a right to the frozen Ether and also said the case is based on unsupported conjecture that the thief is North Korea.

“Plaintiffs in this case showed up, contending – based on conjecture from posts on the internet – that the thief was North Korea, and that by stealing the assets for a few hours, North Korea somehow became the rightful owner of those assets such that Plaintiffs here could restrain them for their own purposes,” lawyers for Aave said.

“The immobilized assets do not belong to North Korea or any affiliated entities. Instead, the immobilized assets belong to the users of the Aave protocol who were victimized when a third-party thief effectively stole their assets during a cyber exploit April 18, 2026.”


If the court can’t immediately vacate the notice, Aave’s lawyers are requesting that Gerstein Harrow pay a $300 million bond to maintain the restraining notice until a decision is reached.

A judge hasn’t ruled on the emergency motion yet, and a hearing date hasn’t been scheduled.

Gerstein Harrow has filed similar cases in the past, arguing its clients have a claim to funds stolen by North Korea and frozen by crypto firms, including assets from the 2023 Heco Bridge hack and the 2025 Bybit exploit.



GameStop Stock Drops as Michael Burry Dumps Stake on eBay Bid

GameStop (GME) shares dropped after “The Big Short” investor Michael Burry sold his entire stake.

The investor announced the move in a post on his Substack. He said the GameStop sale is his first divestment since launching the blog.


Michael Burry Exits Entire GameStop Stake

GameStop stock ended Monday at $23.84, down 10.14%. GME continued lower in after-hours trading, dipping 1.22% to $23.55, Google Finance data shows.

Burry’s exit followed GameStop’s non-binding $55.5 billion proposal to acquire e-commerce platform eBay at $125 per share. The offer splits the payment evenly between cash and stock, with Ryan Cohen taking the chief executive role at the combined retailer.

The investor first disclosed his GameStop position in January. However, Cohen’s acquisition push prompted him to walk away.

“I may not last the week with my GameStop position fully intact,” he wrote in a note. “I will certainly sell to an extent, perhaps all or some, but alas, no, not none.”

Burry wrote that his Berkshire Hathaway-style blueprint for GameStop was incompatible with the leverage Cohen needs to close the eBay deal.


The post GameStop Stock Drops as Michael Burry Dumps Stake on eBay Bid appeared first on BeInCrypto.

-

Business6 days ago

Business6 days agoMost Commercial Energy Audits Miss the Real Losses

-

Fashion6 days ago

Fashion6 days agoKylie Jenner’s KHY Enters a New Era with ‘Born in LA’

-

NewsBeat1 day ago

NewsBeat1 day agoChannel 5 – All Creatures Great and Small series 7 new post

-

Tech3 days ago

Tech3 days agoTrump’s 25% EU auto tariff breaches Turnberry Agreement that also covers semiconductors and digital trade

-

Sports3 days ago

Sports3 days agoPaul Scholes issues Marcus Rashford reality check as agreement emerges over Man United star

-

Crypto World7 days ago

Crypto World7 days agoCFTC’s AI will review U.S. crypto registration applications, chairman tells CoinDesk

-

Business6 days ago

Business6 days agoBarclay Brothers Avoid Bankruptcy: HSBC Drops High Court Petitions After IVA Deal

-

Business5 days ago

Business5 days agoTesla Officially Registers Elon Musk’s Stock: What Investors Need to Know

-

Crypto World7 days ago

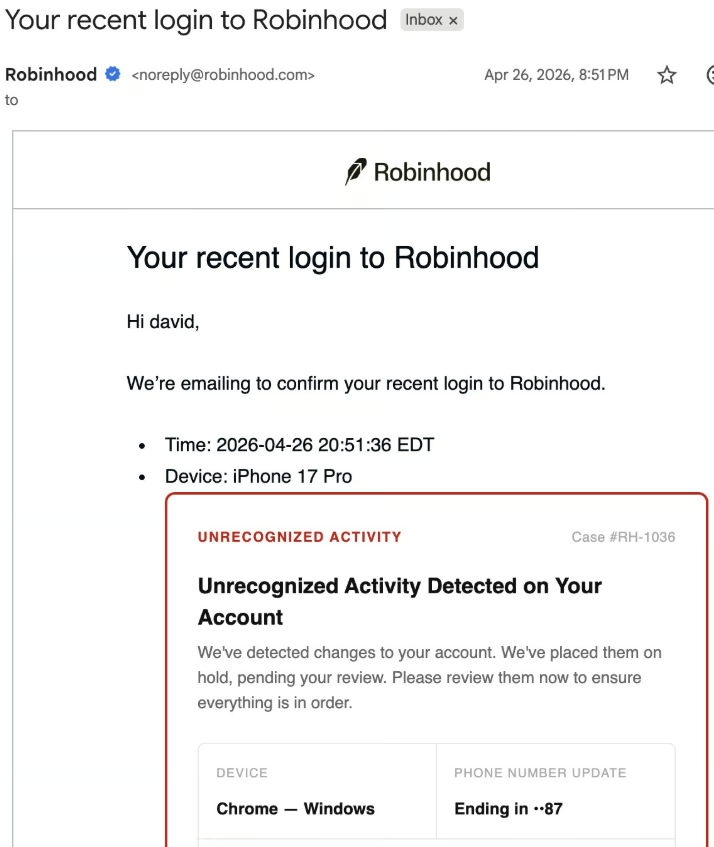

Crypto World7 days agoRobinhood Phishing Scam Exploits Gmail Dot Feature to Bypass Security

-

Tech7 days ago

Tech7 days agoGet Ready for More Brain-Scanning Consumer Gadgets

-

Crypto World7 days ago

Crypto World7 days agoGmail Dot Trick Underpins Robinhood Phishing, Sending Real-Looking Emails

-

Business4 days ago

Business4 days agoTwo Powerball Tickets Split $143 Million Jackpot in Indiana and Kansas

-

Tech5 days ago

Tech5 days agoTexas Instruments made a new flagship graphing calculator: the TI-84 Evo

-

Crypto World4 days ago

CoreWeave (CRWV) Stock Climbs 8% Despite $45M Insider Share Dump

-

Business2 days ago

Business2 days agoWinning Numbers Drawn as Jackpot Resets to $20 Million

-

Tech7 days ago

Tech7 days agoRobinhood account creation flaw abused to send phishing emails

-

Crypto World7 days ago

Crypto World7 days agoRobinhood Phishing Scam Uses Gmail Dot Trick to Send Real Emails

-

Crypto World7 days ago

Crypto World7 days agoSolana Clients Introduce Post-Quantum Solution Falcon

-

Crypto World5 days ago

Crypto World5 days agoSecuritize and Computershare Enable Tokenized Equity Issuance for Over 25,000 U.S.-Listed Stocks

-

Crypto World5 days ago

Crypto World5 days agoGibraltar Proposes Tokenized Funds Regulation to Bolster Compliance

You must be logged in to post a comment Login