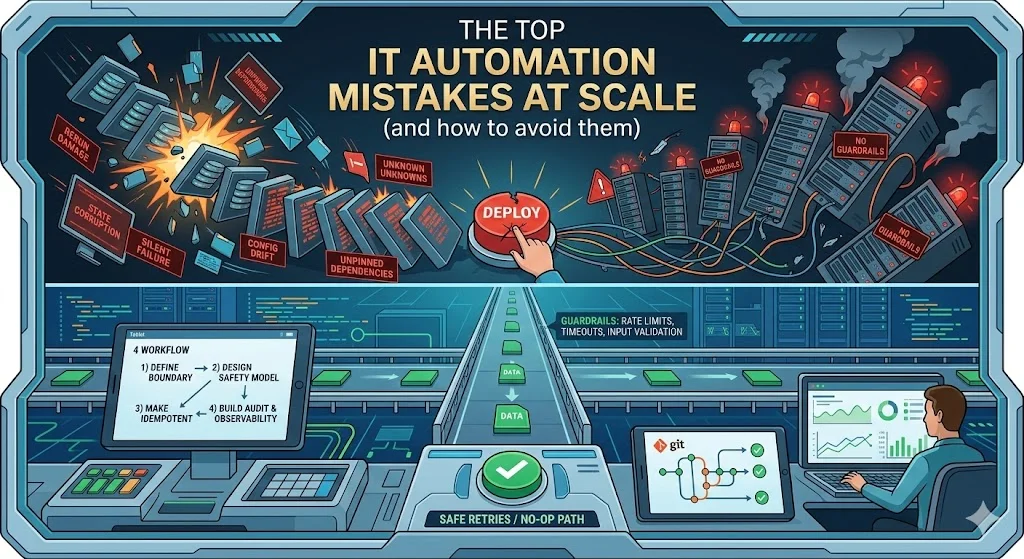

IT automation is supposed to reduce risk, speed delivery, and shrink operational overhead—but in real environments, it can also amplify mistakes, spread misconfigurations faster, and create “unknown unknowns” at scale.

This guide focuses on the failure patterns that hit intermediate-to-advanced teams (SRE/DevOps/Platform/IT Ops), plus a practical workflow for building automation that’s safe to run repeatedly, safe to change, and safe to roll back.

Quick take (read this first)

- Avoid “automation theater.” If you can’t explain the goal, blast radius, and rollback, you’re not ready to automate that workflow.

- Design for “safe retries”: idempotent actions, clear state checks, and predictable error handling.

- Ship guardrails by default: input validation, rate limits, timeouts, and a human fallback when conditions look unsafe.

- Treat automation as change management: version control, approvals where needed, and audit logs of what changed and who/what changed it.

Dominant intent (what searchers want)

Most people searching “IT automation mistakes” are not looking for tool comparisons—they want a practical checklist of what goes wrong in production and a concrete method to prevent those failures (especially around change control, security, and reliability).

The mistakes (and what to do instead)

1) Automating the wrong thing (or automating too early)

Mistake: Automating a workflow you don’t fully understand yet, or automating edge-case-heavy work before you’ve stabilized the “happy path.”

Do instead: Start by writing a one-page runbook that a human can follow, then automate that runbook. For a runbook pattern you can standardize across teams, create an internal “production runbooks” page like a production runbook template.

Practitioner note: If you can’t list the top 3 failure modes of the workflow, automation will discover them for you—at the worst possible time.

2) No clear definition of “done” (success criteria are vague)

Mistake: “Automate onboarding” without measurable success: time saved, error rate reduced, fewer tickets, fewer escalations.

Do instead: Pick one outcome metric (e.g., median provisioning time) and one safety metric (e.g., failed-run rate) before you write code. If you want a simple measurement framework, align it to toil-reduction thinking from Google’s guidance on eliminating toil.

3) Treating automation as a script, not as a product

Mistake: A “one-off” script becomes production-critical, but it has no owner, no lifecycle, and no on-call expectations.

Do instead: Assign an owner, a repo, and a release process (even if lightweight). For larger orgs, define a small internal policy page like an automation ownership model so abandoned automations don’t become permanent operational debt.

4) No change management (automation changes go out like ad-hoc edits)

Mistake: Updating automation directly on a server, or merging automation changes without review, testing, and traceability.

Do instead: Treat automation as change management: controlled changes, auditable history, and clear permissions. AWS explicitly frames change management as necessary for reliable operation and calls out automatic logging of changes as an auditing aid in the AWS Well-Architected change management guidance.

Advanced note: When you can, make changes small and reversible (roll-forward is nice; fast rollback is mandatory).

Mistake: Assuming upstream systems always send sane values, or that “only admins will run it.”

Do instead: Validate inputs like a hostile internet user might control them: bounds checks, allowlists, required fields, and “dry run” modes that show intended actions without taking them.

6) No guardrails (automation can trigger outages at scale)

Mistake: Automation that loops aggressively, fans out without limits, or repeatedly performs expensive “read” operations that become costly at scale.

Do instead: Add guardrails: timeouts, rate limits, concurrency limits, and safety checks using live signals (error rates, saturation, dependency health). Google’s SRE guidance warns that even read operations can spike device load at scale and that automation should default to humans if it hits unsafe conditions.

7) Not idempotent (re-runs cause damage)

Mistake: A failed run leaves partial state; rerunning makes it worse (duplicate accounts, duplicate firewall rules, double-billed resources).

Do instead: Design for safe retries: check current state first, apply only the delta, and make “no-op” a normal success path. If your team needs a shared pattern library, create idempotent automation patterns internally and enforce them in code reviews.

8) Poor error handling (failures are silent, unclear, or non-actionable)

Mistake: Catch-all exceptions that hide real failures, or errors that don’t tell the operator what to do next.

Do instead: Use structured error handling, return explicit exit codes, and log enough context to remediate quickly. For PowerShell-heavy environments, follow Microsoft’s official try/catch/finally guidance to handle terminating errors predictably.

9) No baseline config thinking (automation fights drift instead of controlling it)

Mistake: “Our automation sets the config” but there’s no approved baseline, no monitoring, and no controlled change process—so the environment drifts and nobody knows what “correct” is anymore.

Do instead: Establish and manage approved baselines and monitor for unauthorized changes as part of configuration management. NIST describes security-focused configuration management as managing and monitoring configurations to achieve adequate security and minimize organizational risk in NIST SP 800-128.

10) No “checklist” layer (you can’t verify automation outcomes)

Mistake: Automation changes settings, but you don’t have a consistent way to verify the final state (or detect unauthorized changes later).

Do instead: Treat verification as first-class: post-run checks, periodic compliance scans, and “expected state” reports. NIST describes security configuration checklists as instructions/procedures for securely configuring IT products, including verifying configuration and identifying unauthorized changes, in NIST SP 800-70 Rev. 5 (IPD).

11) Concurrency mistakes (two automations fight each other)

Mistake: Two pipeline runs apply infrastructure changes concurrently, or two operators run the same automation against the same target at the same time.

Do instead: Enforce locking and single-writer rules. Terraform state locking is designed to prevent concurrent writes and potential state corruption; if locking fails, Terraform doesn’t continue, per HashiCorp’s Terraform state locking documentation.

12) Supply-chain blind spots (automation depends on unpinned dependencies)

Mistake: CI/CD workflows pull third-party components by mutable tags, so what runs today isn’t guaranteed to be what ran yesterday.

Do instead: Pin and verify dependencies for your automation pipeline. GitHub’s guidance on secure use of Actions states that pinning to a full-length commit SHA is currently the only way to use an action as an immutable release in GitHub’s secure use reference.

If your org is standardizing workflow hardening, create a CI/CD security hardening playbook that covers pinning, reviews for sensitive workflows, and secret exposure pathways.

How to build automation safely (a practical workflow)

Step 1: Define the boundary

- What is the exact trigger (human, ticket, webhook, schedule)?

- What is the target scope (single host, one service, one environment, one account)?

- What must never happen (data loss, public exposure, mass deletion, privilege escalation)?

Step 2: Design the safety model

- Preflight: Validate inputs and permissions; confirm the target exists.

- Guardrails: Timeouts, rate limits, concurrency limits, circuit breakers, and a “stop” switch.

- Fallback: If conditions look unsafe, stop and route to a human with a clear message.

Step 3: Make it idempotent (safe retries)

- Read current state.

- Compute delta.

- Apply changes.

- Verify final state (and record evidence).

Step 4: Build observability and auditability

- Log: who/what triggered the run, what changed, and where.

- Metric: success rate, duration, retries, and rollbacks.

- Traceability: link runs to commits and tickets.

From a governance perspective, automatic logging of changes helps audit and quickly identify actions that might have impacted reliability, as described in the AWS Well-Architected change management guidance.

Step 5: Roll out like you mean it

- Start small: Canary a subset of targets, then expand.

- Prefer reversible changes: Plan rollback (or roll-forward) before the first run.

- Write the “undo” path: If reversal is impossible, add extra approval gates.

AWS highlights that deployments are a major production risk area and encourages automation (including testing and deploying changes) in its guidance on deploying changes with automation.

Decision tree: should you automate this?

START | |-- Does the workflow happen often (weekly+) OR during incidents? | |-- No --> Keep manual; improve documentation/runbook. | |-- Yes | |-- Can you clearly define "success" AND "unsafe" conditions? | |-- No --> Stabilize process; add measurements; then automate. | |-- Yes | |-- Can you make it safe to retry (idempotent) with a bounded blast radius? | |-- No --> Add guardrails/locks/approvals; then automate. | |-- Yes | '--> Automate, ship with preflight + guardrails + rollback + logging.

Implementation checklist (copy/paste for your PR)

- Has an owner and a repo (not “a script on a server”).

- Inputs validated; “dry run” supported for risky actions.

- Idempotent behavior documented (what happens on rerun).

- Concurrency controlled (locks, single-writer rules).

- Guardrails present (timeouts, rate limits, circuit breakers).

- Logs, metrics, and run IDs are emitted; changes are auditable.

- Rollback path defined and tested (or explicit approval gates if not reversible).

- Dependencies pinned and reviewed; CI/CD hardening applied.

Troubleshooting (real-world failure modes)

Problem: “It worked in staging but caused a production incident”

Common causes: missing guardrails, scale effects (read load), hidden dependencies, or assumptions about data shape. Add timeouts/rate limits and use live signals; SRE guidance notes automation needs safeguards and that scale can change the risk profile dramatically.

Problem: “We can’t explain what changed”

Fix: require versioned changes, run IDs, and change logs; align to controlled change management and automatic change logging as described in AWS’s change management guidance.

Problem: “Two runs conflicted and corrupted state”

Fix: enforce locking/single-writer rules; Terraform’s state locking model exists specifically to prevent concurrent state writes and to stop runs if locking fails.

Problem: “The automation fails, and the error is useless”

Fix: make errors actionable (what failed, why, what to do next), and use structured error handling. In PowerShell, ensure you’re handling terminating errors using try/catch/finally patterns described in Microsoft’s documentation.

FAQ

What’s the fastest way to reduce automation risk without slowing delivery?

Start with guardrails (timeouts, rate limits, safe defaults) and add change traceability (who/what/when) before you add more features.

When should we not automate?

Don’t automate workflows with unclear “unsafe conditions,” no rollback, or unclear ownership—until you fix those prerequisites.

How do we keep automation from creating more toil?

Measure the time spent operating the automation itself and ensure it reduces net operational work; toil framing and safeguards are emphasized in Google’s SRE guidance.

Is “configuration drift” always bad?

Not always—sometimes reality changes faster than code—but unmanaged drift makes environments less predictable; treat baselines and monitoring as first-class.

How do we implement configuration baselines in a practical way?

Define a baseline, implement it consistently, monitor deviations, and control changes; NIST’s security-focused configuration management guidance is a strong baseline reference for this program.

Do we need checklists if we already have IaC?

Yes—IaC expresses intent, but you still need verification that deployed systems match the intended secure configuration; NIST describes checklists as including verification and unauthorized-change detection.

What’s a minimum viable CI/CD hardening step for automation pipelines?

Pin third-party components to immutable identifiers; GitHub’s secure use guidance states full-length commit SHA pinning is the way to make an Action immutable.

Automate the evidence: logs, approvals where needed, and a clear history of changes; AWS explicitly calls out automatic logging as an auditing aid.

Key takeaways

- Automation failures are rarely “tool problems”—they’re safety, ownership, and change-management problems.

- Make automation safe to rerun, safe to stop, and safe to explain.

- Build guardrails that assume scale and bad inputs, and default to humans when unsafe.