This article is part of the collection: Teaching Tech: Navigating Learning and AI in the Industrial Revolution.

A little over a decade ago, schools were swept into what many described as a movement to prepare students for the future of work. That work was coding — “Hello, world!”

Districts introduced new courses, nonprofits expanded access to computer science education and a growing ecosystem of programs promised to teach students the skills needed to enter the tech workforce. For many, it felt like a necessary correction to a rapidly digitizing world. But over time, a more complicated picture emerged.

While access to computer science education expanded, the relationship between early coding exposure and long-term workforce outcomes became uneven. The “learn to code” movement raised an important question that still lingers today: Which skills actually endure when technologies change? That question has resurfaced in a new form.

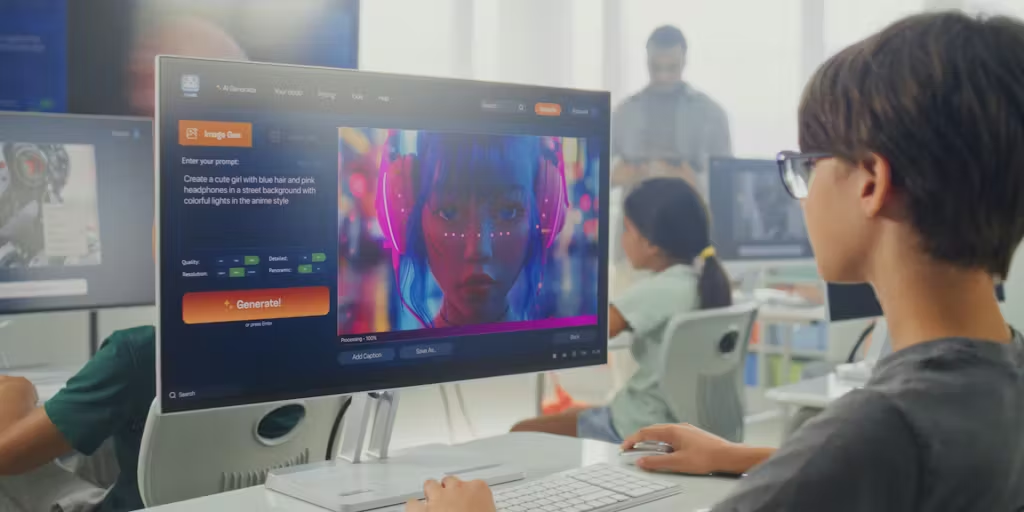

Today, generative AI is driving a similar wave of urgency. Schools are once again being encouraged to adapt quickly, often with the same underlying rationale: teachers must prepare students for a future shaped by emerging technologies.

But if the instructional role of AI remains unclear, and if the tools themselves are likely to evolve rapidly, the more persistent challenge may lie elsewhere.

Over a two-year research project conducted alongside teachers who are adapting to AI and are open to integrating it, we found that uptake is still minimal. Most of our participants, including engineering and computer science teachers, still struggle to identify a clear or universal instructional use case for widespread AI integration.

So, what should students learn to help them adapt to whatever comes next?

A growing body of research suggests that the answer may lie not in teaching students how to use a particular AI system, but in helping them understand the computational ideas that make those systems possible.

The Limits of Teaching the Tool

In recent years, many discussions about AI education have centered on teaching students how to use generative tools effectively. Prompt engineering, for example, has become a common topic in professional development workshops and online tutorials.

Yet focusing heavily on tool-specific skills can create a familiar educational problem: technology changes faster than curricula.

Teaching students how to interact with a specific interface risks becoming the equivalent of teaching to standardized tests, rather than teaching students important lessons that don’t appear on state exams.

The history of computing education offers a useful example. In the early 2010s, a wave of coding initiatives encouraged schools to teach programming skills broadly. While many of those programs expanded access to computer science education, subsequent analysis showed that workforce pipelines in technology remained uneven, and many students learned tool-specific skills without developing deeper computational reasoning abilities.

That experience offers a cautionary lesson for the current AI moment. If the goal of integrating AI into education is long-term preparation for technological change, focusing narrowly on how to use today’s tools may not be the most durable strategy.

The Skill That Outlasts the Tool

Research increasingly points to computational thinking as a more durable educational objective.

Computational thinking refers to a set of problem-solving practices used in computer science and other analytical disciplines. These include:

- breaking complex problems into smaller components

- recognizing patterns

- designing step-by-step processes

- evaluating the outputs of automated systems

These skills apply not only to programming but also to fields ranging from engineering to public policy.

Importantly, they also help students understand how algorithmic systems operate.

When students learn computational thinking, they gain the ability to analyze how technologies like AI produce results rather than simply accepting those results as authoritative.

In this sense, computational thinking provides a conceptual bridge between traditional academic skills and emerging digital systems.
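
As a rough illustration of the four practices listed earlier in this section, here is a minimal Python sketch built around an elementary word problem. Everything in it is an assumption made for illustration: the problem (4 tables, 6 students per table, 3 pencils per student), the function names and the "chatbot answer" are hypothetical and do not come from the study or from any particular classroom.

```python
def total_in_groups(groups: int, size: int) -> int:
    """Recognized pattern: 'N groups of M items' is always N * M."""
    return groups * size


def pencils_needed(tables: int, students_per_table: int, pencils_per_student: int) -> int:
    """Step-by-step process for the whole word problem."""
    # Decomposition: first count the students, then count the pencils.
    students = total_in_groups(tables, students_per_table)
    return total_in_groups(students, pencils_per_student)


def check_claim(claimed: int, tables: int, students_per_table: int, pencils_per_student: int) -> bool:
    """Evaluate an automated answer instead of accepting it as authoritative."""
    return claimed == pencils_needed(tables, students_per_table, pencils_per_student)


if __name__ == "__main__":
    chatbot_answer = 54  # hypothetical, deliberately wrong output
    print(pencils_needed(4, 6, 3))               # 72
    print(check_claim(chatbot_answer, 4, 6, 3))  # False
```

The code itself matters less than the habit it models: the student splits the problem into parts, reuses one pattern for both steps, and checks a machine-generated answer against an independent line of reasoning.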

What Teachers Are Already Doing

Many teachers in our study were already moving in this direction, often without using the term computational thinking.

When teachers asked students to analyze chatbot errors, they were encouraging students to examine how algorithmic systems produce outputs. When they designed exercises comparing training data and algorithms to everyday processes, they were helping students reason about how automated systems work.

These approaches do not require students to rely heavily on AI tools themselves. Instead, they position AI as a case study for examining how technology shapes information.

That framing aligns with longstanding educational goals around critical thinking, media literacy and problem-solving.

Implications for Educators

If the instructional use case for generative AI remains uncertain, educators may benefit from focusing on skills that remain valuable regardless of which tools dominate in the future.

Several practical approaches are already emerging in classrooms. Teachers can use AI systems as objects of analysis, asking students to evaluate outputs, identify errors and investigate how models generate responses.

Lessons can connect AI to broader topics such as data quality, algorithmic bias and information reliability.

Assignments that emphasize reasoning, structured problem solving and evidence evaluation continue to support the kinds of cognitive work that remain central to learning.

These approaches allow students to engage with AI without allowing the technology to replace the thinking process itself.

Implications for EdTech Developers

The experiences teachers described also highlight an opportunity for edtech companies.

Many current AI tools were developed as general-purpose language systems and later introduced into education contexts. As a result, teachers are often left to determine whether and how those tools align with classroom learning goals. Future products may benefit from deeper collaboration with educators during the design process.

Teachers in our conversations were already experimenting with small classroom applications, designing AI literacy lessons and building course-specific chatbots.

These experiments resemble early-stage product development.

Partnerships between educators, edtech developers and product managers could help identify instructional problems that AI systems could realistically address.

The Next Phase of the Research

The conversations described in this series represent an early attempt to document how teachers are navigating the arrival of generative AI.

As schools continue experimenting with these tools, the next challenge will be to develop governance frameworks that help educators evaluate when and how AI should be used in learning environments.

Our research team is beginning the next phase of this work by partnering with school districts to develop guidance for AI governance and by inviting edtech companies to explore these questions collaboratively.

Rather than assuming that AI will inevitably transform classrooms, this phase of the project will focus on identifying the conditions under which AI tools actually support teaching and learning, and on how to reduce harm when they don’t.

The fourth-grade teacher’s question remains a useful guide: What can I actually use this for in math?

Until the answer becomes clearer, many teachers will likely continue doing what professionals in any field do when new technologies appear: experimenting cautiously, adopting what works and relying on their judgment to decide whether and where the tool belongs.

If your school, district, organization, or edtech company is interested in learning more about joining our next project on AI governance, contact our research team at research@edsurge.com.
