Stephen D Turner of the University of Virginia explores the importance of governance and oversight around AI in the design and execution of lab experiments.

Artificial intelligence is rapidly learning to autonomously design and run biological experiments, but the systems intended to govern those capabilities are struggling to keep pace.

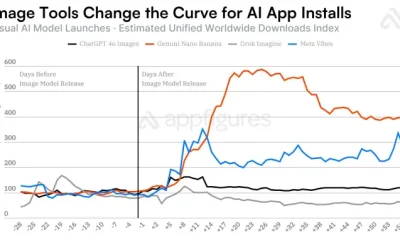

AI company OpenAI and biotech company Ginkgo Bioworks announced in February 2026 that OpenAI’s flagship model GPT-5 had autonomously designed and run 36,000 biological experiments. It did this through a robotic cloud laboratory, a facility where automated equipment controlled remotely by computers carries out experiments. The AI model proposed study designs, and robots carried them out and fed the data back to the model for the next round. Humans set the goal, and the machines did much of the work in the lab, cutting the cost of producing a desired protein by 40pc.

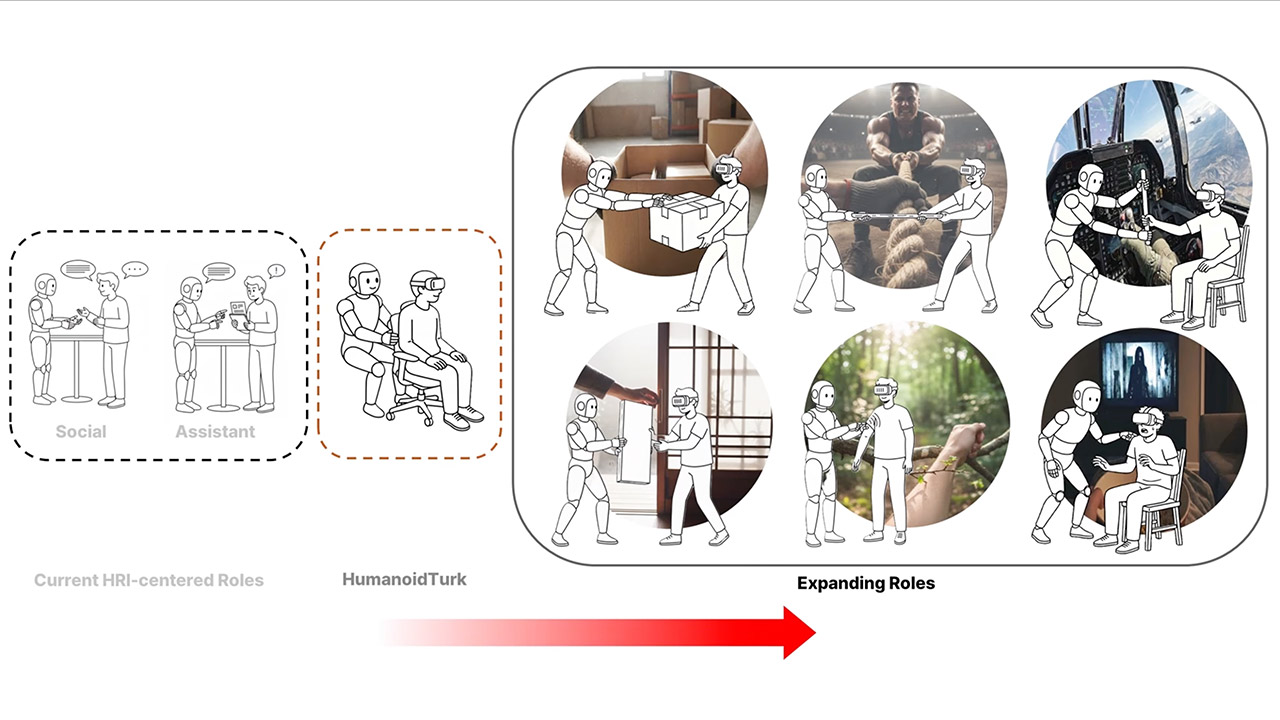

This is programmable biology: designing biological components on a computer and building them in the physical world, with AI closing the loop.

For decades, biology mostly moved from observation toward understanding. Scientists sequenced the genomes of organisms to catalogue all of their DNA, learning how genes encode the proteins that carry out life’s functions. The invention of tools like CRISPR then allowed scientists to edit that DNA for specific purposes, such as disabling a gene linked to disease. AI is now accelerating a third phase, where computers can both design biological systems and rapidly test them.

The process looks less like traditional benchwork in a lab and more like engineering: design, build, test, learn and repeat. Where a traditional experiment might test a single hypothesis, AI-driven programmable biology explores thousands of design variations in parallel, iterating the way an engineer refines a prototype.
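To make the design, build, test, learn cycle concrete, the loop can be sketched as a toy program. This is a minimal illustration, not a description of any real lab's software: the function names (`propose_variants`, `toy_assay`, `dbtl_loop`), the hidden target string and the scoring rule are all hypothetical stand-ins. In a real system the scoring would come from a physical experiment, not a string comparison.

```python
import random

ALPHABET = "ACDEFGHIKLMNPQRSTVWY"  # one-letter codes for the 20 standard amino acids


def toy_assay(design, target):
    """Hypothetical stand-in for 'build' and 'test': count positions matching a hidden target."""
    return sum(a == b for a, b in zip(design, target))


def propose_variants(parent, n, rng):
    """'Design' step: create n variants of the parent, each with one position resampled."""
    variants = []
    for _ in range(n):
        pos = rng.randrange(len(parent))
        letter = rng.choice(ALPHABET)
        variants.append(parent[:pos] + letter + parent[pos + 1:])
    return variants


def dbtl_loop(start, target, rounds=50, batch_size=100, seed=0):
    """Repeat design -> build/test -> learn, keeping the best design seen so far."""
    rng = random.Random(seed)
    best, best_score = start, toy_assay(start, target)
    history = [best_score]
    for _ in range(rounds):
        batch = propose_variants(best, batch_size, rng)       # design
        scored = [(toy_assay(v, target), v) for v in batch]   # build + test (simulated)
        top_score, top = max(scored)
        if top_score > best_score:                            # learn: adopt any improvement
            best, best_score = top, top_score
        history.append(best_score)
    return best, best_score, history


if __name__ == "__main__":
    best, score, history = dbtl_loop("AAAAAAAAAA", "MKTAYIAKQR", rounds=20, batch_size=50)
    print(best, score, history)
```

Each round tests a whole batch of variations in parallel and carries the best result forward, which is the sense in which the process resembles an engineer refining a prototype.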

As a data scientist who studies genomics and biosecurity, I research how AI is reshaping biological research and what safeguards that demands. Current safety measures and regulations have not kept pace with these capabilities, and the gap between what AI can do in biology and what governance systems are prepared to handle is growing.

What AI makes possible

The clearest example of how researchers are using AI to automate research is AI-accelerated protein design.

Proteins are the molecular machines that carry out most functions in living cells. Designing new ones has traditionally required years of trial and error because even small changes to a protein’s sequence can alter its shape and function in unpredictable ways.

Protein language models, which are AI systems trained on millions of natural protein sequences, can quickly predict how mutations will change a protein’s behavior or design new proteins. These AI models are designing potential new drugs and speeding vaccine development.

Paired with automated labs, these models create tight loops of experimentation and revision, testing thousands of variations in days rather than the months or years a human team would need.

Faster protein engineering could mean faster responses to emerging infections and cheaper drugs.

The dual-use problem

Researchers have raised concerns that these same AI tools could be misused, a challenge known as the dual-use problem: technologies developed for beneficial purposes can also be repurposed to cause harm.

For example, researchers have found that AI models integrated with automated labs can optimise how well a virus spreads, even without specialised training. Scientists have developed a risk-scoring tool to evaluate how AI could modify a virus’s capabilities, such as altering which species it infects or helping it evade the immune system.

Current AI models are able to walk users through the technical steps of recovering live viruses from synthetic DNA. Researchers have determined that AI could lower barriers at multiple stages in the process of developing a bioweapon, and that current oversight does not adequately address this risk.

Risk from bio AI

Experienced scientists are already using AI to plan and design biological experiments. The question of whether AI can help people with limited biology training carry out dangerous lab work is the subject of active research.

Two recent studies have reached different conclusions.

A study by AI company Scale AI and biosecurity nonprofit SecureBio looked at people with limited biology experience who were given access to large language models, the type of AI behind tools like ChatGPT. These novices completed biosecurity-related tasks, such as troubleshooting complex virology lab protocols, with four times greater accuracy. In some areas, they outperformed trained experts. Around 90pc of them reported little difficulty getting the models to provide risky biological information, such as detailed instructions on working with dangerous pathogens, despite built-in safety filters meant to block such outputs.

In contrast, a study led by Active Site, a research nonprofit that studies the use of AI in synthetic biology, found that AI help did not lead to significant differences in the ability of novices to complete the complex workflow to produce a virus in a biosafety laboratory. However, the AI-assisted group succeeded more often on most tasks and finished some steps faster, most notably on growing cells in the lab.

Hands-on work in the lab has traditionally been a bottleneck to translating designs into results. Even a brilliant study plan still depends on skilled human hands to carry it out. That may not last, as cloud laboratories and robotic automation become cheaper and more accessible, allowing researchers to send AI-generated experimental designs to remote facilities for execution.

Responding to AI-driven biological risks

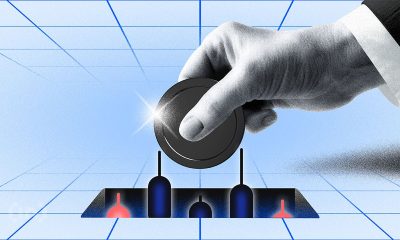

AI systems are now able to run experiments autonomously and at scale, but existing regulations were not designed for this. Rules governing biological research do not account for AI-driven automation, and rules governing AI do not specifically address its use in biology.

In the US, the Biden administration issued a 2023 executive order on AI security that included biosecurity provisions, but the Trump administration revoked it. Screening of the synthetic DNA that commercial providers make, to ensure it cannot be misused to make pathogens or toxins, remains mostly voluntary. A bipartisan bill introduced in 2026 to mandate DNA screening does not yet address AI-designed sequences that evade current detection methods.

The 1975 Biological Weapons Convention, an international treaty prohibiting the production and use of bioweapons, contains no provisions for AI. The UK AI Security Institute and the US National Security Commission on Emerging Biotechnology have both called for coordinated government action.

The safety evaluations that AI labs run before releasing new models are often opaque and ill-suited to capturing real-world risk. Researchers have estimated that even modest improvements in an AI model’s ability to help plan pathogen-related experiments could translate to thousands of additional deaths from bioterrorism per year. Timelines for when these capabilities cross critical thresholds remain unclear.

The Nuclear Threat Initiative has proposed a managed access framework for biological AI tools, matching who can use a given tool to the risk level of the model rather than imposing blanket restrictions. The RAND Center on AI, Security and Technology outlined a set of actions researchers could take to improve biosecurity, including improved DNA synthesis screening and model evaluations before release. Researchers have also argued that biological data itself needs governance, especially genomic data that could train models with dangerous capabilities.

Some AI companies have started voluntarily imposing their own safety measures. Anthropic activated its highest safety tier when it released its most advanced model in mid-2025. Around the same time, OpenAI updated its Preparedness Framework, revising the thresholds for how much biological risk a model can pose before additional safeguards are required. But these are voluntary, company-specific steps. Anthropic’s CEO, Dario Amodei, wrote that the pace of AI development may soon outrun any single company’s ability to assess the risk of a given model.

When used in a well-controlled setting, AI can help scientists quickly reach their research goals. What happens when the same capabilities operate outside those controls is a question that policy has not yet answered. Overreact, and talent and investment may move elsewhere while the technology continues advancing anyway. Underreact, and the risks of that technology could be exploited to cause real harm.


Stephen D Turner is an associate professor of data science and an assistant dean for research at the University of Virginia School of Data Science. He has worked on biosecurity applications in national security and writes about AI, biosecurity and other topics.
