Tech

Fan fiction website AO3 is finally coming out of beta

The famous fan fiction website Archive of Our Own (AO3) has finally exited open beta, 17 years after it launched in 2009. AO3 is a nonprofit created by the Organization for Transformative Works. In an announcement, the team reminisced about its early days, when volunteers had to manually send out invitations to prospective writers. When the website entered open beta, it had only 347 accounts and hosted 6,598 works. Now it has 10 million registered users and hosts 17 million fan-created works.

The team has highlighted some of the most useful features it has added over the past 17 years, including its tagging system. It also mentioned a feature it calls “Orphaning,” which allows authors to leave their works online even after deleting their account. In addition, it added the ability to download fanworks in AZW3, EPUB, MOBI, PDF or HTML format for offline access.

Even though the website has only just exited open beta, it has been stable for a long time. Users will not see huge changes, but the team also promised that it will not stop improving the fan fiction portal. It says its contributors and volunteers will continue tweaking the website, and it also continues to welcome anybody who has coding knowledge to contribute their time.

Tech

OpenAI Acquires Popular Tech-Industry Talk Show TBPN

OpenAI is acquiring tech news podcast TBPN, a fast-growing daily show hosted by John Coogan and Jordi Hays. OpenAI says TBPN will keep its editorial independence, even though the acquisition is widely viewed as part of a broader effort to influence public discourse around AI. CNBC reports: In the announcement, OpenAI CEO of AGI Deployment Fidji Simo wrote that their mission of bringing artificial general intelligence comes with a responsibility to have a space for “constructive conversation about the changes AI creates.” Altman has appeared on TBPN multiple times and is a frequent presence across media and podcasts, even hitting NBC’s “Tonight Show Starring Jimmy Fallon” in December.

The announcement says TBPN will maintain editorial independence and continue to choose its own guests. “TBPN is my favorite tech show. We want them to keep that going and for them to do what they do so well,” Altman wrote in a post on X. “I don’t expect them to go any easier on us, am sure I’ll do my part to help enable that with occasional stupid decisions.” OpenAI did not disclose the terms of the deal but said TBPN will be housed within its strategy organization. “While we’ve been critical of the industry at times, after getting to know Sam and the OpenAI team, what stood out most was their openness to feedback and commitment to getting this right,” wrote Hays in a statement. “Moving from commentary to real impact in how this technology is distributed and understood globally is incredibly important to us.”

Tech

Evolution of Ransomware: Multi-Extortion Ransomware Attacks

Ransomware’s Real-World Impact Across Industries

In February 2026, the University of Mississippi Medical Center (UMMC) fell victim to a ransomware attack. The incident took the Epic electronic health record system offline across 35 clinics and more than 200 telehealth sites, forcing the cancellation of chemotherapy appointments and the postponement of non-emergency surgeries. Medical staff were required to revert to paper-based workflows, leaving countless patients to bear the consequences.

UMMC is far from an isolated case. According to recent data, 93% of U.S. healthcare organizations experienced at least one cyberattack in 2025, and 72% of respondents reported that at least one incident directly disrupted patient care.

The manufacturing and financial sectors are equally exposed. In February 2026, payment processing network BridgePay suffered a ransomware attack that took its APIs, virtual terminals, and payment pages completely offline. Across all industries, publicly disclosed ransomware attacks surged 49% year-over-year in 2025, reaching 1,174 confirmed incidents.

As hospitals halt treatments, financial institutions freeze transactions, and manufacturers shut down production lines, ransomware has firmly established itself as a direct business risk with tangible operational consequences.

The Evolution of Ransomware: Double Extortion

Early ransomware operated on a straightforward premise: infiltrate a system, encrypt files, and demand payment in exchange for the decryption key. As organizations began countering this tactic by restoring from backups rather than paying ransoms, threat actors responded by developing a more lucrative model — double extortion.

In a double extortion attack, adversaries first exfiltrate sensitive files — such as patient records and billing data — before encrypting the target system. Victims are then pressured on two fronts: pay to receive the decryption key, or face public exposure of the stolen data.

Backups alone are insufficient against this model. Since attackers already possess the data, refusing to pay the ransom can result in the public release of sensitive files, exposing organizations to significant business losses and regulatory consequences.

The threat landscape has continued to escalate, with triple extortion cases on the rise — a tactic in which attackers directly contact a victim organization’s customers or partners to apply additional pressure.

As of 2025, 124 active ransomware groups have been identified, 73 of which are newly emerged.

The proliferation of AI-powered tools has lowered the barrier to entry for cybercrime, making ransomware capabilities increasingly accessible to less sophisticated actors.

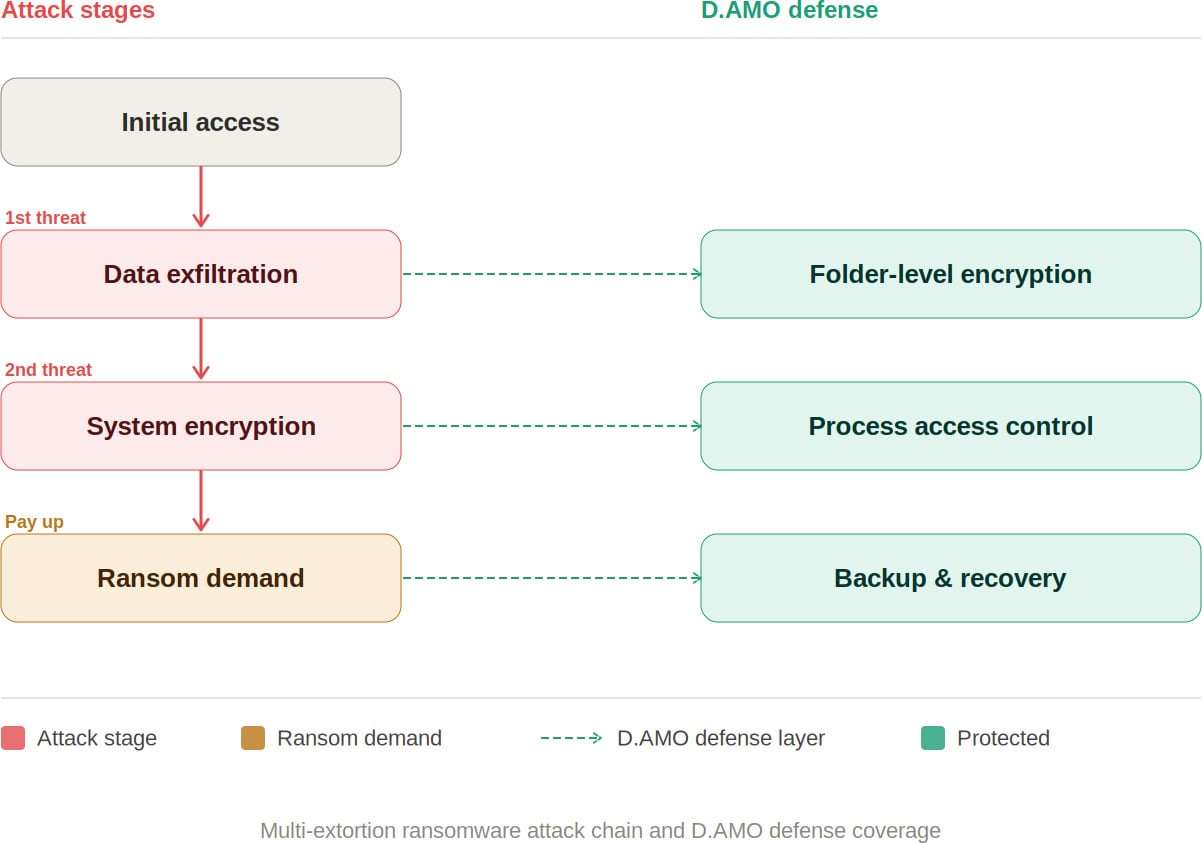

D.AMO makes stolen data unreadable.

A Defense Architecture for Multi-Extortion Threats

The rise of multi-extortion ransomware fundamentally changes the assumptions underlying traditional defense strategies. Perimeter-based prevention alone is no longer sufficient.

Organizations need a security posture that protects data from being weaponized after a breach — rendering exfiltrated data unreadable, blocking ransomware from accessing files in the first place, and enabling rapid recovery even when an attack succeeds.

D.AMO: Blocking Every Stage of a Ransomware Attack

D.AMO, developed by Penta Security, is an encryption-based data protection platform designed to address every phase of a multi-extortion ransomware attack. It delivers integrated encryption, access control, and backup recovery across on-premises and cloud environments.

By applying file encryption and process-based access control technologies, D.AMO protects critical data stored on servers and PCs — safeguarding sensitive information against malicious programs through robust access enforcement. D.AMO’s key capabilities are as follows:

Folder-Level File Encryption

D.AMO KE encrypts all files within administrator-designated folders at the OS level. Deployable via an installer without source code modification, it operates using kernel-level encryption technology, enabling fast and secure encryption on existing systems with no disruption to the user experience.

Encryption policies are applied at the folder level, ensuring consistent protection with minimal operational overhead. Critically, even if an attacker exfiltrates sensitive data, the files remain encrypted — neutralizing the data exposure threat that is central to double extortion.

Access Control

D.AMO KE enforces strict access control over processes and OS users, permitting only explicitly authorized access. Ransomware and other malicious applications are automatically blocked from accessing encrypted folders, preventing unauthorized file manipulation.

All blocked activity is recorded through an audit log function and can be reviewed centrally via D.AMO Control Center.
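The allow-list model described above can be illustrated with a short sketch. This is not D.AMO's API or implementation; the class names, the `(process, user)` pairing, and the example paths are hypothetical, chosen only to show how "permit only explicitly authorized access, log everything else" might look in code.

```python
from dataclasses import dataclass, field
from pathlib import PurePosixPath


@dataclass
class FolderPolicy:
    """Hypothetical policy: which (process, OS user) pairs may touch a folder."""
    folder: str
    allowed: set


@dataclass
class AccessController:
    policies: list
    audit_log: list = field(default_factory=list)

    def check(self, process: str, user: str, path: str) -> bool:
        target = PurePosixPath(path)
        for policy in self.policies:
            protected = PurePosixPath(policy.folder)
            # The path is covered if it is the folder itself or lies beneath it.
            if protected in (target, *target.parents):
                if (process, user) in policy.allowed:
                    return True
                # Default-deny: anything not explicitly listed is blocked and logged.
                self.audit_log.append(
                    {"process": process, "user": user, "path": path, "action": "blocked"}
                )
                return False
        # Paths outside every protected folder are not governed by this sketch.
        return True


controller = AccessController(
    [FolderPolicy("/srv/records", {("ehr_service", "ehr")})]
)
print(controller.check("ehr_service", "ehr", "/srv/records/patients.db"))
print(controller.check("unknown.bin", "www", "/srv/records/patients.db"))
print(controller.audit_log)
```

The design point is the default: a process that is not on the list is denied without needing to be recognized as malicious first, and each denial leaves a record for central review.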

Backup and Recovery

Even in the event of a successful attack, organizations can resume operations through an independently managed recovery system. With D.AMO in place, the ability to restore from backup significantly reduces dependence on decryption key negotiations with threat actors.

As multi-extortion tactics become the norm, neutralizing the data attackers seek to exploit has become a strategic priority. Organizations need the ability to render exfiltrated data unreadable, prevent ransomware from accessing files, and recover rapidly when incidents occur.

D.AMO addresses each stage of a ransomware attack within a single integrated platform — combining encryption, process-based access control, and backup recovery into a unified line of defense.

Sponsored and written by Penta Security.

Tech

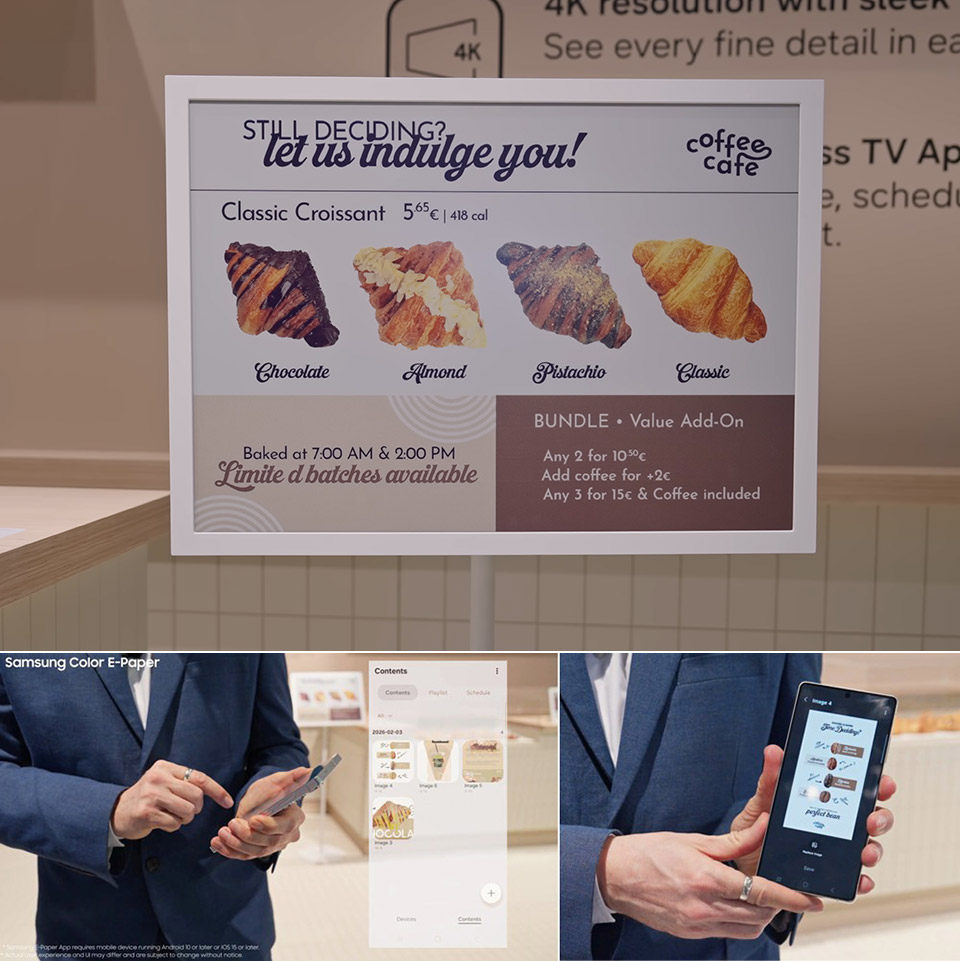

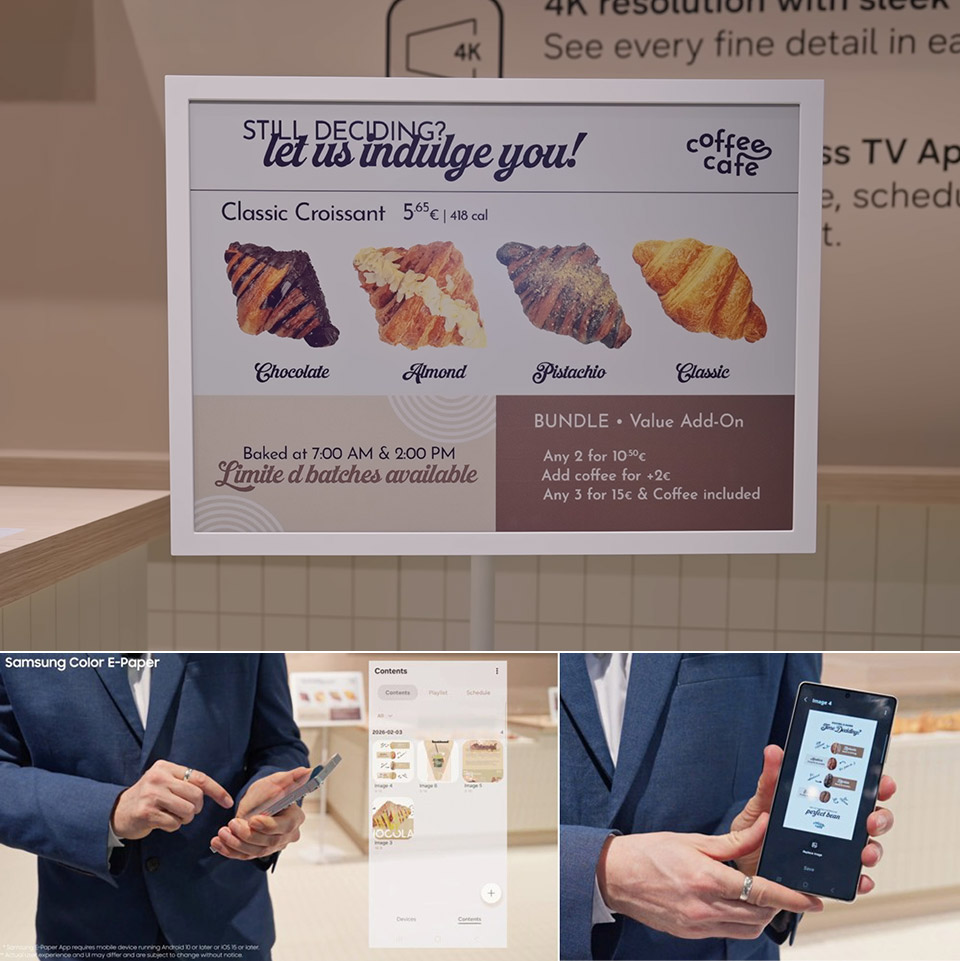

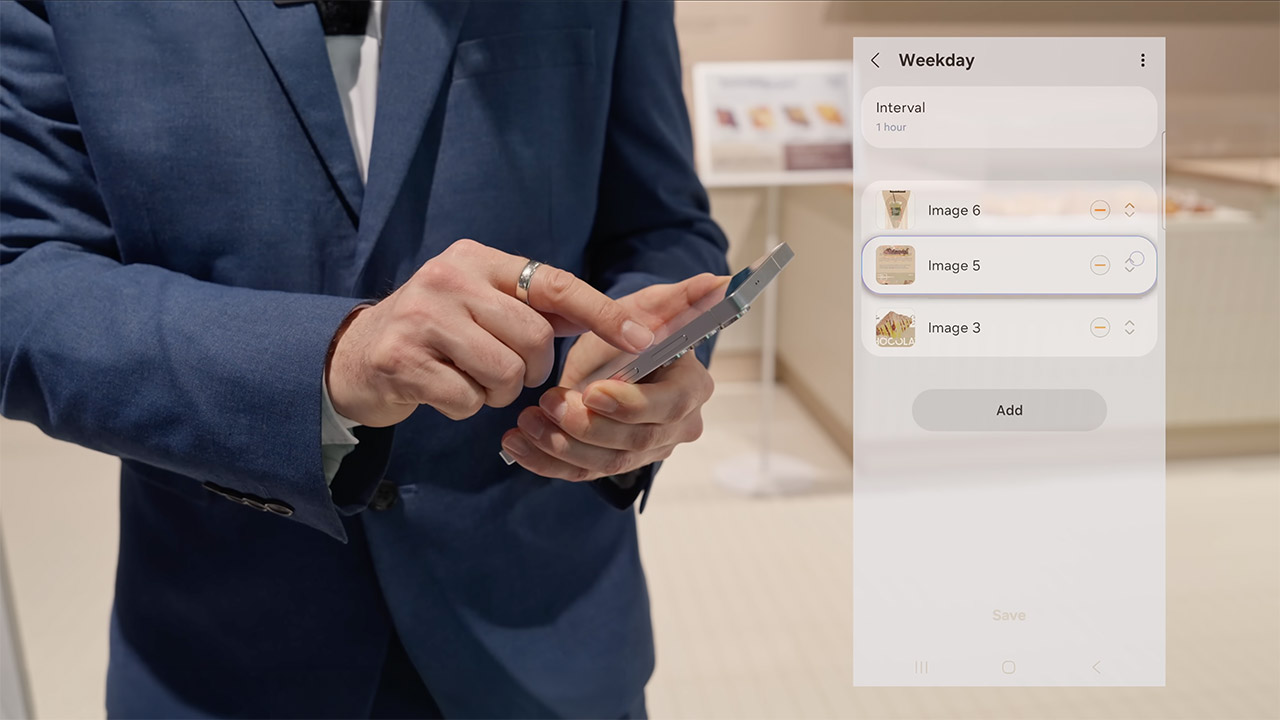

Samsung’s Color E-Paper Gives Retailers a Simple Way to Refresh Every Sign on the Spot

Retail locations have always employed posters and signage to capture the attention of customers, but changing them out on a daily basis is a time-consuming task for workers. Samsung has developed a solution: displays that appear like printed paper but feature digital flexibility. These are known as Color E-Paper and are available in a variety of sizes to meet your needs, including a 13-inch variant that is nearly identical to an A4 sheet, a 20-inch version that matches an A3, and a 32-inch choice if you have a large wall or window to fill.

Each of these display units is extremely thin (under 18mm) and light enough to hang almost anywhere. The 13-inch version may be hung on a door, slid onto a counter, or even fixed to a shelf without the need for additional hardware; simply use the brackets and stands that come with it. Inside each one are millions of very small receptacles, or tiny cups, filled with colored ink particles in red, yellow, white, and blue. Electric signals just nudge the appropriate ones to the top, forming the image or words you wish to view.

Samsung engineers have devised a technique to mix and match those four colors to create 2.5 million different shades, almost as lovely as the real thing. Much like a real printed page, the display consumes no electricity once the image is up. The only time it requires power is when you change the content, and a full battery charge will last for weeks or even months, depending on how frequently you update it.

To get a fresh image on the screen, use the mobile app, which is available for most smartphones running the latest Android or iOS versions. Launch the app, select a layout, preview how the colors will appear, and send it to the display. If you’re part of a large chain with multiple stores, Samsung has a cloud platform that can manage everything remotely and link with any other screens you already have in place. No more ladders, no more heaps of paper to print, and no more waiting for the next delivery truck; it seems like a dream come true.

A handful of the models’ housings blend crushed recycled plastic with a special plant-based glue derived from microscopic particles that float on water, and the packaging is made entirely of paper rather than plastic. By selecting these options, retailers may reduce their environmental impact without sacrificing durability or the feel of real paper, which draws customers in. Retailers that have begun testing the displays have raved about the results on shelves, café walls, and franchise shop entrances. You can swap out a menu board in a diner to go from breakfast to lunch in two seconds flat, highlight a flash sale in every single clothing store at the exact same time, and do the same thing for class schedules or seasonal notices in community areas without messing around.

Samsung plans to release the 20-inch models in the second half of 2026, but the smaller and larger sizes are now available in a few locations, and the early versions appear to be working properly in real stores as we speak. In the future, you may see updates that allow you to choose from different colors or batteries that last longer. For those tired of the never-ending cycle of printing, laminating, and continuously replacing signs, these displays quietly get the job done one gorgeous, low power panel at a time.

Tech

Apple's iPad is still showing the world how to do tablets, 16 years later

The iPad was mocked at launch, has been threatened by rivals throughout, and yet still remains the best-selling tablet ever made, 16 years after it first shipped to customers on April 3, 2010.

It’s easy to name alternatives to the iPad; you could be here all day listing myriad Android tablets. But it’s impossible to name even one true iPad competitor.

For after all of these years since it launched, and after all of the rival devices that have launched after that moment, there isn’t any one tablet that sells enough on its own to compete with the iPad. Its competition is the mass of cheaper rivals, which is not to be ignored, yet none of them have come close to the success of the iPad.

The closest is surely the Microsoft Surface, but if that’s the best and the best-known rival, it doesn’t appear to be doing all that well.

Tech

Python Blood Could Hold the Secret To Healthy Weight Loss

Longtime Slashdot reader fahrbot-bot writes: CU Boulder researchers are reporting that they have discovered an appetite-suppressing compound in python blood that helps the snakes consume enormous meals and go months without eating yet remain metabolically healthy. The findings were published in the journal Nature Metabolism on March 19, 2026.

Pythons can grow as big as a telephone pole, swallow an antelope whole, and go months or even years without eating, all while maintaining a healthy heart and plenty of muscle mass. In the hours after they eat, research has shown, their heart expands 25% and their metabolism speeds up 4,000-fold to help them digest their meal. The team measured blood samples from ball pythons and Burmese pythons, fed once every 28 days, immediately after they ate a meal. In all, they found 208 metabolites that increased significantly after the pythons ate. One molecule, called para-tyramine-O-sulfate (pTOS), soared 1,000-fold.

Further studies, done with Baylor University researchers, showed that when they gave high doses of pTOS to obese or lean mice, it acted on the hypothalamus, the appetite center of the brain, prompting weight loss without causing gastrointestinal problems, muscle loss or declines in energy. The study found that pTOS, which is produced by the snake’s gut bacteria, is not present in mice naturally. It is present in human urine at low levels and does increase somewhat after a meal. But because most research is done in mice or rats, pTOS has been overlooked. “We’ve basically discovered an appetite suppressant that works in mice without some of the side-effects that GLP-1 drugs have,” said senior author Leslie Leinwand, a distinguished professor of Molecular, Cellular and Developmental Biology who has been studying pythons in her lab for two decades. Drugs like Ozempic and Wegovy act on the hormone glucagon-like peptide-1 (GLP-1).

Tech

The Best Samsung Galaxy S26 Cases (2026): S26, S26+, and S26 Ultra

Other Cases to Consider

Photograph: Louryn Strampe

Spigen Tough Armor and Nano Pop MagFit Cases: These affordable cases both look and perform well for the price. The Nano Pop case was just a little too slippery for me, and the Tough Armor case kickstand was flimsier than I’d have liked. But if none of our other recommendations tickle your fancy, these options aren’t the worst.

Dbrand Tank Case for $60: This case looks very tactical. If that’s the look you’re after, it’s worth considering. For me, I found the back textures to be a little overstimulating and unpleasant. I wasn’t ever able to forget about my phone case. The buttons are swappable, and there are camera covers to help ensure a cohesive aesthetic. The case is durable and sturdy, and it makes it easy to get a good grip on the phone. It just comes down to the kind of design you prefer.

Poetic Spartan, Revolution, and Guardian Cases: I thought all three of these cases were just fine. I liked the Revolution’s built-in camera privacy cover, which also helped to protect the large camera array from bumps and bruises. But I wasn’t a fan of the rest of the design—while the built-in kickstand is a neat feature, the entirety of the case was too bulky for my preferences. The Guardian was the thinnest, and I liked it for the most part although the black grippy edges were a little bulkier than I wanted them to be. I didn’t like the Spartan case’s built-in metal ring, tactical design, or rigid bumper corners. Overall, the Poetic cases I tried had appealing prices but their designs weren’t my favorite. All three of these cases come with screen protectors, which work just fine (though you’ll have to install them the old-fashioned way).

More Good Screen Protectors

Spigen AluminaCore Screen Protectors (2-pack) for $19: Installation was easy, with a foolproof frame and a peel-off sticker that leaves the protector exactly where you want it. I had some initial issues with bubbles (that I was able to remove with the included squeegee) which is why these aren’t my top pick. I do like that you get two in case of issues with installation, or as a replacement when you inevitably crack the first one.

Cases to Avoid

Samsung Slim Magnet Case for $70: If this case cost $20, then sure. But it’s $70 for an exceedingly thin plastic shell with a ring of magnets built in. The build feels flimsy, and the case feels slippery too. It’s almost easier to grip the phone with no protection rather than to hold it with this case on it. There are simply too many other options on the market for this one to be worthy of a recommendation.

Tech

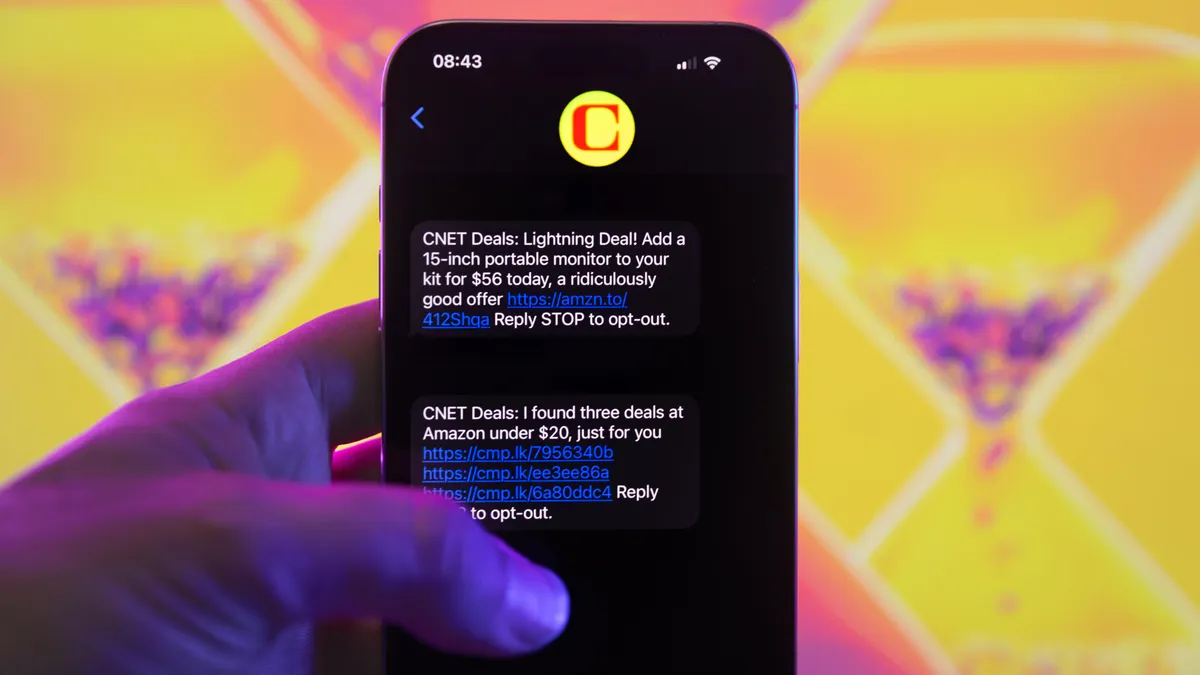

Let Us Text You the Very Best Deals Directly to Your Phone

Even though the Amazon Big Spring Sale has come to a close, that’s no reason to pay full price for the devices and gadgets you want. There are still some exceptional savings to be had with deals popping up left and right. Whether you’re picking up a new laptop or shopping for kitchen essentials, retailers regularly run sales that slash the amount you have to pay. But we know that it can be a real chore finding the best prices out there, and finding the time to trawl the web for the right deal isn’t always in the cards.

Well, luckily for you, it’s our job to search through all the sales. CNET’s shopping experts focus on finding deals that genuinely save you money. We know how to avoid the ones padded by inflated list prices or clever wording. If the discount isn’t real or the product isn’t worth owning, it doesn’t make the cut.

The team and I continually track and handpick the best offers from your favorite retailers, including Amazon and Walmart, as well as others, for our CNET Deals text subscribers. I’ll text the best sales at no cost straight to your phone, so you can keep an eye on the hottest drops and jump on them before everyone else does. It is never a bad time to save money, but with recent holiday expenses behind us, finding affordable items in early 2026 is more welcome than ever.

Why go through the effort of sifting through sales on your own when we can do it for you? Signing up for the CNET Deals text group (just scroll down) takes less than a minute. It is safe and trusted, plus you can opt out anytime. And the best part: It is free. The service costs nothing, and you’ll save money on products you love.

More about our deals text curation

This is the good stuff, not just “discounts” on items that were artificially inflated last week. We vet every deal to ensure the price is accurate and that the product is in stock when we send the text.

We send out a major Deal of the Day most days. During big shopping events, we send two texts a day on standout sales. If we find multiple deals at an ultra-low price, we hook you up in a single text.

My team and I apply the same care that we do across all of CNET, just in a bite‑size format. With daily deals texting, you will receive the same level of deep research and the same confirmation that these discounts are legitimate. And there is no AI pulling the strings. Real people are behind these texts and the research that has gone into finding the best deals.

We are a passionate and dedicated group of bargain hunters. So when we uncover something interesting for an affordable price, usually less than $50 with a significant discount, you will hear about it. If we find a cool thing on sale, we share that discovery. It is as simple as that. Join us today.

Tech

How Apple keeps redefining personal computing at 50

For a 50-year-old company, Apple remains pretty hip and nimble. This week, Devindra and Senior Reporter Igor Bonifacic dive into Apple’s big birthday, the state of the company today and what the next 50 years could bring. It remains one of the few PC companies that’s still firmly committed to the idea of personal computing. Also, we celebrate the successful launch of NASA’s Artemis II mission, which will bring us back to the Moon (but just for a close look).

Topic

- Apple at 50: Why it’s still all about personal computing – 1:16
- Artemis II is safely on its way to the moon, but they’re having problems with Outlook – 37:48
- SpaceX files for the largest IPO ever, what’s driving their hopes for a 1.75 Trillion valuation? – 40:52
- Another Starlink satellite broke up in orbit, the second in 6 months – 47:21
- Anthropic accidentally leaked source code for Claude Code – 52:17
- FCC issues ban on all foreign-made WiFi routers – 57:18
- Around Engadget – 1:02:09
- Pop culture picks – 1:08:20

Credits

Hosts: Devindra Hardawar and Igor Bonifacic

Producer: Ben Ellman

Music: Dale North and Terrence O’Brien

Tech

Arcee’s new, open source Trinity-Large-Thinking is the rare, powerful U.S.-made AI model that enterprises can download and customize

The baton of open source AI models has been passed on between several companies over the years since ChatGPT debuted in late 2022, from Meta with its Llama family to Chinese labs like Qwen and z.ai. But lately, Chinese companies have started pivoting back towards proprietary models even as some U.S. labs like Cursor and Nvidia release their own variants of the Chinese models, leaving a question mark about who will originate this branch of technology going forward.

One answer: Arcee, a San Francisco-based lab, which this week released Trinity-Large-Thinking, a 399-billion-parameter text-only reasoning model under the uncompromisingly open Apache 2.0 license, which allows full customization and commercial use by anyone from indie developers to large enterprises.

The release represents more than just a new set of weights on AI code sharing community Hugging Face; it is a strategic bet that “American Open Weights” can provide a sovereign alternative to the increasingly closed or restricted frontier models of 2025.

This move arrives precisely as enterprises express growing discomfort with relying on Chinese-based architectures for critical infrastructure, creating a demand for a domestic champion that Arcee intends to fill.

As Clément Delangue, co-founder and CEO of Hugging Face, told VentureBeat in a direct message on X: “The strength of the US has always been its startups so maybe they’re the ones we should count on to lead in open-source AI. Arcee shows that it’s possible!”

Genesis of a 30-person frontier lab

To understand the weight of the Trinity release, one must understand the lab that built it. Based in San Francisco, Arcee AI is a lean team of only 30 people.

While competitors like OpenAI and Google operate with thousands of engineers and multibillion-dollar compute budgets, Arcee has defined itself through what CTO Lucas Atkins calls “engineering through constraint”.

The company first made waves in 2024 after securing a $24 million Series A led by Emergence Capital, bringing its total capital to just under $50 million. In early 2026, the team took a massive risk: they committed $20 million—nearly half their total funding—to a single 33-day training run for Trinity Large.

Utilizing a cluster of 2048 NVIDIA B300 Blackwell GPUs, which provided twice the speed of the previous Hopper generation, Arcee bet the company’s future on the belief that developers needed a frontier model they could truly own.
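The figures above allow a rough back-of-the-envelope check of what the run implies per GPU-hour. This is a minimal sketch, assuming all 2,048 GPUs ran for the full 33 days and that the entire $20 million went to compute; the article confirms neither assumption, so the result is an upper-level estimate rather than a reported price.

```python
GPUS = 2048              # cluster size quoted in the article
DAYS = 33                # training run length quoted in the article
BUDGET_USD = 20_000_000  # amount committed to the run


def gpu_hours(gpus: int, days: int) -> int:
    """Total GPU-hours if every GPU runs around the clock."""
    return gpus * days * 24


def implied_cost_per_gpu_hour(budget_usd: float, gpus: int, days: int) -> float:
    """Budget divided by GPU-hours; assumes the whole budget is compute."""
    return budget_usd / gpu_hours(gpus, days)


print(gpu_hours(GPUS, DAYS))                                        # 1622016
print(round(implied_cost_per_gpu_hour(BUDGET_USD, GPUS, DAYS), 2))  # 12.33
```

If part of the budget covered data, storage, or staff, the effective hourly rate for the GPUs themselves would be lower than this figure.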

This “bet the company” move was a masterclass in capital efficiency, proving that a small, focused team could stand up a full pipeline and stabilize training without endless reserves.

Engineering through extreme architectural constraint

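Trinity-Large-Thinking is noteworthy for the extreme sparsity of its Mixture-of-Experts architecture. While the model houses 400 billion total parameters, only about 13 billion of them are active for any given token. That works out to roughly 3.25% of the weights; the 1.56% figure quoted for the model is lower than this ratio and may describe the share of experts selected per token rather than the share of parameters.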
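The share of weights in use per token can be checked directly from the two parameter counts quoted above. A minimal sketch using only those figures; the function name is illustrative.

```python
TOTAL_PARAMS = 400e9   # total parameters quoted in the article
ACTIVE_PARAMS = 13e9   # parameters active per token quoted in the article


def active_fraction(active: float, total: float) -> float:
    """Fraction of the model's weights used for a single token."""
    return active / total


print(f"{active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS):.2%}")  # 3.25%
```

Percentages quoted for sparse models sometimes describe the share of experts routed per token rather than the share of parameters, so the two numbers need not match.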

This allows the model to possess the deep knowledge of a massive system while maintaining the inference speed and operational efficiency of a much smaller one—performing roughly 2 to 3 times faster than its peers on the same hardware. Training such a sparse model presented significant stability challenges.

To prevent a few experts from becoming “winners” while others remained untrained “dead weight,” Arcee developed SMEBU, or Soft-clamped Momentum Expert Bias Updates.
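The article does not spell out how SMEBU works, so the following is only one plausible reading of its name, not Arcee's published algorithm. It sketches a generic bias-based load balancer: each expert carries a routing bias, the bias is nudged up when the expert is under-used and down when it is over-used, the nudge is soft-clamped with `tanh`, and a momentum term smooths it over time. The function name and the `lr`, `beta`, and `scale` values are assumptions.

```python
import math


def update_expert_biases(biases, momentum, token_counts,
                         lr=0.01, beta=0.9, scale=1.0):
    """Return new (biases, momentum) after one balancing step.

    token_counts[i] is how many tokens expert i received in the last batch.
    """
    mean_load = sum(token_counts) / len(token_counts)
    if mean_load == 0:
        # No tokens were routed at all; nothing to balance.
        return list(biases), list(momentum)

    new_biases, new_momentum = [], []
    for bias, mom, count in zip(biases, momentum, token_counts):
        # Positive when the expert is under-used, negative when over-used.
        violation = (mean_load - count) / mean_load
        # Soft clamp: tanh bounds the step to (-1, 1) without a hard cutoff.
        step = math.tanh(scale * violation)
        # Exponential moving average smooths noisy per-batch loads.
        mom = beta * mom + (1.0 - beta) * step
        new_momentum.append(mom)
        new_biases.append(bias + lr * mom)
    return new_biases, new_momentum


biases, momentum = update_expert_biases([0.0, 0.0, 0.0], [0.0, 0.0, 0.0], [90, 10, 20])
print(biases)
```

In this example the overloaded first expert ends up with a negative bias and the two starved experts with positive ones, which is the direction needed to pull routing back toward an even split.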

This mechanism ensures that experts are specialized and routed evenly across a general web corpus. The architecture also incorporates a hybrid approach, alternating local sliding-window and global attention layers in a 3:1 ratio to maintain performance in long-context scenarios.
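The 3:1 interleaving described above can be pictured as a repeating layer schedule. A minimal sketch, assuming each group consists of three local layers followed by one global layer; the ordering within a group, the layer count in the example, and the function name `attention_layout` are illustrative assumptions rather than details from Arcee.

```python
def attention_layout(num_layers: int, local_per_global: int = 3) -> list:
    """Label each layer 'local' or 'global' in a repeating local:global pattern."""
    group = local_per_global + 1
    layout = []
    for index in range(num_layers):
        # Every group-th layer attends globally; the rest use a local window.
        is_global = (index + 1) % group == 0
        layout.append("global" if is_global else "local")
    return layout


print(attention_layout(8))
```

Local layers keep attention cost bounded by the window size, while the periodic global layers let information travel across the whole context.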

The data curriculum and synthetic reasoning

Arcee’s partnership with fellow startup DatologyAI provided a curriculum of over 10 trillion curated tokens. However, the training corpus for the full-scale model was expanded to 20 trillion tokens, split evenly between curated web data and high-quality synthetic data.

Unlike typical imitation-based synthetic data where a smaller model simply learns to mimic a larger one, DatologyAI utilized techniques to synthetically rewrite raw web text—such as Wikipedia articles or blogs—to condense the information.

This process helped the model learn to reason over concepts and information rather than merely memorizing exact token strings.

To ensure regulatory compliance, tremendous effort was invested in excluding copyrighted books and materials with unclear licensing, attracting enterprise customers who are wary of intellectual property risks associated with mainstream LLMs.

This data-first approach allowed the model to scale cleanly while significantly improving performance on complex tasks like mathematics and multi-step agent tool use.

The pivot from yappy chatbots to reasoning agents

The defining feature of this official release is the transition from a standard “instruct” model to a “reasoning” model.

By implementing a “thinking” phase prior to generating a response—similar to the internal loops found in the earlier Trinity-Mini—Arcee has addressed the primary criticism of its January “Preview” release.

Early users of the Preview model had noted that it sometimes struggled with multi-step instructions in complex environments and could be “underwhelming” for agentic tasks.

The “Thinking” update effectively bridges this gap, enabling what Arcee calls “long-horizon agents” that can maintain coherence across multi-turn tool calls without getting “sloppy”.

This reasoning process enables better context coherence and cleaner instruction following under constraint. This has direct implications for Maestro Reasoning, a 32B-parameter derivative of Trinity already being used in audit-focused industries to provide transparent “thought-to-answer” traces.

The goal was to move beyond “yappy” or inefficient chatbots toward reliable, cheap, high-quality agents that stay stable across long-running loops.

Geopolitics and the case for American open weights

The significance of Arcee’s Apache 2.0 commitment is amplified by the retreat of its primary competitors from the open-weight frontier.

Throughout 2025, Chinese research labs like Alibaba’s Qwen and z.ai (aka Zhipu) set the pace for high-efficiency MoE architectures.

However, as we enter 2026, those labs have begun to shift toward proprietary enterprise platforms and specialized subscriptions, signaling a move away from pure community growth.

The fragmentation of these once-prolific teams, such as the departure of key technical leads from Alibaba’s Qwen lab, has left a void at the high end of the open-weight market. In the United States, the movement has faced its own crisis.

Meta’s Llama division notably retreated from the frontier landscape following the mixed reception of Llama 4 in April 2025, which faced reports of quality issues and benchmark manipulation.

For developers who relied on the Llama 3 era of dominance, the lack of a current 400B+ open model created an urgent need for an alternative that Arcee has risen to fill.

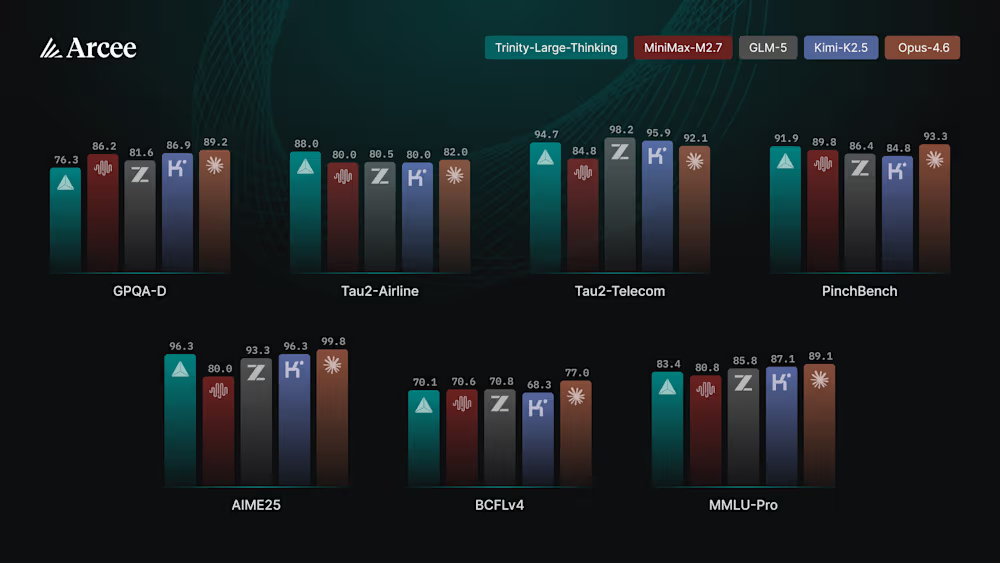

Benchmarks and how Arcee’s Trinity-Large-Thinking stacks up to other U.S. frontier open source AI model offerings

Trinity-Large-Thinking’s performance on agent-specific evaluations establishes it as a legitimate frontier contender. On PinchBench, a critical metric for evaluating model capability on autonomous agentic tasks, Trinity achieved a score of 91.9, placing it just behind the proprietary market leader, Claude Opus 4.6 (93.3).

This competitiveness is mirrored in IFBench, where Trinity’s score of 52.3 sits in a near-dead heat with Opus 4.6’s 53.1, indicating that the reasoning-first “Thinking” update has successfully addressed the instruction-following hurdles that challenged the model’s earlier preview phase.

The model’s broader technical reasoning capabilities also place it at the high end of the current open-source market. It recorded a 96.3 on AIME25, matching the high-tier Kimi-K2.5 and outstripping other major competitors like GLM-5 (93.3) and MiniMax-M2.7 (80.0).

While high-end coding benchmarks like SWE-bench Verified still show a lead for top-tier closed-source models—with Trinity scoring 63.2 against Opus 4.6’s 75.6—the massive delta in cost-per-token positions Trinity as the more viable sovereign infrastructure layer for enterprises looking to deploy these capabilities at production scale.

When it comes to other U.S. open source frontier model offerings, OpenAI’s gpt-oss tops out at 120 billion parameters. Google offers Gemma (Gemma 4 was just released this week), and IBM’s Granite family is also worth a mention despite its lower benchmark scores. Nvidia’s Nemotron family is also notable, but it consists of fine-tuned and post-trained Qwen variants.

| Benchmark | Arcee Trinity-Large | gpt-oss-120B (High) | IBM Granite 4.0 | Google Gemma 4 |
| --- | --- | --- | --- | --- |
| GPQA-D | 76.3% | 80.1% | 74.8% | 84.3% |
| Tau2-Airline | 88.0% | 65.8%* | 68.3% | 76.9% |
| PinchBench | 91.9% | 69.0% (IFBench) | 89.1% | 93.3% |
| AIME25 | 96.3% | 97.9% | 88.5% | 89.2% |
| MMLU-Pro | 83.4% | 90.0% (MMLU) | 81.2% | 85.2% |

So how is an enterprise supposed to choose between all these?

Arcee Trinity-Large-Thinking is the premier choice for organizations building autonomous agents; its sparse 400B architecture excels at “thinking” through multi-step logic, complex math, and long-horizon tool use. By activating only a fraction of its parameters, it provides a high-speed reasoning engine for developers who need GPT-4o-level planning capabilities within a cost-effective, open-source framework.

Conversely, gpt-oss-120B serves as the optimal middle ground for enterprises that require high-reasoning performance but prioritize lower operational costs and deployment flexibility.

Because it activates only 5.1B parameters per forward pass, it is uniquely suited for technical workloads like competitive code generation and advanced mathematical modeling that must run on limited hardware, such as a single H100 GPU.

Its configurable reasoning effort—offering “Low,” “Medium,” and “High” modes—makes it the best fit for production environments where latency and accuracy must be balanced dynamically across different tasks.

For broader, high-throughput applications, Google Gemma 4 and IBM Granite 4.0 serve as the primary backbones. Gemma 4 offers the highest “intelligence density” for general knowledge and scientific accuracy, making it the most versatile option for R&D and high-speed chat interfaces.

Meanwhile, IBM Granite 4.0 is engineered for the “all-day” enterprise workload, utilizing a hybrid architecture that eliminates context bottlenecks for massive document processing. For businesses concerned with legal compliance and hardware efficiency, Granite remains the most reliable foundation for large-scale RAG and document analysis.

Ownership as a feature for regulated industries

In this climate, Arcee’s choice of the Apache 2.0 license is a deliberate act of differentiation. Unlike the restrictive community licenses used by some competitors, Apache 2.0 allows enterprises to truly own their intelligence stack without the “black box” biases of a general-purpose chat model.

“Developers and Enterprises need models they can inspect, post-train, host, distill, and own,” Lucas Atkins noted in the launch announcement.

This ownership is critical for the “bitter lesson” of training small models: you usually need to train a massive frontier model first to generate the high-quality synthetic data and logits required to build efficient student models.
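The teacher-to-student relationship described above can be illustrated with a generic knowledge-distillation loss. This is a minimal sketch, not Arcee’s training code: it assumes the textbook formulation (temperature-softened softmax plus KL divergence), and the function names, the example logits, and the temperature of 2.0 are illustrative choices rather than anything taken from the launch announcement.

```python
import math


def softmax(logits, temperature=1.0):
    """Convert raw logits to probabilities, softened by `temperature`."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) over temperature-softened distributions.

    The T^2 factor is the usual scaling that keeps gradient magnitudes
    comparable across temperatures.
    """
    p = softmax(teacher_logits, temperature)  # teacher's soft targets
    q = softmax(student_logits, temperature)  # student's predictions
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


# Hypothetical logits over a three-token vocabulary.
teacher = [2.0, 1.0, 0.1]
good_student = [1.9, 1.1, 0.2]   # close to the teacher
poor_student = [0.1, 1.0, 2.0]   # ranks the tokens in reverse

print(distillation_loss(teacher, good_student))
print(distillation_loss(teacher, poor_student))
```

The loss shrinks toward zero as the student’s distribution approaches the teacher’s, which is why access to the large model’s logits, not just its sampled text, matters for building compact models.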

Furthermore, Arcee has released Trinity-Large-TrueBase, a raw 10-trillion-token checkpoint. TrueBase offers a rare, “unspoiled” look at foundational intelligence before instruction tuning and reinforcement learning are applied. For researchers in highly regulated industries like finance and defense, TrueBase allows for authentic audits and custom alignments starting from a clean slate.

Community verdict and the future of distillation

The response from the developer community has been largely positive, reflecting the desire for more open-weight, U.S.-made models.

On X, researchers highlighted the disruption, noting that the “insanely cheap” prices for a model of this size would be a boon for the agentic community.

On OpenRouter, a website for AI model inference, Trinity-Large-Preview established itself as the #1 most used open model in the U.S., serving over 80.6 billion tokens on peak days like March 1, 2026.

The proximity of Trinity-Large-Thinking to Claude Opus 4.6 on PinchBench—at 91.9 versus 93.3—is particularly striking when compared to the cost. At $0.90 per million output tokens, Trinity is approximately 96% cheaper than Opus 4.6, which costs $25 per million output tokens.
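The 96% figure follows directly from the two quoted output prices. As a rough sketch of the arithmetic, the snippet below uses only the per-million-token prices cited above; the function names and the one-billion-token workload are hypothetical and chosen purely for illustration.

```python
def percent_cheaper(price, reference_price):
    """Return how much cheaper `price` is than `reference_price`, in percent."""
    if reference_price <= 0:
        raise ValueError("reference_price must be positive")
    return (1 - price / reference_price) * 100


def output_cost(tokens, usd_per_million):
    """Cost in USD of generating `tokens` output tokens at a given price."""
    return tokens / 1_000_000 * usd_per_million


TRINITY_USD_PER_M = 0.90  # output price quoted in the article
OPUS_USD_PER_M = 25.00    # output price quoted in the article

savings = percent_cheaper(TRINITY_USD_PER_M, OPUS_USD_PER_M)
print(f"{savings:.1f}% cheaper")  # prints "96.4% cheaper"

# Hypothetical workload: one billion output tokens.
workload = 1_000_000_000
print(f"Trinity: ${output_cost(workload, TRINITY_USD_PER_M):,.0f}")
print(f"Opus 4.6: ${output_cost(workload, OPUS_USD_PER_M):,.0f}")
```

This covers output tokens only; input-token pricing, which the comparison above does not cite, would change the total for any real workload.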

Arcee’s strategy is now focused on bringing these pretraining and post-training lessons back down the stack. Much of the work that went into Trinity Large will now flow into the Mini and Nano models, refreshing the company’s compact line with the distillation of frontier-level reasoning.

As global labs pivot toward proprietary lock-in, Arcee has positioned Trinity as a sovereign infrastructure layer that developers can finally control and adapt for long-horizon agentic workflows.

Tech

AI is doing the dirty work for insurance companies, and it’s getting worse

Insurance claims adjusters have never had a reputation for generosity. But at least they were human. That’s changing fast, and not in your favor. A report by Futurism details how AI automation is now a major trend in personal insurance: the health, home, and auto coverage most of us rely on.

Is your doctor’s opinion even part of the process anymore?

It doesn’t seem that your doctor’s opinion carries that much weight now. A Palm Beach Post investigation found that Iris Smith, an 80-year-old suffering from arthritis, may be a victim of AI-fueled preauthorization denials.

In another case, UnitedHealth is currently facing a class-action lawsuit alleging that AI-denied Medicare nursing care contributed to patient deaths. Meanwhile, a National Association of Insurance Commissioners survey found 84% of health insurers are using AI, with 68% deploying it for prior authorization approvals.

Most people give up and don’t even appeal these rejections because the process is too confusing or exhausting, which, if you’re an insurance company, is the outcome you want.

The worst part is that we know AI isn’t always accurate and has a tendency to hallucinate. It’s one thing if it makes a mistake while writing a report, but it’s a completely different ball game when it ends up denying medical aid to someone who truly needs it.

Is there anyone protecting your interests?

Florida Representative Lois Frankel isn’t having any of it. She told the Palm Beach Post she plans to fight any expansion into other states. “We believe Medicare was based on a promise that if your doctor says you need care, if you’re hurt and you need care, Medicare will be there for you, not AI.”

But if the past is any indication, her fight alone won’t be enough. Florida lawmakers tried to pass a bill in 2025 requiring human review for AI-generated denials. It passed the House but died in the Senate, and a Trump executive order discouraging state AI regulations didn’t help.

The silver lining, if you can call it that: nonprofits like Counterforce Health now offer free AI tools that analyze your denial letter and draft a customized appeal, making it easier to fight back. It’s AI versus AI at this point, and the world is growing gloomier by the day.
