Google Family Link is a free parental control tool built directly into Android that lets you manage your child’s device from your own phone. It covers app approvals, screen time limits, content filters, location sharing, and more — all without installing any third-party software. This guide walks you through every step: from pre-setup requirements to configuring the controls that actually matter after you are linked.

Quick take: Setup takes about ten minutes if both devices are nearby and the child’s Google Account is already created. The most common cause of failure is having multiple Google accounts on the child’s device — Family Link requires the child’s supervised account to be the only account on their phone during setup.

Before you start: what you need

Getting the right pieces in place before you open the app saves time and avoids the most common setup errors.

Device requirements

According to Google’s official Family Link device compatibility page, your child’s Android device needs to run Android 7.0 (Nougat) or higher for full functionality. Devices running Android 5.0 or 6.0 may support some settings but are not fully reliable. Your own device — the parent phone — needs Android 7.0 or higher, or iOS 16 or higher if you use an iPhone.

To check your child’s Android version: open Settings → scroll to the bottom → tap About phone → look for Android version.
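
The version thresholds above can be written as a small check. This is a minimal Python sketch, assuming you have read the version string from the About phone screen. The function name `family_link_support` and the tier labels are hypothetical and are not part of any Google tool.

```python
def family_link_support(android_version: str) -> str:
    """Classify a child's device by the Android version shown in About phone.

    The tier labels are illustrative; the thresholds are the ones
    described in this guide.
    """
    # Keep only the major and minor parts, e.g. "7.1.2" -> (7, 1)
    parts = android_version.strip().split(".")
    major = int(parts[0])
    minor = int(parts[1]) if len(parts) > 1 else 0

    if (major, minor) >= (7, 0):
        return "full"       # Android 7.0 (Nougat) or higher
    if (major, minor) >= (5, 0):
        return "partial"    # Android 5.0 or 6.0: some settings only
    return "unsupported"


for version in ["14", "7.1.2", "6.0", "4.4"]:
    print(version, "->", family_link_support(version))
```

A device that lands in the partial tier may still link, but the guide's advice is to treat it as not fully reliable.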

Account requirements

- You need a Google Account (standard Gmail is fine).

- Your child needs a Google Account. If they are under 13, you will create one through the Family Link setup flow — you cannot use a standard account for children under 13 without parental supervision.

- The child’s device must have only one Google account signed in at setup time. If other accounts are signed in, Family Link will remove them during the process, so it is better to know this before you start than to discover it mid-setup.

Apps to download

- On your phone: Google Family Link (the parent version)

- On your child’s phone: Google Family Link for Children & Teens (a separate app)

Both are free on the Google Play Store. Make sure you download the correct version for each device — they are listed separately and serve different functions.

Step 1: Create your child’s Google Account (if they do not have one)

If your child already has a supervised Google Account, skip to Step 2.

Open the Family Link app on your phone and tap Get started. The app will ask whether your child has a Google Account. Select No. You will then be guided through creating a supervised account, which requires:

- Your child’s first name (a last name is optional)

- Their date of birth — this determines the type of account created and the applicable age rules in your country

- A Gmail address for the child (the app will suggest available options)

- A password for the child’s account

- Your own Google Account password to verify parental consent

Once the account is created, Google will ask you to review the privacy settings and data collection preferences for the account. Read through these carefully, because this is where you control personalised ads, activity tracking, and similar settings for your child’s profile.

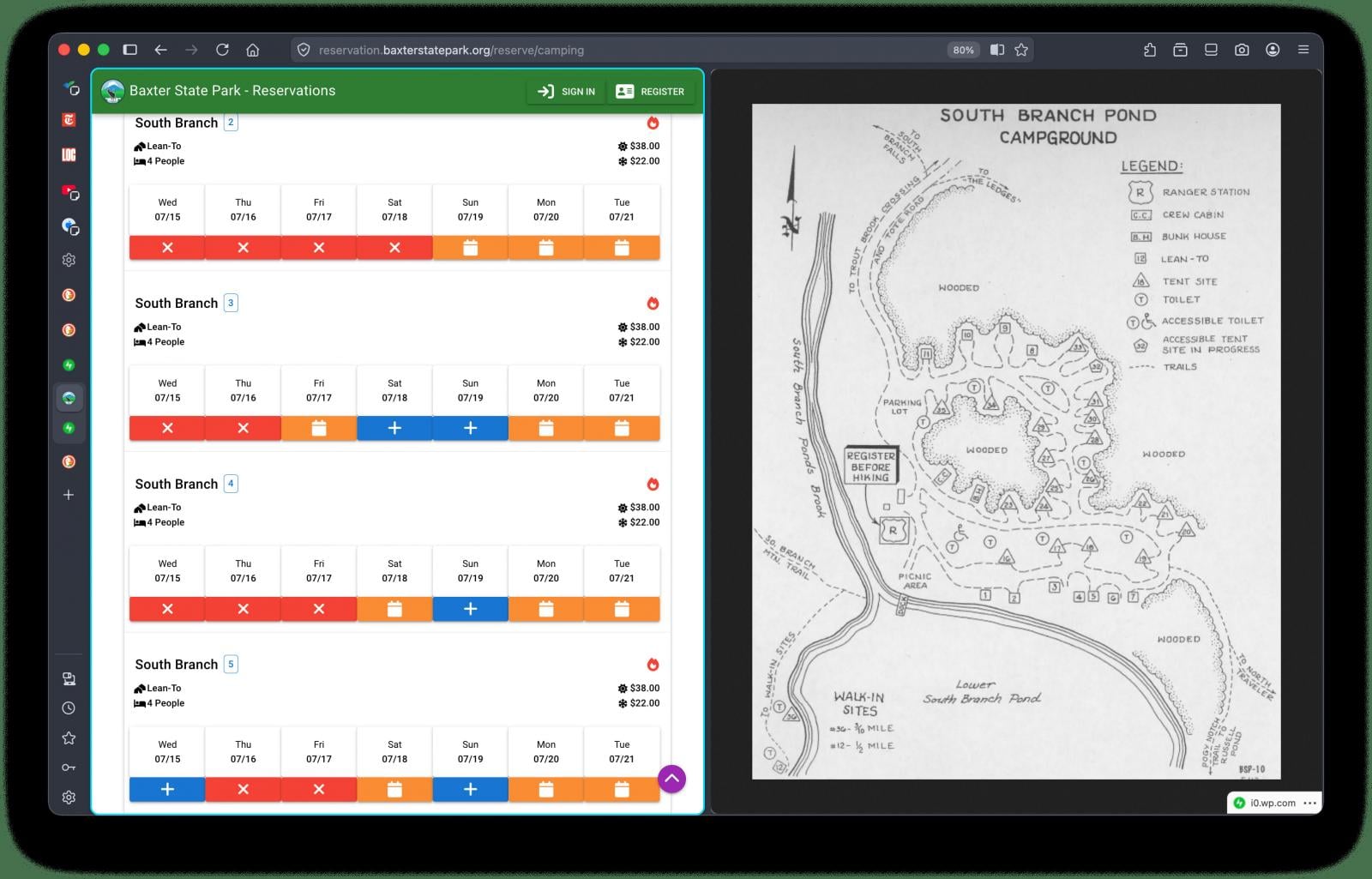

Step 2: Link the accounts using a code

With both apps open and both devices nearby, the Family Link app on your phone will generate a short linking code. Here is the exact sequence:

- On your phone (parent device): open the Family Link app, sign in with your Google Account, select your child’s account, and tap through until you see the linking code screen. Keep this screen visible.

- On your child’s phone (child device): open the Family Link for Children & Teens app, sign in with the child’s Google Account, and enter the code shown on your screen when prompted.

- Back on your phone: the app will confirm that the devices are linked. Tap Next to proceed to the permissions setup screen.

If the code expires before you enter it, tap Generate new code on the parent device. Codes are valid for a short window.

Step 3: Grant permissions on the child’s device

After the link code is accepted, the child’s device will display a series of permission screens. Keep tapping Allow or Next through all of them — these permissions are what allow Family Link to enforce screen time limits, manage apps, and report activity. Without them, most controls will not work.

You will also be prompted to name the child’s device (useful if you have more than one child or device) and to choose which apps the child can access immediately. You can approve or restrict app access from this screen, but you can also do it later from the Family Link dashboard on your own phone.

Step 4: Configure the key controls

Once linked, most parents open the dashboard and are not sure where to start. Here is a practical order that covers the highest-value settings first.

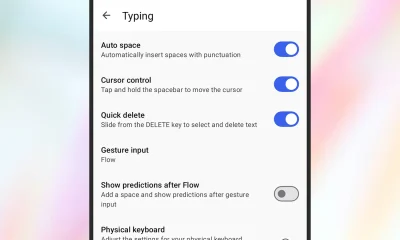

Screen time limits and Downtime

Go to Screen time in the Family Link app on your phone (this tab was redesigned in Google’s February 2025 Family Link update). You can set a total daily screen time limit, schedule Downtime (when the device locks automatically — useful for bedtime and homework), and view how much time your child spends on each app. These are the controls most families configure first.
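
To make the interaction between a daily limit and a Downtime schedule concrete, here is a minimal Python sketch. It is an illustrative model only, not Google's implementation. It assumes the device locks when either the daily limit is used up or the current time falls inside Downtime, and the function names `in_downtime` and `device_locked` are hypothetical.

```python
from datetime import time


def in_downtime(now: time, start: time, end: time) -> bool:
    """Return True if `now` falls inside the Downtime window.

    Handles windows that cross midnight, such as 21:00 to 07:00.
    """
    if start <= end:
        return start <= now < end
    return now >= start or now < end


def device_locked(now: time, minutes_used: int, daily_limit: int,
                  downtime_start: time, downtime_end: time) -> bool:
    """Assumed rule: locked when the daily limit is used up
    or the current time is inside Downtime."""
    return minutes_used >= daily_limit or in_downtime(now, downtime_start, downtime_end)


bedtime, wake = time(21, 0), time(7, 0)
print(device_locked(time(22, 30), 45, 120, bedtime, wake))  # inside Downtime
print(device_locked(time(16, 0), 120, 120, bedtime, wake))  # daily limit reached
print(device_locked(time(16, 0), 45, 120, bedtime, wake))   # neither applies
```

The overnight case is the one worth noticing: a bedtime schedule usually starts in the evening and ends the next morning, so the window wraps past midnight.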

School Time

School Time is a dedicated block mode that limits device use to approved apps only during school hours. It was previously available on smartwatches and became available on Android phones and tablets in the same February 2025 update. Set your child’s school schedule once, and the device will automatically restrict access during those hours without you needing to manage it manually each day.

App approvals

Under Controls, you can require your approval for every app your child attempts to download from the Play Store. When your child requests an app, you receive a notification on your phone and can approve or decline with one tap. You can also block specific apps already installed on the device.

Content filters

Family Link applies content filters across Google Search (SafeSearch), Chrome (site filtering), YouTube (supervised or restricted mode), and the Play Store (age-based content ratings). Go to Controls → Content filters to review each one. The default settings are conservative but worth reviewing against your child’s age and needs.

Approved contacts

Following the February 2025 update, parents can set which contacts their child is allowed to call and text on Android phones. Go to Controls → Contacts to add approved contacts directly from the Family Link app. Your child can request to add new contacts, which you can approve or decline. This is useful for younger children whose device use should be limited to family and close contacts.

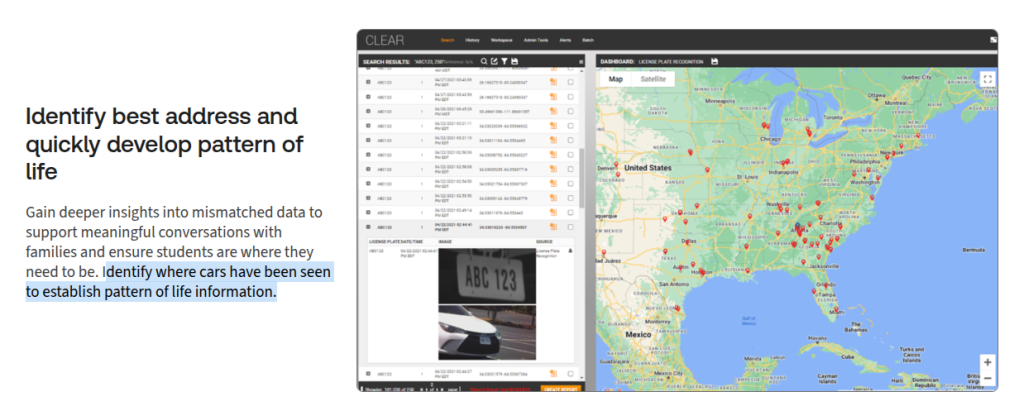

Location sharing

Under your child’s profile in the app, you will find a Location section. Tap See location to view the device on a map. Location sharing requires the child’s device to be switched on, with location services enabled and a mobile data or Wi-Fi connection. The map does not stream a continuous live position; it shows the most recent known location, which you can refresh manually.

Step 5: Review security settings on the child’s device

Before handing the device back, confirm that Google Play Protect is enabled on the child’s phone. It scans installed apps for harmful behaviour and runs automatically in the background. To check: open Play Store → tap your account icon → Play Protect → confirm scanning is on.

Also review which apps have access to the camera, microphone, and location under Settings → Privacy → Permission manager. Remove permissions that do not match an app’s obvious function. This is a good habit to repeat every few months, particularly after new apps are added. For a broader overview of what each permission does, see the guide on understanding Android app permissions on this site.

What happens when your child turns 13

This is the section most setup guides miss, and it changed significantly at the start of 2026. Previously, children could independently disable Family Link supervision once they reached age 13. Google reversed that policy in January 2026 — teens now require explicit parental permission to remove supervision, regardless of age. You will receive a notification when your child is approaching the applicable age and can decide at that point whether to continue supervision or transition to an unsupervised account through a managed conversation.

If you choose to continue supervision for a teenager, it is worth revisiting your content filter and screen time settings. Controls that work well for a nine-year-old often create unnecessary friction for a fourteen-year-old, which can damage the trust that makes monitoring useful in the first place. You can find a more detailed discussion of that transition in the wider guide on legal Android phone monitoring for parents.

Decision framework: Family Link or a third-party app?

- Child under 13 using a personal Android device → Family Link is the right default. Free, official, no third-party trust required.

- Teenager active on social media with mental health or safety concerns → consider adding Bark alongside Family Link. Bark’s AI content detection covers platforms Family Link does not.

- Multiple children across Android and iOS, or a need for detailed per-app time limits → Qustodio covers multi-device families better than Family Link alone.

- Want to know more before deciding → the Bark vs Qustodio comparison on this site covers both in detail.

Implementation checklist

- Confirm child’s device runs Android 7.0 or higher.

- Download the correct Family Link app on both devices (two separate apps).

- Remove any additional Google accounts from the child’s device before starting.

- Create a supervised child Google Account during setup if the child does not already have one.

- Grant all permissions on the child’s device when prompted — do not skip any.

- Set screen time limits and a Downtime schedule immediately after linking.

- Configure School Time if the child’s school schedule is consistent.

- Enable app approval for Play Store downloads.

- Set approved contacts if the child is young enough to benefit from contact restrictions.

- Confirm Google Play Protect is active on the child’s device.

- Review app permissions on the child’s device before handing it back.

Troubleshooting

The link code is not working

Codes expire quickly. Tap Generate new code on the parent device and enter the new code on the child’s device straight away, before it expires. Make sure both devices are connected to the internet.

Family Link is installed but controls are not applying

The most common cause is the child’s device being offline. Controls sync when the device has an internet connection. Also check that all permissions were granted during setup — open the child’s Family Link app and look for any incomplete setup warnings.

The child’s device shows a different account is still signed in

Family Link requires the child’s supervised account to be the only Google Account on the device. Go to Settings → Accounts on the child’s phone and remove any additional accounts before relinking.

Location is not updating

Check that location services are enabled on the child’s device (Settings → Location → make sure it is on). Also verify that the Family Link app has location permission under Settings → Apps → Family Link → Permissions.

App approvals are not coming through to the parent device

Check that notifications are enabled for the Family Link app on your own phone (Settings → Apps → Family Link → Notifications). Without notifications, approval requests will pile up unnoticed.

School Time is not locking the device during school hours

Confirm the schedule was saved correctly in the app and that the child’s device time zone matches the schedule you set. Devices in a different time zone will trigger School Time at the wrong local time.
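
The size of a time zone mismatch is easy to work out. The Python sketch below is a rough illustration, assuming the schedule is anchored to the UTC offset it was set in while the child's device clock uses a different offset. The function name `local_trigger_time` and the offsets are hypothetical examples, not values taken from Family Link.

```python
from datetime import datetime, timedelta, timezone


def local_trigger_time(schedule_hour: int, schedule_minute: int,
                       schedule_utc_offset: float, device_utc_offset: float) -> str:
    """Show what the device clock reads when a schedule set in one
    UTC offset starts, if the device is in a different offset."""
    schedule_tz = timezone(timedelta(hours=schedule_utc_offset))
    device_tz = timezone(timedelta(hours=device_utc_offset))
    # The calendar date is arbitrary; only the clock time matters here.
    start = datetime(2025, 3, 3, schedule_hour, schedule_minute, tzinfo=schedule_tz)
    return start.astimezone(device_tz).strftime("%H:%M")


# School Time set for 08:30 at UTC+0, device clock on UTC-5
print(local_trigger_time(8, 30, 0, -5))
# Same offset on both sides: the schedule starts when expected
print(local_trigger_time(8, 30, 0, 0))
```

If the first call prints a time that is hours away from the start of the school day, that gap is the time zone difference to correct on the child's device.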

Key takeaways

- Family Link is free, built by Google, and integrates at the OS level — it is the most reliable starting point for Android parental controls.

- Setup requires two separate apps: one on your phone, one on your child’s phone. Installing the wrong app on either device is an easy mistake to make.

- The child’s supervised account must be the only Google Account on their device during setup.

- As of January 2026, teens need parental approval to remove supervision — this is a significant change from earlier policy.

- School Time, parent-approved contacts, and the redesigned Screen Time tab were all added in the February 2025 update — older setup guides may not mention these.

- Family Link works best alongside a conversation about why monitoring is in place. Transparent oversight tends to build better digital habits than hidden controls.

FAQ

Is Google Family Link free?

Yes. Google Family Link is completely free. There is no paid tier or premium version — all features are included at no cost.

Does my child know they are being monitored?

Yes. Family Link is a transparent tool by design. The child’s device displays a supervision indicator, and the child can see which apps are approved or restricted. It is not a hidden monitoring app.

Can I use Family Link if my child already has a Google Account?

Yes, but only if the account was created for a child under 13 through the supervised account creation flow, or if you add supervision to a teen’s existing account. Standard adult Google Accounts cannot be placed under Family Link supervision.

What happens if my child’s phone dies or goes offline?

Screen time limits and Downtime schedules that were already set will continue to apply. However, the parent dashboard will not update with new location data or activity reports until the device reconnects.

Can I use Family Link on an iPhone?

The parent Family Link app supports iOS 16 or higher on the parent’s device. However, Family Link cannot manage an iPhone as the child’s device — it only supervises Android devices and Chromebooks. For iPhone supervision, Apple’s Screen Time is the equivalent built-in tool.

Does Family Link track my child’s location all the time?

Family Link can show you your child’s device location when the device is online and location services are active. It does not continuously stream a live location; instead, it shows the most recent known location and allows you to request a refresh.

Can my child delete the Family Link app?

No. Family Link cannot be uninstalled by the child from a supervised Android device without parental approval. Since January 2026, teenagers also need parental permission to disable supervision from their account settings.

What is the difference between Family Link and Google Play parental controls?

Google Play parental controls only restrict content ratings inside the Play Store itself — they do not cover screen time, location, app usage, web filtering, or the rest of the device. Family Link is the full parental control system that includes Play Store controls alongside all other features. If you only want to restrict what your child can download, Play Store controls alone may be enough; for broader oversight, you need Family Link.
