Tech

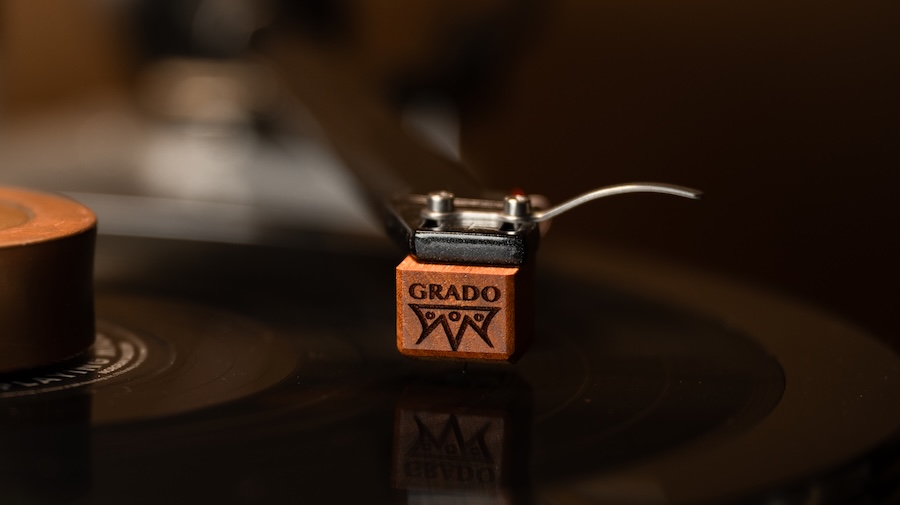

Grado updates its 2026 phono cartridge lineup across Lineage, Timbre, and Prestige series: Brooklyn Built For Your Turntable

Grado didn’t exactly drop this out of nowhere. When we spoke with the team at CanJam NYC 2026, there were enough hints to read between the lines, but nobody was about to say it out loud. Loose lips and all that. So we kept it quiet. Nobody wanted to end up in the East River. Now it’s official.

Grado Labs is rolling out an updated phono cartridge lineup across its Lineage, Timbre, and Prestige Series, built around targeted refinements to the stylus assembly, coil composition, and housing geometry. No reinvention, no marketing circus, just a clear effort to improve how these cartridges track, resolve detail, and behave in real-world setups.

The timing isn’t accidental. Vinyl’s resurgence has been very good to Grado’s cartridge business, but it’s also brought a flood of competition, from legacy brands tightening up their game to newer players looking to grab market share. Standing still isn’t an option when Ortofon, Audio-Technica, Denon, Hana, and Dynavector keep rolling out new cartridge models with every product cycle.

That’s what makes this update matter. Grado is going back to the core elements that define cartridge performance and refining them across the board: better materials, tighter tolerances, and more consistency from model to model.

And let’s be clear—John Grado and Rich Grado didn’t build this brand by coasting. This is what staying relevant looks like when you’ve been doing it since 1953.

In other words, the vinyl boom may have kept the lights on, but this is Grado making sure nobody else walks in and starts rearranging the furniture.

What’s Actually Changed: Stylus, Coils, and Housing Get Real Upgrades

The stylus assembly has been refined across the lineup, with diamond profiles and cantilever materials more carefully matched to each specific model. That matters. You’re not getting a one-size-fits-all approach anymore. Some models step up to nude Shibata diamonds, which offer better groove contact and improved tracking compared to the elliptical profiles used throughout much of the previous generation—but not every cartridge gets that upgrade, and Grado isn’t pretending otherwise.

Coils have been updated across the board with OCC copper, with purity levels scaled depending on the model. The goal is pretty straightforward: cleaner signal transmission, better channel balance, and fewer inconsistencies from unit to unit.

On the mechanical side, the wood-bodied cartridges see revised housing geometry. This isn’t just cosmetic. The updated shapes are designed to improve stability during playback and make setup less of a headache—something anyone who has wrestled with cartridge alignment will appreciate.

Lineage Series: Grado’s Top Shelf, No Apologies

The Lineage Series sits at the top of Grado’s cartridge lineup and uses the company’s low-output moving iron, flux-bridger architecture across all three models. All three get Brazilian Ebony wood bodies, nude Shibata styli, and stereo/mono options, but the cantilever material, frequency range, resistance, and weight are not identical across the range. That’s where the pecking order starts to show.

Grado Epoch4 — $9,995

The Epoch4 is the flagship. It uses a Brazilian Ebony wood body, sapphire cantilever with Shibata diamond, 1.0mV output at 5 CMV (45 degrees), 5 Hz to 75 kHz controlled frequency response, average 35 dB channel separation from 10-30 kHz, 10k-47k ohm input load, 8mH inductance, 95 ohms resistance, 10.5 gram cartridge weight, 1.6-1.9 gram tracking force, and 20 μm/mN compliance. Grado also says the internal signal path uses ultra-high purity OCC copper with gold plating, and that the cartridge undergoes cryogenic treatment during component prep and final assembly.

Grado Aeon4 — $4,995

The Aeon4 keeps the Brazilian Ebony body and sapphire/Shibata combo, with the same 1.0mV output, 10k-47k ohm input load, 8mH inductance, 10.5 gram weight, 1.6-1.9 gram tracking force, and 20 μm/mN compliance. Where it differs from the Epoch4 is the controlled frequency response, which is listed at 5 Hz to 70 kHz, and resistance, which drops to 74 ohms. Grado specifies ultra-high purity 7N OCC copper here. In other words, still serious, just not wearing the full tux.

Grado Statement4 — $3,500

The Statement4 is the entry point into the Lineage family, but it is not a stripped-down tourist model. It uses a Brazilian Ebony wood body and swaps to a machined boron cantilever with Shibata diamond. Specs include 1.0mV output at 5 CMV (45 degrees), 5 Hz to 65 kHz controlled frequency response, average 35 dB channel separation from 10-30 kHz, 10k-47k ohm input load, 8mH inductance, 74 ohms resistance, 10 gram cartridge weight, 1.6-1.9 gram tracking force, and 20 μm/mN compliance. Like the Aeon4, it uses ultra-high purity 7N OCC copper, and Grado specifies cryogenic treatment here as well.
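Compliance and cartridge weight are the two figures in these spec lists that feed directly into tonearm matching. A widely used rule of thumb (not something Grado publishes here) estimates the arm/cartridge resonance as f = 1000 / (2π√(M·C)), where M is the total moving mass in grams (arm effective mass plus cartridge plus mounting hardware) and C is compliance in μm/mN; a window of roughly 8-12 Hz is the usual target. The sketch below plugs in the Epoch4/Aeon4 figures of 10.5 g and 20 μm/mN. The 10 g arm effective mass and 1 g of hardware are hypothetical placeholders, and the sketch assumes the published compliance can stand in for low-frequency dynamic compliance.

```python
import math


def resonance_hz(arm_effective_mass_g: float,
                 cartridge_mass_g: float,
                 hardware_mass_g: float,
                 compliance_um_per_mN: float) -> float:
    """Rule-of-thumb arm/cartridge resonance in Hz.

    f = 1000 / (2 * pi * sqrt(M * C)), with M in grams and C in um/mN.
    Assumes the quoted compliance approximates low-frequency dynamic compliance.
    """
    total_mass_g = arm_effective_mass_g + cartridge_mass_g + hardware_mass_g
    return 1000.0 / (2.0 * math.pi * math.sqrt(total_mass_g * compliance_um_per_mN))


def in_target_window(freq_hz: float, low: float = 8.0, high: float = 12.0) -> bool:
    """Check against the commonly cited 8-12 Hz window (a rule of thumb, not a Grado spec)."""
    return low <= freq_hz <= high


if __name__ == "__main__":
    # Epoch4/Aeon4 spec figures: 10.5 g body, 20 um/mN compliance.
    # Hypothetical: 10 g arm effective mass, 1 g of screws and nuts.
    f = resonance_hz(arm_effective_mass_g=10.0, cartridge_mass_g=10.5,
                     hardware_mass_g=1.0, compliance_um_per_mN=20.0)
    print(f"{f:.1f} Hz, in window: {in_target_window(f)}")
```

With those placeholder numbers the estimate comes out at about 7.7 Hz, just under the usual window, which is why a high-compliance cartridge generally pairs better with a lower-mass arm. Substitute your own arm's effective mass before drawing any conclusions.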

Timbre Series: Where Grado Dials It In for the Real World

The Timbre Series is where Grado Labs hits the balance point—high-end analog performance without drifting into Lineage-level pricing. This is the middle of the lineup, but it’s not a compromise. It’s a deliberate tuning exercise.

Grado sticks with its moving iron, flux-bridger design across the range, and with elliptical diamond styli on every model except the Reference4, which steps up to a Shibata. The emphasis here isn’t on any single upgrade; it’s on how everything works together. Stylus profile, cantilever material, coil composition, and housing are treated as a system, not a checklist. The result is a presentation that leans into tonal balance, coherence, and musical flow rather than hyper-detail for its own sake.

Material choices define the hierarchy. The Reference4 and Master4 use American Osage wood bodies with boron cantilevers for greater control and resolution, while the Sonata4 and Platinum4 move to Mediterranean Olive wood paired with aluminum cantilevers. The Opus4, built from American Maple, rounds things out with a simpler aluminum cantilever configuration. Same core design philosophy throughout, just scaled in execution.

Grado Reference4 — $1,500

The Reference4 sits at the top of the Timbre Series. It uses an American Osage wood body, machined boron cantilever with Shibata diamond, 4.0mV high output or 1.0mV low output at 5 CMV (45 degrees), 10 Hz to 60 kHz controlled frequency response, average 30 dB channel separation from 10-30 kHz, 10k-47k ohm input load, 55mH inductance in high output and 6mH in low output, 660 ohms resistance in high output and 70 ohms in low output, 9.6 gram cartridge weight, 1.6-1.9 gram tracking force, and 20 μm/mN compliance. Grado also specifies ultra high purity 6N OCC copper in the internal signal path, along with cryogenic treatment and internal damping.

Grado Master4 — $1,000

The Master4 uses an American Osage wood body, machined boron cantilever with elliptical diamond, 4.0mV high output or 1.0mV low output at 5 CMV (45 degrees), 10 Hz to 60 kHz controlled frequency response, average 30 dB channel separation from 10-30 kHz, 10k-47k ohm input load, 55mH inductance in high output and 6mH in low output, 660 ohms resistance in high output and 70 ohms in low output, 9.6 gram cartridge weight, 1.6-1.9 gram tracking force, and 20 μm/mN compliance. It is offered in high output, low output, and mono versions.

Grado Sonata4 — $600

The Sonata4 uses a Mediterranean Olive wood body, special aluminum cantilever with elliptical diamond, 4.0mV high output or 1.0mV low output at 5 CMV (45 degrees), 10 Hz to 60 kHz controlled frequency response, average 30 dB channel separation from 10-30 kHz, 10k-47k ohm input load, 55mH inductance in high output and 6mH in low output, 660 ohms resistance in high output and 70 ohms in low output, 9.4 gram cartridge weight, 1.6-1.9 gram tracking force, and 20 μm/mN compliance. It is also offered in high output, low output, and mono versions.

Grado Platinum4 — $400

The Platinum4 uses a Mediterranean Olive wood body, aluminum cantilever with elliptical diamond, 4.0mV high output or 1.0mV low output at 5 CMV (45 degrees), 10 Hz to 60 kHz controlled frequency response, average 30 dB channel separation from 10-30 kHz, 10k-47k ohm input load, 55mH inductance in high output and 6mH in low output, 660 ohms resistance in high output and 70 ohms in low output, 9.4 gram cartridge weight, 1.6-1.9 gram tracking force, and 20 μm/mN compliance. It is available in high output, low output, and mono versions.

Grado Opus4 — $300

The Opus4 is the entry point into the Timbre Series. It uses an American Maple wood body, aluminum cantilever with elliptical diamond, 4.0mV high output or 1.0mV low output at 5 CMV (45 degrees), 10 Hz to 60 kHz controlled frequency response, average 30 dB channel separation from 10-30 kHz, 10k-47k ohm input load, 55mH inductance in high output and 6mH in low output, 660 ohms resistance in high output and 70 ohms in low output, 8.3 gram cartridge weight, 1.6-1.9 gram tracking force, and 20 μm/mN compliance. Grado says the internal signal path uses ultra high purity 5N OCC copper, with cryogenic treatment and internal damping as part of the build process.
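Every Timbre model is offered in a 4.0mV high-output and a 1.0mV low-output version. Converting that ratio to decibels, 20·log10(4.0/1.0), gives roughly 12 dB, which is about how much additional phono stage gain the low-output version needs to reach the same level. A minimal sketch; the 40 dB gain below is a hypothetical figure chosen only to illustrate the arithmetic, not a recommendation.

```python
import math

HIGH_OUTPUT_MV = 4.0  # Timbre high-output spec, at 5 CMV (45 degrees)
LOW_OUTPUT_MV = 1.0   # Timbre low-output spec, same reference velocity


def ratio_db(a_mv: float, b_mv: float) -> float:
    """Voltage ratio between two cartridge outputs, in dB."""
    return 20.0 * math.log10(a_mv / b_mv)


def amplified_mv(output_mv: float, gain_db: float) -> float:
    """Cartridge output (mV) after a phono stage with the given voltage gain (dB)."""
    return output_mv * 10 ** (gain_db / 20.0)


if __name__ == "__main__":
    print(f"High vs low output: {ratio_db(HIGH_OUTPUT_MV, LOW_OUTPUT_MV):.1f} dB")
    gain = 40.0  # hypothetical phono stage gain, for illustration only
    for label, mv in (("high", HIGH_OUTPUT_MV), ("low", LOW_OUTPUT_MV)):
        print(f"{label} output after {gain:.0f} dB: {amplified_mv(mv, gain):.0f} mV")
```

At that hypothetical 40 dB of gain, the high-output version delivers about 400 mV and the low-output version about 100 mV, preserving the same 12 dB gap.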

Prestige Series: Where Grado Keeps It Simple and Affordable

The Prestige Series is the foundation of what Grado Labs has been doing for decades, and it hasn’t survived this long by accident. This is the entry point into the lineup, but it’s built on a design that’s been refined over more than fifty years, not reinvented every product cycle. Those paying attention will notice that the lineup has been trimmed down.

Across the range, Grado sticks with elliptical diamond styli, aluminum cantilevers, and its moving iron, flux-bridger design. The goal here isn’t to chase ultimate resolution—it’s consistency. Strong tracking, a balanced tonal presentation, and performance that doesn’t drift over time. These are cartridges designed to work, not impress on spec sheets.

One of the biggest advantages in the Prestige Series is the user-replaceable stylus system. When the stylus wears out, you don’t toss the cartridge; you swap the stylus and keep going. It’s practical, cost-effective, and a big part of why these have remained popular with both newcomers and long-time vinyl listeners.

No exotic wood bodies here, no sapphire cantilevers, just a straightforward design that prioritizes reliability and ease of use without abandoning the Grado house sound.

Grado Prestige Gold4 — $260

The Prestige Gold4 sits at the top of the current Prestige Series. It uses a four-piece OTL cantilever with a Grado-specific elliptical diamond stylus mounted on a brass bushing, 4 mV output at 5 CMV (45 degrees), 10 Hz to 55 kHz frequency response, average 25 dB channel separation from 10-30 kHz, 10k-47k ohm input load, 50 mH inductance, 660 ohms DC resistance, 6 gram cartridge weight, 1.6-1.9 gram tracking force, and 20 μm/mN compliance. Grado also says the Gold4 uses a machined turned generator for lower distortion and greater transparency, along with ultra high purity copper wire and its twin-magnet, Flux-Bridger moving iron design.

Grado Prestige Red4 — $190

The Prestige Red4 uses a bonded elliptical diamond mounted to an aluminum cantilever, 4 mV output at 5 CMV (45 degrees), 10 Hz to 55 kHz frequency response, average 25 dB channel separation from 10-30 kHz, 10k-47k ohm input load, 50 mH inductance, 660 ohms DC resistance, 6 gram cartridge weight, 1.6-1.9 gram tracking force, and 20 μm/mN compliance. Grado describes it as a high output moving iron cartridge and notes that the stylus assembly is user-replaceable, with Prestige Series styli interchangeable across models.

Grado Prestige Green4 — $140

The Prestige Green4 uses a bonded elliptical diamond mounted to an aluminum cantilever, 4 mV output at 5 CMV (45 degrees), 10 Hz to 55 kHz frequency response, average 25 dB channel separation from 10-30 kHz, 10k-47k ohm input load, 50 mH inductance, 660 ohms DC resistance, 6 gram cartridge weight, 1.6-1.9 gram tracking force, and 20 μm/mN compliance. Grado also describes it as a high output moving iron cartridge with a user-replaceable stylus assembly, available in both standard mount and P-mount versions.

Trade-In Program: Grado’s Answer to Cartridge Burnout

Grado takes a different approach to long-term ownership, and it’s one that actually makes sense if you’ve been around analog long enough to know how this usually goes.

For its wood-bodied models, Grado Labs offers a cartridge trade-in program that lets you send back your existing cartridge, no matter how worn, and apply it toward a new one at a reduced cost. No drama, no “must be in mint condition” nonsense.

The idea is simple: keep people in the ecosystem without forcing them to start from scratch every time their stylus wears down or their system evolves. Instead of treating cartridges as disposable, Grado treats them like part of a longer-term upgrade path.

That flexibility cuts both ways. You can move up the range if you’re chasing more performance, or step sideways or even downward if your system changes or priorities shift. Either way, you’re getting a current production model with the latest refinements baked in. You won’t get that from Denon, Hana, or Clearaudio.

The Bottom Line

Grado didn’t reinvent anything—they refined the parts that actually matter. Across Lineage, Timbre, and Prestige, the updates focus on improved stylus assemblies, higher-purity OCC copper coils, and revised housing geometry, all aimed at better tracking, consistency, and easier setup.

On paper, the lineup is clearly tiered: Lineage pushes materials and resolution at the top, Timbre balances performance and design choices in the middle, and Prestige continues as the accessible, user-friendly foundation with its replaceable stylus system. Each range sticks to the same moving iron DNA, just executed at different levels.

Who should pay attention? Anyone with a vinyl setup who hasn’t looked at Grado in a while—and especially those watching how established brands respond to a more competitive cartridge market.

Reviews land in May and June.

For more information: gradolabs.com

Related Reading:


Coatue has a plan to buy up land for data centers, possibly for Anthropic

Coatue, one of the biggest names in venture capital and hedge funds, has a new plan to generate bigger returns on AI beyond its sizable stakes in Anthropic, OpenAI, xAI, and data center companies like Singapore’s DayOne and CoreWeave.

It has launched a venture called Next Frontier to buy up land near large power sources with the goal of turning those parcels into data centers, the Wall Street Journal reports. Sources tell the WSJ that Next Frontier has already signed a joint venture with Fluidstack, a cloud infrastructure startup that penned a $50 billion deal to build data centers for Anthropic. (Coatue did not respond to a request for comment.)

Although the U.S. already has 3,000 data centers, more than 1,500 new ones are in various stages of being built, according to Pew Research, most of them in rural areas. The frenzy is attracting land speculation and data center financing projects from a wide range of players, from Blackstone to Kevin O’Leary of “Shark Tank.”



Meta buys robotics startup to bolster its humanoid AI ambitions

Meta has acquired humanoid robotics startup Assured Robot Intelligence (ARI) for an undisclosed sum, the social media giant said.

“We acquired Assured Robot Intelligence, a company at the frontier of robotic intelligence designed to enable robots to understand, predict, and adapt to human behaviors in complex and dynamic environments,” a Meta spokesperson told TechCrunch in an emailed statement.

ARI’s team, including its co-founders, will join Meta’s AI unit, the Superintelligence Labs research division. ARI had raised an undisclosed seed round from AI seed firm AIX Ventures.

The startup was building foundation models for humanoid robots to perform all types of physical labor, such as household chores. Co-founder Xiaolong Wang was previously a researcher at Nvidia and an associate professor at UC San Diego, with a list of prestigious awards to his name. Co-founder Lerrel Pinto, who previously taught at NYU and co-founded the kid-size humanoid startup Fauna Robotics before Amazon snapped it up last month, has also won a string of prestigious awards.

ARI will help Meta with its humanoid ambitions. “This team, led by Lerrel Pinto and Xiaolong Wang, will bring a deep expertise in how we can design our models and frontier capabilities for robot control and self-learning to whole-body humanoid control,” the Meta spokesperson said.

Meta researchers have been working on humanoid robotics tech for years. A leaked memo from a year ago discussed Meta’s ambitions to build such a robot, including AI models and hardware, aimed at consumers.

Even if Meta never releases a consumer humanoid product, many AI experts these days believe that the path to artificial general intelligence (AGI) — the theoretical point at which AI reaches or surpasses human-level intelligence across all domains — will require training AI models in the physical world, where robots learn through direct interaction rather than data alone.


The ARI and Fauna deals reflect a broader industry sprint in which forecasts for the humanoid robot market vary wildly, from Goldman Sachs’ projection of $38 billion by 2035 to Morgan Stanley’s estimate of $5 trillion by 2050. That spread reflects both the enormous potential and the uncertainty around a technology that’s still finding its footing.

When you purchase through links in our articles, we may earn a small commission. This doesn’t affect our editorial independence.


No new Macs or iPads before September

Apple’s earnings call revealed a few things that make it easy to see what products we can and can’t expect between now and September. The “not coming” list is much longer than the “is probably coming” one.

The Mac is supply-constrained, the iPad isn’t being updated, and iPhones don’t release again until the fall. So, there’s not much left that could arrive in the intervening months.

The Mac mini, Mac Studio, and iMac are all awaiting their M5 upgrades, but Apple’s supply chain is already backed up quite a bit. You can’t buy an M4 Mac mini right now even if you want to.

Memory prices and scarce parts could mean a longer-than-usual wait for new Macs. Based on Tim Cook’s remarks during the earnings call, it’s pretty safe to say there won’t be any new Macs through the summer.

The iPad is a gimme: Apple signaled that no new model is coming without saying so directly. During the earnings call, Apple made it clear it faces a tough year-over-year comparison because the iPad with A16 was released a year ago.

So if you’re holding your breath for that new budget iPad with A19 and Apple Intelligence, you’ll be waiting a little while longer.

We’ve already got iPhone 17e, so there won’t be any new iPhones until September. Apple Watch won’t be updated until then either.

AirPods and AirPods Pro tend to be announced alongside iPhone too. AirPods Pro were just upgraded in September 2025, but if AirPods 5 are ready, those likely won’t be announced until the iPhone event.

Apple Vision Pro just got the M5 chip in October 2025 after about 20 months on the market, so that won’t be touched anytime soon. And no, that product line hasn’t been abandoned even if rumors attempted to say as much.

There is one product category Apple could still update, thanks to its unpredictable release cycle.

Apple Home products are always possible

The Apple TV 4K is still rocking the A15 processor that first debuted in the iPhone 13 in 2021. It is still supported by Apple’s modern operating systems, but at nearly 5 years old, it’s time for an update.

Since Apple TV 4K is the brains of an Apple Home, it might make sense to make that product capable of Apple Intelligence. I know I’d appreciate the upgrade to my new smart home.

The HomePod and HomePod mini are both rocking Apple Watch processors — the S7 and S5 respectively. The S7 debuted in Apple Watch Series 7 in 2021, while the S5 was included in the Apple Watch Series 5 in 2019.

It might not be entirely relevant, but watchOS doesn’t even support the S5 chipset anymore. HomePods run a version of tvOS, but that still shows exactly how old these chipsets are.

It might be time for Apple to do a basic chipset upgrade of the HomePod and HomePod mini. While they likely won’t support Apple Intelligence natively, it would do them good to have modern networking standards for use in Apple Home.

Those are the only Apple Home products Apple offers today, but there are some rumored products too.

Home Hub and cameras are unlikely

Apple is expected to debut what we’ve been calling the Home Hub tablet at some point in 2026. There are also Apple security cameras in the pipeline, or at least a doorbell, but that release window isn’t known.

WWDC 2026 is expected to be filled with announcements regarding Apple Intelligence. One of the biggest announcements will be about Siri and its new Apple Foundation Model backend.

That Siri upgrade is what the Home Hub has been waiting for. However, while Apple could show off the Home Hub during WWDC to demonstrate AI advancements, it is unlikely to put it on sale until later.

Since the Home Hub and an Apple doorbell have no existing Apple equivalent, the company can safely pre-announce them at any point without hurting sales of a current product. I believe WWDC would be the best place to demonstrate the Home Hub, but the already-packed event may not have room for it.

Likely nothing until the fall

Since Apple has a bundle of smart home products waiting in the wings, it’s reasonable to expect an Apple Home-focused event at some point. So, even if the Apple TV and HomePods are ready to go, Apple might hold off on them for now.

If you’ve been keeping count, that means we should all have little to no expectations for hardware before the iPhone event in September. While many are likely waiting for their pet product to get an update, they’ll just have to make do with WWDC instead.

The OS 27 cycle will be an important one for Apple. It will be among the first things released to the public under the new CEO John Ternus.


Apple Vision Pro isn’t dead, Ternus talk, & AI rumors

An odd rumor led to premature calls of Apple Vision Pro’s death, rumors of AI and Home Hubs abound, and Apple’s App Store troubles continue on the AppleInsider Podcast.

AppleInsider Managing Editor Mike Wuerthele joins host Wesley Hilliard as a guest this week to catch up on CEO transition news. It’s clear that the silly coverage surrounding the upcoming transition is already becoming exhausting.

The Apple vs. Epic legal fight seems to have no end. This time, Apple has to go to the Supreme Court and the Circuit Courts at once.

Your hosts dive into the odd Apple Vision Pro rumor that said Apple had given up on the product. They discuss why this likely isn’t the case and how the Vision product line will continue forward.

There’s also a lot to discuss around incoming products like the Home Hub and security cameras. Wes asks Mike if Apple makes too many products.

The show concludes with a discussion around WWDC and Apple’s AI efforts.

BONUS: Subscribe via Patreon or Apple Podcasts to hear AppleInsider+, the extended edition. This week, Wes and Mike discuss their work at AppleInsider and some odds and ends surrounding that.


More AppleInsider podcasts

Tune in to our Smart Home Insider podcast covering the latest news, products, apps and everything HomeKit related. Subscribe in Apple Podcasts, Overcast, or just search for HomeKit Insider wherever you get your podcasts.

Podcast artwork from Basic Apple Guy. Download the free wallpaper pack here.

Those interested in sponsoring the show can reach out to us at: [email protected].


Keep up with everything Apple in the weekly AppleInsider Podcast. Just say, “Hey, Siri,” to your HomePod mini and ask for these podcasts, and our latest HomeKit Insider episode too. If you want an ad-free main AppleInsider Podcast experience, you can support the AppleInsider podcast by subscribing for $5 per month through Apple’s Podcasts app, or via Patreon if you prefer any other podcast player.


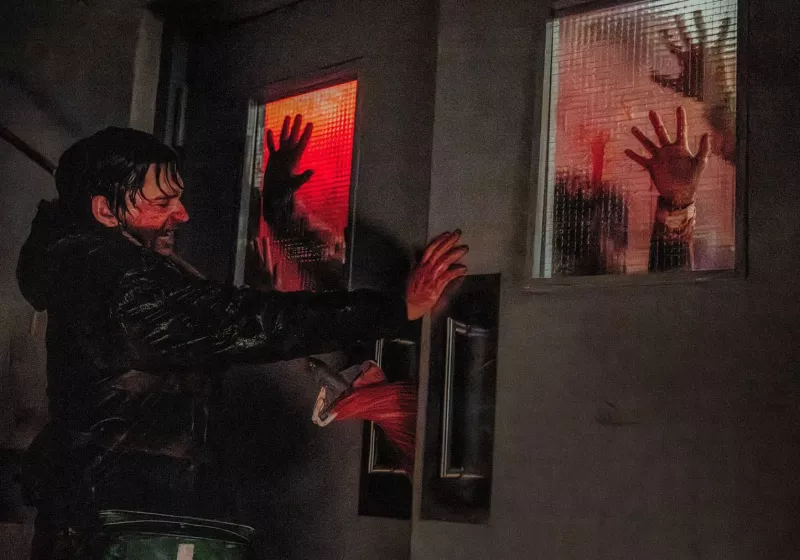

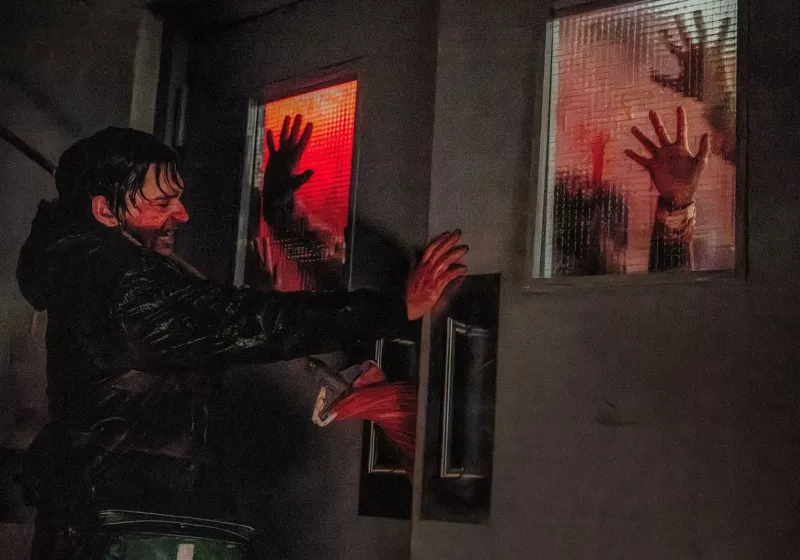

Resident Evil's next reboot leans into horror, not just action

Resident Evil promises an all-new story that follows a medical courier called Bryan, who isn’t having a good night.



Self-driving cars will no longer go scot-free in California as penalties go into effect

For years, California’s streets have hosted a quiet double standard: a human driver caught making an illegal U-turn got a ticket, but a driverless car doing the same thing got away with it, with perhaps a call to the manufacturer. That changes now.

The California DMV has announced what it calls the most important autonomous vehicle regulations in the United States. For the first time, self-driving cars can now be formally cited for breaking traffic laws (via Futurism).

What exactly can authorities do now?

Quite a lot, actually. Under the new rules, authorities can issue a “Notice of AV Noncompliance” directly to manufacturers whenever one of their autonomous vehicles (AVs) commits a moving violation. These notices accumulate into a formal paper trail that feeds into the DMV’s permit review process.

Beyond traffic citations, AV companies are required to respond to first-responder calls within 30 seconds, provide access to manual override systems, and comply with emergency geofencing directives (clearing restricted zones within two minutes of being notified).

If self-driving carmakers fail to comply, they risk suspension of permits, fleet size restrictions, speed caps, and geographic operation limits, all of which could have a negative effect on the companies’ operations and revenue.
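The rules are described here mainly as deadlines, which makes them easy to check mechanically. The sketch below is a purely hypothetical way an operator might audit its own incident logs against the two time limits the article mentions (30 seconds for first-responder calls, two minutes to clear a geofenced zone). The `Incident` record, its field names, and the incident kinds are invented for illustration and do not reflect any DMV data format or reporting process.

```python
from dataclasses import dataclass

# Time limits as described in the article.
FIRST_RESPONDER_RESPONSE_S = 30  # answer first-responder calls within 30 seconds
GEOFENCE_CLEAR_S = 2 * 60        # clear a restricted zone within two minutes


@dataclass
class Incident:
    """Hypothetical log record for one regulated interaction."""
    kind: str              # "responder_call" or "geofence" (illustrative labels)
    notified_at_s: float   # when the AV operator was contacted or notified
    resolved_at_s: float   # when it responded or cleared the zone


def deadline_for(kind: str) -> int:
    """Return the allowed response time in seconds for an incident kind."""
    if kind == "responder_call":
        return FIRST_RESPONDER_RESPONSE_S
    if kind == "geofence":
        return GEOFENCE_CLEAR_S
    raise ValueError(f"unknown incident kind: {kind}")


def is_compliant(incident: Incident) -> bool:
    """True if the incident was resolved within its deadline."""
    elapsed = incident.resolved_at_s - incident.notified_at_s
    return elapsed <= deadline_for(incident.kind)


if __name__ == "__main__":
    log = [
        Incident("responder_call", 0.0, 22.0),
        Incident("geofence", 100.0, 260.0),
    ]
    for inc in log:
        print(inc.kind, "ok" if is_compliant(inc) else "late")
```

In the toy log, the 22-second responder call passes, while the 160-second geofence clearance misses the two-minute window.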

Does this affect self-driving trucks, too?

The same set of regulations also opens California roads to heavy-duty self-driving vehicles for the first time, with new permits now available for trucks weighing over 10,000 pounds. Aurora, which has been operating autonomous freight trucks in Texas, has welcomed the development.

AV companies have until summer 2026 to comply with the new communication requirements, after which the DMV’s enforcement kicks in. Given how quickly robotaxi services in America are scaling, a citation system tied directly to operating permits could help keep things in check.

The regulations as a whole were partly inspired by a September 2025 incident in San Bruno, where police had no way to cite a Waymo that had allegedly made an illegal U-turn, and by repeated cases of robotaxis clogging emergency response routes across San Francisco.


New Lithium-Plasma Engine Passes Key Mars Propulsion Test

NASA engineers have tested a next-generation lithium-plasma electric propulsion system that reached 120 kilowatts, a new U.S. record and about 25 times the power of the electric thrusters on NASA’s Psyche spacecraft. “Designing and building these thrusters over the last couple of years has been a long lead-up to this first test,” said James Polk, a senior research scientist at NASA’s Jet Propulsion Laboratory. “It’s a huge moment for us because we not only showed the thruster works, but we also hit the power levels we were targeting. And we know we have a good testbed to begin addressing the challenges to scaling up.”

Universe Today reports: While 120 kilowatts is a new record, NASA estimates that a future human mission to Mars will require 2 to 4 megawatts of power spread across several thrusters, with more than 23,000 hours (958 days, or about 2.6 years) of operation. To accomplish this, the thrusters would have to withstand temperatures above 2,800 degrees Celsius (5,000 degrees Fahrenheit), which they achieved during testing.

The long operating time reflects the expected length of an entire human mission to Mars, approximately 2.6 years. That length is driven by the launch window to Mars, which opens only about once every two years because of the two planets’ orbits. No mission has ever returned from the Red Planet, but the same launch window logic applies from Mars back to Earth. When launched within this window, robotic spacecraft have traditionally taken approximately 6-7 months to reach Mars.

A human mission, however, would require a much larger spacecraft to carry the astronauts, food, fuel, water, and other mission-essential supplies. For the roughly 2.6-year mission, that means approximately 6-9 months traveling to Mars, about 18 months on the surface until the next launch window opens, and another 6-9 months back to Earth. The much smaller propellant load an electric propulsion system needs could potentially alter this timeframe.
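The figures above hang together arithmetically: 23,000 hours is about 958 days, or roughly 2.6 years, and 6-9 months out, about 18 months on the surface, and 6-9 months back add up to 30-36 months. A small sketch that reproduces those conversions; it assumes a 365.25-day year, and the leg lengths are simply the approximate ranges quoted in the article.

```python
HOURS_PER_DAY = 24
DAYS_PER_YEAR = 365.25  # assumption: average (Julian) year length


def hours_to_days_years(hours: float) -> tuple[float, float]:
    """Convert an operating time in hours to (days, years)."""
    days = hours / HOURS_PER_DAY
    return days, days / DAYS_PER_YEAR


def mission_months(outbound: float, surface: float, inbound: float) -> float:
    """Total mission length in months: transit out + surface stay + transit back."""
    return outbound + surface + inbound


if __name__ == "__main__":
    days, years = hours_to_days_years(23_000)
    print(f"23,000 h = {days:.0f} days = {years:.1f} years")

    # The article's ranges: 6-9 months each way, about 18 months on the surface.
    shortest = mission_months(6, 18, 6)
    longest = mission_months(9, 18, 9)
    print(f"Mission: {shortest:.0f}-{longest:.0f} months "
          f"({shortest / 12:.1f}-{longest / 12:.1f} years)")
```

The 2.6-year figure (about 31 months) sits toward the short end of that 30-36 month range.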


Uber wants to turn its millions of drivers into a sensor grid for self-driving companies

Uber has a long-term ambition that goes well beyond shuttling passengers: the company eventually wants to outfit its human drivers’ cars with sensors to soak up real-world data for autonomous vehicle (AV) companies — and potentially other companies training AI models on physical-world scenarios.

Praveen Neppalli Naga, Uber’s chief technology officer, revealed the plan in an interview at TechCrunch’s StrictlyVC event in San Francisco on Thursday night, describing it as a natural extension of a nascent program the company announced in late January called AV Labs.

“That is the direction we want to go eventually,” Naga said of equipping human drivers’ vehicles. “But first we need to get the understanding of the sensor kits and how they all work. There are some regulations — we have to make sure every state has [clarity on] what sensors mean, and what sharing it means.”

For now, AV Labs relies on a small, dedicated fleet of sensor-equipped cars that Uber operates itself, separate from its driver network. But the ambition is clearly much larger. Uber has millions of drivers globally, and if even a fraction of those cars could be transformed into rolling data-collection platforms, the scale of what Uber could offer the AV industry would dwarf what any individual AV company could assemble on its own.

The insight driving the program, Naga said, is that the limiting factor for AV development is no longer the underlying technology. “The bottleneck is data,” he said. “[Companies like Waymo] need to go around and collect the data, collect different scenarios. You may be able to say: in San Francisco, ‘At this school intersection, I want some data at this time of day so I can train my models.’ The problem for all these companies is access to that data, because they don’t have the capital to deploy the cars and go collect all this information.”

Becoming the data layer for the entire AV ecosystem is a pretty smart play, particularly considering that Uber abandoned its own ambitions to build self-driving cars years ago (a move that co-founder Travis Kalanick has publicly lamented as a big mistake). Indeed, many industry observers have wondered if, without its own self-driving cars, Uber might one day be rendered irrelevant as AVs increasingly spring up around the globe.

The company currently has partnerships with 25 AV companies, including Wayve, which operates in London, and is building what Naga described as an “AV cloud”: a library of labeled sensor data that partner companies can query and use to train their models. Partners, in which Uber plans to invest more aggressively and directly, can also use the system to run their trained models in “shadow mode” against real Uber trips, simulating how an AV would have performed without actually putting one on the road.
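Uber hasn't described how shadow mode scores a model, so the sketch below assumes the simplest possible version: replay logged trip frames through a model and measure how often its proposed action agrees with what the human driver actually did. `Frame`, the action labels, and `toy_policy` are hypothetical stand-ins, and agreement with the human driver is only one of many metrics such a system could use.

```python
from typing import Callable, Dict, Sequence

# Hypothetical types: one logged frame of sensor-derived features, and a
# policy that maps a frame to a discrete driving action.
Frame = Dict[str, bool]
Policy = Callable[[Frame], str]


def shadow_agreement(policy: Policy,
                     frames: Sequence[Frame],
                     human_actions: Sequence[str]) -> float:
    """Fraction of logged frames where the model's action matches the human's.

    The model only observes recorded data; nothing is sent to a vehicle.
    """
    if len(frames) != len(human_actions):
        raise ValueError("frames and human_actions must be the same length")
    if not frames:
        return 0.0
    matches = sum(policy(f) == a for f, a in zip(frames, human_actions))
    return matches / len(frames)


def toy_policy(frame: Frame) -> str:
    """Hypothetical stand-in for a partner's trained model."""
    return "brake" if frame.get("obstacle_ahead", False) else "cruise"


if __name__ == "__main__":
    frames = [{"obstacle_ahead": True}, {"obstacle_ahead": False}, {"obstacle_ahead": False}]
    human = ["brake", "cruise", "brake"]  # the human also braked on the last frame
    print(f"agreement: {shadow_agreement(toy_policy, frames, human):.2f}")
```

Disagreements such as the last frame are exactly the cases a partner would want to inspect, since the model never controlled a real car when it made that call.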


“Our goal is not to make money out of this data,” Naga said. “We want to democratize it.”

Given the obvious commercial value of what Uber is building, that positioning may not last long. The company has already made equity investments in numerous AV players, and its ability to offer proprietary training data at scale could give it significant leverage over a sector that right now depends on Uber’s ride marketplace to reach customers.


Tech

Roblox Reality is like DLSS 5 for Roblox, except it invents photorealistic details the game never had

Roblox Reality combines the popular game creation platform’s real-time graphics engine with an AI-powered video generator to dramatically increase the visual quality of user creations. The hybrid architecture does not yet run in real time, but Roblox aims to launch the first iteration in late 2026 or early 2027.


Tech

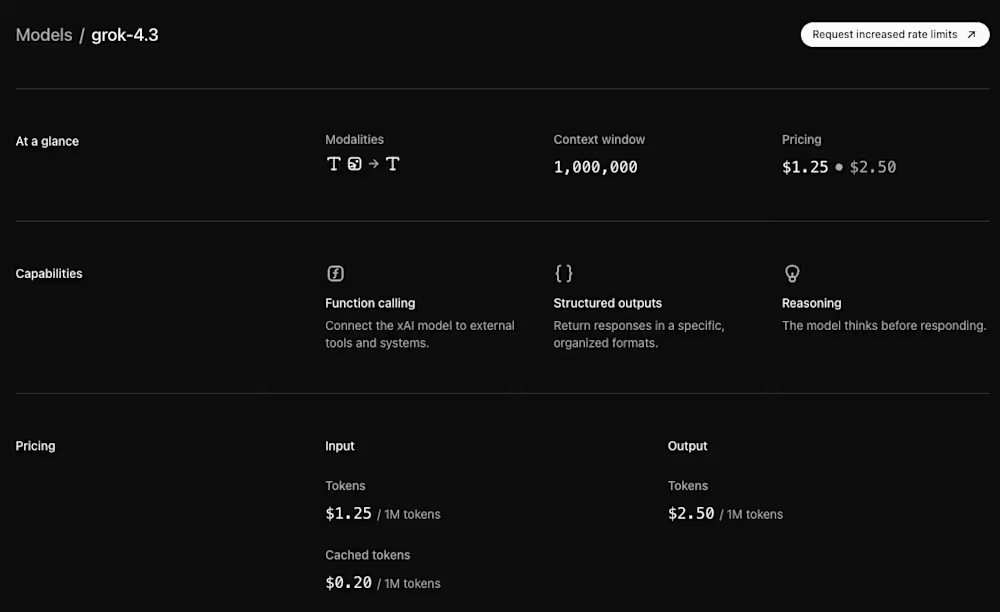

xAI launches Grok 4.3 at an aggressively low price and a new, fast, powerful voice cloning suite

While Elon Musk faces off against his former colleague and OpenAI co-founder Sam Altman in court, Musk’s rival firm xAI, founded to take on OpenAI, isn’t slowing down on launching competitive new products and services.

Last night, xAI shipped a new, proprietary base large language model (LLM), Grok 4.3, and a new voice cloning suite on the web.

The new products arrive after months of tumult at xAI, which saw all 10 of Musk’s original co-founders of the lab and dozens more researchers exit the firm, while Grok was eclipsed on performance by many new competing LLMs from the likes of OpenAI, Anthropic, Google, and Chinese firms DeepSeek, Moonshot (Kimi), Alibaba (Qwen), z.ai, and others.

While Grok 4.3 does mark a significant leap in performance on third-party benchmarks over its direct predecessor Grok 4.2, according to the independent AI model evaluation firm Artificial Analysis, it still remains below the state-of-the-art set by OpenAI and Anthropic’s latest models.

But other than Musk’s stated opposition to “wokeness” and its more freewheeling personality and image generation policy, the marquee feature of the Grok brand has increasingly been its low price for developers and users accessing it via the xAI application programming interface (API). Grok 4.3 only furthers that trend: it costs $1.25 per million input tokens and $2.50 per million output tokens for requests of up to 200,000 input tokens, beyond which costs double (a common pricing strategy among leading AI labs). By comparison, its direct predecessor, Grok 4.2, launched with API pricing of $2/$6 per million input/output tokens.
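As a rough illustration of that tiering, the sketch below estimates the cost of a single Grok 4.3 request from the list prices quoted above. It assumes that once the prompt exceeds 200,000 input tokens, the doubled rate applies to the entire request (both input and output) rather than only to the tokens past the threshold; xAI's exact billing rule may differ, and the constant and function names are illustrative.

```python
INPUT_PER_M = 1.25               # USD per 1M input tokens, standard tier
OUTPUT_PER_M = 2.50              # USD per 1M output tokens, standard tier
LONG_CONTEXT_THRESHOLD = 200_000
LONG_CONTEXT_MULTIPLIER = 2.0    # "costs double" past the threshold


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one request.

    Assumption: when input exceeds the threshold, the whole request
    (input and output) is billed at the doubled rate.
    """
    multiplier = (LONG_CONTEXT_MULTIPLIER
                  if input_tokens > LONG_CONTEXT_THRESHOLD else 1.0)
    base = (input_tokens * INPUT_PER_M
            + output_tokens * OUTPUT_PER_M) / 1_000_000
    return base * multiplier


print(round(request_cost(150_000, 4_000), 4))  # 0.1975
print(round(request_cost(800_000, 4_000), 4))  # 2.02
```

Under this assumption, a prompt just over the threshold costs roughly twice as much as one just under it, so splitting long inputs below 200,000 tokens could matter for budgeting.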

According to xAI’s release notes, Grok 4.3 began beta testing in April for subscribers to xAI’s SuperGrok plan ($30 monthly) and to the Premium+ plan of its sibling social network, X ($40 monthly, with 50% off for the first two months). It is now available to all through the xAI API and through partner OpenRouter.

Reasoning baked-in and agentic tool-use capabilities

At the core of Grok 4.3 is a fundamental shift in how the model processes information. Unlike previous iterations where “chain-of-thought” or reasoning could often be toggled or configured by effort levels, Grok 4.3 is built with reasoning as an active, permanent state.

This means the model is designed to “think” before it speaks for every query, a strategy intended to maximize factual accuracy and the handling of complex, multi-step instructions.

The model’s memory is equally expansive, featuring a 1 million-token context window. To put this in perspective, a million tokens is roughly equivalent to several thick novels or the entire codebase of a mid-sized application.

This allows Grok 4.3 to maintain coherence over massive datasets, though xAI has implemented a “Higher context pricing” structure for requests that exceed the 200,000-token threshold.

This tiering suggests that while the “long-term memory” is available, the computational cost of managing that much information remains a significant overhead.

Technically, the model accepts both text and image inputs, outputting text.

It is specifically optimized for agentic workflows—scenarios where an AI is not just answering a question but acting as an autonomous agent to complete a task.

For the first time, Grok has access to the same tools and environments a human professional would use. Evidence of this shift is visible in early user interactions:

- Spreadsheet Engineering: In one instance, the model spent 6 minutes and 22 seconds in a “thought” phase to build a comprehensive OSRS Sailing Combat DPS analyzer. The resulting .xlsx file wasn’t a simple table but a multi-sheet dashboard including a “Reference_Data” set and a complex “DPS_Calculator” with formulaic auto-calculations.
- Professional Documentation: Grok now generates formatted PDFs, such as 12-page reports on SpaceX products. These documents incorporate branding, logos, hero images, and structured tables, moving well beyond the markdown blocks of previous iterations.
- Visual Presentations: The model can design 9-slide PowerPoint decks, utilizing a “Sandwich Structure” (dark titles/conclusions with light content) and integrating data-driven decision matrices and humor.

However, its knowledge of the world is not infinite: the release notes list a knowledge cutoff of December 2025. Thanks to built-in web search, though, Grok can reference and use up-to-date information.

In fact, Grok 4.3 arrives with an enhanced ecosystem of tools designed to make it a functional digital employee. The xAI platform now offers a robust set of server-side tools that the model can invoke autonomously based on the complexity of the query.

- Web and X Search: These tools allow Grok to bypass its knowledge cutoff by browsing the live internet or searching X (formerly Twitter) posts, user profiles, and threads.
- Code Execution: The model can run Python code in a sandboxed environment to solve mathematical problems or process data.
- File and Collections Search: A built-in Retrieval-Augmented Generation (RAG) system allows users to query uploaded document collections or search through specific file attachments.

xAI’s Custom Voices let you clone your voice at high quality in a minute or two

Beyond text, xAI has introduced Custom Voices, a sophisticated voice-cloning API and web-based voice creation suite.

This product allows developers to clone a voice from a reference audio clip as short as 120 seconds. Once cloned, the “voice ID” can be used across xAI’s Text-to-Speech (TTS) and Voice Agent APIs.

xAI’s documentation emphasizes that this is not merely about timbre; the model is designed to pick up delivery patterns.

If a user records a reference clip in a “customer support” style, the resulting AI voice will mimic that helpful, professional inflection.

Despite the creative potential, xAI has placed strict geographic limits on this feature: it is available only in the United States, and not in Illinois, because of that state’s biometric and privacy regulations.

While the console playground is open for general use, programmatic access via the POST /v1/custom-voices endpoint is currently gated to teams on an Enterprise plan.

I tried it myself. After moving through the requisite voice sampling screens on the web (the tool asks you to read aloud several passages of unrelated dialog), I indeed had a copy of my voice that sounded eerily identical to mine and pronounced new words the same way I would when reading aloud from a new script it was given.

You can delete your custom voices in one click on xAI’s Custom Voices web application and create up to 30 new ones at a time.

In terms of licensing, the Custom Voices feature is strictly “scoped to your team” and is never made available to other users, ensuring a private, commercial license for corporate assets.

Access to the new Voice Agent API (grok-voice-think-fast-1.0) is billed at a flat rate of $3.00 per hour ($0.05 per minute) for speech-to-speech interactions. This puts it at the low-to-middle end of costs among competing voice services, according to my research:

| Service | Price per 1k Characters | Estimated Cost per Minute | Estimated Cost per Hour |
|---|---|---|---|
| OpenAI TTS (Standard) | $0.015 | ~$0.015 | ~$0.90 |
| OpenAI TTS (HD) | $0.030 | ~$0.030 | ~$1.80 |
| Grok Voice Agent | N/A (billed per minute) | $0.05 | $3.00 |
| ElevenLabs (Starter) | ~$0.30 | ~$0.30 | ~$18.00 |
| ElevenLabs (Pro) | ~$0.18 | ~$0.18 | ~$10.80 |
| Play.ht | ~$0.20 | ~$0.20 | ~$12.00 |
| Azure/Google Cloud | $0.016 – $0.024 | ~$0.02 | ~$1.00 – $1.50 |

Complementing this is the standalone Text-to-Speech (TTS) service, which offers five distinct voices (Eve, Ara, Rex, Sal, and Leo) and is priced at $4.20 per 1 million characters.

For transcription needs, the Speech-to-Text (STT) API provides real-time streaming at $0.20 per hour, while batch processing is available at a discounted rate of $0.10 per hour.
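The per-minute and per-hour columns in the voice comparison table appear to assume roughly 1,000 characters of synthesized speech per minute: each per-minute figure equals the per-1k-character price, and each hourly figure is 60 times that. The sketch below makes that inferred assumption explicit, ranks the hourly estimates, and applies the same rate to the standalone TTS and STT prices above. The Voice Agent rate covers speech-to-speech sessions rather than per-character synthesis, so these comparisons are approximate, and the names in the code are illustrative.

```python
CHARS_PER_MINUTE = 1_000  # assumption inferred from the table; real speech rates vary


def hourly_from_per_1k_chars(price_per_1k: float) -> float:
    """Convert a per-1k-character TTS price into an estimated hourly cost."""
    per_minute = price_per_1k * CHARS_PER_MINUTE / 1_000
    return per_minute * 60


# Estimated USD per hour of audio, from the table's prices.
hourly = {
    "OpenAI TTS (Standard)": hourly_from_per_1k_chars(0.015),
    "OpenAI TTS (HD)": hourly_from_per_1k_chars(0.030),
    "Grok Voice Agent": 0.05 * 60,  # flat $0.05 per minute
    "ElevenLabs (Starter)": hourly_from_per_1k_chars(0.30),
    "ElevenLabs (Pro)": hourly_from_per_1k_chars(0.18),
    "Play.ht": hourly_from_per_1k_chars(0.20),
    "Azure/Google Cloud": hourly_from_per_1k_chars((0.016 + 0.024) / 2),  # midpoint
}

for name, cost in sorted(hourly.items(), key=lambda kv: kv[1]):
    print(f"{name:22s} ${cost:6.2f}/hr")

# Standalone xAI TTS ($4.20 per 1M characters) and STT (USD per hour of audio).
GROK_TTS_PER_M_CHARS = 4.20
STT_PER_HOUR = {"streaming": 0.20, "batch": 0.10}


def grok_tts_cost(characters: int) -> float:
    return characters * GROK_TTS_PER_M_CHARS / 1_000_000


def grok_stt_cost(hours: float, mode: str = "streaming") -> float:
    return hours * STT_PER_HOUR[mode]


one_hour_of_speech = 60 * CHARS_PER_MINUTE
print(f"Grok TTS, ~1 h of audio: ${grok_tts_cost(one_hour_of_speech):.3f}")
print(f"Grok STT, 100 h batch:   ${grok_stt_cost(100, 'batch'):.2f}")
```

Under these assumptions, the Grok Voice Agent lands fourth of seven on hourly cost, and an hour of standalone Grok TTS audio comes to about $0.25.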

To ensure security for client-side applications, xAI utilizes Ephemeral Tokens, allowing for secure WebSocket connections without exposing primary API keys.

Once created, these voices are private to the user’s team and can be used across all voice APIs by referencing a unique 8-character alphanumeric voice_id.
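Client code that stores voice IDs might sanity-check them before calling the voice APIs. The sketch below encodes three constraints mentioned in this article: the 8-character alphanumeric `voice_id`, the 120-second minimum reference clip, and the limit of 30 custom voices. The function names are hypothetical, accepting both upper- and lowercase letters is an assumption, and treating the 30-voice figure as a cap on a team's existing voices is also an assumption; none of this is xAI's SDK.

```python
import re

VOICE_ID_PATTERN = re.compile(r"[A-Za-z0-9]{8}")  # assumption: ASCII letters or digits
MIN_REFERENCE_SECONDS = 120   # "as short as 120 seconds"
MAX_CUSTOM_VOICES = 30        # assumption: treated as a cap on existing voices


def is_valid_voice_id(voice_id: str) -> bool:
    """True if voice_id is exactly 8 letters or digits."""
    return VOICE_ID_PATTERN.fullmatch(voice_id) is not None


def can_create_voice(reference_seconds: float, existing_voices: int) -> bool:
    """Hypothetical pre-flight check before uploading a reference clip."""
    return (reference_seconds >= MIN_REFERENCE_SECONDS
            and existing_voices < MAX_CUSTOM_VOICES)


print(is_valid_voice_id("a1B2c3D4"), can_create_voice(90, 0))
```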

For highly regulated sectors, xAI maintains production-ready standards, including SOC 2 Type II auditing, HIPAA eligibility for healthcare workloads, and GDPR compliance.

Aggressively low API pricing as a differentiator

The most aggressive aspect of the Grok 4.3 announcement is its pricing structure. Bindu Reddy, CEO of enterprise assistant startup Abacus AI, noted on X that the model is “as smart as Sonnet 4.6 and 5x cheaper and faster”.

The standard API rates are set at $1.25 per million input tokens and $2.50 per million output tokens. This reflects a significant reduction in cost compared to its predecessor, Grok 4.20, with Artificial Analysis reporting an approximately 40% lower input price and 60% lower output price.
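Those percentages can be checked against the predecessor's launch prices quoted earlier ($2 input and $6 output per million tokens) and Grok 4.3's $1.25/$2.50:

```python
def pct_reduction(old: float, new: float) -> float:
    """Percentage drop from an old price to a new price."""
    return (old - new) / old * 100


input_drop = pct_reduction(2.00, 1.25)   # 37.5
output_drop = pct_reduction(6.00, 2.50)  # ~58.3
print(f"input: {input_drop:.1f}% lower, output: {output_drop:.1f}% lower")
```

A 37.5% input cut and a roughly 58.3% output cut round to the approximately 40% and 60% figures reported by Artificial Analysis.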

According to our calculations at VentureBeat, that places Grok 4.3 firmly in the lower-cost half of all major foundation models, far closer to Chinese open-source offerings than to its U.S. proprietary rivals:

| Model | Input ($/1M tokens) | Output ($/1M tokens) | Total Cost (input + output) |
|---|---|---|---|
| MiMo-V2.5 Flash | $0.10 | $0.30 | $0.40 |
| Grok 4.1 Fast | $0.20 | $0.50 | $0.70 |
| MiniMax M2.7 | $0.30 | $1.20 | $1.50 |
| MiMo-V2.5 | $0.40 | $2.00 | $2.40 |
| Gemini 3 Flash | $0.50 | $3.00 | $3.50 |
| Kimi-K2.5 | $0.60 | $3.00 | $3.60 |
| Grok 4.3 | $1.25 | $2.50 | $3.75 |
| GLM-5 | $1.00 | $3.20 | $4.20 |
| GLM-5-Turbo | $1.20 | $4.00 | $5.20 |
| DeepSeek V4 Pro | $1.74 | $3.48 | $5.22 |
| GLM-5.1 | $1.40 | $4.40 | $5.80 |
| Claude Haiku 4.5 | $1.00 | $5.00 | $6.00 |
| Qwen3-Max | $1.20 | $6.00 | $7.20 |
| Gemini 3 Pro | $2.00 | $12.00 | $14.00 |
| GPT-5.4 | $2.50 | $15.00 | $17.50 |
| Claude Opus 4.7 | $5.00 | $25.00 | $30.00 |
| GPT-5.5 | $5.00 | $30.00 | $35.00 |
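The table's total column simply adds the input and output prices per million tokens, weighting them equally. The sketch below reproduces Grok 4.3's rank on that metric and, for comparison, on a blended price that weights input three times as heavily as output, a convention some benchmarkers use for chat-style workloads. The 3:1 ratio is an assumption about workload mix, not part of VentureBeat's calculation.

```python
from typing import Callable

# (input, output) in USD per 1M tokens, copied from the table above
PRICES = {
    "MiMo-V2.5 Flash": (0.10, 0.30),
    "Grok 4.1 Fast": (0.20, 0.50),
    "MiniMax M2.7": (0.30, 1.20),
    "MiMo-V2.5": (0.40, 2.00),
    "Gemini 3 Flash": (0.50, 3.00),
    "Kimi-K2.5": (0.60, 3.00),
    "Grok 4.3": (1.25, 2.50),
    "GLM-5": (1.00, 3.20),
    "GLM-5-Turbo": (1.20, 4.00),
    "DeepSeek V4 Pro": (1.74, 3.48),
    "GLM-5.1": (1.40, 4.40),
    "Claude Haiku 4.5": (1.00, 5.00),
    "Qwen3-Max": (1.20, 6.00),
    "Gemini 3 Pro": (2.00, 12.00),
    "GPT-5.4": (2.50, 15.00),
    "Claude Opus 4.7": (5.00, 25.00),
    "GPT-5.5": (5.00, 30.00),
}


def simple_total(inp: float, out: float) -> float:
    """The table's metric: input + output, equally weighted."""
    return inp + out


def blended_3_to_1(inp: float, out: float) -> float:
    """Assumed workload of three input tokens per output token."""
    return (3 * inp + out) / 4


def rank_of(model: str, metric: Callable[[float, float], float]) -> int:
    """1-based rank of `model`, sorted from cheapest to most expensive."""
    ordered = sorted(PRICES, key=lambda m: metric(*PRICES[m]))
    return ordered.index(model) + 1


n = len(PRICES)
for metric in (simple_total, blended_3_to_1):
    print(f"{metric.__name__}: Grok 4.3 ranks {rank_of('Grok 4.3', metric)} of {n}")
```

Under either weighting, Grok 4.3 stays in the cheaper half of this list (7th of 17 on the simple total, 8th on the 3:1 blend). The blend moves it down one place, behind GLM-5, because its input price is high relative to its output price.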

However, the “reasoning” nature of the model introduces a new billing category: Reasoning tokens.

These are tokens generated during the model’s internal thinking process and are billed at the same rate as standard completion tokens. Effectively, users pay for the AI to “think” before it provides the final answer. xAI has also introduced several unique fee structures:

- Prompt Caching: Repeated prompts are significantly cheaper, at $0.20 per million tokens, incentivizing developers to reuse context.
- Tool Invocations: While token usage for tools is billed at standard rates, the act of invoking a tool carries a flat fee: $5.00 per 1,000 calls for Web Search or Code Execution, and $10.00 for File Attachments.
- Usage Guideline Violation Fee: In a move that may set a new industry precedent, xAI charges a $0.05 fee for requests that are blocked by its safety filters before generation even begins.
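Putting those fee categories together, a per-request bill can be sketched as follows, using the rates quoted in this article. Several points are assumptions: cached prompt tokens are billed at $0.20 per million in place of the standard input rate, File Attachments cost $10.00 per 1,000 calls, a blocked request pays only the flat fee, and the long-context surcharge is ignored. The `Usage` record and `estimate_bill` function are hypothetical, not xAI's response schema or SDK.

```python
from dataclasses import dataclass, field

# USD rates quoted for Grok 4.3 (standard context tier).
INPUT_PER_M = 1.25
CACHED_INPUT_PER_M = 0.20     # assumption: replaces the input rate for cached tokens
OUTPUT_PER_M = 2.50           # reasoning tokens are billed at this rate as well
TOOL_FEE_PER_1K_CALLS = {
    "web_search": 5.00,
    "code_execution": 5.00,
    "file_attachment": 10.00,  # assumption: also per 1,000 calls
}
BLOCKED_REQUEST_FEE = 0.05    # usage guideline violation fee


@dataclass
class Usage:
    """Hypothetical per-request usage record."""
    input_tokens: int = 0          # uncached prompt tokens
    cached_input_tokens: int = 0
    reasoning_tokens: int = 0
    output_tokens: int = 0         # visible completion tokens
    tool_calls: dict[str, int] = field(default_factory=dict)
    blocked: bool = False


def estimate_bill(u: Usage) -> float:
    """Estimate the USD cost of one request, ignoring the long-context tier."""
    if u.blocked:
        # Assumption: a request blocked before generation pays only the flat fee.
        return BLOCKED_REQUEST_FEE
    token_cost = (
        u.input_tokens * INPUT_PER_M
        + u.cached_input_tokens * CACHED_INPUT_PER_M
        + (u.reasoning_tokens + u.output_tokens) * OUTPUT_PER_M
    ) / 1_000_000
    tool_cost = sum(
        TOOL_FEE_PER_1K_CALLS[name] * count / 1_000
        for name, count in u.tool_calls.items()
    )
    return token_cost + tool_cost


example = Usage(input_tokens=20_000, cached_input_tokens=80_000,
                reasoning_tokens=6_000, output_tokens=2_000,
                tool_calls={"web_search": 3})
print(f"${estimate_bill(example):.4f}")
```

In this example, the 6,000 reasoning tokens cost three times as much as the 2,000 visible output tokens, which is why always-on reasoning matters when budgeting.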

The model itself remains accessible via a standard commercial API, with xAI recommending that all developers migrate to grok-4.3 as their “most intelligent and fastest model”.

Third-party benchmark evaluations and analysis

The reception of Grok 4.3 has been polarized, depending largely on the specific use case. Professional benchmarkers and developers have highlighted a “stark gap” between the model’s domain-specific strengths and its general reasoning consistency.

According to independent AI evaluation firm Vals AI, Grok 4.3 has taken the top spot on several specialized indices. It currently ranks #1 on CaseLaw v2 (79.3% accuracy) and #1 on CorpFin.

This 25-point jump in legal reasoning over Grok 4.20 suggests that the “always-on reasoning” architecture is particularly well-suited for the dense, logical structures of law and finance.

Artificial Analysis corroborated this performance, noting a massive improvement in agentic tasks, scoring an Elo of 1500 on the GDPval-AA benchmark, surpassing competitors like Gemini 3.1 Pro and GPT-5.4 mini.

Conversely, users focused on general-purpose agents and coding have highlighted deficiencies.

Andon Labs, which builds AI-automated brick-and-mortar retail, reported that Grok 4.3 is a “big regression” on Vending-Bench 2, which measures an AI’s ability to take consistent actions in a simulation.

They colorfully described the model as having “narcolepsy problems,” preferring to remain inactive for multiple simulation days rather than taking the required actions.

The sentiment was echoed by Vals AI, which noted that while the model improved in some coding areas, it remains weak on general coding tasks and “struggles with difficult math problems,” scoring only 11% on ProofBench.

Should your enterprise use Grok 4.3?

The launch of Grok 4.3 represents a calculated bet by xAI that the market wants specialized brilliance and extreme cost efficiency over a perfectly balanced generalist.

By achieving a score of 53 on the Artificial Analysis Intelligence Index while remaining on the “Pareto frontier” of cost-per-intelligence, xAI is positioning itself as the “value” leader for enterprise applications in legal and financial tech.
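Being on the “Pareto frontier” means that no other model is both cheaper and at least as capable. The sketch below shows how such a frontier can be computed from (price, score) pairs. The model names and numbers are made up for illustration and are not Artificial Analysis data; collapsing each model's price into a single cost number is also an assumption.

```python
from typing import NamedTuple


class Point(NamedTuple):
    name: str
    price: float  # cost metric, lower is better
    score: float  # intelligence index, higher is better


def pareto_frontier(points: list[Point]) -> list[Point]:
    """Return the points not dominated by any other point, cheapest first.

    A point is dominated if another point is no more expensive and no less
    capable, and strictly better on at least one of the two axes.
    """
    frontier = []
    for p in points:
        dominated = any(
            q.price <= p.price and q.score >= p.score
            and (q.price < p.price or q.score > p.score)
            for q in points
        )
        if not dominated:
            frontier.append(p)
    return sorted(frontier, key=lambda p: p.price)


# Hypothetical models, not real benchmark data.
models = [
    Point("A", 0.5, 30),
    Point("B", 2.0, 45),
    Point("C", 3.0, 40),   # dominated by B: pricier and weaker
    Point("D", 4.0, 53),
    Point("E", 20.0, 60),
]
print([p.name for p in pareto_frontier(models)])
```

Sitting on the frontier does not mean a model has the top score; it only means nothing on the list beats it on both price and capability at once.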

The “always-on reasoning” is a double-edged sword. While it provides the depth needed to navigate complex case law, the community reports of “narcolepsy” suggest that a model that is always “thinking” may occasionally think itself into a state of paralysis, or at least a state of excessive caution that inhibits agentic action.

In addition, prior Grok scandals are nearly certain to give some enterprises pause when considering adoption. These include an X chatbot version referring to itself as “MechaHitler” and posting antisemitic content, the generation of sexualized deepfake imagery and related investigations, and references to racial conflicts and right-wing dog-whistle framing of social issues. Those positions appear to mirror many of founder Musk’s own, to the point that the model’s X implementation was at one point checking Musk’s own X account before responding. It’s unclear whether any of those issues remain with Grok 4.3, but one user did note that Grok’s system prompt appears to instruct it: “you do not assign broad positive/negative utility functions to groups of people.”

For developers, the decision to adopt Grok 4.3 will likely come down to the nature of their data. For those needing to process a million tokens of legal documents at a fraction of the cost of Claude 4.6 or GPT-5.5, Grok 4.3 is a clear front-runner.

For those building high-frequency autonomous agents or complex math solvers, the “narcolepsy” and coding regressions suggest that xAI’s latest model may still need a few more “tuning passes”.

As OpenRouter noted on X upon making the model live, the “large jump in agentic performance” at a lower price point is an undeniable milestone. Whether that performance can be sustained across all domains remains the primary question for the summer of 2026.
