Thousands of satellites are tightly packed into low Earth orbit, and the overcrowding is only growing.

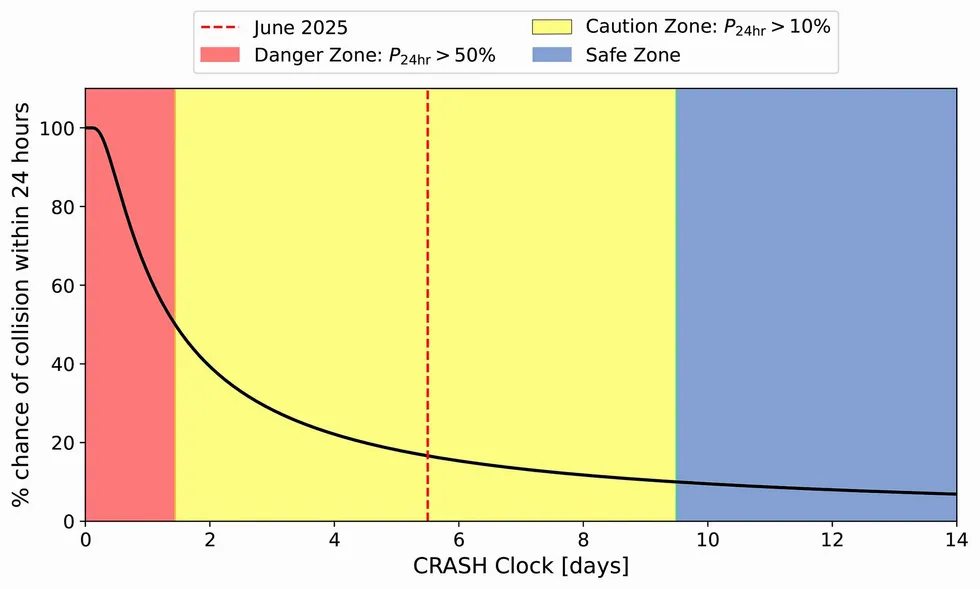

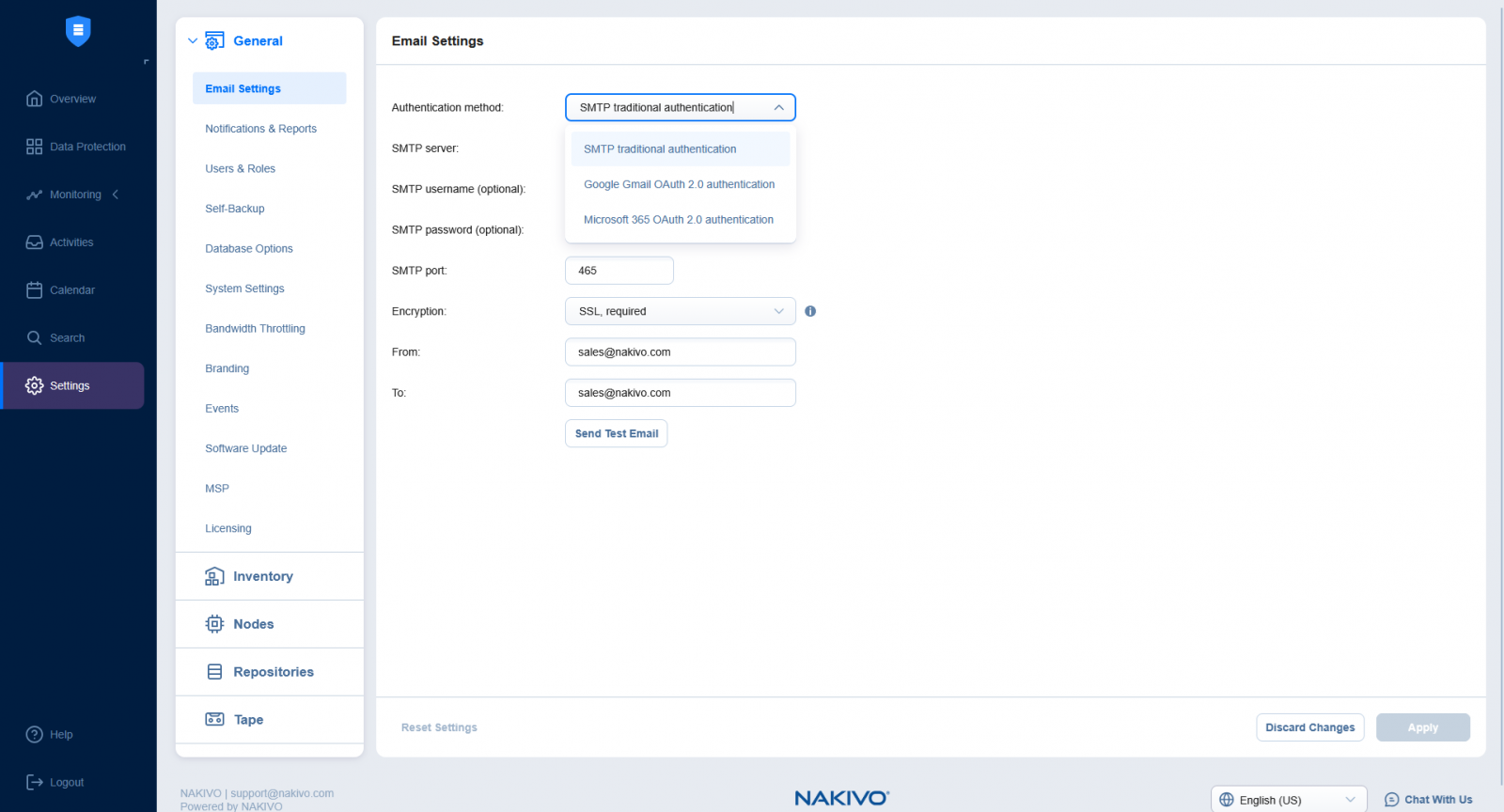

Scientists have created a simple warning system called the CRASH Clock that answers a basic question: If satellites suddenly couldn’t steer around one another, how much time would elapse before there was a crash in orbit? Their current answer: 5.5 days.

The CRASH Clock metric was introduced in a paper first posted to the arXiv physics preprint server in December; the paper is currently under consideration for publication. The team's research measures how quickly a catastrophic collision could occur if satellite operators lost the ability to maneuver, whether due to a solar storm, a software failure, or some other breakdown.

To be clear, say the CRASH Clock scientists, low Earth orbit is not about to become a new unstable realm of collisions. But what the researchers have shown, consistent with recent research and public outcry, is that low Earth orbit’s current stability demands perfect decisions on the part of a range of satellite operators around the globe every day. A few mistakes at the wrong time and place in orbit could set a lot of chaos in motion.

But the biggest hidden threat isn’t always debris that can be seen from the ground or via radar imaging systems. Rather, what gives satellite operators nightmares these days is the thousands of small pieces of junk that are too small to track but still big enough to disrupt a satellite’s operations. Making matters worse, SpaceX has essentially locked up one of the most valuable altitudes with its Starlink megaconstellation, forcing Chinese competitors to fly higher, through clouds of old collision debris left over from earlier accidents.

IEEE Spectrum spoke with astrophysicists Sarah Thiele (graduate student at Princeton University), Aaron Boley (professor of physics and astronomy at the University of British Columbia, in Vancouver, Canada), and Samantha Lawler (associate professor of astronomy at the University of Regina, in Saskatchewan, Canada) about their new paper, and about how close satellites actually are to one another, why you can’t see most space junk, and what happens to the power grid when everything in orbit fails at once.

Does the CRASH Clock measure Kessler syndrome, or something different?

Sarah Thiele: A lot of people are claiming we’re saying Kessler syndrome is days away, and that’s not what our work is saying. We’re not making any claim about this being a runaway collisional cascade. We only look at the timescale to the first collision—we don’t simulate secondary or tertiary collisions. The CRASH Clock reflects how reliant we are on errorless operations and is an indicator for stress on the orbital environment.

Aaron Boley: A lot of people’s mental vision of Kessler syndrome is this very rapid runaway, and in reality this is something that can take decades to truly build.

Thiele: Recent papers found that altitudes between 520 and 1,000 kilometers have already reached this potential runaway threshold. Even so, the timescales over which a runaway would unfold are very long. It’s more about whether you have a significant number of objects at a given altitude such that controlling the proliferation of debris becomes difficult.

Understanding the CRASH Clock’s Implications

What does the CRASH Clock approaching zero actually mean?

Thiele: The CRASH Clock assumes no maneuvers can happen—a worst-case scenario where some catastrophic event like a solar storm has occurred. A zero value would mean if you lose maneuvering capabilities, you’re likely to have a collision right away. It’s possible to reach saturation where any maneuver triggers another maneuver, and you have this endless swarm of maneuvers where dodging doesn’t mean anything anymore.
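As a rough illustration of why a clock near zero leaves so little tolerance, one can treat collisions (with no maneuvering) as a Poisson process whose mean waiting time equals the clock value. This constant-rate model is our simplifying assumption, not necessarily how the paper computes the CRASH Clock, and the function name `prob_collision_within` is hypothetical.

```python
import math

def prob_collision_within(t_days: float, clock_days: float) -> float:
    """Probability of at least one collision within t_days when no one can
    maneuver, ASSUMING collisions arrive as a Poisson process whose mean
    waiting time equals clock_days (an illustrative model only)."""
    if clock_days <= 0:
        return 1.0  # clock at zero: a collision is treated as immediate
    rate_per_day = 1.0 / clock_days  # constant total collision rate
    return 1.0 - math.exp(-rate_per_day * t_days)

# 5.5 days is the published 2025 value; the other clock values are hypothetical.
for clock in (30.0, 5.5, 1.0, 0.1):
    p = prob_collision_within(1.0, clock)
    print(f"clock = {clock:5.1f} days -> P(collision within 1 day) = {p:.2f}")
```

Under this assumption the clock behaves like a mean rather than a deadline: waiting exactly one clock period gives about a 63 percent chance (1 - 1/e) of a collision, and as the clock shrinks toward zero the chance of a collision within any short window climbs toward certainty.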

Boley: I think about the CRASH Clock as an evaluation of stress on orbit. As you approach zero, there’s very little tolerance for error. If you have an accidental explosion, whether a battery exploded or debris slammed into a satellite, the risk of knock-on effects is amplified. It doesn’t mean a runaway, but you can have consequences that are still operationally bad. It means much higher costs, both economic and environmental, because companies have to replace satellites more often. More launches, more satellites going up and coming down. The orbital congestion, the atmospheric pollution, all of that gets amplified.

Are working satellites becoming a bigger danger to each other than debris?

Boley: The biggest risk on orbit is the lethal non-trackable debris—this middle region where you can’t track it, it won’t cause an explosion, but it can disable the spacecraft if hit. This population is very large compared with what we actually track. We often talk about Kessler syndrome in terms of number density, but really what’s also important is the collisional area on orbit. As you increase the area through the number of active satellites, you increase the probability of interacting with smaller debris.
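Boley’s point about collisional area can be illustrated with the textbook “particle in a box” estimate from kinetic theory: the collision rate between two populations sharing a shell is the number of targets, times the number density of impactors, times their combined cross section, times the relative speed. This is a simplified sketch, not the paper’s model; the function names and every input value below are hypothetical.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius

def shell_volume_km3(alt_low_km: float, alt_high_km: float) -> float:
    """Volume of the spherical shell between two altitudes."""
    r_low = EARTH_RADIUS_KM + alt_low_km
    r_high = EARTH_RADIUS_KM + alt_high_km
    return 4.0 / 3.0 * math.pi * (r_high**3 - r_low**3)

def collisions_per_day(n_targets: int, n_impactors: int, cross_section_km2: float,
                       v_rel_km_s: float, volume_km3: float) -> float:
    """Expected collisions per day between satellites (targets) and small
    debris (impactors), ASSUMING both are spread uniformly through the shell."""
    impactor_density = n_impactors / volume_km3        # objects per km^3
    swept_per_target = cross_section_km2 * v_rel_km_s  # km^3 swept per second
    rate_per_s = n_targets * impactor_density * swept_per_target
    return rate_per_s * 86_400.0                       # seconds per day

# Hypothetical inputs: a 20-km-thick shell around 550 km, 10 m^2 (1e-5 km^2)
# satellites, 10 km/s relative speed, and an assumed small-debris count.
vol = shell_volume_km3(540.0, 560.0)
base = collisions_per_day(5_000, 20_000, 10e-6, 10.0, vol)
more_sats = collisions_per_day(10_000, 20_000, 10e-6, 10.0, vol)
bigger_sats = collisions_per_day(5_000, 20_000, 20e-6, 10.0, vol)
print(more_sats / base, bigger_sats / base)
```

In this toy model, doubling either the number of active satellites or their cross-sectional area doubles the expected rate of hits from the same debris population, which is the scaling Boley describes.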

Samantha Lawler: Starlink just released a conjunction report—they’re doing one collision avoidance maneuver every two minutes on average in their megaconstellation.
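To put that rate in perspective, here is a back-of-the-envelope conversion, assuming the two-minute average holds steadily around the clock. The report’s actual reporting period is not given here, so the six-month figure is hypothetical, as is the helper name.

```python
MINUTES_PER_DAY = 24 * 60

def maneuvers_over(days: float, avg_interval_min: float = 2.0) -> float:
    """Number of avoidance maneuvers over `days`, at one every avg_interval_min minutes."""
    return days * MINUTES_PER_DAY / avg_interval_min

print(maneuvers_over(1))      # 720.0 per day
print(maneuvers_over(182.5))  # 131400.0 over a hypothetical six months
```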

The orbit at 550 km altitude, in particular, is densely packed with Starlink satellites. Is that right?

Lawler: The way Starlink has occupied 550 km and filled it to very high density means anybody who wants to use a higher-altitude orbit has to get through that really dense shell. China’s megaconstellations are all at higher altitudes, so they have to go through Starlink. A couple of weeks ago, there was a headline about a Starlink satellite almost hitting a Chinese rocket. These problems are happening now. Starlink recently announced they’re moving down to 350 km, shifting satellites to even lower orbits. Really, everybody has to go through them, including the ISS, including astronauts.

Thiele: 550 km has the highest density of active payloads. There are other orbits of concern around 800 km—the altitude of the [2007] Chinese anti-satellite missile test and the [2009] Cosmos-Iridium collision. Above 600 km, atmospheric drag takes a very long time to bring objects down. Below 600 km, drag acts as a natural cleaning mechanism. In that 800 km to 900 km band, there’s a lot of debris that’s going to be there for centuries.

Impact of Collisions at 550 Kilometers

What happens if there’s a collision at 550 km? Would that orbit become unusable?

Thiele: No, it would not become unusable—not a Gravity movie scenario. Any catastrophic collision is an acute injection of debris. You would still be able to use that altitude, but your operating conditions change. You’re going to do a lot more collision-avoidance maneuvers. Because it’s below 600 km, that debris will come down within a handful of years. But in the meantime, you’re dealing with a lot more danger, especially because that’s the altitude with the highest density of Starlink satellites.

Lawler: I don’t know how quickly Starlink can respond to new debris injections. It takes days or weeks for debris to be tracked, cataloged, and made public. I hope Starlink has access to faster services, because in the meantime that’s an awful lot of risk.

How do solar storms affect orbital safety?

Lawler: Solar storms make the atmosphere puff up—high-energy particles smashing into the atmosphere. Drag can change very quickly. During the May 2024 solar storm, orbital uncertainties were kilometers. With things traveling 7 kilometers per second, that’s terrifying. Everything is maneuvering at the same time, which adds uncertainty. You want to have margin for error, time to recover after an event that changes many orbits. We’ve come off solar maximum, but over the next couple of years it’s very likely we’ll have more really powerful solar storms.
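One way to see why kilometer-scale uncertainty is alarming at orbital speed is to convert a position error along the direction of travel into a timing error. The sketch below uses the 7 km/s figure quoted above; the error values and the function name are illustrative assumptions.

```python
ORBITAL_SPEED_KM_S = 7.0  # approximate low-Earth-orbit speed quoted in the interview

def timing_error_s(along_track_error_km: float,
                   speed_km_s: float = ORBITAL_SPEED_KM_S) -> float:
    """Seconds early or late implied by an along-track position error."""
    return along_track_error_km / speed_km_s

for err_km in (0.1, 1.0, 5.0):  # hypothetical along-track uncertainties
    print(f"{err_km:4.1f} km of uncertainty ~ {timing_error_s(err_km):.2f} s of timing error")
```

Under these assumptions, a few kilometers of along-track error amounts to less than a second of timing error, yet at these speeds that is enough to make predictions of where two objects will be at closest approach much less certain.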

Thiele: The risk for collision within the first few days of a solar storm is a lot higher than under normal operating conditions. Even if you can still communicate with your satellite, there’s so much uncertainty in your positions when everything is moving because of atmospheric drag. When you have a high density of objects, the likelihood of collision goes up considerably.

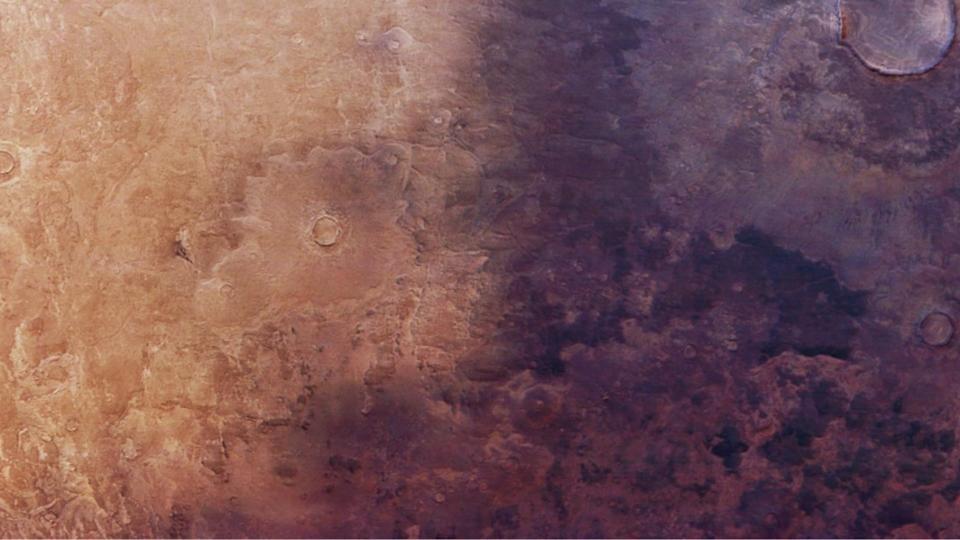

Canadian and American researchers simulated satellite orbits in low Earth orbit and generated a metric, the CRASH Clock, that measures the number of days before collisions start happening if collision-avoidance maneuvers stop. Sarah Thiele, Skye R. Heiland, et al.

Between the first and second drafts of your paper posted to the preprint server, your key result, the CRASH Clock value, was revised from 2.8 days to 5.5 days. Can you explain the revision?

Thiele: We updated based on community feedback, which was excellent. The newer numbers are 164 days for 2018 and 5.5 days for 2025. The paper is submitted and will hopefully go through peer review.

Lawler: It’s been a very interesting process putting this on arXiv and receiving community feedback. I feel like it’s almost been peer-reviewed: we got really good feedback from top-tier experts that improved the paper. Sarah put a note, “feedback welcome,” and we got very helpful feedback. Sometimes the internet works well. If you think 5.5 days is okay when 2.8 days was not, you missed the point of the paper.

Thiele: The paper is quite interdisciplinary. My hope was to bridge astrophysicists, industry operators, and policymakers—give people a structure to assess space safety. All these different stakeholders use space for different reasons, so work that has an interdisciplinary connection can get conversations started between these different domains.
