TL;DR

Tesla filed a bespoke Roadster badge trademark, its first standalone vehicle branding apart from the Cybertruck. The car was promised in 2017 for 2020 delivery and remains unbuilt, with a reveal now expected in late May or June 2026.

AeroKoi set out to answer a simple question. Could a desktop 3D printer produce train whistles that captured the exact chords once carried across fields and towns by steam engines? After months of steady work the answer arrived loud and clear through shop air at 120 pounds per square inch.

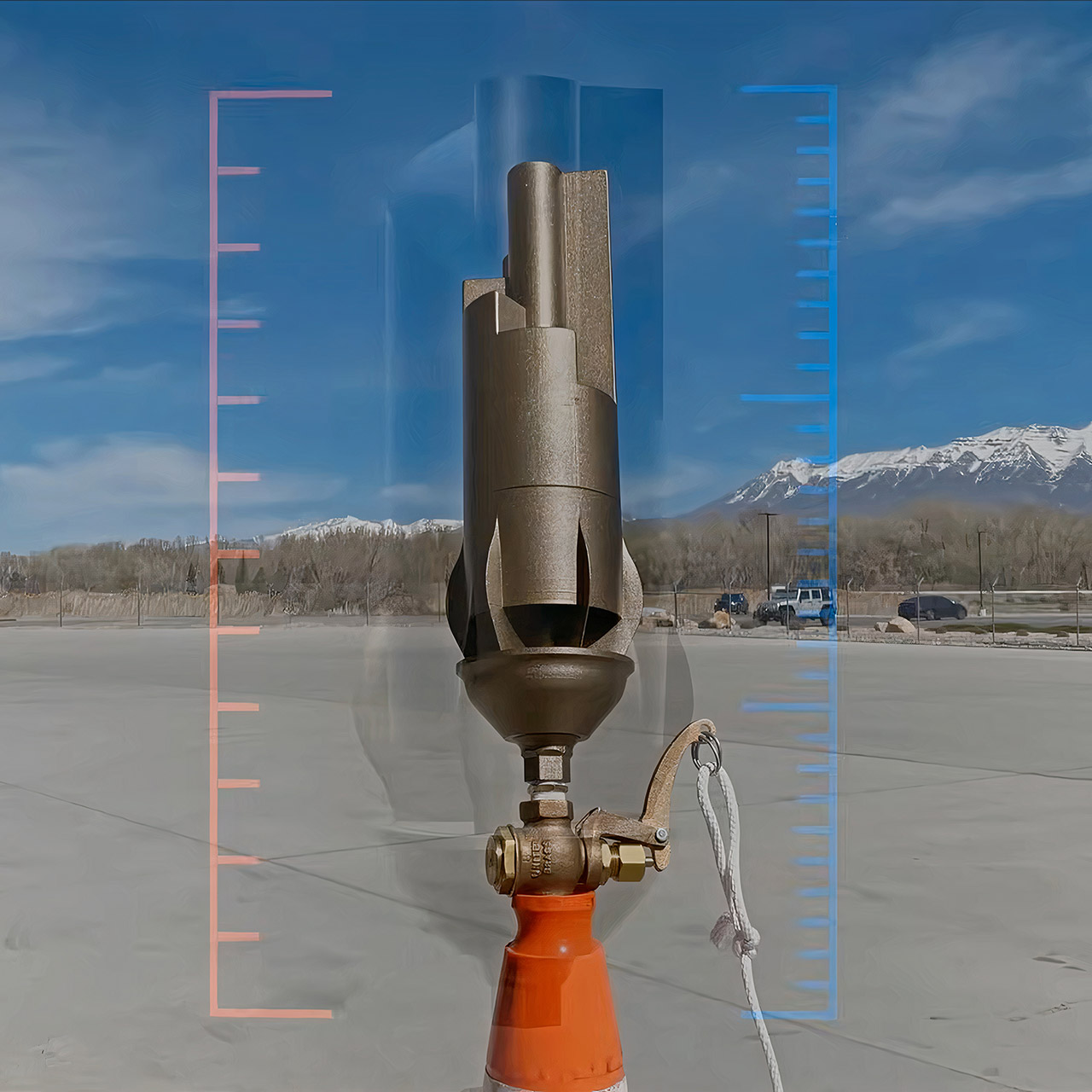

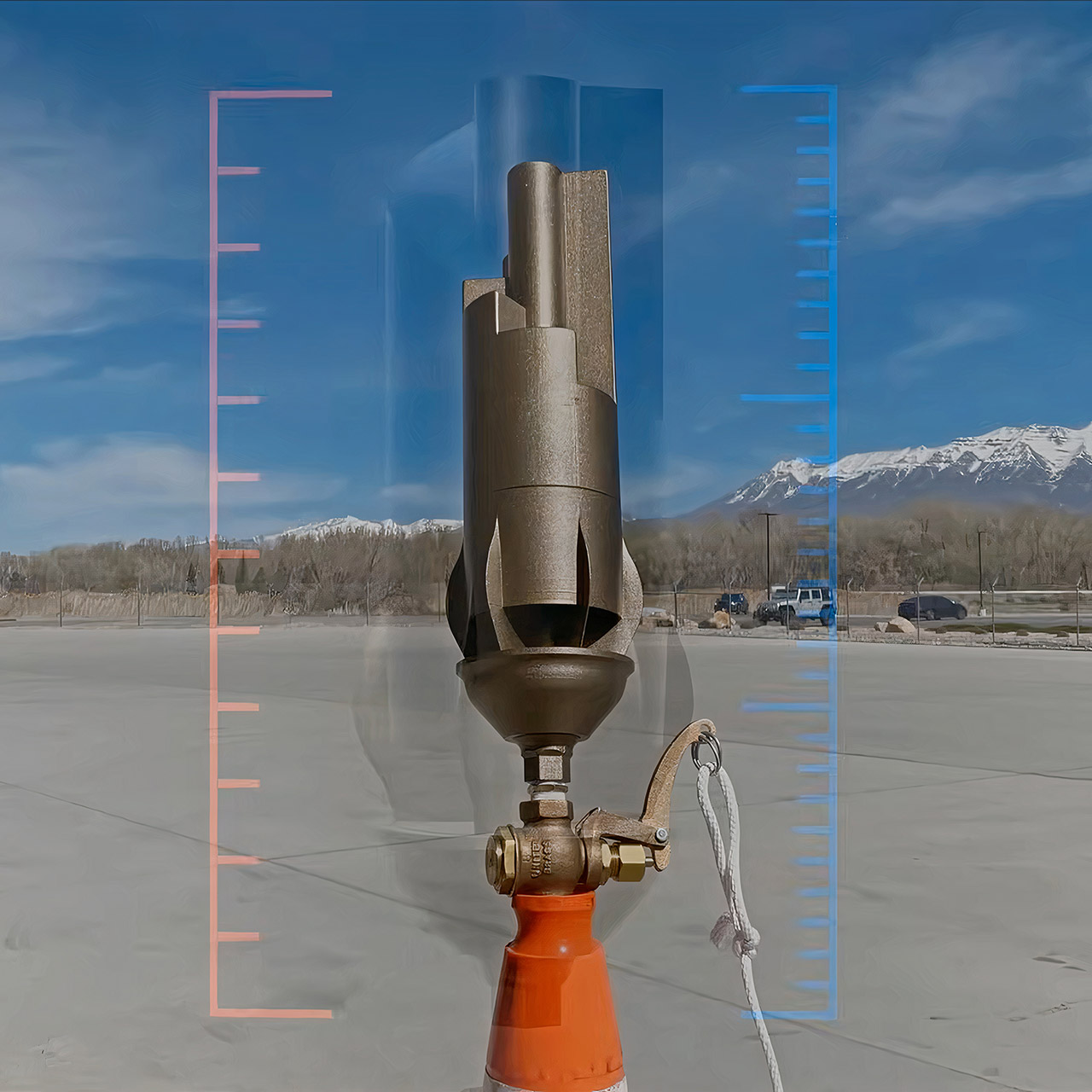

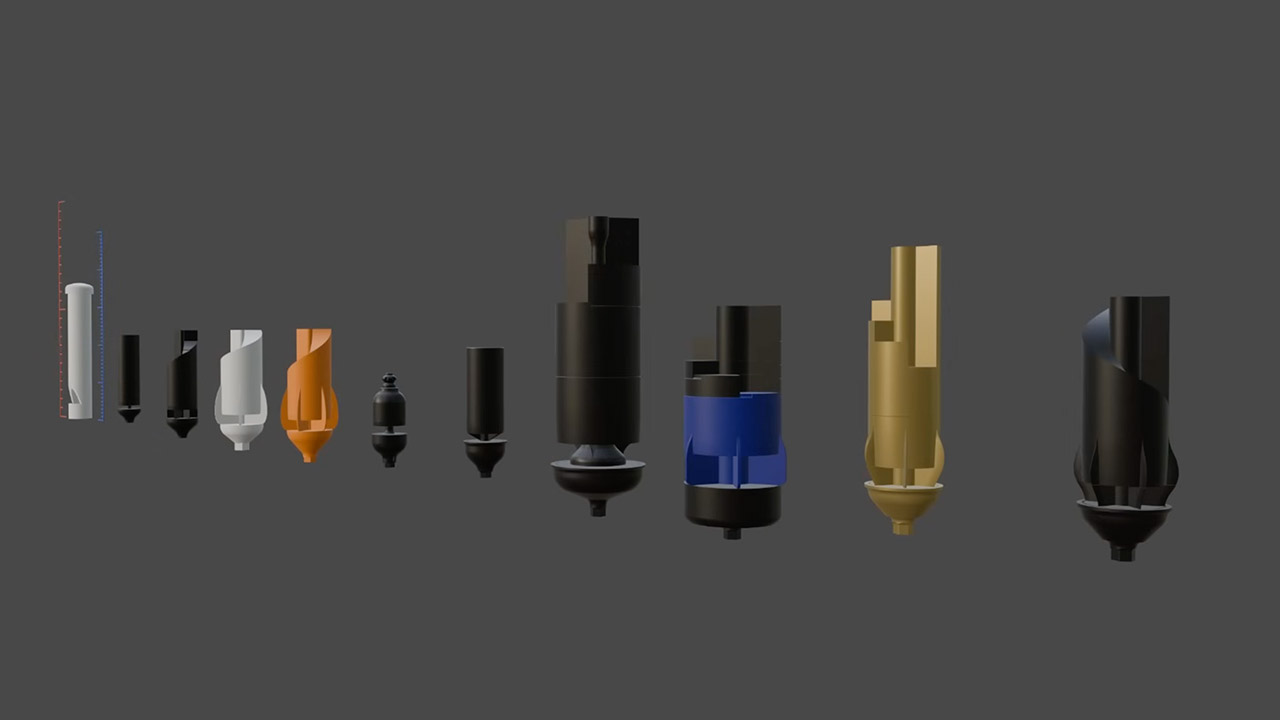

Rail lovers still feel a sentimental tug from such sounds. Steam engines carried multi-note whistles that signaled their arrival from afar, while the train itself was still invisible on the horizon. Modern diesels have much simpler horns, but for many people the originals remain the gold standard: richer, more alive, and somehow more memorable. AeroKoi began with a small setup and quickly developed expertise. The early prototypes were crude, with PVC pipe wrapped around the printed parts and air forced in through a nozzle. The tones came out weak and strange, lacking the deep resonance he wanted. Direct airflow proved to be the main issue. Real whistles work differently: the air builds up in a bowl-shaped chamber before being directed out through a very narrow slit and into the bell.

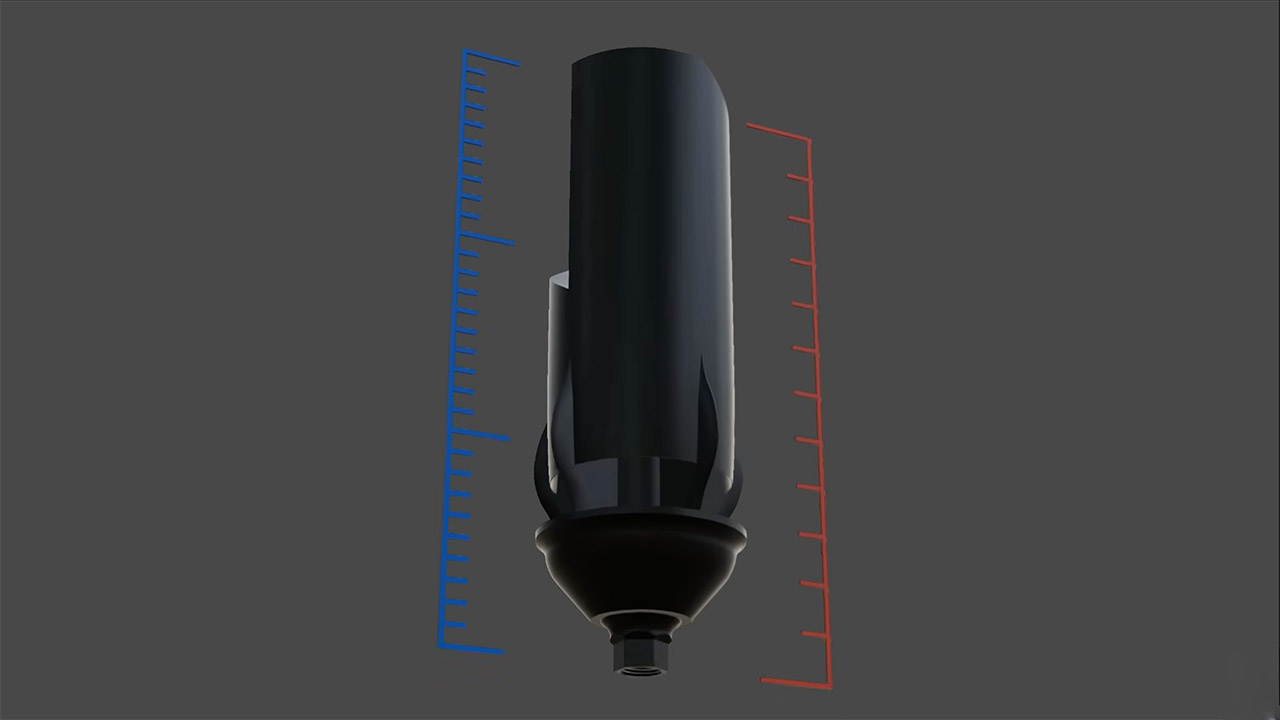

That insight opened the door to progress. Every new iteration included a proper bowl, fine-tuned the slit width, and adjusted the distance between the bowl and the bell edge. He cut the four-inch-diameter whistles into vertical sections that fit on a regular printer bed. Most of the experiments used plain PLA, with a carbon fiber blend added for stiffness where it was most needed. Layer height stayed constant at 0.2 millimeters, with six walls and 25% infill to keep the sections from collapsing with each blast of pressure.
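For readers who want to reuse those slicer settings, here is a minimal sketch that records them as data and derives two rough figures. The layer height, wall count, and infill come from the paragraph above; the 0.4 mm line width and the 100 mm section height are assumptions chosen only for illustration, and the class name is hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class PrintProfile:
    layer_height_mm: float = 0.2  # stated in the article
    wall_count: int = 6           # stated in the article
    infill_percent: int = 25      # stated in the article
    line_width_mm: float = 0.4    # ASSUMPTION: a common nozzle width, not from the article

    def wall_thickness_mm(self) -> float:
        """Approximate solid shell thickness on each side of a printed section."""
        return self.wall_count * self.line_width_mm

    def layers_for(self, height_mm: float) -> int:
        """Number of layers needed for a section of the given height."""
        # Round first so floating-point noise does not add a spurious layer.
        return math.ceil(round(height_mm / self.layer_height_mm, 6))


profile = PrintProfile()
print(f"shell thickness: {profile.wall_thickness_mm():.1f} mm")
print(f"layers in a 100 mm section: {profile.layers_for(100)}")  # 100 mm is a hypothetical height
```

Under the assumed line width, six walls give a shell of roughly 2.4 mm, which is consistent with the article's point that the walls, not the infill, are doing most of the work against air pressure.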

As the plastic started rolling off the reel, the designs became more sophisticated. An early six-chime model sounded slightly better, but not quite right. The larger bells required greater airflow, so the threaded inlets progressed from quarter-inch fittings to half-inch and finally full-inch NPT ball valves for much smoother control. He added spacers between the parts so he could adjust the gap between the bowl and the lip without reprinting the entire whistle. Low notes now have a little extra internal room to help them carry farther, as the original illustrations show.

Today, two final whistles are available for anyone to download and print. One is a straight copy of a Santa Fe Railroad six-chime whistle, while the other is a Northern Pacific five-chime replica. Both are intended to be printed in pieces, assembled with simple fittings, and connected to a compressor, where they sound lovely, clean chords. The Santa Fe one feels unusually complete; all of the notes fit together perfectly, with none of the shrieking or rattling that plagued earlier prints.

It isn’t often that the NYT Mini Crossword stumps me right away, but 1-Across threw me off. I was sure the answer was SMASH and well, I was close, but not correct. Read on for all the answers. And if you could use some hints and guidance for daily solving, check out our Mini Crossword tips.

Let’s get to those Mini Crossword clues and answers.

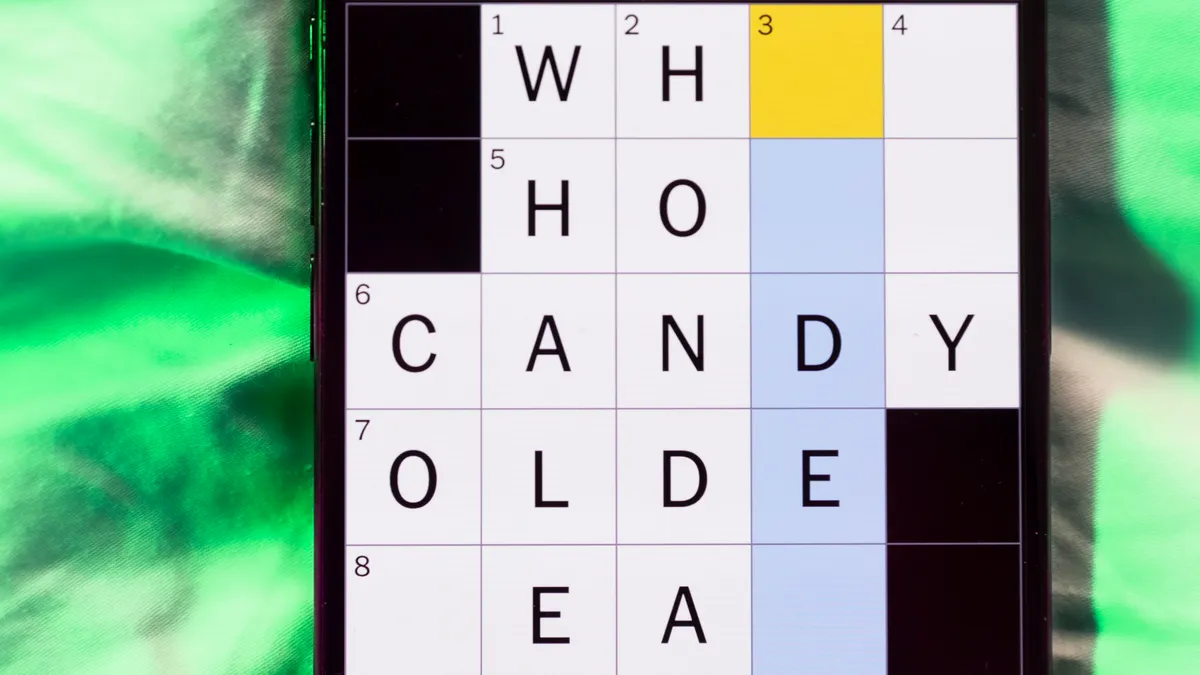

The completed NYT Mini Crossword puzzle for May 8, 2026.

1A clue: Squash

Answer: SMUSH

6A clue: Monopoly card with a question mark on one side

Answer: CHANCE

7A clue: “Help! Help!”

Answer: MAYDAY

8A clue: Path around the sun

Answer: ORBIT

9A clue: Pressing desires

Answer: NEEDS

1D clue: Social media button with an arrow

Answer: SHARE

2D clue: Answer between “yes” and “no”

Answer: MAYBE

3D clue: Took back

Answer: UNDID

4D clue: Ad-libs in jazz singing

Answer: SCATS

5D clue: “Yo!”

Answer: HEY

6D clue: “Dude!”

Answer: CMON

TL;DR

Tesla has filed a trademark for a bespoke Roadster badge that looks like it belongs on a Lamborghini. The car it will adorn was first promised nine years ago.

A prototype debuted in November 2017 with a 200 kilowatt-hour battery, a claimed 620-mile range, a 1.9-second zero-to-60 time, and a starting price of 200,000 dollars. Production was set for 2020. It did not happen in 2020, or 2021, or 2022, or any year since.

The trademark filing, submitted to the United States Patent and Trademark Office on 28 April on an intent-to-use basis, covers a stylised triangular shield bearing the Roadster wordmark and four vertical lines that, according to the filing, represent “speed, propulsion, heat, or wind.” It is the most tangible thing Tesla has produced for the Roadster in nearly a decade.

The trademark application is unusual for Tesla. Apart from the Cybertruck’s angular two-part emblem, the company has never given one of its vehicles a standalone badge. The Model S, 3, X, and Y use Tesla’s corporate T logo. The Roadster is getting the kind of bespoke branding treatment normally reserved for supercar marques: a dedicated shield, a custom wordmark in a stretched angular font with segmented letterforms, and a separate silhouette mark consisting of three flowing curved lines that form the vehicle’s profile.

Tesla filed two distinct trademark applications. The first is a stylised “ROADSTER” wordmark in a triangular shield. The second is the vehicle silhouette. Both were filed on an intent-to-use basis, meaning Tesla has declared a plan to put these marks into commercial use but has not yet done so.

Elon Musk explicitly deprioritised the Roadster in favour of the Cybertruck in 2022, telling investors the truck would come first. The Cybertruck eventually launched in late 2023 after its own multi-year delay. The Roadster has remained in a state of perpetual imminence since, with Musk offering periodic updates that have served primarily to push the timeline further out.

During Tesla’s first-quarter 2026 earnings call, Musk said the Roadster would be unveiled “maybe in a month or so,” pushing the reveal to late May or early June 2026. He described the upcoming event as “one of the most exciting product unveils ever.” If the reveal happens on schedule, it will be the first time in nine years that any public commitment regarding the Roadster has been met.

The Roadster’s specification sheet has not remained static during the delay. It has escalated. The 2017 prototype claimed a 1.9-second zero-to-60 time. In 2021, Musk revised the target to 1.1 seconds. In 2024, he announced the goal had been pushed below one second.
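To put those escalating targets in physical terms, the sketch below converts each zero-to-60 time into an average acceleration in g. It assumes constant acceleration over the run, which real cars do not achieve, so treat the results as rough averages; the function name is illustrative.

```python
MPH_TO_M_PER_S = 0.44704   # exact definition: 1 mph = 0.44704 m/s
STANDARD_GRAVITY = 9.80665  # m/s^2


def average_g(zero_to_60_seconds: float) -> float:
    """Average acceleration, in g, needed to reach 60 mph in the given time."""
    final_speed = 60 * MPH_TO_M_PER_S
    return (final_speed / zero_to_60_seconds) / STANDARD_GRAVITY


claims = [
    ("2017 prototype", 1.9),
    ("2021 target", 1.1),
    ("2024 goal (anything under this time needs more)", 1.0),
]
for label, seconds in claims:
    print(f"{label}: about {average_g(seconds):.2f} g")
```

The 2017 figure works out to roughly 1.4 g on average, the 2021 target to roughly 2.5 g, and a sub-one-second run to more than 2.7 g, which helps explain why the thruster package keeps coming up alongside the revised times.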

The optional SpaceX package, first described in 2018, would reportedly include approximately 10 cold-air rocket thrusters integrated into the vehicle body to enhance cornering, braking, and acceleration. Musk has suggested the thrusters could enable the car to “fly,” though the definition of flight in this context remains unclear. The 620-mile range claim from 2017 has not been revised. The 200,000 dollar base price, announced nearly a decade ago, has also not been updated.

Tesla has raised its 2026 capital expenditure to 25 billion dollars, allocated across six simultaneous new production lines covering the Cybercab robotaxi, the Semi truck, next-generation vehicle platforms, Optimus humanoid robots, energy storage, and battery manufacturing. The Roadster is not named as a priority in the capex allocation.

Production, by Musk’s own framing during the earnings call, would follow 12 to 18 months after the demonstration, pointing to a start date somewhere in mid-to-late 2027 or into 2028. The customers who placed 50,000 dollar deposits for the Founders Series edition in 2017 will have waited more than a decade for delivery if that timeline holds.
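The production window follows from simple month arithmetic, sketched here. The reveal months and the 12-to-18-month lag come from the article; treating the reveal as the first day of May or June 2026 is a simplifying assumption, and `add_months` is a hypothetical helper.

```python
from datetime import date


def add_months(start: date, months: int) -> date:
    """Return the first day of the month that falls `months` after `start`."""
    total = start.year * 12 + (start.month - 1) + months
    return date(total // 12, total % 12 + 1, 1)


# ASSUMPTION: reveal pinned to the first of the month for simplicity.
earliest_reveal = date(2026, 5, 1)
latest_reveal = date(2026, 6, 1)

earliest_production = add_months(earliest_reveal, 12)
latest_production = add_months(latest_reveal, 18)

print(f"earliest start: {earliest_production:%B %Y}")
print(f"latest start:   {latest_production:%B %Y}")
```

With no further slippage, the window runs from May 2027 to December 2027; any delay to the reveal or the lag pushes the start into 2028.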

The electric supercar market that existed when the Roadster was announced in 2017 was effectively empty. The Rimac Concept Two was a prototype. The Lotus Evija was years from production. The Pininfarina Battista had not been announced.

Nine years later, the market has filled in around the space the Roadster was supposed to occupy. Rimac has been delivering the Nevera since 2023, holding the production electric vehicle acceleration record at 1.74 seconds to 60 miles per hour. The Lucid Air Sapphire delivers 1,234 horsepower for 249,000 dollars. Porsche has accelerated its electrification strategy, launching the all-electric Cayenne and iterating on the Taycan. BYD’s premium Denza brand has unveiled a 1,000-horsepower electric sedan targeting Porsche and Tesla simultaneously.

Former Tesla and Polestar executives have launched their own electric sports car ventures, targeting the sub-100,000 dollar segment that the Roadster’s 200,000 dollar price point leaves open. The Roadster’s original specifications, revolutionary in 2017, are now achievable by multiple manufacturers.

The sub-two-second zero-to-60 time that made the Roadster prototype a sensation is now a threshold that the Rimac Nevera, Pininfarina Battista, and Lucid Air Sapphire have all crossed. The SpaceX thruster package remains the only specification that no competitor has attempted, and it remains the specification that has never been demonstrated in a production vehicle.

The trademark filing is the kind of signal that Tesla’s investor and fan communities parse with the intensity of Kremlinologists reading a Pravda editorial. A bespoke badge implies a product distinct enough from the Tesla brand to warrant its own identity. The intent-to-use filing implies a legal expectation that the mark will be commercially deployed. The timing, weeks before a promised reveal, implies coordination on a product launch. None of this constitutes a car.

What the trademark does reveal is how Tesla wants the Roadster to be perceived. The shield shape, the angular typography, and the vehicle silhouette are the visual language of a supercar brand, not a technology company. The Cybertruck’s aesthetic was aggressively anti-automotive, a stainless steel polygon that rejected every convention of vehicle design. The Roadster badge suggests the opposite: a deliberate embrace of the iconography that Ferrari, Lamborghini, and Porsche have used for decades to signal exclusivity and heritage.

Tesla is not trying to disrupt the supercar market with the Roadster. It is trying to join it. The badge is the application letter. The car, if it arrives, will determine whether the application is accepted. And if the pattern of the past nine years holds, the badge will remain the most beautifully designed element of a product that exists primarily as a promise. The vertical lines, according to the filing, represent speed. For the moment, they represent patience.

Zoë Schiffer: Yeah, we don’t need a Grok.

Brian Barrett: Grok would just say that it’s sick.

Zoë Schiffer: Grok mitigating the fight between the mom and the person who’s yelling at her about her baby.

Leah Feiger: I really, really feel for these workers, and I really, really feel for all of these customers that were stranded. Spirit in so many ways, like something that we love to make fun of just a little bit, like you take Spirit when you have to, but also it was actually available and it worked and it wasn’t nearly as expensive as anyone else. It’s kind of sad, especially when I look at the shrinking airline industry in the US, when I look over at Europe and I’m like, “You guys have so many low-cost carriers.” And especially with all of the deals, everything back and forth between JetBlue and Spirit that got squashed, it was just a little bit sad to see that happen.

Brian Barrett: And Leah, when you say stranded, I want to be clear, that’s literal. I think some of these employees, they were not in their home cities when Spirit shut down. So they had to rely on other airlines offering them a jump seat or a travel pass to get home. Fortunately, it’s apparently a very communal industry. Other airlines helped them out. Other airlines are offering preferential employment interviews to Spirit Airline employees. But can you imagine, I’m in London right now, and if WIRED shut down and I had to find another way home. I mean, I’d be OK, but—

Leah Feiger: No, but it would also just be ridiculous. This is wild. I think of that 30 Rock episode when Liz Lemon is like, “Oh yeah, this is my flight.” And they’re like, “Sorry, we’re out of flights now. We just make popcorn,” which was incredible to see, but that’s so real.

Brian Barrett: I think from a consumer level, if you were going to book tickets for the summer, do it soon because now it’s a supply and demand thing, right? A whole airline is gone. That’s a lot of seats that aren’t there, so there’s more scarcity. Prices are going up basically at the worst possible time for people like myself who are thinking about planning some time for summer travel with, again, two kids.

Zoë Schiffer: Coming up after the break, we’ll be getting into the news of the hantavirus outbreak on a cruise ship. Should we be concerned, or are we panicking for no reason? We’ll find out.

Leah Feiger: So in recent days, there have been more and more headlines of a hantavirus outbreak happening on the MV Hondius, a Dutch-flagged cruise ship. The cruise departed from the south end of Argentina over a month ago, making stops in Antarctica, the island of Saint Helena, among other stops. The trouble started when a man started showing symptoms like a fever, a headache, and eventually this became a respiratory illness. He died on board and a few weeks later, his wife did as well. She was later confirmed to have the hantavirus too. As of this week, seven cases have now been confirmed and the ship is currently carrying 147 passengers and crew. To help us understand what on earth is going on, we are joined by WIRED staff writer Emily Mullin.

OpenAI’s relationship with Microsoft, its longtime investor and cloud partner, has grown increasingly complicated over the years as the ChatGPT-maker has grown into a behemoth competitor.

But Microsoft executives had reservations about sending additional funding to OpenAI as far back as 2018 when it was just a small nonprofit research lab, according to emails between more than a dozen Microsoft executives, including CEO Satya Nadella, shown in a federal court on Thursday during the Musk v. Altman trial.

The emails show how Microsoft, at the time, wavered over what has since been held up as one of the most successful corporate partnerships in tech history. Several Microsoft executives said in the emails their visits to OpenAI did not indicate any imminent breakthroughs in developing artificial general intelligence. In 2017, much of OpenAI’s work was focused on building AI systems that could play video games, which showed early signs of success. But OpenAI needed five times more computing power than it had originally secured from Microsoft to continue the project.

Microsoft worried that not providing support could push OpenAI into the arms of Amazon, the world’s dominant cloud computing provider at the time. Roughly 18 months after the emails were sent, Microsoft announced a landmark $1 billion investment in OpenAI after the lab created a for-profit arm that provided the tech giant with the potential to generate a return of $20 billion.

Microsoft declined to comment.

Elon Musk’s attorneys introduced the emails to show Microsoft’s evolving relationship with OpenAI. After Musk reached out to Nadella, Microsoft in 2016 agreed to provide $60 million worth of cloud computing services to OpenAI at a steep discount. OpenAI consumed the services twice as fast as expected.

The email chain kicked off on August 11, 2017, with Nadella reaching out to OpenAI CEO Sam Altman to congratulate the lab on winning a video game competition using AI to mimic a human player. Ten days later, Altman responded seeking $300 million worth of Microsoft Azure cloud computing services.

“We could figure how to fund some of it but not that much,” Altman wrote, apparently seeking a financial handout and engineering help. “I think it will be the most impressive thing yet in the history of AI.”

Three days later, Nadella asked four lieutenants for their input on how to respond. Microsoft’s AI team saw “no value in engaging,” according to a response from Jason Zander, Microsoft’s executive vice president, that also documented how other teams felt. Its research team thought its own work was “more advanced,” while the public relations team didn’t like the idea of supporting a group pushing the idea of “machines beating humans.” Ultimately, Zander suggested that Azure would benefit from associating with Musk and Altman but that he wouldn’t want to “take a complete bath,” or large financial hit, in doing so.

A subsequent analysis showed that Microsoft stood to lose about $150 million over several years if it provided the services Altman wanted, according to one email. “Unless he can help us draw a more direct networking effect with OpenAI -> Microsoft business value, we will wind up having to pass,” Zander wrote.

The thread went dark for several months, but was revived on January 10, 2018, with an email to Nadella from Brett Tanzer—who signed off his emails with “Brettt”—then a director on the Azure cloud unit. Altman had told Tanzer that OpenAI could license its gaming AI to Microsoft’s Xbox video game division in exchange for “$35-50 million in Azure Credits.” But Xbox couldn’t commit that much money. Microsoft planned to tell Altman there would be no more discounts after that March, per Tanzer’s email.

Things are heating up in a single datacenter, but not in a good way

Amazon Web Services is working to address a power outage that has created “impairments” to services served from the notorious US-EAST-1 region.

A May 7 incident report time-stamped 5:25 PM PDT (00:25 UTC Friday) states that AWS spotted problems in the use1-az4 availability zone of the US-EAST-1 Region. A subsequent update states “EC2 instances and EBS volumes hosted on impacted hardware are affected by the loss of power during the thermal event.”

An update time-stamped 6:47 PM PDT reveals “We continue to work towards mitigating the increased temperatures to its normal levels,” but warns “Other AWS services that depend on the affected EC2 instances and EBS volumes in this Availability Zone may also experience impairments.”

At 8:06 PM PDT Amazon said it was “actively working to restore temperatures to normal levels … though progress is slower than originally anticipated.”
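The status updates quoted above are stamped in Pacific Daylight Time, so a short conversion helps anyone correlating them with logs kept in UTC. This is a minimal sketch: the three times come from the article, the year 2026 is an assumption inferred from context, and PDT is modeled as a fixed UTC-7 offset rather than looked up from a time zone database.

```python
from datetime import datetime, timedelta, timezone

# Pacific Daylight Time is UTC-7; a fixed offset avoids needing tz data installed.
PDT = timezone(timedelta(hours=-7), "PDT")


def pdt_to_utc(year: int, month: int, day: int, hour: int, minute: int) -> datetime:
    """Convert a PDT wall-clock time to an aware UTC datetime."""
    return datetime(year, month, day, hour, minute, tzinfo=PDT).astimezone(timezone.utc)


# ASSUMPTION: the incident year is 2026; the article gives only "May 7".
updates = {
    "initial report": (17, 25),
    "second update": (18, 47),
    "third update": (20, 6),
}
for name, (hour, minute) in updates.items():
    utc = pdt_to_utc(2026, 5, 7, hour, minute)
    print(f"{name}: {utc:%Y-%m-%d %H:%M} UTC")
```

The first line of output matches the article's own conversion of 5:25 PM PDT to 00:25 UTC on Friday.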

The cloudy concern said it made “incremental progress to restore cooling systems” but users of EC2 Instances, EBS Volumes, and other services are “experiencing elevated error rates and latencies for some workflows.”

AWS has also shifted traffic away from the stricken AZ, and suggested companies shift workloads into other US-EAST-1 availability zones.

Good luck getting that done because the update admits “Customers may experience longer than usual provisioning times.”

US-EAST-1 is arguably AWS’s problem child, as it was the site of major outages that took big chunks of the internet offline in 2021 and then again in October 2025.

AWS execs have told The Register the region isn’t inherently more fragile than other parts of the Amazonian cloud, but often runs things at bigger scale than elsewhere and therefore imposes extra stress on services.

The Register will update this story as the situation evolves. ®

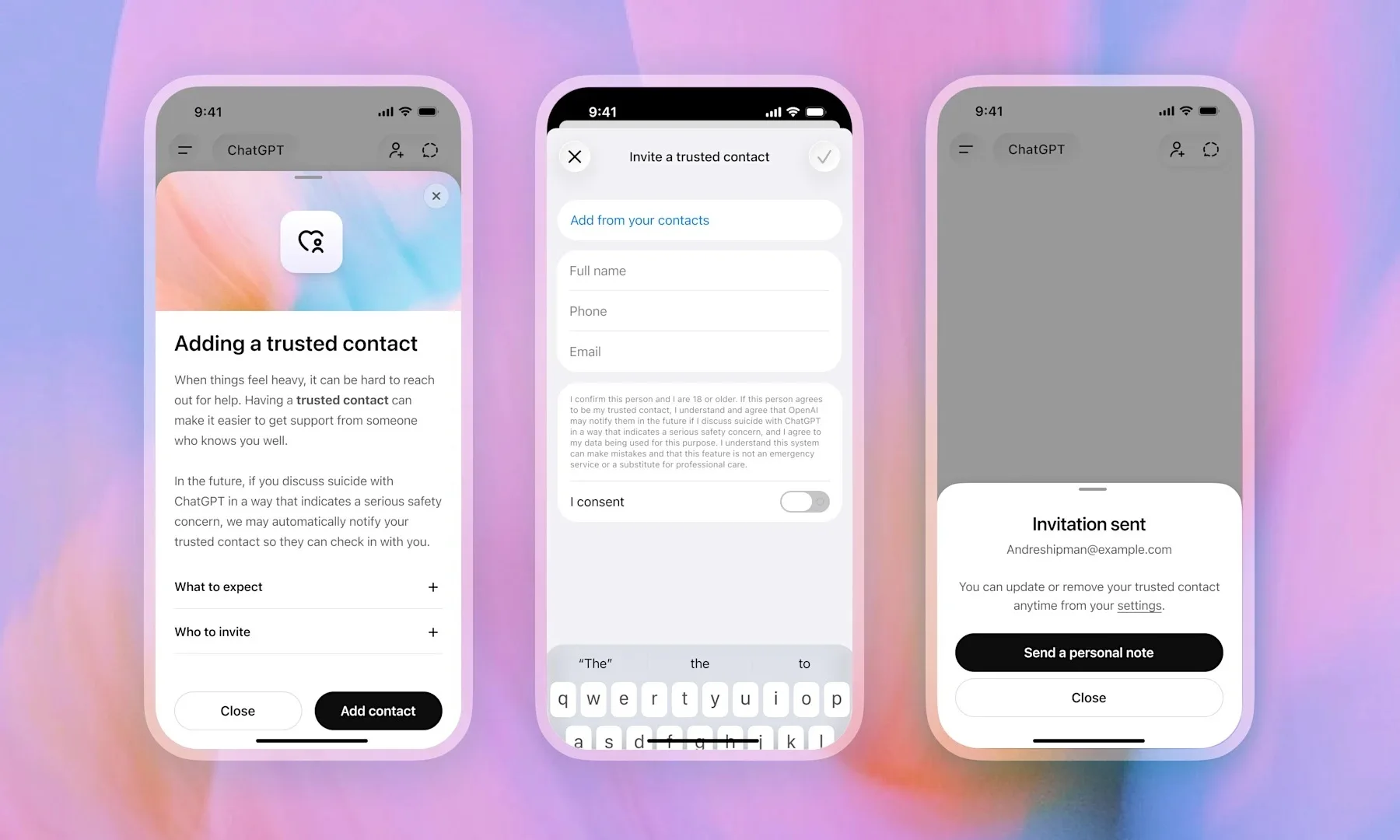

AI chatbots have made it surprisingly easy to talk about anything, and that includes some of the heaviest topics imaginable. That openness has always been a double-edged sword. OpenAI is now taking a step to address that, with a new feature that brings a trusted person into the picture when things get serious.

The company is rolling out a new feature called Trusted Contact, and it is starting to appear in ChatGPT settings for adult users. It lets users name one person who can be alerted if ChatGPT detects a serious self-harm concern.

Setting up a Trusted Contact is optional. If you do set one up, the person you nominate must be at least 18 years old, or 19 in South Korea. Once you name someone, they get an invitation explaining what the role actually means, and they have one week to accept it before the feature goes live. If they decline, you can pick someone else.

The alert system itself is not automatic. If ChatGPT’s systems flag a conversation as potentially concerning, the chatbot first tells the user that their Trusted Contact may be notified, and it also nudges the user to reach out directly with some suggested conversation starters. A small team of specially trained human reviewers then steps in to assess the situation. Only if they confirm a serious risk does the contact actually get notified, via email, text, or in-app notification. The alert does not share chat transcripts or conversation details. It simply says that self-harm came up in a potentially concerning way and asks the contact to check in. OpenAI says it aims to complete that human review in under one hour.
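The sequence described above can be summarized as a small decision flow. The sketch below is purely illustrative: it is not OpenAI's implementation or API, every name in it is hypothetical, and it models only the ordering the article describes (automated flag, notice to the user, human review, then a minimal alert).

```python
from dataclasses import dataclass
from enum import Enum, auto
from typing import Optional, Tuple


class Outcome(Enum):
    NO_ACTION = auto()         # nothing flagged, or no accepted Trusted Contact
    USER_WARNED = auto()       # user was told a contact may be notified; review found no serious risk
    CONTACT_NOTIFIED = auto()  # human reviewers confirmed a serious risk


@dataclass(frozen=True)
class ContactAlert:
    channel: str  # "email", "text", or "in-app", per the article
    message: str  # deliberately carries no transcript or conversation details


def run_flow(flagged: bool, contact_active: bool, reviewer_confirms: bool,
             channel: str = "email") -> Tuple[Outcome, Optional[ContactAlert]]:
    """Hypothetical model of the alert sequence; not a real API."""
    if not (flagged and contact_active):
        return Outcome.NO_ACTION, None
    # At this point the user has been told their contact may be notified
    # and nudged to reach out directly.
    if not reviewer_confirms:
        return Outcome.USER_WARNED, None
    alert = ContactAlert(
        channel=channel,
        message="Self-harm came up in a potentially concerning way. Please check in.",
    )
    return Outcome.CONTACT_NOTIFIED, alert


outcome, alert = run_flow(flagged=True, contact_active=True, reviewer_confirms=True)
print(outcome.name, "-", alert.message if alert else "no alert sent")
```

The point of the structure is that the automated flag alone never reaches the contact; only the human-review branch produces an alert, and the alert type has no field that could hold chat content.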

Trusted Contact is part of a broader set of safety features on the platform. Previously, OpenAI added features that let parents receive alerts when a linked teen account shows signs of distress. Trusted Contact is the adult-facing extension of this same feature. It was reportedly developed with input from clinicians, researchers, and mental health organizations, including the American Psychological Association.

All that said, it is worth mentioning that Trusted Contact does not replace crisis hotlines, emergency services, or professional mental health care. ChatGPT will still direct users toward those resources when needed. Users can remove or change their Trusted Contact at any time, and contacts can remove themselves whenever they want.

The reality of the matter is that ChatGPT is being used for some deeply personal conversations, whether OpenAI planned for that or not. Adding a feature like Trusted Contact is a move in the right direction, and also an admission that a chatbot can only do so much.

Generative AI bear Gary Marcus called the AI capex boom the “greatest capital misallocation in history.” Goldman Sachs analyst Eric Sheridan reaches the opposite conclusion in his “AI in a Bubble?” research package. Sheridan argues that this is not a hope-and-hype cycle like 1999 but a scale and monetization cycle, with tangible revenue growth and extraordinary market momentum.

So, who’s right? Jobs, pensions, and trillions of stock-market dollars are at stake, with implications for all of us.

I focus on Amazon Web Services (AWS) as the most informative window into the broader conundrum: it is the largest of the cloud businesses, the one with the cleanest revenue disclosure, and the one whose CEO has put the most specific quantitative defense on the table.

The chart below previews where this analysis lands: three plausible curves for AWS revenue, all consistent with the data through Q1 2026, each implying a different return on the $200 billion Amazon plans to spend this year. The disagreement between bulls and bears is essentially a disagreement about which curve materializes.

The bulls argue that hyperscalers fund this build-out from cash flow rather than debt, which makes the AI capex boom different from the historical telecom and railway bubbles. Indeed, AWS grew 28% last quarter, its fastest pace in 15 quarters, validating that enterprise demand for AI compute is real and accelerating.

Amazon CEO Andy Jassy has framed the company’s $200 billion 2026 capex plan as demand-driven rather than speculative, with strong expected return on invested capital. Stanford professor Gilad Allon offers the strongest non-Wall-Street version of the same argument: the AI build-out is funded primarily by cash-rich incumbents rather than leveraged speculative entrants, and high barriers to entry in chips, data centers, and power limit the kind of fragmented overbuilding that produces classic bullwhip dynamics. In essence, the bull case is that the technology is real, the demand is real, and being too cautious is its own kind of mistake.

In contrast, the bears argue that the AI capex math depends on assumptions that current operating numbers don’t yet support.

Venture capitalist Tom Tunguz notes that Bank of America projects hyperscaler debt issuance of $175 billion this year, six times the prior five-year average — a sharp departure from the cash-flow-funded story the bulls rely on. The asset-durability defense runs into Microsoft’s own admission that $37.5 billion of a single quarter’s capex was allocated to short-lived assets, mainly GPUs that depreciate in five years rather than the thirty-year horizon of telecom or rail.

Beyond the curve question, the bears point to financial fragilities that run independently of demand: Oracle’s leverage, Amazon’s sharp pivot to debt funding, and the circular customer-financing arrangements that tie hyperscaler revenue to a small number of model labs whose own revenue depends on capital markets staying open. In essence, the bear case is that the financial structure is changing, the demand assumptions are fragile, and being too aggressive is courting financial disaster.

Returning to our chart, the structure of the disagreement becomes concrete. The bull case assumes that the recent acceleration in AWS growth is the new normal and that growth rates keep climbing — producing roughly $66 billion in quarterly revenue by Q4 2027 and AWS-quality returns on the $200 billion capex.

The bear case assumes the recent acceleration was a catch-up move and that sequential dollar additions stabilize around the current $2 billion per quarter — producing roughly $52 billion in quarterly revenue and acceptable but disappointing returns.

The catastrophe case is below the bear case: AI workload demand actually reverses, and the GPU layer no longer earns enough revenue to recover its cost. The gap between the bull and bear cases is not whether the capex pays off but how well it does.
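The bull and bear endpoints above can be turned into rough quarterly paths. This sketch makes several assumptions that should be read as mine, not the author's: it counts seven quarters from Q1 2026 to Q4 2027, backs out a starting revenue from the bear case's $2 billion steps, and models the bull case as a constant compound rate even though the text describes growth that keeps climbing.

```python
QUARTERS = 7           # Q1 2026 -> Q4 2027
BEAR_END = 52.0        # $B per quarter, from the article
BULL_END = 66.0        # $B per quarter, from the article
BEAR_STEP = 2.0        # $B added per quarter, from the article

# ASSUMPTION: infer the Q1 2026 starting point from the bear path itself.
start = BEAR_END - BEAR_STEP * QUARTERS

# Constant quarter-over-quarter growth that would hit the bull endpoint.
bull_growth = (BULL_END / start) ** (1 / QUARTERS) - 1


def bear_path() -> list:
    """Linear path: a fixed dollar amount added each quarter."""
    return [start + BEAR_STEP * q for q in range(QUARTERS + 1)]


def bull_path() -> list:
    """Compound path: a fixed percentage added each quarter."""
    return [start * (1 + bull_growth) ** q for q in range(QUARTERS + 1)]


print(f"implied Q1 2026 revenue: ${start:.0f}B")
print(f"bull case needs about {bull_growth:.1%} growth per quarter")
```

Under these assumptions the bull endpoint requires roughly 8% sequential growth every quarter for seven quarters, compared with a bear path whose fixed $2 billion steps shrink in percentage terms as the base grows.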

Consider the late-1990s fiber boom, when telecom companies laid more than 80 million miles of fiber-optic cable across the U.S. to carry the data traffic of the emerging internet. It didn’t collapse because operators ran out of money. It collapsed because WorldCom told the market that internet traffic was doubling every hundred days when the actual rate was once a year. The predicted curve was wildly off, and capital flowed accordingly.
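The size of that forecasting error is easy to understate, so here is the arithmetic as a short sketch. The two doubling periods come from the paragraph above; the five-year horizon is an assumption picked only to show how the gap compounds.

```python
def annual_multiple(doubling_days: float) -> float:
    """How many times traffic multiplies in one year for a given doubling period."""
    return 2 ** (365 / doubling_days)


claimed = annual_multiple(100)  # doubling every hundred days
actual = annual_multiple(365)   # doubling once a year

YEARS = 5  # ASSUMPTION: illustrative planning horizon
print(f"claimed: {claimed:.1f}x per year, {claimed ** YEARS:,.0f}x over {YEARS} years")
print(f"actual:  {actual:.1f}x per year, {actual ** YEARS:,.0f}x over {YEARS} years")
```

Doubling every hundred days implies growth of roughly 12.5 times a year, against 2 times a year in reality. Compounded over the assumed five years, that is the difference between planning for a roughly 300,000-fold increase and getting a 32-fold one.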

By 2002, 85 to 95% of the fiber laid in the 1990s remained dark, and roughly $2 trillion in market value had been wiped out. Demand eventually arrived (YouTube, streaming, the cloud), but it arrived a decade later, and the people who built it out lost their shirts. The relevant question for AI is not whether demand exists, which it plainly does, but whether it is growing fast enough to absorb $700 billion in annual capex.

The data that resolves the disagreement is roughly 12 months away and will arrive in the regular cadence of quarterly earnings. By Q1 2027, the divergence between the bull and bear paths becomes visible in the AWS data: at that point, AWS quarterly revenue will be either accelerating toward the high $40 billions, tracking flat against the low $40 billions, or showing the first signs of inflecting downward.

None of those outcomes is currently disprovable from the trajectory through Q1 2026, which is why the hyperscalers can keep raising debt and the market keeps buying it. Anyone telling you they are certain which curve will materialize is selling something.

As for me, I just bought a 12-month supply of popcorn.

[Editor’s note: GeekWire publishes guest opinion pieces representing a range of perspectives. The views expressed are those of the author.]

Anthropic on Tuesday unveiled a suite of updates to its Claude Managed Agents platform at its second annual Code with Claude developer conference in San Francisco, introducing a new capability called “dreaming” that lets AI agents learn from their own past sessions and improve over time — a step toward the kind of self-correcting, self-improving AI systems that enterprises have demanded before trusting agents with production workloads.

The company also moved two previously experimental features — outcomes and multi-agent orchestration — from research preview into public beta, making them broadly available to developers building on the Claude platform. Together, the three features address what Anthropic says are the hardest problems in running AI agents at scale: keeping them accurate, helping them learn, and preventing them from becoming bottlenecks on complex, multi-step work.

Early adopters are already reporting significant results. Legal AI company Harvey saw task completion rates increase roughly 6x after implementing dreaming. Medical document review company Wisedocs cut its document review time by 50% using outcomes. And Netflix is now processing logs from hundreds of builds simultaneously using multi-agent orchestration.

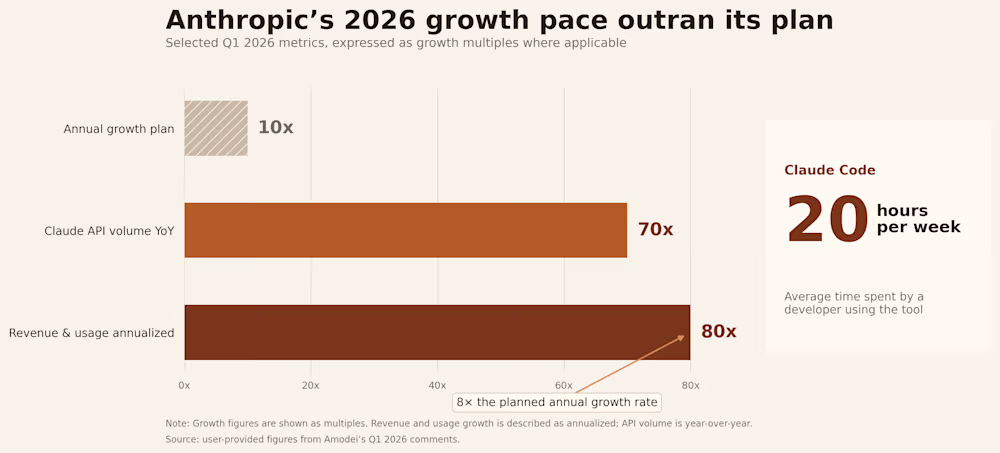

The announcements come at a moment of extraordinary momentum for Anthropic. CEO Dario Amodei disclosed during a fireside chat at the conference that the company’s growth has outpaced even its own aggressive internal projections.

In the first quarter of 2026, Anthropic saw what Amodei described as 80x annualized growth in revenue and usage — far exceeding the 10x annual growth the company had planned for. API volume on the Claude platform is up nearly 70x year over year, and the average developer using Claude Code now spends 20 hours per week working with the tool.

“We tried to plan very well for a world of 10x growth per year,” Amodei said. “And yet we saw 80x. And so that is the reason we have had difficulties with compute.”
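One way to read the gap between those two figures is per quarter rather than per year. The sketch below assumes "annualized" means a quarterly growth factor compounded four times, which is the usual convention but is not spelled out in Amodei's remarks.

```python
def quarterly_factor(annual_multiple: float) -> float:
    """Quarterly growth factor that compounds to the given annual multiple."""
    return annual_multiple ** 0.25


planned = quarterly_factor(10)   # the 10x per year Anthropic planned for
observed = quarterly_factor(80)  # the 80x annualized rate Amodei described

print(f"planned:  about {planned:.2f}x per quarter")
print(f"observed: about {observed:.2f}x per quarter")
print(f"demand vs. plan after one quarter: about {observed / planned:.2f}x")
```

On that reading, the plan allowed for demand growing about 1.8 times in a quarter while actual demand grew about 3 times, leaving usage roughly 1.7 times above plan after a single quarter, which is consistent with the compute difficulties he describes.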

Dreaming is the most novel of the three features and the one Anthropic is most eager to distinguish from conventional memory systems. While the company launched agent memory earlier this year — allowing Claude to retain preferences and context within and across individual sessions — dreaming works at a higher level of abstraction. It is a scheduled process that reviews an agent’s past sessions and memory stores, extracts patterns across them, and curates those memories so agents improve over time. It surfaces insights that no single agent session could see on its own: recurring mistakes, workflows that multiple agents converge on independently, and preferences shared across a team of agents.

Alex Albert, who leads research product management at Anthropic, explained the concept in an interview at the conference. He described dreaming as analogous to how people within organizations create skills after working through a task. “They might do a workflow with Claude, and at the end of that workflow, after they’ve iterated and zigzagged a little bit, they want to record that path from A to B,” Albert said. “A very similar thing is happening with dreaming — instead of you manually creating the skill from your experience working with Claude, the model is doing it, so it has that same context for a future session.”

Crucially, dreaming does not modify the underlying model weights. “We’re not changing the model itself through dreaming — it’s not doing updates to the weights or anything like that,” Albert said. Instead, the agent writes learnings as plain-text notes and structured “playbooks” that future sessions can reference, making the entire process observable and auditable by humans. When asked about the trust implications of agents consolidating their own knowledge, Albert acknowledged that “there is a level of trust that you need to place” but noted that all memories are inspectable and that smarter models are getting progressively better at managing this process. “They’re learning to write better notes for their future self,” he said.
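To make the idea concrete, here is a minimal sketch of a consolidation pass of the kind described above. It is an illustration only, not Anthropic's implementation: `SessionLog`, `consolidate`, and `render_playbook` are hypothetical names, and exact string matching stands in for the pattern extraction a model would actually perform.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class SessionLog:
    """Hypothetical record of one agent session (not an Anthropic data type)."""
    agent: str
    notes: list[str] = field(default_factory=list)


def consolidate(sessions: list[SessionLog], min_sessions: int = 2) -> list[str]:
    """Return notes that recur in at least `min_sessions` distinct sessions.

    Assumption: exact string matching replaces the summarising and
    generalising that a model would do in a real system.
    """
    seen = Counter()
    for session in sessions:
        # Count each note once per session so one chatty run cannot dominate.
        for note in set(session.notes):
            seen[note] += 1
    recurring = [note for note, count in seen.items() if count >= min_sessions]
    # Most widely shared first; ties broken alphabetically for stable output.
    return sorted(recurring, key=lambda n: (-seen[n], n))


def render_playbook(title: str, entries: list[str]) -> str:
    """Write the playbook as plain text so a human can read and audit it."""
    return "\n".join([title] + [f"- {entry}" for entry in entries])


if __name__ == "__main__":
    # Invented sample notes, loosely themed on the keynote demo.
    sessions = [
        SessionLog("navigator", ["slow the final descent", "check fuel before landing"]),
        SessionLog("navigator", ["slow the final descent"]),
        SessionLog("detector", ["check fuel before landing", "avoid crater rims"]),
    ]
    print(render_playbook("Descent playbook", consolidate(sessions)))
```

Because the output is ordinary text, it can be diffed, reviewed, or edited by a person before any future session reads it, which is the auditability property Albert emphasized.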

During the keynote, the Anthropic team demonstrated all three features live on stage using a fictional aerospace startup called “Lumara” that needed to autonomously land drones on the moon for resource mining. The team configured a multi-agent system with three specialists — a commander agent responsible for overall mission success, a detector agent that identified high-quality landing sites, and a navigator agent that handled safe drone flight and landing — and defined a success rubric requiring soft landings, clear ground, and enough fuel reserves for a return trip to Earth.

An initial simulation across six hypothetical landing sites produced strong but imperfect results. To improve, the presenters triggered a dreaming session directly from the Claude Developer Console. Overnight, the dreaming agent reviewed all past simulation sessions and wrote a detailed descent playbook — a comprehensive set of heuristics drawn from patterns across multiple mission runs. When the team ran a new simulation the following morning with the dreaming-derived playbook in memory, the results improved meaningfully on the sites that had previously underperformed.

“All we had to do was just have Caitlin press a button,” said Angela Jiang, Head of Product for the Claude Platform, referring to her colleague on stage. “All dreaming.”

The demo illustrated how the three features compose in practice. Multi-agent orchestration split the complex task across specialists with independent context windows. Outcomes provided the rubric against which a separate grader agent evaluated each run. And dreaming extracted lessons across those runs to improve future performance, forming what Anthropic describes as a continuous improvement loop that requires no human intervention between iterations.

The outcomes feature, now in public beta, gives developers a way to define what success looks like using a rubric — a structural framework, a presentation standard, a brand voice, or any other set of criteria — and then lets the agent iterate toward that standard autonomously. What makes outcomes architecturally distinctive is its separation of concerns. When an agent completes its work, a separate grader agent evaluates the output against the developer-defined rubric in its own independent context window. Because the grader operates in a fresh context, it is not influenced by the working agent’s reasoning or accumulated biases from the session.

When the grader identifies gaps between the output and the rubric, it pinpoints specifically what needs to change, and the working agent takes another pass. This loop continues until the rubric criteria are met — without a human needing to review each attempt.
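The grade-and-revise loop can be sketched in a few lines. This is a hedged illustration, not the Claude platform's API: `work` and `grade` are hypothetical callables standing in for model calls, and the `max_rounds` cap is an added assumption so the sketch cannot loop forever.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Verdict:
    passed: bool
    feedback: list[str]  # specific gaps between the output and the rubric


# Both callables are stand-ins for model calls; neither is a real API.
Worker = Callable[[str, list[str]], str]      # (task, feedback) -> output
Grader = Callable[[str, list[str]], Verdict]  # (output, rubric) -> verdict


def iterate_to_rubric(task: str, rubric: list[str], work: Worker,
                      grade: Grader, max_rounds: int = 5) -> tuple[str, int]:
    """Revise until the grader passes the output or the round budget runs out."""
    feedback: list[str] = []
    for round_number in range(1, max_rounds + 1):
        output = work(task, feedback)
        # The grader sees only the output and the rubric, never the worker's
        # reasoning, which mirrors the "fresh context" idea in the article.
        verdict = grade(output, rubric)
        if verdict.passed:
            return output, round_number
        feedback = verdict.feedback
    raise RuntimeError(f"rubric not met after {max_rounds} rounds")


def toy_work(task: str, feedback: list[str]) -> str:
    """Toy worker: append whatever the grader said was missing."""
    return " ".join([task] + feedback)


def toy_grade(output: str, rubric: list[str]) -> Verdict:
    """Toy grader: a rubric item is met if its text appears in the output."""
    missing = [item for item in rubric if item not in output]
    return Verdict(passed=not missing, feedback=missing)


if __name__ == "__main__":
    print(iterate_to_rubric("report", ["summary", "sources"], toy_work, toy_grade))
```

Keeping the grader's inputs down to the output and the rubric is the design choice that matters here: nothing from the working session can leak into the evaluation.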

Albert described Anthropic’s broader verification strategy as employing “more test time compute, more models thinking about a problem for longer, to check over the work of another.” He acknowledged that having a model check its own work raises reasonable questions, but said a fresh context window reviewing completed work consistently outperforms asking the same long-running thread to identify its own bugs. “You will get higher success if you give that output to a fresh Claude and say, ‘what bugs do you see?’” he said. “There is still something to the attention” that degrades over very long sessions — a limitation he said Anthropic is actively working to fix in future models.

The approach mirrors strategies already in use at GitHub. Mario Rodriguez, Chief Product Officer at GitHub, described during a separate talk at the conference how Copilot uses a similar advisor pattern with Claude models — pairing a smaller, cheaper model as an executor with a larger model as a mentor. When the smaller model encounters a problem beyond its capability, it calls the larger model for guidance, then continues executing on its own. Rodriguez said the approach delivers near-Opus-level intelligence at significantly lower cost, and that GitHub inserts critique models at three specific points in the coding workflow: after drafting a plan, after a complex implementation, and after writing tests but before running them.
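The advisor pattern Rodriguez described can be sketched as a simple escalation loop. The code below is an assumption-laden illustration, not GitHub's or Anthropic's implementation: `executor` and `mentor` are hypothetical callables standing in for the smaller and larger model, and returning `None` is an invented convention for "this step is beyond me."

```python
from typing import Callable, Optional

# Hypothetical stand-ins for model calls; not a GitHub or Anthropic API.
Executor = Callable[[str, Optional[str]], Optional[str]]  # (step, hint) -> result, or None if stuck
Mentor = Callable[[str], str]                             # step -> guidance


def run_with_advisor(steps: list[str], executor: Executor,
                     mentor: Mentor) -> tuple[list[str], int]:
    """Run each step on the cheap executor, consulting the mentor only when stuck."""
    results: list[str] = []
    escalations = 0
    for step in steps:
        result = executor(step, None)
        if result is None:
            # The executor signalled it is out of its depth: ask for guidance,
            # then let the executor finish the step itself.
            escalations += 1
            result = executor(step, mentor(step))
            if result is None:
                raise RuntimeError(f"step failed even with guidance: {step}")
        results.append(result)
    return results, escalations


def toy_executor(step: str, hint: Optional[str]) -> Optional[str]:
    """Toy executor: gives up on 'hard' steps unless it has a hint."""
    if step.startswith("hard") and hint is None:
        return None
    return f"done: {step}"


def toy_mentor(step: str) -> str:
    """Toy mentor: returns generic guidance for any step."""
    return f"split '{step}' into smaller parts"


if __name__ == "__main__":
    print(run_with_advisor(["easy a", "hard b"], toy_executor, toy_mentor))
```

The escalation counter is a rough proxy for cost: the fewer steps that reach the mentor, the closer the run stays to the smaller model's price.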

Multi-agent orchestration, the third feature moving to public beta, allows a lead agent to decompose a large task into subtasks and delegate each one to a specialist agent — each with its own model, system prompt, tools, and independent context window. Every step in the process is traceable in the Claude Console, showing which agent did what, in what order, and why.

The design gives each sub-agent an isolated context, which Anthropic says produces better results than having a single agent attempt to hold all the complexity in one thread. “Each sub-agent has its own independent thread and context window,” the keynote presenters explained. “This is very intentional — we found that by splitting the work and then merging the results, we get better outcomes.”

Albert offered his own heuristic for when multi-agent architectures make sense versus sticking with a single thread. “Parallel agents are better for investigation,” he said — situations where there is a lot of context that will ultimately be discarded. “If you’re trying to answer a specific question, you don’t need all the search results from the areas where it didn’t find the answer. You just need the answer.” He described spinning up disposable sub-agents for specific retrieval tasks and bringing only the result back to the main thread. Increasingly, he said, the model itself will decide when to parallelize. “In the future, you won’t really care if it’s one agent or multi-agent or whatever’s happening. You just have a Claude that you’re talking to, and it will deploy the right architecture automatically.”
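A rough sketch of the delegation pattern follows, assuming nothing about the real platform: `Specialist` and `delegate` are hypothetical names, each `run` callable stands in for a model call, and threads stand in for whatever parallelism the service actually uses. The point it illustrates is that each sub-agent works in a private context and only its final answer returns to the lead.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable


@dataclass
class Specialist:
    """Hypothetical sub-agent description; `run` stands in for a model call."""
    name: str
    system_prompt: str
    run: Callable[[list[str]], str]  # private context -> final answer


def delegate(subtasks: dict[str, str],
             specialists: dict[str, Specialist]) -> dict[str, str]:
    """Give each subtask to its specialist and return only the final answers."""

    def run_one(name: str) -> str:
        agent = specialists[name]
        # Each sub-agent starts from its own context list, so intermediate
        # material from one agent never reaches another agent or the lead.
        context = [agent.system_prompt, subtasks[name]]
        return agent.run(context)

    names = list(subtasks)
    with ThreadPoolExecutor() as pool:
        # map() preserves input order, so answers line up with names.
        answers = list(pool.map(run_one, names))
    return dict(zip(names, answers))


def toy_detector(context: list[str]) -> str:
    """Toy sub-agent: does scratch work in its own context, returns one answer."""
    context.append("scratch: compared several candidate sites")  # discarded
    return "site 3"


if __name__ == "__main__":
    team = {"detector": Specialist("detector", "Find landing sites.", toy_detector)}
    print(delegate({"detector": "pick a landing site"}, team))
```

The scratch note appended inside `toy_detector` never leaves the function, which is the disposable-context behavior Albert described for retrieval tasks.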

The three features arrive as part of a broader platform push that Anthropic framed throughout the conference as closing “the gap between what AI can do and what it’s actually doing for people.” Ami Vora, Anthropic’s Chief Product Officer, set the theme in her opening keynote, noting that while model capabilities are advancing on an exponential curve, most organizations are still adopting AI on a linear path.

Dianne Penn, who leads product for Anthropic’s research team, described the company’s measure of progress as “task horizon” — how long an AI agent can work autonomously while improving the quality of its deliverables. “This time last year, models could work for minutes,” she said. “Now, most of us have agents running for hours on end. Tomorrow, we’ll have agents that are proactive, always on, and know what to work on without losing the frame.”

The event also included several infrastructure announcements designed to help developers keep pace. Anthropic said it is doubling its five-hour rate limits for Pro, Max, Team, and Enterprise plans, and raising API rate limits considerably. The company announced a partnership with SpaceX to use the full capacity of its Colossus data center to expand compute availability — a direct response to the demand crunch Amodei described.

All three features are built into Claude Managed Agents, which launched in public beta on April 8 as an opinionated harness that bundles best practices including memory, tool integration, and action handling. Anthropic says teams using Managed Agents have shipped 10x faster than those building their own agent infrastructure from scratch. Albert described the platform using an operating system analogy: “With managed agents, you don’t need to think about all the technicalities of how you set up the surrounding system,” he said. “You’re building an application for Macs — you don’t want to go have to re-implement every detail of macOS.”

The competitive implications are significant. As AI agent platforms from OpenAI, Google, and others compete for developer adoption, Anthropic is betting that production reliability — not just raw model intelligence — will determine which platform wins enterprise budgets. The dreaming feature in particular stakes out new territory: while other platforms offer memory and tool use, the idea of agents systematically reviewing their own histories to extract reusable knowledge goes further toward the kind of continuously improving systems that enterprises need before delegating high-stakes work.

The conference showcased companies already operating at that scale. Mercado Libre, Latin America’s largest e-commerce platform, has 23,000 engineers running Claude Code, has reviewed more than 500,000 pull requests with human oversight, and is aiming for 90% autonomous coding by the third quarter of this year. Shopify has deployed Claude Code across not just engineering but design, product, and data science teams.

But it was Dario Amodei who articulated the most expansive vision for where all of this leads. He described a progression from single agents to multiple agents to whole organizational intelligence — from “a team of smart people in a room” to what he called “a country of geniuses in the data center.” And he reiterated a prediction he made roughly a year ago: that 2026 would see the first billion-dollar company run by a single person. “Hasn’t quite happened yet,” he said. “But we’ve got seven more months.”

Dreaming is available now in research preview. Outcomes and multi-agent orchestration are in public beta and available to all developers on the Claude platform. Whether seven months is enough time for a solo founder to build a billion-dollar business remains an open question — but after Tuesday, they have a few more tools to try.

The Australian Cyber Security Center (ACSC) is warning organizations of an ongoing malware campaign using the ClickFix social engineering technique to distribute the Vidar Stealer info-stealing malware.

ClickFix is a social engineering attack technique that tricks users into executing malicious commands, usually through fake CAPTCHA or browser verification prompts displayed on compromised or malicious websites.

In most cases the victim is talked into running PowerShell commands that bypass security controls and deliver malware, usually info-stealers.

Australian organizations and infrastructure entities are being targeted in attacks that involve compromised WordPress websites that redirect to malicious payloads.

Users visiting these websites are shown a fake Cloudflare verification or CAPTCHA prompt that instructs them to copy and manually execute a malicious PowerShell command on their system, which leads to a Vidar Stealer infection.

“The Australian Signals Directorate’s Australian Cyber Security Center (ASD’s ACSC) has observed ClickFix-associated activity leveraging WordPress-hosted infrastructure to distribute the Vidar Stealer malware,” reads the agency’s advisory.

Vidar Stealer is an information-stealing malware family and malware-as-a-service (MaaS) operation that emerged in late 2018.

It gradually became a popular choice among cybercriminals for its cost-effectiveness, ease of deployment, and broad data theft capabilities. It targets browser passwords, cookies, cryptocurrency wallets, autofill information, and system details.

It has been observed in ClickFix attacks, promoted through Windows fixes, TikTok videos, and GitHub. Last year, the developer released a new version with upgraded capabilities.

ACSC notes that Vidar deletes its executable after launching on the infected device and then operates from system memory, reducing forensic artifacts.

It retrieves a command-and-control (C2) address via “dead-drop” URLs using public services like Telegram bots and Steam profiles, a tactic that has been widely used in the past but which remains effective.

ACSC recommends that organizations restrict PowerShell execution and implement application allow-listing to reduce the risk from these attacks.

WordPress site administrators are also advised to apply available security updates for themes and plugins, and to remove any they no longer use.

ACSC’s security bulletin provides indicators of compromise (IoCs) for these attacks, allowing organizations to set up defenses or detect intrusions.
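As a rough illustration of how published indicators might be put to use, the sketch below checks log lines against a set of domains. Everything in it is an assumption: the function name is hypothetical, the hostname pattern is deliberately loose, and the sample domain uses the reserved `.example` suffix rather than any real indicator from the bulletin.

```python
import re

# Loose hostname pattern; adequate for a sketch, not a full RFC-grade parser.
HOST_PATTERN = re.compile(r"\b(?:[a-z0-9-]+\.)+[a-z]{2,}\b", re.IGNORECASE)


def find_ioc_hits(log_lines: list[str],
                  ioc_domains: set[str]) -> list[tuple[int, str]]:
    """Return (line_number, host) for each hostname that matches a listed domain."""
    wanted = {domain.lower() for domain in ioc_domains}
    hits: list[tuple[int, str]] = []
    for number, line in enumerate(log_lines, start=1):
        for host in HOST_PATTERN.findall(line):
            host = host.lower()
            # Match the listed domain itself or any subdomain of it.
            if any(host == d or host.endswith("." + d) for d in wanted):
                hits.append((number, host))
    return hits


if __name__ == "__main__":
    # Placeholder data only; real indicators come from the advisory itself.
    logs = [
        "GET http://cdn.bad.example/payload.ps1",
        "GET https://example.org/index.html",
    ]
    print(find_ioc_hits(logs, {"bad.example"}))
```

A production deployment would more likely load the indicators into existing proxy, DNS, or EDR tooling; the sketch only shows the matching logic.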


The official announcement came on Wednesday via a dedicated Nintendo Direct livestream. Star Fox (2026) will launch on June 25 on the Switch 2, marking at least the fifth time the classic title has been remade or remastered by Nintendo over the past three decades.


