Tech

Miami startup Subquadratic claims 1,000x AI efficiency gain with SubQ model; researchers demand independent proof.

A little-known Miami-based startup called Subquadratic emerged from stealth on Tuesday with a sweeping claim: that it has built the first large language model to fully escape the mathematical constraint that has defined — and limited — every major AI system since 2017.

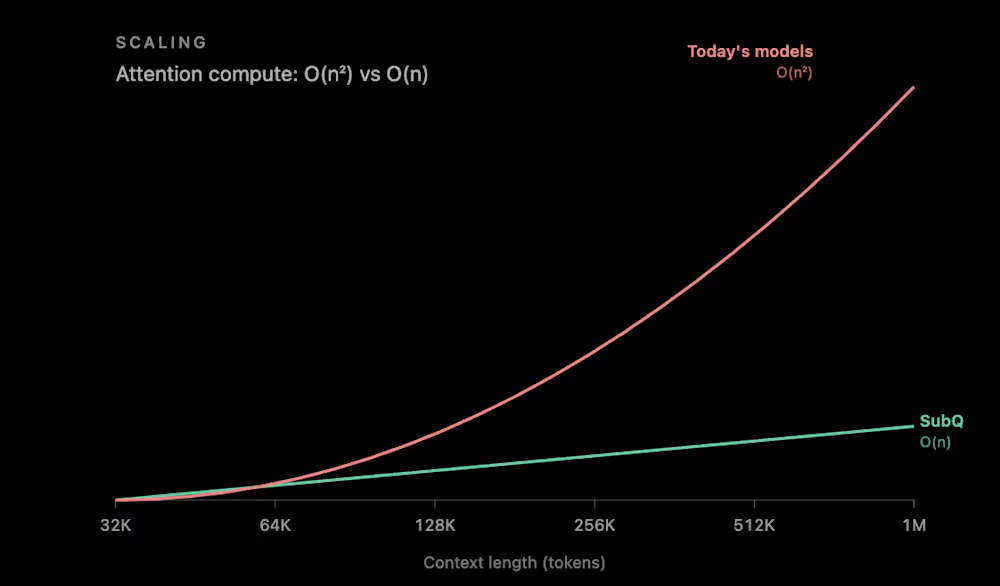

The company claims its first model, SubQ 1M-Preview, is the first LLM built on a fully subquadratic architecture — one where compute grows linearly with context length. If that claim holds, it would be a genuine inflection point in how AI systems scale. At 12 million tokens, the company says, its architecture reduces attention compute by almost 1,000 times compared to other frontier models — a figure that, if validated independently, would dwarf the efficiency gains of any existing approach.

The company is also launching three products into private beta: an API exposing the full context window, a command-line coding agent called SubQ Code, and a search tool called SubQ Search. It has raised $29 million in seed funding from investors including Tinder co-founder Justin Mateen, former SoftBank Vision Fund partner Javier Villamizar, and early investors in Anthropic, OpenAI, Stripe, and Brex. The New Stack reported that the raise values the company at $500 million.

The numbers Subquadratic is publishing are extraordinary. The reaction from the AI research community has been, to put it mildly, mixed — ranging from genuine curiosity to open accusations of vaporware. Understanding why requires understanding what the company claims to have solved, and why so many prior attempts to solve the same problem have fallen short.

The quadratic scaling problem has shaped the economics of the entire AI industry

Every transformer-based AI model — which includes virtually every frontier system from OpenAI, Anthropic, Google, and others — relies on an operation called “attention.” Every token is compared against every other token, so as inputs grow, the number of interactions — and the compute required to process them — scales quadratically. In plain terms: double the input size, and the cost doesn’t double. It quadruples.

This relationship has shaped what gets built and what doesn’t. The industry standard is 128,000 tokens for many AI models and up to 1 million tokens for frontier cloud models such as Claude Sonnet 4.7 and Gemini 3.1 Pro.

Even at those sizes, the cost of processing long inputs becomes punishing. The industry built an elaborate stack of workarounds to cope. RAG systems use a search engine to pull a small number of relevant results before sending them to the model, because sending the full corpus isn’t feasible. Developers layer retrieval pipelines, chunking strategies, prompt engineering techniques, and multi-agent orchestration systems on top of models — all to route around the fundamental constraint that the model itself can’t efficiently process everything at once.

Subquadratic’s argument is that these workarounds are expensive, brittle, and ultimately limiting. As CTO Alexander Whedon told SiliconANGLE in an interview, “I used to manually curate prompts and retrieval systems and evals and conditional logic to chain together the workflows. And I think that that is kind of a waste of human intelligence and also limiting to the product quality.”

Subquadratic’s fix is deceptively simple: stop doing the math that doesn’t matter

The company’s approach, called Subquadratic Sparse Attention or SSA, is built on a straightforward premise: most of the token-to-token comparisons in standard attention are wasted compute. Instead of comparing every token to every other token, SSA learns to identify which comparisons actually matter and computes attention only over those positions. Crucially, the selection is content-dependent — the model decides where to look based on meaning, not on fixed positional patterns. This allows it to retrieve specific information from arbitrary positions across a very long context without paying the quadratic tax.

The practical payoff scales with context length — exactly the inverse of the problem it’s trying to solve. According to the company’s technical blog, SSA achieves a 7.2x prefill speedup over dense attention at 128,000 tokens, rising to 52.2x at 1 million tokens. As Whedon put it: “If you double the input size with quadratic scaling laws, you need four times the compute; with linear scaling laws, you need just twice.” The company says it trained the model in three stages — pretraining, supervised fine-tuning, and a reinforcement learning stage specifically targeting long-context retrieval failures — teaching the model to aggressively use distant context rather than defaulting to nearby information, a subtle failure mode that quietly degrades performance in existing systems.

Three benchmarks paint a strong picture, but what they leave out may matter more

On the surface, SubQ’s benchmark numbers are competitive with or superior to models built by organizations spending billions of dollars. On SWE-Bench Verified, it scored 81.8% compared to Opus 4.6’s 80.8% and DeepSeek 4.0 Pro’s 80.0%. On RULER at 128,000 tokens, a standard benchmark for reasoning over extended inputs, SubQ scored 95% — edging out Claude Opus 4.6 at 94.8%. On MRCR v2, a demanding test of multi-hop retrieval across long contexts, SubQ posted a third-party verified score of 65.9%, compared with Claude Opus 4.7 at 32.2%, GPT-5.5 at 74%, and Gemini 3.1 Pro at 26.3%.

But several details warrant scrutiny. The benchmark selection is narrow — exactly three tests, all emphasizing long-context retrieval and coding, the precise tasks SubQ is designed for. Broader evaluations across general reasoning, math, multilingual performance, and safety have not been published. The company says a comprehensive model card is “coming soon.”

According to The New Stack, each benchmark model was run only once due to high inference cost, and the SWE-Bench margin is, as the company’s own paper acknowledges, “harness as much as model.” In benchmark methodology, single runs without confidence intervals leave room for variance. There is also a significant gap between SubQ’s research results and its production model. On MRCR v2, the company reported a research score of 83 — but the third-party verified production model scored 65.9. That 17-point gap between the lab result and the shipping product is notable and largely unexplained.

Subquadratic also told SiliconANGLE that on the RULER 128K benchmark, SubQ scored 95% accuracy at a cost of $8, compared with 94% accuracy and about $2,600 for Claude Opus — a remarkable cost claim. But the company has not publicly disclosed specific API pricing, making it impossible to independently verify the cost-per-task comparisons.

The AI research community’s verdict ranges from ‘genuine breakthrough’ to ‘AI Theranos’

Within hours of the announcement, the AI research community erupted into a debate that crystallized around a single question: Is this real?

AI commentator Dan McAteer captured the binary mood in a widely shared post: “SubQ is either the biggest breakthrough since the Transformer… or it’s AI Theranos.” The comparison to the infamous blood-testing fraud company may be unfair, but it reflects the scale of the claims being made. Skeptics zeroed in on several pressure points. Prominent AI engineer Will Depue initially noted that SubQ is “almost surely a sparse attention finetune of Kimi or DeepSeek,” referring to existing open-source models.

Whedon confirmed this on X, writing that the company is “using weights from open-source models as a starting point, as a function of our funding and maturity as a company.” Depue later escalated his criticism, writing that the company’s O(n) scaling claims and the speedup numbers “don’t seem to line up” and called the communication “either incredibly poorly communicated or just not real.”

Others raised structural questions. One developer noted that if SubQ truly reduces compute by 1,000x and costs less than 5% of Opus, the company should have no trouble serving it at scale — so why gate access through an early-access program? Developer Stepan Goncharov called the benchmarks “very interesting cherry-picked benchmarks,” while another commenter described them as “suspiciously perfect.”

But not everyone was dismissive. AI researcher John Rysana pushed back on the Theranos framing, writing that the work is “just subquadratic attention done well which is very meaningful for long context workloads,” and that “odds of it being BS are extremely low.” Linus Ekenstam, a tech commentator, said he was “extremely intrigued to see the real-world implications” particularly for complex AI-powered software.

Magic.dev made strikingly similar claims two years ago — and then went quiet

Perhaps the most pointed critique of SubQ’s launch comes not from its specific claims but from recent history. Magic.dev announced a 100-million-token context-window model in August 2024, with a claimed 1,000x efficiency advantage, and raised roughly $500 million on the strength of those claims. As of early 2026, there is no public evidence of LTM-2-mini being used outside Magic.

The parallels are uncomfortable. Both companies claimed massive context windows. Both touted roughly 1,000x efficiency gains. Both targeted software engineering as their primary use case. And both launched with limited external access.

The broader research landscape reinforces the caution. Kimi Linear, DeepSeek Sparse Attention, Mamba, and RWKV all promised subquadratic scaling, and all faced the same problem: architectures that achieve linear complexity in theory often underperform quadratic attention on downstream benchmarks at frontier scale, or they end up hybrid — mixing subquadratic layers with standard attention and losing the pure scaling benefits.

A widely cited LessWrong analysis argued that these approaches “are all better thought of as ‘incremental improvement number 93595 to the transformer architecture’” because practical implementations remain quadratic and “only improve attention by a constant factor.”

Subquadratic is directly aware of this history. Its own technical blog specifically addresses each prior approach — fixed-pattern sparse attention, state space models, hybrid architectures, and DeepSeek Sparse Attention — and argues that SSA avoids their tradeoffs. Whether it actually does remains an empirical question that only independent evaluation can settle.

A five-time founder, a former Meta engineer, and $29 million to prove the doubters wrong

The team behind the claims matters in evaluating them. CEO Justin Dangel is a five-time founder and CEO with a track record across health tech, insurancetech, and consumer goods, and his companies have scaled to hundreds of employees, attracted institutional backing, and reached liquidity. CTO Alexander Whedon previously worked as a software engineer at Meta and served as Head of Generative AI at TribeAI, where he led over 40 enterprise AI implementations.

The team includes 11 PhD researchers with backgrounds from Meta, Google, Oxford, Cambridge, ByteDance, and Adobe. That is a credible collection of talent for an architecture-level research effort. But neither co-founder has published foundational AI research, and the company has not yet released a peer-reviewed paper. The technical report is listed as “coming soon.”

The funding profile is unusual for a company making frontier AI claims. Subquadratic raised $29 million at a reported $500 million valuation — a steep price for a seed-stage company with no publicly available model, no peer-reviewed research, and no disclosed revenue. The investor base, led by Tinder co-founder Mateen and former SoftBank partner Villamizar, skews toward consumer tech and growth investing rather than deep technical AI research. The company is not open-sourcing its weights but plans to offer training tools for enterprises to do their own post-training, and has set a 50-million-token context window target for Q4.

The real test for SubQ isn’t benchmarks — it’s whether the math survives independent scrutiny

Strip away the marketing language and the social media drama, and the underlying question Subquadratic is asking is genuinely important: Can AI systems break free of quadratic scaling without sacrificing the quality that makes them useful?

The stakes are enormous. If attention can be made truly linear without degrading retrieval and reasoning, the economics of AI shift fundamentally. Enterprise applications that today require elaborate retrieval pipelines — processing entire codebases, contracts, regulatory filings, medical records — become single-pass operations. The billions of dollars currently spent on RAG infrastructure, context management, and agentic orchestration become partially redundant.

Whedon’s willingness to engage publicly with technical criticism — posting a technical blog within hours of pushback — suggests a team that understands it needs to show its work, not just describe it. And to its credit, the company acknowledged openly that it builds on open-source foundations and that its model is smaller than those at the major labs.

Every frontier model in 2026 advertises a context window of at least a million tokens, but almost none of them are actually great at making use of all that information. The gap between a nominal context window and a functional one — between what a model accepts and what it reliably reasons over — remains one of the most important unsolved problems in AI. Subquadratic says it has closed that gap. If independent evaluation confirms that claim, the implications would ripple far beyond a single startup’s valuation. If it doesn’t, the company joins a growing list of long-context promises that sounded revolutionary on launch day and unremarkable six months later.

In computing, every fundamental constraint eventually falls. When it does, the breakthrough never comes from the direction the industry expected. The question hanging over Subquadratic is whether a team of 11 PhDs and a $29 million seed round actually found the answer that has eluded organizations spending thousands of times more — or whether they just found a better way to describe the problem.

Tech

Elon Musk’s Last-Ditch Effort to Control OpenAI: Recruit Sam Altman to Tesla

A few months before Elon Musk left OpenAI’s board of directors in February 2018, he tried to recruit Sam Altman to join a “world-class AI lab” within Tesla. Musk went as far as offering the OpenAI CEO a Tesla board seat, according to emails and testimony presented in federal court on Wednesday during the Musk v. Altman trial. The emails were shown to a jury during the cross examination of Shivon Zilis, a former OpenAI adviser and board member who is also the mother of four of Musk’s children.

Musk’s core claim in this lawsuit is that Altman and OpenAI president Greg Brockman effectively stole a nonprofit, using the $38 million Musk invested to create a private company worth more than $800 billion today. On Wednesday, lawyers for Musk showed video depositions of former OpenAI CTO Mira Murati and former OpenAI board member Helen Toner, to raise concerns over Altman’s alleged history of deceit.

OpenAI’s legal team has responded to Musk’s claims by questioning his true motives, arguing that the Tesla CEO has had “sour grapes” ever since he failed to assume control of OpenAI in 2017. He has since started a rival, for-profit AI lab. OpenAI’s lawyers used Zilis’ cross-examination on Wednesday to bring up evidence about Musk’s alleged plans to subvert OpenAI, and tried to suggest Zilis was privy to those plans. As it pertains to this case, one of Zilis’ most important roles at OpenAI was acting as a conduit between Musk and Altman.

In a text from February 2018 presented as evidence, Zilis—then an OpenAI adviser, as well as a Neuralink and Tesla executive—asked Altman, “Did you think through a B Corp subsidiary of Tesla?”

“There was documentary evidence that, at several points, Mr. Musk had contemplated seeking to join Sam Altman to the board and offered that option,” said OpenAI lawyer William Savitt outside the courthouse on Wednesday. “It was part of Mr. Musk’s effort to corrupt OpenAI and absorb it into Tesla … he was trying to get Altman to abandon the mission and be part of Tesla.”

In an email to Tesla’s VP of communications, Sarah O’Brien, from November 2017, Zilis shared a draft of an FAQ page about an event Tesla was planning to hold at the NeurIPS AI conference. “The purpose of this event is to share that Tesla is building a world leading AI lab(?) which will rival the likes of Google / DeepMind and Facebook AI Research,” the drafted FAQ read. The document continues, “One major issue for Tesla is when people think of Elon and AI, they think of OpenAI.”

Another part of the FAQ labeled “Who?” lists several Tesla executives who were planned to lead the unit, including Musk and Andrej Karpathy, a former OpenAI researcher. Altman’s name is listed next to Musk’s with two question marks beside it.

The FAQ is marked up with notes including that Altman could be a moderator for the NeurIPS event, which “could be a forcing function for Sam to commit to TeslaAI.” Another note reads that Tesla AI’s “strategy had yet to be defined and some of it may be deeply proprietary.”

Zilis testified on Wednesday that Altman never ended up joining Tesla, and the AI lab and the NeurIPS launch event never came to fruition. She also testified that Musk reached out to Karpathy about recruiting him to Tesla. Savitt told reporters that Zilis’ testimony on Karpathy is “directly contrary to what Mr. Musk told the jury just a few days ago.” Earlier in this trial, Musk testified that Karpathy left OpenAI of his own volition.

Tech

AI vision agents use 45x more tokens than APIs in benchmark

AI

For AI agents, seeing is expensive

Businesses deploying AI agents to automate computer usage may be spending far more money than necessary if those agents try to emulate human visual interaction.

Reflex, an enterprise application platform, recently set out to compare vision agents with API agents.

A vision agent in this context refers to an AI agent that mimics human interaction by relying on image processing and optical character recognition to operate an application. In this instance, that’s Claude Sonnet navigating a web app user interface via browser-use 0.12, a tool for automated web browser operation.

An API agent here refers to Claude Sonnet interacting with a web app via tools and APIs. The agent calls the same handling mechanisms that the UI calls and receives structured data in response, rather than a web page screenshot that must be analyzed.

“Two agents target the same running app: one drives the UI via screenshots and clicks, the other calls the app’s HTTP endpoints directly,” explained Palash Awasthi, head of growth at Reflex, in a blog post. “Same Claude Sonnet, same pinned dataset, same task. The interface is the only variable.”

The following task was presented to each agent: “A customer named Smith has complained about a recent order. Find the Smith with the most orders, accept all their pending reviews, and mark their most-recent ordered order as delivered.”

According to Awasthi, the API agent completed the task in just eight calls. It listed pending customer reviews, accepted them, and marked the order delivered.

The vision agent, however, found only one of four pending reviews because it failed to scroll the page where it would have seen the three other reviews hidden off-screen.

Analyzing and interpreting a web page visually is fundamentally more challenging for an AI model than interacting with API calls and tools.

When the prompt was revised to help the vision model perform better, the vision agent still took ~17 minutes, significantly longer than the API agent at ~20 seconds. The vision agent also consumed a lot more tokens – ~45x more.

The company made its test available as a benchmark for those interested in trying to reproduce the results.

Awasthi said that the cost difference between the two approaches reflects the architecture – vision agents need to see and seeing is costly – each screenshot demands thousands of input tokens to process.

Anthropic estimates that processing a 1000×1000-pixel image with Claude Sonnet 4.6 uses about 1,334 tokens.

The vision agent expended around 500,000 input tokens and around 38,000 output tokens to complete its task. The API agent used around 12,150 input tokens and around 934 output tokens.

For Awasthi, the lesson is that while vision agents may be necessary for interacting with apps you don’t control, inwardly focused agents should target APIs. ®

Tech

OpenAI could launch its AI phone sooner than expected

OpenAI’s rumoured smartphone might be arriving earlier than anyone expected.

According to analyst Ming-Chi Kuo, the company is now aiming to mass-produce its first “AI agent phone” in the first half of 2027. This is a significant shift from earlier timelines, with earlier estimates pointing closer to 2028.

The accelerated push suggests OpenAI is getting more serious about hardware, and quickly. Kuo says the move is partly tied to a potential IPO. A flagship device could help strengthen its pitch to investors. Additionally, there is growing competition in the emerging “AI phone” space.

Details are still thin, but the early picture is interesting. The device will run on a custom chip based on MediaTek’s Dimensity 9600, potentially built on TSMC’s next-gen process. It could also feature dual AI processors. These processors would handle things like vision and language tasks simultaneously. This is a hint at how OpenAI wants this to function less like a traditional smartphone and more like an always-on assistant.

One of the headline upgrades is the image signal processor. This will improve how the AI “sees” the world through the camera with enhanced HDR and real-time sensing. Combined with fast memory and storage, the device will have tighter security isolation. Therefore, the aim seems to be a device that can process context continuously, rather than waiting for users to open apps.

That’s the bigger shift here. Kuo believes AI agent phones will move away from the usual app-based experience. Instead, they will let users complete tasks through a context-aware interface. This is something OpenAI is uniquely exploring. This is especially true if it controls both hardware and software.

There’s still some overlap with OpenAI’s other hardware plans. The company is also reportedly working on a screen-less AI device with Jony Ive, now expected around 2027. This comes alongside longer-term ideas like smart glasses and earbuds. If all of this lands, OpenAI could quickly find itself competing with the likes of Apple across multiple product categories.

For now, the AI phone remains unofficial. But if this timeline holds, OpenAI’s biggest hardware play might be closer than expected.

Tech

Myst and Riven remakes head to PlayStation, Xbox, and Microsoft Store

On the PS5, players will have access to HDR and 4K gameplay as well as PS5 Pro enhancements and support for ray tracing. HDR / 4K and ray tracing are also supported on the Xbox and Microsoft Store versions, we’re told.

Read Entire Article

Source link

Tech

Opinion: The myth of Washington’s tax burden, by the numbers

[Editor’s Note: Sales consultant and former startup founder Ron Davis is a candidate for the Washington state Legislature, who has written for GeekWire previously on startup sales hiring practices. GeekWire publishes guest opinion pieces representing a range of perspectives. The views expressed are those of the author.]

If you tune into the local conversation about Washington state taxes on LinkedIn, you might think that Olympia is on the verge of snuffing out Seattle’s regional economy with extreme taxation. There are exceptions, but most of these posts are long on rhetoric, short on rigor. Given Washington’s pressing needs, we should do better. And given our community’s capacity for data-driven thinking, we can do better.

Contrary to popular myths, our taxes are relatively low, haven’t exploded skyward, and are nowhere near the point of creating serious damage to the commercial sphere.

Washington taxes are low

Let’s consider why a conservative economist recently called Washington a “tax haven, like the Cayman Islands,” when it comes to the rich. First, we only recently even reached the halfway point among states when it comes to taxes as a share of its economy, and our taxes are actually down from a few years ago. We have lower taxes than every other deep blue state, and nine red states too, including Kansas, Kentucky, Utah and West Virginia.

Second, our taxes disproportionately coddle the rich, while simultaneously stiffing working families. Until recently, Washington was the most regressively taxed state in the union, which meant that the poor pay a much bigger share of their income than the rich. Thanks to the tax on capital gains windfalls over $250,000 in a year, we are now only the second most regressively taxed — just above Florida.

Currently, the top 1% of Washington earners pay 4% of their income in state and local taxes — less than either Texas or Idaho. The national average is 7.2%, nearly twice as much as Washington. In Massachusetts, California and New York, the top 1% pay 9%, 12% and 14% of their income. On the other end of the spectrum, the bottom fifth of earners in the Evergreen State pay through the nose — 13.8% of their income. The national average is 11.4%. Low income families ARE overtaxed relative to their peers in other states, but this does not figure into the discussions on LinkedIn.

Let’s remember the national and global context as well. United States taxes, including state and local, are far lower than most rich countries — 32nd out of 38 in the OECD. We pay 25%, while the rich Danes, Dutch, Japanese and Austrians, or the fast-growing Spanish and Poles, all pay 35%-43%. No wonder our life expectancy, inequality, healthcare coverage and infrastructure are so poor! The only countries* with taxes lower than ours in the OECD are Costa Rica, Turkey, Colombia, Chile and Mexico.

In other words, the notion of a tax burden — especially for the rich, especially in Washington state — is a myth.

Washington’s budget growth is sustainable

One often hears hyperventilating claims about the growth in Washington’s budget. It is true that if Washington’s budget had grown at exactly the rate as the population and general inflation combined over the last decade, it would be 29% lower. But as any public finance economist can tell you, that information is close to useless.

Cost disease means that services inflation in both the public and private sectors is higher than overall inflation. Since government work is service-intensive, government costs go up faster than general inflation. Governments build stuff, too — so they buy lots of land and land also gets expensive faster in growing economies. This is why the cost of keeping government services flat usually increases much faster than inflation. Ergo, economists instead look at how much of our state income (GDP) taxes take up.

You might think we’ve run up spending in the last few years at an unsustainable rate. Think again. In 2019, taxes were 10.6% of our economy. Today they are 8.47%. Perhaps we should look back to the depths of recession-era austerity, in 2010? It was 9.9%. Taxes as a share of our economy have shrunk. They are flat from 25 years ago, and down from the 1970s, 1980s and 1990s.

And if you think GDP numbers are somehow distorted or are not representative of individual experiences, the same analysis holds true of personal income. Taxes are lower, and our economy boomed when our taxes were higher.

The millionaire tax won’t hurt the economy or prompt a mass exodus

In conversations online, for all the talk about tax flight and comparative disadvantage vibes, there is surprisingly little discussion in our community about the real, measured, economic impact of higher taxes on the wealthy. So what does the cold, hard, evidence say?

Well, setting aside the question of whether retaining every last wealthy person is the highest goal of public policy, the evidence is pretty darn clear that the wealthy on balance are nowhere near as price-sensitive as we are told. In fact, millionaires move less than everyone else.

Researchers estimate that eliminating all tax differences between the states would reduce national millionaire migrations by only about 250 families per year — out of roughly 12,000 total. Regions like ours are “sticky,” as the product people say.

Moreover, studies suggest that when the wealthy do move, they mostly move to other high-tax jurisdictions! Certainly some people cite taxes when they move to Wyoming and some people buy extra homes and play domiciling games to avoid taxes. But the macro, net effect appears to be pretty negligible.

Unfortunately, studies of millions of people seem to have little impact on people’s beliefs when “everyone they know” is “thinking” about moving.

So let’s put this in terms of some specific stories. New Jersey raised taxes on the rich and Massachusetts raised taxes on millionaires. New York raised taxes on the rich twice, and so did California. In every one of those cases, businesspeople predicted an economic apocalypse, and talked about how the people they knew were leaving. Then the number of rich people in all those places increased markedly. In fact, in California — where taxes went up a lot — their “market share” of U.S. millionaires even increased.

It’s almost as if “the economy” is an immensely complex emergent phenomena, instead of a simple equation where prosperity is perfectly inversely correlated with rich people’s taxes or commentator’s vibes about them.

It’s a serious problem that these kinds of facts so rarely figure into pronouncements about the imminent demise of our local economy every time we do something like raise the minimum wage, labor standards, or taxes. While there is plenty of room for discussion about the right kind and level of taxation, it is time we stopped having a discussion that is just devoid of basic empiricism.

Washington taxes aren’t high, haven’t spiked, and raising them on the wealthy doesn’t risk economic ruin. This community built world-changing companies by following evidence wherever it leads. It’s time we demand the same standard from our political discourse.

* Ireland is officially on this list, but its tax rate is seriously distorted, because GDP is massively inflated by companies shifting profits there on paper for tax purposes. Ireland has addressed this distortion with a gross national income number and this puts their true tax rate between 35% and 40%.

Note: I used these population numbers, budget history and this inflation calculator.

Tech

Best Record Cleaning Options for Vinyl Collectors: Exit to Vintage Street

One of the enduring myths of audiophilia is the concept of the “end-game” system. No matter the quality of the system you have, there always seems to be a missing piece.

“If I can only add a (insert next-level piece of equipment here) to my system I will be forever content and can die a happy (wo)man.”

I have achieved what I thought was end-game several times – for a few days or several weeks – but each time a new siren song emerges. Klipsch Forté, Lenco L70 and Sansui AU-777 are calls of the past now silenced. Current objects calling with varying urgency include blue-baffle JBLs, concentric-driver Tannoys, Thorens TD-124 or 125, and the Sansui AU-111.

With a planned move back to Japan in two or three years, the question of whether any of these will be achieved is on hold. I am in an enforced end-game state, knowing I will sell everything I have before I move, and will start again when I land on the other side of the Pacific.

Upgrading my Cleaning Game

This continual desire to upgrade and improve the system is about more than just equipment. It applies also to furniture, storage, cables, accessories, and record cleaning.

Four years ago, as a fairly new vinyl collector with a few hundred records in my collection, I wrote about budget cleaning solutions that did the job and kept the wallet (and Mrs. Audiolove) happy.

Today, with nearly 1,800 albums, I’ve become pickier about cleaning; I won’t cut corners and am more willing to drop some coin on quality. I’ve replaced records that didn’t cut the mustard (including grey-market EU “Public Domain” reissues) with modern audiophile or early pressings, and I want to show these the respect they deserve so they play clean and clear for my remaining decades.

And so ladies and gentlemen I present my 2026 cleaning arsenal, with medium-of-choice dependent on dirt levels and apparent vinyl condition.

Dust and Static – Ramar Berlin Record Brush (Red)

Ramar record brushes are made with a combination of carbon fibre (six double rows) and two rows of goat hair to penetrate every groove and remove fine dust and larger dirt particles while dissipating electrostatic charges.

The body and protective case of my brush are made with cherry wood, fashioned from a single block. The case protects the brush fibres from damage and dirt. A range of handle and case styles are available, including other wood variants and metal finishes.

Brushes come with a natural felt cleaning pad for removing any dust or dirt caught between the fibres during use, and Ramar offers after-market renewal and repair services.

The Ramar brush replaced my $20 Audio-Technica anti-static brush, and the difference was obvious. It feels far more substantial and better made, and it delivers noticeably better dust and static removal. At €360, it had better be excellent, and it is. For most records, this is the only cleaning solution I need, which makes the expense easier to justify.

Minor Dirt – GrooveWasher Hardwood Record Cleaning Kit

GrooveWasher makes a variety of cleaning accessories and kits, including record and stylus fluids and brushes. They also make anti-static record sleeves.

This was my first cleaner “upgrade,” replacing the cheapie DiscWasher. The look and feel of the two cleaners are similar, but the heft of the GrooveWasher’s wooden handle and the cleaning performance of the black Terry microfibre pad are a step up in quality.

The Hardwood Kit costs around $50 and comes with a 4 oz spray bottle of G2 high tech record cleaning fluid. This combination effectively removes minor grime like errant fingerprints or other sticky dirt that the Ramar can’t tackle. I use this brush for a first clean of used records that look to be in very good condition, and every few plays for records I’ve had in the collection for some time.

Over time the plush terry cloth pad does wear down and flatten out, and I’m currently eyeing up a replacement pad. The cleaning pads are easily removable, and replacements adhere solidly to the wood handle by way of Velcro fasteners.

Embedded Dirt and Persistent Crackle – HumminGuru HG01 Ultrasonic

The HumminGuru HG01 ultrasonic replaced a Spin Clean Mk. II a couple of years ago. The Spin Clean manual water-and-brush system worked well initially, but for some reason had begun jamming, even after replacing the brushes. While it still did a good job of cleaning, using it became frustrating and an upgrade was called for.

I investigated various vacuum and ultrasonic systems and the HumminGuru seemed to offer a good balance between results and financial outlay (Yes, I’m willing to drop some coin, but my pockets are not bottomless).

Ultrasonic cleaning uses high-frequency sound waves to create cavitation bubbles in water, requiring zero physical contact with the record. The HumminGuru automates the process of both cleaning and drying. All that’s required of the user is to add distilled water to the bath area on top, insert the record vertically into the cleaning slot, and hit a few buttons to set cleaning and drying time and start the cleaning process.

The record spins in the bath for several minutes while the ultrasonics remove dirt, the bath auto-drains into a removable water receptacle at the bottom of the machine, and then dual warm air fans dry the record. After 7-10 minutes, remove the record and it’s ready to store or play.

Water can be re-used to clean multiple records, and HumminGuru recommends using a few drops of alcohol-free cleaning formula to reduce surface tension and facilitate better penetration into the record grooves and to enhance drying in humid environments. Adaptors are available for 7” and 10” records.

I’ve been very impressed with results from the HumminGuru, with big improvements in grading quality post cleaning. I also noted improvements for records already cleaned with the old Spin Clean (which was no slouch, even with the jamming issues I experienced).

At time of writing the HumminGuru HG01 costs around $400 direct from the manufacturer, which is down significantly from my original purchase price.

Since I purchased my HG01, Humminguru has introduced an advanced model (the Nova) which features quieter cleaning, faster drying and automatic adjustment for different record sizes. The Nova runs about $700.

HumminGuru also introduced an Automatic Water Dispenser ($159.99 at Amazon) unit which eliminates my one gripe with the HG01 (and Nova), that being the somewhat inelegant process of removing the water receptacle to refill the water bath. The water dispenser costs around $160, and is very definitely on my to-buy list (and an exception to enforced end-game status).

The Bottom Line

And there we have the three arrows in my cleaning quiver, with all needs and bases covered. A final mention goes to the Ramar brush, which elicits frequent comments on Instagram regarding what many see as an exorbitant price (about the same as the HumminGuru).

No, there are no moving parts. Yes, it’s just a brush. But what a brush! As mentioned, this is my main cleaner and so on a per-use basis the cost is not so high. Factor in craftsmanship and precision – hand crafted, grain-matched wooden handle and holder, exquisitely layered brush fibres – and an obviously time-intensive build process, and it all makes sense. In my mind the juice is worth the squeeze.

What do you think? Leave your thoughts in the comments, or message me on the ‘Gram at @audioloveyyc.

Where to buy:

Related Reading:

Tech

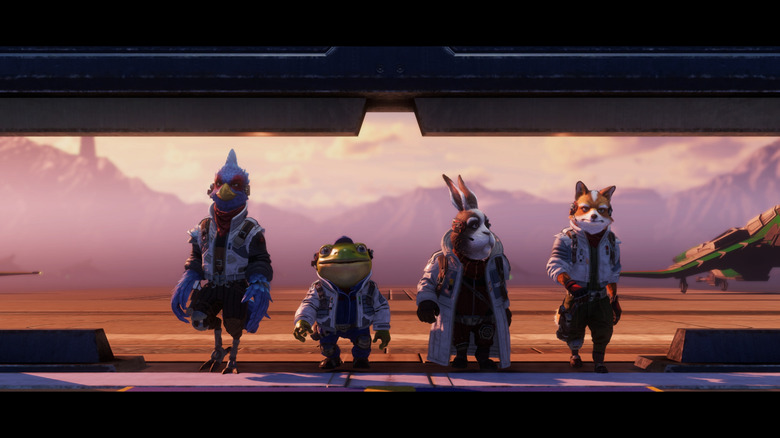

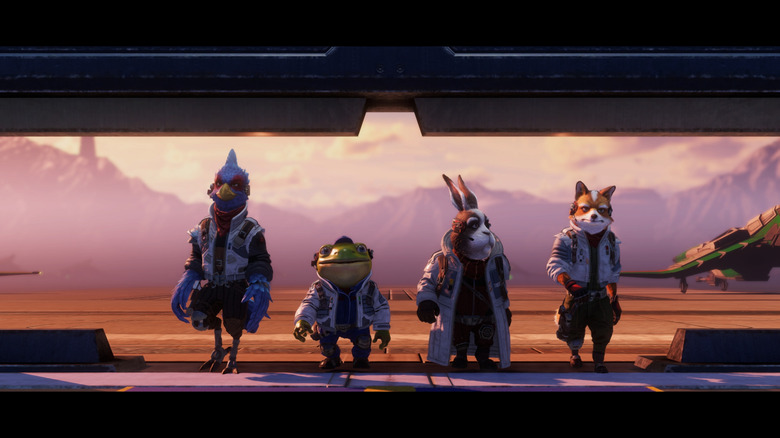

A Star Fox Remake Is Heading To Switch 2 On June 25

Nintendo held a last-minute Star Fox Direct live stream on May 6 to reveal Star Fox, a remake of the N64 classic coming exclusively to the Nintendo Switch 2. It’s due to come out on June 25, complete with new gameplay modes and online play.

Star Fox features reimagined visuals and redesigned characters, and everything seems to have a touch of Fantastic Mr. Star Fox about it. Nintendo calls it “a cinematic take” in its press release, and to that end, the game features new cutscenes with full voice acting and fresh mission briefings between levels. Star Fox offers the campaign mode with easy, normal and expert difficulties (though expert has to be unlocked by playing really well), plus a challenge mode with new objectives, and a new battle mode. Battle mode is a four-on-four dogfighting arena with three stages: There’s a control-point game on Corneria, a crystal-collection challenge on Fichina and a fetch quest against space pirates in Sector Y. You can team up in private matches or join the open queue, and Star Fox will support GameShare locally and online.

The flow and layout of the game’s stages will be just like you remember, as will the banter among Fox, Falco, Slippy, Peppy and the gang. Star Fox features local co-op across the full campaign, with one player steering as the pilot and the other as the gunner. It’ll be compatible with the revamped N64 controller and Joy-Con 2 mouse controls. Since it’s a Switch 2 special, Star Fox will also allow players to appear as any of the main crew members with interactive avatars in GameChat.

Nintendo provided a peek of the new Star Fox in action, complete with the Arwing flying, braking, boosting and barrel rolling, and the Blue-Marine submersible blasting through squids. The stream ended with a look at the game’s prologue, which featured all sorts of high-flying anthropomorphic animals, including Fox’s dad James McCloud and Pigma.

Tech

A 20-minute pitch wins Indian startup Pronto backing from Lachy Groom

Lachy Groom, one of Silicon Valley’s most closely watched solo investors, decided to back Indian startup Pronto just 20 minutes into his first meeting with its 24-year-old founder.

The meeting, which took place in February through a mutual connection, led to Groom investing $20 million in Pronto as an extension of its Series B round, valuing the startup at $200 million after the investment — double its valuation just over two months earlier, as TechCrunch had previously reported. The deal came together within weeks, bringing the solo investor on board as the Bengaluru-based startup expands to meet growing demand for on-demand home services in India.

Groom said he was drawn to Pronto’s ambition to build what he called the world’s largest platform for organizing domestic labor, starting with India’s vast and largely unstructured workforce. “The work underneath that is genuinely hard, and most attempts in adjacent categories have struggled with the operational discipline,” he said, adding that Pronto founder Anjali Sardana (pictured above) and her team were operating “at a level I haven’t seen elsewhere in this space.”

Before founding Pronto in 2025, Sardana worked at Bain Capital and venture firm 8VC, where she gained early exposure to investing and high-growth startups. The startup connects households with workers for everyday tasks such as cleaning and basic home services.

The introduction was arranged through Paul Hudson, founder of Glade Brook Capital, who connected Groom and Sardana during her trip to San Francisco earlier this year. Glade Brook has backed startups founded by both: Pronto, which Sardana leads, and Physical Intelligence, where Groom is a co-founder. Hudson and Groom have also backed Indian quick-commerce startup Zepto.

Sardana said Groom’s investment approach is heavily founder-driven. “He indexes two things. One is the founder, and that’s 95% of it. If he loves the founder, then he will invest,” she told TechCrunch, adding that the rest comes down to the scale and potential of the business.

Groom’s bet comes as a clutch of startups in India race to build instant home services platforms, a category that is seeing rapid adoption among urban households as more consumers turn to on-demand help for everyday tasks.

Techcrunch event

San Francisco, CA

|

October 13-15, 2026

The opportunity is significant. A recent Bank of America note, reviewed by TechCrunch, estimates the instant home services market in India could grow into a $15 billion to $18 billion industry by the end of the decade, as companies including Pronto, Snabbit, and Urban Company’s InstaHelp compete for share in the fast-growing category.

Competition is intensifying, with heavy capital inflows and aggressive pricing, particularly to attract first-time users. Bank of America estimates that Snabbit and Urban Company’s InstaHelp each account for about 40% of the market, while Pronto has around a 20% share, even as it scales rapidly. The category is expected to remain “burn-heavy” over the next two to three years.

Despite trailing larger rivals, Pronto has been scaling rapidly, growing from around 18,000 bookings a day to 26,000 in just over a month. The startup is focused on driving repeat usage, betting that turning occasional demand into frequent, habit-driven usage will be key to winning the category, with its top 10% of users accounting for about 40% of bookings.

This growth has also brought challenges, particularly in building out supply. Pronto has expanded its network of service workers to 6,500, up from 1,440 in January. But Sardana said demand continues to outpace supply, making forecasting and capacity management key challenges as the startup grows.

When you purchase through links in our articles, we may earn a small commission. This doesn’t affect our editorial independence.

Tech

Microsoft Edge Stores Passwords In Plaintext In RAM

Longtime Slashdot reader UnknowingFool writes: Security researcher Tom Joran Sonstebyseter Ronning has found that Microsoft Edge stores passwords in plaintext in RAM. After creating a password and storing it using Edge’s password manager, Ronning found that he could dump the RAM and recover his password which was stored in plaintext. Part of the issue is Edge loads all passwords to all sites upon a single verification check, even if the user was not visiting a specific site. This is very different from Chrome, which only loads passwords for specific websites when challenged for the site’s password. Also, Chrome will delete the password from memory once the password has been filled. Edge does not delete the passwords from memory once they are used.

Microsoft downplayed the risk noting access would require control over a user’s PC like a malware infection: “Access to browser data as described in the reported scenario would require the device to already be compromised,” Microsoft said. Ronning countered that it was possible to dump passwords for multiple users using administrative privileges for one user to view the passwords for other logged-on users. “Design choices in this area involve balancing performance, usability, and security, and we continue to review it against evolving threats,” Microsoft said. “Browsers access password data in memory to help users sign in quickly and securely — this is an expected feature of the application. We recommend users install the latest security updates and antivirus software to help protect against security threats.”

Tech

Hackers used Daemon Tools' own website to silently install backdoors on thousands of PCs for nearly a month

Cybersecurity researchers at Kaspersky found that the attack compromised multiple versions of Daemon Tools, from 12.5.0.2421 through 12.5.0.2434. What made the campaign particularly difficult to detect was that the malicious installers were distributed directly from the official website and signed with legitimate digital certificates belonging to AVB Disc Soft, the…

Read Entire Article

Source link

-

NewsBeat3 days ago

NewsBeat3 days agoChannel 5 – All Creatures Great and Small series 7 new post

-

Tech5 days ago

Tech5 days agoTrump’s 25% EU auto tariff breaches Turnberry Agreement that also covers semiconductors and digital trade

-

Sports5 days ago

Sports5 days agoPaul Scholes issues Marcus Rashford reality check as agreement emerges over Man United star

-

Entertainment5 days ago

Entertainment5 days agoMet Gala 2026 Rumored Guest List Is Turning Heads

-

Business6 days ago

Business6 days agoStrait of Hormuz Blockade Persists Amid US-Iran Standoff, Sending Oil Prices Soaring

-

Entertainment5 days ago

New on Prime Video in May 2026 — Full List of Movies and Shows

-

Entertainment7 days ago

Entertainment7 days agoCelebrities Who Are Attending the 2026 Met Gala Event

-

Sports5 days ago

Sports5 days agoCavaliers vs. Raptors Game 6 live score, updates, highlights from 2026 NBA playoffs first-round series

-

Sports5 days ago

Sports5 days agoDavid Benavidez responds to team Canelo saying the fight will never happen

-

Entertainment5 days ago

Entertainment5 days agoKylie Jenner Hit With Second Lawsuit From Ex-Housekeeper

-

Sports5 days ago

Sports5 days agoIPL 2026: ‘Love you darling’- Hardik Pandya’s reaction to MS Dhoni steals the show |Watch | Cricket News

-

Entertainment5 days ago

Entertainment5 days agoYoung and the Restless Next Week: Cane Arrested & Matt’s Deadly New Scheme!

-

Tech7 days ago

Tech7 days agoMark Zuckerberg Says Meta Is Working On AI Agents For Personal And Business Use

-

Tech6 days ago

Tech6 days agoMeta ends Sama contract after Kenyan workers report seeing intimate footage from Ray-Ban smart glasses users

-

Sports7 days ago

Sports7 days agoStalemate in Madrid as resilient Arsenal Blunt Atletico Madrid in semi-final clash – Sports

-

Sports5 days ago

Sports5 days agoPlane crash in Wimberley, Texas kills 5 pickleball players at tournament

-

Entertainment4 days ago

New Netflix Movies in May 2026 — My Top 3 Picks to Stream

-

Sports6 days ago

Sports6 days agoWhat Preity Zinta Said After Punjab Kings’ First Defeat Of IPL 2026

-

Sports5 days ago

Sports5 days agoLeBron James adds to his legacy as Lakers eliminate dysfunctional Rockets

-

Tech7 days ago

Tech7 days agoGM brings Google Gemini to four million vehicles

You must be logged in to post a comment Login