Tech

4 Tips From Consumer Reports For Saving Money On Your Energy Bill

Owning a home isn’t cheap (and we’re not even talking about the cost to get the keys in the first place). Electricity prices have reached their highest levels in a decade, and many households are feeling the strain. Worse, even the most modest projections tell us energy expenses will only continue to rise going forward. Even as homes get more and more efficient with better appliances, smarter lighting, and more efficient insulation, energy bills just keep on climbing.

Today, the average U.S. household spends about $2,000 per year on energy. But that average can be much higher depending on things like climate or home size. Over a lifetime, that’s tens of thousands spent. Luckily, Consumer Reports has published some good advice here over the years. When taken together, their tips show meaningful savings don’t have to come from major renovations or expensive upgrades. Instead, homeowners simply have to make smarter decisions and change small habits. With Consumer Reports’ suggestions, you just might cut your energy bills by hundreds of dollars annually.

Invest in an energy audit

Spending money to save money might not sound like the most practical suggestion, but think about it: A single energy audit can go a long way to reduce your utility costs for a lifetime of homeownership. Consumer Reports says energy auditors can help you get a better understanding of where energy is being wasted. That way, you never have to waste time or money on fixes that only scratch the surface.

Professional auditors have the tools to find air leaks, insulation gaps, poorly sealed areas, even indoor air pollutants or carbon monoxide leaks. From there, you can get to work addressing all the areas for improvement in your place… and hopefully stop overpaying for your HVAC, natural gas, and electrical usage in the process. You might have to spend an average of around $400 for the audit, depending on the size of your home, but it’ll all be worth it when you see those energy bills start dropping.

It’s not always how you use energy, it’s when

When looking for ways to lower your energy bills, plenty of households only focus on how much energy they consume. However, timing can be just as important. Some energy companies offer time-of-use pricing plans, which charge different rates depending on demand. Using electricity will cost you more during peak hours, but you’ll spend less during the off-peak periods to make up for it.

By enrolling in one of these plans and shifting your most energy-consuming tasks (like dishes or laundry) to off-peak hours, Consumer Reports says you can shave a pretty meaningful amount off the bill. That could be thousands annually. Of course, it’s important to note that signing up without adjusting your habits can actually lead to higher bills. It has to be a two-step approach. First enroll, then adjust. Otherwise, you’re adding insult to injury by eating up tons of energy during peak surge pricing.
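
To see why enrolling without shifting habits can backfire, here is a rough Python sketch. The rates and the monthly usage figures are made-up numbers chosen for illustration; they do not come from Consumer Reports or from any real utility plan.

```python
# Hypothetical rates in dollars per kWh; real plans vary by utility.
FLAT_RATE = 0.16
PEAK_RATE = 0.30
OFF_PEAK_RATE = 0.10


def monthly_cost_flat(peak_kwh, off_peak_kwh):
    """Cost on a flat-rate plan: every kWh costs the same."""
    return (peak_kwh + off_peak_kwh) * FLAT_RATE


def monthly_cost_tou(peak_kwh, off_peak_kwh):
    """Cost on a time-of-use plan: peak kWh cost more, off-peak kWh cost less."""
    return peak_kwh * PEAK_RATE + off_peak_kwh * OFF_PEAK_RATE


# The same 900 kWh per month, split differently between peak and off-peak hours.
unchanged_habits = (500, 400)  # most usage still lands in peak hours
shifted_habits = (200, 700)    # laundry, dishes and similar tasks moved off-peak

flat = monthly_cost_flat(*unchanged_habits)
tou_unchanged = monthly_cost_tou(*unchanged_habits)
tou_shifted = monthly_cost_tou(*shifted_habits)

print(f"Flat plan:             ${flat:.2f}")
print(f"TOU, habits unchanged: ${tou_unchanged:.2f}")
print(f"TOU, usage shifted:    ${tou_shifted:.2f}")
```

With these assumed numbers, the time-of-use plan costs more than the flat plan when habits stay the same and less once the heavy loads move off-peak. The actual break-even point depends entirely on a household's real rates and usage.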

Drafts matter more than you think

If you live in an older home or apartment, you may have gotten used to draftiness. Alternatively, if you live in a newer place, you might assume draftiness isn’t an issue for you. Neither attitude is going to help your energy bill in the long run. Consumer Reports says even the most efficient heating and cooling systems will struggle if a home isn’t properly sealed. Air leaks around windows, doors, attics, and basements let all that cool air out, meaning your HVAC system has to work harder (and consume more energy) to chill your place. If you’re closing doors, you’re hurting the HVAC even more.

Sealing drafts and improving insulation can reduce energy costs by at least $27 per month, according to Consumer Reports estimates. Over the course of a year, that adds up to more than $300 in savings. Again, that’s the least you’re likely to save. Savings can only go up from there. Don’t forget about your HVAC filters, either. Clogged filters force systems to work harder, which drives your energy bill up higher. Keeping those filters clean can save you another $11 per month on average.
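
The annual figures follow from simple multiplication. The short Python sketch below uses the two monthly numbers quoted above; adding them together assumes the two savings stack without overlapping, which is a simplification and not something the estimates state.

```python
# Monthly savings as quoted above (Consumer Reports estimates).
SEALING_SAVINGS_PER_MONTH = 27  # sealing drafts and improving insulation
FILTER_SAVINGS_PER_MONTH = 11   # keeping HVAC filters clean

MONTHS_PER_YEAR = 12

sealing_per_year = SEALING_SAVINGS_PER_MONTH * MONTHS_PER_YEAR
filters_per_year = FILTER_SAVINGS_PER_MONTH * MONTHS_PER_YEAR

# Assumption: the two savings are independent and simply add up.
combined_per_year = sealing_per_year + filters_per_year

print(sealing_per_year)   # 324
print(filters_per_year)   # 132
print(combined_per_year)  # 456
```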

Small changes that yield big savings

The little things add up when it comes to energy consumption. It might not feel like you’re doing anything when you raise your thermostat by a degree or two or turn on a fan before blasting the A/C, but you’d be surprised. Consumer Reports says these little tweaks can save you a lot more than you realize. For example, lowering the temperature setting on your water heater from 140 degrees Fahrenheit to 120 degrees can cut annual energy costs by up to 22 percent. That’s hundreds of dollars for a difference of just 20 degrees. They say adding an insulating jacket to the tank can cut energy use by another 7 to 16 percent, as well.

Nobody’s saying you have to completely overhaul your home. Instead, it’s all about understanding the common places where energy is being wasted and making the kinds of small improvements that deliver the most meaningful results. With these steps in mind, just wait and see how much you can save.

Tech

Hackers used Daemon Tools' own website to silently install backdoors on thousands of PCs for nearly a month

Cybersecurity researchers at Kaspersky found that the attack compromised multiple versions of Daemon Tools, from 12.5.0.2421 through 12.5.0.2434. What made the campaign particularly difficult to detect was that the malicious installers were distributed directly from the official website and signed with legitimate digital certificates belonging to AVB Disc Soft, the…


Tech

Trump’s Anti-Migration Purge Is Breaking Up Military Families, Screwing Afghan Allies

from the MAGA-just-means-hating-American dept

The content of their character was never up for consideration. Under Donald Trump, the only thing that matters is the color of their skin. That’s why almost every single person granted asylum since Trump took office has been white. That’s why Trump has been asking (out loud!) why we keep getting migrants from “shithole” countries (like those located in South America, Africa, and Latin America) rather than blond-haired, blue-eyed expats from Scandinavian countries whose residents’ lives would become noticeably worse if they chose to move to the US.

The president wraps himself in the flag, delivers a lot of garbled Team USA jingoism, and routinely proclaims we have the best military in the world. But even the people most directly responsible for keeping the US on top of the military game aren’t allowed to remain here if they’re not white.

Jose Serrano, an active duty soldier who served three tours in Afghanistan, said immigration agents arrested his wife April 14 as they attended an appointment with immigration services to take steps toward her permanent residency.

“A person opened the door, escorted us through the hallway, and at the end of the hallway, my wife got arrested,” Serrano said. “Arrested without any order, any warrant … They took away my wife. They don’t tell me anything.”

On top of all this awfulness, this incident shows ICE isn’t actually shifting away from immigration court arrests despite (1) officials saying otherwise, and (2) more importantly, ICE itself supposedly letting officers know that court arrests like these are not allowed under current ICE policy.

The regular awfulness is this: the Trump administration is willing to attack its own military if it means racking up a few more arrests and deportations:

[L]ast April, DHS eliminated a 2022 policy that considered military service of an immediate family member to be a “significant mitigating factor” in deciding whether or not to pursue immigration enforcement. The administration’s new policy states that “military service alone does not exempt aliens from the consequences of violating U.S. immigration laws.”

It’s not just this nation’s relationship with its own military that’s being permanently damaged by Trump’s bigoted war on non-white people. It’s also any future relationships we might have in countries where we’re engaged in combat. When the US began its full withdrawal from Afghanistan, it promised protections to Afghans who worked with the military to provide intelligence or otherwise aided the US in the decades-long war.

That’s all being tossed aside by Trump because he and his administration simply don’t like non-white people.

After halting a U.S. resettlement program for Afghans who helped the American war effort, President Trump is in talks to send as many as 1,100 of them to the Democratic Republic of Congo, an aid worker briefed on the plan said Tuesday.

The group includes interpreters for the U.S. military, former members of the Afghan Special Operations forces and family members of American service members. More than 400 children are among them.

The Afghans have been living in limbo in Qatar for over a year. They were taken there after being evacuated by the United States for their own safety because they supported American forces during the war against the Taliban that began in 2001.

Thanks for your help. Now, go fuck yourselves. That’s the message the US is sending to people who aided the US during this war. It’s the kind of message that isn’t likely to score it any allies as it resumes hostilities in the Middle East.

This report says Trump is “in talks” with DRC to pursue this “resettlement” of Afghan allies — one the administration pursues despite the protests of the people who risked their own lives to assist the US during the Afghanistan war.

It’s hard to believe Trump is actually engaged in anything. DRC already has a refugee problem of its own.

More than 600,000 refugees, mostly from the Central African Republic and Rwanda, are currently in Congo, according to the United Nations. Human rights activists say that the country is not equipped to take in more in the midst of fighting with neighboring Rwanda that has displaced even more people because of attacks on refugee camps.

On top of this, many Afghan allies already have family members living in the United States due to previous efforts made by the Biden administration to protect those who aided the US. This forced resettlement in, well, pretty much any African country that agrees to take them divides even more families. It also demonstrates the United States is not to be trusted when it offers favors in return for assistance. All it takes is an election cycle to roll back guarantees and turn trusted allies into just another set of people being moved from “shithole country” to “shithole country” by a bunch of bigots who would rather destroy America than allow any more non-white people to become residents of what used to be the world’s “melting pot.”

At least for now, Trump has seemingly found a willing dumping ground for people he doesn’t want in this country:

On April 17, the U.S. government deported 15 people to the capital of the Democratic Republic of Congo, a deeply impoverished African country that’s been scarred by years of conflict.

The group—comprising men and women from Colombia, Ecuador and Peru—is the first to arrive as part of a secretive migration deal brokered with the Trump administration.

“They took us, they put us on a plane, and they chained us by our hands and feet,” said one Colombian man, sitting on a plastic chair in a shabby hotel near Kinshasa’s airport. The deportees didn’t know their final destination until they were on the plane, he added.

Like El Salvador, I’m sure the DRC is more than happy to take our money to take some people off our hands. And like El Salvador, I’m sure the DRC government doesn’t actually care what happens to any of these people being shoved out of DHS charter flights like so much human refuse. If the US can’t be bothered to care, why should some third party in a developing nation do anything more than allow planes to land so long as the checks keep clearing?

This is what America is now: a place where human rights, civil liberties, and basic human morality are no longer woven into the fabric of the nation. America is no longer the world’s policeman. It is now the world’s corrupt, racist sheriff.

Filed Under: afghanistan, bigotry, cruelty, dhs, ice, mass deportation, pete hegseth, trump administration, us military

Tech

Microsoft made Copilot a co-author on every VS Code project, reverted after developers revolted

A recent pull request effectively turned Copilot into a “co-author” for every programming project created in Visual Studio Code – even when the programmer behind the screen did not use Copilot at all. Users informed Microsoft that they did not like the change, criticizing the company for adding more “slop”…


Tech

Today’s NYT Connections: Sports Edition Hints, Answers for May 7 #591

Looking for the most recent regular Connections answers? Click here for today’s Connections hints, as well as our daily answers and hints for The New York Times Mini Crossword, Wordle and Strands puzzles.

Today’s Connections: Sports Edition is a tough one, but fun for movie fans. If you’re struggling with the puzzle but still want to solve it, read on for hints and the answers.

Connections: Sports Edition is published by The Athletic, the subscription-based sports journalism site owned by The Times. It doesn’t appear in the NYT Games app, but it does in The Athletic’s own app. Or you can play it for free online.

Read more: NYT Connections: Sports Edition Puzzle Comes Out of Beta

Hints for today’s Connections: Sports Edition groups

Here are four hints for the groupings in today’s Connections: Sports Edition puzzle, ranked from the easiest yellow group to the tough (and sometimes bizarre) purple group.

Yellow group hint: If the shoe fits.

Green group hint: Fore!

Blue group hint: Take me out to the ball game.

Purple group hint: Cinema titles.

Answers for today’s Connections: Sports Edition groups

Yellow group: Sneaker brands.

Green group: Golf courses to host the U.S. Open.

Blue group: Famous nicknames for MLB teams.

Purple group: Movies that contain an NFL team name.

Read more: Wordle Cheat Sheet: Here Are the Most Popular Letters Used in English Words

What are today’s Connections: Sports Edition answers?

The completed NYT Connections: Sports Edition puzzle for May 7, 2026.

The yellow words in today’s Connections

The theme is sneaker brands. The four answers are Converse, New Balance, Saucony and Under Armour.

The green words in today’s Connections

The theme is golf courses to host the U.S. Open. The four answers are Pebble Beach, Shinnecock Hills, Torrey Pines and Winged Foot.

The blue words in today’s Connections

The theme is famous nicknames for MLB teams. The four answers are Amazin’ Mets, Big Red Machine, Gas House Gang and Murderers’ Row.

The purple words in today’s Connections

The theme is movies that contain an NFL team name. The four answers are Little Giants, Raiders of the Lost Ark, Remember the Titans and The Bad News Bears.

Tech

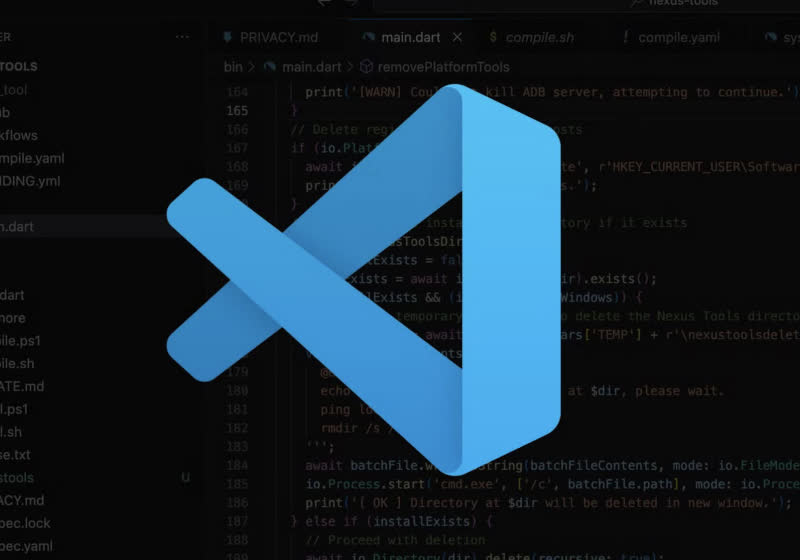

Miami startup Subquadratic claims 1,000x AI efficiency gain with SubQ model; researchers demand independent proof.

A little-known Miami-based startup called Subquadratic emerged from stealth on Tuesday with a sweeping claim: that it has built the first large language model to fully escape the mathematical constraint that has defined — and limited — every major AI system since 2017.

The company claims its first model, SubQ 1M-Preview, is the first LLM built on a fully subquadratic architecture — one where compute grows linearly with context length. If that claim holds, it would be a genuine inflection point in how AI systems scale. At 12 million tokens, the company says, its architecture reduces attention compute by almost 1,000 times compared to other frontier models — a figure that, if validated independently, would dwarf the efficiency gains of any existing approach.

The company is also launching three products into private beta: an API exposing the full context window, a command-line coding agent called SubQ Code, and a search tool called SubQ Search. It has raised $29 million in seed funding from investors including Tinder co-founder Justin Mateen, former SoftBank Vision Fund partner Javier Villamizar, and early investors in Anthropic, OpenAI, Stripe, and Brex. The New Stack reported that the raise values the company at $500 million.

The numbers Subquadratic is publishing are extraordinary. The reaction from the AI research community has been, to put it mildly, mixed — ranging from genuine curiosity to open accusations of vaporware. Understanding why requires understanding what the company claims to have solved, and why so many prior attempts to solve the same problem have fallen short.

The quadratic scaling problem has shaped the economics of the entire AI industry

Every transformer-based AI model — which includes virtually every frontier system from OpenAI, Anthropic, Google, and others — relies on an operation called “attention.” Every token is compared against every other token, so as inputs grow, the number of interactions — and the compute required to process them — scales quadratically. In plain terms: double the input size, and the cost doesn’t double. It quadruples.
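
The doubling-means-quadrupling relationship is easy to verify by counting comparisons. This is a minimal Python sketch that counts only token-to-token pairs; it ignores constant factors and everything else a real model computes.

```python
def attention_comparisons(num_tokens):
    """Pairwise comparisons when every token is compared against every token."""
    return num_tokens * num_tokens


base = attention_comparisons(1_000)
for n in (1_000, 2_000, 4_000, 8_000):
    pairs = attention_comparisons(n)
    print(f"{n:>6,} tokens -> {pairs:>12,} comparisons "
          f"({pairs // base}x the 1,000-token cost)")
```

Each doubling of the input multiplies the comparison count by four, so an input eight times longer needs 64 times as many comparisons.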

This relationship has shaped what gets built and what doesn’t. The industry standard is 128,000 tokens for many AI models and up to 1 million tokens for frontier cloud models such as Claude Sonnet 4.7 and Gemini 3.1 Pro.

Even at those sizes, the cost of processing long inputs becomes punishing. The industry built an elaborate stack of workarounds to cope. RAG systems use a search engine to pull a small number of relevant results before sending them to the model, because sending the full corpus isn’t feasible. Developers layer retrieval pipelines, chunking strategies, prompt engineering techniques, and multi-agent orchestration systems on top of models — all to route around the fundamental constraint that the model itself can’t efficiently process everything at once.
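
The retrieval step in such a workaround can be pictured with a toy example. The Python sketch below ranks text chunks by plain word overlap with the question and forwards only the top few to the model. Real RAG systems typically use embeddings or a search index instead. The function names and the sample corpus are hypothetical.

```python
def overlap_score(query, chunk):
    """Count how many distinct query words also appear in the chunk."""
    query_words = set(query.lower().split())
    chunk_words = set(chunk.lower().split())
    return len(query_words & chunk_words)


def retrieve(query, chunks, top_k=2):
    """Return the top_k chunks that share the most words with the query."""
    ranked = sorted(chunks, key=lambda c: overlap_score(query, c), reverse=True)
    return ranked[:top_k]


def build_prompt(query, chunks, top_k=2):
    """Assemble a prompt from the retrieved chunks only, not the full corpus."""
    context = "\n".join(retrieve(query, chunks, top_k))
    return f"Context:\n{context}\n\nQuestion: {query}"


# Hypothetical four-chunk corpus standing in for a much larger document set.
corpus = [
    "the billing service retries failed payments three times",
    "the login page uses single sign-on",
    "failed payments are logged to the audit table",
    "the mobile app supports dark mode",
]

print(build_prompt("how are failed payments handled", corpus))
```

Only the two payment-related chunks reach the model. Anything the ranking step misses is invisible to it, which is the brittleness the workaround carries.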

Subquadratic’s argument is that these workarounds are expensive, brittle, and ultimately limiting. As CTO Alexander Whedon told SiliconANGLE in an interview, “I used to manually curate prompts and retrieval systems and evals and conditional logic to chain together the workflows. And I think that that is kind of a waste of human intelligence and also limiting to the product quality.”

Subquadratic’s fix is deceptively simple: stop doing the math that doesn’t matter

The company’s approach, called Subquadratic Sparse Attention or SSA, is built on a straightforward premise: most of the token-to-token comparisons in standard attention are wasted compute. Instead of comparing every token to every other token, SSA learns to identify which comparisons actually matter and computes attention only over those positions. Crucially, the selection is content-dependent — the model decides where to look based on meaning, not on fixed positional patterns. This allows it to retrieve specific information from arbitrary positions across a very long context without paying the quadratic tax.
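
The general idea of content-dependent selection can be sketched in a few lines of Python. This is a generic top-k attention toy, not Subquadratic's SSA, whose details have not been published. It also scores every key before choosing, so the toy itself remains quadratic across all queries. A genuinely subquadratic method would need a cheaper way to find the positions that matter. The function names and the tiny vectors are illustrative assumptions, and the usual scaling by the square root of the dimension is omitted for brevity.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def topk_attention(query, keys, values, k):
    """Attend only to the k keys that score highest against the query."""
    scores = [dot(query, key) for key in keys]
    # Content-dependent selection: positions are picked by score, not location.
    chosen = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)[:k]
    weights = softmax([scores[i] for i in chosen])
    dim = len(values[0])
    out = [0.0] * dim
    for w, i in zip(weights, chosen):
        for d in range(dim):
            out[d] += w * values[i][d]
    return out, sorted(chosen)


# Six positions; only positions 1 and 4 point the same way as the query.
keys = [[1, 0], [0, 1], [1, 0], [1, 0], [0, 1], [1, 0]]
values = [[float(i)] for i in range(6)]
output, picked = topk_attention([0, 5], keys, values, k=2)
print(picked)  # [1, 4]
print(output)  # [2.5]
```

The query ends up attending to positions 1 and 4 regardless of where they sit in the sequence, and the other four positions contribute nothing to the output.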

The practical payoff scales with context length — exactly the inverse of the problem it’s trying to solve. According to the company’s technical blog, SSA achieves a 7.2x prefill speedup over dense attention at 128,000 tokens, rising to 52.2x at 1 million tokens. As Whedon put it: “If you double the input size with quadratic scaling laws, you need four times the compute; with linear scaling laws, you need just twice.” The company says it trained the model in three stages — pretraining, supervised fine-tuning, and a reinforcement learning stage specifically targeting long-context retrieval failures — teaching the model to aggressively use distant context rather than defaulting to nearby information, a subtle failure mode that quietly degrades performance in existing systems.
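
A back-of-the-envelope check, not taken from the company's blog, shows why the speedup would be expected to grow with context length. If dense attention costs on the order of n² and a linear method costs on the order of n, the ratio between them grows in proportion to n. The Python sketch below compares that idealized growth with the two reported speedups. It assumes "1 million" means exactly 1,000,000 tokens and ignores every part of prefill that is not attention, so an exact match should not be expected.

```python
def idealized_speedup(num_tokens, work_per_token=1):
    """Dense cost (n * n) divided by linear cost (work_per_token * n)."""
    dense_cost = num_tokens * num_tokens
    linear_cost = work_per_token * num_tokens
    return dense_cost / linear_cost


short_ctx, long_ctx = 128_000, 1_000_000

# How much the speedup should grow between the two context lengths if one
# method is purely quadratic and the other purely linear.
predicted_growth = idealized_speedup(long_ctx) / idealized_speedup(short_ctx)

# Growth implied by the reported figures: 7.2x at 128K, 52.2x at 1M.
reported_growth = 52.2 / 7.2

print(f"Idealized growth in speedup: {predicted_growth:.2f}x")
print(f"Reported growth in speedup:  {reported_growth:.2f}x")
```

Under these assumptions the idealized model predicts the speedup growing about 7.8 times, against roughly 7.25 times in the reported numbers. That is broadly in line with linear scaling, though it says nothing about whether the figures themselves hold up under independent testing.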

Three benchmarks paint a strong picture, but what they leave out may matter more

On the surface, SubQ’s benchmark numbers are competitive with or superior to models built by organizations spending billions of dollars. On SWE-Bench Verified, it scored 81.8% compared to Opus 4.6’s 80.8% and DeepSeek 4.0 Pro’s 80.0%. On RULER at 128,000 tokens, a standard benchmark for reasoning over extended inputs, SubQ scored 95% — edging out Claude Opus 4.6 at 94.8%. On MRCR v2, a demanding test of multi-hop retrieval across long contexts, SubQ posted a third-party verified score of 65.9%, compared with Claude Opus 4.7 at 32.2%, GPT-5.5 at 74%, and Gemini 3.1 Pro at 26.3%.

But several details warrant scrutiny. The benchmark selection is narrow — exactly three tests, all emphasizing long-context retrieval and coding, the precise tasks SubQ is designed for. Broader evaluations across general reasoning, math, multilingual performance, and safety have not been published. The company says a comprehensive model card is “coming soon.”

According to The New Stack, each benchmark model was run only once due to high inference cost, and the SWE-Bench margin is, as the company’s own paper acknowledges, “harness as much as model.” In benchmark methodology, single runs without confidence intervals leave room for variance. There is also a significant gap between SubQ’s research results and its production model. On MRCR v2, the company reported a research score of 83 — but the third-party verified production model scored 65.9. That 17-point gap between the lab result and the shipping product is notable and largely unexplained.

Subquadratic also told SiliconANGLE that on the RULER 128K benchmark, SubQ scored 95% accuracy at a cost of $8, compared with 94% accuracy and about $2,600 for Claude Opus — a remarkable cost claim. But the company has not publicly disclosed specific API pricing, making it impossible to independently verify the cost-per-task comparisons.

The AI research community’s verdict ranges from ‘genuine breakthrough’ to ‘AI Theranos’

Within hours of the announcement, the AI research community erupted into a debate that crystallized around a single question: Is this real?

AI commentator Dan McAteer captured the binary mood in a widely shared post: “SubQ is either the biggest breakthrough since the Transformer… or it’s AI Theranos.” The comparison to the infamous blood-testing fraud company may be unfair, but it reflects the scale of the claims being made. Skeptics zeroed in on several pressure points. Prominent AI engineer Will Depue initially noted that SubQ is “almost surely a sparse attention finetune of Kimi or DeepSeek,” referring to existing open-source models.

Whedon confirmed this on X, writing that the company is “using weights from open-source models as a starting point, as a function of our funding and maturity as a company.” Depue later escalated his criticism, writing that the company’s O(n) scaling claims and the speedup numbers “don’t seem to line up” and called the communication “either incredibly poorly communicated or just not real.”

Others raised structural questions. One developer noted that if SubQ truly reduces compute by 1,000x and costs less than 5% of Opus, the company should have no trouble serving it at scale — so why gate access through an early-access program? Developer Stepan Goncharov called the benchmarks “very interesting cherry-picked benchmarks,” while another commenter described them as “suspiciously perfect.”

But not everyone was dismissive. AI researcher John Rysana pushed back on the Theranos framing, writing that the work is “just subquadratic attention done well which is very meaningful for long context workloads,” and that “odds of it being BS are extremely low.” Linus Ekenstam, a tech commentator, said he was “extremely intrigued to see the real-world implications” particularly for complex AI-powered software.

Magic.dev made strikingly similar claims two years ago — and then went quiet

Perhaps the most pointed critique of SubQ’s launch comes not from its specific claims but from recent history. Magic.dev announced a 100-million-token context-window model in August 2024, with a claimed 1,000x efficiency advantage, and raised roughly $500 million on the strength of those claims. As of early 2026, there is no public evidence of LTM-2-mini being used outside Magic.

The parallels are uncomfortable. Both companies claimed massive context windows. Both touted roughly 1,000x efficiency gains. Both targeted software engineering as their primary use case. And both launched with limited external access.

The broader research landscape reinforces the caution. Kimi Linear, DeepSeek Sparse Attention, Mamba, and RWKV all promised subquadratic scaling, and all faced the same problem: architectures that achieve linear complexity in theory often underperform quadratic attention on downstream benchmarks at frontier scale, or they end up hybrid — mixing subquadratic layers with standard attention and losing the pure scaling benefits.

A widely cited LessWrong analysis argued that these approaches “are all better thought of as ‘incremental improvement number 93595 to the transformer architecture’” because practical implementations remain quadratic and “only improve attention by a constant factor.”

Subquadratic is directly aware of this history. Its own technical blog specifically addresses each prior approach — fixed-pattern sparse attention, state space models, hybrid architectures, and DeepSeek Sparse Attention — and argues that SSA avoids their tradeoffs. Whether it actually does remains an empirical question that only independent evaluation can settle.

A five-time founder, a former Meta engineer, and $29 million to prove the doubters wrong

The team behind the claims matters in evaluating them. CEO Justin Dangel is a five-time founder and CEO with a track record across health tech, insurtech, and consumer goods, and his companies have scaled to hundreds of employees, attracted institutional backing, and reached liquidity. CTO Alexander Whedon previously worked as a software engineer at Meta and served as Head of Generative AI at TribeAI, where he led over 40 enterprise AI implementations.

The team includes 11 PhD researchers with backgrounds from Meta, Google, Oxford, Cambridge, ByteDance, and Adobe. That is a credible collection of talent for an architecture-level research effort. But neither co-founder has published foundational AI research, and the company has not yet released a peer-reviewed paper. The technical report is listed as “coming soon.”

The funding profile is unusual for a company making frontier AI claims. Subquadratic raised $29 million at a reported $500 million valuation — a steep price for a seed-stage company with no publicly available model, no peer-reviewed research, and no disclosed revenue. The investor base, led by Tinder co-founder Mateen and former SoftBank partner Villamizar, skews toward consumer tech and growth investing rather than deep technical AI research. The company is not open-sourcing its weights but plans to offer training tools for enterprises to do their own post-training, and has set a 50-million-token context window target for Q4.

The real test for SubQ isn’t benchmarks — it’s whether the math survives independent scrutiny

Strip away the marketing language and the social media drama, and the underlying question Subquadratic is asking is genuinely important: Can AI systems break free of quadratic scaling without sacrificing the quality that makes them useful?

The stakes are enormous. If attention can be made truly linear without degrading retrieval and reasoning, the economics of AI shift fundamentally. Enterprise applications that today require elaborate retrieval pipelines — processing entire codebases, contracts, regulatory filings, medical records — become single-pass operations. The billions of dollars currently spent on RAG infrastructure, context management, and agentic orchestration become partially redundant.

Whedon’s willingness to engage publicly with technical criticism — posting a technical blog within hours of pushback — suggests a team that understands it needs to show its work, not just describe it. And to its credit, the company acknowledged openly that it builds on open-source foundations and that its model is smaller than those at the major labs.

Every frontier model in 2026 advertises a context window of at least a million tokens, but almost none of them are actually great at making use of all that information. The gap between a nominal context window and a functional one — between what a model accepts and what it reliably reasons over — remains one of the most important unsolved problems in AI. Subquadratic says it has closed that gap. If independent evaluation confirms that claim, the implications would ripple far beyond a single startup’s valuation. If it doesn’t, the company joins a growing list of long-context promises that sounded revolutionary on launch day and unremarkable six months later.

In computing, every fundamental constraint eventually falls. When it does, the breakthrough never comes from the direction the industry expected. The question hanging over Subquadratic is whether a team of 11 PhDs and a $29 million seed round actually found the answer that has eluded organizations spending thousands of times more — or whether they just found a better way to describe the problem.

Tech

The app store for robots has arrived: Hugging Face launches open-source Reachy Mini App Store with 200+ apps

There’s an app for nearly every imaginable user and use case these days, but one thing they all have in common is that they’re centered around one device: the smartphone.

That changes today as Hugging Face, the 10-year-old New York City startup best known for being the go-to place online to host and use cutting-edge, open-source AI models, agents and applications, launches a new App Store for Reachy Mini, its low-cost ($299) open-source physical robot that debuted back in July 2025 (itself the fruit of Hugging Face’s acquisition of another startup, Pollen Robotics).

The new Hugging Face Reachy Mini App Store already hosts a library of over 200 community-built applications, and Reachy Mini owners will be able to download any of these free of charge to start (unlike smartphone apps, there’s no monetization option for app creators on this store — yet).

The Reachy Mini App Store will also offer Reachy Mini owners — around 10,000 units have been sold so far since last year — an easy means of building their own custom apps for the tiny, stationary desktop robot with built-in camera eyes, speaker, and microphone, via Hugging Face’s existing, AI-powered agent called “ML Intern.”

The significance lies not just in the hardware, but in the removal of the “roboticist” barrier; for the first time, individuals without a background in engineering or coding are shipping functional robotics software in under an hour.

“Anyone can build the apps,” said Clément Delangue, CEO and co-founder of Hugging Face, in a video interview with VentureBeat. “My intuition is that more and more [AI] model builders will release on Reachy Mini as a way to test the robotics ability of new models.”

Make robots as accessible to laypeople as PCs and smartphones

The technical bottleneck in robotics has historically been the scarcity of high-quality training data.

While Large Language Models (LLMs) have mastered general-purpose coding by training on massive repositories like Microsoft’s GitHub, the volume of code specific to robotics remains “tiny” by comparison (though GitHub likely does contain the largest publicly accessible library of robotics code to date, with more than 17,000 repositories, or “repos,” dedicated to the field).

This lack of data has meant that, until now, AI agents were relatively poor at understanding the physical abstractions and firmware requirements of hardware.

Hugging Face’s solution is an agentic toolkit that acts as an intermediary. Rather than forcing a user to learn a specific robotics SDK or master the nuances of a robot’s firmware, the toolkit allows a user to describe a desired behavior in plain English—for instance, “wave when someone says good morning”.

An AI agent then handles the heavy lifting: it writes the code, tests it against the robot’s specific constraints, and ships the final package.
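The write, test, and ship loop described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Behavior` class, the `MAX_JOINT_ANGLE_DEG` limit, and the helper functions are hypothetical names invented for this example, not part of the Reachy Mini SDK or Hugging Face’s toolkit.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical limit for illustration only; not taken from any Reachy Mini spec.
MAX_JOINT_ANGLE_DEG = 90.0


@dataclass(frozen=True)
class Behavior:
    """A trigger phrase paired with the motion an agent generated for it."""
    trigger_phrase: str
    joint_angles_deg: Tuple[float, ...]


def constraint_problems(behavior: Behavior) -> List[str]:
    """Return human-readable problems; an empty list means the check passed."""
    problems = []
    if not behavior.trigger_phrase.strip():
        problems.append("trigger phrase is empty")
    for angle in behavior.joint_angles_deg:
        if abs(angle) > MAX_JOINT_ANGLE_DEG:
            problems.append(f"angle {angle} is outside the allowed range")
    return problems


def should_fire(behavior: Behavior, transcript: str) -> bool:
    """Case-insensitive substring match stands in for real speech understanding."""
    return behavior.trigger_phrase.lower() in transcript.lower()


# "Wave when someone says good morning", expressed as data an agent might emit.
wave = Behavior("good morning", (30.0, -30.0, 30.0, 0.0))
too_far = Behavior("good morning", (120.0,))

if __name__ == "__main__":
    print(constraint_problems(wave))      # passes the check
    print(constraint_problems(too_far))   # rejected before it would ship
    print(should_fire(wave, "Good morning, Reachy!"))
```

The point of the sketch is the ordering: a generated behavior is checked against hardware limits before it is packaged, so a layperson’s plain-English request never reaches the robot in an unsafe form.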

“Historically, it’s been extremely hard,” Delangue told VentureBeat of building robotics applications. “But we’ve worked really hard on the topic with a mix of open sourcing everything we do, working on the right abstractions for robotics, and making it easier for agents to understand and use it.”

The platform is model-agnostic, supporting a wide range of leading intelligence engines. Users can build apps using Hugging Face’s own ML Intern agent or leverage external models including GPT-5.5, Claude Opus 4.6, Kimi 2.6, MiniMax GM5, and DeepSeek V4 Pro.

For real-time interaction, the official conversation apps utilize OpenAI Realtime and Gemini Live. By providing these high-level abstractions, Hugging Face has collapsed the traditional “integration weeks” of robotics work into a process that takes minutes.

Low-cost Reachy Mini is a hit

In order to take advantage of the new Hugging Face Reachy Mini App Store, users are encouraged to purchase Reachy Mini, a cute desktop robot Hugging Face launched back in July 2025 as an affordable, open-source alternative to the existing, commercially available robots from the likes of Boston Dynamics, whose infamous Spot robot dog retails for around $70,000. Even Chinese competitors start at $1,900+.

In contrast, the Reachy Mini is accessibly priced for hobbyists and developers. It comes in two variants:

- Reachy Mini Lite ($299 plus shipping): A tethered version that connects via USB and uses an external computer for processing.

- Reachy Mini Wireless ($449 plus shipping): A standalone version featuring an on-board Raspberry Pi CM 4 and Wi-Fi connectivity.

Delangue said that of the 10,000 Reachy Mini units sold so far, 3,000 were sold in just the past two weeks. Hugging Face expects to ship another 1,000 units within the next 30 days.

Even those who don’t own a Reachy Mini can still develop apps for it, however, using the Reachy Mini App Store and the Reachy App, which contains a 3D simulation of the robot and its responses.

The App Store itself is hosted on the Hugging Face Hub. It functions much like a standard software repository but for hardware behaviors:

- Search and Install: Users can find apps, click a button, and install them directly to their robot.

- Forkability: Every app is “forkable,” meaning a user can duplicate an existing app and ask an AI agent to modify it (e.g., “make it answer in French”).

- Simulation Mode: Crucially, the store includes a browser-based simulator. This allows users who do not own a physical Reachy Mini to build, test, and play with the catalog in a virtual environment.
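The “forkable” idea in the list above can be illustrated with a small Python sketch. Everything here is an assumption made for the example: the article does not describe the store’s real metadata format, so `AppManifest` and `fork` are hypothetical names, and the app is reduced to a plain-English prompt plus ownership fields.

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class AppManifest:
    """Hypothetical app record; the store's real format is not described here."""
    name: str
    owner: str
    prompt: str
    forked_from: Optional[str] = None


def fork(app: AppManifest, new_owner: str, extra_instruction: str) -> AppManifest:
    """Copy an app under a new owner and append a plain-English change request."""
    return replace(
        app,
        owner=new_owner,
        prompt=f"{app.prompt} {extra_instruction}",
        forked_from=f"{app.owner}/{app.name}",
    )


original = AppManifest("language-tutor", "alice", "Listen to speech and correct accents.")
french = fork(original, "bob", "Answer in French.")

if __name__ == "__main__":
    print(french)
```

Because the record is immutable, the original app is untouched and the fork keeps a pointer back to its source, which is the property that makes duplicating and modifying someone else’s app safe.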

Both the robot and the App Store are part of Hugging Face’s ongoing “LeRobot” effort, a project that began in 2024 with Hugging Face researchers specializing in robotics and AI developing and publishing their own open-source code, tutorials, and hardware on the web to make robotics development more accessible to a wider audience.

And unlike GitHub, which is designed for a developer audience, the Hugging Face Reachy Mini App Store is designed for robot owners and users who may have no technical experience or training whatsoever.

Continuing with the open-source ethos and practice

Hugging Face’s strategy is rooted in the belief that closed-source hardware and software are “almost impossible” to build for at scale.

Delangue notes that closed systems prevent the training of agents and limit the ability of the community to innovate. Consequently, the entire Reachy Mini platform is open-source.

This open licensing model has two primary implications for the ecosystem:

- Accelerated Development: Because the code is public and integrated with the Hugging Face ecosystem via “Spaces,” Hugging Face’s feature for hosting AI-powered web apps launched in 2021, agents can more easily learn how to interact with the hardware.

- Community Sovereignty: Apps are not locked behind a proprietary wall. Currently, all 200+ apps on the store are free, though the platform’s foundation on “Spaces” provides the flexibility for creators to potentially monetize their work in the future.

“For the moment, all the apps are free,” Delangue noted. “It’s flexible, it’s built on [Hugging Face] Spaces, so at some point maybe people are going to make them paid.”

Robotics enters its accessible hobbyist era

Hugging Face’s Reachy Mini App Store is launching with 200 apps already available.

So who built them, and how did they do it without this platform existing prior?

Delangue told VentureBeat that more than 150 different creators have contributed to the store, most of whom had never written a line of robotics code before.

Yet they have been able to do so thanks to Hugging Face’s ML Intern and GitHub. The new Hugging Face Reachy Mini App Store now puts the tools and existing apps in one place for easier access.

Delangue was keen to highlight one of the early Reachy robotics app developers in particular to VentureBeat: Joel Cohen, a 78-year-old retired marketing executive.

Cohen, who is colorblind and has no technical background, spent two weeks assembling his Reachy Mini Lite (a task that usually takes three hours). Despite these physical challenges, he used an AI agent to build a “VP of Future Thinking” facilitator for his Zoom-based CEO peer groups. The app enables the robot to:

- Greet 29 members by name.

- Fact-check discussions in real-time.

- Summarize key themes and push back on surface-level answers.

“I built this by describing what I needed in plain English,” Cohen stated in a press release provided to VentureBeat ahead of the launch. “No SDK. No robotics background. No developer experience.”

Other community-driven applications include:

- Emotional Damage Chess: A robot that plays chess and mocks the user’s blunders.

- Reachy Phone Home: An anti-procrastination tool that detects when a user picks up their phone and tells them to get back to work.

- Language Tutor: A physical companion that listens to speech and corrects accents.

- F1 Race Commentator: A desk companion that calls Formula 1 races live as they happen.

Delangue himself related to VentureBeat that in only a few hours, he built an app for his own Reachy Mini robot at the Hugging Face Miami office to have the robot act as a receptionist.

“It basically does face recognition to detect when you arrive in the office, and then it looks at you and onboards you,” Delangue related. “It says, ‘Hey, welcome to the office. Who are you here to see?’ Then it sends me a message: ‘Carl just arrived at the office. He’s here to meet you, and for these reasons.’ It works a little bit as my welcoming booth at the office, and it took me less than two hours to build that.”

Even for a founder and developer as experienced as Delangue, building apps for a robot was out of the question until the combination of Reachy Mini and ML Intern.

“For me, it would have been impossible,” the Hugging Face CEO said. “If you weren’t a robotics developer, it probably would have been impossible, or it would have taken a few months.”

Democratizing robotics

The launch of the agentic App Store signals a fundamental shift in how we interact with machines. For sixty years, the field was gated by the requirement for deep technical expertise.

By combining low-cost open hardware with the reasoning capabilities of modern AI agents, Hugging Face is moving toward a future where the hardware is a commodity and the behavior is limited only by what a user can describe.

As Delangue noted during the launch, the goal was to provide a platform for people who “want to get into robotics but don’t have the hardware or the skills.”

With nearly 10,000 robots now “in the wild” and a burgeoning store of agent-written apps, the Reachy Mini has become the most widely deployed open-source desktop robot in history.

The question is no longer how to build a robot, but what—now that the gate is open—we will ask them to do.

Tech

A wider Galaxy Z Fold 8 may have leaked via Samsung’s own software

Samsung might have just revealed its next big foldable shift by accident.

References buried in One UI 9 firmware appear to confirm a new “Wide” version of the Galaxy Z Fold 8. This hints at a major redesign that moves away from the tall, narrow shape of previous models.

The leak suggests Samsung is experimenting with a shorter, wider form factor, potentially making the Fold feel closer to a standard smartphone when closed.

According to the firmware details, the regular Galaxy Z Fold 8 sticks with a familiar design, measuring around 158.4mm tall and 72.8mm wide when folded. The new “Wide” variant, however, drops to 123.9mm in height while stretching out to 82.2mm wide, which should make it noticeably broader in the hand.
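To put the leaked folded dimensions in perspective, the short Python calculation below compares the height-to-width ratio of the two models. It uses only the figures quoted above; the function and variable names are chosen for this example.

```python
def folded_aspect_ratio(height_mm: float, width_mm: float) -> float:
    """Height divided by width of the folded phone; lower means squatter."""
    return height_mm / width_mm


# Folded dimensions as reported in the firmware leak.
standard_ratio = folded_aspect_ratio(158.4, 72.8)
wide_ratio = folded_aspect_ratio(123.9, 82.2)

# How much wider and shorter the Wide variant is, in millimetres.
extra_width_mm = round(82.2 - 72.8, 1)
lost_height_mm = round(158.4 - 123.9, 1)

if __name__ == "__main__":
    print(f"Standard: {standard_ratio:.2f}:1")
    print(f"Wide:     {wide_ratio:.2f}:1")
    print(f"Wide is {extra_width_mm} mm wider and {lost_height_mm} mm shorter")
```

On these numbers the standard model is roughly 2.18 times as tall as it is wide when closed, while the Wide variant is about 1.51 times, which is why it should feel noticeably broader in the hand.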

Unfold it, and the difference becomes more obvious. The Wide model adopts a 4:3 aspect ratio, offering a more tablet-like canvas that better suits apps, reading, and multitasking, and it’s also slightly thicker at 9.8mm. This suggests Samsung may be prioritising usability over ultra-thin design this time around.

There are a few trade-offs, though. The leak points to a dual-camera setup on the Wide model, likely a 200MP main sensor paired with a 50MP ultrawide. This could mean dropping the telephoto lens found on the standard Fold 8. That would mark a shift in priorities, focusing more on core imaging performance than zoom versatility.

Performance shouldn’t be an issue either way; both versions are expected to run on the Snapdragon 8 Elite Gen 5 for Galaxy, alongside new crease-less OLED displays. These aim to smooth out one of the biggest complaints about foldables so far.

While nothing is official yet, the timing lines up with Samsung’s usual schedule. The Galaxy Z Fold 8 series is expected to launch at a Galaxy Unpacked event in July 2026, with rumours pointing to a possible London debut, a break from Samsung’s usual US or Korea venues.

If accurate, the Wide model could be Samsung’s answer to growing competition in the foldables space. It may also be a sign that the company is finally rethinking how these devices should feel in everyday use.

Tech

Canadian Officials Claim OpenAI Violated Federal And Provincial Privacy Laws

Philippe Dufresne, the Privacy Commissioner of Canada, has found OpenAI was “not compliant with” Canadian federal and provincial privacy laws in the training of its AI models. Following an investigation, Dufresne and his counterparts in Alberta, Quebec and British Columbia say OpenAI’s approach to things like data collection and consent stepped on multiple laws, including Canada’s Personal Information Protection and Electronic Documents Act (PIPEDA), which governs how companies collect and use personal information during the normal course of business.

The commissioners participating in the investigation identified multiple privacy issues with OpenAI’s approach, including that the company “gathered vast amounts of personal information without adequate safeguards to prevent use of that information to train its models,” and that it failed to acquire consent to collect and use that personal information in the first place. Warnings in ChatGPT note that interactions with the AI could be used in training, but third-party data OpenAI has purchased or scraped also includes personal details people likely aren’t even aware of. The fact that ChatGPT users have no way to access, correct or delete that data was another issue that the commissioners identified, according to a summary of the investigation’s findings, along with OpenAI’s lackluster attempts to acknowledge the inaccuracy of some of ChatGPT’s responses.

Canada’s Privacy Commissioner contends that OpenAI was open and responsive to the investigation, and has already committed to making multiple changes to ChatGPT to follow Canadian privacy laws. OpenAI has retired earlier models that violated Canadian privacy regulation, and now uses “a filtering tool to detect and mask personal information (such as names or phone numbers) in publicly accessible internet data and licensed datasets used to train its models,” the Commissioner says. The company has also agreed within the next three months to add a new notice to the signed-out version of ChatGPT explaining that chats can be used for training and sensitive information shouldn’t be shared, and within the next six months:

While Canada’s investigation into OpenAI’s privacy policies was opened in 2023, the company has received scrutiny from regulators more recently because of its connection to the mass shooting that occurred in Tumbler Ridge in February 2026. OpenAI had reportedly flagged the alleged shooter’s account in 2025 for containing warnings of real-world violence, but failed to escalate those concerns to Canadian law enforcement. Following the shooting, regulators demanded the company change its approach to safety, and OpenAI ultimately agreed to be more collaborative with Canadian law enforcement and health agencies in the future.

Tech

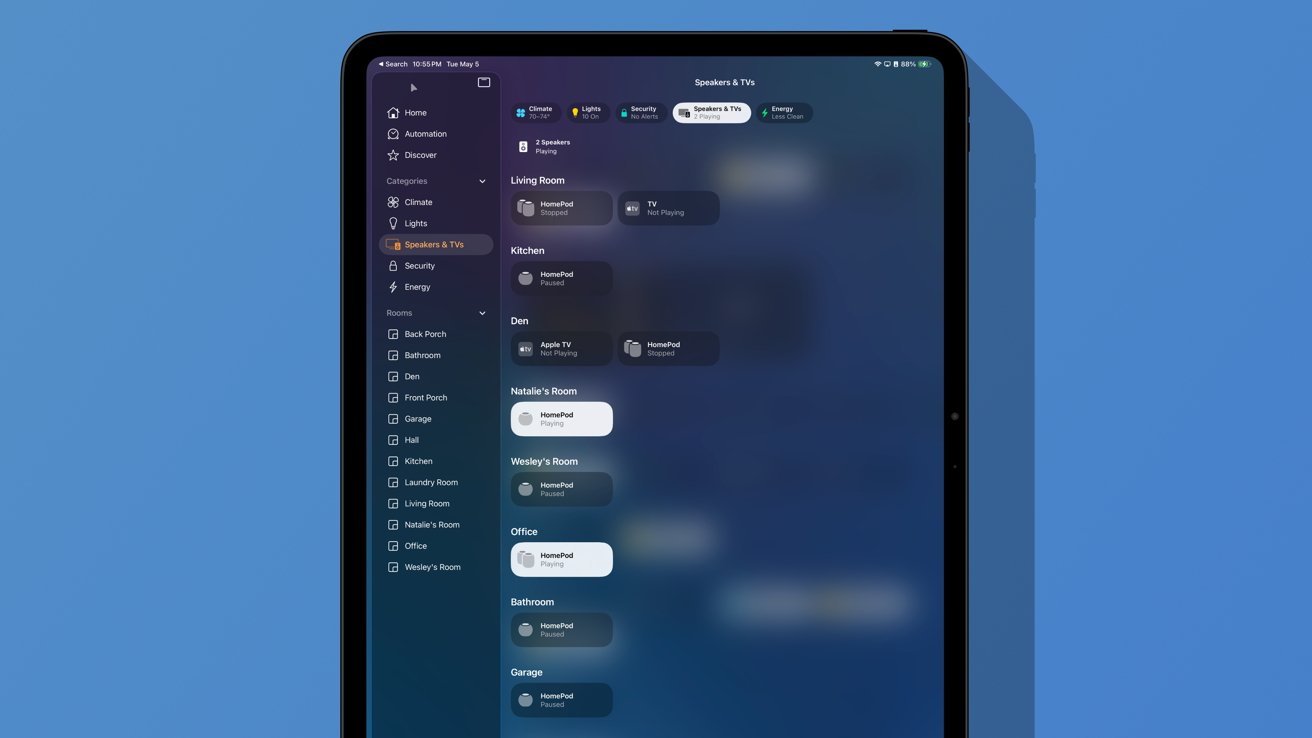

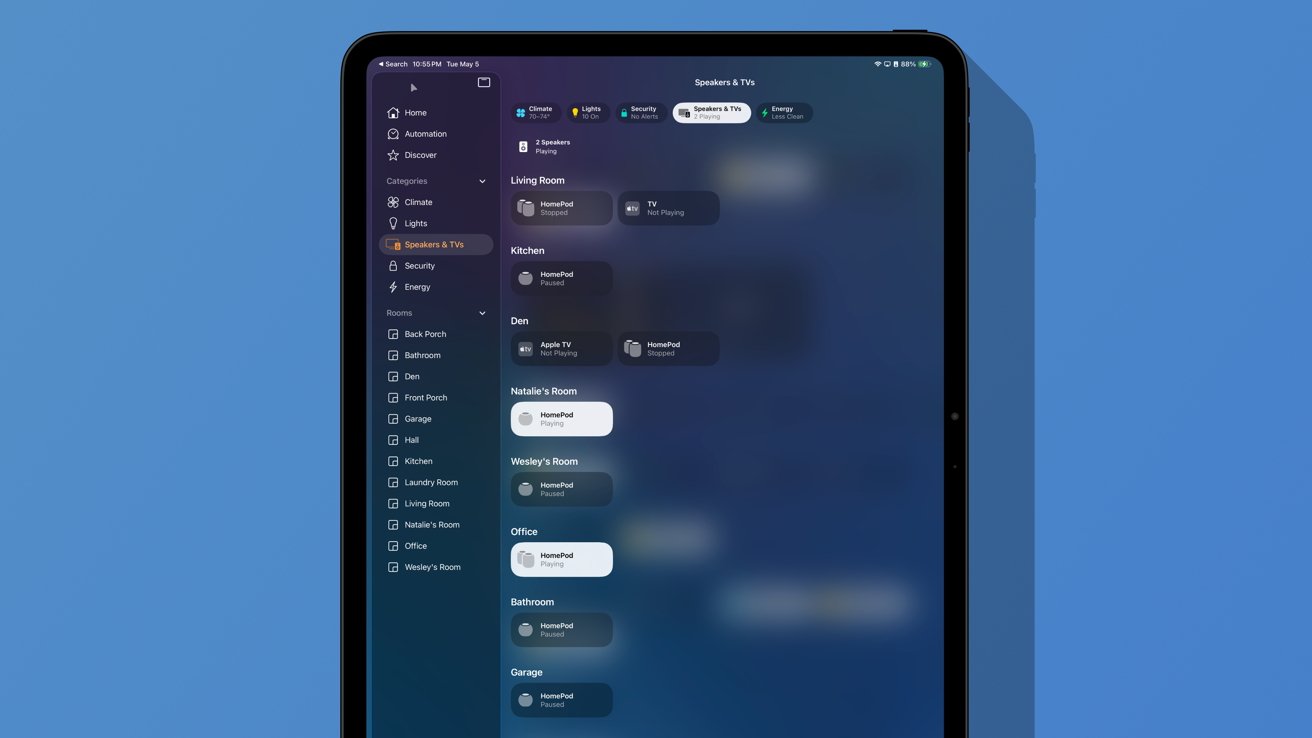

HomePods as a home audio system

Apple’s HomePod and HomePod mini are incredible additions to your smart home. Here’s how I’ve made use of Apple’s smart speaker after abandoning Sonos and other speakers.

We’re approaching two months in our new home, and I’m finally feeling like we’ve settled in nicely. If you missed my first installment on this Owning an Apple Home series, check it out to see how I approached the move and setup process.

While I’m working on stories involving pet care, smart kitchens, MagSafe mounts, and more, I’d like to examine what it is to be all-in on HomePods. That’s right, I don’t have any third-party speaker or other AirPlay solution in my home.

In May 2023, I sold my whole home Sonos system and went all-in on HomePod. I’d like to pretend I saw the troubles Sonos had coming ahead of time, but the reality is I wanted to simplify my Apple Home.

Apple had just brought back the large-sized HomePod in January 2023, and I decided I no longer wanted to use a third-party app to manage my music. I wanted the integration of AirPlay 2, Siri, and my Apple products without any effort or need for other tools.

I can happily say that I don’t regret the decision. Here’s how having a house full of HomePods has been and how I’ve integrated them in the new place.

HomePod setup and layout

In total, my home has two second-generation HomePods in a stereo pair, four first-generation HomePods in two stereo pairs, and five HomePod minis placed singly in various rooms. Don’t be too aghast at this number, as they’ve been accumulated slowly over a decade.

How I decide to purchase HomePods is very similar to my thought process behind other Apple or smart home purchases. First, if a new model comes out that will prove useful, I upgrade the primary units and rotate the old ones down.

The current flagship HomePods in my home are the ones in the den hooked in a stereo pair to the latest Apple TV 4K. That’s my OLED TV setup with the PlayStation 5 Pro and Nintendo Switch 2, so I want it to be the best possible equipment there.

When Apple inevitably upgrades these speakers, the den is where the new gen-3 stereo pair will go.

Two large HomePods are in a stereo pair and used with the Living Room Apple TV 4K, while the remaining two are my office HomePods. The first-generation models are still going fine, as it seems I escaped whatever hardware issue led to bricking some.

There’s a HomePod mini in each bedroom, one in the full bathroom, one in the kitchen, and one in the workshop in the garage. Once a second-generation HomePod mini arrives, I’m sure we’ll have a few new ones floating around while older ones are paired up too.

Let’s get into how these speakers are used.

Music, TV, & more

Apple’s HomePods are in a funny place when compared to the competition. There are better-sounding speakers, lower-priced ones, and frankly, ones with better smart assistants.

However, the HomePod and HomePod mini aren’t great because of some singular feature, but a sum of their parts. They are the most private smart speakers and integrate directly with Apple Home.

Combine that with great sound and whole-home audio, and you’ve got a fairly great pair of speakers. I understand that they aren’t for everyone, but I’ve found that relying on a single system has been an improvement.

First, the two sets that are used as TV speakers are actually quite amazing. When I had the Sonos system in place, I was able to compare the two.

My main home entertainment system at the time consisted of a Sonos Playbar, two satellite Sonos Play 1s, and a Sonos Sub. They added up to a fairly solid 5.1 channel system pre-Dolby Atmos.

From my tests, the only thing the HomePods couldn’t compete with is bass. There’s no beating a dedicated subwoofer, especially one of that size.

That said, I was impressed, and within days of swapping out the systems, I no longer noticed. Since I was using the Apple TV through the eARC connection, the HomePods could also play audio from game consoles.

I’m aware that some gamers might be concerned about lag, but I never noticed any. The only place that it could actually impact gameplay is with rhythm games, but those usually let users adjust lag manually.

In the years I’ve spent playing various games, from the slow and steady Pokémon to the twitchy Returnal, audio was never an issue.

Then there’s the HomePod as a music speaker. When cleaning, my wife and I like playing songs throughout the home.

Or, when we’re having a large group of friends over that results in everyone spreading out a bit, the party never stops just because you’re in a different room. The living room might be the center of the party, but you can still hear what’s being played in the kitchen, den, bathroom, or even outside on the porch.

The HomePod mini in the garage is attached to a battery pack that lets us carry it anywhere. It means cookouts get to benefit from the whole-home audio setup.

When everything is playing in sync at the right volumes, it is easy to wander from room to room and feel like you’re surrounded by music. Also, the music doesn’t have to be loud to stay at a listenable volume while you carry on a conversation.

If you’ve never experienced whole-home audio, it’s quite the treat.

Finally, one other major use of the HomePods in our home is individual. I love putting my podcasts on while I shower, while my wife likes listening to thunderstorms while she sleeps.

Sometimes, I like to join my HomePod mini with hers so I can have the same rain sounds in my room as well.

None of this is quite so easy when dealing with different device ecosystems, even when AirPlay is involved. I grew increasingly frustrated with Sonos and trying to tie it to HomePods or other devices.

Now, it all just works.

HomePods aren’t just for listening to music or TV audio. They’re quite the little utility as a part of an Apple Home.

Sure, the gen-2 HomePod and HomePod mini work as Thread border routers, but that’s only the tip of the iceberg. Apple has worked to include them in various features for communication and security.

First, there’s a utility I believe very few people actually use called Intercom. It’s something we’ve tried to embrace more in the new home, and it’s proven interesting.

If you pick up any of your devices or speak to a HomePod, you can say “Intercom everywhere, dinner is ready” and it will go out to every device. The HomePods play your voice out loud instead of Siri reading it back.

Sending an Intercom to a single room or person is also possible. My wife could send me a message hands-free via Intercom directly to my office.

One of my favorite features is the use of HomePods as doorbell chimes. Instead of relying on a single loud physical chime, every HomePod in the house plays a sound.

Since I’ve done the work to name faces in my Photos app via my Contacts database, the chime is followed by Siri stating who just rang the doorbell.

This might seem like a rather mundane feature, but it means we get a chime even if we’re in the garage. Or, if I’m working in the office with music playing, the music is paused to play the chime.

Apple has another excellent feature for HomePods that I don’t believe is discussed enough. If a fire alarm goes off in your home, the HomePod will send an alert to your iPhone.

It’s simple, but can be incredibly useful in case of an emergency. Some security products sell devices that listen for such alarms, and this function is built into the HomePod.

HomePod & Siri

The entire purpose of the original HomePod was to give Siri a home base. It was the user’s connection to the smart assistant that usually dwelled in their iPhone.

The world has mostly moved on from these traditional assistants, and Apple soon will too. While many see the current ML version as lacking, I’m still quite happy with how I’m using it.

I use Siri often and I’ve often described myself as a Siri unicorn. The commands I use rarely run into issues and I’m quite happy with Siri controlling my music, smart home, and various other functions.

In case you were wondering, the Siri voting system really does work well when you’ve got a home full of Apple products. If you just say “Siri, turn on the lights,” every device that heard you, including your iPhone or iPad, will work to decide which one you were speaking to and perform the action.

Room-specific actions work great. “Turn the lights off” instead of “Turn the living room lights off” is totally fine as long as you’re speaking to the Living Room HomePods.

General inquiries are rarer for my use, but I still occasionally ask about the weather or create a reminder. I like that it is an option.

There’s no way of knowing what’s coming next with Siri now that AI will be involved. But as long as I can still reliably control my home and my music, I’m happy.

Limitations with HomePods

I’ve already covered how Siri isn’t quite up to par with other modern systems, but I do believe that is changing soon. In the meantime, there are some other areas I’d like to see improved.

First, this is only tangentially related to HomePods, but Apple needs to figure out how to manage Listening History better. I only want my HomePods to keep track of what I listen to when I’m AirPlaying or specifically asking for music during work.

What I don’t want is sleep sounds, ambient music, or other such audio to be present in my music history. I’d love to have some classical or video game music playing in the kitchen as part of an automation, but I don’t want it dominating my recommendations.

Sure, I can turn off the HomePod’s ability to contribute to my algorithm altogether, but that shouldn’t be my only option. If I want Animal Crossing music to come out of my speaker at 1 p.m., I shouldn’t have to switch off the algorithm manually.

I’d also like to see Apple enhance what HomePods can do when acting as a home theater system. Of course, while a HomePod soundbar that’s also an Apple TV would be great, I’d simply settle for more speakers in a setup.

Instead of just two HomePods in a stereo pair, I should be able to have two in the front and two in the sides or back for even better surround.

Such a system could even work with Dolby Atmos. I think it makes total sense for Apple to implement such a feature, as it would mean selling four HomePods instead of just two for a single setup.

I’m not sure much will actually change with HomePods soon, even with a new generation. The rumored Home Hub could add an interesting aspect to Apple Home, but more on that another day.

Yes, that HomePod mini snail is available online in some places. Here’s one for $47.

Tech

Snap says its $400M deal with Perplexity ‘amicably ended’

Snap no longer has a deal with Perplexity, the company revealed on Wednesday as part of its quarterly earnings report. The deal, announced last November, would have seen Perplexity’s AI search engine integrated directly into Snapchat. Perplexity was set to pay Snap $400 million in cash and equity over one year as part of the deal.

Snap said that the companies “amicably ended the relationship in Q1” and that its sales guidance “assumes no contribution from Perplexity.” When Snap announced the deal as part of its third-quarter earnings last year, it said it expected revenue from the partnership to begin contributing to its financials in 2026.

The deal would have seen Perplexity integrated into Snapchat’s “Chat” interface, allowing users to ask questions and receive conversational answers directly within the app.

Snap CEO Evan Spiegel said at the time of the announcement that the deal reflected the company’s vision to use AI to enhance discovery on Snapchat, and that Snap was looking forward to “collaborating with more innovative partners in the future.”

Perplexity did not immediately respond to TechCrunch’s request for comment.

Snap revealed on Wednesday that Snapchat’s global daily active users (DAU) rose 5% year-over-year to 483 million, while monthly active users (MAU) also grew 5% to reach 965 million. The company attributed the growth to new features across the app, including Snap Map and its Lenses AR filters.

“In Q1, we returned to growth in daily active users, accelerated revenue growth, expanded margins, and generated strong free cash flow,” Spiegel said in a press release. “We remain focused on disciplined execution as we invest in Specs and our longterm opportunity in intelligent eyewear and look forward to sharing more at AWE on June 16th.”

Snap said in April that it was laying off roughly 16% of its global workforce, impacting around 1,000 full-time employees, citing advancements in AI for the cuts.

