Tech

Mistral AI launches Forge to help companies build proprietary AI models, challenging cloud giants

Mistral AI on Monday launched Forge, an enterprise model training platform that allows organizations to build, customize, and continuously improve AI models using their own proprietary data — a move that positions the French AI lab squarely against the hyperscale cloud providers in one of the most consequential and least understood markets in enterprise technology.

The announcement caps a remarkably aggressive week for Mistral, which also released its Mistral Small 4 model, unveiled Leanstral — an open-source code agent for formal verification — and joined the newly formed Nvidia Nemotron Coalition as a co-developer of the coalition’s first open frontier base model. Together, these moves paint the picture of a company that is no longer content to compete on model benchmarks alone and is instead racing to become the infrastructure backbone for organizations that want to own their AI rather than rent it.

Forge goes significantly beyond the fine-tuning APIs that Mistral and its competitors have offered for the past year. The platform supports the full model training lifecycle: pre-training on large internal datasets, post-training through supervised fine-tuning, DPO, and ODPO, and — critically — reinforcement learning pipelines designed to align models with internal policies, evaluation criteria, and operational objectives over time.
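DPO (direct preference optimization) is a published post-training technique. The sketch below shows its standard per-example loss to make the term concrete. It is not Mistral’s or Forge’s implementation. It assumes you already have summed log-probabilities for a preferred (“chosen”) and a dispreferred (“rejected”) response, under both the model being trained and a frozen reference model. The function names, `beta = 0.1`, and the input numbers are illustrative assumptions, and ODPO is not covered.

```python
import math


def neg_log_sigmoid(x: float) -> float:
    """Compute -log(sigmoid(x)) = log(1 + exp(-x)) without overflowing."""
    if x >= 0:
        return math.log1p(math.exp(-x))
    return -x + math.log1p(math.exp(x))


def dpo_loss(policy_chosen_logp: float,
             policy_rejected_logp: float,
             ref_chosen_logp: float,
             ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """Per-pair DPO loss for one (prompt, chosen, rejected) example.

    Each argument is the summed log-probability of a full response under
    either the model being trained (policy) or the frozen reference model.
    """
    # How much more (in log space) the policy favors each response than the reference does
    chosen_shift = policy_chosen_logp - ref_chosen_logp
    rejected_shift = policy_rejected_logp - ref_rejected_logp
    # The loss falls as the policy shifts probability toward the chosen response
    return neg_log_sigmoid(beta * (chosen_shift - rejected_shift))


# Illustrative numbers only: the policy has moved toward the chosen answer
print(dpo_loss(-9.0, -12.0, -10.0, -12.0))
```

When the policy and the reference score both responses identically, the margin is zero and the loss equals log 2 (about 0.693). Raising the chosen response’s relative likelihood pushes the loss lower. The `neg_log_sigmoid` helper avoids overflow when the margin is large in either direction.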

“Forge is Mistral’s model training platform,” said Elisa Salamanca, head of product at Mistral AI, in an exclusive interview with VentureBeat ahead of the launch. “We’ve been building this out behind the scenes with our AI scientists. What Forge actually brings to the table is that it lets enterprises and governments customize AI models for their specific needs.”

Why Mistral says fine-tuning APIs are no longer enough for serious enterprise AI

The distinction Mistral is drawing — between lightweight fine-tuning and full-cycle model training — is central to understanding why Forge exists and whom it serves.

For the past two years, most enterprise AI adoption has followed a familiar pattern: companies select a general-purpose model from OpenAI, Anthropic, Google, or an open-source provider, then apply fine-tuning through a cloud API to adjust the model’s behavior for a narrow set of tasks. This approach works well for proof-of-concept deployments and many production use cases. But Salamanca argues that it fundamentally plateaus when organizations try to solve their hardest problems.

“We had a fine-tuning API relying on supervised fine-tuning. I think it was kind of what was the standard a couple of months ago,” Salamanca told VentureBeat. “It gets you to a proof-of-concept state. Whenever you actually want to have the performance that you’re targeting, you need to go beyond. AI scientists today are not using fine-tuning APIs. They’re using much more advanced tools, and that’s what Forge is bringing to the table.”

What Forge packages, in Salamanca’s telling, is the training methodology that Mistral’s own AI scientists use internally to build the company’s flagship models — including data mixing strategies, data generation pipelines, distributed computing optimizations, and battle-tested training recipes. She drew a sharp line between Forge and the open-source tools and community tutorials that are freely available today.

“There’s no platform out there that provides you real-world training recipes that work,” Salamanca said. “Other open-source repositories or other tools can give you generic configurations or community tutorials, but they don’t give you the recipe that’s been validated — that we’ve been doing for all of our flagship models today.”

From ancient manuscripts to hedge fund quant languages, early customers reveal what off-the-shelf AI can’t do

The obvious question facing any product like Forge is demand. In a market where GPT-5, Claude, Gemini, and a growing fleet of open-source models can handle an enormous range of tasks, why would an enterprise invest the time, compute, and expertise required to train its own model from scratch?

Salamanca acknowledged the question head-on but argued that the need emerges quickly once companies move beyond generic use cases. “A lot of the existing models can get you very far,” she said. “But when you’re looking at what’s going to make you competitive compared to your competition — everyone can adopt and use the models that are out there. When you want to go a step beyond that, you actually need to create your own models. You need to leverage your proprietary information.”

The real-world examples she cited illustrate the edges of the current model ecosystem. In one case, Mistral worked with a public institution that had ancient manuscripts with missing text from damaged sections. “The models that were available were not able to do this because they’ve never seen the data,” Salamanca explained. “Digitization was not very good. There were some unique patterns and characters, and so we actually created a model for them to fill in the spans. This is now used by their researchers, and it’s accelerating their publication and understanding of these documents.”

In another engagement, Mistral partnered with Ericsson to customize its Codestral model for legacy-to-modern code translation. Ericsson, Salamanca said, has built up half a decade of proprietary knowledge around an internal calling language — a codebase so specialized that no off-the-shelf model has ever encountered it. “The concrete impact is like turning a year-long manual migration process, where each engineer needs six months of onboarding, to something that’s really more scalable and faster,” she said.

Perhaps the most telling example involves hedge funds. Salamanca described working with financial firms to customize models for proprietary quantitative languages — the kind of deeply guarded intellectual property that these firms keep on-premises and never expose to cloud-hosted AI services. Using Forge’s reinforcement learning capabilities, Mistral helped one hedge fund develop custom benchmarks and then trained the model to outperform on them, producing what Salamanca called “a unique model that was able to give them the competitive edge that was needed.”

How Forge makes money: license fees, data pipelines, and embedded AI scientists

Forge’s business model reflects the complexity of enterprise model training. According to Salamanca, it operates across several revenue streams. For customers who run training jobs on their own GPU clusters — a common requirement in highly regulated or IP-sensitive industries — Mistral does not charge for compute. Instead, the company charges a license fee for the Forge platform itself, along with optional fees for data pipeline services and what Mistral calls “forward-deployed scientists” — embedded AI researchers who work alongside the customer’s team.

“No competitor out there today is kind of selling this embedded scientist as part of their training platform offering,” Salamanca said.

This model has clear echoes of Palantir’s early playbook, where forward-deployed engineers served as the critical bridge between powerful software and the messy reality of enterprise data. It also suggests that Mistral recognizes a fundamental truth about the current state of enterprise AI: the technology alone is not enough. Most organizations lack the internal expertise to design effective training recipes, curate data at scale, or navigate the treacherous optimization landscape of distributed GPU training.

The infrastructure itself is flexible. Training can happen on Mistral’s own clusters, on Mistral Compute (the company’s dedicated infrastructure offering), or entirely on-premises within the customer’s own data centers. “We have all these different cases, and we support everything,” Salamanca said.

Keeping proprietary data off the cloud is Forge’s sharpest selling point

One of the sharpest points of differentiation Mistral is pressing with Forge is data privacy. When customers train on their own infrastructure, Salamanca emphasized that Mistral never sees the data at all.

“It’s on their clusters, it’s with their data — we don’t see anything of it, and so it’s completely under their control,” she said. “I think this is something that sets us apart from the competition, where you actually need to upload your data, and you have a black box effect.”

This matters enormously in sectors like defense, intelligence, financial services, and healthcare, where the legal and reputational risks of exposing proprietary data to a third-party cloud service can be deal-breakers. Mistral has already partnered with organizations including ASML, DSO National Laboratories Singapore, the European Space Agency, Home Team Science and Technology Agency Singapore, and Reply — a roster that suggests the company is deliberately targeting the most data-sensitive corners of the enterprise market.

Forge also includes data pipeline capabilities that Mistral has developed through its own model training: data acquisition, curation, and synthetic data generation. “Data is a critical piece of any training job today,” Salamanca said. “You need to have good data. You need to have a good amount of data to make sure that the model is going to be good performing. We’ve acquired, as a company, really great knowledge building out these data pipelines.”

In the age of AI agents, Mistral argues that custom models still matter more than MCP servers

The timing of Forge’s launch raises an important strategic question. The AI industry in 2026 has been consumed by agents: autonomous AI systems that can use tools, navigate multi-step workflows, and take actions on behalf of users. If the future belongs to agents, why does the underlying model matter? Can’t companies simply plug into the best available frontier model through an MCP (Model Context Protocol) server or API and focus their energy on orchestration?

Salamanca pushed back on this framing with conviction. “The customers that we’ve been working on — some of these specific problems are things that no MCP server would ever solve,” she said. “You actually need that intelligence. You actually need to create that model that will help you solve your most critical business problem.”

She also argued that model customization is essential even in purely agentic architectures. “There are some agentic behaviors that you need to bring to the model,” Salamanca said. “It can be about reasoning patterns, specific types of documentation, making sure that you have the right reasoning traces. Even in these cases where people are going completely agentic, you still need model customization — like reinforcement learning techniques — to actually get the right level of performance.”

Mistral’s press release makes this connection explicit, arguing that custom models make enterprise agents more reliable by providing deeper understanding of internal environments: more precise tool selection, more dependable multi-step workflows, and decisions that reflect internal policies rather than generic assumptions.

The platform also supports an “agent-first” design philosophy. Forge exposes interfaces that allow autonomous agents — including Mistral’s own Vibe coding agent — to launch training experiments, find optimal hyperparameters, schedule jobs, and generate synthetic data. “We’ve actually been building Forge in an AI-native way,” Salamanca said. “We’re already testing out how autonomous agents can actually launch training experiments.”

Mistral Small 4, Leanstral, and the Nvidia coalition: the week that redefined the company’s ambitions

To fully appreciate Forge’s significance, it helps to view it alongside the other announcements Mistral made in the same week — a barrage of releases that together represent the most ambitious expansion in the company’s short history.

Just yesterday, Mistral released Leanstral, which it describes as the first open-source code agent for Lean 4, the proof assistant used in formal mathematics and software verification. Leanstral operates with just 6 billion active parameters and is designed for realistic formal repositories rather than isolated math competition problems. On the same day, Mistral launched Mistral Small 4, a mixture-of-experts model with 119 billion total parameters but only 6 billion active per query, running 40 percent faster than its predecessor while handling three times as many queries per second. Both models ship under the Apache 2.0 license, one of the most widely used permissive open-source licenses.
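The gap between Mistral Small 4’s 119 billion total parameters and roughly 6 billion active ones comes from mixture-of-experts routing. A small router scores all experts for each token, and only the top few actually run. The toy sketch below illustrates top-k routing in plain Python. The expert count, `TOP_K`, dimensions, and random weights are hypothetical and say nothing about Mistral Small 4’s actual architecture. The last line simply applies the article’s two figures.

```python
import math
import random

# Hypothetical toy sizes; NOT Mistral Small 4's real configuration
NUM_EXPERTS = 8
TOP_K = 2
DIM = 4

random.seed(0)


def rand_matrix(rows: int, cols: int) -> list[list[float]]:
    return [[random.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def matvec(m: list[list[float]], v: list[float]) -> list[float]:
    return [sum(w * x for w, x in zip(row, v)) for row in m]


# Each toy "expert" is one DIM x DIM linear map; the router scores all experts
experts = [rand_matrix(DIM, DIM) for _ in range(NUM_EXPERTS)]
router = rand_matrix(NUM_EXPERTS, DIM)


def moe_forward(token: list[float]) -> tuple[list[float], list[int]]:
    """Send one token vector through its TOP_K highest-scoring experts."""
    scores = matvec(router, token)
    chosen = sorted(range(NUM_EXPERTS), key=lambda i: scores[i], reverse=True)[:TOP_K]
    # Softmax over the chosen experts' scores only (shifted by the max for stability)
    top_score = max(scores[i] for i in chosen)
    weights = [math.exp(scores[i] - top_score) for i in chosen]
    total = sum(weights)
    out = [0.0] * DIM
    for w, i in zip(weights, chosen):
        expert_out = matvec(experts[i], token)
        out = [o + (w / total) * e for o, e in zip(out, expert_out)]
    # The other NUM_EXPERTS - TOP_K experts never run for this token
    return out, chosen


def active_fraction(total_params: float, active_params: float) -> float:
    return active_params / total_params


output, used = moe_forward([1.0, -0.5, 0.25, 2.0])
print("experts used for this token:", used)
print(f"toy share of experts run per token: {TOP_K / NUM_EXPERTS:.0%}")
print(f"Mistral Small 4 active share (article figures): {active_fraction(119e9, 6e9):.1%}")
```

In a real model, the active count also includes layers that every token passes through, such as attention and embeddings. The active share is therefore not simply `TOP_K` divided by `NUM_EXPERTS`.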

And then there is the Nvidia Nemotron Coalition. Announced at Nvidia’s GTC conference, the coalition is a first-of-its-kind collaboration between Nvidia and a group of AI labs — including Mistral, Perplexity, LangChain, Cursor, Black Forest Labs, Reflection AI, Sarvam, and Thinking Machines Lab — to co-develop open frontier models. The coalition’s first project is a base model co-developed specifically by Mistral AI and Nvidia, trained on Nvidia DGX Cloud, which will underpin the upcoming Nvidia Nemotron 4 family of open models.

“Open frontier models are how AI becomes a true platform,” said Arthur Mensch, cofounder and CEO of Mistral AI, in Nvidia’s announcement. “Together with Nvidia, we will take a leading role in training and advancing frontier models at scale.”

This coalition role is strategically significant. It positions Mistral not merely as a consumer of Nvidia’s compute infrastructure but as a co-creator of the foundational models that the broader ecosystem will build upon. For a company that is still a fraction of the size of its American competitors, this is an outsized seat at the table.

Forge takes aim at Amazon, Microsoft, and Google — and says they can’t go deep enough

Forge enters a market that is already crowded — at least on the surface. Amazon Bedrock, Microsoft Azure AI Foundry, and Google Cloud Vertex AI all offer model training and customization capabilities. But Salamanca argued that these offerings are fundamentally limited in two respects.

First, they are cloud-only. “In one set of cases, it’s very easy to answer — they want to run this on their premises, and so all these tools that are available on the cloud are just not available for them,” Salamanca said. Second, she argued that the hyperscalers’ training tools largely offer simplified API interfaces that don’t provide the depth of control that serious model training requires.

There is also the dependency question. Salamanca described digital-native companies that had built products on top of closed-source models, only to have a new model release — more verbose than its predecessor — crash their production pipelines. “When you’re relying on closed-source models, you are also super dependent on the updates of the model that have side effects,” she warned.

This argument resonates with the broader sovereignty narrative that has powered Mistral’s rise in Europe and beyond. The company has positioned itself as the alternative for organizations that want to own their AI stack rather than lease it from American hyperscalers. Forge extends that argument from inference to training: not just running models you own, but building them in the first place.

The open-source foundation matters here, too. Mistral has been releasing models under permissive licenses since its founding, and Salamanca emphasized that the company is building Forge as an open platform. While it currently works with Mistral’s own models, she confirmed that support for other open-source architectures is planned. “We’re deeply rooted into open source. This has been part of our DNA since the beginning, and we have been building Forge to be an open platform — it’s just a question of a matter of time that we’ll be opening this to other open-source models.”

A co-founder’s departure to xAI underscores why Mistral is turning expertise into a product

Forge’s launch also arrives against a backdrop of fierce talent competition. As FinTech Weekly reported on March 14, Devendra Singh Chaplot, a co-founder of Mistral AI who headed the company’s multimodal group and contributed to training Mistral 7B, Mixtral 8x7B, and Mistral Large, left to join Elon Musk’s xAI, where he will work on Grok model training. Chaplot had also been a founding member of Thinking Machines Lab, the AI startup founded by former OpenAI CTO Mira Murati.

The loss of a co-founder is never insignificant, but Mistral appears to be compensating with institutional capability rather than individual brilliance. Forge is, in essence, a productization of the company’s collective training expertise — the recipes, the pipelines, the distributed computing optimizations — in a form that can scale beyond any single researcher. By packaging this knowledge into a platform and pairing it with forward-deployed scientists, Mistral is attempting to build a durable competitive asset that doesn’t walk out the door when a key hire departs.

Mistral’s big bet: the companies that own their AI models will be the ones that win

Forge is a bet on a specific theory of the enterprise AI future: that the most valuable AI systems will be those trained on proprietary knowledge, governed by internal policies, and operated under the organization’s direct control. This stands in contrast to the prevailing paradigm of the past two years, in which enterprises have largely consumed AI as a cloud service — powerful but generic, convenient but uncontrolled.

The question is whether enough enterprises will be willing to make the investment. Model training is expensive, technically demanding, and requires sustained organizational commitment. Forge lowers the barriers — through its infrastructure automation, its battle-tested recipes, and its embedded scientists — but it does not eliminate them.

What Mistral appears to be banking on is that the organizations with the most to gain from AI — the ones sitting on decades of proprietary knowledge in highly specialized domains — are precisely the ones for whom generic models are least sufficient. These are the companies where the gap between what a general-purpose model can do and what the business actually needs is widest, and where the competitive advantage of closing that gap is greatest.

Forge supports both dense and mixture-of-experts architectures, accommodating different trade-offs between performance, cost, and operational constraints. It handles multimodal inputs. It is designed for continuous adaptation rather than one-time training, with built-in evaluation frameworks that let enterprises test models against internal benchmarks before production deployment.

For the past two years, the enterprise AI playbook has been straightforward: pick a model, call an API, ship a feature. Mistral is now asking a harder question — whether the organizations willing to do the difficult, expensive, unglamorous work of training their own models will end up with something the API-callers never get.

An unfair advantage.

Tech

Handsome speaker/amp hybrids with excellent clarity

A new company needs to make a strong first impression. For Fender Audio, a new outfit owned by the legendary Fender Musical Instruments Corporation but operated by Riffsound, that introduction comes in the form of two speakers and a set of headphones. The Elie 6 ($300) and Elie 12 ($400) are portable Bluetooth speakers with sophisticated designs and unique features, offering similar functionality in two different sizes. These devices are essentially speaker/amplifier hybrids, since they both have ¼-inch/XLR combo inputs among their connections. Despite the unique mix of connectivity, the speakers still need to sound good and work well to compete with the many excellent portable options available today.

The Elie 12 is a large, powerful portable speaker with plenty of inputs, but weight and battery life could be deal breakers for some.

Pros
- Excellent audio clarity
- Four inputs
- Refined design

Cons
- IP rated but there’s exposed wood
- Big and heavy
- No app for customization
- Battery life lags behind top competition

The Elie 6 punches above its size in audio clarity and connectivity, but it’s heavy for such a small speaker and some competitors offer better battery life.

Pros
- Excellent audio clarity
- Four inputs
- Refined design

Cons
- IP rated but there’s exposed wood
- Limited playback controls
- No app for customization
- Battery life lags behind top competition

The good: Design, inputs and overall clarity

The first time I saw the Elie 6 and Elie 12 in person, my eyes were immediately drawn to the design. These certainly don’t look like your typical Bluetooth speakers. That’s due in large part to the refined, almost retro look that’s consistent across both models. The Elie duo are products you won’t mind showing off, while many portable speakers are too flashy or brightly colored to be kept in a prominent place.

All of the onboard controls are clearly labeled physical buttons or dials, so you’re not left wondering how anything works. Around back, both the Elie 6 and Elie 12 have combo ¼-inch/XLR inputs (with 48V phantom power) as well as buttons for two wireless inputs and a 3.5mm line out. That combo jack means both speakers can double as amps, and the dual wireless connections allow you to sync microphones for karaoke sessions or for hosting trivia night. This expanded functionality speaks to Fender’s history as a guitar icon, but it also gives the Elie speakers an upper hand over much of the competition at these sizes. Typically, if you want these types of inputs, you’ll need to consider a much larger party box-style speaker.

Before I move on from the controls and inputs, I need to mention the dedicated three-way mode switch for single, stereo and multi-speaker uses. This is so much easier than what’s on most portable speakers, which usually entails some weird dance with Bluetooth pairing or an app to sync multiple units together. Enlisting a physical switch so you know exactly where things stand is a much better and faster experience.

Some of the Elie 12’s controls (Billy Steele for Engadget)

In terms of sound, the best thing the Elie 6 and Elie 12 have going for them is their overall clarity. The crisp, clear quality gives these Fender Audio units an advantage over the competition at these sizes. Across a range of genres, including bluegrass, alt-rock and heavy metal, both the Elie 6 and Elie 12 handled the varied styles with ease. The Elie 12 has twice as many drivers as the Elie 6 (two full-range drivers, two tweeters and two subwoofers) and double the power output, at 120 watts. So, of course, there’s more volume and bassy oomph on the larger speaker.
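The volume difference can be put in rough numbers. This assumes the Elie 6 delivers the implied 60 watts and ignores everything else, including driver sensitivity, enclosure design and the Elie 12’s extra drivers. Under those assumptions, doubling electrical power adds about 3 dB of level. This back-of-the-envelope sketch uses the standard 10·log10 power ratio and is not a Fender Audio specification.

```python
import math


def power_change_db(new_watts: float, old_watts: float) -> float:
    """Change in level (dB) implied by a change in electrical power: 10 * log10(P2 / P1)."""
    return 10 * math.log10(new_watts / old_watts)


# Elie 12 (120 W) versus the Elie 6's implied 60 W
gain_db = power_change_db(120, 60)
print(f"{gain_db:.2f} dB")  # prints "3.01 dB"
```

A common rule of thumb is that an increase of roughly 10 dB sounds about twice as loud. By that measure, the power difference alone is noticeable but modest.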

Both the Elie 6 and Elie 12 have a wider soundstage than many speakers of similar sizes. You can really hear this on American Football’s debut album, where the guitars ring clear, interlaced with drums while the vocals float on top. All of the elements stand on their own, but are seamlessly blended throughout every track. The Elie 12 features more bass and volume, but the overall sound quality, and importantly, clarity, is pretty similar for both speakers. I did notice more instrumental separation on the larger model though, so the album is a bit more immersive there.

The not so great: Controls, no app and battery life

While I appreciate the physical controls on the Elie 6 and Elie 12, the playback options are limited, which means you’ll be reaching for your phone often. There’s only a play/pause button on both speakers, and no controls for skipping tracks. And no, you can’t skip forwards or backwards with a double or triple press on the play/pause button. Plus, only the Elie 12 has bass and treble dials, so there’s currently no option for adjusting the sound on the Elie 6.

That’s because Fender Audio is still working on an app for its speakers and headphones. The lack of customization was an issue for me on the Mix headphones, and it continues to be one here. Customers need access to features and settings on devices like this, even if a company decides to offer audio presets instead of a full EQ. Some type of visual interface would also help when you’re using a few of those inputs at once. A basic multi-channel mixer, maybe? Hey, a boy can dream.

Going back to the controls, the volume dials on both speakers could use refining. First, the volume doesn’t reach a listenable level until the dial is about halfway up. Below that, the excellent clarity disappears and you can’t really hear the content well at all. There’s plenty of power at 50 percent and above, so that’s not a concern, but the control needs to be recalibrated for more even increases. What’s more, adjustments are slightly delayed: when you turn the dial, it takes a second or two for the speaker to catch up. To me, that should be instantaneous.

The input panel on the Elie 6 (Billy Steele for Engadget)

When it’s time to venture outdoors, both the Elie 6 and Elie 12 are IP54 rated for dust and water splashes. However, both speakers have a wood panel on top, which certainly won’t withstand much moisture. As such, I find the IP ratings confusing, since the designs clearly aren’t entirely up to that task. If you’re careful about water, though, both speakers have enough volume for open-air use.

One other consideration for the Elie 6 and 12 is their weight. The smaller speaker weighs just over five pounds, while the larger model is a whopping 8.8 pounds. For comparison, the Sonos Play is just 2.87 pounds and JBL’s Xtreme 4 tips the scales at 4.63 pounds. This means the Elie 6 and 12 are portable options, but they aren’t the grab-and-go type of speakers some of the competition offers — especially when weight matters.

Battery life is another area where the Elie 6 and Elie 12 fall behind some of their competition. The smaller Elie 6 offers 15 hours of use, while the larger Elie 12 should last up to 18 hours. That sounds like more than enough to get through a full day, right? Well, JBL Bluetooth speakers at comparable prices last 24 and 34 hours. The new Sonos Play is rated at 24 hours, and one of my personal favorites, the Bose SoundLink Max, lasts up to 20 hours.

Wrap-up

The Elie 6 (left) and Elie 12 (right) (Billy Steele for Engadget)

There’s no doubt Fender Audio built two versatile, great-looking speakers here. Both the Elie 6 and Elie 12 are capable devices, and you don’t have to sacrifice much if you opt for the smaller of the two. The unique collection of inputs is typically only available on much larger speakers and the overall sound quality is well-suited for a range of genres.

Speakers like these really need an app, though, especially when a company offers four inputs to juggle. I’m sure would-be customers would also like to dial in the EQ to their preferences. Sure, you can find longer battery life elsewhere, but the blend of design, sound and connectivity stands out at these prices. I’d call that a solid first impression.

Tech

iPhone Fold screens will be made exclusively by Samsung because Apple has no choice

A new report claims that Apple has had to agree to a three-year Samsung Display contract because no other firm can make the screens needed for the iPhone Fold.

Render of a possible iPhone Fold design – image credit: AppleInsider

Apple likes having multiple suppliers, both to avoid over-reliance on any one source and to play them off against each other to lower prices. Now, a year-old rumor about Samsung Display producing iPhone Fold screens has reportedly been confirmed, and the deal favors the supplier.

According to The Elec, Samsung Display proposed a three-year exclusive deal to supply the foldable OLED panels for the iPhone Fold. BOE’s foldable panels, as used by Huawei, are reportedly considered inadequate at present, and Apple’s other main supplier, LG Display, doesn’t yet make folding screens for smartphones.


Tech

Planet Labs Tests AI-Powered Object Detection On Satellite

BrianFagioli writes: Artificial intelligence has now run directly on a satellite in orbit. A spacecraft about 500km above Earth captured an image of an airport and then immediately ran an onboard AI model to detect airplanes in the photo. Instead of acting like a simple camera in space that sends raw data back to Earth for later analysis, the satellite performed the computation itself while still in orbit.

The system used an NVIDIA Jetson Orin module to run the object detection model moments after the image was taken. Traditionally, Earth observation satellites capture images and transmit large datasets to ground stations, where computers process them hours later. Running AI directly on the satellite could reduce that delay dramatically, allowing spacecraft to analyze events like disasters, infrastructure changes, or aircraft activity almost immediately.

“This success is a glimpse into the future of what we call Planetary Intelligence at scale,” said Kiruthika Devaraj, Planet’s VP of Avionics & Spacecraft Technology. “By running AI at the edge on the NVIDIA Jetson platform, we can help reduce the time between ‘seeing’ a change on Earth and a customer ‘acting’ on it, while simultaneously minimizing downlink latency and cost. This shift toward integrated AI at the edge is a technological leap that can help differentiate solutions like Planet’s Global Monitoring Service (GMS), providing valuable insights for our customers and enabling rapid response times when it matters most.”
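The downlink argument can be illustrated with a toy example. Rather than transmitting every pixel, an onboard routine sends only the coordinates of detected objects. The sketch below substitutes a simple threshold-and-flood-fill blob finder (`detect_blobs`, a hypothetical name) for the neural network Planet actually ran on the Jetson Orin. It uses a synthetic 100 x 100 tile, and the image, threshold, and JSON output format are assumptions for illustration only.

```python
import json
from collections import deque

Box = tuple[int, int, int, int]  # (top_row, left_col, bottom_row, right_col)


def make_tile(size: int = 100,
              blobs: tuple[tuple[int, int], ...] = ((10, 10), (40, 70), (80, 25))) -> list[list[int]]:
    """Build a hypothetical 8-bit grayscale tile: dark ground plus 3x5 bright 'aircraft'."""
    img = [[10] * size for _ in range(size)]
    for r, c in blobs:
        for dr in range(3):
            for dc in range(5):
                img[r + dr][c + dc] = 230
    return img


def detect_blobs(img: list[list[int]], threshold: int = 128) -> list[Box]:
    """Toy detector: bounding boxes of 4-connected pixels at or above threshold."""
    rows, cols = len(img), len(img[0])
    seen = [[False] * cols for _ in range(rows)]
    boxes: list[Box] = []
    for r in range(rows):
        for c in range(cols):
            if seen[r][c] or img[r][c] < threshold:
                continue
            # Breadth-first flood fill over this bright region, tracking its extent
            seen[r][c] = True
            queue = deque([(r, c)])
            top, left, bottom, right = r, c, r, c
            while queue:
                y, x = queue.popleft()
                top, left = min(top, y), min(left, x)
                bottom, right = max(bottom, y), max(right, x)
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and not seen[ny][nx] and img[ny][nx] >= threshold):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            boxes.append((top, left, bottom, right))
    return boxes


tile = make_tile()
boxes = detect_blobs(tile)
raw_bytes = len(tile) * len(tile[0])                     # one byte per 8-bit pixel
payload = json.dumps({"boxes": boxes}).encode("utf-8")  # what would be downlinked instead
print(boxes)
print(f"raw tile: {raw_bytes} bytes; detections: {len(payload)} bytes")
```

A real system would use a trained detector and would probably still downlink some imagery. The point is only that a short list of boxes is far smaller than the pixels it summarizes.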

Tech

Intel is in talks with Google and Amazon to power AI chips with new packaging tech

Packaging has shifted from a back-end afterthought to the center of Intel’s manufacturing strategy as AI workloads push designers to stitch together many specialized dies into a single system. At its Rio Rancho, New Mexico, site – once home to a shuttered Fab 9 that sat idle for years –…


Tech

Fewer than 1 in 2 S’pore private uni grads secured full-time jobs

Slightly more than 20% remained unemployed

Private institution graduates faced a tight job market in 2025, with fewer than half landing full-time employment. However, median salaries remained steady, according to the latest Private Education Institution (PEI) Graduate Employment Survey released by SkillsFuture Singapore (SSG) on Wednesday (Apr 8).

Only 46.9% of fresh graduates found full-time work, a figure similar to 2024’s 46.4%. At the same time, the median gross monthly wages of those in full-time jobs in 2025 remained the same as in 2024, at S$3,500.

In comparison, 83.4% of graduates from autonomous universities found employment in 2025, and 74.4% secured full-time positions, down from 79.4% in 2024. The median gross monthly salary for graduates who secured jobs within six months remained steady at S$4,500.

The PEI Graduate Employment Survey was conducted between Oct 2025 and Jan 2026 and polled 3,800 of the 6,150 fresh graduates from full-time bachelor’s degree programmes at 26 private institutions, including the Singapore Institute of Management and PSB Academy.

Overall, among the 2,600 graduates surveyed who were working or seeking employment, 78.9% of them landed a job within six months of graduation, a slight uptick from 78.6% in 2024.

Almost one-fourth of graduates (24%) found part-time or temporary work in 2025, similar to the year before. The share doing freelance work grew slightly to 5.1%, up from 4.2% in 2024.

Meanwhile, 21.1% remained unemployed, 2.4% had accepted offers but not yet started work, and a small 0.6% were striking out on their own as entrepreneurs.
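The breakdown above can be checked against the headline figures. This tally assumes that the five outcome categories together make up the 78.9% who landed a job, and that all shares are of the 2,600 graduates in the labour force. The article does not spell out SSG’s definitions, so that grouping is an assumption. Under it, the figures agree to within rounding.

```python
# Shares of graduates in the labour force, as reported in the article (percent).
# Assumption: these five categories together make up the "employed" group.
employed_categories = {
    "full-time": 46.9,
    "part-time or temporary": 24.0,
    "freelance": 5.1,
    "accepted offer, not yet started": 2.4,
    "entrepreneur": 0.6,
}
unemployed = 21.1
reported_employed = 78.9

tallied = sum(employed_categories.values())
print(f"tallied employed: {tallied:.1f}%")
print(f"reported employed: {reported_employed}%")
print(f"reported employed + unemployed: {reported_employed + unemployed:.1f}%")
```

The tallied categories come to 79.0%, a tenth of a point above the reported 78.9%. A gap that size is what rounding each category to one decimal place (and part-time work to a whole number) would produce.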

Not all private university graduates fared the same

Among the surveyed private university graduates, not all fared equally.

Health science graduates led in both employment and pay: 76.5% are in full-time permanent roles, with median salaries at S$3,935. This is followed by the Sciences at 57.5% employment, while engineering trailed at 49.4%. Information and digital technologies tied engineering for the second-highest pay at S$3,900.

When it came to salaries by institution, James Cook University graduates took home the most at S$3,700 a month, followed by those from the Management Development Institute of Singapore at S$3,580.

SSG’s director-general for private education, Angela Tan, struck an optimistic note.

“The employment outcomes for PEI graduates have remained steady, reflecting their adaptability and readiness for the workforce in today’s fast-changing job market,” she said, adding that SSG will continue working with partners to provide skills and career guidance.


Featured Image Credit: Pexels

Tech

Dyson Spot+Scrub Ai Robot Vacuum Review (2026)

The vacuum failed to clean up two of the three test Cheerios that I strategically placed around my main floor to see which nooks and crannies it could reach. Both sat under small overhangs (one an Ikea Billy bookshelf, the other a freestanding cabinet) that the Spot+Scrub couldn't get under, and it doesn't have an extendable arm to work around the problem.

I ran into a similar issue upstairs. My bathroom cabinets are the same basic builder-grade set as my downstairs kitchen, but the Spot+Scrub managed to wedge itself underneath my primary bathroom cabinets and then struggled to get back out. It did the same thing with my low bed frame, forcing itself underneath after many attempts and then getting stuck. I marked the bed as a no-cleaning zone in the app, but as with the kitchen island, the Dyson map doesn't show where my bed is, so I had to guesstimate, which left a good portion of the bedroom without any vacuuming.

Photograph: Nena Farrell

Still, it did a good job of cleaning my upstairs carpet. While this robot vacuum can map multiple floors, the process wasn't as smooth as I had hoped. When the Spot+Scrub finishes cleaning a floor without a base station, it returns to its starting position. Once you move it to the dock, it just starts charging without emptying. That means when it goes to work on my main floor, the mop pad hasn't been cleaned, dry debris is still left in the vacuum, and the dirty water chamber hasn't been dried. It's almost as if it resets itself, forgetting its last function.

So if you're using it on multiple floors, save the one with the docking station for last. I ended up having the Spot+Scrub clean my main floor after the upstairs, just to make sure the vacuum would be fully emptied and dried. You also have to start a cleaning run while the robot is still in its docking station so it can prep for mopping, then pause it (in the Dyson app or on the vacuum itself) once it rolls out, so you can lift it and carry it to another floor.

AI Wars

Photograph: Nena Farrell

Of course, there’s the feature this vacuum is named for: its built-in AI that spots stains and scrubs them away. The Spot+Scrub uses an HD camera to inspect the floors, then uses AI to analyze what it sees and know when to scrub trouble spots. It’ll go back and forth over those spots, and the AI will calculate how often it needs to do so to remove the stain.

Tech

CIA Reportedly Used Secret Quantum Tool To Find Downed Airman in Iran

alternative_right quotes a report from the New York Post: The CIA used a futuristic new tool called “Ghost Murmur” to find and rescue the second American airman who was shot down in southern Iran, The Post has learned. The secret technology uses long-range quantum magnetometry to find the electromagnetic fingerprint of a human heartbeat and pairs the data with artificial intelligence software to isolate the signature from background noise, two sources close to the breakthrough said. It was the tool’s first use in the field by the spy agency — and was alluded to Monday afternoon by President Trump and CIA Director John Ratcliffe at a White House briefing. “It’s like hearing a voice in a stadium, except the stadium is a thousand square miles of desert,” a source briefed on the program told The Post. “In the right conditions, if your heart is beating, we will find you.” The relatively barren landscape made for “an ideal first operational use” of Ghost Murmur, the first source noted.

“Normally this signal is so weak that it can only be measured in a hospital setting with sensors pressed nearly against the chest,” the source said. “But advances in a field known as quantum magnetometry — specifically sensors built around microscopic defects in synthetic diamonds — have apparently made it possible to detect these signals at dramatically greater distances.”

“The capability is not omniscient. It works best in remote, low-clutter environments and requires significant processing time,” this person added.

Tech

This could be our first look at OnePlus’ upcoming gaming handheld

OnePlus has been everywhere in the news lately, and not always for the calmest reasons. Reports recently suggested the brand was pulling back from several major markets, right after the exit of its India CEO, which is a pretty big deal considering how important India is for the company. And yet, here it is, doing the exact opposite of slowing down.

Just this month, OnePlus dropped the Nord 6, and now fresh reports hint that it's stepping into the handheld gaming space as well. It's an interesting move, especially in a year that already feels like a turning point for the brand. If anything, this new direction makes it clear that OnePlus isn't retreating; it's reshuffling and trying something bold.

OnePlus just teased something that refuses to be boring

A well-known tipster, Digital Chat Station, has shared what appears to be the first look at OnePlus’s upcoming gaming handheld on Weibo. And from that single image, there’s already quite a bit to unpack. The device seems to lean into a clean, almost boxy design, with a square-ish profile that makes it stand out from the usual rounded handhelds. Interestingly, there’s also a rear camera module on the back panel, which adds a bit of curiosity to the mix.

Another tipster, Bald Panda, also on Weibo, claims the handheld could feature an 8-inch display and run on a MediaTek Dimensity chipset. While the exact processor hasn’t been confirmed yet, all signs point towards MediaTek being the brains behind it. As for the design, it’s got a sleek black finish paired with a contrasting purple hand rest, which honestly sounds like a bit of a flex. It gives the device a premium, slightly playful look, rather than going all-in on the usual serious gamer aesthetic.

I’m genuinely excited to see OnePlus step into a completely new space. It’s not an easy category to crack, and building credibility here will take time. That said, OnePlus doesn’t exactly have a history of doing things halfway. When it commits to something, it usually brings a certain level of polish and thoughtfulness to the table. So while this handheld might be new territory for the brand, there’s a good chance it won’t feel like a first attempt. If anything, this could be OnePlus testing its limits a little, and that’s where things tend to get interesting.

Tech

This Sennheiser Momentum 4 deal slashes $250 off the best ANC headphones around

The Sennheiser Momentum 4 has earned a reputation as one of the most capable over-ear headphones at its price point.

A $250 discount makes a strong case for picking a pair up right now, bringing the Sennheiser Momentum 4 Wireless down to $199.95 from its original $449.95 for noise-cancelling headphones that genuinely punch above their price.

Sennheiser’s Momentum 4 stays locked at its Amazon sale price, giving you 56% off high‑end, over‑ear audio


The 42mm dynamic drivers are a meaningful part of that story, delivering a frequency range of 6Hz to 22kHz that captures both the deep sub-bass rumble in electronic music and the fine harmonic detail in acoustic recordings that smaller drivers tend to smear.

Support for aptX Adaptive Bluetooth helps maintain that audio quality wirelessly, adjusting the bitrate dynamically so that the signal holds up even in environments with heavy wireless interference, like a crowded commuter train or a busy airport.

Adaptive noise cancellation handles the environmental side of things, and the transparency mode lets you flip back into the world around you without removing the headphones, which matters when you are navigating a city or need to catch a platform announcement.

Four beamforming microphones handle calls with enough directional precision to suppress wind and ambient noise independently, so the person on the other end hears your voice rather than the background of wherever you happen to be.

Battery life is where the 4.5-star Momentum 4 genuinely separates itself from the competition in this category, with 60 hours of playback on a single charge, meaning most users will go weeks between top-ups under realistic daily use patterns.

The foldable design and included carry case make the headphones practical for travel as well, and the package also includes a USB-C cable and a 3.5mm to 2.5mm audio cable for wired listening when Bluetooth is not an option.

Sennheiser’s Smart Control Plus app rounds out the experience, giving you access to a parametric equaliser, preset sound modes, and granular controls over noise cancellation and transparency levels.

For commuters and frequent travellers who will lean on both the ANC and the battery capacity daily, the Momentum 4 at $199.95 represents one of the strongest value propositions currently available in the premium over-ear headphone market.

An excellent pair of wireless headphones that deliver a balanced, neutral presentation, long battery life and very good noise cancellation. The Sennheiser Momentum 4 Wireless's all-round performance is excellent, though the Sony WH-1000XM5 are better in most respects and available for similar money.

- Great comfort
- Clear, musical audio
- Very good noise cancellation
- Massive battery life
- Excellent wireless performance
- Functional look
- Not the best ANC at this price
- Beaten for call quality

Tech

Amazon S3 Files gives AI agents a native file system workspace, ending the object-file split that breaks multi-agent pipelines

AI agents typically work against file systems, using standard tools to navigate directories and read files by path.

The challenge, however, is that much enterprise data lives in object storage systems, notably Amazon S3. Object stores serve data through API calls, not file paths. Bridging that gap has required running a separate file system layer alongside S3, duplicating data, and maintaining sync pipelines to keep the two aligned.

The rise of agentic AI makes that challenge even harder, and it was affecting Amazon’s own ability to get things done. Engineering teams at AWS using tools like Kiro and Claude Code kept running into the same problem: Agents defaulted to local file tools, but the data was in S3. Downloading it locally worked until the agent’s context window compacted and the session state was lost.

Amazon’s answer is S3 Files, which mounts any S3 bucket directly into an agent’s local environment with a single command. The data stays in S3, with no migration required. Under the hood, AWS connects its Elastic File System (EFS) technology to S3 to deliver full file system semantics, not a workaround. S3 Files is available now in most AWS Regions.

“By making data in S3 immediately available, as if it’s part of the local file system, we found that we had a really big acceleration with the ability of things like Kiro and Claude Code to be able to work with that data,” Andy Warfield, VP and distinguished engineer at AWS, told VentureBeat.

The difference between file and object storage and why it matters

S3 was built for durability, scale and API-based access at the object level. Those properties made it the default storage layer for enterprise data. But they also created a fundamental incompatibility with the file-based tools that developers and agents depend on.

“S3 is not a file system, and it doesn’t have file semantics on a whole bunch of fronts,” Warfield said. “You can’t do a move, an atomic move of an object, and there aren’t actually directories in S3.”
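To make Warfield's two points concrete, here is a toy, in-memory model of an object store in Python. It is a hypothetical illustration only: the `ToyObjectStore` class and its methods are invented here and are not the S3 API. Keys live in one flat namespace, so a "directory" is just a shared key prefix, and a "move" has to be done as a copy followed by a delete, two separate operations between which another client can see both keys.

```python
from typing import Callable, Dict, List, Optional


class ToyObjectStore:
    """Hypothetical in-memory object store: a flat map from key to bytes."""

    def __init__(self) -> None:
        self._objects: Dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._objects[key] = data

    def get(self, key: str) -> bytes:
        return self._objects[key]

    def list_prefix(self, prefix: str) -> List[str]:
        # No real directories exist: "logs/" is just a string prefix on keys.
        return sorted(k for k in self._objects if k.startswith(prefix))

    def move(
        self,
        src: str,
        dst: str,
        between: Optional[Callable[["ToyObjectStore"], None]] = None,
    ) -> None:
        # A "move" is two independent operations, so it is not atomic.
        self.put(dst, self.get(src))  # step 1: copy to the new key
        if between is not None:
            between(self)             # a concurrent reader could look here
        del self._objects[src]        # step 2: delete the old key


store = ToyObjectStore()
store.put("logs/2025/app.log", b"ERROR disk full\n")
store.put("logs/2025/web.log", b"INFO ok\n")

# "Listing a directory" is really a prefix query over flat keys.
print(store.list_prefix("logs/"))

# Capture what a concurrent reader would see halfway through the move.
seen_mid_move: List[str] = []
store.move(
    "logs/2025/app.log",
    "archive/app.log",
    between=lambda s: seen_mid_move.extend(s.list_prefix("")),
)
print(seen_mid_move)          # old and new keys are both visible
print(store.list_prefix(""))  # after step 2, only the new key remains
```

On a POSIX file system, by contrast, `os.replace` within a single file system is one atomic rename, which is the kind of guarantee file-based tools and agents tend to assume.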

Previous attempts to bridge that gap relied on FUSE (Filesystem in Userspace), a software layer that lets developers mount a custom file system in user space without changing the underlying storage. Tools like AWS's own Mountpoint for Amazon S3, Google's gcsfuse and Microsoft's blobfuse2 all used FUSE-based drivers to make their respective object stores look like a file system.

The problem, Warfield noted, is that the underlying object stores still weren't file systems. Those drivers either faked file behavior by stuffing extra metadata into buckets, which broke the object API view, or refused file operations that the object store couldn't support.

S3 Files takes a different architecture entirely. AWS is connecting its EFS (Elastic File System) technology directly to S3, presenting a full native file system layer while keeping S3 as the system of record. Both the file system API and the S3 object API remain accessible simultaneously against the same data.

How S3 Files accelerates agentic AI

Before S3 Files, an agent working with object data had to be explicitly instructed to download files before using tools. That created a session state problem. As agents compacted their context windows, the record of what had been downloaded locally was often lost.

“I would find myself having to remind the agent that the data was available locally,” Warfield said.

Warfield walked through the before-and-after for a common agent task: log analysis. In the object-only case, a developer using Kiro or Claude Code to work with log data would need to tell the agent where the log files are located and instruct it to download them. If the logs are mounted on the local file system, the developer can simply tell the agent that the logs are at a specific path, and the agent can immediately start working through them.
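Once logs sit under a mounted path, an agent can use ordinary file tools rather than object API calls. The minimal Python sketch below assumes a hypothetical mount point such as `/mnt/s3files/app-logs`, log files named `*.log`, and lines that begin with a level such as `ERROR` or `INFO`; all three are assumptions for illustration. The usage points it at a temporary local directory so it runs anywhere.

```python
import tempfile
from collections import Counter
from pathlib import Path


def count_log_levels(root: Path) -> Counter:
    """Count lines by their first word (e.g. ERROR, INFO) across *.log files.

    On a mounted bucket, `root` would be a hypothetical path such as
    /mnt/s3files/app-logs; here it is any local directory.
    """
    counts: Counter = Counter()
    for log_file in sorted(root.rglob("*.log")):  # plain recursive directory walk
        with log_file.open(encoding="utf-8") as fh:
            for line in fh:
                parts = line.split(maxsplit=1)
                if parts:  # skip blank lines
                    counts[parts[0]] += 1
    return counts


# Stand-in for the mounted bucket: a temporary local directory.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "2025" / "04").mkdir(parents=True)
    (root / "2025" / "04" / "api.log").write_text(
        "INFO started\nERROR timeout\nERROR timeout\n", encoding="utf-8"
    )
    (root / "worker.log").write_text("INFO ok\nWARN slow\n", encoding="utf-8")
    result = count_log_levels(root)

print(result.most_common())
```

Nothing in the function knows about S3: the point is that the same code works unchanged whether `root` is a local folder or a mounted bucket.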

For multi-agent pipelines, multiple agents can access the same mounted bucket simultaneously. AWS says thousands of compute resources can connect to a single S3 file system at the same time, with aggregate read throughput reaching multiple terabytes per second — figures VentureBeat was not able to independently verify.

Shared state across agents works through standard file system conventions: subdirectories, notes files and shared project directories that any agent in the pipeline can read and write. Warfield described AWS engineering teams using this pattern internally, with agents logging investigation notes and task summaries into shared project directories.
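The notes-file pattern needs some care when several agents write at the same time. One common convention, sketched here as an assumption rather than AWS's documented practice, is for each agent to publish its own note file in the shared project directory by writing a temporary file and renaming it into place with `os.replace`, so readers never see a half-written note. It relies on the mounted file system providing POSIX rename semantics; the `notes/` layout, the `write_note`/`read_notes` helpers and the `incident-42` project name are all hypothetical.

```python
import json
import os
import tempfile
from pathlib import Path
from typing import List


def write_note(project_dir: Path, agent_id: str, task: str, summary: str) -> Path:
    """Atomically publish one agent's note as notes/<agent_id>.json."""
    notes_dir = project_dir / "notes"
    notes_dir.mkdir(parents=True, exist_ok=True)
    final_path = notes_dir / f"{agent_id}.json"
    # Write to a temp file in the same directory, then rename it into place.
    fd, tmp_name = tempfile.mkstemp(dir=notes_dir, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as fh:
        json.dump({"agent": agent_id, "task": task, "summary": summary}, fh)
    os.replace(tmp_name, final_path)  # atomic rename on POSIX file systems
    return final_path


def read_notes(project_dir: Path) -> List[dict]:
    """Read every published note; in-progress *.tmp files are ignored."""
    notes = []
    for path in sorted((project_dir / "notes").glob("*.json")):
        notes.append(json.loads(path.read_text(encoding="utf-8")))
    return notes


with tempfile.TemporaryDirectory() as tmp:
    project = Path(tmp) / "incident-42"
    write_note(project, "agent-a", "triage", "placeholder summary A")
    write_note(project, "agent-b", "root-cause", "placeholder summary B")
    shared = read_notes(project)

print([n["agent"] for n in shared])
```

Giving each agent its own file avoids relying on concurrent appends to one shared file, which network file systems do not always make atomic.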

For teams building RAG pipelines on top of shared agent content, S3 Vectors, launched at AWS re:Invent in December 2025, layers on top for similarity search and retrieval-augmented generation against that same data.
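As a rough illustration of what similarity search does, here is a brute-force cosine-similarity lookup in plain Python. It is a conceptual sketch only: it does not use the S3 Vectors API, the three-dimensional vectors and keys are made up, and a real system would use embeddings from a model and an index rather than a linear scan.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(
    query: Sequence[float], index: Dict[str, List[float]], k: int = 2
) -> List[Tuple[str, float]]:
    """Return the k keys whose vectors are most similar to the query."""
    scored = [(key, cosine(query, vec)) for key, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hypothetical embeddings for files that agents wrote to a shared directory.
index = {
    "notes/agent-a.json": [0.9, 0.1, 0.0],
    "notes/agent-b.json": [0.1, 0.9, 0.2],
    "logs/summary.txt": [0.8, 0.3, 0.1],
}
print(top_k([1.0, 0.2, 0.0], index))
```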

What analysts say: this is not just a better FUSE

AWS is positioning S3 Files against file access options for rival object stores, such as Azure Blob Storage's NFS support and Google Cloud Storage FUSE. For AI workloads, the meaningful distinction is not primarily performance.

“S3 Files eliminates the data shuffle between object and file storage, turning S3 into a shared, low-latency working space without copying data,” Jeff Vogel, analyst at Gartner, told VentureBeat. “The file system becomes a view, not another dataset.”

With FUSE-based approaches, each agent maintains its own local view of the data. When multiple agents work simultaneously, those views can potentially fall out of sync.
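The stale-view failure mode can be shown with a toy model. In this hypothetical Python sketch, the classes are invented for illustration and do not model any specific FUSE driver. One agent caches its own directory listing, as described for FUSE-based approaches above, while another reads a single shared namespace. After a third party writes a new file, only the cached view is out of date. Real drivers usually expire cached metadata after some time rather than never; the point is the window in which the two views disagree.

```python
from typing import List, Set


class SharedStore:
    """Hypothetical backing store that every agent ultimately reads from."""

    def __init__(self) -> None:
        self.files: Set[str] = set()


class CachedView:
    """Per-agent view that snapshots the listing once and reuses it."""

    def __init__(self, store: SharedStore) -> None:
        self.store = store
        self._cached = sorted(store.files)  # snapshot taken at "mount" time

    def listdir(self) -> List[str]:
        return self._cached  # never refreshed, so it can go stale


class SharedView:
    """View that always reads the single shared namespace."""

    def __init__(self, store: SharedStore) -> None:
        self.store = store

    def listdir(self) -> List[str]:
        return sorted(self.store.files)


store = SharedStore()
store.files.add("features/v1.parquet")
cached_agent = CachedView(store)
shared_agent = SharedView(store)

store.files.add("features/v2.parquet")  # another agent publishes a new file

print(cached_agent.listdir())  # still only v1: stale metadata
print(shared_agent.listdir())  # sees both v1 and v2
```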

“It eliminates an entire class of failure modes including unexplained training/inference failures caused by stale metadata, which are notoriously difficult to debug,” Vogel said. “FUSE-based solutions externalize complexity and issues to the user.”

For agents, the implications go further still: what the architecture unlocks in practice matters more than the architectural argument itself.

“For agentic AI, which thinks in terms of files, paths, and local scripts, this is the missing link,” Dave McCarthy, analyst at IDC, told VentureBeat. “It allows an AI agent to treat an exabyte-scale bucket as its own local hard drive, enabling a level of autonomous operational speed that was previously bottled up by API overhead associated with approaches like FUSE.”

Beyond the agent workflow, McCarthy sees S3 Files as a broader inflection point for how enterprises use their data.

“The launch of S3 Files isn’t just S3 with a new interface; it’s the removal of the final friction point between massive data lakes and autonomous AI,” he said. “By converging file and object access with S3, they are opening the door to more use cases with less reworking.”

What this means for enterprises

For enterprise teams that have been maintaining a separate file system alongside S3 to support file-based applications or agent workloads, that architecture is now unnecessary.

For enterprise teams consolidating AI infrastructure on S3, the practical shift is concrete: S3 stops being the destination for agent output and becomes the environment where agent work happens.

“All of these API changes that you’re seeing out of the storage teams come from firsthand work and customer experience using agents to work with data,” Warfield said. “We’re really singularly focused on removing any friction and making those interactions go as well as they can.”

-

NewsBeat6 days ago

NewsBeat6 days agoSteven Gerrard disagrees with Gary Neville over ‘shock’ Chelsea and Arsenal claim | Football

-

Business6 days ago

Business6 days agoNo Jackpot Winner and $194 Million Prize Rolls Over

-

Fashion5 days ago

Fashion5 days agoWeekend Open Thread: Spanx – Corporette.com

-

Crypto World7 days ago

Crypto World7 days agoGold Price Prediction: Worst Month in 17 Years fo Save Haven Rock

-

Business3 days ago

Business3 days agoThree Gulf funds agree to back Paramount’s $81 billion takeover of Warner, WSJ reports

-

Business4 days ago

Business4 days agoExpert Picks for Every Need

-

Sports4 days ago

Sports4 days agoIndia men’s 4x400m and mixed 4x100m relay teams register big progress | Other Sports News

-

Business6 days ago

Business6 days agoLogin and Checkout Issues Spark Merchant Frustration

-

Tech15 hours ago

Tech15 hours agoHow Long Can You Drive With Expired Registration? What Florida Law Says

-

Business3 days ago

Business3 days agoNo Jackpot Winner, Prize to Climb to $231 Million

-

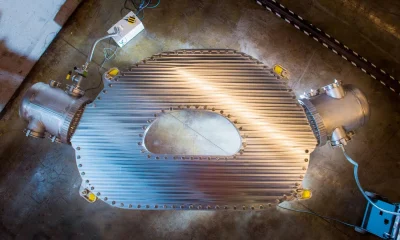

Tech6 days ago

Tech6 days agoCommonwealth Fusion Systems leans on magnets for near-term revenue

-

Fashion2 days ago

Fashion2 days agoMassimo Dutti Offers Inspiration for Your Summer Mood Board

-

Crypto World7 days ago

Crypto World7 days agoRipple rolls out enterprise crypto treasury platform for corporates

-

Tech7 days ago

Tech7 days agoDrawing Tablet Controls Laser In Real-Time

-

Crypto World7 days ago

Crypto World7 days agoWhy It’s Partnering, Not Issuing

-

Politics5 days ago

Wings Over Scotland | The quality of mercy

-

Sports7 days ago

Sports7 days agoSteal Gary Woodland’s subtle power move for longer drives

-

Tech7 days ago

Tech7 days agoBattery Tester Outperforms Cheaper Options

-

Business4 days ago

Business4 days agoAkebia Therapeutics, Inc. (AKBA) Discusses Pipeline Progress and Strategic Focus on Kidney Disease Treatments at R&D Day – Slideshow

-

Fashion20 hours ago

Fashion20 hours agoLet’s Discuss: DEI in 2026

You must be logged in to post a comment Login