Image: “The great exploit of lieutenant Warneford 1916 England” by Gordon Crosby, public domain.

After all the crashing and burning of Imperial Germany’s Zeppelins in the later part of WWI – once the Brits managed to build interceptors that could reach their lofty altitude, and figured out the trick of using incendiary rounds to set off the hydrogen lift gas – there was a certain desire in airship circles to avoid fires. In the USA, that mostly took the form of substituting helium for hydrogen. Sure, it didn’t lift quite as well, but it also didn’t explode.

Still, supplies of helium were– and are– very much limited, and at least on a rigid Zeppelin, the hydrogen wasn’t even the most flammable part. As has become widely known, thanks in large part to the Mythbusters episode about the Hindenburg disaster, the doped cotton skin of that era was more flammable than some firestarters you can buy today.

That’s a problem, because, as came up in the comments of our last airship article, rigid airships beat blimps largely on Rule of Cool. Who invented the blimp? Well, arguably it was Henri Giffard with his steam-driven balloon in 1852, but not many people have ever heard his name. Who invented the rigid airship? You know his name: Ferdinand Adolf Heinrich August Graf von Zeppelin. No relation. Probably. Well, admittedly most people don’t know the full name, but Count Zeppelin is still practically a household name over a century after his death. His invention was just that much cooler.

That unavoidable draw of coolness led to the Detroit Airship Company and their amazing tin blimp. The idea was the brainchild of a man named Ralph Upson, and is startling in its simplicity: why not take the all-metal, monocoque design that was just then being so successfully applied to heavier-than-air flight, and use it to build an airship?

Of course everyone’s initial reaction to the idea is that it’s absurd: metal is too heavy to fly! They said that about airplanes once, too, but airships are surely a different matter. Airships must be lighter than air. Could a skin of aluminum really hold enough lift gas to keep itself in the air? Upson convinced no lesser a light than Henry Ford to back him, and the Detroit Aircraft Company ultimately found a customer for the design in the US Navy.

Image credit: unknown, public domain.

It helped that Upson wasn’t exactly the first to come up with this idea: David Schwarz had tried to build a metal airship at the end of the 19th century. Arguably it is he who invented the rigid airship, not my aura farming not-ancestor. His design had metal skin over an internal framework, rather than the lighter monocoque construction Upson was exploring. While it was by no means a success, being destroyed on its maiden flight, the fact that it had a maiden flight at all at least proved that metal structures could be made light enough to get off the ground.

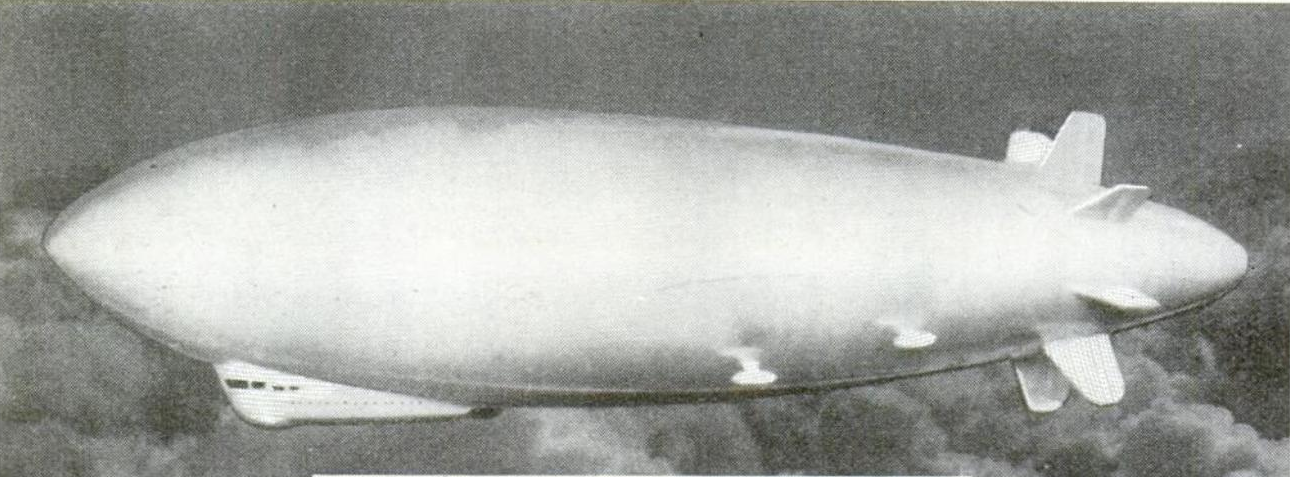

The Detroit Airship Company’s first– and only, as it turned out– prototype was much more successful, as we will see. It was immediately nicknamed the “tin blimp” by the press after it was unveiled in 1929, though that name was incorrect in every particular. It wasn’t tin, and it wasn’t a blimp. Well, not exactly, anyway. More on that later.

How To Make a Metal Balloon

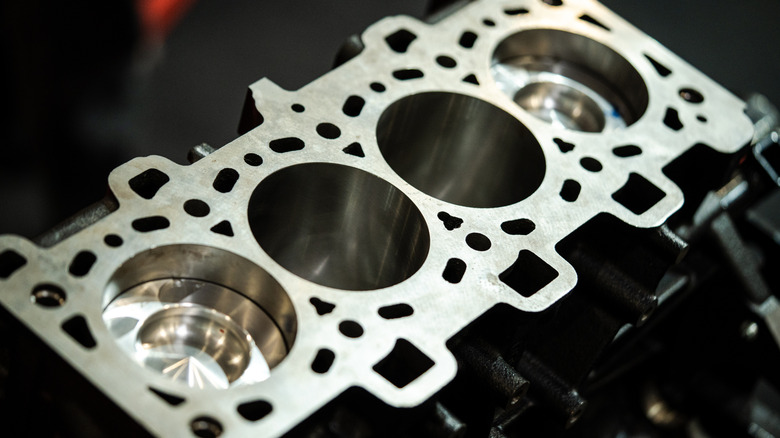

Compared to the various frames, longitudinal girders, bracing wires and fabric-backed gas bags of a Zeppelin-type airship, the ZMC-2’s balloon was simplicity itself. The balloon–if you can call it that–was a hollow spheroid built up of strips of 0.0095” (0.24 mm) Alclad sheeting. Alclad is a sort of metallic composite material: a sheet of duralumin coated with a very thin protective layer of pure aluminum to provide corrosion resistance. The ZMC-2 was actually the first major use of Alclad, but hardly the last. At least for skins, most aircraft aluminum is actually Alclad, as alloys with the desired strength-to-weight ratio are generally too vulnerable to corrosion to be exposed to the elements.

So, contrary to popular belief, no tin was involved. And the sturdy aluminum spheroid was not at all flexible, so the ZMC-2 was not really any kind of blimp. It also was not, technically, a Zeppelin. It was a whole new beast: a metalclad airship.
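For anyone still wondering whether a metal skin that thin can really float itself, here is a back-of-the-envelope sketch. It treats the hull as a perfect prolate spheroid (the real shape was only roughly that) and uses the thickness, length, diameter and gas volume quoted in this article. The duralumin density and the helium lift figure are my own assumed round numbers, and rivets, seams, frames, fins and the car are ignored entirely.

```python
import math

# Figures quoted in the article
SKIN_THICKNESS_M = 0.00024   # 0.0095 in Alclad sheet
HULL_LENGTH_M = 45.5
HULL_DIAMETER_M = 16.0
GAS_VOLUME_M3 = 5663.0       # 200,000 cu ft

# Assumptions, NOT from the article
DURALUMIN_DENSITY_KG_M3 = 2780.0  # typical for this family of alloys
HELIUM_LIFT_KG_PER_M3 = 1.06      # pure helium, sea level, about 15 C


def prolate_spheroid_area(length_m: float, diameter_m: float) -> float:
    """Surface area of a prolate spheroid (length must exceed diameter)."""
    a = length_m / 2.0    # semi-major axis, along the hull
    b = diameter_m / 2.0  # semi-minor axis, the hull radius
    e = math.sqrt(1.0 - (b / a) ** 2)  # eccentricity
    return 2.0 * math.pi * b * b * (1.0 + a / (b * e) * math.asin(e))


def skin_mass_kg() -> float:
    """Mass of the bare skin: area times thickness times density."""
    area = prolate_spheroid_area(HULL_LENGTH_M, HULL_DIAMETER_M)
    return area * SKIN_THICKNESS_M * DURALUMIN_DENSITY_KG_M3


def gross_lift_kg() -> float:
    """Mass the helium could float if the hull were completely full."""
    return GAS_VOLUME_M3 * HELIUM_LIFT_KG_PER_M3


if __name__ == "__main__":
    fraction = skin_mass_kg() / gross_lift_kg()
    print(f"skin: {skin_mass_kg():.0f} kg, gross lift: {gross_lift_kg():.0f} kg")
    print(f"bare skin uses about {fraction:.0%} of the gross lift")
```

Under those assumptions the bare skin eats something like a fifth of the gross lift: a real cost, but nowhere near too heavy to fly.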

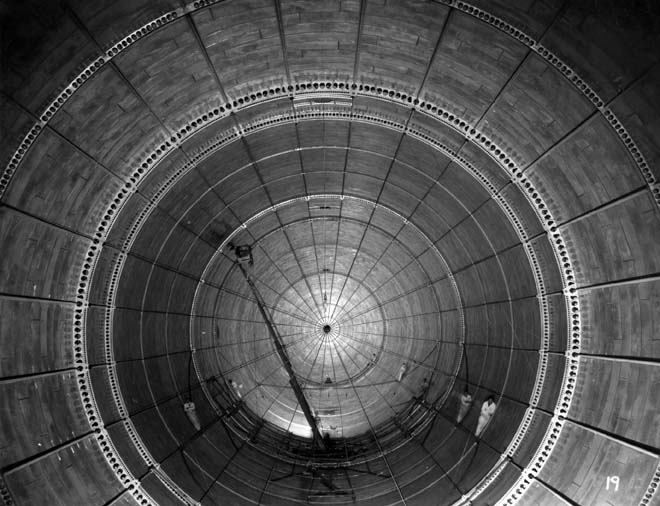

There is a film of the ship being built, and it’s rather fascinating. The strips of Alclad are rolled into conical sections and riveted together, with a bituminous material serving as sealant. Even today, you would not want to weld this material, so instead three and a half million 0.035” (0.89 mm) rivets hold the plates together. A special automated riveting machine, invented for the construction of the metalclad airship, “sewed” three rows simultaneously at a rate of five thousand rivets per hour.

Just like most monocoque airplanes, then and now, the skin didn’t carry the entire load: there were five circular frames of various diameters, flanged and full of lightening holes just like the ribs of an aeroplane fuselage, to help the ‘gas bag’ hold its shape. The gondola would attach to two of these.

Amazingly, with all of those rivets and the low-tech sealant, the metalclad held helium much better than its rivals. Yes, helium. Helium was more expensive than hydrogen, but the US Navy had already transitioned away from the more volatile gas and had no interest in going back. All of their groundside infrastructure was centered around helium. If that meant that the fireproof metalclad would not be able to lift quite so much as it otherwise might, well, too bad.
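How much lift does helium actually give up? A minimal sketch, assuming ideal gases at the same temperature and pressure (so density scales with molar mass), pure lifting gas, and standard sea-level air. None of these constants come from the ZMC-2’s records; they are textbook values.

```python
# Rough buoyancy comparison: hydrogen vs. helium.
# Assumption: ideal gases at equal temperature and pressure,
# so each gas's density is proportional to its molar mass.

MOLAR_MASS_G_PER_MOL = {
    "air": 28.97,       # dry air, approximate mean
    "hydrogen": 2.016,  # H2
    "helium": 4.003,    # He
}

AIR_DENSITY_KG_M3 = 1.225  # standard atmosphere, sea level, 15 C


def net_lift_kg_per_m3(gas: str) -> float:
    """Mass that one cubic metre of pure `gas` can hold up in air."""
    ratio = MOLAR_MASS_G_PER_MOL[gas] / MOLAR_MASS_G_PER_MOL["air"]
    return AIR_DENSITY_KG_M3 * (1.0 - ratio)


def helium_penalty() -> float:
    """Fraction of hydrogen's lift that is lost by switching to helium."""
    return 1.0 - net_lift_kg_per_m3("helium") / net_lift_kg_per_m3("hydrogen")


if __name__ == "__main__":
    for gas in ("hydrogen", "helium"):
        print(f"{gas}: {net_lift_kg_per_m3(gas):.3f} kg of lift per cubic metre")
    print(f"helium gives up about {helium_penalty():.1%} of the lift")
```

On these idealized numbers the penalty is only a few percent of gross lift. That sounds small, but it comes straight out of useful load, which on a ship this size was not generous to begin with.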

Image: Naval History and Heritage Command

OK, It’s a Bit Like a Blimp

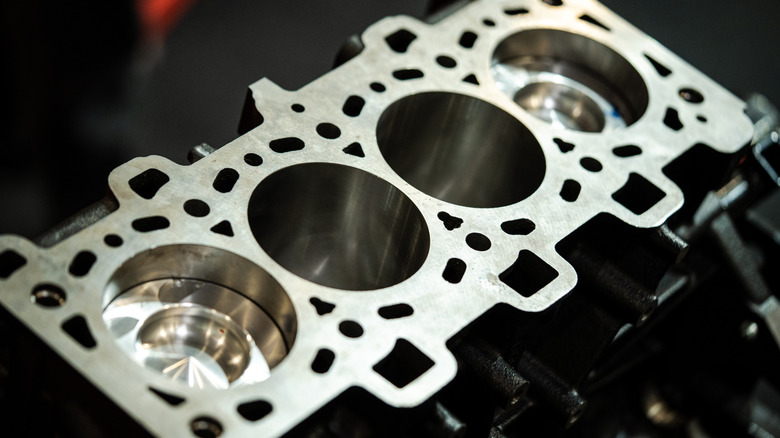

Aside from outward appearance, the metalclad airship is similar to a blimp in some respects. For one, like the blimps that would go on to serve into and well past WWII, and unlike every Zeppelin ever built, the metalclad design had no internal subdivisions. The great metal balloon, 52’ 8” (16 m) in diameter and 149’ 5” (45.5 m) long, held two air bladders, one fore and one aft, but was otherwise cavernously empty.

Just like the blimps, those air bladders were used for trim: pressurizing the fore bladder makes the nose heavy and trims the ship nose-down; likewise, pressurizing the rear bladder trims the nose upwards. With both under pressure, the overall excess lift of the gasbag is reduced slightly, though the hull was not designed to withstand enough pressure for that to be notably useful at affecting overall buoyancy. The maximum the ZMC-2’s hull could take was said to be about two inches of water, or 0.07 psig (0.5 kPa).
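Two inches of water sounds like nothing, so here is a small sketch that converts it and then multiplies it over the hull’s cross-section. The conversion constants are the conventional ones. The 16 m diameter is from this article, and treating the widest section as a plain circle is my simplification.

```python
import math

PA_PER_INCH_OF_WATER = 249.089  # conventional inch of water, in pascals
PA_PER_PSI = 6894.757           # pascals per pound-force per square inch


def inches_of_water_to_pa(inches: float) -> float:
    """Convert a pressure in inches of water column to pascals."""
    return inches * PA_PER_INCH_OF_WATER


def pa_to_psi(pascals: float) -> float:
    """Convert pascals to pounds-force per square inch."""
    return pascals / PA_PER_PSI


def axial_force_newtons(gauge_pa: float, diameter_m: float) -> float:
    """Force the gauge pressure exerts across a circular cross-section."""
    return gauge_pa * math.pi * (diameter_m / 2.0) ** 2


if __name__ == "__main__":
    limit_pa = inches_of_water_to_pa(2.0)
    print(f"{limit_pa:.0f} Pa = {pa_to_psi(limit_pa):.3f} psi")
    force_kn = axial_force_newtons(limit_pa, 16.0) / 1000.0
    print(f"about {force_kn:.0f} kN across a 16 m section")
```

Spread over a disc 16 m across, even that whisper of pressure adds up to something on the order of 100 kN pushing outward along the hull’s axis, which gives a feel for how so little gas pressure can meaningfully stiffen a thin shell.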

Also like a blimp, that pressure was required to resist the force of aerodynamic drag, at least at high speeds. The aluminum skin could hold its own shape, obviously, and at low speeds it was safe to fly at atmospheric pressure, but at speeds above about half of the never-exceed speed (VNE) there was a risk of buckling the nose. So, like a blimp–or the balloon tanks on the much later Atlas rockets–gas pressure was used as reinforcement. For that reason, there was much consternation at the time–and since–over whether to count the metalclad as a rigid or non-rigid airship. Ultimately the US Navy, whose code was “Z” for airship and “R” for rigid or “S” for non-rigid, called it ZMC– z-airship, metal clad. That dodged the issue well enough.

A larger ship might have been able to afford the weight of stronger aluminum to take the buffeting of high-speed flight, thanks to the square-cube law, but the comparatively tiny ZMC-2 lacked that lift capacity. Even the larger ships, though, were always intended to use pressure reinforcement; it’s a key part of the metalclad concept. Why waste lift capacity on metal when the gas can do it for you? As it was, the useful load of the prototype ZMC-2 was only 750 lb (340 kg). The ZMC-2 wasn’t designed for useful load, though; it was only ever meant as a testbed.
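The square-cube law is easy to sketch. Assuming a geometrically similar hull (same shape, just scaled) and the same skin gauge, skin area grows with the square of the linear scale while gas volume, and therefore lift, grows with the cube. The function and key names below are mine, purely for illustration.

```python
def scale_hull(volume_ratio: float) -> dict:
    """Scale a geometrically similar hull to `volume_ratio` times the gas volume.

    Assumes identical shape and identical skin thickness, so skin mass
    tracks surface area and lift tracks volume.
    """
    k = volume_ratio ** (1.0 / 3.0)  # linear scale factor
    return {
        "length_ratio": k,
        "skin_area_ratio": k ** 2,
        # Skin mass carried per unit of lift, relative to the original hull
        "skin_mass_per_unit_lift": k ** 2 / volume_ratio,
    }


if __name__ == "__main__":
    # Eight times the gas volume means twice the linear dimensions
    for name, value in scale_hull(8.0).items():
        print(f"{name}: {value:.2f}")
```

At eight times the volume the hull is twice as long, carries four times the skin, and spends only half as much of each unit of lift on that skin. That spare margin is what could go into thicker metal, or into payload.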

Flying the Tin Blimp

As a testbed, the ZMC-2 was reasonably successful, and also a complete failure. It was reasonably successful in that its logbooks recorded 2,265 incident-free hours over 725 flights between its debut in August 1929 and its grounding in August 1939. In those ten years, it was found to fly well, in spite of its oddities.

The control car, with its crew of two or three–plus four passengers–and a pair of 220 hp Wright Whirlwind engines, would not have looked out of place on a blimp of similar size. Its overall size was not unlike blimps Goodyear was flying. Nor was the ZMC-2 particularly speedy or unusually slow, with a top speed of 70 mph (113 km/h).

Aside from the metal-clad construction, two things made the ZMC-2 stand out amongst its contemporaries. The first was the empennage (the “tail”), which was perhaps unique in airship history: as near as I can tell, the Detroit Airship Company was the only one to ever fit eight equally-spaced fins to the rear of an airship. All had control surfaces, and in practice, there was no control mixing: four acted as elevators, and four as rudders. It worked well enough, as the ship was apparently quite maneuverable.

The other oddity helped with this maneuverability: the airship’s fineness ratio. It was oddly squat, at only 2.83. Like much in the world of airships, the concept of a fineness ratio is borrowed from the naval world– there, it is the ratio between a ship’s length and its beam, or width. For a flying ship, it’s the length to diameter of the gas bag, but the effect is the same. Picture a racing skiff vs a coracle, or a whitewater kayak. The racing skiff has a very high fineness ratio, which gives it high speed and low maneuverability as it cuts through the water. A coracle or whitewater kayak, on the other hand, has a low fineness ratio, often less than two, so that they can turn on a dime. They’re also incredibly difficult to keep going in a straight line. The ZMC-2 wasn’t quite that squat, but from the boating analogy I can only imagine it was a handful to keep on a straight course at times.
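The fineness ratio is simple enough to check from the hull dimensions given earlier (149 ft 5 in long, 52 ft 8 in in diameter). A quick sketch, with helper names of my own choosing:

```python
METERS_PER_INCH = 0.0254


def feet_inches_to_m(feet: int, inches: float) -> float:
    """Convert a feet-and-inches measurement to metres."""
    return (feet * 12 + inches) * METERS_PER_INCH


def fineness_ratio(length_m: float, diameter_m: float) -> float:
    """Hull length divided by maximum hull diameter."""
    return length_m / diameter_m


if __name__ == "__main__":
    length = feet_inches_to_m(149, 5)
    diameter = feet_inches_to_m(52, 8)
    print(f"length {length:.1f} m, diameter {diameter:.1f} m")
    print(f"fineness ratio {fineness_ratio(length, diameter):.3f}")
```

That lands within rounding of the 2.83 figure quoted above, so the published dimensions and the published ratio at least agree with each other.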

Image: Naval History and Heritage Command

The only reason I dare call the fabulous tin blimp a failure is because there was no ZMC-3, or -4, or N≠2. It was indeed the only metalclad to ever fly.

One of a Kind

It wasn’t the cute little prototype’s fault; it was the timing. The Detroit Aircraft Company launched the ZMC-2 with big plans– Upson’s first design was for a larger express passenger/cargo airship of 1,600,000 cu.ft. (45,307 m³) gas volume, compared to the meager 200,000 cu.ft. (5,663 m³) of the prototype. There was interest in the bigger designs, but the ZMC-2 would need to prove the concept– which it did, in August 1929. Then in October, the stock market crashed, the Great Depression hit, and there was a lot less money available for pie-in-the-sky ideas like metalclad airships.

The interest was there, mind you. The U.S. Army liked what they saw, and went hat-in-hand in 1931 to Congress asking for $4.5 million to buy a 20-ton-lift model that would have been larger than the Graf Zeppelin. At that point, Congress felt there were other priorities. Later on, Detroit’s metalclad design was the Navy’s preferred choice to replace the ill-fated Akron and Macon, but there were problems with funding and the Detroit Aircraft Company didn’t have a hangar big enough to build the thing in anyway.

Image: Popular Mechanics April 1931, via lynceans.org

That was the end of it. Though there was no notable metal fatigue or corrosion, the ZMC-2 flew less and less as the odds of a successor dropped. Some accounts claim it was grounded completely in 1939; others imply a handful of flights until US entry into WWII. With the war on, aluminum was in short supply and the ZMC-2 was broken up for scrap in 1941. It was simply too small for the antisubmarine duty the Navy’s blimps were being put to, and too weird to use as a training ship. Though the gondola was kept for a time as a learning aid for ground school, it was not preserved. It is likely that no physical trace of the fabulous tin blimp remains.

Legacy

Ultimately, the ZMC-2 was successful in proving that a metalclad airship could fly. During the various aborted attempts at an ‘airship renaissance’, proposals for metalclads or similarly-built composite ships have been put forth, but as with Ralph Upson’s larger designs, no capital sufficient for construction ever materialized.

In spite of my praise of the non-rigid airship’s ability to shift with the winds (going so far as to say “Blimps win” in my last article, based on the historical record), I for one would love to see a metalclad fly again. Maybe it’s just the Rule of Cool– rigids are cooler, and metalclads are cooler yet. Maybe the image of the doughty ZMC-2 buzzing about like a giant, clumsy bumble bee has made me sentimental for the design. Maybe it’s just that there’s potential there. Thanks to the great Nan ships, we’ve got a pretty good idea of what non-rigid airships are capable of. ZMC-2 only scratched the surface of what a metalclad could do; perhaps someday we’ll find out. With modern aluminum-lithium alloys being that much lighter, or the ‘black’ aluminum of carbon composites, we could probably build something exceeding Ralph Upson’s wildest dreams… if there was money to pay for it.

Image: Naval History and Heritage Command
