WHEN KYIV-BORN ENGINEER Yaroslav Azhnyuk thinks about the future, his mind conjures up dystopian images. He talks about “swarms of autonomous drones carrying other autonomous drones to protect them against autonomous drones, which are trying to intercept them, controlled by AI agents overseen by a human general somewhere.” He also imagines flotillas of autonomous submarines, each carrying hundreds of drones, suddenly emerging off the coast of California or Great Britain and discharging their cargoes en masse to the sky.

“How do you protect from that?” he asks as we speak in late December 2025; me at my quiet home office in London, he in Kyiv, which is bracing for another wave of missile attacks.

Azhnyuk is not an alarmist. He cofounded and was formerly CEO of Petcube, a California-based company that uses smart cameras and an app to let pet owners keep an eye on their beloved creatures left alone at home. A self-described “liberal guy who didn’t even receive military training,” Azhnyuk changed his mind about developing military tech in the months following the Russian invasion of Ukraine in February 2022. By 2023, he had relinquished his CEO role at Petcube to do what many Ukrainian technologists have done—to help defend his country against a mightier aggressor.

It took a while for him to figure out what, exactly, he should be doing. He didn’t join the military, but through friends on the front line, he witnessed how, out of desperation, Ukrainian troops turned to off-the-shelf consumer drones to make up for their country’s lack of artillery.

Ukrainian troops first began using drones for battlefield surveillance, but within a few months they figured out how to strap explosives onto them and turn them into effective, low-cost killing machines. Little did they know they were fomenting a revolution in warfare.

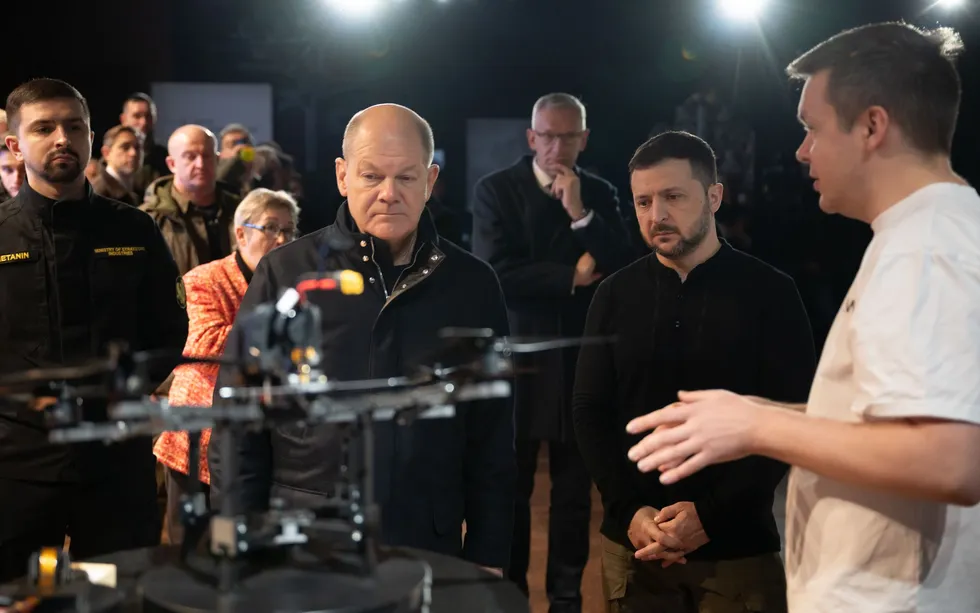

The Ukrainian robotics company The Fourth Law produces an autonomy module [above] that uses optics and AI to guide a drone to its target. Yaroslav Azhnyuk [top, in light shirt], founder and CEO of The Fourth Law, describes a developmental drone with autonomous capabilities to Ukrainian President Volodymyr Zelenskyy and German Chancellor Olaf Scholz. Top: THE PRESIDENTIAL OFFICE OF UKRAINE; Bottom: THE FOURTH LAW

That revolution was on display last month, as the U.S. and Israel went to war with Iran. It soon became clear that attack drones are being extensively used by both sides. Iran, for example, is relying heavily on the Shahed drones that the country invented and that are now also being manufactured in Russia and launched by the thousands every month against Ukraine.

A thorough analysis of the Middle East conflict will take some time to emerge. And so to understand the direction of this new way of war, look to Ukraine, where its next phase—autonomy—is already starting to come into view. Outnumbered by the Russians and facing increasingly sophisticated jamming and spoofing aimed at causing the drones to veer off course or fall out of the sky, Ukrainian technologists realized as early as 2023 that what could really win the war was autonomy. Autonomous operation means a drone isn’t being flown by a remote pilot, and therefore there’s no communications link to that pilot that can be severed or spoofed, rendering the drone useless.

By late 2023, Azhnyuk set out to help make that vision a reality. He founded two companies, The Fourth Law and Odd Systems, the first to develop AI algorithms to help drones overcome jamming during final approach, the second to build thermal cameras to help those drones better sense their surroundings.

“I moved from making devices that throw treats to dogs to making devices that throw explosives on Russian occupants,” Azhnyuk quips.

Since then, The Fourth Law has dispatched “more than thousands” of autonomy modules to troops in eastern Ukraine (it declines to give a more specific figure), which can be retrofitted on existing drones to take over navigation during the final approach to the target. Azhnyuk says the autonomy modules, worth around US $50, increase the drone-strike success rate by up to four times that of purely operator-controlled drones.

And that is just the beginning. Azhnyuk is one of thousands of developers, including some who relocated from Western countries, who are applying their skills and other resources to advancing the drone technology that is the defining characteristic of the war in Ukraine. This eclectic group of startups and founders includes Eric Schmidt, the former Google CEO, whose company Swift Beat is churning out autonomous drones and modules for Ukrainian forces. The frenetic pace of tech development is helping a scrappy, innovative underdog hold at bay a much larger and better-equipped foe.

All of this development is careening toward AI-based systems that enable drones to navigate by recognizing features in the terrain, lock on to and chase targets without an operator’s guidance, and eventually exchange information with each other through mesh networks, forming self-organizing robotic kamikaze swarms. Such an attack swarm would be commanded by a single operator from a safe distance.

According to some reports, autonomous swarming technology is also being developed for sea drones. Ukraine has had some notable successes with sea drones, which have reportedly destroyed or damaged around a dozen Russian vessels.

The Skynode X system, from Auterion, provides a degree of autonomy to a drone. AUTERION

For Ukraine, swarming can solve a major problem that puts the nation at a disadvantage against Russia—the lack of personnel. Autonomy is “the single most impactful defense technology of this century,” says Azhnyuk. “The moment this happens, you shift from a manpower challenge to a production challenge, which is much more manageable,” he adds.

The autonomous warfare future envisioned by Azhnyuk and others is not yet a reality. But Marc Lange, a German defense analyst and business strategist, believes that “an inflection point” is already in view. Beyond it, “things will be so dramatically different,” he says.

“Ukraine pretty rapidly realized that if the operator-to-drone ratio can be shifted from one-to-one to one-to-many, that creates great economies of scale and an amazing cost exchange ratio,” Lange adds. “The moment one operator can launch 100, 50, or even just 20 drones at once, this completely changes the economics of the war.”

Drones With a View

For a while, jammers that sever the radio links between drones and operators or that spoof GPS receivers were able to provide fairly reliable defense against human-controlled first-person-view attack drones (FPVs). But as autonomous navigation progressed, those electronic shields have gradually become less effective. Defenders must now contend with unjammable drones—ones that are attached to hair-thin optical fibers or that are capable of finding their way to their targets without external guidance. In this emerging struggle, the defenders’ track records aren’t very encouraging: The typical countermeasure is to try to shoot down the attacking drone with a service weapon. It’s rarely successful.

A truck outfitted with signal-jamming gear drives under antidrone nets near Oleksandriya, in eastern Ukraine, on 2 October 2025. ED JONES/AFP/GETTY IMAGES

“The attackers gain an immense advantage from unmanned systems,” says Lange. “You can have a drone pop up from anywhere and it can wreak havoc. But from autonomy, they gain even more.”

The self-navigating drones rely on image-recognition algorithms that have been around for over a decade, says Lange. And the mass deployments of drones on Ukrainian battlefields are enabling both Russian and Ukrainian technologists to create huge datasets that improve the training and precision of those AI algorithms.

A Ukrainian land robot, the Ravlyk, can be outfitted with a machine gun.

While uncrewed aerial vehicles (UAVs) have received the most attention, the Ukrainian military is also deploying dozens of different kinds of drones on land and sea. Ukraine, struggling with the shortage of infantry personnel, began working on replacing a portion of human soldiers with wheeled ground robots in 2024. As of early 2026, thousands of ground robots are crawling across the gray zone along the front line in Eastern Ukraine. Most are used to deliver supplies to the front line or to help evacuate the wounded, but some “killer” ground robots fitted with turrets and remotely controlled machine guns have also been tested.

In mid-February, Ukrainian authorities released a video of a Ukrainian ground robot using its thermal camera to detect a Russian soldier in the dark of the night and then kill the invader with a round from a heavy machine gun. So far these robots are mostly controlled by a human operator, but the makers of these uncrewed ground vehicles say their systems are capable of basic autonomous operations, such as returning to base when radio connection is lost. The goal is to enable them to swarm so that one operator controls not one, but a whole herd of mesh-connected killer robots.

But Bryan Clark, senior fellow and director of the Center for Defense Concepts and Technology at the Hudson Institute, questions how quickly ground robots’ abilities can progress. “Ground environments are very difficult to navigate in because of the terrain you have to address,” he says. “The line of sight for the sensors on the ground vehicles is really constrained because of terrain, whereas an air vehicle can see everything around it.”

To achieve autonomy, maritime drones, too, will require navigational approaches beyond AI-based image recognition, possibly based on star positions or electronic signals from radios and cell towers that are within reach, says Clark. Such technologies are still being developed or are in a relatively early operational stage.

How the Shaheds Got Better

Russia is not lagging behind. In fact, some analysts believe its autonomous systems may be slightly ahead of Ukraine’s. For a good example of the Russian military’s rapid evolution, they say, consider the long-range Iranian-designed Shahed drones. Since 2022, Russia has been using them to attack Ukrainian cities and other targets hundreds of kilometers from the front line. “At the beginning, Shaheds just had a frame, a motor, and an inertial navigation system,” Oleksii Solntsev, CEO of Ukrainian defense tech startup MaXon Systems, tells me. “They used to be imprecise and pretty stupid. But they are becoming more and more autonomous.” Solntsev founded MaXon Systems in late 2024 to help protect Ukrainian civilians from the growing threat of Shahed raids.

A Russian Geran-2 drone, based on the Iranian Shahed-136, flies over Kyiv during an attack on 27 December 2025. SERGEI SUPINSKY/AFP/GETTY IMAGES

First produced in Iran in the 2010s, Shaheds can carry 90-kilogram warheads up to 650 km (50-kg warheads can go twice as far). They cost around $35,000 per unit, compared to a couple of million dollars, at least, for a ballistic missile. The low cost allows Russia to manufacture Shaheds in high quantities, unleashing entire fleets onto Ukrainian cities and infrastructure almost every night.

The early Shaheds were able to reach a preprogrammed location based on satellite-navigation coordinates. Even these early models could frequently overcome the jamming of satellite-navigation signals with the help of an onboard inertial navigation unit. This was essentially a dead-reckoning system of accelerometers and gyroscopes that estimates the drone’s position from continual measurements of its motions.
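The dead-reckoning idea can be illustrated with a minimal two-dimensional sketch. It is not the Shahed's actual navigation software, about which the sources here give no detail; the function name, the flat-plane geometry, and the sample format (forward acceleration, yaw rate) are all assumptions made for illustration.

```python
import math

def dead_reckon(x, y, heading_rad, speed, samples, dt):
    """Estimate position by integrating inertial measurements (hypothetical helper).

    samples: iterable of (forward_accel_m_s2, yaw_rate_rad_s) readings,
             standing in for accelerometer and gyroscope output.
    dt:      time between readings, in seconds.
    """
    for accel, yaw_rate in samples:
        speed += accel * dt              # accelerometer reading updates speed
        heading_rad += yaw_rate * dt     # gyroscope reading updates heading
        x += speed * math.cos(heading_rad) * dt
        y += speed * math.sin(heading_rad) * dt
    return x, y, heading_rad, speed

# Ten seconds of straight, constant-speed flight at 50 m/s.
x, y, heading, speed = dead_reckon(0.0, 0.0, 0.0, 50.0, [(0.0, 0.0)] * 10, 1.0)
print(round(x), round(y))
```

Because every reading is added to the running estimate, any small sensor bias accumulates over time, which is why inertial units are typically paired with satellite navigation or other corrections rather than used alone on long flights.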

In the Donetsk Region, on 15 August 2025, a Ukrainian soldier hunts for Shaheds and other drones with a thermal-imaging system attached to a ZU-23 23-millimeter antiaircraft gun. KOSTYANTYN LIBEROV/LIBKOS/GETTY IMAGES

Ukrainian defense forces learned to down Shaheds with heavy machine guns, but as Russia continued to innovate, the daily onslaughts started to become increasingly effective.

Today’s Shaheds fly faster and higher, and therefore are more difficult to detect and take down. Between January 2024 and August 2025, the number of Shaheds and Shahed-type attack drones launched by Russia into Ukraine per month increased more than tenfold, from 334 to more than 4,000. In 2025, Ukraine found AI-enabling Nvidia chipsets in wreckages of Shaheds, as well as thermal-vision modules capable of locking onto targets at night.

“Now, they are interconnected, which allows them to exchange information with each other,” Solntsev says. “They also have cameras that allow them to autonomously navigate to objects. Soon they will be able to tell each other to avoid a jammed region or an area where one of them got intercepted.”

These Russian-manufactured Shaheds, which Russian forces call Geran-2s, are thought to be more capable than the garden variety Shahed-136s that Iran has lately been launching against targets throughout the Middle East. Even the relatively primitive Shahed-136s have done considerable damage, according to press accounts.

Those Shahed successes may stem, at least in part, from the fact that the United States and Israel lack Ukraine’s long experience with fending them off. In just two days in early March, upward of a thousand drones, mostly Shaheds, were launched against U.S. and Israeli targets, with hundreds of them reportedly finding their marks.

One attack, caught on video, shows a Shahed destroying a radar dome at the U.S. Navy base in Manama, Bahrain. U.S. forces were understood to be attempting to fend off the drones by striking launch platforms, dispatching fighter aircraft to shoot them down, and using some extremely costly air-defense interceptors, including ones meant to down ballistic missiles. On 4 March, CNN reported that in a congressional briefing the day before, top U.S. defense officials, including Secretary of Defense Pete Hegseth, acknowledged that U.S. air defenses weren’t keeping up with the onslaught of Shahed drones.

Russian V2U attack drones are outfitted with Nvidia processors and run computer-vision software and AI algorithms to enable the drones to navigate autonomously. GUR OF THE MINISTRY OF DEFENSE OF UKRAINE

Russia is also starting to field a newer generation of attack drones. One of these, the V2U, has been used to strike targets in the Sumy region of northeastern Ukraine. The V2U drones are outfitted with Nvidia Jetson Orin processors and run computer-vision software and AI algorithms that allow the drones to navigate even where satellite navigation is jammed.

The sale of Nvidia chips to Russia is banned under U.S. sanctions against the country. However, press reports suggest that the chips are getting to Russia via intermediaries in India.

Antidrone Systems Step Up

MaXon Systems is one of several companies working to fend off the nightly drone onslaught. Within one year, the company developed and battle-tested a Shahed interception system that hints at the sci-fi future envisioned by Azhnyuk. For a system to be capable of reliably defending against autonomous weaponry, it, too, needs to be autonomous.

MaXon’s solution consists of ground turrets scanning the sky with infrared sensors, with additional input from a network of radars that detects approaching Shahed drones at distances of, typically, 12 to 16 km. The turrets fire autonomous fixed-winged interceptor drones, fitted with explosive warheads, toward the approaching Shaheds at speeds of nearly 300 km/h. To boost the chances of successful interception, MaXon is also fielding an airborne anti-Shahed fortification system consisting of helium-filled aerostats hovering above the city that dispatch the interceptors from a higher altitude.
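A back-of-the-envelope sketch shows why detection range matters so much for such a system. It assumes a simple head-on geometry and ignores reaction time, climb, and maneuvering. The 12-to-16-km detection range and the roughly 300-km/h interceptor speed come from the description above; the 180-km/h target speed is a placeholder assumption, not a figure from MaXon or this article.

```python
def seconds_to_intercept(detection_range_km, interceptor_kmh, target_kmh):
    """Time for an interceptor and an incoming drone to meet head-on (hypothetical helper)."""
    closing_speed_kmh = interceptor_kmh + target_kmh  # the two speeds add when head-on
    return detection_range_km / closing_speed_kmh * 3600.0

ASSUMED_TARGET_KMH = 180.0  # placeholder value, not taken from the article

for range_km in (12.0, 16.0):
    t = seconds_to_intercept(range_km, 300.0, ASSUMED_TARGET_KMH)
    print(f"detected at {range_km:.0f} km: about {t:.0f} s to intercept")
```

Under these assumptions the whole engagement lasts roughly 90 to 120 seconds, which leaves little room for manual steps between detection and launch.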

“We are trying to increase the level of automation of the system compared to existing solutions,” says Solntsev. “We need automatic detection, automatic takeoff, and automatic mid-track guidance so that we can guide the interceptor before it can itself lock onto the target.”

An interceptor drone, part of the U.S. MEROPS defensive system, is tested in Poland on 18 November 2025. WOJTEK RADWANSKI/AFP/GETTY IMAGES

In November 2025, the Ukrainian military announced it had been conducting successful trials of the Merops Shahed drone interceptor system developed by the U.S. startup Project Eagle, another of former Google CEO Eric Schmidt’s Ukraine defense ventures. Like the MaXon gear, the system can operate largely autonomously and has so far downed over 1,000 Shaheds.

What Works in the Lab Doesn’t Necessarily Fly on the Battlefield

Despite the progress on both sides, analysts say that the kind of robotic warfare imagined by Azhnyuk won’t be a reality for years.

“The software for drone collaboration is there,” says Kate Bondar, a former policy advisor for the Ukrainian government and currently a research fellow at the U.S. Center for Strategic and International Studies. “Drones can fly in labs, but in real life, [the forces] are afraid to deploy them because the risk of a mistake is too high,” she adds.

Ukrainian soldiers watch a GOR reconnaissance drone take to the sky near Pokrovsk in the Donetsk region, on 10 March 2025. ANDRIY DUBCHAK/FRONTLINER/GETTY IMAGES

In Bondar’s view, powerful AI-equipped drones won’t be deployed in large numbers given the current prices for high-end processors and other advanced components. And, she adds, the more autonomous the system needs to be, the more expensive are the processors and sensors it must have. “For these cheap attack drones that fly only once, you don’t install a high-resolution camera that [has] the resolution for AI to see properly,” she says. “[You install] the cheapest camera. You don’t want expensive chips that can run AI algorithms either. Until we can achieve this balance of technological sophistication, when a system can conduct a mission but at the lowest price possible, it won’t be deployed en masse.”

While existing AI systems are doing a good job recognizing and following large objects like Shaheds or tanks, experts question their ability to reliably distinguish and pursue smaller and more nimble or inconspicuous targets. “When we’re getting into more specific questions, like can it distinguish a Russian soldier from a Ukrainian soldier or at least a soldier from a civilian? The answer is no,” says Bondar. “Also, it’s one thing to track a tank, and it’s another to track infantrymen riding buggies and motorcycles that are moving very fast. That’s really challenging for AI to track and strike precisely.”

Clark, at the Hudson Institute, says that although the AI algorithms used to guide the Russian and Ukrainian drones are “pretty good,” they rely on information provided by sensors that “aren’t good enough.” “You need multiphenomenology sensors that are able to look at infrared and visual and, in some cases, different parts of the infrared spectrum to be able to figure out if something is a decoy or real target,” he says.

German defense analyst Lange agrees that right now, battlefield AI image-recognition systems are too easily fooled. “If you compress reality into a 2D image, a lot of things can be easily camouflaged—like what Russia did recently, when they started drawing birds on the back of their drones,” he says.

Autonomy Remains Elusive on the Ground and at Sea, Too

To make Ukraine’s emerging uncrewed ground vehicles (UGVs) equally self-sufficient will be an even greater task, in Clark’s view. Still, Bondar expects major advances to materialize within the next several years, even if humans are still going to be part of the decision-making loop.

A mobile electronic-warfare system built by PiranhaTech is demonstrated near Kyiv on 21 October 2025. DANYLO ANTONIUK/ANADOLU/GETTY IMAGES

“I think in two or three years, we will have pretty good full autonomy, at least in good weather conditions,” she says, referring to aerial drones in particular. “Humans will still be in the loop for some years, simply because there are so many unpredictable situations when you need an intervention. We won’t be able to fully rely on the machine for at least another 10 or 15 years.”

Ukrainian defenders are apprehensive about that autonomous future. The boom of drone innovation has come hand in hand with the development of sophisticated jamming and radio-frequency detection systems. But a lot of that innovation will become obsolete once the pendulum swings away from human control. Ukrainians got their first taste of dealing with unjammable drones in mid-2024, when Russia began rolling out fiber-optic tethered drones. Now they have to brace for a threat on a much larger scale.

An experimental drone is demonstrated at the Brave1 defense-tech incubator in Kyiv. DANYLO DUBCHAK/FRONTLINER/GETTY IMAGES

“Today, we have a situation where we have lots of signals on the battlefield, but in the near future, in maybe two to five years, UAVs are not going to be sending any signals,” says Oleksandr Barabash, CTO of Falcons, a Ukrainian startup that has developed a smart radio-frequency detection system capable of revealing precise locations of enemy radio sources such as drones, control stations, and jammers.

Last September, Falcons secured funding from the U.S.-based dual-use tech fund Green Flag Ventures to scale production of its technology and work toward NATO certification. But Barabash admits that its system, like all technologies fielded in Ukrainian war zones, has an expiration date. Instead of radio-frequency detectors, Barabash thinks, the next R&D push needs to focus on passive radar systems. These identify small, fast-moving targets by picking up signals from existing sources, such as TV towers or radio transmitters, after those signals have been reflected by the targets. Passive radars have a significant advantage in the war zone, according to Barabash: Because they don’t emit their own signal, they can’t be easily discovered by the enemy.
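The geometry behind passive radar can be sketched in a few lines. This is a generic textbook illustration, not a description of any system Falcons or Barabash has built: a receiver compares the signal arriving directly from a transmitter it does not control with the delayed copy reflected off a target. The function names and coordinates below are hypothetical.

```python
import math

C = 299_792_458.0  # speed of light, m/s

def echo_delay(tx, rx, target):
    """Extra travel time of the reflected path versus the direct path, in seconds."""
    direct = math.dist(tx, rx)
    reflected = math.dist(tx, target) + math.dist(target, rx)
    return (reflected - direct) / C

def bistatic_range_sum(tx, rx, delay_s):
    """Total path length tx -> target -> rx implied by a measured delay, in meters."""
    return math.dist(tx, rx) + C * delay_s

# Hypothetical layout in meters: a TV tower, a passive receiver, and a drone.
tower, receiver, drone = (0.0, 0.0), (30_000.0, 0.0), (15_000.0, 20_000.0)
delay = echo_delay(tower, receiver, drone)
print(f"echo arrives {delay * 1e6:.1f} microseconds after the direct signal")
```

A single delay only places the target somewhere on an ellipse whose foci are the transmitter and the receiver, so a practical system would combine several transmitters, several receivers, or Doppler measurements to narrow the position down.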

“Active radar is emitting signals, so if you are using active radars, you are target No. 1 on the front line,” Barabash says.

Bondar, on the other hand, thinks that the increased onboard compute power needed for AI-controlled drones will, by itself, generate enough electromagnetic radiation to prevent autonomous drones from ever operating completely undetectably.

“You can have full autonomy, but you will still have systems onboard that emit electromagnetic radiation or heat that can be detected,” says Bondar. “Batteries emit electromagnetic radiation, motors emit heat, and [that heat can be] visible in infrared from far away. You just need to have the right sensors to be able to identify it in advance.” She adds that the takeaway is “how capable contemporary detection systems have become and how technically challenging it is to design drones that can reliably operate in the Ukrainian battlefield environment.”

There Will Be Nowhere to Hide from Autonomous Drones

When autonomous drones become a standard weapon of war, their threat will extend far beyond the battlefields of Ukraine. Autonomous turrets and drone-interceptor fortification might soon dot the perimeter of European cities, particularly in the eastern part of the continent.

A fixed-wing drone is tested in Ukraine in April 2025. ANDREW KRAVCHENKO/BLOOMBERG/GETTY IMAGES

Nefarious actors from all over the world have closely watched Ukraine and taken notes, warns Lange. Today, FPV drones are being used by Islamic terrorists in Africa and Mexican drug cartels to fight against local authorities.

When autonomous killing machines become widely available, it’s likely that no city will be safe. “We might see nets above city centers, protecting civilian streets,” Lange says. “In every case, the West needs to start performing similar kinetic-defense development that we see in Ukraine. Very rapid iteration and testing cycles to find solutions.”

Azhnyuk is concerned that the historic defenders of Europe—the United States and the European countries themselves—are falling behind. “We are in danger,” he says. While Russia and Ukraine made major strides in their drones and countermeasures over the past year, “Europe and the United States have progressed, in the best-case scenario, from the winter-of-2022 technology to the summer-of-2022 technology.

“The gap is getting wider,” he warns. “I think the next few years are very dangerous for the security of Europe.”

This article appears in the April 2026 print issue as “Rise of the AUTONOMOUS Attack Drones.”
