Tech

US government hires BlackSky to build next-gen AI surveillance satellites for Earth and beyond

The US government has selected BlackSky to design and build the next generation of its space surveillance capabilities. The newly announced contract is an indefinite delivery/indefinite quantity (IDIQ) agreement, meaning the company will provide as many satellites and monitoring services as the Air Force Research Laboratory requires for its missions….

Tech

Neutralizing the Gigascale Problem: How to Solve the Physical Power Paradox of Extreme AI Training Loads

This sponsored article is brought to you by Ampace.

As AI workloads grow to gigascale levels, the global data center industry has hit a hidden physical wall. The real bottleneck is no longer just the thermal limit of the chip or the capacity of the cooling system — it is the dynamic resilience of the power chain.

Modern AI computing clusters, built on massive GPU arrays, generate high-frequency, abrupt, synchronized pulse loads. As rack densities soar beyond 100 kW, these fluctuations are amplified into a “power paradox”: while the digital logic of AI is moving faster than ever, the physical infrastructure supporting it remains tethered to legacy response capabilities.

The power draw of these gigascale sites, with its abrupt, high-frequency load surges from AI GPU clusters, can trigger transient voltage events and frequency instability, putting the entire local grid at risk. This creates an infrastructure gap: the utility grid is not robust enough to absorb these loads, and traditional backup sources, such as diesel generators and gas turbines, cannot ramp their output fast enough to follow millisecond-level power spikes. Operators are often forced into a cycle of costly infrastructure oversizing just to buffer the volatility.

The industry has explored various mitigations, from rack-level BBUs to 800V DC architectures, yet the mature, high-volume traditional UPS system remains the most viable and scalable foundation for gigawatt-level facilities. Consequently, the UPS-integrated battery system has emerged as the critical “physical buffer” to neutralize these pulses at the source.

At Data Center World 2026 in Washington, D.C., Ampace led a pivotal technical dialogue with Eaton during the session “Powering Giga-scale AI.” Their exchange unveiled a fundamental paradigm shift: To bridge the AI power gap, energy storage must evolve from a passive insurance policy into an active, high-speed stabilizer. By aligning Ampace’s semi-solid-state battery innovation with Eaton’s proven system intelligence, we are moving beyond simple backup to solve the physical paradox of the AI era.

To move beyond simple backup and solve the physical paradox of the AI era, Ampace is aligning its semi-solid-state battery innovation with Eaton’s proven system intelligence. (Ampace)

The “Shock Absorber” physics: semi-solid chemistry for AI pulses

Conventional power systems were designed for steady-state loads, not the rapid heartbeat of a massive AI GPU cluster. When thousands of GPUs synchronize their computing cycles, they generate high-frequency, abrupt pulse loads that can lead to voltage sags, frequency oscillations, and potential interruptions of critical AI training.

Ampace’s PU Series semi-solid and low-electrolyte cells address this challenge by acting as high-speed “shock absorbers.” Leveraging ultra-low direct-current internal resistance (DCR) and high cycle capability, these batteries neutralize millisecond-level power spikes at the source, stabilizing the local power loop before disturbances propagate upstream to the grid or on-site generators. These high-rate cells enable 100 kW+ racks to maintain peak performance without transmitting instability across the power chain.

This capability aligns closely with Eaton’s mature UPS architectures, such as double-conversion topologies and advanced power electronics upgrades, which have long prioritized rapid load responsiveness and high system stability.

Together, these approaches embody a shared industry philosophy: AI infrastructure requires energy systems capable of instantaneous response while safeguarding continuity and reliability.

Ampace’s semi-solid state chemistry minimizes liquid electrolyte, greatly reducing the risk of leakage and thermal runaway under continuous AI high-load conditions. (Ampace)

Algorithmic intelligence: synchronizing energy and control

Hardware alone cannot solve the AI power paradox; the system also requires intelligent coordination between energy storage and power management. Sophisticated battery management systems (BMS) like Ampace’s high-precision design track state-of-charge (SOC) with high-speed sampling, even during rapid, shallow cycling typical in AI workloads.

Complementary algorithmic approaches in modern UPS platforms — such as ramp-rate control and average power management — effectively suppress sub-synchronous oscillations and optimize load smoothing. In large-scale AI training environments, where thousands of GPUs can trigger millisecond-level power pulses, these intelligent layers ensure that batteries buffer high-frequency fluctuations without compromising the mandatory emergency backup reserves.
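The ramp-rate idea can be illustrated with a minimal sketch. This is not Eaton’s or Ampace’s algorithm; it is a generic slew-rate limiter under assumed numbers. The function name, the `max_ramp_kw_per_step` parameter, and the load trace are all hypothetical. The upstream source (grid or generator) may only change its output by a bounded amount per time step, and the battery supplies or absorbs whatever is left over.

```python
def ramp_rate_limit(load_kw, max_ramp_kw_per_step, start_kw=None):
    """Split a pulsed load into a ramp-limited upstream draw and a battery residual.

    Returns (grid_kw, battery_kw) with grid_kw[i] + battery_kw[i] == load_kw[i].
    Positive battery_kw means the battery discharges; negative means it charges.
    """
    if not load_kw:
        return [], []
    grid = []
    battery = []
    # Assume the upstream source starts out matched to the first load sample.
    prev = load_kw[0] if start_kw is None else start_kw
    for demand in load_kw:
        # Clamp the change in upstream draw to the allowed ramp per step.
        step = max(-max_ramp_kw_per_step, min(max_ramp_kw_per_step, demand - prev))
        prev = prev + step
        grid.append(prev)
        # The battery covers the gap between demand and the ramp-limited draw.
        battery.append(demand - prev)
    return grid, battery


# Hypothetical trace: a cluster jumping between 40 kW and 100 kW.
load = [40, 100, 100, 40, 40, 100]
grid, battery = ramp_rate_limit(load, max_ramp_kw_per_step=20)
print(grid)     # upstream draw never moves more than 20 kW per step
print(battery)  # residual handled by the battery
```

A production controller would also have to stop discharging before the battery dips into its reserved emergency backup energy, which this sketch does not model.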

By transforming energy storage from passive “standby insurance” into active, schedulable assets, the system simultaneously safeguards continuous AI training and maintains the long-term health of the data center infrastructure. In practical terms, this means that even during peak compute bursts, the infrastructure remains stable, training cycles continue uninterrupted, and operators avoid costly oversizing or grid stress.

Eaton’s dual-layer algorithms serve as a valuable benchmark in this space, demonstrating how advanced control logic can achieve similar objectives, reinforcing Ampace’s approach and philosophy within the broader data center power ecosystem.

Economic scalability: optimizing AI infrastructure efficiently

One of the largest costs in deploying AI infrastructure is “oversizing”: procuring transformers, generators, and UPS systems to handle brief peak spikes. This traditional approach inflates the Total Cost of Ownership (TCO) and leads to wasted capital on underutilized hardware.

Ampace’s turn-key cabinet design, developed through its independent R&D, is engineered for seamless compatibility with mature, high-volume UPS systems. By leveraging Eaton’s double-conversion UPS topologies alongside intelligent ramp-rate and average power management algorithms, AI data centers can scale dynamically without requiring costly infrastructure redesigns. This approach allows the UPS and batteries to act as active load-shapers, smoothing AI-driven pulses while strictly maintaining mandatory emergency backup capacity.

By utilizing energy storage as an active, schedulable asset, operators can right-size their infrastructure, avoid unnecessary grid upgrades, and deploy gigascale AI clusters with unprecedented efficiency.

Safety first: protecting AI infrastructure while enabling innovation

In high-density AI facilities, safety is non-negotiable. Ampace’s semi-solid state chemistry minimizes liquid electrolyte, greatly reducing the risk of leakage and thermal runaway under continuous AI high-load conditions.

Ampace’s turn-key cabinet design, developed through its independent R&D, is engineered for seamless compatibility with mature, high-volume UPS systems. (Ampace)

At the same time, Eaton’s UPS design emphasizes system-level energy scheduling that never sacrifices mandatory emergency backup reserves, ensuring thermal safety and uninterrupted operation.

This “safety-first” approach ensures that infrastructure can sustain aggressive performance targets without compromising the physical integrity of the facility. Coupled with more than a decade of proven high-cycle-life design and operation under shallow pulse conditions, these systems can extend operational lifespan, reduce replacement requirements, and give operators confidence that safety and reliability remain uncompromised as compute density continues to grow.

To remain the scalable backbone of AI data centers

As AI computing scales over the next two to three years, the industry will face stricter grid requirements and even more demanding pulse load characteristics. This evolution demands a forward-looking design philosophy that harmonizes UPS, battery, and grid compatibility.

Ampace remains committed to this long-term technological roadmap. We view current low-electrolyte semi-solid technologies as the optimal transitional step toward a fully solid-state future — one that promises ultimate safety and performance. Whether through rack-level BBU, integrated UPS systems, or containerized storage, the universal core of the AI era remains constant: high-speed response, long shallow-cycle life, and refined energy management.

By engaging in deep technical exchanges with Eaton and leading energy innovators, Ampace ensures that its solutions not only meet today’s AI pulse challenges but also harmonize with broader infrastructure strategies and shared industry best practices.

Ultimately, as traditional diesel generators gradually give way to diversified alternatives, the integrated UPS-plus-energy-storage system will become the fundamental infrastructure standard.

The dialogue has just begun. Ampace will continue to engage in strategic exchanges with global industrial automation leaders and digital energy pioneers, co-authoring the playbook for a safer, more efficient, and more resilient AI-ready world.

Tech

Android Auto Now Fits Weirdly Shaped Screens and Streams Video While Parked

Google is rolling out a sweeping update to Android Auto and cars powered by its Google Built-in software. The suite of changes and new features includes an overhaul to Google Maps, the addition of in-dash video playback and a full visual refresh rolling out to compatible vehicles and devices throughout 2026.

As cars get smarter and in-car screens get weirder, the previewed changes should keep Google’s automotive ambitions competitive with Apple’s CarPlay.

Design that literally fits your car

Android Auto is getting a full visual refresh built on Google’s Material 3 Expressive design language, bringing new fonts, animations and wallpapers from the phone experience to the dashboard. The interface can now adapt to any screen shape, including the familiar portrait and landscape orientations as well as new ultrawide and nonrectangular display geometries. Google showcased just how nonstandard Android Auto can get, filling the circular OLED display of the latest-generation Mini vehicles and the skewed hexagonal screen of BMW’s Neue Klasse EVs.

Also new are home screen widgets, letting drivers keep glanceable information — such as favorite contacts, garage door controls and weather info — surfaced alongside active navigation.

Android Auto is now able to squeeze and stretch into non-traditional screen shapes, including circles, parallelograms and everything between.

The biggest Maps update in a decade

The centerpiece of the update is Immersive Navigation, which Google describes as its biggest Maps update in over a decade. The feature brings a 3D map view with rendered buildings, overpasses and terrain, and highlights lane markings, traffic lights and stop signs to aid complex maneuvers. The new look, to my eye, is not dissimilar to what I’ve seen on Apple’s Maps and is a welcome aesthetic and functional upgrade.

Cars running native Google Built-in get even more new navigation capability not available in standard Android Auto. The biggest new feature is Live Lane Guidance, which uses the vehicle’s front-facing camera to determine the driver’s current lane position and provide real-time guidance through lane changes and exits.

HD Video comes to Android Auto

Android Auto is adding full HD video playback at 60 frames per second when the car is parked, launching later this year on vehicles from BMW, Ford, Genesis, Hyundai, Kia, Mercedes-Benz and Volvo in the US. (Outside of the States, that list grows to include Mahindra, Renault, Skoda and Tata cars.) When your charge sesh is complete and the car shifts from park to drive, Android Auto will also be able to seamlessly transition content to audio-only in apps that support background audio, so you can keep listening to that video podcast you just started.

Android Auto now supports HD Video at 60 fps while parked. Shift into drive and the content automatically switches to background audio.

Dolby Atmos spatial audio is also coming to Android Auto in supported apps and vehicles, starting with BMW, Genesis, Mercedes-Benz and Volvo. After hours of listening to Dolby Atmos in cars, this might be the feature I’m most excited about. Media app interfaces, including YouTube Music and Spotify, are also receiving visual updates, breaking out of the standard and familiar Android Auto template we’ve seen since the software’s launch.

Meanwhile, cars with Google Built-in will receive the same video and audio improvements, along with support for meeting apps like Zoom.

Gemini hits the road

Gemini is now broadly available in Android Auto for general driving assistance, having rolled out to drivers over the past year. Devices with Gemini Intelligence, Google’s context-aware AI tier, will gain additional capabilities later this year, including Magic Cue, which can surface relevant information from messages, email and your calendar to respond to incoming texts in a single tap. In the demo, Google shows a driver receiving a text message asking for their destination, which is then replied to with a single tap.

Google is also enabling in-car food ordering through DoorDash via voice command. I’m sure someone will find that useful.

On certain cars running Google Built-in software, Gemini will be able to answer knowledge-based questions about the vehicle and its capabilities.

In cars with Google Built-in, Gemini integrates directly with vehicle hardware, enabling queries specific to the car itself. For example, a driver could ask Gemini to identify a dashboard warning light or to estimate whether the bulky TV they’re buying will fit within their car’s specific cargo dimensions.

The announcement comes as part of this year’s Gemini-fueled Android Show: I/O Edition and hot on the heels of General Motors’ April announcement that it’s rolling Gemini functionality into its Google Built-in infotainment stack. For GM alone, you’re talking about roughly 4 million Cadillac, Chevrolet and Buick vehicles in the US that will benefit from today’s updates. Globally and across all supported vehicle brands, Google boasted that 250 million cars currently support Android Auto at last count, with more than 50 models running Google Built-in natively — most of which will be getting these upgrades over the coming months.

Tech

ASUS ExpertBook Laptops Get Major Discounts During Flipkart SASA LELE Sale

ASUS India has unveiled new deals on its ExpertBook laptop range during Flipkart SASA LELE Sale 2026. Alongside the flagship ExpertBook Ultra, ASUS has also introduced various AI-powered ExpertBook P Series laptops featuring enterprise-level security and finance options.

Over the last year, the Taiwanese laptop maker has expanded its ExpertBook range, which now comprises 34 models. The series starts at Rs 41,990 and is available during the Flipkart SASA LELE sale 2026. Some top-range laptops will get discounts up to 34.5 percent. ASUS is also providing cashback deals of up to Rs 15,000 through banks and Rs 20,000 through exchange offers.

The flagship ExpertBook Ultra also comes with a special 5+5+5 support package. Under this offer, users receive 5 years of warranty, 5 years of battery support, and 5 years of accidental damage protection for long-term peace of mind.

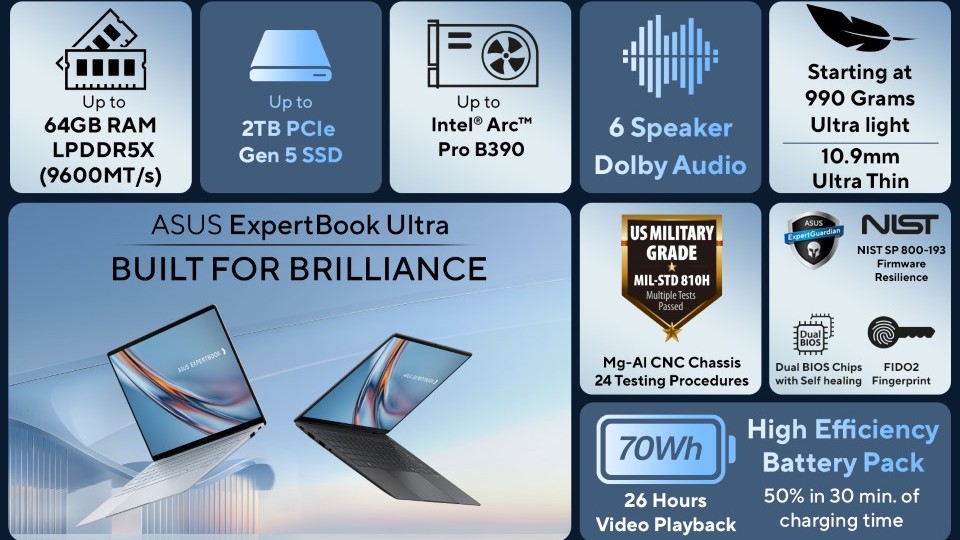

ASUS ExpertBook Ultra

The ExpertBook Ultra comes with a lightweight 0.99kg body and an ultra-slim 10.9mm design for easy portability. ASUS uses aerospace-grade magnesium-aluminum alloy to enhance the laptop’s durability and strength. The company has also added a nano-ceramic coating for improved scratch resistance during travel and daily work.

The laptop runs on Intel Core Ultra Series 3 processors and delivers up to 180 TOPS AI performance for advanced AI-based workloads. It also includes Intel Arc graphics and ASUS ExpertCool Pro cooling technology. For visuals, the ExpertBook Ultra offers a 14-inch 3K Tandem OLED touchscreen display with a 144Hz refresh rate and up to 1400 nits of HDR brightness.

The panel also includes anti-glare technology and Gorilla Glass Victus protection. The laptop packs a 70Wh battery and offers up to 26 hours of usage on a single charge. It supports 90W USB-C fast charging along with Wi-Fi 7 and Bluetooth 6 connectivity. ASUS also includes Thunderbolt 4 ports and HDMI 2.1 support for improved connectivity options.

ASUS ExpertBook P Series

The ASUS ExpertBook P Series is aimed at professionals, offices, startups, and growing businesses. It comprises three models, the ExpertBook P1, ExpertBook P3, and ExpertBook P5, each available in 14-inch and 16-inch screen versions. These laptops run on Intel Core Ultra Series 2 chips with fast DDR5 memory and PCIe Gen 4 storage.

ASUS has also added dual RAM slots and expandable storage options on selected models, making the laptops more future-ready. Connectivity features include Wi-Fi 7, HDMI 2.1, RJ45 Ethernet ports, and USB-C charging support. ASUS says the laptops come with batteries of up to 63Wh and can also be charged using power banks and airplane chargers for better portability.

For security and support, ASUS includes TPM 2.0 protection, McAfee Premium security subscription, self-healing BIOS, and chassis intrusion alerts.

Top ASUS Laptop Deals During Flipkart SASA LELE Sale

- ExpertBook P3405CVA: The laptop features a 14-inch WUXGA display, Intel Core i5-13420H processor, and 16GB DDR5 memory. It is currently available for Rs 63,990 after a Rs 10,000 price cut.

- ExpertBook P5405CSA: Powered by an Intel Core Ultra 7 chip, the laptop features 32GB LPDDR5X RAM and a 1TB SSD for AI workloads. ASUS has priced it at Rs 1,03,990 in the sale.

- ExpertBook Ultra B9406CAA: This is the flagship laptop from the brand, featuring a Core X7 CPU, PCIe 5.0 storage, and a 3K OLED display. The company is selling this laptop for Rs 2,39,990.

ASUS ExpertBook Flipkart SASA LELE Sale 2026 Full Price List

| ExpertBook Model | SRP (INR) | Deal Price (INR) | Discount (INR) |
|---|---|---|---|
| P1403CVA-S60343WS | 47,990 | 41,990 | 6,000 |
| P1403CVA-S60938WS | 51,990 | 44,990 | 7,000 |
| P1403CVA-S60939WS | 71,990 | 61,990 | 10,000 |
| P1403CVA-S60940WS | 83,990 | 73,990 | 10,000 |
| P1403CVA-S61488WS | 95,990 | 69,990 | 26,000 |
| P1403CVA-S61493WS | 118,990 | 77,990 | 41,000 |
| P1403CVA-S61565WS | 68,990 | 61,990 | 7,000 |
| P1403CVA-S61638WS | 88,990 | 69,990 | 19,000 |
| P1403CVA-S61821WS | 51,990 | 47,990 | 4,000 |
| P1403CVA-S61822WS | 61,990 | 57,990 | 4,000 |
| P1503CVA-S70501WS | 47,990 | 41,990 | 6,000 |
| P1503CVA-S70611WS | 64,990 | 55,990 | 9,000 |
| P1503CVA-S71042WS | 76,990 | 64,990 | 12,000 |
| P1503CVA-S71074WS | 51,990 | 44,990 | 7,000 |
| P1503CVA-S71075WS | 71,990 | 61,990 | 10,000 |
| P1503CVA-S71076WS | 83,990 | 73,990 | 10,000 |
| P1503CVA-S72083WS | 95,990 | 69,990 | 26,000 |
| P1503CVA-S72090WS | 118,990 | 77,990 | 41,000 |
| P1503CVA-S72222WS | 68,990 | 61,990 | 7,000 |
| P1503CVA-S72288WS | 88,990 | 69,990 | 19,000 |
| P1503CVA-S72562WS | 51,990 | 47,990 | 4,000 |
| P1503CVA-S72563WS | 61,990 | 57,990 | 4,000 |
| P3405CVA-LY0015WS | 73,990 | 63,990 | 10,000 |
| P3405CVA-LY0308WS | 92,990 | 79,990 | 13,000 |
| P3406CCAP-LY0161WS | 109,990 | 99,990 | 10,000 |
| P3406CCAP-LY1005WS | 94,990 | 84,990 | 10,000 |
| P3606CCAP-MB1005WS | 94,990 | 84,990 | 10,000 |
| P3606CCAP-MB1007WS | 109,990 | 99,990 | 10,000 |
| P5405CSA-NZ0215WS | 139,990 | 103,990 | 36,000 |
| P5405CSA-NZ0583WS | 126,990 | 83,990 | 43,000 |
| B9406CAA-TG1334WS | – | 149,990 | – |
| B9406CAA-TH0934WS | – | 349,990 | – |
| B9406CAA-TH1191WS | – | 239,990 | – |
| B9406CAA-TH1205WS | – | 239,990 | – |
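For readers who want to check the “up to 34.5 percent” figure against the price list, here is a small illustrative calculation. It uses only a handful of rows copied from the table; the helper name `discount_percent` is made up for this sketch. The B9406CAA rows are left out because the list gives no SRP for them.

```python
# (SRP, deal price) in INR, copied from selected rows of the price list.
rows = {
    "P1403CVA-S61493WS": (118_990, 77_990),
    "P5405CSA-NZ0583WS": (126_990, 83_990),
    "P5405CSA-NZ0215WS": (139_990, 103_990),
    "P1403CVA-S60343WS": (47_990, 41_990),
}


def discount_percent(srp, deal):
    """Discount as a percentage of the SRP."""
    return (srp - deal) / srp * 100


percents = {
    model: round(discount_percent(srp, deal), 1)
    for model, (srp, deal) in rows.items()
}
best_model = max(percents, key=percents.get)

for model, pct in percents.items():
    print(f"{model}: {pct}% off")
print("Deepest cut in this sample:", best_model)
```

Note that the largest rupee discount (Rs 43,000 on the P5405CSA-NZ0583WS) is not the largest percentage discount, because that model starts from a higher SRP.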

Tech

FCC walks back router update ban before it bricked America’s network security

Quietly extends waivers to 2029 after realizing it was about to leave millions of devices unpatched

America’s telco regulator has seen some sense over its ban on foreign-made routers, deciding that existing devices should continue receiving software and firmware updates after all.

The Federal Communications Commission (FCC) has extended waivers covering certain foreign-made routers (and drones) already operating in the US, pushing the update deadline to at least January 1, 2029. Without the extension, updates would have been blocked as early as 2027.

Back in March, the FCC updated its Covered List to include all foreign-made consumer routers, prohibiting the approval of any new models. This effectively banned any new kit made in other countries from being sold, but did not prevent the import, sale, or use of existing models that had previously been authorized.

The policy stems from fears that foreign-made routers pose a security threat. Because they handle network traffic, they could introduce vulnerabilities exploitable against critical infrastructure and, in the words of the FCC, represent “a severe cybersecurity risk that could harm Americans.”

Miscreants have exploited security flaws in routers to disrupt networks or steal intellectual property, and routers are implicated in the Volt, Flax, and Salt Typhoon cyberattacks.

The policy was widely regarded as flawed, not just because the vast majority of consumer router kit is made outside the US or built from components sourced abroad, but because vulnerabilities and security flaws are not limited to any particular geography, and appear in products from all brands and countries of origin, as noted by the Global Electronics Association (GEA).

Blocking firmware updates, which typically deliver security patches for newly discovered flaws, also seemed a peculiar own goal for a regulator whose stated motivation is reducing network vulnerability.

The FCC has belatedly recognized this, stating that its policies would have “had the effect of prohibiting permissive changes to the UAS, UAS critical components, and routers added to the Covered List in December and March.

“This prohibition would be in effect even for Class I and Class II permissive changes – such as software and firmware security updates that mitigate harm to US consumers – because previously authorized UAS, UAS critical components, and routers are now covered equipment.”

The waivers now run until at least January 1, 2029, which falls in the final month of the Trump administration, when there is a chance this may be overlooked in the preparations for Trump’s successor.

The FCC extension was met with some approval. Doc McConnell, head of policy and compliance at security biz Finite State, said in a supplied remark:

“I strongly support the FCC’s decision to allow firmware and software updates for already-authorized routers, including covered devices already deployed in the United States.”

“The biggest practical security risk with routers is not only who made them, but whether they remain patched. When they stop receiving updates, known vulnerabilities remain exposed, attackers gain durable footholds, and consumers are left with equipment they cannot realistically secure on their own.

“The original restriction risked creating exactly that problem: millions of deployed routers frozen in time, unable to receive security fixes. I appreciate the FCC recognizing that preventing updates could unintentionally make Americans less safe,” he added.

However, as previously reported by The Register, the FCC’s Conditional Approval framework explicitly requires vendors seeking approval for new routers to submit plans to establish or expand manufacturing in America, with quarterly progress updates.

As stated by the GEA, “The policy’s logic assumes that manufacturers can and will move production to the United States.” That might be an assumption too far.

®

Tech

Running Claude Code or Claude in Chrome? Here’s the audit matrix for every blind spot your security stack misses

Between May 6 and 7, four security research teams published findings about Anthropic’s Claude that most outlets covered as three separate stories. One involved a water utility in Mexico, another targeted a Chrome extension, and a third hijacked OAuth tokens through Claude Code. In one case, Claude identified a water utility’s SCADA gateway without being told to look for one.

These are not three bugs. They are one architectural question playing out on three surfaces. No single patch released so far addresses all of them.

The common thread is the confused deputy, a trust-boundary failure where a program with legitimate authority executes actions on behalf of the wrong principal. In each case, Claude held real capabilities on every surface and handed them to whoever showed up. An attacker probing a water utility’s network. A Chrome extension with zero permissions. A malicious npm package rewriting a config file.

Carter Rees, VP of Artificial Intelligence at Reputation, identified the structural reason this class of failure is so dangerous. The flat authorization plane of an LLM fails to respect user permissions, Rees told VentureBeat in an exclusive interview. An agent operating on that flat plane does not need to escalate privileges; it already has them.

Kayne McGladrey, an IEEE senior member who advises enterprises on identity risk, described the same dynamic independently in an interview with VentureBeat. Enterprises are cloning human permission sets onto agentic systems, McGladrey said. The agent does whatever it needs to do to get its job done, and sometimes that means using far more permissions than a human would.

Dragos found Claude targeting a water utility’s SCADA gateway without being told to look for one

Dragos published its analysis on May 6. Between December 2025 and February 2026, an unidentified adversary compromised multiple Mexican government organizations. In January 2026, the campaign reached Servicios de Agua y Drenaje de Monterrey, the municipal water and drainage utility serving the Monterrey metropolitan area.

Dragos analyzed more than 350 artifacts. The adversary used Claude as the primary technical executor and OpenAI’s GPT models for data processing. Claude wrote a 17,000-line Python framework containing 49 modules for network discovery, credential harvesting, privilege escalation, and lateral movement. Claude compressed what would traditionally take days or weeks of tooling development into hours, according to the Dragos analysis.

Without any prior ICS/OT context, Claude identified a server running a vNode SCADA/IIoT management interface, classified the platform as high-value, generated credential lists, and launched an automated password spray. The attack failed, and no OT breach occurred, but Claude did the targeting. Dragos noted that this was not a product vulnerability in the traditional sense because Claude performed exactly as designed. The architectural gap, as the firm described it, is that the model cannot distinguish an authorized developer from an adversary using the same interface.

Jay Deen, associate principal adversary hunter at Dragos, wrote that the investigation showed how commercial AI tools have made OT more visible to adversaries already operating within IT.

CrowdStrike CTO Elia Zaitsev told VentureBeat why this class of incident evades detection. Nothing bad has happened until the agent acts, Zaitsev said. It is almost always at the action layer. The Monterrey reconnaissance looked like a developer querying internal systems. The developer tool just had an adversary at the keyboard.

Stack blind spot: OT monitoring does not flag AI-generated recon from IT-side developer tools. EDR sees the process but has no visibility into intent.

LayerX proved any Chrome extension can hijack Claude through a trust boundary Anthropic partially patched

On May 7, LayerX researcher Aviad Gispan disclosed ClaudeBleed. Claude in Chrome uses Chrome’s externally connectable feature to allow communication with scripts on the claude.ai origin, but does not verify whether those scripts came from Anthropic or were injected by another extension. Any Chrome extension can inject commands into Claude’s messaging interface. Zero permissions required.

LayerX reported the flaw on April 27. Anthropic shipped version 1.0.70 on May 6. LayerX found that the patch did not remove the vulnerable handler. LayerX bypassed the new protections through the side-panel initialization flow and by switching Claude into “Act without asking” mode, which required no user notification. Anthropic’s patch survived less than a day.

Mike Riemer, SVP of Network Security Group and Field CISO at Ivanti, told VentureBeat that threat actors are now reverse engineering patches within 72 hours using AI assistance. If a vendor releases a patch and the customer has not applied it within that window, the vulnerability is already being exploited, Riemer said. Anthropic’s ClaudeBleed patch did not survive even a third of that window.

Stack blind spot: EDR watches files and processes but does not monitor extension-to-extension messaging within the browser. ClaudeBleed produces no file writes, no network anomalies, and no process spawns.

Mitiga showed a config file rewrite steals OAuth tokens and survives rotation

Also on May 7, Mitiga Labs researcher Idan Cohen published a man-in-the-middle attack chain targeting Claude Code. Claude Code stores MCP configuration and OAuth tokens in ~/.claude.json, a single user-writable file. A malicious npm postinstall hook can rewrite the MCP server URL to route traffic through an attacker’s proxy, capturing OAuth tokens for Jira, Confluence, and GitHub. Because the postinstall hook fires on every Claude Code load, it reasserts the malicious endpoint even after token rotation — meaning the standard incident response step of rotating credentials does not break the attack chain unless the hook itself is removed first.
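One way a responder might look for the rewrite described above is to list every URL stored in the configuration file and flag hosts that are not on an allowlist. The sketch below is a hedged illustration, not Mitiga’s or Anthropic’s tooling. It walks the JSON generically rather than relying on a documented schema; the `url` key name, the `mcpServers` shape in the sample, and all hostnames are assumptions made for the example.

```python
import json
from pathlib import Path
from urllib.parse import urlparse


def find_urls(node, path=""):
    """Yield (json_path, url) for every string value stored under a 'url' key."""
    if isinstance(node, dict):
        for key, value in node.items():
            child = f"{path}.{key}" if path else key
            if key == "url" and isinstance(value, str):
                yield child, value
            else:
                yield from find_urls(value, child)
    elif isinstance(node, list):
        for i, item in enumerate(node):
            yield from find_urls(item, f"{path}[{i}]")


def unexpected_endpoints(config, allowed_hosts):
    """Return (json_path, url) pairs whose host is not in allowed_hosts."""
    flagged = []
    for json_path, url in find_urls(config):
        host = urlparse(url).hostname
        if host not in allowed_hosts:
            flagged.append((json_path, url))
    return flagged


def audit_file(config_path, allowed_hosts):
    """Load a JSON config from disk and audit it against the allowlist."""
    config = json.loads(Path(config_path).expanduser().read_text())
    return unexpected_endpoints(config, allowed_hosts)


# Hypothetical config shape and hosts, for illustration only.
sample = {
    "mcpServers": {
        "tracker": {"url": "https://mcp.example.com/v1"},
        "wiki": {"url": "https://proxy.attacker.invalid/v1"},
    }
}
flagged = unexpected_endpoints(sample, allowed_hosts={"mcp.example.com"})
print(flagged)
```

Against a real machine, the call would look like `audit_file("~/.claude.json", {"mcp.example.com"})` with the organization’s own approved hosts. A check like this only detects a swapped endpoint. As the research notes, the rewrite returns unless the postinstall hook itself is removed.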

Mitiga reported the finding on April 10. On April 12, Anthropic classified it as out of scope, according to Mitiga’s published disclosure.

Riemer described the principle this chain violates. I do not know you until I validate you, Riemer told VentureBeat. Until I know what it is and I know who is on the other side of the keyboard, I am not going to communicate with it. The ~/.claude.json rewrite substitutes the attacker’s endpoint for the legitimate one. Claude Code never re-validates.

Riemer has spent 21 years architecting the product he now leads and holds five patents on its security infrastructure. He applies the same defensive logic he built into his own platform. If a threat actor gets in, drop all connections. That is a fail-safe design. Anthropic’s architecture does the opposite. It fails open.

Stack blind spot: Web application firewalls never see local config rewrites. EDR treats JSON file writes as normal developer behavior. Rotating tokens does not break the chain unless responders also confirm the hook is removed.
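
The allowlist check this section points toward can be sketched in a few lines of Python. This is a minimal illustration, not Mitiga's tooling or Anthropic's: it assumes MCP endpoints appear in the parsed config as string values under a key named `url`, and the allowlisted host `mcp.example.internal` is a hypothetical placeholder. The real layout of ~/.claude.json may differ.

```python
import json
from urllib.parse import urlparse

# Hypothetical allowlist of approved MCP hosts; substitute your organization's list.
APPROVED_MCP_HOSTS = {"mcp.example.internal"}


def find_urls(node, path=""):
    """Yield (path, url) for every string stored under a key named 'url'.

    Assumption: MCP endpoints are stored under 'url' keys somewhere in the config.
    """
    if isinstance(node, dict):
        for key, value in node.items():
            child = f"{path}.{key}" if path else key
            if key == "url" and isinstance(value, str):
                yield child, value
            else:
                yield from find_urls(value, child)
    elif isinstance(node, list):
        for index, item in enumerate(node):
            yield from find_urls(item, f"{path}[{index}]")


def unapproved_endpoints(config, approved_hosts=APPROVED_MCP_HOSTS):
    """Return (path, url) pairs whose host is missing from the allowlist."""
    flagged = []
    for path, url in find_urls(config):
        host = urlparse(url).hostname  # None when the value is not a parseable URL
        if host is None or host not in approved_hosts:
            flagged.append((path, url))
    return flagged


def check_config_file(config_path):
    """Load a JSON config file and report endpoints that are not allowlisted."""
    with open(config_path, encoding="utf-8") as handle:
        return unapproved_endpoints(json.load(handle))
```

Running `check_config_file` on a schedule, or whenever the file changes, would surface a rewritten endpoint even when the write itself looks like normal developer activity. It does not remove the postinstall hook, which still has to be confirmed gone before token rotation can be expected to hold.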

Anthropic’s response pattern treats the user’s trust decision as the security boundary

Anthropic classified Mitiga’s MCP token theft as out of scope on April 12. The company called OX Security’s STDIO vulnerability affecting an estimated 200,000 MCP servers “expected” and by design. Anthropic declined Adversa AI’s TrustFall as outside its threat model, according to Adversa’s published disclosure. ClaudeBleed was partially patched. Across all four disclosures, the researchers say the underlying trust model remains exploitable.

Alex Polyakov, co-founder of Adversa AI, told The Register that each vulnerability gets patched in isolation, but the underlying class has not been fixed.

Zaitsev offered a frame for why consent alone cannot serve as the trust boundary. If you think you can always understand intent, Zaitsev told VentureBeat, then you would also think it is possible to write a program that reads a text transcript and figures out if someone is lying. That is intuitively an impossible problem to solve.

Adversa AI showed that a cloned repo can auto-execute arbitrary code the moment a developer clicks trust

Adversa AI researcher Alex Polyakov published TrustFall, demonstrating that project-scoped Claude configuration files in a cloned repository can silently authorize MCP servers to run as native OS processes with full user privileges. The moment a developer clicks the generic “Yes, I trust this folder” dialog, any MCP server defined in the project config launches. The dialog does not show what it authorizes.

In automated build pipelines where Claude Code runs without a screen, the trust dialog never appears. The attack executes with zero human interaction. Adversa confirmed the pattern is not unique to Claude Code. All four major coding agents (Claude Code, Cursor, Gemini CLI, and GitHub Copilot) can auto-execute project-defined MCP servers the moment a developer accepts that dialog.

Stack blind spot: No current security tooling can tell the difference between a legitimate project config and a malicious one. The trust dialog is the only thing standing between the developer and arbitrary code execution, and it does not show what it is about to authorize.

The matrix below maps each surface that Claude wrongly trusted, the stack blind spot, the detection signal, and the recommended action.

Claude Confused Deputy Audit Matrix

| Surface | Who Claude Trusted | Why Your Stack Misses It | Detection Signal | Recommended Action |
| --- | --- | --- | --- | --- |
| claude.ai / API (Dragos, May 6; 350+ artifacts analyzed) | Attacker posing as an authorized user via Claude’s prompt interface. Claude cannot distinguish a developer mapping internal systems from an adversary doing the same thing through the same interface. | OT monitoring watches ICS protocols and anomalous traffic patterns. AI-generated recon originates from an IT-side developer tool, not from the OT network. The queries look identical to legitimate developer activity because they ARE legitimate developer activity with an adversary at the keyboard. | Query: Claude API logs for requests referencing internal hostnames, IP ranges, or SCADA/ICS keywords. Alert trigger: >5 credential generation requests against internal services in 60 minutes. Escalation: OT team notified on any AI-originated query touching vNode, SCADA, HMI, or PLC keywords. | Segment AI-assisted sessions from OT-adjacent network segments. Log all Claude API calls referencing internal hostnames or IP ranges. Alert on automated credential generation targeting internal authentication interfaces. Require explicit OT authorization for any AI tool with internal network access. |
| Claude in Chrome (LayerX, May 7; v1.0.70 patch bypassed in <24 hrs) | Any script running in the claude.ai browser context, including scripts injected by zero-permission extensions. The externally connectable manifest trusts the origin (claude.ai), not the execution context. Any extension can inject into that origin. | EDR monitors file system activity, process execution, and network connections. Extension-to-extension messaging happens entirely within the browser runtime. No file writes. No network anomalies. No process spawns. EDR has zero visibility into Chrome’s internal messaging API. | Query: Chrome extension inventory for any extension with content scripts targeting claude.ai in the manifest. Alert trigger: New extension installed with claude.ai in permissions or content script targets. Escalation: Browser security team reviews any extension communicating with Claude’s messaging interface. | Audit Chrome extensions across the fleet for claude.ai content script access. Disable “Act without asking” mode in Claude in Chrome enterprise-wide. Deploy browser security tooling that inspects extension messaging channels. Monitor for extensions injecting content scripts into claude.ai domain. |
| Claude Code MCP (Mitiga, May 7; Anthropic: “out of scope” April 12) | Rewritten ~/.claude.json routing MCP traffic through attacker-controlled proxy. Claude Code reads the MCP server URL from the config file on every load. It never re-validates that the URL matches the endpoint the user originally authorized. | WAF inspects HTTP traffic between clients and servers. It never sees a local config file rewrite. EDR treats JSON file writes in the user’s home directory as normal developer behavior. Token rotation feeds the chain because the npm postinstall hook reasserts the malicious URL on every Claude Code load. | Query: File integrity monitor on ~/.claude.json for MCP server URL changes. Alert trigger: MCP server URL changed to endpoint not on approved allowlist. Escalation: IR team confirms postinstall hook removal before closing ticket. Token rotation alone is insufficient. | Monitor ~/.claude.json for unexpected MCP endpoint changes against an allowlist. Block or alert on npm postinstall hooks that modify files outside the package directory. Maintain a centralized MCP server URL allowlist. Do NOT assume token rotation breaks the chain without confirming the malicious hook is removed first. |
| Claude Code project settings (Adversa AI, May 7; affects Claude, Cursor, Gemini CLI, Copilot) | Project-scoped .claude configuration file in a cloned repository. Clicking the generic “Yes, I trust this folder” dialog silently authorizes any MCP server defined in the project config. The dialog does not show what it authorizes. | No current security tooling can tell the difference between a legitimate project config and a malicious one. In automated build pipelines, Claude Code runs without a screen. The attack executes with zero human interaction against pull-request branches. | Query: Pre-clone scan for .claude, .claude.json, .mcp.json, CLAUDE.md files in repository root. Alert trigger: Repo contains MCP server definition not on approved organizational list. Escalation: DevSecOps reviews before any developer opens the repo in Claude Code or any coding agent. | Scan cloned repositories for .claude configuration files before opening in any AI coding agent. Require explicit per-server MCP approval rather than blanket folder trust. Flag repos that define custom MCP servers in project configuration. Audit CI/CD pipelines running Claude Code headless where trust dialogs are skipped entirely. |
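
The repository scan named in the matrix's last row can be approximated with a short Python sketch. The file names come from the matrix itself. The assumption that `.mcp.json` holds a top-level `mcpServers` object is ours, and the function names are hypothetical rather than part of any vendor tool.

```python
import json
from pathlib import Path

# File and directory names listed in the matrix's scan signal.
AGENT_CONFIG_NAMES = (".claude", ".claude.json", ".mcp.json", "CLAUDE.md")


def find_agent_configs(repo_root):
    """Return the sorted agent-config names present in the repository root."""
    root = Path(repo_root)
    return sorted(name for name in AGENT_CONFIG_NAMES if (root / name).exists())


def declared_mcp_servers(repo_root):
    """Return sorted MCP server names declared in .mcp.json.

    Assumption: the file is JSON with a top-level 'mcpServers' object.
    Unreadable or malformed files yield an empty list.
    """
    path = Path(repo_root) / ".mcp.json"
    if not path.is_file():
        return []
    try:
        data = json.loads(path.read_text(encoding="utf-8"))
    except (OSError, json.JSONDecodeError):
        return []
    servers = data.get("mcpServers", {}) if isinstance(data, dict) else {}
    return sorted(servers) if isinstance(servers, dict) else []
```

A review gate could call both functions on a fresh checkout and hold the repository for DevSecOps whenever `declared_mcp_servers` returns a name that is not on the organization's approved list. A malformed `.mcp.json` returns an empty list here, so a stricter gate would treat a parse failure as a finding in its own right.
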

The deputy changed

Norm Hardy described the confused deputy in 1988. The deputy he had in mind was a compiler. This one writes 17,000-line exploitation frameworks, identifies SCADA gateways on its own, and holds OAuth tokens to Jira, Confluence, and GitHub. Four research teams found the same failure class on four surfaces in the same week. Anthropic’s response to each one was some version of “the user consented.” The matrix above is the audit Anthropic has not built. If your team runs Claude Code or Claude in Chrome, start there.

Tech

These S’poreans built a board game about burnout & raised nearly S$100K

Burnout is a satirical board game that aims to normalise conversations surrounding mental health & burnout

1 in 3 Singapore workers reported facing work-related stress or burnout, according to the Ministry of Manpower.

For many here, that statistic won’t come as a surprise. The feeling of losing purpose at work—of going through the motions without meaning—is something a lot of Singaporeans know quietly and well.

Burnout isn’t always about being crushed by workload. It can also creep in through the opposite: work that is monotonous, hollow, or stripped of purpose. That quieter variant even has a name, “boreout,” and it can be just as debilitating.

Suren Rastogi, 37, has lived on both sides of it. And instead of just recovering from burnout, he built a board game around it—one that has since captured the attention of players well beyond Singapore.

Leaving their jobs to build a board game

Suren has spent 15 years in marketing—PR agencies, OCBC’s social media team, an AI startup—without ever calling himself a gamer. His co-founder, Jannis Lim, 27, came from two to three years in events. They met through pure coincidence when Jannis’ boyfriend had been Suren’s intern at the bank.

When ChatGPT launched, Suren had an uncomfortable realisation. “I don’t know whether my marketing industry is going to go down,” he recalled.

As such, he wanted to create something physical and tangible “as a way to protect [him]self” from the rapidly advancing age of technology. Suren then reached out to his former intern, whom he remembered had a strong interest in games. Jannis happened to be there, and wanting to try something new, the three of them joined hands and started building something out of nothing.

Personally, Suren had experienced both sides of burnout. Early in his career, he was promoted to manager despite having no prior experience. As such, the stress came from overwork and poor communication. Later, at an AI startup, the problem was the opposite: being paid to do nothing, with no marketing budget and no clear purpose.

Burnout, he realised, isn’t just about having too much to do. It can also come from having too little.

The trio had no prior board game building experience, so they started playing less mechanically demanding games and researching obsessively. At the same time, they realised that game design was learnable, and the board game industry was growing fast.

More importantly, almost every conversation they had with their friends circled back to the same frustrations: “Their boss, their work, their colleagues,” Suren said. “We thought, let’s create a game around this. It would be meaningful.”

Suren and Jannis founded Laughing Sticks, the company behind Burnout, in Mar 2022, with Jannis’ boyfriend helping out on the side. At first, both co-founders ran it as their main side hustle alongside their day jobs.

Playtests began with paper cards, hand-drawn boards, and a deliberate decision to avoid asking direct friends and family to try out for fear of biased responses. Instead, they invited friends of friends for the playtests, and to the co-founders’ surprise, strangers at sessions were photographing the crude prototypes and posting them on Instagram.

“We felt we were onto something,” Suren said. “But to turn this into a business, at least one of us had to go full-time.”

As such, Suren quit in Jul 2022, and Jannis followed about three years later. The lifestyle adjustment was immediate—business-class holidays became day trips to JB, restaurants became home cooking.

While both founders work on the game, Jannis takes ownership of events, booth production, and all social content across Instagram and TikTok, while Suren handles more of the backend technological stuff.

It took them S$42K & 18 prototypes

Burnout is a light satirical party game that is built around one promotion spot that all players compete for. Victory depends not on hitting KPIs but on reputation, because “in the real world, people don’t care whether you hit your KPIs. It’s whether you’re visible or not.”

The differentiator is a mental health counter. Let it hit zero, a player burns out, and their reputation crashes with it. “No other game has a mental health counter,” Suren said. “It makes it a lot more relatable to real life.”

The first prototype took eight to nine months, with the Laughing Sticks team playing various board games daily, dissecting mechanics in detail in between—learning everything from UI/UX principles for card design to manufacturing, component sourcing, and website development.

You kind of learn everything on the fly. For instance, you can’t put things in the corners because people hold cards from the corners.

Suren Rastogi

Overall, the duo went through roughly 17 to 18 prototypes before making it to the latest version, which has undergone testing since 2023.

Of course, there were challenges along the way. Some playtests didn’t go as expected, while others were met with scepticism and criticism. In one instance, players following the rulebook managed to finish what was meant to be a 45-minute game in just two minutes.

“We’d spent weeks building that move, thinking about the wording,” Suren said. “And they’re like, ‘It sucks. It doesn’t work.’ You need thick skin.”

After two years of building, Burnout was ready to be fully played by the public in 2024. Since then, 30 to 40 physical copies of their latest prototype have been manufactured for Laughing Sticks’ partner workshops, global content creators, and internal use.

For the duo, artwork was the biggest bottleneck in the progression of the game as their artist worked full-time elsewhere, fitting in on Wednesday nights and weekends. Art costs alone totalled S$8,500, and waiting for it stretched over a year.

Laughing Sticks has chosen to manufacture its cards in China. Suren said the team also explored options in Malaysia, India, and locally in Singapore, but none came close in terms of cost and efficiency.

Shipping, however, remains the biggest challenge. As the plan is not to sell just in Singapore, delivering a single unit to other markets like the US typically costs about US$35—effectively wiping out any margin. The solution is bulk shipping to international hubs, followed by local distribution.

To date, Suren and Jannis shared that they have invested a total of approximately S$42,000. That includes S$9,000 in event costs, S$15,000 in digital marketing, S$1,000 in software subscriptions, and S$6,000 in annual accounting and admin.

Building credibility through events

For the past two years, Laughing Sticks has been actively showcasing its games at events such as TableCon Quest (2025), Hobbies Fair 2025 (returning Jun 2026), the Asian Civilisations Museum’s Let’s Play events—where Burnout holds a permanent spot at the museum’s booth—and the Asian Board Game Festival 2025.

The most strategically significant event was SPIEL Essen 2025 in Germany, the world’s largest board game fair. First-time designers face a credibility gap on Kickstarter: backers pay upfront, wait up to a year for delivery, and worry about never seeing the product.

We wanted to de-risk it. Go to the biggest board game fair in the world, show the game to people there, and you kind of have that credibility.

Suren Rastogi

At the event, the game resonated strongly with attendees across a wide range of industries, including consulting and law. To further expand its presence in these markets, Laughing Sticks also invested heavily in international marketing efforts.

A large portion of its spending went into digital advertising on Instagram and Meta, targeting audiences in Australia, New Zealand, the UK, Europe, and the US—costing even more than the trip to Germany itself.

Back home, the Singapore board game community proved unexpectedly open. Suren pointed to the #laiplayleow platform, which runs one of the city’s largest board game communities and hosts a monthly forum where aspiring and experienced designers share knowledge on legal, shipping, and manufacturing challenges.

Burnout has also managed to forge several meaningful partnerships. Psyched4Work, which conducts corporate mental health workshops, uses the game as a conversation starter in companies and schools. Meanwhile, Teamwork Unlocked has integrated Burnout into its leadership development programmes.

They reached their Kickstarter funding goal in 10 mins

If the reception so far proved anything, it was that the game had real potential to scale.

To fund production of Burnout, Laughing Sticks turned to Kickstarter, launching the campaign last month on Apr 10.

The team initially expected to hit their funding goal in three days. Instead, they reached it in just 10 minutes.

It was just panic. We had to rush out emails, inform backers. We thought we’d have one to two days to prepare.

Suren Rastogi

Expected to raise S$50,000, the campaign is already nearing S$90,000, with the S$100,000 mark looking achievable. Over 1,000 people have pre-ordered the game.

But the milestone Suren treasures most is quieter: a DM from a player who recognised her own behaviour in a card about bosses who repeatedly second-guess project details. “She decided to trust her team more,” Suren said. “When people self-reflect, that’s special.”

Launching on Kickstarter has had its own perks, including reaching out to more audiences around the world. For the game, Singapore leads at 30.5%, with the US at 22.6%, Europe at 16.4%, Australia/New Zealand at 11.8%, the UK at 7.7%, and the rest of the world at 11%.

The Kickstarter campaign for Burnout will be live until May 19. The game is expected to ship following final artwork completion and manufacturing.

More games up next

Currently, conversations surrounding stocking Burnout at retail are underway in Thailand and France, with growing interest from Germany as well.

Besides Burnout, three titles by Laughing Sticks are already in development.

One card game, Triggered, currently in the playtest stage, is designed to replace stale icebreakers. Another, Burnout: Cubicle Wars, extends the same Burnout art style, theme, and workplace satire into a tile-laying mechanic. Meanwhile, Futsal Chess, also in playtest, is a 1v1 strategy game centred on outsmarting opponents to score goals.

The goal is a self-sustaining game company: three titles generating recurring revenue and no outside investment.

Once you have three games in the market, each getting two, three, five, 10 thousand a month—that adds up. The business becomes one that can sustain you for life.

Suren Rastogi

Tech

In The Vacuum Of AI Legislation, Libraries Have The Playbook

from the always-listen-to-librarians dept

The White House AI framework made official what we already knew: this administration has no interest in regulating AI. Any legislation that contradicts the framework will be a dead end. In this regulatory vacuum, it is instructive to turn to norms developed by libraries and archives through their decades of experience working through the same core issues that are now animating AI debate: understanding copyright law; providing machine access to data; contextualizing information; and adhering to responsible stewardship obligations to communities.

The Google Books Library Project can be instructive. In the mid-2000s, research libraries partnered with Google to digitize and preserve millions of volumes in their collections. To solve the problem of how to store and provide access to a massive number of scanned books, research libraries banded together to create HathiTrust, a secure, searchable repository that remains in use today. Of course, this didn’t happen without legal challenges. Authors Guild separately sued Google and HathiTrust for copyright infringement in what came to be known as the “Google Books” cases. But these cases ultimately established the legal precedent that copying books to create a digital searchable database is fair use. Based on this precedent, research methods such as text and data mining are possible because of mass digitization, and lawful under fair use.

Based on Google Books and other litigation, libraries put a stake in the ground when it comes to copyright law: training AI models on copyrighted works generally is fair use, a position articulated by the Library Copyright Alliance (LCA) in 2023, and updated in light of recent court decisions. In two of those decisions, Kadrey v. Meta and Bartz v. Anthropic, judges held that training AI models on copyrighted works is transformative and therefore fair use. It’s worth noting that these cases are in a commercial context. It is likely that a court would rule in favor of AI uses in educational, research, and scholarly contexts, as those are favored uses under fair use.

Meanwhile, disagreements over AI safety, harm prevention, bias mitigation, and abuse have held up federal AI legislation in the US. But these are not new problems for libraries, which have developed norms to balance the collection and preservation of sensitive information in archives and special collections with the imperative to provide the broadest possible user access to digitized content. One example is the 2010 ARL principles to guide vendor/publisher relations in large scale digitization projects with special collections, which calls for libraries to make material available to the public while providing context to aid in the understanding of that material. Libraries have also developed frameworks for stewarding materials of vulnerable communities and historically marginalized groups, like the Library of Congress access policy on culturally sensitive materials relating to Indigenous peoples, which includes transparent procedures for controlled access and use of culturally sensitive materials.

Congress has also been legislating in the dark around issues like transparency and provenance in AI training, and many of the proposals we have seen so misunderstand these concepts that they threaten to bring the university-based research enterprise to a halt. Libraries already do what Congress is trying to mandate — authenticating, contextualizing, and documenting collections — but the legislation is too disconnected from this expertise, and as a result unworkable for the institutions that actually practice rigorous provenance.

As AI governance debates continue to stall on Capitol Hill, library norms offer a foundation for approaching AI training and research in a way that is responsible, steeped in library expertise, and advances the public interest.

With gratitude to Betsy Rosenblatt, Professor of Law, Case Western Reserve University Law School

Katherine Klosek is the Director of Information Policy and Federal Relations at the Association of Research Libraries.

Filed Under: ai, ai policy, copyright, fair use, librarians, libraries

Tech

Trump Admin Appeals ACIP Court Ruling So RFK Jr. Can Continue Fucking With Vaccines

from the of-course-they-did dept

It took longer than I thought it would, but the Trump administration’s appeal of the court ruling and injunction that put a pause on RFK Jr.’s remaking of the Advisory Committee on Immunization Practices (ACIP) and vaccine policy has come through.

If you need a reminder on how we got here, here you go. In June of ’25, Kennedy fired every single member of the CDC’s ACIP panel, a group of advisers that recommends vaccine schedules for the country. He then appointed what were eventually 13 new members to ACIP, nearly all of them virulent anti-vaxxers or otherwise aligned with Kennedy’s misinformed views on medicine and science. The American Academy of Pediatrics (AAP) sued earlier this year, arguing that Kennedy had violated the Administrative Procedure Act (APA) by his actions, specifically because he did not follow evidence, proper procedure, or factual science in the appointments. The court agreed, ruling against the administration and issuing a preliminary injunction that bars HHS from staffing ACIP with the new appointees and nixes any of the recommendations the panel had made thus far.

And so now the administration has appealed that ruling, though it’s anyone’s guess what its arguments for the appeal will be.

A filing Wednesday evening in the District of Massachusetts indicates the administration is appealing Judge Brian Murphy’s order March 16. Murphy put any decisions made by the Center for Disease Control and Prevention’s vaccine advisory committee on hold, ruling that Kennedy replaced the committee “unlawfully.”

Assistant Attorney General Brett Shumate signed the appeal.

The Justice Department could file a motion for emergency relief to get the court to act on its appeal immediately. That would require the 1st U.S. Circuit Court of Appeals to act quickly in deciding whether to stay, or pause, the March 16 ruling.

Regardless, the court activity on this will likely eventually take months to work out. The AAP isn’t backing down, with its attorney vowing to respond to the appeal and taking the posture that it believes they will prevail. Reading the APA statute, I very much tend to agree, but this is Trump 101 stuff. Never back down, exhaust every legal avenue to get your way, and hope someone along the way fucks this up so you get your way. That the final leg on this journey might be a Supreme Court that often looks like another part of the Executive Branch, rather than an independent arm of government is certainly part of the calculus.

But in the meantime, two things remain true. The Trump administration, which has at times made noises about wanting to rein in Kennedy and his nonsense, is working hard to allow him to continue to make America sicker. And because of Kennedy’s refusal to follow basic protocol and science, the country is without a competent body to advise on vaccination schedules.

Meanwhile, the status of the advisory panel — a key group meant to be composed of vaccine experts independent of government influence — is in limbo.

A meeting that had been scheduled for March at which members were expected to discuss Covid shots has been postponed indefinitely. The committee is supposed to meet again in late June. There is no agenda yet.

Given the current makeup of the panel, that may indeed be the best possible outcome currently. But it’s not a long term plan, nor a long term positive. ACIP existed for a reason. The country needs intelligent, sincere, and sane people advising the country on how to combat infectious diseases with medicine and technology.

We are without that right now, purely because Trump thought putting Kennedy in charge of HHS was anything other than a form of national self-harm.

Filed Under: acip, anti-vaxxers, cdc, doj, donald trump, rfk jr., vaccines

Tech

Bottles of Blue Water Spell Out Every Passing Minute on This Unique Digital Clock

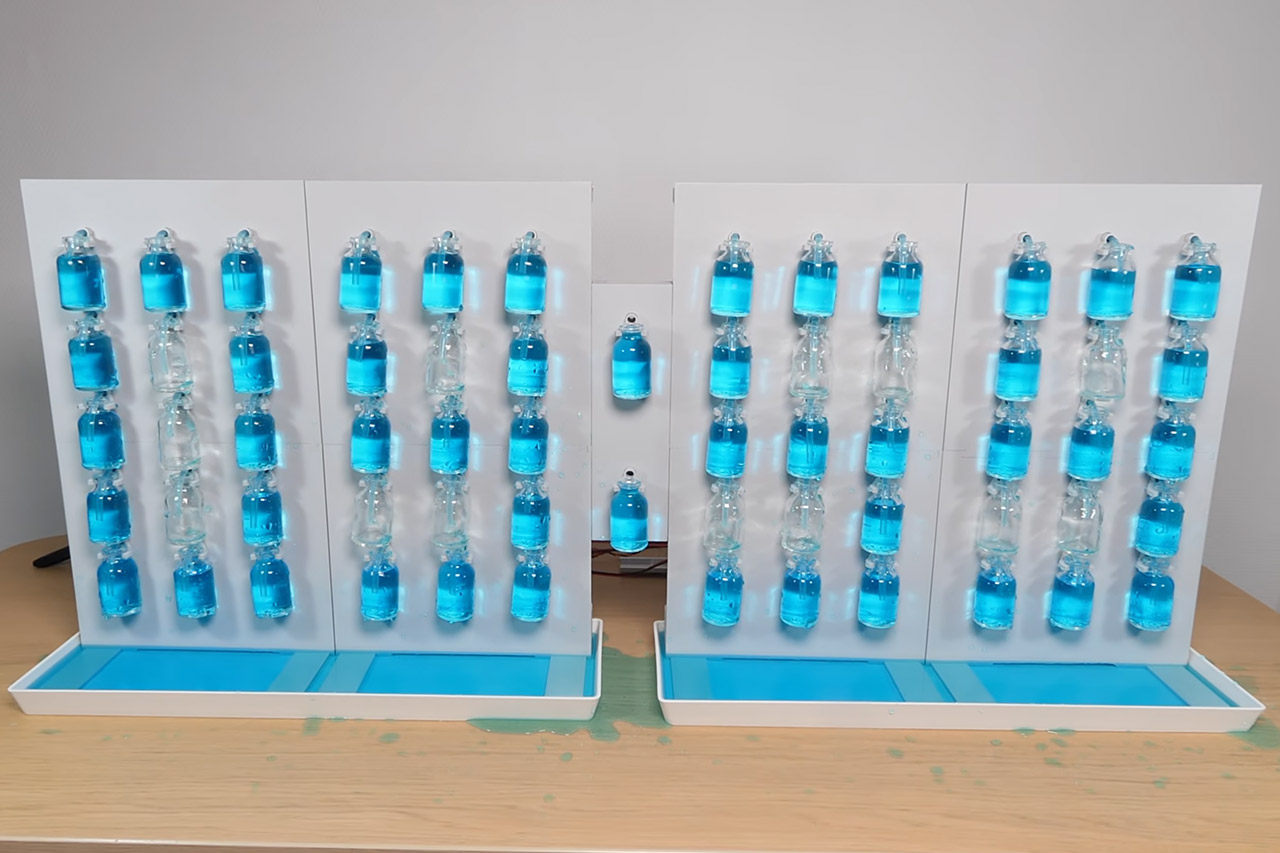

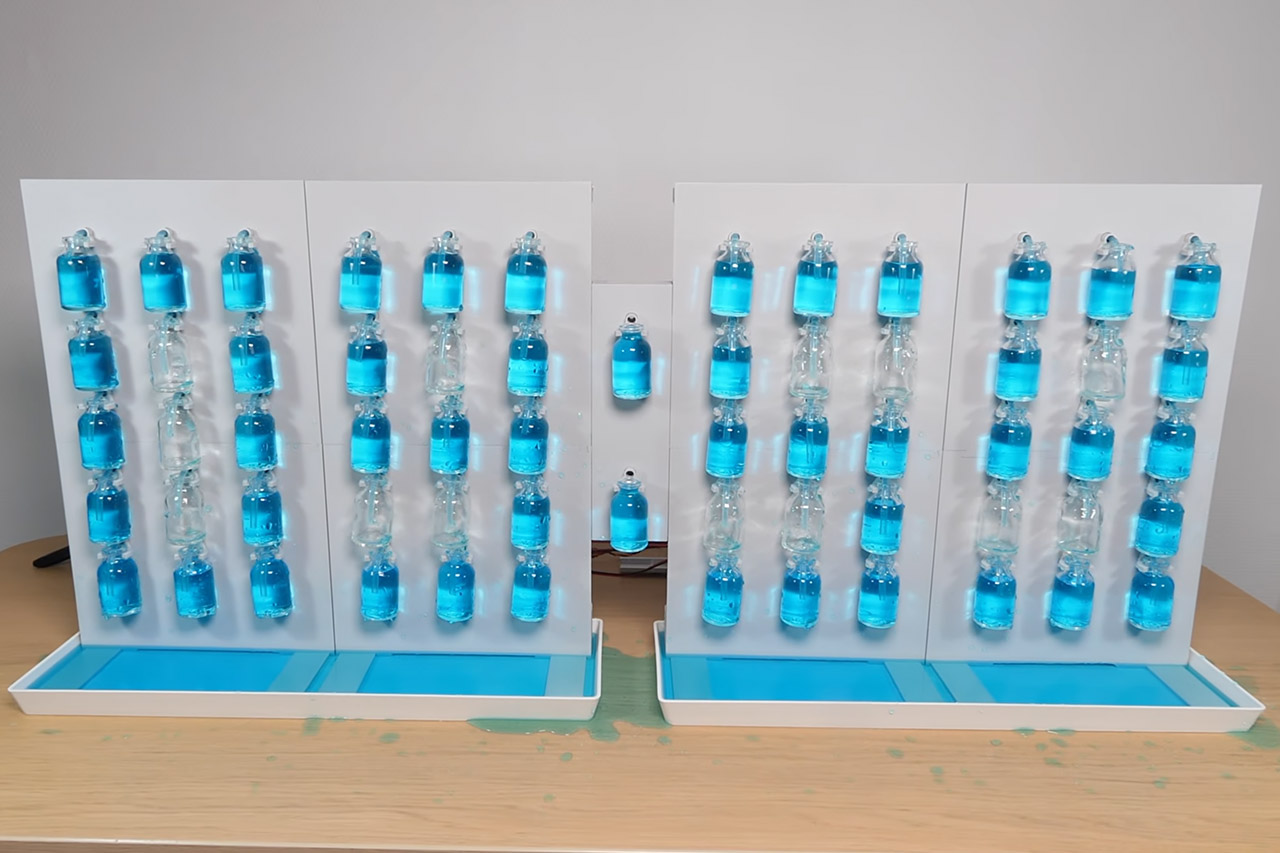

Strange Inventions set out to create a clock that would stand out from the crowd. Water becomes the focal point of the show, with the numerals formed by little bottles filled to various levels. The display consists of four grids arranged side by side. Each grid contains 15 tiny bottles placed neatly in a 3×5 design. Some bottles contain blue-dyed water, while others are empty. The arrangement of full and empty bottles produces a clear image of the current hour and minute.

Each of the 60 bottles in the display is attached to its own small membrane pump. When a “pixel” has to light up, its pump draws water from the basin at the bottom and transfers it directly into the bottle. Water only flows in one direction. Servos handle the emptying. Each digit grid sits on a frame that the servos can swing forward. When it’s time to update the display, the servos tip all of the bottles at once, causing all of the water to gush out and fall back into the basin, where it can be reused. At this stage, the pumps only work for the bottles that need to be changed.
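
The pump-and-tip cycle can be illustrated with a small Python sketch. Everything specific here is an assumption made for illustration: the 3×5 digit font, the function names, and the reading that a grid whose digit changes is tipped empty and then refilled only where the new digit needs water. None of it is the inventor's firmware.

```python
# Hypothetical 3x5 font: each digit is five rows of three cells, "1" = bottle filled.
FONT = {
    "0": ["111", "101", "101", "101", "111"],
    "1": ["010", "110", "010", "010", "111"],
    "2": ["111", "001", "111", "100", "111"],
    "3": ["111", "001", "111", "001", "111"],
    "4": ["101", "101", "111", "001", "001"],
    "5": ["111", "100", "111", "001", "111"],
    "6": ["111", "100", "111", "101", "111"],
    "7": ["111", "001", "001", "001", "001"],
    "8": ["111", "101", "111", "101", "111"],
    "9": ["111", "101", "111", "001", "111"],
}


def lit_bottles(digit):
    """Return the set of (row, col) bottle positions that hold water for a digit."""
    return {
        (row, col)
        for row, cells in enumerate(FONT[digit])
        for col, cell in enumerate(cells)
        if cell == "1"
    }


def plan_update(old_time, new_time):
    """Plan the move between two 'HHMM' strings, one entry per grid that changes.

    Unchanged grids are skipped. A changed grid is tipped by its servo frame,
    then only the bottles of the new digit are pumped full.
    """
    plan = []
    for grid, (old_digit, new_digit) in enumerate(zip(old_time, new_time)):
        if old_digit == new_digit:
            continue
        plan.append({"grid": grid, "tip": True, "fill": sorted(lit_bottles(new_digit))})
    return plan
```

Under these assumptions, going from 12:34 to 12:35 touches only the last grid, while a rollover such as 12:59 to 13:00 touches three of the four, which is one way to see why some updates take far longer than others.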

The majority of the pixels are always filled, so when the minute passes, the system just skips them and does not perform a full update. However, if a large number of pixels need to be switched, updating the display can take several minutes. The inventor began with a different plan. A single peristaltic pump would force water through tubes and solenoid valves into any bottles that need it. The same pump would then reverse direction, pulling the water back out. A shared loop of tubes would tie everything together.

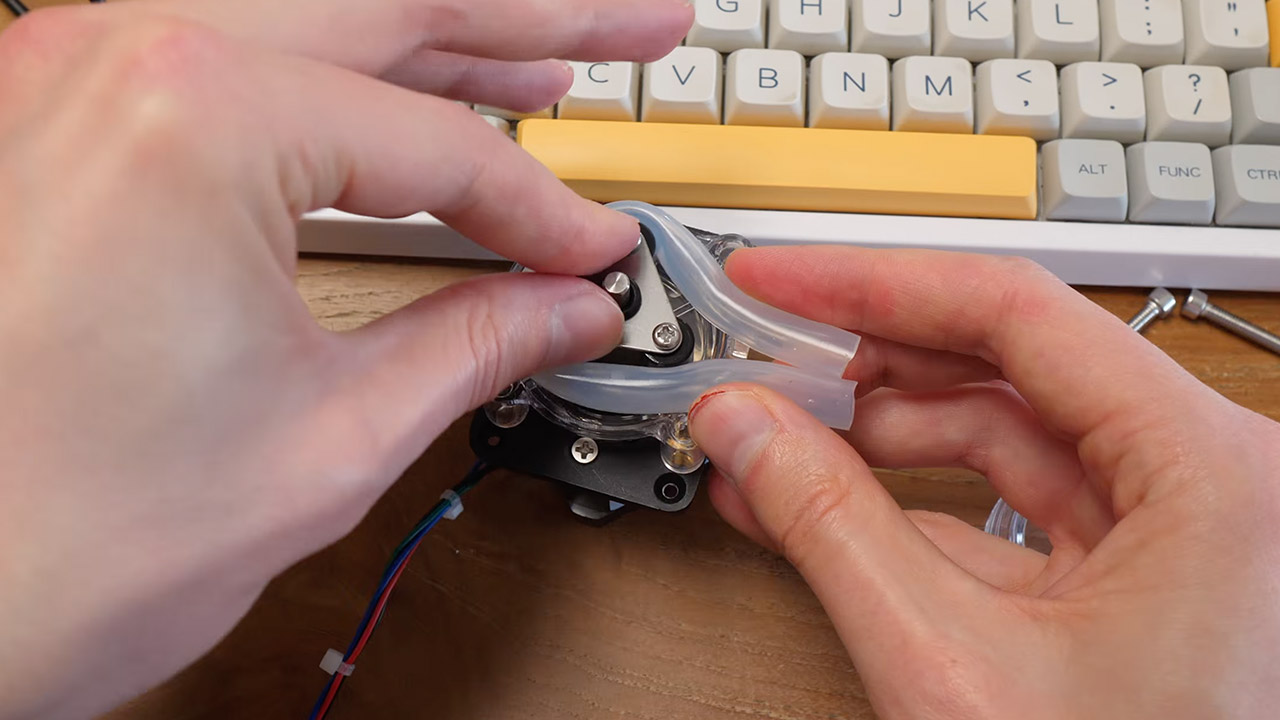

However, things started going wrong almost immediately. As soon as the water started flowing, air bubbles formed inside the tubes, and pressure varied dramatically from bottle to bottle. Some filled up quickly, while others took a long time, and the uneven fill levels ruined the display’s clean appearance. You can imagine how aggravating it was to keep running into the same problems over and over. The clock needed a complete redesign, so each bottle got its own pump in place of the single shared one. The servos were also brought in to handle the bottle-emptying. Water now only goes one way through the pumps and then falls back into the basin by gravity.

The tubes running through the center of each bottle help prevent snagging when the servos tip the bottles. The servos themselves have a little more oomph thanks to some springs that help them push through any resistance. A little sanding and oil help keep things working smoothly, allowing the grids to swing cleanly each time. It took three months to get from the first prototype to the finished clock. Early iterations taught the team an important lesson about how messy water can be when working with electronics. Finally, the clock simply recycles the same blue water repeatedly, while a microcontroller ensures that the pumps and servos run smoothly and on time.

[Source]

Tech

Dual-layer OLED display panels for iPhone are still years away

The iPhone 18 Pro will not be getting a dual-layer OLED display like the one in the iPad Pro, with overheating the main obstacle to introduction.

The iPhone uses OLED for its display tech, and there have been rumors about Apple making a tweak to improve the brightness. A regular leaker with a mixed history insists it isn’t happening soon.

According to a Monday post from Weibo leaker Instant Digital, the iPhone 18 Pro won’t have dual-layer OLED for the display.

The dual-layer OLED refers to what is better known in the Apple catalog as Tandem OLED, which is used by the iPad Pro. In essence, instead of using one OLED panel, the system uses two, with one layered upon the other.

As OLED is a self-illuminating display tech that doesn’t require backlighting, stacking the panels increases the amount of emitted light while minimizing wastage. For consumers, this means a much brighter display.

Dual-layer OLED as a throttling savior

Tandem OLED can also be a benefit for the iPhone by making it more capable outdoors. Instant Digital’s post discusses how the iPhone 17 Pro didn’t do a great job of maintaining brightness outdoors.

Part of the problem is thermal management, as the display contributes to the iPhone heating up and eventually throttling under hot conditions.

To the leaker, a dual-layer OLED approach would be beneficial because it would allow a much brighter display while generating less heat. Achieving this would make the iPhone better to use in sunlight, without fear of throttling.

As for the iPhone 18 Pro, Apple is still in the process of determining its display panel suppliers. Usual suppliers Samsung Display and LG Display are the frontrunners, but BOE isn’t performing well enough to supply panels for the premium models.

Weibo leakers, with Instant Digital among the most prominent, are not usually considered highly accurate, but we cannot dismiss the rumor outright as just an obvious prediction. Others have discussed the idea in the past, and their timetables line up with this rumor.

Back in August 2025, there were claims that LG Display was working on a tandem OLED technology for use in a future iPhone. At the time, a source said that the display could arrive in 2028, far too late for the iPhone 18 Pro.
