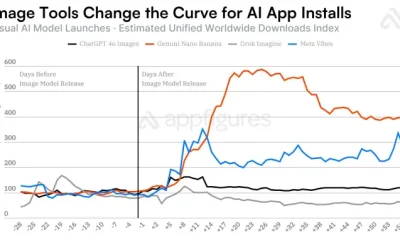

The undying thirst for smarter (historically, that means larger) AI models and greater adoption of the ones we already have has led to an explosion in data-center construction projects, unparalleled both in number and scale. Chief among them is Meta’s planned 5-gigawatt data center in Louisiana, called Hyperion, announced in June of 2025. Meta CEO Mark Zuckerberg said Hyperion will “cover a significant part of the footprint of Manhattan,” and the first phase—a 2-GW version—will be completed by 2030.

Though the project’s stated 5-GW scale is the largest among its peers, it’s just one of several dozen similar projects now underway. According to Michael Guckes, chief economist at construction-software company ConstructConnect, spending on data centers topped US $27 billion by July of 2025 and, once the full-year figures are tallied, will easily exceed $60 billion. Hyperion alone accounts for about a quarter of that.

For the engineers assigned to bring these projects to life, the mix of challenges involved represents a unique moment. The world’s largest tech companies are opening their wallets to pay for new innovations in compute, cooling, and network technology designed to operate at a scale that would’ve seemed absurd five years ago.

At the same time, the breakneck pace of building comes paired with serious problems. Modern data-center construction frequently requires an influx of temporary workers and sharply increases noise, traffic, pollution, and often local electricity prices. And the environmental toll remains a concern long after facilities are built due to the unprecedented 24/7 energy demands of AI data centers which, according to one recent study, could emit the equivalent of tens of millions of tonnes of CO2 annually in the United States alone.

Regardless of these issues, large AI companies, and the engineers they hire, are going full steam ahead on giant data-center construction. So, what does it really take to build an unprecedentedly large data center?

AI Rewrites Building Design

The stereotypical data-center building rests on a reinforced concrete slab foundation. That’s paired with a steel skeleton and poured concrete wall panels. The finished building is called a “shell,” a term that implies the structure itself is a secondary concern. Meta has even used gigantic tents to throw up temporary data centers.

Still, the scale of the largest AI data centers brings unique challenges. “The biggest challenge is often what’s under the surface. Unstable, corrosive, or expansive soils can lead to delays and require serious intervention,” says Robert Haley, vice president at construction consulting firm Jacobs. Amanda Carter, a senior technical lead at Stantec, says a soil’s thermal conductivity is also important, as most electrical infrastructure is placed underground. “If the soil has high thermal resistivity, it’s going to be difficult to dissipate [heat].” Engineers may take hundreds or thousands of soil samples before construction can begin.

GPUs

Modern AI data centers often use rack-scale systems, such as the Nvidia GB200 NVL72, which occupy a single data-center rack. Each rack contains 72 GPUs, 36 CPUs, and up to 13.4 terabytes of GPU memory. The racks measure over 2.2 meters tall and weigh over one and a half tonnes, forcing AI data centers to use thicker concrete with more reinforcement to bear the load.

A single GB200 rack can use up to 120 kilowatts. If Hyperion meets its 5-gigawatt goals, the data-center campus could include over 41,000 rack-scale systems, for a total of roughly 3 million GPUs. The final number of GPUs used by Hyperion is likely to be less than that, though only because future GPUs will be larger, more capable, and use more power.
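The rack and GPU totals follow from simple division. The sketch below is a back-of-envelope check, assuming the entire 5-GW budget feeds GB200 racks at their 120-kW peak (in practice, cooling and other overhead would claim a share); the function and variable names are illustrative.

```python
# Back-of-envelope rack and GPU counts for a power-limited campus.
# Assumption: the whole power budget feeds racks at peak draw; cooling,
# networking, and other facility overhead are ignored.

CAMPUS_POWER_W = 5_000_000_000  # Hyperion's stated 5-GW goal
RACK_POWER_W = 120_000          # peak draw of one GB200 NVL72 rack
GPUS_PER_RACK = 72


def rack_and_gpu_counts(campus_w: int, rack_w: int, gpus_per_rack: int) -> tuple[int, int]:
    """Return (whole racks that fit the power budget, GPUs in those racks)."""
    racks = campus_w // rack_w
    return racks, racks * gpus_per_rack


racks, gpus = rack_and_gpu_counts(CAMPUS_POWER_W, RACK_POWER_W, GPUS_PER_RACK)
print(f"{racks:,} racks, {gpus:,} GPUs")
```

Under these assumptions the campus tops out at a little under 41,700 racks and almost exactly 3 million GPUs.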

Money

According to ConstructConnect, spending on data centers neared US $27 billion through July of 2025 and, according to the latest data, will tally close to $60 billion through the end of the year. Meta’s Hyperion project is a big slice of the pie, at $10 billion.

Data-center spending has become an important prop for the construction industry, which is seeing reduced demand in other areas, such as residential construction and public infrastructure. ConstructConnect’s third-quarter 2025 financial report stated that the quarter’s decline “would have been far more severe without an $11 billion surge in data center starts.”

There’s apparently no shortage of eligible sites, however, as both the number of data centers under construction and the money spent on them have skyrocketed. The spending has allowed companies building data centers to throw out the rule book. Prior to the AI boom, most data centers relied on tried-and-true designs that prioritized inexpensive and efficient construction. Big tech’s willingness to spend has shifted the focus to speed and scale.

The loose purse strings open the door to larger and more robust prefabricated concrete wall and floor panels. Doug Bevier, director of development at Clark Pacific, says some concrete floor panels may now span up to 23 meters and need to handle floor loads up to 3,000 kilograms per square meter, which is more than twice the load international building codes normally define for manufacturing and industry. In some cases, the concrete panels must be custom-made for a project, an expensive step that the economics of pre-AI data centers rarely justified.

The time scale for projects is compressed, too: Jamie McGrath, senior vice president of data-center operations at Crusoe, says the company is delivering projects in “about 12 months,” compared with 30 to 36 months before. Not all projects are proceeding at that pace, but speed is universally a priority.

That makes it difficult to coordinate the labor and materials required. Meta’s Hyperion site, located in rural Richland Parish, Louisiana, is emblematic of this challenge. As reported by NOLA.com, at least 5,000 temporary workers have flocked to the area, which has only about 20,000 permanent residents. These workers earn above-average wages and bring a short-term boost for some local businesses, such as restaurants and convenience stores. However, they have also spurred complaints from residents about traffic and construction noise and pollution.

This friction with residents includes not only these obvious impacts, but also things you might not immediately suspect, such as light pollution caused by around-the-clock schedules. Also significant are changes to local water tables and runoff, which can reduce water quality for neighbors who rely on well water. These issues have motivated a few U.S. cities to enact data-center bans.

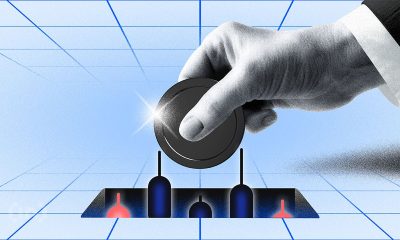

Data Centers Often Go BYOP (bring your own power)

Meta’s Richland Parish site also highlights a problem that’s priority No. 1 for both AI data centers and their critics: power.

Data centers have always drawn large amounts of power, which nudged data-center construction to cluster in hubs where local utilities were responsive to their demands. Virginia’s electric utility, Dominion Energy, met demand with agreements to build new infrastructure, often with a focus on renewable energy.

The power demands of the largest AI data centers, though, have caught even the most responsive utilities off guard. A report from the Lawrence Berkeley National Laboratory, in California, estimated the entire U.S. data-center industry consumed an average load of roughly 8 GW of power in 2014. Today, the largest AI data-center campuses are built to handle up to a gigawatt each, and Meta’s Hyperion is projected to require 5 GW.

“Data centers are exacerbating issues for a lot of utilities,” says Abbe Ramanan, project director at the Clean Energy Group, a Vermont-based nonprofit.

Ramanan explains that utilities often use “peaker plants” to cope with extra demand. They’re usually older, less efficient fossil-fuel plants which, because of their high cost to operate and carbon output, were due for retirement. But Ramanan says increased electricity demand has kept them in service.

Meta secured power for Hyperion by negotiating with Entergy, Louisiana’s electric utility, for construction of three new gas-turbine power plants. Two will be located near the Richland Parish site, while a third will be located in southeast Louisiana.

Entergy frames the new plants as a win for the state. “A core pillar of Entergy and Meta’s agreement is that Meta pays for the full cost of the utility infrastructure,” says Daniel Kline, director of power-delivery planning and policy at Entergy. The utility expects that “customer bills will be lower than they otherwise would have been.” That would prove an exception, as a recent report from Bloomberg found electricity rates in regions with data centers are more likely to increase than in regions without.

CO2

Research published in Nature in 2025 projects that data-center emissions will range from 24 million to 44 million CO2-equivalent metric tonnes annually through 2030 in the United States alone. While some materials used in data centers, such as concrete, lead to significant emissions, the majority of these emissions will result from the high energy demands of AI servers.

Estimating the carbon emissions of Hyperion is difficult, as the project won’t be completed until 2030. Assuming that the three new natural gas plants that are planned for construction as part of the project produce emissions typical for their type, however, the plants could lead to full life-cycle emissions of between 4 million and 10 million metric tons of CO2 annually—roughly equivalent to the annual emissions of a country like Latvia.
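One way to see how a range of this size can arise is to multiply generating capacity by hours of operation and an emission intensity. The sketch below is not the method behind the figures above. It assumes the plants' combined 2.26 GW of capacity, and the capacity factors (0.5 and 0.8) and life-cycle intensities (410 and 650 grams of CO2-equivalent per kilowatt-hour, within the range commonly cited for combined-cycle gas plants) are illustrative assumptions.

```python
# Rough annual life-cycle CO2e estimate for gas-fired generation.
# Assumptions (not from the article): the capacity factors and the
# emission intensities used below are illustrative placeholder values.

HOURS_PER_YEAR = 8760
CAPACITY_KW = 2.26e6  # combined 2.26 GW of planned capacity


def annual_emissions_mt(capacity_kw, capacity_factor, grams_co2e_per_kwh):
    """Return annual emissions in millions of tonnes of CO2e."""
    energy_kwh = capacity_kw * HOURS_PER_YEAR * capacity_factor
    grams = energy_kwh * grams_co2e_per_kwh
    return grams / 1e12  # 1e6 grams per tonne, 1e6 tonnes per million tonnes


low = annual_emissions_mt(CAPACITY_KW, capacity_factor=0.5, grams_co2e_per_kwh=410)
high = annual_emissions_mt(CAPACITY_KW, capacity_factor=0.8, grams_co2e_per_kwh=650)
print(f"{low:.1f} to {high:.1f} million tonnes CO2e per year")
```

With these placeholder inputs the estimate spans roughly 4 million to 10 million tonnes a year, which shows how strongly the result depends on how often the plants run.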

Concrete

Data centers are typically built from concrete, with steel used as a skeleton to reinforce and shape the concrete shell. While the foundation is often poured concrete, the walls and floors are most often built from prefabricated concrete panels that can span up to 23 meters. Floors use a reinforced T-shape, similar to a steel girder, measuring up to 1.2 meters across at its thickest point. The largest data centers include hundreds of these concrete panels.

The American Cement Association projects that the current surge in building will require 1 million tonnes of cement over the next three years, though that’s still a tiny fraction of the overall cement industry, which weighed in at roughly 103 million tonnes in 2024.

The plants, which will generate a combined 2.26 GW, will use combined-cycle gas turbines that recapture waste heat from exhaust. This boosts thermal efficiency to 60 percent and beyond, meaning more fuel is converted to useful energy. Simple-cycle turbines, by contrast, vent the exhaust, which lowers efficiency to around 40 percent.
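The efficiency gap translates directly into fuel burned. The sketch below is a minimal illustration that treats the 60 percent and 40 percent figures as fixed thermal efficiencies (real plants vary with load and ambient conditions); the function name is hypothetical.

```python
# Fuel energy needed per unit of electricity at a given thermal efficiency.
# Assumption: efficiency is constant, which real turbines only approximate.


def fuel_energy_per_mwh(thermal_efficiency: float) -> float:
    """Return MWh of fuel energy burned to deliver 1 MWh of electricity."""
    return 1.0 / thermal_efficiency


combined_cycle = fuel_energy_per_mwh(0.60)
simple_cycle = fuel_energy_per_mwh(0.40)
extra_fuel = simple_cycle / combined_cycle - 1.0

print(f"combined cycle: {combined_cycle:.2f} MWh of fuel per MWh of electricity")
print(f"simple cycle:   {simple_cycle:.2f} MWh of fuel per MWh of electricity")
print(f"simple cycle burns {extra_fuel:.0%} more fuel for the same output")
```

At these efficiencies a simple-cycle turbine burns about half again as much fuel as a combined-cycle plant to deliver the same electricity.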

Even so, total life-cycle emissions for the Hyperion plants could range from 4 million to over 10 million tonnes of CO2 each year, depending on how frequently the plants are put in use and the final efficiency benchmarks once built. On the high end, that’s as much CO2 as produced by over 2 million passenger cars. Fortunately, not all of Meta’s data centers take the same approach to power. The company has announced a plan to power Prometheus, a large data-center project in Ohio scheduled to come online before the end of 2026, with nuclear energy.

But other big tech companies, spurred by the need to build data centers quickly, are taking a less efficient approach.

xAI’s Colossus 2, located in Memphis, is the most extreme example. The company trucked in dozens of temporary gas-turbine generators to power the site, which is located in a suburban neighborhood. OpenAI, meanwhile, has gas turbines capable of generating up to 300 megawatts at its new Stargate data center in Abilene, Texas, slated to open later in 2026. Both use simple-cycle turbines with a much lower efficiency rating than the combined-cycle plants Entergy will build to power Hyperion.

Demand for gas turbines is so intense, in fact, that wait times for new turbines are up to seven years. Some data centers are turning toward refurbished jet engines to obtain the turbines they need.

AI Racks Tip the Scales

The demand for new, reliable power is driven by the power-hungry GPUs inside modern AI data centers.

In January of 2025, Mark Zuckerberg announced in a post on Facebook that Meta planned to end 2025 with at least 1.3 million GPUs in service. OpenAI’s Stargate data center plans to use over 450,000 Nvidia GB200 GPUs, and xAI’s Colossus 2, an expansion of Colossus, is built to accommodate over 550,000 GPUs.

GPUs, which remain by far the most popular processors for AI workloads, are bundled into human-scale monoliths of steel and silicon which, much like the data centers built to house them, are rapidly growing in weight, complexity, and power consumption.

Memory

In addition to raw compute performance, AI workloads demand huge amounts of memory. An Nvidia GB200 NVL72 rack may include up to 13.4 terabytes of high-bandwidth memory, which implies a data-center campus at Hyperion’s scale will require at least several dozen petabytes.
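A rough upper bound on campus-wide memory can be sketched by multiplying the per-rack figure by the number of racks a 5-GW budget could feed. This assumes every rack is a GB200 NVL72 drawing 120 kilowatts and carrying the full 13.4 terabytes, which the eventual hardware mix may not match.

```python
# Rough upper bound on high-bandwidth memory across a 5-GW campus.
# Assumptions: every rack is a 120-kW GB200 NVL72 with 13.4 TB of HBM,
# and the full power budget goes to racks.

CAMPUS_POWER_W = 5_000_000_000
RACK_POWER_W = 120_000
HBM_PER_RACK_TB = 13.4

racks = CAMPUS_POWER_W // RACK_POWER_W
total_hbm_pb = racks * HBM_PER_RACK_TB / 1000  # decimal units: 1 PB = 1,000 TB

print(f"{racks:,} racks -> about {total_hbm_pb:,.0f} PB of high-bandwidth memory")
```

Under these assumptions the total lands in the hundreds of petabytes, comfortably above the "several dozen" floor.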

The immense demand has sent memory prices soaring: The price of DRAM, specifically DDR5, has increased 172 percent in 2025.

Power

Hyperion is expected to use 5 gigawatts of power across 11 buildings, which works out to roughly 455 megawatts per building, assuming each will be similar to its siblings. The full 5 gigawatts is enough to power roughly 4.2 million U.S. homes.
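These figures can be checked with a few lines of arithmetic. The sketch below assumes power is split evenly across the 11 buildings and derives the average household draw implied by the 4.2-million-home comparison; the variable names are illustrative.

```python
# Per-building power and the household draw implied by the homes comparison.
# Assumption: the 5 GW is split evenly across all 11 buildings.

CAMPUS_POWER_MW = 5000
BUILDINGS = 11
HOMES_POWERED = 4_200_000

per_building_mw = CAMPUS_POWER_MW / BUILDINGS
implied_home_kw = CAMPUS_POWER_MW * 1000 / HOMES_POWERED

print(f"{per_building_mw:.1f} MW per building")
print(f"{implied_home_kw:.2f} kW average draw per home")
```

The implied average of about 1.2 kilowatts per home matches the comparison of a 120-kilowatt rack to around 100 U.S. homes.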

Just one Hyperion data center built at the Richland Parish site will consume twice as much power as xAI’s Colossus which, at the time of its completion in the summer of 2024, was among the largest data centers yet built.

Nvidia’s GB200 NVL72—a rack-scale system—is currently a leading choice for AI data centers. A single GB200 rack contains 72 GPUs, 36 CPUs, and up to 17 terabytes of memory. It measures 2.2 meters tall, tips the scales at up to 1,553 kilograms, and consumes about 120 kilowatts—as much as around 100 U.S. homes. And this, according to Nvidia, is just the beginning. The company anticipates future racks could consume up to a megawatt each.

Viktor Petik, senior vice president of infrastructure solutions at Vertiv, says the rapid change in rack-scale AI systems has forced data centers to adapt. “AI racks consume far more power and weigh more than their predecessors,” says Petik. He adds that data centers must supply racks with multiple power feeds, without taking up extra space.

The new power demands from rack-scale systems have consequences that are reflected in the design of the data center—even its footprint.

In 2022 Meta broke ground on a new data center at a campus in Temple, Texas. According to SemiAnalysis, which studies AI data centers, construction began with the intent to build the data center in an H-shaped configuration common to other Meta data centers.

Land

Meta CEO Mark Zuckerberg kicked off the buzz around Hyperion by saying it would cover a large chunk of Manhattan. Many took that to mean Hyperion would be a single building of that size, which isn’t correct. Hyperion will actually be a cluster of data centers—11 are currently planned—with over 370,000 square meters of floor space. That’s a lot smaller even than New York City’s Central Park, which covers 6 percent of Manhattan.

Meta has room to grow, however. The Richland Parish site spans 14.7 million square meters in total, which is about a quarter the area of Manhattan. And the 370,000 square meters of floor space Hyperion is expected to provide doesn’t include external infrastructure, such as the three new combined-cycle gas power plants Louisiana utility Entergy is building to power the project.
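The area comparisons can be cross-checked using only the figures quoted here. The sketch below derives Manhattan's area from the "about a quarter" claim rather than from an independent source, so its outputs are approximate.

```python
# Cross-check of the land comparisons using only figures quoted in the text.
# Assumption: Manhattan's area is inferred from "site = about a quarter of
# Manhattan", so every derived value is approximate.

FLOOR_SPACE_M2 = 370_000
SITE_M2 = 14_700_000
SITE_SHARE_OF_MANHATTAN = 0.25
CENTRAL_PARK_SHARE_OF_MANHATTAN = 0.06

manhattan_m2 = SITE_M2 / SITE_SHARE_OF_MANHATTAN
central_park_m2 = manhattan_m2 * CENTRAL_PARK_SHARE_OF_MANHATTAN

floor_vs_site = FLOOR_SPACE_M2 / SITE_M2
floor_vs_park = FLOOR_SPACE_M2 / central_park_m2

print(f"Floor space is {floor_vs_site:.1%} of the site")
print(f"Floor space is {floor_vs_park:.1%} of Central Park")
```

By this estimate the planned floor space covers only a few percent of the site and about a tenth of Central Park's area.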

Construction was paused midway in December of 2022, however, as part of a company-wide review of its data-center infrastructure. Meta decided to knock down the structure it had built and start from scratch. The reasons for this decision were never made public, but analysts believe it was due to the old design’s inability to deliver sufficient electricity to new, power-hungry AI racks. Construction resumed in 2023.

Meta’s replacement ditches the H-shaped building for simple, long, rectangular structures, each flanked by rows of gas-turbine generators. While Meta’s plans are subject to change, Hyperion is currently expected to comprise 11 rectangular data centers, each packed with hundreds of thousands of GPUs, spread across the 13.6-square-kilometer Richland Parish campus.

Cooling, and Connecting, at Scale

Nvidia’s ultradense AI GPU racks are changing data centers not only with their weight and power draw, but also with their intense cooling and bandwidth requirements.

Data centers traditionally use air cooling, but that approach has reached its limits. “Air as a cooling medium is inherently inferior,” says Poh Seng Lee, head of CoolestLAB, a cooling research group at the National University of Singapore.

Instead, going forward, GPUs will rely on liquid cooling. However, that adds a new layer of complexity. “It’s all the way to the facilities level,” says Lee. “You need pumps, which we call a coolant distribution unit. The CDU will be connected to racks using an elaborate piping network. And it needs to be designed for redundancy.” On the rack, pipes connect to cold plates mounted atop every GPU; outside the data-center shell, pipes route through evaporative cooling units. Lee says retrofitting an air-cooled data center is possible but expensive.

The networking used by AI data centers is also changing to cope with new requirements. Traditional data centers were positioned near network hubs for easy access to the global internet. AI data centers, though, are more concerned with networks of GPUs.

These connections must sustain high bandwidth with impeccable reliability. Mark Bieberich, a vice president at network infrastructure company Ciena, says its latest fiber-optic transceiver technology, WaveLogic 6, can provide up to 1.6 terabits per second of bandwidth per wavelength. A single fiber can support 48 wavelengths in total, and Ciena’s largest customers have hundreds of fiber pairs, placing total bandwidth in the thousands of terabits per second.
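The jump from a single wavelength to thousands of terabits per second is a matter of multiplication. The sketch below treats the 1.6 figure as terabits per second per wavelength and assumes each fiber pair carries one fully loaded fiber in each direction; the fiber-pair counts are hypothetical examples, not customer figures.

```python
# Aggregate per-direction capacity of a fiber route.
# Assumptions: 1.6 Tb/s per wavelength, all 48 wavelengths lit on every
# fiber, and one fiber per direction in each pair. Pair counts are examples.

TBPS_PER_WAVELENGTH = 1.6
WAVELENGTHS_PER_FIBER = 48

per_fiber_tbps = TBPS_PER_WAVELENGTH * WAVELENGTHS_PER_FIBER


def total_capacity_tbps(fiber_pairs: int) -> float:
    """Return total one-way capacity in Tb/s for the given number of pairs."""
    return fiber_pairs * per_fiber_tbps


for pairs in (100, 300):
    print(f"{pairs} fiber pairs -> {total_capacity_tbps(pairs):,.0f} Tb/s per direction")
```

A fully lit fiber carries just under 77 terabits per second under these assumptions, so a hundred pairs is already enough to reach thousands of terabits per second.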

This is a point where the scale of Meta’s Hyperion, and other large AI data centers, can be deceptive. It seems to imply the physical size of a single data center is what matters. But rather than being a single building, Hyperion is actually a set of buildings connected by high-speed fiber optics.

“Interconnecting data centers is absolutely essential,” says Bieberich. “You could think about it as one logical AI training facility, but with geographically distributed facilities.” Nvidia has taken to calling this “scale across,” to contrast it with the idea that data centers must “scale up” to larger singular buildings.

The Big but Hazy Future

The full scale of the challenges that face Hyperion, and other future AI data centers of similar scale, remains hazy. Nvidia has yet to introduce the rack-scale AI GPU systems these facilities will host. How much power will they demand? What type of cooling will they require? How much bandwidth must be provided? These can only be estimated.

In the absence of details, the gravity of AI data-center design is pulled toward one certainty: It must be big. New data-center designers are rewriting their rule book to handle power, cooling, and network infrastructure at a scale that would’ve seemed ridiculous five years ago.

This innovation is fueled by big tech’s fat wallet, which shelled out tens of billions of dollars in 2025 alone, leading to questions about whether the spending is sustainable. For the engineers in the trenches of data-center design, though, it’s viewed as an opportunity to make the impossible possible.

“I tell my engineers, this is peak. We’re being engineers. We’re being asked complicated questions,” says Stantec’s Carter. “We haven’t got to do that in a long time.”

This article appears in the April 2026 print issue.
