Not everyone wants to rule the world, but lately it does seem as if everyone wants to warn us that the world might be ending.

Tech

Who gets to warn us the world is ending?

On Tuesday, the Bulletin of the Atomic Scientists unveiled its annual resetting of the Doomsday Clock, which is meant to visually represent how close the organization’s experts believe the world is to ending. Reflecting a cavalcade of existential risks ranging from worsening nuclear tensions to climate change to the rise of autocracy, the hands were set to 85 seconds to midnight, four seconds closer than in 2025 and the closest the clock has ever been to striking 12.

The day before, Anthropic CEO Dario Amodei — who may as well be the field of artificial intelligence’s philosopher-king — published a 19,000-word essay entitled “The Adolescence of Technology.” His takeaway: “Humanity is about to be handed almost unimaginable power, and it is deeply unclear whether our social, political and technological systems possess the maturity to wield it.”

Should we fail this “serious civilizational challenge,” as Amodei put it, the world might well be headed for the pitch black of midnight. (Disclosure: Future Perfect is funded in part by the BEMC Foundation, whose major funder was also an early investor in Anthropic; they don’t have any editorial input into our content.)

As I’ve said before, it’s boom times for doom times. But examining these two very different attempts at communicating existential risk — one very much a product of the mid-20th century, the other of our own uncertain moment — presents a question. Who should we listen to? The prophets shouting outside the gates? Or the high priest who also runs the temple?

The Doomsday Clock has been with us so long (it was created in 1947, just two years after the first atomic bomb used in war incinerated Hiroshima) that it’s easy to forget how radical it was. Not just the Clock itself, which may be one of the most iconic and effective symbols of the 20th century, but the people who made it.

The Bulletin of the Atomic Scientists was founded immediately after the war by scientists like J. Robert Oppenheimer — the very men and women who had created the bomb they now feared. That lent an unparalleled moral clarity to their warnings. At a moment of uniquely high levels of institutional trust, here were people who knew more about the workings of the bomb than anyone else, desperately telling the public that we were on a path to nuclear annihilation.

The Bulletin scientists had the benefit of reality on their side. No one, after Hiroshima and Nagasaki, could doubt the awful power of these bombs. As my colleague Josh Keating wrote earlier this week, by the late 1950s there were dozens of nuclear tests being conducted around the world each year. That nuclear weapons, especially at that moment, presented a clear and unprecedented existential risk was essentially inarguable, even by the politicians and generals building up those arsenals.

But the very thing that gave the Bulletin scientists their moral credibility — their willingness to break with the government they once served — cost them the one thing needed to end those risks: power.

As striking as the Doomsday Clock remains as a symbol, it is essentially a communication device wielded by people who have no say over the things they’re measuring. It’s prophetic speech without executive authority. When the Bulletin, as it did on Tuesday, warns that the New START treaty is expiring or that nuclear powers are modernizing their arsenals, it can’t actually do anything about it except hope policymakers — and the public — listen.

And the more diffuse those warnings become, the harder it is to be heard.

Since the end of the Cold War took nuclear war off the agenda (temporarily, at least), the calculations behind the Doomsday Clock have grown to encompass climate change, biosecurity, the degradation of US public health infrastructure, new technological risks like “mirror life,” artificial intelligence, and autocracy. All of these challenges are real, and each in its own way threatens to make life on this planet worse. But mixed together, they muddy the terrifying precision that the Clock promised. What once seemed like clockwork is revealed as guesswork, just one more warning among countless others.

Even more than most AI leaders, Amodei has frequently been compared to Oppenheimer.

Amodei, too, was a physicist and a scientist first. He did important work on the “scaling laws” that helped unlock powerful artificial intelligence, just as Oppenheimer did critical research that helped blaze the trail to the bomb. Like Oppenheimer, whose real talent lay in the organizational abilities required to run the Manhattan Project, Amodei has proven to be a highly capable corporate leader.

And like Oppenheimer — after the war at least — Amodei hasn’t been shy about using his public position to warn in no uncertain terms about the technology he helped create. Had Oppenheimer had access to modern blogging tools, I guarantee you he would have produced something like “The Adolescence of Technology,” albeit with a bit more Sanskrit.


The difference between these figures is one of control. Oppenheimer and his fellow scientists lost control of their creation to the government and the military almost immediately, and by 1954 Oppenheimer himself had lost his security clearance. From then on, he and his colleagues would largely be voices on the outside.

Amodei, by contrast, speaks as the CEO of Anthropic, the AI company that at the moment is perhaps doing more than any other to push AI to its limits. When he spins transformative visions of AI as potentially “a country of geniuses in a datacenter,” or runs through scenarios of catastrophe ranging from AI-created bioweapons to technologically enabled mass unemployment and wealth concentration, he is speaking from within the temple of power.

It’s almost as if the strategists setting nuclear war plans were also fiddling with the hands on the Doomsday Clock. (I say “almost” because of a key distinction — while nuclear weapons promised only destruction, AI promises great benefits and terrible risks alike. Which is perhaps why you need 19,000 words to work out your thoughts about it.)

All of which leaves the question of whether the fact that Amodei has such power to influence the direction of AI gives his warnings more credibility than those on the outside, like the Bulletin scientists — or less.

The Bulletin’s model has integrity to spare, but increasingly limited relevance, especially to AI. The atomic scientists lost control of nuclear weapons the moment they worked. Amodei hasn’t lost control of AI — his company’s release decisions still matter enormously. That makes the Bulletin’s outsider position less applicable. You can’t effectively warn about AI risks from a position of pure independence because the people with the best technical insight are largely inside the companies building it.

But Amodei’s model has its own problem: The conflict of interest is structural and inescapable.

Every warning he issues comes packaged with “but we should definitely keep building.” His essay explicitly argues that stopping or substantially slowing AI development is “fundamentally untenable” — that if Anthropic doesn’t build powerful AI, someone worse will. That may be true. It may even be the best argument for why safety-conscious companies should stay in the race. But it’s also, conveniently, the argument that lets him keep doing what he’s doing, with all the immense benefits that may bring.

This is the trap Amodei himself describes: “There is so much money to be made with AI — literally trillions of dollars per year — that even the simplest measures are finding it difficult to overcome the political economy inherent in AI.”

The Doomsday Clock was designed for a world where scientists could step outside the institutions that created existential threats and speak with independent authority. We may no longer live in that world. The question is what we build to replace it — and how much time we have left to do so.


Thinking Of Converting A Shipping Container Into A Garage? Here’s What To Know First

Shipping containers are the workhorses of ocean freight, carrying goods on cargo ships across the Atlantic and beyond. But while they’re built to hold a wide variety of goods and supplies, some people also use them for personal storage. If you’re one of them and you’re thinking about converting a shipping container into a garage, there are some things you should know first.

Shipping containers are durable and weather resistant, so your vehicle will be well protected from the elements. But a container is only about eight feet wide, which can be a tight fit for bigger cars. Containers are customizable, so you can add doors, windows, and even insulation. But if you use one as-is, you’ll have to contend with poor ventilation due to its airtight construction. You’ll also have to deal with temperature swings, because the steel box heats up in the summer and gets cold in the winter.

Shipping containers can be pricey, and once you start modifying one, costs only go up from there. However, a container can be cheaper than building a garage from the ground up, and because it’s portable, you can move it later if you need to. Additionally, once it’s in place, you can have it ready in little time, depending on your plans. Of course, unless you can haul it and set it up yourself, you’ll likely have to pay even more for a service to do it for you.

Understanding the laws regarding shipping container use

While there are pros and cons to using a shipping container as a garage, there are also some legalities you should know. First off, whether you’re allowed to use a container at all is determined by your local zoning laws. These laws control how property can be used, and in some places it’s illegal to build even a shed without a permit. So you may need a permit before converting, or even placing, a container on your land.

But even if you meet the zoning requirements in your area, you may not be able to move forward yet. That’s because you may still need approval from your local building department, which can use building codes to check that the container is structurally sound and placed on a strong foundation. Then there’s the question of how the container is classified, and whether it meets your local government’s requirements for use as a garage. Finally, if you get all the paperwork in order and have the green light to move ahead, check with your homeowners association. It may have guidelines that are stricter than what local law allows.

If you hit a roadblock, it’s not a good idea to go around the system. Setting up a container without proper approval can lead to fines. You may be ordered to stop work, or you may be forced to remove the container altogether.


5 Handy Uses For Smart Sensors You Probably Didn’t Think Of


A smart motion sensor typically detects the presence or movement of a person, while a smart switch is most often used to turn lights on or off. These devices are already useful just as they were designed, but did you know that you can actually program them to let you accomplish other useful things?

With a little creativity, you can use a smart sensor to warn you if you’re forgetting something, to catch if something goes wrong before it becomes a major problem, or to just generally make your life easier. Most of the features also do not require complex programming — you can either install the smart sensor directly where needed, or, at worst, add a timer via your preferred home app and keep notifications on.

Let’s look at a few handy uses for smart sensors that you probably haven’t considered yet. You might be surprised what these things can accomplish with a little bit of ingenuity.

Warn you if you left a door or window open

Contact sensors are pretty simple smart devices: their primary purpose is to warn you when their two pieces are no longer in contact. They’re most commonly used on doors and windows, where you can program them to send a notification to your phone if you leave the house with a window or door still open. There are also several creative uses for contact sensors, including automatically turning on lights and running a home routine.

Though these sensors are typically attached to doors and windows, that doesn’t mean they’re limited to entry points. If you often second-guess whether you’ve properly closed your fridge door, especially when you’re away on vacation for a couple of days, attaching a contact sensor to it will help you avoid that worry. You can then set the sensor to warn you if the door stays open for too long. If you still want to double-check, just open the app on your phone and look at the device’s status.
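Most home apps let you set up this kind of alert without writing any code, but the logic behind an “open too long” warning is simple enough to sketch. The Python below is a minimal illustration, not any particular app’s API: the ContactEvent record, the open_too_long function, and the two-minute default limit are all hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class ContactEvent:
    timestamp: float  # seconds since an arbitrary start time (hypothetical format)
    is_open: bool     # True when the sensor's two halves separate


def open_too_long(events: Iterable[ContactEvent], now: float,
                  limit_seconds: float = 120) -> bool:
    """Return True if the door is open at `now` and has been for more than limit_seconds."""
    opened_at = None  # start of the current "open" period, if any
    for event in sorted(events, key=lambda e: e.timestamp):
        if event.timestamp > now:
            break  # ignore events that haven't happened yet
        if event.is_open:
            if opened_at is None:
                opened_at = event.timestamp  # door just opened
        else:
            opened_at = None  # door closed, so reset the timer
    return opened_at is not None and now - opened_at > limit_seconds


events = [ContactEvent(0, True), ContactEvent(30, False), ContactEvent(100, True)]
print(open_too_long(events, now=200))  # False: open for only 100 seconds
print(open_too_long(events, now=300))  # True: open for 200 seconds
```

Resetting the timer on every close means a door that is opened and shut repeatedly never triggers the alert; only one continuous open period counts.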

You can also install a sensor on a cupboard or cabinet to keep tabs on what’s inside. For example, if you keep chocolates in your pantry and don’t want your kids raiding your sweets without your knowledge, you can set the sensor to alert you when the cupboard door opens, then check your smart security camera to see who the culprit is.

Get notifications for mail or packages

Smart mailbox alarms exist to ensure you don’t miss letters or packages that arrive while you’re asleep or away from home. After all, we rarely receive snail mail these days, but the letters that do come are often important, like a jury duty summons or a tax refund check. These sensors work much like a motion sensor, in that they detect movement inside your mailbox.

Because of this, you do not need to purchase a smart mailbox alarm — if you already have an extra motion sensor at home, you can install it in your mailbox and set it to alert you if it detects movement. However, this might not work for larger packages that won’t fit in your mailbox. So, if you want to get alerted when an Amazon package arrives at your doorstep, consider getting a Ring camera.

This smart doorbell camera can tell you when someone’s at your door, but you can also program it to notify you if it detects movement on your porch. This means it can double as a security camera while simultaneously notifying you if someone leaves a package at your doorstep.

Control the bathroom exhaust fan automatically

Smart switches are often used for remotely turning on devices using voice commands. But because you can control them with an app, you can make them do a lot of other clever things beyond switching on or off on your command. One thing you can do is program your bathroom exhaust fan to work automatically based on which light you turn on.

A common complaint about bathroom exhaust fans is that they run at the wrong times. Many are wired to turn on with the bathroom lights, which can cause a cold draft while you’re showering. And when you switch off the light after showering, the fan shuts off too, leaving excess moisture trapped in the bathroom.

If you use smart switches to control the lights and the exhaust fan in your bathroom, you can program them so that you never have to think about the fan. For example, you can set the exhaust fan to turn on automatically when you switch on the light for your toilet area to keep it fresh. For your shower, you can instead have the exhaust fan switch turn on a few minutes after you turn off the shower light. That way, you don’t get chilly while you’re bathing, and the fan still clears out the moisture after you leave the bathroom.
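The two rules above amount to a small schedule. Here is a minimal Python sketch of that logic, assuming two separately switched lights named “toilet” and “shower”; the fan_commands function, the two-minute delay, and the 20-minute run time are hypothetical choices for illustration, not settings from any specific smart switch app.

```python
from typing import List, Tuple

Command = Tuple[int, str]  # (minute when the fan command fires, "on" or "off")


def fan_commands(light: str, light_on: bool, minute: int,
                 shower_delay: int = 2, shower_run: int = 20) -> List[Command]:
    """Translate one light-switch event into exhaust fan commands.

    `light` is "toilet" or "shower" (hypothetical names for two light circuits).
    """
    if light == "toilet":
        # The fan follows the toilet-area light; switching it off with the light is an assumption
        return [(minute, "on" if light_on else "off")]
    if light == "shower":
        if light_on:
            return []  # leave the fan alone while showering to avoid a cold draft
        # Start the fan a few minutes after the shower light goes off,
        # then stop it after an assumed run time
        start = minute + shower_delay
        return [(start, "on"), (start + shower_run, "off")]
    raise ValueError(f"unknown light: {light!r}")


print(fan_commands("shower", light_on=False, minute=10))  # [(12, 'on'), (32, 'off')]
```

Returning timed commands rather than switching the fan directly keeps the rule separate from whatever scheduler your home app actually uses.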

Avoid extensive water damage by catching leaks early

You might think that a water leak sensor is an unnecessary smart device for your home, especially if you live in a newly constructed dwelling. But if you live in an older home or your area has freezing winters, it may be a prudent investment. A leak sensor warns you about a plumbing problem before it becomes a major issue, which makes it one of the more useful smart upgrades for a bathroom or kitchen.

As the name suggests, these devices alert you when they detect a water leak. That way, you can immediately shut off the water before it spreads across your floor. Because these devices send notifications to your phone, you get warned wherever you are in the world, as long as both your phone and the sensor are connected to the internet.

This is crucial if you’re away for an extended period. You can immediately ask a trusted person to shut off the water in your home, rather than coming home to extensive (and expensive) water damage.

Toggle perimeter and safety lights as necessary

Smart switches can be programmed to automatically turn your perimeter and safety lights on and off based on sunrise and sunset times. However, this only takes into account the position of the sun and ignores the weather. So, if you want to ensure that your lights turn on whenever the sky gets too dark, you can install a smart light sensor.

With a smart light sensor installed, you can then use it to command all the lights around your home to switch on when it gets too dark — whether through the setting of the sun or because of a snowstorm — and turn off when there’s enough light to save on electricity costs. You can even use it to automatically adjust the dimmable lights in your home, ensuring a consistent brightness level throughout the day and night.

You can also pair a smart light sensor with a smart motion detector in specific areas of your home. That way, you can program lights in common areas, like your hallway or garden, to turn on only when someone is in the area and it’s dark. You can also use the light sensor to automatically lower or raise smart blinds to help keep the temperature in your home under control or to maximize natural light.
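Combining a light sensor with a motion detector boils down to one decision per reading. This Python sketch assumes a sensor that reports brightness in lux; the should_light_be_on function and the 20 and 50 lux thresholds are hypothetical. Using two thresholds rather than one (hysteresis) is a design choice that keeps the light from flickering when the reading hovers near a single cutoff.

```python
def should_light_be_on(lux: float, motion: bool, currently_on: bool,
                       dark_lux: float = 20.0, bright_lux: float = 50.0) -> bool:
    """Decide whether a hallway or garden light should be on.

    dark_lux and bright_lux are hypothetical thresholds; tune them for your sensor.
    """
    if not motion:
        return False       # nobody in the area: keep the light off
    if lux < dark_lux:
        return True        # dark enough (sunset or a snowstorm): switch on
    if lux > bright_lux:
        return False       # plenty of daylight: switch off to save electricity
    return currently_on    # in between: keep the current state to avoid flicker


print(should_light_be_on(lux=10, motion=True, currently_on=False))  # True
print(should_light_be_on(lux=35, motion=True, currently_on=False))  # False
```

A real setup would usually keep the light on for a short timeout after motion stops; this sketch switches off immediately to keep the rule easy to follow.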


Iran-linked hackers use Cold War tricks and fake online identities to steal secrets from Apple and Microsoft users

- Charming Kitten relies on deception rather than exploiting technical software vulnerabilities

- Fake identities build trust before phishing attacks compromise sensitive user credentials

- Operations extend across Apple and Microsoft platforms, affecting diverse users globally

Iran-linked cyber operations are drawing renewed attention for relying less on advanced code and more on human manipulation to gain access to sensitive systems.

At the centre of this activity is Charming Kitten, a group associated with Iran’s security apparatus. The group has spent years targeting officials, researchers, and corporate employees.

Instead of exploiting technical vulnerabilities, operatives frequently impersonate trusted contacts, using carefully crafted messages to trick victims into revealing credentials or installing malicious software.


Cold War tactics and social engineering

These tactics echo intelligence strategies more commonly associated with Cold War espionage, where access and trust often proved more effective than technical superiority.

Fake online identities — including personas built around attractive or credible profiles — are used to establish relationships before launching phishing attacks.

This approach has enabled the group to operate across both the Apple and Microsoft ecosystems, exposing Mac and Windows users alike to compromise.

Alongside external deception campaigns, investigators have raised concerns about insider threats linked to individuals embedded within major technology firms.

A high-profile case involving members of the Ghandali family centres on allegations of trade secret theft from companies including Google.

Prosecutors claim that sensitive data related to processor security and cryptography was extracted over time and transferred outside the United States.

Ex-counterintelligence officials describe the method as a “slow, deliberate extraction” carried out by actors with training or external direction.

Rather than relying on digital exfiltration tools, some of the alleged activity involved photographing computer screens — a low-technology method designed to avoid detection by cybersecurity systems.

“The most damaging breaches often originate from within,” one expert noted, adding that trusted access can bypass even advanced defenses.

Analysts argue that these operations reflect a wider intelligence framework that combines cyber activity, human networks, and surveillance capabilities.

Former officials state that Iran has developed a layered approach that includes recruitment, online intelligence gathering, and procurement channels.

One source described Iran as “the third most sophisticated adversary,” adding that its activities were underestimated for years compared with those of larger rivals.

The same networks have also been linked to monitoring dissidents abroad, indicating that operations are not limited to economic or military objectives.

This dual focus — external competition and internal control — complicates assessments of intent and scale.

Cases such as that of Monica Witt, who allegedly provided intelligence to Iran after defecting, reinforce concerns about insider cooperation.

Staying safe from phishing and espionage requires a layered approach to digital security. Users should verify identities before sharing credentials or sensitive information.

Strong, unique passwords combined with multi-factor authentication help limit account compromise.

Also, installing reliable antivirus software protects against known threats, while maintaining an active firewall prevents unauthorized access.

In addition, trusted malware removal tools can detect and eliminate suspicious activity before it spreads.

Via MSN

Follow TechRadar on Google News and add us as a preferred source to get our expert news, reviews, and opinion in your feeds. Make sure to click the Follow button!



Copilot is ‘for entertainment purposes only,’ according to Microsoft’s terms of use

AI skeptics aren’t the only ones warning users not to unthinkingly trust models’ outputs — that’s what the AI companies say themselves in their terms of service.

Take Microsoft, which is currently focused on getting corporate customers to pay for Copilot. But it’s also been getting dinged on social media over Copilot’s terms of use, which appear to have been last updated on October 24, 2025.

“Copilot is for entertainment purposes only,” the company warned. “It can make mistakes, and it may not work as intended. Don’t rely on Copilot for important advice. Use Copilot at your own risk.”

A Microsoft spokesperson told PCMag that the company will be updating what it described as “legacy language.”

“As the product has evolved, that language is no longer reflective of how Copilot is used today and will be altered with our next update,” the spokesperson said.

Tom’s Hardware noted that Microsoft isn’t the only company using this kind of disclaimer for AI. For example, both OpenAI and xAI caution users that they should not rely on their output as “the truth” (to quote xAI) or as “a sole source of truth or factual information” (OpenAI).


How to Get Reliable Wi-Fi in Your Backyard

No one wants the tunes buffering when they have friends round for a barbecue or a stuttering podcast as they try to finish yard work. While the average router might fill your home with Wi-Fi, it doesn’t always extend to the patio or deck, much less the end of your backyard. But you can get great Wi-Fi coverage in your outdoor spaces, and I will show you the best options.

You may also want to read up on how to make your Wi-Fi faster, how to buy a router, and whether you should opt for a single router or a mesh system.


Adjust or Move Your Router

Before you think about spending any money, try adjusting or moving your wireless router. Routers send out Wi-Fi signals in a rough circle, so I always recommend placing your router in the center of your home. Moving it slightly closer to your backyard or wherever you want to extend Wi-Fi is the simplest option. Ensure it’s positioned high and in the open. You may need a longer Ethernet cable. If your router has adjustable antennas, I also strongly recommend moving them and testing the signal strength in your problem spot (this can make a surprising difference).

If you have a mesh system, try moving one of the nodes to the back windowsill of your home to extend Wi-Fi into the backyard. If you’re able, running an Ethernet cable between your main router and the node nearest your outside space for wired backhaul can also extend range and speed significantly. If you have an outbuilding, you could even consider running an armored Ethernet cable from your main router to a mesh node or access point out there.

Use Your Smartphone as a Hot Spot

If you get a decent cellular network signal on your phone in your garden and you have plenty of data, it might be worth using your phone as a hot spot, which enables other devices to piggyback on your mobile network connection. We have a full guide on how to use your smartphone as a hot spot, but it’s very easy to do. Here’s the quick version:

- On an iPhone: Open Settings, Personal Hotspot, toggle on Allow Others to Join, and set a Wi-Fi Password.

- On an Android: Open Settings, Network and Internet (or Connections on a Samsung phone), choose Hotspot and tethering, toggle Wi-Fi hotspot on, and pick a name and password.

The problem with this is that it will use up your data allowance, tie up your phone, and drain your battery fast. But it’s a good solution in a pinch.

Upgrade Your Setup

If the two options above don’t fix your Wi-Fi woes, it might be time to upgrade your hardware. We have guides to the best routers, best mesh systems, and best Wi-Fi extenders. If you’re currently using an old or ISP-provided router, simply snagging a new one could make a big difference to your range. Most routers list a rough estimate of the area they cover in square feet, but your home’s construction and other factors will affect real-world range.

Switching from a single router to a mesh system is a better upgrade if you need to extend that Wi-Fi coverage. I’m not keen on Wi-Fi extenders, but they can sometimes be the most cost-effective way to get Wi-Fi to a single trouble spot. If you recently upgraded or already have a mesh, there are still other options.

Get an Outdoor Router

Folks with a mesh system can often add an outdoor router or node easily. Outdoor routers are weatherproof and generally carry an IP rating indicating what kind of weather they can withstand. They often come with fixings to mount on an exterior wall, fence, or pole, but you must consider how to run a power cable to an outlet. The right outdoor router for you depends entirely on your mesh system.


Week in Review: Most popular stories on GeekWire for the week of March 29, 2026

Get caught up on the latest technology and startup news from the past week. Here are the most popular stories on GeekWire for the week of March 29, 2026.


Most popular stories on GeekWire

Rec Room shutting down: Once valued at $3.5B, social gaming platform finds profits elusive

Rec Room, the Seattle-based social gaming platform once valued at $3.5 billion, is shutting down on June 1, ending a decade-long run for one of the city’s most prominent startup unicorns. … Read More

Mary Jo Foley: What the heck is going on with Microsoft lately?

In her debut column for GeekWire, longtime Microsoft watcher Mary Jo Foley digs into the recent wave of reorgs, hiring freezes, and leadership shakeups in Redmond, and asks whether it’s business as usual or something bigger. … Read More

Microsoft VP’s memoir of growing up in India makes unexpected case for what matters in the age of AI

Ravi Vedula leads the data and insights organization behind Microsoft 365 and Copilot. … Read More

Report: Amazon buys 1,300 acres near Columbia River that could become a giant data center

Amazon has purchased 1,300 acres in Boardman, Ore., for a potential $12 billion “exascale” data center campus capable of housing up to 20 buildings, the Oregonian reports. … Read More

Tech Moves: C-suite exec leaves Microsoft for Alaska Airlines; Amazon leaders depart; HashiCorp CTO resigns

Lindsay-Rae McIntyre leaves Microsoft for Alaska Airlines, while HashiCorp’s co-founder leaves the company and two Amazon execs depart. … Read More

‘Let’s go!’ NASA launches humanity’s first moon voyage in nearly 54 years

Artemis 2 mission will send four astronauts around the moon during the ‘opening act’ of a new age of discovery. … Read More

Snap acquires assets from Rec Room as social gaming platform announces shutdown

Snap has acquired select assets from Rec Room Inc., with some employees joining the Spectacles hardware subsidiary, as the Seattle-based social gaming company shuts down its platform on June 1. … Read More

On Apple’s 50th, recalling the time a Microsoft engineer drove Steve Jobs so crazy he invented the iPad

David Pogue’s new book “Apple: The First 50 Years” is packed with stories about the rivalry between Apple and Microsoft. … Read More

Tech Moves: Microsoft execs depart; TerraClear, UserTesting, EchoMark and Read AI add leaders

Microsoft’s Joy Chik is retiring after 28 years, the company’s VP of energy resigns, and a slate of Seattle-area startups add leaders. … Read More

Report puts Seattle among leading global innovation cities, but it needs more premium office space

The latest edition of commercial real estate firm JLL’s Innovation Geographies report reveals that while Seattle is outpacing traditional hubs like New York and London in talent migration, a shortage of “investment-grade” real estate is creating a bottleneck for the city’s next era of tech expansion. … Read More


When And Why US Aircraft Carriers Break This Vital ‘5-Mile’ Rule

All 11 U.S. aircraft carriers observe what is called the “Five-Mile Rule,” which is rarely broken. The rule establishes a 5-nautical-mile (5.75-mile) exclusion zone around a carrier, and its purpose is essentially force protection. Aircraft carriers are huge machines that can be dangerous to get close to, and a collision with one will always end in the carrier’s favor. The zone also gives the carrier room for its near-constant flight operations, helping keep both pilots and crew safe. Essentially, the five-mile buffer serves to further protect the carrier from threats.

It’s almost unfathomable how large carriers like the USS Abraham Lincoln are: the Lincoln displaces over 100,000 tons of seawater. When moving, it can’t turn or stop on a dime, because its inertia is considerable. Getting too close means a collision can become unavoidable, so the exclusion zone is, at its core, about safety. While you might see pictures showing the Lincoln in tight formation with the vessels of its Carrier Strike Group, that’s not normal during combat and flight operations, because breaking the Five-Mile Rule is a big naval no-no … until it isn’t.

Violating the exclusion zone isn’t common, but it happens. Think of it more as a rule that can be broken than as an unwavering law, because some conditions warrant violating it. Typically, an emergency such as someone falling overboard, an unforeseen issue during combat or flight operations, or any other urgent situation might compel an aircraft carrier’s captain to chuck the exclusion zone into the drink and bring the carrier or another ship closer than normal. Everyone onboard is trained for these situations, but it’s still dangerous, since exclusion zones exist for good reasons.

The Five-Mile Rule and why it’s necessary

First and foremost, all U.S. aircraft carriers have a five-mile rule, and for the same reasons. In 2000, the USS Cole (DDG-67) was attacked by a small vessel, which caused widespread damage to its hull, killed 17 sailors, and wounded almost 40 more. Since then, the U.S. Navy has been wary of small vessels, and a five-mile buffer keeps them from getting close to a carrier; the Cole bombing proved how dangerous explosive-laden craft could be, to the point of potentially sinking an aircraft carrier. Another reason is flight operations, which are dangerous in and of themselves.

The danger is elevated when an approaching aircraft has problems with its onboard weapon systems or fuel, which can endanger surrounding ships, so the buffer offers added protection. For flight operations, the carrier must also turn into the wind, which requires a large turning radius, so its surrounding waters must be clear of other vessels. Air operations also require a bubble of airspace for recovering aircraft that are low on fuel, which the exclusion zone provides. The rule is generally broken only when combat or an emergency requires it; under normal conditions, breaching the buffer can be hazardous.

Carrier operations also involve high-powered radar and electronic warfare signals, which can disrupt the communications and electronics of nearby vessels, especially commercial and civilian ones. Keeping those vessels away limits potential damage to their navigation and communications equipment. The carrier is further protected by escorting submarines, cruisers, and guided-missile destroyers, which ensure that no vessels stray too close. This keeps everyone on or around an aircraft carrier like the Lincoln safe and secure.

Tech

5 Things You Didn’t Know Your Apple Watch Can Do In 2026

Are you using your Apple Watch to its full potential? Whether you’ve owned an Apple Watch for years, answering texts and counting steps with the small device on your wrist, or you’re new to wearing a smartwatch, there’s likely a trove of hidden features and tools that you’ve never used.

Apple currently sells three versions of its popular watch: the Series 11, the SE 3, and the Ultra 3. Each version offers different features, display sizes, and battery life, but no matter which version you own, there are probably hidden tools you aren’t using.

It’s common knowledge that you can answer texts and phone calls, tap to pay, and track your daily exercise routine, but all models of Apple Watch offer much more. Before you get too involved in picking a fun watch face and comfortable or stylish band, take the time to learn about all the functions your watch offers. After all, it’s a big investment, especially if you opted for the high-end Ultra 3. Here are some Apple Watch features that may have flown under your radar.

Sleep apnea detection

If you or a loved one snores loudly or wakes up after hours of sleep still feeling tired, you may be showing symptoms of sleep apnea, a disorder that causes pauses in your breathing while you’re asleep. It affects about 30 million Americans, and the risk increases as you age. Untreated sleep apnea can lead to other issues with your health, such as high blood pressure and cardiac rhythm disturbances. If you suspect you have sleep apnea, you should make an appointment with a health care provider, but while you wait to be seen by a doctor, your Apple Watch can help look for breathing disturbances.

The Sleep Apnea Notifications feature is available on Apple Watch Series 9 or later, Apple Watch Ultra 2 or later, or Apple Watch SE 3. Your phone must be updated to the latest version of iOS, and you must also turn on the Sleep Tracker feature, found in the Health app on your phone. Then, wear your watch when you sleep for a minimum of 10 nights over a 30-day period, and the data will be analyzed every 30 days.

To turn on sleep apnea notifications, open the Health app on your iPhone, tap Search, then tap Respiratory, and set up Sleep Apnea Notifications. If you receive a notification, you can export the report as a PDF to share with your health care provider.

Mute notifications with gestures

You’re in an important meeting, or midway through the first act of Hamilton, when you suddenly realize you forgot to silence notifications on your Apple Watch. The situation calls for stealth: you don’t want to interrupt your boss or draw attention to yourself in a dark theater. Luckily, Apple offers several options that let you quickly mute the notifications on your watch.

If you only remember that notifications are active because you receive an alert, such as an incoming phone call or text, you can quickly mute that alert by covering your watch display with your hand for at least three seconds. You’ll feel a tap to notify you that you’ve successfully muted the notification. This option is typically on by default, but you can check by going to the Settings app on your watch and tapping Gestures.

You can also mute calls and dismiss notifications simply by quickly turning your wrist over and back again. Apple calls this the Wrist Flick gesture, and it’s supported on the SE 3, Series 9, Ultra 2, and later models. Again, this feature is turned on by default, and you can find it in the Settings app under Gestures.

Live Listen

If you’re hard of hearing, have a loved one who is struggling with their hearing, or are simply having trouble hearing clearly in an especially noisy situation, you can turn an Apple Watch into an accessibility aid using Live Listen. This feature uses the microphone on your iPhone to stream sound to your AirPods or MFi hearing devices. When you use it with your Apple Watch, a transcription of the conversation also appears on your watch’s screen in real time.

You must use headphones or a hearing device with this feature, and Live Captions is not available in all languages or regions. Apple also warns that the accuracy of the captions may vary, so you should not rely on the transcription in an emergency. Live Listen on Apple Watch is available with watchOS 26, which requires an Apple Watch Series 6 or later, or an SE 2 or later. It’s available on all models of the Apple Watch Ultra.

If you want to keep Live Listen easily accessible, add it to your watch’s Control Center. Once you’ve done that, simply place your phone near the source you want to listen to, such as a speaker or a lecturer. Open the Control Center on your watch and tap the Hearing Controls button. Scroll down to Live Listen, where you can start a session, rewind the current session, view the live transcription, and stop the session.

Music recognition

We’ve all been there: you’re enjoying coffee at a cafe or watching a movie with friends and you hear a catchy tune that you simply love. You don’t know the name or the artist, and the song is fleeting; once it’s over, you may wait months to hear it again! In the days before smartphones, you’d have to describe the song to a friend or family member, hoping someone would recognize it from your clumsy humming. Eventually, music recognition apps like Shazam appeared on the scene, but you’d have to take out your phone and get the app going before the song ended.

If you own an Apple Watch, you no longer have to hum for friends or even get your phone out of your pocket. Apple now owns Shazam and has built music recognition directly into your watch, with no additional app necessary. Simply open Music Recognition on your watch by tapping the icon (a blue circle with a white, S-shaped logo) and tap again to start listening. Once it identifies the song, your watch will display both the title and artist. You can then open the song in Apple Music, add it to your library or a playlist, and even see additional details about the song, such as the album and release date, all without pulling out your phone. If you forget to take note of a song that you heard days or weeks earlier, open the Music Recognition app on your watch and scroll down to see a history of identified songs. This capability is available on all watches running watchOS 26.

Live translation

Only about 23% of Americans are bilingual, or able to speak more than one language. Though English is widely spoken around the world, language barriers can still be a challenge, especially when we travel: only about 360 million of the world’s more than eight billion people speak English as their first language. If you frequently travel internationally, you may want to consider investing in an Apple Watch rather than relying on a translation app on your phone.

Apple’s Translate app allows users to translate both text and voice into a long list of supported languages. You can also download new languages so you can use them without an internet connection. Offline translation is available on the Apple Watch SE 3, Series 9 and newer, and Ultra 2 and newer models.

To use live translation, first open the Translate app on your Apple Watch. Tap the language you want to translate your text or speech into. If you want to translate speech, tap the microphone button and say a phrase. Your watch translates as you type or speak, and the translation appears on your watch display. To play the audio translation, tap the play button. To hear translations automatically, tap More, then Play Translations. If it’s a common phrase that you’ll likely repeat throughout your day, you can save the translation as a favorite for easy access in the future. If a word has several meanings, your Apple Watch lets you select the one you want, and you can also choose feminine or masculine translations for words.

Tech

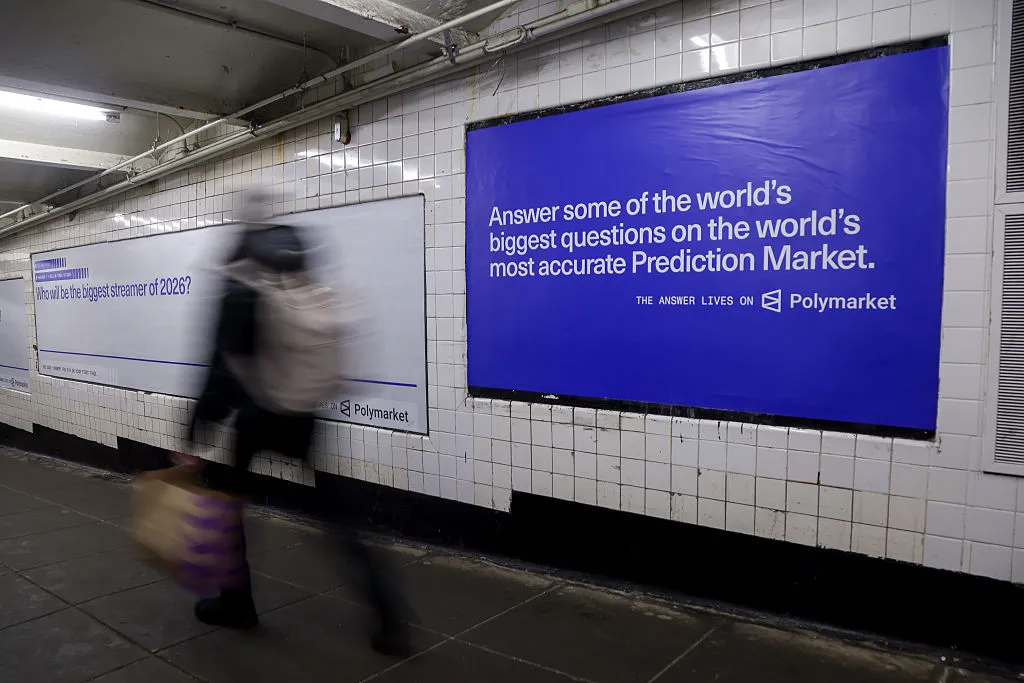

Polymarket took down wagers tied to rescue of downed Air Force officer

A Democratic congressman harshly criticized Polymarket for allowing users to bet on the date the United States would confirm the rescue of Air Force service members shot down over Iran.

In a social media post on Friday, Representative Seth Moulton wrote, “They could be your neighbor, a friend, a family member. And people are betting on whether or not they’ll be saved. This is DISGUSTING.” (President Donald Trump announced early Sunday that the second service member, a weapons system officer, had been rescued.)

Moulton also described Polymarket as a “dystopian death market” and noted that Donald Trump Jr. is an investor. The congressman recently banned his staff from participating in prediction markets like Polymarket and Kalshi.

Polymarket responded that it had taken the market down “immediately” for not meeting the company’s integrity standards.

“It should not have been posted, and we are investigating how this slipped through our internal safeguards,” the company said.

Polymarket previously saw hundreds of millions of dollars traded on contracts tied to the bombing of Iran by the United States and Israel.

Tech

Building A Vise Stand With Pen-Like Retracting Wheels

Old shop tools have a reputation for resilience and sturdiness, and though some of this is due to survivorship bias, some of it certainly comes down to an abundance of cast iron. The vise [Marius Hornberger] recently restored is no exception, which made a good stand indispensable: it needed to be mobile for use throughout the shop, yet stay firmly in place under significant force. To achieve this, he built a stand with a pen-like locking mechanism that deploys and retracts a set of caster wheels.

Most of the video goes over the construction of the rest of the stand, which is interesting in itself; the stand has an adjustable height, which required [Marius] to construct two interlocking center columns with a threaded adjustment mechanism. The three legs of the stand were welded out of square tubing, and the wheels are mounted on levers attached to the inside of the legs. One of the levers is longer and has a foot pedal that can be pressed down to extend all the casters and lock them in place. A second press on the pedal unlocks the levers, which are pulled up by springs. The locking mechanism is based on a cam that blocks or allows motion depending on its rotation; each press down rotates it a bit. This mechanism, like most parts of the stand, was laser-cut and laser-welded (if you want to skip ahead to its construction, it begins at about 29:00).

Unlike locking caster wheels, this provides significant grip when the wheels are retracted; considering the heft of the vise [Marius] restored, this must be helpful. If you’re more interested in building a vise than a stand, we’ve seen that too.

Thanks to [Keith Olson] for the tip!
