Tech

Yes, Section 230 Should Apply Equally To Algorithmic Recommendations

from the it-won’t-do-what-you-think-if-you-remove-it dept

If you’ve spent any time in my Section 230 myth-debunking guide, you know that most bad takes on the law come from people who haven’t read it. But lately I keep running into a different kind of bad take—one that often comes from people who have read the law, understand the basics passably well, and still say: “Sure, keep 230 as is, but carve out algorithmically recommended content.”

Unlike the usual nonsense, this one is often (though not always) offered in good faith. That makes it worth engaging with seriously.

It’s still wrong.

Let’s start with the basics: as we’ve described at great length, the real benefits of Section 230 are its procedural protections, which make it so that vexatious cases get tossed out at the earliest (i.e., cheapest) stage. That makes it possible for sites that host third-party content to do so without getting sued out of existence any time anyone has a complaint about someone else’s content being on the site. This distinction gets lost in almost every 230 debate, but it matters. Because if the lawsuits that removing 230 protections would enable would still eventually fail on First Amendment grounds, the only thing you’re doing in removing 230 protections is making lawsuits impossibly expensive for individuals and smaller providers, without doing any real damage to large companies, who can survive those lawsuits easily.

And that takes us to the key point: removing Section 230 for algorithmic recommendations would only lead to vexatious lawsuits that will fail.

But what about [specific bad thing]?

Before diving into the legal analysis, let’s engage with the strongest version of this argument. Proponents of carving out algorithmic recommendations typically aren’t imagining ordinary defamation suits. They’re worried about something more specific: cases where an algorithm itself arguably causes harm through its recommendation patterns—radicalization pipelines, engagement-driven amplification of dangerous content, recommendation systems that push vulnerable users toward self-harm.

The theory goes something like this: maybe the underlying content is protected speech, but the act of recommending it—especially when the algorithm was designed to maximize engagement and the company knew this could cause harm—should create liability, usually as some sort of “products liability” type complaint.

It’s a more sophisticated argument than “platforms are publishers.” But it still fails, for reasons I’ll explain below. The short version: a recommendation is an opinion, opinions are protected speech, and the First Amendment doesn’t carve out “opinions expressed via algorithm” as a special category.

A short history of algorithmic feeds

To understand why removing 230 from algorithmic recommendations would be such a mistake, it helps to remember the apparently forgotten history of how we got here. In the pre-social media 2000s, “information overload” was the panic of the moment. Much of the discussion centered on the “new” technology of RSS feeds, and there were plenty of articles decrying too much information flooding into our feed readers. People weren’t worried about algorithms—they were desperate for them. Articles breathlessly anticipated magical new filtering systems that might finally surface what you actually wanted to see.

The most prominent example was Netflix, back when it was still shipping DVDs. Because there were so many movies you could rent, Netflix built one of the first truly useful recommendation algorithms—one that would take your rental history and suggest things you might like. The entire internet now looks like that, but in the mid-2000s, this was revolutionary.

Netflix’s approach was so novel that they famously offered $1 million to anyone who could improve their algorithm by 10%. We followed that contest for years as it twisted and turned until a winner was finally announced in 2009. Incredibly, Netflix never actually implemented the winning algorithm—but the broader lesson was clear: recommendation algorithms were valuable, and people wanted them.

As social media grew, the “information overload” panic of the blog+RSS era faded, precisely because platforms added recommendation algorithms to surface content users were most likely to enjoy. The algorithms weren’t imposed on users against their will—they were the answer to users’ prayers.

Public opinion only seemed to shift on “algorithms” after Donald Trump was elected in 2016. Many people wanted something to blame, and “social media algorithms” was a convenient excuse.

Algorithmic feeds: good or bad?

Many people claim they just want a chronological feed, but studies consistently show the vast majority of people prefer algorithmic recommendations, because they surface more of what users actually want, compared to chronological feeds.

That said, it’s not as simple as “algorithms good.” There’s evidence that algorithms optimized purely for engagement can push emotionally charged political content that users don’t actually want (something Elon Musk might take notice of). But there’s also evidence that chronological feeds expose users to more untrustworthy content, because algorithms often filter out garbage.

So, algorithms can be good or bad depending on what they’re optimized for and who controls them. That’s the real question: will any given regulatory approach give more power to users, to companies, or to the government?

Keep that frame in mind. Because removing 230 protections for algorithmic recommendations shifts power away from users and toward incumbents and litigants.

The First Amendment still exists

As mentioned up top, the real role of Section 230 is providing a procedural benefit to get vexatious lawsuits tossed well before (and at much lower cost than) they would get tossed anyway under the First Amendment. With Section 230, you can get a case dismissed for somewhere in the range of $50k to $100k (maybe up to $250k with appeals and such). If you have to rely on the First Amendment, it’s up in the millions of dollars (probably $5 to $10 million).
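To put that gap in concrete terms, here is a minimal Python sketch that turns the rough estimates above into cost multiples. The dollar figures are the ones quoted in the previous paragraph; the variable and function names are purely illustrative, and the results are only as reliable as those ballpark estimates.

```python
# Defense-cost ranges in US dollars, taken from the rough estimates in the text above.
SECTION_230_DISMISSAL = (50_000, 100_000)      # typical early dismissal under Section 230
SECTION_230_WITH_APPEALS = 250_000             # upper bound "with appeals and such"
FIRST_AMENDMENT_DEFENSE = (5_000_000, 10_000_000)


def cost_multiple(cheap_range, expensive_range):
    """Return the (smallest, largest) multiple by which the expensive path exceeds the cheap one."""
    cheap_low, cheap_high = cheap_range
    expensive_low, expensive_high = expensive_range
    smallest = expensive_low / cheap_high   # cheapest expensive case vs. priciest cheap case
    largest = expensive_high / cheap_low    # priciest expensive case vs. cheapest cheap case
    return smallest, largest


low, high = cost_multiple(SECTION_230_DISMISSAL, FIRST_AMENDMENT_DEFENSE)
print(f"First Amendment defense costs roughly {low:.0f}x to {high:.0f}x more")

# Even if the Section 230 fight runs all the way up to the $250k appeals figure:
worst_case_230 = (SECTION_230_WITH_APPEALS, SECTION_230_WITH_APPEALS)
floor, _ = cost_multiple(worst_case_230, FIRST_AMENDMENT_DEFENSE)
print(f"Against a $250k Section 230 fight, the multiple is still at least {floor:.0f}x")
```

On these numbers, relying on the First Amendment alone costs somewhere between 50 and 200 times more than a typical Section 230 dismissal, and at least 20 times more even when the 230 case goes through appeals.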

And, the crux of this is that any online service sued over an algorithmic recommendation, even for something horrible, would almost certainly win on First Amendment grounds.

Because here’s the key point: a recommendation feed is a website’s opinion of what they think you want to see. And an opinion is protected speech. Even if you think it’s a bad or dangerous opinion. One thing that the US has been pretty clear on is that opinions are protected speech.

Saying that an internet service can be held liable for giving its opinion on “what we think you’d like to see” would be earth-shatteringly problematic. As partly discussed above, the modern internet relies heavily on algorithms recommending stuff, giving opinions. Every search result is just that, an opinion.

This is why the “algorithms are different” argument fails. Yes, there’s a computer involved. Yes, the recommendation emerges from machine learning rather than a human editor’s conscious decision. But the output is still an expression of judgment: “Based on what we know, we think you’ll want to see this.” That’s an opinion. The First Amendment doesn’t distinguish between opinions formed by editorial meetings and opinions formed by trained models.

In the earlier internet era, there were companies that sued Google because they didn’t like how their own sites appeared (or didn’t appear) in Google search results. The E-Ventures v. Google case here is instructive. Google determined that E-Ventures’ “SEO” techniques were spammy, and de-indexed all its sites. E-Ventures sued. Google (rightly) raised a 230 defense which (surprisingly!) a court rejected.

But the case went on longer, and after lots more money was spent on lawyers, Google did prevail on First Amendment grounds.

This is exactly what we’re discussing here. Google search ranking is an algorithmic recommendation engine, and in this one case a court (initially) rejected a 230 defense, causing everyone to spend more money… to get to the same basic result in the long run. The First Amendment protects a website using algorithms to express an opinion over what it thinks you’ll want… or not want.

Who has agency?

This brings us back to the steelman argument I mentioned above: what about cases where an algorithm recommends something genuinely dangerous?

Our legal system has a clear answer, and it’s grounded in agency. A recommendation feed is not hypnotic. If an algorithm surfaces content suggesting you do something illegal or dangerous, you still have to make the choice to do the illegal or dangerous thing. The algorithm doesn’t control you. You have agency.

But there’s a stronger legal foundation here too. Courts have consistently found that recommending something dangerous is still protected by the First Amendment, particularly when the recommender lacks specific knowledge that what they’re recommending is harmful.

The Winter v. GP Putnam’s Sons case is instructive here. The publisher of a mushroom encyclopedia included recommendations to eat mushrooms that turned out to be poisonous—very dangerous! But the court found the publisher wasn’t liable because they didn’t have specific knowledge of the dangerous recommendation. And crucially, the court noted that the “gentle tug of the First Amendment” would block any “duty of care” that would require publishers to verify the safety of everything they publish:

The plaintiffs urge this court that the publisher had a duty to investigate the accuracy of The Encyclopedia of Mushrooms’ contents. We conclude that the defendants have no duty to investigate the accuracy of the contents of the books it publishes. A publisher may of course assume such a burden, but there is nothing inherent in the role of publisher or the surrounding legal doctrines to suggest that such a duty should be imposed on publishers. Indeed the cases uniformly refuse to impose such a duty. Were we tempted to create this duty, the gentle tug of the First Amendment and the values embodied therein would remind us of the social costs.

Now, I should acknowledge that Winter was a products liability case involving a physical book, not a defamation or tortious speech case involving an algorithm, but almost all of the current cases challenging social media are self-styled as products liability cases to try (usually without success) to avoid the First Amendment. And that’s all they would be regarding algorithms as well.

The underlying principle remains the same whether you call it a products liability case or one officially about speech: the First Amendment bars requirements that publishing intermediaries must “investigate” whether everything they distribute is accurate or safe. The reason is obvious—such liability would prevent all sorts of things from getting published in the first place, putting a massive damper on speech.

Apply that principle to algorithmic recommendations, and the answer is clear. If a book publisher can’t be required to verify that every mushroom recommendation is safe, a platform can’t be required to verify that every algorithmically surfaced piece of content won’t lead someone to harm.

The end result?

So what would it mean if we somehow “removed 230 from algorithmic recommendations”?

Practically, it means that if companies have to rely on the First Amendment to win these cases, only the biggest companies can afford to do so. The Googles and Metas of the world can absorb $5-10 million in litigation costs. For smaller companies, those costs are existential. They’d either exit the market entirely or become hyper-aggressive about blocking content at the first hint of legal threat—not because the content is harmful, but because they can’t afford to find out in court.

The end result would be that the First Amendment still protects algorithmic recommendations—but only for the very biggest companies that can afford to defend that speech in court.

That means less competition. Fewer services that can recommend content at all. More consolidation of power in the hands of incumbents who already dominate the market.

Remember the frame from earlier: does this give more power to users, companies, or the government? Removing 230 from algorithmic recommendations doesn’t empower users. It doesn’t make platforms more “responsible.” It just makes it vastly harder for anyone other than the giant platforms to exist while also giving more power to governments, like the one currently run by Donald Trump, to define what things an algorithm can, and cannot, recommend.

Rather than diminishing the power of billionaires and incumbents, this would massively entrench it. The people pushing for this carve-out often think they’re fighting Big Tech. In reality, they’re fighting to build Big Tech a new moat.


Tech

PSA: If you use the Meta AI app, your friends will find out and it will be embarrassing

Meta released its new Muse Spark AI model on Wednesday as part of a major overhaul of its AI efforts. It’s do-or-die time for Meta — the company cannot afford another billion-dollar investment into something that doesn’t pan out, like the metaverse. Well, maybe they literally can afford it, but it’d be pretty damaging, not to mention embarrassing.

Speaking of embarrassing: imagine a bunch of your friends, family, and strangers you met once in college getting a notification that you use the Meta AI app. I have lived this humiliation, and I am here to warn you that it could happen to you, too.

Meta’s Muse Spark model might be new, but the Meta AI app is not. It came out last April, and at the time, I wrote an article about the app’s launch. As one does when reporting on an app, I downloaded the app. I used it.

At some point, Meta started sending people Instagram notifications about which of their friends were using the Meta AI app, presumably to encourage them to download it. It has been almost a year. I continue to get texts from my friends in which they alert me that Instagram told them I am on the Meta AI app. This is generally considered to be uncool behavior.

In its first month and a half in the App Store, only 6.5 million people had downloaded the app, market intelligence provider Appfigures told us at the time. That’s a lot of people, but not for a company that counts an estimated 42% of the entire world as daily users of at least one of its apps.

Perhaps that’s why in the early days of the Meta AI app, I stuck out on my friends’ Instagram notification feeds. (Yes, your friends will get a whole notification devoted to your use of the app, displayed as prominently as a new follower.)

Things are looking up for the Meta AI app, though. It is seeing a spike in downloads after releasing its revamped chatbot, now charting at No. 5 on the U.S. App Store, up from No. 57, per Appfigures. That’s also why I must warn you now about the horrors you may face if you use this app and Instagram tells your friends.

As much as I don’t want people to know I installed an app with an AI-generated “vibes” feed, this issue runs deeper. Meta’s apps are so interconnected that it’s hard to keep up with what data we’re sharing, where, and with whom. Why would I think that my Instagram mutuals would know I’m on the Meta AI app? (At least X didn’t tell people that I used Grok’s anime waifu — which was also for work.)

In order to access the Meta AI app, you have to log in with a Meta account — so, I joined using the same account I’ve had since I was a teenager, which connects to my Instagram and Facebook. Meta will continue to use whatever I do on Instagram, Facebook, and yes, now even the Meta AI app, to show me targeted ads. So, if I were to confide in Meta AI about an issue with my menstruation, Instagram might show me ads for period panties.

The Meta AI app never asked permission to notify people about my use of the app, nor has it asked if I want my AI chats to be used as advertising fodder. But it doesn’t have to, because I probably implicitly opted into it in some terms of service agreement that I never actually read. I mean, I also learned via Instagram that my brother was weirdly invested in Eurovision last year, since we can all see each other’s liked Reels. We all know too much about each other, and yet, Meta knows even more.

In a sense, I’m lucky that the only thing that people knew about my Meta AI usage was that I was on the app. Some users had unwittingly shared much more incriminating information about themselves: their AI chatlogs.

As a grizzled veteran of the Meta AI app, I can tell you that back in my day (over the summer), Meta experimented with a Discover feed on the app. Meta did not account for the fact that a lot of boomers use its app, and they are sometimes bad at using technology. Combine that with the fact that, since AI is not real, people will use chatbots to discuss things that they find too intimate or embarrassing to share with others. Then, you have a disaster on your hands.

Soon, people like a16z partner Justine Moore began to notice that the Meta AI Discover feed was mostly filled with older users who didn’t realize that they were sharing their AI conversations with the world.

Sometimes, these shared conversations were benign: at the time, I encountered a man with a Southern accent who asked, “Hey, Meta, why do some farts stink more than other farts?” In other cases, we saw people share their personal home address, information about medical issues, and intimate concerns about their marriage.

To give Meta some credit, these users did have to manually press publish on these chats. But enough people seemed to accidentally share private information that, clearly, there was a design issue to address. (Meta has since removed this Discover feed.)

At least if using the Meta AI app turns out to be a hot new trend, I will get to rub it in my friends’ faces that I was there first. But I would not bet on that future. There is still that “Vibes” feed, after all.

Tech

This Coffee Writer Brewed 20 Bags of Grocery Store Beans. Here Are the 5 Best to Buy

1: Intelligentsia House Blend

Trendy Intelligentsia coffee isn’t worth the steep price.

Intelligentsia is a Chicago-founded roaster that’s become a widespread specialty coffee brand in grocery stores coast to coast. At $20 for a 12-ounce bag of whole beans at my local Brooklyn grocery store, Intelligentsia House Blend coffee can be considered an investment. The lack of a “roasted by” date on the bag, however, means freshness is a gamble. This tester ended up with a whisper of flavor with three months left on the “best by” date. It lacked any noticeable tasting notes, potentially due to an overstay in the grocery aisle. The Intelligentsia House Blend bag also lacks any tasting note descriptors or instructions whatsoever on the packaging.

Even with low expectations, the beans still produced a bland cup of coffee, firmly placing it in the “low” category. If you’re interested in drinking Intelligentsia coffee, I’d recommend heading to the brand’s coffee shops or purchasing a fresh bag straight from the roaster.

What to try instead: Groundwork

Groundwork’s Organic Bitches Brew was a standout for its deep flavor and notes of dark chocolate and caramel.

For specialty coffee from the grocery store, instead look for brands that include a “roasted by” date, such as Verve or Partners coffee. The closer to the roast date, the better, but because packaging helps protect coffee, it could take three to six months before flavor degradation results in a lackluster brew. Otherwise, Groundwork Organic Bitches Brew was a standout for deep flavor and its notes of dark chocolate and caramel even without a roasted date. It also includes a ratio of coffee to water on the bag for anyone who wants a launching point.

2: Maxwell House House Blend

I’d suggest politely declining your invitation to Maxwell House.

The first sip of Maxwell House House Blend was bitter, and subsequent sips didn’t improve. Like other value-driven blends, this one tastes as if the manufacturer never expected anyone to drink it without copious amounts of cream and sugar. I don’t believe you should need to drown out the notes of burnt beans and organic fillers to make it drinkable.

The Maxwell House instructions recommend only 1 tablespoon for 6 ounces of water. Once the Maxwell House started to cool, the flavor was milder and less offensive, but I didn’t find it more enticing since any true tasting notes fell flat. I also noticed an acidity that made me nervous about a stomachache. For a household brand, I had hoped for a better showing.

What to try instead: Chock Full o’ Nuts Original

Chock Full o’ Nuts’ original blend was a surprise hit among the budget set.

Avoid the kind of coffee that makes people say “bean juice is not for me.” If you want an affordable, approachable can of coffee, reach for the original Chock Full o’ Nuts for a slightly sweet, mild variety. You could also reach for Lavazza Tierra Organic for a similarly priced medium roast or Café Bustelo for a more robust roast in a familiar canned packaging.

3: Great Value Classic Roast by Walmart

Walmart’s Great Value coffee is cheap for a reason.

The Great Value Classic Roast brand is a generic offering akin to Folgers, where value and quantity are top priorities. I wanted to test this option since Walmart is one of the largest grocery store chains in the US and a staple at my parents’ house. That said, I’d best equate the flavor of this blend with church-basement or airplane coffee. The beans offer a burnt yet bland flavor that begs for extra creamer. Still, the sheer volume is hard to beat at 25.4 ounces per can. When it comes to coffee, I’m a pragmatist, not a purist, so I understand that some of us treat it as fuel rather than a specialty beverage. I’m here to say there’s a better way forward.

What to try instead: Whole Foods Early Bird Blend

Early Bird is one of the best value coffees I tested.

Anyone looking for value should consider subscribing to Whole Foods Market coffee deliveries for an additional discount and savings on both time and gas. Great Value Classic Roast isn’t 100% arabica, so it likely contains cheaper, more caffeinated robusta beans. Another option is Café Bustelo espresso grounds for a rich cup that still packs plenty of kick thanks to its robusta blend.

4: Chock Full o’ Nuts French Roast

Chock Full o’ Nuts’ French roast left something to be desired.

Chock Full o’ Nuts is, for many, an iconic grocery store coffee brand, yet it doesn’t have the ubiquity of Folgers or Maxwell House. My taste test revealed a slightly sweet finish and a very mild flavor. I anticipated a more robust cup of coffee; however, that wasn’t the case, despite the French Roast descriptor. The “best by” date on the can I purchased had five months remaining. Based on that alone, I can’t recommend buying this one if you’re expecting something hearty and deep-roasted, as the packaging suggests. The fact that it’s still quite drinkable means it’s a safer option than some others on this list.

What to try instead: Café Bustelo

Café Bustelo is versatile and smooth — a true dark roast.

If you’re looking to try a dark roast, then grab a can of Café Bustelo, which I detailed in full in the “best” grocery store coffee list above. It’s versatile, smooth and a true dark roast as an espresso blend. Of course, you can also stick with the original Chock Full o’ Nuts blend for a sweet yet nutty flavor in a canned grocery store coffee, too.

5: Eight O’Clock Original Blend

I found Eight O’Clock’s signature blend flat and acidic.

The Eight O’Clock Original blend ground coffee was passable, though uninspired. The medium roast shares a certain sweetness with Chock Full o’ Nuts but offers a more robust finish. I started with a small half-batch, since the bag recommends 2 to 3 tablespoons of coffee to 12 ounces of water. I then tried a full batch at 2.5 tablespoons to 12 ounces. The resulting brew was somewhat flat and acidic, with a thin body and a flavor profile that was immediately forgettable after each sip. The “best by” date on the bag was eight months out, suggesting that despite the manufacturer’s optimistic shelf-life projection, the quality had not held up.

What to try instead: Lavazza Tierra Organic

Lavazza’s Tierra blend provided a robust flavor without much bitterness.

For something reasonably priced and available at big-box stores, try Lavazza Tierra Organic coffee. A ratio of 1 tablespoon of coffee to 6 ounces of water provided a robust flavor without bitterness, with a heavier roast profile than the light-roast, full-bodied descriptors on the bag suggest. Alternatively, you can rely on Caribou Coffee Daybreak Blend in the Midwest or Peet’s Coffee House Blend at most big-box grocery stores.

Tech

Meta Removes Ads For Social Media Addiction Litigation

Meta has started removing ads from law firms seeking clients for social media addiction lawsuits, just weeks after a jury found Meta and YouTube negligent in a landmark case involving harm to a young user. “Lawyers across the country now are seeking new plaintiffs, in the hopes of bringing a class action lawsuit that could result in lucrative verdicts,” reports Axios. From the report: Axios has identified more than a dozen such ads that were deactivated today, some of which came from large national firms like Morgan & Morgan and Sokolove Law. Almost all of them ran on both Facebook and Instagram. Some also appeared on Threads and Messenger, plus Meta’s Audience Network — which distributes ads to thousands of third-party sites.

One such ad read: “Anxiety. Depression. Withdrawal. Self-harm. These aren’t just teenage phases — they’re symptoms linked to social media addiction in children. Platforms knew this and kept targeting kids anyway.” A few of the ads still remain active, including some that were posted earlier today. “We’re actively defending ourselves against these lawsuits and are removing ads that attempt to recruit plaintiffs for them,” a Meta spokesperson said in a statement. “We will not allow trial lawyers to profit from our platforms while simultaneously claiming they are harmful.”

Tech

OpenAI introduces ChatGPT Pro $100 tier with 5X usage limits for Codex compared to Plus

OpenAI is making moves to try and court more developers and vibe coders (those who build software using AI models and natural language) away from rivals like Anthropic.

Today, the firm arguably most synonymous with the generative AI boom announced it will begin offering a new, more mid-range subscription tier — a $100 ChatGPT Pro plan — which joins its free, Go ($8 monthly), Plus ($20 monthly) and existing Pro ($200 monthly) plans for individuals using ChatGPT and related OpenAI products.

OpenAI also currently offers Edu, Business ($25 per user monthly, formerly known as Team) and Enterprise (variably priced) plans for organizations in said sectors.

Why offer a $100 monthly ChatGPT Pro plan?

So why introduce a new $100 ChatGPT Pro plan, then?

The big selling point from OpenAI is that the new plan offers five times greater usage limits on Codex, the company’s agentic vibe coding application/harness (the name is shared by both, as well as a lineup of coding-specific language models), than the existing, $20 monthly Plus plan, which seems fair given the math ($20×5=$100).

As OpenAI co-founder and CEO Sam Altman wrote in a post on X: “It is very nice to see Codex getting so much love. We are launching a $100 ChatGPT Pro tier by very popular demand.”

However, alongside this, OpenAI’s official company account on X noted that “we’re rebalancing Codex usage in [ChatGPT] Plus to support more sessions throughout the week, rather than longer sessions in a single day.”

That sounds a lot like OpenAI is simultaneously reducing how much ChatGPT Plus users can use its Codex harness and application per day.

What are the new usage limits for the new $100 ChatGPT Pro plan vs. the $20 Plus?

So, what are the current limits on the $20 Plus plan? The new Pro plan gives you 5X greater than…what?

Turns out, this is trickier than you’d think to calculate, because it actually varies depending on which underlying AI model you are using to power the Codex application or harness, and whether you are working on code stored in the cloud or locally on your machine or servers.

OpenAI’s Developer website underwent several updates today, so we’ve only reflected the latest pricing structure and offerings below as of Thursday, April at 10:45 pm ET. It notes that for individual users, Codex usage is categorized by “Local Messages” (tasks run on the user’s machine) and “Cloud Tasks” (tasks run on OpenAI’s infrastructure), and those limits share a five-hour rolling window.

It also says additional weekly limits may apply. The current Codex pricing page now shows lower displayed usage ranges than the older version, and it measures Code Reviews in a five-hour window rather than per week. For Pro 5x specifically, OpenAI says the currently shown limits include a temporary 2x usage boost that ends May 31, 2026.

ChatGPT Plus ($20/month)

- GPT-5.4: 20–100 local messages every 5 hours.
- GPT-5.4-mini: 60–350 local messages every 5 hours.
- GPT-5.3-Codex: 30–150 local messages and 10–60 cloud tasks every 5 hours.
- Code Reviews: 20–50 every 5 hours.

ChatGPT Pro 5x ($100/month)

- GPT-5.4: 200–1,000 local messages every 5 hours.
- GPT-5.4-mini: 600–3,500 local messages every 5 hours.
- GPT-5.3-Codex: 300–1,500 local messages and 100–600 cloud tasks every 5 hours.
- Code Reviews: 200–500 every 5 hours.

Note: The limits shown for Pro 5x include a temporary 2x usage boost that ends May 31, 2026.

ChatGPT Pro 20x ($200/month)

- GPT-5.4: 400–2,000 local messages every 5 hours.
- GPT-5.4-mini: 1,200–7,000 local messages every 5 hours.
- GPT-5.3-Codex: 600–3,000 local messages and 200–1,200 cloud tasks every 5 hours.
- Code Reviews: 400–1,000 every 5 hours.
- Exclusive access: Includes GPT-5.3-Codex-Spark in research preview for ChatGPT Pro users only. OpenAI says it has its own separate usage limit, which may adjust based on demand.
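For readers who want to check the “5x” and “20x” labels against the displayed ranges, here is a minimal Python sketch. The numbers are copied from the plan lists above. The dictionary layout, the function names, and the assumption that the temporary 2x boost applies uniformly to every Pro 5x limit are illustrative choices on our part, not anything OpenAI has published.

```python
from fractions import Fraction

# Displayed (low, high) limits per five-hour window, copied from the plan lists above.
LIMITS = {
    "Plus": {
        "GPT-5.4 local messages": (20, 100),
        "GPT-5.4-mini local messages": (60, 350),
        "GPT-5.3-Codex local messages": (30, 150),
        "GPT-5.3-Codex cloud tasks": (10, 60),
        "Code Reviews": (20, 50),
    },
    "Pro 5x": {
        "GPT-5.4 local messages": (200, 1_000),
        "GPT-5.4-mini local messages": (600, 3_500),
        "GPT-5.3-Codex local messages": (300, 1_500),
        "GPT-5.3-Codex cloud tasks": (100, 600),
        "Code Reviews": (200, 500),
    },
    "Pro 20x": {
        "GPT-5.4 local messages": (400, 2_000),
        "GPT-5.4-mini local messages": (1_200, 7_000),
        "GPT-5.3-Codex local messages": (600, 3_000),
        "GPT-5.3-Codex cloud tasks": (200, 1_200),
        "Code Reviews": (400, 1_000),
    },
}

# Assumption: the temporary 2x boost noted for Pro 5x applies evenly to every limit.
TEMPORARY_BOOST = {"Pro 5x": 2}


def displayed_multiples(plan):
    """Set of ratios between a plan's displayed limits and the Plus limits."""
    multiples = set()
    for metric, (low, high) in LIMITS[plan].items():
        plus_low, plus_high = LIMITS["Plus"][metric]
        multiples.add(Fraction(low, plus_low))
        multiples.add(Fraction(high, plus_high))
    return multiples


def baseline_multiples(plan):
    """The same ratios with any temporary boost divided back out."""
    boost = TEMPORARY_BOOST.get(plan, 1)
    return {multiple / boost for multiple in displayed_multiples(plan)}


for plan in ("Pro 5x", "Pro 20x"):
    shown = sorted(str(m) for m in displayed_multiples(plan))
    base = sorted(str(m) for m in baseline_multiples(plan))
    print(f"{plan}: displayed {shown}x Plus, without boost {base}x Plus")
```

Run against the figures above, every displayed Pro 5x limit works out to 10 times the Plus limit, which matches a 5x baseline doubled by the temporary boost, while every Pro 20x limit is a flat 20 times Plus.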

And as OpenAI’s Help documentation states:

“The number of Codex messages you can send within these limits varies based on the size and complexity of your coding tasks, and where you execute tasks. Small scripts or simple functions may only consume a fraction of your allowance, while larger codebases, long running tasks, or extended sessions that require Codex to hold more context will use significantly more per message.”

The larger strategic implications and context

OpenAI’s sudden move toward the $100 price point and expanded agentic capacity comes amid the unprecedented financial ascent of its chief rival, Anthropic.

Just days ago, Anthropic revealed its annualized run-rate revenue (ARR) has topped $30 billion, surpassing OpenAI’s last reported ARR of approximately $24–$25 billion.

This growth has been fueled by the massive adoption of Claude Code and Claude Cowork, products that have set the benchmark for enterprise-grade autonomous coding.

The competitive friction intensified on April 4, 2026, when Anthropic officially blocked Claude subscriptions from being used to provide the intelligence for third-party agentic AI harnesses like OpenClaw.

To be clear, Anthropic’s Claude models can still be used with OpenClaw; users just must now pay for access to them through Anthropic’s application programming interface (API) or extra usage credits, rather than as part of the monthly Claude subscription tiers. Some have likened those tiers to an “all-you-can-eat” buffet, which makes the economics challenging for Anthropic when power users and third-party harnesses like OpenClaw consume more in tokens than the $20 or $200 users pay monthly for the plans.

OpenClaw’s creator, Peter Steinberger, was notably hired by OpenAI in February 2026 to lead their personal agent strategy, and has, since joining, actively spoken out against Anthropic’s limitations — advising that OpenAI’s Codex and models generally don’t have the same restrictions as Anthropic is now imposing.

By hiring Steinberger and subsequently launching a Pro tier that provides the high-volume capacity Anthropic recently restricted, OpenAI is effectively courting the displaced OpenClaw community to reclaim the professional developer market.


Musk, Bezos, Both Cry To Trump’s FCC In Bid To Dominate Satellite Broadband

from the dominating-the-skies dept

Elon Musk is desperate to dominate the Low-Earth-Orbit (LEO) satellite broadband market. So is Jeff Bezos. And now the two billionaires are engaged in proxy fights at Trump’s FCC over who’ll get the honor.

Amazon’s LEO offering, Project Leo, is significantly behind Musk’s Starlink, and the company has been rushing to build out its satellite constellation. To slow that pace, Starlink has started complaining to the FCC, insisting that Amazon violated orbital debris requirements by launching satellites into orbital altitudes that are too high, increasing the risks to other satellites and spacecraft.

Amazon has responded by basically saying Musk’s Starlink is lying to slow the arrival of a competitor to market:

“SpaceX only objected to the launch parameters after moving its Starlink satellites into nearby altitudes, Amazon said. Changing the altitude of a recent Leo launch would have delayed it by months, according to Amazon. Both Amazon and SpaceX have accused each other of using Federal Communications Commission proceedings to delay the other’s satellite launches at various times over the years.”

Hoping to avoid harming “free market innovation,” the FCC took years before it recently implemented some bare-bones “space junk” collision guidance for LEO, and enforcement has been sporadic. I’m doubtful two billionaire Trump donors will ever see much in the way of accountability.

Both billionaires are hoping to leverage their ongoing support of Trump to their own benefit. Both have already had significant success on that front; Musk and Bezos convinced the Trump administration to redirect billions in infrastructure bill subsidies (earmarked for reliable, faster fiber) over to their LEO satellite broadband businesses for service they already planned to deploy.

I’m not inclined to believe either billionaire or their companies. Nor am I inclined to believe that FCC boss Brendan Carr has the integrity or competence to manage this dispute or to protect the public longer term. Starlink has recently seen several satellites blow up in orbit and has been very murky about the reasons for it. Tens of thousands more LEO satellites are slated for launch in the next few years.

The grand irony is that the mad dash toward LEO satellite broadband doesn’t really deliver on the promise of significantly better broadband. LEO satellite connectivity is great for folks who have no other option, but the technology comes with a long list of caveats.

The resulting networks will be too congested to truly scale or provide real competition for local telecom monopolies. The resulting services are also routinely too expensive for the folks who currently can’t afford access. Then there’s the problem of LEO satellite launches harming astronomy research and the ozone layer, issues I suspect won’t be a priority for Bezos, Musk, or Carr.

I’d expect to see much more orbital (and terrestrial consumer) chaos in the years to come, given absolutely none of these folks tend to think too deeply about the public interest.



Microsoft starts removing unnecessary Copilot buttons in Windows 11

Microsoft has rolled out a Notepad update for Windows Insiders that removes the Copilot branding and icon from within the app, Windows Central has reported. The old Copilot menu has been replaced with “writing tools,” but it’s worth noting that the tools are still powered by AI and are pretty much identical to the selection found in the old menu. Microsoft has just replaced the Copilot button with a pen icon. In addition, the company has removed mentions of AI in the Settings menu and has placed the option to disable the AI-powered writing tools within the “Advanced features” section.

The company first announced that it was dialing back its Copilot branding last month, most likely in response to all the criticisms against the AI assistant. It’s not very well-liked, with people complaining that Microsoft is forcing them to use the assistant inside all its apps and that Copilot doesn’t provide a consistent experience across different applications. “You will see us be more intentional about how and where Copilot integrates across Windows,” said Windows and Devices EVP Pavan Davuluri. Microsoft also promised to remove “unnecessary Copilot entry points,” starting with Notepad, Snipping Tool, Photos and Widgets. According to The Verge, Microsoft has already stopped showing the Copilot button when selecting areas to capture with the Snipping Tool, as well. Clearly, the company has been making good progress on yanking at least the visual reminders of Copilot from its apps.


Aventon Current ADV Electric Mountain Bike Review: Feels Just Like the Real Thing

While Aventon is known first and foremost as an ebike brand, the company started by making fixies in 2013. That gives it some bona fides when it comes to making enjoyable rides for experienced cyclists. (In addition to the Current ADV, there’s also a higher-end model, the Current EXP, with a more expensive carbon frame and better components.) Since its first venture into e-MTBs with the Ramblas in 2024, the company has continued to develop very nicely specced electric mountain bikes for the price.

The designers behind the newest iterations did a masterful job. The Current ADV looks 100 percent the part of a contemporary mountain bike. With its 6061 aluminum frame, SRAM Eagle groupset, tubeless-ready Maxxis Minion tires wrapping a pair of double-walled 29-inch wheels, a 170-mm X Fusion Manic dropper post, a RockShox Psylo Gold front suspension that boasts 150 mm of travel, and a RockShox Deluxe Select+ rear shock, it’d be easy to confuse the Current ADV for a traditional analog mountain bike.

Photograph: Michael Venutolo-Mantovani

It’s worth noting that while the motor is proprietary to Aventon, the components are not. It might be difficult to get your local bike shop to look at the battery and motor, but assuming those are fine, it won’t be hard to swap anything else out should you need to repair it.

Despite its design and ride feel, all of which can make you easily forget you’re riding electric, the Current ADV is a class 1 e-MTB (which can be toggled to a class 3 via the brand’s app), and one that gives hours and hours of riding on a single charge.

The 800-watt-hour battery is tucked neatly into the bike’s relatively small downtube, giving a claimed range of up to 105 miles. Of course, I didn’t get nearly that, as I was constantly switching through any of the Current ADV’s five power modes (Auto, Eco, Trail, Turbo, and a new, 30-second Boost Mode for extra torque on big hills). Still, the longest day I spent in the bike’s super-comfy Selle Royal SRX saddle was about three hours. In that time, the battery dropped only about 20 percent.
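For a rough sense of what those numbers imply, here is a back-of-the-envelope sketch in Python. It assumes the battery drains linearly with ride time, which real rides with mode switching and climbs will not do exactly, and the variable names are purely illustrative.

```python
# Figures taken from the review; the linear-drain model is an assumption.
BATTERY_WH = 800.0     # stated battery capacity in watt-hours
HOURS_RIDDEN = 3.0     # longest single ride described
FRACTION_USED = 0.20   # "about 20 percent" of the battery

# Energy consumed during the ride.
energy_used_wh = BATTERY_WH * FRACTION_USED

# Average electrical draw over the ride, in watts.
average_draw_w = energy_used_wh / HOURS_RIDDEN

# Hours of similar riding a full charge would last at the same rate.
projected_hours = HOURS_RIDDEN / FRACTION_USED

print(f"Energy used: {energy_used_wh:.0f} Wh")
print(f"Average draw: {average_draw_w:.1f} W")
print(f"Projected runtime: {projected_hours:.0f} hours")
```

Under that assumption, the ride used roughly 160 Wh, which is consistent with the "hours and hours" claim, though it says nothing about distance.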

Eyes Up

The biggest flaw I found in the Current is small and seemingly simple, but it nonetheless had a major impact on my rides. That is the fact that, when clicking through power settings, the bike beeps, and all those beeps sound the same.

When I’m mountain biking (and probably when you’re mountain biking, too), the last thing I want to do is to take my eyes off the trail. Having those beeps be the exact same tone meant I instinctively kept looking down at the top-tube-mounted display to see which mode I was in.


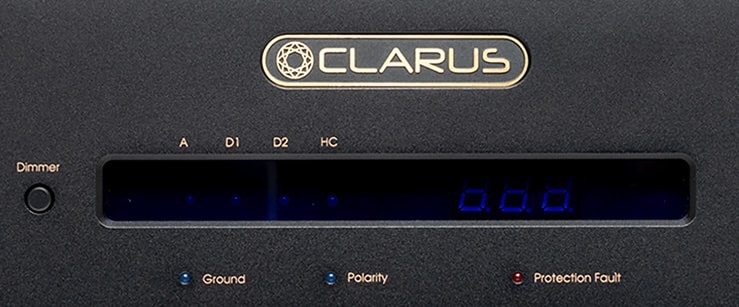

Clarus Concerto MKII Power Conditioner Debuts at AXPONA 2026 With Ultra Premium Design and Price to Match

Clarus isn’t chasing the budget crowd at AXPONA 2026, and with the debut of the Concerto MKII power conditioner, it’s making that crystal clear. Clean, stable power isn’t an audiophile myth; it directly impacts noise floor, dynamic range, and the overall coherence of a system. Your AC power isn’t perfectly clean. It can carry noise from other devices on the same circuit, such as dimmers, refrigerators, and switching power supplies, along with voltage fluctuations, spikes, and grounding issues that can impact how your system performs.

Clarus has built a serious reputation in high-performance audio, particularly with its well-regarded cable lineup, and the Concerto MKII leans into that credibility with a no-compromise approach to power delivery and conditioning. It’s not cheap—far from it. In fact, the price lands well beyond what most people have ever considered spending on an entire hi-fi system. But for those running upper five-figure or six-figure setups, where every upstream variable matters, this is exactly the kind of component that could make sense—and one worth hearing before dismissing outright.

Building on the original Concerto, the MKII increases current capacity, refines the filtering architecture, improves grounding, and optimizes power distribution. The goal is lower noise without restricting current delivery or altering the electrical behavior that high-performance audio systems rely on.

The Concerto MKII is built on the idea that reducing noise should not come at the expense of dynamics or tonal integrity. Rather than chasing maximum attenuation, its design focuses on the types of noise that actually propagate through real-world audio systems, aiming to lower interference while preserving musical coherence and ensuring unrestricted current delivery for analog, digital, and high-current components.

Clarus Repositions the Concerto MKII for a Different Class of System

The original Concerto launched in 2019 at $4,000, but rising material, manufacturing, and development costs would push that same design closer to $8,000 today. Rather than simply reissue it at a higher price, Clarus chose to develop the MKII as a redesigned successor with updated performance goals. The result is a significantly more expensive product at $12,000, positioned not as a direct replacement, but as a step up aimed at higher-end systems where incremental improvements in noise reduction and power delivery are more likely to be realized.

“Many power conditioners reduce noise by constraining current,” says Jay Victor, Concerto MKII power conditioner design engineer. “The MKII takes a different approach by targeting acoustically destructive noise while preserving the dynamics and harmonic structure that make music feel alive.”

Inside the Concerto MKII

At the core of the Concerto MKII is an application-specific filter architecture that tailors its approach to the electrical demands of connected components. Sensitive analog gear is fed through high-permeability common-mode “Clarus FluxCore” filtering, designed to reduce ground-referenced noise without restricting current or compressing dynamics.

Digital Optimized Power Outlets: Four outlets labeled “Digital” use a combination of differential-mode and common-mode “Clarus FluxCore” filtering to suppress high-frequency noise generated by digital sources.

High Current Power Outlets: Two outlets labeled “High Current” are equipped with “Clarus FluxCore-HC” filtering, engineered to reduce noise while supporting large transient current demands and limiting both conducted and radiated interference.

Analog Power Outlets: Two outlets labeled “Analog” utilize high-permeability “Clarus FluxCore” nanocrystalline inductor technology to deliver low-noise power tailored for sensitive analog components.

FluxCore Filtering: Designed to address common AC line imperfections such as noise and interference, Clarus’ FluxCore filtering aims to stabilize incoming power without restricting current delivery, helping reduce variables that can impact system performance.

Oil-Filled Capacitors: Newly added oil-filled capacitors are used to improve thermal stability and long-term reliability in the analog and high-current sections.

High Current Tolerance: The Concerto MKII features full 20-amp internal circuitry, allowing it to operate on both 15-amp and 20-amp residential lines without introducing current limitations. A star-ground architecture and isolated-ground outlets are employed to reduce ground noise and minimize circulating currents.

Internal Power Distribution: Power is distributed via heavy-gauge copper bus bars to reduce voltage drop and support high transient current demands.

System Protection: Protection is handled by a hydraulic-magnetic circuit breaker designed to tolerate high inrush currents without nuisance tripping. Constrained-layer damping is applied within the chassis to reduce mechanically induced vibration from internal components, helping maintain stable operation.

Low Noise Performance: Together, these design elements aim to provide a stable, low-noise electrical foundation that allows connected components to operate consistently under real-world conditions.

By addressing electrical noise based on its source and how it propagates, and by aligning filtering, grounding, and current delivery with the needs of each connected component, the Concerto MKII is designed to reduce interference without imposing a distinct sonic character. The intended result is lower background noise, improved low-level detail, greater dynamic contrast, and better clarity during complex passages—without restricting system performance.

Clarus Concerto MKII Specifications

| Clarus Model | Concerto MKII |
| --- | --- |
| Product Type | Power Conditioner |
| Price | $12,000 |
| Applications | High-end audio, home theater, analog and digital systems |
| Power Rating | 1800 Watts / 15 Amps (20 Amps Max) |
| Number of Power Outlets | 8 Outlets Total |
| Power Outlet Zones | 2 High Current Optimized, 4 Digital Optimized, 2 Analog Optimized |
| Filtering Technology | Clarus-Core C-Core and HC-Core inductors |
| Line Voltage | 120VAC 15A, 50/60 Hz |
| Spike Protection Modes | L-N, N-G, L-G |
| Maximum Surge Current | 80 kA (Line-to-Neutral) |
| Spike Clamping Voltage (VMAX) | 395V |
| Max Spike Energy (Joule) | L-N = 960 Joules |
| Voltage Monitoring | Under/over-voltage shutdown with auto reset |
| Safety Features | TMOV (Thermally Protected Metal Oxide Varistor) surge suppression, thermal breaker, fault indicators |
| Chassis Construction | Vibration-controlled housing with cable support bar |
| RoHS (Restriction of Hazardous Substances) | Yes |
| Dimensions (HWD) | 3.5” X 19” X 12.75” |
| Net Weight | 19 lbs. |
| Warranty | 3 Years (Limited) |
| Package Contents | 1 x CLARUS CONCERTO MKII; 1 x Installation & Operation Guide; 1 x Rear Cable Support Bracket. NOTE: Power Cable not included |
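The power rating in the table follows from the basic relationship between power, voltage, and current. Here is a minimal sketch, assuming a purely resistive load (power factor of 1) and the nominal 120 V line voltage listed above. The function name is hypothetical and not anything from Clarus.

```python
def circuit_watts(line_volts: float, amps: float) -> float:
    """Power available from a circuit, using P = V * I (resistive load assumed)."""
    return line_volts * amps


LINE_VOLTS = 120.0  # nominal line voltage from the spec table

rated_watts = circuit_watts(LINE_VOLTS, 15.0)    # standard 15-amp line
ceiling_watts = circuit_watts(LINE_VOLTS, 20.0)  # 20-amp line

print(f"15 A line: {rated_watts:.0f} W")
print(f"20 A line: {ceiling_watts:.0f} W")
```

The 15-amp figure matches the 1800-watt rating in the table. The 20-amp figure is only the arithmetic ceiling for such a line, not a number Clarus publishes.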

The Bottom Line

Power conditioning sits in that uncomfortable gray area between necessity and obsession, and the Concerto MKII doesn’t try to pretend otherwise. What Clarus is offering here is not a universal upgrade, but a highly targeted solution for systems where power quality is already a known variable and the rest of the chain is resolving enough to expose it.

What makes the Concerto MKII stand out is its application-specific filtering approach, separation of outlet types, and focus on maintaining current delivery rather than restricting it. That’s a meaningful distinction in a category where some designs can choke dynamics in the name of noise reduction. The inclusion of 20-amp internal architecture, star grounding, and heavy-duty power distribution shows that this is built for serious systems, not entry-level setups.

Who is this for? Not the average listener. Not even most enthusiasts. This is aimed squarely at owners of upper five-figure and six-figure systems, in environments where electrical noise, grounding issues, or inconsistent power are real concerns—not theoretical ones.

Is it worth $12,000? For most people, no. For a small group chasing the last few percent of performance and already invested deep enough that power delivery becomes part of system tuning, it might be. The key is knowing which side of that line you’re on before writing the check.

Price & Availability

The Clarus Concerto MKII Power Conditioner has a suggested retail price of $12,000 (not including power cable). It will become available during Q2 2026 through authorized Clarus retailers.

The Clarus Concerto MKII will be debuting at AXPONA 2026, April 10 – 12, in support of the Harman Luxury Group.



Amazon pledges its satellite internet starts this year

Amazon’s satellite-based internet service, Leo, will enter service by mid-2026, so says company CEO Andy Jassy. Writing in his annual letter, Jassy claimed Leo would offer download speeds of up to 1Gbps, far more than what Starlink presently offers. Sadly, Amazon declined to offer any more details about what that mid-2026 service would look like. But given select partners have already been kicking Leo’s tyres for a while, we can only hope.

The mega-retailer is making some grand promises, including faster upload and download speeds, lower cost and direct integration with Amazon’s other products. Of course, the company can also sell itself on the fact it’s a satellite internet provider not owned by Elon Musk. But it will have to buck its ideas up fast, given how far behind it is in deploying satellites.

— Daniel Cooper

The other big stories this morning

It’s a showcase for the Snapdragon X2 Elite.

Devindra Hardawar for Engadget

ASUS’ ZenBook A16 is a 16-inch ultraportable designed to go toe-to-toe with LG’s Gram Pro 16. It’s equipped with Qualcomm’s Snapdragon X2 Elite and designed to address the flaws Devindra Hardawar found in last year’s ZenBook A14. Did it succeed? You’ll have to read his review to get the full story, but he’s certainly happy to have spent the last week using this thing.

It will begin at the start of 2027.

Greece will ban under 15s from accessing social media, Prime Minister Kyriakos Mitsotakis has announced. Like many nations both in Europe and beyond, officials are concerned about the effect social media is having on children’s mental and physical health. The big platforms will be in charge of enforcing the ban, backed up by the hefty punishments enabled by the Digital Services Act.

Know what doesn’t lose support after a few years? Books.

Amazon

If you’re still using a Kindle or Fire tablet made in 2012 or before, then it’s going to get a little less useful on May 20. Amazon is discontinuing support for those earlier models on that date, removing the ability to purchase, borrow or download new titles. Thankfully, whatever is on the hardware already will remain, so don’t fret if you’re only a third of the way through Remembrance of Things Past.

Fancy, but heavy.

Billy Steele for Engadget

Billy Steele has been putting Fender Audio’s new speakers through their paces to find what can only be described as a mixed bag. Excellent audio quality and a wide variety of inputs get high praise, but the heavy weight, exposed wood and limited battery life all dent the paintwork.

About time too.

WhatsApp’s CarPlay interface isn’t the most elegant or easy way to keep in touch with your friends while driving. Meta has, however, given the UI a little polish to help make it a little easier to get something useful done without pulling your attention from the road.


The influencer economy’s invisible workers are first in line for the AI chop

The creator economy loves a neat little fairy tale: one magnetic person, one camera, one lucky break. It’s a great story. It’s also nonsense.

A lot of so-called organic growth has been industrialized for years. The Hollywood Reporter recently showed how major creators and media companies relied on armies of clippers to carve long videos into viral bait, turning audience growth into a volume game. And that operation never stopped with clippers. It sprawled into a wider layer of digital labor, from editors and thumbnail makers to virtual assistants handling scheduling, posting, inbox cleanup, and brand admin.

Many of those workers sit in the same countries that power global remote services, including the Philippines and India, where outsourcing still employs millions. The Philippines’ IT-BPM sector closed 2024 with 1.82 million jobs and $38 billion in revenue, while India’s tech sector workforce reached 5.43 million in FY24.

The creator economy didn’t invent this setup. It simply borrowed it, gave it ring lights, and called it hustle.

The creator economy built a labor pipeline it could underpay

What looked like spontaneity was often logistics with good lighting. Influencers didn’t just appear everywhere on TikTok, Reels, and Shorts by force of personality. They paid for a production chain that could cut clips, resize videos, write captions, schedule posts, and keep the content conveyor belt moving.

That arrangement worked because the labor was affordable and mostly invisible. Now the same businesses that benefited from it are turning to tools like OpusClip, which promise to turn long videos into short clips and publish them across platforms with a click. The factory floor was always there. AI just wants fewer people on it.

AI usually doesn’t kill the job first. It cheapens it

This is the part the booster crowd likes to skip. A job usually doesn’t disappear in one dramatic moment. It gets stripped for parts first.

The editor becomes the person checking AI cuts, fixing captions, swapping thumbnails, cleaning timestamps, repackaging clips, and posting them across five platforms because the software still does a few things badly enough to be embarrassing. Upwork’s 2026 skills report puts a number on the shift: demand for AI video generation and editing rose 329% year over year.

That doesn’t mean human labor is gone. It means human labor is being pushed into babysitting the machine that’s learning how to absorb more of the work.

The next shock lands in outsourcing hubs, not just creator mansions

The easy version of this story is a rich influencer replacing an editor in Los Angeles. The more honest version reaches much farther. In Latin America, regional platforms such as Workana grew by serving workers shut out by language and market barriers on global platforms, with the World Bank describing Workana as the largest freelance and remote work platform in the region.

So when AI starts squeezing this layer of work, the fallout won’t stop at a few creator agencies or freelance editors in big US cities. It’ll hit the remote workers in outsourcing economies who were told digital work was the safer future. The same system that turned customer support and back-office tasks into globally tradable labor did the same thing to creator work. It chopped the job into repeatable pieces, sent them abroad, and rewarded whoever could do them fastest and cheapest.

That’s why the clipping story matters beyond creator gossip. AI isn’t crashing into some pristine meritocracy. It’s tightening the screws on a system that was already built to make workers interchangeable.

The creator economy was perfectly happy with invisible human labor when it was cheap and easy to ignore. Now it’s discovering that the cleanest version of “organic reach” is one that no longer has to pay the army behind it.
