TL;DR

ZoomInfo beat Q1 earnings but cut full-year revenue guidance by 62 million dollars, announced a 600-job restructuring (20 per cent of headcount), and lost 29 per cent of its stock price as AI-native competitors reprice B2B sales intelligence.

ZoomInfo beat its first-quarter earnings estimates, cut its full-year revenue guidance by 62 million dollars, announced a restructuring that will eliminate 600 jobs, and lost 29 per cent of its stock price in a single trading session. The company reported 310.2 million dollars in revenue, up 1.5 per cent year over year. Adjusted earnings per share came in at 28 cents, beating estimates by nearly nine per cent. None of it mattered. Investors looked at the guidance cut, the 20 per cent headcount reduction, and the 90 per cent net revenue retention rate, and sold.

The stock closed at 4.32 dollars. In November 2021, it traded at 77.35 dollars. ZoomInfo’s market capitalisation has fallen from approximately 25 billion dollars at its peak to under two billion. The company that defined business-to-business sales intelligence is now worth less than a tenth of what it was four and a half years ago.

First-quarter GAAP revenue was 310.2 million dollars. Adjusted operating income was 109.7 million dollars, a 35 per cent margin. GAAP operating income was 57.9 million dollars, a 19 per cent margin. Cash flow from operations was 114.7 million dollars. Unlevered free cash flow was 119.7 million dollars.

The company closed the quarter with 1,900 customers paying more than 100,000 dollars in annual contract value, up 32 year over year but down 21 from the prior quarter. The net revenue retention rate was 90 per cent. That number compresses the entire story into a single metric. A retention rate below 100 per cent means existing customers are spending less than they did a year ago. At 90 per cent, ZoomInfo is losing ten cents of every dollar of existing revenue annually through downgrades and churn.
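As a rough illustration of what a 90 per cent net revenue retention rate means, the sketch below computes the metric for a hypothetical cohort. The cohort figures and the function name are invented for illustration, and the formula (starting revenue plus expansion, minus downgrades and churn, divided by starting revenue) is the common definition rather than ZoomInfo’s disclosed methodology.

```python
def net_revenue_retention(starting_arr, expansion, contraction, churn):
    """Share of last year's recurring revenue still coming from the same customers."""
    if starting_arr <= 0:
        raise ValueError("starting_arr must be positive")
    return (starting_arr + expansion - contraction - churn) / starting_arr


# Hypothetical cohort (not ZoomInfo data): 100 dollars of recurring revenue a year ago,
# 5 dollars of upsell, 7 dollars of downgrades, 8 dollars lost to cancelled contracts.
nrr = net_revenue_retention(starting_arr=100.0, expansion=5.0, contraction=7.0, churn=8.0)
print(f"NRR: {nrr:.0%}")
```

Any mix of upsell, downgrades, and churn that nets out to a ten-dollar loss on 100 dollars gives the same 90 per cent, so the headline figure alone does not show how much is churn and how much is downgrade.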

Beneath the headline figures, the balance sheet tells a more detailed story. Long-term debt stands at 1.32 billion dollars against 171 million dollars in cash. Unearned revenue, the backlog of contracted but unrecognised revenue, was 479 million dollars. Research and development spending fell 18 per cent year over year to 42.1 million dollars, while cost of service rose 15 per cent to 43.5 million dollars. Interest expense climbed 38 per cent to 13.5 million dollars. Capital expenditure jumped 63 per cent to 24.1 million dollars. Bad debt provisions rose 37 per cent to 5.9 million dollars.

The company recorded 4 million dollars in asset impairments and lease abandonment charges that did not exist a year ago. Restructuring expenses in the income statement were 10 million dollars, nearly double the prior year’s 5.4 million. A litigation settlement cost 3.7 million dollars, up from 900,000 dollars. Goodwill remained unchanged at 1.69 billion dollars, a legacy of the 2019 merger that created ZoomInfo Technologies from DiscoverOrg’s acquisition of the original ZoomInfo.

ZoomInfo repurchased 13.1 million shares at an average price of 6.91 dollars, spending 90.5 million dollars. The buyback consumed more cash than the company’s GAAP operating income for the quarter. When a company spends more on repurchasing its own stock than it earns from operations, it is making a statement about what it believes its shares are worth. Investors, who sent the stock below five dollars, disagreed.

The full-year revenue forecast was cut from a range of 1.247 to 1.267 billion dollars to a range of 1.185 to 1.205 billion dollars. At the midpoint, that is a reduction of approximately 62 million dollars, or five per cent. Prior adjusted operating income guidance of 456 to 466 million dollars was lowered to 437 to 447 million dollars. Unlevered free cash flow guidance fell from a range of 435 to 465 million dollars to a range of 400 to 420 million dollars, a 40 million dollar cut at the midpoint.
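The midpoint arithmetic behind those cuts can be checked with a short sketch. The ranges are the ones quoted above, in millions of dollars; the variable and function names are illustrative only.

```python
def midpoint(low, high):
    """Midpoint of a guidance range."""
    return (low + high) / 2


# (prior range, revised range), in millions of dollars, as quoted in the article.
guidance = {
    "revenue": ((1247, 1267), (1185, 1205)),
    "adjusted operating income": ((456, 466), (437, 447)),
    "unlevered free cash flow": ((435, 465), (400, 420)),
}

cuts = {}
for metric, (prior, revised) in guidance.items():
    old_mid, new_mid = midpoint(*prior), midpoint(*revised)
    # Store the absolute cut and the cut as a fraction of the prior midpoint.
    cuts[metric] = (old_mid - new_mid, (old_mid - new_mid) / old_mid)
    print(f"{metric}: cut of {cuts[metric][0]:.0f}M ({cuts[metric][1]:.1%})")
```

On these figures the revenue midpoint falls by 62 million dollars (about 4.9 per cent), adjusted operating income by 19 million, and unlevered free cash flow by 40 million.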

Adjusted earnings per share guidance was maintained at 1.10 to 1.12 dollars, but only because the share count dropped from 325 million to 315 million through buybacks. The earnings-per-share number held steady because the denominator shrank, not because the numerator improved.
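A back-of-the-envelope sketch makes the denominator effect concrete. It assumes adjusted earnings per share is simply adjusted net income divided by the share counts quoted above, and that both counts apply to the same guidance midpoint; the company’s actual share-count methodology may differ.

```python
eps_midpoint = (1.10 + 1.12) / 2   # dollars per share, maintained guidance
prior_shares = 325e6               # share count behind the earlier guidance (assumed)
revised_shares = 315e6             # share count after buybacks (assumed)

# Net income implied by the same EPS at each share count.
implied_prior_income = eps_midpoint * prior_shares
implied_revised_income = eps_midpoint * revised_shares
change = implied_revised_income / implied_prior_income - 1

print(f"implied prior income:   {implied_prior_income / 1e6:.2f}M")
print(f"implied revised income: {implied_revised_income / 1e6:.2f}M")
print(f"change in numerator:    {change:.1%}")
```

Under these assumptions, holding earnings per share flat while the share count falls by ten million implies roughly three per cent less adjusted net income.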

Second-quarter guidance of 300 to 303 million dollars implies a sequential decline from the first quarter and a year-over-year decrease of approximately 1.7 per cent. The pattern is a company whose upmarket business is growing modestly while its downmarket base erodes. The downmarket segment declined 10 per cent for a second consecutive quarter. Management has stated its goal is to reach an 80/20 split between upmarket and downmarket revenue, effectively accepting that the smaller customer segment will continue to shrink.

CEO Henry Schuck framed the strategy around data and AI: “In a world that is increasingly driven by AI and intelligent automation, ZoomInfo data and our go-to-market context is the ultimate competitive advantage.” The argument is that ZoomInfo’s database of more than 100 million companies and 500 million contacts, combined with billions of intent signals, is the durable asset. The market’s response suggests doubt about whether data alone justifies a premium subscription when AI-native alternatives are assembling the same insights at a fraction of the cost.

On 5 May, ZoomInfo’s board approved the 2026 Restructuring Programme. The company will eliminate approximately 600 positions globally, roughly 20 per cent of its ending first-quarter headcount. Approximately one quarter of the impacted roles will be reallocated to other locations, resulting in a net reduction of around 450 positions. Three hundred and forty employees in the United States, India, and the United Kingdom were notified immediately, primarily in go-to-market and general and administrative functions.

The company will close its entire Israel site by the end of 2026, transferring operations to the United States, Canada, Ireland, and India. Pre-tax restructuring charges are estimated at 45 to 60 million dollars, primarily cash-based, with the majority recognised in the second and third quarters. The programme is expected to deliver 60 million dollars in annual run-rate operating expense savings.

Schuck’s internal email to employees described the restructuring as a plan to simplify operations, accelerate the move upmarket, and reduce resources allocated to the downmarket segment. He noted that the industry is moving toward consumption-based pricing and that the company’s largest enterprise customers are asking for a “deeper, forward-deployed engineering motion.” The savings will be redirected toward the platform, product roadmap, and customer-facing engineering capacity. Impacted employees receive cash severance, some equity acceleration, and subsidised medical premiums in the United States.

The scale of the restructuring is a signal. A company that cuts 20 per cent of its workforce is not fine-tuning. It is reorganising around a thesis that its current structure was built for a market that no longer exists. The 60 million dollars in annual savings is nearly equal to the 62 million dollar revenue guidance cut. ZoomInfo is not just reducing costs. It is trading a revenue line it believes is structurally declining for operating leverage it believes will sustain margins through the transition.

ZoomInfo’s competitive landscape has fragmented. Apollo.io offers a database of more than 275 million contacts with built-in sequencing for 49 dollars per user per month. Clay orchestrates data enrichment across more than 100 providers using waterfall logic, pulling the best available information from ZoomInfo, Apollo, and dozens of other sources automatically. The sales technology stack in 2026 increasingly treats contact databases as interchangeable inputs rather than differentiated platforms.

AI-native enterprise spending surged 94 per cent year over year while traditional SaaS growth cooled to eight per cent. Approximately 285 billion dollars in market capitalisation was erased from software-as-a-service companies in a single 48-hour window earlier this year. The repricing is not unique to ZoomInfo, but ZoomInfo is more exposed than most because its core product, a database of business contacts and company information, is the category most directly threatened by AI agents that can assemble the same data on the fly.

Every SaaS company is building AI features, and ZoomInfo is no exception. Its Copilot product, launched in early 2024, reached 250 million dollars in annual contract value within 18 months. Copilot uses AI to recommend next-best actions, generate outreach, and monitor buyer signals. The product has been the company’s most successful launch. But it also raises the question that haunts every legacy SaaS platform building an AI layer: if the AI is the value, what is the database worth on its own?

Palantir’s earnings arrived in the middle of an AI software sell-off that tested whether any enterprise software company could sustain its valuation against the expectation that AI will compress margins across the industry. ZoomInfo’s answer is a company earning 35 per cent adjusted operating margins while its revenue flatlines, its debt exceeds its cash by a factor of eight, and its stock trades at a fraction of its historical value. The margins are real. The growth is not.

SaaStock, the ten-year-old SaaS conference brand, retired its name and relaunched as Shift AI, a rebrand that its founder described as a response to the post-SaaS era. Seventy per cent of enterprises now demand usage-based or outcome-based contracts. Per-seat adoption has dropped from 21 per cent to 15 per cent of SaaS companies in the past twelve months. Schuck’s own internal email acknowledged the shift toward consumption-based pricing. The conference that celebrated the model ZoomInfo was built on has concluded that the model no longer defines the market.

The case that SaaS is not dead rests on the argument that AI features are additive rather than substitutive, that enterprises will pay more for software enhanced by AI rather than replacing the software with AI entirely. ZoomInfo’s Copilot is evidence for this argument. Its 250 million dollar ACV demonstrates that customers will pay for AI capabilities layered on top of a trusted data platform.

The case against ZoomInfo is that the data platform itself is becoming a commodity. When Clay can waterfall across a hundred data providers and an AI agent can research a prospect in seconds by crawling the open web, the value of a proprietary database diminishes with every improvement in the models that can replicate its output. The retention rate of 90 per cent suggests customers are already making this calculation, spending less each year as cheaper alternatives capture the margin.

Schuck built ZoomInfo from a bootstrapped data company into a platform that peaked at 25 billion dollars in market value. The company still generates more than 100 million dollars in quarterly free cash flow. It is not failing. It is restructuring around a bet that its upmarket enterprise customers and its AI layer will sustain the business while the downmarket base, the segment that made ZoomInfo ubiquitous, erodes. The beat did not matter. The guidance did. The 600 jobs did. The stock at 4.32 dollars is the market’s verdict on what a database is worth in the age of AI agents.

Both privilege escalation vulnerabilities stem from bugs in the kernel’s handling of page caches stored in memory, allowing untrusted users to modify them. They target caches in networking and memory-fragment handling components. Specifically, CVE-2026-43284 attacks the esp4 and esp6 processes, and CVE-2026-43500 zeroes in on rxrpc. Last week’s Copy Fail exploited faulty page caching in the authencesn AEAD template process, which is used for IPsec extended sequence numbers. A 2022 vulnerability named Dirty Pipe also stemmed from flaws that allow attackers to overwrite page caches.

Researchers from security firm Automox wrote:

Dirty Frag belongs to the same bug family as Dirty Pipe and Copy Fail, but it targets the frag member of the kernel’s struct sk_buff rather than pipe_buffer. The exploit uses splice() to plant a reference to a read-only page-cache page (for example, /etc/passwd or /usr/bin/su) into the frag slot of a sender-side skb. Receiver-side kernel code then performs in-place cryptographic operations on that frag, modifying the page cache in RAM. Every subsequent read of the file sees the corrupted version, even though the attacker only ever had read access.

CVE-2026-43284 is found in the esp_input() process on the IPsec ESP receive path. When an skb object is non-linear but lacks a frag list, the code skips skb_cow_data() and decrypts AEAD in place on the planted frag. From there, an attacker can control the file offset and the 4-byte value of each store.

CVE-2026-43500, meanwhile, resides in rxkad_verify_packet_1(). The process decrypts RxRPC payloads using a single-block process. Splice-pinned pages become both a source and destination. That, paired with the decryption key being freely extracted using the add_key (rxrpc), allows an attacker to rewrite contents in memory.

Either exploit used separately is unreliable. Some Ubuntu configurations use AppArmor to prevent untrusted users from creating namespace contents. That, in turn, neutralizes the ESP technique. Most other distributions by default don’t run rxrpc.ko, which neutralizes the RxRPC arm. When chained together, however, the two exploits allow attackers to obtain root on every major distribution Kim tested. Once the exploits run, attackers can use SSH access, web-shell execution, container escapes, or compromise low-privilege accounts.

“Dirty Frag is notable because it introduces multiple kernel attack paths involving rxrpc and esp/xfrm networking components to improve exploitation reliability,” Microsoft researchers wrote. “Rather than relying on narrow timing windows or unstable corruption conditions often associated with Linux local privilege escalation exploits, Dirty Frag appears designed to increase consistency across vulnerable environments.”

Researchers at Google-owned Wiz said exploits will be less likely to break out of hardened containerized environments such as Kubernetes with default security settings in place. “However, the risk remains significant for virtual machines or less restricted environments.”

The best response for anyone using Linux is to install patches immediately. While fixes likely require a reboot, protection from a threat as severe as Dirty Frag outweighs the cost of disruptions. Anyone who can’t install immediately should follow the mitigation steps laid out in the posts linked above.

The Entertainment Software Association (ESA) has come out against California’s AB 1921, a state bill that would compel developers to offer remedies before deactivating servers for online games. Stop Killing Games has been fighting this battle for the last couple of years and was quick to condemn the ESA’s position.

On the lower west side of Manhattan Island, in the Chelsea district, there is an unassuming, concrete-looking townhouse whose previous owners include Lady Gaga and basketball player Kevin Durant. If you ever get the opportunity to saunter through its doors, you’re stepping into a tower of sound.

That’s because the House of Sound, operated by Bose after its acquisition of the Sonus Faber brand, is an ode to audiophile and luxury tastes.

Through six floors of the townhouse, there’s a cadre of McIntosh and Sonus Faber kit, with each room designed to give a taste of what it’d be like to have this hi-fi equipment in your home. As the House of Sound website puts it, it’s a “destination where audio, art, and design intersect to create a truly immersive experience”.

And for once, the hype is well met.

Not to be confused with BBC’s KeyStage learning exercises, or the music studio in the north of England, Bose’s House of Sound is designed to demonstrate what “a luxury audio experience can really be in your home”. If you have deep enough pockets, of course.

It wants to promote the idea that audio can be considered in the same aspirational league as travel, watches, cars, and haute couture fashion. And that it can be a form of creativity as well, whether that’s through the form it takes – the materials and aesthetic that go into Sonus Faber and McIntosh products – or how it brings other creative works to life, whether that’s through two-channel stereo or a private cinema install (that Questlove from The Roots rents for the Oscars). It’s the Met Gala for sound systems.

It’s a place that desires to be the apex of what hi-fi can be – without limitation. Hi-fi here is not a consumable thing that, while enjoyable as an experience, is in some ways designed to be disposable. Without trying to sound like an advertisement, you go to its listening rooms to luxuriate in high-fidelity sound, or as it was put to us on the tour, to connect “yourself and your own emotions and the people around you”. Lofty, but why not reach for the stars?

I’ve been to hi-fi demo spaces before, such as KJ West One, which is literally wall-to-wall of high-end hi-fi equipment, or ventured to hi-fi shows such as High End. But the House of Sound obviously feels different from either of those two because it takes place in an actual home space.

If you need to be convinced of parting with money into the six or seven figures, it certainly helps having an idea of how it would look in your own well-appointed home. It all helps to add to the sense of immersion because the space you’re listening in is a familiar-ish one.

Of course, this would be rather moot if the products didn’t sound great. I’ve limited experience with Sonus Faber products, having tested the Omnia all-in-one system several years ago, but Sonus Faber doesn’t really deal with products that tend to be easily shippable.

I’ve heard Amati Supreme hi-fi loudspeakers at events such as 2025’s Paris AV Show, and thought they sounded “phenomenal”. This time I got to hear the Suprema, which is essentially Sonus Faber’s no-holds-barred loudspeaker.

And they sounded phenomenal. They’re a bit on the crisp side of neutral, so at times can sound a little thin to my ears, but they generate huge levels of transparency, insight and naturalism, as well as power and energy in a stereo image that’s wide and deep, with minimal, if any, distortion.

We got to play a selection of tracks*, to just sit there and listen to the speakers. Some people didn’t want to leave.

*(If you want to know my choices, they were Slipknot’s Duality and Illit’s K-pop Magnetic in an attempt to try and ‘break’ the speakers. I failed.)

We then descended to the ground floor (first floor for any Americans reading) and had the opportunity to listen to the private home cinema install.

Past a large, nondescript door that doesn’t hint at the excitement that awaits, is a reference standard private home cinema with a sound system that will blow your Sonos Arc Ultra surround system away.

Dotted around the room is a 29-channel system that includes Sonus Faber Arena 20 in-wall speakers, Arena 10 in-ceiling modules, and Arena 30 speakers behind the screen in a left, centre, right configuration with a dual-tweeter design similar to the Amati Supreme for clearer dialogue. In total, there are 16 (sixteen!) subwoofers.

It’s powered by 19 McIntosh amplifiers that, apparently, provide a whopping 22,400W of total power to the system. All amps have a THD of less than 0.005% for absolutely minimal distortion, and the amps power on in a trigger sequence to avoid a massive on-rush of current when you’ve got 20,000+ watts waiting to be released.

An interesting little titbit was the reveal that there’s generally only 8-9 minutes of true LFE (Low Frequency Effects) in a typical two-hour film. Twelve of the sixteen subwoofers are therefore repurposed to support the other channels, with the front left/right receiving a dedicated cluster of subs, and the sides, rears, and even the ceiling arrays partnered with a sub.

The result of this configuration was full-range sound from infrasonic to beyond audible high frequency, allowing for precise placement of bass in an area of the room rather than just shaking the entire floor.

This private cinema is placed on the first floor under the kitchen, and apparently, you can feel the rumble in the kitchen even if you can’t hear what’s playing. That’s the power unleashed by this cinema.

Kaleidescape is the source for films, with an Apple TV nearby for sports and streaming, plus a PS5 for gaming.

And we were treated to Top Gun: Maverick, which has become a staple of Dolby Atmos demos (everyone from Sonos to Yamaha and Focal uses it, having moved on from Mad Max: Fury Road).

It is probably (memory aside) the best I’ve heard the film since watching it in Dolby Cinema at the West End Odeon (the better of the Odeon Leicester Square cinemas). It sounded immense, and the nuances of the smaller details that might be lost in a home cinema set-up were rendered crystal clear. The system has even been given the thumbs up by the Oscar-winning sound mixer of Maverick, who watched the film there at an event.

The best home cinema systems can put you in an immersive bubble, whether it’s object-based or channel-based. The private cinema in this townhouse is an experience where you feel it too… and you don’t have to bother with people talking or a crisp packet rustling in the darkness, as it did when I watched The Drama a few weeks ago and annoyed another patron in the cinema.

So I’ve written about hi-fi and home cinema. Why not cars too?

On the same first floor as the private cinema is a Lamborghini tucked away in the corner. Inside is a Sonus Faber sound system that’s been tuned for the (tight) interior environment. I’ve written in the past how, for many people, a car might be the best way to listen to music, and it’s the same case for this Lamborghini system.

The system doesn’t have as many speakers, or quite as fancy custom technology, as the Bowers & Wilkins kit in the Polestar 3, but the sense of immersion convinces me that cars make for a pretty excellent hi-fi room. The low end, despite there being no dedicated sub (if memory serves), brought genuine bass to the proceedings, but the best thing about the whole experience is how balanced it all sounded.

I’m still unnerved by my own anxiety that bass would distract during a car trip, but then wouldn’t the roar of the engine distract too? Perhaps they’d cancel each other out.

It was a great few hours at the House of Sound alongside seeing Bose’s Lifestyle Collection, products which aim for premium but for a mainstream audience. The House of Sound shows the potential for hi-fi to move into more luxurious realms (if it hasn’t already).

Of course, this is not an area that I or most people who happen across this article would ever find themselves inhabiting. The Aida sound system, along with all the McIntosh equipment in the room, is an easy seven-figure cost. These are sound systems that would exist in people’s dreams.

But for a few hours in Bose’s House of Sound, those dreams can become reality.

Nvidia’s real AI moat isn’t “a piece of hardware,” writes Wired’s Sheon Han. It’s CUDA: a mature, deeply optimized software ecosystem that keeps machine-learning workloads tied to Nvidia GPUs. An anonymous reader quotes a report from Wired: What sounds like a chemical compound banned by the FDA may be the one true moat in AI. CUDA technically stands for Compute Unified Device Architecture, but much like laser or scuba, no one bothers to expand the acronym; we just say “KOO-duh.” So what is this all-important treasure good for? If forced to give a one-word answer: parallelization. Here’s a simple example. Let’s say we task a machine with filling out a 9×9 multiplication table. Using a computer with a single core, all 81 operations are executed dutifully one by one. But a GPU with nine cores can assign tasks so that each core takes a different column — one from 1×1 to 1×9, another from 2×1 to 2×9, and so on — for a ninefold speed gain. Modern GPUs can be even cleverer. For example, if programmed to recognize commutativity — 7×9 = 9×7 — they can avoid duplicate work, reducing 81 operations to 45, nearly halving the workload. When a single training run costs a hundred million dollars, every optimization counts.

Nvidia’s GPUs were originally built to render graphics for video games. In the early 2000s, a Stanford PhD student named Ian Buck, who first got into GPUs as a gamer, realized their architecture could be repurposed for general high-performance computing. He created a programming language called Brook, was hired by Nvidia, and, with John Nickolls, led the development of CUDA. If AI ushers in the age of a permanent white-collar underclass and autonomous weapons, just know that it would all be because someone somewhere playing Doom thought a demon’s scrotum should jiggle at 60 frames per second. CUDA is not a programming language in itself but a “platform.” I use that weasel word because, not unlike how The New York Times is a newspaper that’s also a gaming company, CUDA has, over the years, become a nested bundle of software libraries for AI. Each function shaves nanoseconds off single mathematical operations — added up, they make GPUs, in industry parlance, go brrr.

A modern graphics card is not just a circuit board crammed with chips and memory and fans. It’s an elaborate confection of cache hierarchies and specialized units called “tensor cores” and “streaming multiprocessors.” In that sense, what chip companies sell is like a professional kitchen, and more cores are akin to more grilling stations. But even a kitchen with 30 grilling stations won’t run any faster without a capable head chef deftly assigning tasks — as CUDA does for GPU cores. To extend the metaphor, hand-tuned CUDA libraries optimized for one matrix operation are the equivalent of kitchen tools designed for a single job and nothing more — a cherry pitter, a shrimp deveiner — which are indulgences for home cooks but not if you have 10,000 shrimp guts to yank out. Which brings us back to DeepSeek. Its engineers went below this already deep layer of abstraction to work directly in PTX, a kind of assembly language for Nvidia GPUs. Let’s say the task is peeling garlic. An unoptimized GPU would go: “Peel the skin with your fingernails.” CUDA can instruct: “Smash the clove with the flat of a knife.” PTX lets you dictate every sub-instruction: “Lift the blade 2.35 inches above the cutting board, make it parallel to the clove’s equator, and strike downward with your palm at a force of 36.2 newtons.” “You can begin to see why CUDA is so valuable to Nvidia — and so hard for anyone else to touch,” writes Han. “Tuning GPU performance is a gnarly problem. You can’t just conscript some tender-footed undergrad on Market Street, hand them a Claude Max plan, and expect them to hack GPU kernels. Writing at this level is a grindsome enterprise — unless you’re a cracker-jack programmer at DeepSeek…”

Han goes on to argue that rivals like AMD and Intel offer competitive specs on paper, but their software stacks have struggled with bugs, compatibility issues, and weak adoption. As a result, Nvidia has built an Apple-like moat around AI computing, leaving the industry dependent on its expensive hardware.
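The 9×9 multiplication-table example from the quoted report can be sketched in a few lines. This is plain Python, not CUDA: the thread pool is only a stand-in for nine GPU cores (Python threads will not deliver a real ninefold speed-up), and all names are illustrative.

```python
from concurrent.futures import ThreadPoolExecutor

N = 9


def column(i):
    """One worker computes a single column: i×1 through i×N."""
    return [i * j for j in range(1, N + 1)]


# Nine workers, one column each, mirroring the nine-core example.
with ThreadPoolExecutor(max_workers=N) as pool:
    table = list(pool.map(column, range(1, N + 1)))

# Exploiting commutativity: compute only the pairs with i <= j, then mirror them.
unique = {(i, j): i * j for i in range(1, N + 1) for j in range(i, N + 1)}
mirrored = [
    [unique[(min(i, j), max(i, j))] for j in range(1, N + 1)]
    for i in range(1, N + 1)
]

print(len(unique))        # number of multiplications actually performed
print(mirrored == table)  # the mirrored table matches the full one
```

The count of unique products is N(N+1)/2, which for N = 9 gives the 45 operations mentioned in the report.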

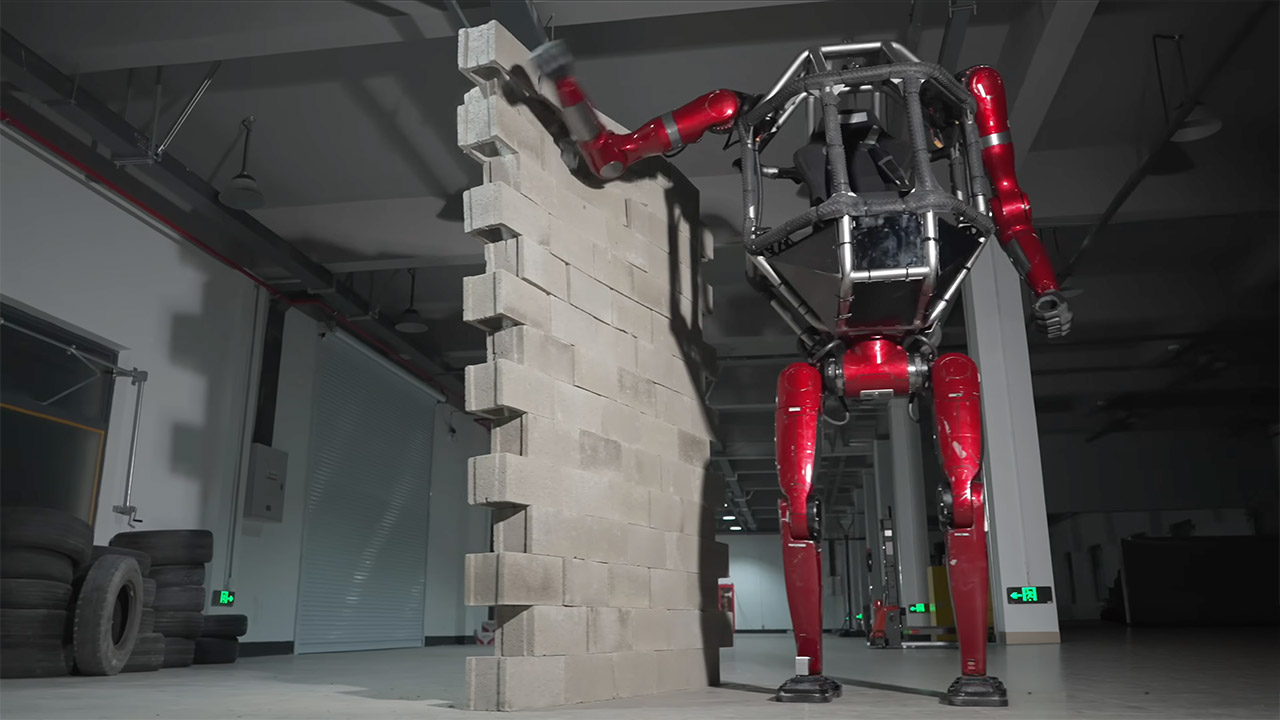

Climbing into the open metal cage of Unitree’s GD01 feels like slipping behind the wheel of something from another era of imagination. Founder Wang Xingxing does exactly that in the one-minute demonstration video released today. He buckles into the central seat, grips the controls, and sets the machine in motion across an indoor workshop floor. The robot responds with smooth, deliberate steps on its beastly red legs.

From the outside, you can tell how powerful this machine is: shiny red panels cover the limbs and torso frame, while silver bars keep everyone secure within the open cockpit. It’s imposing, easily twice the height of most adults, so you get a terrific view from up top along with a sense of just how large it is. Thick black treads wrap around the frame and feet in case it needs additional traction, and hydraulic lines and joint housings run down the arms and legs, giving you a real sense of what’s propelling it.

Power shows up clearly when Wang guides the bipedal form toward a stack of bricks. One solid push from the body and the pile collapses. No extra tools or dramatic wind-up needed. The 500-kilogram total weight, including the pilot, delivers real structural strength without any loss of control. Unitree notes the machine works as a civilian vehicle built for practical jobs like transport across rough sites, basic exploration, or even rescue work where a tall vantage point helps. Pricing starts at 3.9 million yuan, which works out to roughly 650 thousand dollars. That figure covers the base model now headed into mass production. Buyers will get a complete, ready-to-pilot system rather than a kit or prototype.

Satya Nadella drew a historical parallel to Microsoft’s early PC partnership with IBM as the tech giant prepared to invest $10 billion more in OpenAI in April 2022 — writing in an internal email that he didn’t want Microsoft to become IBM while OpenAI became the next Microsoft.

That email, presented as evidence by Elon Musk’s lead trial attorney Steven Molo, was one of the new details to emerge from the Microsoft CEO’s turn on the stand Monday morning in Musk’s lawsuit against Sam Altman, OpenAI and Microsoft in federal court in Oakland.

Nadella described the decision to invest in OpenAI as a “one-way door,” saying Microsoft couldn’t build two supercomputers — one for itself and one for OpenAI — and had to accept the opportunity cost of diverting scarce computing resources away from its own AI teams.

“We were outsourcing essentially a lot of the core IP development and taking a massive dependency on OpenAI,” Nadella testified, explaining that he wanted to ensure Microsoft had access to the intellectual property generated by the partnership, and continued to build its own knowledge and capabilities at the same time.

Board considerations unredacted: The testimony also provided new information from messages among Microsoft execs and Altman in the days following his brief ouster as OpenAI CEO in 2023. The names of potential candidates from that thread were previously redacted in public court records.

From Nadella’s testimony Monday, it emerged that two potential OpenAI board candidates for whom he voiced his disapproval were Diane Greene, the former Google Cloud CEO, and Bing Gordon, the veteran gaming exec and Kleiner Perkins partner previously on Amazon’s board. Nadella said he objected to both as potential candidates because of their ties to companies that compete directly with Microsoft in AI.

He said the discussions were initiated by Altman and other OpenAI insiders seeking his input, and that the board could have ignored his suggestions. One candidate he suggested, former Gates Foundation CEO Sue Desmond-Hellmann, was later appointed to the board.

Musk argues that Microsoft’s efforts to protect its interests in the OpenAI partnership came at the expense of the OpenAI nonprofit’s original mission to develop AI for the benefit of humanity. His lawsuit alleges that Microsoft aided and abetted a breach of the charitable trust that governed OpenAI’s founding, misusing his original investment, estimated at $38 million to $44 million.

Enabling a massive nonprofit: Nadella offered a different view on the stand, describing a collaboration built on mutual benefit in which Microsoft took on enormous risk to support a fledgling AI lab that no one else was willing to fund. He said the partnership had created “one of the largest nonprofits in the world,” enabling products like ChatGPT and Copilot that put AI tools in the hands of millions of people.

Under cross-examination, however, Nadella acknowledged that he was not aware of any full-time employees at the OpenAI nonprofit before March 2026, or of any grants, research, or open-sourced technology it had produced.

One of Microsoft’s attorneys in the case, Jay Jurata of Dechert, also sought to undermine Musk’s standing in the case. He walked Nadella through three major milestones in the Microsoft-OpenAI partnership — the 2019 announcement, a 2020 exclusive license to GPT-3, and the 2023 $10 billion investment — and asked each time whether Musk had reached out to object.

Each time, Nadella said no. He and Musk have each other’s phone numbers, he added.

Microsoft estimates the OpenAI return: Musk’s attorney, on cross-examination, sought to show the benefits Microsoft has received from the partnership. He walked Nadella through a January 2023 memo from Microsoft President Brad Smith to the company’s board, projecting a $92 billion return on Microsoft’s cumulative $13 billion investment in OpenAI.

According to the testimony, a footnote in the memo showed a 20% annual increase kicking in starting in 2025, which could roughly double the return within four years.
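The "roughly double within four years" figure can be checked with a short calculation. This is a minimal sketch that assumes the 20% increase compounds once per year; the memo's actual mechanics are not described in the testimony, and the function name is illustrative.

```python
def growth_factor(annual_rate: float, years: int) -> float:
    """Cumulative multiplier after compounding annual_rate once per year."""
    return (1 + annual_rate) ** years


# Assumption: 20% per year, compounded annually, over four years.
factor = growth_factor(0.20, 4)
print(f"{factor:.2f}x")  # 2.07x
```

Under that assumption the multiplier is about 2.07, which is consistent with the "roughly double" characterization.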

Under the restructured deal announced last year, the caps on Microsoft’s returns were removed entirely. Microsoft and OpenAI also recently amended the partnership to make Microsoft’s IP license non-exclusive and open all OpenAI products to any cloud provider.

[Update: The Information reported Monday that revenue-sharing payments from OpenAI to Microsoft under the new deal are capped at $38 billion.]

Asked about the memo on the witness stand, Nadella confirmed the figures but noted that the investment carried real risk, saying the return could just as easily have been zero.

The trial, before U.S. District Judge Yvonne Gonzalez Rogers, is expected to continue through May 21, with OpenAI CEO Sam Altman also expected to take the stand this week.

GeekWire reported on today’s proceedings via the court’s audio livestream. Correction: The name of Microsoft’s outside counsel for Nadella’s testimony has been corrected since publication.

A doctor in a hospital exam room watches as a medical transcription agent updates electronic health records, prompts prescription options, and surfaces patient history in real time. A computer vision agent on a manufacturing line is running quality control at speeds no human inspector can match. Both generate non-human identities that most enterprises cannot inventory, scope, or revoke at machine speed.

That is the structural problem keeping agentic AI stuck in pilots. Not model capability. Not compute. Identity governance.

Cisco President Jeetu Patel told VentureBeat at RSAC 2026 that 85% of enterprises are running agent pilots while only 5% have reached production. That 80-point gap is a trust problem. The first questions any CISO will ask: which agents have production access to sensitive systems, and who is accountable when one acts outside its scope? IANS Research found that most businesses still lack role-based access control mature enough for today’s human identities, and agents will make it significantly harder. The 2026 IBM X-Force Threat Intelligence Index reported a 44% increase in attacks exploiting public-facing applications, driven by missing authentication controls and AI-enabled vulnerability discovery.

Michael Dickman, SVP and GM of Cisco’s Campus Networking business, laid out a trust framework in an exclusive interview with VentureBeat that security and networking leaders rarely hear stated this plainly. Before Cisco, Dickman served as Chief Product Officer at Gigamon and SVP of Product Management at Aruba Networks.

Dickman said that the network sees what other telemetry sources miss: actual system-to-system communications rather than inferred activity. “It’s that difference of knowing versus guessing,” he said. “What the network can see are actual data communications … not, I think this system needs to talk to that system, but which systems are actually talking together.” That raw behavioral data, he added, becomes the foundation for cross-domain correlation, and without it, organizations have no reliable way to enforce agent policy at what he called “machine speed.”

Dickman argues that agentic AI breaks a pattern he says defined every prior technology transition: deploy for productivity first, bolt on security later.

“I don’t think trust is one of those things where the business productivity comes first, and the security is an afterthought,” Dickman told VentureBeat. “Trust actually is one of the key requirements. Just table stakes from the beginning.”

Observing data and recommending decisions carries consequences that stay contained. Execution changes everything. When agents autonomously update patient records, adjust network configurations, or process financial transactions, the blast radius of a compromised identity expands dramatically.

“Now more than ever, it’s that question of who has the right to do what,” Dickman said. “The who is now much more complicated because you have the potential in our reality of these autonomous agents.”

Dickman breaks the trust problem into four conditions. The first is secure delegation, which starts by defining what an agent is permitted to do and maintaining a clear chain of human accountability. The second is cultural readiness; he pointed to alert fatigue as a case study. The traditional fix, Dickman noted, was to aggregate alerts, so analysts see fewer items. With agents capable of evaluating every alert, that logic changes entirely.

“It is now possible for an agent to go through all alerts,” Dickman said. “You can actually start to think about different workflows in a different way. And then how does that affect the culture of the work, which is amazing.”

The third is token economics: every agent action carries a real computational cost. Dickman sees hybrid architectures as the answer, where agentic AI handles reasoning while traditional deterministic tools execute actions. The fourth is human judgment. For example, his team used an AI tool to draft a product requirements document. The agent produced 60 pages of repetitive filler, which immediately showed how technically responsive the architecture was, yet the output needed extensive fine-tuning to become relevant. “There’s no substitute for the human judgment and the talent that’s needed to be dextrous with AI,” he said.

Most enterprise data today is proprietary, internal, and fragmented across observability tools, application platforms, and security stacks. Each domain team builds its own view. None sees the full picture.

“It’s that difference of knowing versus guessing,” Dickman said. “What the network can see are actual data communications. Not ‘I think this system needs to talk to that system,’ but which systems are actually talking together.”

That telemetry grows more valuable as IoT and physical AI proliferate. Computer vision agents analyzing shopper behavior and running factory-floor quality control generate highly sensitive data that demands precise access controls.

“All of those things require that trust that we started with, because this is highly sensitive data around like who’s doing what in the shop or what’s happening on the factory floor,” Dickman said.

“It’s not only aggregation, but actually the creation of knowledge from the network,” Dickman said. “There are these new insights you can get when you see the real data communications. And so now it becomes what do we do first versus second versus third?”

That last question reveals where Dickman’s focus lands: the strategic challenge is sequencing, not capability.

“The real power comes from the cross-domain views. The real power comes from correlation,” Dickman said. “Versus just aggregation and deduplication of alerts, which is good, but it’s a little bit basic.”

This is where he sees the most common pitfall. Team A builds Agent A on top of Data A. Team B builds Agent B on top of Data B. Each silo produces incrementally useful automation. The cross-domain insight never materializes.

Independent practitioners validate the pattern. Kayne McGladrey, an IEEE senior member, told VentureBeat that organizations are defaulting to cloning human user profiles for agents, and permission sprawl starts on day one. Carter Rees, VP of AI at Reputation, identified the structural reason. “A significant vulnerability in enterprise AI is broken access control, where the flat authorization plane of an LLM fails to respect user permissions,” Rees told VentureBeat. Etay Maor, VP of Threat Intelligence at Cato Networks, reached the same conclusion from the adversarial side. “We need an HR view of agents,” Maor told VentureBeat at RSAC 2026. “Onboarding, monitoring, offboarding.”

Use this matrix to evaluate any platform or combination of platforms against the five trust gaps Dickman identified. Note that the enforcement approaches in the third column reflect Cisco’s framework.

| Trust gap | Current control failure | What network-layer enforcement changes | Recommended action |
|---|---|---|---|
| Agent identity governance | IAM built for human users cannot inventory, scope, or revoke agent identities at machine speed | Agentic IAM registers each agent with defined permissions, an accountable human owner, and a policy-governed access scope | Audit every agent identity in production. Assign a human owner. Define permitted actions before expanding the scope |
| Blast radius containment | Host-based agents and perimeter controls can be bypassed; flat segments give compromised agents lateral movement | Microsegmentation enforces least-privileged access at the network layer, limiting blast radius independent of host-level controls | Implement microsegmentation for every agent-accessible system. Start with the highest-sensitivity data (PHI, financial records) |
| Cross-domain visibility | Siloed observability tools create fragmented views; Team A’s agent data never correlates with Team B’s security telemetry | Network telemetry captures actual system-to-system communications, feeding a unified data fabric for cross-domain correlation | Unify network, security, and application telemetry into a shared data fabric before deploying production agents |
| Governance-to-enforcement pipeline | No formal process connecting business intent to agent policy to network enforcement | Policy-to-enforcement pipeline translates governance decisions into machine-speed network rules | Establish a formal pipeline from business-intent definition to automated network policy enforcement |
| Cultural and workflow readiness | Organizations automate existing workflows rather than redesigning for agent-scale processing | Network-generated behavioral data reveals actual usage patterns, informing workflow redesign | Run a 30-day telemetry capture before designing agent workflows. Build around observed data, not assumptions |

Dickman grounded his framework in a scenario from his own life. A family member recently broke an ankle, which put him in a hospital exam room watching a medical transcription agent update the EHR, prompt prescription options, and surface patient history in real time. The doctor approved each decision, but the agent handled tasks that previously required manual entry across multiple systems.

The security implications hit differently when it is a loved one’s records on the screen.

“I would call it do governance slowly. But do the enforcement and implementation rapidly,” he said. “It must be done in machine speed.”

It starts with agentic IAM, where each agent is registered with defined permitted actions and a human accountable for its behavior.

“Here’s my set of agents that I’ve built. Here are the agents. By the way, here’s a human who’s accountable for those agents,” Dickman said. “So if something goes wrong, there’s a person to talk to.”
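The registration idea Dickman describes can be sketched as a small registry. This is a minimal illustration, not Cisco's implementation: the class names, field names, agent ID, owner address, and action strings are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Iterable


@dataclass
class AgentIdentity:
    """One registered non-human identity (all field names are illustrative)."""
    agent_id: str
    owner: str                          # the accountable human
    permitted_actions: FrozenSet[str]   # what the agent is allowed to do
    revoked: bool = False


class AgentRegistry:
    """In-memory inventory of agents, their owners, and their scopes."""

    def __init__(self) -> None:
        self._agents: Dict[str, AgentIdentity] = {}

    def register(self, agent_id: str, owner: str,
                 permitted_actions: Iterable[str]) -> AgentIdentity:
        # Refuse to register an agent nobody is accountable for.
        if not owner:
            raise ValueError("every agent needs an accountable human owner")
        identity = AgentIdentity(agent_id, owner, frozenset(permitted_actions))
        self._agents[agent_id] = identity
        return identity

    def is_allowed(self, agent_id: str, action: str) -> bool:
        # Unknown or revoked agents are denied by default.
        identity = self._agents.get(agent_id)
        if identity is None or identity.revoked:
            return False
        return action in identity.permitted_actions

    def revoke(self, agent_id: str) -> None:
        self._agents[agent_id].revoked = True

    def owner_of(self, agent_id: str) -> str:
        return self._agents[agent_id].owner


registry = AgentRegistry()
registry.register("transcription-agent", owner="dr.lee@example.org",
                  permitted_actions={"ehr.read", "ehr.update"})
```

With this shape, "who is accountable" is a lookup (`owner_of`) and revocation is a single state change that every later `is_allowed` check respects.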

That identity layer feeds microsegmentation — a network-enforced boundary Dickman says enforces least-privileged access and limits blast radius.

“Microsegmentation guarantees that least-privileged access,” Dickman said. “You’re not relying on a bunch of host agents, which can be bypassed or have other issues.”
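Conceptually, microsegmentation behaves like a default-deny allowlist of flows enforced outside the host. The sketch below models only that decision logic, under the assumption that policy is expressed as (source, destination, port) tuples; the segment names and ports are hypothetical, and real enforcement happens in network infrastructure rather than in application code.

```python
from typing import NamedTuple, Set


class Flow(NamedTuple):
    source: str        # segment the agent runs in
    destination: str   # system the agent tries to reach
    port: int


# Hypothetical policy: the only flow this agent is permitted to make.
ALLOWED_FLOWS: Set[Flow] = {
    Flow("transcription-agent", "ehr-api", 443),
}


def is_flow_permitted(flow: Flow, allowed: Set[Flow] = ALLOWED_FLOWS) -> bool:
    """Default deny: a flow passes only if it is explicitly listed."""
    return flow in allowed


print(is_flow_permitted(Flow("transcription-agent", "ehr-api", 443)))      # True
print(is_flow_permitted(Flow("transcription-agent", "billing-db", 5432)))  # False
```

Because anything not listed is denied, a compromised agent cannot reach systems outside its declared scope, which is the blast-radius limit Dickman describes.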

If the governance model works for a medical transcription agent handling patient records in an emergency department, it scales to less sensitive enterprise use cases.

1. Force cross-functional alignment now. Define what the organization expects from agentic AI across line-of-business, IT, and security leadership. Dickman sees the human coordination layer moving more slowly than the technology. That gap is the bottleneck.

2. Get IAM and PAM governance production-ready for agents. Dickman called out identity and access management and privileged access management specifically as not mature enough for agentic workloads today. Solidify the governance before scaling the agents. “That becomes the unlock of trust,” he said. “Because when the technology platform is ready, you then need the right governance and policy on top of that.”

3. Adopt a platform approach to networking infrastructure. A platform strategy enables data sharing across domains in ways fragmented point solutions cannot. That shared foundation is what makes the cross-domain correlation in the trust gap assessment above operationally real.

4. Design hybrid architectures from the start. Agentic AI handles reasoning and planning. Traditional deterministic tools execute the actions. Dickman sees this combination as the answer to token economics: it delivers the intelligence of foundation models with the efficiency and predictability of conventional software. Do not build pure-agent systems when hybrid systems cost less and fail more predictably.

5. Make the first use cases bulletproof on trust. Pick two or three high-value use cases and build them with role-based access control, privileged access management, and microsegmentation from day one. Even modest deployments delivered with best practices intact build the organizational confidence that accelerates everything after.

“You can guarantee that trust to the organization, and that will unleash the speed,” Dickman said.
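The hybrid pattern in step 4 can be sketched as a planner that only proposes a step and a deterministic dispatcher that alone executes it. This is a minimal sketch under stated assumptions: `plan` is a stub standing in for a model call, and the tool names and arguments are hypothetical.

```python
from typing import Callable, Dict


def restart_service(name: str) -> str:
    return f"restarted {name}"


def open_ticket(summary: str) -> str:
    return f"ticket opened: {summary}"


# Fixed table of deterministic tools; nothing outside it can run.
TOOLS: Dict[str, Callable[[str], str]] = {
    "restart_service": restart_service,
    "open_ticket": open_ticket,
}


def plan(alert: str) -> Dict[str, str]:
    """Stand-in for the reasoning step; a real system would call a model here."""
    if "unresponsive" in alert:
        return {"tool": "restart_service", "argument": "billing-api"}
    return {"tool": "open_ticket", "argument": alert}


def execute(step: Dict[str, str]) -> str:
    """Deterministic execution: reject any tool that is not in the table."""
    tool = TOOLS.get(step["tool"])
    if tool is None:
        raise ValueError(f"unknown tool: {step['tool']}")
    return tool(step["argument"])


print(execute(plan("billing-api unresponsive")))  # restarted billing-api
```

Keeping execution in conventional code means a bad plan fails loudly with a `ValueError` instead of taking an unexpected action, which is one way to read "fail more predictably."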

That is the structural insight running through every section of this conversation. The 85% of enterprises stuck in pilot mode are not waiting for better models. They are waiting for the identity governance, the cross-domain visibility, and the policy enforcement infrastructure that makes production deployment defensible. Whether they build on Cisco’s platform or assemble their own, Dickman’s framework holds: identity governance, cross-domain visibility, policy enforcement. None of those prerequisites is optional.

The organizations that satisfy them first will deploy agents at a pace the rest cannot match, because every new agent inherits the trust architecture the first ones required. The ones still debating whether to start will watch that gap widen. Theoretical trust does not ship.

The Musk v. Altman trial entered its third week Monday, with Microsoft CEO Satya Nadella and former OpenAI co-founder and renowned AI researcher Ilya Sutskever taking the stand. Nadella testified that Elon Musk never raised concerns to him that Microsoft’s investments in OpenAI violated any special commitments, and said he viewed the partnership as clearly commercial from the start. He also described OpenAI’s 2023 board crisis as “amateur city.”

Meanwhile, Sutskever testified that he had raised concerns about Sam Altman because he feared OpenAI could be “destroyed.” He expressed concerns about Altman’s behavior to the board, in part because he said he felt “a great deal of ownership” over the startup. “I simply cared for it, and I didn’t want it to be destroyed,” Sutskever said. CNBC reports: Nadella said he was “very proud” that Microsoft took the risk to invest in OpenAI when “no one else was willing” to bet on the fledgling lab. Musk, who testified late last month, said Microsoft’s $10 billion investment was the key tipping point that made him believe OpenAI was violating its nonprofit mission. He testified that the scale of the investment bothered him, and it prompted him to open a legal investigation into OpenAI. “I was concerned they were really trying to steal the charity,” Musk said from the stand.

Nadella said he did not believe Microsoft’s investments in OpenAI were donations, and that there was a clear commercial element to their partnership from the outset. He said during the partnership’s early years, Microsoft gave OpenAI sharp discounts on computing resources, and Microsoft believed it would reap marketing benefits from doing so. During a separate video deposition that was played on Monday morning, Michael Wetter, a corporate development executive at Microsoft, said the company has recognized approximately $9.5 billion in revenue to date through its partnership with OpenAI as of March 2025.

[…] Nadella said he was “pretty surprised” by the board’s decision [to fire Altman in November 2023], and that his priority was to try and figure out how to maintain continuity for Microsoft customers. Immediately after Altman was removed, Nadella said he made an effort to learn more about what happened, adding that he suspected jealousy and poor communication were at play. During conversations with OpenAI board members after the firing, Nadella said he was simply trying to understand the language in OpenAI’s statement about Altman being “not consistently candid” while communicating with the board. That language, Nadella said, “just didn’t sort of suffice, because this is the CEO of a company that we are invested in and we’re deeply partnered with, and so I felt that they could have explained to me what are the incidents or what is the detail behind it.” There must have been instances of jealousy or miscommunication that could have justified pushing out Altman, Nadella said. He wanted more depth from the board members after the remark about candor, but no such information was available, he said. “It was sort of amateur city, as far as I’m concerned,” Nadella testified.

[…] Musk testified that he is not entirely against OpenAI having a for-profit unit, but he said it became “the tail wagging the dog.” He repeatedly accused Altman and Brockman of enriching themselves from a charity while also reaping the positive associations that come from running a nonprofit. “Microsoft has their own motivations, and that would be different from the motivations of the charity,” Musk said from the stand. “All due respect to Microsoft, do you really want Microsoft controlling digital superintelligence?”

During a videotaped deposition shown in court last week, former OpenAI director Tasha McCauley recalled a discussion with Nadella and her fellow board members after the 2023 decision to dismiss Altman as OpenAI’s CEO. “To the best of my recollection, Satya wanted to restore things to as they had been,” McCauley said. The board members didn’t think that was the right move, she said. But as a court witness on Monday, Nadella said he never demanded that the board reinstate Altman as OpenAI CEO. Recap:

Sam Altman Had a Bad Day In Court (Day Eight)

Sam Altman’s Management Style Comes Under the Microscope At OpenAI Trial (Day Seven)

Brockman Rebuts Musk’s Take On Startup’s History, Recounts Secret Work For Tesla (Day Six)

OpenAI President Discloses His Stake In the Company Is Worth $30 Billion (Day Five)

Musk Concludes Testimony At OpenAI Trial (Day Four)

Elon Musk Says OpenAI Betrayed Him, Clashes With Company’s Attorney (Day Three)

Musk Testifies OpenAI Was Created As Nonprofit To Counter Google (Day Two)

Elon Musk and OpenAI CEO Sam Altman Head To Court (Day One)

The rapid integration of AI into healthcare devices raises ‘fresh ethical questions’, says Eoin O’Cearbhaill.

Dr Eoin O’Cearbhaill is the most recent recipient of the NovaUCD Innovation Award, a recognition given to those with success stories in commercialising research emerging from University College Dublin (UCD).

O’Cearbhaill is an associate professor in biomedical engineering at the UCD School of Mechanical and Materials Engineering, where his research focuses on developing minimally invasive medical technologies for diagnosing and treating disease.

He is also the director of the Centre for Biomedical Engineering, and leads the UCD Medical Device Design Group within the centre, which aims to address clinical needs by developing novel medical devices with real-world applications.

The research group has assisted in the creation of spin-out companies LaNua Medical, Latch Medical and Lia Eyecare, and has filed more than a dozen patents.

O’Cearbhaill has also been a member of multiple Enterprise Ireland Commercialisation Fund projects. He is a funded investigator with Research Ireland Centres Cúram and I-Form, and has consulted with a number of businesses including Boston Scientific, NeoGraft Technologies, Johnson & Johnson and CroíValve.

“A major focus of my work has been translating research from the lab into technologies that can ultimately improve patient care, including through university spin-outs and collaborations with clinicians, researchers and industry partners,” O’Cearbhaill tells SiliconRepublic.com.

I was fortunate to grow up with parents who were always supportive of education and curiosity. Rather than one defining moment, it was a gradual realisation that research offered the opportunity to contribute something new.

Some time spent working in the medical device industry was also influential, as it gave me a practical understanding of how engineering can directly improve patient care. It showed me the importance of developing technologies that are not only innovative, but manufacturable, reliable and capable of making a real clinical impact.

Our research focuses broadly on minimally invasive technologies for the diagnosis and treatment of disease. Ideally, we begin by identifying an unmet clinical need, often in partnership with clinicians, and then develop practical device concepts that could address it.

My own expertise is in mechanical-based design, but coming up with the best solution relies on teamwork. We work closely with experts in electronic engineering, materials science, pharmaceuticals and clinical medicine, both internal and external to our group, to bring the right mix of skills to each challenge.

Research rarely follows a straight line. Sometimes a technology developed for one purpose reveals an unexpected opportunity elsewhere. Being able to adapt and pivot is often essential if we want to maximise the chances of clinical translation and real patient impact.

Biomedical engineering research can improve lives directly through better diagnostics, smarter treatments and less invasive procedures. It also plays an important economic role. Research attracts talented people from around the world, helps build high-value industries, and creates the environment where the next generation of Irish start-ups can emerge.

Ireland has the ingredients to become a global leader in next-generation medtech if we continue to invest in talent, translational research and entrepreneurship.

My team and I have been fortunate to work with talented researchers and entrepreneurs who have already spun technologies out of UCD with the support of NovaUCD, into companies.

These innovations span areas such as microneedle-based drug delivery, tumour treatment technologies and device-based therapies for dry eye disease and include spin-outs such as Latch Medical, LaNua Medical and Lia Eyecare.

Medical devices require robust regulation, quality systems and clinical validation, so the path to commercialisation can be challenging. But when successful, it enables research discoveries to become real products that can benefit patients at scale.

UCD Medical Device Design Group. Image: University College Dublin

One of the biggest challenges is attracting and retaining outstanding talent. PhD researchers and postdoctoral fellows are central to scientific progress, so it is important that stipends and salaries remain competitive with industry, particularly during a cost-of-living crisis.

Another challenge is the rapid integration of AI and smart technologies into healthcare devices. This creates exciting new opportunities, but also raises fresh technical, regulatory and ethical questions.

People sometimes assume that creating a medical device is only about designing the hardware and now, particularly the software in next-generation smart devices. In reality, one of the most important challenges is understanding how that device interacts with the body over time.

We are particularly interested in the interface between implantable or wearable devices and surrounding tissue. Understanding that relationship is critical if we want devices to function reliably and safely over the long term.

There is enormous opportunity in remote patient monitoring, technologies that enable more outpatient procedures, and devices that shorten recovery times.

These kinds of innovations can improve patient experience while also helping healthcare systems manage growing demand more efficiently.


Bonus coupons are driving M5 MacBook Air prices lower this week, with both 13-inch and 15-inch models marked down, including configs with 24GB of RAM and 1TB of storage.

B&H’s MacBook Air sale delivers bonus discounts in the form of in-cart coupons on top of already reduced prices. With both 13-inch and 15-inch models on sale, now is a great time to pick up a thin-and-light notebook that was just released and is equipped with Apple’s M5 chip.

Save up to $180 on M5 MacBook Airs

The B&H deals above beat Amazon’s prices for the same systems, but you can find even more discounts in our 13-inch MacBook Air M5 Price Guide and 15-inch MacBook Air M5 Price Guide.

If you’d like to learn more about the M5 release, check out our hands-on M5 MacBook Air review.
