Crypto World

AI Infrastructure Development Company Powering Enterprise AI Leadership

Artificial intelligence has entered a defining new phase. The competitive conversation no longer centers solely on model innovation, data volume, or algorithmic breakthroughs. Instead, the question enterprise leaders must now answer is far more foundational:

Is our compute foundation strong enough to scale AI across the business?

In 2026, the AI race has evolved into an infrastructure race – one that demands collaboration with the right AI infrastructure development company and long-term architectural foresight. Amazon’s $12 billion investment in AI-focused data center campuses in Louisiana reflects a larger global reality: enterprise AI growth now depends on physical and architectural compute capacity.

The message for business leaders is clear: compute strategy defines market leadership.

The Shift from AI Experimentation to AI Industrialization

For years, AI initiatives lived in innovation labs – contained within pilots, proofs of concept, or isolated departmental use cases. Infrastructure requirements were minimal because workloads were temporary and limited in scale.

That reality has fundamentally changed.

AI now operates inside mission-critical systems, powering core operations, customer experience platforms, cybersecurity defenses, supply chain optimization, real-time analytics engines, and generative copilots. These are not experimental environments; they are revenue-generating, risk-sensitive business functions.

This evolution demands a formalized enterprise AI infrastructure strategy.

Deloitte’s 2026 Tech Trends analysis highlights a critical inflection point: the challenge is no longer just training models, but managing the long-term economics and scalability of inference at enterprise scale. As AI becomes operational, compute demand shifts from sporadic experimentation to continuous, production-level execution.

Enterprises must now make deliberate decisions about workload placement, hybrid scaling models, cost governance, and performance optimization.

AI is no longer a tactical deployment.

It is a strategic compute architecture commitment.

Amazon’s $12B Move: A Blueprint for AI-Ready Data Centers

Amazon’s $12 billion investment in new AI-focused data center campuses in Louisiana is more than geographic expansion – it is a signal of where global AI infrastructure economics are heading.

As reported by CNBC and covered in depth by Bloomberg, Amazon is expanding its cloud and AI capacity through purpose-built, next-generation data center campuses engineered for high-density compute workloads. These facilities are designed to support advanced AI applications that demand massive processing power, ultra-fast networking, and scalable energy infrastructure.

This investment reflects:

- Long-term compute capacity expansion

- AI-optimized hardware integration

- Advanced cooling systems built for dense GPU clusters

- Infrastructure tailored for large-scale, real-time AI inference

This is what AI-ready data center architecture for enterprises looks like in practice.

Unlike traditional facilities designed for general enterprise IT, AI-optimized data centers are engineered specifically to handle:

- GPU-intensive model training

- High-bandwidth, low-latency interconnects

- Continuous inference workloads

- Distributed real-time data processing environments

Amazon’s strategic expansion reinforces a broader industry truth: AI leadership is no longer defined solely by software innovation – it is secured through physical infrastructure leadership.

Why Compute Architecture Is Now a Strategic Weapon

Modern AI systems, particularly generative AI, real-time analytics engines, and autonomous decision systems, demand far more than virtualized servers. They require a reimagined enterprise compute architecture for AI workloads. Let’s examine why.

1. AI Is Compute-Intensive by Design

Training advanced foundation models can require thousands of GPUs operating simultaneously. Even inference, once considered lightweight, now demands specialized accelerators for high-speed response times.

Organizations that rely on outdated compute environments face:

- Processing bottlenecks

- Latency spikes

- Escalating operational costs

- Infrastructure fragility

AI doesn’t tolerate inefficiency. It exposes it.

2. Real-Time AI Changes Infrastructure Requirements

AI is increasingly embedded in live environments:

- Fraud detection in financial services

- Predictive maintenance in manufacturing

- Personalized product recommendations in e-commerce

- AI copilots in enterprise workflows

These applications require infrastructure for real-time AI, not batch-processing systems designed for overnight analytics.

Real-time AI demands:

- Ultra-low latency networking

- Edge integration capabilities

- Distributed processing

- Seamless scalability

According to TechRepublic’s enterprise AI coverage, many organizations struggle to transition AI from pilot to production because their compute, storage, and networking layers weren’t designed for production-grade workloads, creating bottlenecks that delay or derail deployments.

3. Energy, Cooling, and Sustainability Are Now AI Variables

One often overlooked aspect of AI infrastructure is energy intensity. AI workloads consume significantly more power than traditional enterprise systems.

Modern AI-optimized facilities incorporate:

- Advanced liquid cooling systems

- High-density rack configurations

- Renewable energy integration

- Intelligent power distribution networks

Amazon’s Louisiana campuses are expected to include significant utility and infrastructure upgrades – including new electrical systems funded in partnership with Southwestern Electric Power Company and up to $400 million in water infrastructure improvements to support high-performance operations.

The AI era is also an energy era. Infrastructure planning must integrate sustainability, resilience, and cost efficiency simultaneously.

The Rise of a Formal Enterprise AI Infrastructure Strategy

What separates AI leaders from followers is not experimentation – it is architectural foresight. A strong enterprise AI infrastructure strategy includes:

- Strategic Capacity Planning

Forecasting compute requirements aligned with AI adoption roadmaps.

- Hybrid & Multi-Cloud Alignment

Balancing hyperscale cloud, on-premises systems, and edge environments.

- Cost Governance

Monitoring inference economics to prevent uncontrolled compute spend.

- Zero-Trust Security

Embedding zero-trust principles into AI workloads and data flows.

- Workload Placement Intelligence

Running the right workloads on the right platforms for performance and cost optimization.
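The cost-governance and workload-placement items above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a production scheduler: the platform names, hourly prices, and latency figures are hypothetical placeholders, and the rule is simply "cheapest platform that meets the workload's latency budget," followed by a check of total monthly spend against a hypothetical cap.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Platform:
    name: str
    cost_per_hour: float   # hypothetical USD per accelerator-hour
    p99_latency_ms: float  # hypothetical tail latency for a typical request


@dataclass(frozen=True)
class Workload:
    name: str
    latency_budget_ms: float  # maximum acceptable tail latency
    hours_per_month: float    # expected accelerator-hours per month


def place(workload: Workload, platforms: list[Platform]) -> Platform | None:
    """Pick the cheapest platform whose tail latency fits the workload's budget."""
    eligible = [p for p in platforms if p.p99_latency_ms <= workload.latency_budget_ms]
    return min(eligible, key=lambda p: p.cost_per_hour, default=None)


def monthly_cost(workload: Workload, platform: Platform) -> float:
    """Projected monthly spend for one workload on one platform."""
    return workload.hours_per_month * platform.cost_per_hour


# Hypothetical estate: all names and numbers are illustrative only.
platforms = [
    Platform("public-cloud-gpu", cost_per_hour=4.0, p99_latency_ms=120.0),
    Platform("on-prem-gpu", cost_per_hour=2.5, p99_latency_ms=80.0),
    Platform("edge-accelerator", cost_per_hour=6.0, p99_latency_ms=10.0),
]
fraud = Workload("fraud-scoring", latency_budget_ms=50.0, hours_per_month=720.0)
batch = Workload("nightly-batch", latency_budget_ms=500.0, hours_per_month=200.0)
MONTHLY_CAP_USD = 4_000.0  # hypothetical cost-governance limit

total = 0.0
for w in (fraud, batch):
    target = place(w, platforms)
    if target is None:
        print(f"{w.name}: no platform meets the latency budget")
        continue
    cost = monthly_cost(w, target)
    total += cost
    print(f"{w.name} -> {target.name}: ${cost:,.2f}/month")

print(f"Total ${total:,.2f}; over cap: {total > MONTHLY_CAP_USD}")
```

In this toy run, the latency-sensitive fraud-scoring job lands on the edge accelerator because nothing cheaper meets its 50 ms budget, the batch job goes to the cheapest eligible option, and the combined $4,820 exceeds the hypothetical $4,000 cap, which is the kind of signal a cost-governance process would surface.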

Without a structured strategy, enterprises face:

- Siloed AI deployments

- Fragmented compute environments

- Rising operational costs

- Limited scalability

Infrastructure must move from reactive to predictive.

Why Enterprises Are Turning to Specialized Partners

Designing, deploying, and optimizing AI infrastructure is not trivial. It requires deep expertise across hardware, orchestration, networking, and AI deployment pipelines.

This is why organizations increasingly collaborate with experienced:

- AI infrastructure development companies

- Enterprise AI development companies

These partners help enterprises:

- Architect scalable compute frameworks

- Optimize GPU utilization

- Design resilient multi-cloud ecosystems

- Integrate AI seamlessly into enterprise environments

Infrastructure transformation is complex, but strategic partnerships reduce risk and accelerate deployment timelines.

The Economic Implications of AI Data Center Expansion

Large-scale AI infrastructure investments are signaling a structural transformation in the global economy. Compute capacity is becoming a strategic asset influencing energy markets, semiconductor supply chains, regional talent hubs, and capital allocation priorities.

Enterprises are no longer simply purchasing software licenses; they are competing for sustained access to scalable compute ecosystems. As AI adoption accelerates, infrastructure availability, performance efficiency, and cost governance increasingly determine which organizations can innovate reliably at scale.

The deeper shift is this: AI infrastructure is becoming industrial infrastructure.

Just as railroads powered manufacturing growth and broadband enabled digital commerce, AI-ready compute environments now form the backbone of competitive enterprise ecosystems. Organizations that recognize infrastructure as strategic capital, not operational overhead, will define the next decade of market leadership.

What Enterprise Leaders Must Do Now

Infrastructure decisions can no longer be deferred to IT roadmaps. They must sit at the center of enterprise AI strategy. To remain competitive in the Infrastructure Era of AI, leaders should:

1. Conduct a Compute Readiness Assessment

Identify architectural bottlenecks, GPU constraints, latency risks, and cost inefficiencies that could limit AI scale.

2. Formalize an Enterprise AI Infrastructure Strategy

Align infrastructure investment with long-term AI adoption plans, ensuring compute capacity grows alongside business ambition.

3. Redesign Enterprise Compute Architecture for AI Workloads

Move beyond retrofitting legacy systems. Build environments purpose-designed for training, inference, and hybrid scaling.

4. Build Dedicated Infrastructure for Real-Time AI

Enable low-latency, production-grade AI systems that operate within mission-critical workflows.

5. Partner with AI Infrastructure Experts

Work with specialists who can design scalable compute environments and ensure your infrastructure supports sustainable AI growth.

The organizations that act decisively will turn infrastructure into a growth multiplier. Those who delay will find their AI ambitions constrained by architectural limits.

The New Definition of AI Leadership

AI leadership in 2026 is no longer measured by isolated model innovation, but by the strength and scalability of enterprise compute foundations. As AI shifts from experimentation to industrialization, competitive advantage depends on a well-defined enterprise AI infrastructure strategy and a purpose-built enterprise compute architecture for AI workloads. Organizations that invest in AI-ready data center architecture and dedicated infrastructure for real-time AI are better positioned to scale efficiently, control costs, and sustain performance.

In this new era, infrastructure is not operational support – it is strategic capital. Market leaders will be those who align compute capacity with long-term business vision. Aniter, an enterprise AI development company, helps organizations design, deploy, and optimize scalable AI systems that deliver resilient, production-grade performance and measurable business impact.

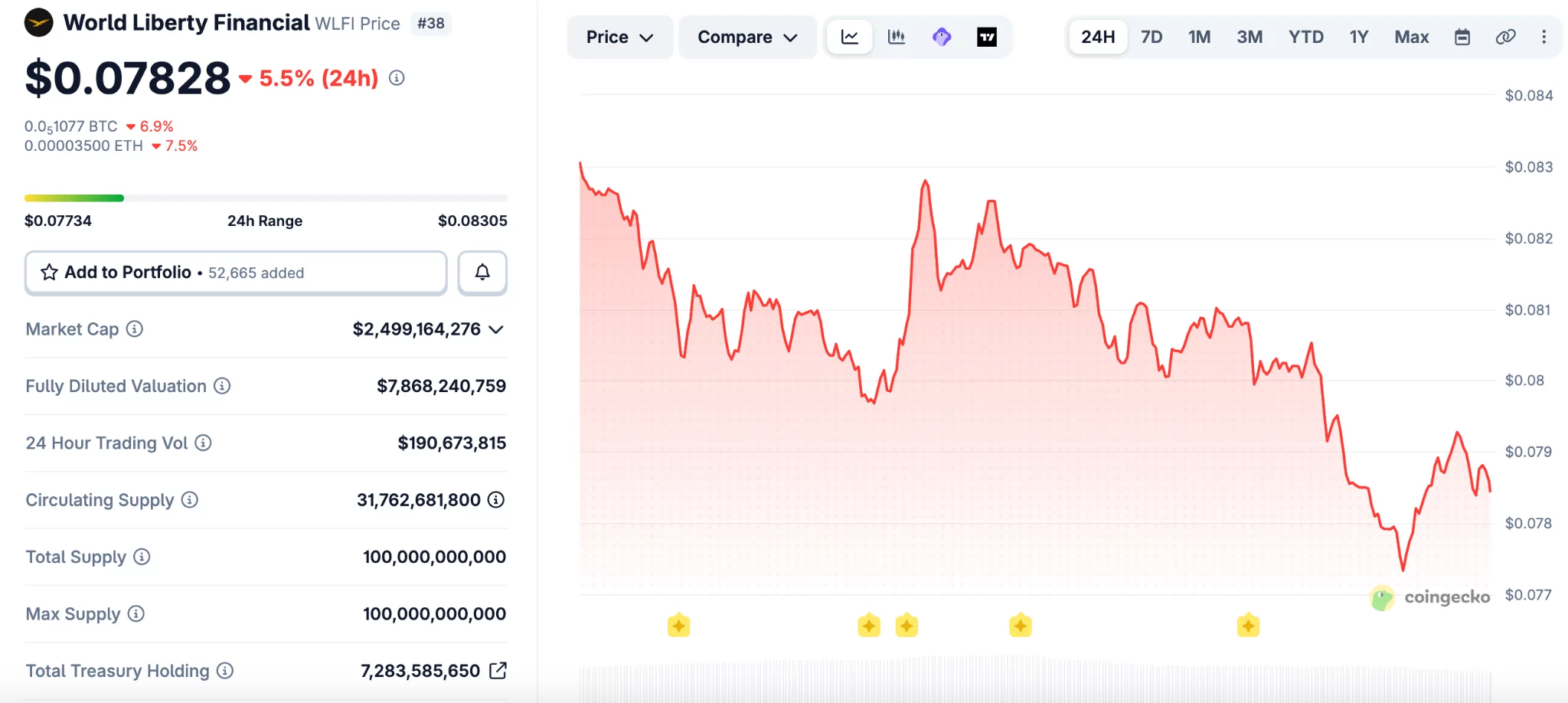

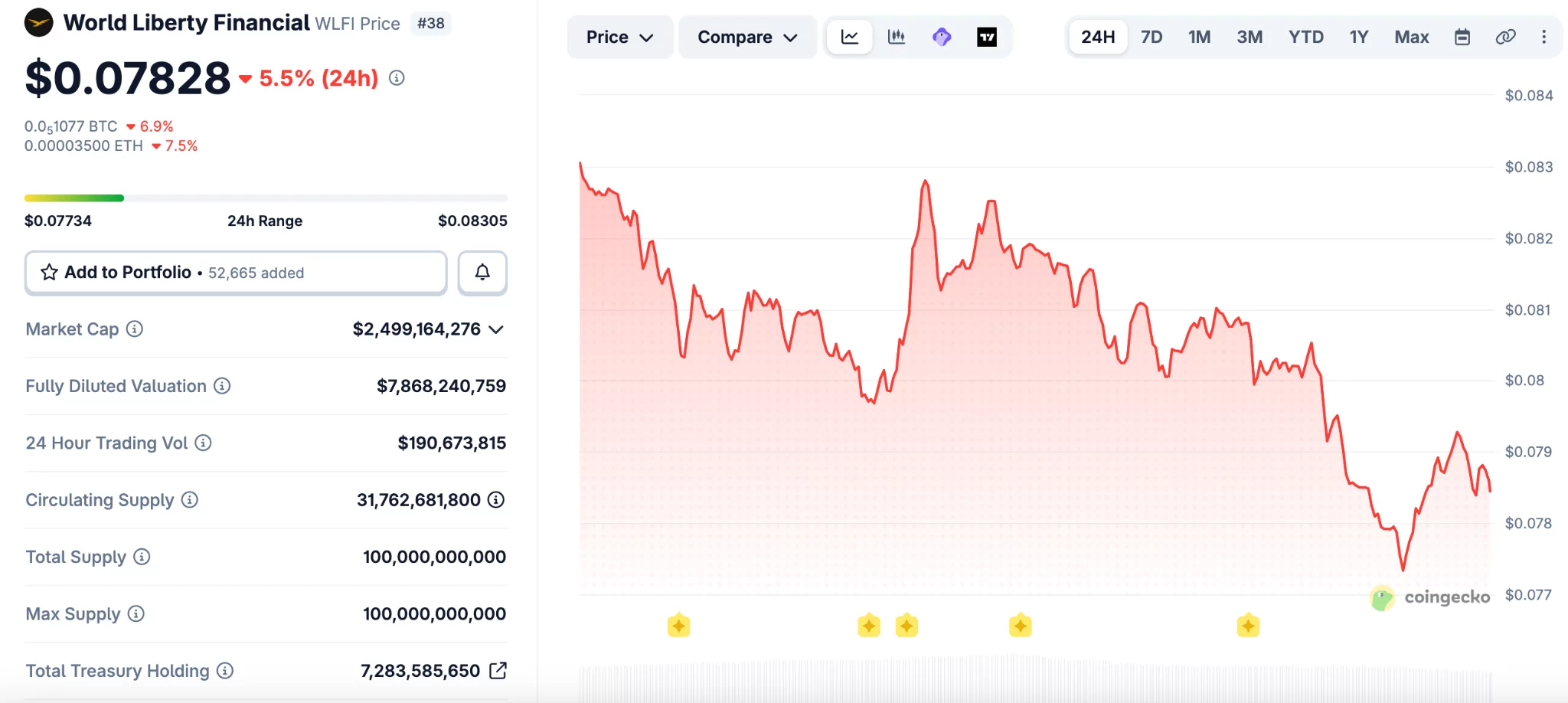

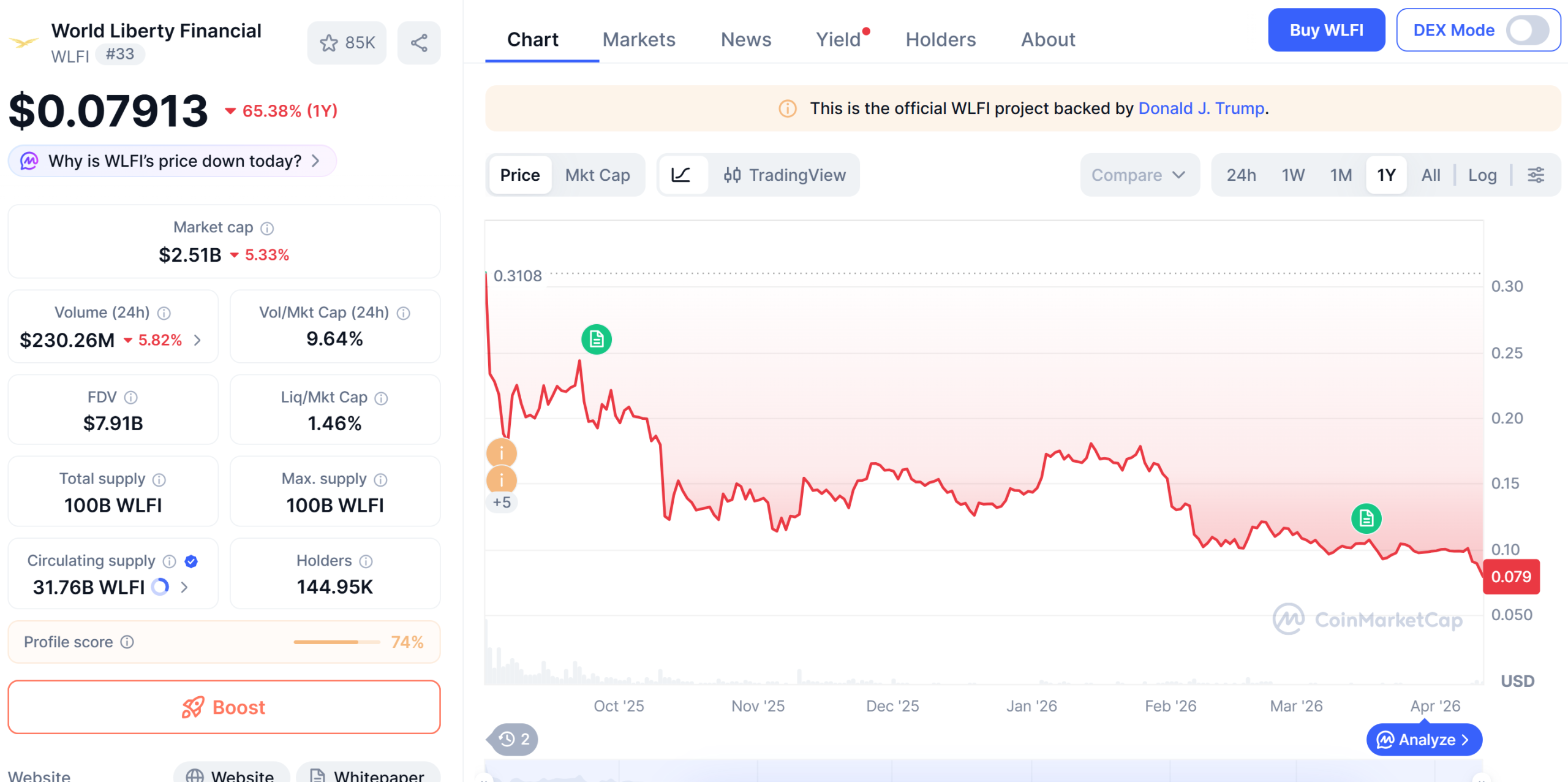

WLFI drops to record low after token-backed loan draws scrutiny

WLFI (WLFI) fell to a new all-time low on Saturday after onchain data showed wallets linked to World Liberty Financial used large token holdings to borrow stablecoins.

Summary

- WLFI fell to a record low after a self-backed loan raised fresh market risk questions.

- Onchain data showed linked wallets used 5 billion WLFI tokens to borrow stablecoins on Dolomite.

- World Liberty said its positions remain safe and framed the lending move as a yield strategy.

The move added pressure to the Trump-linked project as traders weighed the risk tied to using its own token as collateral.

WLFI dropped to about $0.077, its lowest level on record, before trading near $0.079. The token is now down 76% from its peak of $0.33 reached in September, based on CoinGecko data.

The decline followed reports that wallets tied to World Liberty Financial deposited about 5 billion WLFI tokens on Dolomite. The same position was then used to borrow $75 million in USD1 and USDC.

Arkham data showed that more than $40 million of the borrowed funds later moved to Coinbase Prime. That transfer drew more attention to the project’s financing activity and the size of its exposure.

The market reaction was swift because WLFI is not viewed as a deeply liquid asset. A large collateral position tied to price swings can increase pressure if the token falls further.
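To make the lenders' concern concrete, the arithmetic below computes the loan-to-value (LTV) ratio implied by the reported figures (about 5 billion WLFI posted, $75 million borrowed, price near $0.079) and the price at which the position would reach a liquidation threshold. Dolomite's actual liquidation parameter for WLFI is not given in the report, so the 50% threshold used here is a hypothetical assumption; the sketch also ignores accrued interest, liquidation penalties, and slippage from selling into thin liquidity.

```python
def loan_to_value(debt_usd: float, collateral_tokens: float, price_usd: float) -> float:
    """Debt as a fraction of the collateral's current market value."""
    return debt_usd / (collateral_tokens * price_usd)


def liquidation_price(debt_usd: float, collateral_tokens: float, threshold: float) -> float:
    """Token price at which debt / collateral value reaches the liquidation threshold."""
    return debt_usd / (collateral_tokens * threshold)


DEBT_USD = 75_000_000            # reported USD1 + USDC borrowed
COLLATERAL_WLFI = 5_000_000_000  # reported WLFI deposited on Dolomite
PRICE_USD = 0.079                # reported trading price after the record low
LIQ_THRESHOLD = 0.50             # HYPOTHETICAL: the actual Dolomite parameter is not reported

ltv = loan_to_value(DEBT_USD, COLLATERAL_WLFI, PRICE_USD)
liq_price = liquidation_price(DEBT_USD, COLLATERAL_WLFI, LIQ_THRESHOLD)
print(f"Collateral value: ${COLLATERAL_WLFI * PRICE_USD:,.0f}")
print(f"LTV: {ltv:.1%}")
print(f"Liquidation price at a {LIQ_THRESHOLD:.0%} threshold: ${liq_price:.4f}")
print(f"Further decline needed: {1 - liq_price / PRICE_USD:.0%}")
```

Under that assumed threshold, the roughly $395 million of collateral carries an LTV near 19%, and liquidation would require a further drop of about 62%. A stricter (lower) threshold or additional borrowing would raise the liquidation price and shrink that cushion.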

DeFi users on X said the structure could create risk for lenders if WLFI moves closer to liquidation levels. Some pointed to the token’s high fully diluted valuation and limited trading depth as a weak point.

“WLFI has almost a $10 billion FDV, but it is not an extremely liquid asset,” wrote one user. “So imagine what would happen if 5% of WLFI’s total supply would suddenly need to be sold to liquidate the position.”

Another user compared the setup to borrowing cash against self-created value. The user said,

“It’s the financial equivalent of printing casino chips, borrowing cash against them, and telling everyone else not to panic because the house still believes in the chips.”

Dolomite remains a smaller player in DeFi lending. DefiLlama ranks it 19th among lending platforms by total value locked, which put the size of the WLFI-linked position into sharper focus.

World Liberty defends the strategy

World Liberty Financial responded on social media and said its positions remain well above liquidation thresholds. The project described itself as an “anchor borrower” and said the strategy supports yield generation.

The team wrote,

“Everyday users are earning outsized stablecoin yields right now — at a time when traditional markets are offering very little.” It added, “That’s the whole point.”

The project also said it plans to introduce a governance proposal for early retail holders. The proposal would replace immediate token access with a phased vesting schedule, subject to a community vote.

Disclosure: This article does not represent investment advice. The content and materials featured on this page are for educational purposes only.

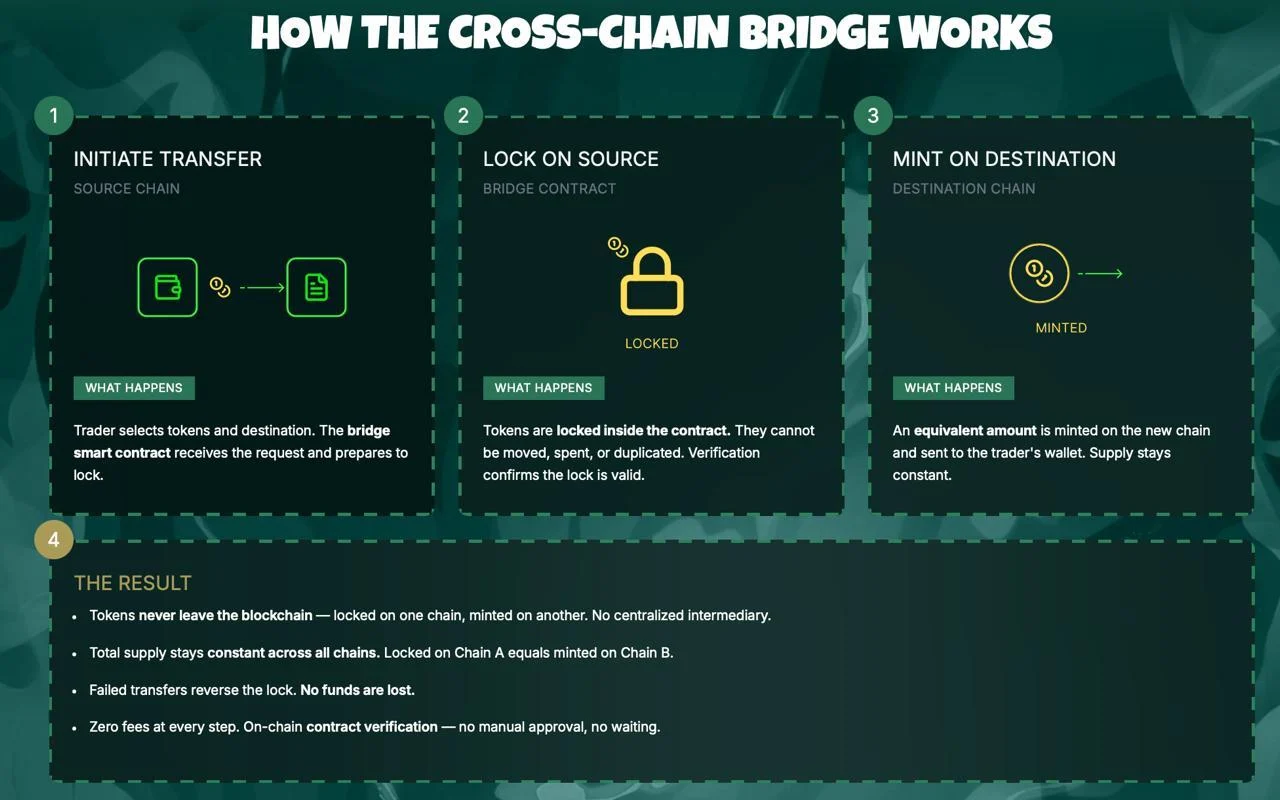

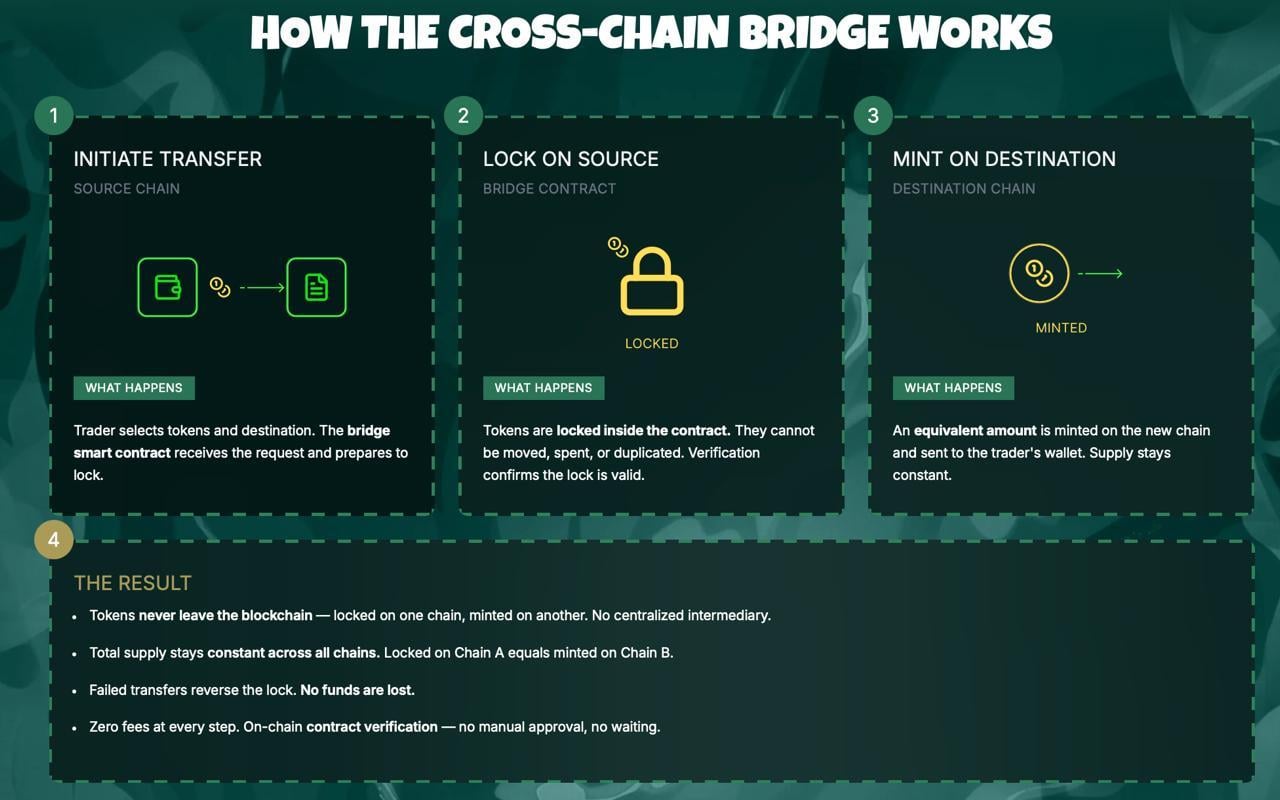

Aethir Contains Bridge Hack While Losses Stay Below $90K

Aethir said it remains fully operational after containing an attack on its ATH bridge contracts.

Summary

- Aethir said it contained the ATH bridge exploit quickly and kept total user losses below $90,000.

- The company said Ethereum ATH supply stayed intact while affected contracts were disconnected to stop losses.

- PeckShield first estimated higher losses, while Aethir said compensation details will follow next week.

The company said the exploit did not affect the main ATH supply on Ethereum, while user losses stayed below $90,000.

Aethir said it detected a malicious attack targeting ATH bridge contracts that connect Ethereum with other chains. The company said it disconnected all affected contracts soon after finding the issue and stopped further damage.

The team added that the ETH-ARB bridge on Squid was not affected during the incident. It also said the main ATH supply on Ethereum remains intact, which helped prevent wider disruption across the network.

Aethir said it will share a full compensation plan next week. The company also said it is working with authorities and exchange partners to trace the attacker and block related funds.

“A full attacker wallet list will be posted in Discord as we monitor the funds,” Aethir said in its update.

It added that a detailed memo will explain what happened, which users were affected, and how compensation will work.

Aethir credited several exchanges for acting quickly after the exploit. The company named Binance, Upbit, Bithumb, and HTX among the platforms that blacklisted identified wallets tied to the incident.

The project also thanked ZeroShadow for helping with analysis during the response. Aethir said that early action from partners helped limit the scope of the losses and support the ongoing investigation.

PeckShield had flagged the exploit a day earlier and initially estimated losses at about $400,000. The blockchain security firm also said the attacker moved funds from BNB Chain to Tron through several addresses.

That early estimate differed from Aethir’s latest figure of under $90,000 in user losses. The gap shifts attention to fund tracing and the final accounting of the incident.

Crypto attacks continue to pressure the market

The Aethir case comes as crypto security breaches keep hitting the market. PeckShield recently said losses from 20 security incidents reached about $52 million in March, nearly double the February total.

The firm also pointed to a growing pattern where one exploit can spread stress across linked DeFi platforms. Those events can weaken liquidity, create bad debt, and strain lending markets beyond the first target.

PeckShield cited ResolvLabs and Venus Protocol as recent examples of wider fallout after exploits. It also noted targeted attacks on individuals, including a multimillion-dollar theft tied to social engineering on Kraken. The trend has carried into April as other platforms deal with new attacks.

Binance New Listing Calendar Heats Up as Strategy Holds 766,970 BTC and One Presale Fills Fast

Strategy now holds 766,970 BTC worth over $54 billion after buying roughly 45,000 BTC in the past 30 days, showing that the largest corporate holder is not slowing down even with BTC above $71,000.

The Binance new listing calendar is where the next round of returns gets decided, and the presale filling fastest right now is the one with a confirmed spot on that calendar.

Pepeto is approaching its listing date with more than $8.87 million raised and the cofounder of the original Pepe coin behind it, and wallets are rushing to lock in presale entries before stages close.

Strategy bought roughly 45,000 BTC over the past month, pushing its total to 766,970 BTC per CoinDesk. The company launched its STRC offering to fund more purchases, and the position now tops $54 billion.

BTC cleared $72,700 after the ceasefire rally that wiped out $600 million in shorts, per Bloomberg, and the next confirmed Binance new listing is where presale holders turn floor entries into returns that BTC at current prices cannot match.

How BNB, SOL, and the Next Binance New Listing Compare for Returns

Pepeto: What Happens When a Working Exchange Hits the Binance New Listing Calendar

Most tokens land on Binance with a whitepaper and a promise. Pepeto lands with a finished exchange that already runs live trades, and that difference is why the Binance new listing for this token is getting more attention than any other presale this cycle.

Zero-fee swaps keep positions whole. The bridge moves tokens across chains without charging a cent. The contract scanner catches rug pulls before a dollar goes in. All three tools are already live, not coming soon.

The presale hit $8.87 million during extreme fear while the rest of the market froze, and the Pepe cofounder who took 420 trillion tokens to $11 billion with nothing behind it is building a real exchange this time. Every contract cleared a SolidProof audit, 186% APY staking grows every position, and analysts model 100x to 300x starting at the $0.0000001863 entry.

The presale fills faster each round because the wallets inside know what listing day does: it replaces this price permanently, and the return belongs only to the wallets that got in while the number still existed. The Binance new listing date is the clock, and every hour closer is one hour less before the entry is gone.

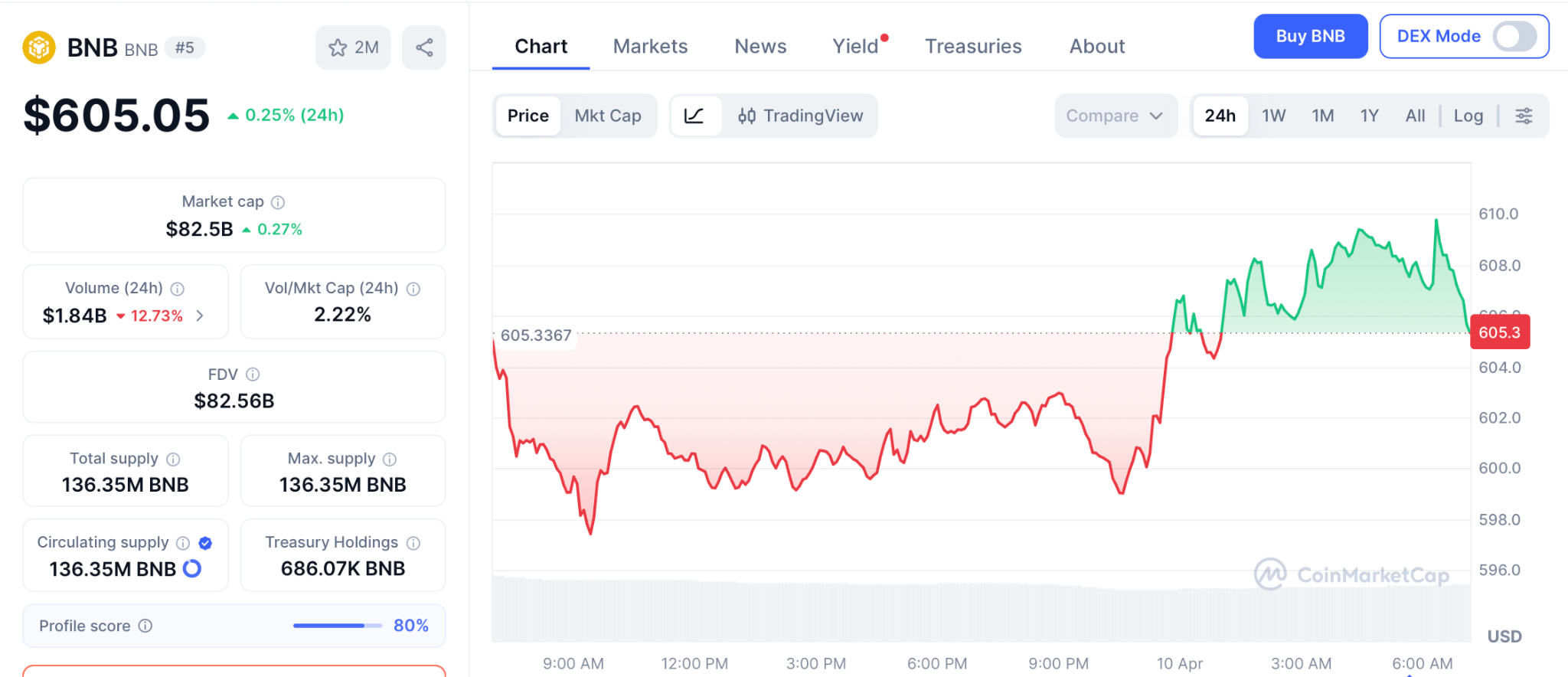

BNB: Exchange Token Holds $605 but Upside Stays Measured

BNB holds near $605 with quarterly token burns and Binance volume keeping demand steady per CoinMarketCap.

New listings on Binance historically lift BNB as traders move capital onto the platform, and the upcoming Pepeto listing adds another event to the calendar.

BNB offers stability, but from $605 a move to $900 is a gain of roughly 50%, far from the kind of return a presale floor delivers when the Binance new listing opens the gap between entry and market price.

Solana: SOL Sits at $84 With Strong Fundamentals but Limited Ceiling

SOL trades near $84 after commodity classification cleared regulatory clouds per CoinGecko. Nine ETF filings and the Alpenglow upgrade add long term weight, and institutional ownership of SOL products sits at 48.8%, the highest of any crypto fund.

CME Group also plans SUI and AVAX futures for May, showing derivatives markets are expanding beyond BTC and ETH. SOL is strong, but from $84 even a move to $300 delivers about 3.5x over months, while presale entries capture the spread between presale and listing prices, where the widest returns get built.
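The BNB and SOL comparisons mix two units, a percentage gain for BNB and a price multiple for SOL. The short sketch below puts both on the same footing using the entry and target prices quoted in this release; the targets are the release's own scenarios, not forecasts.

```python
from __future__ import annotations


def upside(entry: float, target: float) -> tuple[float, float]:
    """Return (percentage gain, price multiple) for a move from entry to target."""
    multiple = target / entry
    return (multiple - 1) * 100, multiple


# Entry and target prices as quoted in the release.
scenarios = {"BNB": (605.0, 900.0), "SOL": (84.0, 300.0)}

for asset, (entry, target) in scenarios.items():
    pct, mult = upside(entry, target)
    print(f"{asset}: ${entry:,.0f} -> ${target:,.0f} = {pct:.1f}% gain ({mult:.2f}x)")
```

Expressed in both units, the "roughly 50%" BNB case is about a 1.49x multiple, and the "3.5x" SOL case is about a 257% gain.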

Conclusion

Strategy’s 766,970 BTC signals long-term confidence in digital assets, but the wallets watching the Binance new listing calendar are looking past large caps toward presale entries with real weight. Pepeto has the live exchange, the capital, and the confirmed listing to back it.

The last stage sold out ahead of schedule, and this one fills while these words load. The presale price stops existing the moment the Binance new listing date arrives, and the returns belong only to the wallets that got in while the door was still open.


FAQs

What is the most anticipated Binance new listing in 2026?

Pepeto leads with $8.87 million raised, a live exchange with zero fee trading, and a confirmed listing date. The Pepe cofounder and SolidProof audit give it the strongest profile this cycle.

Can a Binance new listing deliver bigger returns than holding BNB or SOL?

BNB at $605 offers roughly 50% upside to $900, and SOL at $84 targets about 3.5x at $300. Presale entries at floor price carry the listing gap, where the widest returns in every cycle get built.

Disclaimer: This is a Press Release provided by a third party who is responsible for the content. Please conduct your own research before taking any action based on the content.

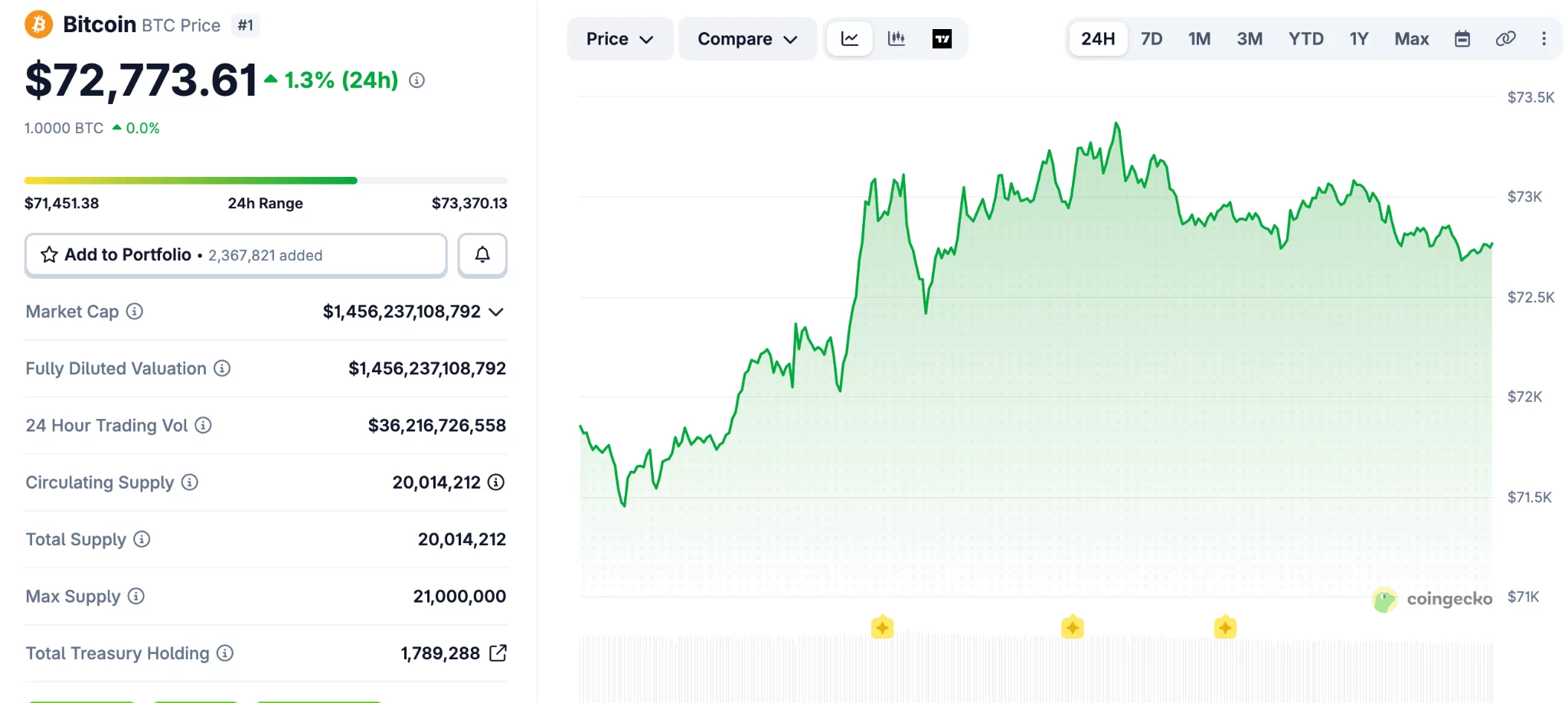

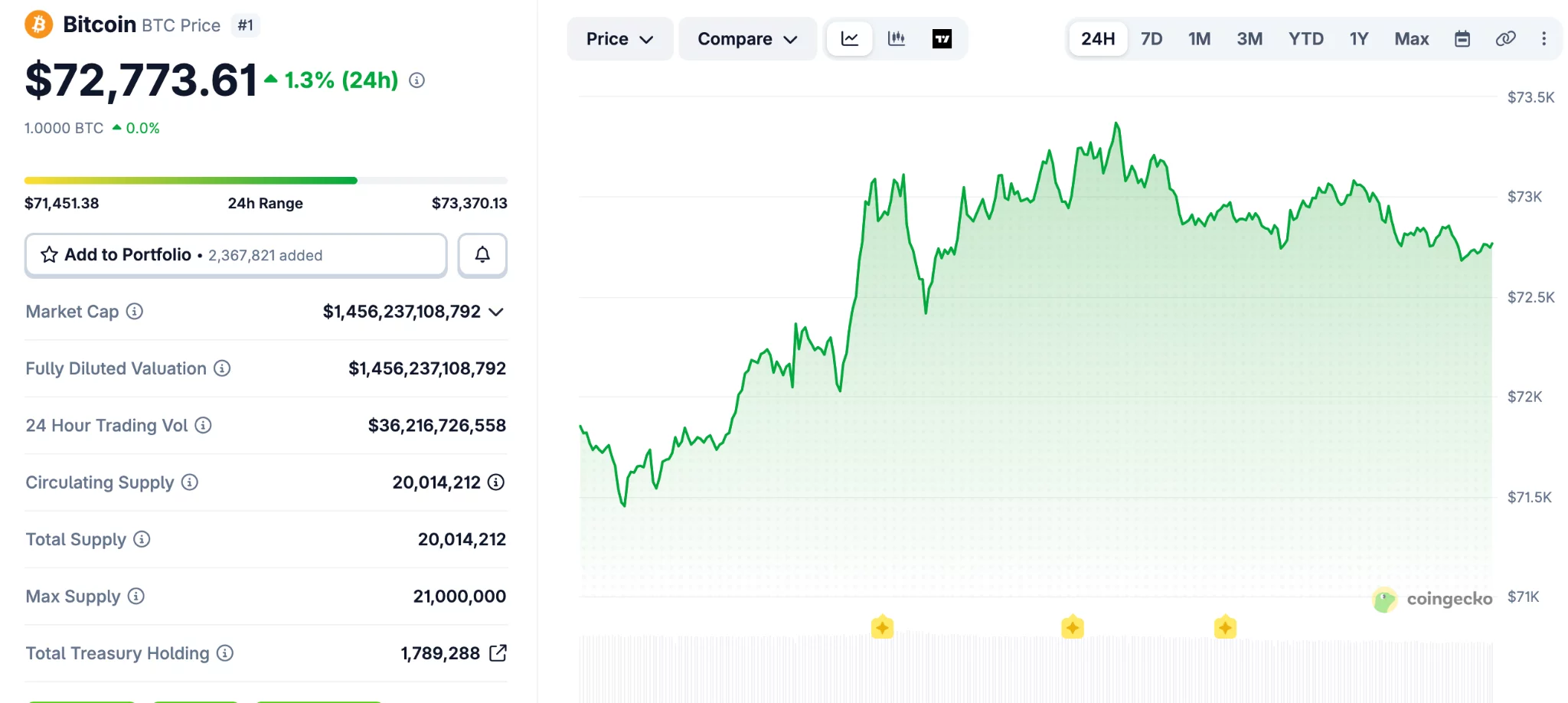

Bitcoin nears $73K again as ETH and HYPE push higher

Bitcoin (BTC) extended its upward move over the past 24 hours and reached its highest level in three weeks.

Summary

- Bitcoin climbed above $73,000 as traders weighed cease-fire updates and stronger March CPI data yesterday.

- Ethereum moved back above $2,200, while HYPE and DASH posted gains across the altcoin market.

- RAVE surged 100% in one day and entered the top 100 tokens.

The broader crypto market also moved higher, with Ethereum (ETH), HYPE (HYPE), and RAVE among the tokens posting gains.

Bitcoin traded in a tight range between $66,000 and $67,000 over the weekend. That changed on Monday when the asset moved above $70,000 after reports said the United States and Iran had started talks.

The price later slipped below $68,000 after follow-up reports challenged that claim. Bitcoin then turned higher again on Tuesday after both sides announced a “two-week cease-fire,” which supported market sentiment.

The asset kept climbing even after March CPI data showed stronger inflation. It reached $73,500 earlier today, its highest level since March 18, before easing slightly below $73,000.

Bitcoin’s market value rose to $1.455 trillion, according to CoinGecko data. Its share of the total crypto market also increased over the past week and now stands above 57%.

Ethereum, HYPE, and RAVE lead altcoin gains

Ethereum moved back above the $2,200 mark after a 2.3% daily rise. BNB also traded higher and moved past $600, while HYPE climbed more than 5% and reclaimed $40.

Most large-cap altcoins followed the same direction, though gains remained moderate. A few tokens, including WLFI, XMR, and CC, posted small losses during the session.

RAVE recorded the strongest move among the top gainers. The token jumped 100% in one day and extended its weekly gain to about 700%, which pushed it into the top 100 assets by market value.

DASH also posted a sharp advance and moved above $45 after a 13% gain. SIREN added 10% and returned to the $0.80 level.

The total crypto market value increased by more than $100 billion from last week. It stood at $2.530 trillion at press time, showing broader strength across the sector.
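Bitcoin's market share follows directly from the two figures in this report: its market value divided by the total crypto market value. A minimal check, assuming both snapshots were taken at roughly the same time:

```python
def dominance(asset_cap_usd: float, total_cap_usd: float) -> float:
    """Share of total crypto market value held by a single asset."""
    return asset_cap_usd / total_cap_usd


btc_cap = 1.455e12    # Bitcoin market value reported above
total_cap = 2.530e12  # total crypto market value at press time
weekly_gain = 100e9   # "more than $100 billion" increase from last week

print(f"BTC dominance: {dominance(btc_cap, total_cap):.1%}")
print(f"Implied total last week: below ${(total_cap - weekly_gain) / 1e12:.2f} trillion")
```

The result, about 57.5%, matches the "above 57%" share cited earlier, and the weekly increase implies last week's total sat below roughly $2.43 trillion.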

Disclosure: This article does not represent investment advice. The content and materials featured on this page are for educational purposes only.

Iran Bitcoin toll report raises questions over oil ship payments

Reports that Iran may accept crypto for oil tanker tolls in the Strait of Hormuz have sparked debate across the digital asset market.

Summary

- Reports on Iran’s possible crypto tolls for oil tankers have split opinion across Bitcoin and stablecoin circles.

- Analysts said stablecoins face freeze risks, while Bitcoin supporters called BTC harder to block or control.

- Galaxy’s Alex Thorn said tanker payments may use Bitcoin addresses, not Lightning, due to size limits.

The discussion followed a Financial Times report that linked the proposal to Iran’s efforts to reduce exposure to US sanctions.

Market participants have focused on one question: whether Bitcoin would play a real role in such payments. Conflicting claims have since pointed to stablecoins or Chinese yuan as other possible options.

The latest debate started after reports said Iran was considering Bitcoin payments for ships crossing the Strait of Hormuz. The waterway remains one of the world’s busiest energy routes, which has pushed the topic beyond crypto circles and into wider market discussions.

Alex Thorn, head of firmwide research at Galaxy, said later reports did not fully support the original Bitcoin claim. He said some accounts suggested the tolls could instead be settled in stablecoins or Chinese yuan, which left the payment method unclear.

That uncertainty has driven much of the reaction from Bitcoin supporters and market analysts. With no confirmed payment framework in place, traders and industry figures have treated the story as a developing issue rather than a settled policy.

The lack of an official, detailed public plan from Iranian authorities has also left room for doubt. For now, the crypto market is responding more to reports and commentary than to a final rule.

Bitcoin and stablecoins draw different arguments

Bitcoin supporters argued that BTC would be harder for outside parties to freeze or block. Justin Bechler said, “USDT and USDC include built-in blacklist functions at the smart contract level,” adding that issuers can freeze funds when addresses are flagged.

He also said, “Bitcoin has no issuer, no compliance officer to pressure, and no freeze function.” That argument has pushed some market participants to present Bitcoin as a more resilient option for cross-border settlement under sanctions pressure.

Still, that view has not settled the debate. Stablecoins remain widely used in global crypto payments because they reduce price swings, and that may still matter for any large commercial transaction tied to oil shipping.

The discussion also reflects the difference between theory and practice. A payment method may look strong on paper, but large state-linked payments depend on speed, scale, compliance risk, and operational ease.

Payment size and logistics remain key issues

Thorn estimated that tanker tolls could range from $200,000 to $2 million per ship. That size has raised doubts about whether the Lightning Network would be the main rail, even though some early reporting suggested a payment could be completed within seconds.

He said the more likely setup would involve Iran providing a QR code or a Bitcoin address after approving a ship’s passage. That method would avoid the limits that can affect very large Lightning payments.

Thorn also noted that the largest known Lightning transaction to date was about $1 million. That figure matters because some tanker tolls may sit above that level, which could make direct onchain settlement or pre-arranged transfers more practical.
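Thorn's suggested setup, a Bitcoin address or QR code issued once passage is approved, maps onto the standard BIP 21 `bitcoin:` payment URI, which wallets commonly render as a QR code. The sketch below is illustrative only and does not describe any confirmed Iranian process: the address is a hypothetical placeholder, the $73,000 BTC price is an assumption, and the routing rule is a simplification of Thorn's point that tolls above roughly $1 million exceed the largest known Lightning payment.

```python
from decimal import ROUND_DOWN, Decimal
from urllib.parse import quote

SATOSHI = Decimal("0.00000001")
LARGEST_KNOWN_LN_PAYMENT_USD = Decimal("1000000")  # approximate figure cited by Thorn


def toll_in_btc(toll_usd: Decimal, btc_price_usd: Decimal) -> Decimal:
    """Convert a USD toll to BTC, truncated to whole satoshis."""
    return (toll_usd / btc_price_usd).quantize(SATOSHI, rounding=ROUND_DOWN)


def bip21_uri(address: str, amount_btc: Decimal, label: str) -> str:
    """Build a BIP 21 'bitcoin:' URI; the amount field is expressed in BTC."""
    return f"bitcoin:{address}?amount={amount_btc}&label={quote(label)}"


def suggested_rail(toll_usd: Decimal) -> str:
    """Simplified rule: payments above the largest known Lightning payment go onchain."""
    return "onchain" if toll_usd > LARGEST_KNOWN_LN_PAYMENT_USD else "lightning-or-onchain"


BTC_PRICE = Decimal("73000")               # assumed spot price
ADDRESS = "bc1q-hypothetical-placeholder"  # HYPOTHETICAL, not a valid address

for toll in (Decimal("200000"), Decimal("2000000")):  # Thorn's estimated range
    amount = toll_in_btc(toll, BTC_PRICE)
    print(suggested_rail(toll), bip21_uri(ADDRESS, amount, "Transit toll"))
```

At the assumed price, the top of Thorn's range works out to about 27.4 BTC, well above the roughly $1 million Lightning record, which is why a plain onchain address is the more plausible rail for large tolls.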

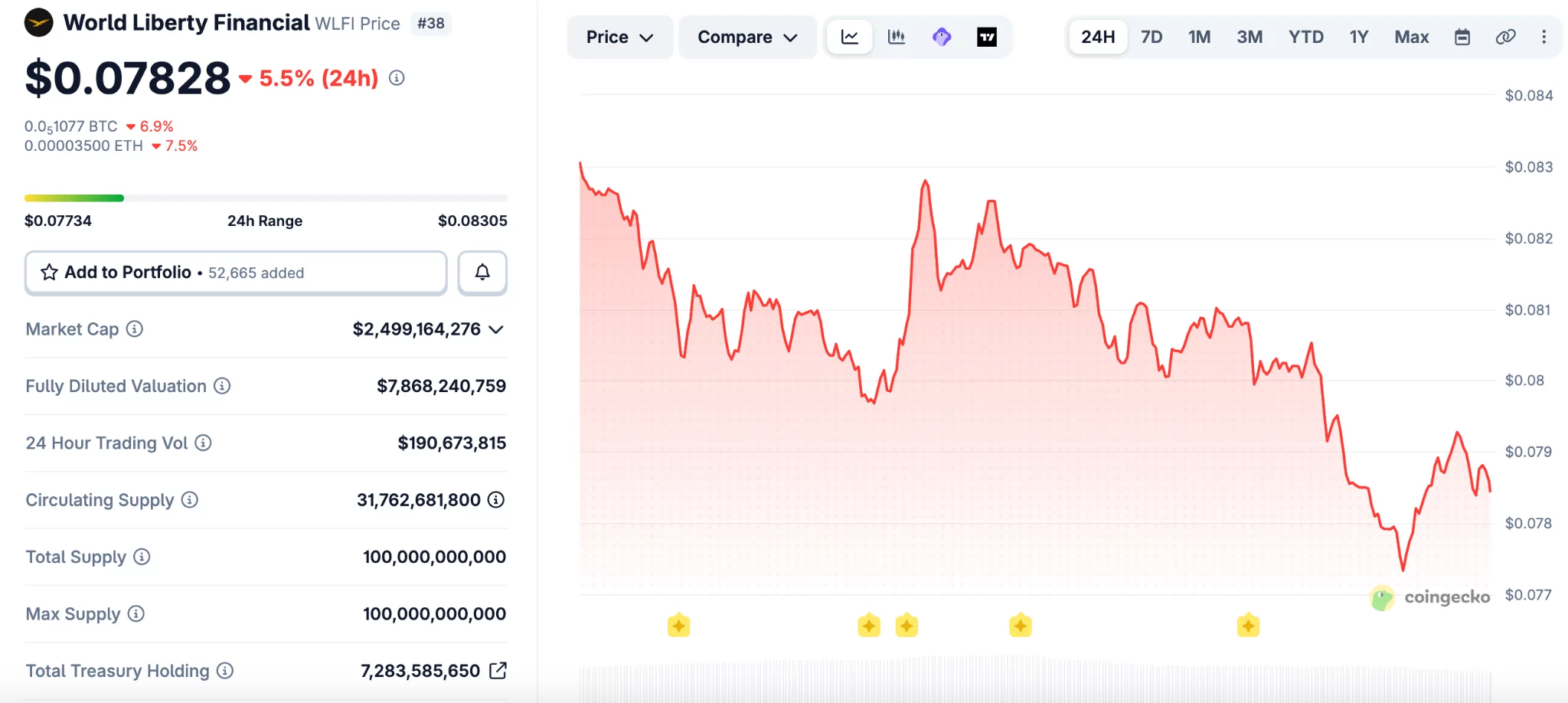

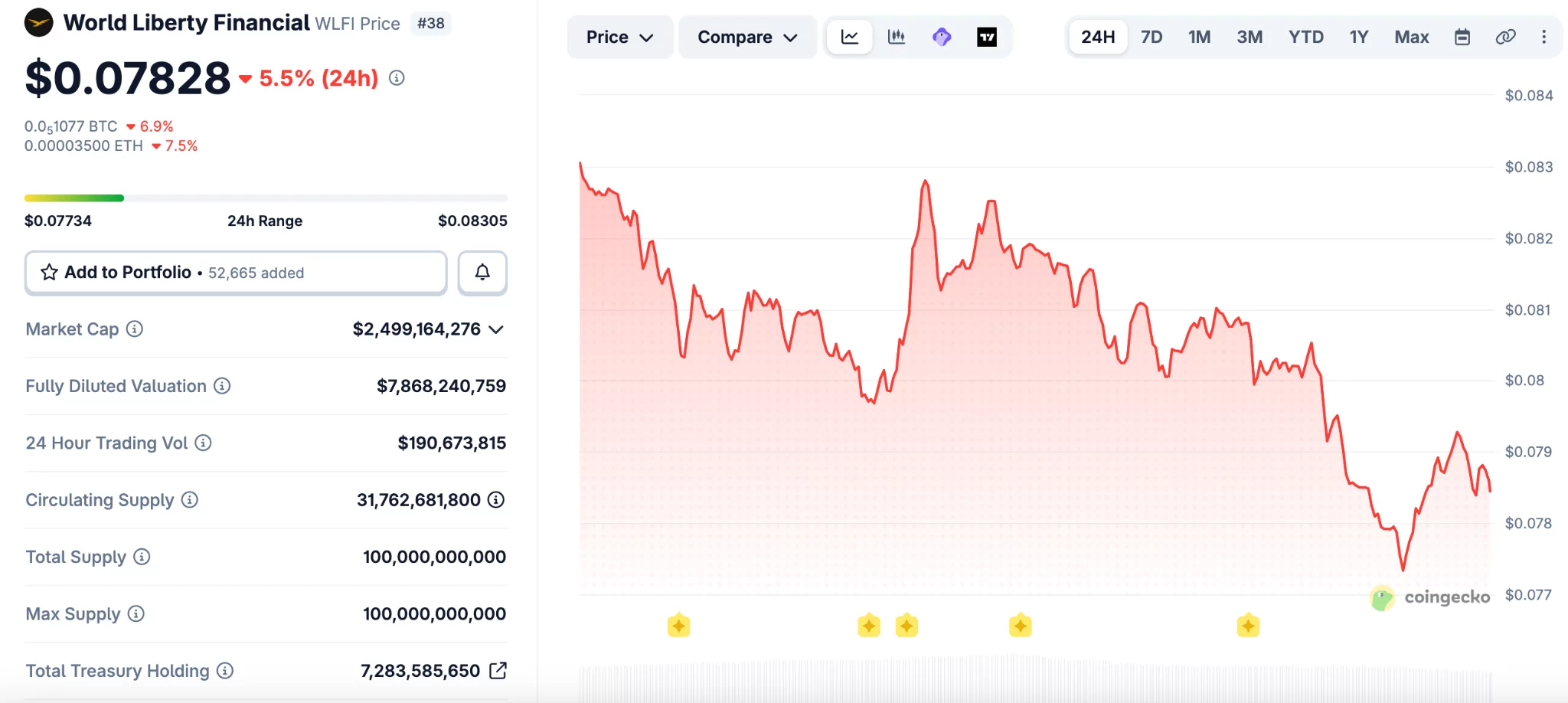

WLFI Drops to Record Low After Token-Backed Borrowing Raises Risk Concerns

WLFI, the native token of the Donald Trump–backed World Liberty Financial platform, sank to an all-time low on Saturday as crypto users expressed concerns after revelations that the project used a large amount of its own tokens to take out loans.

The token hit a new low of around $0.07714 on Saturday, down 83% from its peak of $0.46 reached last September, according to data from CoinMarketCap. WLFI is currently at $0.07879, down by 4.66% over the past day.
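The two WLFI reports in this feed quote different peaks (CoinGecko's $0.33 in the earlier piece, CoinMarketCap's $0.46 here), which is why one says the token is down 76% and the other 83%. The drawdown is simply one minus the current price over the peak; using each report's own figures:

```python
def drawdown(peak: float, price: float) -> float:
    """Fractional decline from the peak to the given price."""
    return 1 - price / peak


# (source, peak, price) as quoted in the two WLFI reports in this feed
quotes = [
    ("CoinGecko", 0.33, 0.079),
    ("CoinMarketCap", 0.46, 0.07714),
]
for source, peak, price in quotes:
    print(f"{source}: ${peak} -> ${price} = down {drawdown(peak, price):.0%}")
```

Both percentages are internally consistent; the gap comes from the choice of data provider and peak, not from a calculation error.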

The downturn came after it was revealed that wallets linked to World Liberty Financial deployed substantial WLFI holdings as collateral on Dolomite, a decentralized lending platform co-founded by the project’s chief technology officer, Corey Caplan.

Onchain data from Arkham shows that a wallet linked to World Liberty Financial deposited around 5 billion WLFI tokens on Dolomite. The wallet then used the tokens as collateral to borrow $75 million in USD1 and USDC stablecoins, later transferring more than $40 million to Coinbase Prime.


WLFI-backed loan position sparks concerns

The large collateral position has raised concerns among DeFi analysts, who warn it could create risks for lenders on Dolomite if WLFI’s price falls and approaches liquidation levels.
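A rough way to gauge how far WLFI would have to fall before the position is at risk is to compare collateral value with debt. This Python sketch uses the reported figures (5 billion WLFI, $75 million borrowed, a price of about $0.07879) and a hypothetical minimum collateral ratio of 1.15. Dolomite's actual liquidation parameters for WLFI are not given in the reporting, and the simplified model ignores accrued interest and any repayments.

```python
def collateral_ratio(collateral_tokens: float, price_usd: float, debt_usd: float) -> float:
    """Collateral value divided by outstanding debt (higher is safer)."""
    return collateral_tokens * price_usd / debt_usd


def liquidation_price(collateral_tokens: float, debt_usd: float, min_ratio: float) -> float:
    """Token price at which the collateral ratio falls to min_ratio.

    Simplified model: debt is fixed (no interest accrual, no repayments).
    """
    return min_ratio * debt_usd / collateral_tokens


COLLATERAL_WLFI = 5_000_000_000  # reported deposit on Dolomite
DEBT_USD = 75_000_000            # reported USD1 + USDC borrowed
PRICE_USD = 0.07879              # reported WLFI price at time of writing
MIN_RATIO = 1.15                 # HYPOTHETICAL threshold, not Dolomite's published value

ratio = collateral_ratio(COLLATERAL_WLFI, PRICE_USD, DEBT_USD)
liq_price = liquidation_price(COLLATERAL_WLFI, DEBT_USD, MIN_RATIO)
drop_needed = 1 - liq_price / PRICE_USD

print(f"collateral ratio: {ratio:.2f}x")
print(f"hypothetical liquidation price: ${liq_price:.5f}")
print(f"price drop before liquidation: {drop_needed:.0%}")
```

Under these assumptions the collateral is worth roughly five times the debt. The analysts' worry is about market depth during a forced sale, which this ratio arithmetic does not capture.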

“WLFI has almost a $10 billion FDV, but it is not an extremely liquid asset,” one user wrote on X. “So imagine what would happen if 5% of WLFI’s total supply would suddenly need to be sold to liquidate the position,” he added.

Another X user argued that the setup resembles creating artificial “chips” and borrowing against them. “It’s the financial equivalent of printing casino chips, borrowing cash against them, and telling everyone else not to panic because the house still believes in the chips,” they claimed.

Dolomite has a relatively small footprint in decentralized finance, ranking 19th among lending platforms by total value locked, according to DefiLlama.


World Liberty defends WLFI lending

World Liberty Financial acknowledged the lending activity on social media, but sought to calm markets, stating that its positions remain well above liquidation thresholds. The project described itself as an “anchor borrower” for WLFI and argued that the strategy helps generate yield.

“Everyday users are earning outsized stablecoin yields right now — at a time when traditional markets are offering very little. That’s the whole point,” the project wrote on X.

On Friday, World Liberty said it will soon introduce a governance proposal to create a phased unlock schedule for WLFI tokens held by early retail buyers, replacing immediate access with a long-term vesting plan subject to community vote.


Crypto World

Court blocks Arizona’s bid to regulate Kalshi’s event contracts

A federal court in Arizona has granted a temporary shield for Kalshi against state-level gambling enforcement, aligning with U.S. regulators in a widening dispute over whether Kalshi’s event-based contracts belong under federal derivatives law or under state betting statutes. Judge Michael Liburdi issued the order at the request of the Commodity Futures Trading Commission (CFTC) and the federal government, effectively blocking Arizona from pursuing civil or criminal actions against Kalshi on contracts listed on CFTC-regulated markets.

The core question of the case is how to classify Kalshi’s “event contracts”—whether they are swaps governed by the Commodity Exchange Act (CEA) or purely gambling under state law. The court indicated that the CFTC is likely to prevail in arguing that the contracts fall within the federal framework, which would give the agency exclusive authority over swaps traded on designated contract markets. The temporary restraining order will hold until April 24, 2026, as the court weighs a longer-term preliminary injunction.

Key takeaways

- The Arizona court temporarily halts state enforcement against Kalshi’s event contracts, pending a ruling on a longer injunction and federal jurisdiction.

- The judge found the CFTC is likely to succeed in classifying Kalshi’s contracts as swaps under the CEA, placing them under federal oversight.

- The decision highlights a broader tension between state gaming laws and federal derivatives regulation as regulators seek uniform treatment for prediction-market products.

- The ruling comes as other states and courts take related steps: Nevada has extended its ban on Kalshi’s event-based contracts, Utah has moved to classify such bets as gambling, and a federal appeals court has ruled that New Jersey cannot restrict Kalshi’s sports-related event contracts.

- Kalshi’s status remains unsettled as the legal process continues, with observers watching how the federal/state dynamic will evolve for prediction markets nationwide.

Federal jurisdiction vs. state gambling laws in the Kalshi case

At the heart of the Arizona order is the question of whether Kalshi’s event contracts should be treated as swaps traded on designated contract markets—subject to federal regulation under the CEA—or as gambling offerings governed by state statutes. The CFTC and the Department of Justice argued that the contracts resemble traditional financial instruments because they are contingent on the outcome of real-world events and are cleared on regulated marketplaces. The court agreed that, based on the arguments presented, the CFTC has a strong likelihood of proving the contracts qualify as swaps, thereby placing them under federal jurisdiction.

Arizona authorities had signaled their intent to pursue enforcement actions under state gambling rules. The court’s restraining order explicitly blocks such actions while the case proceeds, preserving the status quo so that Kalshi can keep offering its event contracts on federally regulated venues without immediate state-level interference.

Context: a broader patchwork of state actions

The Arizona decision sits within a wider state-by-state contest over the status of prediction-market products. Kalshi and similar platforms have faced varying treatment across states, with state regulators arguing that the products resemble traditional gambling while platform proponents emphasize their roots in financial market design and risk-trading mechanics.

Nevada has already taken a tougher stance, with a judge extending a ban on Kalshi’s offerings in the state, concluding that the contracts closely resemble sports betting and fall under state gaming laws. That ruling underscores the potential for disparate regulatory outcomes as states apply their own legal lenses to prediction markets.

Meanwhile, Utah lawmakers moved to block Kalshi and Polymarket by classifying proposition-style bets on in-game events as gambling, signaling an appetite among some state governments to restrict such offerings despite the CFTC’s position. A US appeals court has also upheld a decision preventing New Jersey from enforcing against Kalshi, illustrating the fragmented regulatory landscape that Kalshi and its peers must navigate as they scale.

Implications for investors, traders, and the broader ecosystem

For participants in Kalshi’s market, the Arizona ruling reinforces the importance of regulatory clarity when evaluating risk, liquidity, and legal exposure. Federal preemption, if upheld in the longer injunction, could provide a more uniform operating environment for event contracts traded on Kalshi’s platform, potentially stabilizing trading activity across jurisdictions that recognize the federal framework. Conversely, continued state actions—such as Nevada’s ongoing restrictions and Utah’s legislative moves—could constrain Kalshi’s reach and create jurisdictional risk for traders who rely on access to multiple markets.

From a market structure perspective, the decision illustrates how the treatment of prediction markets can pivot on regulatory interpretation. If courts consistently categorize event contracts as swaps, the federal regime could promote standardized disclosure, risk controls, and oversight on trading venues. If states succeed in carving out exceptions or maintaining strict gambling classifications, traders may face a more fragmented landscape with varying access and compliance requirements by venue and state.

Regulators’ stance matters for investors assessing the long-term viability of prediction-market infrastructure. A federal framework that classifies these products as swaps would align Kalshi with traditional derivatives market design, including clearing and margin requirements that help mitigate counterparty risk. However, it would also subject these offerings to the full set of rules governing swaps, which can carry stringent capital and reporting requirements, factors that shape product design, pricing, and user experience.

What’s next

The court will decide whether to extend the injunction beyond April 24, 2026, and how to balance Kalshi’s operations with state enforcement considerations. While the CFTC’s position remains central to the case, the evolving regulatory environment suggests that further developments are likely across multiple states as lawmakers reassess how prediction markets should be treated under gambling or financial-law paradigms.

As Kalshi and other platforms navigate this regulatory mosaic, traders and developers should monitor: potential federal rulings on the classification of event contracts, any new state laws tightening or loosening constraints, and the continued interplay between state enforcement actions and federal oversight that could shape the trajectory of prediction-market products in the United States.

Crypto World

Crypto Market Drops 22% in Q1 2026, But Structural Quality Reaches Record Highs: Report

TLDR:

- Stablecoin market cap hit $320B in Q1 2026, with monthly transfer volumes peaking at $1.8T.

- Systemic leverage compressed to ~3% after October’s deleveraging, reshaping how crypto trades.

- Corporate Bitcoin holdings crossed 1.13M BTC, with treasury strategies turning actively managed.

- Bitcoin ETPs attracted $18.7B in global inflows, with March alone bringing $1.3B net back in.

Digital asset markets fell sharply in the first quarter of 2026, shedding roughly 22% of total market value. Total capitalisation dropped to approximately $2.42 trillion, according to AMINA Bank’s Q1 Crypto Market Monitor.

Yet beneath the price decline, core adoption metrics hit record highs. Stablecoin supply reached $320 billion, corporate Bitcoin reserves crossed 1.13 million BTC, and systemic leverage compressed to around 3%.
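The 22% drop and the ending value of about $2.42 trillion together imply a start-of-quarter market value. This Python sketch backs that figure out; it is simple arithmetic on rounded numbers ("roughly" and "approximately" in the report), not a figure AMINA Bank itself states.

```python
def implied_starting_value(ending_value: float, fractional_decline: float) -> float:
    """Back out the starting value from an ending value and a fractional decline."""
    if not 0 <= fractional_decline < 1:
        raise ValueError("decline must be in [0, 1)")
    return ending_value / (1 - fractional_decline)


# Reported: ~$2.42 trillion after a ~22% quarterly decline.
start_trillions = implied_starting_value(2.42, 0.22)
print(f"implied start-of-quarter market cap: ~${start_trillions:.2f} trillion")
```

Because both inputs are rounded, the result (a little over $3.1 trillion) should be read as approximate.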

Leverage Collapses as Market Structure Resets After October Shock

According to the AMINA Bank report, the October 2025 deleveraging event fundamentally reset how digital assets trade.

Reflexive, momentum-driven rallies gave way to a market built on spot flows and structured hedging. That transition defined Q1 2026.

Total trading volume reached $20.57 trillion for the quarter. Derivatives accounted for $18.63 trillion of that figure. Within derivatives, the composition shifted.
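To put the derivatives figure in proportion, this Python sketch computes its share of total volume and the remainder. It assumes that everything outside derivatives is spot trading, which the report summary does not state explicitly.

```python
TOTAL_VOLUME_T = 20.57        # reported Q1 2026 total volume, trillions of USD
DERIVATIVES_VOLUME_T = 18.63  # reported derivatives volume, trillions of USD

# Assumption: all non-derivatives volume is spot.
non_derivatives_t = TOTAL_VOLUME_T - DERIVATIVES_VOLUME_T
derivatives_share = DERIVATIVES_VOLUME_T / TOTAL_VOLUME_T

print(f"non-derivatives volume: ~${non_derivatives_t:.2f} trillion")
print(f"derivatives share of total: {derivatives_share:.1%}")
```

On these numbers, derivatives made up roughly nine-tenths of all quarterly volume.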

Bitcoin options open interest consistently exceeded perpetual futures open interest, with positions weighted toward downside protection. That shift, highlighted in AMINA Bank’s report, signals that institutional participants are managing risk rather than chasing direction.

The macro backdrop accelerated the repricing. US inflation held at 2.7% while GDP expanded 5.3%. The Federal Reserve kept rates at 3.50% to 3.75%, with markets pricing out cuts for the year.

In late February, geopolitical escalation in the Middle East led to the closure of the Strait of Hormuz. Oil surpassed $112 per barrel. Risk appetite fell across asset classes.

Through that pressure, Bitcoin held above prior lows. It also showed resilience following Google’s Quantum AI paper, which triggered a fresh wave of quantum computing fears.

AMINA Bank’s report frames this pattern, in which markets absorb bad news without breaking down, as evidence of seller exhaustion.

Bitcoin Treasury Strategies Go Active as Stablecoins Become Financial Rail Infrastructure

Bitcoin maintained approximately 56% market dominance through the quarter. Corporate accumulation continued, but the behaviour behind it changed. Treasury strategies moved from passive holding to active capital management.

Strategy Inc. added nearly 65,000 BTC during Q1, lifting total holdings to 762,000 BTC. Japan-based Metaplanet scaled its position to over 40,000 BTC.
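A quick calculation shows how concentrated corporate holdings are. This Python sketch estimates Strategy's start-of-quarter position and its share of the 1.13 million BTC corporate total. It assumes Strategy's holdings are counted within that total, and because the inputs are "nearly" and "crossed" figures, the results are approximate bounds rather than exact values.

```python
STRATEGY_HOLDINGS_END = 762_000  # reported end-of-Q1 holdings, BTC
STRATEGY_Q1_PURCHASES = 65_000   # "nearly 65,000", so slightly above actual
CORPORATE_TOTAL = 1_130_000      # "crossed 1.13 million", so a lower bound

# Since purchases were slightly under 65,000, the true start was slightly above this.
holdings_start_estimate = STRATEGY_HOLDINGS_END - STRATEGY_Q1_PURCHASES

# Assumption: Strategy is included in the corporate total; the true share is at most this.
share_of_corporate = STRATEGY_HOLDINGS_END / CORPORATE_TOTAL

print(f"start-of-quarter holdings: ~{holdings_start_estimate:,} BTC")
print(f"share of corporate holdings: ~{share_of_corporate:.0%}")
```

Under those assumptions, a single company accounts for roughly two-thirds of corporate Bitcoin reserves.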

MARA Holdings sold more than 15,000 BTC to optimise its balance sheet. The divergence illustrates that corporate Bitcoin exposure is no longer uniform. It is becoming a managed allocation decision.

ETF flows reflected a similar dynamic.

The quarter recorded modest net outflows overall, but March reversed that trend with over $1.3 billion in net inflows. Globally, exchange-traded products drew $18.7 billion in inflows for the period, according to AMINA Bank’s data.

Stablecoins emerged as the quarter’s most structurally important development. Monthly transfer volumes peaked at $1.8 trillion. Solana led throughput, processing approximately $650 billion in monthly stablecoin volume.
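For a sense of Solana's weight in stablecoin settlement, this Python sketch divides its monthly volume by the peak monthly total. It assumes both figures describe the same month; the report summary does not confirm that, so the ratio is indicative only.

```python
PEAK_MONTHLY_STABLECOIN_VOLUME_B = 1_800  # reported peak, billions of USD
SOLANA_MONTHLY_STABLECOIN_VOLUME_B = 650  # reported Solana monthly volume, billions of USD

# Assumption: both figures refer to the same month.
solana_share = SOLANA_MONTHLY_STABLECOIN_VOLUME_B / PEAK_MONTHLY_STABLECOIN_VOLUME_B
print(f"Solana share of peak monthly stablecoin volume: ~{solana_share:.0%}")
```

If the months do line up, Solana handled a bit over a third of stablecoin transfer volume at the peak.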

New purpose-built chains including Plasma, Arc, and Tempo entered development specifically for stablecoin settlement. The GENIUS Act framework also moved into its operational phase, introducing formal rulemaking for payment stablecoins in the US.

DeFi total value locked rose to $92.43 billion. Tokenised real-world assets crossed $20 billion in market capitalisation. AI-driven agents executed over 120 million on-chain transactions during the quarter.

Ethereum, despite a 35% price decline, retained over 56% of total DeFi value locked. Its forthcoming Glamsterdam upgrade targets Layer 1 throughput through enshrined proposer-builder separation and block-level parallel execution.

In public markets, selectivity replaced appetite. BitGo’s shares fell 44% after its IPO. Kraken paused its IPO plans.

Circle, by contrast, posted strong revenue growth as USDC circulation expanded, reinforcing that capital is still flowing to sustainable infrastructure models.

Crypto World

Arizona barred from acting against Kalshi event contracts

A federal judge in Arizona has temporarily stopped state officials from enforcing gambling laws against Kalshi, a prediction market platform regulated by the Commodity Futures Trading Commission.

Summary

- A federal judge paused Arizona action against Kalshi and backed the CFTC’s jurisdiction argument.

- The restraining order blocks Arizona enforcement until April 24 as the case moves forward.

- State and federal officials remain split on whether event contracts are swaps or gambling.

The ruling adds to the legal fight over whether event-based contracts should be treated as financial products under federal law or as gambling under state rules.

The order came from Judge Michael Liburdi of the US District Court for the District of Arizona. The court granted a request from the CFTC and the federal government to pause Arizona’s action while the case moves ahead. The restraining order will stay in place until April 24 as the court considers the next step.

The case centers on Kalshi’s event contracts, which let users trade on the outcome of real-world events. The CFTC argued that these products qualify as swaps under the Commodity Exchange Act and therefore fall under federal oversight rather than state gambling law.

The court said the federal government is likely to succeed on that argument. That finding led the judge to block Arizona from starting or continuing civil or criminal action tied to contracts listed on CFTC-regulated markets. The ruling also paused Arizona’s criminal case against Kalshi.

Before the ruling, Arizona had moved against Kalshi under state gambling rules and filed criminal charges tied to event-based trading. State prosecutors argued that Kalshi was offering unlawful betting products, including contracts tied to political events and sports outcomes.

After the CFTC stepped in, the federal court halted that effort. The order means Arizona officials cannot continue enforcement tied to Kalshi’s contracts during the current restraining period. Reports also said a scheduled arraignment was called off after the ruling.

Wider fight over prediction markets

The Arizona case is part of a wider dispute over prediction markets in the United States. On April 6, a federal appeals court ruled that New Jersey could not restrict Kalshi’s sports-related event contracts, finding that the CFTC has exclusive jurisdiction over those products.

Other states have taken a different view. In Nevada, a judge last week extended a ban on Kalshi’s event contracts, saying the products were close enough to sports betting to fall under state gaming law.

Utah lawmakers have also moved against proposition-style event markets. Those split outcomes show that the legal fight over Kalshi and similar platforms is still active.

The latest order gives Kalshi temporary relief, but it does not settle the full dispute. The larger question is whether platforms offering these contracts operate as regulated exchanges or as betting businesses under state law.
