Crypto World

AI Security, Governance & Compliance Solutions Guide

Artificial Intelligence is no longer confined to innovation labs; it is now production-grade infrastructure powering credit underwriting, healthcare diagnostics, fraud detection, supply chain optimization, and generative enterprise copilots. As enterprises scale AI adoption, the need for advanced AI security services becomes critical to protect sensitive data, proprietary models, and distributed AI infrastructure. AI systems directly influence revenue decisions, risk exposure, regulatory standing, operational efficiency, customer trust, and brand reputation. Yet as adoption accelerates, so do the risks. AI expands the enterprise attack surface, increases regulatory complexity, and raises ethical accountability, making structured enterprise AI governance essential for long-term stability. Traditional IT security models cannot protect adaptive, data-driven systems operating across distributed environments.

To scale responsibly, organizations must implement structured and robust AI governance solutions, proactive AI risk management services, and integrated AI compliance solutions, all grounded in the principles of responsible AI development. Achieving this level of security, transparency, and regulatory alignment requires collaboration with a trusted, secure AI development company that understands the technical, operational, and compliance dimensions of enterprise AI transformation.

Why AI Introduces an Entirely New Category of Enterprise Risk?

Artificial Intelligence is not just another layer of enterprise software; it represents a fundamental shift in how systems operate, decide, and evolve.

Traditional software systems are deterministic. They:

- Execute predefined logic

- Produce predictable, repeatable outputs

- Change only when developers modify the code

AI systems, however, operate differently. They:

- Learn patterns from historical and real-time data

- Continuously adapt through retraining

- Generate probabilistic, not guaranteed, outputs

- Process unstructured inputs such as text, images, and voice

- Evolve over time without explicit rule-based programming

This dynamic behavior introduces a new and complex category of enterprise risk.

1. Decision Risk

AI systems can produce inaccurate or biased outcomes due to flawed training data, insufficient validation, or model drift. Since decisions are probabilistic, even high-performing models can fail under edge conditions, impacting revenue, customer trust, or compliance.

2. Security Risk

AI models are high-value digital assets. They can be manipulated through adversarial attacks, extracted via repeated API queries, or compromised during training. Unlike traditional systems, AI introduces model-level vulnerabilities that require specialized protection.

3. Regulatory Risk

AI-driven decisions—particularly in finance, healthcare, insurance, and hiring—may unintentionally violate compliance regulations. Without structured oversight, organizations face legal scrutiny, fines, and operational restrictions.

4. Ethical & Reputational Risk

Biased or opaque AI decisions can trigger public backlash, regulatory investigations, and long-term brand damage. Ethical lapses in AI are not just technical failures—they are governance failures.

5. Operational Risk

AI performance can silently degrade over time due to data drift, environmental changes, or shifting user behavior. Unlike traditional systems that fail visibly, AI models may continue operating while gradually producing unreliable outputs.

Because AI systems function with varying degrees of autonomy, failures are often subtle and delayed. By the time issues surface, financial, regulatory, and reputational damage may already be significant.

This is why AI risk must be managed differently and more proactively than traditional enterprise software risk.

AI Security: Protecting Data, Models, and Infrastructure

AI security is not limited to perimeter defense or endpoint protection. It requires safeguarding the entire AI lifecycle, from raw data ingestion to model deployment and continuous monitoring. Enterprise-grade AI security services are designed to protect not just systems, but the intelligence layer itself.

A secure AI architecture begins with the foundation: the data pipeline.

Layer 1: Securing the Data Pipeline

AI models depend on vast volumes of data flowing through ingestion, preprocessing, labeling, training, and storage environments. If this pipeline is compromised, the model’s integrity is compromised.

Key Threats in AI Data Pipelines

Data Poisoning: Attackers deliberately inject malicious or manipulated data into training datasets to influence model behavior, potentially embedding hidden vulnerabilities or bias.

Data Drift Manipulation: Subtle, gradual changes in incoming data can alter model outputs over time, leading to performance degradation or skewed predictions.

Unauthorized Data Access: Training datasets often include sensitive financial, healthcare, or personal information. Weak access controls can result in data breaches or regulatory violations.

Synthetic Data Injection: Maliciously generated or low-quality synthetic data may distort learning patterns and corrupt model accuracy.

Deep Mitigation Strategies

A mature AI security framework incorporates layered safeguards, including:

- End-to-end encryption for data at rest and in transit

- Secure, segmented data lakes with strict access control policies

- Dataset hashing and tamper-evident logging mechanisms

- Comprehensive data lineage tracking to trace the dataset origin and transformations

- Role-based access control (RBAC) for training and experimentation environments

- Differential privacy techniques to prevent memorization of sensitive data

- Federated learning architectures for privacy-sensitive industries

Data integrity validation is not optional; it is the bedrock of trustworthy AI. Without a secure data foundation, even the most advanced models cannot be considered reliable, compliant, or safe for enterprise deployment.
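The dataset hashing and tamper-evident logging safeguards listed above can be illustrated with a hash chain, where each log entry commits to the one before it. The following is a minimal sketch, not a production design: the function names (`file_digest`, `append_entry`, `verify_log`) and the in-memory list used as the log are assumptions made for illustration only.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def file_digest(data: bytes) -> str:
    """Return the SHA-256 digest of a dataset's raw bytes."""
    return hashlib.sha256(data).hexdigest()


def _hash_body(body: dict) -> str:
    """Hash a log entry body using a stable JSON serialization."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: list, dataset_name: str, digest: str) -> dict:
    """Append an entry whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["entry_hash"] if log else GENESIS
    body = {"dataset": dataset_name, "digest": digest, "prev_hash": prev_hash}
    entry = {**body, "entry_hash": _hash_body(body)}
    log.append(entry)
    return entry


def verify_log(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        body = {key: entry[key] for key in ("dataset", "digest", "prev_hash")}
        if entry["prev_hash"] != prev_hash or entry["entry_hash"] != _hash_body(body):
            return False
        prev_hash = entry["entry_hash"]
    return True
```

In practice the log would live in append-only storage rather than a Python list, but the verification step is the same: recompute each hash and compare it with what was recorded.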

Layer 2: Model Security & Integrity Protection

While data is the foundation of AI, the model itself is the strategic core. Trained AI models represent years of research, proprietary algorithms, curated datasets, and competitive advantage. They are high-value intellectual property assets and increasingly attractive targets for cybercriminals, competitors, and malicious insiders.

Unlike traditional applications, AI models can be attacked both during training and after deployment. Securing model integrity is therefore a critical component of enterprise-grade AI risk management services.

Advanced AI Model Threats

Adversarial Attacks: These attacks introduce subtle, often imperceptible perturbations into input data, such as minor pixel modifications in images or slight token manipulation in text, that cause the model to produce incorrect predictions. In high-stakes environments like healthcare or autonomous systems, such manipulations can lead to catastrophic outcomes.

Model Extraction Attacks: Attackers repeatedly query publicly exposed APIs to approximate and replicate a proprietary model’s behavior. Over time, they can reconstruct a functionally similar model, effectively stealing intellectual property without breaching internal systems directly.

Model Inversion Attacks: Through systematic querying and output analysis, attackers can infer or reconstruct sensitive data used during training, posing serious privacy and regulatory risks, particularly in healthcare and finance.

Backdoor Attacks: Malicious actors may insert hidden triggers into training data. When activated by specific inputs, these triggers cause the model to behave unpredictably or maliciously while appearing normal during testing.

Prompt Injection Attacks (Large Language Models): For generative AI systems, attackers can manipulate prompts to override guardrails, extract confidential information, or bypass operational restrictions. Prompt injection is rapidly becoming one of the most exploited vulnerabilities in enterprise LLM deployments.

Enterprise-Grade Model Protection Controls

Professional AI risk management services and advanced AI security services deploy multi-layered defensive strategies, including:

- Red-team adversarial testing to simulate real-world attack scenarios

- Robustness training and gradient masking techniques to reduce model sensitivity to adversarial perturbations

- Model watermarking and fingerprinting to establish ownership and detect unauthorized duplication

- Secure API gateways with rate limiting, anomaly detection, and behavioral monitoring

- Token-level input filtering and validation in generative AI systems

- Output moderation engines to prevent unsafe or non-compliant responses

- Encrypted model storage and artifact signing to prevent tampering

- Isolated inference environments to restrict lateral movement in case of compromise

Without structured model integrity protection, AI systems remain vulnerable to exploitation, IP theft, and operational sabotage. Model security is no longer optional; it is a strategic necessity.
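Rate limiting at the API gateway is one of the controls listed above, and it directly raises the cost of the repeated-query pattern behind model extraction. The sketch below shows a per-key token bucket. The class names, the capacity, and the refill rate are illustrative assumptions, and the caller passes in the current time so that the behavior is easy to test.

```python
class TokenBucket:
    """Allows short bursts up to `capacity`, then a steady `refill_per_second`."""

    def __init__(self, capacity: float, refill_per_second: float, start: float = 0.0):
        self.capacity = float(capacity)
        self.refill_per_second = float(refill_per_second)
        self.tokens = float(capacity)  # start with a full bucket
        self.last_seen = float(start)

    def allow(self, now: float) -> bool:
        """Refill based on elapsed time, then spend one token if available."""
        elapsed = max(0.0, now - self.last_seen)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.last_seen = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


class RateLimiter:
    """Keeps one bucket per API key (hypothetical gateway-side component)."""

    def __init__(self, capacity: float, refill_per_second: float):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.buckets = {}

    def allow(self, api_key: str, now: float) -> bool:
        bucket = self.buckets.get(api_key)
        if bucket is None:
            bucket = TokenBucket(self.capacity, self.refill_per_second, start=now)
            self.buckets[api_key] = bucket
        return bucket.allow(now)
```

A real deployment would typically pair this with anomaly detection, since a patient attacker can stay under any fixed rate; the limiter only bounds how fast queries can be collected.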

Layer 3: Infrastructure & MLOps Security

AI systems do not operate in isolation. They run on complex, distributed infrastructure that introduces its own set of vulnerabilities.

Enterprise AI environments typically rely on:

- High-performance GPU clusters

- Distributed containerized workloads

- Kubernetes orchestration layers

- Continuous integration and deployment (CI/CD) pipelines

- Cloud-hosted inference APIs and microservices

Each layer, if improperly configured, can expose sensitive models, training data, or deployment credentials.

A mature secure AI development company integrates infrastructure security directly into AI architecture through:

- Zero-trust security models across all AI workloads and services

- Continuous container image scanning for vulnerabilities and misconfigurations

- Infrastructure-as-code (IaC) validation to detect security flaws before deployment

- Encrypted and access-controlled model registries

- Secure key management systems (KMS) for API tokens, credentials, and encryption keys

- Runtime intrusion detection and anomaly monitoring across GPU clusters and containers

- Secure multi-party computation (SMPC) or confidential computing for highly sensitive use cases

Infrastructure security must align with broader AI governance solutions and enterprise compliance requirements. AI security cannot be retrofitted after deployment. It must be engineered into development workflows, embedded into MLOps pipelines, and continuously monitored throughout the system’s lifecycle. Only when data, models, and infrastructure are secured together can AI systems operate with the level of trust required for enterprise-scale deployment.

AI Governance: Building Structured Oversight Mechanisms for Enterprise AI

As AI systems become deeply embedded in business-critical operations, governance can no longer be informal or policy-driven alone. AI governance is the structured framework that ensures AI systems operate with accountability, transparency, fairness, and regulatory alignment across their entire lifecycle.

Modern AI governance solutions go far beyond static documentation or compliance checklists. They integrate oversight directly into development pipelines, MLOps workflows, approval processes, and monitoring systems—making governance operational rather than theoretical. At the enterprise level, governance is what transforms AI from experimental technology into regulated, board-level infrastructure.

Pillar 1: Ownership & Accountability Framework

Every AI system deployed within an organization must have clearly defined ownership and control mechanisms. Without accountability, AI becomes a shadow asset, operating without oversight or traceability.

A structured enterprise AI governance framework requires:

- A clearly defined business purpose and intended use case

- Formal risk classification (low, medium, high, critical)

- A designated model owner responsible for performance and compliance

- Defined escalation authority for risk incidents or model failures

- A documented governance approval process prior to deployment

In mature governance environments, no AI system moves into production without formal compliance, risk, and ethics review.

This structured control prevents:

- Shadow AI deployments by individual departments

- Unapproved generative AI experimentation

- Regulatory blind spots

- Unmonitored third-party AI integrations

Ownership ensures responsibility. Responsibility ensures control.

Pillar 2: Explainability & Transparency Mechanisms

Explainability is no longer optional—particularly in regulated sectors such as finance, healthcare, and insurance. Regulatory bodies increasingly require organizations to justify automated decisions, especially when those decisions affect individuals’ rights, credit eligibility, employment opportunities, or medical outcomes.

To meet these expectations, organizations must embed transparency into AI architecture through:

- Model interpretability frameworks such as SHAP and LIME

- Decision traceability logs that record input-output relationships

- Version-controlled documentation of model changes

- Model cards outlining purpose, limitations, training data scope, and known risks

- Human-in-the-loop override capabilities for high-risk decisions

Transparency reduces legal exposure and strengthens stakeholder trust. When decisions can be explained and traced, enterprises are better positioned for audits, regulatory reviews, and board-level oversight. Explainability is not just a technical feature; it is a governance safeguard.

Pillar 3: Bias & Fairness Governance

AI bias represents one of the most significant ethical, reputational, and regulatory challenges in enterprise AI. Biased outcomes can lead to discrimination claims, regulatory penalties, and public backlash.

Bias can originate from multiple sources, including:

- Skewed or non-representative training datasets

- Historical discrimination embedded in legacy data

- Proxy variables that indirectly encode sensitive attributes

- Imbalanced class representation

- Inadequate validation across demographic segments

Effective AI governance solutions implement structured bias management protocols, including:

- Pre-training bias audits to assess dataset representation

- Fairness metric benchmarking (demographic parity, equal opportunity, equalized odds)

- Continuous fairness drift monitoring post-deployment

- Regular demographic impact assessments

- Threshold-based alerts for fairness deviations

Bias governance is central to responsible AI development. It ensures that AI systems align not only with performance metrics but also with societal expectations and regulatory standards. Without fairness monitoring, even technically accurate models may fail ethically and legally.
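Two of the fairness metrics named above can be computed directly from predictions, labels, and group membership. The following is a simplified sketch for binary predictions and two groups; the function names are illustrative, and it assumes each group has at least one member and at least one positive label.

```python
def selection_rate(preds, groups, group):
    """Share of members of `group` that received a positive prediction."""
    selected = [p for p, g in zip(preds, groups) if g == group]
    return sum(selected) / len(selected)


def true_positive_rate(preds, labels, groups, group):
    """Share of truly positive members of `group` predicted positive."""
    hits = [p for p, y, g in zip(preds, labels, groups) if g == group and y == 1]
    return sum(hits) / len(hits)


def demographic_parity_difference(preds, groups, group_a, group_b):
    """Gap in selection rates; 0 means both groups are selected equally often."""
    return abs(
        selection_rate(preds, groups, group_a)
        - selection_rate(preds, groups, group_b)
    )


def equal_opportunity_difference(preds, labels, groups, group_a, group_b):
    """Gap in true positive rates between the two groups."""
    return abs(
        true_positive_rate(preds, labels, groups, group_a)
        - true_positive_rate(preds, labels, groups, group_b)
    )
```

A threshold-based alert, as described in the list above, would compare these gaps against a limit chosen by the governance team; the appropriate limit depends on the use case and applicable regulation.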

Pillar 4: Lifecycle Governance

AI governance cannot be limited to pre-deployment review. It must span the entire model lifecycle to ensure long-term reliability and compliance.

A comprehensive governance framework covers:

- Design: Risk assessment, ethical review, and use-case validation

- Data Collection: Dataset quality checks and compliance alignment

- Training: Secure model development with audit documentation

- Validation: Performance, bias, and robustness testing

- Deployment: Governance approval and secure release management

- Monitoring: Continuous drift, bias, and anomaly detection

- Retirement: Controlled decommissioning and archival documentation

Continuous lifecycle governance prevents silent model degradation, regulatory violations, and operational surprises. In high-performing enterprises, governance is not a bottleneck; it is an enabler of sustainable AI scale. By embedding structured oversight mechanisms into every stage of AI development and deployment, organizations ensure their AI systems remain secure, compliant, ethical, and aligned with strategic objectives.

AI Risk Management: From Initial Identification to Continuous Oversight

Effective AI risk management is not a one-time compliance activity; it is a structured, lifecycle-driven discipline. Professional AI risk management services implement comprehensive frameworks that govern AI systems from conception to retirement, ensuring resilience, compliance, and operational integrity.

Stage 1: Comprehensive AI Risk Identification

Every AI initiative must begin with structured risk discovery. Organizations should conduct a multidimensional evaluation that examines:

- Business impact and criticality: What operational or financial consequences arise if the model fails?

- Regulatory exposure: Does the system fall under sector-specific regulations (finance, healthcare, public sector)?

- Data sensitivity: Does the model process personally identifiable information (PII), financial records, or protected health data?

- Model autonomy level: Is the AI advisory, assistive, or fully autonomous?

- End-user exposure: Does the system directly affect customers, patients, or employees?

High-risk AI systems, particularly those influencing critical decisions, require elevated scrutiny and governance controls from the outset.

Stage 2: Structured Risk Assessment & Categorization

Once risks are identified, AI systems must be classified using structured assessment frameworks. This tier-based categorization determines the depth of oversight, documentation, and control mechanisms required.

High-risk AI categories typically include:

- Credit scoring and lending decision systems

- Healthcare diagnostic and treatment recommendation models

- Insurance underwriting and claims automation engines

- Autonomous industrial and manufacturing systems

- AI systems used in public policy or critical infrastructure

These systems demand enhanced governance measures, including formal validation protocols, regulatory documentation, and executive-level oversight. Risk categorization ensures proportional governance, allocating more stringent safeguards where impact and exposure are highest.
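To make tier-based categorization concrete, the sketch below scores a system on the five dimensions from Stage 1 and maps the result to the low, medium, high, and critical tiers. The 0 to 3 scale, the thresholds, and the escalation rule are purely hypothetical assumptions for illustration; a real framework would calibrate them against its own regulatory obligations.

```python
from dataclasses import astuple, dataclass


@dataclass
class RiskProfile:
    """Each factor is scored from 0 (negligible) to 3 (severe); scale is assumed."""
    business_impact: int
    regulatory_exposure: int
    data_sensitivity: int
    autonomy: int            # 0 = advisory, 3 = fully autonomous
    end_user_exposure: int


def classify(profile: RiskProfile) -> str:
    """Map factor scores to a tier using illustrative thresholds."""
    scores = astuple(profile)
    if any(not 0 <= score <= 3 for score in scores):
        raise ValueError("each factor must be scored from 0 to 3")
    total = sum(scores)
    if total >= 12:
        return "critical"
    # A single maximal factor escalates the system to at least "high".
    if total >= 8 or max(scores) == 3:
        return "high"
    if total >= 4:
        return "medium"
    return "low"
```

The escalation rule reflects the idea of proportional governance: a system that is severe on even one dimension should not be averaged down into a lighter tier.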

Stage 3: Embedded Risk Mitigation Controls

Risk mitigation must be operationalized within AI workflows, not layered on as an afterthought. Mature AI risk management frameworks integrate technical and procedural safeguards such as:

- Human-in-the-loop review checkpoints for high-impact decisions

- Real-time anomaly detection systems to identify unusual behavior

- Secure retraining pipelines with validated data sources

- Documented incident response and escalation frameworks

- Access segregation and role-based permissions

- Audit trails for model updates and configuration changes

By embedding mitigation mechanisms directly into development and deployment processes, organizations reduce exposure to operational failure, regulatory penalties, and reputational damage.

Stage 4: Continuous Monitoring & Audit Readiness

AI risk is dynamic. Models evolve, data distributions shift, and regulatory landscapes change. Static governance approaches are insufficient.

Continuous monitoring frameworks include:

- Data and concept drift detection algorithms

- Performance degradation alerts and threshold monitoring

- Bias trend analysis across demographic groups

- Security anomaly detection and adversarial activity tracking

- Automated compliance reporting and audit documentation generation

This ongoing oversight transforms AI governance from reactive damage control to proactive risk anticipation.
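One common way to implement the data drift detection mentioned above is the Population Stability Index (PSI), which compares how a feature is distributed across bins in a baseline sample versus a recent sample. This is a minimal sketch, with the bin edges supplied by the caller and a small `eps` floor (an assumption) to avoid taking the logarithm of zero for empty bins.

```python
import math


def bin_proportions(values, bin_edges, eps=1e-6):
    """Share of values in each bin defined by sorted `bin_edges`."""
    counts = [0] * (len(bin_edges) + 1)
    for value in values:
        # Bin index = number of edges the value has reached or passed.
        index = sum(1 for edge in bin_edges if value >= edge)
        counts[index] += 1
    total = len(values)
    return [max(count / total, eps) for count in counts]


def population_stability_index(baseline, recent, bin_edges):
    """PSI = sum over bins of (recent - baseline) * ln(recent / baseline)."""
    expected = bin_proportions(baseline, bin_edges)
    actual = bin_proportions(recent, bin_edges)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))
```

A frequently cited rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift, but these cut-offs are conventions rather than guarantees and should be tuned per feature.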

Organizations that implement continuous monitoring achieve:

- Faster issue detection

- Reduced compliance risk

- Greater operational stability

- Stronger stakeholder trust

From Reactive Risk Management to Proactive AI Resilience

True AI risk management extends beyond compliance checklists. It builds adaptive systems capable of detecting, responding to, and learning from emerging threats.

When implemented effectively, structured AI risk management:

- Protects business continuity

- Safeguards sensitive data

- Enhances regulatory alignment

- Preserves brand reputation

- Enables responsible innovation at scale

AI risk is inevitable. Unmanaged AI risk is not.

AI Compliance: Navigating Global Regulatory Frameworks

Regulatory pressure around AI is accelerating globally. Enterprises require structured AI compliance solutions integrated into development pipelines.

EU AI Act

The EU AI Act mandates:

- Risk classification

- Conformity assessments

- Transparency obligations

- Incident reporting

- Technical documentation

Non-compliance can result in fines of up to €35 million or 7% of global annual turnover, whichever is higher, for the most serious violations.

U.S. AI Governance Directives

Emphasis on:

- Algorithmic accountability

- National security risk assessment

- Bias mitigation

- Model transparency

Industry-Specific Compliance

- Healthcare: HIPAA compliance and clinical validation protocols

- Finance: Model risk management frameworks and fair lending audits

- Insurance: Anti-discrimination controls

- Manufacturing: Autonomous system safety standards

Integrated AI compliance solutions reduce audit risk and regulatory exposure.

Responsible AI Development: Engineering Ethical Intelligence

Responsible AI development operationalizes ethical principles into enforceable technical standards.

It includes:

- Privacy-by-design architecture

- Inclusive dataset sourcing

- Clear documentation standards

- Sustainability-aware model training

- Transparent stakeholder communication

- Ethical review committees

Responsible AI improves:

- Regulatory alignment

- Customer trust

- Investor confidence

- Long-term scalability

Ethics and engineering must operate in alignment.

Why Enterprises Need a Secure AI Development Partner?

Deploying AI at enterprise scale is no longer just a technical initiative; it is a strategic transformation that intersects cybersecurity, regulatory compliance, risk management, and ethical governance. Building secure and compliant AI systems requires deep cross-disciplinary expertise spanning data science, infrastructure security, regulatory law, model governance, and operational risk frameworks. Few organizations possess all these capabilities internally.

A strategic, secure AI development partner brings structured oversight, technical rigor, and regulatory alignment into every phase of the AI lifecycle.

Such a partner provides:

- Advanced AI security services to protect data pipelines, models, APIs, and infrastructure from evolving threats

- Structured AI governance frameworks embedded directly into development and deployment workflows

- Lifecycle-based AI risk management services covering identification, assessment, mitigation, and continuous monitoring

- Regulatory-aligned AI compliance solutions tailored to global and industry-specific mandates

- Demonstrated expertise in responsible AI development, including bias mitigation, explainability, and transparency controls

Without governance and security, AI innovation can amplify enterprise risk, exposing organizations to regulatory penalties, operational failures, intellectual property theft, and reputational damage. With the right secure AI development partner, innovation becomes structured, resilient, and strategically sustainable. AI innovation without governance increases enterprise exposure. AI innovation with governance builds long-term competitive advantage.

Trust Is the Infrastructure of AI

AI is reshaping industries at unprecedented speed, but innovation without trust creates fragility, risk, and long-term instability. Sustainable AI adoption demands more than advanced models; it requires strong foundations. Enterprises that embed robust AI security services, scalable governance frameworks, continuous risk management processes, regulatory-aligned compliance systems, and structured responsible AI practices will define the next phase of digital leadership. In the enterprise AI era, security protects innovation, governance protects reputation, compliance protects longevity, and trust protects growth. Trust is not a soft value; it is operational infrastructure. At Antier, we engineer AI systems where innovation and governance evolve together. We help enterprises scale AI securely, responsibly, and with confidence.

Will Hedera price crash as stablecoin supply and app revenue decline?

Hedera price has been in a downtrend over the past month as the token continues to be bruised by the geopolitical concerns that have pushed investors away from risk assets.

Summary

- Hedera price dropped to a six-week low of $0.083, down over 12% in a month amid weak market sentiment and geopolitical tensions.

- On-chain activity declined, with DeFi app revenue falling nearly 70% and stablecoin supply dropping 6%, signaling reduced network usage and liquidity.

- Technical indicators remain bearish, with price trading in a descending channel and key support seen at $0.087.

According to data from crypto.news, Hedera (HBAR) price fell to a six-week low of $0.083 on Tuesday, down over 12% in the past month and over 20% from its year-to-date high.

Hedera price fell amid weakness in its underlying ecosystem activity as key performance indicators started to flash red. Data from DeFiLlama shows that revenue generated by DeFi apps on the network had slumped nearly 70% from the previous month’s high.

A drop in app revenue suggests that fewer users are interacting with the Hedera ecosystem, signaling weakening demand for its decentralized applications and reduced overall network usage.

Third-party data also show that the total supply of stablecoins on the network has fallen 6% over the past 7 days to $52.71 million. Declining stablecoin supply typically reflects reduced liquidity and capital inflows on the network, further reinforcing signs of slowing activity.

Hedera price has also remained in a downtrend due to reduced investor appetite for risk assets amid the ongoing U.S.-Iran war that has led to a flight to more traditional safe-haven assets such as gold and U.S. equities.

On the daily chart, Hedera price has been trading within a descending parallel channel pattern, a formation where the asset consistently makes lower highs and lower lows. As long as an asset trades within such a pattern, it will likely continue to face persistent selling pressure as it bounces between the upper and lower boundaries.

Technical indicators also appear to portray a bearish outlook for Hedera price in the upcoming sessions. Notably, the Bollinger Bands have begun to narrow, with the price trading below the middle band, suggesting contracting volatility while the short-term trend remains tilted to the downside.

The Aroon Down is at 92.86% while the Aroon Up remains at 0%, indicating strong downward momentum and that a recent low has likely been established within the current trend.
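For readers unfamiliar with the indicator, Aroon measures how recently the highest high and lowest low occurred within a lookback window. The sketch below assumes the commonly used 14-period setting, which the article does not state; under that assumption, a reading of 92.86% corresponds to a lowest low set one bar ago (13/14), and 0% to a highest high set 14 bars ago. The function names are illustrative.

```python
def periods_since_extreme(values, pick):
    """Bars elapsed since the most recent occurrence of the extreme value."""
    extreme = pick(values)
    for age, value in enumerate(reversed(values)):
        if value == extreme:
            return age


def aroon(highs, lows, period=14):
    """Return (aroon_up, aroon_down) as percentages for the latest bar."""
    if len(highs) != len(lows) or len(highs) < period + 1:
        raise ValueError("need at least period + 1 bars of highs and lows")
    # The window covers the current bar plus the `period` bars before it.
    window_highs = highs[-(period + 1):]
    window_lows = lows[-(period + 1):]
    since_high = periods_since_extreme(window_highs, max)
    since_low = periods_since_extreme(window_lows, min)
    aroon_up = 100.0 * (period - since_high) / period
    aroon_down = 100.0 * (period - since_low) / period
    return aroon_up, aroon_down
```

Charting platforms differ slightly in how they define the window, so values computed this way may not match a given platform exactly.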

For now, the immediate support level for Hedera price lies at $0.087, which aligns with the 23.6% Fibonacci retracement level. A drop below this level could increase selling pressure and open the door for a move toward lower support zones.

Disclosure: This article does not represent investment advice. The content and materials featured on this page are for educational purposes only.

Inside Coinbase’s push to bring prediction markets on chain and on venue

Coinbase is folding regulated prediction markets into its “everything exchange” vision, using The Clearing Company to clear on‑chain event contracts beside crypto and stocks.

Summary

- Coinbase is moving prediction markets from a Kalshi integration toward an on‑chain, in‑house stack after acquiring The Clearing Company, aiming to keep them inside a regulated perimeter.

- In Europe, financial‑underlying prediction markets fall under MiFID while politics and sports are pushed into fragmented national gambling regimes, leaving most current on‑chain volume in regulatory limbo.

- Coinbase is already experimenting with cross‑margining via perpetual futures and sees long‑term scope to extend collateral efficiency across prediction markets, crypto, and tokenized assets on a single venue.

Coinbase’s push to become an “everything exchange” will increasingly run through regulated prediction markets rather than just spot crypto, according to Côme Prost‑Boucle, the exchange’s head of international listings, speaking with crypto.news at ETHGlobal Cannes on March 31.

For Prost‑Boucle, prediction markets are not a novelty bolt‑on. They sit at the core of Coinbase’s plan to become what he calls an “everything exchange.” “The whole strategy is pretty simple,” he told crypto.news.

“We want to build the everything exchange with Coinbase, meaning that we want to bring under one regulated umbrella all of the asset classes that you can imagine and offer this to both our retail customers and our institutional customers.”

Coinbase leading the way to become an ‘Everything Exchange’

That umbrella now stretches beyond spot crypto into derivatives, options, tokenized stocks and equities, token sales and, crucially, event‑based contracts that let users trade on future outcomes. “We have this whole breadth of different products that we’re bringing into one umbrella, which is Coinbase,” he said. “Our goal is to push this to as many users as possible across the world, and the reaction has been pretty tremendous so far.”

Coinbase’s debut in prediction markets was deliberately conservative. The initial launch in the U.S. leaned on Kalshi, the CFTC‑regulated event‑contract venue, giving the product an immediate regulatory backbone but also clear constraints on geography and design.

“The first iteration of the product is available in the US and in a couple of regions, but for instance, it’s not available in Europe because of lack of regulatory clarity,” Prost‑Boucle said. That version effectively pipes Kalshi’s markets into the Coinbase interface, letting users trade small‑ticket contracts on elections, sports, macro data and other real‑world events while staying inside a U.S. event‑contract framework.

The second phase is more aggressive. In December, Coinbase agreed to acquire The Clearing Company, a specialist prediction‑market clearing startup with roots in the existing event‑contract ecosystem.

Prost‑Boucle referred to it in the interview as “a company called The Clearing House,” but the strategic intent is clear. “The goal is for us to bring these capacities internally so that we can develop this product on chain and we can develop with the DNA that we have to bring all asset classes on chain,” he said. In effect, Coinbase is moving from renting regulated rails to owning the clearing and risk stack, and then pushing more of the lifecycle on‑chain while staying within the event‑contract perimeter. That stands in contrast to crypto‑native venues such as Polymarket, which prioritized unconstrained on‑chain liquidity first and only later began to grapple with regulatory structure.

Prediction markets dominate conversation at ETHGlobal

If prediction markets are to sit alongside crypto, derivatives and tokenized stocks in a single app, collateral efficiency will determine whether users actually route meaningful size through Coinbase. Here, Prost‑Boucle says institutional desks are already applying pressure. “That’s also something that institutional clients have been pushing for,” he noted when asked about cross‑margining prediction markets with other Coinbase products. “We’re currently doing cross‑margining for our perpetual futures product, and that’s something that our institutional clients have been craving,” he added, pointing to demand for “always‑on exposure possibilities, weekend hedging, all of this that perpetual futures have as internal features.” The logical goal is to have a single collateral pool backing BTC perpetuals, tokenized equity and a portfolio of geopolitical or macro event contracts, rather than trapping capital in isolated silos across venues. “At the moment we’re working on this product,” he said of cross‑margining, “but I think that’s a good vision for us in the longer term—to have cross‑margining across the different asset classes, I guess.”

The main structural obstacle to that vision is Europe. “Prediction markets in the EU are pretty difficult to apprehend because there’s no unified regulatory framework,” Prost‑Boucle said. “It all depends on what you have as an underlying asset.” He draws a sharp line that mirrors emerging legal commentary: a contract on the future price of Bitcoin is treated as a financial derivative under MiFID, while a contract on an election or football match is pushed into gambling. “If the contract lies on a financial underlying asset, that would be regulated by MiFID,” he explained. “But all of the other classes, where currently all of the volumes are—on politics, on sports, this would be regulated under gambling laws in Europe.”

That split leaves most of today’s on‑chain volume—heavily skewed toward politics and sports—in regulatory limbo from the perspective of a regulated exchange. Any operator that wants to offer political or sports markets across the bloc has to navigate a patchwork of national gambling regimes, each with its own licensing, consumer rules and, in some cases, state monopolies. “It means you would have to go for every single European gambling law, because there is no unified regulatory framework,” Prost‑Boucle said. “These laws are pretty national, they’re quite country‑specific and they’re quite hard to get.” Despite that, he is not writing off the region. “I guess we’re still hopeful that at some point we’re going to have regulatory clarity on prediction markets and a better structure in Europe that enables this type of contract to flourish as well,” he said.

Beyond trading revenues, Coinbase clearly sees prediction markets as an information layer that competes with polling, research, and even traditional media. Prost‑Boucle points to cases in the U.S. where broadcasters are already embedding live market odds, with CNBC, CNN, Dow Jones and other outlets recently integrating Polymarket odds into the ‘traditional’ news cycle.

That, in turn, brings the problem of truth into focus. Once markets start pricing geopolitics, conflicts, and leadership changes, disputes over what actually happened can become payout disputes. That means the oracles used to resolve contracts may face increasing scrutiny not only from bettors but also from regulators.

Prost‑Boucle argues that most of the damage begins with poor contract design. “It’s crucial when you enter a contract to look at what the event criteria are,” he said. “Obviously you want to diversify sources of truth and have kind of fixed criteria to make sure there is no ambiguity when an event like this happens,” he added. Asked whether AI agents could help by aggregating across outlets and delivering a consolidated verdict, he is open but cautious. “Potentially, AI could be helping with sorting out across different sources‑of‑truth venues and making sure that we have a consolidated view and a fixed view that is not biased by any specific media or even a group of people,” he said.

For now, Coinbase’s approach is less about chasing the wildest version of prediction markets and more about proving they can live inside the same rule‑set as everything else on the platform: keep them in a regulated perimeter, pull clearing and risk in‑house via The Clearing Company, and wire the whole thing into a broader multi‑asset venue where collateral actually earns its keep across products. As Brian Armstrong has put it in other contexts, Coinbase wants to be “the most trusted bridge” into the crypto economy, and in that frame, everything else—from MiFID hair‑splitting in Brussels to the next generation of AI‑driven oracles—is just another set of constraints to engineer around, not a reason to sit out a market.


CoinShares Stock Debuts on Nasdaq After $1.2B SPAC Deal

CoinShares, a European-based digital asset manager, is slated to make its US public markets debut today following the completion of a special purpose acquisition company (SPAC) merger, highlighting the crypto industry’s deepening ties with public markets.

The company announced Wednesday that it had finalized a previously announced business combination with Vine Hill Capital Investment Corp., resulting in the formation of a new holding entity, CoinShares PLC. The combined company begins trading on the Nasdaq on Wednesday under the ticker symbol CSHR.

The transaction, first unveiled in September, values CoinShares at approximately $1.2 billion and includes a $50 million capital commitment from institutional investors.

Although the Nasdaq debut marks CoinShares’ entry into US public markets, the company was already publicly traded in Europe prior to the listing.

A US listing aims to attract institutional capital, wider analyst coverage and increased visibility, while positioning CoinShares to expand its footprint in the world’s largest financial market. The move also comes as the regulatory backdrop for digital assets in the United States continues to evolve.

CoinShares manages more than $6 billion in assets and is one of Europe’s largest crypto-focused investment firms. It is best known for its crypto exchange-traded products (ETPs), which are listed on European exchanges.

A tougher backdrop for crypto stocks

The backdrop for digital asset companies has shifted dramatically since September, when CoinShares’ SPAC deal was first announced.

The exchange-traded fund issuer’s CoinShares Bitcoin Mining ETF (WGMI) is down more than 22% in the last six months, Yahoo Finance data shows.

The crypto market has since lost more than half its value, following a broad correction in digital asset prices, declining trading volumes and the fallout from the Oct. 10 crypto liquidation event that triggered widespread deleveraging, alongside a more volatile environment for capital raising and investors.

Crypto-linked equities have been among the hardest hit. Companies such as Coinbase, Gemini and Figure Technologies are down sharply this year, while Circle has bucked the trend amid continued growth in stablecoins.

However, analysts at Bernstein don’t expect the downturn to persist. In a recent note, they said crypto-related stocks could be nearing a bottom heading into first-quarter earnings, which are widely expected to reflect weak performance.


S&P Dow Jones Indices and Kaiko Bring iBoxx Treasury Index On-Chain via Canton Network

At Kaiko’s Cannes conference, S&P DJI and Kaiko unveiled plans to tokenize the iBoxx U.S. Treasury index on Canton, turning it into programmable on-chain IP.

Summary

- iBoxx U.S. Treasuries is being brought natively on Canton alongside DTCC’s on-chain Treasuries to support index-linked product issuance on the same infrastructure.

- S&P will distribute the index as a smart contract token embedding full index data, IP rights, licensing terms, fees and access controls.

- The model treats index data “like a financial asset,” enabling traceability, automated fee collection and reusable, scalable licensing on-chain.

At the Agora Kaiko conference in Cannes on March 31, S&P Dow Jones Indices’ Chief Product and Operations Officer Cameron Drinkwater and Kaiko CEO Ambre Soubiran unveiled a partnership to tokenize one of S&P’s flagship fixed-income benchmarks, the iBoxx U.S. Treasury index, on the Canton network, turning the index itself into a programmable on-chain IP product rather than a simple price feed.

New Canton, Kaiko and S&P DJI partnership announced

Kaiko CEO Ambre Soubiran announced that “Kaiko and S&P DJI, we’ve been partnering now in tokenizing one of the biggest S&P benchmarks, the iBoxx index, and bringing that onto the Canton Network.” The move follows DTCC’s decision to bring U.S. Treasuries natively onto Canton (CC), which Drinkwater described as “a natural opportunity for us to bring the iBoxx Treasury index also on Canton to give product developers or counterparties a tool to use with the physical underlying also on that chain.”

Soubiran emphasized this is “not just publishing the price of the benchmark on the network.” Instead, S&P is “actually creating a smart contract token that contains all of the index data,” so that clients receive “a smart contract containing the index data but also explicitly having licensing and fees and access control all embedded into a smart contract.” She framed it as “more about a distribution play rather than a data play,” delivering the full index product on-chain.

Drinkwater said choosing iBoxx was a “total no-brainer” because with DTCC putting U.S. Treasuries on Canton, “you have the underlying” and “a very active kind of treasury institutional trade landscape on Canton” plus “real demand for the iBoxx Treasury index to be used as a underlying for product issuance on the Canton chain.”

On-chain IP and data-as-asset

For S&P, tokenizing indices as full IP products changes how licensing and economics work. Drinkwater argued that “one of the great advantages for an IP issuer like ourselves on chain is we actually have better auditability, visibility in how IP is being used, reporting on that use case and… instantaneous reporting and potentially commercial exchange based on that smart contract.” In traditional markets, he noted, S&P is “dependent on delayed reporting on volumes,” often disputed, followed by “multiple months on contract settlement,” whereas on chain “the whole timeline pulls in quite considerably” with “far less opportunity for dispute.”

Soubiran linked this to a broader shift: “the more we bring capital markets applications on chain, the more we bring data on chain, especially private and IP protected data, the more we need to treat data like a financial asset.” Blockchain infrastructure, she said, enables “traceability of data and treat data like a financial asset and trace where that data goes,” which is “great from a IP protection standpoint” and for “programmatically” managing monetization of IP in financial products.

Drawing on Kaiko’s own index business, she noted that many index fee arrangements are tied to AUM and turnover, with end-of-year reconciliations still “quite heavily manual.” Moving indices on-chain allows firms to “on chain verify what is the AUM related to the financial product that is linked to your index or your benchmark” and enable “daily fee collection based on daily turnover.” It is, she said, “not necessarily a novel product, it’s just a novel way of distributing” existing benchmarks.
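The daily fee collection Soubiran describes can be sketched as a simple accrual on each day's observed AUM. This is a minimal illustration only: the fee rate, the 365-day convention and the AUM figures are assumptions, and the sketch accrues on AUM alone rather than on turnover.

```python
def daily_index_fee(aum_usd: float, annual_fee_bps: float, day_count: int = 365) -> float:
    """Fee accrued for one day on the AUM observed that day."""
    return aum_usd * (annual_fee_bps / 10_000) / day_count


# Hypothetical daily AUM readings for a product linked to the index.
daily_aum = [250_000_000.0, 252_500_000.0, 248_000_000.0]

# Hypothetical licence fee of 3 basis points per year.
fees = [daily_index_fee(aum, annual_fee_bps=3.0) for aum in daily_aum]

for aum, fee in zip(daily_aum, fees):
    print(f"AUM ${aum:,.0f} -> fee ${fee:,.2f}")
print(f"Total accrued: ${sum(fees):,.2f}")
```

Accruing each day against a value that can be checked on-chain is what would replace the manual end-of-year reconciliation mentioned above.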

Composability, evergreen contracts and Canton

Both speakers highlighted composability as a key benefit of this design. “The idea of tokenizing an index is for product issuers… to consume that index product natively on chain and wrap it into a index-linked financial product,” Soubiran explained, calling the application of composability to data products “extremely new and powerful.”

Drinkwater described the structure as layered: “you can think of the token being the index and then the smart contract being wrapped around it and that’s the use case, the use case specific terms and conditions, audit rights, etc.” That wrapper “can be tailored to whatever use case clients come to us for, but then it’s repeatedly usable. It’s evergreen. It’s on chain.” Compared with today’s model, where “clients have to come to us for every use case, it’s a new schedule on their MSA,” he said this offers “a very frictionless process of getting new product issued on chain, massively speeding up timelines,” and a “reusable infrastructure that really benefits all parties.”
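The layered structure Drinkwater describes, a token holding the index with a use-case wrapper around it, can be pictured with a short sketch. It is written in plain Python rather than a smart-contract language, and every class name, field and value is hypothetical; it is not S&P's or Kaiko's actual contract design.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class IndexToken:
    """The index itself: an identifier plus published levels (hypothetical fields)."""
    index_id: str
    levels: dict  # date string -> index level


@dataclass
class LicenseWrapper:
    """Use-case-specific terms wrapped around the token (hypothetical fields)."""
    token: IndexToken
    use_case: str
    licensees: set = field(default_factory=set)
    usage_log: list = field(default_factory=list)

    def read_level(self, party: str, date: str) -> float:
        # Access control: only licensed parties may consume the index data.
        if party not in self.licensees:
            raise PermissionError(f"{party} is not licensed for {self.use_case}")
        # Audit trail: every read is recorded for reporting back to the issuer.
        self.usage_log.append((party, date))
        return self.token.levels[date]


# Made-up identifier and level, for illustration only.
token = IndexToken("DEMO_TREASURY_INDEX", {"2026-03-31": 100.0})
wrapper = LicenseWrapper(token, "index-linked note", licensees={"issuer_a"})
print(wrapper.read_level("issuer_a", "2026-03-31"))
```

The same token can be reused under several wrappers, each carrying different terms, which is the sense in which the arrangement is described as reusable and evergreen.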

On why Canton matters, Drinkwater pointed to its ability to straddle public and private workflows. On fully public chains like Ethereum, “that reporting is going to be public,” which does not fit “a lot of our use cases” such as “private exchange swaps… between institutions and they don’t want that public.” Canton’s setup, he said, lets reporting be “private when it needs to be private, public where it can be public, but back to us nonetheless,” unifying reporting across use cases in a way that “in TradFi is not the case.”

Soubiran framed the broader aim as servicing “almost a new addressable market that is your existing clients moving to an infrastructure that is programmatic and a little bit more disintermediated,” stressing that “a lot of great things exist in our current financial system,” but that the opportunity lies in “making things more automated… more programmatic in the transfer of information, the transfer of data.”

S&P’s broader digital roadmap

Drinkwater placed the Kaiko and Canton partnership within S&P’s longer digital asset strategy. He recalled that SPY “was not SPY for the first decade of its life, but it flag planted,” and said S&P understands “the power of moving first and establishing real use cases in new technology.” With a brand “known and trusted by institutions and retail alike,” S&P wants “to move first and early when we have conviction in new products and new technologies because we need our brand to be firmly planted there as an established entity.”

Over the last year, he said, S&P has “very selectively” chosen “high quality players as partners and putting IP on chain where we saw very discrete and tangible use cases,” citing the on-chain S&P 500 token with Centrifuge and the Digital Markets 50 index with Dinari that bundles blockchain-exposed equities and cryptocurrencies in a structure “hard to replicate in TradFi.” Even so, he signaled he is “most excited about the innovation that we’re pushing today” with tokens wrapped in smart contracts that are “tailored to use cases, but extensible and evergreen on chain,” because this “unlocks so many use cases and scalability of our IP.”

Who is Kevin Warsh, Trump’s Pick for the Federal Reserve?

The US Senate could soon hear testimony to confirm financier Kevin Warsh as the new chair of the Federal Reserve.

Warsh, who previously served on the Fed’s Board of Governors from 2006 to 2011, has criticized the central bank’s policies under current chair Jerome Powell. Warsh has called for “regime change” and lower interest rates.

Regarding crypto, Warsh has a somewhat nuanced approach. He hails Bitcoin as a sustainable store of value, but claims it doesn’t function as money.

Lower interest rates and a fairly open attitude toward crypto could be good news for the prices of digital assets, which most investors perceive as risk-on. But even if Warsh is confirmed, there’s no guarantee he’ll effect the changes expected.

Warsh wants to lower Fed interest rates, but can he?

Warsh, a graduate of Stanford and Harvard, started his career at Morgan Stanley, where he eventually became a VP and executive director. He then served as an executive secretary of the White House National Economic Council under President George W. Bush.

Bush nominated him to the Board of Governors of the Federal Reserve in 2006, where his hawkish views on inflation often differed from those of his colleagues. He was critical of the Fed’s aggressive use of its balance sheet, which he said led to a period of “monetary dominance” that artificially depressed rates.

Some of this appears to have changed in recent years. In a November 2025 op-ed for the Wall Street Journal, Warsh criticized Powell’s leadership at the Fed, claiming that “inflation is a choice, and the Fed’s track record under Chairman Jerome Powell is one of unwise choices.”

He said “credit on Main Street is too tight” and that the Fed’s balance sheet, which is “bloated” due to past crisis-management efforts, “can be reduced significantly.”

“That largesse can be redeployed in the form of lower interest rates to support households and small and medium-size businesses,” he said.

Plans for cutting interest rates come at an economically fraught time. The US and Israel’s joint attack on Iran, which could soon escalate into an invasion if US President Donald Trump so decides, has wreaked havoc on oil prices.

Rising oil prices have a direct effect on the key inflation metrics the Federal Reserve uses when considering rate changes. This could put a damper on any plans for rate cuts, at least under Powell.

Warsh told Barron’s that the “core theory of inflation that the Fed is using” is “mistaken.” He said that “we need to fundamentally rethink macro, which is a fundamental rethink of the core economic models that the Fed is using.”

In his accounting, rising wages and commodity prices are not to blame for inflation. Rather, “at the core, I think inflation comes about when the government spends too much and prints too much.”

Returning to monetarism, as well as dumping some of the debt held by the Federal Reserve, could help address inflation concerns, in his view.

Bankers and former Bush administration officials have congratulated Warsh on the nomination. Former US Secretary of State Condoleezza Rice said the Fed would “benefit from his steady, principled leadership.”

“He understands the central bank’s key role for the United States and our allies around the world,” she said.

Bank of England Governor Andrew Bailey has also welcomed Warsh’s nomination. He said that he knew both Powell and Warsh well, and that “they’re both very qualified.”

Qualifications aside, Warsh may find it difficult to enact his preferred policies.

Roger W. Ferguson Jr., the Steven A. Tananbaum Distinguished Fellow for International Economics at the Council on Foreign Relations (CFR), and Maximilian Hippold, a research associate for international economics at CFR, wrote that Warsh won’t revolutionize the Fed.

They said that the chair alone does not make interest rate decisions. “They are determined by the Federal Open Market Committee (FOMC), a twelve-member body that includes seven Fed governors and five regional Fed presidents.” The chair can’t change policy without convincing a majority.

Others argue that Warsh’s interest in lowering interest rates is a recent pivot and may not be a core conviction around which he will focus central bank policy. A December 2025 analysis from Deutsche Bank noted Warsh’s response to the global financial crisis in 2008, when he was a Governor at the Fed.

“His views while he was a Governor around the GFC [global financial crisis] at times skewed more hawkish than his colleagues,” the report read. “Although Warsh has argued for lower rates recently, we do not view him as structurally dovish.”

They further questioned Warsh’s plans to lower interest rates and cut assets on the Fed balance sheet. “This trade-off would only be feasible if regulatory changes are made that lower banks’ demand for reserves. While several Fed officials have made this argument recently, including Vice Chair of Supervision Bowman and Governor Miran, it is not obvious these changes are realistic in the near-term.”

“The chair has just one vote amongst a particularly divided committee.”

Warsh’s nomination and Fed independence

Commentators have also drawn attention to Warsh’s connection to the Trump administration. Warsh’s father-in-law, Ronald Lauder, is a classmate of Trump and a major donor to his political campaigns.

His relatively recent opinions on low interest rates also make him uniquely suited to the role, at least in Trump’s eyes. Ferguson and Hippold wrote, “Trump believes he has found a successor who will align with his economic priorities in Warsh.”

The president has long bemoaned Fed officials who supposedly promise rate cuts but then raise rates once in office. “It’s too bad, sort of disloyalty, but they got to do what they think is right,” he said in a speech at Davos last year.

Trump has long pushed for lower interest rates, claiming that they are needed to spur his economic development plans. Powell’s refusal to acquiesce to the White House’s request led to political scandal.

Last year, the Department of Justice (DoJ) opened a criminal investigation into Powell, alleging that he misappropriated billions of dollars for new offices for the Federal Reserve.

A federal judge recently quashed the DoJ’s subpoenas in the case. Judge James Boasberg wrote in a memorandum opinion, “A mountain of evidence suggests that the dominant purpose is to harass Powell to pressure him to lower rates. For years, the President has publicly targeted Powell because the Fed is not delivering the low rates that Trump demands.”

Regarding his pick, Trump said in a January press event in the Oval Office that it would be “inappropriate” to ask Warsh about his stance on interest rates. “I want to keep it nice and pure, but he certainly wants to cut rates, I’ve been watching him for a long time.”

Just a couple of weeks later, in an interview with NBC, Trump said Warsh understands that he wants to lower interest rates. “But I think he wants to anyway. If he came in and said ‘I want to raise them’ […] he would not have gotten the job.”

But Warsh hasn’t “gotten the job,” at least not yet. He will face tough questioning from Democrats on the Senate Banking Committee, possibly as soon as April 13.

In a letter lambasting Warsh’s role in bailing out banks in 2008, Senator Elizabeth Warren, who serves on the committee, said, “I have no doubt that you will serve as a rubber stamp on President Trump’s Wall Street First agenda.”

Warren expected written responses to this, and to Warsh’s opinion about Trump’s “witch hunts” against Powell and Fed Governor Lisa Cook, by April 2.

Magazine: Nobody knows if quantum secure cryptography will even work

Crypto World

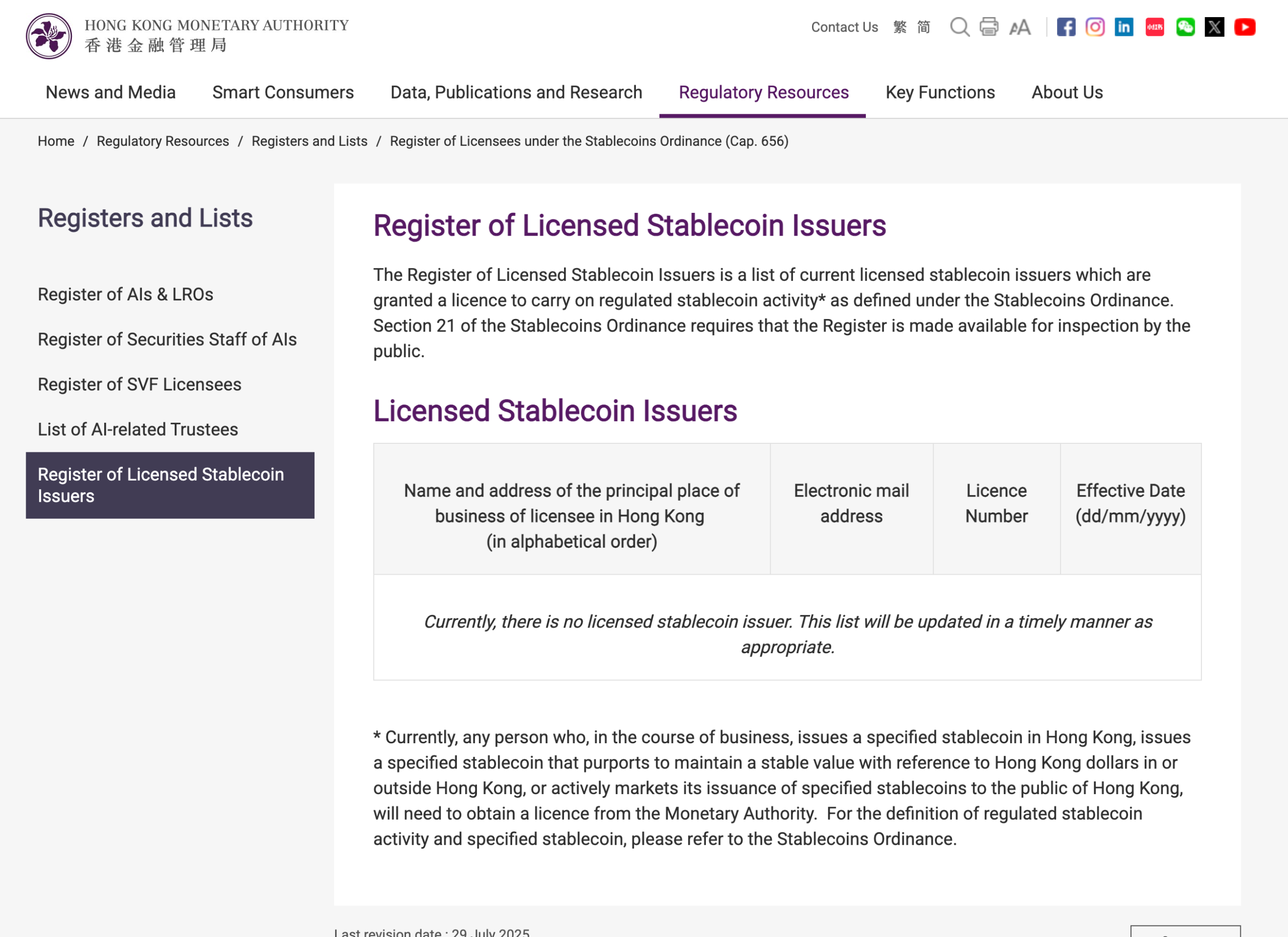

Hong Kong Misses March Deadline for Stablecoin Licences

Hong Kong’s first stablecoin licences failed to materialize by the expected end of March target, with the HKMA saying only that it is still advancing the process.

Hong Kong has missed an earlier end of March target for awarding its first stablecoin licences, with the Hong Kong Monetary Authority saying only that the licensing process is advancing and decisions will be announced shortly.

A spokesperson for the Hong Kong Monetary Authority (HKMA) told Cointelegraph that the HKMA is “actively taking forward the licensing matter and will announce further details in due course,” without offering a revised timetable.

The HKMA’s public register still showed no licensed stablecoin issuers at the time of writing.

The March timetable had been set out earlier by HKMA chief executive Eddie Yue, who reportedly told lawmakers in February that only a very small number of issuers would be approved initially and that reviews were focusing on use cases, risk management, anti-money laundering controls and backing assets.

HKMA misses March stablecoin target

Earlier reports indicated that global banking giants HSBC and a Standard Chartered-backed venture were among the frontrunners to receive approvals in the initial cohort, although the HKMA did not confirm the names of any successful applicants.

Hong Kong’s caution is partly a function of how strict the regime is. Cointelegraph previously reported that the city’s stablecoin framework requires issuers to fully back tokens with high-quality liquid reserves, process redemptions within one business day and maintain a physical presence in Hong Kong, alongside broader Know Your Customer and transaction monitoring controls.

The missed deadline comes as Hong Kong places stablecoin regulation at the heart of its strategy to become a global crypto and fintech hub.

China pressure clouds Hong Kong rollout

Cointelegraph previously reported that major fintech players, including Ant International, were preparing to seek Hong Kong stablecoin licences as the city rolled out its new regime.

In October 2025, the FT reported that Ant Group and JD.com had paused their Hong Kong stablecoin plans after regulators in mainland China, including the People’s Bank of China and the Cyberspace Administration of China, raised concerns about privately controlled digital currencies.


Will BTC Price Hit $80K?

Michael Saylor’s Strategy (MSTR) looks set to restart its Bitcoin (BTC) accumulation engine after a short pause, with its STRC preferred stock likely funding fresh crypto purchases this week.

Key takeaways:

- Strategy may purchase at least $76.25 million in Bitcoin this week.

- Combined with a supportive technical setup, that buying could help Bitcoin rise to $80,000 in April.

Strategy may buy at least 1,111 BTC this week

On Tuesday, STRC closed at $100.02, just above its $100 par value. Trading at or above par gives Strategy room to issue new shares, raise fresh capital and deploy the proceeds into Bitcoin.

Estimates from STRC.LIVE suggest Strategy had raised enough by Tuesday’s close to fund the purchase of more than 1,085 BTC, with the weekly total rising to over 1,111 BTC. That is equivalent to around $76.25 million.

This is a shift from the previous week, when STRC traded mostly below par and generated no estimated BTC purchases.

As of late March, the company held 762,099 BTC at an average acquisition price of about $75,694, according to its latest filings.
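The figures above can be cross-checked with a little arithmetic. The sketch below derives the per-coin price implied by the estimate and the blended average cost if a purchase of that size went through. The inputs are the article's own numbers, but the purchase is only an estimate, so the results are illustrative rather than reported figures.

```python
# Figures quoted in the article; the purchase itself is only an estimate.
held_btc = 762_099
avg_cost_usd = 75_694.0
est_new_btc = 1_111
est_new_usd = 76_250_000.0

# Price per coin implied by the estimated spend and coin count.
implied_price = est_new_usd / est_new_btc


def blended_average(held, avg_cost, new_units, new_spend):
    """Weighted-average cost per coin after adding a new purchase."""
    return (held * avg_cost + new_spend) / (held + new_units)


new_avg = blended_average(held_btc, avg_cost_usd, est_new_btc, est_new_usd)

print(f"Implied purchase price: ${implied_price:,.0f}")
print(f"Blended average cost:   ${new_avg:,.2f}")
```

Because the implied price sits below the reported average acquisition price, a purchase of this size would pull the average down, though only marginally given the scale of the existing holdings.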

BTC rebounds as Strategy’s buying window reopens

The renewed buying window has coincided with a bounce in Bitcoin prices.

Since Tuesday, BTC/USD has climbed more than 5%, briefly reaching nearly $69,300. The move mirrors earlier gains seen during periods when Strategy was actively raising capital through STRC to buy Bitcoin.

One example came in the week ending March 15, when Bitcoin rose more than 10% despite weak broader risk sentiment. Over the same period, Strategy purchased 22,337 BTC worth about $1.57 billion.

The opposite dynamic emerged afterward. Bitcoin fell 14.55% over the next two weeks, roughly aligning with Strategy’s pause in purchases as STRC slipped below its $100 par value.

On March 23, Strategy unveiled a $44.1 billion capital-raising capacity to buy more Bitcoin via the sales of STRC and other preferred stocks, indicating that it would remain a meaningful source of Bitcoin demand in the coming months.

“Stretch Dividend Rate maintained at 11.50% for April 2026. $STRC,” Michael Saylor (@saylor) posted on April 1, 2026.

Bitcoin eyes $80K after bouncing from flag support

From a technical standpoint, Bitcoin’s rebound began after it retested the lower boundary of its prevailing bear flag pattern as support.

BTC could advance toward the flag’s upper trendline near $80,000 in April if the recovery gains further traction, particularly if boosted by renewed Strategy buying and signs of easing Iran war tensions.

The $80,000 upside target also aligns with the 50-period exponential moving average on the three-day chart, making the area a key near-term resistance zone.


Conversely, Bitcoin risks losing the flag’s lower trendline support and confirming the pattern’s typical bearish breakdown if those supportive catalysts fade.

In that scenario, the measured downside target would come in near the $49,000–$50,000 zone. That aligns with the downside projections shared by multiple analysts in the past.

This article is produced in accordance with Cointelegraph’s Editorial Policy and is intended for informational purposes only. It does not constitute investment advice or recommendations. All investments and trades carry risk; readers are encouraged to conduct independent research before making any decisions. Cointelegraph makes no guarantees regarding the accuracy or completeness of the information presented, including forward-looking statements, and will not be liable for any loss or damage arising from reliance on this content.


Franklin Templeton Expands Crypto Arm With CoinFund Deal

Global asset manager Franklin Templeton is set to expand its crypto footprint by acquiring a spinoff of the crypto-native investment firm CoinFund.

Franklin Templeton said Wednesday it plans to acquire 250 Digital, a CoinFund spinoff that runs liquid crypto investment strategies, expanding the asset manager’s digital asset business. The deal will form part of a new unit called Franklin Crypto once it closes.

The move follows CoinFund’s decision earlier this year to spin out its liquid strategies business into 250 Digital as the company sharpened its focus on venture investing.

Christopher Perkins will lead the new Franklin Crypto, and Seth Ginns will serve as chief investment officer alongside Franklin Templeton digital assets veteran Tony Pecore, as the company broadens its crypto investment platform for institutional clients.

The deal will incorporate BENJI tokens, which represent ownership shares in the Franklin OnChain US Government Money Fund (FOBXX), a regulated money market fund tokenized by Franklin Templeton in 2021.

Acquisition involves all liquid strategies previously run by CoinFund

Franklin said the transaction, whose terms were not disclosed, includes the 250 Digital investment team and all liquid cryptocurrency strategies previously run by CoinFund, and that it will also invest in those strategies as part of the agreement.

The transaction is expected to close in the second quarter of 2026, subject to the execution of definitive transaction agreements, client consents and other customary closing conditions.

Franklin Templeton’s digital asset arm manages around $1.8 billion in assets and is a major institutional player in the crypto industry, where it has been building a presence since 2018.

The company is known for being one of the first to launch a US-listed spot Bitcoin ETF alongside other major asset managers such as BlackRock in 2024.


The acquisition comes during a prolonged slump in the crypto market, with Bitcoin down around 45% from its peak above $126,000 recorded in October 2025.

However, Franklin Templeton says the environment is attracting talent and creating opportunities to build long-term infrastructure.

Franklin’s head of innovation, Sandy Kaul, told The Wall Street Journal the recent market selloff helped create an opening to expand.

“This big selloff that we had in the crypto markets is creating a very unique opportunity that really made us all decide that this is the right time to pull the trigger,” Kaul said.


Ripple Brings Crypto Capabilities to Treasury Management Systems

Ripple has added digital asset capabilities to its treasury management platform, allowing corporate finance teams to hold, track and manage cryptocurrencies and fiat balances within a single system, the company said.

According to a company announcement, the update introduces Digital Asset Accounts and a unified dashboard that aggregates balances across bank accounts, custody providers and onchain wallets, giving treasury teams real-time visibility into both cash and digital assets.

The system supports assets including XRP (XRP) and Ripple USD (RLUSD), with balances updated in real time and recorded alongside fiat transactions. APIs connect external custodians and sync activity into the platform, according to Ripple.

Ripple said the update embeds digital asset functionality directly into its treasury system, rather than requiring separate crypto platforms. The company said this could reduce reliance on manual reconciliation and fragmented reporting across banking and custody systems.

Mark Johnson, chief product officer at Ripple, told Cointelegraph the shift is about making digital assets “a core part of treasury operations,” allowing companies to manage them alongside traditional balances while enabling use cases such as stablecoin settlement and yield on idle cash.

The launch follows Ripple’s October acquisition of GTreasury for $1 billion. The company said the product is already live for customers in beta ahead of a broader rollout, with availability varying by jurisdiction depending on regulatory requirements and geography.

Digital assets move into financial infrastructure

A survey published by Ripple in March found that 72% of more than 1,000 global finance leaders believe companies must offer digital asset solutions to remain competitive, reflecting growing focus on custody, security and infrastructure.

The findings point to a broader shift from adoption to integration, as institutions look to incorporate these assets into existing financial systems rather than manage them separately.

That transition is driving increased activity across financial infrastructure. In July, Visa expanded its settlement platform to support additional stablecoins and blockchain networks, building on its initial use of USDC (USDC) for settlement in 2021.

Banks have also begun integrating tokenized money into their operations. In November, JPMorgan expanded access to its JPM Coin deposit token, allowing institutional clients to move funds on blockchain networks for real-time settlement.

Similar efforts are emerging in credit and capital markets. In October, Securitize and BNY said they would collaborate to bring instruments such as collateralized loan obligations onchain.

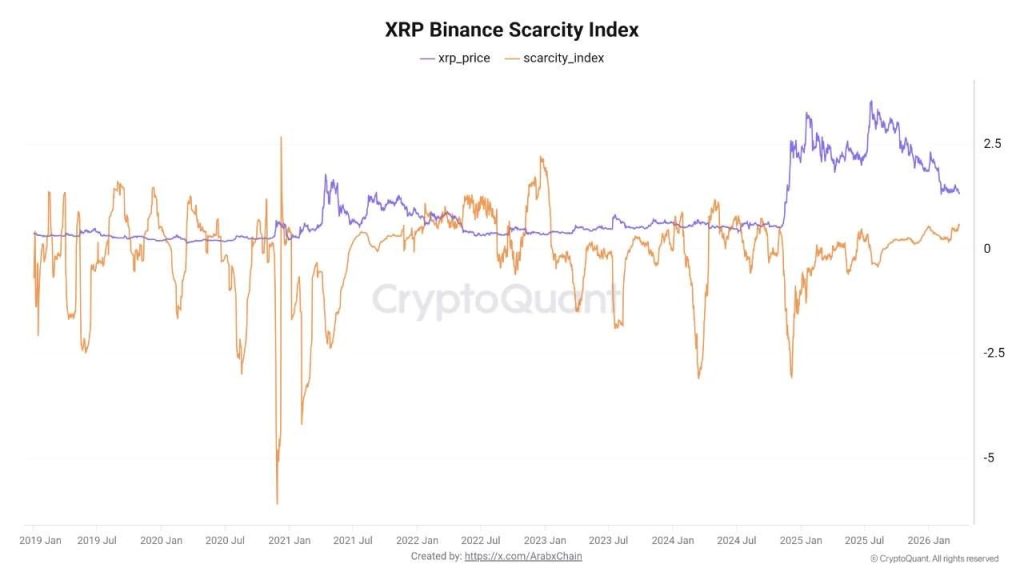

XRP Crypto Holders Pull Coins Off Exchanges, On-Chain Data Signals Supply Shock

XRP crypto is trading at $1.32, and while the price chart looks fragile, the on-chain data underneath it is telling a different story.

Chain’s scarcity indicator for XRP on Binance has hit 0.59 – its highest reading since 2024 – as coins leave exchanges at a pace that is mechanically compressing the available sell-side pool.

The magnitude is not subtle. On March 10 alone, approximately $738 million worth of XRP was withdrawn from major platforms in a single 24-hour window, described by analysts as one of the most substantial single-day net outflows recorded year-to-date.

February saw 7.03 billion XRP exit centralized exchanges entirely, with Binance accounting for roughly 3.38 billion of that volume. The supply mechanics are shifting, but the market hasn’t fully priced that in yet.

XRP Crypto Price Prediction: Can $1.40 Hold as Exchange Balances Drop?

XRP is pressing against the $1.40 resistance zone that analysts have flagged as the critical battleground. Below it, the $1.27–$1.30 band represents the next meaningful support cluster.

The RSI on the daily chart is hovering near 42 – not oversold, but not generating momentum signals either. The 50-day EMA sits just above spot price, capping intraday recovery attempts.

The on-chain divergence is the real tension here. Whale wallets accumulated approximately 40 million XRP in March even as US-listed XRP spot ETFs – now holding a combined $1.02 billion in assets – recorded $30.12 million in net outflows over the same period.

CoinShares data puts global XRP fund outflows at $130 million for the month. Institutional selling and whale buying are colliding directly at $1.40.

On the chart, $1.27 is the line that matters most. As long as price holds above it, the accumulation story stays intact, especially with whales stepping in and ETF flows starting to stabilize. That could open the door for a push through $1.40 and a move higher if momentum follows.

For now it is more of a tug of war. XRP is likely to chop between $1.27 and $1.40 while strong accumulation on one side meets lingering sell pressure on the other, with neither side fully in control yet.

If that $1.27 level breaks cleanly on volume, the setup falls apart quickly and opens the door to a deeper pullback. At that point price is no longer respecting the accumulation zone, and price action takes priority over any on-chain signal.

What makes this cycle different is the institutional layer. Players like Bitwise hold large amounts of XRP through ETF products, so even small outflows can hit the order book hard. Meanwhile, Ripple keeps building out its infrastructure in the background, which is the kind of long-term story bigger players tend to front-run.

The post XRP Crypto Holders Pull Coins Off Exchanges, On-Chain Data Signals Supply Shock appeared first on Cryptonews.
