Crypto World

BTC price falls with ETH, SOL while decred, AI-linked tokens advance: Crypto Markets Today

Decred (DCR), a token built for autonomy and decentralized governance, extended gains even as the broader market led by bitcoin struggled.

The token has risen 16% in the past 24 hours and now trades at $34.58, the highest since November, CoinDesk data show. It’s the best-performing top-100 token over the past four weeks, having gained more than 80% after a Feb. 8 change to its treasury rules.

Bitcoin, for its part, is facing renewed selling pressure, trading around $67,000 in a weak follow-through after bouncing to $70,000 on Wednesday. The cryptocurrency is down 2% on a 24-hour basis, with ether (ETH), XRP (XRP), solana (SOL), and the CoinDesk 20 Index (CD20) registering similar losses.

Market participants remain cautious and are continuing to seek put options, or downside protection, in bitcoin. Deribit said that ETF holders and corporate treasuries are buying put options at the $60,000 strike expiring in six to 12 months.

Analysts said institutional flows are improving but not yet decisive, and traders should avoid taking big risks.

“Long-term investors may consider staggered accumulation (SIP-style allocation) near support zones rather than deploying lump sums at resistance,” Vikram Subburaj, CEO of crypto exchange Giottus.com, said in an email to CoinDesk.

Derivatives positioning

- Cumulative crypto futures open interest (OI) has fallen back to recent multimonth lows of around $93.5 billion. The drop shows how quickly the optimism sparked by Wednesday’s bitcoin price bounce has fizzled out.

- Major tokens, including bitcoin and ether, have seen capital outflows from futures as notional OI declined more than their spot prices.

- The market-wide long-short ratio continues to show a dominance of shorts, or bearish bets.

- OI in tether gold (XAUT) dropped another 11%, extending the decline from early this week. Gold-linked assets seem to have fallen out of favor lately.

- Most large-cap tokens, including BTC and ETH, are again seeing negative perpetual funding rates. That means bearish plays are dominating the market once more.

- Participation in CME bitcoin futures is falling, as shown by open interest hitting the lowest levels this year.

- On Deribit, one-month bitcoin puts still trade at a 7% premium to calls in a sign of lingering concerns of further spot price declines. The same is true for ether.

- Bitcoin put spreads, a bearish strategy, accounted for 75% of the total block flow over 24 hours. In ETH’s case, traders chased put spreads and straddles (volatility strategies).

Token Talk

The DFINITY Foundation proposed burning 20% of cloud engine revenue, introducing a deflationary element tied directly to network usage for Internet Computer (ICP).

The remaining 80% of revenue would be routed to node operators, replacing fixed emissions with performance-based incentives. The idea is to make ICP’s token supply more responsive to real demand.
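As a rough illustration of the proposed split, the sketch below divides a period's revenue into a burned share and a node-operator share. The function name and the revenue figure are hypothetical; only the 20%/80% ratio comes from the proposal as described above.

```python
def split_revenue(revenue: float, burn_share: float = 0.20) -> dict:
    """Split revenue into a burned portion and a node-operator portion.

    burn_share is the fraction burned (0.20 per the proposal as reported);
    the remainder is routed to node operators.
    """
    if not 0.0 <= burn_share <= 1.0:
        raise ValueError("burn_share must be between 0 and 1")
    burned = revenue * burn_share
    return {"burned": burned, "node_operators": revenue - burned}


# Hypothetical figure: 1,000 units of revenue in one period.
example = split_revenue(1_000.0)
print(example)
```

Because the burned amount scales with revenue, higher network usage removes more tokens from supply, which is the link to demand that the proposal describes.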

ICP’s price moved up roughly 6% in the last 24-hour period, from around $2.41 to $2.56. It’s down from a high of $2.7 seen during the period. The price appears to be influenced not just by the foundation’s proposal, but also by Nvidia’s blowout earnings.

Those earnings boosted sentiment surrounding artificial intelligence-linked assets, with Nvidia CEO Jensen Huang saying AI is only getting better.

ICP, often marketed as a decentralized alternative to traditional cloud AI infrastructure, was among several AI-linked tokens, including render (RENDER) and bittensor (TAO), to benefit from renewed investor interest in the sector.

Trump-Linked Crypto Tokens Plunge, Renewed Backlash Erupts

Trump-associated crypto tokens have entered a volatile stretch, with both the Official Trump token (TRUMP) and the World Liberty Financial (WLFI) governance token sliding toward new lows as regulatory scrutiny and questions about tokenomics weigh on market sentiment. Data show the TRUMP token trading in the low single digits of dollars and WLFI hovering in single-digit cents, underscoring the fragility of celebrity-backed crypto ventures in a tightening regulatory climate.

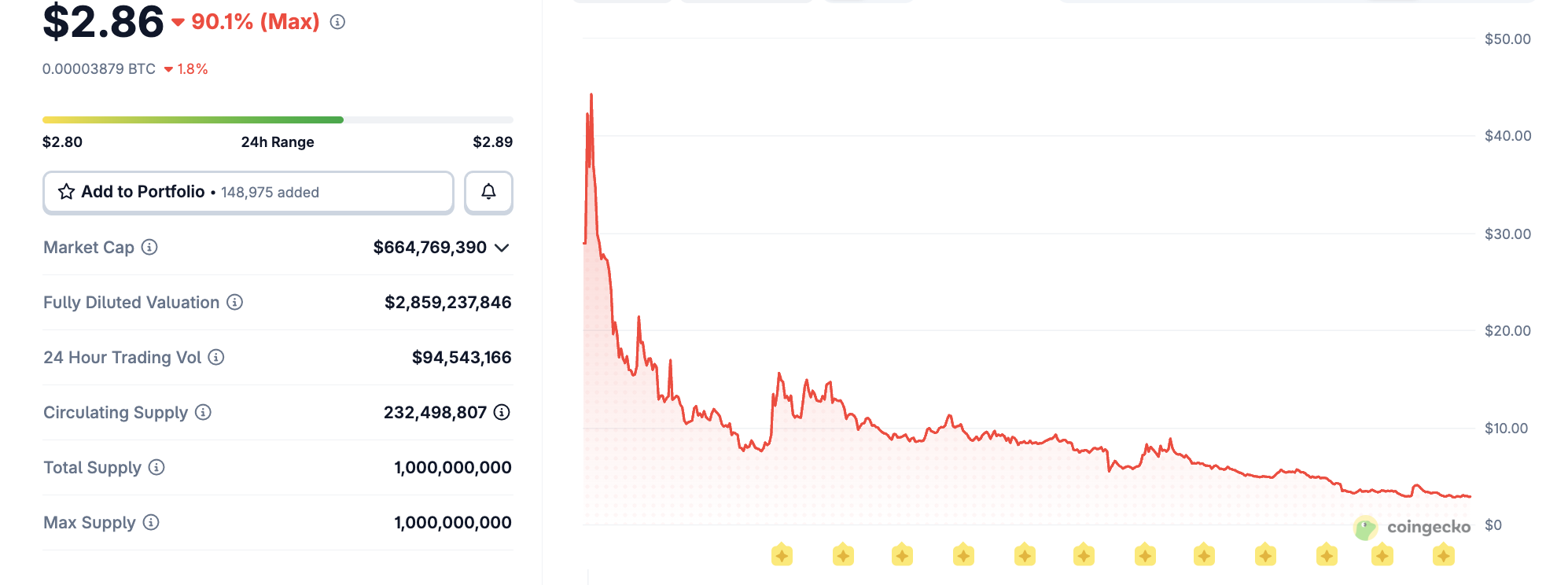

According to market data, the TRUMP memecoin fell to an all-time low near $2.73 in March 2026 and was trading around $2.86 at the time of reporting, per CoinGecko. The WLFI token, promoted as a DeFi governance token associated with a Trump-linked project co-founded by the president’s sons, tumbled to about $0.07, a drop of roughly 75% from its all-time high near $0.31 reached in September 2025. The TRUMP token had previously peaked above $73 in January 2025, illustrating the dramatic reversal from fevered debut to current caution.

Key takeaways

- TRUMP token prices reached an all-time high above $73 in January 2025, but by March 2026 had fallen to about $2.73, trading near $2.86.

- WLFI, the governance token tied to a Trump-linked DeFi project, hit an all-time low of about $0.07, after peaking around $0.31 in September 2025—roughly a 75% decline.

- The collapse in these meme coins underscores the volatility of celebrity-backed crypto projects and the risks of token economics that depend on ongoing hype rather than durable use cases.

- U.S. lawmakers intensified scrutiny of memecoin events tied to public figures, with a letter demanding details on an upcoming gala for TRUMP token holders and raising concerns about access arrangements that could benefit token holders and promoters.

- Analysts and academics cited the broader risk factors in meme-coin markets, including governance structure, conflicts of interest, and potential regulatory actions as pivotal in shaping near-term momentum.

Prices, hype, and a changed meme-coin landscape

The TRUMP memecoin, launched in January 2025 amid a wave of celebrity-backed tokens, rapidly drew attention from traders and media. Its price trajectory, soaring above $73 before retreating, followed a classic meme-coin arc: rapid inflows driven by social media attention, then a sharp correction as liquidity and speculative interest waned. By March 2026, CoinGecko records show the token at a low of roughly $2.73, with a marginal recovery to around $2.86, signaling that the gains made on the way to the peak have largely been erased.

WLFI’s story runs parallel in the world of DeFi governance tokens tied to high-profile endorsements. The token’s decline from its all-time high near $0.31 in September 2025 to about $0.07 reflects a broader pattern where governance models backed by glamour rather than proven utility struggle to sustain value. CoinMarketCap tracking shows the pullback was steep but not isolated to a single project, highlighting the risk profile unique to memecoin ecosystems and their often uncertain long-term viability.

Professor Tonya Evans, a noted scholar in crypto policy, voiced a pointed critique of the broader dynamics around celebrity-driven ventures. “We thought Sam Bankman-Fried or Gary Gensler were the worst things to happen to the crypto industry, and they were horrible,” she said. “But, turns out, it was the guy who surrounds himself with sycophants, siphons every bit of value he can for himself, and then expeditiously bankrupts companies and casinos without consequence.”

Regulatory and political scrutiny tightens the heat

The political timeline around Trump-linked tokens has grown more complicated as lawmakers attempt to map governance, access, and potential conflicts of interest. Senators Elizabeth Warren, Richard Blumenthal and Adam Schiff recently sent a letter to Bill Zanker, the promoter behind the Trump memecoin, seeking clarity on the April gala announced for token holders. The lawmakers argued the event could function as a vehicle for influence peddling, noting that access to the president would be tied to holding TRUMP tokens, a structure that could tip economic incentives in favor of promoters and organizers.

Politico, which obtained a copy of the letter, reported that the organizers were “dangling access” to Trump in exchange for participation, raising questions about governance, transparency, and the ethics of fundraising through memecoins. The April 25 gala, already drawing attention for its potential optics, sits at the center of a broader debate about how public figures’ crypto ventures intersect with campaign-era fundraising norms and regulatory oversight.

For investors and builders in the memecoin space, the unfolding questions are not merely about price. They signal a shift in how regulators and lawmakers may treat celebrity-endorsed crypto projects, particularly those that tie token access to real-world events or interactions with public figures. The tension between hype-driven launches and the need for robust disclosures, clear tokenomics, and independent governance remains a defining fault line for the sector.

Earlier coverage from Cointelegraph highlighted the wider scrutiny around Trump-linked crypto projects, including concerns about conflicts of interest and potential insider dynamics. The current developments reinforce the need for heightened transparency and better alignment between token functionality and long-term value creation rather than purely promotional appeal.

The landscape for meme coins linked to high-profile figures thus sits at a crossroads: the immediate price signals remain volatile, while the regulatory and ethical questions could shape the rules and norms that govern this corner of the market going forward.

What matters next is how regulators and market participants respond to these tensions. Watch for any official statements on memecoin governance norms, disclosures around event-driven access schemes, and potential Congressional or administrative actions that could recalibrate the incentives driving celebrity-backed crypto projects.

How $5K Could Hit $750K as RaveDAO Prints 250% and Pepeto Targets 150x While DOGE and LINK Hold

The crypto news landed hard this week when RaveDAO exploded 250% on April 10, driven by months of quiet accumulation after its Coinbase debut. One listing turned an overlooked token into a $300 million asset overnight. Large caps barely moved while the listed projects printed gains that changed portfolios.

The Pepeto presale is next in line, with $8.9 million already raised, a running exchange, and a confirmed Binance listing ahead. At today’s entry, $5,000 converts to over 26 billion tokens, and if the price reaches what Pepe hit on the same 420 trillion supply, that is 150x, turning $5,000 into $750,000.

RaveDAO gained 250% in a single session on April 10, pushing past $300 million in market cap after its February Coinbase listing created the foundation for a breakout, according to CoinMarketCap.

Overbought readings on the chart raised caution flags around the speed of the move, a pattern common after sudden listing-driven spikes, according to CoinGecko.

Every wallet that positioned in RaveDAO ahead of its Coinbase debut walked away with the gains. The wallets that showed up after the spike are now holding bags at elevated prices.

DOGE, LINK, Pepeto, and Where One Listing Turns Small Entries Into Real Wealth

Pepeto

The crypto news keeps proving that the market rewards the tools it can rely on. The exchange was built to solve a real problem, screening tokens for exploits and traps so traders stop losing money to scam contracts that look normal on the surface.

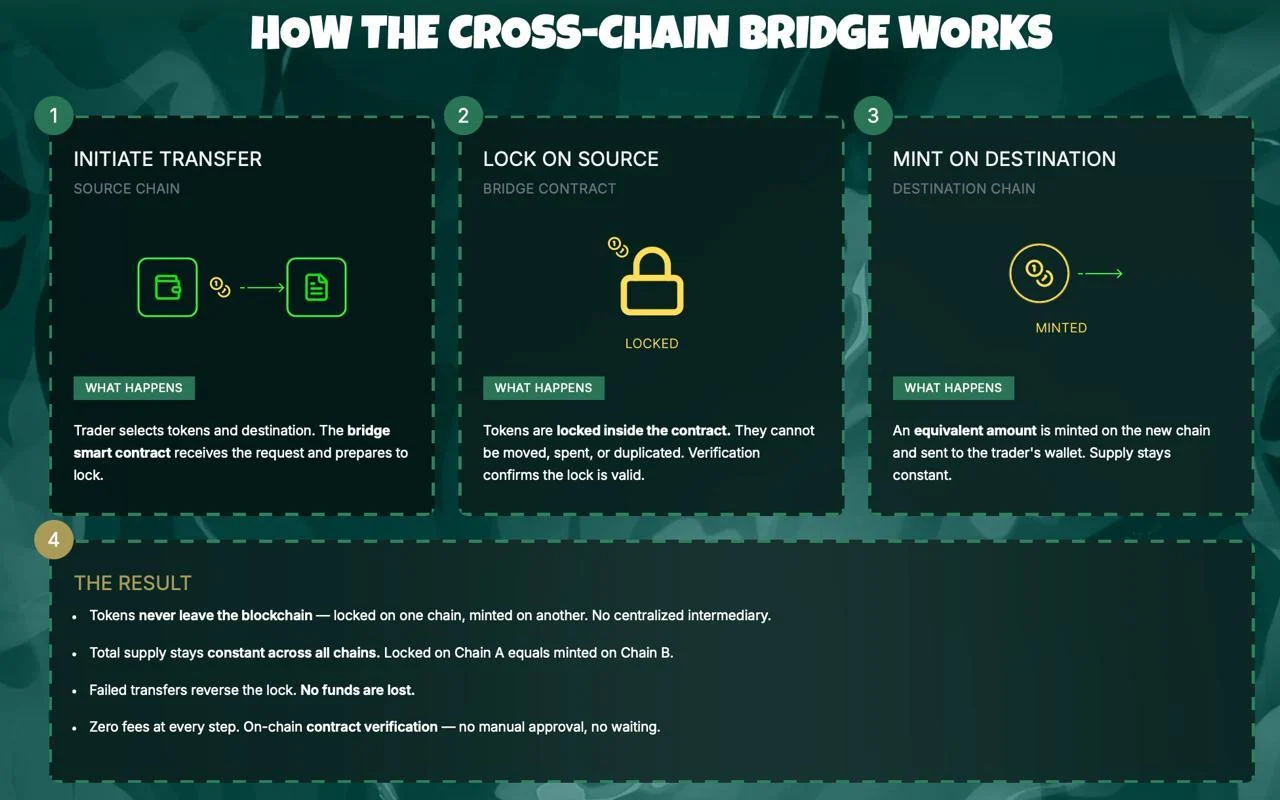

A full contract audit runs before any trade executes, checking for drain functions, honeypot code, and fake supply manipulation. Results appear in clear language anyone can read. Trades clear through PepetoSwap with no fee attached, and the bridge shifts tokens across chains without deducting anything from the transfer.

The numbers tell the story the crypto news has not printed yet. Over 26 billion tokens at $0.000000186 for $5,000. Pepe reached $0.00002803 on 420 trillion tokens and no working product. Reaching that same level from today’s presale price means 150x, which sends $5,000 to $750,000.
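The arithmetic behind these figures can be checked directly. The sketch below uses only the prices and supply quoted above; the function and variable names are illustrative, and the result is a what-if calculation, not a forecast. It also computes the market capitalization that the target price would imply on a 420 trillion supply.

```python
def tokens_bought(investment_usd: float, price_usd: float) -> float:
    """Number of tokens a given dollar amount buys at a given price."""
    return investment_usd / price_usd


def price_multiple(entry_price: float, target_price: float) -> float:
    """How many times the entry price the target price represents."""
    return target_price / entry_price


# Figures quoted in the text above.
ENTRY_PRICE = 0.000000186   # presale price per token, USD
TARGET_PRICE = 0.00002803   # price Pepe is said to have reached, USD
SUPPLY = 420e12             # 420 trillion tokens
INVESTMENT = 5_000.0        # USD

tokens = tokens_bought(INVESTMENT, ENTRY_PRICE)
multiple = price_multiple(ENTRY_PRICE, TARGET_PRICE)
final_value = INVESTMENT * multiple
implied_market_cap = SUPPLY * TARGET_PRICE

print(f"Tokens bought:      {tokens:,.0f}")
print(f"Price multiple:     {multiple:.1f}x")
print(f"Value at target:    ${final_value:,.0f}")
print(f"Implied market cap: ${implied_market_cap:,.0f}")
```

On these inputs the multiple comes out to roughly 150x, and reaching it would require a market capitalization of roughly $11.8 billion on the full 420 trillion supply.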

The exchange already runs, the SolidProof audit is done, a Binance operations veteran sits on the team, the creator of the original Pepe token built every tool, and 185% APY staking grows each position while stages close. When the listing drops, the crypto news will cover Pepeto the way it covered RaveDAO this week, and you are either positioned or you are not.

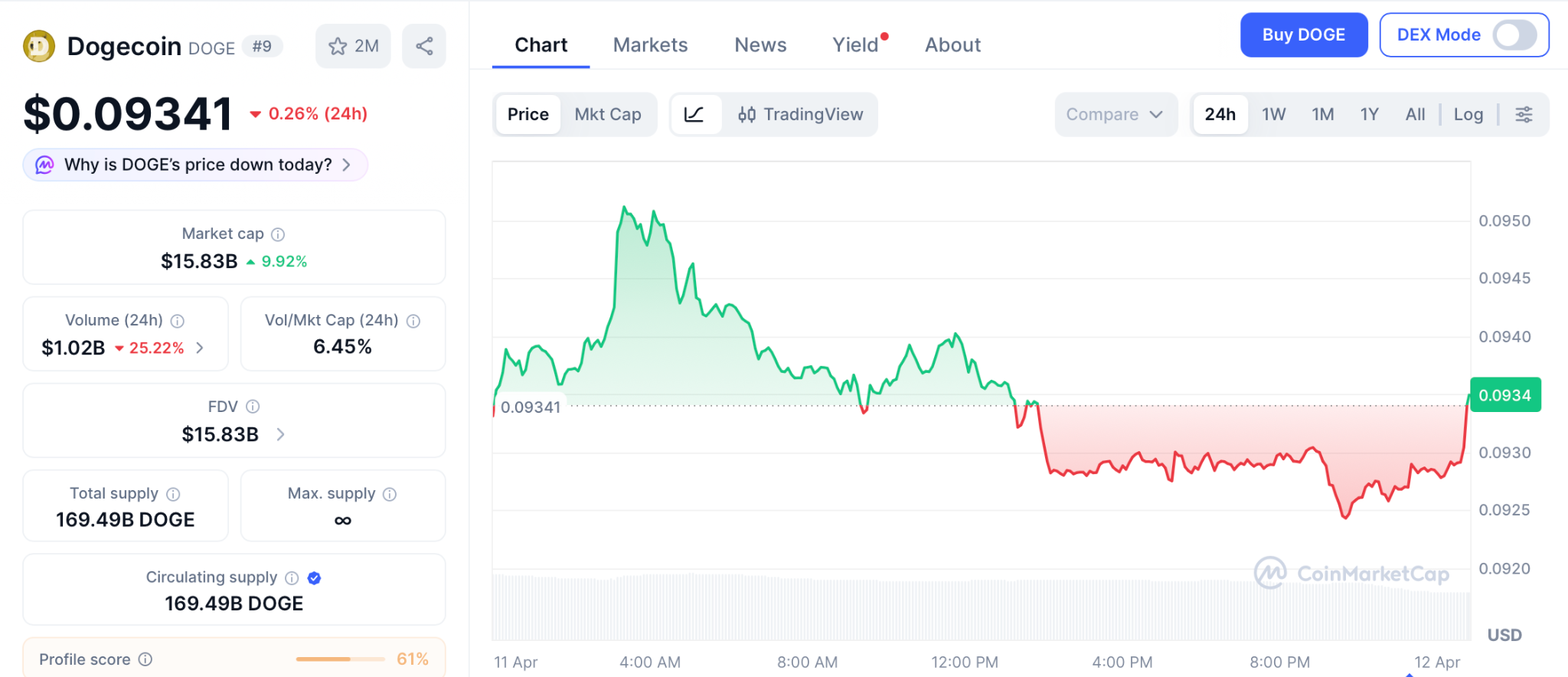

Dogecoin (DOGE) Price at $0.093 as Commodity Status Is Official but Buyers Stay Away

Dogecoin (DOGE) sits at $0.093 per CoinMarketCap, down 0.26% after the SEC finalized its commodity classification without triggering fresh demand.

DOGE must clear $0.102 before any bounce holds, with $0.087 acting as the floor. The token once ran from $0.007 to a $90 billion cap, but at current levels a strong run delivers 2x to 3x over months. A presale priced for 150x from a single listing offers a different equation entirely.

Chainlink (LINK) Price at $9.10 as Bitwise ETF Opens LINK to Retirement Accounts

Chainlink (LINK) trades at $9.10 per CoinMarketCap, gaining 2% after the Bitwise LINK ETF (CLNK) launched on NYSE Arca and opened LINK to 401(k) and IRA holders for the first time.

Support holds at $8.50, resistance at $9.50, with CCIP now processing $18 billion in monthly volume. Analysts target $15 by late 2026, a solid double that takes months to arrive. A presale listing compresses that kind of gain into days instead of quarters.

Conclusion

You sat through the last cycle and watched other wallets collect while you waited for a better price that never came. You told yourself next time would be different, and this is next time. The crypto news this week showed RaveDAO printing 250% from a listing while DOGE holds $0.093 and LINK sits deep in fear.

The stages are filling faster now, and every one that closes raises the floor for the next. The Binance listing is not a theory. It is confirmed and approaching. Pepeto’s official site is where the decision gets made, and a 2026 portfolio without this entry is the mistake you take into 2027 the same way last cycle’s hesitation followed you into this year.

FAQs

What is the latest crypto news about listing events and presale returns in 2026?

RaveDAO gained 250% after its Coinbase listing this week while Pepeto heads toward a Binance listing with $8.9 million raised and 150x projected by analysts.

Is Dogecoin (DOGE) at $0.093 a better entry than Pepeto at presale pricing?

DOGE must break $0.102 for recovery and offers 2x to 3x over months at best. Pepeto targets 150x from a presale price of $0.000000186 with one listing event ahead.

Disclaimer: This is a Press Release provided by a third party who is responsible for the content. Please conduct your own research before taking any action based on the content.

Trump-Linked Crypto Tokens Face Renewed Scrutiny After Plummeting in Price

United States President Donald Trump is facing renewed scrutiny, as crypto tokens and projects promoted by the US president crash to all-time lows or sit near record low levels.

The Official Trump token (TRUMP), a memecoin promoted by Trump, hit an all-time low of about $2.73 in March 2026 and is currently trading at about $2.86, according to data from CoinGecko.

World Liberty Financial (WLFI), a decentralized finance (DeFi) platform co-founded by Trump’s sons, also issued a governance token, which crashed to an all-time low on Saturday, falling to just $0.07.

WLFI is down by nearly 75% from its all-time high of about $0.31 reached in September 2025, while the TRUMP memecoin is down by about 96% since its all-time high of over $73 reached in January 2025.
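A decline from a peak is computed as (peak − current) / peak. The minimal sketch below applies that formula to the rounded prices quoted above; the helper name is illustrative, and because the inputs are rounded ("about $0.31", "about $0.07", "over $73"), the results are approximate.

```python
def drawdown_pct(peak: float, current: float) -> float:
    """Percentage decline from a peak price to the current price."""
    if peak <= 0:
        raise ValueError("peak must be positive")
    return (peak - current) / peak * 100.0


# Rounded prices as quoted in the article.
trump_drawdown = drawdown_pct(peak=73.0, current=2.86)
wlfi_drawdown = drawdown_pct(peak=0.31, current=0.07)

print(f"TRUMP drawdown: {trump_drawdown:.1f}%")
print(f"WLFI drawdown:  {wlfi_drawdown:.1f}%")
```

With the rounded $0.07 figure the WLFI decline works out slightly above 75%; an unrounded low just under $0.08 would put it slightly below.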

“We thought Sam Bankman-Fried or Gary Gensler were the worst things to happen to the crypto industry, and they were horrible,” Professor Tonya Evans said in response to the plummeting token prices. She added:

“But, turns out, it was the guy who surrounds himself with sycophants, siphons every bit of value he can for himself, and then expeditiously bankrupts companies and casinos without consequence.”

President Trump also announced another gala for token holders, scheduled to take place on April 25, fueling renewed scrutiny from US Democratic lawmakers, who have accused Trump of influence peddling by giving token holders access to him.

US lawmakers send letter to Trump memecoin creator

Senators Elizabeth Warren, Richard Blumenthal and Adam Schiff recently sent a letter to Bill Zanker, the individual who launched the Trump memecoin, requesting details on the purpose of the planned Trump memecoin gala in April.

The organizers of the event are “dangling access” to Trump, the lawmakers said, according to Politico, which obtained a copy of the letter.

Trump and his family members stand to benefit from increased sales of the Trump memecoin; attendees are required to hold TRUMP tokens to gain access to the event, the Senators said.

Is the Recovery Real, or Do Bears Prevail?

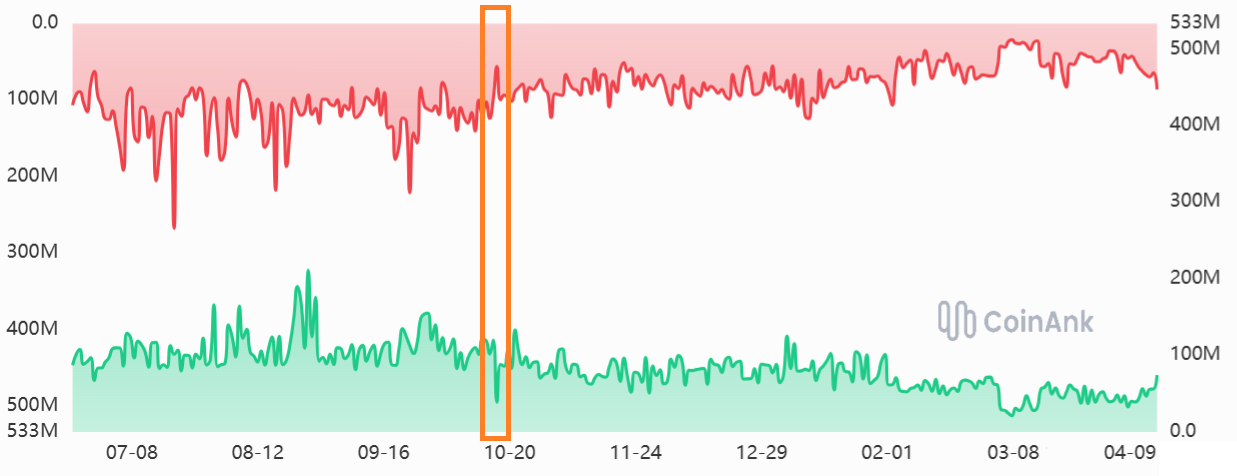

Liquidity in Bitcoin markets remains fragile more than six months after the Oct. 10, 2025 flash crash, which wiped out roughly $19 billion in leveraged positions and unsettled market structure. New data compiled by market analytics firms indicate a persistent erosion of depth across the Bitcoin orderbook: liquidity is down roughly 50% from levels seen in September 2025, and thin depth has remained a recurring theme into 2026.

Analysts note that the fragility appears driven more by evolving market dynamics in 2026 than by the October 2025 shock alone. Indicators point to a thinner orderbook, cautious bullish leverage demand, and mixed signals from derivatives activity and ETF trading. The evolving picture suggests a market that remains structurally more fragile than a year prior, even as certain segments intermittently regain activity.

Key takeaways

- Bitcoin orderbook depth has fallen about 50% since September 2025, signaling a persistent liquidity squeeze across the market.

- By February 2026, liquidity metrics showed renewed strain, with Bitcoin orderbook depth dropping below $60 million for roughly 10 days as the price hovered near $65,000.

- Derivatives volumes cooled relative to the late-2025 peak, while US-listed BTC ETFs surged at times but trended lower into April 2026; ETH ETFs also cooled, with volumes dipping from earlier levels.

- The BTC perpetual futures funding rate indicates shifting risk appetite: it held stable through late 2025, then pulled back toward negative territory in February 2026, signaling renewed hedging pressure.

- Even with the Oct. 2025 crash, market structure held relatively firm through February 2026, implying the long-term significance of that event may be less than initially feared.

Liquidity pressure persists after the 2025 crash

In the run-up to the crash, the aggregate Bitcoin orderbook depth, measured within 1% on either side of the price, typically fluctuated between roughly $180 million and $260 million in September 2025. On Oct. 10, 2025, a confluence of technical issues at major venues and auto-deleveraging on decentralized exchanges triggered a liquidity lapse that many observers attributed to structural fragility in the space. By mid-November 2025, depth had recovered only modestly, hovering near $150 million, far below the pre-crash range.

As 2026 progressed, the erosion persisted. By April 2026, Bitcoin’s orderbook depth seldom exceeded $130 million, keeping the market in a state of diminished resilience. A more acute squeeze appeared in February 2026, when depth dipped below $60 million for about 10 days as Bitcoin traded around the $65,000 mark. Taken together, these trends paint a market where liquidity is consistently thinner than in the years prior to 2025.
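For readers unfamiliar with the metric, a minimal sketch of how depth within a ±1% band can be computed from a single orderbook snapshot follows. The function name, the choice of the mid price as the reference point, and the sample price levels are all assumptions made for illustration; the data providers behind the figures above may define and aggregate the metric differently.

```python
from typing import Sequence, Tuple

Level = Tuple[float, float]  # (price in USD, size in BTC)


def depth_within_band(
    bids: Sequence[Level], asks: Sequence[Level], band: float = 0.01
) -> Tuple[float, float, float]:
    """Return (bid_depth, ask_depth, total) in USD within +/- band of the mid price."""
    best_bid = max(price for price, _ in bids)
    best_ask = min(price for price, _ in asks)
    mid = (best_bid + best_ask) / 2.0
    lower, upper = mid * (1.0 - band), mid * (1.0 + band)
    # Sum the notional value (price * size) of resting orders inside the band.
    bid_depth = sum(price * size for price, size in bids if price >= lower)
    ask_depth = sum(price * size for price, size in asks if price <= upper)
    return bid_depth, ask_depth, bid_depth + ask_depth


# Hypothetical snapshot around a $65,000 mid price.
sample_bids = [(64_990.0, 2.0), (64_500.0, 5.0), (64_000.0, 10.0)]
sample_asks = [(65_010.0, 1.5), (65_600.0, 4.0), (66_000.0, 8.0)]

bid_usd, ask_usd, total_usd = depth_within_band(sample_bids, sample_asks)
print(f"Bids: ${bid_usd:,.0f}  Asks: ${ask_usd:,.0f}  Total: ${total_usd:,.0f}")
```

In this snapshot the $64,000 bid and the $66,000 ask fall outside the band and are excluded. An aggregate figure like the ones cited above would sum this measure across venues.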

Derivatives volumes and ETF demand map the pulse

Analyses tracking overall market activity show derivatives volumes fluctuating within a narrower band than during the peak of 2025. Over the past 30 days, cryptocurrency derivatives volumes have cycled between roughly $40 billion and $130 billion, well short of the $200 billion peak observed in September 2025. While the softer derivatives backdrop may temper near-term bullish bets, it is not automatically a bearish signal, as longs and shorts have been relatively balanced on average during this period.

On the exchange-traded fund (ETF) side, activity has been mixed. US-listed spot Bitcoin ETFs moved to more robust daily levels between January and March 2026, typically trading above $4 billion per day, before easing to under $3.3 billion in the first week of April. For Ether, ETF volumes declined from roughly $2 billion per day in September 2025 to about $1 billion per day in the first weeks of 2026, a sign that demand for ETF exposure remained sensitive to evolving market conditions.

The ETF volume figures are attributed to Coinglass; the broader data series come from TokenInsight (total crypto trading activity) and Laevitas (futures funding dynamics).

Funding rate signals shifting risk appetite

The Bitcoin perpetual futures funding rate—a barometer of market-wide risk appetite—typically ranges from 6% to 12% annually to compensate for the cost of capital. In the months surrounding the 2025 crash, funding remained relatively stable through November 2025, suggesting a balance between long and short positioning. A notable shift appeared in February 2026, when the funding rate moved toward lower figures, with periods of negative funding emerging, indicating that shorts were occasionally paying to keep their positions open. This pattern aligns with a broader tightening of bullish leverage and a more cautious stance among traders during that interval.
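To relate the annualized range to the per-interval rates that exchanges quote, the sketch below converts a funding rate into a simple (non-compounded) annual figure and reports which side pays. It assumes funding is settled three times a day (every eight hours), which is common but not universal; the function names and the sample rates are illustrative.

```python
def annualize_funding(rate_per_interval: float, intervals_per_day: int = 3) -> float:
    """Simple annualization of a per-interval funding rate (no compounding)."""
    return rate_per_interval * intervals_per_day * 365


def paying_side(rate_per_interval: float) -> str:
    """Positive funding: longs pay shorts. Negative funding: shorts pay longs."""
    if rate_per_interval > 0:
        return "longs"
    if rate_per_interval < 0:
        return "shorts"
    return "neither"


# Hypothetical per-8-hour rates, expressed as fractions (0.0001 = 0.01%).
for rate in (0.0001, 0.00005, -0.00003):
    annual = annualize_funding(rate)
    print(f"{rate:+.5f} per 8h -> {annual:+.2%} per year, paid by {paying_side(rate)}")
```

Under these assumptions, the 6% to 12% annual range corresponds to roughly 0.0055% to 0.011% per eight-hour interval.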

These dynamics illustrate how risk sentiment can diverge from headline price moves: even as BTC traded in a wide range, the funding rate reflected a tempered appetite for leverage and a heightened emphasis on hedging and risk control.

Market structure vs. the Oct crash: what changed?

One of the more nuanced takeaways from the data is that, while the Oct. 2025 flash crash catalyzed immediate concern, the market’s underlying structure appeared to hold up comparatively well through February 2026. In other words, the material impact on market health may have been more transient than anticipated, with liquidity and derivative activity not collapsing in lockstep with the initial shock. Nonetheless, the late-2025 to early-2026 data point to a market that remains structurally thinner than pre-crash levels, and a recovery in core liquidity remains a critical watchpoint for traders and institutions alike.

For readers tracking these dynamics, recent coverage also highlighted steps by major exchanges to curb abnormal executions and improve trading guardrails, a reminder that post-crash reform continues to shape market behavior. See related coverage noting Binance’s enhancements to trading guardrails as part of ongoing risk-control measures.

As regulators, market makers, and investor desks reassess liquidity provisioning, the next few months will reveal whether the 2026 liquidity baseline can stabilize at higher levels or if the fragility persists. Investors will want to monitor orderbook depth across major venues, the pace of ETF inflows, and the evolution of futures funding for signs that risk appetite and structural resilience are returning to the market.

Can Bitcoin ETF Inflows Sustain Momentum as Institutional Buying Builds?

TLDR:

- Spot Bitcoin ETFs recorded a net inflow of 3,350 BTC, worth roughly $240M, in a single trading day.

- BlackRock led all issuers with 3,741 BTC in daily inflows, driving the bulk of the day’s net positive result.

- Grayscale posted another 162 BTC outflow, continuing a months-long pattern of legacy holder redemptions.

- The 7-day cumulative inflow reached 7,358 BTC, confirming a broader and sustained accumulation wave forming.

Spot Bitcoin ETF inflows recorded 3,350 BTC, worth approximately $240 million, in a single trading day. BlackRock led all issuers while Grayscale continued its steady outflow trend.

ETFs now collectively hold 721,090 BTC valued at roughly $56.75 billion, reflecting a sustained shift in Bitcoin ownership from active market circulation into long-term institutional balance sheets.

BlackRock Leads a Two-Speed ETF Market

Bitcoin ETF inflows continue to reflect a clear divide among issuers. BlackRock recorded a single-day inflow of 3,741 BTC, accounting for the bulk of the day’s net positive figure.

That one entry essentially drove the entire day’s result across all spot ETF products. Grayscale posted another outflow of 162 BTC, extending a pattern that has held for months.

Legacy holders are exiting while new institutional capital enters through lower-fee, more efficient vehicles. Fidelity, Bitwise, and ARK Invest contributed steady secondary demand but remain well behind BlackRock in volume.

The contrast between issuers reflects a broader rotation in how institutions access Bitcoin. Newer, cost-efficient products are attracting the larger flows.

Older structures continue to see gradual redemptions as capital migrates toward better-structured options. This two-speed dynamic shows no sign of reversing in the near term.
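The headline flow figures can be cross-checked with simple arithmetic. The sketch below takes the three numbers quoted above (net inflow, BlackRock inflow, Grayscale outflow) and derives the combined flow implied for all other issuers, along with the BTC price implied by the dollar figure. It assumes the quoted numbers are exact and cover the same trading day; the names are illustrative, and per-issuer data for the remaining funds is not given in the text.

```python
from typing import Dict


def residual_flow(net_total: float, known_flows: Dict[str, float]) -> float:
    """Flow implied for all issuers not listed in known_flows."""
    return net_total - sum(known_flows.values())


# Figures quoted in the text (BTC; negative means outflow).
NET_FLOW_BTC = 3_350
KNOWN_FLOWS = {"BlackRock": 3_741, "Grayscale": -162}
NET_FLOW_USD = 240e6

others_btc = residual_flow(NET_FLOW_BTC, KNOWN_FLOWS)
implied_price = NET_FLOW_USD / NET_FLOW_BTC

print(f"Implied flow, all other issuers: {others_btc:+,} BTC")
print(f"Implied BTC price: ${implied_price:,.0f}")
```

On the stated figures the residual is negative, meaning the remaining issuers taken together would have seen a small net outflow that day.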

721,000 BTC Absorbed as Supply Squeeze Builds

ETFs collectively hold 721,090 BTC, valued at approximately $56.75 billion, marking a structural shift in Bitcoin ownership. Coins entering ETF products tend to remain there, reducing the amount of Bitcoin available for active trading.

Each inflow day quietly removes more supply from the liquid market. The 7-day cumulative inflow total reached 7,358 BTC, confirming that the single-day figure was not an isolated event.

Despite periodic outflow days visible in the daily flow data, the cumulative trend line has continued moving upward. That resilience points to consistent absorption, where selling pressure is steadily met by fresh institutional demand.

Meanwhile, Ethereum products showed mixed flows, and Solana-linked products recorded net outflows over the same period. That divergence reinforces Bitcoin’s position as the primary institutional entry point among digital assets.

In transitional market phases, capital tends to consolidate into the most established asset, and Bitcoin continues to fill that role.

With sell-side liquidity thinning, marginal buyers are increasingly required to bid higher to acquire meaningful size. The accumulation slope accelerated in late 2024 and again in mid-2025, both phases aligning with rising price momentum.

Yesterday’s inflow pattern suggests a similar setup may be forming if consecutive positive days follow.

WLFI Token Hits All-Time Low Amid World Liberty’s DeFi Lending Controversy

World Liberty Financial has scrambled to pay down $25 million of its highly scrutinized loan on the DeFi lending protocol Dolomite.

The immediate repayments comprise $15 million on April 7 and an additional $10 million on April 10. These payments arrive amid mounting industry backlash over the project’s use of its own token as collateral.

WLFI’s Repayment Follows Intense Community Pressure

Data from BeInCrypto showed that the ongoing controversy dragged the WLFI token down to an all-time low of $0.07967. This is its weakest performance since the project’s highly publicized rollout in 2025.

The market rout follows revelations that World Liberty essentially used its own governance tokens as collateral to extract massive quantities of stablecoins.

According to Arkham Intelligence, the Trump-affiliated venture pledged roughly $406 million worth of WLFI across two digital wallets to borrow $150 million in USDC.

This maneuver rapidly depleted Dolomite’s USD1 lending pool, pushing utilization rates above 93%. Consequently, retail depositors faced a severe liquidity crunch, making it difficult to withdraw their funds.

Meanwhile, the optics of the transaction were further complicated by intertwined leadership. Dolomite co-founder Corey Caplan currently serves as an official advisor to World Liberty Financial.

As the digital asset’s price cratered, DeFi analysts raised alarms regarding the systemic risk of bad debt. WLFI’s collateral now accounts for approximately 55% of Dolomite’s $835.7 million in total value locked, heavily concentrating risk in a single, depreciating asset.
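The risk analysts describe can be framed with a loan-to-value (LTV) calculation. The sketch below uses the collateral and borrowing figures reported above and holds the collateral value fixed at $406 million for simplicity, even though the token price has since fallen. The 75% liquidation threshold is purely hypothetical, chosen only to show the mechanics; Dolomite's actual parameters are not stated in the article.

```python
def loan_to_value(debt_usd: float, collateral_usd: float) -> float:
    """Debt as a fraction of collateral value."""
    return debt_usd / collateral_usd


def drop_to_threshold(debt_usd: float, collateral_usd: float, max_ltv: float) -> float:
    """Fractional fall in collateral value at which LTV would reach max_ltv."""
    return 1.0 - debt_usd / (collateral_usd * max_ltv)


# Figures reported in the article.
COLLATERAL_USD = 406e6   # WLFI pledged
BORROWED_USD = 150e6     # stablecoins borrowed
REPAID_USD = 25e6        # $15M on April 7 plus $10M on April 10

# Hypothetical liquidation threshold, for illustration only.
HYPOTHETICAL_MAX_LTV = 0.75

ltv_before = loan_to_value(BORROWED_USD, COLLATERAL_USD)
ltv_after = loan_to_value(BORROWED_USD - REPAID_USD, COLLATERAL_USD)
cushion = drop_to_threshold(BORROWED_USD - REPAID_USD, COLLATERAL_USD, HYPOTHETICAL_MAX_LTV)

print(f"LTV before repayment: {ltv_before:.1%}")
print(f"LTV after repayment:  {ltv_after:.1%}")
print(f"Collateral drop needed to hit {HYPOTHETICAL_MAX_LTV:.0%} LTV: {cushion:.1%}")
```

The same formula shows why concentration matters: the lower the collateral's value falls relative to the outstanding debt, the smaller the remaining cushion before lenders are exposed.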

World Liberty Financial Dismisses ‘FUD’

However, World Liberty executives have aggressively pushed back against the market anxiety, dismissing insolvency fears as “FUD.”

In a series of social media statements, the developers argued that their massive borrowing benefits the broader ecosystem. They claimed that acting as an “anchor borrower” generates outsized yield for other participants.

However, critics warned that a sharper decline could raise the risk of bad debt for lenders if collateral values fall faster than the position can be adjusted. World Liberty rejected that scenario, saying it could post more collateral if needed.

“We are one of the largest suppliers and borrowers on WLFI Markets. Yes, we supplied WLFI as collateral and borrowed stablecoins. No, we are nowhere near liquidation — and frankly, even if markets moved dramatically against us, we’d simply supply more collateral. That’s not a risk. That’s how this works,” the team added.

In a simultaneous bid to appease early backers facing steep paper losses, World Liberty announced an upcoming governance proposal to unlock restricted tokens.

According to the team, the proposed framework will feature a structured, long-term vesting schedule specifically targeted at early retail buyers.

The post WLFI Token Hits All-Time Low Amid World Liberty’s DeFi Lending Controversy appeared first on BeInCrypto.

Epic Market Flash Crash Killed Bull Market: Is Crypto Healthier Now?

Key takeaways:

- Bitcoin orderbook depth has plummeted by 50% since September 2025, signaling a substantial decline in overall market liquidity.

- Indicators suggest that the current market fragility stems more from recent 2026 trends than from the 2025 flash crash itself.

Bitcoin (BTC) and crypto markets took a massive hit on Oct. 10, 2025, precisely 6 months ago. That devastating flash crash wiped out a record-breaking $19 billion in leveraged positions while some altcoins collapsed 40% to 80%. Many traders speculated that multiple market makers had been wiped out, while others accused the Binance exchange of blatant manipulation.

Was the crypto market structure actually altered after the October 2025 crash, and what has changed in liquidity, derivatives markets, and institutional metrics?

Bitcoin’s aggregate orderbook depth, ranging from +1% to -1%, typically oscillated between $180 million and $260 million in September 2025. On most days, there would be a healthy $90 million in bids, but that was not the case on Oct. 10, 2025. A mix of technical issues at Binance and auto-deleveraging on decentralized exchanges caused a temporary liquidity lapse.

During the flash crash, Bitcoin’s orderbook depth entered a downward spiral, stabilizing near $150 million by mid-November 2025. Currently, Bitcoin’s order book depth seldom exceeds $130 million, down 50% from levels seen in September 2025.

The already fragile market conditions deteriorated further in February 2026. Bitcoin’s orderbook depth plunged below $60 million for nearly 10 days as the price struggled to hold the $65,000 level. Cryptocurrency market volumes declined considerably, especially in the derivatives markets.

Cryptocurrency derivatives volumes oscillated between $40 billion and $130 billion over the past 30 days, falling short of the $200 billion mark commonly seen in September 2025. Still, the reduced appetite for futures contracts is not necessarily a bearish indicator as longs (buyers) and shorts (sellers) are evenly matched at all times.

Demand for bullish leverage remains weak, ETF volumes lag

The Bitcoin perpetual futures funding rate can be used to assess traders’ risk appetite.

Under normal conditions, the indicator should range between 6% and 12% to compensate for the cost of capital. Excessive demand for bearish leverage can push the indicator below 0%, meaning shorts are the ones paying to keep their positions open. Data indicate stable conditions throughout November 2025, followed by a sharp decline in February 2026.
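To make the 6% to 12% range concrete, here is a minimal Python sketch that converts a per-interval funding rate into an annual figure and compares it with that band. It assumes the quoted range is annualized, that funding settles three times per day (every eight hours), and that annualization is simple rather than compounded; none of these details are stated in the article, and the function names are hypothetical.

```python
def annualize_funding(rate_per_interval: float, intervals_per_day: int = 3) -> float:
    """Simple (non-compounded) annualization of a per-interval funding rate."""
    return rate_per_interval * intervals_per_day * 365


def classify_funding(annual_rate: float, low: float = 0.06, high: float = 0.12) -> str:
    """Label an annualized funding rate relative to the 6%-12% neutral band."""
    if annual_rate < 0:
        return "shorts pay longs"
    if annual_rate < low:
        return "weak demand for bullish leverage"
    if annual_rate <= high:
        return "neutral"
    return "elevated demand for bullish leverage"


# A hypothetical 0.01% rate per 8-hour interval annualizes to roughly 11%.
annual = annualize_funding(0.0001)
print(f"{annual:.2%} -> {classify_funding(annual)}")
```

Exchanges differ in funding interval and formula, so the `intervals_per_day` default should be adjusted to the venue being analyzed.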

Curiously, volumes of US-listed spot Bitcoin exchange-traded funds (ETFs) were not impacted by the Oct. 10, 2025 flash crash. In fact, by late November, activity in those instruments jumped to its highest level in 20 months at $11.5 billion per day.

Bitcoin ETF volumes regularly exceeded $4 billion per day between January and March 2026 but fell below $3.3 billion by the first week of April. Similarly, the average daily volume of US-listed Ether (ETH) ETFs dropped to $1 billion, down from $2 billion in September 2025.

Orderbook depth, funding rate, derivatives and ETF volumes all point to a much less healthy cryptocurrency market in April 2026 relative to 6 months prior. However, given that the market structure held relatively firm until February 2026, the Oct. 10, 2025 flash crash seems far less relevant than previously imagined.

This article is produced in accordance with Cointelegraph’s Editorial Policy and is intended for informational purposes only. It does not constitute investment advice or recommendations. All investments and trades carry risk; readers are encouraged to conduct independent research before making any decisions. Cointelegraph makes no guarantees regarding the accuracy or completeness of the information presented, including forward-looking statements, and will not be liable for any loss or damage arising from reliance on this content.

Crypto World

$1.5 Billion Ethereum Treasury Ether Machine Deal Collapses

The Ether Machine and Dynamix Corporation (NASDAQ: ETHM) have mutually terminated their business combination agreement, effective April 8, 2026.

In a post on X, the firm stated that the deal fell through due to unfavorable market conditions.

Ether Machine Cites “Unfavorable Market Conditions” as SPAC Merger Dies

The Ether Machine first unveiled plans to go public in July 2025, targeting more than $1.5 billion in fully committed capital and an initial treasury of more than 400,000 ETH.

The proposed deal drew backing from major industry players, including Pantera Capital, Kraken, and Blockchain.com.

However, the deal did not reach the finish line.

“The Ether Machine, a planned public company following a pending business combination with Dynamix Corporation (Nasdaq: ETHM) and The Ether Reserve LLC, together with certain other parties thereto, announced today that they have mutually agreed to terminate their previously announced Business Combination Agreement, effective immediately, as a result of unfavorable market conditions,” the post read.

The termination comes as the crypto market continues to face headwinds. Asset prices have declined sharply since October, and Q1 2026 has added further pressure.

While geopolitical tensions briefly lifted Ethereum, the token remains nearly 55% below its all-time high set in August 2025.

The impact is not limited to The Ether Machine. BitMine, the largest corporate ETH holder, is sitting on roughly $6.5 billion in unrealized losses, with its stock down 31.7% year to date.

The pattern extends beyond ETH as well. Bitcoin treasury firms have also faced pressure, with some moving to liquidate their holdings.

$50 Million Termination Fee and Indemnification Provisions

According to the 8-K filing with the SEC, the termination agreement includes mutual releases, a covenant not to sue, and non-disparagement clauses. The designated “Payor” also must pay $50 million to Dynamix within 15 days of the agreement’s effective date.

“The Termination Agreement further provides that the Payor will indemnify Dynamix, the Sponsor and their affiliates and the Berns Parties for certain losses arising out of or caused by or based upon certain actions brought by any ETHM Investor other than an ETHM Investor that is a SPAC Releasing Party and that Dynamix will indemnify Pubco, the Company, the Seller, the Payor and their affiliates and the Berns Parties for certain losses arising out of or caused by or based upon certain actions brought by any Dynamix shareholder, in their capacity as a shareholder, who is not an ETHM Investor,” the filing reads.

Dynamix has until November 22, 2026, to complete a business combination or face liquidation. If no deal is finalized, public shareholders will receive pro-rata redemptions from the trust account.

The post $1.5 Billion Ethereum Treasury Ether Machine Deal Collapses appeared first on BeInCrypto.

Crypto World

Musk’s SpaceX holds $603 million in bitcoin despite $5 billion loss stemming from xAI

SpaceX is sitting on 8,285 BTC worth $603 million in Coinbase Prime custody while reporting a loss of nearly $5 billion for 2025, according to Arkham Intelligence data and a report from The Information published late Friday.

The loss marks a sharp reversal from a year earlier when SpaceX generated roughly $8 billion in profit on revenues estimated between $15 billion and $16 billion.

Revenue grew to $18.5 billion in 2025, but the integration of xAI, Elon Musk’s artificial intelligence venture acquired in February, drove costs past the top line.

There are no changes to the company’s bitcoin position despite these losses. Transfer history analyzed by CoinDesk shows the last significant movement was an internal rebalance roughly four months ago, with 614 BTC and 1,021 BTC moving between SpaceX’s own wallets.

The balance history chart shows holdings have remained stable since mid-2024 after peaking above $1.6 billion in value during the October 2025 all-time high.

For a company that just posted a $5 billion loss and is actively pursuing an IPO, holding $603 million in a volatile asset rather than liquidating it to improve the balance sheet is a statement about how Musk (or the board) views bitcoin as a treasury asset.

SpaceX’s position is now the fourth-largest known corporate bitcoin holding behind Strategy, Marathon Digital, and Riot Platforms.

CoinDesk reported last month that SpaceX had filed for an IPO that would disclose the bitcoin position in public filings for the first time, potentially forcing a fair-value accounting decision under the new FASB rules that took effect in late 2025.

Crypto World

Bitcoin Price Signals Short Squeeze as Open Interest Nears $25B

Bitcoin is set for a potential short squeeze as on-chain indicators illuminate a crowded setup against a backdrop of rising open interest and persistently negative funding rates. After BTC briefly breached $73,000 last Friday, traders are watching how leveraged shorts might be forced to cover as funding costs stay deeply negative and open interest climbs to a five-week high.

CryptoQuant’s Quicktake analysis highlighted that Bitcoin was “crowded” with short positions, noting that BTC is moving off exchanges while funding rates remain strongly negative. This combination, according to contributors, can amplify a squeeze if demand returns and shorts are compelled to unwind their bets.

Key takeaways

- Bitcoin open interest rose to about $24.2 billion, the highest since early March, signaling growing leverage as traders position for a potential move.

- Funding rates on major exchanges sit in deeply negative territory, indicating short positions are paying longs and increasing the risk of a forced reversal.

- Analysts say large-scale speculators have turned net long on BTC again, a posture that historically foreshadows a powerful move when conviction builds.

- After BTC cleared $73,000, some market voices eye higher targets, including $80,000 and beyond, though caution remains warranted amid persistent volatility.

- Daily liquidations across the broader crypto space remained subdued, with CoinGlass reporting under $100 million in cross-crypto liquidations over a 24-hour window.

Open interest and the squeeze dynamic

Analysts have flagged that the confluence of rising open interest and continuous negative funding rates creates a precarious setup for Bitcoin’s upside trade. Since March, negative funding has become more frequent and has persisted through April, reinforcing a narrative where shorts have dominated the market. CoinNiel summarized the situation, noting that “shorts paying longs” amid a tightening squeeze environment increases the potential for a reversal driven by forced liquidations when prices move against crowded bets. CryptoQuant analysis and accompanying posts have framed the setup as a developing risk for anyone wagering on continued upside with overweight leverage.

Bitcoin’s price action recently reignited the debate around who’s in control. BTC/USD pushed past $73,000 on Friday, a move traders interpreted as a potential catalyst for a squeeze if short bets were to unwind aggressively. Open interest’s uptick to five-week highs, paired with the negative funding climate, has kept the market on edge about a rapid shift in momentum.

“Since March, negative funding has become more frequent, and throughout April it has remained in negative territory without flipping positive.”

In this context, CoinNiel cautioned that the combination of rising open interest and negative funding suggests an accumulation of leveraged short exposure, warning that the current range could still be a zone of buying demand rather than a clean breakout. Further Quicktake notes reinforce the view that the market remains cautious despite the bounce in price.
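The combination described here, rising open interest alongside persistently negative funding, can be expressed as a simple screening rule. The Python sketch below is only an illustration under stated assumptions: the function name `crowded_short_flag` and the rule itself are hypothetical, not CryptoQuant's or CoinNiel's methodology, and apart from the $24.2 billion open interest figure cited in the article, the sample values are made up.

```python
from typing import Sequence


def crowded_short_flag(open_interest: Sequence[float], funding_rates: Sequence[float]) -> bool:
    """True when open interest rose over the window while every funding print was negative."""
    if len(open_interest) < 2 or not funding_rates:
        return False  # not enough data to judge a trend
    oi_rising = open_interest[-1] > open_interest[0]
    funding_negative = all(rate < 0 for rate in funding_rates)
    return oi_rising and funding_negative


# Open interest in USD; only the final 24.2e9 value comes from the article.
oi_window = [21.0e9, 22.5e9, 24.2e9]
funding_window = [-0.0002, -0.0001, -0.0003]  # hypothetical per-interval rates
print(crowded_short_flag(oi_window, funding_window))
```

A flag like this says nothing about timing or direction on its own; as the article notes, a squeeze still depends on buyers sustaining pressure.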

Sentiment, positioning, and trader perspectives

Market voices have begun to point to a potential shift in sentiment as large-volume participants tilt toward a net-long stance. Trader Michaël van de Poppe noted that speculators are net long Bitcoin, drawing a parallel with prior occasions when similar positioning preceded a notable breakout in 2023. His observation, echoed by others tracking the positioning of institutional and high-net-worth traders, underscores a tension between a crowded short setup and a growing conviction among bulls that a new leg higher could be underway. Van de Poppe’s commentary highlights the evolving consensus among key market participants.

Despite the renewed optimism among some traders, risk remains. The market has not yet exhibited a sharp deleveraging that would accompany a decisive breakout; instead, it sits at a fragile equilibrium where shorts could be squeezed only if buyers sustain pressure, while a renewed wave of selling could reintroduce downward volatility.

What to watch next

Several data points will be critical to assess the likelihood and scale of any squeeze or new rally:

- Funding rates and exchange net flows: Continued negative funding and ongoing outflows from exchange wallets would reinforce the crowded-short narrative and caution against premature bullish bets.

- Open interest dynamics: Whether open interest maintains its upward trajectory or begins to roll over will signal whether leverage is expanding or unwinding.

- Liquidation activity: Short-term spikes in cross-asset liquidations could foreshadow a rapid price revaluation, though the current snapshot shows relatively modest liquidation levels (under $100 million over 24 hours according to CoinGlass).

- Key price targets and risk markers: Trader targets around $80,000 and higher are in circulation, but traders caution that the market remains vulnerable to shifts in macro momentum or regulatory headlines that could reverse the trend.

Taken together, the setup suggests a careful balance between a potential burst higher if shorts capitulate and the risk of a quick reversal if the market fails to sustain upside momentum. As always, participants should monitor on-chain signals, funding costs, and liquidity conditions to gauge whether the next move is a breakout or a test of support.

This article synthesizes observations from CryptoQuant’s Quicktake posts, CoinNiel’s summaries, CoinGlass liquidity data, and trader commentary from Michaël van de Poppe, in the context of BTC’s recent price action around $73,000 and the broader narrative on leveraged positioning in crypto markets.
