Crypto World

What’s going on with crypto?

by Gonzalo Wangüemert Villalba

4 September 2025

Introduction

The open-source AI ecosystem reached a turning point in August 2025 when Elon Musk’s company xAI released Grok 2.5 and, almost simultaneously, OpenAI launched two new models under the names GPT-OSS-20B and GPT-OSS-120B. While both announcements signalled a commitment to transparency and broader accessibility, the details of these releases highlight strikingly different approaches to what open AI should mean.

This article explores the architecture, accessibility, performance benchmarks, regulatory compliance and wider industry impact of these three models. The aim is to clarify whether xAI’s Grok or OpenAI’s GPT-OSS family currently offers more value for developers, businesses and regulators in Europe and beyond.

What Was Released

Grok 2.5, described by xAI as a 270 billion parameter model, was made available through the release of its weights and tokenizer. These files amount to roughly half a terabyte and were published on Hugging Face. Yet the release lacks critical elements such as training code, detailed architectural notes or dataset documentation. Most importantly, Grok 2.5 comes with a bespoke licence drafted by xAI that has not yet been clearly scrutinised by legal or open-source communities. Analysts have noted that its terms could be revocable or carry restrictions that prevent the model from being considered genuinely open source. Elon Musk promised on social media that Grok 3 would be published in the same manner within six months, suggesting this is just the beginning of a broader strategy by xAI to join the open-source race.

By contrast, OpenAI unveiled GPT-OSS-20B and GPT-OSS-120B on 5 August 2025 with a far more comprehensive package. The models were released under the widely recognised Apache 2.0 licence, which is permissive, business-friendly and in line with requirements of the European Union’s AI Act.
OpenAI did not only share the weights but also architectural details, training methodology, evaluation benchmarks, code samples and usage guidelines. This represents one of the most transparent releases ever made by the company, which historically faced criticism for keeping its frontier models proprietary.

Architectural Approach

The architectural differences between these models reveal much about their intended use. Grok 2.5 is a dense transformer with all 270 billion parameters engaged in computation. Without detailed documentation, it is unclear how efficiently it handles scaling or what kinds of attention mechanisms are employed.

Meanwhile, GPT-OSS-20B and GPT-OSS-120B make use of a Mixture-of-Experts design. In practice this means that although the models contain 21 and 117 billion parameters respectively, only a small subset of those parameters are activated for each token. GPT-OSS-20B activates 3.6 billion and GPT-OSS-120B activates just over 5 billion. This architecture leads to far greater efficiency, allowing the smaller of the two to run comfortably on devices with only 16 gigabytes of memory, including Snapdragon laptops and consumer-grade graphics cards. The larger model requires 80 gigabytes of GPU memory, placing it in the range of high-end professional hardware, yet still far more efficient than a dense model of similar size. This is a deliberate choice by OpenAI to ensure that open-weight models are not only theoretically available but practically usable.

Documentation and Transparency

The difference in documentation further separates the two releases. OpenAI’s GPT-OSS models include explanations of their sparse attention layers, grouped multi-query attention, and support for extended context lengths up to 128,000 tokens. These details allow independent researchers to understand, test and even modify the architecture. By contrast, Grok 2.5 offers little more than its weight files and tokenizer, making it effectively a black box.
From a developer’s perspective this is crucial: having access to weights without knowing how the system was trained or structured limits reproducibility and hinders adaptation. Transparency also affects regulatory compliance and community trust, making OpenAI’s approach significantly more robust.

Performance and Benchmarks

Benchmark performance is another area where GPT-OSS models shine. According to OpenAI’s technical documentation and independent testing, GPT-OSS-120B rivals or exceeds the reasoning ability of the company’s o4-mini model, while GPT-OSS-20B achieves parity with the o3-mini. On benchmarks such as MMLU, Codeforces, HealthBench and the AIME mathematics tests from 2024 and 2025, the models perform strongly, especially considering their efficient architecture.

GPT-OSS-20B in particular impressed researchers by outperforming much larger competitors such as Qwen3-32B on certain coding and reasoning tasks, despite using less energy and memory. Academic studies published on arXiv in August 2025 highlighted that the model achieved nearly 32 per cent higher throughput and more than 25 per cent lower energy consumption per 1,000 tokens than rival models. Interestingly, one paper noted that GPT-OSS-20B outperformed its larger sibling GPT-OSS-120B on some human evaluation benchmarks, suggesting that sparse scaling does not always correlate linearly with capability.

In terms of safety and robustness, the GPT-OSS models again appear carefully designed. They perform comparably to o4-mini on jailbreak resistance and bias testing, though they display higher hallucination rates in simple factual question-answering tasks. This transparency allows researchers to target weaknesses directly, which is part of the value of an open-weight release.

Grok 2.5, however, lacks publicly available benchmarks altogether. Without independent testing, its actual capabilities remain uncertain, leaving the community with only Musk’s promotional statements to go by.

Regulatory Compliance

Regulatory compliance is a particularly important issue for organisations in Europe under the EU AI Act. The legislation requires general-purpose AI models to be released under genuinely open licences, accompanied by detailed technical documentation, information on training and testing datasets, and usage reporting. For models that exceed systemic risk thresholds, such as those trained with more than 10²⁵ floating point operations, further obligations apply, including risk assessment and registration.

Grok 2.5, by virtue of its vague licence and lack of documentation, appears non-compliant on several counts. Unless xAI publishes more details or adapts its licensing, European businesses may find it difficult or legally risky to adopt Grok in their workflows. GPT-OSS-20B and 120B, by contrast, seem carefully aligned with the requirements of the AI Act. Their Apache 2.0 licence is recognised under the Act, their documentation meets transparency demands, and OpenAI has signalled a commitment to provide usage reporting. From a regulatory standpoint, OpenAI’s releases are safer bets for integration within the UK and EU.

Community Reception

The reception from the AI community reflects these differences. Developers welcomed OpenAI’s move as a long-awaited recognition of the open-source movement, especially after years of criticism that the company had become overly protective of its models. Some users, however, expressed frustration with the mixture-of-experts design, reporting that it can lead to repetitive tool-calling behaviours and less engaging conversational output. Yet most acknowledged that for tasks requiring structured reasoning, coding or mathematical precision, the GPT-OSS family performs exceptionally well.

Grok 2.5’s release was greeted with more scepticism.
While some praised Musk for at least releasing weights, others argued that without a proper licence or documentation it was little more than a symbolic gesture designed to signal openness while avoiding true transparency.

Strategic Implications

The strategic motivations behind these releases are also worth considering. For xAI, releasing Grok 2.5 may be less about immediate usability and more about positioning in the competitive AI landscape, particularly against Chinese developers and American rivals. For OpenAI, the move appears to be a balancing act: maintaining leadership in proprietary frontier models like GPT-5 while offering credible open-weight alternatives that address regulatory scrutiny and community pressure. This dual strategy could prove effective, enabling the company to dominate both commercial and open-source markets.

Conclusion

Ultimately, the comparison between Grok 2.5 and GPT-OSS-20B and 120B is not merely technical but philosophical. xAI’s release demonstrates a willingness to participate in the open-source movement but stops short of true openness. OpenAI, on the other hand, has set a new standard for what open-weight releases should look like in 2025: efficient architectures, extensive documentation, clear licensing, strong benchmark performance and regulatory compliance. For European businesses and policymakers evaluating open-source AI options, GPT-OSS currently represents the more practical, compliant and capable choice.

In conclusion, while both xAI and OpenAI contributed to the momentum of open-source AI in August 2025, the details reveal that not all openness is created equal. Grok 2.5 stands as an important symbolic release, but OpenAI’s GPT-OSS family sets the benchmark for practical usability, compliance with the EU AI Act, and genuine transparency.
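
The efficiency argument made in the architecture discussion rests on simple arithmetic: how large a share of each model's parameters is used per token. The sketch below works that out from the figures quoted in this article. The names `MODELS`, `active_share_pct` and `summarise` are illustrative, and the 5.1 billion value for GPT-OSS-120B is an assumption standing in for "just over 5 billion".

```python
# Parameter counts in billions, as quoted in the article: (total, active per token).
# The 5.1 figure for GPT-OSS-120B is an assumption standing in for
# the article's "just over 5 billion".
MODELS = {
    "GPT-OSS-20B": (21.0, 3.6),
    "GPT-OSS-120B": (117.0, 5.1),
    "Grok 2.5": (270.0, 270.0),  # described as dense: every parameter is active
}


def active_share_pct(total_b: float, active_b: float) -> float:
    """Percentage of parameters used per token, rounded to one decimal."""
    return round(100 * active_b / total_b, 1)


def summarise(models: dict) -> dict:
    """Map each model name to its active-parameter share in per cent."""
    return {
        name: active_share_pct(total, active)
        for name, (total, active) in models.items()
    }


if __name__ == "__main__":
    for name, pct in summarise(MODELS).items():
        print(f"{name}: {pct}% of parameters active per token")
```

On these inputs the two GPT-OSS models touch well under a fifth of their weights per token, while a dense model touches all of them. This says nothing about output quality, only about per-token compute.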

Meta builds photorealistic AI Zuckerberg to engage employees in real time

Meta Platforms is experimenting with AI to develop a new way for its chief executive, Mark Zuckerberg, to communicate with his staff without being physically present.

Summary

- Meta Platforms is developing a photorealistic AI-powered 3D version of Mark Zuckerberg to enable real-time interaction with employees without physical presence.

- The system is being trained on Zuckerberg’s voice, expressions, and communication style, with the goal of providing staff direct access to leadership for guidance and updates.

- The initiative comes as Meta expands its social commerce tools, allowing creators to link product catalogues within Reels, turning content into shoppable storefronts across 22 countries.

A recent report by the Financial Times says the company is building a photorealistic, AI-powered 3D version of Zuckerberg, which would be capable of engaging with his employees in real time.

The system will be designed to simulate natural conversations, allowing staff members to interact with a digital representation of Zuckerberg that can respond in a human-like manner.

While still in early stages, the initiative signals Meta’s continued investment in virtual human systems that can speak, respond, and hold conversations across different environments.

The digital version is being trained using Zuckerberg’s voice, facial expressions, tone, and public speaking patterns. It is also learning from his recent statements on company strategy, so it can deliver responses aligned with his views. Reports indicate that Zuckerberg is actively involved in testing and refining the system.

Meta expects the tool to give employees real-time access to leadership for guidance, feedback, and updates. The company also sees it as a way to improve internal communication, especially given its global workforce, where direct interaction with executives is limited.

However, creating such a system requires massive computing power to deliver lifelike visuals and low-latency conversations. Teams at Meta have been working to improve both rendering quality and voice realism. As part of this effort, the company has strengthened its capabilities through acquisitions such as PlayAI and WaveForms.

The project is separate from Meta’s internal CEO assistant agent, which helps Zuckerberg manage daily tasks and retrieve information. Unlike that system, the 3D model is focused on communication and interaction, and could eventually extend beyond internal use.

If successful, the approach may open the door for creators and influencers to build their own AI-driven avatars to engage audiences. Meta has already taken initial steps in this direction through its AI Studio platform.

Meta pushes into social commerce to strengthen creator ecosystem

The development follows Meta Platforms’ expansion into social commerce, which ties creators, artificial intelligence, and advertising more closely to purchasing activity on Instagram and in Reels.

A central part of the strategy involves increasing the role of creators in the shopping journey. Businesses in 22 countries, including India, will soon be able to share product catalogues directly with creators. These can then be tagged and linked within Reels, effectively turning content into shoppable storefronts.

The update would narrow the gap between entertainment and commerce, allowing users to move more seamlessly from discovery to purchase within the same interface.

Crypto ETP Inflows Hit $1.1 Billion, Strongest Since January

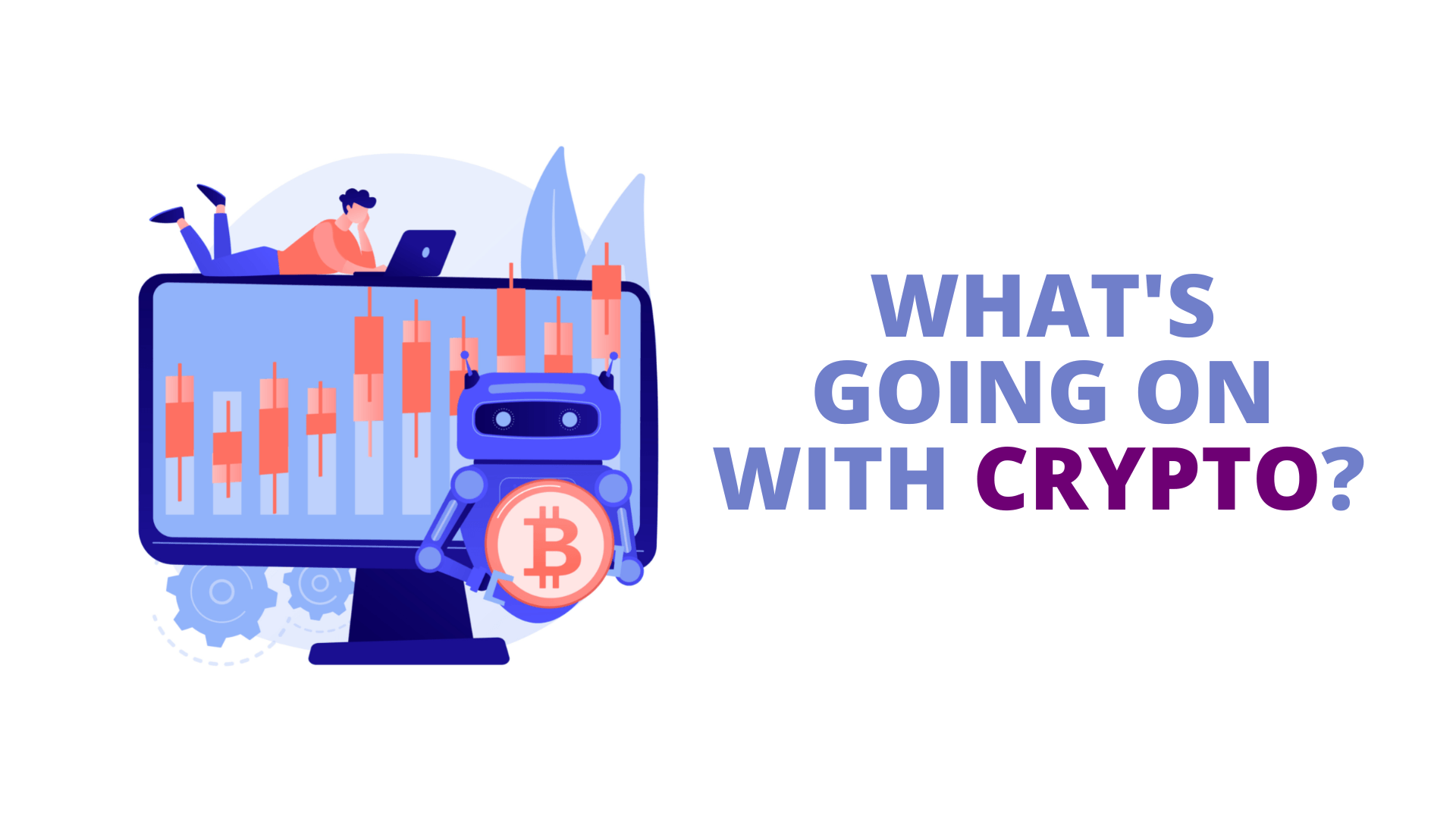

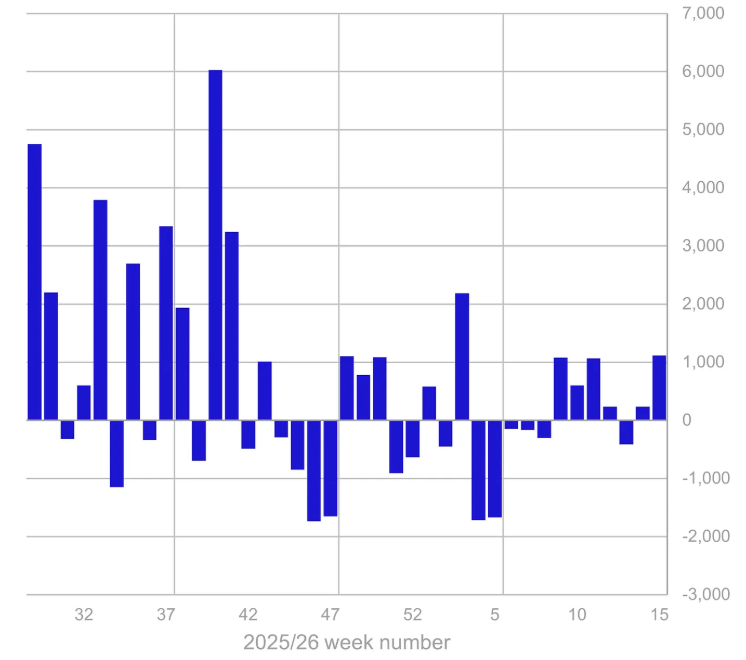

Cryptocurrency investment products clocked significant inflows last week, marking their strongest weekly gains since January.

Global crypto exchange-traded products (ETPs) logged $1.1 billion in inflows last week, with Bitcoin (BTC) leading the gains with $871 million in inflows, CoinShares reported on Monday.

The inflows marked the second-biggest weekly total of 2026 so far, behind only the $2.17 billion recorded in mid-January.

CoinShares’ head of research, James Butterfill, attributed the spike in inflows to a rebound in investor risk appetite following tentative ceasefire developments in Iran, alongside support from softer-than-expected US inflation and spending data.

The inflows came amid volatility in spot markets, with BTC reclaiming $70,000 and briefly topping $73,000 last week, even as broader market sentiment remained negative, underscoring sustained institutional demand and resilience in regulated investment products.

Ether ETP flows rebound, but year-to-date inflows are still negative

Ether (ETH) ETPs saw a strong rebound in sentiment with around $196.5 million in inflows, the first inflows after three consecutive weeks of outflows.

Despite the gains, Ether remains one of the few assets in a net outflow position year-to-date, at $130 million. In contrast, Bitcoin has the largest inflows this year so far at $1.9 billion, accounting for around 83% of the $2.3 billion in total crypto ETP inflows year-to-date.

Although Bitcoin ETPs posted significant inflows, short-Bitcoin investors were also active last week, with inflows totaling $20 million, the largest weekly figure since November 2024, Butterfill noted.

Among other gains, XRP (XRP) ETPs posted inflows of around $19 million. Solana (SOL) saw minor outflows of $2.5 million.

Regionally, positive sentiment was almost entirely concentrated in the US, which saw inflows of $1 billion, accounting for 95% of net weekly inflows. The majority of Bitcoin ETP inflows were driven by US spot BTC exchange-traded funds, which posted $786.3 million in inflows last week, according to SoSoValue data.

Germany recorded inflows of $34.6 million, while Canada and Switzerland saw more modest inflows of $7.8 million and $6.9 million, respectively.
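
The shares quoted in this report can be reproduced from the rounded dollar figures in the text. The sketch below does so; `share_pct` is an illustrative helper, not part of any CoinShares or SoSoValue tooling, and because the inputs are the article's rounded numbers the results are approximate.

```python
def share_pct(part: float, total: float) -> float:
    """Return part as a percentage of total, rounded to one decimal."""
    return round(100 * part / total, 1)


# Year-to-date figures in billions of USD, as quoted in the article.
BTC_YTD = 1.9
ALL_ETP_YTD = 2.3

# Weekly figures in millions of USD, as quoted in the article.
BTC_WEEKLY = 871.0
ALL_ETP_WEEKLY = 1100.0
US_SPOT_BTC_ETF_WEEKLY = 786.3

if __name__ == "__main__":
    print("BTC share of YTD inflows:", share_pct(BTC_YTD, ALL_ETP_YTD))
    print("BTC share of weekly inflows:", share_pct(BTC_WEEKLY, ALL_ETP_WEEKLY))
    print("US spot ETF share of weekly BTC inflows:",
          share_pct(US_SPOT_BTC_ETF_WEEKLY, BTC_WEEKLY))
```

The first figure rounds to the 83% cited for Bitcoin's share of year-to-date inflows. The other two are derived here rather than reported, so treat them as rough.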

Strategy’s STRC gives hedge funds a new reason to short MSTR

Every new share of STRC issued by Strategy (formerly MicroStrategy) creates a perpetual claim on the company’s cash flow, and this might give institutions a reason to short the company’s MSTR common stock.

Strategy is a bitcoin (BTC) acquisition company that uses most of the proceeds of all types of its share sales to buy BTC.

Although MSTR has no upside limit, which exposes short-sellers to theoretically unlimited losses, plenty of traders already short it. Specifically, short interest exceeds 35 million shares of MSTR, equivalent to an alarming 11% of the float.

Yet few people understand that a small portion of this MSTR short interest might be the result of its interplay with STRC.

STRC is Strategy’s quasi-pegged stock that pays a variable dividend, currently 11.5% annualized, and is supposed to trade near $100.

It has stayed within 10% of that level during its lifespan.

The company’s common stock, MSTR, pays no dividends and fluctuates in price with no regard for any peg. Indeed, over the last 18 months it has moved mostly downward, and it has halved over the past year.

There is $5.3 billion worth of STRC outstanding, paying an 11.5% annual dividend in cash. Unfortunately, the company cannot fund those $609 million in annual payouts from regular business profits, which have been in decline for years.

Moreover, the company’s management, rather than focusing on fixing their software business, are “laser focused” on selling more STRC, according to founder Michael Saylor.

Indeed, CEO Phong Le has admitted that the company intends to pivot away from at-the-market (ATM) MSTR issuances in favor of perpetual preferred offerings.

Unfortunately, those preferred shares like STRC create obligations on the assets owned by MSTR.

How STRC dividends actually work

Again, each new STRC issuance perpetually siphons dollars from Strategy, which MSTR holders collectively own only after STRC’s more senior claims are met. STRC is called a perpetual preferred for a reason.

Strategy owes $609 million per year in STRC dividends, and that cash has to come from somewhere. For years, it’s mostly been coming from MSTR ATMs.

In other words, each new STRC share increases Strategy’s annual cash dividend obligations.

Since the company generates negligible to negative earnings, the market expects those obligations to be funded by MSTR share dilution as a last resort, given the preeminence of MSTR as the most popular, liquid, and indexed security of the company.

Thus, STRC creates an expectation of predictable MSTR dilution that short sellers can front-run.

Moreover, the success of STRC at attracting capital is somewhat at the expense of demand that might otherwise bid for MSTR.

Investors who buy STRC instead of bidding for MSTR benefit MSTR holders only through a one-time purchase of BTC, and then draw cash out of the company indefinitely.

STRC dividends at the discretion of the board

Even though short-sellers might be right to predict ongoing MSTR dilution, STRC dividends are not a fixed obligation that guarantees it.

Strategy’s board declares dividends at its sole discretion. Moreover, the dividend rate of STRC is variable. Although it has only gone higher since inception, the board of directors can technically reduce it by 25 basis points plus certain declines in the one-month term secured overnight financing rate (SOFR).

Strategy can also fund dividends from any legally available cash, not just MSTR sales.

For example, the company might fund dividends through further STRC issuances, sales of other preferred shares, traditional debt, or other capital raises.

Buying converts, shorting commons

Before Strategy sold non-convertible preferred shares like STRC, it sold convertible notes.

Convertible notes are a less exotic asset type than Strategy’s perpetual preferreds and have a longer history in academic studies. The short-selling of the common stock of companies that have issued convertible notes is a well-documented phenomenon.

Hedge funds frequently buy convertible notes, short the common stock to delta-hedge their position, and profit from volatility. Academic research confirms that convertible bond arbitrageurs drive significant increases in short-selling near issuance dates.

As of Friday, Strategy held 766,970 BTC at an average cost basis of $75,644 per coin. Over the weekend, BTC was below $71,000, well below Strategy’s cost basis.

Strategy still has more than $22 billion in remaining STRC ATM capacity. Each $1 billion more of STRC means another $115 million in annual obligations in perpetuity.
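
The dividend arithmetic quoted throughout this piece follows from one multiplication. The sketch below reproduces it using integer basis points to avoid floating-point drift. `annual_dividend_usd` is an illustrative helper, and the calculation assumes the 11.5% rate stays constant and that dividends are paid in full, neither of which is guaranteed given the board's discretion described above.

```python
STRC_RATE_BPS = 1_150  # 11.5% annualized, expressed in basis points


def annual_dividend_usd(notional_usd: int, rate_bps: int = STRC_RATE_BPS) -> int:
    """Annual cash dividend owed on a given STRC notional at a flat rate."""
    return notional_usd * rate_bps // 10_000


# Figures quoted in the article.
OUTSTANDING_USD = 5_300_000_000          # STRC currently outstanding
REMAINING_ATM_USD = 22_000_000_000       # "more than $22 billion", so a floor

if __name__ == "__main__":
    print("Current obligation:", annual_dividend_usd(OUTSTANDING_USD))
    print("Per extra $1B issued:", annual_dividend_usd(1_000_000_000))
    # Hypothetical: the full remaining ATM capacity issued at today's rate.
    print("If remaining capacity were issued:",
          annual_dividend_usd(REMAINING_ATM_USD))
```

The first two results match the $609 million and $115 million figures in the text (the former before rounding down from $609.5 million). The third is a hypothetical scenario, not a forecast.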

Protos has previously documented how Strategy has hiked STRC’s dividend seven times since launch, from 9% to 11.5%, to encourage optimism after STRC traded as low as $90.52 in November and $93.10 in February.

Goldman Sachs (GS) Stock Surges on Strong Q1 Results and Record Equities Trading

Key Highlights

- First-quarter net profits reached $5.63 billion, marking a 19% increase compared to the prior year

- Earnings per share of $17.55 exceeded Wall Street projections of $16.47; total revenue of $17.23 billion surpassed the $17 billion consensus

- Equities trading generated an all-time high of $5.33 billion, climbing 27%, while fixed income revenue declined 10% to $4.01 billion

- Investment banking revenues jumped 48% to reach $2.84 billion, with the firm capturing top M&A market share globally

- Asset and wealth management division grew 10% to $4.08 billion; the firm finalized its Innovator Capital Management purchase

Goldman Sachs delivered impressive first-quarter performance, posting net profits of $5.63 billion — representing a 19% increase over the comparable quarter a year ago.

The investment bank’s earnings per share reached $17.55, comfortably beating Wall Street’s consensus forecast of $16.47. Total net revenue of $17.23 billion also exceeded analyst expectations of $17 billion, based on FactSet consensus estimates.

The standout performance was fueled by unprecedented strength in equities trading. Revenue from the bank’s equity trading and financing operations surged 27% to reach $5.33 billion — marking an all-time record for this division.

The only area showing weakness was fixed income, currencies and commodities trading, which decreased 10% to $4.01 billion.

Chief Executive David Solomon maintained a measured outlook despite the impressive figures. “The geopolitical landscape remains very complex — so disciplined risk management must remain core to how we operate,” he stated in the earnings release.

Increased market turbulence stemming from the Iran conflict has prompted investors to adjust their holdings and implement hedging strategies, creating favorable conditions for trading operations. Goldman was strategically positioned to capitalize on this elevated client activity.

Investment Banking Powers Ahead

Investment banking emerged as another major growth driver. Fees in this segment skyrocketed 48% year-over-year to $2.84 billion, supported by robust merger and acquisition activity.

Global M&A transaction volume reached $1.38 trillion during the first quarter, according to Dealogic figures. Research from Jefferies highlighted that Goldman secured the leading market share position as worldwide M&A advisory fees climbed 19% to $11.3 billion.

Goldman served as advisor on several marquee transactions during the period, including Unilever’s announced merger of its food division with McCormick to establish a $65 billion entity, and Equitable’s proposed combination with Corebridge to create a $22 billion insurance company.

The initial public offering landscape also remains robust. Goldman obtained a lead underwriter position for SpaceX’s expected June market debut, which could generate $75 billion in proceeds at a $1.75 trillion company valuation. The firm additionally managed PayPay’s $880 million U.S. public offering.

Wealth Management Division Maintains Growth Trajectory

The asset and wealth management segment generated $4.08 billion in revenue, representing a 10% increase. Goldman has strategically expanded this business line to create more stable, recurring revenue streams to complement its traditionally volatile trading and banking operations.

The company’s private credit fund weathered an industry-wide redemption wave during the quarter. Investors withdrew just under 5% of fund assets — remaining within allowable limits — as artificial intelligence-related concerns created broader turbulence in private credit markets.

Goldman finalized its acquisition of Innovator Capital Management, an active ETF platform, earlier this month. This transaction expands the firm’s total ETF assets under supervision to $90 billion.

GS shares have advanced more than 3% year-to-date in 2026, building on a 53% rally in 2025.

Secure a spot in the leading crypto presale in 2026 now

Disclosure: This article does not represent investment advice. The content and materials featured on this page are for educational purposes only.

Investors shift to utility-meme hybrids as projects like DOGEBALL gain traction in the 2026 presale market.

Summary

- Utility-meme hybrids gain traction in 2026 as investors shift from hype to functional crypto ecosystems

- DOGEBALL powers DOGECHAIN, a gaming-focused Layer 2 blockchain with fast, low-cost transactions

- Presale demand rises as DOGEBALL blends gaming utility and infrastructure ahead of Q1 altcoin cycle

Financial freedom in the blockchain space has always favored the fast and the focused. While most retail traders are distracted by fading trends, a silent accumulation is happening within a new sector: Utility-Meme Hybrids.

The era of buying tokens with no purpose is dead. Today, savvy investors are migrating toward projects that offer high-speed infrastructure and immediate gaming utility. For those who missed the explosive early days of the original meme icons, the top crypto presale in 2026 is officially their second chance to enter a high-utility ecosystem before the mainstream surge.

This article explores the shift from speculative assets to functional powerhouses. We will analyze the historical trajectory of XRP as a blueprint for success, deep-dive into the technical USPs of DOGEBALL (DOGEBALL), and explain why the current Stage 2 pricing offers a mathematically superior entry point. From a custom Layer 2 (L2) blockchain to a $1m prize pool, every metric suggests that DOGEBALL is positioned to lead the upcoming Q1 altcoin run.

The XRP blueprint: Why early skeptics missed millions

History proves that the most lucrative opportunities are often the most doubted. When XRP first launched, it was dismissed by many as a niche tool for banks. However, those who looked past the noise recognized its fundamental utility in solving cross-border liquidity. Investors who entered during the XRP ICO at fractions of a cent saw their holdings multiply by thousands of percent. It wasn’t luck; it was the result of identifying a project with a clear use case and aggressive market positioning before the “herd” arrived.

The crypto world is constantly cycling, bringing new chances to those who missed the previous boat. The lesson from XRP is simple: timing is the ultimate multiplier. Today, the focus has shifted to the gaming and L2 sectors. The top crypto presale in 2026 represents that same ground-floor window. While others wait for a “safe” listing on major exchanges, the real wealth is being built right now by participants who recognize that DOGEBALL is combining the viral power of DOGE with the technical robustness of a dedicated Ethereum Layer 2.

DOGEBALL technicals: A custom L2 blockchain for the top crypto presale in 2026

DOGEBALL (DOGEBALL) is not another derivative project; it is the native utility token of DOGECHAIN. This is a world-first, custom-built ETH L2 blockchain designed specifically for the global gaming industry. Unlike many competitors that offer “paper promises,” DOGEBALL features a live, testable blockchain explorer on its website. With near-zero gas fees and sub-2-second transaction finality, it is built to handle the high-frequency micro-transactions required for modern online gaming and partnerships with industry giants like Falcon Interactive.

Why settle for a standard memecoin when there is an opportunity to own the infrastructure it runs on? This project brings real-life dual utility: it powers the DOGECHAIN and serves as the primary currency for an addictive, leaderboard-driven dodgeball game. With a total supply capped at 80 billion tokens and a transparent 4-month presale window, the scarcity is built-in. By bridging the gap between “fun” and “function,” the top crypto presale in 2026 provides a credible, evidence-based argument for long-term value appreciation that hype-only projects simply cannot match.

37.5x ROI potential at Stage 2: Potential to turn $0.0004 into $0.015 by May 2026

The math behind the top crypto presale in 2026 is clear and compelling. The presale launched on 2nd January 2026 and is strictly scheduled to end on 2nd May 2026. This 4-month window is one of the fastest in the industry, ensuring investors aren’t trapped in long vesting cycles. Currently in Stage 2, the price is set at $0.0004. With a confirmed exchange listing price of $0.015, early participants are looking at a 37.5x return on price action alone, while Stage 1 buyers have already secured a 50x path.

To maximize these gains, the project has introduced the limited-time bonus code DB25. By applying this code at checkout, buyers receive a 25% increase in their DOGEBALL token allocation instantly. This is more than just a “bonus”; it is a strategic advantage that lowers the average cost basis and increases the share of the 20 billion tokens allocated for the ICO. With over $196,000 already raised and 750+ participants, the transition to Stage 3 and higher price points is imminent. Buying today is the only way to lock in these specific margins.
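
The multiples quoted above can be checked with a few lines of arithmetic. The sketch below uses `decimal.Decimal` so the small prices divide exactly. It takes the project's stated $0.015 listing price at face value, which is a promotional figure and not a guaranteed market price, and the helper names are illustrative.

```python
from decimal import Decimal

LISTING_PRICE = Decimal("0.015")   # project's stated listing price (not guaranteed)
STAGE_2_PRICE = Decimal("0.0004")  # current presale price quoted in the article
BONUS_RATE = Decimal("0.25")       # extra tokens granted by the DB25 code


def multiple(entry_price: Decimal, exit_price: Decimal = LISTING_PRICE) -> Decimal:
    """Exit price expressed as a multiple of the entry price."""
    return exit_price / entry_price


def effective_price(entry_price: Decimal, bonus_rate: Decimal) -> Decimal:
    """Cost per token once bonus tokens are counted in the allocation."""
    return entry_price / (1 + bonus_rate)


def implied_entry_price(target_multiple: Decimal) -> Decimal:
    """Entry price that a claimed multiple would require at the listing price."""
    return LISTING_PRICE / target_multiple


if __name__ == "__main__":
    print("Stage 2 multiple:", multiple(STAGE_2_PRICE))
    with_bonus = effective_price(STAGE_2_PRICE, BONUS_RATE)
    print("Effective price with bonus:", with_bonus)
    print("Multiple with bonus:", multiple(with_bonus))
    print("Entry price implied by 50x:", implied_entry_price(Decimal("50")))
```

At the Stage 2 price the stated listing price implies 37.5x, rising to just under 47x with the 25% bonus. A 50x multiple would require an entry price of $0.0003, which is what the Stage 1 claim implies.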

Quick guide: How to join The DOGEBALL crypto presale 2026

Securing a position in the top crypto presale in 2026 is a streamlined process designed for speed. First, visit the official DOGEBALL website and connect a compatible web3 wallet such as MetaMask or Trust Wallet. The platform is highly accessible, accepting a wide range of currencies including ETH, USDT, BNB, SOL, and even traditional Credit/Debit cards. This multi-chain compatibility ensures that no matter where liquidity is, anyone can participate without complex bridging.

Once the wallet is connected, enter the amount to contribute and remember to input the bonus code DB25. This code is a time-sensitive offer that grants a 25% token boost on every purchase. After the transaction is confirmed, DOGEBALL tokens will be visible in a personal dashboard. Investors can then choose to hold for the May launch or utilize the 80% staking rewards to further grow their balance during the presale period. It is a simple four-step path to becoming an early stakeholder in a high-growth L2 ecosystem.

VIP Rewards: 100% bonus for the buyer of the week

The DOGEBALL community thrives on healthy competition and massive rewards. It recently witnessed a historic battle for the “Buyer of the Week” title that perfectly illustrates the project’s momentum. In the final minutes of the weekly cycle, a $2,131 buy hit the chain at 23:58 UTC to take the lead. However, in a stunning move at 23:59 UTC, a final purchase of $2,320 was recorded, snatching the top spot at the very last second. This level of activity proves that high-value investors are racing to accumulate.

To honor this dedication, the “Buyer of the Week” is treated like true royalty. The winner receives a staggering 100% additional token bonus for their entire spend that week, reflected directly in their user dashboard. This means the winner effectively doubled their investment for free simply by topping the leaderboard. This VIP incentive resets every seven days, offering a recurring opportunity for anyone to maximize their holdings. Be the one to dominate the rankings next week and claim the 100% bonus.

Final verdict: Why the DOGEBALL presale is a smart play

As we look toward the 2026 altcoin bull run, the distinction between “winners” and “losers” will come down to utility. We have discussed how early XRP adopters ignored the noise to find success, and how DOGEBALL is now applying that same logic to the gaming world. With a proprietary L2 blockchain that is already testable and a professional partnership with Falcon Interactive, this project is built for longevity. It avoids the “empty hype” trap by delivering a functional product before the presale even concludes.

The 4-month timeline is a gift to investors who value liquidity and momentum. Joining the DOGEBALL presale today means aligning with a project that has an audited 100% security score and a clear path to a $0.015 listing. Follow coverage of the “top crypto presale in 2026” to stay updated on the project’s progress, and don’t forget to use code DB25 for a 25% token boost. The opportunity to turn a modest investment into a significant portfolio cornerstone is here, but it only lasts until May 2nd.

For more information, visit the official website, Telegram, and X.

FAQs for top crypto presale in 2026

What crypto to buy early 2026?

DOGEBALL is widely considered the top crypto presale in 2026 because it offers a functional L2 blockchain and a $1m gaming prize pool. Its current Stage 2 price of $0.0004 provides a massive upside compared to the $0.015 launch price.

What is the best presale crypto to buy now?

The best option is the DOGEBALL crypto presale 2026 due to its real-world utility and 80% staking rewards. Unlike typical meme coins, $DOGEBALL powers a custom Ethereum Layer 2 chain, making it a high-value asset for both gamers and long-term investors.

Which crypto will give 1000x in 2026?

While market conditions vary, DOGEBALL has the ingredients for explosive growth. By combining a 50x launch target with a proprietary gaming ecosystem and the viral “Doge” branding, it represents the top crypto presale in 2026 for those seeking significant ROI.

Disclosure: This content is provided by a third party. Neither crypto.news nor the author of this article endorses any product mentioned on this page. Users should conduct their own research before taking any action related to the company.


Micron (MU) Stock Could Soar 40% Higher, According to Wall Street Analyst

Key Takeaways

- John Vinh of KeyBanc maintains an Overweight stance on Micron with a $600 price objective, representing approximately 40% potential appreciation from present trading levels.

- The memory chip manufacturer’s shares have soared nearly six times their value over the trailing twelve months, propelled by robust AI memory chip demand.

- For fiscal Q3, Vinh projects revenue reaching $35.1 billion with earnings per share of $20.54, surpassing Street estimates.

- Supply constraints are anticipated to persist through at least the middle of 2027, with quarterly price increases of 30–50% projected for Q2 2026.

- Aletheia Capital identifies Micron as positioned to benefit from an anticipated 33% year-over-year surge in cloud infrastructure spending during 2026.

Micron Technology has delivered one of the semiconductor industry’s most spectacular performances over the past twelve months. Shares have multiplied nearly six times, yet certain Wall Street analysts believe significant appreciation potential remains.

John Vinh from KeyBanc has identified Micron among semiconductor stocks offering the most attractive risk/reward profiles entering the current earnings cycle. His firm maintains an Overweight recommendation with a $600 price objective. Monday’s premarket session saw shares changing hands around $413.54, reflecting a 1.7% decline — positioning the analyst’s target approximately 40% above present valuation.
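As a quick check on the upside figure, the gap between the price objective and the quoted premarket price can be computed directly. This is a minimal sketch using only the two numbers given above; the function name `implied_upside` is illustrative. Taken at face value, these inputs put the target closer to 45% above the premarket price, so the "approximately 40%" figure may rest on a different reference price.

```python
def implied_upside(target: float, price: float) -> float:
    """Percentage gain needed for `price` to reach `target`."""
    return (target / price - 1) * 100


price_target = 600.00     # KeyBanc price objective quoted in the article
premarket_price = 413.54  # Monday premarket price quoted in the article

upside = implied_upside(price_target, premarket_price)
print(f"Implied upside: {upside:.1f}%")  # prints "Implied upside: 45.1%"
```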

Vinh’s investment thesis builds on several fundamental arguments. Notably, he contends Micron remains attractively valued. Despite the extraordinary price appreciation, the company trades at one of the most compressed forward price-to-earnings ratios in the S&P 500 index. Such valuation discrepancies typically prove unsustainable, particularly when earnings trajectories point upward.

Projected Results Exceed Street Expectations

For the fiscal third quarter, Vinh anticipates revenue of $35.1 billion with earnings per share reaching $20.54. Both projections exceed Wall Street’s consensus estimates of $33.8 billion in revenue and $19.26 per share. The company is scheduled to announce these results toward the end of June.
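To put the size of the expected beat in perspective, the gap between Vinh’s projections and consensus can be expressed as a percentage. The sketch below uses only the four figures quoted above; the helper name `pct_above` is illustrative.

```python
def pct_above(estimate: float, consensus: float) -> float:
    """How far an estimate sits above consensus, in percent."""
    return (estimate / consensus - 1) * 100


# Figures quoted in the article (revenue in billions of dollars, EPS in dollars).
revenue_gap = pct_above(35.1, 33.8)
eps_gap = pct_above(20.54, 19.26)

print(f"Revenue estimate vs. consensus: +{revenue_gap:.1f}%")  # +3.8%
print(f"EPS estimate vs. consensus: +{eps_gap:.1f}%")          # +6.6%
```

On these numbers, the projected earnings beat is proportionally larger than the revenue beat.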

Vinh also anticipates forward guidance surpassing market expectations. “We expect Micron will post better results and higher guidance, supported by a structurally stronger-for-longer memory cycle driven by hyperscaler demand and constrained supply,” Vinh stated in research commentary released Sunday.

The memory semiconductor sector has historically exhibited pronounced cyclical characteristics. Expansion phases typically transition into contractions, leaving investors vulnerable. However, Vinh believes the present environment differs fundamentally. His analysis suggests demand will continue outstripping supply through at least mid-2027, when substantial new production capacity becomes operational.

Near-term projections call for sequential pricing increases of 30–50% during Q2 2026. Such pricing leverage represents an uncommon development within the semiconductor space and would translate directly into margin expansion.

Data Center Investment Wave Provides Tailwinds

The optimistic perspective on Micron extends beyond KeyBanc’s analysis. Aletheia Capital released complementary research Monday, highlighting a significant data center investment cycle benefiting memory and semiconductor supply chain participants.

The research firm forecasts the leading quartet of cloud infrastructure providers will expand general server capital investment by 33% year-over-year in 2026, with an additional 21% growth following in 2027. This expenditure wave stems from agentic artificial intelligence applications, which consume substantial memory volumes.

Aletheia identifies an inflection point for component manufacturers beginning in Q2 2026, with system integrators accelerating through Q3 and Q4. Micron appears alongside AMD and SK Hynix among the primary beneficiaries.

The firm also notes unconventional seasonal patterns emerging this year — unit shipments are projected to expand sequentially throughout each quarter, departing from historical norms.

Celestica, another participant in the AI infrastructure ecosystem, has already appreciated 344% over the past year and currently trades near its 52-week peak of $363.

Micron’s quarterly results are scheduled for late June 2026. Analyst consensus currently stands at $33.8 billion in revenue with $19.26 earnings per share for the quarter.


Crypto ETPs See $1.1B Inflows, Largest Since January

Crypto investment products posted a decisive rebound last week, with global exchange-traded products (ETPs) drawing about $1.1 billion in inflows. Bitcoin led the charge, attracting roughly $871 million for the week, according to CoinShares’ weekly Digital Asset Fund Flows report. It was the strongest week for crypto ETPs in 2026 aside from the mid-January surge of $2.17 billion in inflows.

Ether’s ETPs also turned positive, logging about $196.5 million in inflows, the first weekly inflows after three straight weeks of outflows. The regional flow pattern remained heavily skewed toward the United States, underscoring a clear appetite for regulated crypto exposure amid mixed macro signals.

Key takeaways

- Total inflows for the week reached about $1.1 billion, with Bitcoin accounting for roughly $871 million and continuing to drive the bulk of new money into regulated crypto exposure.

- Ether ETPs rebounded to about $196.5 million in inflows, yet Ether remains one of the few assets with negative year-to-date flows, down about $130 million, while Bitcoin leads overall YTD flows at roughly $1.9 billion and represents about 83% of the $2.3 billion total YTD inflows.

- Investors added to short-Bitcoin products as weekly inflows hit $20 million—the largest since November 2024—while XRP ETPs drew roughly $19 million and Solana saw modest outflows of about $2.5 million.

- Regional dispersion remained highly US-centric, with about $1 billion of inflows concentrated in the United States (roughly 95% of weekly net inflows). US spot BTC ETPs led the way, pulling in around $786.3 million, according to SoSoValue data. Germany, Canada, and Switzerland posted smaller inflows of $34.6 million, $7.8 million, and $6.9 million, respectively.
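The shares behind these takeaways can be reproduced from the quoted figures. This is a rough sketch: the inputs are the rounded numbers given above, so the results are approximate. The roughly 95% US share cited in the article presumably comes from unrounded data, since the rounded inputs give about 91%.

```python
def share(part: float, total: float) -> float:
    """Return `part` as a percentage of `total`."""
    return part / total * 100


# Rounded figures quoted in the article, in millions of US dollars.
weekly_total = 1100.0
bitcoin_weekly = 871.0
ether_weekly = 196.5
us_weekly = 1000.0

# Year-to-date figures quoted in the article, in billions of US dollars.
ytd_total = 2.3
bitcoin_ytd = 1.9

btc_share = share(bitcoin_weekly, weekly_total)
eth_share = share(ether_weekly, weekly_total)
us_share = share(us_weekly, weekly_total)
btc_ytd_share = share(bitcoin_ytd, ytd_total)

print(f"Bitcoin share of weekly inflows: {btc_share:.1f}%")   # 79.2%
print(f"Ether share of weekly inflows: {eth_share:.1f}%")     # 17.9%
print(f"US share of weekly inflows: {us_share:.1f}%")         # 90.9%
print(f"Bitcoin share of YTD inflows: {btc_ytd_share:.1f}%")  # 82.6%
```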

Bitcoin-led demand and the broader price backdrop

Bitcoin’s surge to the forefront of weekly inflows coincided with persistent volatility in spot markets. The token briefly reclaimed the $70,000 level and at times traded above $73,000 last week, while wider market sentiment remained fragile. CoinShares notes that the strength of ETP inflows points to continued institutional demand and a preference for regulated investment products, even in a period of mixed macro signals.

James Butterfill, head of research at CoinShares, attributed the inflow spike to a confluence of factors: a rebound in risk appetite following tentative ceasefire developments in Iran, alongside softer-than-expected U.S. inflation and spending data. The combination appeared to reassure investors that regulated exposure to crypto remains a viable proxy for risk-on positioning, even as the broader market contends with volatility and policy ambiguity.

Ether’s rebound amid a cautious year

Ether’s $196.5 million inflow marks a notable shift after three weeks of outflows, suggesting some rotation back into Ethereum-based products as investors reassess narrative risk and chain-level activity. Despite the rebound, Ether’s year-to-date tally remains negative, reflecting a broader rotation away from certain non-Bitcoin assets within regulated vehicles. By contrast, Bitcoin’s stronger YTD inflows highlight continued demand for the largest crypto as a core exposure within ETP portfolios.

Regional focus and notable movers

The geographic split of flows further underscored a US-dominated appetite for crypto ETPs. Roughly $1 billion of weekly inflows originated in the United States, with US spot BTC ETPs alone contributing about $786.3 million. Germany registered inflows of $34.6 million, while Canada and Switzerland saw smaller inflows of $7.8 million and $6.9 million, respectively. In the smaller movers, XRP ETPs added about $19 million, and Solana saw modest outflows of around $2.5 million. The week also featured active positioning in short-BTC instruments, reflecting tactical bets on near-term price dynamics.

These patterns align with a broader narrative: investors remain willing to deploy capital into regulated crypto access points, even as the macro environment remains uncertain. The US-led flows, in particular, emphasize how regulatory clarity and product availability can shape allocation during periods of mixed sentiment.

What this means for investors going forward

The latest CoinShares data reinforce a theme that has persisted through 2026: demand for regulated crypto exposure is highly sensitive to macro signals and policy cues, with the United States acting as the primary engine of inflows. The strong BTC performance relative to Ether underscores a potential preference for flagship assets as a core ballast within ETP portfolios, especially when risk appetite improves alongside softer inflation readings.

For traders and institutions alike, the focus will likely remain on two fronts: the durability of the US-led inflow pattern and how Ether’s recent rebound evolves as broader liquidity conditions shift. The sizable short-BTC inflows also merit attention, as they can illuminate hedging dynamics and speculative positioning tied to near-term price expectations.

CoinShares’ data suggest that the near-term trajectory for crypto ETPs will hinge on macro clarity and regulatory developments. As policymakers and markets absorb ongoing inflation signals and geopolitical headlines, investors will watch whether the US stream of inflows sustains its lead and whether Ether can turn the year’s momentum more decisively in its favor.

Looking ahead, traders should monitor how forthcoming macro data, regulatory updates, and potential ceasefire developments influence risk appetite and flow leadership among BTC, ETH, and other liquid assets within regulated products.


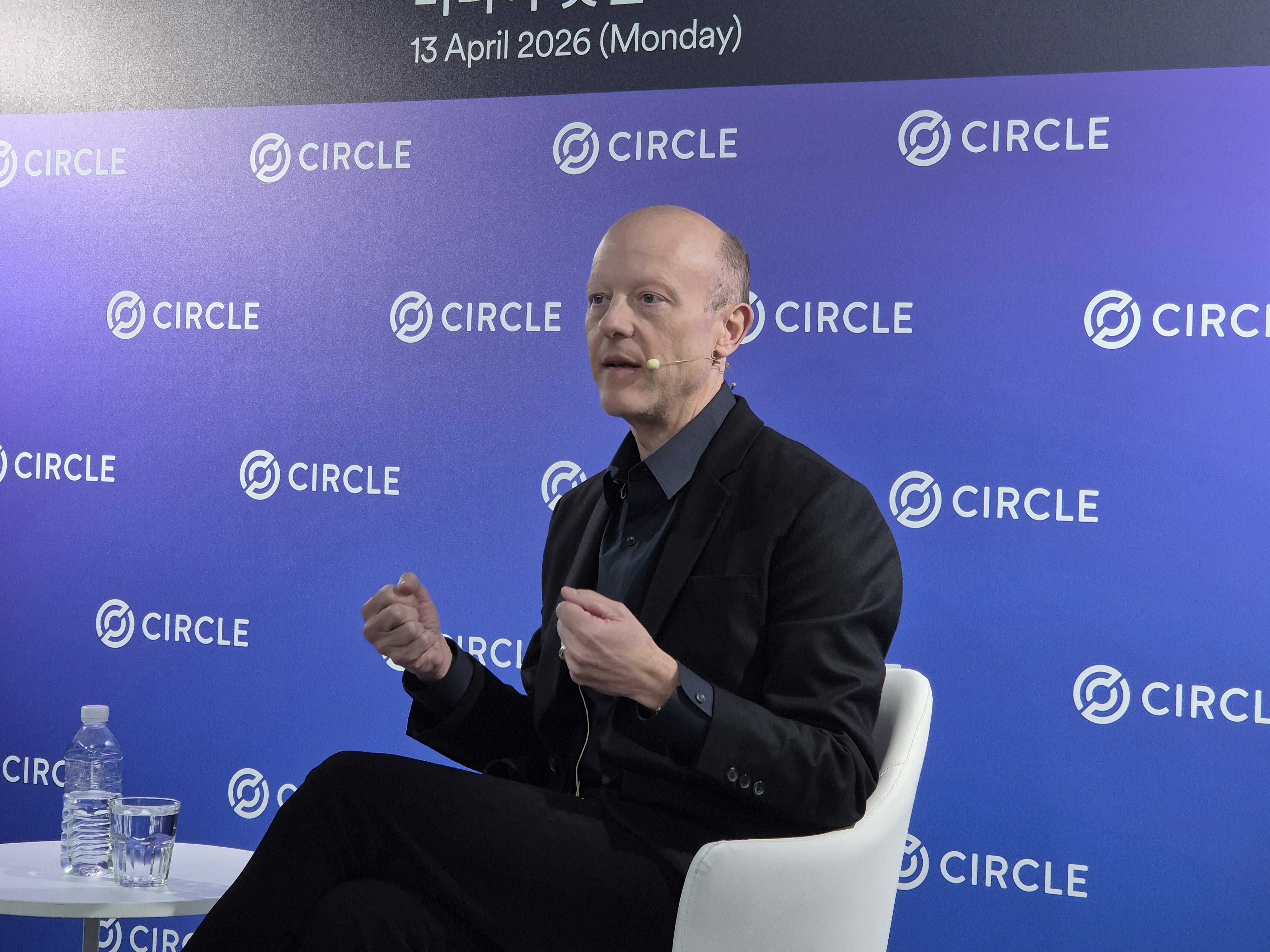

Circle CEO Says Crypto Tolls at Hormuz Strait Unlikely To Use USDC

Circle CEO Jeremy Allaire pushed back on concerns that USDC could be used for Iran’s crypto transit tolls at the Strait of Hormuz.

Allaire made the remarks at a press conference in Seoul on the afternoon of April 13, where BeInCrypto East Asia Editor-In-Chief, Oihyun Kim, was present. Allaire is visiting South Korea this week to meet exchanges, banks, and regulators.

Hormuz Tolls: ‘Highly Unlikely’ for USDC

A reporter asked whether Iran’s Revolutionary Guards might accept USDC for Hormuz passage fees. Allaire dismissed the idea.

“Circle operates a highly compliant infrastructure,” he said.

He noted that the company works closely with law enforcement and sanctions authorities.

Allaire pointed to public research from the United Nations and forensic firms. That data shows sanctioned actors tend to favor other stablecoins over USDC. He did not name specific tokens.

“It’s highly unlikely that a regime under sanctions would attempt something where the likelihood of the assets being immediately frozen is extremely high,” he said.

Drift Hack: Circle Defends Freeze Delay

The $285 million Drift Protocol exploit on April 1 drew sharp criticism of Circle. Attackers bridged over $230 million in stolen USDC from Solana to Ethereum over six hours. Circle took no action to freeze the funds during that window.

Allaire said the company follows strict legal obligations. Circle can only freeze wallets at the direction of law enforcement or courts.

“We do not as a company decide what is the right path,” he said. He warned that letting a private firm make those calls creates a “very significant moral quandary.”

He acknowledged the gap in the current framework. Circle is pushing for the CLARITY Act to include “safe harbors” that would let issuers freeze funds preemptively under extreme circumstances.

“We need that to be in the law, not just what we decide on our own,” he said.

CLARITY Act: Yield Ban Won’t Hurt Circle

Allaire also addressed the CLARITY Act’s proposed ban on passive stablecoin yield. The bill would bar platforms from paying interest simply for holding stablecoins.

He said the change does not affect Circle directly. The GENIUS Act already forbids stablecoin issuers from paying interest to holders.

The real impact falls on distributors like exchanges and wallets. They can still offer activity-based rewards, but cannot market stablecoin holdings as bank deposit substitutes.

Allaire called the yield debate “overblown.” He noted that the vast majority of stablecoin holders worldwide receive no rewards at all. About half of the $120 trillion global M2 money supply sits in physical cash or non-interest-bearing accounts.

Korea Visit: Exchanges, Banks, and Regulation

Allaire spent several days in Seoul meeting major exchanges, financial groups, and regulators. Upbit operator Dunamu and Bithumb both signed MOUs with Circle on the same day. He also met executives from Shinhan, Hana, and KB Financial.

He said Circle does not plan to issue a Korean won stablecoin itself.

Korean law will likely require domestic bank-led consortiums for that role. Circle would instead offer its technology stack to local issuers.

The post Circle CEO Says Crypto Tolls at Hormuz Strait Unlikely To Use USDC appeared first on BeInCrypto.


Ministers and Members of Parliament at Paris Blockchain Week 2026: A Historic Signal for the Institutionalization of Crypto-Assets

For the first time, Paris Blockchain Week will simultaneously welcome ministers, an ambassador, and nearly twenty Members of Parliament, an unprecedented show of political mobilization for an event dedicated to crypto-assets and blockchain in Europe.

Paris Blockchain Week 2026 will be held on April 15 and 16 at the Carrousel du Louvre, at a time when digital assets, artificial intelligence, and digital infrastructure have become strategic issues for Europe’s competitiveness, sovereignty, and financial future. This political mobilization marks a turning point: crypto-assets are no longer a niche subject, but a leading institutional priority.

France has established itself as one of the most advanced G7 jurisdictions in the field of digital assets. Building on the PACTE law, the PSAN framework, and the entry into force of MiCA, it has made Paris a major hub for international institutions. This momentum is part of a broader ambition: to make France a regulatory and institutional reference point in Europe.

This edition will welcome participants from over 100 countries, including senior executives from BNP Paribas, Crédit Agricole, Banque de France, HSBC, JPMorgan Chase, Goldman Sachs, Morgan Stanley, and hundreds of other leading institutions. The week will open with the VIP Dinner at the Palace of Versailles, bringing together 500 leaders from finance, technology, and institutions.

In this context, this unprecedented political presence sends a strong signal to markets, policymakers, and investors: France intends to remain at the forefront of the debates shaping digital finance, innovation, and European strategic autonomy.

Program Highlights

Tuesday, April 14 — VIP Dinner, Palace of Versailles.

Jean-Didier Berger, Minister Delegate to the Minister of the Interior, will deliver the opening address.

Wednesday, April 15 — Carrousel du Louvre.

09:15 – 09:35 — Anne Le Hénanff, Minister Delegate for Artificial Intelligence and Digital Affairs, will open proceedings on the Master Stage with a fireside chat with Michael Amar, Chairman of Paris Blockchain Week.

09:35 – 09:45 — Press Q&A session with Anne Le Hénanff in the Media Room.

Thursday, April 16 — Carrousel du Louvre.

09:00 – 09:20 — Clara Chappaz, Ambassador for Digital and Artificial Intelligence, will take the Master Stage for a fireside chat with Henri Delahaye.

09:20 – 09:35 — Laurent Nuñez, Minister of the Interior, will take the Master Stage for a fireside chat with Michael Amar.

09:45 – 10:00 — Press Q&A session with Laurent Nuñez in the Media Room.

Parliamentary Representation

Paris Blockchain Week will also welcome Michel Barnier, former Prime Minister, along with around twenty Members of the National Assembly: Liliana Tanguy (MP for Finistère), Alexandre Allegret-Pilot (MP for Gard), Hanane Mansouri (MP for Isère), Sabrina Sebaihi (MP for Hauts-de-Seine), Constance Le Grip (MP for Hauts-de-Seine), Marc Ferracci (MP for French citizens abroad), Bastien Marchive (MP for Deux-Sèvres), Charles Rodwell (MP for Yvelines), Anne-Sophie Ronceret (MP for Somme), Julien Dive (MP for Aisne), Corentin Le Fur (MP for Côtes-d’Armor), Félicie Gérard (MP for Nord), Philippe Latombe (MP for Vendée), Belkhir Belhaddad (MP for Moselle), Annaïg Le Meur (MP for Finistère), and Natalia Pouzyreff (MP for Yvelines). Their presence embodies France’s political commitment to the digital asset ecosystem and innovation.

About Paris Blockchain Week

Paris Blockchain Week is Europe’s leading institutional conference dedicated to blockchain technology and digital assets. Held annually in Paris, it brings together policymakers, institutional investors, entrepreneurs, and executives to shape the future of the digital economy.

The post Ministers and Members of Parliament at Paris Blockchain Week 2026: A Historic Signal for the Institutionalization of Crypto-Assets appeared first on BeInCrypto.


Top 7 quantum AI stock trading bot free tools for beginners in 2026 to earn passive income

Disclosure: This article does not represent investment advice. The content and materials featured on this page are for educational purposes only.

AI stock trading bots attract beginners seeking faster, automated entry into modern financial markets.

Summary

- AI stock trading bots rise in 2026 as beginners seek fast, automated entry into modern financial markets

- MoneyFlare offers fully automated trading with zero learning curve, targeting passive income seekers

- Demand grows for data-driven trading tools as investors shift from manual strategies to AI automation

In 2026, more beginners are searching for quantum AI stock trading bot free tools as a way to enter the market without spending years learning trading strategies. The reality is simple: modern financial markets move too fast for manual trading to keep up. Data flows continuously, prices shift in milliseconds, and opportunities can disappear instantly.

This is where AI-powered trading bots change the game. By combining quantitative trading models with automation, these tools allow users to participate in the stock market with far less effort. Instead of watching charts all day, traders can rely on systems that analyze data, execute trades, and manage risk automatically.

For beginners, the appeal is clear — a more structured, data-driven way to pursue passive income.

What is quantitative trading and why it matters

Quantitative trading, often called quant trading, is a method of using mathematical models and algorithms to make trading decisions. Instead of relying on intuition or news headlines, it focuses on patterns hidden inside data.

In practical terms, this means:

- Strategies are based on historical probabilities

- Trades are executed automatically when conditions are met

- Risk is controlled through predefined rules

With the integration of AI in 2026, quant trading has become more adaptive. Systems can now adjust to market conditions in real time, learning from new data instead of following rigid rules.

The biggest advantage is consistency. While human traders may hesitate or react emotionally, AI systems execute strategies exactly as designed. Over time, this can lead to more disciplined trading behavior.
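The three traits listed above (rules derived from data, automatic execution when conditions are met, and predefined risk limits) can be illustrated with a toy example. The sketch below is not taken from any platform mentioned in this article: the moving-average crossover rule, the 5% stop-loss threshold, the function names, and the price series are all assumptions chosen for illustration.

```python
from statistics import mean


def moving_average_signal(prices, short=3, long=5):
    """Return 'buy', 'sell', or 'hold' from a simple moving-average crossover."""
    if len(prices) < long:
        return "hold"  # not enough history to evaluate the rule
    short_avg = mean(prices[-short:])
    long_avg = mean(prices[-long:])
    if short_avg > long_avg:
        return "buy"   # recent prices are above the longer-run average
    if short_avg < long_avg:
        return "sell"  # recent prices are below the longer-run average
    return "hold"


def stop_loss_hit(entry_price, current_price, max_loss=0.05):
    """Predefined risk rule: exit once the loss reaches max_loss (5% here)."""
    return (entry_price - current_price) / entry_price >= max_loss


prices = [100, 101, 102, 104, 107]  # made-up closing prices
signal = moving_average_signal(prices)
print(signal)                                              # buy
print(stop_loss_hit(entry_price=107, current_price=101))   # True
```

Because both functions are deterministic, the same inputs always produce the same decision, which is the consistency described above. An adaptive AI system would differ mainly in adjusting parameters such as the window lengths as new data arrives.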

How AI quant trading works in real markets

AI quant trading is no longer experimental — it’s already widely used across the financial industry.

In real-world applications, these systems are used to:

- Identify short-term trading opportunities

- Detect trends across large datasets

- Execute trades faster than human traders

- Apply risk controls automatically

For individual users, this translates into a simpler experience. There is no need to analyze every stock or monitor the market constantly. The system does the heavy lifting.

However, it’s important to stay realistic. While AI can improve efficiency and reduce manual effort, market risks still exist, and outcomes can vary depending on conditions.

The 7 best quantum AI stock trading bot free tools in 2026 (beginner-friendly breakdown)

1. MoneyFlare — The Easiest Way to Start Fully Automated AI Trading

MoneyFlare is built for one type of user: people who want results without complexity. There’s no need to configure strategies, connect APIs, or understand market mechanics in depth. Once activated, the system handles analysis, execution, and risk management automatically in the background.

For beginners, this creates a truly frictionless experience. Users do not need to react to the market themselves; the system does so on their behalf, consistently and without emotion.

What makes it stand out:

Fully automated, zero learning curve, one-click activation

Best use case:

Passive income seekers who want a hands-off experience

Limitation:

Limited customization for advanced users

Beginner-Friendliness Score: ⭐⭐⭐⭐⭐ (5/5)

Click to register and receive a free $10 real reward and $50 trial credit!

2. Kavout — AI stock ranking with clear guidance

Kavout is ideal for those who are not ready to fully rely on automation but still want AI support. Instead of trading on a user’s behalf, it analyzes massive datasets and ranks stocks based on performance potential.

This means users don’t need to research hundreds of stocks, because the AI narrows the field for them. Traders still make the final decision, but with significantly better information.

What makes it stand out:

AI-powered stock scoring system simplifies decision-making

Best use case:

Beginners who want guidance while staying in control

Limitation:

No automated trade execution

Beginner-Friendliness Score: ⭐⭐⭐⭐☆ (4/5)

3. Trade Ideas — Real-time AI market scanner

Trade Ideas is designed for speed. Its AI continuously scans the market and surfaces high-probability opportunities in real time.

Instead of guessing what to trade, traders receive ready-made ideas backed by data. However, traders still need to execute trades themselves, which adds a layer of involvement.

What makes it stand out:

Real-time AI signals and opportunity detection

Best use case:

Active traders who want AI-assisted decisions

Limitation:

Requires manual execution

Beginner-Friendliness Score: ⭐⭐⭐⭐☆ (4/5)

4. TrendSpider — Automated technical analysis

TrendSpider removes one of the biggest barriers in trading: chart analysis. It automatically detects patterns, trendlines, and key levels, then allows users to build strategies based on that data.

This makes technical trading more consistent and less time-consuming, especially for users who struggle with manual charting.

What makes it stand out:

AI-driven charting and pattern recognition

Best use case:

Users who prefer structured, data-driven strategies

Limitation:

Still requires some learning and setup

Beginner-Friendliness Score: ⭐⭐⭐☆☆ (3/5)

5. Composer — No-code strategy builder

Composer is perfect for users who want to create their own strategies without writing code. Through a visual interface, traders can design how their portfolio behaves and let the system execute it automatically.

It offers flexibility, but also requires more thinking upfront compared to plug-and-play platforms.

What makes it stand out:

Visual strategy creation with automation

Best use case:

Users who want customization without coding

Limitation:

Requires strategy design knowledge

Beginner-Friendliness Score: ⭐⭐⭐☆☆ (3/5)

6. Capitalise.ai — Plain-English trading automation

Capitalise.ai simplifies automation by letting traders write strategies in plain English. They describe what they want, and the system turns it into executable logic.

This lowers the barrier significantly, especially for non-technical users. However, it still relies on predefined rules rather than adaptive AI.

What makes it stand out:

No-code automation using natural language

Best use case:

Beginners who want simple rule-based automation

Limitation:

Less adaptive compared to AI-driven systems

Beginner-Friendliness Score: ⭐⭐⭐⭐☆ (4/5)

7. Tickeron — AI insights with probability scoring

Tickeron focuses on helping users understand the market through AI-generated insights. It assigns probability scores to different trade scenarios, making risk evaluation clearer.

It doesn’t automate trading, but it improves decision quality — especially for users who want to stay involved.

What makes it stand out:

AI probability models and pattern recognition

Best use case:

Users who want AI insights but manual control

Limitation:

No automation for passive income

Beginner-Friendliness Score: ⭐⭐⭐☆☆ (3/5)

How beginners can start without overcomplicating it

Getting started with an AI trading bot in 2026 is much simpler than most people expect.

In most cases, the process involves choosing a platform, creating an account, selecting a strategy or system, and activating it. After that, the AI takes over key tasks such as analyzing data and executing trades.

For beginners, the most important step is not to overcomplicate things. Starting with a simple setup and gradually understanding how the system behaves is often more effective than trying to master everything at once.

Why AI trading bots continue to grow

The rapid growth of AI trading is driven by a clear shift in the market. Data is becoming more important, trading speed is increasing, and manual strategies are becoming less effective.

At the same time, more users are looking for ways to generate income without constant effort. AI trading meets this demand by offering automation, efficiency, and accessibility.

With mobile-friendly platforms and simplified interfaces, it’s now possible to manage trading activities anytime, from anywhere.

A realistic view on risks

Despite its advantages, AI trading is not risk-free.

Markets remain unpredictable, and even advanced algorithms can struggle during extreme volatility. Relying entirely on automation without understanding the basics can also lead to poor decisions.

A more balanced approach is to start with smaller amounts, diversify strategies, and monitor performance over time. AI should be seen as a tool that improves efficiency—not a guarantee of profits.

Conclusion

Quantum AI stock trading bots are transforming how beginners approach investing in 2026. By combining quantitative trading with intelligent automation, these tools reduce complexity and make the market more accessible.

Platforms like MoneyFlare stand out for their fully automated, beginner-friendly design, while others like Kavout and Composer offer more control and flexibility.

Ultimately, the value of these tools lies in their ability to simplify trading while maintaining a structured, data-driven approach. With the right expectations and risk management, they can become a practical way to explore passive income opportunities in modern financial markets.

Disclosure: This content is provided by a third party. Neither crypto.news nor the author of this article endorses any product mentioned on this page. Users should conduct their own research before taking any action related to the company.
