Like many engineers, Sarang Gupta spent his childhood tinkering with everyday items around the house. From a young age he gravitated to projects that could make a difference in someone’s everyday life.

When the family’s microwave plug broke, Gupta and his father figured out how to fix it. When a drawer handle started jiggling annoyingly, the youngster made sure it didn’t do so for long.

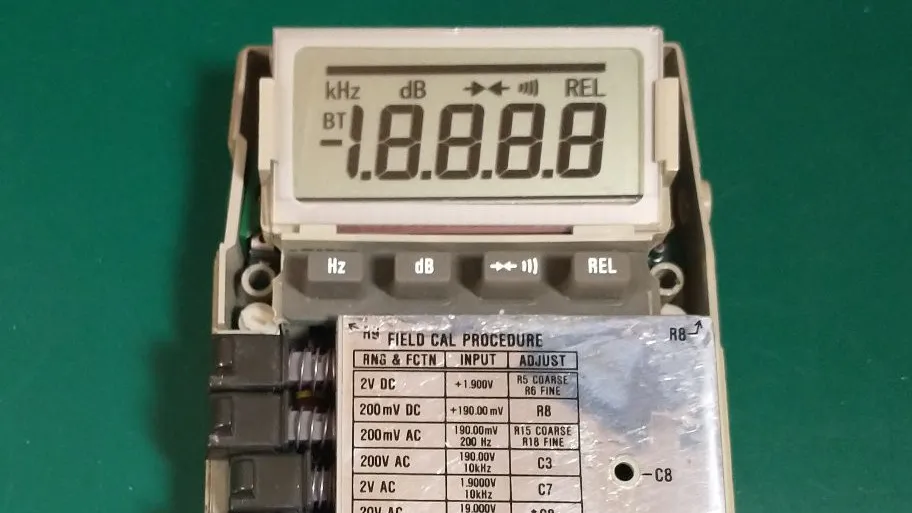

Sarang Gupta

Employer: OpenAI in San Francisco

Job: Data science staff member

Member grade: Senior member

Alma maters: The Hong Kong University of Science and Technology; Columbia

By age 11, his interest had expanded from nuts and bolts to software. He learned programming languages such as Basic and Logo and designed simple programs, including one that helped a local restaurant automate online ordering and billing.

Gupta, an IEEE senior member, brings his mix of curiosity, hands-on problem-solving, and a desire to make things work better to his role as a member of the data science staff at OpenAI in San Francisco. He works with the go-to-market (GTM) team to help businesses adopt ChatGPT and other products. He builds data-driven models and systems that support the sales and marketing divisions.

Gupta says he tries to ensure his work has an impact. When making decisions about his career, he says, he thinks about what AI solutions he can unlock to improve people’s lives.

“If I were to sum up my overall goal in one sentence,” he says, “it’s that I want AI’s benefits to reach as many people as possible.”

Pursuing engineering through a business lens

Gupta’s early interest in tinkering and programming led him to choose physics, chemistry, and math as his higher-level subjects at Chinmaya International Residential School, in Tamil Nadu, India. As part of the high school’s International Baccalaureate chapter, students select three subjects in which to specialize.

“I was interested in engineering, including the theoretical part of it,” Gupta says. “But I was always more interested in the applications: how to sell that technology or how it ties to the real world.”

After graduating in 2012, he moved overseas to attend the Hong Kong University of Science and Technology. The university offered a dual bachelor’s program that allowed him to earn one degree in industrial engineering and another in business management in just four years.

In his spare time, Gupta built a smartphone app that let students upload their class schedules and find classmates to eat lunch with. The app didn’t take off, he says, but he enjoyed developing it. He also launched Pulp Ads, a business that printed advertisements for student groups on tissues and paper napkins, which were distributed in the school’s cafeterias. He made some money, he says, but shuttered the business after about a year.

After graduating from the university in 2016, he decided to work in Hong Kong’s financial hub and joined Goldman Sachs as an analyst in the bank’s operations division.

From finance to process optimization at scale

After two parties agree on securities transactions, the bank’s operations division ensures that the trade details are recorded correctly, the securities and payments are ready to transfer, and the transaction settles accurately and on time.

As an analyst, Gupta was tasked with finding bottlenecks in the bank’s workflows and fixing them. He identified an opportunity to automate trade reconciliation, in which analysts manually compared data across spreadsheets and systems to make sure a transaction’s details were consistent. The process helped ensure financial transactions were recorded accurately and settled correctly.

Gupta built internal automation tools that pulled trade data from different systems, ran validation checks, and generated reports highlighting any discrepancies.

“Instead of analysts manually checking large datasets, the tools automatically flagged only the cases that required investigation,” he says. “This helped the team spend less time on repetitive verification tasks and more time resolving complex issues. It was also my first real exposure to how software and data systems could dramatically improve operational workflows.”

The experience made him realize he wanted to work more deeply in technology and data-driven systems, he says. In 2018 he decided to return to school to study data science and AI, fields that were just beginning to surge into broader awareness.

He discovered that Columbia offered a dedicated master’s degree program in data science with a focus on AI. After being accepted in 2019, he moved to New York City.

Throughout the program, he gravitated to the applied side of machine learning, taking courses in applied deep learning and neural networks.

One of his major academic highlights, he says, was a project he did in 2019 with the Brown Institute, a joint research lab between Columbia and Stanford focused on using technology to improve journalism. The team worked with The Philadelphia Inquirer to help the newsroom staff better understand their coverage from a geographic and social standpoint. The project highlighted “news deserts”—underserved communities for which the newspaper was not providing much coverage—so the publication could redirect its reporting resources.

To identify those areas, Gupta and his team built tools that extracted locations such as street names and neighborhoods from news articles and mapped them to visualize where most of the coverage was concentrated. The Inquirer implemented the tool in several ways, including a new web page that aggregated stories about COVID-19 by county.

“Journalism was an interesting problem set for me, because I really like to read the news every day,” Gupta says. “It was an opportunity to work with a real newsroom on a problem that felt really impactful for both the business and the local community.”

The GenAI inflection point

After earning his master’s degree in 2020, Gupta moved to San Francisco to join Asana, the company that developed the work management platform of the same name. He was drawn to the opportunity to work for a relatively small company where he could have end-to-end ownership of projects. He joined the organization as a product data scientist, focusing on A/B testing for new platform features.

Two years later, a new opportunity emerged: He was asked to lead the launch of Asana Intelligence, an internal machine learning team building AI-powered features into the company’s products.

“I felt I didn’t have enough experience to be the founding data scientist,” he says. “But I was also really interested in the space, and spinning up a whole machine learning program was an opportunity I couldn’t turn down.”

The Asana Intelligence team was given six months to build several machine learning–powered features to help customers work more efficiently. They included automatic summaries of project updates, insights about potential risks or delays, and recommendations for next steps.

The team met that goal and launched several other features, including Smart Status, an AI tool that analyzes a project’s tasks, deadlines, and activity, then generates a status update.

“When you finally launch the thing you’ve been working on, and you see the usage go up, it’s exhilarating,” he says. “You feel like that’s what you were building toward: users actually seeing and benefiting from what you made.”

Gupta and his team also translated that first wave of work into reusable frameworks and documentation to make it easier to create machine learning features at Asana. He and his colleagues filed several U.S. patents.

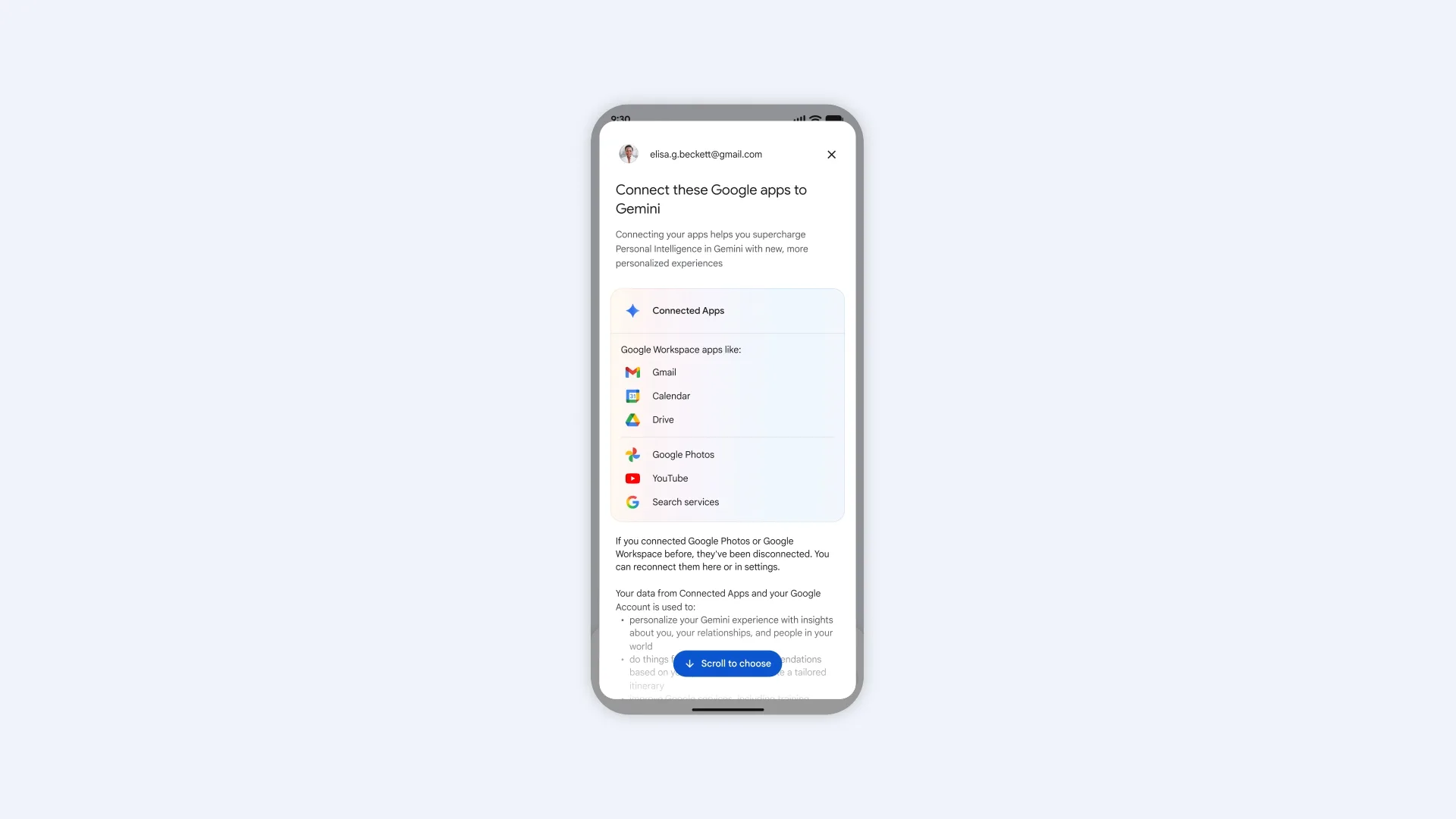

Around the time he took on that role, OpenAI launched ChatGPT. The mainstreaming of generative AI and large language models shifted much of his work at Asana from model development to assessing LLMs.

OpenAI captured the attention of people around the world, including Gupta. In September 2025 he left Asana to join OpenAI’s data science team.

The transition has been both energizing and humbling, he says. At OpenAI, he works closely with the marketing team to help guide strategic decisions. His work focuses on developing models to understand the efficiency of different marketing channels, to measure what’s driving impact, and to help the company better reach and serve its customers.

“The pace is very different from my previous work. Things move quickly,” he says. “The industry is extremely competitive, and there’s a strong expectation to deliver fast. It’s been a great learning experience.”

Gupta says he plans to stay in the AI space. With technology evolving so rapidly, he says, he sees enormous potential for task automation across industries. AI has already transformed his core software engineering work, he says, and it has helped him improve in areas that aren’t his natural strengths.

“I’m not a good writer, and AI has been huge in helping me frame my words better and present my work more clearly,” he says. “Whether it’s helping a person improve a trait like that or driving efficiencies at a business, AI just has so much potential to help. I’m excited to be a little part of that.”

Gupta has been an IEEE member since 2024, and he values the organization as both a technical resource and a professional network.

He regularly turns to IEEE publications and the IEEE Xplore Digital Library to read articles that keep him abreast of the evolution of AI, data science, and the engineering profession.

IEEE’s member directory tools are another valuable resource that he uses often, he says.

“It’s been a great way to connect with other engineers in the same or similar fields,” he says. “I love sharing and hearing about what folks are working on. It brings me outside of what I’m doing day to day.

“It inspires me, and it’s something I really enjoy and cherish.”
