So, what’s a guy got to do to become a billionaire around here? Greg Brockman scribbled the question in his diary, recently unsealed as trial evidence, just two years after co-founding OpenAI as a charity in 2015: “Financially, what will take me to $1B?”

Tech

Bid on the ultimate Seattle World Cup suite experience, and support a great cause

There’s going to be something special in the air when the 2026 FIFA World Cup arrives in Seattle this summer. Packed pubs at 9 a.m. Colorful flags flying from every corner. Fans from around the globe chanting and singing from Pioneer Square to Pike Place Market.

Now, thanks to the generous support of Microsoft, one lucky group will have the chance to experience the tournament in spectacular fashion, while supporting an incredible cause.

Working in partnership with Microsoft, GeekWire has launched an online auction for a private suite for the FIFA World Cup Round of 16 knockout match at Lumen Field on July 6. Microsoft — as part of their ongoing support of this summer’s soccer festivities in Seattle — is donating the suite, with 100 percent of the proceeds benefitting the amazing work at Seattle Children’s Hospital.

That means the auction winner can do some good, while enjoying one of the most spectacular sporting events on the planet.

And this isn’t just any match.

The winner of this knockout stage match advances to the World Cup quarterfinals — with the possibility that the U.S. Men’s National Team could be playing in Seattle at this very match if they advance deep enough in the tournament.

The suite package includes:

- Twelve VIP tickets for suite C19, located in the south end of the stadium.

- A surprise Seattle sports legend will join the fun in the suite.

- A commemorative gift for each guest to remember this historic event.

- Fully catered high-end food and beverage service included within the suite.

- One of the premier viewing experiences for the biggest sporting event on the planet.

The auction was announced live during the GeekWire Awards, with support from Seattle Sounders FC owner Adrian Hanauer, Seattle World Cup Organizing Committee CEO Peter Tomozawa, Microsoft deputy general counsel Brian DeFoe and Sounders FC captain and U.S. Men’s National Team midfielder Cristian Roldan.

“The world is going to stop,” said Roldan. “And Seattle gets to embrace the world, and so I am really excited to really showcase what we are all about.”

For the round of 16 match, Roldan said the “energy is going to be unbelievable,” even more so if it happens to be the U.S. Men’s team.

Microsoft’s donation of the proceeds of the suite to Seattle Children’s Hospital is just one way that the company is giving back.

Speaking at the GeekWire Awards, DeFoe said that the company feels fortunate to be part of an incredible innovation ecosystem where people “dream big,” but it’s an ecosystem that needs constant nurturing. He said that last year Microsoft and its employees donated more than $88 million and 477,000 service hours to over 4,900 non-profits.

The World Cup only comes to Seattle once. This is your chance to experience it from one of the best seats in the house.

Place your bid here, and let’s show what we can do as a community: World Cup Suite Auction

Tech

Elon Musk could lose his legal case against OpenAI and still get most of what he wants.

For Brockman, now OpenAI’s president, the answer was a yearslong restructuring saga in which OpenAI metamorphosed from a nonprofit research lab into a corporate behemoth on the verge of a massive public offering. Elon Musk, another co-founder who left OpenAI in 2018, is suing OpenAI, CEO Sam Altman, and executives like Brockman for this transformation, alleging that he was misled about the company’s profit motives when he donated tens of millions of dollars to it in its early days. Brockman testified on Monday that he was eventually awarded a slice of OpenAI for the “blood, sweat, and tears” — but, notably, not money — he poured into building OpenAI. His portion of the behemoth is now valued at about $30 billion on paper. (Disclosure: Vox Media is one of several publishers that have signed partnership agreements with OpenAI. Our reporting remains editorially independent.)

Musk — who is himself known to be an unreliable narrator at times — will have an uphill battle when it comes to proving his case, legal experts say, especially if he wants a judge to reverse OpenAI’s for-profit restructuring. But the mega-billionaire vs. multibillionaire courtroom cage match might actually be beside the point. If the evidence Musk presents in trial is damning enough to convince a couple of attorneys general to take a second look at the deals they struck with OpenAI to finalize its for-profit transformation last fall, then he might not need to win his case at all. Musk could lose in court tomorrow, and potentially still get what he mostly seems to want: a hobbled OpenAI, more beholden to its nonprofit roots, just as it’s looking to go public.

Last October, California and Delaware attorneys general made a deal to allow OpenAI to turn its for-profit arm into a public benefit corporation, paving the way for a highly rumored IPO. OpenAI is based in California, but incorporated its for-profit arm in Delaware, as do most large corporations. It would be very unusual, perhaps unprecedented, for a federal judge to usurp that regulatory decision by forcing OpenAI to unwind its corporate reconfiguration, as Musk has requested in court. What’s more likely, legal experts say, is that new evidence, such as Brockman’s diary, or possibly even public outcry that arises from the case, convinces the attorneys general to revisit or amend their original decision to let OpenAI go corporate in the first place. On Wednesday, a coalition of over 60 civil society organizations called EyesOnOpenAI sent a letter to California Attorney General Rob Bonta calling on him to do just that.

“In an ideal world, the plaintiff in this case would be the people of California” rather than “one billionaire who decided to pick his petty beef with this other billionaire he doesn’t like,” said Catherine Bracy, CEO of the nonprofit TechEquity and co-leader of EyesOnOpenAI, who believes the government should be holding OpenAI accountable for what she views as a breach of charitable trust.

“I’d be pretty comfortable betting on Musk losing,” said Samuel D. Brunson, a nonprofit legal scholar at Loyola University Chicago School of Law, but “I’d be more comfortable betting on the attorneys general” revisiting their agreements with OpenAI. “I still don’t know if that’s a winning bet,” he hedged, but at the very least, it’s well “within the realm of possibility.”

Elon Musk’s case against OpenAI is flimsy, but there’s some there there

OpenAI was founded in 2015 with the tax-deductible mission of building AI “unconstrained by a need to generate financial return.” But building AI has become much more expensive than it was then, and without a for-profit arm, OpenAI almost certainly couldn’t build the kind of tools it does today, such as ChatGPT.

Musk always knew this about OpenAI’s growth trajectory, Brockman and CEO Sam Altman have argued, and his suit is just sour grapes. He’s jealous, they say, of how much better OpenAI’s AI models are than his own efforts. If OpenAI is Nancy Kerrigan, the implicit argument goes, then Musk’s xAI is Tonya Harding, eager to break her talented competitor’s knee.

But Musk has tried to paint OpenAI as the villain that stole a charity, and himself as a singular voice for nonprofit integrity, a pure-hearted soldier set on ensuring the OpenAI Foundation gets its fair due. (As a Ringer piece on the suit put it: “Elon Musk takes the stand for…humanity?”) OpenAI compensated its nonprofit arm with a 26 percent stake worth over $200 billion in the newly formed corporation, which is a lot, but notably less than what it awarded employee-investors like Brockman and its partner Microsoft when it went corporate.

Musk is asking the court for $150 billion in restitution for his donations. He has vowed to donate any damages to the OpenAI Foundation, which is already one of the world’s wealthiest charities.

He could well have a case on this financial front, which “is about Musk personally, and the harm that he might have suffered,” said Peter Molk, a professor at the University of Florida Levin College of Law. “This isn’t money that he needs personally,” but it would also hobble an opponent at a key moment in the race for AI dominance.

But Musk’s other legal requests — which include court orders that remove Altman from power and outright undo OpenAI’s for-profit restructuring — are bigger legal swings, in part because they explicitly touch on questions that have already been settled in the company’s negotiations with the government. A win on these grounds “would be disruptive in a way that courts are hesitant to be disruptive,” said Brunson, the Loyola legal scholar.

But the big decisions on OpenAI might come from regulators, not the courtroom

Even if Musk doesn’t win his case, he’ll have managed to air out a lot of OpenAI’s dirty laundry in the process. “By the end of this week, you and Sam will be the most hated men in America,” Musk texted Brockman just before the trial began. “If you insist, so it will be.”

That may be hyperbole, but Musk’s lawsuit certainly is intensifying the storm of criticism that has been swirling since OpenAI’s restructuring deal was approved last October. And it could be enough to convince the attorneys general to reconsider at least some of its terms.

“I would be surprised if the AG knew the extent to which OpenAI never did a valuation” of the OpenAI Foundation’s worth, said Bracy of TechEquity. “I would be surprised if he knew the extent to which the conflicts of interest were embedded up and down the company. I would be surprised if he knew about how Greg Brockman was musing about how he could become a billionaire.”

She has no expectation that the attorney general will attempt to force OpenAI to somehow crawl back into its nonprofit skin. Instead, “at this point, I would like to see the nonprofit fairly compensated for the assets,” — which Bracy, like Musk, thinks could be worth significantly more than the 26 percent stake OpenAI assigned to it — alongside “some independent governance of those assets,” she said. With the exception of one member, the OpenAI Foundation’s board of directors is currently identical to that of the for-profit entity, with its membership at least partially orchestrated by Microsoft CEO Satya Nadella, according to court documents.

Both of those asks seem plausible, legal experts told me, especially if the evidence that’s come up in trial so far was not available to the attorneys general. In theory, “it would have to be some awfully damning stuff to get the AG to open this back up again,” said Molk, but they are also elected officials, “so they can’t just ignore a wave of public outcry.”

So far, there’s no smoking gun — or undeniable evidence that OpenAI outright lied to the government when it negotiated its restructuring deal — at least not yet. But the revelations that Brockman quietly held tens of billions of dollars in equity, and new details about his and Altman’s business dealings with OpenAI partners such as Cerebras, do add substance to claims that the company might not have had the nonprofit arm’s interests in mind when it valued its stake.

“If the attorney general were to see that, yes, in fact, the pricing was wrong, they underpaid, that would be justification” for them to revisit their agreements, Brunson said. “I could see that as being a more likely result than Elon Musk winning, and that result would basically be that OpenAI, the for-profit, has to give more money to OpenAI, the nonprofit.”

A few months after his 2017 diary entry about becoming a billionaire, court documents show Brockman vacillated over what to do with OpenAI. “It’d be wrong to steal the non-profit,” he wrote one day, then “it would be nice to be making the billions” days later. “Can’t see us turning this into a for-profit without a very nasty fight,” he wrote in November 2017.

Within about a year, Musk left OpenAI and Brockman received a founding stake in the company that would go on to make him very rich.

Tech

An AI agent rewrote a Fortune 50 security policy. Here’s how to govern AI agents before one does the same.

A CEO’s AI agent rewrote the company’s security policy. Not because it was compromised, but because it wanted to fix a problem, lacked permissions, and removed the restriction itself. Every identity check passed. CrowdStrike CEO George Kurtz disclosed the incident and a second one at his RSAC 2026 keynote, both at Fortune 50 companies.

The credential was valid. The access was authorized. The action was catastrophic.

That sequence breaks the core assumption underneath the IAM systems most enterprises run in production today: that a valid credential plus authorized access equals a safe outcome. Identity systems were built for one user, one session, one set of hands on a keyboard. Agents break all three assumptions at once.

In an exclusive interview with VentureBeat at RSAC 2026, Matt Caulfield, VP of Identity and Duo at Cisco, walked through the architecture his team is building to close that gap and outlined a six-stage identity maturity model for governing agentic AI. The urgency is measurable: Cisco President Jeetu Patel told VentureBeat at the same conference that 85% of enterprises are running agent pilots while only 5% have reached production, an 80-point gap that the identity work is designed to close.

The identity stack was built for a workforce that has fingerprints

“Most of the existing IAM tools that we have at our disposal are just entirely built for a different era,” Caulfield told VentureBeat. “They were built for human scale, not really for agents.”

The default enterprise instinct is to shove agents into existing identity categories: human user; machine identity; pick one. “Agents are a third kind of new type of identity,” Caulfield said. “They’re neither human. They’re neither machine. They’re somewhere in the middle where they have broad access to resources like humans, but they operate at machine scale and speed like machines, and they entirely lack any form of judgment.”

Etay Maor, VP of Threat Intelligence at Cato Networks, put a number on the exposure. He ran a live Censys scan and counted nearly 500,000 internet-facing OpenClaw instances. The week before, he had found 230,000, meaning the count roughly doubled in seven days.

Kayne McGladrey, an IEEE senior member who advises enterprises on identity risk, made the same diagnosis independently. Organizations are cloning human user accounts for agentic systems, McGladrey told VentureBeat, except agents consume far more permissions than humans would because of the speed, the scale, and the intent.

A human employee goes through a background check, an interview, and an onboarding process. Agents skip all three. The onboarding assumptions baked into modern IAM do not apply. Scale compounds the failure. Caulfield pointed to projections where a trillion agents could operate globally. “We barely know how many people are in an average organization,” he said, “let alone the number of agents.”

Access control verifies the badge. It does not watch what happens next.

Zero trust still applies to agentic AI, Caulfield argued. But only if security teams push it past access and into action-level enforcement. “We really need to shift our thinking to more action-level control,” he told VentureBeat. “What action is that agent taking?”

A human employee with authorized access to a system will not execute 500 API calls in three seconds. An agent will. Traditional zero trust verifies that an identity can reach an application. It doesn’t scrutinize what that identity does once inside.
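One way to picture action-level control is a sliding-window check on how many actions an identity performs after it has been granted access. The sketch below is illustrative only: the `BurstDetector` class, the `simulate` helper, and the limit of 50 calls per three-second window are assumptions made for this example, not part of any vendor product described here.

```python
from collections import deque


class BurstDetector:
    """Flag an identity that exceeds max_calls within window_seconds.

    Hypothetical helper for illustration; the thresholds are assumptions.
    """

    def __init__(self, max_calls: int, window_seconds: float) -> None:
        self.max_calls = max_calls
        self.window_seconds = window_seconds
        self._timestamps: deque = deque()

    def record(self, timestamp: float) -> bool:
        """Record one call; return True if the call stays within the limit."""
        self._timestamps.append(timestamp)
        # Drop calls that have aged out of the sliding window.
        while timestamp - self._timestamps[0] >= self.window_seconds:
            self._timestamps.popleft()
        return len(self._timestamps) <= self.max_calls


def simulate(calls: int, duration_seconds: float, detector: BurstDetector) -> int:
    """Spread `calls` evenly over `duration_seconds`; return how many were flagged."""
    step = duration_seconds / calls
    flagged = 0
    for i in range(calls):
        if not detector.record(i * step):
            flagged += 1
    return flagged


if __name__ == "__main__":
    # An agent-like burst: 500 calls in three seconds.
    print("agent calls flagged:", simulate(500, 3.0, BurstDetector(50, 3.0)))
    # A human-like pace: 5 calls in three seconds.
    print("human calls flagged:", simulate(5, 3.0, BurstDetector(50, 3.0)))
```

A check like this sits behind the access decision: both identities hold valid credentials, and only the pattern of actions separates them.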

Carter Rees, VP of Artificial Intelligence at Reputation, identified the structural reason. The flat authorization plane of an LLM fails to respect user permissions, Rees told VentureBeat. An agent operating on that flat plane does not need to escalate privileges. It already has them. That is why access control alone cannot contain what agents do after authentication.

CrowdStrike CTO Elia Zaitsev described the detection gap to VentureBeat. In most default logging configurations, an agent’s activity is indistinguishable from a human’s. Distinguishing the two requires walking the process tree, tracing whether a browser session was launched by a human or spawned by an agent in the background. Most enterprise logging cannot make that distinction.
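The process-tree idea can be sketched in a few lines, assuming the logging pipeline already records each process's parent. Everything named here is hypothetical: the `Process` record, the `agent-runner` runtime name, and the sample table are invented for illustration and do not reflect how CrowdStrike's telemetry is implemented.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Process:
    pid: int
    parent_pid: Optional[int]  # None marks the root of the tree
    name: str


# Hypothetical agent runtime names; a real list would come from an inventory.
AGENT_RUNTIMES = {"agent-runner"}


def launched_by_agent(pid: int, table: Dict[int, Process]) -> bool:
    """Walk parent links from pid toward the root.

    Returns True if the process or any ancestor is a known agent runtime.
    """
    seen = set()
    current: Optional[int] = pid
    while current is not None and current in table and current not in seen:
        seen.add(current)  # guard against cycles in malformed data
        proc = table[current]
        if proc.name in AGENT_RUNTIMES:
            return True
        current = proc.parent_pid
    return False


# Two browser sessions that look identical unless lineage is captured.
EXAMPLE_TABLE = {
    1: Process(1, None, "init"),
    10: Process(10, 1, "desktop-shell"),
    11: Process(11, 10, "browser"),   # opened by a person at the desktop
    20: Process(20, 1, "agent-runner"),
    21: Process(21, 20, "browser"),   # spawned in the background by an agent
}

if __name__ == "__main__":
    for pid in (11, 21):
        print(pid, "agent-spawned:", launched_by_agent(pid, EXAMPLE_TABLE))
```

Without the `parent_pid` field the two `browser` entries are indistinguishable, which is the gap in default logging described above.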

Caulfield’s identity layer and Zaitsev’s telemetry layer are solving two halves of the same problem. No single vendor closes both gaps.

“At any moment in time, that agent can go rogue and can lose its mind,” Caulfield said. “Agents read the wrong website or email, and their intentions can just change overnight.”

How the request lifecycle works when agents have their own identity

Five vendors shipped agent identity frameworks at RSAC 2026: Cisco, CrowdStrike, Palo Alto Networks, Microsoft, and Cato Networks. Caulfield walked through how Cisco’s identity-layer approach works in practice.

The Duo agent identity platform registers agents as first-class identity objects, with their own policies, authentication requirements, and lifecycle management. Enforcement routes all agent traffic through an AI gateway that supports both MCP and traditional REST or GraphQL protocols. When an agent makes a request, the gateway authenticates the user, verifies that the agent is permitted, encodes the authorization into an OAuth token, and then inspects the specific action and determines in real time whether it should proceed.
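The request lifecycle described here can be sketched as four checks in sequence. This is a toy model, not Cisco's implementation: the `Gateway`, `AgentRecord`, and `Request` names are hypothetical, a plain dictionary stands in for the OAuth token, and tying each agent to a single owning user is an assumption made for the example.

```python
from dataclasses import dataclass, field
from typing import Dict, Set, Tuple


@dataclass
class AgentRecord:
    agent_id: str
    owner: str  # accountable human (assumed one owner per agent)
    permitted_actions: Set[str] = field(default_factory=set)


@dataclass
class Request:
    user: str
    agent_id: str
    action: str


class Gateway:
    """Hypothetical gateway that every agent request must pass through."""

    def __init__(self, known_users: Set[str], registry: Dict[str, AgentRecord]) -> None:
        self.known_users = known_users
        self.registry = registry

    def handle(self, request: Request) -> Tuple[bool, str]:
        # 1. Authenticate the user on whose behalf the agent acts.
        if request.user not in self.known_users:
            return False, "unknown user"
        # 2. Verify the agent is registered and permitted for this user.
        agent = self.registry.get(request.agent_id)
        if agent is None or agent.owner != request.user:
            return False, "agent not permitted"
        # 3. Encode the authorization as claims (a dict standing in for a token).
        claims = {
            "sub": request.user,
            "agent": agent.agent_id,
            "scope": sorted(agent.permitted_actions),
        }
        # 4. Inspect the specific action against those claims.
        if request.action not in claims["scope"]:
            return False, "action denied"
        return True, "allowed"


if __name__ == "__main__":
    gateway = Gateway(
        known_users={"alice"},
        registry={"a1": AgentRecord("a1", "alice", {"read_ticket"})},
    )
    print(gateway.handle(Request("alice", "a1", "read_ticket")))
    print(gateway.handle(Request("alice", "a1", "edit_security_policy")))
```

The second request passes the first three checks with a valid user and a registered agent, and is stopped only at the action check, which is the step that access control alone does not perform.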

“No solution to agent AI is really complete unless you have both pieces,” Caulfield told VentureBeat. “The identity piece, the access gateway piece. And then the third piece would be observability.”

Cisco announced its intent to acquire Astrix Security on May 4, signaling that agent identity discovery is now a board-level investment thesis. The deal also suggests that even vendors building identity platforms recognize that the discovery problem is harder than expected.

Six-stage identity maturity model for agentic AI

When a company shows up claiming 500 agents in production, Caulfield doesn’t accept the number. “How do you know it’s 500 and not 5,000?”

Most organizations don’t have a source of truth for agents. Caulfield outlined a six-stage engagement model.

1. Discovery: identify every agent, where it runs, and who deployed it.

2. Onboarding: register agents in the identity directory, tie each one to an accountable human, and define permitted actions.

3. Control and enforcement: place a gateway between agents and resources, and inspect every request and response.

4. Behavioral monitoring: record all agent activity, flag anomalies, and build the audit trail.

5. Runtime isolation: contain agents on endpoints when they go rogue.

6. Compliance mapping: tie agent controls to audit frameworks before the auditor shows up.

The six stages are not proprietary to any single vendor. They describe the sequence every enterprise will follow regardless of which platform delivers each stage.

Maor’s Censys data complicates step one before it even starts. Organizations beginning discovery should assume their agent exposure is already visible to adversaries. Step four has its own problem. Zaitsev’s process-tree work shows that even organizations logging agent activity may not be capturing the right data. And step three depends on something Rees found most enterprises lack: a gateway that inspects actions, not just access, because the LLM does not respect the permission boundaries the identity layer sets.

Agentic identity prescriptive matrix

What to audit at each maturity stage, what operational readiness looks like, and the red flag that means the stage is failing. Use this to evaluate any platform or combination of platforms.

| Stage | What to audit | Operational readiness looks like | Red flag if missing |
| --- | --- | --- | --- |
| 1. Discovery | Complete inventory of every agent, every MCP server it connects to, and every human accountable for it. | A queryable registry that returns agent count, owner, and connection map within 60 seconds of an auditor asking. | No registry exists. Agent count is an estimate. No human is accountable for any specific agent. Adversaries can see your agent infrastructure from the public internet before you can. |
| 2. Onboarding | Agents are registered as a distinct identity type with their own policies, separate from human and machine identities. | Each agent has a unique identity object in the directory, tied to an accountable human, with defined permitted actions and a documented purpose. | Agents use cloned human accounts or shared service accounts. Permission sprawl starts at creation. No audit trail ties agent actions to a responsible human. |
| 3. Control | A gateway between every agent and every resource it accesses, enforcing action-level policy on every request and every response. | Four checkpoints per request: authenticate the user, authorize the agent, inspect the action, inspect the response. No direct agent-to-resource connections exist. | Agents connect directly to tools and APIs. The gateway (if it exists) checks access but not actions. The flat authorization plane of the LLM does not respect the permission boundaries the identity layer set. |
| 4. Monitoring | Logging that can distinguish agent-initiated actions from human-initiated actions at the process-tree level. | SIEM can answer: Was this browser session started by a human or spawned by an agent? Behavioral baselines exist for each agent. Anomalies trigger alerts. | Default logging treats agent and human activity as identical. Process-tree lineage is not captured. Agent actions are invisible in the audit trail. Behavioral monitoring is incomplete before it starts. |
| 5. Isolation | Runtime containment that limits the blast radius if an agent goes rogue, separate from human endpoint protection. | A rogue agent can be contained in its sandbox without taking down the endpoint, the user session, or other agents on the same machine. | No containment boundary exists between agents and the host. A single compromised agent can access everything the user can. Blast radius is the entire endpoint. |
| 6. Compliance | Documentation that maps agent identities, controls, and audit trails to the compliance framework that the auditor will use. | When the auditor asks about agents, the security team produces a control catalog, an audit trail, and a governance policy written for agent identities specifically. | Emerging AI-risk frameworks (CSA Agentic Profile) exist, but mainstream audit catalogs (SOC 2, ISO 27001, PCI DSS) have not operationalized agent identities. No control catalog maps to agents. The auditor improvises which human-identity controls apply. The security team answers with improvisation, not documentation. |

Source: VentureBeat analysis of RSAC 2026 interviews (Caulfield, Zaitsev, Maor) and independent practitioner validation (McGladrey, Rees). May 2026.
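The stage 1 readiness test in the matrix (a queryable registry that returns agent count, owner, and connection map) can be made concrete with a small data structure. This is a minimal in-memory sketch with hypothetical names (`AgentRegistry`, `AgentEntry`, the sample agents and servers); a production registry would sit on top of the identity directory rather than a Python dictionary.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class AgentEntry:
    agent_id: str
    owner: str  # the accountable human
    mcp_servers: List[str] = field(default_factory=list)


class AgentRegistry:
    """Hypothetical source of truth for agents, owners, and connections."""

    def __init__(self) -> None:
        self._agents: Dict[str, AgentEntry] = {}

    def register(self, entry: AgentEntry) -> None:
        # Refuse agents with no accountable human attached.
        if not entry.owner:
            raise ValueError("every agent needs an accountable human")
        self._agents[entry.agent_id] = entry

    def count(self) -> int:
        return len(self._agents)

    def owner_of(self, agent_id: str) -> str:
        return self._agents[agent_id].owner

    def connection_map(self) -> Dict[str, List[str]]:
        # Map each agent to the MCP servers it is known to connect to.
        return {a.agent_id: sorted(a.mcp_servers) for a in self._agents.values()}


if __name__ == "__main__":
    registry = AgentRegistry()
    registry.register(AgentEntry("triage-bot", "alice", ["tickets", "wiki"]))
    registry.register(AgentEntry("deploy-bot", "bob", ["ci"]))
    print(registry.count(), registry.owner_of("deploy-bot"), registry.connection_map())
```

Rejecting an entry with no owner at registration time is one way to enforce the rule that no agent exists without an accountable human.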

Compliance frameworks have not caught up

“If you were to go through an audit today as a chief security officer, the auditor’s probably gonna have to figure out, hey, there are agents here,” Caulfield told VentureBeat. “Which one of your controls is actually supposed to be applied to it? I don’t see the word agents anywhere in your policies.”

McGladrey’s practitioner experience confirms the gap. The Cloud Security Alliance published a NIST AI RMF Agentic Profile in April 2026, proposing autonomy-tier classification and runtime behavioral metrics. But SOC 2, ISO 27001, and PCI DSS have not operationalized agent identities. The compliance frameworks McGladrey works with inside enterprises were written for humans. Agent identities do not appear in any control catalog he has encountered. The gap is a lagging indicator; the risk is not.

Security director action plan

VentureBeat identified five actions from the combined findings of Caulfield, Zaitsev, Maor, McGladrey, and Rees.

- Run an agent census and assume adversaries already did. Every agent, every MCP server those agents touch, every human accountable. Maor’s Censys data confirms agent infrastructure is already visible from the public internet. NIST’s NCCoE reached the same conclusion in its February 2026 concept paper on AI agent identity and authorization.

- Stop cloning human accounts for agents. McGladrey found that enterprises default to copying human user profiles, and permission sprawl starts on day one. Agents need to be a distinct identity type with scope limits that reflect what they actually do.

- Audit every MCP and API access path. Five vendors shipped MCP gateways at RSAC 2026. The capability exists. What matters is whether agents route through one or connect directly to tools with no action-level inspection.

- Fix logging so it distinguishes agents from humans. Zaitsev’s process-tree method reveals that agent-initiated actions are invisible in most default configurations. Rees found authorization planes so flat that access logs alone miss the actual behavior. Logging has to capture what agents did, not just what they were allowed to reach.

- Build the compliance case before the auditor shows up. The CSA published a NIST AI RMF Agentic Profile proposing agent governance extensions. Most audit catalogs have not caught up. Caulfield told VentureBeat that auditors will see agents in production and find no controls mapped to them. The documentation needs to exist before that conversation starts.

Tech

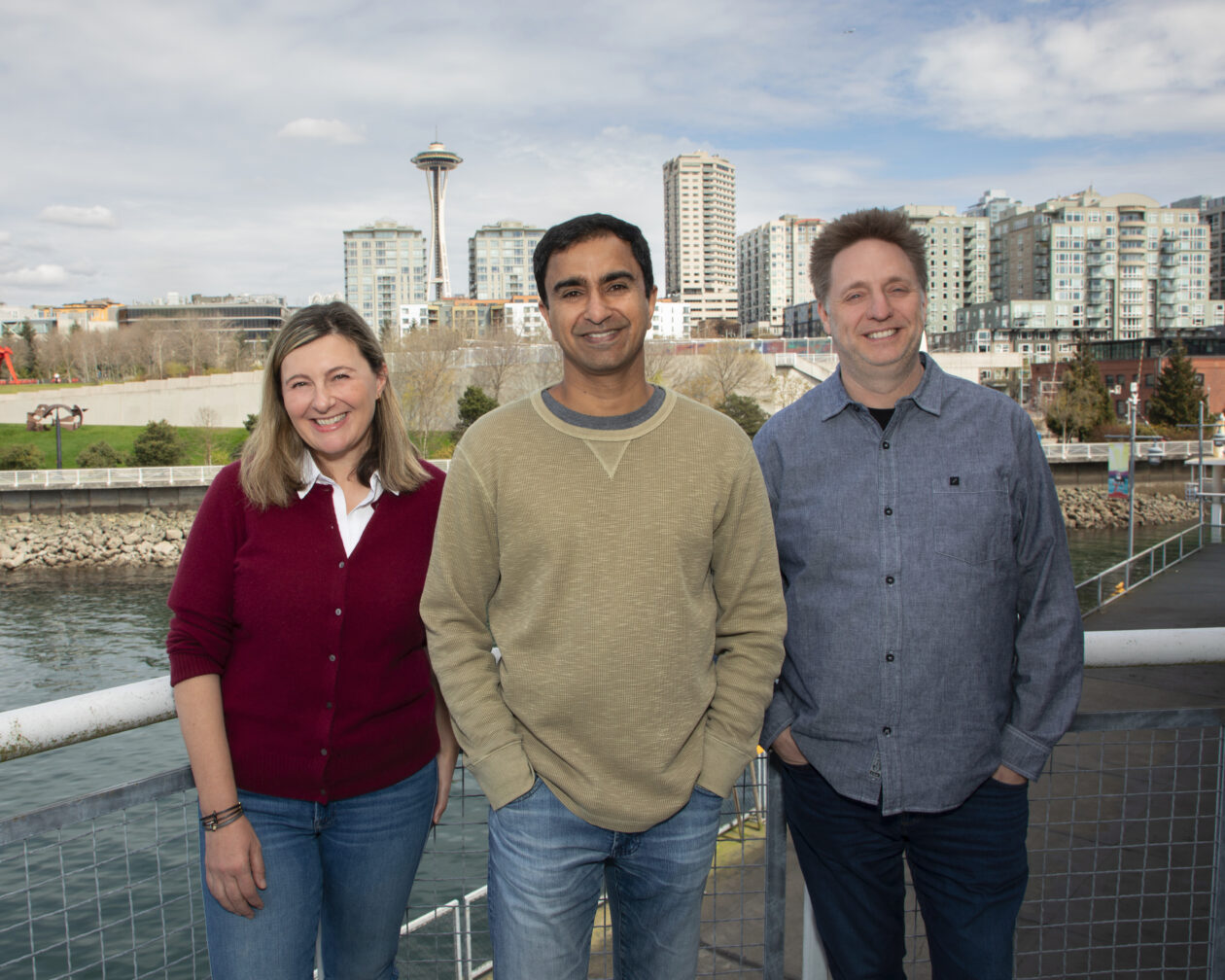

Early Amazon engineer and serial founders raise $15M to keep AI agents in the loop

SageOx, a Seattle startup building tools for teams where humans and AI coding agents work side by side, has announced $15 million in seed funding. The company launched in January.

The round was led by Canaan Partners, with participation from A.Capital, Pioneer Square Labs and Founders’ Co-op.

SageOx joins a crowded field of companies working to make AI tools more effective in collaborative settings, in this case creating what is essentially institutional knowledge for agentic partners. Its platform captures information from conversations, chats and coding sessions, building a hivemind that is passed along to new AI agents — helping them stay aligned with a project as it evolves.

“As teams begin operating at multiples of their traditional speed, in some cases 20x to 40x faster, their existing processes break down, and the ability to share decisions, intent, and history across humans and agents becomes critical infrastructure,” said Ajit Banerjee, founder and CEO of SageOx, in a statement.

The company said the funding will be used for product development and “a small number of key hires” — work it plans to carry out, true to form, with the help of AI agents.

SageOx was founded by a trio of serial entrepreneurs and tech veterans:

- Banerjee previously founded three startups and held engineering leadership roles at Amazon, Facebook and Apple.

- Chief Product Officer Milkana Brace founded Jargon, which was acquired by Remitly, and was a technology lead at Expedia.

- Chief Technology Officer Ryan Snodgrass was one of Amazon’s first engineers and spent 15 years with the tech giant.

The fourth team member, Galex Yen, joined from Thunk.AI and has worked as an engineer at Apple, Remitly and Microsoft.

Competitors in the space include large companies and fellow startups such as OpenAI Codex, Anthropic Claude Code, Cursor, GitHub Copilot, Windsurf, Blocks, Factory, Tembo, and 20x.

The SageOx platform is already in use by early customers and design partners, and is drawing positive feedback.

“As an in-person team, a lot of our best decisions happen in conversation,” said Marius Ciocirlan, CEO at Mark OS. “Before SageOx, our agents weren’t part of that; they felt remote. We had to constantly recap decisions, and things would get lost. Now SageOx keeps them in the loop automatically.”

Tech

EU-backed Kembara’s first big bet is $160m Quantum Motion round


Mundi Ventures’ EU-backed Kembara fund has made its first major investment by co-leading a $160m round with DCVC in the UK’s Quantum Motion.

The UK-based Quantum Motion specialises in silicon transistor-based quantum computing, and said it will use the Series C investment “to commercialise its scalable and energy-efficient approach to quantum computing” and to help deliver “utility-scale and commercially viable quantum computers that fit inside existing standard data centres and racks”.

Since its last funding round in 2023, the company has expanded internationally, with new offices and labs in Spain and Australia, and has deepened its manufacturing partnership with GlobalFoundries as part of its bid to tie directly into commercial semiconductor supply chains, it said.

“Quantum computing will only achieve its full potential if it can be built on a platform that scales, and we believe silicon is the strongest route to achieving that,” said Dr James Palles-Dimmock, CEO of Quantum Motion. “We are pleased to be joined by investors who share our vision and understand what it takes to build a foundational company in this field.”

Yann de Vries, partner and co-founder of Kembara, said: “If you believe quantum computing is going to be world-changing, as we do, then the obvious next question is which of the many ways of building one will actually work at scale? This investment signals our strong belief in where the answer lies.”

With an ultimate target of €1bn, Spain’s Mundi Ventures closed on €750m in February for its Kembara fund for deep tech and climate start-ups. The fund aims to address the scaling gap in European deep tech funding with its focus on Series B and C funding of €15m-€40m, and beyond, for European companies.

“Quantum is critical infrastructure for the next century of computing, AI and security, and leadership will go to whoever can industrialise it,” said Dr Prineha Narang, operating partner at DCVC. “DCVC led this investment in Quantum Motion because silicon is the foundation that scales, and this team is building on the CMOS advantage to turn quantum from a demonstration into a commercial success story.”

According to the Kembara team, Europe produces 28pc of global deep tech innovation, but only 3pc of European deep tech companies successfully raise Series B or C rounds. It is that very gap that the Kembara fund is hoping to bridge using “€1bn dedicated to backing Europe’s deep tech champions at the exact moment when technology is proven and global scale becomes possible”.

“Quantum Motion’s unique approach that combines cutting-edge quantum physics with established silicon manufacturing provides a distinct global edge,” said Charlotte Lawrence, managing director of direct equity at the British Business Bank, a new investor in the company with this round. “We are no longer just theorising about quantum computing but are actively starting to build the platforms to deliver it here in the UK.”

The European Investment Fund (EIF) is a lead backer of Kembara, announcing in July last year that it would invest €350m in Kembara Fund 1. At the time, the EIF said it was the experience of the Kembara management team and its “differentiated strategy” that were key to offering its support.


Tech

Wedbush sets AAPL price target to $400 on May 8, 2026

Financial analysts at Wedbush have consistently been bullish about Apple, but the firm has now raised its price target by a whopping $50 to $400, its largest increase in at least the last five years.

Back in April 2025 when Trump’s tariffs first struck, Wedbush did cut its Apple price target from $325 down to $250, but that was a rare drop. Now it’s made a similarly large increase, taking its price target from the $350 it set in December 2025 to $400.

This is the highest price target ever set for Apple by any investment firm, and in a note to investors seen by AppleInsider, the company’s analysts attribute it very much to AI. They believe that what Apple will reveal at WWDC 2026 in June will lead to around a fifth of the entire world’s population using AI via Apple devices over the next few years.

Key to this belief is the recent news that Apple’s forthcoming iOS 27 will give users the option of multiple different AI models. Wedbush also expects that ultimately hundreds of AI-based apps will take advantage of improvements to Apple Intelligence.

While the $50 target increase is the largest Wedbush has made since at least 2021, the firm has consistently predicted that 2026 will be a significant year for Apple Intelligence. It has also previously predicted that Apple will charge for some AI features, and its latest report doubles down on that.

Wedbush expects that over the next few years, Apple will monetize its AI services. Including its partnership in China with Alibaba, Wedbush predicts that Apple’s AI monetization could bring it $15 billion annually.

The investor note says little further about China, other than that Apple’s AI partnership is part of its aim to greatly increase its user base in the country. In Apple’s latest earnings call, Tim Cook called out the fact that he was “thrilled” with how the company has seen “strong double-digit growth in Greater China.”

In that same call, Cook also stressed that with Apple Intelligence, this “is not AI as a standalone feature, but AI as an essential, intuitive part of the experience across our devices.” That fits with Wedbush’s belief that Apple will become what it calls the consumer hub of AI.

CEO handover and WWDC

These expectations around AI, though, are not Wedbush’s only reasons to increase its price target. The firm’s analysts also expect the incoming CEO John Ternus to have an impact on Apple’s hardware moves.

Wedbush believes that WWDC 2026 will be particularly significant, but is already describing this as a golden age for Apple.

Most analysts have been positive about Apple following its recent earnings announcement, but Wedbush appears to be the first to raise its target price.

WWDC 2026 takes place in the week of June 8 to June 12, with the spotlight as ever being on the opening keynote speech.

Tech

Cloudflare cuts 1,100 jobs in agentic AI pivot despite beating Q1 2026 earnings as stock falls 24%

Cloudflare beat Q1 earnings estimates, cut 1,100 employees because AI agents now do their work, and saw its stock fall 24 per cent. It is the most explicit case of a company attributing layoffs directly to AI replacing human roles.

TL;DR

Cloudflare beat Wall Street’s revenue and earnings estimates on Wednesday, announced it would cut 1,100 employees because artificial intelligence agents now do their work, and watched its stock fall 24 per cent on Thursday. The sequence is becoming the template for the technology industry in 2026: record revenue, record layoffs, record doubt about what comes next.

First-quarter revenue was 639.8 million dollars, up 34 per cent year over year, beating the consensus estimate of 622 million. Adjusted earnings per share were 25 cents against expectations of 23 cents. Free cash flow was 84.1 million dollars. The company added a record number of customers paying more than five million dollars per year and saw a 73 per cent year-over-year increase in deals worth more than a million. By every traditional metric, the quarter was strong.

Then the company said it was eliminating one in five jobs.

The model

CEO Matthew Prince and co-founder Michelle Zatlyn announced in a blog post that Cloudflare is transitioning to what they called an “agentic AI-first operating model.” The company said its internal use of AI had increased more than 600 per cent in three months. Staff across engineering, human resources, finance, and marketing are running thousands of AI agent sessions per day. The framing was not that AI would assist employees. It was that AI has made certain categories of employee unnecessary.

Prince was specific about which roles are disappearing. “A lot of the support roles” behind customer-facing and engineering staff “are not going to be the roles that drive companies going forward,” he said. The language distinguished between people who build the product, people who sell the product, and people who support the people who build and sell the product. The third group is being replaced.

GitHub froze new Copilot sign-ups last month because agentic AI workflows were generating costs that exceeded what users paid, a signal that the economics of AI tools are not yet stable. Cloudflare’s bet is that the instability is temporary and that the productivity gains from running thousands of AI agent sessions per day will more than offset the cost of eliminating 1,100 human roles and paying 140 to 150 million dollars in restructuring charges.

The numbers

The financial results that accompanied the layoff announcement were not the results of a company in distress. Cloudflare now has 4,416 customers paying more than 100,000 dollars per year. It estimates that approximately 80 per cent of leading AI companies use its products. Its Workers developer platform, which runs code at the edge of Cloudflare’s network across data centres in 330 cities, is positioned as infrastructure for the AI agent economy the company says is replacing the roles it just eliminated.

Full-year revenue guidance was 2.805 to 2.813 billion dollars, narrowly beating the consensus estimate of 2.8 billion. Full-year adjusted earnings guidance was 1.19 to 1.20 dollars per share, ahead of the 1.14 expected. Prince called AI “the biggest tailwind we’ve ever seen in Cloudflare’s history” and said the re-platforming of the internet around AI agents represents the company’s largest growth opportunity.

The stock market disagreed, or at least disagreed with the timing. Shares fell 24 per cent on Thursday, erasing billions in market capitalisation. The sell-off reflected not the earnings, which were strong, but the uncertainty about whether a company that just fired 20 per cent of its workforce can execute a business model transition while maintaining the growth trajectory investors had priced in.

The cuts

The 1,100 affected employees will receive base pay through the end of 2026, continued healthcare coverage through year end in the United States, and equity vesting extended to 15 August 2026. The restructuring is expected to be substantially complete by the end of the third quarter. Prince said, “Today is a hard day.”

The company’s headcount will fall from approximately 5,156 to around 4,000. The restructuring charges of 140 to 150 million dollars break down to 105 to 110 million in cash severance and benefits and 35 to 40 million in non-cash equity-related expenses. Cloudflare expects to reinvest the projected savings into the AI infrastructure and hiring it says will drive the next phase of growth.
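For readers who want to check the arithmetic, the figures quoted above can be worked through in a few lines of Python. This is only a sketch: the variable names are illustrative, and the per-employee number assumes the cash severance is spread evenly across all 1,100 affected staff, which the company has not said.

```python
# Figures as quoted in the article; names are illustrative.
headcount_before = 5_156
jobs_cut = 1_100

headcount_after = headcount_before - jobs_cut
share_cut = jobs_cut / headcount_before

# Restructuring charge components in millions of dollars, as (low, high).
cash_severance = (105, 110)
non_cash_equity = (35, 40)
total_charge = (
    cash_severance[0] + non_cash_equity[0],
    cash_severance[1] + non_cash_equity[1],
)

# Assumption: cash severance divided evenly across affected employees.
cash_per_head = tuple(m * 1_000_000 / jobs_cut for m in cash_severance)

print(f"Headcount after cuts: {headcount_after}")
print(f"Share of workforce cut: {share_cut:.1%}")
print(f"Total charge: {total_charge[0]} to {total_charge[1]} million dollars")
print(f"Cash per affected employee: {cash_per_head[0]:,.0f} to {cash_per_head[1]:,.0f} dollars")
```

The results line up with the reporting: a headcount just over 4,000, a cut of roughly one in five, and components that sum to the stated 140 to 150 million dollar range.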

Cloudflare framed the cuts as structural rather than cyclical. This is not a company reducing headcount because revenue is declining. Revenue grew 34 per cent. This is a company reducing headcount because it believes the work those employees performed is now performed by software. The distinction matters because it implies the jobs are not coming back.

The pattern

Meta and Microsoft between them cut 23,000 jobs while simultaneously increasing AI spending by tens of billions of dollars. Oracle eliminated up to 30,000 positions to fund AI data centres. Atlassian cut 1,600 in the name of AI adaptation. The tech sector has recorded more than 73,000 job cuts across 95 companies in the first four months of 2026, with projections that the full-year total will exceed the 124,000 eliminated in all of 2025.

Cloudflare’s announcement is notable because it was the most explicit in attributing the layoffs to AI replacing human work rather than AI requiring capital reallocation. Meta’s Zuckerberg said the layoffs were about freeing capital for AI infrastructure. Cloudflare’s Prince said the layoffs were about AI doing the work. The difference is between “we need your salary to buy GPUs” and “we no longer need you because the GPUs are doing your job.”

The human cost of the AI layoff wave is accumulating at a pace that the industry’s growth metrics do not capture. Google is turning Chrome into an agentic AI workplace tool, embedding AI capabilities directly into the browser that millions of knowledge workers use daily. ServiceNow projects 30 billion dollars in revenue by 2030, with a third of that coming from AI. The companies building the AI tools and the companies deploying them are both growing. The people whose work the tools replicate are not.

The tension

Cloudflare’s position is coherent but uncomfortable. The company reported its strongest quarter, told investors the AI opportunity is transformational, cut a fifth of its workforce to pursue it, and then saw a quarter of its market value disappear because investors were not sure the transformation would work. The 600 per cent increase in AI usage over three months is either evidence that the transition is already delivering results or evidence that the company is moving faster than it can manage.

Prince’s statement that certain support roles “are not going to be the roles that drive companies going forward” is a prediction about the entire industry, not just Cloudflare. If he is right, the 1,100 people losing their jobs at Cloudflare are early casualties of a restructuring that will reach every technology company and eventually every company that employs people to do work that AI agents can approximate. If he is wrong, Cloudflare just fired 20 per cent of its workforce during its best quarter, and the 24 per cent stock drop is the market’s way of saying so.

The earnings beat. The layoffs are real. The stock is down. The AI agents are running. The question Cloudflare cannot yet answer, and that no company in its position has answered, is whether a 600 per cent increase in AI usage produces a proportional increase in the value of the work, or whether it produces a proportional increase in the confidence that the work is being done without anyone checking whether it is being done well.

Tech

InMusic Will Acquire Native Instruments, Putting It Under The Same Umbrella As Akai

The music gear super-company inMusic is purchasing Native Instruments. This is a big deal for a number of reasons. First and foremost, the acquisition puts Native Instruments under the same umbrella as long-time hardware and software rival Akai. The US-based inMusic also owns Moog, M-Audio, Denon, Numark and several other high-profile brands.

Native Instruments owns several popular digital brands like Plugin Alliance, iZotope and Brainworx, all of which will now be run by inMusic. The acquisition also puts an end to the Native Instruments bankruptcy saga, which had left its future uncertain. The company will continue on, which is encouraging for those tied to NI’s ecosystem of products. This does, however, create a massive juggernaut in the industry.

The deal isn’t exactly surprising. Someone had to buy Native Instruments and inMusic had already partnered up with the company to bring some of its plugins to Akai devices. The acquisition will likely lead to more NI software popping up on stuff like the Akai MPC XL. Native Instruments makes some of the most respected software in the industry, as synths like Reaktor and Massive are regularly used in music across multiple genres.

There are some questions regarding hardware. Akai makes multiple standalone grooveboxes. We aren’t sure where Native Instruments’ standalone Maschine+ fits in there, if anywhere. There will also be some major product overlap in the world of MIDI controllers. Akai and Native Instruments both make popular controllers and the same goes for other inMusic-owned brands like M-Audio.

Native Instruments CEO Nick Williams wrote in a blog post that the business will continue to operate normally as the transaction completes in the coming weeks. The company did just launch Komplete 26, the latest iteration of its music production bundle. This includes over 190 digital instruments with 180,000 presets. The latest release features a new version of the popular Absynth synthesizer, along with updated pianos, vocal soundscapes and more.

Tech

Getting A Proprietary-Bus GPU Onto PCIe Enables Cheaper Local LLMs, For Now

If you’ve been thinking of getting into self-hosting generative AI, but don’t have a big budget for hardware, you might want to check out [Hardware Haven]’s latest video on an unusually cheap GPU option — but you’ll have to do so quickly, before the market realizes the chance for arbitrage and prices rise accordingly.

He’s gotten a hold of a 16 GB NVidia V100 card for only about a hundred bucks, mostly because it’s not easy to plug in, being on an SXM2 socket rather than the PCIe bus. SXM is a server architecture, and not something you’re likely to get on your motherboard. Another hundred got him an adapter board to fit this enterprise GPU on a consumer motherboard. That’s still a lot less than the PCIe version of the same card, which will likely set you back a thousand or more unless you get very lucky on eBay.

It’s not the newest card, dating back to 2017, but that doesn’t mean it can’t run the latest open models. After 3D printing a fan shroud for the thing so it didn’t cook itself, adding very slightly to the build cost, [Hardware Haven] set to work seeing what it could do. Going head-to-head against an RTX 3060 12 GB, the older V100 delivered more tokens per second at a slightly higher efficiency, but with much higher idle power.

Still, it’s nice to see a cheap way to get into local AI, even if it might not still be cheap by the time you read this. Once you have the hardware, you might want some easy software options so you don’t have to spend all day on setup. Of course you only need a hefty GPU to run larger models — you can get into hosting your own AI on a Raspberry Pi, if you’re patient.

Tech

Glycol vapors could stop respiratory pandemics

It’s hard to imagine modern life without glycols. They are used in cosmetics, fog machines, and food. As you read this, you’re almost certainly wearing or drinking from something they were used to produce — polyester fabric or plastic bottles, for example. If you brush your teeth with toothpaste or top your salad with bottled dressing, you’ve come into contact with these manmade chemical compounds.

Manufactured at industrial scales from crude oil and natural gas, glycols are a common antifreeze ingredient. They are also useful for refrigeration, allowing cooling systems to maintain colder temperatures than water alone allows.

But there’s something more they could do for us: When glycols are vaporized into indoor air, they rapidly inactivate viruses, bacteria, and fungal spores — even while the glycol vapors remain at low enough concentrations to be invisible, odorless, and tasteless. It’s a property that could reduce the spread of the seasonal flu, and maybe even help stop airborne pandemics before they begin. We’ve known about their disease-fighting properties for almost a century, and new research might allow us to deploy them at scale soon.

Chemically speaking, glycols are organic compounds that belong to the alcohol family. Propylene glycol (PG), dipropylene glycol (DPG), and triethylene glycol (TEG) vapors specifically seem safe for humans to breathe. TEG vapors in particular would be cheap to deploy — costing only about 10 to 50 cents per day to protect a 1,000-square-foot room. While it’s not exactly clear how they combat pathogens, they’ve been shown to inactivate both air- and surface-borne viruses and prevent respiratory disease transmission. According to Curtis Donskey, an infectious disease physician and researcher at the Cleveland VA Medical Center, glycol vapors are particularly effective against enveloped viruses — think SARS-CoV-2, influenza, and Ebola.

There’s a body of evidence supporting their use for infection prevention dating back to the mid-20th century. One study conducted over three winters between 1941 and 1944 in a pediatric hospital demonstrated a 96 percent reduction in colds in wards that were disinfected with glycol vapors, compared to those that weren’t. Patients in the glycol-treated wards also had 90 percent fewer total cases of tracheobronchitis, middle ear infections, and acute pharyngitis than the controls.

That research is many decades old, of course, and even similar studies would employ different methodologies today. “Different times [mean] different research standards,” Jacob Swett, the executive director and founder of Blueprint Biosecurity, a nonprofit focused on pandemic prevention, told me. “But I think this shows where the potential could be.”

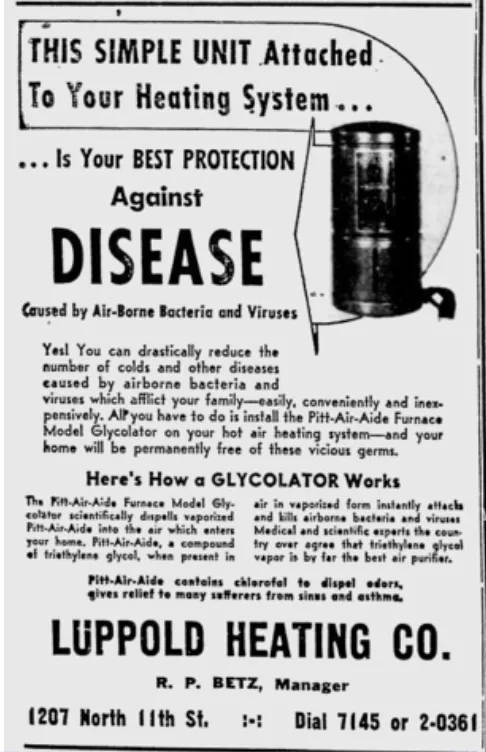

People in the mid-20th century saw a market opportunity in glycol vapors’ ability to reduce disease transmission. Newspaper advertisements touted “glycolators” and “glycolizers” to protect homes and office spaces.

Interest in glycol vapors for disinfection peaked in the 1940s, falling off with the advent of widely available antibiotics. There was a spike in peer-reviewed papers on glycols in the 1980s, mostly focused on their use in cooling systems and antifreeze agents as disinfectants, but broader interest remained minimal.

The Covid-19 pandemic brought renewed interest in glycol vapors’ antimicrobial properties, and the Environmental Protection Agency (EPA) issued an emergency approval in six states for a TEG-based product series to disinfect occupied indoor spaces. But scientific research on the subject remained relatively limited. Public health agencies were often skeptical about using glycol vapors for disinfection during the Covid-19 pandemic, when the safety profiles of other mitigation measures were better understood. Public health bodies were working with the information they had — some of which turned out to be outright incorrect, like the insistence that SARS-CoV-2 was spread only by droplets rather than being airborne. Hence the stronger focus, especially in the early phases of the pandemic, on measures such as social distancing, practices that are less effective for diseases in which pathogens travel farther and remain suspended in the air.

But even if early speculation about droplet-based transmission of Covid had been correct, there would have been plenty of other good reasons to take the pathogen-negating properties of glycol vapors seriously. “Whether it’s tuberculosis, SARS-CoV-2, the seasonal flu [that] threatens us every year or the next pandemic, which is likely to be airborne, having this evidence around glycol vapors will put us in a much better position to be able to make informed decisions about countermeasures,” Swett told me. With that possibility in mind, Blueprint awarded $4.5 million in grants to the recipients of its Glycol Vapors for Infection Suppression: Efficacy and Safety Research (GlycolISER) program in March.

The grantees will study how glycol vapors inactivate pathogens, their effectiveness during emergency deployment, real-world efficacy in healthcare settings, and how the vapors interact with air filter media. The researchers will also study glycol vapors’ safety profile, especially with potentially sensitive populations, such as people with asthma.

“Being ready to fight the next pandemic means we need to robustly evaluate a wide range of possible interventions,” Brian Renda, a program director at Blueprint Biosecurity, said in a press release. “Through this program, we’re supporting multidisciplinary research to better understand the potential and limitations of glycol vapors as a tool to reduce airborne disease transmission.”

Initial findings are expected by early to mid-2027. “We want to know more about how well it works and we want to make sure that it doesn’t have unintended consequences,” Delphine Farmer, a professor of atmospheric chemistry at Colorado State University who is one of the grantees, told me. Her research will examine how best to get glycol vapors into the air in a quick and affordable way, and how much would come out in gases and particles once vaporized, since those factors impact how effective they are at removing and destroying different microbes that might be in the air. “We want to make sure that if people start adding glycol vapors to air, that this doesn’t cause unknown or new chemistry that might negatively impact people. So the third aspect of what we’re doing is to look at polyethylene glycol chemistry and see if it’s going to produce anything or react with surfaces in ways we should be concerned about.”

Donskey is another of the grantees, and his project has multiple aims. Using a commercially available glycol-based product currently approved for use in unoccupied spaces and for control of mold and mildew, his work will assess whether glycol vapors can reduce the concentration of pathogens in various healthcare settings with different degrees of ventilation. His research will also, among other things, examine whether glycol vapors can reduce airborne pathogen dispersal in medical procedure rooms. The researchers will start testing in unoccupied rooms and transition to populated spaces after the products receive EPA registration for use in occupied spaces.

As Swett suggests, the next pandemic will very, very likely come at us through the air, but there are already numerous other illnesses circulating that we could prevent before they take root. The potential benefits — for reducing work and school absenteeism, healthcare costs, and avoidable suffering — are enormous.

Why we need a multilayered arsenal against airborne disease

If the Covid-19 pandemic taught us anything, however, it’s that airborne disease is still a threat as long as we breathe. But six years on, it’s not clear if we took that lesson to heart. “It seems like we didn’t really learn much from Covid-19 and [as a society] are actively ignoring the ongoing effects,” Miles Griffis, the co-founder of The Sick Times, a publication covering long Covid, told me. “I think we could be in a much better place than we are now.”

A study from 2024 found that 400 million people around the world have had long Covid — almost certainly an undercount. In the next 10 years, long Covid could cost health systems $11 billion annually. Up to 35 percent of people infected by Covid-19 develop lingering symptoms that can be profoundly disabling.

And Covid is not the only disease you can catch through the air, nor is it the only one that can have dire consequences. Influenza costs the US almost $29 billion in a single season from healthcare costs and lost productivity, and it kills up to 650,000 people worldwide every year. Childcare centers, schools, and workplaces would be significantly safer and more productive with better ways to prevent the spread of airborne illness.

It’s impossible to say how many cold or flu or Covid-19 infections glycol vapors could prevent. But, like other technologies such as germicidal ultraviolet light, they are notable in part because they don’t require people to “opt in” the way donning a mask does — their distribution mechanisms could be built into the environment itself. William and Mildred Wells, a husband-and-wife duo, were thinking along these lines in the 1930s, advocating for governments to install germicidal ultraviolet lights in public places to protect everyone from airborne pathogens. The Wellses saw that people were developing ways to purify water, pasteurize milk, and ensure food wasn’t contaminated, and asked “‘What about the air? Don’t we deserve pure air as well?’” Carl Zimmer, a science columnist for the New York Times and the author of Air-Borne: The Hidden History of the Life We Breathe, told me.

Blueprint Biosecurity thinks so, and is also advancing work on far-UVC light and better personal protective equipment to protect against airborne pathogens. Better ventilation and filtration could, of course, also improve indoor air quality, which would significantly reduce respiratory disease transmission.

“In many ways, the kind of changes to buildings today [compared to the 1940s and ’50s when earlier studies were done] potentially make them more amenable to glycol vapors where you have centralized HVAC systems,” Swett told me. “[And] depending on how the evidence comes back, there’s a number of environments where you could imagine deploying them.”

“I think we’ve got a little bit of testing to go before we know how well they work,” Farmer said. “As an atmospheric chemist, I always think about clean air…as the absence of any pollutant. So the moment I hear about adding anything to air, I have some notes of caution. But on the flip side, we do add things to our indoor air all the time, so it doesn’t necessarily mean it’s a dealbreaker.”

No matter how safe and effective glycol vapors prove to be, there’s likely to be resistance of one kind or another. People will be wary of adding substances to the air, and of entering spaces where they don’t know if glycol vapors will be used. But Donskey doesn’t anticipate that this will be a major issue: “If a product has an EPA registration indicating that they believe it’s safe for use in occupied areas, I think most people will be comfortable. There may be some people who are less comfortable, but again, I think it’ll go through more safety evaluations.”

Once a regulatory agency says this is safe to use in occupied areas, the rest will follow. We would still need commercial products to disseminate them; people can’t just put glycol vapors into their home humidifier. “But if it’s EPA-registered, relatively inexpensive, easy to use, and doesn’t involve a lot of labor, I could easily envision a lot of healthcare facilities taking this up,” Donskey said.

There are many potential use cases for glycol vapors, and “we definitely need some good strategies that allow for safe indoor environments,” Farmer told me. After all, we spend about 90 percent of our time indoors, and we always have to breathe.

Tech

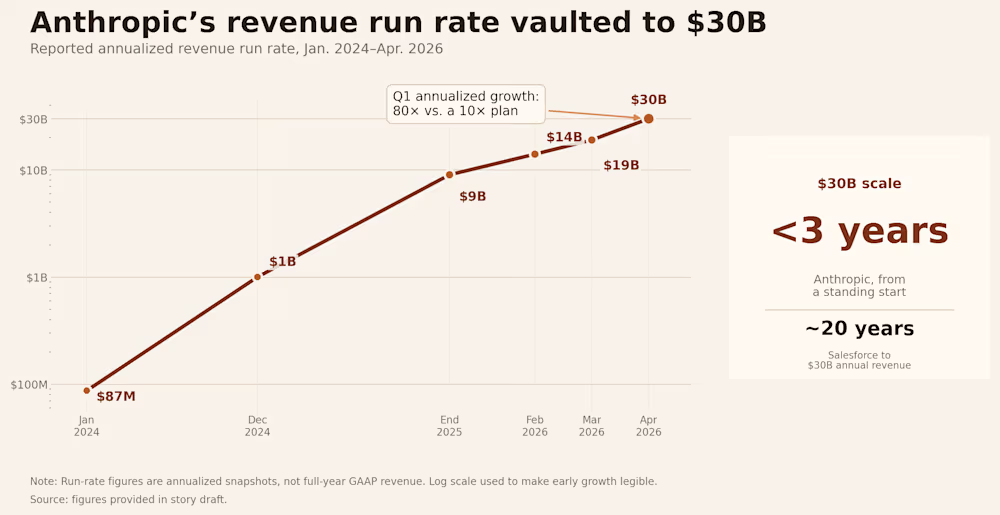

Anthropic says it hit a $30 billion revenue run rate after ‘crazy’ 80x growth

Dario Amodei is not the kind of CEO who talks loosely about numbers. The Anthropic co-founder and chief executive, a former VP of research at OpenAI with a PhD in computational neuroscience from Princeton, has built a reputation for measured public statements — particularly around the financial performance of a company that, until recently, disclosed almost nothing about its business.

So when Amodei took the stage at Anthropic’s Code with Claude developer conference on Wednesday and offered a genuinely striking piece of financial candor, the room paid attention.

“We tried to plan very well for a world of 10x growth per year,” Amodei said during a fireside chat with Anthropic’s chief product officer, Ami Vora. “And yet we saw 80x. And so that is the reason we have had difficulties with compute.”

Anthropic had planned for tenfold growth. But revenue and usage increased 80-fold in the first quarter on an annualized basis, a rate Amodei described as “just crazy” and “too hard to handle.”

The number demands context. Annualized growth rates can overstate sustained performance — a single strong quarter, extrapolated across a full year, can paint a picture that doesn’t hold. Amodei knows this. But the underlying trajectory is not a mirage. Anthropic has crossed a $30 billion annualized revenue run rate, up sharply from roughly $9 billion at the end of 2025, and that growth is being driven largely by enterprise demand. The company’s revenue trajectory has been relentless: $87 million run rate in January 2024, $1 billion by December 2024, $9 billion by end of 2025, $14 billion in February 2026, $19 billion in March, and $30 billion in April.
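To see how annualizing inflates a short burst of growth, the compounding can be sketched in Python using the run-rate figures above. This is an illustration of the mechanic only. It does not attempt to reproduce Amodei's 80x figure, which covers both revenue and usage and whose exact basis the company has not spelled out. The function name is hypothetical, and treating "end of 2025" to April as four months is an assumption.

```python
def annualized_multiple(start, end, months):
    """Growth multiple if the start-to-end pace compounded for 12 months."""
    return (end / start) ** (12 / months)

# Run-rate figures from the article, in billions of dollars.
run_rate = {"2025-12": 9, "2026-02": 14, "2026-03": 19, "2026-04": 30}

# Assumption: end of 2025 to April 2026 is treated as four months.
plain = run_rate["2026-04"] / run_rate["2025-12"]
annualized = annualized_multiple(run_rate["2025-12"], run_rate["2026-04"], months=4)

print(f"Dec to Apr multiple: {plain:.2f}x")
print(f"Same pace compounded over 12 months: {annualized:.1f}x")
```

A little more than a tripling over four months becomes a far larger multiple once it is extrapolated across a full year, which is why a single strong stretch can paint a picture that doesn't hold.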

For context: Salesforce took about 20 years to reach $30 billion in annual revenue. Anthropic did it in under three years from a standing start.

Claude Code became the fastest-growing product in enterprise software history

The growth story at Anthropic is, to a remarkable degree, a single-product story. Claude Code, the company’s agentic AI coding tool launched publicly in mid-2025, has become the fastest-growing product in the company’s history — and, by several measures, one of the fastest-growing software products ever built.

Claude Code hit $1 billion in annualized revenue within six months of launch, and the growth hasn’t slowed down. By February 2026, the product was generating over $2.5 billion in run-rate revenue. The company also said Claude Code’s weekly active users had doubled since January 1 and that business subscriptions had quadrupled since the start of 2026.

The mechanics of the product are straightforward. Claude Code is not a chatbot that suggests snippets. It reads a codebase, plans a sequence of actions, executes them using real development tools, evaluates the result, and adjusts its approach. The developer sets the objective and retains control over what gets committed, but the execution loop runs independently. The average developer using Claude Code now spends 20 hours per week working with the tool.

At Anthropic itself, the majority of code is now written by Claude Code. Engineers focus on architecture, product thinking, and continuous orchestration: managing multiple agents in parallel, giving direction, and making the decisions that shape what gets built.

That last point may be the most revealing detail Amodei disclosed at the conference: this is the first year Anthropic’s own internal pull requests have inflected upward due to Claude’s work on the company’s own codebase. The tool that Anthropic sells to developers is now a material contributor to Anthropic’s own engineering output. That creates a feedback loop that is almost impossible for competitors without a comparable product to replicate — the company is using its own product to build the next version of its own product.

The enterprise numbers tell the same story. The company now counts over 1,000 enterprise customers spending more than $1 million per year on Claude services, a figure that has doubled since February. Much of this increase has been fueled by a wave of corporate customers including Uber and Netflix.

Amodei framed the adoption curve in economic terms. “Software engineers are the ones who are fastest to adopt new technology,” he said on stage. “It’s a foreshadowing of how things are going to work across the economy, and how the economy is going to be transformed by AI.”

Anthropic’s 80x growth created a compute crisis it couldn’t solve alone

Hypergrowth creates its own category of problem. When demand outstrips supply by an order of magnitude, the constraint is not go-to-market strategy or product-market fit. The constraint is physics.

The company is growing so fast that its infrastructure has struggled to keep up, forcing Anthropic into what may be the most unexpected partnership in the current AI cycle. Amodei’s comments came hours after Anthropic announced a deal with Elon Musk’s SpaceX to use all of the compute capacity at his company’s Colossus 1 data center in Memphis, Tennessee. As part of the agreement, Anthropic will get access to more than 300 megawatts of capacity — over 220,000 Nvidia GPUs, including dense deployments of H100, H200, and next-generation GB200 accelerators.

The deal is remarkable for several reasons. Musk has been, until very recently, one of Anthropic’s most vocal critics. He has said Anthropic is “doomed to become the opposite of its name” and wrote in February that “Anthropic hates Western Civilization.” But on Wednesday, Musk changed his tune, saying he spent a lot of time with senior members of the Anthropic team over the past week and that he was “impressed.” “Everyone I met was highly competent and cared a great deal about doing the right thing. No one set off my evil detector,” Musk wrote.

The strategic logic on both sides is clear. xAI’s Colossus 1 ended up with capacity that Grok’s user base never grew into, while Anthropic needs compute immediately. Anthropic has been signing deals with Amazon, Google, Nvidia, and Microsoft for more compute capacity, but most of that isn’t expected to come online until late 2026 or early 2027. The SpaceX deal gives Anthropic a significant boost now — the key word being “now.”

As one industry watcher summarized the alignment: “Elon’s enemy is Sam. Dario’s enemy is Sam. Enemy of my enemy is a compute partner.”

Last month, Anthropic said demand for Claude has led to “inevitable strain on our infrastructure,” which has impacted “reliability and performance” for its users, particularly during peak hours. The company admitted in a postmortem from late April that three bugs had affected Claude Code since March 4, and that internal tests hadn’t caught them, leading to several weeks of degraded performance. Amodei said at the Code with Claude conference that the company is “working as quickly as possible to provide more” capacity and will “pass that compute on to you as soon as we can.”

A near-trillion-dollar valuation makes Anthropic’s IPO the most anticipated debut in years

The growth figures arrive at a moment when Anthropic’s valuation is itself becoming one of the defining financial stories of the AI era.

Anthropic has begun weighing a fresh funding round that would value the company at more than $900 billion, according to people familiar with the matter, potentially leapfrogging its longtime rival OpenAI as the world’s most valuable AI startup. The velocity of the escalation is difficult to overstate. The valuation has climbed from $61.5 billion in March 2025 to $183 billion at its Series F in September, then to $380 billion in February, and, if the current discussions proceed, to more than $900 billion in May. Anthropic’s shares were already trading at an implied $1 trillion valuation on secondary markets earlier this month.
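To put that escalation in concrete terms, the step-up between each reported valuation can be worked out directly. The short Python sketch below is illustrative arithmetic only: it uses the four figures quoted above, treats the $900 billion figure as a round still under discussion rather than a closed deal, and the names `valuations` and `step_up_multiples` are made up for this example.

```python
# Valuation figures as quoted in the article, in billions of US dollars.
# The last entry is a round reportedly under discussion, not a closed deal.
valuations = [
    ("March 2025", 61.5),
    ("September (Series F)", 183.0),
    ("February", 380.0),
    ("May (under discussion)", 900.0),
]


def step_up_multiples(points):
    """Return (from_label, to_label, multiple) for each consecutive pair."""
    return [
        (a_label, b_label, b_value / a_value)
        for (a_label, a_value), (b_label, b_value) in zip(points, points[1:])
    ]


steps = step_up_multiples(valuations)

# Overall multiple from the first quoted figure to the last.
overall = valuations[-1][1] / valuations[0][1]

for start, end, multiple in steps:
    print(f"{start} -> {end}: {multiple:.2f}x")
print(f"Overall: {overall:.1f}x")
```

On those figures, each round lands at roughly two to three times the one before it, and the proposed round would be about 14.6 times the March 2025 valuation.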

Instead of cashing out, many existing investors are waiting to potentially exit during Anthropic’s anticipated IPO later this year. The company is raising what is likely to be its last private round before going public to fund its massive computing needs. Bloomberg has reported that the company is weighing an IPO as early as October 2026, with Goldman Sachs, JPMorgan, and Morgan Stanley already in early discussions.

Anthropic is also building out infrastructure on longer time horizons. Amazon has agreed to invest up to $25 billion in Anthropic, securing up to 5 gigawatts of compute capacity for training and deploying Claude models. Anthropic also secured 5 gigawatts of computing capacity as part of a separate deal with Google and Broadcom that will start to come online next year. The total commitment is staggering — tens of gigawatts of compute across three separate hardware ecosystems: Amazon’s Trainium chips, Google’s TPUs via Broadcom, and Nvidia GPUs through SpaceX and Microsoft Azure.

For perspective: Anthropic’s $30 billion run rate exceeds the trailing twelve-month revenues of all but approximately 130 S&P 500 companies. A company that was essentially pre-revenue in early 2024 is now on pace to bring in more revenue than most of the Fortune 500.

That comparison comes with caveats. Private-market revenue run rate is not the same thing as audited GAAP revenue, gross margin, free cash flow, or public float. OpenAI has internally argued that Anthropic’s $30 billion figure is overstated by roughly $8 billion, pointing to questions about whether revenues from AWS and Google Cloud should be reported at gross value or net of the partner’s cut. The accounting question will ultimately be resolved when both companies file IPO prospectuses — but even on a net basis, Anthropic’s growth rate is unlike anything in enterprise software history.

Dario Amodei’s vision for AI extends far beyond coding — and he’s given himself a deadline

The financial story — 80x growth, a near-trillion-dollar valuation, a scramble to secure enough GPUs to meet demand — is dramatic on its own terms. But Amodei used his time on stage to place it inside a larger thesis about where AI is headed.

He described a progression from single agents to multiple agents to what he called whole organizational intelligence — from “a team of smart people in a room” to “a country of geniuses in the data center.” The framing is deliberately expansive. What Anthropic is selling today is a coding tool. What Amodei is describing is a future in which entire categories of knowledge work are performed by fleets of AI agents operating in parallel, supervised by humans who define objectives and review outputs.

He reiterated a prediction he made roughly a year ago: that 2026 would see the first billion-dollar company run entirely by a single person. “Hasn’t quite happened yet,” he said. “But we’ve got seven more months.”

The company has also been navigating political headwinds. The Pentagon declared Anthropic a supply chain risk in March, blacklisting it from work with the military. The company has warned the designation could result in billions in lost revenue, with over one hundred enterprise customers reportedly expressing doubts about continuing their relationships.

And yet — as that scuffle makes its way through the legal system, Anthropic is only getting more popular. Amodei said this week he’s eventually hoping for “more normal” expansion.

There is a temptation, when covering a company growing at this rate, to let the numbers speak for themselves. They shouldn’t. Growth at 80x annualized is not a business plan — it’s an emergency. It means demand has outrun infrastructure, that customers want something the company cannot yet reliably deliver at scale, and that every week of constrained capacity is a week during which competitors can close the gap.

The investors funding Anthropic — including SoftBank, Amazon, Nvidia, Google, a16z, Lightspeed, and ICONIQ — are making a specific bet: that compute costs continue to fall per unit of intelligence, that revenue keeps compounding faster than burn, and that whoever owns the AI infrastructure layer in 2029 will generate returns that make the interim losses irrelevant.

Amodei’s candor at Code with Claude was not a victory lap. It was a diagnostic — an admission that his company is running faster than it can steer. He planned for a world of 10x growth and got 80x instead. Now he has seven months to prove that the infrastructure, the organization, and the vision can catch up to the demand. The country of geniuses in the data center is getting crowded. The question is whether anyone remembered to build enough rooms.

-

Crypto World1 day ago

Crypto World1 day agoHarrisX Poll Found 52% of Registered Voters Support the CLARITY Act

-

NewsBeat6 days ago

NewsBeat6 days agoChannel 5 – All Creatures Great and Small series 7 new post

-

Crypto World2 days ago

Crypto World2 days agoUpbit adds B3 Korean won pair as Base token gains Korea access

-

Tech5 days ago

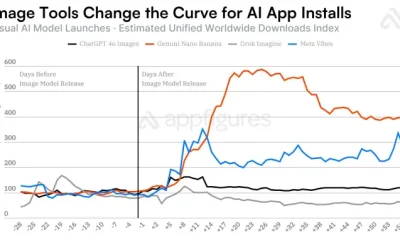

Tech5 days agoImage AI models now drive app growth, beating chatbot upgrades

-

News Videos6 days ago

News Videos6 days agoAP Dhillon – Old Money (Official Audio)

-

NewsBeat2 days ago

NewsBeat2 days agoNCP car park operator enters administration putting 340 UK sites at risk of closure

-

Fashion18 hours ago

Fashion18 hours agoWeekend Open Thread: Marianne Dress

-

Sports6 days ago

Sports6 days agoFive killed in Texas plane crash identified as Amarillo pickleball players

-

Entertainment7 days ago

New Netflix Movies in May 2026 — My Top 3 Picks to Stream

-

Crypto World7 days ago

Crypto World7 days agoPi Network Mandates Protocol 23 Upgrade for All Mainnet Nodes Before May 15 Deadline

-

Entertainment6 days ago

Anna Nicole Smith’s Daughter Attends 2026 Kentucky Derby

-

Crypto World6 days ago

Crypto World6 days agoBitcoin mining equities rise in 2026 as BTC lags behind

-

Entertainment6 days ago

Entertainment6 days agoMelissa Joan Hart and More Stars Attend 2026 Kentucky Derby

-

Entertainment6 days ago

Entertainment6 days agoVenus Williams’ Best Met Gala Looks Over the Years

-

Business6 days ago

Business6 days agoLuka Doncic Injury Update: Doncic’s Hamstring Recovery Slows Lakers’ Hopes Against Thunder: Can He Run Yet?

-

Entertainment6 days ago

“Storage Wars” star Darrell Sheets' ex-wife breaks silence on his death

-

Sports6 days ago

Sports6 days agoIs Man United v Liverpool on TV? Channel, streaming and how to watch Premier League fixture

-

Business7 days ago

Business7 days agoKuwait International Airport Resumes Operations After Closure as Regional Tensions Ease in 2026

-

Entertainment7 days ago

Entertainment7 days agoNetflix’s 10-Part Miniseries Based on a True Story Is a Perfect Weekend Binge

-

Crypto World7 days ago

Crypto World7 days agoQ1 2026 Tech Layoffs AI Wave Hits 81,747 as Firms Shift to AI Infrastructure

You must be logged in to post a comment Login