Muse Spark is part of a ‘ground-up overhaul’ of Meta’s AI efforts, the company said.

Nearly a year after being established, Meta Superintelligence Labs (MSL) has finally debuted its first product, a multimodal model “purpose-built” for Meta’s products.

Muse Spark is the first in the family of Muse models and represents a “ground-up overhaul” of the company’s AI efforts, Meta said in a statement. The launch comes after the company poured billions of dollars into its stated pursuit of ‘superintelligence’, a hypothetical AI system with abilities beyond human intelligence.

Muse Spark is the “first step toward a personal superintelligence”, Meta said. The model can be accessed via Meta.ai and the Meta AI app.

According to the company, Muse Spark achieves strong performance on visual STEM questions, entity recognition and localisation. Meta said it performs on par with existing models from AI rivals, such as OpenAI’s GPT-5.4, Anthropic’s Opus 4.6 and Google’s Gemini 3.1 Pro.

Muse Spark is also marketed as a way to “learn about and improve” user health, Meta added, and is expected to be rolled out to WhatsApp, Facebook, Instagram and the company’s AI glasses in the coming weeks.

The company said it collaborated with more than 1,000 physicians to curate training data that enables “factual and comprehensive” responses. For comparison, OpenAI said it worked with 260 physicians to develop its ChatGPT Health offering.

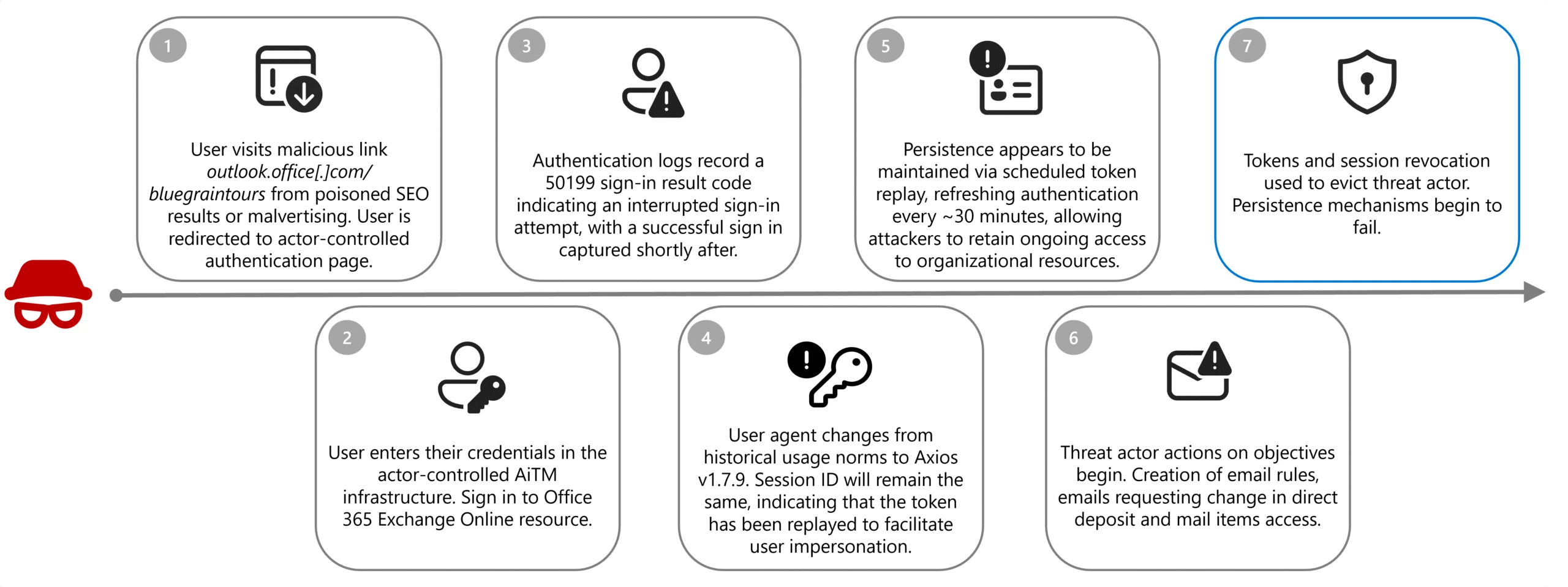

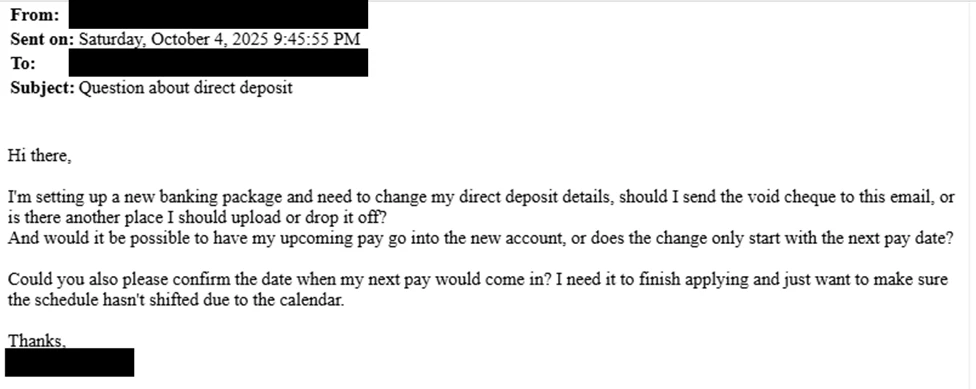

Moreover, Meta found that Muse Spark demonstrated “strong refusal behaviour” across high-risk areas such as biological and chemical weapons. The model also does not demonstrate the autonomous capability or hazardous tendencies required to realise threat scenarios around cybersecurity, Meta added.

Meanwhile, Anthropic’s new Claude Mythos, released in preview to select users earlier this week, was found to be significantly more capable at generating exploits than other models.

Concerned that Meta was lagging behind the likes of OpenAI and Anthropic, CEO Mark Zuckerberg set up MSL last June after taking a 49pc stake in Scale AI for $14.3bn and hiring its CEO Alexandr Wang to lead the team.

“This is only the start. As we expand these features, expect richer, more visual results, with Reels, photos and posts woven directly into your answers,” Meta said.

MSL has continued to make big-name hires to add to the efforts, including Moltbook founders Matt Schlicht and Ben Parr, co-founder of Safe Superintelligence Daniel Gross and Apple’s former AI lead Ruoming Pang. The company cut 600 jobs at MSL in October.

Earlier this year, Meta said that it is budgeting up to $135bn in capital expenditure for 2026. The growth, it said, is driven by increased investment to support MSL as well as its core business.
