Most enterprise RAG pipelines are optimized for one search behavior. They fail silently on the others. A model trained to synthesize cross-document reports handles constraint-driven entity search poorly. A model tuned for simple lookup tasks falls apart on multi-step reasoning over internal notes. Most teams find out when something breaks.

Databricks set out to fix that with KARL, short for Knowledge Agents via Reinforcement Learning. The company trained an agent with multi-task reinforcement learning, using a new RL algorithm, and evaluated it across six distinct enterprise search behaviors. The result, the company claims, is a model that matches Claude Opus 4.6 on KARLBench, a benchmark Databricks built to evaluate enterprise search behaviors, at 33% lower cost per query and 47% lower latency. It was trained entirely on synthetic data the agent generated itself, with no human labeling required.

“A lot of the big reinforcement learning wins that we’ve seen in the community in the past year have been on verifiable tasks where there is a right and a wrong answer,” Jonathan Frankle, Chief AI Scientist at Databricks, told VentureBeat in an exclusive interview. “The tasks that we’re working on for KARL, and that are just normal for most enterprises, are not strictly verifiable in that same way.”

Those tasks include synthesizing intelligence across product manager meeting notes, reconstructing competitive deal outcomes from fragmented customer records, answering questions about account history where no single document has the full answer, and generating battle cards from unstructured internal data. None of those has a single correct answer that a system can check automatically.

“Doing reinforcement learning in a world where you don’t have a strict right and wrong answer, and figuring out how to guide the process and make sure reward hacking doesn’t happen — that’s really non-trivial,” Frankle said. “Very little of what companies do day to day on knowledge tasks are verifiable.”

The generalization trap in enterprise RAG

Standard RAG breaks down on ambiguous, multi-step queries drawing on fragmented internal data that was never designed to be queried.

To evaluate KARL, Databricks built KARLBench, which measures performance across six enterprise search behaviors: constraint-driven entity search, cross-document report synthesis, long-document traversal with tabular numerical reasoning, exhaustive entity retrieval, procedural reasoning over technical documentation, and fact aggregation over internal company notes. That last task is PMBench, built from Databricks’ own product manager meeting notes, which are fragmented, ambiguous and unstructured in ways that frontier models handle poorly.

Training on any single task and testing on the others produces poor results. The KARL paper shows that multi-task RL generalizes in ways single-task training does not. The team trained KARL on synthetic data for two of the six tasks and found it performed well on all four it had never seen.
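
A minimal Python sketch of the held-out setup described above. The six short task labels are our own shorthand for the KARLBench behaviors. The choice of which two tasks go into training is hypothetical, because the article does not say which two Databricks used. The `mixed_batch` helper, also hypothetical, interleaves prompts from each training task so that no single behavior dominates an update.

```python
import random
from collections import Counter

# Shorthand labels for the six KARLBench behaviors (our naming, not Databricks').
ALL_TASKS = [
    "constraint_entity_search",
    "cross_doc_report_synthesis",
    "long_doc_tabular_reasoning",
    "exhaustive_entity_retrieval",
    "procedural_tech_docs",
    "pm_notes_fact_aggregation",
]

# Hypothetical split: the article does not say which two tasks were trained on.
TRAIN_TASKS = ALL_TASKS[:2]
HELD_OUT_TASKS = [t for t in ALL_TASKS if t not in TRAIN_TASKS]


def mixed_batch(prompts_by_task, batch_size, rng):
    """Build one training batch by cycling through the training tasks in turn,
    drawing a random prompt from each, so every behavior appears equally often."""
    batch = []
    for i in range(batch_size):
        task = TRAIN_TASKS[i % len(TRAIN_TASKS)]
        batch.append((task, rng.choice(prompts_by_task[task])))
    return batch


if __name__ == "__main__":
    rng = random.Random(0)
    prompts = {t: [f"{t}-q{i}" for i in range(10)] for t in TRAIN_TASKS}
    batch = mixed_batch(prompts, 8, rng)
    print(Counter(task for task, _ in batch))  # 4 prompts from each training task
    print("evaluate on:", HELD_OUT_TASKS)       # the four unseen behaviors
```

The generalization claim can be tested only by scoring on `HELD_OUT_TASKS`. Any score there comes from behaviors the policy never saw during training.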

To build a competitive battle card for a financial services customer, for example, the agent has to identify relevant accounts, filter for recency, reconstruct past competitive deals and infer outcomes — none of which is labeled anywhere in the data.

Frankle calls what KARL does “grounded reasoning”: running a difficult reasoning chain while anchoring every step in retrieved facts. “You can think of this as RAG,” he said, “but like RAG plus plus plus plus plus plus, all the way up to 200 vector database calls.”
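
To make “grounded reasoning” concrete, here is a toy Python sketch of the loop. The agent issues a search and adds the hits to its evidence. A policy then either picks the next query or stops, with a hard cap of 200 calls, the figure Frankle cites. Everything else is an assumption for illustration. `ToyVectorStore` uses bag-of-words cosine similarity in place of real embeddings. `next_query` stands in for the trained model's decision, which in KARL is learned through RL rather than hand-written.

```python
import math
from collections import Counter

MAX_SEARCH_CALLS = 200  # upper bound on vector database calls cited by Frankle


def embed(text):
    # Toy "embedding": word counts. A real system would use a learned encoder.
    return Counter(text.lower().split())


def cosine(a, b):
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class ToyVectorStore:
    """Hypothetical in-memory stand-in for a vector database."""

    def __init__(self, docs):
        self.docs = list(docs)

    def search(self, query, k=2):
        q = embed(query)
        ranked = sorted(self.docs, key=lambda d: cosine(q, embed(d)), reverse=True)
        return ranked[:k]


def grounded_search(question, store, next_query, max_calls=MAX_SEARCH_CALLS):
    """Run search calls until the policy returns None or the call budget runs out.
    Every retrieved document is kept as evidence the final answer must rest on."""
    evidence, query, calls = [], question, 0
    while query is not None and calls < max_calls:
        for hit in store.search(query):
            if hit not in evidence:  # avoid storing the same document twice
                evidence.append(hit)
        calls += 1
        query = next_query(question, evidence, calls)
    return evidence, calls


if __name__ == "__main__":
    store = ToyVectorStore([
        "Acme renewed with us in 2024 after a competitive bake-off",
        "Acme evaluated a rival vendor in Q3",
        "Globex churned in 2023",
    ])
    # Hand-written policy: refine the query once, then stop.
    policy = lambda q, ev, n: "Acme rival vendor" if n == 1 else None
    evidence, calls = grounded_search("Did Acme renew?", store, policy)
    print(calls, len(evidence))  # 2 2
```

The cap matters because the policy, not a fixed pipeline, decides how many hops to take. In KARL's case, that can mean up to 200 calls on a single question.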

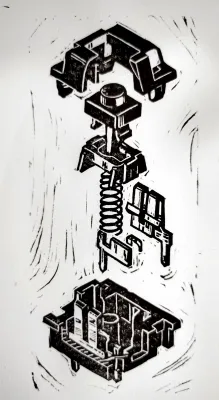

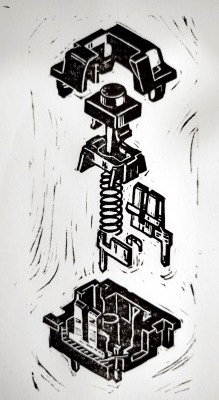

The RL engine: why OAPL matters

KARL’s training is powered by OAPL, short for Optimal Advantage-based Policy Optimization with Lagged Inference policy. It’s a new approach, developed jointly by researchers from Cornell, Databricks and Harvard and published in a separate paper the week before KARL.

Standard LLM reinforcement learning uses on-policy algorithms like GRPO (Group Relative Policy Optimization), which assume the model generating training data and the model being updated are in sync. In distributed training, where inference workers keep generating rollouts while the trainer updates the weights, they rarely are. Prior approaches corrected for the mismatch with importance sampling, which introduces variance and instability. OAPL instead embraces the off-policy nature of distributed training, using a regression objective that stays stable with policy lags of more than 400 gradient steps, 100 times more off-policy than prior approaches handled. In code generation experiments, it matched a GRPO-trained model using roughly one-third as many training samples.
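
For readers who have not met these objectives, the Python sketch below shows the two standard ingredients that the paragraph contrasts OAPL against. The first is GRPO's group-relative advantage, in which each sampled answer's reward is normalized against the other answers to the same prompt. The second is the importance-sampling ratio used to correct for a lagged behavior policy, shown here with PPO-style clipping. The sketch does not implement OAPL's regression objective, which the article does not spell out. The log-probabilities in the example are made-up numbers chosen to show how the ratio grows as the two policies drift apart.

```python
import math
import statistics


def grpo_advantages(rewards, eps=1e-6):
    """Group-relative advantage: normalize each rollout's reward by the mean and
    (population) standard deviation of its group of rollouts for the same prompt."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


def importance_ratio(logp_current, logp_behavior):
    """pi_current(a|s) / pi_behavior(a|s), computed from log-probabilities.
    The behavior policy is the (possibly stale) model that generated the rollout."""
    return math.exp(logp_current - logp_behavior)


def clipped_term(ratio, advantage, clip=0.2):
    """PPO/GRPO-style clipped surrogate for one token."""
    clipped = max(1 - clip, min(ratio, 1 + clip))
    return min(ratio * advantage, clipped * advantage)


if __name__ == "__main__":
    print(grpo_advantages([1.0, 0.0, 0.0, 1.0]))  # roughly [1, -1, -1, 1]
    # Made-up log-probs: a small policy lag vs. a large one.
    for label, logp_now, logp_then in [("small lag", -1.00, -1.05), ("large lag", -1.0, -3.0)]:
        r = importance_ratio(logp_now, logp_then)
        print(label, round(r, 3), clipped_term(r, advantage=1.0))
```

In the large-lag case the ratio is about 7.4. Clipping throws away most of that token's signal, but an unclipped ratio would dominate the gradient. That trade-off is the instability the article describes OAPL as avoiding.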

OAPL’s sample efficiency is what keeps the training budget accessible. Reusing previously collected rollouts rather than requiring fresh on-policy data for every update meant the full KARL training run stayed within a few thousand GPU hours. That is the difference between a research project and something an enterprise team can realistically attempt.
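
The following is a minimal Python sketch of rollout reuse under a lag bound. The article says OAPL stays stable with policy lags beyond 400 gradient steps. The buffer below tags each rollout with the policy version that produced it and keeps sampling that rollout until it is more than `max_lag` steps stale. The class and field names are hypothetical. This is a generic replay-buffer pattern, not Databricks’ training infrastructure.

```python
import random
from collections import deque
from dataclasses import dataclass

MAX_POLICY_LAG = 400  # lag the article says OAPL tolerates, in gradient steps


@dataclass
class Rollout:
    policy_version: int  # gradient step of the policy that generated this rollout
    prompt: str
    reward: float


class LaggedRolloutBuffer:
    """Hypothetical replay buffer that reuses rollouts until they are too stale."""

    def __init__(self, capacity=10_000, max_lag=MAX_POLICY_LAG):
        self.items = deque(maxlen=capacity)  # oldest rollouts fall off when full
        self.max_lag = max_lag

    def add(self, rollout):
        self.items.append(rollout)

    def usable(self, current_version):
        # Keep rollouts whose generating policy is at most max_lag steps behind.
        return [r for r in self.items if current_version - r.policy_version <= self.max_lag]

    def sample(self, current_version, n, rng):
        pool = self.usable(current_version)
        return rng.sample(pool, min(n, len(pool)))


if __name__ == "__main__":
    buf = LaggedRolloutBuffer()
    for version in (0, 250, 500):
        buf.add(Rollout(version, f"prompt-{version}", reward=1.0))
    print([r.policy_version for r in buf.usable(current_version=600)])  # [250, 500]
```

A strictly on-policy scheme would discard each of these rollouts after a single update. The lag window lets every expensive multi-step search trajectory feed many gradient steps.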

Agents, memory and the context stack

In recent months, there has been a lot of industry discussion about whether RAG can be replaced with contextual memory, sometimes called agentic memory.

For Frankle, it is not an either/or question; he sees it as a layered stack. At the base sits a vector database with millions of entries, far too large to fit in context. At the top sits the LLM context window. Between them, compression and caching layers are emerging that determine how much an agent can carry forward from what it has already learned.

For KARL, this is not abstract. Some KARLBench tasks required 200 sequential vector database queries, with the agent refining searches, verifying details and cross-referencing documents before committing to an answer, exhausting the context window many times over. Rather than training a separate summarization model, the team let KARL learn compression end-to-end through RL: when context grows too large, the agent compresses it and continues, with the only training signal being the reward at the end of the task. Removing that learned compression dropped accuracy on one benchmark from 57% to 39%.
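
The Python sketch below illustrates this control flow under heavy simplifying assumptions. Token counts are approximated by whitespace-split words. `compress` is a hand-written stub, whereas in KARL the compression is produced by the policy itself and learned through RL. The key structural point is the last step. The episode returns a single terminal reward, and every action, including each compression, is credited with it. There is no separate summarization loss.

```python
def count_tokens(context):
    # Crude proxy (assumption): one token per whitespace-separated word.
    return sum(len(chunk.split()) for chunk in context)


def stub_compress(context):
    # Placeholder for the policy's learned compression: keep a marker plus the
    # most recent chunk. KARL learns what to keep; this stub does not.
    return ["[compressed]", context[-1]]


def run_episode(observations, budget, compress, reward_fn):
    """Append each search result to the context; compress whenever the context
    exceeds the budget. Returns (action, reward) pairs and the final context."""
    context, actions = [], []
    for obs in observations:
        context.append(obs)
        if count_tokens(context) > budget:
            context = compress(context)
            actions.append("compress")
        actions.append("search")
    reward = reward_fn(context)  # the only training signal, given at the end
    return [(action, reward) for action in actions], context


if __name__ == "__main__":
    steps, final_context = run_episode(
        observations=["acme renewed in 2024"] * 10,
        budget=12,
        compress=stub_compress,
        reward_fn=lambda ctx: 1.0 if any("acme" in c for c in ctx) else 0.0,
    )
    print(sum(a == "compress" for a, _ in steps), count_tokens(final_context))  # 4 5
```

Because the reward arrives only once, training pushes the model toward compressions that preserve whatever the final answer needs. The drop from 57% to 39% after removing learned compression is the article's evidence that this pressure pays off.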

“We just let the model figure out how to compress its own context,” Frankle said. “And this worked phenomenally well.”

Where KARL falls short

Frankle was candid about the failure modes. KARL struggles most on questions with significant ambiguity, where multiple valid answers exist and the model can’t determine whether the question is genuinely open-ended or just hard to answer. That judgment call is still an unsolved problem.

The model also exhibits what Frankle described as giving up early on some queries — stopping before producing a final answer. He pushed back on framing this as a failure, noting that the most expensive queries are typically the ones the model gets wrong anyway. Stopping is often the right call.

KARL was also trained and evaluated exclusively on vector search. Tasks requiring SQL queries, file search, or Python-based calculation are not yet in scope. Frankle said those capabilities are next on the roadmap, but they are not in the current system.

What this means for enterprise data teams

KARL surfaces three decisions worth revisiting for teams evaluating their retrieval infrastructure.

The first is pipeline architecture. If your RAG agent is optimized for one search behavior, the KARL results suggest it is failing on others. Multi-task training across diverse retrieval behaviors produces models that generalize. Narrow pipelines do not.

The second is why RL matters here — and it’s not just a training detail. Databricks tested the alternative: distilling from expert models via supervised fine-tuning. That approach improved in-distribution performance but produced negligible gains on tasks the model had never seen. RL developed general search behaviors that transferred. For enterprise teams facing heterogeneous data and unpredictable query types, that distinction is the whole game.

The third is what RL efficiency actually means in practice. A model trained to search better completes tasks in fewer steps, stops earlier on queries it cannot answer, diversifies its search rather than repeating failed queries, and compresses its own context rather than running out of room. The argument for training purpose-built search agents rather than routing everything through general-purpose frontier APIs is not primarily about cost. It is about building a model that knows how to do the job.