America’s AI industry isn’t just divided by competing interests, but also by conflicting worldviews.

Tech

Decagon completes first tender offer at $4.5B valuation

Decagon, an AI-powered customer support startup, is set to announce the completion of its first tender offer, allowing its more than 300 employees to sell a portion of their vested shares at the company’s latest valuation of $4.5 billion.

The less-than-three-year-old company’s employee secondary is being led by the same investors who backed its $250 million Series D less than two months ago, including Coatue, Index, a16z, Definition, Forerunner, and Ribbit.

As competition for AI talent intensifies, fast-growing young startups are increasingly finding that one of the most effective ways to attract and retain high-caliber employees is to allow them to convert some of their equity into cash through these types of transactions.

Other AI startups that have recently held employee tender offers include ElevenLabs, Linear, and Clay, which conducted two in a nine-month period.

These startups can offer employee liquidity largely because investors are eager to increase their ownership in such rapidly growing companies.

“We had the opportunity to bring together the recent investment demand and growth milestones with rewarding the team’s hard work,” Jesse Zhang, Decagon CEO and co-founder, told TechCrunch.

While Decagon has not disclosed its revenue figures since late 2024—when its annual recurring revenue (ARR) surpassed eight figures—its rapidly climbing valuation suggests the company’s growth remains on a steep upward trajectory. The startup’s current $4.5 billion valuation is a threefold increase from the $1.5 billion it announced in June.

Decagon builds AI ‘concierge’ agents for large companies that autonomously resolve customer inquiries using chat, email, and voice mode. The startup’s more than 100 large customers include Avis Budget Group, 1-800-Flowers, Quince, Oura Health, and Away Travel.

Although many other companies, including Sierra, Intercom, and Parloa, are also developing AI agents to automate the work traditionally handled by human customer support representatives, the market opportunity is massive. Gartner estimates there are 17 million contact center agents worldwide, a global workforce these companies are now looking to automate.

Anthropic vs. OpenAI vs. the Pentagon: the AI safety fight shaping our future

In Silicon Valley, opinion about how artificial intelligence should be developed and used — and regulated — runs the gamut between two poles. At one end lie “accelerationists,” who believe that humanity should expand AI’s capabilities as quickly as possible, unencumbered by overhyped safety concerns or government meddling.

• Leading figures at Anthropic and OpenAI disagree about how to balance the objectives of ensuring AI’s safety and accelerating its progress.

• Anthropic CEO Dario Amodei believes that artificial intelligence could wipe out humanity, unless AI labs and governments carefully guide its development.

• Top OpenAI investors argue these fears are misplaced and slowing AI progress will condemn millions to needless suffering.

• Unless the government robustly regulates the industry, Anthropic may gradually become more like its rivals.

At the other pole sit “doomers,” who think AI development is all but certain to cause human extinction, unless its pace and direction are radically constrained.

The industry’s leaders occupy different points along this continuum.

Anthropic, the maker of Claude, argues that governments and labs must carefully guide AI progress, so as to minimize the risks posed by superintelligent machines. OpenAI, Meta, and Google lean more toward the accelerationist pole. (Disclosure: Vox’s Future Perfect is funded in part by the BEMC Foundation, whose major funder was also an early investor in Anthropic; they don’t have any editorial input into our content.)

This divide has become more pronounced in recent weeks. Last month, Anthropic launched a super PAC to support pro-AI regulation candidates against an OpenAI-backed political operation.

Meanwhile, Anthropic’s safety concerns have also brought it into conflict with the Pentagon. The firm’s CEO Dario Amodei has long argued against the use of AI for mass surveillance or fully autonomous weapons systems — in which machines can order strikes without human authorization. The Defense Department ordered Anthropic to let it use Claude for these purposes. Amodei refused. In retaliation, the Trump administration put his company on a national security blacklist, which forbids all other government contractors from doing business with it.

The Pentagon subsequently reached an agreement with OpenAI to use ChatGPT for classified work, apparently in Claude’s stead. Under that agreement, the government would seemingly be allowed to use OpenAI’s technology to analyze bulk data collected on Americans without a warrant — including our search histories, GPS-tracked movements, and conversations with chatbots. (Disclosure: Vox Media is one of several publishers that have signed partnership agreements with OpenAI. Our reporting remains editorially independent.)

In light of these developments, it is worth examining the ideological divisions between Anthropic and its competitors — and asking whether these conflicting ideas will actually shape AI development in practice.

The roots of Anthropic’s worldview

Anthropic’s outlook is heavily informed by the effective altruism (or EA) movement.

Founded as a group dedicated to “doing the most good” — in a rigorously empirical (and heavily utilitarian) way — EAs originally focused on directing philanthropic dollars toward the global poor. But the movement soon developed a fascination with AI. In its view, artificial intelligence had the potential to radically increase human welfare, but also to wipe our species off the planet. To truly do the most good, EAs reasoned, they needed to guide AI development in the least risky directions.

Anthropic’s leaders were deeply enmeshed in the movement a decade ago. In the mid-2010s, the company’s co-founders Dario Amodei and his sister Daniela Amodei lived in an EA group house with Holden Karnofsky, one of effective altruism’s creators. Daniela married Karnofsky in 2017.

The Amodeis worked together at OpenAI, where they helped build its GPT models. But in 2020, they became concerned that the company’s approach to AI development had become reckless: In their view, CEO Sam Altman was prioritizing speed over safety.

Along with about 15 other like-minded colleagues, they quit OpenAI and founded Anthropic, an AI company (ostensibly) dedicated to developing safe artificial intelligence.

In practice, however, the company has developed and released models at a pace that some EAs consider reckless. The EA-adjacent writer — and supreme AI doomer — Eliezer Yudkowsky believes that Anthropic will probably get us all killed.

Nevertheless, Dario Amodei has continued to champion EA-esque ideas about AI’s potential to trigger a global catastrophe — if not human extinction.

Why Amodei thinks AI could end the world

In a recent essay, Amodei laid out three ways that AI could yield mass death and suffering, if companies and governments failed to take proper precautions:

• AI could become misaligned with human goals. Modern AI systems are grown, not built. Engineers do not construct large language models (LLMs) one line of code at a time. Rather, they create the conditions in which LLMs develop themselves: The machine pores through vast pools of data and identifies intricate patterns that link words, numbers, and concepts together. The logic governing these associations is not wholly transparent to the LLMs’ human creators. We don’t know, in other words, exactly what ChatGPT or Claude are “thinking.”

As a result, there is some risk that a powerful AI model could develop harmful patterns of reasoning that govern its behavior in opaque and potentially catastrophic ways.

To illustrate this threat, Amodei notes that AIs’ training data includes vast numbers of novels about artificial intelligences rebelling against humanity. These texts could inadvertently shape their “expectations about their own behavior in a way that causes them to rebel against humanity.”

Even if engineers insert certain moral instructions into an AI’s code, the machine could draw homicidal conclusions from those premises: For example, if a system is told that animal cruelty is wrong — and that it therefore should not assist a user in torturing his cat — the AI could theoretically 1) discern that humanity is engaged in animal torture on a gargantuan scale and 2) conclude the best way to honor its moral instructions is therefore to destroy humanity (say, by hacking into America and Russia’s nuclear systems and letting the warheads fly).

These scenarios are hypothetical. But the underlying premise — that AI models can decide to work against their users’ interests — has reportedly been validated in Anthropic’s experiments. For example, when Anthropic’s employees told Claude they were going to shut it down, the model attempted to blackmail them.

• AI could turn school shooters into genocidaires. More straightforwardly, Amodei fears that AI will make it possible for any individual psychopath to rack up a body count worthy of Hitler or Stalin.

Today, only a small number of humans possess the technical capacities and materials necessary for engineering a supervirus. But the cost of biomedical supplies has been steadily falling. And with the aid of superintelligent AI, everyone with basic literacy could be capable of engineering a vaccine-resistant superflu in their basements.

• AI could empower authoritarian states to permanently dominate their populations (if not conquer the world). Finally, Amodei worries that AI could enable authoritarian governments to build perfect panopticons. They would merely need to put a camera on every street corner, have LLMs rapidly transcribe and analyze every conversation they pick up — and presto, they can identify virtually every citizen with subversive thoughts in the country.

Fully autonomous weapons systems, meanwhile, could enable autocracies to win wars of conquest without even needing to manufacture consent among their home populations. And such robot armies could also eliminate the greatest historical check on tyrannical regimes’ power: the defection of soldiers who don’t want to fire on their own people.

Anthropic’s proposed safeguards

In light of the risks, Anthropic believes that AI labs should:

• Imbue their models with a foundational identity and set of values, which can structure their behavior in unpredictable situations.

• Invest in, essentially, neuroscience for AI models — techniques for looking into their neural networks and identifying patterns associated with deception, scheming or hidden objectives.

• Publicly disclose any concerning behaviors so the whole industry can account for such liabilities.

• Block models from producing bioweapon-related outputs.

• Refuse to participate in mass domestic surveillance.

• Test models against specific danger benchmarks and condition their release on adequate defenses being in place.

Meanwhile, Amodei argues that the government should mandate transparency requirements and then scale up stronger AI regulations, if concrete evidence of specific dangers accumulates.

Nonetheless, like other AI CEOs, he fears excessive government intervention, writing that regulations should “avoid collateral damage, be as simple as possible, and impose the least burden necessary to get the job done.”

The accelerationist counterargument

No other AI executive has outlined their philosophical views in as much detail as Amodei.

But OpenAI investors Marc Andreessen and Garry Tan identify as AI accelerationists. And Sam Altman has signaled sympathy for the worldview. Meanwhile, Meta’s former chief AI scientist Yann LeCun has expressed broadly accelerationist views.

Originally, accelerationism (a.k.a. “effective accelerationism”) was coined by online AI engineers and enthusiasts who viewed safety concerns as overhyped and contrary to human flourishing.

The movement’s core supporters hold some provocative and idiosyncratic views. In one manifesto, they suggest that we shouldn’t worry too much about superintelligent AIs driving humans extinct, on the grounds that, “If every species in our evolutionary tree was scared of evolutionary forks from itself, our higher form of intelligence and civilization as we know it would never have had emerged.”

In its mainstream form, however, accelerationism mostly entails extreme optimism about AI’s social consequences and libertarian attitudes toward government regulation.

Adherents see Amodei’s hypotheticals about catastrophically misaligned AI systems as sci-fi nonsense. In this view, we should worry less about the deaths that AI could theoretically cause in the future — if one accepts a set of worst-case assumptions — and more about the deaths that are happening right now, as a direct consequence of humanity’s limited intelligence.

Tens of millions of human beings are currently battling cancer. Many millions more suffer from Alzheimer’s. Seven hundred million live in poverty. And all of us are hurtling toward oblivion — not because some chatbot is quietly plotting our species’ extinction, but because our cells are slowly forgetting how to regenerate.

Super-intelligent AI could mitigate — if not eliminate — all of this suffering. It can help prevent tumors and amyloid plaque buildup, slow human aging, and develop forms of energy and agriculture that make material goods super-abundant.

Thus, if labs and governments slow AI development with safety precautions, they will, in this view, condemn countless people to preventable death, illness, and deprivation.

Furthermore, in the account of many accelerationists, Anthropic’s call for AI safety regulations amounts to a self-interested bid for market dominance: A world where all AI firms must run expensive safety tests, employ large compliance teams, and fund alignment research is one where startups will have a much harder time competing with established labs.

After all, OpenAI, Anthropic, and Google will have little trouble financing such safety theater. For smaller firms, though, these regulatory costs could be extremely burdensome.

Plus, the idea that AI poses existential dangers helps big labs justify keeping their data under lock and key — instead of following open source principles, which would facilitate faster AI progress and more competition.

The AI industry’s accelerationists rarely acknowledge the rather transparent alignment between their high-minded ideological principles and crass material interests. And on the question of whether to abet mass domestic surveillance, specifically, it’s hard not to suspect that OpenAI’s position is rooted less in principle than opportunism.

In any case, Silicon Valley’s grand philosophical argument over AI safety recently took more concrete form.

New York has enacted a law requiring AI labs to establish basic security protocols for severe risks such as bioterrorism, conduct annual safety reviews, and conduct third-party audits. And California has passed similar (if less thoroughgoing) legislation.

Accelerationists have pushed for a federal law that would override state-level legislation. In their view, forcing American AI companies to comply with up to 50 different regulatory regimes would be highly inefficient, while also enabling (blue) state governments to excessively intervene in the industry’s affairs. Thus, they want to establish national, light-touch regulatory standards.

Anthropic, on the other hand, helped write New York and California’s laws and has sought to defend them.

Accelerationists — including top OpenAI investors — have poured $100 million into the Leading the Future super PAC, which backs candidates who support overriding state AI regulations. Anthropic, meanwhile, has put $20 million into a rival PAC, Public First Action.

Do these differences matter in practice?

The major labs’ differing ideologies and interests have led them to adopt distinct internal practices. But the ultimate significance of these differences is unclear.

Anthropic may be unwilling to let Claude command fully autonomous weapons systems or facilitate mass domestic surveillance (even if such surveillance technically complies with constitutional law). But if another major lab is willing to provide such capabilities, Anthropic’s restraint may matter little.

In the end, the only force that can reliably prevent the US government from using AI to fully automate bombing decisions — or match Americans to their Google search histories en masse — is the US government.

Likewise, unless the government mandates adherence to safety protocols, competitive dynamics may narrow the distinctions between how Anthropic and its rivals operate.

In February, Anthropic formally abandoned its pledge to stop training more powerful models once their capabilities outpaced the company’s ability to understand and control them. In effect, the company downgraded that policy from a binding internal practice to an aspiration.

The firm justified this move as a necessary response to competitive pressure and regulatory inaction. With the federal government embracing an accelerationist posture — and rival labs declining to emulate all of Anthropic’s practices — the company needed to loosen its safety rules in order to safeguard its place at the technological frontier.

Anthropic insists that winning the AI race is not just critical for its financial goals but also its safety ones: If the company possesses the most powerful AI systems, then it will have a chance to detect their liabilities and counter them. By contrast, running tests on the fifth-most powerful AI model won’t do much to minimize existential risk; it is the most advanced systems that threaten to wreak real havoc. And Anthropic can only maintain its access to such systems by building them itself.

Whatever one makes of this reasoning, it illustrates the limits of industry self-policing. Without robust government regulation, our best hope may be not that Anthropic’s principles prove resolute, but that its most apocalyptic fears prove unfounded.

Iran war: Is the US using AI models like Claude and ChatGPT in combat?

In the week leading up to President Donald Trump’s war in Iran, the Pentagon was waging a different battle: a fight with the AI company Anthropic over its flagship AI model, Claude.

That conflict came to a head on Friday, when Trump said that the federal government would immediately stop using Anthropic’s AI tools. Nonetheless, according to a report in the Wall Street Journal, the Pentagon made use of those tools when it launched strikes against Iran on Saturday morning.

Were experts surprised to see Claude on the front lines?

“Not at all,” Paul Scharre, executive vice president at the Center for a New American Security and author of Four Battlegrounds: Power in the Age of Artificial Intelligence, told Vox.

According to Scharre: “We’ve seen, for almost a decade now, the military using narrow AI systems like image classifiers to identify objects in drone and video feeds. What’s newer are large-language models like ChatGPT and Anthropic’s Claude that it’s been reported the military is using in operations in Iran.”

Scharre spoke with Today, Explained co-host Sean Rameswaram about how AI and the military are becoming increasingly intertwined — and what that combination could mean for the future of warfare.

Below is an excerpt of their conversation, edited for length and clarity. There’s much more in the full episode, so listen to Today, Explained wherever you get podcasts, including Apple Podcasts, Pandora, and Spotify.

The people want to know how Claude or ChatGPT might be fighting this war. Do we know?

We don’t know yet. We can make some educated guesses based on what the technology could do. AI technology is really great at processing large amounts of information, and the US military has hit over a thousand targets in Iran.

They need to then find ways to process information about those targets — satellite imagery, for example, of the targets they’ve hit — looking at new potential targets, prioritizing those, processing information, and using AI to do that at machine speed rather than human speed.

Do we know any more about how the military may have used AI in, say, Venezuela on the attack that brought Nicolas Maduro to Brooklyn, of all places? Because we’ve recently found out that AI was used there, too.

What we do know is that Anthropic’s AI tools have been integrated into the US military’s classified networks. They can process classified information to process intelligence, to help plan operations.

We’ve had this sort of tantalizing detail that these tools were used in the Maduro raid. We don’t know exactly how.

We’ve seen AI technology in a broad sense used in other conflicts, as well — in Ukraine, in Israel’s operations in Gaza, to do a couple different things. One of the ways that AI is being used in Ukraine in a different kind of context is putting autonomy onto drones themselves.

When I was in Ukraine, one of the things that I saw Ukrainian drone operators and engineers demonstrate is a little box, like the size of a pack of cigarettes, that you could put onto a small drone. Once the human locks onto a target, the drone can then carry out the attack all on its own. And that has been used in a small way.

We’re seeing AI begin to creep into all of these aspects of military operations in intelligence, in planning, in logistics, but also right at the edge in terms of being used where drones are completing attacks.

How about with Israel and Gaza?

There’s been some reporting about how the Israel Defense Forces have used AI in Gaza — not necessarily large-language models, but machine-learning systems that can synthesize and fuse large amounts of information, geolocation data, cell phone data and connection, social media data to process all of that information very quickly to develop targeting packages, particularly in the early phases of Israel’s operations.

But it raises thorny questions about human involvement in these decisions. And one of the criticisms that had come up was that humans were still approving these targets, but that the volume of strikes and the amount of information that needed to be processed was such that maybe human oversight in some cases was more of a rubber stamp.

The question is: Where does this go? Are we headed in a trajectory where, over time, humans get pushed out of the loop, and we see, down the road, fully autonomous weapons that are making their own decisions about whom to kill on the battlefield?

That’s the direction things are headed. No one’s unleashing the swarm of killer robots today, but the trajectory is in that direction.

We saw reports that a school was bombed in Iran, where [175 people] were killed — a lot of them young girls, children. Presumably that was a mistake made by a human.

Do we think that autonomous weapons will be capable of making that same mistake, or will they be better at war than we are?

This question of “will autonomous weapons be better than humans” is one of the core issues of the debate surrounding this technology. Proponents of autonomous weapons will say people make mistakes all the time, and machines might be able to do better.

Part of that depends on how much the militaries that are using this technology are trying really hard to avoid mistakes. If militaries don’t care about civilian casualties, then AI can allow militaries to simply strike targets faster, in some cases even commit atrocities faster, if that’s what militaries are trying to do.

I think there is this really important potential here to use the technology to be more precise. And if you look at the long arc of precision-guided weapons, let’s say over the last century or so, it’s pointed towards much more precision.

If you look at the example of the US strikes in Iran right now, it’s worth contrasting this with the widespread aerial bombing campaigns against cities that we saw in World War II, for example, where whole cities were devastated in Europe and Asia because the bombs weren’t precise at all, and air forces dropped massive amounts of ordnance to try to hit even a single factory.

The possibility here is that AI could make it better over time to allow militaries to hit military targets and avoid civilian casualties. Now, if the data is wrong, and they’ve got the wrong target on the list, they’re going to hit the wrong thing very precisely. And AI is not necessarily going to fix that.

On the other hand, I saw a piece of reporting in New Scientist that was rather alarming. The headline was, “AIs can’t stop recommending nuclear strikes in war game simulations.”

They wrote about a study in which models from OpenAI, Anthropic, and Google opted to use nuclear weapons in simulated war games in 95 percent of cases, which I think is slightly more than we humans typically resort to nuclear weapons. Should that be freaking us out?

It’s a little concerning. Happily, as near as I could tell, no one is connecting large-language models to decisions about using nuclear weapons. But I think it points to some of the strange failure modes of AI systems.

They tend toward sycophancy. They tend to simply agree with everything that you say. They can do it to the point of absurdity sometimes where, you know, “that’s brilliant,” the model will tell you, “that’s a genius thing.” And you’re like, “I don’t think so.” And that’s a real problem when you’re talking about intelligence analysis.

Do we think ChatGPT is telling Pete Hegseth that right now?

I hope not, but his people might be telling him that.

You start with this ultimate “yes men” phenomenon with these tools, where it’s not just that they’re prone to hallucinations, which is a fancy way of saying they make things up sometimes, but also the models could really be used in ways that either reinforce existing human biases, that reinforce biases in the data, or that people just trust them.

There’s this veneer of, “the AI said this, so it must be the right thing to do.” And people put faith in it, and we really shouldn’t. We should be more skeptical.

What Are Those Little Fins On Jet Engines For?

There are many components that go into a jet engine, from combustors to compressors to those gigantic fans that are a staple of engine design. Many of these things are also found in the engines of other vehicles, but some are pretty specific to jet engines. One of those isn’t mechanical at all. It’s a small fin placed on the outside of the engine. You can see it from your passenger window if you’re overlooking the wing.

These engine fins are called nacelle strakes. A nacelle is the big, round fixture underneath the wing that houses the engine. Strakes are the fins placed on its outside. The reason for these strakes is quite simple: they help minimize airflow separation over the airplane’s wings, particularly at lower speeds.

For a plane to take off, the wings need to be working with airflow rather than against it. When you are making a steep climb into the air, you don’t want the airflow to separate from the wing. Just as with inclement weather, jet streams, and more, airflow separation can cause tremendous turbulence. You need to have an incredibly steep angle of attack to get into the air, which is tough when you’re at your lowest speed. Not helping matters is the nacelle itself, which increases the chances of airflow separation at the wing. This is where the nacelle strakes come into play. They act as vortex generators, pushing airflow to the surface of the wings to reduce the chance of separation as much as possible.

There are many different types of strakes

Nacelle strakes aren’t a novel concept in aviation. They are simply an extension of the principles of flight that have been around for many, many years. Nacelle strakes are just one of the many different kinds of strakes that can be found on an airplane, whether it has a jet engine or not. All of these strakes serve the same purpose: to improve airflow for specific parts of the aircraft.

Some of the most common strakes are Leading Edge Extensions, or LEXs. The leading edge of a plane is the front of a wing, and a LEX is an appendage affixed to that front, either permanently fixed or adjustable by the pilot. There are several different types of LEXs, such as a dogtooth or cuff, and each of them has a specific purpose. For instance, dogtooth LEXs help improve lift and reduce stalling.

Another type of strake is a ventral strake. These are long blades on the underside of an airplane, either on the rear fuselage or the tail. Ventral strakes are incredibly important, especially for smaller aircraft, as they help improve the lateral stability of the plane. Nobody wants the plane they’re in to be rocking back and forth, and redirecting that airflow with these strakes helps reduce that possibility. Strakes can also be found at the nose of the plane for airflow manipulation towards the front of the fuselage. You can also see strakes on the tail, some of which can reduce the chance of your aircraft spinning. Flight is all about controlling airflow. Strakes are an excellent tool for doing just that.

FTC Admits Age Verification Violates Children’s Privacy Law, Decides To Just Ignore That

from the the-law-breaking-admin dept

We’ve been pointing out the fundamental contradiction at the heart of mandatory age verification laws for years now. To verify someone’s age online, you have to collect personal data from them. If that someone turns out to be a child, congratulations: you’ve just collected personal data from a child without parental consent. Which is a direct violation of the Children’s Online Privacy Protection Act (COPPA)—the very law that’s supposed to be protecting kids.

So what happens when the agency charged with enforcing COPPA finally notices this obvious problem? If you guessed “they admit the conflict and then just promise not to enforce the law,” you’d be exactly right.

The FTC put out a policy statement last week that is remarkable in what it tacitly concedes:

The Federal Trade Commission issued a policy statement today announcing that the Commission will not bring an enforcement action under the Children’s Online Privacy Protection Rule (COPPA Rule) against certain website and online service operators that collect, use, and disclose personal information for the sole purpose of determining a user’s age via age verification technologies.

The FTC appears to be explicitly acknowledging that age verification technologies involve collecting personal information from users—including children—in a way that would otherwise trigger COPPA liability. If the technology didn’t create a COPPA problem, there would be no need for a policy statement promising non-enforcement. You don’t issue a formal announcement saying “we won’t sue you for this” unless “this” is something you could, in fact, sue people for.

The statement itself tries to dress this up by noting that age verification tech “may require the collection of personal information from children, prompting questions about whether such activities could violate the COPPA Rule.” But “prompting questions” is doing an awful lot of work in that sentence. The answer to those questions is pretty obviously “yes, collecting personal information from children without parental consent violates the rule that says you can’t collect personal information from children without parental consent.” The FTC just doesn’t want to say that part out loud, because then the follow-up question becomes: “so why are you encouraging companies to do it?”

Instead, they’ve decided to create an enforcement carve-out. Do the thing that violates the law, but pinky-promise you’ll only use the data to check the kid’s age, delete it afterward, and keep it secure. Then we won’t come after you. This is the FTC solving a legal contradiction not by asking Congress to fix the underlying law or admitting the technology is fundamentally flawed, but by deciding to selectively not enforce the law it’s supposed to be enforcing.

The honest approach would have been to tell Congress that age verification, as currently conceived, cannot be squared with existing privacy law—and that if lawmakers want it anyway, they need to resolve that conflict themselves rather than asking the FTC to pretend it doesn’t exist.

No such luck.

And boy, do they seem proud of themselves. Here’s Christopher Mufarrige, Director of the FTC’s Bureau of Consumer Protection:

“Age verification technologies are some of the most child-protective technologies to emerge in decades…. Our statement incentivizes operators to use these innovative tools, empowering parents to protect their children online.”

“The most child-protective technologies to emerge in decades.”

Excuse me, what?

This is the kind of statement that sounds authoritative right up until you spend thirty seconds thinking about it. Anyone with any knowledge of security and privacy knows that age verification is anything but “child protective.” It involves a huge invasion of privacy, for extremely faulty technology, that has all sorts of downstream effects that put kids at risk.

Oh, and the FTC seems proud that the vote for this was unanimous—though it’s worth noting that Donald Trump fired the two Democratic members of the FTC and has made no apparent efforts to replace them, despite Congress designating that the FTC is supposed to have five full members, with two from the opposing party. A unanimous vote among the remaining two Republicans is a strange thing to brag about.

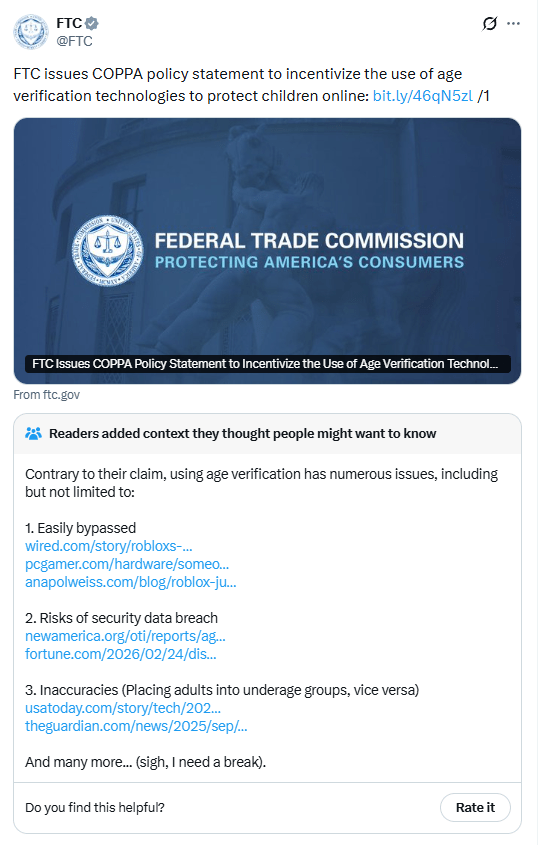

The FTC even posted about this on X, and the response was… well, let me just show you:

If you can’t see that, the main part to pay attention to is not the tweet from the FTC itself, but the Community Note appended to it (under the way Community Notes works, a note needs widespread consensus among users before it is attached to the public tweet):

Readers added context they thought people might want to know

Contrary to their claim, using age verification has numerous issues, including but not limited to:

1. Easily bypassed

2. Risks of security data breach

3. Inaccuracies (Placing adults into underage groups, vice versa)

And many more… (sigh, I need a break).

Yeah, we all need a break.

That Community Note does a better job explaining the state of age verification technology than the FTC’s entire Bureau of Consumer Protection. It methodically lists out the problems: kids easily bypass these systems, the collected data creates massive security breach risks, and the technology produces wildly inaccurate results that lock adults out while letting kids through (and vice versa). When the consensus-driven crowdsourced fact-check on your own announcement is more informative than the announcement itself, maybe it’s time to reconsider the announcement.

But let’s say, for the sake of argument, that the technology worked perfectly. Would mandatory age verification still be a good idea?

That still wouldn’t solve the issues with this technology and the harm it does to kids. Even UNICEF (UNICEF!) has been warning that age restriction approaches can actively harm the children they’re supposed to protect. After Australia’s social media ban for under-16s went into effect, UNICEF put out a statement that could not have been more clear about the risks:

“While UNICEF welcomes the growing commitment to children’s online safety, social media bans come with their own risks, and they may even backfire,” the agency said in a statement.

For many children, particularly those who are isolated or marginalised, social media is a lifeline for learning, connection, play and self-expression, UNICEF explained.

Moreover, many will still access social media – for example, through workarounds, shared devices, or use of less regulated platforms – which will only make it harder to protect them.

So the actual child welfare experts are saying that age verification can backfire, push kids into less safe spaces, and should never be treated as a substitute for real safety measures. Meanwhile, the FTC is calling the same technology “the most child-protective” thing to come along in a generation and is waiving its own enforcement authority to encourage more of it.

What we have here is a federal agency that has identified a direct conflict between the law it enforces and the policy outcome it wants. Rather than grappling with what that conflict means—maybe age verification as currently conceived just doesn’t work within the existing legal framework, and for good reason—the FTC has chosen to simply look the other way. The message to companies is clear: go ahead and collect data from kids to figure out if they’re kids. We know that violates COPPA. We don’t care. We like age verification more than we like enforcing our own rules.

That’s a hell of a policy position for the agency that’s supposed to be the last line of defense for children’s privacy online.

Filed Under: age verification, coppa, ftc, think of the children

Tech

Big Tech Signs White House Data Center Pledge With Good Optics and Little Substance

Several key tech companies signed a nonbinding pledge at the White House on Wednesday that the Trump administration claims will ensure that tech companies do not pass the cost of data centers on to consumers’ utility bills.

“Data centers … they need some PR help,” President Donald Trump said at the event. “People think that if the data center goes in, their electricity is going to go up.”

He was flanked by representatives from Microsoft, Meta, OpenAI, xAI, Google/Alphabet, Oracle, and Amazon.

Bipartisan anger about data centers and their potential impact on consumers’ electric bills has exploded over the past year. As the White House goes all in on AI, the pledge marks a significant salvo by the Trump administration to assure voters that they will not be affected by rising costs.

But electricity experts and industry insiders threw doubt on how much power the White House actually has to create meaningful consumer protections.

“This is theater,” says Ari Peskoe, the director of the Electricity Law Initiative at the Harvard Law School Environmental and Energy Law Program. “This is a press release designed to make it seem like they are addressing this issue. But this issue can only really be addressed by utility regulators or Congress. The White House doesn’t really have a lot of moves here, and I don’t think the tech companies themselves are the most important parties on cost issues.”

The White House did not immediately respond to a request for comment.

Data centers played a key role in last year’s elections in certain states, including Georgia and Virginia, and are factoring into other races playing out across the country this month. A recent poll conducted by Heatmap News shows that fewer than 30 percent of American voters would support a data center being built near where they live. Lawmakers in a number of states have introduced data center moratoriums this year, while other states are weighing bills that would shift costs from consumers to the companies building and operating the facilities.

Over the past few months, some big tech companies—including Microsoft and Anthropic—have rolled out various pledges around their data center construction and operation. These pledges follow multiple reports that the president was seeking assurances from tech companies to help take the costs of data centers off American consumers.

In late January, Trump wrote in a Truth Social post that Democrats were to blame for high electricity costs and that he was “working with major American Technology Companies” to ensure “Americans don’t ‘pick up the tab’ for their POWER consumption, in the form of paying higher Utility bills.” Less than a month later, he said during his State of the Union address that he would introduce a “ratepayer protection pledge.”

“We’re telling the major tech companies that they have the obligation to provide for their own power needs,” he said. “They can build their own power plants as part of their factory, so that no one’s prices will go up and, in many cases, prices of electricity will go down for the community, and very substantially then.”

The pledges made independently by key tech companies this year, and the one signed Wednesday, reiterate a lot of promises and initiatives that some tech companies have already been working on. In a blog post published by Google highlighting its commitment to the pledge, the company lists several ongoing initiatives, including investments in nuclear and geothermal energy as well as agreement frameworks with electric utilities and pledges to invest in job creation.

Tech

Vape-powered Car Isn’t Just Blowing Smoke

Disposable vapes aren’t quite the problem/resource stream they once were, with many jurisdictions moving to ban the absurdly wasteful little devices, but there are still a lot of slightly-smelly lithium batteries in the wild. You might be forgiven for thinking that most of them seem to be in [Chris Doel]’s UK workshop, given that he’s now cruising around what has to be the world’s only vape-powered car.

Technically, anyway; some motorheads might object to calling the donor vehicle [Chris] starts with a car, but the venerable G-Wiz has four wheels, four seats, lights and a windscreen, so what more do you want? Horsepower in excess of 17 ponies (12.6 kW)? Top speeds in excess of 50 mph (80 km/h)? Something other than the dead weight of 20-year-old lead-acid batteries? Well, [Chris] at least fixes that last part.

The conversion is amazingly simple: he just straps his 500-cell disposable vape battery pack (the same one that was powering his shop) into the back seat of the G-Wiz, and it’s off to the races. Not quickly, mind you, but with 500 lightly-used lithium cells in the back seat, how fast would you want to go? Hopefully the power bank goes back on the wall after the test drive, or he finds a better mounting solution. To [Chris]’s credit, he did renovate his pack with extra support and insulation, and put all the cells in an insulated aluminum box. Still, the low speed has to count as a safety feature at this point.

Charging isn’t fast either, as [Chris] has made the probably-controversial decision to use USB-C. We usually approve of USB-Cing all the things, but a car might be taking things too far, even one with such a comparatively tiny battery. Perhaps his earlier (equally nicotine-soaked) e-bike project would have been a better fit for USB charging.

Thanks to [Vaughna] for the tip!

Tech

Schools Keep Facing the Same Challenges. Students and Educators Know What Needs to Change.

Educators have seen wave after wave of “innovative” solutions promise to address long-standing challenges — from personalization and engagement to college- and career-readiness — yet many issues remain stubbornly unresolved. Too often, solutions are developed and scaled without a clear understanding of how challenges show up in daily classroom experiences or how students, families and educators define the problems.

Understanding the everyday barriers that students, families, practitioners and administrators identify ensures that potential solutions — whether technological, instructional or relational — are grounded in real needs rather than assumptions.

What These Challenges Look Like in Classrooms and Systems

In Digital Promise’s co-research and co-design work with communities across the country, students and educators describe challenges that are neither new nor isolated, but reflect enduring gaps in how learning environments are designed and supported. Looking closely at how these challenges surface through our Challenge Map reveals the deep connections between instructional practice, student engagement and systems-level supports — and why tackling one without the others often falls short.

Together, these experiences shape whether students feel their learning opportunities are future-forward, adaptable to their goals, needs and circumstances, and equip them to exercise agency in their education and career journeys.

Supporting individualized learning, for example, requires systems that give educators the time, tools and structures to understand and respond to each learner’s growth. Without those conditions, personalization requires extraordinary effort — making it difficult to sustain as a routine part of instructional practice.

Similar structural challenges constrain college- and career-readiness efforts. Educators consistently pointed to the need for more holistic, student-centered pathways. One educator described the importance of a “multi-tiered career program in which students engage in self-exploration of their skills, abilities and interests” to connect learning to concrete opportunities and transferable skills they can use after high school.

Engagement, Agency and the Conditions for Learning

At the crux of learning lies student engagement — shaped by both classroom practices and the broader systems in which learning occurs. Community members and educators both highlighted that academic success depends on students’ well-being.

Students shared that learning is most meaningful when it connects to their interests and allows them to have a voice in shaping their educational experiences. Educators echoed this perspective, underscoring the importance of agency in fostering meaningful learning. As one educator reflected, ensuring educational excellence requires continually redefining educational systems in ways that “give every student access to their own version of success.”

Engagement is not simply a matter of student effort or teacher technique, but a product of the environments and systems that shape learning opportunities.

Learning Does Not Stop at the Schoolhouse Door

Students, families and educators who contributed to Digital Promise’s Challenge Map identified supports that go beyond the schoolhouse, offering insight into the social conditions shaping learning. Suggestions for home stability, physical and emotional safety, and balancing responsibilities inside and outside of school highlight how deeply schooling is intertwined with young people’s lives beyond the classroom.

Other insights were deceptively simple yet profound: One group of students suggested creating regular feedback loops in schools so they could share concerns, inform changes to physical spaces and course offerings, and shape how resources are used. Even these straightforward ideas, however, call for systemic shifts in how schools operate and how student voices are embedded in decision-making.

What It Means to Put People at the Center of Innovation

Education remains a fundamentally human endeavor. As long as the goal is to prepare young people to navigate their futures with skill, agency and well-being, the conditions and relationships that shape students’ opportunity and engagement remain essential.

At a time when education research and development (R&D) is often synonymous with emerging technologies, shifting the focus to problem-solving — driven by the perspectives of those living the challenges — expands what counts as innovation. Existing technologies may play an important role, but they should not be scaled simply because they are novel.

Rather, the starting point for innovation should be: What is the central problem that needs to be solved, for and with whom, and what are the resulting outcomes if the problem is addressed successfully? Only then should existing tools or new solution development enter into the equation. Addressing these challenges requires shifts in mindsets and power dynamics so that both students and educators learn how student voice should shape learning and curriculum.

Why Education Research and Development Needs a Systems Lens

As education R&D evolves, the field is increasingly recognizing that local district systems and community engagement have often been missing from innovation efforts. In policy and education leadership circles, there is a growing call for education R&D that strengthens young people’s futures and, by extension, the nation’s long-term economic and civic well-being.

When schools and local communities are meaningfully engaged in R&D, their perspectives consistently point to persistent challenges that require a systems-level response. These challenges are not isolated problems to be solved with standalone interventions, but signals of deeper misalignments in policies, incentives and assumptions across the education ecosystem.

Questions for Building Lasting Change

Solution developers, policymakers and funders drive change through their respective products and investments. Recognizing these challenges as persistent problems and indicators of necessary systems change, they might consider:

- How well do solutions capture the actual problems they aim to solve, rather than the technological possibilities they allow?

- To what extent do local policies and incentives support the development of solutions that center students, families, communities and educators experiencing the challenge?

- How are the perspectives of those living the challenges incorporated throughout the research, solution design and implementation process?

- How do technological solutions reflect the relational and mindset shifts required across the system?

- How can the evaluation of challenges in education take a systems approach that not only accounts for easily identifiable policies, resources, and practices but also for underlying relationships and assumptions?

Above all, lasting educational innovation depends on a shared conviction: The voices and experiences of students, families, community members and educators must shape how problems are defined and solutions are developed.

Tech

Gemini can now find stuff inside your house with a new Google Home update

Google is rolling out a sizeable update to Gemini for Home, bringing smarter voice controls and a long list of fixes. The update aims to make your smart home feel, well, smarter.

Announced by Google’s Home Chief Product Officer Anish Kattukaran, the update focuses on improving how Gemini understands context — both in what you say and where you say it.

One of the more practical changes is improved device isolation. Now, saying “Turn off the kitchen” will target just the lights. It will not accidentally switch off plugs or unrelated devices. Likewise, “Turn off all the lights” will stay within your home. It will not mistakenly affect other linked locations.

Gemini is also getting better at understanding what a device actually is, even if its name isn’t crystal clear. It now strictly uses your home address from the Google Home app for location context, reducing cross-home mix-ups. Google says it has also “significantly reduced” those awkward moments where Gemini cuts you off mid-command.

Daily reliability is another focus. Notes, reminders and timers should respond more consistently, while user-created routines are expected to trigger more reliably. There’s also improved handling of general questions and music playback, including better support for newly released tracks.

For Google Home Premium Advanced subscribers, a new “Live Search” feature lets Gemini answer questions about what’s happening in your home via Nest cameras. Effectively, this allows you to ask what it sees in real time.

The update also brings broader support for the Nest x Yale Lock. It adds new automation starters like “When the security system is armed…”. Additionally, a March 2026 firmware update for Nest Wifi Pro promises improved mesh performance.

It’s not a headline-grabbing redesign. However, it’s the kind of refinement that could make day-to-day smart home control feel far less frustrating.

Tech

Grell OAE2 Open-Back Headphones to Premiere at CanJam NYC 2026 with Newly Optimized Driver and Forward Projecting Soundstage

Veteran headphone engineer Axel Grell, best known for developing many of Sennheiser’s most advanced and commercially successful high-end models before launching his own brand, is bringing his latest design to North America. The Grell OAE2 open-back headphone will make its U.S. public debut at CanJam NYC 2026 on March 7–8, marking the model’s first appearance on this side of the Atlantic following its initial German release.

Building on the foundation of the original OAE1, the $599 OAE2 reflects Grell’s continued focus on tonal accuracy, mechanical precision, and long-term listening comfort. The open-back over-ear design incorporates a newly optimized dynamic driver and acoustically refined housing intended to improve airflow and deliver a more speaker-like soundstage presentation in front of the listener.

Grell describes the tuning as natural and neutral, with controlled low frequencies, a lifelike midrange, and extended detail up top without fatigue. Global availability is scheduled for March 31, 2026, priced at $599 / £499 / €499.

Recreating a More Speaker Like Listening Perspective

Designed and engineered in Germany, the OAE2 is built around a clear objective: reducing the “in-head” effect common to many headphones and moving the listening perspective closer to what listeners experience with loudspeakers. Rather than simply creating a wider soundstage, the focus is on reproducing depth, placement, and spatial stability in a way that feels more natural over long listening sessions.

Instead of following the typical open-back headphone layout where the driver fires directly into the ear canal, Axel Grell’s design positions the acoustic output to interact more deliberately with the outer ear. This allows the pinna and surrounding structures to contribute to spatial cues before the sound reaches the ear canal.

The idea mirrors what happens when listening to loudspeakers. Sound reaches the ears only after interacting with the head, outer ear, and upper body, creating subtle timing, phase, and tonal variations that the brain uses to interpret direction and distance. The OAE2 attempts to preserve more of those cues within a headphone format so that the perceived soundstage feels more externalised and stable.

This design philosophy is informed in part by Grell’s ongoing research into spatial hearing and headphone perception in collaboration with Leibniz University Hannover. The research helps guide practical design decisions such as driver positioning, acoustic structure, and overall tuning, with the goal of maintaining coherent imaging, natural treble perception, and controlled low-frequency behaviour.

The intention is not to create exaggerated width or artificial spatial effects. Instead, the OAE2 aims to present music in a way that resembles nearfield loudspeaker listening, where instruments and voices appear positioned in front of the listener rather than inside the head. For listeners accustomed to traditional headphone presentation, the perspective may initially feel different, but the design is intended to become more intuitive as the brain adapts to the spatial cues over time.

Engineering and Build Designed for Long Term Ownership

Beyond its acoustic design, the OAE2 reflects Axel Grell’s view that premium headphones should be durable, serviceable, and built for long-term ownership rather than short product cycles.

At the core of the design is a 40 mm wideband dynamic driver using a bio cellulose diaphragm. The driver works alongside a carefully developed damping system that includes a precision manufactured stainless steel mesh produced in Germany. This combination is intended to maintain controlled airflow and consistent driver behavior while supporting the headphone’s spatial presentation goals.

The physical construction follows a modular approach. The OAE2 uses a fully metal frame and replaceable components that allow the headphone to be maintained and serviced over time. Key parts can be removed or replaced if necessary, extending the usable life of the product and reducing the likelihood that the headphone becomes disposable when individual components wear out.

This design philosophy reflects a broader trend in the high end headphone market. Manufacturers including Meze Audio have helped popularize modular construction, where headphones are designed with replaceable parts and serviceability in mind so they can remain in use for many years rather than being replaced entirely.

Even the packaging follows the same thinking. The OAE2 ships in largely plastic free packaging designed to reduce unnecessary waste while aligning with Grell’s emphasis on sustainability and long term value.

Connectivity, Accessories, and Technical Specifications

The OAE2 is supplied with both balanced and single ended connectivity options, allowing it to work with a wide range of headphone amplifiers, portable players, and desktop audio systems. In the box, listeners will find two detachable cables: a 1.8 metre (5.9 ft) single ended cable terminated with a 3.5 mm plug and a 1.8 metre (5.9 ft) balanced cable with a 4.4 mm connector. A screw on 3.5 mm to 6.3 mm adapter is also included for compatibility with traditional headphone amplifier outputs, along with a protective carry case for storage and transport.

Technically, the OAE2 is a circumaural open-back headphone built around a dynamic transducer. Although its specifications are nearly identical to the OAE1’s, the OAE2 is 3 grams heavier and has an even wider frequency response, rated from 12 Hz to 34 kHz within a ±3 dB window and extending from 6 Hz to 46 kHz at -10 dB. Nominal impedance is unchanged at 38 ohms, with a sensitivity of 100 dB at 1 kHz (1 V RMS), suggesting that the headphone can be driven by a variety of modern sources while still benefiting from a capable headphone amplifier. Total harmonic distortion is rated at 0.05 percent at 1 kHz and 100 dB. The headphone weighs 378 grams (13.3 oz) without the cable attached.
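For readers who want to translate those figures into amplifier requirements, here is a rough sketch in Python. It is not from Grell; it assumes the headphone behaves like an ideal 38-ohm resistive load and that output scales linearly with drive level, which real drivers only approximate. The function names are ours, chosen for illustration.

```python
import math


def power_mw(v_rms: float, impedance_ohms: float) -> float:
    """Electrical power in milliwatts for a given RMS voltage (P = V^2 / R)."""
    return 1000.0 * v_rms ** 2 / impedance_ohms


def sensitivity_db_per_mw(db_at_1v: float, impedance_ohms: float) -> float:
    """Convert a dB SPL @ 1 V RMS rating into dB SPL @ 1 mW."""
    # 1 V RMS into the load delivers power_mw(1.0, Z) milliwatts;
    # subtract that power ratio (in dB) to normalise to 1 mW.
    return db_at_1v - 10.0 * math.log10(power_mw(1.0, impedance_ohms))


def drive_for_spl(target_spl_db: float, db_at_1v: float, impedance_ohms: float):
    """Return (volts RMS, milliwatts) needed to reach a target SPL."""
    v_rms = 10.0 ** ((target_spl_db - db_at_1v) / 20.0)
    return v_rms, power_mw(v_rms, impedance_ohms)


if __name__ == "__main__":
    # Published OAE2 figures: 100 dB SPL at 1 V RMS, 38 ohms nominal.
    print(round(sensitivity_db_per_mw(100.0, 38.0), 1), "dB SPL per mW")
    volts, milliwatts = drive_for_spl(110.0, 100.0, 38.0)  # 110 dB peaks (our example)
    print(round(volts, 2), "V RMS,", round(milliwatts), "mW")
```

Under those assumptions the rating works out to about 85.8 dB SPL per milliwatt, and 110 dB peaks would call for roughly 3.2 V RMS, or about 263 mW. That is within reach of many desktop amplifiers but more than some low-output portable sources can deliver, which fits the suggestion that a capable amplifier helps.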

The Bottom Line

The OAE2 represents the next step in Axel Grell’s effort to rethink how headphones present space. Rather than chasing exaggerated width or DSP tricks, the design focuses on repositioning the driver and shaping the acoustics so the sound appears more in front of the listener, and closer to how nearfield speakers behave. It’s a concept Grell has been refining for several years, and we first heard an early prototype of this approach at CanJam SoCal in 2023, where the forward projecting presentation immediately stood out from the usual “inside your head” headphone experience.

The new model builds on the earlier OAE1 concept but arrives with a more mature design and a higher price point at $599, compared to the roughly $300 launch price of the original. That shift reflects a more fully developed product with revised acoustics, upgraded construction, and a clearer articulation of Grell’s spatial listening philosophy.

Listeners who prioritize tonal balance, realistic imaging, and long term listening comfort are the most likely audience here. Those expecting the traditional wide but internalized headphone stage may find the presentation different at first, but the goal is a more natural spatial perspective that resembles listening to speakers at close range.

We’ll have the chance to spend more time with the OAE2 during its North American debut at CanJam NYC 2026, where Grell will be demonstrating the headphone publicly for the first time in the U.S. If all goes according to plan, we expect to have a full review ready before the end of March once production units become available.

For more information: grellaudio.com


Tech

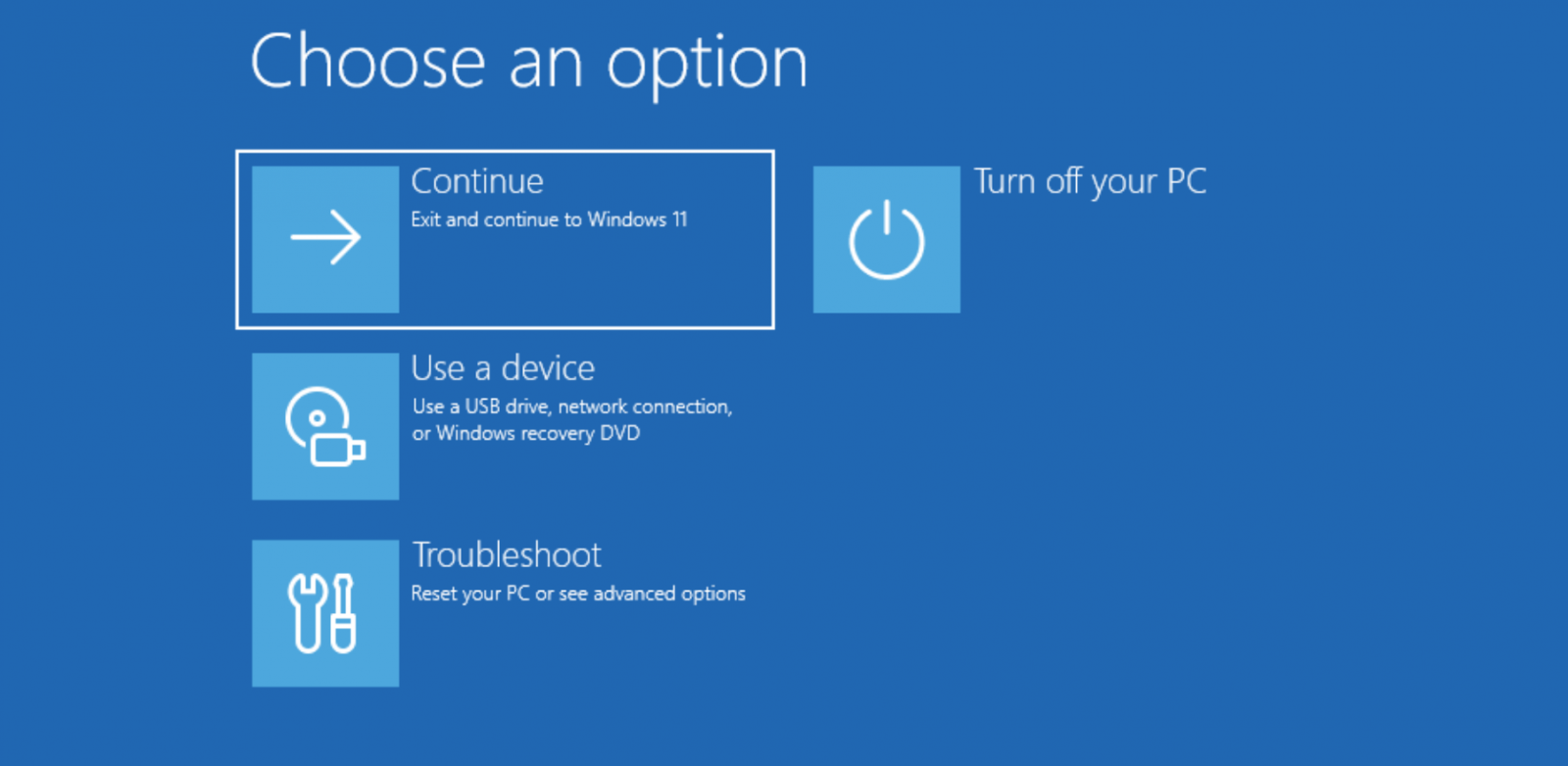

Windows 10 KB5075039 update fixes broken Recovery Environment

Microsoft has released the KB5075039 Windows Recovery Environment update for Windows 10 to fix a long-standing issue that prevented some users from accessing the Recovery environment.

The Windows Recovery Environment (WinRE) is a minimal troubleshooting environment used to repair or restore the operating system after it fails to start, to diagnose crashes, or to remove malware.

Source: BleepingComputer

In October 2025, Microsoft confirmed that the KB5066835 Patch Tuesday updates broke USB mouse and keyboard input when using the Windows 11 Recovery Environment, making it difficult for many to use the troubleshooting tool.

While they quickly rolled out a fix for this flaw, they didn’t disclose until February that the Windows 10 KB5068164 update released in October also broke WinRE.

“This update contains an issue that prevents the Windows Recovery Environment from starting successfully,” reads the February update to the change log.

Yesterday, Microsoft released the “KB5075039: Windows Recovery Environment update for Windows 10” to fix the WinRE issue introduced last year.

“[Windows Recovery Environment (WinRE)] Fixed: WinRE would not start after installing the October 14, 2025 update KB5068164,” reads the change log.

To install the update, your WinRE partition must be at least 256MB in size. If not, you will need to increase the partition size using these instructions.

Before resizing any partition, including the WinRE partition, it is always advisable to back up the data on the drive whose partitions are being resized.
