Mistral AI, the Paris-based artificial intelligence company valued at €11.7 billion ($13.8 billion), today released Workflows in public preview — a production-grade orchestration layer designed to move enterprise AI systems out of proofs of concept and into the business processes that generate revenue.

The product, which launches as part of Mistral’s Studio platform, is the company’s clearest articulation yet of a thesis that is quietly reshaping the enterprise AI market: that the bottleneck for organizations adopting AI is no longer the model itself, but the infrastructure required to run it reliably at scale.

“What we’re seeing today is that organizations are struggling to go beyond isolated proofs of concept,” Elisa Salamanca, who leads go-to-market for Mistral’s enterprise products, told VentureBeat in an exclusive interview ahead of the launch. “The gap is operational. Workflows is the infrastructure to run AI systems reliably across business-critical processes.”

The release arrives at a pivotal moment for both Mistral and the broader AI industry. The dedicated agentic AI market has been valued at approximately $10.9 billion in 2026 and is projected to reach $199 billion by 2034. Yet despite that staggering growth trajectory, industry research points to a stark reality: over 40% of agentic AI projects will be aborted by 2027 due to high costs, unclear value, and complexity. Mistral is betting that Workflows can help its enterprise customers avoid becoming one of those statistics.

Mistral’s new orchestration layer separates execution from control to keep enterprise data private

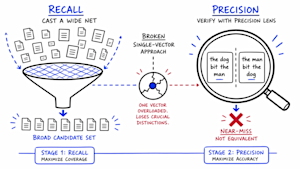

At its core, Workflows provides a structured system for defining, executing, and monitoring multi-step AI processes — from simple sequential tasks to complex, stateful operations that blend deterministic business rules with the probabilistic outputs of large language models.

Salamanca described Workflows as containing several key components. The first is a development kit that allows engineers to build orchestration logic in just a few lines of Python code. “We have also been able to expose MCP servers,” she explained, referring to the Model Context Protocol standard for connecting AI systems to external tools, “so that they can actually do this with agent authoring.”

The second — and arguably more technically significant — component is an architecture that separates orchestration from execution. “We’re decorrelating the orchestration from the execution,” Salamanca said. “Execution can happen close to the customer’s data — their critical systems — and orchestration can happen on the cloud or wherever they want to run it.” This means the data never has to leave the customer’s perimeter, a design decision with enormous implications for regulated industries where data sovereignty is non-negotiable. “Enterprises do not have to worry about us having access to the data,” she added.
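The split Salamanca describes can be pictured with a minimal Python sketch. It is not Mistral's SDK: the `Orchestrator` and `Worker` classes, the `count_fields` task, and the sample record are all hypothetical, chosen only to show a control plane that handles task names and opaque record IDs while the worker alone reads the underlying data.

```python
from dataclasses import dataclass, field


@dataclass
class Worker:
    """Hypothetical execution worker running inside the customer's perimeter."""
    records: dict  # sensitive data; never handed to the orchestrator

    def run(self, task: str, record_id: str) -> dict:
        record = self.records[record_id]
        if task == "count_fields":
            # Only a non-sensitive summary leaves the worker.
            return {"record_id": record_id, "field_count": len(record)}
        raise ValueError(f"unknown task: {task}")


@dataclass
class Orchestrator:
    """Hypothetical control plane: decides what runs next, sees only references."""
    worker: Worker
    history: list = field(default_factory=list)

    def execute(self, steps: list) -> list:
        results = []
        for task, record_id in steps:
            results.append(self.worker.run(task, record_id))
            self.history.append((task, record_id))  # no payload is stored here
        return results


worker = Worker(records={"cust-1": {"name": "Ada", "iban": "XX00"}})
orchestrator = Orchestrator(worker=worker)
results = orchestrator.execute([("count_fields", "cust-1")])
print(results)
print(orchestrator.history)
```

In a real deployment the two halves would run in separate processes or networks; here they share one process purely to keep the example runnable.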

The third pillar is observability. According to Mistral’s blog post announcing the release, every branch, retry, and state change within a workflow is recorded in Studio with native support for OpenTelemetry. Salamanca noted that this is not an afterthought: “You can easily see what decisions have been taken by the workflow, by the agent, and you can deep dive into where problems are happening.”

Workflows is fully customizable across models — engineers can select which model handles which step and can inject arbitrary code, allowing them to blend deterministic pipelines with agentic sections. The system also supports connectors that integrate directly with CRMs, ticketing systems, support platforms, and other enterprise tools, with built-in authentication and secrets management.
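As a rough illustration of mixing deterministic and model-driven steps, the sketch below assigns a model to one step and leaves another as plain code. Everything in it is an assumption made for the example: the `Step` class, the `fake_model_call` placeholder, the model name, and the 10,000 review threshold do not come from Mistral's documentation.

```python
from dataclasses import dataclass
from typing import Callable, Optional


def fake_model_call(model: str, prompt: str) -> str:
    """Placeholder for a real inference call; returns a canned string."""
    return f"[{model}] summary of: {prompt}"


def validate(ctx: dict, model: Optional[str]) -> dict:
    # Deterministic business rule: no model involved.
    return {**ctx, "needs_review": ctx["amount"] > 10_000}


def summarize(ctx: dict, model: Optional[str]) -> dict:
    # Model-driven step: uses whichever model the pipeline assigned to it.
    return {**ctx, "summary": fake_model_call(model, ctx["memo"])}


@dataclass
class Step:
    name: str
    run: Callable[[dict, Optional[str]], dict]
    model: Optional[str] = None  # None marks a deterministic step


PIPELINE = [
    Step("validate", validate),
    Step("summarize", summarize, model="hypothetical-small-model"),
]


def run_pipeline(ctx: dict) -> dict:
    for step in PIPELINE:
        ctx = step.run(ctx, step.model)
    return ctx


result = run_pipeline({"amount": 12_500, "memo": "wire transfer"})
print(result)
```

Swapping the model for a single step means editing one entry in `PIPELINE`, which is the kind of per-step control the article describes.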

Why Mistral chose a code-first approach over low-code drag-and-drop builders

Unlike some competitors offering drag-and-drop workflow builders, Mistral has deliberately targeted developers and engineers rather than business users. “There are a couple of solutions out there that have click-and-drag, drag-and-drop solutions for workflows,” Salamanca acknowledged. “This is not the approach that we’ve been taking. We’ve been really focused towards developers and critical systems that will not scale if you’re doing these drag-and-drop workflows.”

The decision is part of a broader philosophy at Mistral: that enterprise AI systems handling mission-critical operations — cargo releases, compliance reviews, financial transactions — require the precision and version control that only code can provide. Business users are not excluded from the picture, but their role is downstream. Once engineers write a workflow in Python, it can be published to Le Chat, Mistral’s chatbot platform, so anyone in the organization can trigger it. Every step remains tracked and auditable in Studio.

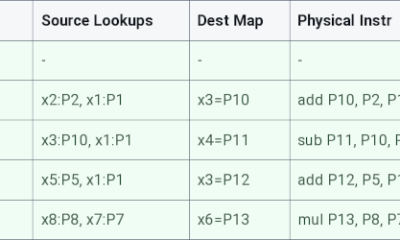

Under the hood, Workflows runs on Temporal’s durable execution engine — a platform whose $5 billion valuation reflects how its durable execution capabilities, originally built for cloud workflow orchestration, have become essential infrastructure for AI agents requiring reliable, long-running, stateful processes. Temporal’s customers include OpenAI, Snap, Netflix, and JPMorgan Chase, and its technology powers orchestration at companies like Stripe and Salesforce.

Mistral extended Temporal’s core engine for AI-specific workloads by adding streaming, payload handling, multi-tenancy, and observability that the base engine does not provide out of the box. “Workflows is built on top of Temporal,” Salamanca confirmed. “We added all the AI requirements to make these AI workflows reliable. It provides out of the box durability, retries, state management. Whenever there’s a failure, it starts again wherever it stopped.” Originally spun out of Uber’s Cadence project, Temporal transparently handles retries, state persistence, and timeouts, providing durable execution across failures. In late 2025, Temporal joined the newly formed Agentic AI Foundation as a Gold Member and announced an official OpenAI Agents SDK integration. By building on this infrastructure rather than creating a proprietary alternative, Mistral inherits battle-tested reliability while focusing its own engineering efforts on the AI-specific layer that sits above it.
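The "starts again wherever it stopped" behavior can be approximated in a few lines of Python. This is a simplified sketch, not Temporal's or Mistral's implementation: the `run_durably` helper and the step names are hypothetical, and a plain dictionary stands in for the persistent store a real engine would use.

```python
def run_durably(steps, state, max_attempts=3):
    """Run named steps in order, skipping any already recorded in `state`."""
    for name, func in steps:
        if name in state:
            continue  # finished in an earlier run; do not repeat it
        for attempt in range(1, max_attempts + 1):
            try:
                state[name] = func()  # checkpoint the result on success
                break
            except RuntimeError:
                if attempt == max_attempts:
                    raise  # retries exhausted; completed steps stay in `state`
    return state


calls = {"extract": 0, "classify": 0}


def extract():
    calls["extract"] += 1
    return "parsed document"


def classify():
    calls["classify"] += 1
    if calls["classify"] < 3:
        raise RuntimeError("transient failure")
    return "approved"


state = {}
steps = [("extract", extract), ("classify", classify)]

try:
    run_durably(steps, state, max_attempts=2)  # first run gives up on classify
except RuntimeError:
    pass

run_durably(steps, state, max_attempts=2)  # second run resumes at classify
print(state, calls)
```

The point of the example is that `extract` runs only once across both attempts: the second run picks up at the step that failed rather than starting over.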

From cargo ships to KYC reviews, customers are already running millions of daily executions

Mistral is not launching Workflows as a concept — the company says customers are already running the product in production, processing millions of executions daily across three primary use cases.

The first is cargo release automation in the logistics sector. Global shipping still runs on paperwork, and a single cargo release can involve customs declarations, dangerous goods classifications, safety inspections, and regulatory checks spanning multiple jurisdictions. Salamanca described the scope of the problem: “Their global shipping today runs on paperwork. They have to involve customs declaration, Dangerous Goods classification, safety inspections, regulatory checks, and Workflows is now powering that with our models and business rules inside.”

Critically, the system keeps humans in the loop at the right moments. According to Mistral’s blog, the human approval step in a workflow is a single line of code — wait_for_input() — that pauses the workflow indefinitely with no compute consumption, notifies the reviewer, and resumes exactly where it left off once approval is given. “Humans are still in the loop, but they’re in the loop at the right time,” Salamanca said. “They just get the validation — I don’t have to go into multiple tools — and the shipment gets released.”
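To make the pause-and-resume pattern concrete, here is a small sketch using Python's standard `asyncio` library. The `wait_for_input` function below is a local stand-in written for this example and is not the Mistral SDK function of the same name, whose signature is not given in the source; the queue-based gate and the reviewer name are likewise assumptions.

```python
import asyncio


async def wait_for_input(gate: asyncio.Queue) -> str:
    """Local stand-in: suspends the caller until a decision is put on the gate."""
    return await gate.get()


async def release_shipment(gate: asyncio.Queue, log: list) -> str:
    log.append("checks complete")
    reviewer = await wait_for_input(gate)  # workflow is parked here, not polling
    log.append(f"approved by {reviewer}")
    return "released"


async def main():
    gate = asyncio.Queue()
    log = []
    task = asyncio.create_task(release_shipment(gate, log))
    await asyncio.sleep(0)        # let the workflow run up to the approval step
    paused_log = list(log)        # snapshot taken while the workflow is waiting
    await gate.put("reviewer-1")  # the human decision arrives
    status = await task
    return paused_log, log, status


paused_log, final_log, status = asyncio.run(main())
print(paused_log, final_log, status)
```

An in-process coroutine like this one would not survive a restart. The durability Mistral describes, where a paused workflow consumes no compute and resumes later, depends on the underlying execution engine persisting state.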

The second production use case is document compliance checking for financial institutions, specifically Know Your Customer reviews. These reviews are manual, repetitive, and traditionally require hours of analyst time per case. Salamanca said Workflows now processes these reviews in minutes and provides outputs in an auditable manner — a requirement for meeting regulatory obligations.

The third example involves customer support in the banking sector. “You’d have millions of users actually asking to have credit cards blocked, or feedbacks on their account situation, on their credit feedbacks,” Salamanca said. With Workflows, incoming support tickets are analyzed, categorized by intent and urgency, and routed automatically. Each routing decision is visible and traceable in Studio, and when the system gets a categorization wrong, the team can correct it at the workflow level without retraining the model.
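The routing-and-correction loop can be sketched as follows. The keyword-based `classify` function is a deliberately crude stand-in for a model call, and the queue names, the `URGENCY_CORRECTIONS` list, and the sample ticket are hypothetical. The sketch only illustrates how a fix can live in the workflow rather than in the model.

```python
ROUTES = {
    ("block_card", "high"): "fraud-desk",
    ("block_card", "low"): "cards-team",
    ("account_query", "low"): "general-support",
}

# Workflow-level rules the team can add when the classifier gets urgency wrong.
URGENCY_CORRECTIONS = []  # list of (keyword, corrected_urgency)


def classify(ticket: str):
    """Stand-in for a model call: returns (intent, urgency)."""
    text = ticket.lower()
    intent = "block_card" if "card" in text else "account_query"
    urgency = "high" if "stolen" in text or "urgent" in text else "low"
    return intent, urgency


def route(ticket: str, trace: list) -> str:
    intent, urgency = classify(ticket)
    for keyword, corrected in URGENCY_CORRECTIONS:
        if keyword in ticket.lower():
            urgency = corrected  # override applied after the model's answer
    queue = ROUTES.get((intent, urgency), "general-support")
    # Record every decision so it can be inspected later.
    trace.append({"ticket": ticket, "intent": intent,
                  "urgency": urgency, "queue": queue})
    return queue


trace = []
first = route("I lost my card", trace)         # classifier misses the urgency
URGENCY_CORRECTIONS.append(("lost", "high"))   # team adds a workflow-level fix
second = route("I lost my card", trace)
print(first, second)
```

The classifier itself is unchanged between the two calls; only the workflow rule differs, and both decisions remain in `trace`.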

How Workflows fits into Mistral’s three-layer enterprise AI platform strategy

Workflows does not exist in isolation. It is the middle layer of a three-part enterprise platform that Mistral has been assembling at a rapid clip throughout 2026.

At the bottom sits Forge, the custom model training platform Mistral launched in March at Nvidia’s GTC conference. Forge allows organizations to build, customize, and continuously improve AI models using their own proprietary data. At the top sits Vibe, Mistral’s coding agent platform that provides the user-facing interaction layer — available on web, mobile, or desktop.

Salamanca connected the three explicitly: “We just released Forge. It enables you to create your own models. But the question is, how do you put these models to do valuable work for your enterprise? That’s where Workflows comes in, because this is the orchestration piece — how you blend in deterministic rules and agentic capabilities. And then if you really want to have your end users interact with these AI patterns, it’s where Vibe comes into play.”

Forge is already seeing strong traction, Salamanca said, across two distinct patterns of enterprise demand. “First, they wanted to really build completely dedicated models to solve unique problems — transformers-based architecture for time series in the financial sector, adding new types of modalities to the LLMs,” she explained. “And the second motion was about customers with really specific tasks they want to solve. Reinforcement learning really caught their attention as to how they can use Forge and Forge RL to actually have models do these tasks very well.”

This layered architecture — model customization, workflow orchestration, and end-user interfaces — positions Mistral as something more ambitious than a model provider. It is building a full-stack enterprise AI platform, a strategy that pits it directly against not just other AI labs like OpenAI and Anthropic, but also against the hyperscale cloud providers. The company’s product portfolio now ranges, as Salamanca put it, “from compute to end-user interfaces,” including data centers in Europe, document processing with its OCR model, and audio capabilities through its Voxtral models.

Mistral’s aggressive scaling campaign and the $14 billion valuation powering it

The Workflows launch comes as Mistral executes one of the most aggressive scaling campaigns in the history of the European technology industry. The French AI startup has increased its revenue twentyfold within a year, with co-founder and CEO Arthur Mensch putting the company’s annualized revenue run rate at over $400 million, compared to just $20 million the previous year. The Paris-based company aims to reach annual recurring revenue of more than $1 billion by year-end.

The company’s fundraising trajectory has been equally dramatic. Mistral announced a €1.7 billion ($1.9 billion) Series C round at a €11.7 billion ($13.8 billion) valuation in September 2025. Bloomberg reported in September 2025 that the company was finalizing a €2 billion investment valuing it at €12 billion ($14 billion). ASML led the round and contributed €1.3 billion, a landmark investment that aligned chip manufacturing expertise with frontier AI development and underscored European industrial capital’s commitment to building a sovereign AI ecosystem. Mistral then secured $830 million in debt in March 2026 to buy 13,800 Nvidia chips for a new data center near Paris.

The financial picture illustrates why Workflows matters strategically. Mistral’s revenue growth is being driven primarily by enterprise adoption, with approximately 60% of revenue coming from Europe, according to CEO Mensch’s public statements. Those enterprise customers are not buying Mistral’s models for casual chatbot applications — they are deploying them in regulated, mission-critical environments where reliability and data sovereignty are table stakes. Workflows gives those customers the production infrastructure they need to actually deploy AI systems that matter.

In May 2025, Mistral released Mistral Medium 3, which was priced at $0.40 per million input tokens and $2 per million output tokens. The company said clients in financial services, energy, and healthcare had been beta testing it for customer service, workflow automation, and analyzing complex datasets. That model now becomes one of many that can be plugged into Workflows, creating a flywheel where better models drive more workflow adoption, which in turn drives more inference revenue.
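For a sense of scale, the quoted Mistral Medium 3 prices translate into per-workload costs with simple arithmetic. The sketch below uses the two prices from the paragraph above; the token volumes are hypothetical and chosen only to make the calculation easy to follow.

```python
INPUT_PRICE_PER_MILLION = 0.40   # USD, as quoted for Mistral Medium 3
OUTPUT_PRICE_PER_MILLION = 2.00  # USD, as quoted for Mistral Medium 3


def inference_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of a workload at the quoted per-million-token prices."""
    return (input_tokens / 1_000_000 * INPUT_PRICE_PER_MILLION
            + output_tokens / 1_000_000 * OUTPUT_PRICE_PER_MILLION)


# Hypothetical workload: 5 million input tokens and 1 million output tokens.
cost = inference_cost(5_000_000, 1_000_000)
print(f"${cost:.2f}")
```

At these prices the example workload costs about $4, split evenly between input and output tokens.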

Where Mistral’s orchestration play fits in an increasingly crowded competitive landscape

Mistral’s entry into workflow orchestration arrives in an increasingly crowded field. AI orchestration platforms are quickly becoming the backbone of enterprise AI systems in 2026, and as businesses deploy multiple AI agents, tools, and LLMs, the need for unified control, oversight, and efficiency has never been greater.

Major cloud providers — Amazon with Bedrock AgentCore, Microsoft with Copilot Studio, Google with Vertex AI’s agent tools, and IBM with WatsonX — all offer some form of workflow or agent orchestration. Open-source frameworks like LangChain, LlamaIndex, and Microsoft AutoGen provide developer-level building blocks. And dedicated orchestration startups are proliferating.

Mistral’s differentiation rests on three pillars. First, vertical integration: because Workflows is native to Studio, the orchestration layer and the components it orchestrates — models, agents, connectors, observability — are built to work together, eliminating the integration tax that enterprises pay when stitching together disparate tools. Second, deployment flexibility: the split control-plane/data-plane architecture means customers in regulated industries can run execution workers in their own environments while still benefiting from managed orchestration. Third, data sovereignty: Mistral’s European roots and infrastructure investments give it a natural advantage with organizations wary of routing sensitive data through U.S.-headquartered cloud providers — a concern that has intensified amid ongoing geopolitical tensions and growing European anxiety about relying on foreign providers for over 80% of digital services and infrastructure.

Still, the challenges are real. OpenAI and Anthropic both have significantly larger model ecosystems and developer communities. The hyperscalers control the cloud infrastructure where most enterprise workloads actually run. And the enterprise sales cycles for production-grade AI deployments remain long and complex, requiring deep technical integration work that even well-funded startups can struggle to staff.

What comes next for Workflows — and why Mistral thinks orchestration is the real AI battleground

Salamanca outlined three areas of near-term development. First, Mistral plans to release a more managed version of Workflows that abstracts deployment logic for developers who don’t need granular control over worker placement. “Whenever you want to have this flexibility, you can, but if you want to be able to have this on a managed infrastructure, even if it’s running in your own VPC, this is something that we’re adding,” she said.

Second, the company intends to make Workflows accessible to business users, not just engineers. “With Vibe code, you can actually author a workflow. This can be executed at scale, and any end user, in the end, can actually do that with Workflows,” Salamanca explained. The third area is enterprise guardrails and safety controls for agentic applications — ensuring agents use the correct tools, run with appropriate permissions, and that administrators can enforce policies at scale. “Making sure that we have all these enterprise controls to be able to scale the authoring and the building of these workflows is something we’re actively working on,” she said.

The Python SDK for Workflows (v3.0) is now publicly available. Developers can try the product in Studio and access documentation and demo templates immediately. Mistral will be hosting its inaugural AI Now Summit in Paris on May 27–28, where the company is expected to provide additional details on its platform roadmap.

For three years, the AI industry has been captivated by a single question: who can build the most powerful model? Mistral’s Workflows launch suggests the company has moved on to a different question entirely — one that may prove far more consequential for the enterprises writing the checks. It’s not about which model is smartest. It’s about which one can actually show up for work.
