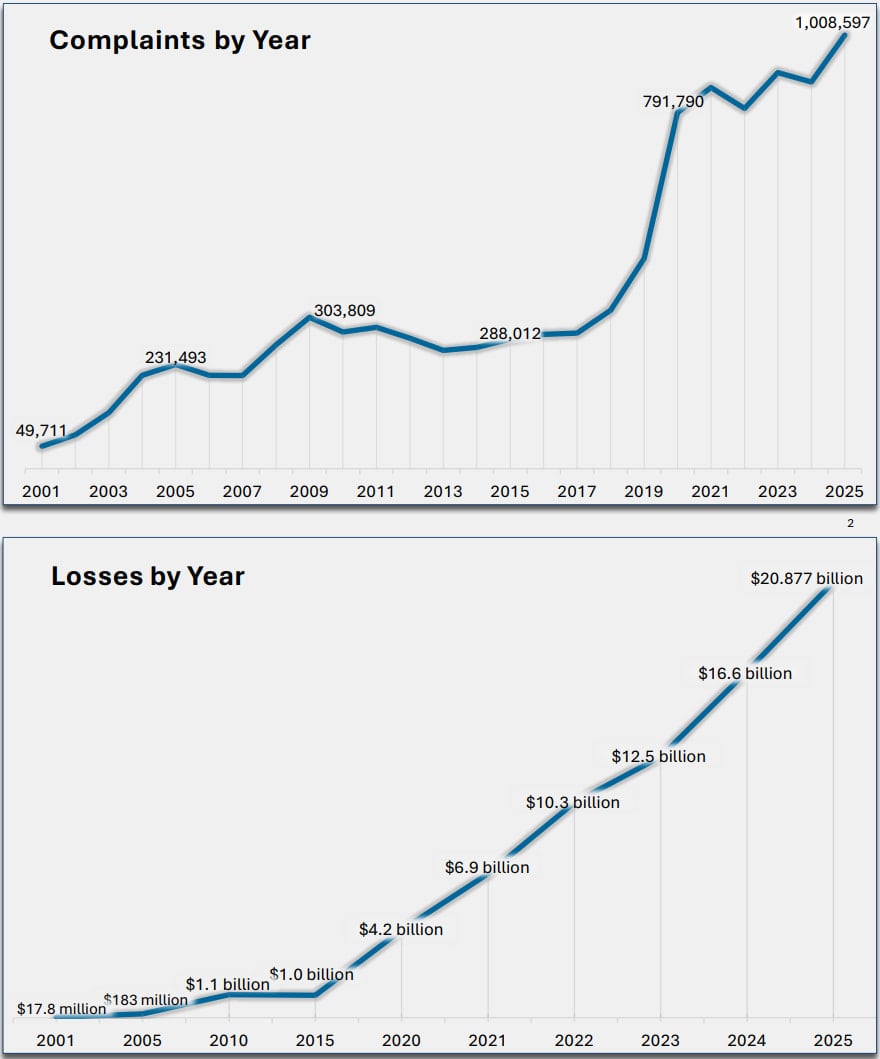

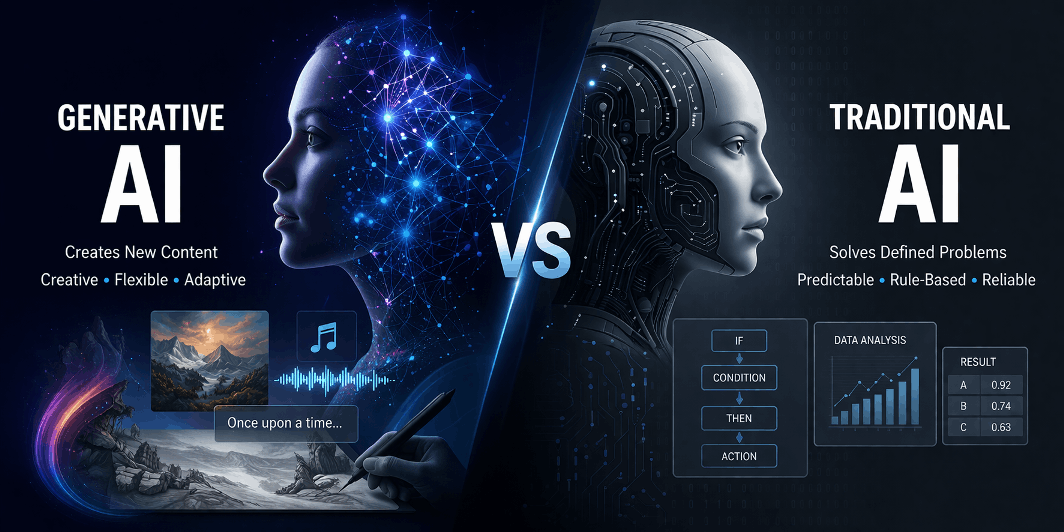

Artificial intelligence has moved from a supporting role to a core driver of how businesses operate. Whether analyzing large data sets or automating repetitive tasks, it has already proven its value across multiple industries. The arrival of generative artificial intelligence now adds a new dimension to what these systems can do.

While traditional AI has been widely used for years, the rise of generative AI makes it important for businesses to understand how the two differ. Both belong to the same broad category of ‘artificial intelligence solutions,’ but they have distinct functions and capabilities.

Understanding Traditional AI

Conventional AI systems are built to process data, recognize patterns, and make predictions.

Core Characteristics of Traditional AI

Predictive Capabilities

Traditional AI models make predictions based on patterns in the data they have been trained on.

Structured Data Dependency

These systems are best suited to structured data, such as tables and databases.

Task-Specific Design

Each system is built to perform a specific job.

Rule-Based or Supervised Learning Models

They rely on rule-based systems or supervised learning algorithms.

Common Use Cases

• Fraud detection in financial systems

• Recommendation systems in online platforms

• Demand forecasting in supply chains

• Risk assessment in insurance and banking

Traditional AI is an excellent choice for businesses that need accuracy and precision in handling data. For this reason, it serves as the backbone of many enterprise-level AI solutions.

What Is Generative AI?

Generative AI, by contrast, focuses on producing new outputs rather than analyzing existing data. It learns from large data sets and can produce outputs such as text, images, and code.

Key Characteristics of Generative AI

Content Creation

It creates new content rather than only making predictions.

Unstructured Data Handling

Generative AI can handle complex data such as natural language and images.

Contextual Understanding

It can tailor its responses to the context of a prompt or conversation.

Adaptive Learning

These models can be refined with feedback to improve their output over time.

With generative AI services, companies can build applications that support creative and strategic work, not only technical tasks.

Generative AI vs Traditional AI: A Side-by-Side Comparison

| Aspect | Traditional AI | Generative AI |
| --- | --- | --- |
| Primary Function | Data analysis and prediction | Content creation and generation |
| Data Type | Structured data | Structured and unstructured data |
| Output | Predictions, classifications | Text, images, code, and more |
| Flexibility | Limited to predefined tasks | Highly flexible and adaptive |
| Learning Approach | Task-specific training | Large-scale deep learning models |
| Interaction Style | Reactive | Context-aware and interactive |
| Use Case Scope | Narrow | Broad and multi-functional |

This comparison shows how generative AI development extends what AI can do beyond the boundaries of conventional systems.

Technical Perspective: How They Work

Traditional AI Workflow

1. Data collection and preprocessing

2. Feature selection and engineering

3. Model training using algorithms such as regression or classification

4. Output generation based on learned patterns

Traditional systems depend heavily on structured workflows and clearly defined objectives.
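The four steps above can be sketched in a few lines of plain Python. This is a minimal illustration rather than a production approach: the transaction data, the `engineer_features` helper, and the feature choices are all hypothetical, and a small hand-written logistic regression stands in for whatever algorithm a real system would use.

```python
import math

# Step 1: hypothetical structured data (amount in USD, hour of day, 1 = fraud)
RAW = [
    (12.0, 14, 0), (25.5, 10, 0), (8.0, 16, 0), (40.0, 12, 0),
    (950.0, 3, 1), (1200.0, 2, 1), (870.0, 4, 1), (1500.0, 1, 1),
]

def engineer_features(amount, hour):
    """Step 2: turn raw columns into scaled, model-friendly features."""
    is_night = 1.0 if hour < 6 else 0.0
    return [amount / 1000.0, is_night]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train(rows, epochs=2000, lr=0.5):
    """Step 3: fit a logistic regression classifier by gradient descent."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for amount, hour, label in rows:
            x = engineer_features(amount, hour)
            pred = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)
            error = pred - label
            weights = [w - lr * error * xi for w, xi in zip(weights, x)]
            bias -= lr * error
    return weights, bias

def predict(model, amount, hour):
    """Step 4: output a fraud probability for a new transaction."""
    weights, bias = model
    x = engineer_features(amount, hour)
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)

model = train(RAW)
print(predict(model, 1100.0, 3))   # large night-time payment: high probability
print(predict(model, 20.0, 13))    # small daytime payment: low probability
```

Note that the model can only answer the one question it was trained for, which is the task-specific design described earlier.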

Generative AI Workflow

1. Training on large datasets with deep learning models

2. Learning patterns and relationships

3. Generating outputs based on inputs or prompts

4. Improving outputs with feedback and iterations

Many generative AI systems use transformer models to process context and generate human-like outputs.
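To make the workflow concrete, here is a deliberately tiny sketch. It is not a transformer or any other deep learning model: a simple word-transition table stands in for the trained model, and the corpus, `learn_patterns`, and `generate` are hypothetical names chosen for illustration. It covers steps 1 to 3 only; the feedback loop in step 4 is left out.

```python
import random
from collections import defaultdict

# Hypothetical training text; real systems learn from far larger datasets
CORPUS = (
    "generative ai creates new content . "
    "traditional ai predicts outcomes from data . "
    "generative ai learns patterns from data ."
)

def learn_patterns(text):
    """Steps 1-2: record which word follows which in the training text."""
    table = defaultdict(list)
    words = text.split()
    for current, following in zip(words, words[1:]):
        table[current].append(following)
    return table

def generate(table, prompt, max_words=8, seed=0):
    """Step 3: extend a non-empty prompt one word at a time."""
    rng = random.Random(seed)
    output = prompt.split()
    for _ in range(max_words):
        candidates = table.get(output[-1])
        # Stop at the end of a sentence or when no continuation was learned
        if not candidates or output[-1] == ".":
            break
        output.append(rng.choice(candidates))
    return " ".join(output)

table = learn_patterns(CORPUS)
print(generate(table, "generative"))
```

Even at this scale the key difference from the predictive workflow is visible: the output is a new sequence assembled from learned patterns, not a label or a score.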

Why Generative AI Is Driving New Opportunities

The growing interest in generative AI services stems from their potential to enable innovation and efficiency across multiple business functions.

Key Benefits of Generative AI

1. Scalable Content Creation

Generative AI enables businesses to produce large volumes of content quickly, such as marketing copy, reports, and product descriptions, which saves time and helps keep that content consistent.

2. Enhanced Customer Engagement

Businesses can use AI-based chat tools to deliver more personalized and engaging responses, giving customers a better experience and addressing their needs more effectively.

3. Quicker Product Development

Generative AI speeds up product development by generating prototypes, code, and tests.

4. Personalization

Businesses can use generative AI to tailor experiences to individual needs, which improves user satisfaction.

5. Data Augmentation

Synthetic data can help improve models, especially when real data is scarce, which can lead to more accurate results and better performance.

These advantages make generative AI development a vital part of digital strategy for many organizations.
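The data augmentation benefit listed above can be illustrated with a short sketch. It uses plain random noise rather than a generative model, so it only shows the general idea of expanding a small dataset with synthetic rows; the `augment` function, its parameters, and the sample rows are hypothetical.

```python
import random

def augment(rows, copies=3, noise=0.05, seed=42):
    """Create synthetic rows by adding small relative Gaussian noise to real rows."""
    rng = random.Random(seed)
    synthetic = []
    for row in rows:
        for _ in range(copies):
            synthetic.append([value * (1.0 + rng.gauss(0.0, noise)) for value in row])
    return synthetic

# Hypothetical scarce dataset: (transaction amount in USD, hour of day)
real_rows = [[950.0, 3.0], [1200.0, 2.0]]
augmented = real_rows + augment(real_rows)
print(len(augmented))  # 2 real rows + 6 synthetic rows = 8
```

In practice, synthetic rows should be checked against real data before training, since poorly generated samples can reduce accuracy instead of improving it.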

Real-World Applications Across Industries

Traditional AI Applications

• Financial fraud detection

• Predictive maintenance in manufacturing

• Inventory management

• Customer segmentation

Generative AI Applications

• AI chatbots and conversational agents

• Marketing content creation

• Code generation and debugging

• Image and video generation

• Virtual assistants and knowledge systems

These examples show how generative AI solutions extend beyond traditional automation into areas that require creativity and adaptability.

Combining Both Approaches

In practice, many organizations combine traditional and generative AI to get the best out of both.

Example Use Case

• Traditional AI systems process customer information to find patterns

• Generative AI systems use the patterns to create personalized content

This lets businesses draw on the strengths of both approaches to build a more robust AI system.
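A minimal sketch of this hand-off, under stated assumptions: the order data, the spend threshold, and the `find_segment` and `build_prompt` helpers are hypothetical, and a simple average stands in for a real analytical model. The generative step is represented only by the prompt that would be passed to a text model; no model is called here.

```python
from statistics import mean

# Hypothetical purchase history: customer -> order values in USD
ORDERS = {
    "alice": [120.0, 95.0, 140.0],
    "bob": [15.0, 22.0],
}

def find_segment(order_values, high_value_threshold=100.0):
    """Traditional step: derive a pattern (a spend segment) from structured data."""
    if mean(order_values) >= high_value_threshold:
        return "high_value"
    return "occasional"

def build_prompt(name, segment):
    """Generative step input: turn the pattern into a prompt for a text model."""
    offer = {
        "high_value": "an exclusive early-access offer",
        "occasional": "a friendly welcome-back discount",
    }[segment]
    return f"Write a short email to {name.title()} about {offer}."

prompts = {
    name: build_prompt(name, find_segment(values))
    for name, values in ORDERS.items()
}
for prompt in prompts.values():
    print(prompt)
```

Keeping the two steps separate means the segmentation logic can be tested and audited on its own, while the content generation can be swapped or tuned independently.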

Challenges and Considerations

Traditional AI Limitations

• Limited flexibility

• Difficulty in dealing with unstructured data

• Need for manual updating for new applications

Generative AI Challenges

• Higher computational costs

• Risk of inaccurate or biased outputs

• Need for effective governance and compliance

Understanding regulatory requirements is equally important, because compliance with generative AI regulations helps businesses manage risk while adopting new technologies.

Business Impact of Generative AI

The rise of generative AI services is influencing how businesses operate and compete.

Key Areas of Impact

• Marketing and Content Creation

Faster production of high-quality content

• Customer Support

Improved interaction through intelligent chat systems

• Product Innovation

Rapid prototyping and idea generation

• Operational Efficiency

Automation of complex workflows

Organizations investing in generative AI development are finding new ways to improve productivity and deliver value.

Choosing the Right Approach

Selecting the right AI approach depends on the nature of your business needs.

Use Traditional AI When:

• Working with structured data

• Focusing on prediction and analysis

• Managing risk and compliance tasks

Use Generative AI When:

• Creating content or designs

• Building conversational interfaces

• Driving innovation and personalization

Key Factors to Consider

• Data availability and quality

• Infrastructure requirements

• Integration with existing systems

• Long-term scalability

A thoughtful evaluation helps businesses select the most suitable AI solution or combination of tools.

Concluding Thoughts

The difference between traditional AI and generative AI lies in how they approach problems and deliver results. While traditional AI analyzes data and makes predictions, generative AI creates new content and allows for more interactive experiences.

As businesses continue to explore more sophisticated technology, generative AI development is becoming a significant part of their plans, because it opens up more opportunities for creativity and efficiency in how they serve customers.

However, traditional AI remains a valuable tool for data-driven tasks. For most artificial intelligence solutions, the most practical approach is a mix of the two, which balances their strengths and helps businesses stay competitive in an increasingly data-driven world.
