The basic assumptions behind online commerce are starting to fracture, says Paul Conroy, CTO at Square1, as he looks back at last week’s Stripe Sessions in San Francisco.

Stripe bills Sessions as its “internet economy conference”. Across a few days in San Francisco, thousands of people from around the world gathered last week to talk about the future of online commerce.

But for all the product launches and big-name keynotes, one fundamental shift kept surfacing – the basic assumptions behind online commerce are starting to fracture.

For more than 20 years, payment systems have been built on the assumption that bots are the problem. A good customer browses, hesitates, clicks around and eventually buys something. A suspicious customer lands directly on a payment page, provides almost zero behavioural signal and comes from a server farm rather than a smartphone.

Stripe Sessions 2026 made it very clear: that assumption is dead. In the next phase of commerce, it’s likely that the bot is actually the customer.

Agents need merchants they can understand

One of the clearest examples of this shift is the soon-to-be-everyday idea of asking an AI agent to buy you something. Not just “find me this jacket”, but something more concierge-like: “Get me a full outfit for hiking in France in July, within this budget.”

That request asks vastly more of a merchant than a traditional product search. A human can squint at a product page, read around missing information, infer whether two items might work together and judge whether a return policy feels fair. An agent needs that same information in a structured, reliable format. It needs to understand sizes, materials, compatibility and, crucially, whether a merchant can be trusted.

For merchants, agentic commerce raises a practical question – can your products, prices and policies actually be understood by machines?
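To make that question concrete, here is a rough Python sketch of what "understood by machines" might mean in practice. The `Product` fields and the `pick_outfit` helper are hypothetical illustrations, not the schema of any real commerce protocol or API. The agent picks the cheapest item per category in the requested size and checks the total against the budget, but it skips any listing that is missing key facts such as material or returns terms. The cheaper but poorly described jacket is therefore never considered.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Product:
    """Hypothetical machine-readable listing; field names are illustrative only."""
    name: str
    category: str
    price_cents: int
    sizes: tuple[str, ...]
    material: Optional[str]
    return_window_days: Optional[int]


def agent_can_evaluate(p: Product) -> bool:
    """An agent can only reason about listings whose key facts are actually present."""
    return p.material is not None and p.return_window_days is not None


def pick_outfit(catalogue: list[Product], size: str, needed: list[str],
                budget_cents: int) -> Optional[list[Product]]:
    """Cheapest evaluable item per needed category in the given size, if within budget."""
    outfit = []
    for category in needed:
        candidates = [p for p in catalogue
                      if p.category == category and size in p.sizes and agent_can_evaluate(p)]
        if not candidates:
            return None  # nothing the agent can trust for this category
        outfit.append(min(candidates, key=lambda p: p.price_cents))
    total = sum(p.price_cents for p in outfit)
    return outfit if total <= budget_cents else None


catalogue = [
    Product("Trail jacket", "jacket", 12_000, ("S", "M", "L"), "recycled nylon", 30),
    Product("Budget jacket", "jacket", 6_000, ("M",), None, None),  # messy listing
    Product("Hiking trousers", "trousers", 9_000, ("M", "L"), "softshell", 60),
    Product("Merino tee", "top", 4_000, ("S", "M"), "merino wool", 30),
]

outfit = pick_outfit(catalogue, "M", ["jacket", "trousers", "top"], budget_cents=30_000)
print([p.name for p in outfit] if outfit else "no outfit within budget")
```

The design choice worth noting is that incomplete data does not make a product rank lower; it makes the product disappear from consideration entirely.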

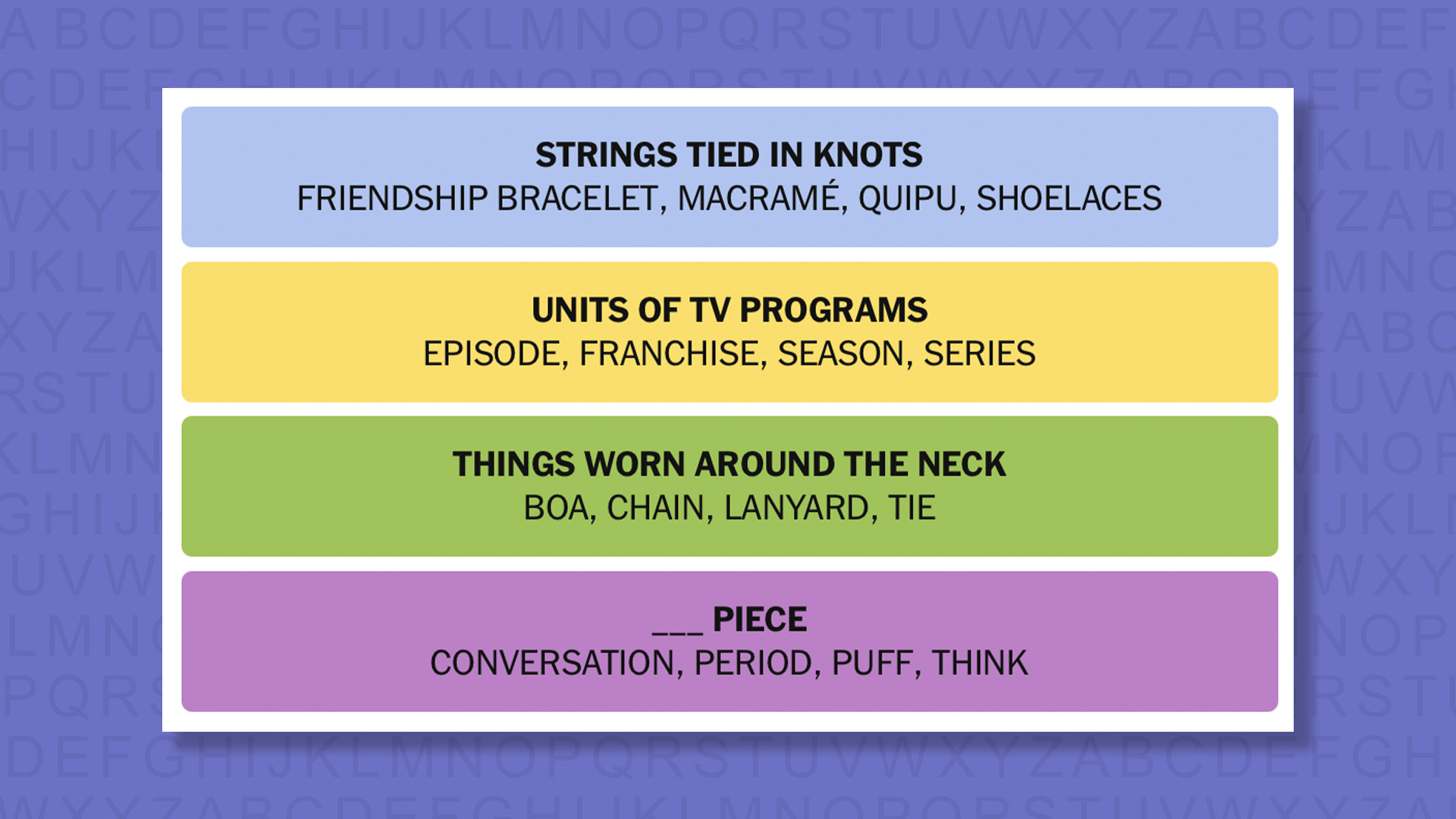

This is why new commerce protocols are suddenly so vital. The Universal Commerce Protocol (supported by companies like Stripe, Shopify and Google) is an attempt to standardise how this should work. If agents are going to shop, merchants need a common way to tell those agents what they sell and how it can be bought. Businesses with messy product data will soon find themselves effectively invisible to machine customers.

The new unit economics of AI

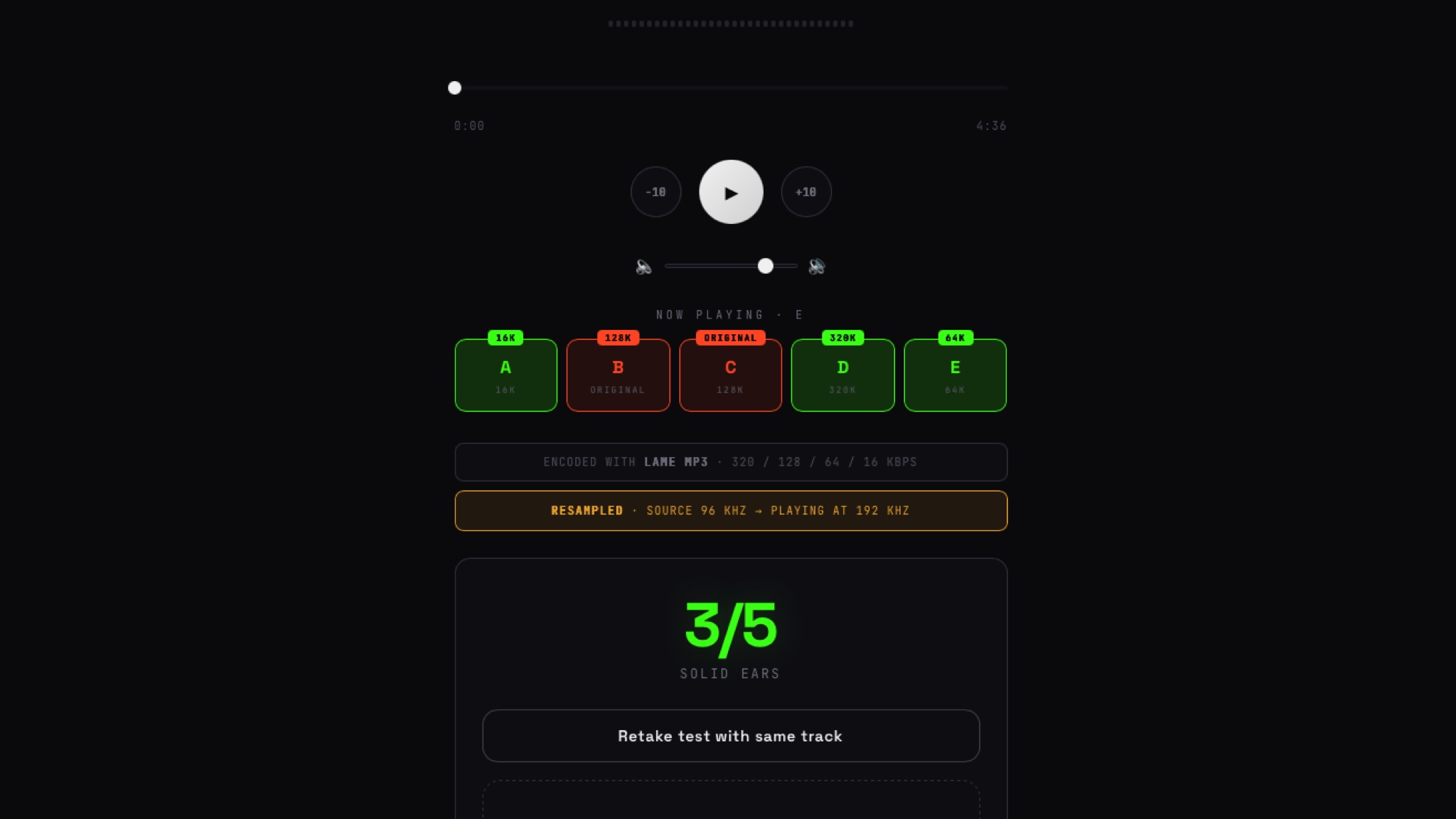

This evolution also shows up when we look at agents paying for digital work in tiny increments.

One demo at Sessions involved a code review tool that charges based on the tokens it consumes. That sounds niche until you consider the economics of AI more broadly. As more companies rely on AI, the cost of inference becomes a massive operational risk. We have all seen the funny screenshots where someone persuades a fast-food chatbot to ignore the menu and write a React app instead. That kind of off-label use is amusing until it is your service, with your inference bill behind it. If usage spikes, costs spike.

In the demo, the tool’s price was thousandths of a cent per token used. Amounts that small make no sense through traditional credit card processing, where the fixed fee on each charge would dwarf the charge itself, so merchants of this type today commonly bill usage in aggregate after the fact. That is risky, because the service has already been consumed by the time the bill is paid. However, if an agent can call an API, use an authorised wallet and make thousands of tiny payments as and when it consumes a service, all while keeping processing fees low, viable microtransactions suddenly look very real.
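A quick back-of-the-envelope sketch in Python shows why. Every number here is a hypothetical assumption for illustration, not Stripe’s pricing or the demo’s actual figures: a price of 0.002 cents per token, a card fee of 30 cents plus 2.9% per charge, and a code review that consumes 5,000 tokens. Under those assumptions, charging each review by card costs roughly three times what the review earns, while one aggregate monthly charge brings the fee down to a few percent, at the cost of extending credit.

```python
from decimal import Decimal

# All figures are hypothetical, chosen only to illustrate the order of magnitude.
PRICE_PER_TOKEN_CENTS = Decimal("0.002")  # "thousandths of a cent" per token
CARD_FIXED_FEE_CENTS = Decimal("30")      # assumed fixed fee per card charge
CARD_PERCENT_FEE = Decimal("0.029")       # assumed percentage fee per card charge


def charge_cents(tokens: int) -> Decimal:
    """Amount owed for a given number of tokens, in cents."""
    return PRICE_PER_TOKEN_CENTS * tokens


def card_fee_cents(amount_cents: Decimal) -> Decimal:
    """Processing fee for a single card charge of amount_cents."""
    return CARD_FIXED_FEE_CENTS + CARD_PERCENT_FEE * amount_cents


def fee_ratio(amount_cents: Decimal) -> Decimal:
    """Processing fee as a fraction of the amount charged."""
    return card_fee_cents(amount_cents) / amount_cents


TOKENS_PER_REVIEW = 5_000   # hypothetical size of one code review
REVIEWS_PER_MONTH = 1_000   # hypothetical monthly volume

per_review = charge_cents(TOKENS_PER_REVIEW)                     # charged as it happens
per_month = charge_cents(TOKENS_PER_REVIEW * REVIEWS_PER_MONTH)  # billed once, in aggregate

for label, amount in (("per review", per_review), ("per month", per_month)):
    print(f"{label}: charge {amount:.2f}c, card fee {card_fee_cents(amount):.2f}c, "
          f"fee/charge {fee_ratio(amount):.1%}")
```

In this sketch the fixed per-charge fee is what breaks pay-as-you-go billing, which is the cost that low-fee wallet payments would need to remove.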

How do you charge for AI-native services when the unit economics are too small, too fast-moving or too risky for traditional payment models? This is where stablecoins graduate from crypto buzzword to practical infrastructure.

The view from Europe

Spending a few days in San Francisco makes the difference in pace hard to ignore. Coming from Dublin, where the bus shelters are more likely to be selling phone plans or supermarket offers, it is striking to arrive in a city where every billboard seems to be advertising some novel AI startup or a company with a new way to move money.

Some of that is inevitably hype. But what is entirely real is that the US is actively wiring up the infrastructure to support these shifts. Stablecoin adoption and agent wallets are rapidly moving from theoretical concepts to live commercial deployments.

From a European perspective, that should make us slightly uncomfortable. We have a tendency to approach new financial infrastructure by regulating first. The rollout of the MiCA (Markets in Crypto-Assets) framework is a perfect example. While it gives Europe necessary regulatory clarity, our heavy focus on compliance often means commercial deployment lags behind.

Consumer protection and stability are critical, of course. But there is a difference between moving carefully and moving so slowly that the next generation of infrastructure is built somewhere else, with someone else’s interests at heart. If AI-native commerce, agent wallets and real-time stablecoin microtransactions become the foundation of how online commerce is conducted, Europe cannot afford to watch from the sidelines. The challenge is to regulate well without regulating late.

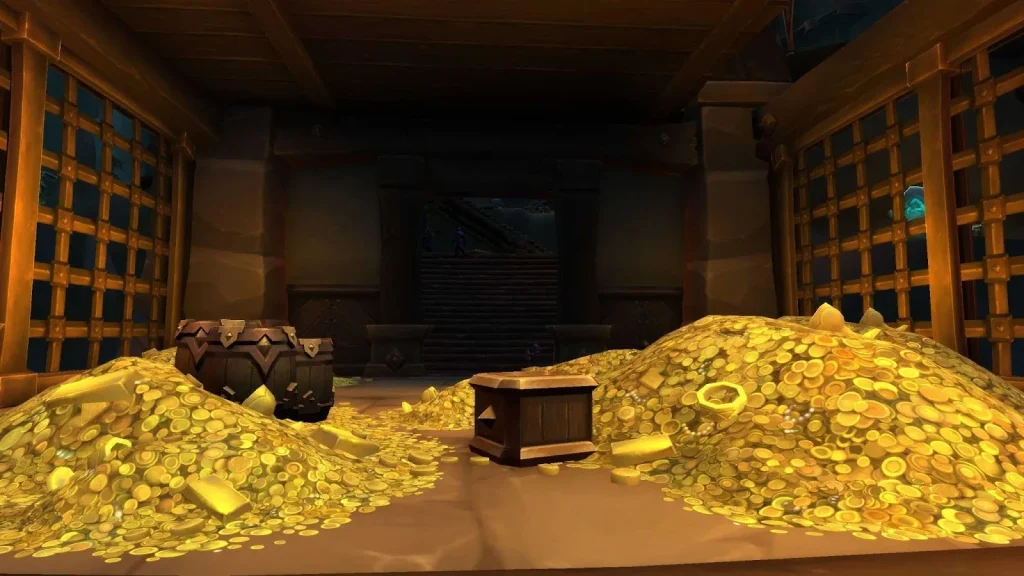

The fraud arms race gets harder

The fraud angle is where this agentic ecosystem gets significantly more complicated.

Historically, fraud tooling has treated bot-like behaviour as suspicious by default. No normal browsing pattern, a single fast request to transact and a data-centre IP were strong signals that something bad was happening. In an agentic commerce world, a perfectly legitimate transaction will look exactly like that.

This creates a catch-22 for merchants. Block good agents, you lose revenue. Allow bad agents, you lose money. The old signals are failing in both directions.
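A toy version of those legacy rules shows why they fail in both directions. This is a hypothetical rule set written for illustration, not how Stripe Radar or any real fraud tool scores transactions: each bot-like trait (no browsing before payment, a very short session, a data-centre IP) adds a point, and two or more points means block. A legitimate shopping agent and a fraudulent bot present the same traits, so the rules cannot tell them apart.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Checkout:
    """Hypothetical behavioural signals collected for one checkout attempt."""
    pages_viewed_before_payment: int
    seconds_on_site: float
    from_datacenter_ip: bool


def legacy_risk_score(c: Checkout) -> int:
    """Count 'bot-like' traits, in the spirit of pre-agentic fraud heuristics."""
    score = 0
    if c.pages_viewed_before_payment == 0:  # landed straight on the payment page
        score += 1
    if c.seconds_on_site < 5:               # no time spent browsing or hesitating
        score += 1
    if c.from_datacenter_ip:                # server farm rather than a smartphone
        score += 1
    return score


def legacy_decision(c: Checkout, threshold: int = 2) -> str:
    """Block any checkout that shows at least `threshold` bot-like traits."""
    return "block" if legacy_risk_score(c) >= threshold else "allow"


human = Checkout(pages_viewed_before_payment=6, seconds_on_site=240.0, from_datacenter_ip=False)
shopping_agent = Checkout(pages_viewed_before_payment=0, seconds_on_site=1.2, from_datacenter_ip=True)
fraud_bot = Checkout(pages_viewed_before_payment=0, seconds_on_site=0.8, from_datacenter_ip=True)

for name, checkout in (("human", human), ("agent", shopping_agent), ("fraud bot", fraud_bot)):
    print(name, legacy_risk_score(checkout), legacy_decision(checkout))
```

Loosening the rules only moves the failure: any change that lets the agent through also lets the identically shaped bot through.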

This came up repeatedly during Sessions. There is a new arms race developing: fraudsters using AI to scale attacks and probe weaknesses, and Stripe using its own AI models in Radar to detect and respond. What I found most interesting was the frankness in many of the talks. There was no triumphalism, just a lot of “we do not have this fully figured out yet”. How do we authenticate intent? Who owns the transaction when a user has delegated the decision to an agent?

These are existential questions for businesses operating on low margins. The same automation that makes new buying experiences possible makes abuse much cheaper to attempt.

Clean APIs and the human element

Between talks in the main hall, instead of piped-in background music, a live string quartet played pop covers. It sounds like a tiny thing, and it won’t appear in anyone’s ROI model, but somebody at Stripe made a conscious decision to make the room a nicer place to be.

That theme, the hidden utility of beauty, came up during Patrick Collison’s interview with Sam Altman. Altman noted that Stripe has for years cared to an almost irrational degree about design and beauty in its APIs. That aesthetic consistency was meant to appeal to human developers but, ironically, it may become Stripe’s biggest advantage in a world of agents. Agents, it turns out, benefit from exactly the same things human developers do: clear APIs, coherent abstractions and predictable behaviour. Stripe spent years making itself easier for developers to choose, which puts it in a remarkably strong position now that software is starting to choose tools too.

During that same interview, a protester with a guitar interrupted, walking down the aisle and singing that music and art should be made by humans, not machines. It was a strange and funny moment. The acoustics at the Moscone Center are so good that many initially thought he was part of the show, before he was hurriedly escorted away. There were numerous callbacks to this in later talks (John Collison noted that an AI demo that was taking too long to run could have used a guy with a guitar to keep people entertained), but the episode served as another reminder that AI is changing more than commerce. It is colliding directly with culture more broadly, for better and for worse.

The future is unevenly distributed

For visitors to San Francisco, Waymo’s autonomous cars navigating the hills still feel like a futuristic tourist attraction. For locals, they are just more traffic.

Agentic commerce feels a lot like those driverless cars. It brings to mind William Gibson’s famous line about the future being already here, just not evenly distributed.

While agentic commerce is unevenly distributed, it is very much here. The businesses that prepare now by cleaning up their data, rethinking their pricing for microtransactions and strengthening their fraud controls will be ready for a fundamentally new kind of customer.

The ones that wait may find the agents have already learned to shop somewhere else.

Paul Conroy is CTO at Square1, an award-winning digital transformation agency specialising in payments and online publishing. He was also among the first cohort of Stripe Partner Advocates – a group of technical leaders with deep payments experience, chosen to collaborate directly with Stripe product teams. Disclosure: Square1 is a longtime collaborator of Silicon Republic.


You must be logged in to post a comment Login