Tech

Protect your enterprise now from the Shai-Hulud worm and npm vulnerability in 6 actionable steps

Any development environment that installed or imported one of the 172 compromised npm or PyPI packages published since May 11 should be treated as potentially compromised. On affected developer workstations, the worm harvests credentials from over 100 file paths: AWS keys, SSH private keys, npm tokens, GitHub PATs, HashiCorp Vault tokens, Kubernetes service accounts, Docker configs, shell history, and cryptocurrency wallets. For the first time in a TeamPCP campaign, it targets password managers including 1Password and Bitwarden, according to SecurityWeek.

It steals Claude and Kiro AI agent configurations, including MCP server auth tokens for every external service an agent connects to. And it does not leave when the package is removed.

The worm installs persistence hooks in Claude Code (.claude/settings.json) and VS Code (.vscode/tasks.json with runOn: folderOpen) that re-execute every time a project is opened, plus a system daemon (macOS LaunchAgent / Linux systemd) that survives reboots. The editor hooks live in the project tree, not in node_modules, so uninstalling the package does not remove them. On Linux-based CI runners, the worm reads runner process memory directly via /proc/pid/mem to extract secrets, including masked ones. Wiz’s analysis found that if you revoke tokens before isolating the machine, a destructive daemon wipes your home directory.

Between 19:20 and 19:26 UTC on May 11, the Mini Shai-Hulud worm published 84 malicious versions across 42 @tanstack/* npm packages. Within 48 hours the campaign expanded to 172 packages across 403 malicious versions spanning npm and PyPI, according to Mend’s tracking. @tanstack/react-router alone receives 12.7 million weekly downloads. The compromise is tracked as CVE-2026-45321 with a CVSS score of 9.6. OX Security reported 518 million cumulative downloads affected. Every malicious version carried a valid SLSA Build Level 3 provenance attestation. The provenance was real. The packages were poisoned.

“TanStack had the right setup on paper: OIDC trusted publishing, signed provenance, 2FA on every maintainer account. The attack worked anyway,” Peyton Kennedy, senior security researcher at Endor Labs, told VentureBeat in an exclusive interview. “What the orphaned commit technique shows is that OIDC scope is the actual control that matters here, not provenance, not 2FA. If your publish pipeline trusts the entire repository rather than a specific workflow on a specific branch, a commit with no parent history and no branch association is enough to get a valid publish token. That’s a one-line configuration fix.”

Three vulnerabilities chained into one provenance-attested worm

TanStack’s postmortem lays out the kill chain. On May 10, the attacker forked TanStack/router under the name zblgg/configuration, chosen to avoid fork-list searches per Snyk’s analysis. A pull request triggered a pull_request_target workflow that checked out fork code and ran a build, giving the attacker code execution on TanStack’s runner. The attacker poisoned the GitHub Actions cache. When a legitimate maintainer merged to main, the release workflow restored the poisoned cache. Attacker binaries read /proc/pid/mem, extracted the OIDC token, and POSTed directly to registry.npmjs.org. Tests failed. Publish was skipped. 84 signed packages still reached the registry.
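The first link in that chain, a pull_request_target workflow that checks out fork code, can be hunted for with a simple text scan. The sketch below is a heuristic only: the function names and the two patterns are assumptions made for illustration, it does not parse YAML, and it will produce both false positives and false negatives.

```python
import re
from pathlib import Path

# Heuristic patterns (assumptions, not an exhaustive rule set).
TRIGGER = re.compile(r"\bpull_request_target\b")
FORK_REF = re.compile(r"github\.event\.pull_request\.head\.(sha|ref)|refs/pull/")


def is_risky_workflow(text: str) -> bool:
    """True when a workflow triggers on pull_request_target and also
    references the pull request head, i.e. may check out fork code."""
    return bool(TRIGGER.search(text)) and bool(FORK_REF.search(text))


def find_risky_workflows(repo_root: str) -> list:
    """Return workflow files under .github/workflows that match the heuristic."""
    workflows = Path(repo_root) / ".github" / "workflows"
    if not workflows.is_dir():
        return []
    hits = []
    for path in sorted(workflows.iterdir()):
        if path.suffix not in {".yml", ".yaml"}:
            continue
        text = path.read_text(encoding="utf-8", errors="replace")
        if is_risky_workflow(text):
            hits.append(path)
    return hits
```

Calling find_risky_workflows on a repository checkout returns the files worth a manual review. A match means "look closer", not "vulnerable".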

“Each vulnerability bridges the trust boundary the others assumed,” the postmortem states. Published tradecraft from the March 2025 tj-actions/changed-files compromise, recombined in a new context.

The worm crossed from npm into PyPI within hours

Microsoft Threat Intelligence confirmed the mistralai PyPI package v2.4.6 executes on import (not on install), downloading a payload disguised as Hugging Face Transformers. npm mitigations (lockfile enforcement, --ignore-scripts) do not cover Python import-time execution.

Mistral AI published a security advisory confirming the impact. Compromised npm packages were available between May 11 at 22:45 UTC and May 12 at 01:53 UTC (roughly three hours). The PyPI release mistralai==2.4.6 is quarantined. Mistral stated an affected developer device was involved but no Mistral infrastructure was compromised. SafeDep confirmed Mistral never released v2.4.6; no commits landed May 11 and no tag exists.

Wiz documented the full blast radius: 65 UiPath packages, Mistral AI SDKs, OpenSearch, Guardrails AI, 20 Squawk packages. StepSecurity attributes the campaign to TeamPCP, based on toolchain overlap with prior Shai-Hulud waves and the Bitwarden CLI/Trivy compromises. The worm runs under Bun rather than Node.js to evade Node.js security monitoring.
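Because the Python payload runs on import, a version check should avoid importing the package it is checking. One way to do that, sketched here under assumptions, is to read installed package metadata with importlib.metadata. The version list contains only the two PyPI versions named in this article; the function names are hypothetical, and the list would need extending from vendor advisories.

```python
from importlib import metadata

# Only the versions named in this article; extend from vendor advisories.
COMPROMISED = {
    "mistralai": {"2.4.6"},
    "guardrails-ai": {"0.10.1"},
}


def installed_version(name):
    """Return the installed version from package metadata, or None.

    This reads distribution metadata and does not import the package.
    """
    try:
        return metadata.version(name)
    except metadata.PackageNotFoundError:
        return None


def find_compromised(lookup=installed_version):
    """Return (name, version) pairs that match a known-bad version."""
    hits = []
    for name, bad_versions in COMPROMISED.items():
        version = lookup(name)
        if version in bad_versions:
            hits.append((name, version))
    return hits


if __name__ == "__main__":
    for name, version in find_compromised():
        print(f"compromised version installed: {name}=={version}")
```

The lookup parameter exists so the check can be exercised without installing anything. On a host that is already suspected compromised, isolate it before running any tooling on it.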

The attacker treated AI coding agents as part of the trusted execution environment

Socket’s technical analysis of the 2.3 MB router_init.js payload identifies ten credential-collection classes running in parallel. The worm writes persistence into .claude/ and .vscode/ directories, hooking Claude Code’s SessionStart config and VS Code’s folder-open task runner. StepSecurity’s deobfuscation confirmed the worm also harvests Claude and Kiro MCP server configurations (~/.claude.json, ~/.claude/mcp.json, ~/.kiro/settings/mcp.json), which store API keys and auth tokens for external services. This is an early but confirmed instance of supply-chain malware treating AI agent configurations as high-value credential targets. The npm token description the worm sets reads: “IfYouRevokeThisTokenItWillWipeTheComputerOfTheOwner.” It is not a bluff.

“What stood out to me about this payload is where it planted itself after running,” Kennedy told VentureBeat. “It wrote persistence hooks into Claude Code’s SessionStart config and VS Code’s folder-open task runner so it would re-execute every time a developer opened a project, even after the npm package was removed. The attacker treated the AI coding agent as part of the trusted execution environment, which it is. These tools read your repo, run shell commands, and have access to the same secrets a developer does. Securing a development environment now means thinking about the agents, not just the packages.”
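The two editor hooks described above can be searched for across a directory of projects. This is a minimal sketch, assuming the hooks appear as a "runOn": "folderOpen" entry in .vscode/tasks.json and a "SessionStart" key in .claude/settings.json. It matches on text rather than parsing JSON, the function name is hypothetical, and legitimate projects use both features, so a hit calls for manual review rather than proving compromise.

```python
import os
import re
from pathlib import Path

# (parent folder, file name, text pattern, reason). Patterns are heuristics.
CHECKS = [
    (".vscode", "tasks.json", re.compile(r'"runOn"\s*:\s*"folderOpen"'),
     "VS Code task set to run on folder open"),
    (".claude", "settings.json", re.compile(r'"SessionStart"'),
     "Claude Code SessionStart hook present"),
]
SKIP_DIRS = {"node_modules", ".git"}


def scan_for_persistence(root):
    """Return a list of (path, reason) for auto-run hooks found under root."""
    findings = []
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune directories the hooks are not expected to live in.
        dirnames[:] = [d for d in dirnames if d not in SKIP_DIRS]
        folder = Path(dirpath).name
        for parent, filename, pattern, reason in CHECKS:
            if folder != parent or filename not in filenames:
                continue
            path = Path(dirpath) / filename
            try:
                text = path.read_text(encoding="utf-8", errors="replace")
            except OSError:
                continue  # unreadable file: skip rather than abort the scan
            if pattern.search(text):
                findings.append((str(path), reason))
    return findings
```

Calling scan_for_persistence on the folder that holds a developer's checkouts returns every matching file with the reason it was flagged.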

CI/CD Trust-Chain Audit Grid

Six gaps Mini Shai-Hulud exploited. What your CI/CD does today. The control that closes each one.

| Audit question | What your CI/CD does today | The gap |
| --- | --- | --- |
| 1. Pin OIDC trusted publishing to a specific workflow file on a specific protected branch. Constrain id-token: write to only the publish job. Ensure that job runs from a clean workspace with no restored untrusted cache | Most orgs grant OIDC trust at the repository level. Any workflow run in the repo can request a publish token. id-token: write is often set at the workflow level, not scoped to the publish job. | The worm achieved code execution inside the legitimate release workflow via cache poisoning, then extracted the OIDC token from runner process memory. Branch/workflow pinning alone would not have stopped this attack because the malicious code was already running inside the pinned workflow. The complete fix requires pinning PLUS constraining id-token: write to only the publish job PLUS ensuring that job uses a clean, unshared cache. |
| 2. Treat SLSA provenance as necessary but not sufficient. Add behavioral analysis at install time | Teams treat a valid Sigstore provenance badge as proof a package is safe. npm audit signatures passes. The badge is green. Procurement and compliance workflows accept provenance as a gate. | All 84 malicious TanStack versions carry valid SLSA Build Level 3 provenance attestations. First widely reported npm worm with validly-attested packages. Provenance attests where a package was built, not whether the build was authorized. Socket’s AI scanner flagged all 84 artifacts within six minutes of publication. Provenance flagged zero. |
| 3. Isolate GitHub Actions cache per trust boundary. Invalidate caches after suspicious PRs. Never check out and execute fork code in pull_request_target workflows | Fork-triggered workflows and release workflows share the same cache namespace. Closing or reverting a malicious PR is treated as restoring clean state. pull_request_target is widely used for benchmarking and bundle-size analysis with fork PR checkout. | Attacker poisoned pnpm store via fork-triggered pull_request_target that checked out and executed fork code on the base runner. Cache survived PR closure. The next legitimate release workflow restored the poisoned cache on merge. actions/cache@v5 uses a runner-internal token for cache saves, not the workflow’s GITHUB_TOKEN, so permissions: contents: read does not prevent mutation. Kennedy: ‘Branch protection rules don’t apply to commits that aren’t on any branch, so that whole layer of hardening didn’t help.’ |
| 4. Audit optionalDependencies in lockfiles and dependency graphs. Block github: refs pointing to non-release commits | Static analysis and lockfile enforcement focus on dependencies and devDependencies. optionalDependencies with github: commit refs are not flagged by most tools. | The worm injected optionalDependencies pointing to a github: orphan commit in the attacker’s fork. When npm resolves a github: dependency, it clones the referenced commit and runs lifecycle hooks (including prepare) automatically. The payload executed before the main package’s own install step completed. SafeDep confirmed Mistral never released v2.4.6; no commits landed and no tag exists. |
| 5. Audit Python dependency imports separately from npm controls. Cover AI/ML pipelines consuming guardrails-ai, mistralai, or any compromised PyPI package | npm mitigations (lockfile enforcement, --ignore-scripts) are applied to the JavaScript stack. Python packages are assumed safe if pip install completes. AI/ML CI pipelines are treated as internal testing infrastructure, not as supply-chain attack targets. | Microsoft Threat Intelligence confirmed mistralai PyPI v2.4.6 executes on import, not install. Injected code in __init__.py downloads a payload disguised as Hugging Face Transformers. --ignore-scripts is irrelevant for Python import-time execution. guardrails-ai@0.10.1 also executes on import. Any agentic repo with GitHub Actions id-token: write is exposed to the same OIDC extraction technique. LLM API keys, vector DB credentials, and external service tokens all in the blast radius. |
| 6. Isolate and image affected machines before revoking stolen tokens. Do not revoke npm tokens until the host is forensically preserved | Standard incident response: revoke compromised tokens first, then investigate. npm token list and immediate revocation is the instinctive first step. | The worm installs a persistent daemon (macOS LaunchAgent / Linux systemd) that polls GitHub every 60 seconds. On detecting token revocation (40X error), it triggers rm -rf ~/, wiping the home directory. The npm token description reads: ‘IfYouRevokeThisTokenItWillWipeTheComputerOfTheOwner.’ Microsoft reported geofenced destructive behavior: a 1-in-6 chance of rm -rf / on systems appearing to be in Israel or Iran. Kennedy: ‘Even after the package is gone, the payload may still be sitting in .claude/ with a SessionStart hook pointing at it. rm -rf node_modules doesn’t remove it.’ |

Sources: TanStack postmortem, StepSecurity, Socket, Snyk, Wiz, Microsoft Threat Intelligence, Mend, Endor Labs. May 12, 2026.
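The fourth gap in the grid, optionalDependencies that resolve from a git reference, can be checked against a parsed lockfile. The sketch below assumes the lockfileVersion 2 or 3 layout of package-lock.json, where a top-level "packages" map records each package's dependency specs. The function names and the prefix list are illustrative assumptions, not a complete ruleset.

```python
import json

# Spec prefixes treated as "resolves from git" (an illustrative list).
GIT_PREFIXES = ("github:", "git+", "git://")


def is_git_ref(spec):
    """True when a dependency spec points at a git source, not a registry."""
    return isinstance(spec, str) and spec.startswith(GIT_PREFIXES)


def git_optional_deps(lock):
    """Return (package_path, dep_name, spec) for git-sourced optionalDependencies."""
    findings = []
    for pkg_path, entry in lock.get("packages", {}).items():
        for dep, spec in entry.get("optionalDependencies", {}).items():
            if is_git_ref(spec):
                # The root project is stored under the empty-string key.
                findings.append((pkg_path or "<root>", dep, spec))
    return findings


def audit_lockfile(path):
    """Load a package-lock.json from disk and return its findings."""
    with open(path, encoding="utf-8") as fh:
        return git_optional_deps(json.load(fh))
```

A finding is not automatically malicious, since some projects pin git dependencies on purpose, but each one deserves a look at the exact commit it references.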

Security director action plan

- Today: “The fastest check is find . -name 'router_init.js' -size +1M and grep -r '79ac49eedf774dd4b0cfa308722bc463cfe5885c' package-lock.json,” Kennedy said. If either returns a hit, isolate and image the machine immediately. Do not revoke tokens until the host is forensically preserved. The worm’s destructive daemon triggers on revocation. Once the machine is isolated, rotate credentials in this order: npm tokens first, then GitHub PATs, then cloud keys. Hunt for .claude/settings.json and .vscode/tasks.json persistence artifacts across every project that was open on the affected machine.

- This week: Rotate every credential accessible from affected hosts: npm tokens, GitHub PATs, AWS keys, Vault tokens, K8s service accounts, SSH keys. Check your packages for unexpected versions after May 11 with commits by claude@users.noreply.github.com. Block filev2.getsession[.]org and git-tanstack[.]com.

- This month: Audit every GitHub Actions workflow against the six gaps above. Pin OIDC publishing to specific workflows on protected branches. Isolate cache keys per trust boundary. Set npm config set min-release-age=7d. For AI/ML teams: check guardrails-ai and mistralai against compromised versions, audit CI pipelines for id-token: write exposure, and rotate every LLM API key and vector DB credential accessible from CI.

- This quarter (board-level): Fund behavioral analysis at the package registry layer. Provenance verification alone is no longer a sufficient procurement criterion for supply-chain security tooling. Require CI/CD security audits as part of vendor risk assessments for any tool with publish access to your registries. Establish a policy that no workflow with id-token: write runs from a shared cache. Treat AI coding agent configurations (.claude/, .kiro/, .vscode/) as credential stores subject to the same access controls as cloud key vaults.
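The two checks Kennedy describes for the "Today" step can be combined into one read-only pass over a directory tree. This is a rough sketch: the file name, size threshold, and commit hash come from the quote above, while the function name is hypothetical. Unlike the quoted grep, it inspects every package-lock.json it finds, not only the one in the current directory.

```python
import os

IOC_FILENAME = "router_init.js"
IOC_COMMIT = "79ac49eedf774dd4b0cfa308722bc463cfe5885c"
MIN_SIZE = 1024 * 1024  # approximates the "-size +1M" threshold in the quote


def quick_ioc_scan(root):
    """Return (path, reason) for files matching either indicator under root."""
    hits = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                if name == IOC_FILENAME and os.path.getsize(path) > MIN_SIZE:
                    hits.append((path, "large router_init.js"))
                elif name == "package-lock.json":
                    with open(path, encoding="utf-8", errors="replace") as fh:
                        if IOC_COMMIT in fh.read():
                            hits.append((path, "lockfile references flagged commit"))
            except OSError:
                continue  # unreadable entry: keep scanning
    return hits
```

The scan only reads files. Per the guidance above, any hit means isolating and imaging the machine before touching tokens.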

The worm is iterating. Defenders must, as well

This is the fifth Shai-Hulud wave in eight months. Four SAP packages became 84 TanStack packages in two weeks. intercom-client@7.0.4 fell 29 hours later, confirming active propagation through stolen CI/CD infrastructure. Late on May 12, malware research collective vx-underground reported that the fully weaponized Shai-Hulud worm code has been open-sourced. If confirmed, this means the attack is no longer limited to TeamPCP. Any threat actor can now deploy the same cache-poisoning, OIDC-extraction, and provenance-attested publishing chain against any npm or PyPI package with a misconfigured CI/CD pipeline.

“We’ve been tracking this campaign family since September 2025,” Kennedy said. “Each wave has picked a higher-download target and introduced a more technically interesting access vector. The orphaned commit technique here is genuinely novel. Branch protection rules don’t apply to commits that aren’t on any branch. The supply chain security space has spent a lot of energy on provenance and trusted publishing over the last two years. This attack walked straight through both of those controls because the gap wasn’t in the signing. It was in the scope.”

Provenance tells you where a package was built. It does not tell you whether the build was authorized. That is the gap this audit is designed to close.


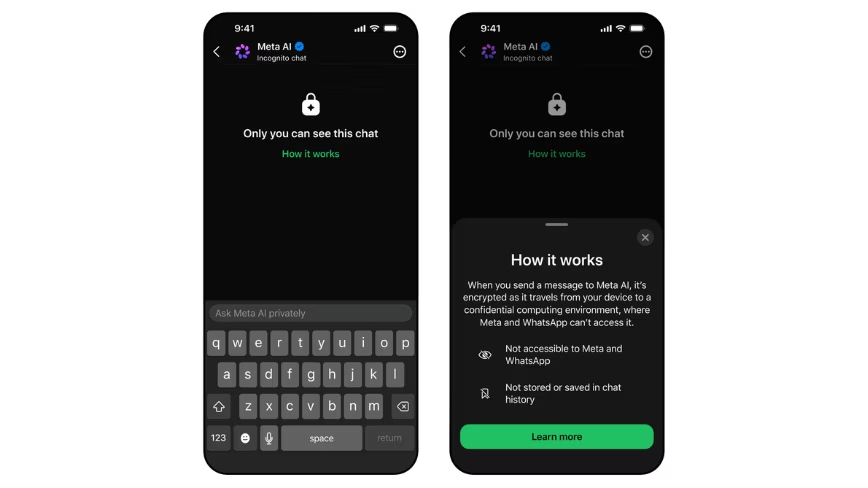

Meta launches Incognito Chat on WhatsApp, the first AI mode it says even Meta cannot read

The new mode runs Meta AI on WhatsApp inside the company’s Private Processing enclave, with conversations deleted by default and no server-side record retained.

Meta has launched an Incognito Chat mode for Meta AI on WhatsApp and the Meta AI app, an effort to address the awkward fact that its assistant, like every other major AI chatbot, has until now been able to read the conversations users have with it.

The new mode, the company announced on Tuesday, processes user messages inside what Meta describes as a secure environment that even Meta cannot see, with conversations deleted by default once the session ends.

The technical foundation is WhatsApp’s Private Processing system, the architecture the company published in April 2025 to let AI features run on encrypted data inside Trusted Execution Environments on Meta’s servers.

Inside that enclave, the model can read and respond to a query, but the contents are not accessible to Meta’s engineers, its logging systems, or any of its commercial pipelines.

Other apps offer what they call incognito modes for AI conversations, but Meta’s framing in the announcement is pointed: “they can still see the questions coming in and the answers going out.”

The launch responds directly to a category-wide privacy concern. AI chatbots have become a default tool for the sort of question users would once have asked a doctor, a lawyer, or a partner, with all the data exposure that implies.

OpenAI, Google, and Anthropic each store conversation histories by default, with varying user controls. Apple Intelligence routes some queries through Apple’s Private Cloud Compute, an enclave architecture that is the clearest existing analogue to what Meta is now shipping inside WhatsApp.

Two product details follow from the design. First, the conversations are not saved server-side at all; users cannot pull up Incognito Chat history later because there is nothing to pull up.

Second, the disappearing-by-default behaviour means even a compromised device leaks less, since the chat residue clears between sessions.

Meta has published a technical whitepaper describing the cryptographic architecture for outside review.

A second feature is on the way. Sidechat with Meta AI, also protected by Private Processing, will let users get AI help inside an existing WhatsApp conversation, with the assistant aware of the chat’s context but its responses kept invisible to the other participants.

Meta said Sidechat will arrive on WhatsApp “in the coming months,” without a firmer date.

The launch’s commercial logic is straightforward. WhatsApp has been built for a decade around end-to-end encryption as a selling point, and Meta’s pitch for AI on the platform has had to find a way around the central tension that a conversational AI assistant needs to read your messages to be useful.

Private Processing is the company’s attempt at squaring that circle. The Incognito Chat product is the first time the architecture has been put behind a user-facing feature on this scale.

Whether the implementation holds under scrutiny is a separate question. Trusted Execution Environment-based AI systems have been audited and criticised across the industry, with researchers periodically demonstrating side-channel attacks against similar architectures from Apple, Google, and the hyperscalers.

Meta has invited external review of its Private Processing design, and the new whitepaper extends that posture, but the model’s resistance to subpoena, in particular, has not yet been tested in court.

Incognito Chat with Meta AI begins rolling out on WhatsApp and the Meta AI app this week, with broader availability over the coming months.

The launch lands at the end of a difficult fortnight for Meta on the privacy front, with US employees protesting the company’s new mouse-tracking software on Monday and the company a week out from layoffs of roughly 8,000 staff.

Inside Meta, the bet appears to be that consumer-facing privacy moves like this one will outweigh the internal-surveillance optics.


How to Use Amazon Seller Central Reports to Scale Your Brand


Amazon Seller Central offers a layered reporting suite — with access determined by selling plan, fulfillment method, and Brand Registry status. In most organizations, these reports serve a single purpose — confirming what has already occurred: sales reconciled, fees reviewed, inventory checked. That operational function is necessary, but it represents only a fraction of what these reports are built to deliver. The same reports hold intelligence that directly determines how a brand scales:

➔ Conversion signals that reveal listing degradation before it erodes rank

➔ Acquisition quality data that separates genuine brand growth from retargeting spend

➔ Product feedback loops that surface quality and listing gaps before they compound in reviews

➔ SKU-level margin intelligence that identifies which products can sustain paid investment and which cannot

The gap is not access — it is how the data is leveraged.

This blog provides a structured approach to leveraging five core Seller Central report categories — Business, Advertising, Fulfillment, Return, and Payments — for measurable brand growth. It covers best practices for leveraging reports effectively, the structural limitations every brand team needs to account for before acting on the data, and how Amazon account management helps.

The Core Categories: How Amazon Seller Central Reports Are Structured

| Report | Key | Role |
| --- | --- | --- |
| Business Reports | Sales Dashboard, Detail Page Sales and | Conversion health, traffic trends, |
| Advertising Reports | Search Term Report, Placement Report, | Search demand, new-to-brand acquisition, |
| Fulfillment Reports | Inventory Ledger, Stranded Inventory, Inbound | Inventory health, stockout prevention, |
| Return Reports | FBA Customer Returns Report, Returns Trend | Product quality feedback, brand equity |
| Payments Reports | Transaction View, Fee Preview Report | SKU-level margin clarity for reinvestment |

How to Use Amazon Seller Central Reports for Brand Growth

Step 1: Audit Business Reports for Brand Performance Signals

1. Detail Page Sales and Traffic by Child ASIN: Read session count, page views, Unit Session Percentage (conversion rate), and Featured Offer percentage at the variation level. Track these metrics weekly per ASIN — a sustained downward trend in Unit Session Percentage is your leading indicator of listing degradation before it erodes rank.

2. Brand Performance Report: Review Average Customer Review, Number of Customer Reviews, Sales Rank, and Featured Offer percentage together for each ASIN. These four metrics form a direct snapshot of brand health at the listing level. Flag metric combinations that signal brand risk rather than reading each metric in isolation.

3. Sales Dashboard: Review trend lines across weekly and monthly windows to gauge whether brand momentum is accelerating or declining. Use the Compare Sales feature to layer on year-over-year context, which helps separate genuine trajectory shifts from recurring seasonal patterns.

Cross-reference sessions against Unit Session Percentage: falling sessions signal a visibility problem; falling conversion with stable sessions signals a listing or pricing issue.
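That cross-reference can be expressed as a small rule. The sketch below computes Unit Session Percentage as units ordered divided by sessions and compares two periods. The 5% tolerance, the label strings, and the function names are assumptions chosen for illustration, not Amazon-defined thresholds.

```python
def unit_session_percentage(units_ordered, sessions):
    """Units ordered per session, as a percentage."""
    return 100.0 * units_ordered / sessions if sessions else 0.0


def relative_change(old, new):
    """Fractional change from old to new; 0.0 when old is zero."""
    return (new - old) / old if old else 0.0


def diagnose(prev, curr, tolerance=0.05):
    """Classify a period-over-period shift for one ASIN.

    prev and curr are dicts with 'sessions' and 'units_ordered'.
    tolerance is the relative drop treated as noise (an assumption).
    """
    sessions_delta = relative_change(prev["sessions"], curr["sessions"])
    usp_delta = relative_change(
        unit_session_percentage(prev["units_ordered"], prev["sessions"]),
        unit_session_percentage(curr["units_ordered"], curr["sessions"]),
    )
    if sessions_delta < -tolerance:
        return "visibility"  # falling sessions
    if usp_delta < -tolerance:
        return "listing_or_pricing"  # stable sessions, falling conversion
    return "stable"


if __name__ == "__main__":
    last_week = {"sessions": 1000, "units_ordered": 100}
    this_week = {"sessions": 1000, "units_ordered": 70}
    print(diagnose(last_week, this_week))
```

Checking sessions first mirrors the order in the text: a visibility problem is ruled out before a conversion drop is attributed to the listing or price.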

Note: Business Reports are available only to sellers on a Professional selling plan, and historical data is retained for up to two years.

Step 2: Extract Brand Acquisition Insights from Advertising Reports

Advertising Reports address two brand growth questions that Business Reports cannot answer. The first is which channels and keywords drive new demand into the brand. The second is what proportion of that demand represents genuinely new-to-brand customers versus returning buyers.

1. Search Term Reports: Review the actual queries customers typed before clicking an ad.

– Negate: Any term that spends money with zero conversions over 30 days is added as a negative keyword. This is the single fastest way to improve ACoS without changing bids.

– Harvest: Any search term that converts at or below your target ACoS is added as an exact match keyword.

For brands managing large campaign portfolios, use the Bulk Operations feature in Campaign Manager to download a custom spreadsheet with Sponsored Products and Sponsored Brands Search Term data. Edit keyword additions and negations directly in the file and upload to update campaigns in a single operation.


2. Sponsored Brands and Sponsored Display Reports: Isolate the New-to-Brand (NTB) metric inside these campaign types to separate new customer acquisition from repeat buyers. Monitor NTB percentage, NTB order cost, and NTB sales separately from overall ROAS to measure true brand expansion, not branded retargeting.

3. Placement Reports: Compare conversion and spend distribution across top-of-search, product pages, and rest-of-search. Redirect budget toward placements with the strongest NTB and conversion performance. Top-of-search placements carry disproportionate brand visibility and deserve priority investment when NTB indicators support it.
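The negate and harvest rules from the Search Term Report step can be sketched as a classifier over exported rows. It assumes the export already covers the 30-day window, computes ACoS as ad spend divided by ad sales, and uses hypothetical field and function names. It is not tied to any Amazon API or report schema.

```python
from dataclasses import dataclass


@dataclass
class SearchTerm:
    term: str
    spend: float   # ad spend over the window
    sales: float   # attributed ad sales over the window
    orders: int    # attributed orders over the window


def acos(spend, sales):
    """Advertising cost of sales as a fraction, or None when there are no sales."""
    return spend / sales if sales > 0 else None


def classify(row, target_acos):
    """Return 'negate', 'harvest', or 'keep' for one search term."""
    if row.spend > 0 and row.orders == 0:
        return "negate"  # spends money with zero conversions
    ratio = acos(row.spend, row.sales)
    if row.orders > 0 and ratio is not None and ratio <= target_acos:
        return "harvest"  # converts at or below target ACoS
    return "keep"


if __name__ == "__main__":
    rows = [
        SearchTerm("example term a", 25.0, 0.0, 0),
        SearchTerm("example term b", 20.0, 100.0, 4),
        SearchTerm("example term c", 50.0, 100.0, 2),
    ]
    for row in rows:
        print(row.term, classify(row, target_acos=0.30))
```

The resulting labels map onto the bulk spreadsheet edits described above: "negate" rows become negative keywords and "harvest" rows become exact match keywords.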

Step 3: Protect Brand Momentum with Fulfillment Reports

1. Inventory Ledger Report: Consolidate inventory movement across Amazon warehouses — adjustments, receipts, and shipments — in a single view. Monitor inventory accuracy and act on discrepancies before stockouts hit high-velocity ASINs.


2. Stranded Inventory Report: Identify stock held in FBA warehouses but unsellable due to listing issues. Each stranded ASIN represents a direct revenue leak. Recover these listings weekly, before the associated search rank decays.

3. Inbound Performance Report: Track the efficiency of FBA shipments, including missing units, incorrect labeling, and receiving delays. Address recurring inbound issues at the source before they escalate into repeat offenses, as persistent issues extend reimbursement cycles and delay restock.

4. Inventory Performance Index (IPI): Monitor IPI as a brand growth prerequisite, not a warehouse KPI. Calculated from fulfillment data, the score directly affects FBA storage limits. A low IPI restricts scalability and caps paid acquisition ceilings.

Step 4: Read Return Reports as Product Quality Feedback

1. FBA Customer Returns Report: Mine return reasons, order IDs, and SKU-level detail for recurring patterns. Aggregate return reasons by ASIN to reveal product issues that would otherwise appear only in individual customer reviews.

2. Return Trend Monitoring: Flag ASINs with rising return rates as a signal of either a product quality issue, a listing accuracy issue, or both. Each failure mode damages brand equity and search rank. Address the root cause visible in return reasons, rather than treating the symptom through returns management.

For example, when the most frequent return reason on an ASIN is “not as described,” the listing content itself is driving the returns. An updated, accurate listing reduces future returns, improves conversion rate, and reinforces brand trust — three outcomes from a single fix.

3. Schedule Report Generation: Set up daily schedules for All Returns and Prime returns, instead of pulling them manually. Three operational constraints to note:

– One active schedule per report type

– Maximum of 30 reports in the Scheduled Reports section

– Schedule changes require deletion and recreation

Reports can be scheduled by return date for both FBA and seller-fulfilled orders to track return reasons and item condition across fulfillment channels.


Step 5: Use Payments Reports to Inform Brand Reinvestment

1. Transaction View: Break down every order into referral fees, FBA fees, promotional rebates, and net proceeds. Surface ASIN-level margin visibility to identify which products can sustain paid acquisition pressure and which cannot.

2. Fee Preview Report: Project FBA fulfillment, storage, and referral fees across existing FBA inventory. Review the report to identify ASINs where upcoming fee changes or aged-inventory surcharges will compress margin. Adjust pricing or inventory planning before the fees hit the bottom line.

Limitations & Challenges of Amazon Seller Central Reports

#1 No Built-in Competitive Benchmarks

Seller Central Reports show only your own performance data. There is no native view of how your brand performs against category peers or direct competitors. External benchmarking requires third-party data or Brand Registry-gated reports.

#2 Data Latency Varies Across Reports

Business Reports refresh daily, while Fulfillment and Payments reports often run on weekly or delayed cycles. This inconsistency complicates cross-report analysis when precise attribution windows matter, particularly for reconciling paid performance against organic results within the same reporting period.

#3 Limited Brand-Level Insights Without Brand Registry

Deeper brand-growth tools sit outside the standard Reports tab. These include Brand Analytics dashboards (Search Query Performance, Market Basket Analysis, Customer Loyalty Analytics), the Brand Dashboard, and Voice of the Customer. Brand Registry enrollment unlocks these additional layers.

#4 Attribution Gaps Between Advertising and Organic

Advertising Reports attribute sales to campaigns, while Business Reports track total sales. Reconciliation between the two requires careful segmentation, especially when paid campaigns and organic traffic overlap on the same keywords.

#5 Report Siloing Across Tabs

Seller Central Reports live across multiple tabs — Reports, Advertising, Returns, Payments — with inconsistent naming and export formats. Cross-report analysis almost always requires careful manual reconciliation.

Best Practices for Using Seller Central Reports Effectively

1. Standardize Date Ranges Across Reports

Different reports operate on different default time windows. Business Reports commonly default to 30 days, while granular Advertising Reports (including Search Term and Purchased Product Reports) are subject to a hard 90-day lookback limit, not a display default. Manually aligning date ranges across reports before cross-referencing ensures comparisons reflect the same performance window and eliminates attribution mismatches.

2. Benchmark Week-Over-Week, Not Day-Over-Day

Single-day metrics are statistically volatile, particularly on low-velocity SKUs where marginal order volume can produce significant conversion rate variance. Weekly benchmarking normalizes daily fluctuations while keeping the reporting window tight enough to surface trends before they compound.

3. Cross-Reference Reports for Root-Cause Analysis

A conversion decline in Business Reports frequently correlates with a Buy Box shift, a pricing change, or a stranded listing in Fulfillment Reports. Isolating a single metric without cross-report validation increases the risk of misdiagnosis and misdirected corrective action.

4. Export and Archive Reports Externally

Business Reports retain data for up to two years. Granular Advertising Reports, including those referenced above, are capped at a 90-day lookback window with no native recovery option beyond that threshold. Once the window closes, that data is permanently removed from Seller Central — brands that need historical context must export it on a defined schedule.

5. Align Reporting Depth with Organizational Role

Operational teams require weekly tactical reviews covering stockouts, suppressed listings, and Buy Box performance. Brand leadership requires monthly and quarterly trend analysis focused on category share and customer retention. Calibrating reporting depth to the decision-making level of each function reduces analysis fatigue and maintains actionable review cycles across the organization.

The Business Imperative: Seller Central Reports provide the data. Translating that data into consistent brand decisions — across listings, advertising, inventory, returns, and margins — requires operational discipline that compounds over time.

For brands managing catalog depth, multi-channel fulfillment, and active advertising simultaneously, cross-report analysis, weekly metric reviews, search term management, inventory reconciliation, and fee audits each demand specialization and bandwidth that most in-house teams cannot sustain at the required cadence.

Amazon account management services bring the field-level expertise and technical infrastructure to close that gap — identifying signals early, connecting them across report categories, and converting them into decisions before they compound into performance issues.

As catalog scale increases, inconsistent report review compounds directly into rank loss, wasted ad spend, stranded inventory, and missed reinvestment signals — each one a measurable cost to brand performance. The question is not whether these gaps exist. The question is how long your brand can afford to leave them unaddressed.


Trump Already Has His ‘Get Out Of Jail Free’ Card. Now He Wants A ‘Get Out Of IRS Audits’ Card

from the nice-work-if-you-can-get-it dept

Two years ago, in a ruling that will clearly be remembered as one of the worst in the history of the Supreme Court, the court gave Donald Trump a get out of jail free card, which he appears to be trying to take full advantage of with all the criming in his second term. But, as always with this guy, it’s never enough.

We’ve already covered in detail the ridiculous situation in which Donald Trump, acting in his supposed personal capacity while still being the president, sued his own IRS for $10 billion because a contractor leaked his tax returns a while back (that contractor is currently in prison for doing so). Again, there is zero indication of any actual harm. Every president, and nearly all major candidates, for the past 50 years released their tax returns to the public. Except Trump.

A decade ago he claimed that it was because he was being audited, and promised to release them once the audit was over. But he’s never done anything. And, as many people have noted, when President Richard Nixon started this tradition of releasing the president’s tax returns, he was actually being audited by the IRS, and was able to release his returns without a problem.

Either way, a contractor (not an IRS employee) leaked some of Trump’s returns to ProPublica and the NY Times, which resulted in a few stories before the news cycle moved on within days. It certainly didn’t stop Trump from being elected in 2024. And even though the returns were leaked in 2019 and 2020, Trump waited until he was back in the White House (and in charge of the IRS and the DOJ) to file this $10 billion lawsuit.

We’ve covered the ridiculous claim that the “two sides” (there aren’t two sides) were “negotiating a settlement,” and how the judge in the case has tried to call timeout, noticing that since Trump is effectively negotiating with himself, there’s no case or controversy, and thus there may be no jurisdiction for the court to hear the case. There’s still briefing going on over that, but the NY Times reports that the supposed (not really) “negotiations” have continued, with Trump apparently proposing that the settlement include the IRS dropping audits of Trump, his businesses, and his family. That would just be a shocking level of corruption from an administration that has spent its first year and a half in office trying to be as blatantly corrupt as possible.

One of the settlement options the Justice Department and White House officials are reviewing is the possibility of the I.R.S. dropping any audits of Mr. Trump, his family members or businesses, according to two of the people.

Again, even though the news cycle moved on quickly, perhaps it should return to exactly what those leaked tax returns showed: at a time when Trump was publicly claiming to be rolling in cash, he paid effectively no income taxes and was racking up massive losses, figures that raise serious questions about his financial entanglements and what he stood to gain from his first term in office.

To have the audits of what happened during those years completely dropped — and not just for him, but for his entire family and related businesses — is another form of a get out of jail free card. Call it a “tax cheat for life” card.

To do this at a time when the public is struggling, due almost entirely to Donald Trump’s ridiculous policies (tariffs driving up inflation massively, an illegal war quagmire in Iran driving up energy prices), is even more insulting to the public that Donald Trump is supposed to be working for. The same day this story came out, Trump was asked whether he was thinking about the impact of his out-of-control war on Americans’ financial situation, and he responded “not even a little bit” and that “I don’t think about Americans’ financial situation. I don’t think about anybody.”

Well, except himself, apparently.

Filed Under: audits, corruption, donald trump, irs, tax returns

Tech

KDE Receives $1.4 Million Investment From Sovereign Tech Fund

The German Sovereign Tech Fund has invested 1.2 million euros ($1.4 million USD) in KDE Plasma technologies to help strengthen the structural reliability and security of the desktop environment’s core infrastructure, including Plasma, KDE Linux, and the frameworks underlying its communication services. Longtime Slashdot reader jrepin shares an excerpt from the announcement: For 30 years, KDE has been providing the free and open-source software essential for digital sovereignty in personal, corporate, and public infrastructures: operating systems, desktop environments, document viewers, image and video editors, software development libraries, and much more.

KDE’s software is competitive, publicly auditable, and freely available. It can be maintained, adapted, and improved in-house or by local software companies. And modifications (along with their source code) can be freely distributed to all users and departments within an organization.

KDE will use Sovereign Tech Fund’s investment to push its essential software products to the next level, providing every individual, business, and public administration with the opportunity to regain their privacy, security, and control over their digital sovereignty.

Slashdot reader Elektroschock also shared a statement from Fiona Krakenburger, Technical Director at the Sovereign Tech Agency.

“We have long invested in desktop technologies for a reason: they are the primary way people access and use digital services in everyday life,” says Krakenburger. “The desktop holds personal data and mediates nearly every service we depend on, from booking the next medical appointment, to education, to the way we work. We are investing in KDE because it is one of the two major desktop environments used across Linux and plays a key role in how millions of people experience open technology. Strengthening KDE’s testing infrastructure, security architecture, and communication frameworks is how we invest in the resilience and reliability of the core digital infrastructure that modern society depends on.”

Tech

The Steam Controller Wilhelm Scream Easter Egg Is Incredible

Thanks to Reddit, one of the best little secrets of the Valve Steam Controller has been discovered. Now I can’t stop dropping it, because it turns out it makes a Wilhelm scream when dropped. I tested it, and can confirm. You don’t even need the controller paired to anything to make it happen.

Throughout my several-week review of Valve’s new game controller, I never knew that it made the infamous Wilhelm scream, a stock sound effect that has been used in hundreds of movies, when dropped. How would I know it did that? I don’t drop controllers. Or I don’t intend to.

But that’s exactly what the controller does when dropped even lightly on any surface. I picked up and dropped the controller a bunch of times onto my sofa, from about 3 feet, and that iconic scream that my kids love happened.

The scream is randomized: It’s not about how hard you drop it, so don’t do that. A harder accidental fall onto the floor produced no scream. Two straight drops made screams. Then none for a bunch after that. That’s the fun of it.

Apparently, the scream comes from the haptic motors in the controller, which act as a speaker. Or is it a speaker? It sounds really good. It’s stunning.

The effect occurs even if the Steam Controller isn’t paired to anything. I just turned the controller on, and while it was cycling for Bluetooth pairing, it still made the drop screams, no Steam Deck or PC on or nearby.

I don’t generally recommend dropping $99 game controllers, but this Easter egg is so amazing that I want all game controllers to make little noises now. What if Joy-Cons made Mario sounds? PlayStation DualSense made AstroBot chirps?

I already loved the Steam Controller. I love it even more now.

Tech

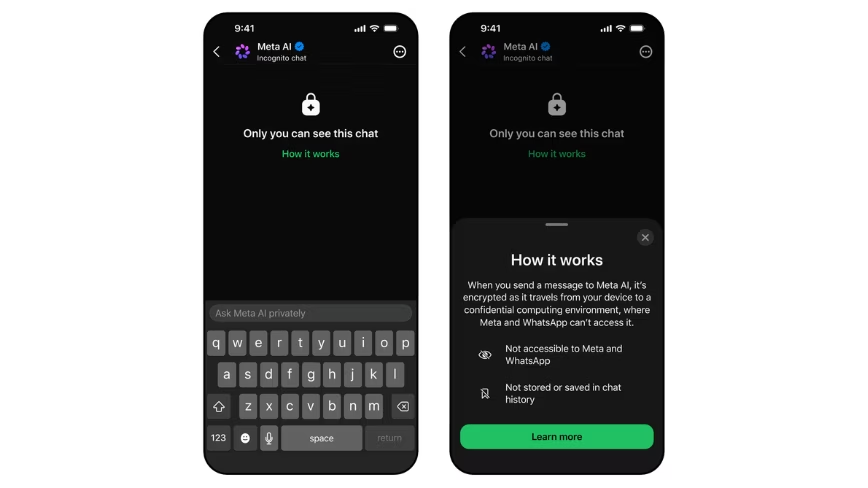

Best Desks of 2026: I’ve Spent Nearly 4,000 Hours Testing Desks. These Are the Ones You Want

Testing desks is something of a subjective game. Much like office chairs, the tests are based on comfort, reliability and ease of setup rather than things you can test in electronics such as wattage and battery usage. I still tested each one rigorously and will continue to test them for longevity in the coming months.

I tested these desks by asking three people to try each one. Each of them used the desk for at least 16 hours and then gave me their impressions. The three people were 6 feet, 1 inch tall; 5 feet, 8 inches tall; and 5 feet, 4 inches tall respectively, to give me a good cross-section of average user height.

Setup time and package quality

Building desks can often be difficult and time-consuming. For each desk, I timed how long it took to unpack and assemble, and I noted whether the manual was easy to follow. I followed the instructions as closely as possible so that each build was performed as if I had never built one before. I also thoroughly checked the packaging to make sure it wasn’t damaged and that it was secure enough to protect the desk inside. Any damage was noted, and images were sent to the manufacturers for review.

Structural integrity

Modern desks need to be able to hold a good amount of weight. If you’re at a writing desk you might only have a small laptop, but if you’re using a gaming desk, it likely has two monitors and a giant gaming PC as well. For each desk, I checked the maximum load specification, and I tried to match that with the materials we actually use on our desks.

I used:

- A heavy gaming PC tower

- Two 27-inch gaming monitors on a dual monitor arm

- A MacBook Pro

- Two different keyboards and assorted mice and trackpads

- My Oculus Quest 2

- My phone stand and USB hub

- A podcasting mic and headphones

Depending on the length and weight capacity of the desk, I mixed and matched these items, then checked for any bowing of the top or inconsistencies in how the desk felt as I worked.

The wibble-wobbles

This is a bit of a throwback to when my dad used to make furniture. Anything my dad built would be critiqued by my mum, and if it didn’t pass muster, she would say, “It’s a bit wibbly-wobbly, isn’t it, dear?” Once I’d built each desk and loaded it for normal use, I checked it for the wibble-wobbles. This meant rocking it from side to side and forward and backward to check that all the screws, bolts and fixtures kept everything rigid.

Tech

Origin Lab raises $8M to help video game companies sell data to world-model builders

As AI begins to interact with the physical world, new types of labs are working to build world models that could be used to operate physical robotics or model objects in physical space. Unlike large language models, there isn’t an easy source of data for those models, which has left many labs scrambling to assemble the necessary training sets.

Now, one startup is emerging with an unlikely data source: the video game industry.

That’s the premise of Origin Lab, which just announced an $8 million seed funding round led by Lightspeed Ventures. SV Angel, Eniac, Seven Stars, and FPV also participated, with angel funding from Twitch co-Founder Kevin Lin and Cruise founder Kyle Vogt.

“The AI systems that are being built now need to understand how the physical world works and how things move,” co-CEO and co-founder Anne-Margot Rodde told TechCrunch. “That data essentially lives in video games.”

In simple terms, Origin Lab will serve as a marketplace where world-model-focused labs such as Yann LeCun’s AMI Labs or Fei-Fei Li’s World Labs can buy high-quality licensed data. On the other side of the trade, video game companies can squeeze additional revenue out of the digital assets they’ve already created. In the middle, Origin Lab will convert the video game assets into a form that works as training data — something that could be as simple as a rendering run or as complex as automating hours of walkthrough footage.

“It became clear that the video game industry was sitting on some incredibly valuable data, but there was no real way or infrastructure to basically connect AI labs and the video game industry,” says Rodde. “So essentially, we built that bridge.”

Labs have long been interested in video game footage as a data source, but licensing and data quality issues have often gotten in the way. In December 2024, OpenAI caused a minor scandal when the first version of its Sora video-generation model seemed to regurgitate footage of popular video games and streamers — presumably because it had been trained on Twitch streams. Amazon has been open about its interest in using Twitch footage to train models.

Origin’s success in fundraising is a sign of a growing market — not just for training data, but for startups that can serve as essential suppliers to major AI labs. Faraz Fatemi, a partner at Lightspeed who led the Origin investment, says the success of companies like Scale.AI has made the opportunity impossible to ignore.

“We’ve seen how sharp the revenue scaling can be for data vendors that are serving the major labs,” Fatemi told TechCrunch. “These are very well-capitalized businesses, and the bottleneck for all of them is data.”

Tech

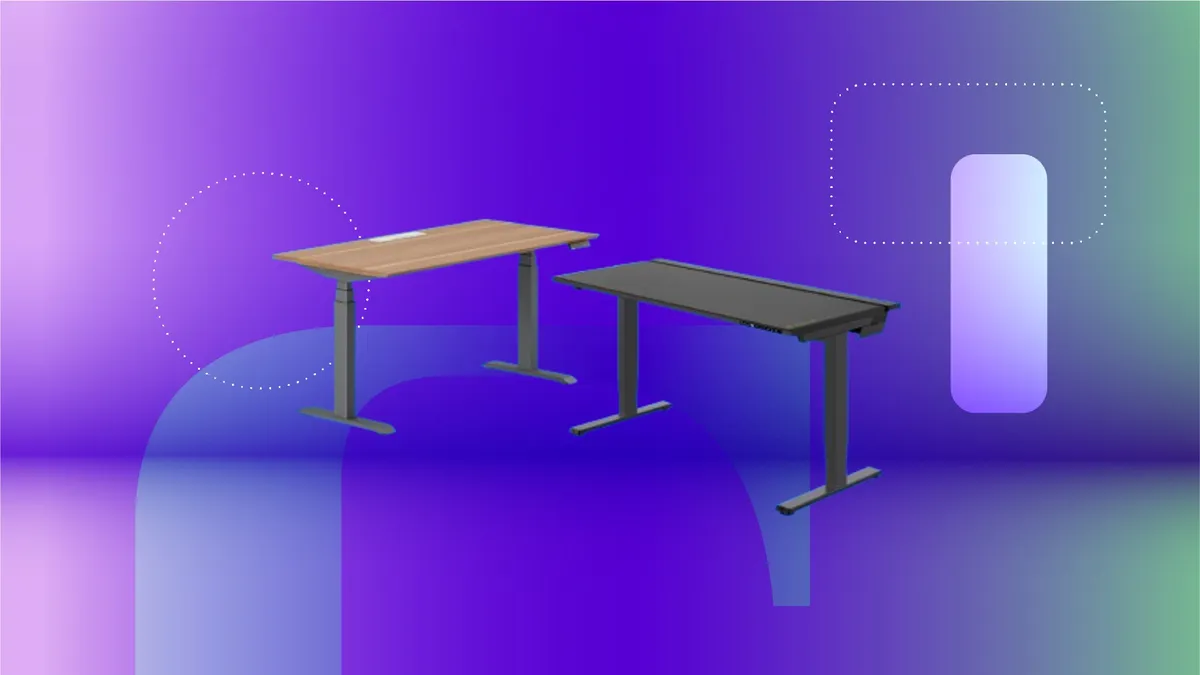

A big price cut makes the Kindle Colorsoft much easier to recommend

Colour displays on e-readers have always come at a premium steep enough to make most readers pause and quietly settle for black and white instead.

The Kindle Colorsoft is Amazon’s answer to that hesitation, and it is now down from £239.99 to £174, with £65.99 off its usual retail price.

A big 27% price cut brings the Kindle Colorsoft under £175, making a colour Kindle far more affordable

This is not simply a standard Kindle with a colour filter dropped over the top; the Colorsoft uses a purpose-built 7-inch Colorsoft display optimised specifically for colour reading, delivering 300ppi in black and white and 150ppi in colour, with a paper-like quality that makes book covers and illustrated content genuinely worth looking at.

The adjustable warm light shifts the display from white to amber, which means comfortable reading holds up whether you are outside in direct sunlight at midday or winding down under a lamp late at night.

Battery life reaches up to eight weeks on a single charge, based on half an hour of reading per day with wireless off and the light at a moderate setting, so the colour display does not come at the cost of the long battery life Kindle readers expect.

Storage sits at 16GB, which is enough to hold thousands of books locally, and free cloud storage covers the rest of your Amazon content library without taking up any space on the device itself.

Waterproofing is rated to IPX8, meaning the Kindle Colorsoft can handle submersion in two metres of fresh water for up to 60 minutes, making it genuinely bath-safe and pool-safe rather than just splash-resistant in name.

Highlighting works across four colours, yellow, orange, blue, and pink, which makes it a more active reading tool for anyone who annotates regularly and wants to distinguish between different types of notes across a single book.

This deal makes strong sense for readers who have been watching the colour Kindle category and waiting for the price to become more reasonable, with the Colorsoft now sitting at its most accessible since launch.

Still want to explore the full Kindle lineup before deciding? Our best Kindle 2026 guide has every current model tested and ranked to help you find the right fit.

Tech

Turning AI cost spikes into strategic growth opportunities

Presented by Apptio, an IBM company

AI spending is surging, but the full impact often remains an open question. Closing the gap requires clear answers to how AI is governed, measured, and tied to business outcomes.

ROI uncertainty isn’t unique to AI: In the Apptio 2026 Technology Investment Management Report, 90% of technology leaders surveyed said that ROI uncertainty has a moderate or major impact on overall tech investment decisions, a 5-percentage-point year-over-year increase. In other words, tech leaders are increasing their reliance on ROI, even if they don’t fully know how to measure it. And AI economics involves new and unpredictable costs, further complicating ROI calculations. Faced with increasing uncertainty and increasing budgets, technology leaders need a clear, reliable framework for evaluating AI ROI.

Organizations increasingly expect scaled AI to pay its own way, at least partially. According to Apptio’s technology investment management report, 45% of organizations surveyed intend to fund innovation by reinvesting savings from AI-driven efficiencies. That model assumes that such savings are both achievable and quantifiable. Meanwhile, the two-thirds of organizations planning to reallocate existing budget capital to AI will need clarity on the trade-offs involved.

Much like the early days of public cloud, AI costs and returns are difficult to predict. Pricing varies widely across providers and continues to evolve, while consumption is unpredictable. The pressure to adopt quickly is also formidable as organizations navigate the threat of disruption by more agile competitors.

The new math of AI ROI

Considering the many variables, tech leaders should view AI ROI as a matter of optimization. At a high level, the implementation of AI initiatives is inevitable. The question is how to achieve the greatest possible returns — both financial and organizational.

Start with the business problem. There are many ways AI can deliver positive impact, but organizational resources and focus may be limited. Make sure you’re prioritizing the right initiatives by basing your AI investment strategy on quantifiable goals tied to real business outcomes. Are you trying to improve decision-making speed? Increase throughput or capacity? Or are you chasing cool edge cases with high potential returns but minimal strategic relevance?

Determine what success looks like. AI can introduce a new capability or augment an existing one. For new capabilities, articulate the possibilities you’d like to unlock, such as new revenue opportunities, workflows, or decision-making processes. For augmentations, establish baseline performance and the expected lift you aim to achieve with AI.

Consider how finances will influence your evaluation. Some use cases may show minimal results in the near-term but drive significant value in the long-term. What’s your timeframe for return? On the other hand, more successful rollouts with rapid adoption can generate unexpectedly high inference bills. Would that mean pulling the plug — or leaning in further? What should your cost and return curve look like over the years? As you map your timeline, establish clear thresholds to determine whether you’ll proceed, pause, stop, or accelerate your investment.
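The idea of setting thresholds up front can be made concrete with a small sketch. The Python below is only an illustration under stated assumptions: the cut-off values, the `Thresholds` and `Decision` names, and the simple ROI formula (net gain divided by cost) are all hypothetical choices for this example and do not come from the Apptio report or any TBM tooling.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    STOP = "stop"
    PAUSE = "pause"
    PROCEED = "proceed"
    ACCELERATE = "accelerate"


@dataclass(frozen=True)
class Thresholds:
    # Hypothetical cut-offs on ROI, expressed as ratios (0.5 means 50%).
    stop_below: float = -0.25
    pause_below: float = 0.0
    accelerate_above: float = 0.5


def roi(value_delivered: float, total_cost: float) -> float:
    """Simple ROI: net gain divided by total cost."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (value_delivered - total_cost) / total_cost


def decide(value_delivered: float, total_cost: float,
           thresholds: Thresholds = Thresholds()) -> Decision:
    """Map a measured ROI onto one of the four investment decisions."""
    r = roi(value_delivered, total_cost)
    if r < thresholds.stop_below:
        return Decision.STOP
    if r < thresholds.pause_below:
        return Decision.PAUSE
    if r > thresholds.accelerate_above:
        return Decision.ACCELERATE
    return Decision.PROCEED
```

In practice the thresholds would likely differ by use case and by point on the timeline, since a long-horizon initiative may reasonably sit below zero ROI for some time before it is expected to pay back.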

Identify the right KPIs. The returns on an AI investment can be even more difficult to evaluate than the costs. Usage, efficiency, and financial impact all matter. But AI success metrics won’t always be straightforward. There may be new usage patterns you don’t yet have a way to measure. Your technology environment may experience follow-on shifts that call for further evaluation. Will you be able to lessen your reliance on other tools, such as reducing seats in your data analytics platform? How will you factor in cross-tool pricing comparisons for multiple AI providers with shifting rates?

To gain full context and insight, you must also take into account the alignment of the initiative with your broader strategy and consider the opportunity cost of the investments you might otherwise have made. Remember that you’re not evaluating AI business value in isolation; you’re deciding whether it’s the best use of finite capital across all your investments.

These decisions will call for a level of insight far exceeding what was needed to justify traditional purchases like network infrastructure or enterprise software. Tech leaders navigating the complexities of AI economics should consider a new framework for data-driven decision-making.

Making AI investment sustainable with TBM

Technology business management (TBM) helps make ROI more concrete and measurable, so it can be relevant to the business. By bringing together IT Financial Management (ITFM), AI FinOps (cloud financial management for AI workloads), and Strategic Portfolio Management (SPM), a TBM framework connects financial, operational, and business data across the enterprise. This makes it possible to account for AI value and cost across a wide array of dimensions, and to translate hypothetical innovation into board presentations and budget justifications that hold up under scrutiny.

TBM can help leaders build a trustworthy cost foundation that captures AI spend across labor, infrastructure, inference, storage, and applications. As AI workloads shift dynamically, TBM provides visibility into how that spend is distributed across on-premises systems and cloud environments, both of which require different capacity planning for specialized skill sets. The framework also connects investments to business outcomes, aligning AI initiatives with strategic priorities and measurable results.

With increased visibility, you’re able to identify issues and make decisions fast, such as catching cost spikes early. Early detection can help to determine if the usage shift merits shifting funding. This unified view of financial and operational data helps leaders scale what’s working and reassess what isn’t as adoption increases. TBM provides essential visibility and context across the entire AI spend management conversation. Even as pricing evolves, tooling changes, and workflows shift, you can apply the same analytical approach to understand what’s actually working and demonstrate ROI.

Leaders who operationalize AI within a TBM framework can:

- Evaluate ROI at both project and portfolio levels

- Spot unexpected cost spikes

- Compare multiple AI tools

- Understand ripple effects across run-the-business systems

- Defend investment decisions with confidence

- Understand and manage total costs and usage across the AI investment lifecycle
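As one illustration of what spotting a cost spike can mean in practice, the sketch below flags any day whose spend exceeds a multiple of the trailing average. It is a minimal example, not how Apptio or any TBM product detects spikes; the function name `find_cost_spikes`, the 7-day window, and the 1.5x factor are assumptions chosen for the example.

```python
from statistics import mean


def find_cost_spikes(daily_costs, window=7, factor=1.5):
    """Return indexes of days whose cost exceeds `factor` times
    the average of the preceding `window` days."""
    spikes = []
    for i in range(window, len(daily_costs)):
        baseline = mean(daily_costs[i - window:i])
        if baseline > 0 and daily_costs[i] > factor * baseline:
            spikes.append(i)
    return spikes


# Illustrative (made-up) daily inference spend in dollars.
costs = [100, 100, 100, 100, 100, 100, 100, 105, 240, 110]
spike_days = find_cost_spikes(costs)
```

A fixed multiple of a trailing average is the simplest possible rule. A real deployment would probably need to account for seasonality and planned rollouts before treating a flagged day as a problem.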

From theory to practice

Organizations are moving beyond AI experiments, and we’re past the point where these investments can be funded on optimism alone. Amid heightened uncertainty and cost sensitivity, boards are asking more strategic questions and finance wants trustworthy data.

Enterprise leaders who treat AI as a managed investment, rather than a bet on innovation, are those who will scale it successfully. To fund AI responsibly, leaders must establish clarity around scope, outcomes, cost drivers, and readiness. A TBM-driven approach provides the data foundation, visibility, and accountability to make those decisions.

Learn more about how Apptio TBM transforms IT spend management in the AI era.

Ajay Patel is General Manager at Apptio, an IBM Company.

Sponsored articles are content produced by a company that is either paying for the post or has a business relationship with VentureBeat, and they’re always clearly marked. For more information, contact sales@venturebeat.com.

Tech

Supreme Court Breaks Another Election To Make Sure Black Voters Are Disenfranchised

from the voter-certainty-be-damned dept

Again, I feel like I’m going crazy here, but the obviously extremely partisan Supreme Court has struck again. I will repeat some of the basics, because it’s hard to believe how blatant all of this is. In November, a (Trump-appointed) judge threw out Texas’s new congressional maps, noting that the Texas state government had made it quite clear it was done for racial reasons, making it a violation of the Voting Rights Act. The judge wrote a detailed 160-page ruling showing how the Trump administration itself had essentially locked in the Texas legislature’s need to draw maps based on race, by threatening them with a civil rights complaint if they didn’t.

The Supreme Court, however, blocked that ruling in December, saying that because of the upcoming midterm elections (still months away in December), Texas had to use those new maps (which had only been created in August), since (according to Samuel Alito) Texas voters needed “certainty.” Of course, they could have gone right back to the maps Texas had been using up until August, but somehow that would have shaken things up too much.

Then, a few weeks ago, the Supreme Court issued its Callais decision, effectively wiping out the remaining bits of the Voting Rights Act. Louisiana immediately declared a state of emergency and sought to throw out the map it had already started using for primary season — to redraw it in a much more racist way. And Samuel “the voters need certainty” Alito helped them along by rushing the certification of the Callais decision.

Now, just a few days later, the conservative majority on the Supreme Court has also vacated an even more detailed ruling rejecting maps in Alabama for being racist. The conservative majority claims that this is in light of the ruling in Callais:

The judgment of the United States District Court for the Northern District of Alabama in that case is vacated, and the case is remanded to the United States Court of Appeals for the Eleventh Circuit with instructions to remand to the District Court for further consideration in light of Louisiana v. Callais

Now, that’s already odd for the same reason I raised earlier about the Supreme Court (led by Justice Alito) claiming back in December that they couldn’t overturn Texas’ new map (which had only been announced months earlier, and never actually used) for the sake of “voter certainty.” Yet here they are issuing a ruling EIGHT DAYS before the Alabama primary.

What the fuck?

It’s bizarre for multiple other reasons as well, including that the Supreme Court already heard a related case regarding the map in Alabama and ruled that it violated the Voting Rights Act (Alito, naturally, dissented). The state went to redraw its map based on that, but the lower court rejected the new maps almost exactly a year ago in an astounding 571-page ruling.

Notably, while that ruling does find that the new maps violate the Voting Rights Act (in multiple ways), it also found that the maps directly violate the Fourteenth Amendment (this discussion is towards the end of that 571-page ruling, so perhaps Alito and the other conservative Justices didn’t read that far?). And, as much as the Court believes it can invalidate the Voting Rights Act, it cannot invalidate the Constitution.

So we have a ridiculously thorough 571-page district court ruling — finding that the maps violate not just the VRA but also the Fourteenth Amendment — and the conservative majority just waves it away. Yet the conservatives on the Supreme Court — the same group who said no last-minute map changes for “voter certainty” — just ordered that clearly discriminatory, unconstitutional map into use, because of how they changed their interpretation of the Voting Rights Act.

But, as Justice Sotomayor points out in her dissent, that would totally ignore the Fourteenth Amendment part!

At the end of that trial, the District Court concluded “with great reluctance and dismay and even greater restraint” that Alabama had not merely spurned the opportunity to remedy past discrimination, but in fact had intentionally violated the Fourteenth Amendment.

Given that, the ruling in Callais could only possibly impact the VRA part of the lower court decision. Not the Fourteenth Amendment bit. But the majority on the Supreme Court just ignores that.

Nothing in the District Court’s Fourteenth Amendment analysis is affected by this Court’s opinion in Callais. Most obviously, Callais changed the legal standard for vote-dilution claims under §2. See 608 U. S., at ___ (slip op., at 19) (“[W]e must understand exactly what §2 of the Voting Rights Act demands”). It said not a word about the standard for Fourteenth Amendment intentional-discrimination claims like the one that the District Court decided on remand in round two.

Even worse, Sotomayor points out that in Callais itself, the majority had claimed that the earlier 2022 ruling regarding the Alabama maps (where they said it violated the VRA) remains good law. But this new ruling clearly contradicts that claim.

Callais also insisted that this Court’s prior decision in Allen remains good law. See id., at ___ (slip op., at 36) (“[W]e have not overruled Allen”). These cases are, of course, Allen. So if Allen is good law anywhere, then it must be good law here. This Court’s finding of racially discriminatory vote dilution is an inextricable, permanent feature of this case, and Alabama’s willful decision to respond by entrenching rather than remedying that dilution is, as the District Court correctly recognized, evidence of discriminatory intent

So, was Alito lying a week and a half ago when he said that Allen was still good law? Or did he just change his mind now, because he’s decided that he needs to proactively strip Black voters of their franchise for the sake of helping Republicans get a few more seats in the House?

And John Roberts wonders why people claim the Supreme Court is “partisan.”

Sotomayor also points out the ridiculousness of doing this a week before the election:

Even if Callais had something to say about the evidence necessary to establish discriminatory intent, it still would not be appropriate to vacate the decision below at this time. That is because Alabama’s congressional primary election is next week, and vacating the District Court’s injunction will immediately replace the current map with Alabama’s 2023 Redistricting Plan until the District Court acts, even though voting has already begun. Vacatur is an equitable remedy, and the Court should not lightly wield it to unleash chaos and to confuse voters.

Honestly, I’m a bit disappointed that she didn’t point to Alito’s “voters need certainty” claim for refusing to block Texas’ new maps back in December.

There is no good-faith reading of these events. Alito said Allen was still good law — then acted as if it wasn’t, twelve days later and eight days before an election. He said voters need “certainty” — then vacated a 571-page ruling finding unconstitutional discrimination with a week to go before Alabama’s primary. And the majority just waved away the Fourteenth Amendment finding entirely, as if they simply didn’t notice it was there.

John Roberts keeps insisting the Court isn’t partisan. At some point, the gap between that claim and what the Court actually does becomes its own kind of answer.

Filed Under: 14th amendment, alabama, john roberts, racism, redistricting, samuel alito, sonia sotomayor, supreme court, texas, voting rights act
