This story was originally published by ProPublica. Republished under a CC BY-NC-ND 3.0 license.

In late 2024, the federal government’s cybersecurity evaluators rendered a troubling verdict on one of Microsoft’s biggest cloud computing offerings.

The tech giant’s “lack of proper detailed security documentation” left reviewers with a “lack of confidence in assessing the system’s overall security posture,” according to an internal government report reviewed by ProPublica.

Or, as one member of the team put it: “The package is a pile of shit.”

For years, reviewers said, Microsoft had tried and failed to fully explain how it protects sensitive information in the cloud as it hops from server to server across the digital terrain. Given that and other unknowns, government experts couldn’t vouch for the technology’s security.

Such judgments would be damning for any company seeking to sell its wares to the U.S. government, but they should have been particularly devastating for Microsoft. The tech giant’s products had been at the heart of two major cybersecurity attacks against the U.S. in three years. In one, Russian hackers exploited a weakness to steal sensitive data from a number of federal agencies, including the National Nuclear Security Administration. In the other, Chinese hackers infiltrated the email accounts of a Cabinet member and other senior government officials.

The federal government could be further exposed if it couldn’t verify the cybersecurity of Microsoft’s Government Community Cloud High, a suite of cloud-based services intended to safeguard some of the nation’s most sensitive information.

Yet, in a highly unusual move that still reverberates across Washington, the Federal Risk and Authorization Management Program, or FedRAMP, authorized the product anyway, bestowing what amounts to the federal government’s cybersecurity seal of approval. FedRAMP’s ruling — which included a kind of “buyer beware” notice to any federal agency considering GCC High — helped Microsoft expand a government business empire worth billions of dollars.

“BOOM SHAKA LAKA,” Richard Wakeman, one of the company’s chief security architects, boasted in an online forum, celebrating the milestone with a meme of Leonardo DiCaprio in “The Wolf of Wall Street.” Wakeman did not respond to requests for comment.

It was not the type of outcome that federal policymakers envisioned a decade and a half ago when they embraced the cloud revolution and created FedRAMP to help safeguard the government’s cybersecurity. The program’s layers of review, which included an assessment by outside experts, were supposed to ensure that service providers like Microsoft could be entrusted with the government’s secrets. But ProPublica’s investigation — drawn from internal FedRAMP memos, logs, emails, meeting minutes, and interviews with seven former and current government employees and contractors — found breakdowns at every juncture of that process. It also found a remarkable deference to Microsoft, even as the company’s products and practices were central to two of the most damaging cyberattacks ever carried out against the government.

FedRAMP first raised questions about GCC High’s security in 2020 and asked Microsoft to provide detailed diagrams explaining its encryption practices. But when the company produced what FedRAMP considered to be only partial information in fits and starts, program officials did not reject Microsoft’s application. Instead, they repeatedly pulled punches and allowed the review to drag out for the better part of five years. And because federal agencies were allowed to deploy the product during the review, GCC High spread across the government as well as the defense industry. By late 2024, FedRAMP reviewers concluded that they had little choice but to authorize the technology — not because their questions had been answered or their review was complete, but largely on the grounds that Microsoft’s product was already being used across Washington.

Today, key parts of the federal government, including the Justice and Energy departments, and the defense sector rely on this technology to protect highly sensitive information that, if leaked, “could be expected to have a severe or catastrophic adverse effect” on operations, assets and individuals, the government has said.

“This is not a happy story in terms of the security of the U.S.,” said Tony Sager, who spent more than three decades as a computer scientist at the National Security Agency and now is an executive at the nonprofit Center for Internet Security.

For years, the FedRAMP process has been equated with actual security, Sager said. ProPublica’s findings, he said, shatter that facade.

“This is not security,” he said. “This is security theater.”

ProPublica is exposing the government’s reservations about this popular product for the first time. We are also revealing Microsoft’s yearslong inability to provide the encryption documentation and evidence the federal reviewers sought.

The revelations come as the Justice Department ramps up scrutiny of the government’s technology contractors. In December, the department announced the indictment of a former employee of Accenture who allegedly misled federal agencies about the security of the company’s cloud platform and its compliance with FedRAMP’s standards. She has pleaded not guilty. Accenture, which was not charged with wrongdoing, has said that it “proactively brought this matter to the government’s attention” and that it is “dedicated to operating with the highest ethical standards.”

Microsoft has also faced questions about its disclosures to the government. As ProPublica reported last year, the company failed to inform the Defense Department about its use of China-based engineers to maintain the government’s cloud systems, despite Pentagon rules stipulating that “No Foreign persons may have” access to its most sensitive data. The department is investigating the practice, which officials say could have compromised national security.

Microsoft has defended its program as “tightly monitored and supplemented by layers of security mitigations,” but after ProPublica’s story published last July, the company announced that it would stop using China-based engineers for Defense Department work.

In response to written questions for this story and in an interview, Microsoft acknowledged the yearslong confrontation with FedRAMP but also said it provided “comprehensive documentation” throughout the review process and “remediated findings where possible.”

“We stand by our products and the comprehensive steps we’ve taken to ensure all FedRAMP-authorized products meet the security and compliance requirements necessary,” a spokesperson said in a statement, adding that the company would “continue to work with FedRAMP to continuously review and evaluate our services for continued compliance.”

But these days, ProPublica found, there aren’t many people left at FedRAMP to work with.

The program was an early target of the Trump administration’s Department of Government Efficiency, which slashed its staff and budget. Even FedRAMP acknowledges it is operating “with an absolute minimum of support staff” and “limited customer service.” The roughly two dozen employees who remain are “entirely focused on” delivering authorizations at a record pace, FedRAMP’s director has said. Today, its annual budget is just $10 million, its lowest in a decade, even as it has boasted record numbers of new authorizations for cloud products.

The consequence of all this, people who have worked for FedRAMP told ProPublica, is that the program now is little more than a rubber stamp for industry. The implications of such a downsizing for federal cybersecurity are far-reaching, especially as the administration encourages agencies to adopt cloud-based artificial intelligence tools, which draw upon reams of sensitive information.

The General Services Administration, which houses FedRAMP, defended the program, saying it has undergone “significant reforms to strengthen governance” since GCC High arrived in 2020. “FedRAMP’s role is to assess if cloud services have provided sufficient information and materials to be adequate for agency use, and the program today operates with strengthened oversight and accountability mechanisms to do exactly that,” a GSA spokesperson said in an emailed statement.

The agency did not respond to written questions regarding GCC High.

About two decades ago, federal officials predicted that the cloud revolution, providing on-demand access to shared computing via the internet, would usher in an era of cheaper, more secure and more efficient information technology.

Moving to the cloud meant shifting away from on-premises servers owned and operated by the government to those in massive data centers maintained by tech companies. Some agency leaders were reluctant to relinquish control, while others couldn’t wait to.

In an effort to accelerate the transition, the Obama administration issued its “Cloud First” policy in 2011, requiring all agencies to implement cloud-based tools “whenever a secure, reliable, cost-effective” option existed. To facilitate adoption, the administration created FedRAMP, whose job was to ensure the security of those tools.

FedRAMP’s “do once, use many times” system was intended to streamline and strengthen the government procurement process. Previously, each agency using a cloud service vetted it separately, sometimes applying different interpretations of federal security requirements. Under the new program, agencies would be able to skip redundant security reviews because FedRAMP authorization indicated that the product had already met standardized requirements. Authorized products would be listed on a government website known as the FedRAMP Marketplace.

On paper, the program was an exercise in efficiency. But in practice, the small FedRAMP team could not keep up with the flood of demand from tech companies that wanted their products authorized.

The slow approval process frustrated both the tech industry, eager for a share in the billions of federal dollars up for grabs, and government agencies that were under pressure to migrate to the cloud. These dynamics sometimes pitted the cloud industry and agency officials together against FedRAMP. The backlog also prompted many agencies to take an alternative path: performing their own reviews of the products they wanted to adopt, using FedRAMP’s standards.

It was through this “agency path” that GCC High entered the federal bloodstream, with the Justice Department paving the way. Initially, some Justice officials were nervous about the cloud and who might have access to its information, which includes highly sensitive court and law enforcement records, a Justice Department official involved in the decision told ProPublica. The department’s cybersecurity program required it to ensure that only U.S. citizens “access or assist in the development, operation, management, or maintenance” of its IT systems, unless a waiver was granted. Justice’s IT specialists recommended pursuing GCC High, believing it could meet the elevated security needs, according to the official, who spoke on condition of anonymity because they were not authorized to discuss internal matters.

Pursuant to FedRAMP’s rules, Microsoft had GCC High evaluated by a so-called third-party assessment organization, which is supposed to provide an independent review of whether the product has met federal standards. The Justice Department then performed its own evaluation of GCC High using those standards and ruled the offering acceptable.

By early 2020, Melinda Rogers, Justice’s deputy chief information officer, made the decision official and soon deployed GCC High across the department.

It was a milestone for all involved. Rogers had ushered the Justice Department into the cloud, and Microsoft had gained a significant foothold in the cutthroat market for the federal government’s cloud computing business.

Moreover, Rogers’ decision placed GCC High on the FedRAMP Marketplace, the government’s influential online clearinghouse of all the cloud providers that are under review or already authorized. Its mere mention as “in process” was a boon for Microsoft, amounting to free advertising on a website used by organizations seeking to purchase cloud services bearing what is widely seen as the government’s cybersecurity seal of approval.

That April, GCC High landed at FedRAMP’s office for review, the final stop on its bureaucratic journey to full authorization.

In theory, there shouldn’t have been much for FedRAMP’s team to do after the third-party assessor and Justice reviewed GCC High, because all parties were supposed to be following the same requirements.

But it was around this time that the Government Accountability Office, which investigates federal programs, discovered breakdowns in the process, finding that agency reviews sometimes were lacking in quality. Despite missing details, FedRAMP went on to authorize many of these packages. Acknowledging these shortcomings, FedRAMP began to take a harder look at new packages, a former reviewer said.

This was the environment in which Microsoft’s GCC High application entered the pipeline. The name GCC High was an umbrella covering many services and features within Office 365 that all needed to be reviewed. FedRAMP reviewers quickly noticed key material was missing.

The team homed in on what it viewed as a fundamental document called a “data flow diagram,” former members told ProPublica. The illustration is supposed to show how data travels from Point A to Point B — and, more importantly, how it’s protected as it hops from server to server. FedRAMP requires data to be encrypted while in transit to ensure that sensitive materials are protected even if they’re intercepted by hackers.

But when the FedRAMP team asked Microsoft to produce the diagrams showing how such encryption would happen for each service in GCC High, the company balked, saying the request was too challenging. So the reviewers suggested starting with just Exchange Online, the popular email platform.

“This was our litmus test to say, ‘This isn’t the only thing that’s required, but if you’re not doing this, we are not even close yet,’” said one reviewer who spoke on condition of anonymity because they were not authorized to discuss internal matters. Once they reached the appropriate level of detail, they would move from Exchange to other services within GCC High.

It was the kind of detail that other major cloud providers such as Amazon and Google routinely provided, members of the FedRAMP team told ProPublica. Yet Microsoft took months to respond. When it did, the former reviewer said, it submitted a white paper that discussed GCC High’s encryption strategy but left out the details of where on the journey data actually becomes encrypted and decrypted — so FedRAMP couldn’t assess that it was being done properly.

A Microsoft spokesperson acknowledged that the company had “articulated a challenge related to illustrating the volume of information being requested in diagram form” but “found alternate ways to share that information.”

Rogers, who was hired by Microsoft in 2025, declined to be interviewed. In response to emailed questions, the company provided a statement saying that she “stands by the rigorous evaluation that contributed to” her authorization of GCC High. A spokesperson said there was “absolutely no connection” between her hiring and the decisions in the GCC High process, and that she and the company complied with “all rules, regulations, and ethical standards.”

The Justice Department declined to respond to written questions from ProPublica.

As 2020 came to a close, a national security crisis hit Washington that underscored the consequences of cyber weakness. Russian state-sponsored hackers had been quietly working their way through federal computer systems for much of the year and vacuuming up sensitive data and emails from U.S. agencies — including the Justice Department.

At the time, most of the blame fell on a Texas-based company called SolarWinds, whose software provided hackers their initial opening and whose name became synonymous with the attack. But, as ProPublica has reported, the Russians leveraged that opening to exploit a long-standing weakness in a Microsoft product — one that the company had refused to fix for years, despite repeated warnings from one of its engineers. Microsoft has defended its decision not to address the flaw, saying that it received “multiple reviews” and that the company weighs a variety of factors when making security decisions.

In the aftermath, the Biden administration took steps to bolster the nation’s cybersecurity. Among them, the Justice Department announced a cyber-fraud initiative in 2021 to crack down on companies and individuals that “put U.S. information or systems at risk by knowingly providing deficient cybersecurity products or services, knowingly misrepresenting their cybersecurity practices or protocols, or knowingly violating obligations to monitor and report cybersecurity incidents and breaches.”

Deputy Attorney General Lisa Monaco said the department would use the False Claims Act to pursue government contractors “when they fail to follow required cybersecurity standards — because we know that puts all of us at risk.”

But if Microsoft felt any pressure from the SolarWinds attack or from the Justice Department’s announcement, it didn’t manifest in the FedRAMP talks, according to former members of the FedRAMP team.

The discourse between FedRAMP and Microsoft fell into a pattern. The parties would meet. Months would go by. Microsoft would return with a response that FedRAMP deemed incomplete or irrelevant. To bolster the chances of getting the information it wanted, the FedRAMP team provided Microsoft with a template, describing the level of detail it expected. But the diagrams Microsoft returned never met those expectations.

“We never got past Exchange,” one former reviewer said. “We never got that level of detail. We had no visibility inside.”

In an interview with ProPublica, John Bergin, the Microsoft official who became the government’s main contact, acknowledged the prolonged back-and-forth but blamed FedRAMP, equating its requests for diagrams to a “rock fetching exercise.”

“We were maybe incompetent in how we drew drawings because there was no standard to draw them to,” he said. “Did we not do it exactly how they wanted? Absolutely. There was always something missing because there was no standard.”

A Microsoft spokesperson said that without such a standard, “cloud providers were left to interpret the level of abstraction and representation on their own,” creating “inconsistency and confusion, not an unwillingness to be transparent.”

But even Microsoft’s own engineers had struggled over the years to map the architecture of its products, according to two people involved in building cloud services used by federal customers. At issue, according to people familiar with Microsoft’s technology, was the decades-old code of its legacy software, which the company used in building its cloud services.

One FedRAMP reviewer compared it to a “pile of spaghetti pies.” The data’s path from Point A to Point B, the person said, was like traveling from Washington to New York with detours by bus, ferry and airplane rather than just taking a quick ride on Amtrak. And each one of those detours represents an opportunity for a hijacking if the data isn’t properly encrypted.

Other major cloud providers such as Amazon and Google built their systems from the ground up, said Sager, the former NSA computer scientist, who worked with all three companies during his time in government.

Microsoft’s system is “not designed for this kind of isolation of ‘secure’ from ‘not secure,’” Sager said.

A Microsoft spokesperson acknowledged the company faces a unique challenge but maintained that its cloud products meet federal security requirements.

“Unlike providers that started later with a narrower product scope, Microsoft operates one of the broadest enterprise and government platforms in the world, supporting continuity for millions of customers while simultaneously modernizing at scale,” the spokesperson said in emailed responses. “That complexity is not ‘spaghetti,’ but it does mean the work of disentangling, isolating, and hardening systems is continuous.”

The spokesperson said that since 2023, Microsoft has made “security‑first architectural redesign, legacy risk reduction, and stronger isolation guarantees a top, company‑wide priority.”

The FedRAMP team was not the only party with reservations about GCC High. Microsoft’s third-party assessment organizations also expressed concerns.

The firms are supposed to be independent but are hired and paid by the company being assessed. Acknowledging the potential for conflicts of interest, FedRAMP has encouraged the assessment firms to confidentially back-channel to its reviewers any negative feedback that they were unwilling to bring directly to their clients or reflect in official reports.

In 2020, two third-party assessors hired by Microsoft, Coalfire and Kratos, did just that. They told FedRAMP that they were unable to get the full picture of GCC High, a former FedRAMP reviewer told ProPublica.

“Coalfire and Kratos both readily admitted that it was difficult to impossible to get the information required out of Microsoft to properly do a sufficient assessment,” the reviewer told ProPublica.

The back channel helped surface cybersecurity issues that otherwise might never have been known to the government, people who have worked with and for FedRAMP told ProPublica. At the same time, they acknowledged its existence undermined the very spirit and intent of having independent assessors.

A spokesperson for Coalfire, the firm that initially handled the GCC High assessment, requested written questions from ProPublica, then declined to respond.

A spokesperson for Kratos, which replaced Coalfire as the GCC High assessor, declined an interview request. In an emailed response to written questions, the spokesperson said the company stands by its official assessment and recommendation of GCC High and “absolutely refutes” that it “ever would sign off on a product we were unable to fully vet.” The company “has open and frank conversations” with all customers, including Microsoft, which “submitted all requisite diagrams to meet FedRAMP-defined requirements,” the spokesperson said.

Kratos said it “spent extensive time working collaboratively with FedRAMP in their review” and does not consider such discussions to be “backchanneling.”

FedRAMP, however, was dissatisfied with Kratos’ ongoing work and believed the firm “should be pushing back” on Microsoft more, the former reviewer said. It placed Kratos on a “corrective action plan,” which could eventually result in loss of accreditation. The company said it did not agree with FedRAMP’s action but provided “additional trainings for some internal assessors” in response to it.

The Microsoft spokesperson told ProPublica the company has “always been responsive to requests” from Kratos and FedRAMP. “We are not aware of any backchanneling, nor do we believe that backchanneling would have been necessary given our transparency and cooperation with auditor requests,” the spokesperson said.

In response to questions from ProPublica about the process, the GSA said in an email that FedRAMP’s system “does not create an inherent conflict of interest for professional auditors who meet ethical and contractual performance expectations.”

GSA did not respond to questions about back-channeling but said the “correct process” is for a third-party assessor to “state these problems formally in a finding during the security assessment so that the cloud service provider has an opportunity to fix the issue.”

The back-and-forth between the FedRAMP reviewers and Microsoft’s team went on for years with little progress. Then, in the summer of 2023, the program’s interim director, Brian Conrad, got a call from the White House that would alter the course of the review.

Chinese state-sponsored hackers had infiltrated GCC, the lower-cost version of Microsoft’s government cloud, and stolen data and emails from the commerce secretary, the U.S. ambassador to China and other high-ranking government officials. In the aftermath, Chris DeRusha, the White House’s chief information security officer, wanted a briefing from FedRAMP, which had authorized GCC.

The decision predated Conrad’s tenure, but he told ProPublica that he left the conversation with several takeaways. First, FedRAMP must hold all cloud providers — including Microsoft — to the same standards. Second, he had the backing of the White House in standing firm. Finally, FedRAMP would feel the political heat if any cloud service with a FedRAMP authorization were hacked.

DeRusha confirmed Conrad’s account of the phone call but declined to comment further.

Within months, Conrad informed Microsoft that FedRAMP was ending the engagement on GCC High.

“After three years of collaboration with the Microsoft team, we still lack visibility into the security gaps because there are unknowns that Microsoft has failed to address,” Conrad wrote in an October 2023 email. This, he added, was not for FedRAMP’s lack of trying. Staffers had spent 480 hours of review time, had conducted 18 “technical deep dive” sessions and had numerous email exchanges with the company over the years. Yet they still lacked the data flow diagrams, crucial information “since visibility into the encryption status of all data flows and stores is so important,” he wrote.

If Microsoft still wanted FedRAMP authorization, Conrad wrote, it would need to start over.

A FedRAMP reviewer, explaining the decision to the Justice Department, said the team was “not asking for anything above and beyond what we’ve asked from every other” cloud service provider, according to meeting minutes reviewed by ProPublica. But the request was particularly justified in Microsoft’s case, the reviewer told the Justice officials, because “each time we’ve actually been able to get visibility into a black box, we’ve uncovered an issue.”

“We can’t even quantify the unknowns, which makes us very uncomfortable,” the reviewer said, according to the minutes.

Microsoft was furious. Failing to obtain authorization and starting the process over would signal to the market that something was wrong with GCC High. Customers were already confused and concerned about the drawn-out review, which had become a hot topic in an online forum used by government and technology insiders. There, Wakeman, the Microsoft cybersecurity architect, deflected blame, saying the government had been “dragging their feet on it for years now.”

Meanwhile, to build support for Microsoft’s case, Bergin, the company’s point person for FedRAMP and a former Army official, reached out to government leaders, including one from the Justice Department.

The Justice official, who spoke on condition of anonymity because they were not authorized to discuss the matter, said Bergin complained that the delay was hampering Microsoft’s ability “to get this out into the market full sail.” Bergin then pushed the Justice Department to “throw around our weight” to help secure FedRAMP authorization, the official said.

That December, as the parties gathered to hash things out at GSA’s Washington headquarters, Justice did just that. Rogers, who by then had been promoted to the department’s chief information officer, sat beside Bergin — on the opposite side of the table from Conrad, the FedRAMP director.

Rogers and her Justice colleagues had a stake in the outcome. Since authorizing and deploying GCC High, she had received accolades for her work modernizing the department’s IT and cybersecurity. But without FedRAMP’s stamp of approval, she would be the government official left holding the bag if GCC High were involved in a serious hack. At the same time, the Justice Department couldn’t easily back out of using GCC High because once a technology is widely deployed, pulling the plug can be costly and technically challenging. And from its perspective, the cloud was an improvement over the old government-run data centers.

Shortly after the meeting kicked off, Bergin interrupted a FedRAMP reviewer who had been presenting PowerPoint slides. He said the Justice Department and third-party assessor had already reviewed GCC High, according to meeting minutes. FedRAMP “should essentially just accept” their findings, he said.

Then, in a shock to the FedRAMP team, Rogers backed him up and went on to criticize FedRAMP’s work, according to two attendees.

In its statement, Microsoft said Rogers maintains that FedRAMP’s approach “was misguided and improperly dismissed the extensive evaluations performed by DOJ personnel.”

Bergin did not dispute the account, telling ProPublica that he had been trying to argue that it is the purview of third-party assessors such as Kratos — not FedRAMP — to evaluate the security of cloud products. And because FedRAMP must approve the third-party assessment firms, the program should have taken its issues up with Kratos.

“When you are the regulatory agency who determines who the auditors are and you refuse to accept your auditors’ answers, that’s not a ‘me’ problem,” Bergin told ProPublica.

The GSA did not respond to questions about the meeting. The Justice Department declined to comment.

If there was any doubt about the role of FedRAMP, the White House issued a memorandum in the summer of 2024 that outlined its views. FedRAMP, it said, “must be capable of conducting rigorous reviews” and requiring cloud providers to “rapidly mitigate weaknesses in their security architecture.” The office should “consistently assess and validate cloud providers’ complex architectures and encryption schemes.”

But by that point, GCC High had spread to other federal agencies, with the Justice Department’s authorization serving as a signal that the technology met federal standards.

It also spread to the defense sector, since the Pentagon required that cloud products used by its contractors meet FedRAMP standards. While it did not have FedRAMP authorization, Microsoft marketed GCC High as meeting the requirements, selling it to companies such as Boeing that research, develop and maintain military weapons systems.

But with the FedRAMP authorization up in the air, some contractors began to worry that by using GCC High, they were out of compliance. That could threaten their contracts, which, in turn, could impact Defense Department operations. Pentagon officials called FedRAMP to inquire about the authorization stalemate.

The Defense Department acknowledged but did not respond to written questions from ProPublica.

Rogers also kept pressing FedRAMP to “get this thing over the line,” former employees of the GSA and FedRAMP said. It was the “opinion of the staff and the contractors that she simply was not willing to put heat to Microsoft on this” and that the Justice Department “was too sympathetic to Microsoft’s claims,” Eric Mill, then GSA’s executive director for cloud strategy, told ProPublica.

In the summer of 2024, FedRAMP hired a new permanent director, government technology insider Pete Waterman. Within about a month of taking the job, he restarted the office’s review of GCC High with a new team, which put aside the debate over data flow diagrams and instead attempted to examine evidence from Microsoft. But these reviewers soon arrived at the same conclusion, with the team’s leader complaining about “getting stiff-armed” by Microsoft.

“He came back and said, ‘Yeah, this thing sucks,’” Mill recalled.

While the team was able to work through only two of the many services included in GCC High, Exchange Online and Teams, that was enough for it to identify “issues that are fundamental” to risk management, including “timely remediation of vulnerabilities and vulnerability scanning,” according to a summary of the team’s findings reviewed by ProPublica.

Those issues, as well as a lack of “proper detailed security documentation” from Microsoft, limit “visibility and understanding of the system” and “impair the ability to make informed risk decisions.”

The team concluded, “There is a lack of confidence in assessing the system’s overall security posture.”

A Microsoft spokesperson said in a statement that the company “never received this feedback in any of its communications with FedRAMP.”

When ProPublica read the findings to Bergin, the Microsoft liaison, he said he was surprised.

“That’s pretty damning,” Bergin said, adding that it sounded like language that “would’ve generally been associated with a finding of ‘not worthy.’ If an assessor wrote that, I would be nervous.”

Despite the findings, to the FedRAMP team, turning Microsoft down didn’t seem like an option. “Not issuing an authorization would impact multiple agencies that are already using GCC-H,” the summary document said. The team determined that it was a “better value” to issue an authorization with conditions for continued government oversight.

While authorizations with oversight conditions weren’t unusual, arriving at one under these circumstances was. GCC High reviewers saw problems everywhere, both in what they were able to evaluate and what they weren’t. To them, most of the package remained a vast wilderness of untold risk.

Nevertheless, FedRAMP and Microsoft reached an agreement, and the day after Christmas 2024, GCC High received its FedRAMP authorization. FedRAMP appended a cover report to the package laying out its deficiencies and noting it carried unknown risks, according to people familiar with the report.

It emphasized that agencies should carefully review the package and engage directly with Microsoft on any questions.

Microsoft told ProPublica that it has met the conditions of the agreement and has “stayed within the performance metrics required by FedRAMP” to ensure that “risks are identified, tracked, remediated, and transparently communicated.”

But under the Trump administration, there aren’t many people left at FedRAMP to check.

While the Biden-era guidance said FedRAMP “must be an expert program that can analyze and validate the security claims” of cloud providers, the GSA told ProPublica that the program’s role is “not to determine if a cloud service is secure enough.” Rather, it is “to ensure agencies have sufficient information to make these risk decisions.”

The problem is that agencies often lack the staff and resources to do thorough reviews, which means the whole system is leaning on the claims of the cloud companies and the assessments of the third-party firms they pay to evaluate them. Under the current vision, critics say, FedRAMP has lost the plot.

“FedRAMP’s job is to watch the American people’s back when it comes to sharing their data with cloud companies,” said Mill, the former GSA official, who also co-authored the 2024 White House memo. “When there’s a security issue, the public doesn’t expect FedRAMP to say they’re just a paper-pusher.”

Meanwhile, at the Justice Department, officials are finding out what FedRAMP meant by the “unknown unknowns” in GCC High. Last year, for example, they discovered that Microsoft relied on China-based engineers to service their sensitive cloud systems despite the department’s prohibition against non-U.S. citizens assisting with IT maintenance.

Officials learned about this arrangement — which was also used in GCC High — not from FedRAMP or from Microsoft but from a ProPublica investigation into the practice, according to the Justice employee who spoke with us.

A Microsoft spokesperson acknowledged that the written security plan for GCC High that the company submitted to the Justice Department did not mention foreign engineers, though he said Microsoft did communicate that information to Justice officials before 2020. Nevertheless, Microsoft has since ended its use of China-based engineers in government systems.

Former and current government officials worry about what other risks may be lurking in GCC High and beyond.

The GSA told ProPublica that, in general, “if there is credible evidence that a cloud service provider has made materially false representations, that matter is then appropriately referred to investigative authorities.”

Ironically, the ultimate arbiter of whether cloud providers or their third-party assessors are living up to their claims is the Justice Department itself. The recent indictment of the former Accenture employee suggests it is willing to use this power. In a court document, the Justice Department alleges that the ex-employee made “false and misleading representations” about the cloud platform’s security to help the company “obtain and maintain lucrative federal contracts.” She is also accused of trying to “influence and obstruct” Accenture’s third-party assessors by hiding the product’s deficiencies and telling others to conceal the “true state of the system” during demonstrations, the department said. She has pleaded not guilty.

There is no public indication that such a case has been brought against Microsoft or anyone involved in the GCC High authorization. The Justice Department declined to comment. Monaco, the deputy attorney general who launched the department’s initiative to pursue cybersecurity fraud cases, did not respond to requests for comment.

She left her government position in January 2025. Microsoft hired her to become its president of global affairs.

A company spokesperson said Monaco’s hiring complied with “all rules, regulations, and ethical standards” and that she “does not work on any federal government contracts or have oversight over or involvement with any of our dealings with the federal government.”

Filed Under: cloud computing, fedramp, gcc high, gsa, security

Companies: microsoft

Activision is ending Call of Duty: Warzone support for PS4 and Xbox One later this year, drawing a line under the battle royale’s last-gen era at a moment when the cost of upgrading to current hardware has risen sharply for players who have held off.

The game will be removed from PS4 and Xbox One storefronts on 4 June and will no longer be available to download, with Activision removing the in-game store on both platforms on 25 June before Warzone becomes fully unplayable once Call of Duty: Modern Warfare 4 season 1 begins later this year.

The timing adds friction for remaining last-gen players, as both Sony and Microsoft have raised console prices over the past year, leaving the PS5 and Xbox Series X each sitting $150 above their original $499 launch prices.

Activision announced the Warzone changes on the same day it revealed Call of Duty: Modern Warfare 4, which will launch on PS5, Xbox Series S and X, and Nintendo Switch 2, marking the first Call of Duty title to appear on a Nintendo platform following the 10-year deal Microsoft agreed with Nintendo as part of its Activision Blizzard acquisition.

Players on PS4 and Xbox One will need to move to a PS5 or Xbox Series S or X to continue playing Warzone once season 6 of Call of Duty: Black Ops 7 concludes, with Activision confirming the full cutoff is tied to the Modern Warfare 4 season 1 launch window.

The deprecation reflects a broader industry shift away from last-gen hardware, with developers increasingly unwilling to maintain split builds across console generations as the PS4 and Xbox One user base continues to shrink more than four years after their successors launched.

All of that remains subject to change in terms of exact timing, however, with Activision yet to confirm a specific date for when Modern Warfare 4 season 1 will begin and last-gen support will officially end for those already playing.

The US Space Force awarded SpaceX a $4.16 billion contract on Friday to build satellites that track foreign aircraft and missiles. The programme is called Space-Based Advanced Moving Target Indicator, or SB-AMTI. It is part of the Trump administration’s $185 billion Golden Dome missile defence initiative.

Earlier in the week, on Tuesday, the Space Force awarded SpaceX $2.29 billion for the Space Data Network Backbone, a secure communications layer built on Starshield satellites. Combined, SpaceX now holds approximately $6.45 billion in Golden Dome contracts. That figure exceeds the combined prototype awards given to every other company in the programme.

The AMTI satellites are designed as an interconnected system combining space-based sensors, secure communications links, and AI-enabled ground processing. The system will detect, track, and alert for airborne threats from orbit. The US has historically relied on ground-based sensors and military aircraft for this function.

Placing detection capabilities in space eliminates potential blind spots that ground-based systems cannot cover. The Space Force described the architecture as designed to “drive closer cooperation across the government space industrial base.” SpaceX must integrate the AMTI sensors with the data transport backbone it is already building under the separate $2.29 billion contract.

The scale of SpaceX’s Golden Dome position is unprecedented for a commercial contractor. The programme has distributed more than $3.2 billion in prototype contracts across SpaceX and 11 other firms, including Anduril, Lockheed Martin, Northrop Grumman, Raytheon, and True Anomaly. SpaceX’s $4.16 billion AMTI contract alone exceeds that entire prototype pool.

SpaceX filed its IPO prospectus last week, targeting a valuation of more than $1.75 trillion. The company is expected to raise approximately $75 billion in what would be the largest IPO in history. Every new defence contract adds to the revenue narrative that underpins the listing.

The timing is notable. Awarding two major Golden Dome contracts in the same week as a Starship V3 test flight and IPO roadshow preparation creates a cadence that looks orchestrated to maximise pre-IPO momentum. SpaceX held more than $22 billion in government contracts as of 2024. The Golden Dome awards add meaningfully to that base.

The Golden Dome programme’s total cost has risen to $185 billion, up $10 billion from the original estimate, after the programme director approved an acceleration of space-based capabilities in March. The fiscal 2027 budget request includes initial Golden Dome funding. Full-scale procurement is expected to begin post-2028.

True Anomaly raised $650 million in April after being selected for Golden Dome space-based interceptor prototypes. Anduril raised $5 billion at a $61 billion valuation. Both are working on separate Golden Dome layers. But neither holds a position comparable to SpaceX’s combined sensing, tracking, and communications role.

The conflict-of-interest concerns that have surrounded SpaceX’s government contracting are amplified by the Golden Dome awards. Elon Musk is simultaneously the largest financial backer of the sitting president, the CEO of the company receiving the contracts, and the owner of a social media platform that shapes public discourse about the programme. The IPO prospectus acknowledges government contract risk but does not address the political dimensions directly.

Friday’s Starship V3 test flight demonstrated that SpaceX can deploy satellites from the vehicle, even though the Super Heavy booster was destroyed after separation. The AMTI constellation will eventually require launch capacity that only Starship can provide at scale. The contract, the IPO, and the rocket programme are three legs of the same strategy.

Two contracts, $6.45 billion, four days. SpaceX is not just participating in Golden Dome. It is becoming the programme’s commercial backbone. Whether that concentration of national security infrastructure in a single pre-IPO company is a strategic advantage or a systemic risk is a question the Space Force has implicitly answered by signing the contracts. The market will answer it again when the IPO prices in June.

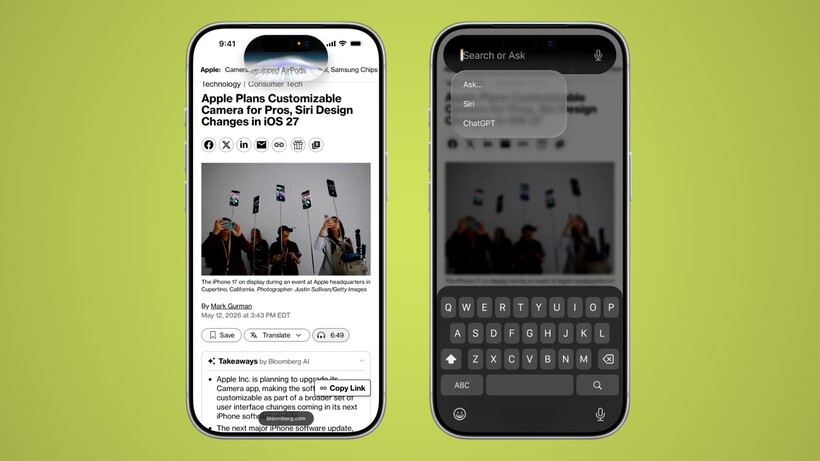

Apple is preparing to overhaul Siri at WWDC 2026 in ways that go well beyond a simple feature update, and we’ve just had our first look at the redesigned UI.

Bloomberg’s Mark Gurman has published an early preview of the company’s redesign of the iPhone’s interface, placing its Gemini-powered AI agent at the centre of everyday use.

The redesign moves Siri into the iPhone’s Dynamic Island, where it will remain accessible via voice, the power button, or a new downward swipe from the top centre of the screen that opens a “Search or Ask” interface drawing on elements from iOS 26’s existing Search experience.

That interface brings together familiar features like Siri Suggestions alongside new functionality, with Gurman reporting it will support app launches, text messages, calendar appointments, note searches, and more, with results surfacing in a rich text card that expands directly from the Dynamic Island.

Swiping further down opens a full chatbot-style conversation view inside a dedicated Siri app, which Apple intends to position as a direct competitor to ChatGPT and Claude, supporting text and voice input alongside photo and document uploads and persistent conversation history.

To accommodate Siri’s new prominence, Apple is moving Notification Centre access to a pull-down from the top left of the screen, a small but consequential shift that reflects how central the assistant has become to the iPhone’s navigation logic.

Camera and Photos also see significant changes, with a new mode set to replace Visual Intelligence by combining Google reverse image search with third-party AI analysis, while the Photos app gains Reframe and Extend tools that use AI to alter image perspective or generate content beyond a photo’s existing frame.

Underpinning all of it is a Siri that can search the web and draw on-screen context and personal data to complete tasks, with Gurman noting the assistant will be able to cross-reference a user’s calendar availability before scheduling anything.

All of that remains subject to change, however, with Gurman noting Apple tests multiple designs internally and the final version shown at WWDC on 9 June could differ from what Bloomberg has illustrated, with a release expected as early as September.

The European Commission has announced its second fine ever against an international company for violating the Digital Services Act. Temu, the controversial Chinese online marketplace for low-cost products, was found to have played a role in the sale of illegal goods that could have harmed consumers in the European Union.

AI is everywhere now, or at least that is what the industry keeps telling us. It is in browsers, search engines, image editors, office suites, developer tools, Windows, phones, and soon enough, probably your toaster. But there is a difference between AI being available and AI becoming part of your…

The AI movie Dreams of Violets is the creation of Ash Koosha and his brother Pooya, who directed, wrote, and produced it through their production company, Fountain 0. Ash was in London during production, and the movie took about three months to make on a budget of just $2,000.

Everything was created using AI. Ash first recorded temporary voices for the characters, then used various methods to turn text into animated sequences. Kling AI turned still images into video footage, with the help of Claude. The twin brothers also used their own technology at Fountain 0 to keep the characters consistent across scenes and to make camera movements look natural.

The story is set in Tehran in January 2026. It is based on actual reports, images, and accounts from people who witnessed the protests, which the authorities met with violent force, resulting in major bloodshed. The film tells the story of five strangers who find themselves in the same dead-end alley before dawn, trapped between forces closing in on them. A soldier stumbles across them, while a child named Amir watches over them from a window in his wheelchair.

Ash Koosha said the project was very personal for him, because he and his brother had to flee Iran in 2009. News from the country matters all the more now, he said, because trustworthy reports are hard to come by when there is no communication and everything around you is unknown. The movie is fiction based on reality, because Ash wanted to concentrate on the human side of events rather than on the chaos itself.

The Tribeca Festival selected Dreams of Violets for its main schedule, and it will screen in New York on June 10th as part of a run that begins June 3rd and ends June 14th. Festival co-founder Jane Rosenthal was amazed by how the brothers blended new technology with the strength of the stories being told, and she believes it is an excellent example of technology being used to deliver stories that we really need to hear right now.

I used to say that all my best days started with waking up in a sleeping bag. Waking up in a sleeping bag usually means you’re out there somewhere, doing something interesting. In the past couple of years, though, I’ve found myself waking up out there to wonderful days spent doing interesting things, but without a sleeping bag in sight. Instead, I’m sleeping in what thru-hikers and ultralight redditors call a quilt.

This is not a quilt like the one your grandmother gave you. Backpacking quilts are made of nylon and filled with down like a traditional sleeping bag. The difference is that they lie over you like, well, a quilt, rather than wrapping all the way around you like a sleeping bag. The benefit is twofold: A quilt is lighter, meaning less weight to carry in your pack, and, as an added bonus, I sleep better than I ever have in the backcountry.

Let’s face it, there’s a reason backpackers have nicknamed sleeping bags “mummy bags.” They’re constricting at the best of times, suffocating at the worst. I don’t know about you, but for me, there’s nothing about a mummy that I want to emulate, not even when I’m sleeping. I was, therefore, as well primed as anyone to jump on the quilt bandwagon when it really began to take off a few years ago. And yet, I didn’t. Perhaps it was something like Stockholm Syndrome; I’d finally accepted the mummy thing and was, honestly, a little nervous to give up my sleeping bag for a quilt. But then I did, and I’m never coming back. Or mostly never coming back.

But first, what’s the difference between a sleeping bag and a quilt? As briefly noted above, the quilt goes on top of you, rather than all around you like a sleeping bag. Consider the burrito vs. the taco. In this case, the sleeping bag/quilt is the tortilla and you are the filling. Would you rather be wrapped up like a burrito? Sleeping bag. Prefer the obviously superior experience of the taco, with its warm, soft tortilla lying on top of you? You’re (potentially) a quilt person.

The science here is that when you lie down in your traditional sleeping bag, the weight of your body forces most of the down fill off to the sides. The down left under you is so compacted you’re not getting any real insulation from it anyway, so why carry that extra nylon and down around? Enter the quilt. Quilts get rid of the bottom layer of useless nylon and down, and lie over you like the quilt on your bed at home. Quilts typically weigh less than sleeping bags and pack down smaller, making them very popular with backpackers trying to reduce weight and save space.

While I think the weight savings make quilts a great choice for anyone looking to carry a lighter load, how much you love a quilt over a sleeping bag will depend somewhat on how you sleep. If you’re a taco person, and the mere thought of being wrapped up like a burrito in a sleeping bag gives you the sweats, the quilt is your happy, happy future. Or, if you like to curl up in a ball, move around a lot at night, are a side sleeper, or want to share covers with your tent mate, then again, the quilt is for you.

If you rarely move around at night, sleeping somewhat like a mummy, then you might not mind a traditional sleeping bag and wouldn’t share my enthusiasm for the quilt.

Photo credit: Sonny Dickson

Images shared this week by longtime leaker Sonny Dickson give the first clear look at the finishes Apple appears ready to offer on the iPhone 18 Pro. Four dummy units sit side by side, each finished in a different shade and built to the same overall shape as last year’s Pro model. Four color choices stand out clearly in the shared photos.

Dark Cherry appears to be a deep, rich color with an almost wine-like depth that can shift to a purple-tinged hue depending on how the light hits it. Light Blue gives off a gentler, more airy vibe, similar to the misty hue of the former Sierra Blue finish or finishes from earlier eras. Silver is back in a very straightforward metal finish, while Dark Gray steps up as the new black option, with its dark finish sitting very close to the deep blue from the iPhone 17 Pro and the classic Black Titanium look from the iPhone 16 Pro days. Anyone who has missed the black option in recent years should be very happy to hear it is back.

A closer look at the camera area reveals some subtle changes. The rectangular glass strip beneath the primary camera bar has been tweaked to match the surrounding metal frame much better than before, and it now rests somewhat higher on the body. These changes are minimal, but they are quite helpful to case manufacturers, who get the exact measurements they need to start work right away. The rest of each dummy unit follows the same layout as the 17 Pro, with button placement, port locations, and proportions all appearing accurate.

As is customary, dummy units like these exist primarily for accessory makers to test their designs and ensure fit and finish before receiving real production units. History suggests that Apple’s color options are occasionally trimmed shortly before they hit shops, so one of these hues (Dark Cherry, Light Blue, or the original black) may yet be removed before the phones arrive in the autumn.

The iPhone 17 Pro was available in Cosmic Orange, Deep Blue, and Silver, but the new version replaces the deep blue with Dark Cherry and Light Blue, as well as the new Black. These early versions already provide a solid indication of what to expect, allowing case makers to finalize their designs and purchasers to determine which one will best suit them months before the official release. With a September launch date now seeming certain, we have a better sense of what colors to expect, although we wouldn’t be surprised if the Ultra is released before the big day.

Many simulator-style games have their own dedicated controllers, from racing sims with pedals, steering wheels, and shifters to flight sims with their own joysticks and sometimes entire cockpits. But for how prevalent horse riding is in a wide array of video games, from Red Dead Redemption to Zelda to The Witcher, we’re not sure we’ve ever seen a controller built specifically for riding virtual horses, at least not until [Squalius] built this horse riding controller.

[Squalius] has been working through a few prototypes of his OpenRidingController and has a fairly complete riding setup now, complete with reins and stirrups for controlling one’s in-game companions. The reins are attached to infrared distance sensors which can send analog signals to the game for controlling steering, and are attached to each other through an elastic band to provide a more realistic feeling when both are pulled to ask the horse to stop. The stirrups can be pulled to tell the horse to move at various speeds, and although a horse doesn’t need to be commanded to jump in real life, this controller provides a method for jumping an in-game horse as well.

Although we’ve mentioned a few games famous for using horses already, [Squalius] also added a handheld joystick so his controller can be used in less-conventional games like Minecraft, where the player can use a mod to add a horse, and he has even used the controller to play DOOM. As its name suggests, it’s also open source, and the code for it is all available on the project’s GitHub page. It’s a type of controller we didn’t realize we were missing until now, and perhaps we would have expected to see one before something like a controller meant for a virtual trombone.

Thanks to [Keith] for the tip!

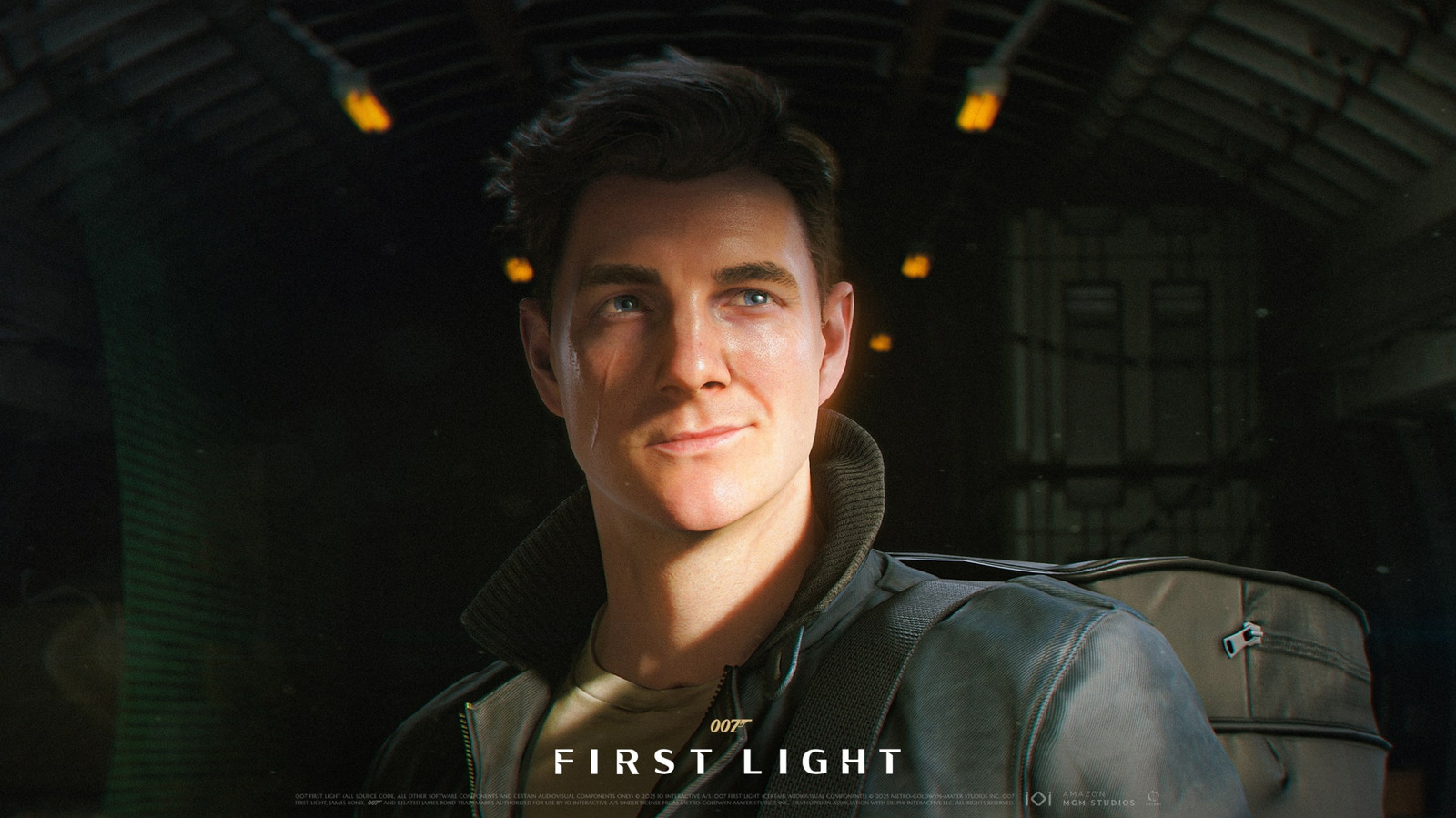

IOI’s Hitman roots are clear from the very beginning of First Light, but they become even more apparent once you reach the end of spy school. First you have to infiltrate a crowded night club to track down a suspect, which hearkens back to a handful of classic Hitman levels. The game’s scale becomes truly apparent in the second mission, where you’re looking for a former MI6 agent in a boutique hotel (which also happens to be holding a chess tournament). The hotel itself is massive, immaculately designed and filled with dozens of guests and attendees, many of whom are involved in scripted routines or conversations. This one portion of First Light’s clockwork pocket universe feels more alive than many soulless open world games.

It’s not quite an immersive sim like the Dishonored games, but in true Hitman fashion, you can accomplish your objectives in multiple ways. Just don’t expect to go in guns blazing. In most scenarios, First Light’s “License to Kill” feature prohibits you from firing on enemies unless they pull their guns first. It’s really just a reminder that you’re not playing a cold-blooded assassin, and it encourages you to spend your time stealthily moving around environments and taking down enemies silently.

Thankfully, the game is more forgiving when you blow your cover than Hitman, where doing so could alert the entire map and force you to reload a save. If an enemy spots Bond, you can just beat them down or slam them into nearby surfaces. Things get more complicated if multiple enemies see you, but you can still proceed with your mission once you take care of them.

While First Light remains relatively grounded most of the time, it wouldn’t be a Bond game without a few elaborate set pieces. You’ll find yourself parkouring through London skylines (a nod to Casino Royale’s opening), trading blows while crashing through multiple floors, and plowing through cars in a garbage truck. There are also a handful of shootouts where you’ll have to mow down dozens of enemies, which offer visceral thrills but also quickly feel repetitive.

IOI has clearly spent more time thinking about stealth than large-scale action, and it’s sometimes tough to tell where you need to go when 20 people are shooting at you. I replayed the first major shootout, which took place in an airport, around 10 times before I found a survivable pathway. (For the easily frustrated, you can also reduce your difficulty level on the fly.)

Perhaps it was just a result of flying through the game for this review, but it was hard to ignore pacing issues throughout First Light. As the action and nefarious conspiracy escalate, the game gets bogged down by extended stealth sequences, fetch quests and half-hearted boss fights. They don’t ruin First Light’s overall experience, but it definitely feels like it could use some narrative tightening.
