Logitech G Pro X2 Superstrike: Two-minute review

I should probably preface this review by saying that I’ve long been a fan of Logitech’s mice, having used a G502 Lightspeed Wireless as my daily driver for more than five years. In fact, I love it so much that when mine finally gave up the ghost back in 2024, I literally just bought another identical model.

If you’re familiar with my work, you might suspect a slight degree of bias in this review – and I’m sure that the coveted five-star rating above won’t assuage those suspicions.

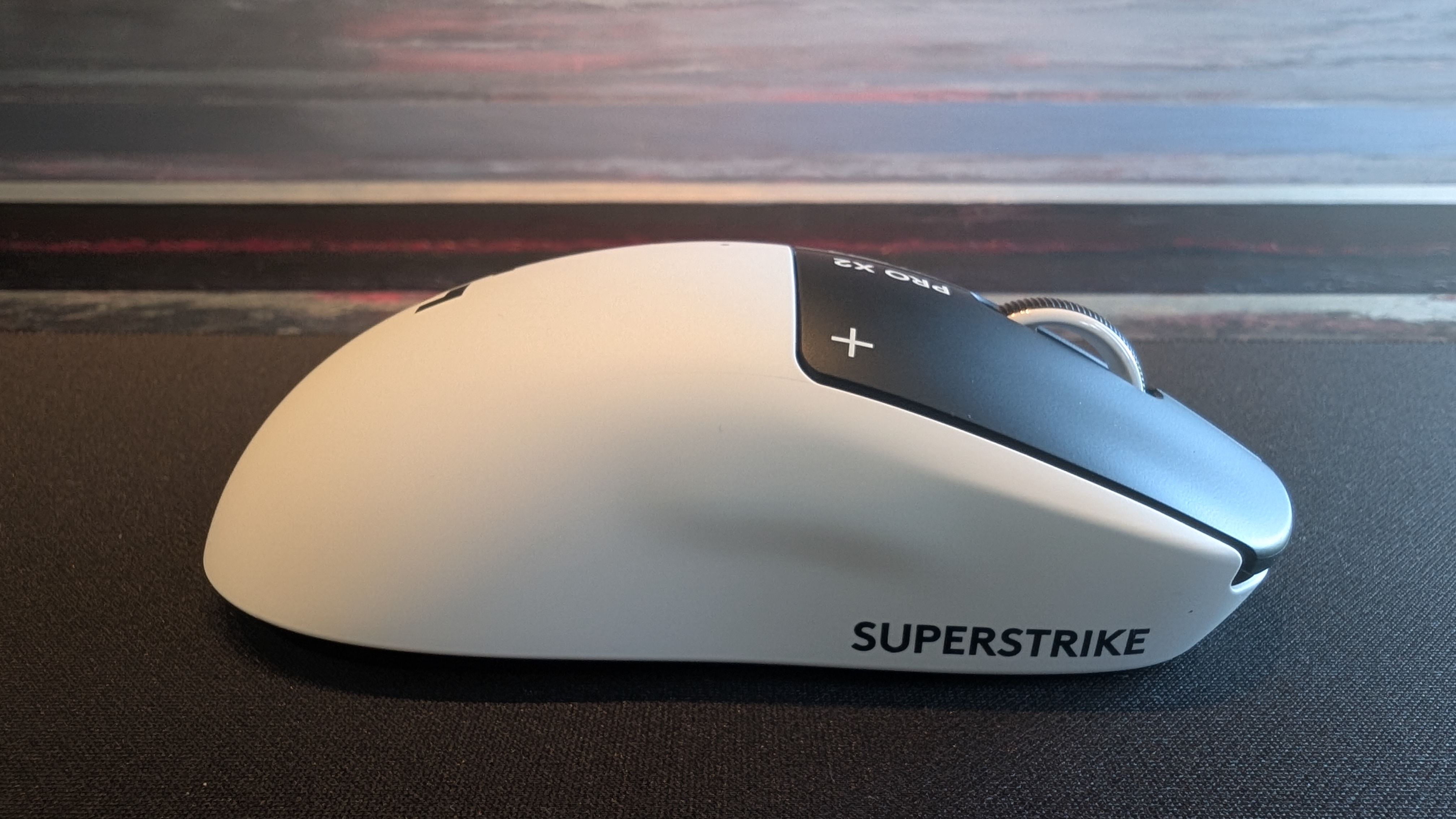

But honestly? I wasn’t expecting much from the Logitech G Pro X2 Superstrike. The design is pretty simple, just a near-symmetrical chassis with two thumb buttons and a basic scroll wheel, plus a mildly futuristic aesthetic that you’ll either find appealing or obnoxious. I’ve seen a hundred mice like this before, I thought upon unboxing it for the first time.

That was before I knew about HITS. The ‘haptic inductive trigger system’ is the main selling point of the Pro X2 Superstrike, and it’s really something special: user-tunable actuation for the two main mouse buttons, with rapid trigger reset points to minimize latency. In other words, you can personally tweak the tactility of these clickers to exactly how you want them to feel, and it’s frankly awesome. It’s reminiscent of the satisfying feedback of Hall effect buttons, and the mechanics behind it are similar as well, but I’ll get into that later on in this review.

HITS aside (but really, these buttons absolutely rock), the Logitech G Pro X2 Superstrike is just a staggeringly competent piece of hardware design. The rounded, symmetrical shape is very comfortable in the hand, and the total package weight of just 61g combined with smooth-gliding UHMWPE feet makes it feel great to use even on lower sensitivities. But with a 44,000 DPI sensor and 8K polling rate mode, it’s well-equipped for fans of twitchy online shooters.

I’m just gonna say it: this is straight up one of the best gaming mice money can buy right now. Speaking of money…

Logitech G Pro X2 Superstrike: Price & availability

- How much does it cost? $179.99 / £159.99 / AU$299.95

- When is it available? Available now

- Where can you get it? Available globally

Yeah, this hurts a little. Clocking in at $179.99 / £159.99 / AU$299.95, there’s no avoiding the fact that a lot of PC gamers will be priced out of enjoying the perfect clicks of the Logitech G Pro X2 Superstrike.

It’s similarly priced to the Razer DeathAdder V4 Pro, which we featured in our list of the best mice, and is a comparable premium esports-focused mouse with a simple, lightweight design – though it uses optical switches instead, which are durable and responsive but a lot noisier.

However – and it’s not often that I say this – I do actually think this is a product that manages to fully justify its price tag. The Superstrike is something entirely new, but even aside from that, it’s simply an excellent product in almost every way.

Logitech G Pro X2 Superstrike: Design

- Simple but comfortable design

- Robust build quality

- No left-handed version

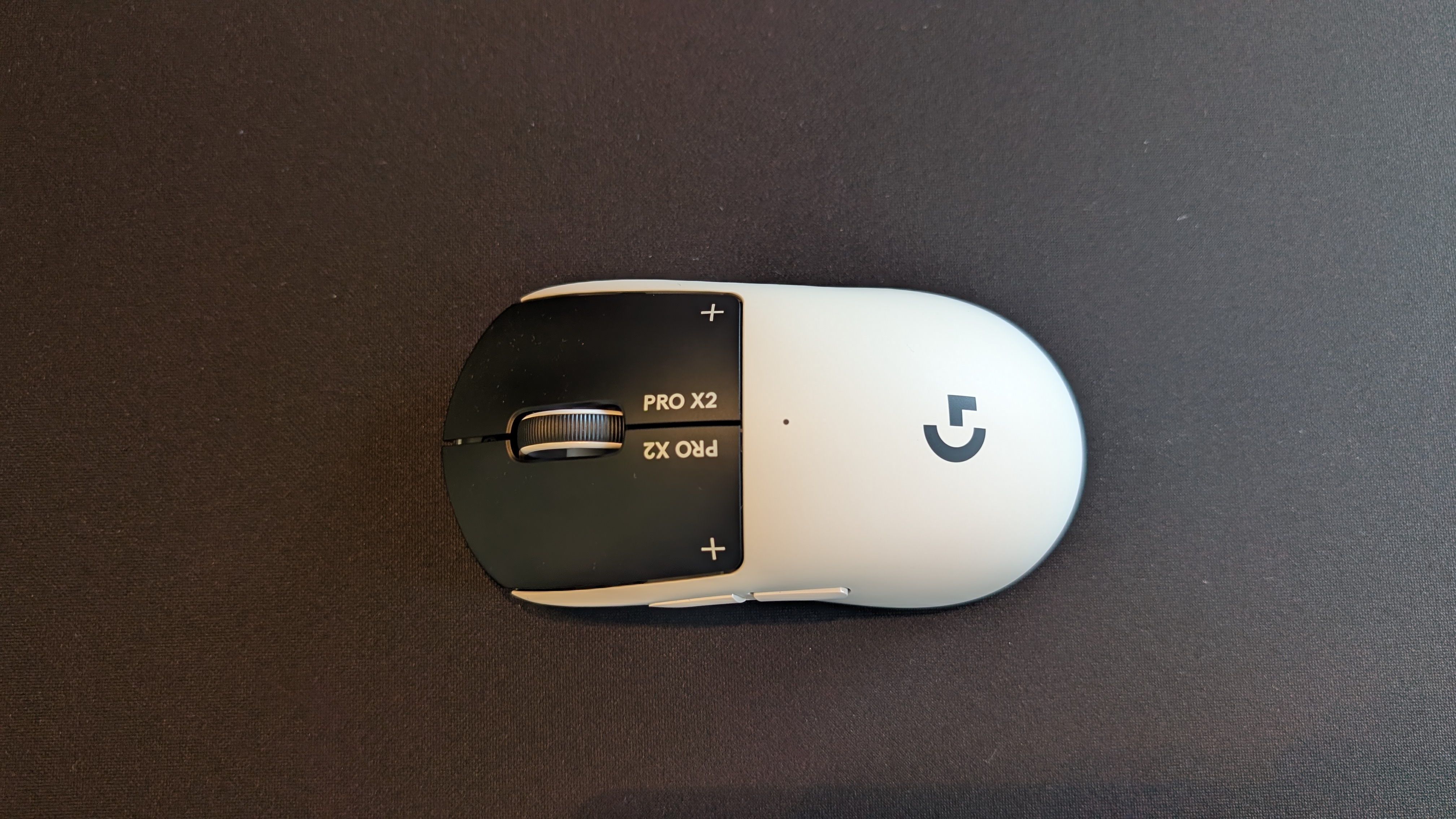

I suspect that the ultra-modern design of the Logitech G Pro X2 Superstrike will be a turn-off for some potential buyers, but I like it. No rainbow RGB here, just a lone LED indicating your DPI preset. Stamping the product name all over the device makes it feel like something out of a utilitarian corporate dystopia – a vibe I’m fine with for my hardware aesthetic, though I’d rather steer clear from a societal standpoint.

Aesthetics aside, the chassis design isn’t anything particularly earth-shattering, but you don’t mess with a proven winner. The shape is essentially the same as Logitech G’s previous Pro X Superlight 2, a symmetrical design with a gentle curve across it that fits comfortably in the palm. I’ve got pretty big hands, so I asked my (smaller-handed) partner to give it a try, and he reported that it felt very comfortable to use as well. I might say that the shape is somewhat better suited to claw- and fingertip-style grips, but as a palm-grip user, I found it comfortable even during extended gaming sessions.

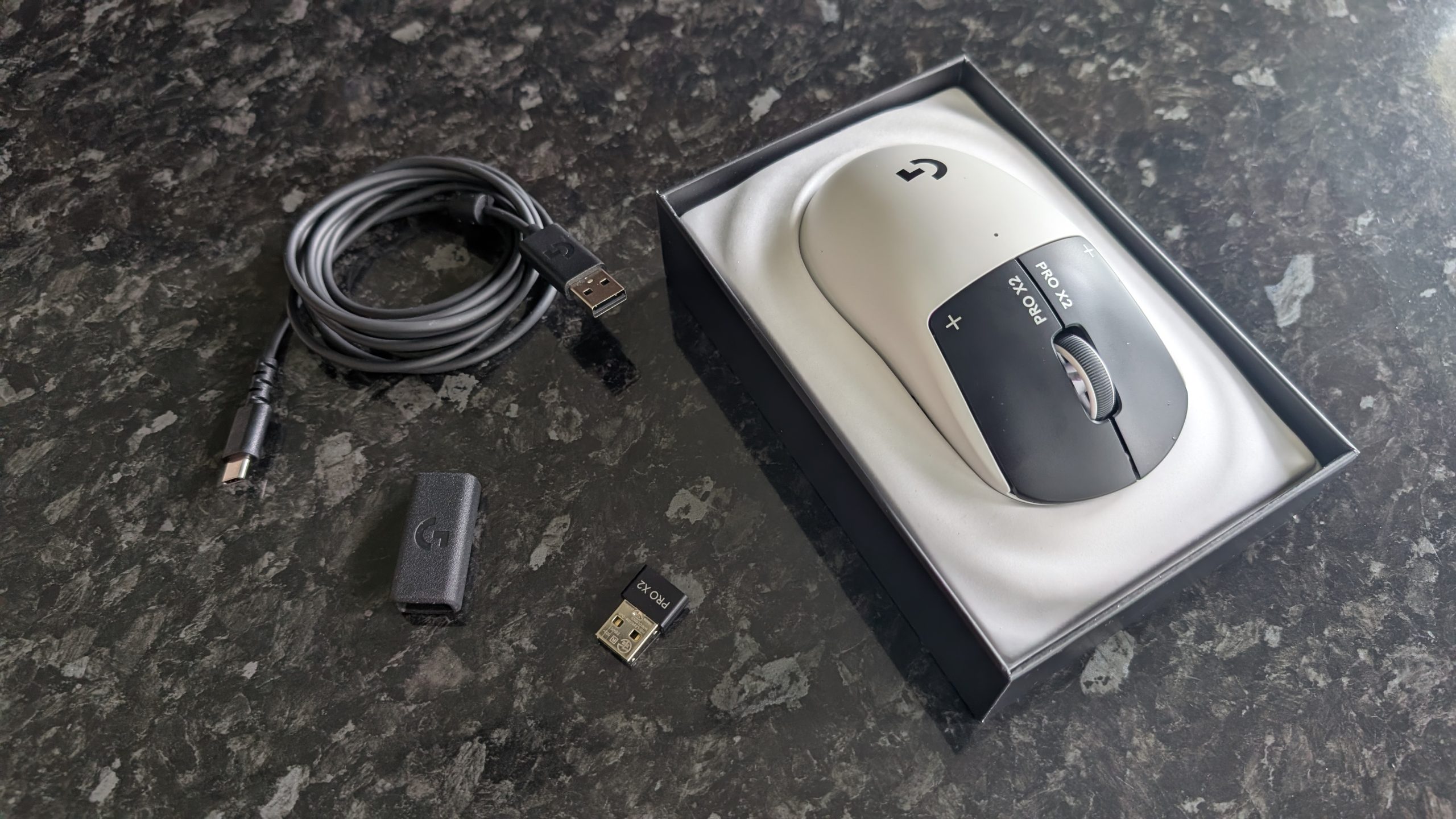

Despite weighing barely more than 60 grams, the Pro X2 Superstrike doesn’t feel flimsy in the slightest. The whole thing feels well-constructed, with a physical power switch and magnetic cover on the underside that conceals a slot to store the USB dongle. The feet are UHMWPE, tough and low-friction, and a small cutout at the front of the mouse houses the USB-C port for charging or wired use.

The main buttons have a weighty, tactile feel to them, while the scroll wheel offers firm rotation and a quiet but robust click. The side buttons are a bit softer, but still have a decent level of physical feedback and are well-spaced – I often like to map actions to these thumb buttons in shooters, and I didn’t experience any misclicks. The mouse is very slightly front-heavy, presumably due to the HITS switch assembly underneath the two main buttons, and while I didn’t have any issues with this, users who regularly lift their mouse clear of the mat may find that it requires a bit of getting used to.

The sensor is the Logitech G HERO 2 sensor, found in a wide range of the brand’s premium gaming mice. It supports up to 44,000 DPI and tracks acceleration of up to 88G, and I can attest from using other mice with the same sensor that it’s very reliable. For those seeking the absolute best low-latency performance, the box includes an adapter for you to connect the dongle to the power cable and place it directly on your desk, but it worked fine just plugged into the back of my PC, too.

The matte plastic shell does a good job of repelling fingerprint smudges (even from my sweaty hands during a heatwave that hit the UK while I was reviewing the Pro X2 Superstrike), and the casing is generally sturdy. It feels like a product that was built to last. Honestly, my only criticism here is the lack of a left-handed model; I’m a southpaw myself, and while I’ve adapted to using a mouse with my right hand, the same can’t be said of every left-hander out there.

Logitech G Pro X2 Superstrike: Performance

- HITS switches are truly phenomenal

- Fast and smooth movement is great for shooters

- Logitech G Hub software works well

Alright, let me talk about these switches properly for a moment. The way HITS works is essentially the same principle as Hall effect keyboard switches: metal plates and current-carrying copper coils, paired with an analog sensor that precisely measures the click input.

Now, this means that you get incredibly fast input response, on par with the optical switches that are becoming more popular in gaming mice, but the real takeaway here is the adjustable actuation. Because you’re not pressing a physical switch but rather moving a bit of metal up and down, you can use Logitech’s G Hub software to manually adjust the actuation point. If you want hair-trigger actuation, it’s yours. Prefer only firm, deep clicks to register? It can do that too, and everything in between.

The HITS design also allows you to adjust the trigger reset points (put simply, how soon the button can register another input when you start to lift your finger after clicking), and with no physical switch involved, the Pro X2 Superstrike allows for ultra-rapid-fire inputs. If you’re using a semi-automatic gun, the only limit on fire rate is whatever the game itself imposes.
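To make the actuation and reset points a bit more concrete, here is a minimal Python sketch of how an analog button could turn a travel reading into clicks. It is purely illustrative and not Logitech's firmware: the `AnalogButton` class, the 0.0 to 1.0 travel scale, and the sample values are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class AnalogButton:
    """Hypothetical analog button model (an assumption, not Logitech's code)."""

    actuation: float = 0.6  # travel (0.0 = at rest, 1.0 = fully pressed) where a click fires
    reset: float = 0.4      # travel the button must lift back to before it can fire again
    armed: bool = True      # True when the next press is allowed to register

    def update(self, travel: float) -> bool:
        """Feed one travel sample; return True if a new click registers."""
        if self.armed and travel >= self.actuation:
            self.armed = False
            return True
        if not self.armed and travel <= self.reset:
            self.armed = True
        return False


def count_clicks(button: AnalogButton, samples: list[float]) -> int:
    """Count how many clicks a sequence of travel samples produces."""
    return sum(button.update(travel) for travel in samples)


# Made-up finger motion: one full press, a small lift, a re-press, a bigger lift, a re-press.
samples = [0.0, 0.3, 0.7, 0.5, 0.7, 0.2, 0.7]

slow_reset_clicks = count_clicks(AnalogButton(actuation=0.6, reset=0.1), samples)
fast_reset_clicks = count_clicks(AnalogButton(actuation=0.6, reset=0.5), samples)
print(slow_reset_clicks, fast_reset_clicks)
```

With the reset point set close to the actuation point, the same finger movement registers more clicks, because the button re-arms after only a small lift. That is the general idea behind a low reset point, however the real hardware implements it.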

Without an actual switch to click underneath these buttons, there’s no tactile feedback. In fact, when I first received the Pro X2 Superstrike and clicked the buttons before turning it on, I was immediately worried that it would feel horrible to use. That’s where the ‘haptic’ part of ‘haptic inductive trigger system’ comes in: when you click, the button releases a tiny vibration that mimics the click input of a traditional mouse. It sounds silly, but it genuinely works – and like the actuation and trigger resets, you can adjust this too, or even turn it off if you’re so inclined. But I wouldn’t – it’s really quite good once you get used to it.

The best part? They’re ridiculously quiet. If you’re noise-sensitive or you’re a late-night gamer like me, a near-silent mouse is a genuine boon. In fact, Logitech, if you’re reading this: please make a G502 with HITS (and then send it directly to my home address). I adore the Superstrike, but I do miss my thumb rest for everyday work.

Alright, enough about the HITS. Overall, the Logitech G Pro X2 Superstrike feels excellent for gaming, gliding smoothly across my mouse mat and delivering precise, latency-free inputs thanks to the Logitech Lightspeed dongle.

The G Hub software gives you plenty of sliders to slide, letting you adjust the usual settings like sensitivity and polling rate, as well as create profiles for individual games depending on your preferences. The 8K polling mode is something of a gimmick that likely won’t make much of a difference to all but the sweatiest esports lovers, but it’s there if you want it (though it’s oddly not available in wired mode; you have to use the included dongle).
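For a sense of scale on that 8K figure, a quick bit of arithmetic helps. The sketch below converts a polling rate into the gap between reports; the 1,000Hz comparison point is my own assumption of a typical baseline, not a figure from Logitech.

```python
def report_interval_us(polling_hz: int) -> float:
    """Return the time between position reports, in microseconds."""
    return 1_000_000 / polling_hz


# 1,000Hz is an assumed baseline for comparison; 8,000Hz is the "8K" mode.
baseline_us = report_interval_us(1_000)
eight_k_us = report_interval_us(8_000)
saving_us = baseline_us - eight_k_us

print(f"1K: {baseline_us} us, 8K: {eight_k_us} us, difference: {saving_us} us")
```

The difference works out to less than a millisecond per report, which is why most players are unlikely to notice it.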

I stuck with the defaults for most of the games I tested, but I did make custom profiles for Valorant and Marathon to make the most of the super-reactive HERO 2 sensor. You can also map button input combos as macros, which was particularly useful for adjusting the DPI manually, as there’s no dedicated DPI button here.

Did it make me better at shooting? No, my aim is still aggressively mid, but I certainly felt better playing with the Pro X2 Superstrike. After tweaking the HITS actuation to accept feather-touch inputs with an equally low reset point and strong haptic feedback, plinking hostile players at range with a precision rifle in Marathon felt gratifying.

The battery life is also solid, with Logitech claiming 90 hours of use on a single charge. I found this held up; I charged the mouse to full after unboxing it, and it was still kicking after a week of work and gaming.

Should you buy the Logitech G Pro X2 Superstrike?

| Attributes | Notes | Rating |
| --- | --- | --- |
| Value | The price is high, but you get one seriously premium-feeling mouse for your money. | 4/5 |
| Design | The Logitech G Pro X2 Superstrike is comfortable, durable, and wisely keeps the design minimalist to focus purely on performance and tactile experience. | 5/5 |
| Performance | The sensor performs well and the battery life is good, but the HITS switches are the star of the show; a revelation for gaming mice that I can’t wait to see appear in more mice from Logitech. | 5/5 |
| Average rating | Logitech has knocked it out of the park here. The Pro X2 Superstrike officially sets a new standard for mice, and deserves the highest praise. | 4.84/5 |

Buy the Logitech G Pro X2 Superstrike if…

Don’t buy it if…

Logitech G Pro X2 Superstrike: Also consider

How I tested the Logitech G Pro X2 Superstrike

I traded out my usual Logitech G502 Lightspeed Wireless for the Pro X2 Superstrike for a total of eight days while putting together this review, and guess what… I’m still using it. Not for everyday work (I value a thumb rest too much for that), but it’s currently perched on the corner of my desk for whenever I load up Marathon or Warframe.

During my eight-day testing period, I used the Superstrike for both my regular day-to-day work for TechRadar (which, in mouse-specific terms, mostly involves a lot of clicking on links and highlighting text) and for everything I use my PC for during my off hours. This is mostly gaming, with a bit of mucking about in Discord and Scrivener for personal projects. Aside from the games I’ve already mentioned in this review, I also tested the Pro X2 Superstrike in Overwatch, Apex Legends, and Tiny Tina’s Wonderlands (yes, I know I’m late to that particular party – I’ll get around to Borderlands 4 eventually).

First reviewed May 2026
