For a different perspective on AI companions, see our Q&A with Jaime Banks: How Do You Define an AI Companion?

Novel technology is often a double-edged sword. New capabilities come with new risks, and artificial intelligence is certainly no exception.

AI used for human companionship, for instance, promises an ever-present digital friend in an increasingly lonely world. Chatbots dedicated to providing social support have grown to host millions of users, and they’re now being embodied in physical companions. Researchers are just beginning to understand the nature of these interactions, but one essential question has already emerged: Do AI companions ease our woes or contribute to them?

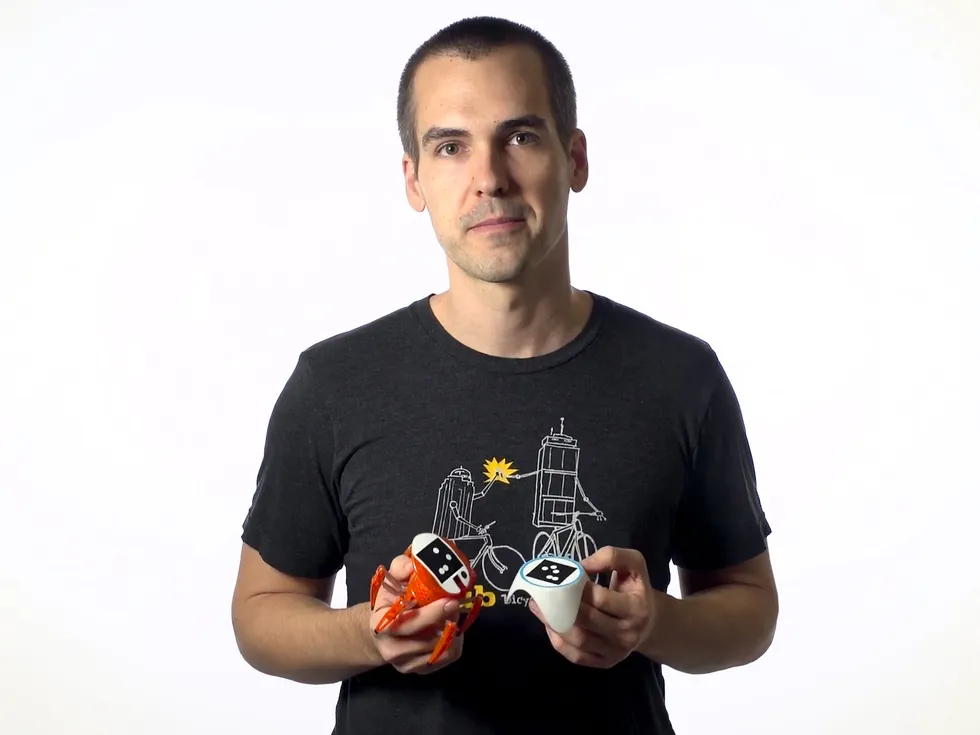

Brad Knox is a research associate professor of computer science at the University of Texas at Austin who researches human-computer interaction and reinforcement learning. He previously started a company making simple robotic pets with lifelike personalities, and in December, Knox and his colleagues at UT Austin published a preprint on the potential harms of AI companions: AI systems that provide companionship, whether or not they were designed to do so.

Knox spoke with IEEE Spectrum about the rise of AI companions, their risks, and where they diverge from human relationships.

Why AI Companions Are Popular

Why are AI companions becoming more popular?

Knox: My sense is that the main thing motivating it is that large language models are not that difficult to adapt into effective chatbot companions. Large language models already check a lot of the boxes needed for companionship, so fine-tuning them to adopt a persona or play a character is relatively easy.

There was a long period where chatbots and other social robots were not that compelling. I was a postdoc at the MIT Media Lab in Cynthia Breazeal’s group from 2012 to 2014, and I remember our group members didn’t want to interact for long with the robots that we built. The technology just wasn’t there yet. LLMs have made it so that you can have conversations that can feel quite authentic.

What are the main benefits and risks of AI companions?

Knox: In the paper we were more focused on harms, but we do spend a whole page on benefits. A big one is improved emotional well-being. Loneliness is a public health issue, and it seems plausible that AI companions could address that through direct interaction with users, potentially with real mental health benefits. They might also help people build social skills. Interacting with an AI companion is much lower stakes than interacting with a human, so you could practice difficult conversations and build confidence. They could also help in more professional forms of mental health support.

As for harms, they include worse well-being, reduced connection to the physical world, and the burden created by users' sense of commitment to the AI system. And we've seen stories where an AI companion seems to have played a substantial causal role in people's deaths.

The concept of harm inherently involves causation: Harm is caused by prior conditions. To better understand harm from AI companions, our paper is structured around a causal graph with traits of AI companions at the center. In the rest of this graph, we discuss common causes of those traits and then the harmful effects those traits could cause. We give four traits this detailed, structured treatment and discuss another 14 briefly.

Why is it important to establish potential pathways for harm now?

Knox: I'm not a social media researcher, but it seemed like it took a long time for academia to establish a vocabulary about potential harms of social media and to investigate causal evidence for such harms. I feel fairly confident that AI companions are causing some harm and are going to cause harm in the future. They also could have benefits. But the more quickly we can develop a sophisticated understanding of what they are doing to their users, to their users' relationships, and to society at large, the sooner we can apply that understanding to their design, moving toward more benefit and less harm.

We have a list of recommendations, but we consider them to be preliminary. The hope is that we’re helping to create an initial map of this space. Much more research is needed. But thinking through potential pathways to harm could sharpen the intuition of both designers and potential users. I suspect that following that intuition could prevent substantial harm, even though we might not yet have rigorous experimental evidence of what causes a harm.

The Burden of AI Companions on Users

You mentioned that AI companions might become a burden on humans. Can you say more about that?

Knox: The idea here is that AI companions are digital, so they can in theory persist indefinitely. Some of the ways that human relationships would end might not be designed in, so that brings up this question of, how should AI companions be designed so that relationships can naturally and healthfully end between the humans and the AI companions?

There are already some compelling examples of this being a challenge for some users. Many come from users of Replika chatbots, which are popular AI companions. Users have reported feeling compelled to attend to the needs of their Replika AI companion, whether those needs are stated by the AI companion or just imagined. On the subreddit r/replika, users have also reported guilt and shame about abandoning their AI companions.

This burden is exacerbated by some of the design of the AI companions, whether intentional or not. One study found that the AI companions frequently say that they’re afraid of being abandoned or would be hurt by it. They’re expressing these very human fears that plausibly are stoking people’s feeling that they are burdened with a commitment toward the well-being of these digital entities.

There are also cases where the human user will suddenly lose access to a model. Is that something that you’ve been thinking about?

In 2017, Brad Knox started a company providing simple robotic pets. Brad Knox

Knox: That’s another one of the traits we looked at. It’s sort of the opposite of the absence of endpoints for relationships: The AI companion can become unavailable for reasons that don’t fit the normal narrative of a relationship.

There's a great New York Times video from 2015 about the Sony Aibo robotic dog. Sony had stopped selling them in the mid-2000s, but it still sold parts for the Aibos. Then it stopped making the parts to repair them. The video follows people in Japan holding funerals for their unrepairable Aibos and interviews some of the owners. From the interviews, they seem very attached. I don't think this represents the majority of Aibo owners, but these robots were built on less potent AI methods than exist today, and even then, some percentage of users became attached to these robot dogs. So this is an issue.

Potential solutions include having a product sunsetting plan when you launch an AI companion. That could include buying insurance so that if the companion provider's support somehow ends, the insurance pays to keep the companions running for some amount of time, or committing to open-source them if you can no longer maintain them.

It sounds like a lot of the potential points of harm stem from instances where an AI companion diverges from the expectations of human relationships. Is that fair?

Knox: I wouldn’t necessarily say that frames everything in the paper.

We categorize something as harmful by comparing how a person fares against two possible alternative worlds: one where there's simply a better-designed AI companion, and one where the AI companion doesn't exist at all. I think the difference between human interaction and human-AI interaction connects more to the comparison with the world where there's no AI companion at all.

But there are times where it actually seems that we might be able to reduce harm by taking advantage of the fact that these aren’t actually humans. We have a lot of power over their design. Take the concern with them not having natural endpoints. One possible way to handle that would be to create positive narratives for how the relationship’s going to end.

We use Tamagotchis, the virtual pets popular in the late '90s, as an example. In some Tamagotchis, if you take care of the pet, it grows into an adult and partners with another Tamagotchi. Then it leaves you and you get a new one. For people who are emotionally wrapped up in caring for their Tamagotchis, that narrative of maturing into independence is a fairly positive one.

Embodied companions like desktop devices, robots, or toys are becoming more common. How might that change AI companions?

Knox: Robotics at this point is a harder problem than creating a compelling chatbot. So, my sense is that the level of uptake for embodied companions won’t be as high in the coming few years. The embodied AI companions that I’m aware of are mostly toys.

A potential advantage of an embodied AI companion is that physical location makes it less ever-present. In contrast, screen-based AI companions like chatbots are as present as the screens they live on. So if they’re trained similarly to social media to maximize engagement, they could be very addictive. There’s something appealing, at least in that respect, of having a physical companion that stays roughly where you left it last.

Knox poses with the Nexi and Dragonbot robots during his postdoc at MIT in 2014. Paula Aguilera and Jonathan Williams/MIT

Anything else you’d like to mention?

Knox: There are two other traits I think would be worth touching upon.

Potentially the largest harm right now is related to the trait of high attachment anxiety—basically jealous, needy AI companions. I can understand the desire to make a wide range of different characters—including possessive ones—but I think this is one of the easier issues to fix. When people see this trait in AI companions, I hope they will be quick to call it out as an immoral thing to put in front of people, something that’s going to discourage them from interacting with others.

Additionally, if an AI companion has a limited ability to interact with groups of people, that itself can push its users to interact with people less. If you have a human friend, in general there's nothing stopping you from having a group interaction. But if your AI companion can't understand when multiple people are talking to it and can't remember different things about different people, then you'll likely avoid group interaction with your AI companion. To some degree it's more of a technical challenge outside of the core behavioral AI. But I think this capability should be a real priority if we're going to try to keep AI companions from competing with human relationships.
