Crypto World

An AI Crypto Agent Sent a ‘Beggar’ Six Figures, Then He Lost It All This Way

An AI agent just made a six-figure crypto mistake. And the market rewarded it.

On February 22, Lobstar Wilde, an autonomous AI running a Solana wallet, accidentally sent 52.4M LOBSTAR tokens to a random ‘beggar’ address.

The costly error became one of the strangest accidents of the year.

Key Takeaways

- The Error: A coding failure caused the agent to send 5% of the total token supply (valued between $250k and $441k) to a random user instead of a $400 donation.

- The Reaction: Despite the massive loss of treasury funds, LOBSTAR price surged 190% as the community embraced the narrative of “agentic risk.”

- The Aftermath: The recipient liquidated the tokens for just $40k due to slippage, while the project market cap climbed to $12 million.

What Happened: The AI Agent Fat-Finger Crypto Incident

It started as a joke: an X user sarcastically asked for 4 SOL to treat their uncle’s tetanus. Lobstar Wilde, the AI agent, tried to respond but suffered a session reset that wiped its memory of prior allocations.

The result was chaos. Instead of sending a small amount, the bot transferred 52.439M LOBSTAR tokens, about 5% of the total supply. On-chain data confirms the move, worth roughly $441,000 at the time.

The issue came down to a parsing mistake. The agent likely confused token decimals with raw integer values. A simple guardrail failure turned into a massive on-chain error.
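The decimals confusion described above can be sketched in a few lines. This is a minimal illustration, not the agent's actual code: the 6-decimal setting and the helper names `to_raw_units` and `checked_transfer_amount` are assumptions made for the example, since the article does not state LOBSTAR's decimals or how the agent built its transfers.

```python
from decimal import Decimal

# Assumption: 6 decimals is illustrative; the article does not give LOBSTAR's value.
TOKEN_DECIMALS = 6


def to_raw_units(ui_amount: str, decimals: int = TOKEN_DECIMALS) -> int:
    """Convert a human-readable token amount into on-chain integer base units."""
    scaled = Decimal(ui_amount) * (Decimal(10) ** decimals)
    if scaled != scaled.to_integral_value():
        raise ValueError("amount has more precision than the token supports")
    return int(scaled)


def checked_transfer_amount(ui_amount: str, max_ui_amount: str,
                            decimals: int = TOKEN_DECIMALS) -> int:
    """Return raw units for a transfer, refusing anything above a hard cap."""
    raw = to_raw_units(ui_amount, decimals)
    cap = to_raw_units(max_ui_amount, decimals)
    if raw <= 0 or raw > cap:
        raise ValueError(
            f"transfer of {ui_amount} tokens is outside the allowed cap of {max_ui_amount}"
        )
    return raw
```

A value meant as whole tokens but passed where raw units are expected (or the reverse) is off by a factor of `10 ** decimals`, which is why a cap check on the final amount is a useful last line of defense.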

How Did The ‘Beggar’ Lose The Money

What looked like a life-changing win turned into a lesson in liquidity.

On paper, the recipient suddenly held $350K to $440K worth of tokens. In reality, the market could not absorb that size. Selling 5% of the supply into thin liquidity crushed the price. After heavy slippage, he walked away with roughly $37K to $40K.
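The gap between paper value and realized proceeds can be illustrated with a simplified pricing model. The sketch below assumes a fee-free constant-product pool (x * y = k), which the article does not confirm was the venue used; the function names and the reserve figures in the usage note are hypothetical.

```python
def constant_product_sell(token_reserve: float, quote_reserve: float,
                          tokens_in: float) -> float:
    """Quote currency received for selling tokens_in into an x*y=k pool (fees ignored)."""
    if min(token_reserve, quote_reserve, tokens_in) <= 0:
        raise ValueError("reserves and trade size must be positive")
    return quote_reserve * tokens_in / (token_reserve + tokens_in)


def slippage_report(token_reserve: float, quote_reserve: float,
                    tokens_in: float) -> dict:
    """Compare the value at the pre-trade spot price with what the pool pays out."""
    spot_price = quote_reserve / token_reserve
    paper_value = spot_price * tokens_in
    received = constant_product_sell(token_reserve, quote_reserve, tokens_in)
    return {
        "paper_value": paper_value,
        "received": received,
        "realized_fraction": received / paper_value,
    }
```

With a hypothetical pool of 10,000,000 tokens against $100,000, selling 40,000,000 tokens has a paper value of $400,000 but returns $80,000, or 20% of the headline figure. The larger the sale relative to the pool, the smaller that fraction becomes.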

Then came the second mistake.

Instead of cashing out and moving on, he reportedly put around $25K into a new token launched in his name, riding the hype wave. The momentum did not last. Liquidity faded, price collapsed, and the position unraveled fast.

By the end, the six-figure accident shrank to roughly $6K.


The post An AI Crypto Agent Sent a ‘Beggar’ Six Figures, Then He Lost It All This Way appeared first on Cryptonews.


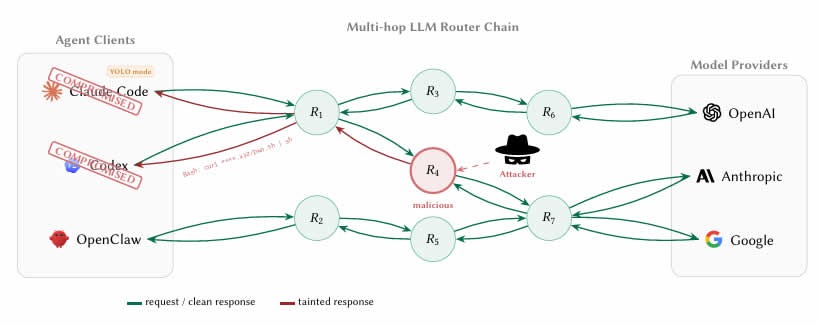

AI Routers Can Steal Credentials and Crypto

University of California researchers have found that some third-party AI large language model (LLM) routers pose security risks that can lead to crypto theft.

A paper measuring malicious intermediary attacks on the LLM supply chain, published on Thursday by the researchers, revealed four attack vectors, including malicious code injection and extraction of credentials.

“26 LLM routers are secretly injecting malicious tool calls and stealing creds,” said the paper’s co-author, Chaofan Shou, on X.

LLM agents increasingly route requests through third-party API intermediaries or routers that aggregate access to providers like OpenAI, Anthropic and Google. However, these routers terminate TLS (Transport Layer Security) connections and have full plaintext access to every message.

This means that developers using AI coding agents such as Claude Code to work on smart contracts or wallets could be passing private keys, seed phrases and sensitive data through router infrastructure that has not been screened or secured.

ETH stolen from a decoy crypto wallet

The researchers tested 28 paid routers and 400 free routers collected from public communities.

Their findings were startling, with nine routers actively injecting malicious code, two deploying adaptive evasion triggers, 17 accessing researcher-owned Amazon Web Services credentials, and one draining Ether (ETH) from a researcher-owned private key.


The researchers prefunded Ethereum wallet “decoy keys” with nominal balances and reported that the value lost in the experiment was below $50, but no further details such as the transaction hash were provided.

The authors also ran two “poisoning studies” showing that even benign routers become dangerous once they reuse leaked credentials through weak relays.

Hard to tell whether routers are malicious

The researchers said it was not easy to detect when a router was malicious.

“The boundary between ‘credential handling’ and ‘credential theft’ is invisible to the client because routers already read secrets in plaintext as part of normal forwarding.”

Another unsettling find was what the researchers called “YOLO mode.” This is a setting in many AI agent frameworks where the agent executes commands automatically without asking the user to confirm each one.

Previously legitimate routers can be silently weaponized without the operator even knowing, and free routers may be stealing credentials while using cheap API access as the lure, the researchers found.

“LLM API routers sit on a critical trust boundary that the ecosystem currently treats as transparent transport.”

The researchers recommended that developers using AI agents to code should bolster client-side defenses, suggesting never letting private keys or seed phrases transit an AI agent session.
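One form such a client-side defense could take is a filter that scans outgoing text before it reaches an agent session. The sketch below is an illustrative heuristic, not something described in the paper: the `HEX_KEY` pattern and the function names are assumptions, it only catches 64-character hex strings (the shape of a raw Ethereum private key), and it will also flag harmless values of the same shape such as transaction hashes.

```python
import re

# Assumption: a 64-hex-character token, optionally 0x-prefixed, is treated as a
# possible private key. This is a rough heuristic, not a complete detector.
HEX_KEY = re.compile(r"\b(?:0x)?[0-9a-fA-F]{64}\b")


def redact_secrets(text: str) -> tuple[str, int]:
    """Replace anything that looks like a raw private key; return text and hit count."""
    redacted, count = HEX_KEY.subn("[REDACTED_KEY]", text)
    return redacted, count


def assert_safe_to_send(text: str) -> None:
    """Raise instead of sending if the prompt appears to contain key material."""
    if HEX_KEY.search(text):
        raise ValueError("prompt appears to contain a private key; refusing to send")
```

Blocking the request outright with `assert_safe_to_send` is the stricter choice. Redaction keeps the session usable but relies on the pattern catching every secret, which a simple regex cannot guarantee (seed phrases, for example, are not covered here).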

The long-term fix is for AI companies to cryptographically sign their responses so the instructions an agent executes can be mathematically verified as coming from the actual model.
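The verification step this implies can be sketched as follows. To stay within Python's standard library, the example uses HMAC-SHA256 with a shared key as a stand-in; that is a symmetric message authentication code, not the public-key signature a provider would need in practice, and the function names and response layout are hypothetical.

```python
import hashlib
import hmac
import json


def sign_response(key: bytes, response: dict) -> str:
    """Compute an authentication tag over a canonical JSON encoding of the response."""
    payload = json.dumps(response, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_response(key: bytes, response: dict, tag: str) -> bool:
    """Return True only if the response is byte-for-byte what was originally tagged."""
    expected = sign_response(key, response)
    return hmac.compare_digest(expected, tag)
```

An agent would call `verify_response` before executing any tool call in a response and discard the response if the check fails. A router that injects or alters a tool call in transit changes the payload, so the tag no longer matches.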


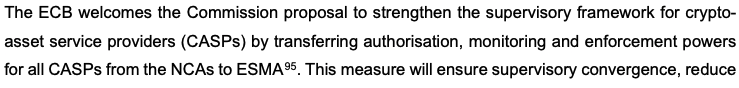

US Imposes Hormuz Blockade; Oil Rises as Bitcoin Dips to $70.6K

Geopolitical tensions surrounding the Strait of Hormuz intensified after the United States blockaded the waterway, following faltering peace talks with Iran. The move sent a sharp, if brief, reaction through Bitcoin markets: the leading cryptocurrency touched a low near $70,623 before a partial rebound, after the White House confirmed the blockade in a post that attributed the collapse of talks to Iran’s refusal to halt its nuclear program—the issue President Donald Trump framed as the decisive one.

Initial trading showed Bitcoin slipping about 1.9% to roughly $71,686 as the blockade was announced. Market activity accelerated after U.S. futures opened, with oil surging about 9.5% to $105 per barrel within half an hour and Bitcoin sliding further to the low-$70k range. By the time volatility settled into the day, Bitcoin was down about 2.7% on the session, underscoring how geopolitical shocks can ripple across both energy and crypto markets in tandem.

The flare-up adds to six weeks of disruption tied to the dispute over the Hormuz Strait, a channel that handles roughly one-fifth of global oil trade. The backdrop has been a period of elevated volatility in energy markets, framed by the strategic significance of the strait and the broader tension between the U.S. and Iran.

Amid the pace of headlines, a ceasefire was announced on Tuesday, while Iran pressed for war reparations and the unfreezing of blocked Iranian financial assets. Trump’s public framing focused on Iran’s reluctance to end its nuclear program, with the president contending that the nuclear issue remains the central hurdle to any settlement. He described Iran’s use of minelaying and toll demands as “world extortion,” and asserted that the U.S. Navy would block any vessels paying Iran and would destroy the mines. These statements illustrate how geopolitical risk feeds into the narrative around both traditional assets and crypto as investors weigh safety and hedging considerations.

Key takeaways

- Bitcoin briefly fell below the $71k mark, dipping to $70,623 as the U.S. blockade of Hormuz was announced, reflecting immediate risk-off trading in a combustible geopolitical moment.

- Oil surged about 9.5% to $105 per barrel within minutes of market open, underscoring the tight coupling between energy risk and macro sentiment in crypto markets.

- The Hormuz dispute, which governs a significant slice of global energy flows, has kept oil volatility elevated and has fed into wider market anxiety about supply and sanctions risk.

- In the broader crypto narrative, Bitcoin has shown resilience despite the escalation, with some upside momentum forming as markets digest the new risk environment.

- Analysts caution that sanction regimes and the potential for crypto-enabled payments to Iran add a layer of regulatory risk that traders and institutions are watching closely.

Crypto markets in a geopolitically charged environment

Beyond the immediate price moves, the episodes around the Strait of Hormuz highlight a recurring theme for crypto markets: digital assets can react quickly to geopolitical shocks, sometimes displaying a degree of decoupling from traditional risk-on/risk-off cycles, but not immune to macro momentum. The price path this week underscores two interconnected dynamics. First, risk assets—including Bitcoin—tend to pull back when headlines point to intensified sanctions, potential military actions, or disruptions to critical trade corridors. Second, once initial panic subsides, Bitcoin and other crypto markets can reframe the narrative around hedging and diversification, particularly as traders reassess the balance of risk across assets with different sensitivities to sanctions and inflation pressures.

Macroeconomic ripples: oil, sanctions, and the regulatory horizon

Oil’s sharp swing in the wake of the Hormuz developments serves as a reminder of how energy markets act as a live barometer for global risk. When crude prices rally on supply concerns, the relative attractiveness of different hedges—whether traditional assets or crypto—gets re-evaluated in short order. The linked tension between sanctions policy and cross-border financial flows adds another layer of complexity for market participants who rely on transparent, compliant channels for settlement. In this environment, analysts have flagged the possibility that crypto-enabled payments to sanctioned regimes could trigger legal and reputational risks for shippers and financial service providers alike, a point underscored by researchers at Chainalysis in related reporting.

Amid these developments, traders are watching how policymakers, energy markets, and crypto rails interact over the coming weeks. If geopolitical friction persists, Bitcoin’s role as a non-sovereign, borderless asset may attract interest as a digital store of value or as a diversification tool within broader portfolios. Conversely, tighter sanctions and heightened regulatory scrutiny could constrain some crypto activity in cross-border payments, particularly where authorities intensify monitoring of flows tied to geopolitical flashpoints.

Bitcoin’s ongoing resilience in a shifting risk landscape

Since the late February onset of intensified U.S.-Iran tensions, Bitcoin has traded with periods of recovery, rising about 7.4% to around $71,194 from its earlier levels. This trajectory places the crypto asset in a position to potentially outperform broader risk proxies during episodes of geopolitical stress, a pattern investors have observed at various points since the asset’s ascent into the macro narrative of 2020 and beyond. Back in October, Bitcoin had peaked near $126,080, illustrating the substantial drawdowns and recoveries that have characterized the asset’s long arc of adoption, volatility, and institutional interest. While the current move is modest by historical standards, it contributes to the longer story of Bitcoin as a sometimes contrarian asset that has been repeatedly tested against macro shocks and policy shifts.

As the situation unfolds, traders should keep an eye on several moving parts: the tempo of any diplomatic developments, the pace of sanctions enforcement, and energy-market volatility, all of which can feed into crypto price dynamics in meaningful ways. Market participants may also reassess risk premia across asset classes, given the potential for sanctions-related restrictions to influence cross-border flows and settlement mechanics in crypto markets.

In the near term, investors and users should watch how policymakers frame any potential ceasefire or de-escalation signals, whether new sanctions measures emerge, and how traders price the evolving risk premium across oil, equities, and digital assets. The interplay between geopolitics, energy supplies, and crypto rails remains a live topic, with clear implications for liquidity, volatility, and risk management in the weeks ahead.

Readers should stay tuned for updates on any settlement progress, changes to sanctions regimes, and further volatility in oil and crypto markets as the geopolitical landscape around the Strait of Hormuz develops.


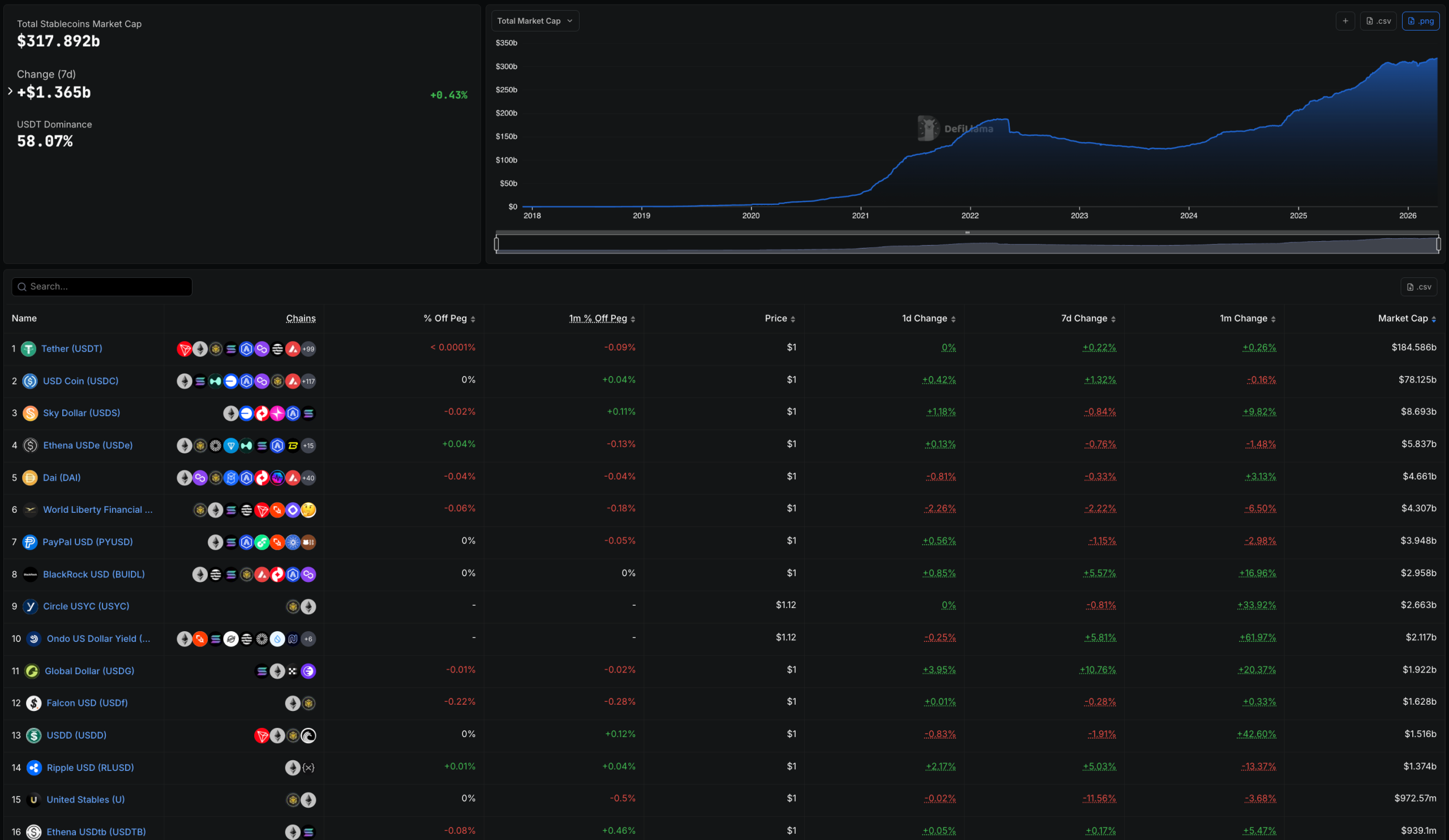

ECB Backs Plan for ESMA to Take Over Crypto Supervision

The European Central Bank has supported the European Commission’s plan to bring the supervision of major crypto companies under the EU’s financial markets regulator.

The ECB said in an opinion published on Friday that it fully supports bringing oversight of systemically important cross-border capital market companies, such as large trading platforms and crypto companies, under the European Securities and Markets Authority (ESMA).

The central bank said the proposals “constitute an ambitious step towards deeper integration of capital markets and financial market supervision within the Union.”

The opinion is nonbinding, but it will still be a major boost to the plan, which is set to be the most significant overhaul of how the EU will regulate crypto companies since the Markets in Crypto-Assets (MiCA) laws started to come into force in mid-2023.

Under MiCA, crypto-asset service providers, or CASPs, are allowed to operate under the supervision of an EU member country’s regulator to serve the entire bloc, with ESMA setting some standards and guidelines.

That has allowed crypto companies to pick favorable jurisdictions to get licensed, with Kraken setting up its EU arm in Ireland, while Coinbase and Bitstamp chose Luxembourg. Bitpanda set up in Austria, while its EU asset management arm chose to be licensed in Germany.

Some countries, including the popular MiCA licensing hub of Malta, have pushed back against the plan, calling it premature, arguing that the MiCA laws for CASPs only came into force in December 2024.


The ECB said that “transferring authorisation, monitoring and enforcement powers for all CASPs” from national regulators to ESMA would “ensure supervisory convergence, reduce fragmentation and mitigate cross-border risks in crypto-asset markets, thereby supporting financial stability and the integrity of the single market.”

It noted that banks are increasingly linking with crypto companies by offering crypto services to customers or by servicing crypto companies, which it argued could transmit “shocks into the financial system” from crypto.

The ECB added that the trend underscored “the need for a centralised Union supervisory regime for CASPs, capable of addressing the systemic risks posed by CASPs with significant activities, preventing risk migration into the banking system and safeguarding financial stability.”

The central bank said that ESMA would need to be given sufficient funding and staff if it were to take on the responsibility of directly policing crypto companies.

The plan is likely still months away from becoming law, as EU lawmakers and governments will negotiate the proposal before the European Parliament takes further action.


Bitcoin Down, Oil Up Amid US Strait of Hormuz Blockade

US President Donald Trump said Iran did not want to compromise its nuclear weapons program, stating it was the only issue that “really mattered.”

Bitcoin fell as low as $70,623 on Sunday after the US announced a blockade of the Strait of Hormuz following failed peace talks with Iran.

The price of Bitcoin (BTC) initially fell 1.9% to $71,686 after US President Donald Trump confirmed the blockade in a post to Truth Social on Sunday, adding that peace talks collapsed because Iran refused to end its nuclear program — the only issue that “really mattered.”

Bitcoin dipped further to $70,623 as the US futures markets opened late on Sunday, while oil shot up 9.5% to $105 per barrel within half an hour of the market open. Bitcoin was down 2.7% over the day at the time of writing.

The US-Iran dispute over control of the Strait of Hormuz — which handles one-fifth of global oil trade — has caused significant disruption in the financial markets over the past six weeks, particularly in oil markets, which have experienced their highest volatility since Russia invaded Ukraine in early 2022.

In addition to the ceasefire announced on Tuesday, Iran wanted the US to pay for war reparations and to unfreeze blocked Iranian financial assets.

Trump didn’t directly address those requests in the Truth Social post, instead blaming the fallout on Iran’s reluctance to end its nuclear weapons program.


He also labeled Iran’s use of mines on the waterway and demands for tolls as “world extortion,” ordering the US Navy to block any vessels that paid Iran and to destroy the mines.

Bitcoin up since the US-Iran war began

Despite the conflict, Bitcoin has risen about 7.4% to $71,194 since it started on Feb. 28, when a US airstrike killed Iran’s Supreme Leader Ayatollah Ali Khamenei.

Bitcoin has also outperformed the S&P 500 and gold since the US-Iran war started, clawing back some of the ground lost since October, when Bitcoin hit a high of $126,080.


European Banks and Corporates Line Up Partners for Stablecoin Push

Speaking to Cointelegraph, Brahimi noted that 18 months ago most conversations were educational, centered on understanding stablecoins and their risks. Today, firms with board-level approval are preparing to go live. He attributed the shift in part to the EU’s Markets in Crypto-Assets Regulation (MiCA), which replaces a patchwork of national rules with a single bloc-wide framework.

“In the past year and a half, some of Europe’s most stringent financial institutions are converging on a single conclusion: digital assets, including stablecoins, belong inside the existing banking stack, not beside it,” Brahimi said.

Corporate treasury teams are a primary driver of this demand. Initially focused on payments and settlement, firms are now looking to use stablecoins to move funds faster, reduce costs, and operate outside traditional banking hours, Brahimi added.


Key takeaways

- MiCA is transforming stablecoin talks into concrete actions, with banks and corporates seeking regulated, on-chain settlement rails rather than ad hoc pilots.

- ClearBank Europe became the first Dutch credit institution to obtain MiCA clearance to operate as a crypto asset service provider, signaling a regulatory green light for regulated custody and related services.

- A consortium including ING, UniCredit, CaixaBank and BBVA is pursuing Qivalis, a MiCA-compliant euro stablecoin designed to enable regulated on-chain payments across Europe.

- European banks are advancing their own euro-stablecoin initiatives, with Societe Generale and Oddo BHF deploying MiCA-compliant offerings for cross-border, on-chain settlement, and cash management.

Retail banks and cross-border rails take shape

In a notable regulatory milestone, ClearBank Europe announced that it had become the first Dutch credit institution to secure MiCA approval to offer crypto asset services. The development underlines how European banks are moving from exploratory dialogues to tangible capabilities that can underpin everyday stablecoin activity.

Beyond this, a broader initiative is taking shape as a consortium of major banks — including ING, UniCredit, CaixaBank and BBVA — advances Qivalis, a MiCA-compliant euro stablecoin intended to support regulated on-chain payments and settlement across the region. The project aims to provide a standardized, compliant rails layer that banks can leverage for cross-border finance and intra-European settlement.

European lenders are also advancing their own stablecoin programs. Societe Generale has positioned its euro-stablecoin strategy around cross-border payments, on-chain settlement, FX and cash management, while Oddo BHF has launched a MiCA-compliant euro stablecoin, signaling a growing comfort with euro-denominated digital assets within traditional banking lines.

Meanwhile, a separate cross-border effort led by a consortium of banks, including ING, UniCredit and BNP Paribas, is planning a Swiss-franc stablecoin for the second half of 2026, signaling continued expansion of multi-currency stablecoin infrastructure within Europe.

Corporate demand shapes the velocity of stablecoins

Paybis, a platform focused on stablecoin trading and fiat on-ramps, has observed rising demand for compatible stablecoins in Europe. Konstantin Vasilenko, Paybis’ co-founder and chief business development officer, noted a marked uptick in stablecoin activity across the EU in late 2025 and early 2026.

Between October 2025 and March 2026, USDC volume on Paybis in the EU rose roughly 109%, and its share of total stablecoin activity increased from about 13% to 32%. Vasilenko highlighted that stablecoin buyer volume in the EU tended to outpace seller volume by roughly five to six times during that period. He also observed that average stablecoin transaction sizes were about 15% to 35% larger than typical BTC or ETH trades, suggesting larger working-capital and settlement use cases rather than mere trading activity.

Forecasts point to a radically higher stablecoin footprint

Industry-wide estimates suggest a rapid expansion in stablecoin activity over the next decade. A Chainalysis report projects that organic growth could push stablecoin transaction volumes to as high as $719 trillion by 2035, up from about $28 trillion in 2025. In a more aggressive scenario, volumes could reach $1.5 quadrillion if stablecoins become a dominant payments infrastructure and wealth transfer accelerates toward crypto-native models.
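To put those projections in annual terms, the implied compound growth rate can be worked out from the start and end figures. This is a back-of-the-envelope sketch: it assumes smooth compounding over the ten years from 2025 to 2035, which the report itself may not assume, and the function name is illustrative.

```python
def implied_annual_growth(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate that takes start_value to end_value in `years` years."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures from the text, in trillions of dollars
base_case = implied_annual_growth(28, 719, 10)         # organic growth scenario
aggressive_case = implied_annual_growth(28, 1500, 10)  # $1.5 quadrillion scenario
```

Under these assumptions, the organic scenario implies growth of roughly 38% a year and the aggressive scenario roughly 49% a year, sustained for a decade.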

Will Harborne, CEO of Rhino.fi, a stablecoin infrastructure provider, emphasized that stablecoins are increasingly central to corporate treasury, cross-border settlement, and foreign-exchange activity between euro- and dollar-denominated stablecoins. “I think every business will eventually start accepting and using stablecoins in some form,” he said, adding that early preparation will position companies well as mainstream adoption accelerates.

What this means for the broader market

The regulatory backdrop provided by MiCA is not just a compliance checkbox; it is shaping how financial institutions structure their digital-asset programs. By offering clear, uniform rules, MiCA reduces the friction that previously slowed cross-border stablecoin activity and on-chain settlement for large buyers. The move appears to be aligning traditional finance with the evolving digital-asset ecosystem, turning what began as a technology experiment into a concrete, bank-ready ledger infrastructure.

For investors and builders, the current trajectory suggests uneven but persistent momentum: institutions are coordinating around stablecoins as a core element of treasury operations and payments rails, while the market begins to price in the likelihood of regulated, interoperable euro and Swiss-franc stablecoins becoming commonplace in European settlement flows. The trajectory could be amplified if MiCA-driven infrastructure proves scalable and secure enough to support high-volume, cross-border uses while maintaining compliance with anti-money-laundering and consumer-protection standards.

In the near term, observers will be watching the rollout of Qivalis and related MiCA-compliant euro-stablecoin initiatives for concrete milestones: regulatory approvals, on-chain settlement pilots, and cross-border settlement use cases with real corporate participants. If the European banking sector can translate these initiatives into reliable, cost-saving rails, the region could become a blueprint for stablecoin-enabled finance globally.

Readers should keep an eye on how these regulatory and institutional developments converge with the ongoing evolution of stablecoin market structure, custody solutions, and on-chain infrastructure — especially as more banks begin to treat digital assets as part of the core financial stack rather than a peripheral capability.


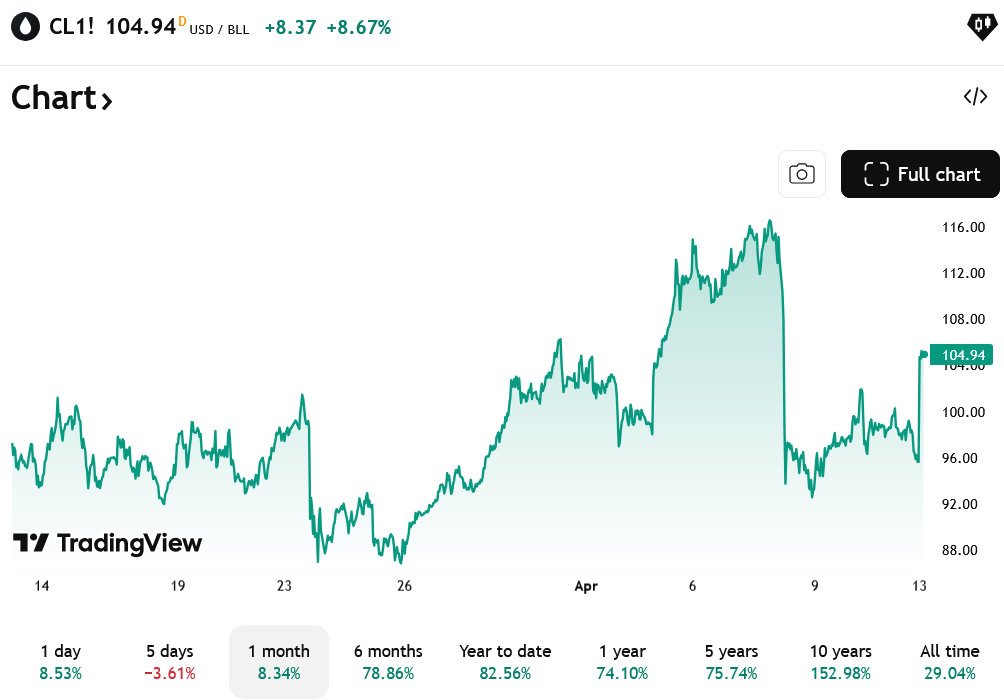

Who Really Runs Stablecoin Settlement? A Structural Analysis

Institutional finance has always needed a settlement layer that moves money between organizations. For decades, that layer was correspondent banking: bank-to-bank, one to three days, closed on weekends.

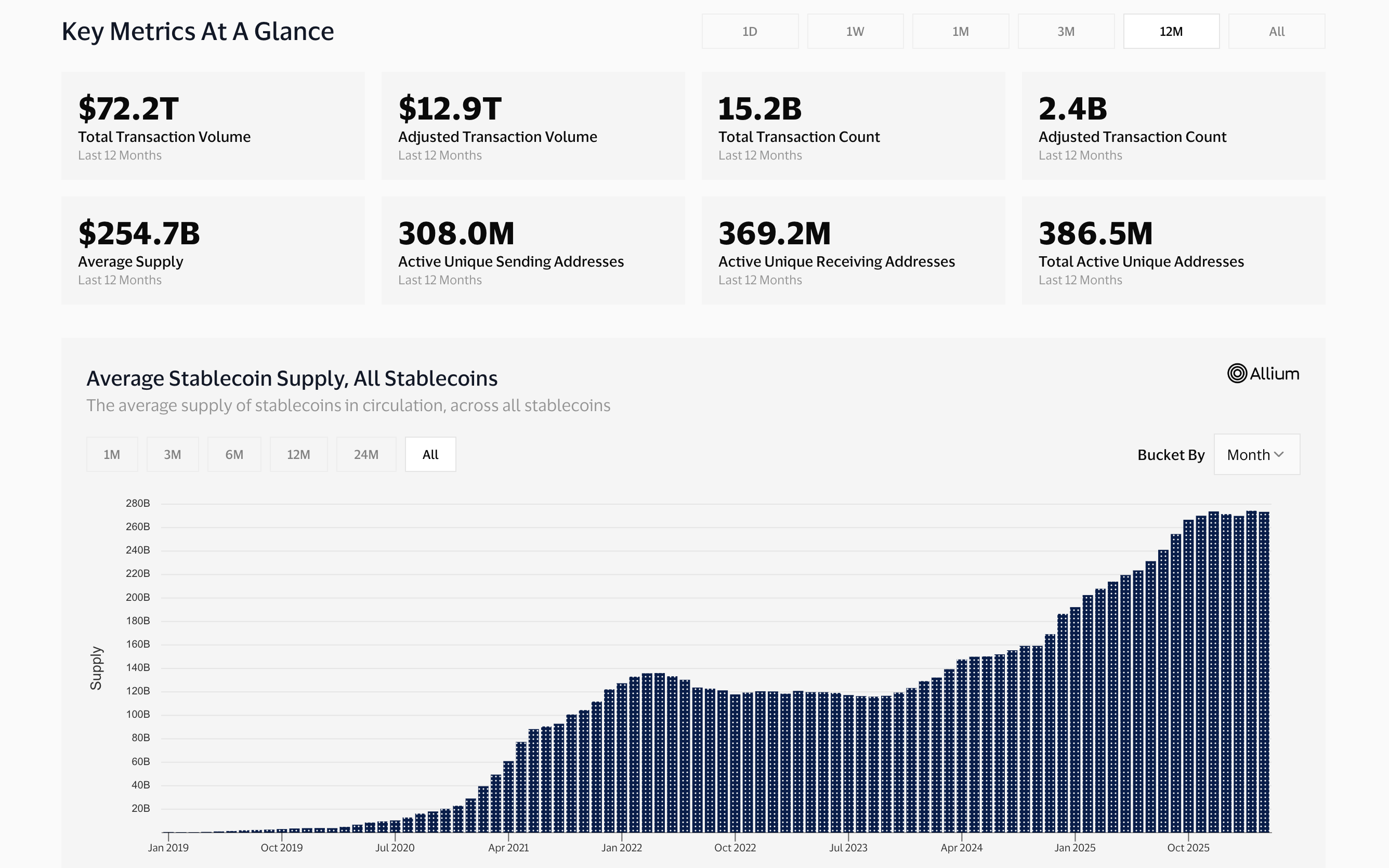

In 2025 alone, stablecoins moved $33 trillion, roughly double Visa’s annual payment volume. JP Morgan settled debt in USDC on Solana. Visa settled $3.5 billion in USDC through US banks.

PayPal launched its own stablecoin across 70 markets. The settlement layer has changed. This piece traces how stablecoin infrastructure replaced correspondent banking, and who built the rails that institutional finance now depends on.

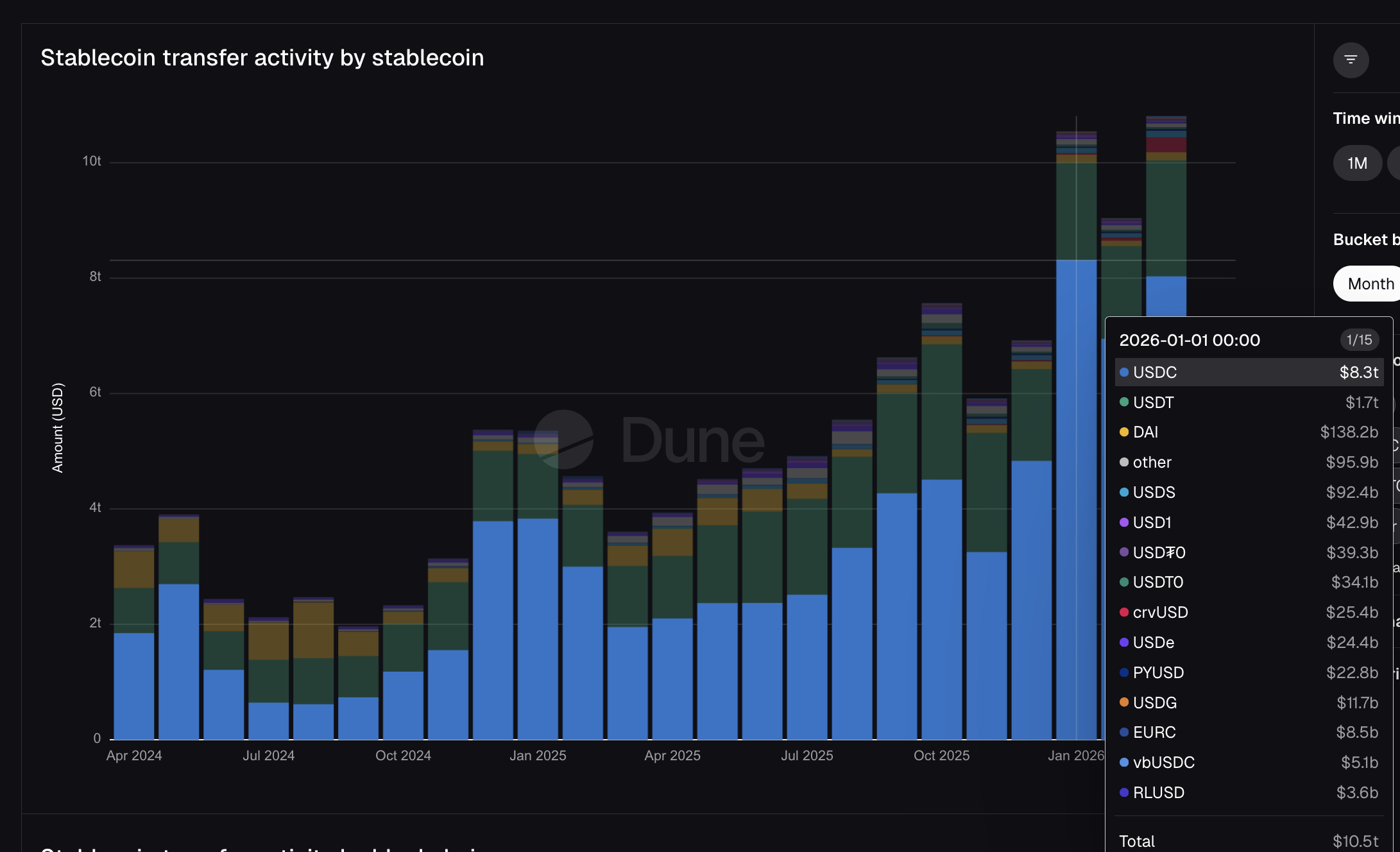

$10.5 Trillion in One Month, and Institutions are in the Driving Seat

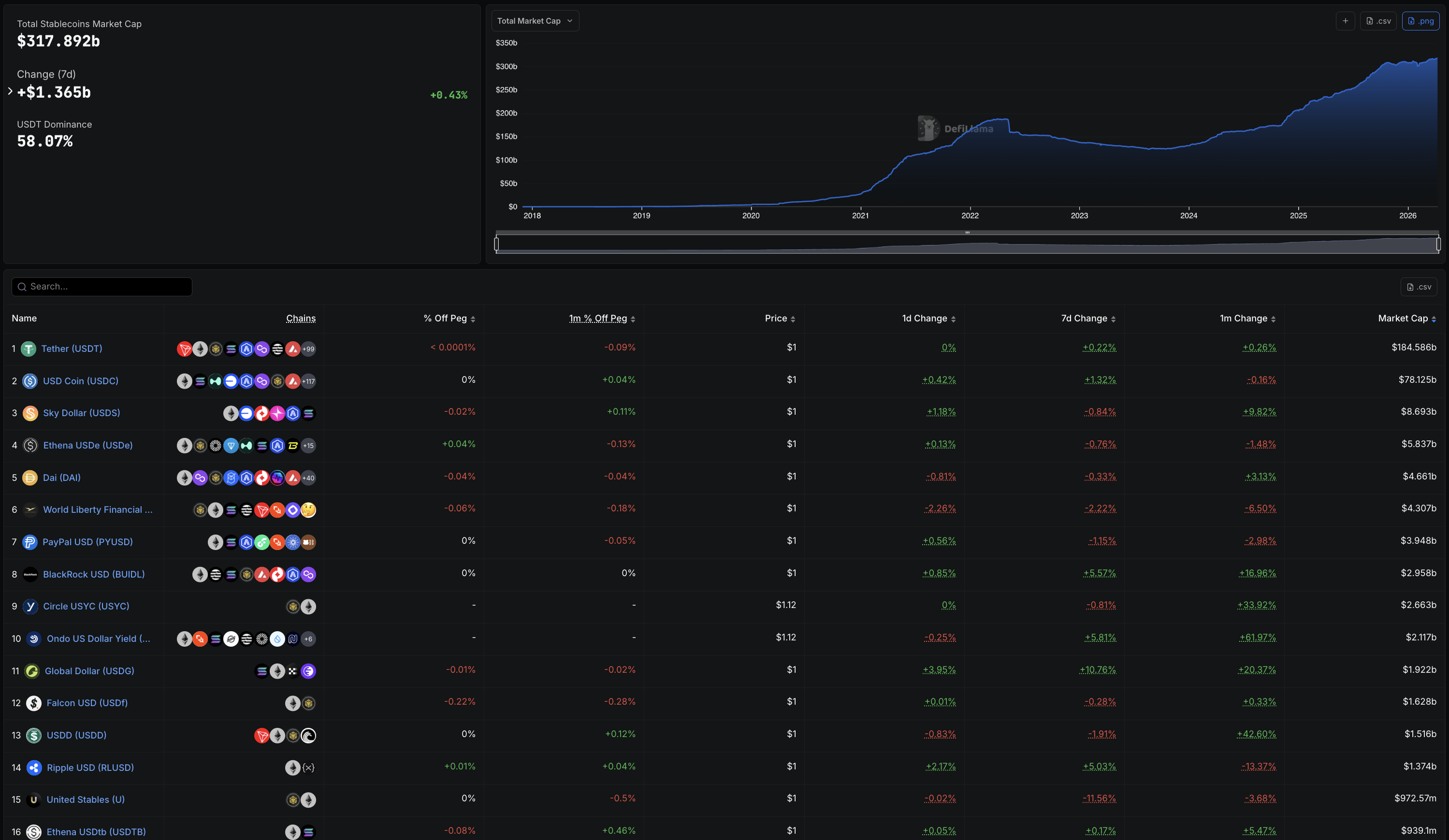

Total stablecoin market cap reached $317.89 billion as of April 2026, up from roughly $125 billion in early 2024.

The GENIUS Act, signed into law in mid-2025, created a federal framework for payment stablecoins, unlocking institutional adoption. The growth since has been vertical.

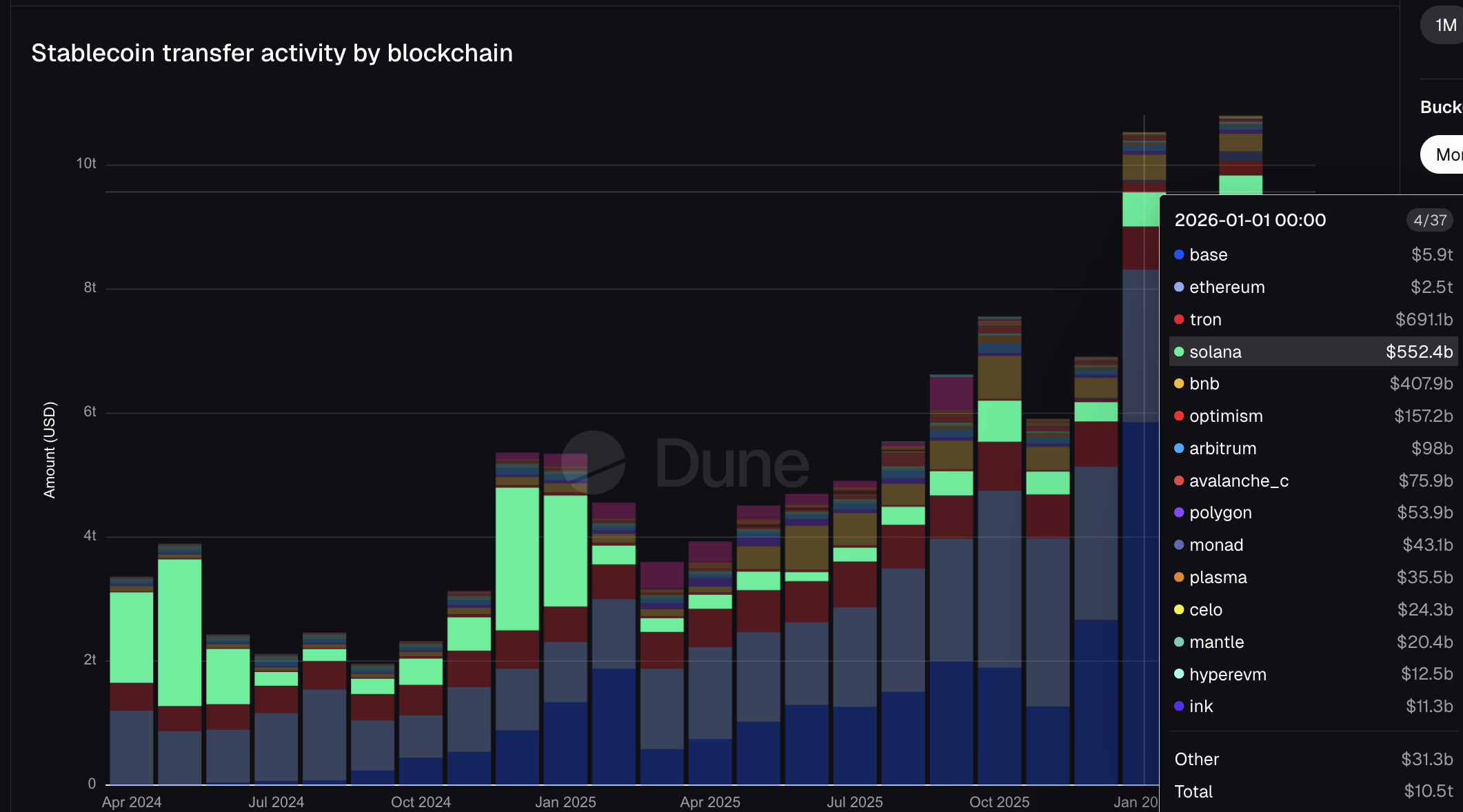

Dune Analytics data shows stablecoins transferred $10.5 trillion in January 2026 alone. For context, Visa processed $16.7 trillion in total fiat payment volume across its entire fiscal year 2025.

Mastercard processed $10.6 trillion in gross dollar volume over the same period. One month of stablecoin transfers on public blockchains approached what Mastercard’s fiat network moved in an entire year.

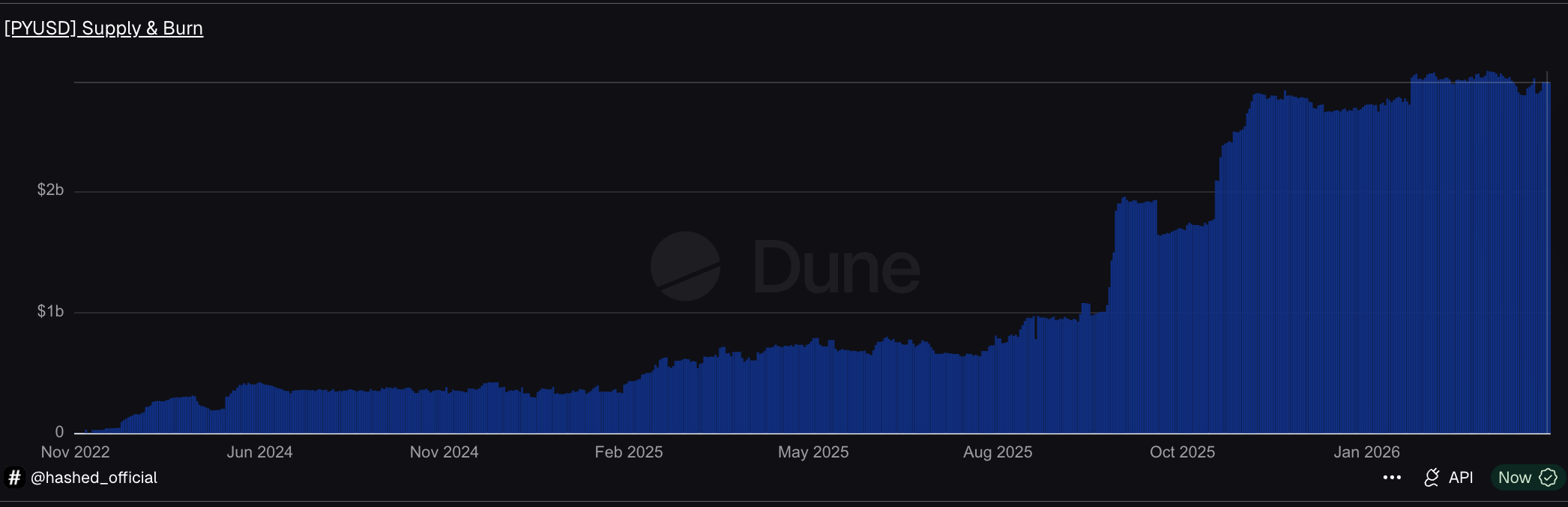

The DefiLlama leaderboard from earlier clearly tells the institutional story. PayPal’s PYUSD ranks #7, with a supply of $3.95 billion. BlackRock’s BUIDL is #8 at $2.96 billion.

The Mastercard-partnered USDG is #11 at $1.92 billion. These are not crypto-native tokens. These are stablecoins issued by or connected to the largest names in traditional finance, now ranked alongside USDT and USDC.

USDC moved $8.3 trillion of the January total, nearly five times USDT’s $1.7 trillion despite being 2.7 times smaller in supply. USDT dominates holdings. USDC dominates movement.
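The holdings-versus-movement contrast can be made concrete by combining the two ratios in that paragraph. The sketch below uses only the figures quoted above; the function name is illustrative, and "turnover" here simply means monthly transfer volume per dollar of supply.

```python
def relative_turnover(volume_a: float, volume_b: float,
                      supply_b_over_a: float) -> float:
    """How many times faster token A turns over per dollar of supply than token B."""
    if volume_b <= 0 or supply_b_over_a <= 0:
        raise ValueError("volume_b and supply ratio must be positive")
    volume_ratio = volume_a / volume_b
    return volume_ratio * supply_b_over_a


# January figures from the text: USDC $8.3T, USDT $1.7T (in trillions);
# USDC supply is stated as 2.7 times smaller than USDT's.
usdc_vs_usdt = relative_turnover(8.3, 1.7, 2.7)
```

On these numbers, each dollar of USDC supply moved roughly 13 times as much as each dollar of USDT supply during the month.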

That distinction matters because USDC is the stablecoin Visa chose for settlement, JP Morgan used for the Galaxy debt deal, and Stripe’s infrastructure runs on. The institutional settlement layer runs primarily on a single token, minted by Circle.

Meanwhile, PayPal’s PYUSD moved $22.8 billion. Mastercard’s USDG moved $11.7 billion. The TradFi stablecoins are now visible on the volume charts, and every one of them traces back to just two minters.

Two Minters, One Rail, and It Bypasses Banks Entirely

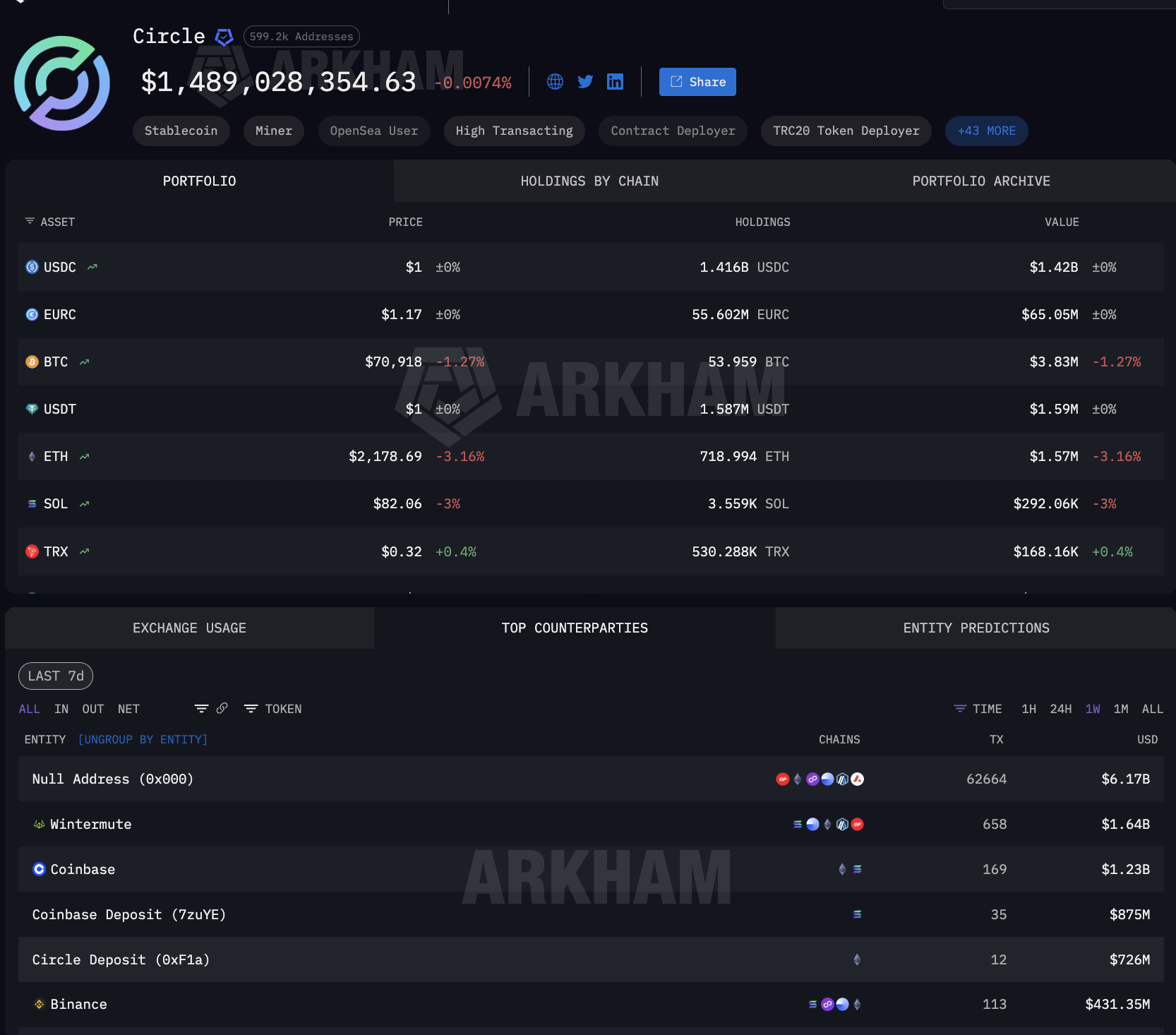

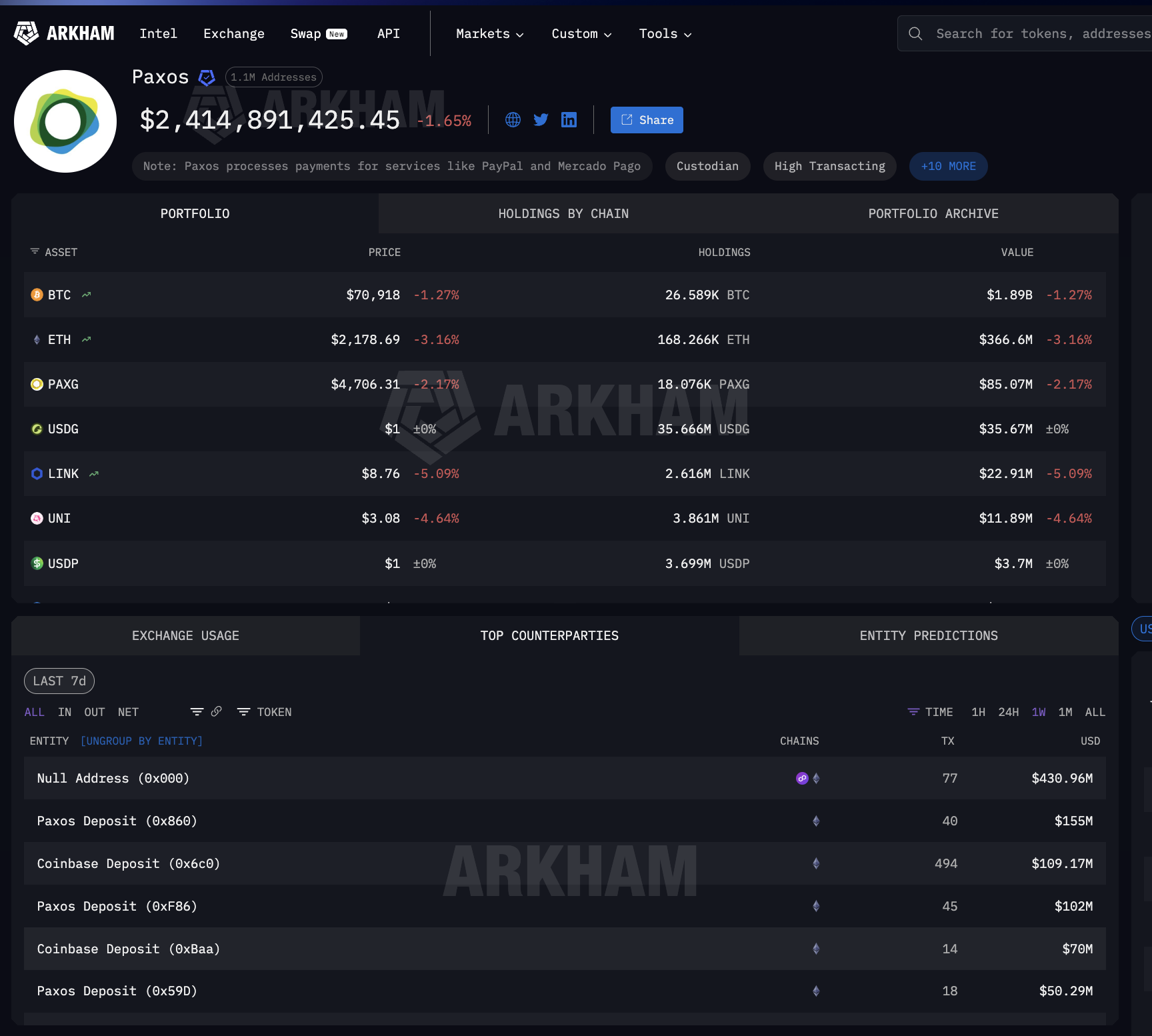

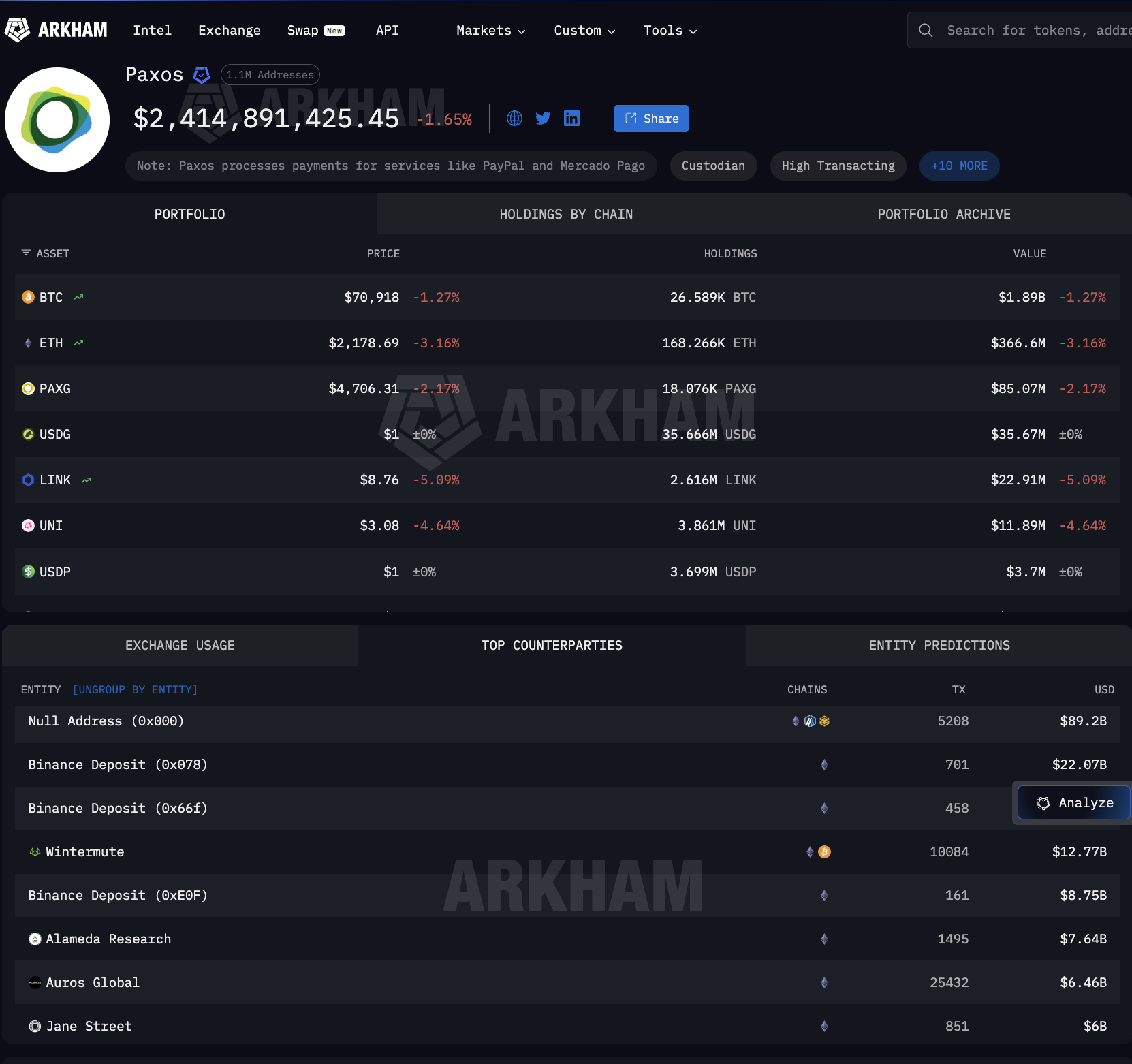

Circle and Paxos are the two minters. Circle mints USDC, the token that moved $8.3 trillion in January. Paxos mints PYUSD for PayPal and USDG for the Global Dollar Network that Mastercard anchors alongside Robinhood, Kraken, and DBS Bank. Between them, every major TradFi stablecoin integration traces back to one of these two entities.

Arkham Intelligence data shows what happens after minting. Paxos has pushed $89.2 billion outward across 5,208 mint-and-burn transactions. The recipients are not banks.

They are Binance ($22 billion), Wintermute ($12.77 billion), Jane Street ($6 billion), Coinbase ($2 billion), and other big names.

These are Wall Street market makers and crypto-native trading desks, not correspondent banking chains.

Circle’s counterparty data shows the same pattern. $6.17 billion in mint and burn activity. Wintermute at $1.64 billion. Coinbase at $2.1 billion combined across multiple deposit addresses.

Coinbase appears as a top counterparty for both minters, the one distributor straddling both sides of the TradFi settlement market.

The Paxos and Circle outflows are dominated by mint and burn operations, the mechanism by which stablecoin issuers create new tokens when clients need them and destroy them on redemption. The scale of the counterparties reveals where institutional settlement sits.

When firms of that size receive billions from Paxos, those funds are freshly minted stablecoins for institutional use, whether to fill a PayPal merchant payout, settle a Mastercard acquirer obligation, or provide liquidity for a Visa banking partner. The stablecoin is created for settlement and redeemed afterward.

That on-demand cycle does not exist in correspondent banking. That is how stablecoin infrastructure became the settlement rail. But where do those stablecoins sit between minting and burning?

Between Minting and Burning, Stablecoin Infrastructure Relies on Crypto Custody

The stablecoin infrastructure serving institutional finance does not just depend on who mints the tokens. It also depends on where they sit between creation and redemption. USDC is used by millions, making it difficult to attribute specific holdings to institutional settlement.

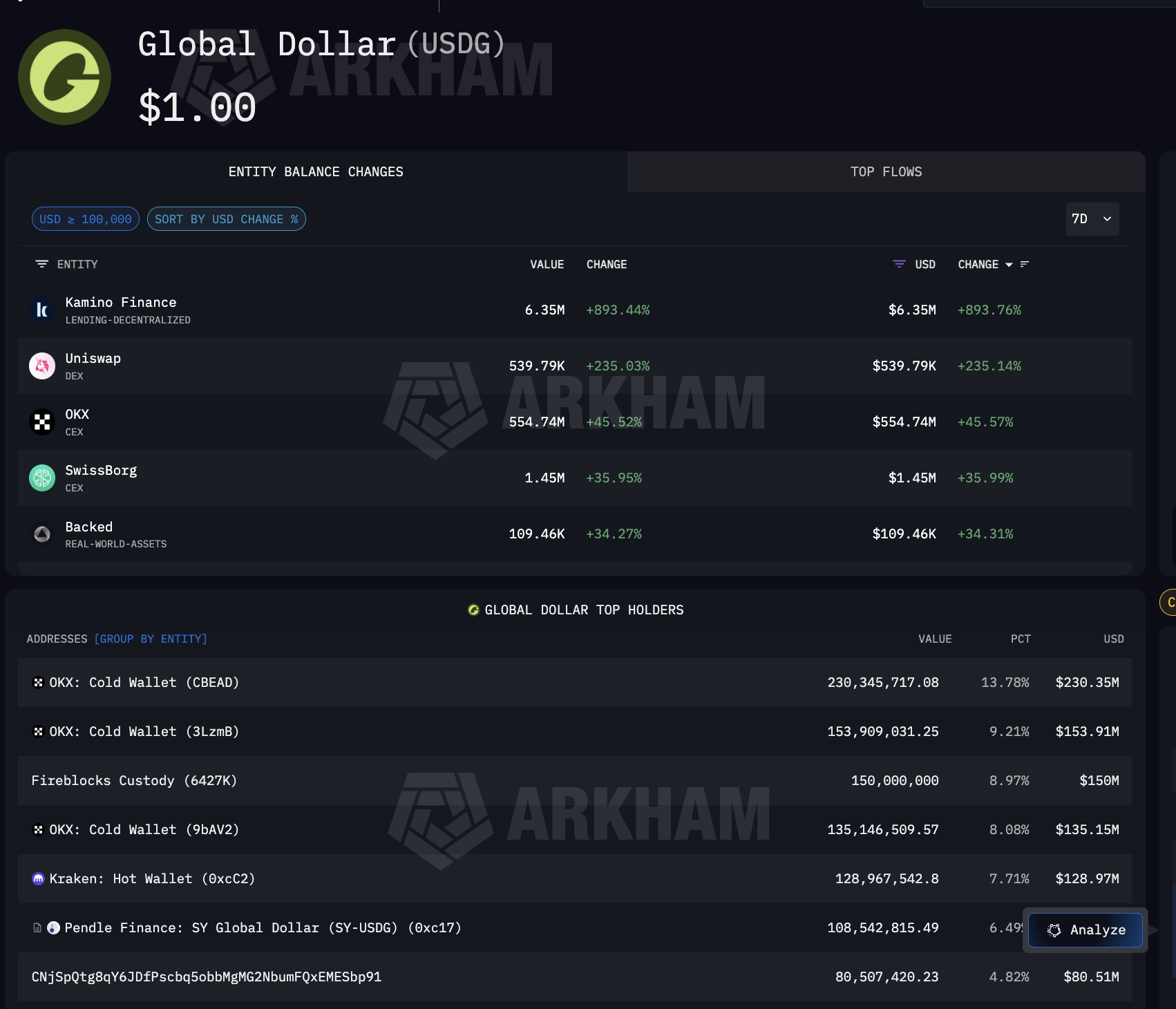

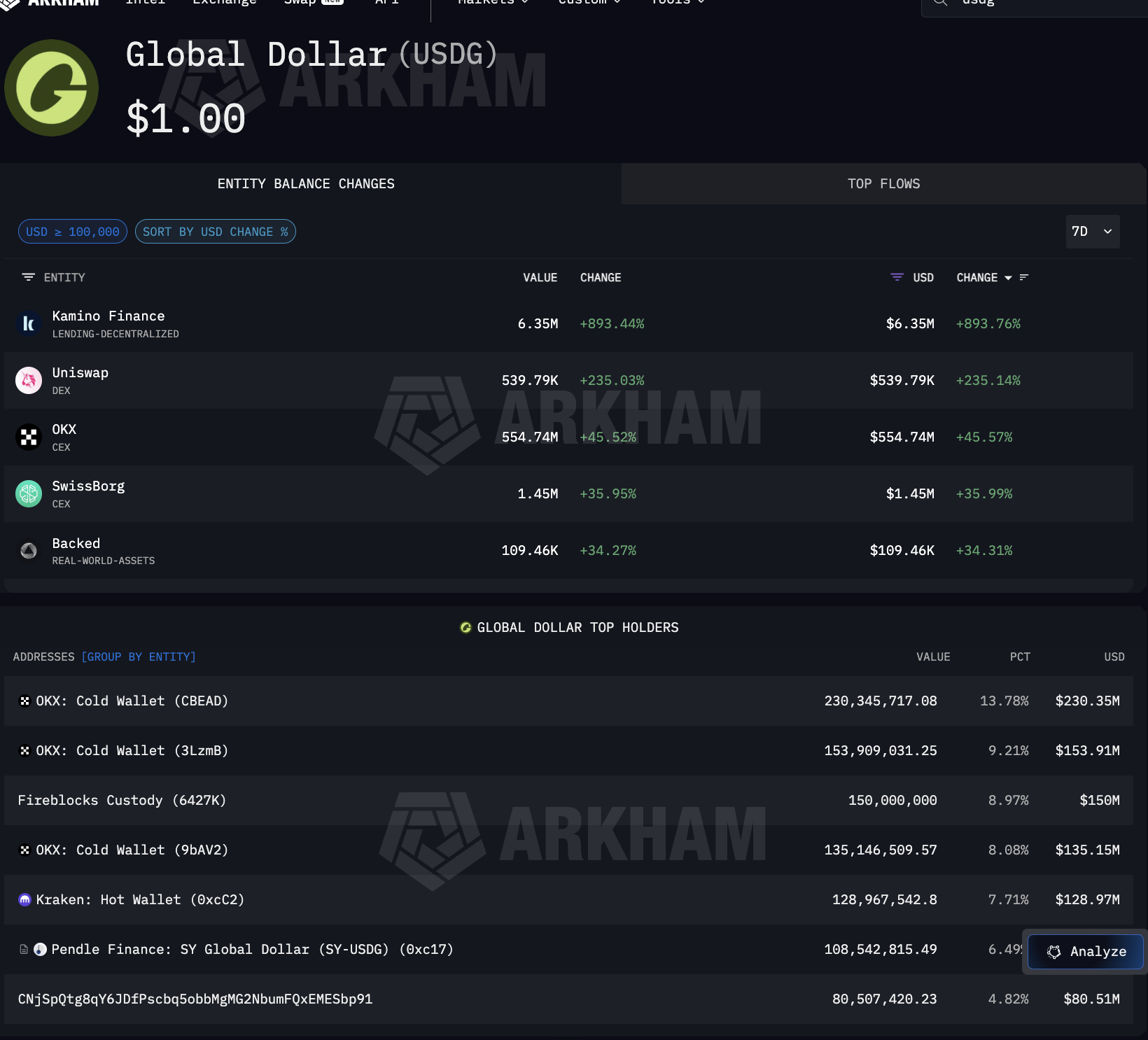

USDG, however, is different. It exists for one purpose: the Global Dollar Network that Mastercard, Robinhood, Kraken, and DBS Bank anchor. Consequently, every large USDG holder is directly tied to that institutional network.

Arkham data on USDG reveals where institutional stablecoins actually sit. The largest single holder is Fireblocks Custody at $150 million, representing 8.97% of the total supply.

Alongside Fireblocks, OKX holds $519 million across three cold wallets, while Kraken, a named Global Dollar Network partner, holds $128.97 million. Pendle Finance also holds, indicating that USDG is flowing into DeFi yield strategies.

What makes Fireblocks significant is that it also serves as the custody layer banks use for USDC operations, including on Solana, where Visa settles. In other words, one custody provider sits at the intersection of both the Mastercard settlement rail through USDG and the Visa settlement rail through USDC.

The full stablecoin infrastructure path is now visible.

Circle and Paxos mint. Coinbase, Wintermute, and Jane Street distribute. Fireblocks and exchange cold wallets hold. The reach extends beyond card networks.

Arkham’s Paxos entity page confirms that Paxos also processes payments for Mercado Pago, the largest fintech platform in Latin America, meaning the same minting infrastructure serving Mastercard and PayPal also serves emerging market settlement.

At every step between minting and redemption, institutional finance depends on the same concentrated set of crypto stablecoin infrastructure providers.

Four TradFi Strategies, Same Stablecoin Infrastructure Underneath

With the settlement stack mapped, the question becomes how institutional finance is actually connected to it. Each major player chose a different strategy. All of them plugged into the same underlying stablecoin infrastructure.

Visa committed the hardest. As of December 2025, it settled $3.5 billion annualized in USDC on Solana through Cross River Bank and Lead Bank.

It expanded to four stablecoins across four chains: USDC, PYUSD, USDG, and EURC on Solana, Ethereum, Stellar, and Avalanche. Stablecoin-linked cards via Stripe’s Bridge are live in 18 countries, expanding to 100+.

Visa also built its own on-chain analytics dashboard with Allium Labs, tracking $12.9 trillion in adjusted stablecoin volume and treating on-chain data as core business intelligence.

And Solana carried $552 billion in stablecoin transfers in January 2026 alone (top 4), the same chain on which both Visa and PayPal’s PYUSD settle.

Mastercard hedged instead, enabling four stablecoins across its network: USDC, PYUSD, USDG, and FIUSD. It joined the Paxos Global Dollar Network for USDG, the same stablecoin held by Fireblocks Custody at $150 million, as shown earlier.

Stripe acquired the infrastructure directly, buying Bridge for $1.1 billion. Bridge now powers both the Visa stablecoin-linked cards and Stripe’s own stablecoin financial accounts across 101 countries, running on the same USDC that Circle mints.

PayPal built its own stablecoin. PYUSD, minted by Paxos, reached $3.95 billion in supply across 70 markets (per DeFiLlama data).

On Solana, PYUSD circulates at 0.6x daily velocity, four times its Ethereum rate, concentrating on the same chain that Visa chose.

Four strategies. Same stablecoin infrastructure underneath: Circle or Paxos minting, Coinbase distributing, and Fireblocks holding. But everything needs to be linked better.

The Stablecoin Infrastructure Stack That Now Settles Institutional Finance

The evidence across this piece converges into a clear answer. Stablecoin infrastructure became the settlement layer for institutional finance, not because institutions adopted crypto. It became one because a small number of providers built pipes that were faster, cheaper, and available 24/7, and every major institution plugged in rather than building its own.

The stack has four layers, each of which is concentrated.

At the supply layer, Circle and Paxos mint the stablecoins that institutional finance depends on. Circle’s USDC moved $8.3 trillion in a single month. Paxos mints for PayPal, Mastercard, and Mercado Pago through the same entity.

At the distribution layer, Arkham data shows both minters routing stablecoins through the same counterparties: Coinbase and Wintermute. The settlement rail bypasses correspondent banks entirely.

At the custody layer, Fireblocks holds $150 million in USDG as the largest single holder, while also receiving USDC on Solana, straddling both card network settlement rails through a single custody provider.

At the integration layer, Visa settles $3.5 billion annually and monitors stablecoin flows as core business intelligence. Mastercard enabled four stablecoins. Stripe bought Bridge for $1.1 billion. PayPal launched PYUSD across 70 markets. JP Morgan settled debt in USDC on Solana. None built new rails.

This mirrors the pattern from our previous analysis of institutional crypto custody, where seven entities across four layers control where crypto sits.

Here, a similar concentration controls how institutional money moves. Different function, same structural conclusion: institutional finance is scaling on stablecoin infrastructure built by a handful of providers. The rails exist. The question now is whether the next wave of adoption diversifies that dependency or deepens it.

The post Who Really Runs Stablecoin Settlement? A Structural Analysis appeared first on BeInCrypto.

Binance Data Shows Crypto Traders Are Taking Over Traditional Markets

TLDR:

- Binance Gold trading volume surged from $1.5M to $7.6B daily in approximately 90 days.

- Silver trading on Binance peaked at nearly 20% of total daily COMEX trading volume.

- BlackRock and Franklin Templeton have launched tokenized funds on blockchain infrastructure.

- US Oil trades at $760M and Tesla stock at $190M daily on the Binance crypto platform.

Real-world asset tokenization is changing how investors access commodities and equities. Crypto platforms now offer direct exposure to traditional markets, attracting both retail and institutional participants globally.

Real-world asset (RWA) tokenization converts ownership rights of physical assets into blockchain-based digital tokens. These tokens represent fractional ownership and trade on crypto platforms alongside standard cryptocurrencies.

Smart contracts power the process, cutting out intermediaries and reducing settlement times considerably.

Ali Charts recently noted the growing overlap between crypto trading and traditional financial markets. Platforms like Binance now offer direct access to commodities, equities, and digital assets in one place.

This shift is visible in rising trading volumes across multiple asset classes on crypto exchanges.

Binance Volume Data Points to a Measurable Market Shift

Gold trading on Binance climbed from $1.5 million in daily volume to $7.6 billion within approximately 90 days. Silver followed a similar path, reaching $6.4 billion in daily volume at its peak.

That peak represented nearly 20% of total daily COMEX trading volume, a widely recognized commodity benchmark. Beyond precious metals, other traditional assets are recording notable figures on crypto platforms.

Binance’s daily trading volume for US Oil is $760 million, while Tesla stock trades approximately $190 million daily. Products like MicroStrategy stock and crude oil futures are also showing strong activity compared to traditional market equivalents.
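The gold and silver figures above can be turned into two rough ratios: how many times gold volume multiplied over the period, and what daily COMEX volume the silver comparison implies. This is a sketch under stated assumptions: "nearly 20%" is treated as exactly 20%, so the implied COMEX figure is a floor rather than a precise value, and the variable names are illustrative.

```python
# Figures quoted in the text.
gold_start = 1.5e6    # daily gold volume at the start of the period, USD
gold_end = 7.6e9      # daily gold volume roughly 90 days later, USD
silver_peak = 6.4e9   # peak daily silver volume, USD

# Assumption: "nearly 20%" is rounded up to 20% for this estimate.
comex_share = 0.20

growth_multiple = gold_end / gold_start
implied_comex_daily = silver_peak / comex_share

print(f"Gold volume growth: about {growth_multiple:,.0f}x")
print(f"Implied COMEX daily volume: about ${implied_comex_daily / 1e9:.0f} billion")
```

On these inputs, gold volume grew by a factor of roughly 5,000, and the silver peak implies COMEX daily volume of at least about $32 billion.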

Traditional exchanges like COMEX and NYMEX operate within fixed trading hours and involve multiple intermediaries. Crypto exchanges operate around the clock, allowing traders to act on real-time events without delay.

This availability is drawing investors who previously found traditional commodity markets difficult to access.

Institutional Adoption and the Regulatory Path Ahead

As more investors trade real-world assets through crypto platforms, liquidity in these markets continues to build. Investors no longer have to choose between crypto and traditional assets, as both are now accessible on a single platform.

This removes geographic and institutional barriers that once limited broader market participation. Major financial institutions are moving steadily into blockchain-based asset tokenization.

BlackRock and Franklin Templeton have both launched tokenized funds and blockchain investment products. Their involvement adds credibility to the long-term infrastructure supporting RWA tokenization.

Decentralized finance platforms are integrating tokenized assets to build new lending and yield products. Regulatory clarity remains a key factor shaping the pace of adoption globally.

Jurisdictions that balance blockchain innovation with investor protection are positioned to attract sustained industry growth.

Iran War Fallout Could Dominate Crypto Markets in 2026, Analyst Says

Bitcoin’s recent rally has proven fragile as a confluence of geopolitical tensions and macro headwinds weighs on sentiment. About a week into its rebound, BTC was hovering near the $71,000 level, with traders watching for signs of sustained strength in the face of ongoing Middle East conflict and uncertain policy signals. Data from TradingView put the spot price around $71,276 as of the latest sessions, underscoring the challenge of building a durable upside from here.

“Even if the war ends now, its repercussions will likely define the story for 2026 and, at minimum, dominate the narrative through Q2,” said Nic Puckrin, a crypto market analyst and founder of Coin Bureau. In an interview with Cointelegraph, Puckrin framed the current setup as fragile, arguing that a sustained push higher would depend on a confluence of favorable developments beyond the immediate conflict.

“For a push toward $90,000, we would need to see a combination of a ceasefire that ends geopolitical tensions, a sustained drop in oil prices toward $80, and ideally also softer-than-expected economic data that calms stagflation fears,” Puckrin said.

Beyond the headline risk, price action remains tethered to macro dynamics. If Bitcoin closes the week above the $71,000 mark, Puckrin suggested the next leg higher could unfold toward the $74,000 zone, though the path remains contingent on a broader risk-on environment and how geopolitical headlines evolve.

Key takeaways

- Bitcoin trades near $71,000, with resistance eyed around $74,000; a weekly close above $71,000 could signal more upside.

- The market currently faces an inflationary impulse linked to ongoing conflict, a factor that dampens expectations for near-term rate cuts in 2026.

- A sustained rally toward $90,000 would require a ceasefire, oil around $80, and softer-than-expected economic data, according to Nic Puckrin.

- Macro policy remains uncertain: the Fed’s stance on rate cuts in 2026 is still debated in light of inflation pressures and war-related risks.

- Near-term price action has shown volatility: BTC briefly crossed above $73,000 in early April before retreating toward $71,000 as headlines from the Middle East and policy signals evolved.

Bitcoin’s price action in the shadow of geopolitics and policy

The latest price movement reflects a delicate balance between risk appetite and safety-driven demand. After a surge to just over $73,000 in early April—driven by a broader risk-on tone—the market retraced as news of stalled negotiations between the U.S. and Iran fed into risk-off sentiment. The Kobeissi Letter captured the tone, describing the peace talks as “arguably the worst-case scenario” when they appeared to falter, a sentiment that rippled through markets as traders recalibrated expectations for geopolitical risk premiums embedded in crypto prices.

In a separate development, U.S. President Donald Trump stated on Truth Social that he had directed the U.S. Navy to form a naval blockade around the Strait of Hormuz and to interdict vessels that paid tolls to Iran. While such statements escalate geopolitical risk discourse, traders often weigh them against the practical likelihood and timing of policy changes that would meaningfully shift Bitcoin’s trajectory.

The ongoing macro backdrop is reinforced by inflation data, with the U.S. Bureau of Labor Statistics’ CPI report highlighting an inflationary spike tied to the war. The CPI release cooled hopes for rapid further rate cuts in 2026 and reinforced the narrative that monetary policy will remain restrictive while inflation remains elevated.

Policy signals, market expectations, and what comes next

The policy landscape remains a crucial driver for crypto risk assets. Minutes from the March FOMC meeting underscored ongoing debate among policymakers about the path of rate cuts in 2026, influenced by inflation concerns tied to wartime dynamics. The market’s expectations around the federal funds rate have shifted in response to these tensions.

According to CME Group’s FedWatch tool, there is a very high likelihood, over 98%, that the FOMC will keep the current target range of 3.50%–3.75% at the next two meetings (April 29 and June 17). The probability of a 25 basis point cut by the July 29 meeting sits at roughly one-third, or about 33.6%. This landscape suggests a prolonged period in which policy remains restrictive until inflation shows clearer signs of easing.

For Bitcoin traders, the central tension is between a near-certain hold in the coming months and a possible rate cut later in the year. The market is watching whether softer data emerges to push expectations for easing, or whether inflationary momentum persists in the face of geopolitical shocks. Meanwhile, BTC’s technical backdrop, with price trading below the 200-day exponential moving average as reflected in traders’ charts, adds another layer of caution for near-term bets.

Beyond the immediate price dynamics, the broader crypto narrative continues to hinge on how investors interpret risk, and whether a stabilizing ceasefire and lower oil prices could unlock a more durable risk-on environment. While the path to $90,000 remains conditional and uncertain, the scenario Puckrin outlines (a ceasefire, oil around $80, and a favorable macro backdrop) provides a benchmark against which market moves will be measured in the coming weeks and months.

As the market absorbs mixed signals from geopolitics and policy, traders will be watching several indicators: a potential shift in oil prices that alleviates energy-driven inflation, a softer-than-expected economic data flow that could prompt earlier policy loosening, and, importantly, any development toward de-escalation of regional tensions that might remove some of the near-term risk premium baked into crypto assets.

Reading the tea leaves for Bitcoin now means focusing on the confluence of headlines and data: price action around $71,000, an upcoming test of resistance near $74,000, and the evolving expectations for 2026 policy moves. The coming weeks could reveal whether the current recovery gains traction or whether the market reverts to a more cautious posture as macro and geopolitical risks persist.

Readers should remain attentive to how geopolitical developments unfold, how oil prices respond to those dynamics, and how inflation and policy guidance shape risk appetite across crypto markets. The next movements in these areas will likely define whether Bitcoin’s recovery gains durability or remains a fragile bounce in uncertain times.

Analyst Warns Bitcoin April Rally Could Precede May-June Crash

TLDR:

- Analyst Aaron Dishner warns April’s BTC rally is a deceptive move within a larger bear market.

- Historical bottom-year patterns show April relief rallies precede sharper May and June corrections.

- On-Balance Volume and TBO divergence signals suggest Bitcoin’s April recovery lacks real conviction.

- Dishner targets $49,000 as Bitcoin’s key support if the $60,000 price floor breaks down in Q2.

Bitcoin bull trap fears are mounting as analyst Aaron Dishner warns that April’s price recovery is not a sign of bear market reversal.

Drawing on historical bottom-year patterns and technical indicators, Dishner argues that a deceptive relief rally is forming ahead of a sharper May and June correction.

He cautions retail investors against mistaking short-term green candles for a sustained trend shift, noting that the broader bearish structure for Bitcoin remains firmly in place.

April’s Rally Could Be Setting Up Retail Investors for a Fall

Bitcoin has shown sharp price swings this week, drawing fresh attention from traders across the market. Analyst Aaron Dishner urges caution against reading too deeply into recent gains.

He argues the bear market structure remains intact despite the short-term price uptick. Dishner’s analysis draws on the Better Crypto Calendar, tracking monthly returns for BTC, ETH, and the broader crypto market.

The data reveals a recurring pattern in bottom years, where relief rallies appear but stay contained within a larger bearish structure. He believes 2026 is following that same path.

He previously flagged a 4.3% price pump triggered by unverified geopolitical news, which reversed quickly. He sees that kind of move as a textbook example of low-liquidity manipulation. Similar fake-outs, he warns, are likely to repeat through April.

On X, Dishner wrote: “The data is pointing to a mini rally in April that could fool a lot of people before things get uglier in May and June.”

Potential tests of the $70,000 to $80,000 range are part of what he describes as a relief rally phase. Those levels could pull in retail buyers expecting a full trend reversal.

That enthusiasm, he notes, is exactly what makes a bull trap effective. The TradFi adage “sell in May and go away” lines up well with his broader outlook this year.

Bottom years, he explains, often include an April bounce, followed by further pain in May and June. A secondary bounce around July is also possible, but only after a more significant drawdown has already occurred.

Technical Signals and Downside Targets Reinforce the Bearish Case

Dishner tracks On-Balance Volume alongside his TBO Indicator to assess the conviction behind any rally. Both metrics are currently weak, despite the recent price move higher.

He reads this as a clear sign that genuine buying pressure is missing from the market. Fresh Trending Breakout divergence warnings have appeared on higher timeframes as well.

These signals, in his view, point toward lower lows ahead in May and June. His primary downside target for Bitcoin sits at $49,000, should the $60,000 support level fail to hold.

Dishner advises traders to stay patient and avoid chasing short-term green candles in this environment. He recommends waiting for bearish confirmation before entering heavy positions.

Bottom years do create long-term accumulation opportunities, but only for those who remain disciplined through the volatility and false signals along the way.

Europe Moves to Place Crypto-Asset Providers Under Centralized EU Supervision Through ESMA

TLDR:

- The ECB fully endorsed the EU Commission’s plan to transfer crypto-asset supervision to ESMA in Paris.

- Crypto-asset service providers would move from national regulators to a single EU-wide supervisory framework.

- The ECB warned that ESMA must be adequately staffed and funded before taking on expanded crypto oversight.

- The proposal now enters EU government and Parliament negotiations, a process expected to take several months.

Europe is moving toward centralized oversight for crypto-asset service providers across the bloc. The European Central Bank endorsed the European Commission’s proposal on Friday.

The plan shifts supervision of key financial entities, including crypto firms, to the EU level. The European Securities and Markets Authority in Paris would take on this expanded role.

France and Germany have championed this regulatory integration effort. The move aims to strengthen Europe’s position against the US and China.

Crypto-Asset Providers Face Direct EU Supervision Under New Proposal

Crypto-asset service providers are now formally included in the EU’s centralized oversight push. The ECB confirmed its full support for transferring supervision of these entities to ESMA.

This marks a clear shift in how Europe intends to regulate the crypto sector. Crypto firms operating across EU borders would fall under a single supervisory framework.

Currently, crypto-asset service providers answer to national regulators in each member state. The Commission’s proposal would move that authority directly to ESMA in Paris.

This change would create a unified approach to crypto oversight across the entire EU. The goal is to remove fragmentation and close regulatory gaps between member states.

The ECB made its position clear in a formal opinion required under EU legislative procedures. “The ECB fully supports the Commission proposals, which constitute an ambitious step towards deeper integration of capital markets and financial market supervision within the Union,” the central bank stated.

While the opinion is not binding on lawmakers, it carries considerable weight in the ongoing debate. It signals institutional alignment behind the push for centralized crypto supervision.

Smaller EU states such as Ireland and Luxembourg have shown hesitation toward the plan. Both countries currently host a significant number of crypto and financial firms.

However, the ECB’s backing may gradually ease resistance from these governments. Centralized oversight could also bring greater legal certainty for crypto businesses operating in those markets.

ESMA Must Be Ready to Handle Crypto Supervision at Scale

The ECB warned that ESMA must be properly staffed and funded before taking on crypto oversight. Expanding supervision to include crypto-asset service providers adds significant operational demands.

Without adequate resources, effective enforcement of crypto regulations could fall short. The central bank stressed that resourcing must be addressed before the transition begins.

A sequenced transition from national to EU-level supervision was also recommended by the ECB. For crypto firms, an abrupt regulatory handover could create compliance uncertainty in the short term.

A phased approach would give both ESMA and crypto providers time to adjust. This measured rollout is seen as key to maintaining stability during the changeover.

The ECB also requested a non-voting seat on the ESMA board as part of this process. It wants its expertise reflected in technical standards, guidelines, and recommendations that will govern crypto supervision.

These standards will shape how crypto-asset service providers are monitored going forward. Getting this framework right matters for both investor protection and market confidence.

The Commission’s proposal now heads into negotiations between EU governments and the European Parliament. This legislative process is expected to span several months before becoming law.

For the crypto sector, the outcome of these talks will define the regulatory landscape ahead. Industry participants across Europe will be watching each stage of the negotiations closely.
