Crypto World

Jack Dorsey says AI should replace the middle manager after Block (XYZ) cuts 4,000 jobs

In Jack Dorsey’s view of the world, the job most at risk from the AI revolution is the middle manager.

In a new essay, “From Hierarchy to Intelligence,” published with Roelof Botha, managing partner of Block investor Sequoia Capital, Dorsey argues that his company’s decision to cut approximately 4,000 of its more than 10,000 employees was not a cost reduction but a permanent restructuring to replace middle managers with AI.

Corporate hierarchy, the essay argues, has always existed to solve one problem: routing information through organizations too large for any single person to oversee.

Managers aggregate context from below, act as messengers from above, and maintain alignment across teams. AI can now perform those functions continuously and at scale, the authors argue, making the messenger redundant.

In place of management layers, Dorsey and Botha propose two AI-driven “world models.”

One aggregates internal data from code, decisions, workflows, and performance metrics to create a continuously updated picture of company operations, replacing the context that managers traditionally carried.

The other maps customer and merchant behavior using transaction data from Cash App and Square.

Those models feed what Block calls an “intelligence layer” that composes financial products dynamically to fit market demand.

If done properly, the models absorb the coordination work that previously justified the existence of middle management.

Rather than building from fixed roadmaps, the essay proposes breaking Block’s business into modular capabilities, including payments, lending, card issuance and payroll.

When the system identifies a need (the essay’s example is a merchant facing a seasonal cash flow gap), it assembles a solution from existing capabilities. When it cannot, the missing capability defines what gets built next, replacing the product roadmap with a system-generated backlog.
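The assemble-or-backlog logic described in the essay can be sketched in a few lines of Python. This is an illustration only, not Block's system: the `plan` function, the capability names beyond the four the essay lists, and the way each need is broken into required capabilities are all assumptions made for the example.

```python
# Capability names: the first four come from the essay's list; anything
# else used below (e.g. "fx_conversion") is hypothetical.
AVAILABLE = {"payments", "lending", "card_issuance", "payroll"}


def plan(need: str, required: set[str], available: set[str]) -> dict:
    """Assemble a solution if every required capability exists;
    otherwise report the missing capabilities as backlog items."""
    missing = required - available
    if missing:
        return {"need": need, "status": "backlog", "build_next": sorted(missing)}
    return {"need": need, "status": "assembled", "uses": sorted(required)}


# Assumed decomposition of the essay's example need.
covered = plan("seasonal cash flow gap", {"payments", "lending"}, AVAILABLE)

# Assumed need that depends on a capability the system does not have.
gap = plan("cross-border payout", {"payments", "fx_conversion"}, AVAILABLE)

print(covered)
print(gap)
```

In this sketch the backlog is simply the set difference between what a need requires and what already exists, which is the sense in which a missing capability "defines what gets built next."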

The organizational structure is reduced accordingly. Block plans to operate with three roles: individual contributors who build the system, directly responsible individuals who own specific outcomes on 90-day cycles, and player-coaches who remain hands-on while developing people.

Dorsey told Wired in early March that the restructuring was triggered by a capability shift he observed in December in tools including Anthropic’s Opus 4.6 and OpenAI’s Codex 5.3, which he said were now capable of operating effectively in large codebases.

But current and former Block employees told the Guardian that roughly 95% of AI-generated code changes still require human modification, and that AI tools cannot yet lead in regulated areas like banking and money transfers.

Advanced Micro Devices (AMD) Stock Surges to New 52-Week Peak Amid AI Server Boom

Key Takeaways

- Shares of Advanced Micro Devices peaked at $469.22 during Monday’s trading session, finishing 0.79% higher at $458.79

- According to GF Securities projections, the server CPU sector may expand from $26 billion in 2025 to $135 billion by decade’s end, representing 38% compound annual growth

- AMD’s data center CPU division is projected to experience 73% expansion in 2026, while CEO Lisa Su has doubled the company’s long-term growth forecast to 35%

- Major Wall Street firms Goldman Sachs and Bernstein elevated their ratings to Buy after the chipmaker delivered results exceeding expectations across all metrics

- Prominent investor Stone Fox Capital projects shares could climb to $600, with company revenues potentially crossing $100 billion next year

Shares of Advanced Micro Devices finished Monday’s trading at $458.79, gaining 0.79%, after establishing a fresh 52-week peak of $469.22 earlier in the session. The upward momentum reflects Wall Street’s growing interest in CPU manufacturers and data center equipment providers amid the expanding AI infrastructure landscape.

What’s fueling this rally? A powerful combination of impressive quarterly results and mounting evidence that the next wave of artificial intelligence investment will flow heavily through server processors — extending beyond GPU-only strategies.

The semiconductor giant exceeded Wall Street projections across earnings, revenue, and forward guidance in its most recent quarterly disclosure. Chief Executive Lisa Su highlighted AI agents as creating “tremendous demand” throughout the entire AI adoption spectrum.

Su dramatically revised the company’s long-term server CPU market projections upward — jumping from an 18% growth forecast issued in November to a 35% compound annual growth rate, with the addressable market potentially expanding to $120 billion by 2030. AMD is actively increasing wafer procurement and back-end production capabilities to address anticipated demand levels.

According to Su, the data-center business unit has evolved into the “primary driver” of the company’s revenue expansion and profit growth, with inference workloads and agentic AI applications accelerating demand for high-performance processors and accelerators.

Wall Street Analysts Raise Ratings

Both Goldman Sachs and Bernstein elevated Advanced Micro Devices to Buy recommendations following the quarterly report, emphasizing robust CPU demand linked to AI computing requirements.

JPMorgan characterized the results as demonstrating a “structural inflection” across both server processor and data-center accelerator segments.

Wedbush’s Matt Bryson increased his price objective to $450 with an Outperform designation, highlighting improved unit volumes and favorable pricing dynamics associated with compute infrastructure supporting agentic AI.

Citi’s Atif Malik elevated his target to $358 while maintaining a Neutral stance, expressing optimism regarding AMD’s CPU market opportunity.

The prevailing Wall Street consensus reflects a Strong Buy recommendation, comprising 27 Buy ratings alongside 8 Hold ratings. The mean 12-month price objective stands at $442.94.

The Optimistic Outlook

Analytical firm GF Securities identified AMD, Intel, and Qualcomm as well-positioned to capitalize on an emerging server CPU supercycle. The research house forecasts 73% revenue growth for AMD’s server CPU business in 2026.

Mizuho’s Jordan Klein suggested to CNBC that the semiconductor sector’s momentum might represent a “changing of the guard in AI,” with capital flowing toward AMD, Intel, Micron, and Corning.

Prominent investor Stone Fox Capital, ranked among the top 4% of equity analysts on TipRanks, describes the CPU market opportunity as “huge” and anticipates AMD’s server processor revenue expanding tenfold by 2030.

Stone Fox projects the company could achieve $100 billion in aggregate revenue next year — surpassing consensus analyst projections of $75 billion for 2027 — and potentially exceeding $175 billion by decade’s end.

This revenue trajectory, according to Stone Fox’s analysis, supports a valuation of $600 per share when applying a 20x price-to-earnings multiple.
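Because a price-to-earnings multiple is share price divided by earnings per share, the $600 target at 20x implies a specific earnings level. The article does not state that figure or the year it applies to, so the number below is derived arithmetic rather than a Stone Fox estimate; the function name is illustrative.

```python
def implied_eps(price_target: float, pe_multiple: float) -> float:
    """Earnings per share implied by a price target at a given P/E multiple."""
    return price_target / pe_multiple


# $600 target and 20x multiple are the figures cited in the article.
eps = implied_eps(600.0, 20.0)
print(f"Implied EPS: ${eps:.2f}")
```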

Cautionary Voices Emerge

BTIG’s Jonathan Krinsky sounded a cautionary note, drawing parallels between the current semiconductor rally and the late-1990s technology bubble. He cautioned the sector might experience a correction ranging from 25% to 30% following aggressive valuation expansion.

Bank of America projects the data-center CPU market expanding from $27 billion in 2025 to $60 billion by 2030, a notably more restrained forecast compared to GF Securities’ $135 billion estimate.
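The gap between the two forecasts is easier to see as compound annual growth rates. The sketch below assumes both forecasts run over five years (2025 to 2030); the article gives GF Securities' end point only as "decade's end," so that horizon is an assumption.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between a start and end value."""
    return (end / start) ** (1 / years) - 1


YEARS = 5  # assumption: 2025 -> 2030 for both forecasts

gf = cagr(26, 135, YEARS)    # GF Securities: $26B -> $135B
bofa = cagr(27, 60, YEARS)   # Bank of America: $27B -> $60B

print(f"GF Securities:   {gf:.1%}")
print(f"Bank of America: {bofa:.1%}")
```

Under that assumption, GF Securities' forecast works out to about 39% a year, close to the 38% cited in the takeaways, while Bank of America's implies about 17%. The small difference from the cited figure may come from rounding or a slightly different horizon.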

Intel’s CEO Lip-Bu Tan reinforced AMD’s optimistic CPU outlook during his company’s recent quarterly call, validating the perspective that server processors represent a significant growth catalyst throughout the semiconductor industry.

The Senate Just Dropped a 309-Page Crypto Bill at Midnight: Will the CLARITY Act Finally Give Institutions the Green Light?

The Senate Banking Committee dropped the full 309-page text of the CLARITY Act just after midnight on Tuesday, May 11, 2026, ahead of a Thursday committee hearing that could advance the most comprehensive crypto market structure legislation the U.S. has attempted.

The headline provision: a 1:1 reserve mandate requiring all payment stablecoin issuers to hold high-quality liquid assets against every token in circulation.

The tension at the center of this bill is real; it asks stablecoin issuers, DeFi developers, institutional custodians, and traditional banks to accept a single regulatory framework that serves none of them perfectly.

The second major structural element draws a hard jurisdictional line between the SEC and CFTC, assigning oversight based on whether a token functions as a security with ongoing management-led profit expectations or as a digital commodity within a decentralized protocol.

That division has been missing from U.S. law since Bitcoin’s creation, and its absence has been the single largest barrier to institutional custody approvals at regulated fiduciaries. The bill does not resolve every gray zone, but it creates the statutory floor that compliance teams have said they need before allocation committees will act.

What the 1:1 Reserve Mandate Actually Requires – and Who It Pressures

The CLARITY Act restricts qualifying reserve assets to short-duration U.S. Treasuries under 90 days, overnight repurchase agreements, and central bank deposits. That is a tighter composition requirement than current market practice.

Tether’s USDT reserve disclosures have historically included corporate paper, money market funds, and secured loans, none of which would qualify under this framework. Circle’s USDC, by contrast, has already shifted toward short-duration Treasuries and cash, positioning it closer to compliance than its largest competitor.
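The reserve rule as described here can be expressed as a simple check. The sketch below is based only on this article's summary, not on the bill text: the asset category labels, the `ReserveAsset` type, and the sample portfolio are hypothetical.

```python
from dataclasses import dataclass

# Categories paraphrased from the article's summary, not from the statute.
QUALIFYING = {"treasury", "overnight_repo", "central_bank_deposit"}
MAX_TREASURY_DAYS = 90  # article: Treasuries "under 90 days"


@dataclass
class ReserveAsset:
    kind: str
    value_usd: float
    maturity_days: int = 0


def qualifies(asset: ReserveAsset) -> bool:
    """True if the asset falls in a qualifying category as summarized above."""
    if asset.kind not in QUALIFYING:
        return False
    if asset.kind == "treasury":
        return asset.maturity_days < MAX_TREASURY_DAYS
    return True


def is_fully_backed(tokens_outstanding_usd: float, reserves: list[ReserveAsset]) -> bool:
    """1:1 test: qualifying reserves must cover every token in circulation."""
    qualifying_total = sum(a.value_usd for a in reserves if qualifies(a))
    return qualifying_total >= tokens_outstanding_usd


# Made-up portfolio: the corporate paper does not count toward the reserve.
reserves = [
    ReserveAsset("treasury", 70.0, maturity_days=30),
    ReserveAsset("overnight_repo", 20.0),
    ReserveAsset("corporate_paper", 10.0, maturity_days=60),
]

print(is_fully_backed(100.0, reserves))
print(is_fully_backed(90.0, reserves))
```

In this example an issuer with 100 in tokens outstanding would fall short even though its total holdings equal 100, because only 90 sits in qualifying assets. That is the kind of pressure the composition rule puts on reserves that include corporate paper or secured loans.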

On stablecoin yield, the bill’s language is deliberately constrained. It permits interest or yield payments only when made “solely in connection with the holding of payment stablecoins” or structured to be economically equivalent to interest on a bank deposit.

Coinbase CEO Brian Armstrong, whose company was at the center of that negotiation, said publicly on Monday that “not everyone got everything they wanted, but they got the must-haves.” Armstrong confirmed Coinbase is working with at least five of the largest global banks and framed the outcome as workable: “We want it to be win-win and work with the banks.”

The American Bankers Association is not satisfied. The group escalated its lobbying over the weekend, warning senators that yield-bearing stablecoins could drain insured deposits and destabilize mortgage funding.

Research from Galaxy pushed back directly, arguing that stablecoin growth will predominantly originate offshore and that “foreign capital will flow into U.S. banking infrastructure at a rate that materially exceeds any domestic deposit migration.”

That is a contested empirical claim, but it is the framework Galaxy is asking lawmakers to adopt before Thursday’s vote on stablecoin regulation.

What CLARITY Act Passage Means for Capital Flows, and What Stalls It

Galaxy’s research framing has direct market implications: if stablecoin growth is predominantly offshore-driven, the reserve mandate functions as an onboarding mechanism for foreign dollar demand into U.S. Treasuries, not a threat to domestic bank deposits.

That framing, if it holds in Senate debate, substantially weakens the American Bankers Association’s argument and increases the probability the yield language survives intact.

Senate Banking Committee Chairman Tim Scott called the bill “serious, good-faith work” that “puts consumers first, combats illicit finance” and “keeps the future of finance here in the United States.”

The opposition, led by ranking Democrat Elizabeth Warren, is not primarily about reserves or jurisdiction; it is about the missing ethics provision.

Warren stated that Trump and his family have “raked in at least $1.4 billion in gains from crypto deals alone” in his first year, and that “this bill stunningly includes zero provisions to prevent that.”

The conflict-of-interest section is outside the Banking Committee’s jurisdiction and must be added later. Democrats, including Senator Kirsten Gillibrand, have said they will not allow the bill to move without it. Sixty yes votes are required for Senate passage, and reaching that number requires meaningful Democratic support, the same dynamic that institutional adoption narratives in the payment token space depend on for durable regulatory legitimacy.

The bill still needs to be merged with a version approved by the Senate Agriculture Committee, the ethics provision must be negotiated and inserted, and then 60 senators must vote yes.

White House adviser Patrick Witt has set July 4 as the administration’s target. Senator Gillibrand has predicted the first week of August.

If the committee votes Thursday and the ethics language lands in a form both parties can accept, that timeline is plausible. If the conflict-of-interest provision becomes the bill’s breaking point, the framework gets delayed, and every institutional allocation waiting on statutory classification waits with it.

The post The Senate Just Dropped a 309-Page Crypto Bill at Midnight: Will the CLARITY Act Finally Give Institutions the Green Light? appeared first on Cryptonews.

US Authorities Charge Trio Over $6.5M Crypto Wrench Attacks

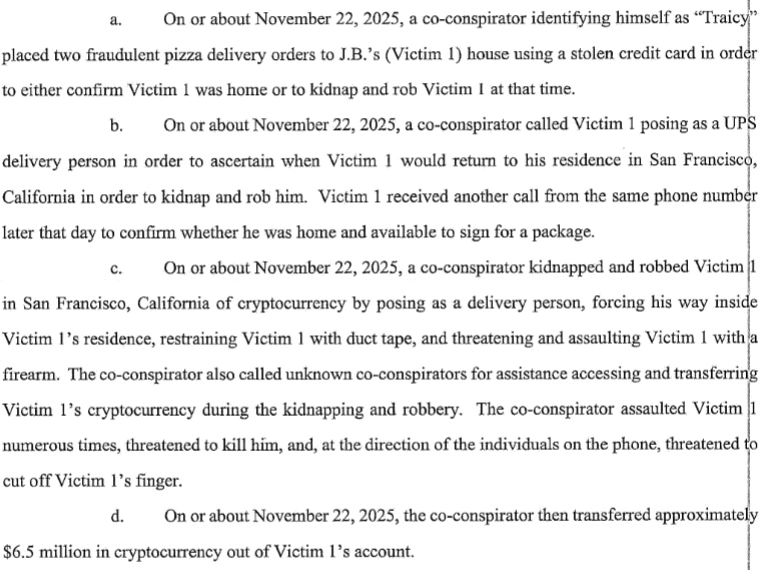

US authorities have unsealed an indictment against three men accused of stealing at least $6.5 million in a “violent robbery spree targeting cryptocurrency owners.”

The Justice Department said in a statement Monday that a federal grand jury indicted three men for allegedly planning to kidnap and rob four people around San Francisco and Los Angeles for their crypto.

The trio, Elijah Armstrong, Nino Chindavanh and Jayden Rucker, are alleged to have posed as delivery drivers to force their way into residences and use threats of violence to extract crypto seed phrases.

So-called wrench attacks, where crypto owners are subjected to physical threats, have increased globally since 2025. French authorities charged 88 people in April with committing attacks against local crypto owners.

US prosecutors claimed they identified at least four people the trio targeted from Nov. 22 until Dec. 31. One of the people was allegedly forced to transfer $6.5 million in crypto to a wallet controlled by the trio, according to an unsealed indictment filed in a San Francisco federal court.

One of the victims was allegedly forced to transfer $6.5 million in crypto to the attackers. Source: PACER

“These individuals, as alleged, terrorized their victims in the hopes of stealing vast sums of cryptocurrency,” Craig Missakian, the US Attorney for the Northern District of California, said in a statement Monday. “The scheme was not only sophisticated, it was brazen, violent, and dangerous.”

The three men were arrested in December last year and face charges of conspiracy to commit robbery, conspiracy to commit kidnapping, attempted robbery, and attempted kidnapping.

Armstrong and Rucker are scheduled to appear in court on Tuesday. Chindavanh is scheduled to appear in court on June 26.

Blockchain intelligence company TRM Labs reported in May last year that wrench attacks have been on the rise because of the ease with which bad actors can gather personal data online, the perceived pseudonymity of crypto transactions and the public visibility of wealth in the crypto sector.

Trump Heads to Beijing for High-Stakes Xi Summit: What It Means for Bitcoin

US President Donald Trump is set to meet his Chinese counterpart, Xi Jinping, in Beijing from May 13 to 15.

The visit, Trump’s first to China since 2017, will reportedly touch on AI, semiconductors, new trade and investment, and Middle East tensions. It also carries implications for Bitcoin (BTC) and digital asset markets.

The Crypto Angle

Trump imposed tariffs on Chinese imports in his first term and did the same when he came back to the Oval Office in 2025, creating pressure for Chinese mining equipment manufacturers such as Bitmain, Canaan, and MicroBT.

The trade tensions also led to a constant see-sawing in BTC’s price, with the flagship cryptocurrency reacting negatively to almost all the threats Trump made to China and several other countries.

With all eyes on the upcoming Trump-Xi summit, many in the crypto space are hoping it could lead to China softening its stance on BTC and digital assets in general. There are indeed crypto undertones to the meeting, with several of the 17 executives traveling with the US president having meaningful digital asset exposure.

For instance, the CEO of BlackRock, Larry Fink, manages the largest spot Bitcoin exchange-traded fund; meanwhile, Tesla, represented by Elon Musk, owns 11,509 BTC.

Visa’s Ryan McInerney and Mastercard’s Michael Miebach are both scaling stablecoin settlement infrastructure, while David Solomon, whose Goldman Sachs recently expanded its crypto trading operations, also made the cut. If the summit eases US-China financial flows, those institutions stand to benefit, and markets would likely price that in quickly.

However, according to a May 12 analysis from XWIN Japan, the hopes that the Chinese government may rethink its crypto policy are misguided, considering Chinese authorities recently reinforced restrictions on crypto-related activities, real-world asset tokenization, and yuan-linked stablecoins.

As such, direct expansion of mainland Chinese Bitcoin demand remains off the table for now.

How It Could Move Bitcoin Mining

Another area that could benefit from the meeting is the Bitcoin mining supply chain. Although North America dominates global hashrate growth, miners there are still supplied to a great extent by China.

Were the meeting to result in the easing of tensions, it could speed up mining investments and hashrate expansion, which could positively affect the price of BTC. On the other hand, a breakdown would possibly put more pressure on equipment costs and create supply delays for miners globally, hitting Bitcoin in ways that go beyond simple sentiment shifts.

At the time of writing, BTC was trading near $81,000, having gained less than 1% in the last seven days, per data from CoinGecko. However, the 30-day picture was much better, as the cryptocurrency was up around 13% in that period.

Meanwhile, the macro background heading into the summit is not clean, with oil prices going up by as much as 4% to $105.50 on Monday after US-Iran peace talks stalled. Higher oil feeds inflation expectations, which in turn reduce the probability of Federal Reserve rate cuts, tightening financial conditions for risk assets, including Bitcoin.

The post Trump Heads to Beijing for High-Stakes Xi Summit: What It Means for Bitcoin appeared first on CryptoPotato.

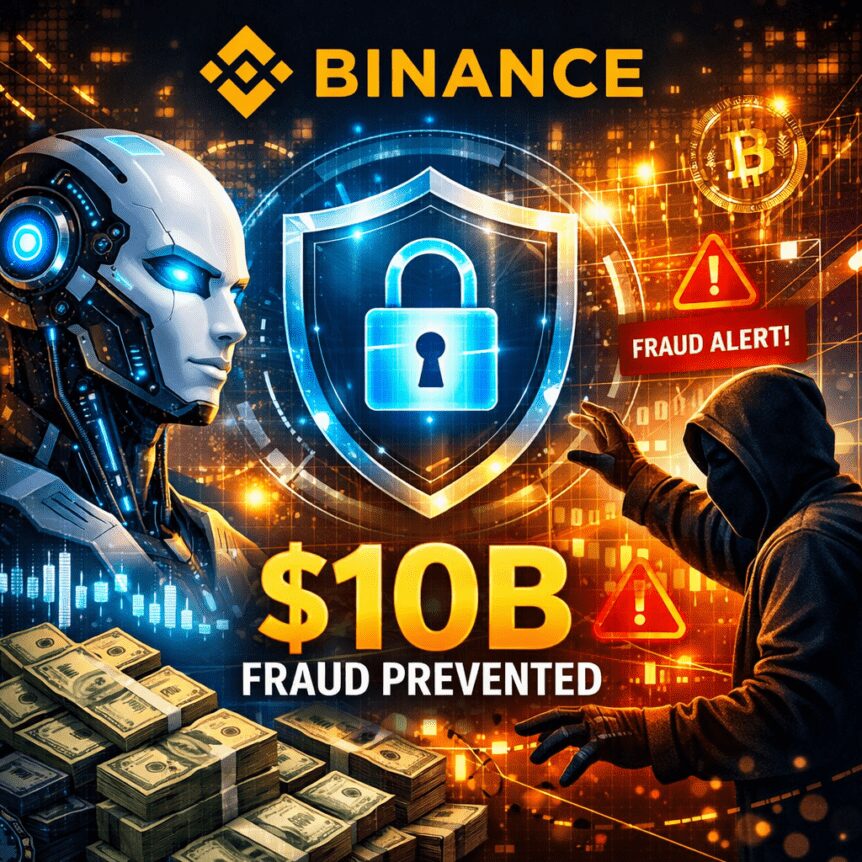

Binance says AI-driven security foiled $10B in fraud since 2025

Binance says its AI-powered security tools prevented more than $10 billion in user fund losses to scams and fraud between 2025 and March 2026, according to a security-focused blog post from the exchange. The company reported that over 5.4 million users were protected from fraud between Q1 2025 and Q1 2026 after deploying more than 24 AI-driven initiatives and more than 100 models.

Over the 15 months to March 2026, Binance says it blocked $10.53 billion of potential losses and blacklisted 36,000 malicious addresses as its AI-enabled security protocols ran in the background. In the most recent quarter, the exchange highlighted a surge in activity: it intercepted 22.9 million scam and phishing attempts in Q1 2026 alone, saving $1.98 billion for users. The numbers, described in Binance’s security blog, underscore the rapid expansion of automated defenses as scams grow more sophisticated.
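A quick calculation puts the latest quarter in context against the 15-month total. The inputs are Binance's reported figures; the per-attempt average is a rough ratio for scale only, since the blog post as described here does not say how the prevented losses were distributed across attempts.

```python
total_prevented = 10.53e9  # USD, 15 months to March 2026
q1_prevented = 1.98e9      # USD, Q1 2026
q1_attempts = 22.9e6       # scam and phishing attempts intercepted in Q1 2026

# Share of the 15-month total attributed to the latest quarter.
q1_share = q1_prevented / total_prevented

# Rough average of prevented loss per intercepted attempt (illustrative only).
avg_per_attempt = q1_prevented / q1_attempts

print(f"Q1 2026 share of total: {q1_share:.1%}")
print(f"Average per attempt:    ${avg_per_attempt:,.2f}")
```

For comparison, an even split of the total across five quarters would put each quarter at 20%.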

“AI-powered scams and exploits are accelerating,” Binance said. “The barrier to entry for scam perpetrators is falling fast, with AI accelerating the drop. What once required technical expertise can now be executed for next to nothing and at scale.” The warning reflects broader industry concerns that threat actors are leveraging artificial intelligence to enhance impersonation, social engineering and phishing campaigns. In the same vein, the FBI has noted elevated scam activity tied to crypto, citing substantial losses reported in the United States in recent periods.

Binance emphasizes that its AI tools are not just reactive, but embedded in real-time decisioning across its security stack. By integrating AI with its risk controls, the platform says it now intercepts attacks before user funds are moved and helps to differentiate legitimate activity from fraudulent patterns at scale. According to the blog, AI-driven decisioning now powers 57% of its fraud controls, contributing to a 60-70% reduction in card fraud rates relative to industry benchmarks. The company also pointed to ongoing work in identity verification to counter increasingly sophisticated deepfakes and synthetic identities.

Key takeaways

- Over 15 months ending in March 2026, Binance reports $10.53 billion in prevented losses and 36,000 malicious addresses blacklisted through AI-enhanced security measures, with more than 5.4 million users protected between Q1 2025 and Q1 2026.

- In Q1 2026 alone, the exchange intercepted 22.9 million scam and phishing attempts, saving $1.98 billion for users.

- AI-driven decisioning accounts for 57% of Binance’s fraud controls, with a claimed 60-70% reduction in card fraud rates versus industry benchmarks.

- The security stack employs computer vision to verify payment proofs, real-time language analysis to detect scam patterns, and enhanced identity verification to combat deepfakes and synthetic identities.

- The development comes amid a broader concern that AI-enabled scams are increasing in breadth and sophistication, reinforcing the need for robust defense mechanisms across exchanges and custodial platforms.

Inside Binance’s AI security toolkit

The security program described by Binance folds a wide range of AI capabilities into its existing risk controls. The company notes that computer vision components are used to detect fake payment proofs, while real-time language analysis monitors communications for suspicious patterns and indicators of fraud. On the identity side, the system is designed to counter deepfakes and synthetic identities that fraudsters may use to bypass traditional verification steps. Taken together, these tools are meant to reduce the likelihood that compromised or impersonated accounts can be exploited for exfiltration or manipulation of funds.

Binance also highlights a data-driven approach to governance and monitoring. By combining behavioral signals, transaction patterns and contextual data, the platform aims to flag unusual activity early and apply automated responses in real time. The emphasis on AI-driven decisioning—where a majority of fraud controls are now powered by AI—speaks to a broader shift in crypto security toward automated, scalable defense mechanisms that can adapt to evolving attack tactics.

Context, risk, and regulatory undertones

The concerns Binance raises about AI-enabled scams echo a broader industry risk landscape. Crypto-related fraud remains a persistent challenge for users and regulators alike, with authorities noting that attackers rely on social engineering, impersonation and increasingly convincing fake content. The FBI has flagged substantial losses linked to crypto scams, underscoring the importance of effective defense mechanisms as the technology that underpins these platforms scales in capability and reach. These dynamics place exchanges under pressure to demonstrate tangible security improvements while maintaining a user-friendly experience and transparent reporting.

From a market perspective, the deployment of AI in security can influence user confidence, willingness to engage with centralized exchanges, and the perceived risk profile of asset custodians. Investors and traders will be watching not only the absolute totals of funds saved or losses prevented but also how these tools perform across different jurisdictions, regulatory environments and product segments. The question going forward is how AI-driven security measures will balance proactive defense with user privacy, provide explainable risk signals, and scale with the growing volume and variety of crypto activity.

What to watch next

Readers should monitor whether Binance expands its AI security footprint beyond its current scope, including coverage of new product areas, regions, or asset classes. While the firm cites substantial quarter-to-quarter improvements, questions remain about the durability of these defenses as attackers adapt and as AI itself evolves. Expect more granular updates on the effectiveness of individual AI modules, potential cross-border enforcement considerations, and how other exchanges position their own AI-driven security programs in response to rising threats.

For a closer look at Binance’s security measures and the full set of claims, see the firm’s security blog post, which details the initiative and the metrics behind the 24 AI-driven initiatives and 100+ models. The post highlights the linkage between AI tools and real-time risk controls, emphasizing the intention to harden defenses as scammers grow more sophisticated.

Alongside these developments, observers will continue to weigh the role of AI in fraud prevention against the risk of over-reliance on automated systems, potential false positives, and the need for ongoing user education. The crypto ecosystem remains a dynamic arena where defensive innovation and adversarial ingenuity co-evolve, and Binance’s latest figures offer a data point in that ongoing confrontation.

As the sector digests these disclosures, the key takeaway for users and investors is clear: AI-assisted security is becoming mainstream in crypto, but it is not a panacea. Ongoing transparency about performance, edge cases, and the safeguards around automated decisioning will be essential to gauge whether these tools translate into reliably safer experiences for the average user. The next few quarters should reveal whether the early momentum translates into sustained reduction in fraud across the broader ecosystem.

Qualcomm (QCOM) Stock Soars to Record Peak Fueled by AI Data-Center Push

Key Takeaways

- Qualcomm shares jumped 8.4% Monday, closing at an unprecedented $237.53

- Year-to-date gains stand at 39%, with a remarkable 32% surge in May alone

- CEO Cristiano Amon announced a custom data-center chip scheduled for delivery to a major hyperscaler in Q4 2026

- Daiwa Securities elevated its rating from Neutral to Outperform, setting a $225 target

- The stock’s five-session winning streak represents its strongest performance since April 2019

Qualcomm shares finished Monday’s trading session at $237.53, marking an 8.4% daily increase and surpassing the company’s prior record close of $227.09 from June 2024. The chipmaker ranked among the S&P 500’s top performers for the day.

This surge extends an impressive momentum streak. The stock has posted gains for five consecutive trading days, accumulating a 41% increase during that period. Market data from Dow Jones indicates this represents QCOM’s strongest five-day performance since late April 2019.

The upward trajectory follows Qualcomm’s April 29 fiscal second-quarter earnings release, which exceeded Wall Street projections for both top-line revenue and bottom-line earnings. While the financial results were impressive, subsequent announcements sparked even greater investor enthusiasm.

During the analyst call, CEO Cristiano Amon revealed that preliminary deliveries of a custom data-center chip are planned for the December quarter, destined for a significant hyperscaler client. While the customer’s identity remains undisclosed, Amon characterized it as a “large hyperscaler” and suggested an ongoing, multi-generation partnership. Additional information is anticipated during Qualcomm’s June investor day.

This announcement fundamentally altered market perception. While investors traditionally categorized Qualcomm as a smartphone-focused semiconductor company, the data-center initiative demonstrates a strategic pivot — transforming into a player in AI infrastructure at an enterprise scale.

Analyst Community Takes Notice

In the wake of the earnings announcement and Amon’s strategic revelations, several analysts revised their positions. Daiwa Securities upgraded QCOM from Neutral to Outperform while establishing a $225 price objective. Both Tigress Financial and Benchmark similarly increased their price targets.

Despite the optimistic trading activity, the consensus rating among more than 40 analysts surveyed by FactSet remains at Hold, with an aggregate price target of $176.72. Current trading levels place QCOM approximately 34% above that consensus forecast.
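The premium over consensus follows directly from the two prices quoted above. This is a minimal check of that arithmetic; the variable names are illustrative.

```python
close_price = 237.53       # Monday's close, per the article
consensus_target = 176.72  # FactSet aggregate price target, per the article

# Fraction by which the close exceeds the consensus target.
premium = close_price / consensus_target - 1

print(f"Premium to consensus target: {premium:.1%}")
```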

Broader semiconductor sector strength contributed to Monday’s rally. Intel shares rose 3.6% following reports of a tentative agreement to produce chips for Apple products. The PHLX Semiconductor Index recently recorded its most substantial 25-day advance since the dot-com era in 2000.

Diversification Strategy Unfolds

Qualcomm has systematically expanded its revenue portfolio beyond traditional smartphone components, incorporating automotive applications, internet-of-things devices, and AI-powered solutions. The company’s fiscal Q2 performance illustrated this strategic diversification, with meaningful contributions across multiple market segments.

Industry analysts project global semiconductor revenue will exceed $1 trillion this year, primarily driven by AI infrastructure and data-center expansion. This industry-wide catalyst has elevated the entire sector, and Qualcomm appears strategically positioned to capture market share in data-center segments where it hasn’t historically maintained a presence.

QCOM has appreciated 36% since the beginning of January. The trailing twelve-month performance shows a 56% gain.

The company plans to elaborate on its data-center strategy during its upcoming investor day scheduled for June.

Pi Network’s PI Token Falls Out of Top 50 Alts, Bitcoin (BTC) Stopped at $82K: Market Watch

Bitcoin initiated another breakout attempt in the past 12 hours or so, only to be rejected once again at $82,000 and driven south by more than a grand.

Most larger-cap alts have remained relatively flat on a daily scale, aside from ETH, which is under $2,300 once again. XRP and BNB keep fighting for the fourth spot in terms of market cap.

BTC Stopped at $82K

The previous business week saw a notable price surge from the largest cryptocurrency to a three-month peak of almost $83,000. Thus, the asset had added $8,000 from the previous Wednesday, and it seemed primed for a correction as many analysts warned about the rally’s structure.

BTC indeed slipped to $79,000 by Friday before the bulls stepped up and didn’t allow another breakdown. Instead, bitcoin started to recover some ground and quickly returned to over $80,000 during the weekend.

More volatility ensued on Monday morning when BTC first dipped to $80,250, before it shot up to $82,500, but it was rejected and dropped by two grand immediately when US President Trump deemed Iran’s latest peace proposal “totally unacceptable.”

It bounced again yesterday, but this time, its attempt was halted at $82,000. The subsequent rejection brought it south to a familiar territory of under $81,000, where it currently sits. Its market cap has held steady at $1.620 trillion on CoinGecko, while its dominance over the alts is up to 58.3%.

BUILDon Enters Top 100, PI Exits Top 50

Today’s top performer among the 100 largest alts is BUILDon (B). The token is up a mind-blowing 44% to $0.63, making it the 93rd-largest cryptocurrency by market cap. In contrast, PI’s continuous declines have pushed the asset out of the top 50 alts by the same metric, after a 6% weekly decline.

CRO, STABLE, TON, and CC follow suit in terms of daily gains, while JUP, VVV, and PUMP have declined the most.

Ethereum has slipped by 2% daily and now trades well below $2,300. XRP, BNB, SOL, and DOGE have posted minor gains, while HYPE, ZEC, and LINK are down by over 1%.

The total crypto market cap has remained at the same level as yesterday, at around $2.8 trillion on CG.

The post Pi Network’s PI Token Falls Out of Top 50 Alts, Bitcoin (BTC) Stopped at $82K: Market Watch appeared first on CryptoPotato.

Crypto World

Cathie Wood’s Ark Invest chases Circle (CRCL) stock as it hits a two-month high

Ark Invest bought $5.5 million worth of shares in Circle Internet (CRCL) on Monday as the stablecoin developer’s stock pumped following its first-quarter earnings report.

The St. Petersburg, Florida-based investment manager added 41,904 shares across three of its exchange-traded funds (ETFs): Innovation (ARKK), Next Generation Internet (ARKW) and Blockchain and Fintech Innovation (ARKF).

CRCL shares rose 16% to $131.76, the highest closing price since March 18, after the company posted estimate-beating earnings per share (EPS) of 21 cents.

Circle, whose USDC is the second-largest stablecoin, also revealed a $222 million raise for its Arc blockchain in a presale of the ARC token.

The purchase is Ark’s first of Circle stock since March 24, when it bought $16.3 million worth as the shares slumped 20%. It last sold CRCL on April 17, dumping $1.2 million worth on a day the stock closed at around $106.

The Cathie Wood-led company frequently buys into weakness in equities to capture greater value and rebalance the weighting of its ETFs. It is less common to see sizeable purchases that coincide with large share-price gains.

Crypto World

Galaxy Digital to manage Sharplink’s new $125 million onchain yield play

Galaxy Digital (GLXY) and Sharplink (SBET) are teaming up to put part of the latter’s staked ETH treasury into decentralized finance (DeFi) strategies.

The Galaxy Sharplink Onchain Yield Fund would receive $100 million from Sharplink’s staked ETH treasury and $25 million from Galaxy, the companies said.

Galaxy is set to manage the investment, which is expected to commence in the coming weeks under a non-binding memorandum of understanding.

The strategy will see capital deployed across DeFi liquidity protocols and other onchain yield strategies. The structure is designed to keep Sharplink’s core ETH exposure intact while adding an active yield strategy to its balance sheet.

Sharplink holds 872,984 ETH, according to separate first-quarter results. The company has generated 18,800 ETH in staking rewards since launching its ether treasury strategy in June 2025, the firm said.

The allocation is small relative to Sharplink’s ETH stack but large enough to mark a shift in the treasury model. At recent prices, $100 million equals roughly 43,000 ETH.
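The conversion behind that estimate can be sketched in a few lines. The ETH price used here is an assumption for illustration only (the article says just "recent prices"); the dollar allocation and treasury size are the figures reported above.

```python
def usd_to_eth(usd_amount: float, eth_price_usd: float) -> float:
    """Convert a dollar amount into ETH at a given ETH/USD price."""
    if eth_price_usd <= 0:
        raise ValueError("ETH price must be positive")
    return usd_amount / eth_price_usd


ALLOCATION_USD = 100_000_000   # Sharplink's contribution, as reported
TREASURY_ETH = 872_984         # Sharplink's reported ETH holdings
ASSUMED_ETH_PRICE = 2_300.0    # assumption: illustrative price, not from the article

allocation_eth = usd_to_eth(ALLOCATION_USD, ASSUMED_ETH_PRICE)
treasury_share = allocation_eth / TREASURY_ETH

print(f"Allocation: about {allocation_eth:,.0f} ETH")
print(f"Share of treasury: about {treasury_share:.1%}")
```

At the assumed price, the allocation works out to roughly one-twentieth of the treasury, which is consistent with the description of it as small relative to the overall ETH stack.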

Crypto World

Will Bitcoin price drop below $80K as Coinbase premium stays negative?

Bitcoin price slipped back toward the $81,000 region on Monday as weakening U.S. institutional demand and renewed geopolitical uncertainty triggered another wave of profit-taking across the crypto market.

Summary

- Bitcoin price fell back toward the $81,000 region after another rejection near the $82,000 resistance zone amid rising geopolitical uncertainty.

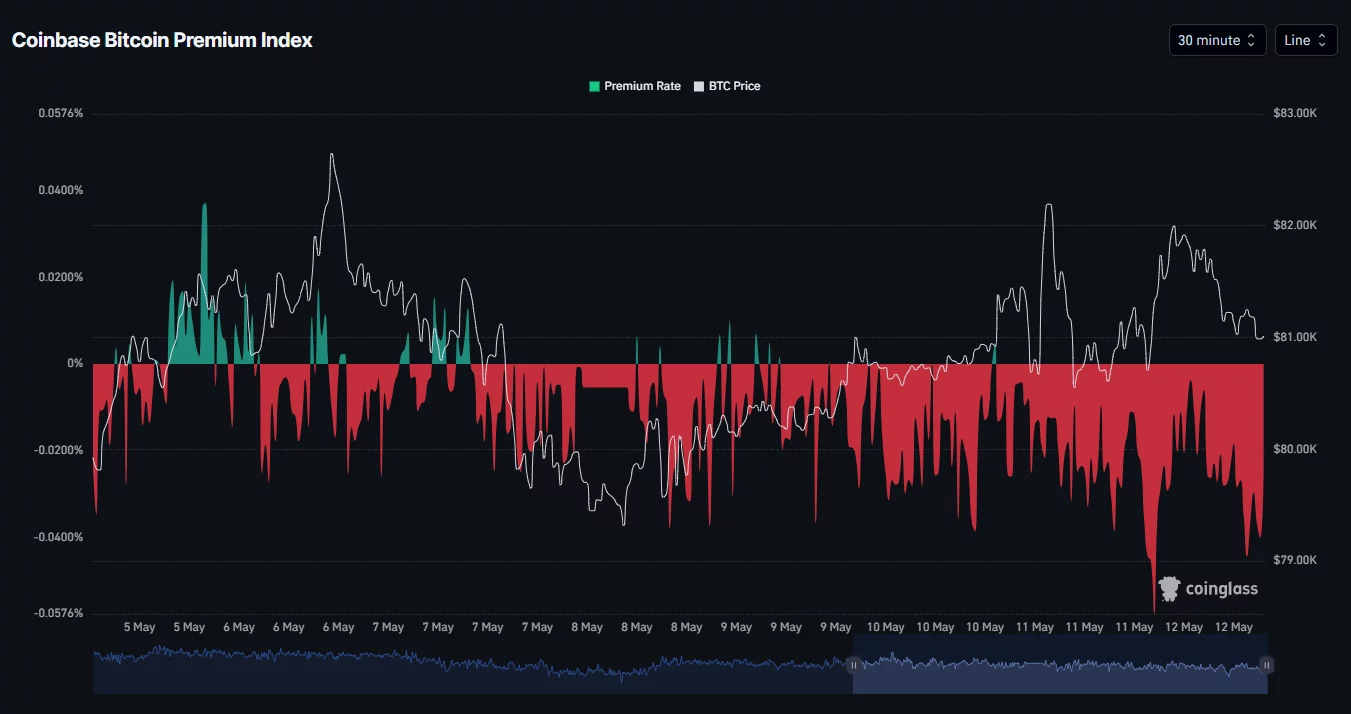

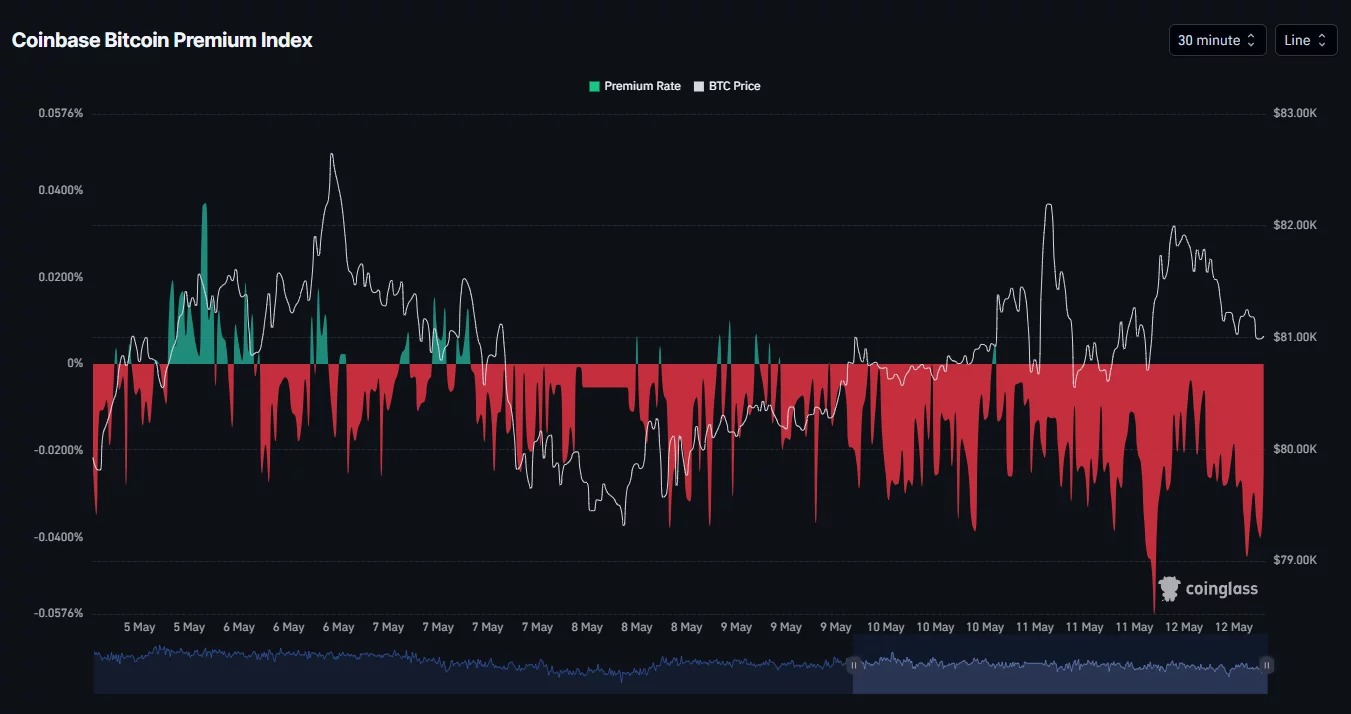

- The Coinbase Bitcoin Premium Index remained negative, signaling weaker buying demand from U.S. institutional investors in recent sessions.

- Technical indicators showed BTC still trading inside an ascending channel, though weakening MACD momentum raised risks of a retest below the $80,000 support level.

According to data from crypto.news, Bitcoin (BTC) traded around $80,900 at press time on May 12 after briefly falling toward an intraday low near $80,700. The pullback followed another failed breakout attempt above the key $82,000 resistance zone, which sellers continued to defend aggressively.

One of the biggest bearish signals came from the Coinbase Bitcoin Premium Index, which remained deeply negative throughout the past several trading sessions. The metric measures the price difference between Bitcoin on Coinbase and offshore exchanges such as Binance and is commonly used as a proxy for U.S. institutional demand.
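As a rough illustration of how such a premium can be computed, the sketch below expresses the gap as a percentage of the offshore price. This is one common formulation rather than necessarily the exact method used by the index provider, and the two prices are hypothetical values chosen for the example.

```python
def premium_pct(coinbase_price: float, offshore_price: float) -> float:
    """Percentage gap between the Coinbase price and an offshore exchange price.

    A negative value means BTC is cheaper on Coinbase than offshore.
    """
    if offshore_price <= 0:
        raise ValueError("offshore price must be positive")
    return (coinbase_price - offshore_price) / offshore_price * 100.0


# Hypothetical quotes, for illustration only.
coinbase_btc = 80_860.0
binance_btc = 80_900.0

gap = premium_pct(coinbase_btc, binance_btc)
print(f"Premium: {gap:.3f}%")
```

A reading below zero, as in this example, corresponds to the negative premium described above.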

The persistent negative reading suggests that buying activity from U.S.-based investors has weakened considerably, even as Bitcoin attempted to stabilize above major support levels.

Market sentiment also deteriorated after reports indicated that U.S. President Donald Trump rejected a recent peace proposal from Iran, calling the offer “totally unacceptable.” The development renewed concerns that tensions between the two nations could escalate further, pushing investors away from risk assets like cryptocurrencies.

The geopolitical uncertainty triggered another rise in risk-off sentiment across global markets while oil prices remained volatile following the latest developments surrounding the conflict.

At the same time, traders continued locking in profits after Bitcoin’s recent rally toward the $82,700 region. Some analysts have also started warning that the latest rebound could resemble a dead cat bounce following Bitcoin’s strong recovery from February lows.

Institutional flows have additionally shown signs of cooling in recent sessions. Spot Bitcoin ETFs reportedly recorded roughly $350 million in outflows during a recent 48-hour period, weakening short-term bullish momentum as markets repositioned around the weekly CME gap.

Bitcoin price analysis

On the daily chart, Bitcoin price continues trading inside a broader ascending parallel channel structure that has remained intact since late March. The bellwether recently touched the upper boundary of the channel before facing rejection near the $82,000 resistance region.

The chart also shows BTC struggling to sustain momentum above the key 0.786 Fibonacci retracement level near $80,000, a zone that continues acting as an important psychological battleground between bulls and bears.

Despite the recent pullback, Bitcoin remains above the Supertrend support level near $75,600, indicating that the broader bullish trend structure has not yet fully broken down.

Meanwhile, the MACD remains in positive territory, although the histogram has started flattening considerably over recent sessions, suggesting bullish momentum may be weakening as buyers lose short-term control.
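For readers unfamiliar with the indicator, here is a minimal sketch of how a MACD line, signal line, and histogram are typically derived from closing prices. It assumes the conventional 12/26/9 periods and seeds each exponential moving average with the first value, a simplification that charting platforms may handle differently. The price series is synthetic, not actual BTC data.

```python
def ema(values: list[float], span: int) -> list[float]:
    """Exponential moving average, seeded with the first value (a simplification)."""
    if not values:
        return []
    alpha = 2.0 / (span + 1)
    out = [values[0]]
    for v in values[1:]:
        out.append(alpha * v + (1 - alpha) * out[-1])
    return out


def macd(closes: list[float], fast: int = 12, slow: int = 26, signal: int = 9):
    """Return (macd_line, signal_line, histogram) for a series of closes."""
    fast_ema = ema(closes, fast)
    slow_ema = ema(closes, slow)
    macd_line = [f - s for f, s in zip(fast_ema, slow_ema)]
    signal_line = ema(macd_line, signal)
    histogram = [m - s for m, s in zip(macd_line, signal_line)]
    return macd_line, signal_line, histogram


# Synthetic, steadily rising closes (not real market data).
closes = [100.0 + i for i in range(60)]
macd_line, signal_line, histogram = macd(closes)
print(f"MACD: {macd_line[-1]:.3f}, histogram: {histogram[-1]:.3f}")
```

A positive MACD line with a histogram shrinking toward zero is the pattern the paragraph above describes: the trend is still up, but the gap between the MACD and its signal line is narrowing.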

If selling pressure intensifies further, Bitcoin could retest the lower boundary of the ascending channel near the $80,000 psychological support zone. A decisive breakdown below that level could expose BTC to deeper downside toward the $76,000–$77,000 region.

On the upside, bulls would need to reclaim the $82,000 resistance area to restore bullish momentum and potentially reopen the path toward the next major resistance near $84,000.

Disclosure: This article does not represent investment advice. The content and materials featured on this page are for educational purposes only.
