Tech

‘A Rigged and Dangerous Product’: The Wildest Week for Prediction Markets Yet

Kalshi CEO Tarek Mansour posted a video on Wednesday of six men decked out in business casual doing push-ups on the sidewalk. “This is how Kalshi Q1 board meeting ended,” he wrote on X. The board members are laughing and smiling in the video after their impromptu cardio session, and the mood is jubilant. The next day, it became clear that the team had ample reason to celebrate: Kalshi had just raised $1 billion at a $22 billion valuation, making the company worth on paper roughly double what it was only a few months ago.

The funding round represented a bright spot during one of the most turbulent weeks for the prediction market industry yet. In just the past five days, Nevada temporarily banned Kalshi by issuing a temporary restraining order and Arizona filed criminal charges accusing it of running an illegal gambling business; an Israeli reporter said that he received an avalanche of threats from Polymarket traders furious about how a story he wrote impacted their wagers; Polymarket scored a major deal with Major League Baseball, further entrenching itself in the world of professional sports; and US Senators introduced legislation to ban specific types of markets offered by the industry, including any involving “government actions, terrorism, war, assassination, and events where an individual knows or controls the outcome.” It is the latest in a series of bills intended to place guardrails around the prediction industry.

Senator Chris Murphy, a cosponsor of the bill and one of the industry’s most outspoken critics, said in an interview with WIRED that prediction markets are “a rigged and dangerous product,” and represent “a brand-new source of mind-bending corruption.”

“Kalshi already bans insider trading and markets directly tied to death and war,” says Kalshi spokesperson Elisabeth Diana. “As a US-based exchange, we support regulators and policymakers from both sides of the aisle in their efforts to keep these markets safe and responsible in America.” Polymarket did not return requests for comment.

Existing law gives the Commodity Futures Trading Commission, the agency that oversees prediction markets, the authority to ban offerings related to assassination, war, terrorism, and other subjects deemed contrary to the public interest. Some prediction markets already stay away from these categories. But not all of their users understand where exactly the lines are drawn, which created a messy situation when some assumed that a market on the fate of Iran’s supreme leader would result in a payout if he “left office” by getting killed.

Meanwhile, Polymarket, which largely operates outside of the United States, offers plenty of war markets—but legislation is unlikely to impact these offerings. The platform is currently offering a market on whether Israeli Prime Minister Benjamin Netanyahu will be “out” by certain dates; someone recently wagered $177,000 that he would be out by March 31. Polymarket would likely resolve the market to “yes” and allow its bettors to profit if Netanyahu dies, just as it did when Khamenei was killed.

One reason Senator Murphy is so passionate about prediction markets is that he sees them as vectors for insider trading. The Israeli government, for example, has charged two of its citizens with leaking classified information by placing Polymarket bets tied to the war in Iran. The Connecticut lawmaker suspects that other trades related to the conflict may have been carried out by members of Trump’s inner circle who have advance knowledge of military operations. “It’s bone chilling to think that there are staffers inside the situation room that are pushing the United States into war, not because it’s good for our security, but because they’re going to make $100,000 off it,” he says.

Tech

The World Is Yours | Techdirt

from the they-can’t-kill-us-all dept

Forgive me for this digression. I know it’s usually left to Mike Masnick to lift us up from our collective doldrums when things seem even more hopeless than they did last year. His New Year’s posts are never wrong. There are always silver linings, even if the filigree is more difficult to detect with each passing year.

This isn’t about Mike or silver linings or the as-yet-unfulfilled promise of the New Year. This is a post written by a die-hard defeatist and cynic who generally views each passing moment with increasing levels of defeatism.

But I’m wrong. Mike is actually right, even if my spirits often pretend they’re anchored to the ground like so many pre-oh-the-humanity German-built dirigibles.

I will tell you why I’m wrong. And it’s embarrassing. I have plenty to say about lots of stuff but I rarely convert my words into action. Recently, however, I did. And it has made all the difference.

At the request of my oldest kid, we attended the recent “No Kings” rally in Sioux Falls. I was clad in my finest Da Share Zone anti-ICE gear:

He was wearing my protest alternate, a Black Sabbath-inspired bit of rhetoric sure to piss off white Christian nationalists:

Suitably suited, we headed to the protest with a friend of mine and his wife.

Long story short, it was life-affirming. It was exactly what anyone who feels they are losing hope needs. I feel I’m pretty good with word stuff, but I think Will Bunch absolutely nailed it in his post-No Kings column for the Philadelphia Inquirer. Quoting Marlon Brando’s mantra in The Wild One (“What are you rebelling against? Whaddya got?”), Bunch moves on to quote real people engaged in protests against something both nebulous and evil… and finding solace in being around people just like them.

“You feel less isolated when you see everybody here, and then they feel less isolated,” Nancy Harris, a 62-year-old retired mental-health crisis counselor from Prospect Park, told me over the steady car honks from supportive motorists. “And I think it just motivates people in general…just putting good vibes out into the universe.”

There’s more. Here’s a 75-year-old protester who not only knows what’s at stake, but knows why you should never give up:

“I’ve been going up against the establishment my whole life,” said [John] Coia, speaking for a generation that grew up exercising its all-American right of free speech and, now in old age, is determined to keep using it while they still can. I asked him what was the last straw with Trump that convinced him to join “No Kings.”

“There is no last straw,” he said over the car honks. “It just keeps going. There’s a new straw every day.”

Both of these things can be true.

You can find hope in being with people who share your beliefs. You can also feel the fight is never-ending because the current administration just won’t stop being abjectly evil.

But the first thing is what matters: the government may never stop being evil, no matter who’s currently sitting behind the Resolute Desk. And people who want the government to serve the people and be less evil will always exist. The ebb and flow of these constants may shift the prevailing narrative, but it can’t undermine the actual truth — something Mike highlighted in a recent post about the horrors perpetrated by the administration in Minneapolis, Minnesota.

Here’s the quote from the Atlantic’s Adam Serwer that Mike highlighted in a long, must-read post that pointed out everything that’s right about America, even when everything seems to be going wrong:

The secret fear of the morally depraved is that virtue is actually common, and that they’re the ones who are alone.

This is where we come together. Until recently, I believed that “coming together” was just a meeting of the minds. But that’s just preaching to the converted, which doesn’t really do much, even if my “converted” are objectively better people than the MAGA “converted.”

What really matters is that people are resisting in increasingly large numbers. We often consider the word “community” to be a cliche because that’s how the government uses it (for example, “Intelligence Community”). We view it with the same (healthy!) suspicion as we would statements delivered by company officials claiming they treat employees like “family.”

It never means anything until you’ve actually experienced (firsthand) a good one. “Family” isn’t a compliment if yours sucks. The same can be said for any “community.”

Unlike families, you can choose your community. You don’t have to align yourselves with empty mouths spewing even emptier platitudes. You just need to go out and see for yourself. Sure, I’m my own anecdata in this post. But trust me, if things feel hopeless, all you really need is the company of people who do this day in and day out, despite the deck being stacked against them.

I’m sure many (if not nearly all) of you have already had this experience. My greatest regret is that I put it off for so long. No one who truly believes in the cause will care one way or another about your day-to-day devotion. They’ll welcome you and stand beside you. Participation can be its own reward. And you’ll leave feeling more inspired to be the change we need in this world.

I just wish I had done this sooner. The world is ours. Let’s go take it.

Filed Under: corruption, evil, no kings, trump administration

Tech

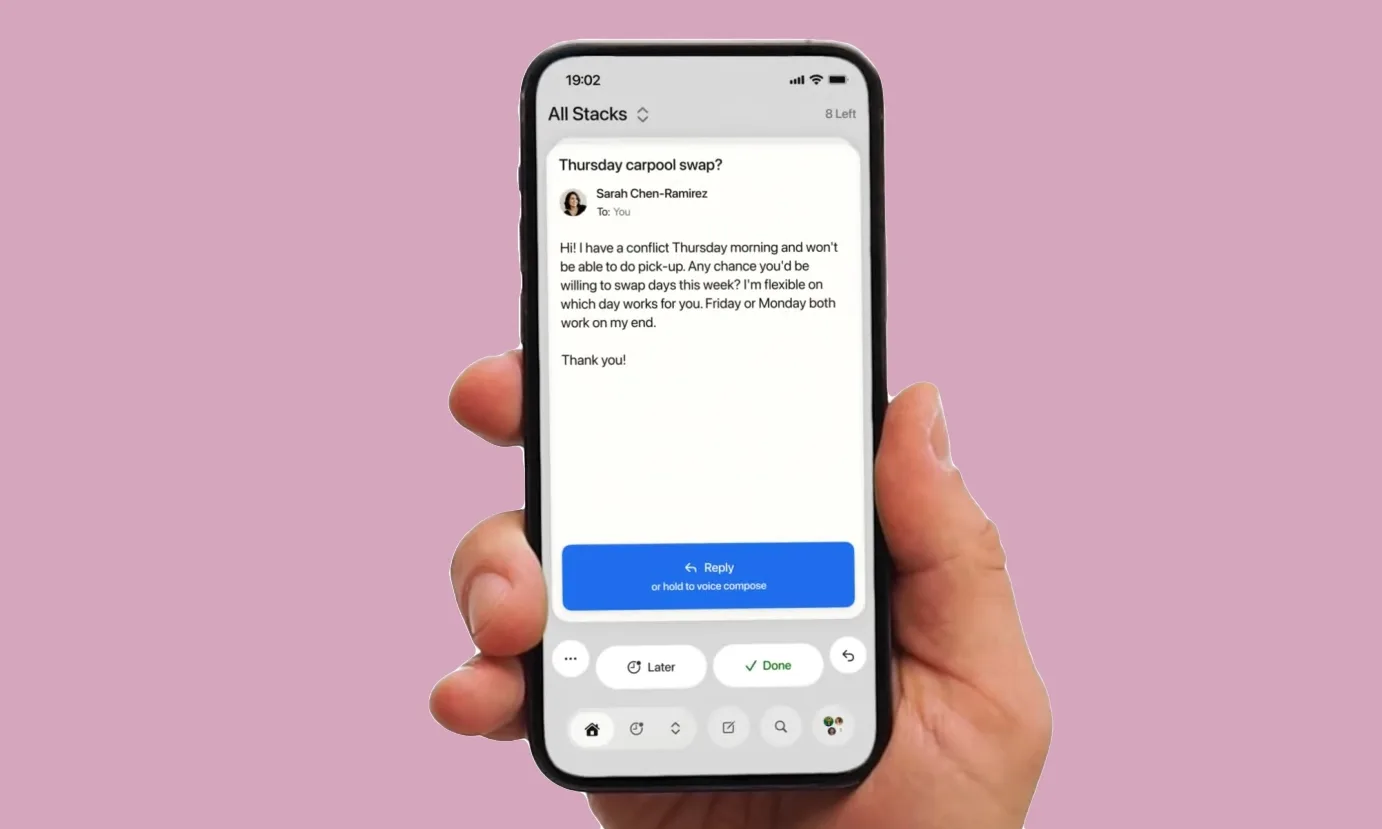

Hate boring email apps? Avec turns your inbox into a swipe-happy mess fixer

Email apps have spent years trying to make inbox management feel faster, smarter, and less soul-crushing. But Avec seems to have looked at all that and decided the real answer was right in front of us. Just make the emails behave like a dating app.

Avec is a new mobile email app that presents your inbox to you as a stack of swipeable cards instead of the usual list view. While it may sound like a big change, the idea is pretty simple in terms of functionality. Swipe left to deal with an email later, swipe right to mark it done or archive it, and swipe down to throw less important messages into an “unimportant” pile that the app can later group together for easier cleanup.

Why this is such an interesting solution

The card-based interface is the headline here, and yes, the Tinder comparison is doing a lot of the marketing work. But Avec is aiming to reduce inbox fatigue on mobile phones, where email usually feels cramped, tedious, and too easy to ignore. The app includes a regular list-based inbox for people who aren’t ready to fully make the jump to a swipe-card style.

But this design alone could’ve made Avec into just another quirky email client. Instead, it is also leaning into voice inputs and AI.

How does AI play into this?

Avec lets users hold a button to dictate an email reply by voice. And once you stop speaking, the app turns that recording into a draft that you can review, edit, and send. This, according to founder Jonathan Unikowski, gives Avec an edge over separate keyboard-based dictation tools because the app can see the full email context, understand names, and better match tone and writing styles.

But there’s a catch.

For now, Avec is only available in the US and is exclusive to the iPhone, though it is free for Gmail users. Outlook support is reportedly in the works, and the company says paid tiers will come later. However, what those paid tiers will offer has yet to be finalized.

Tech

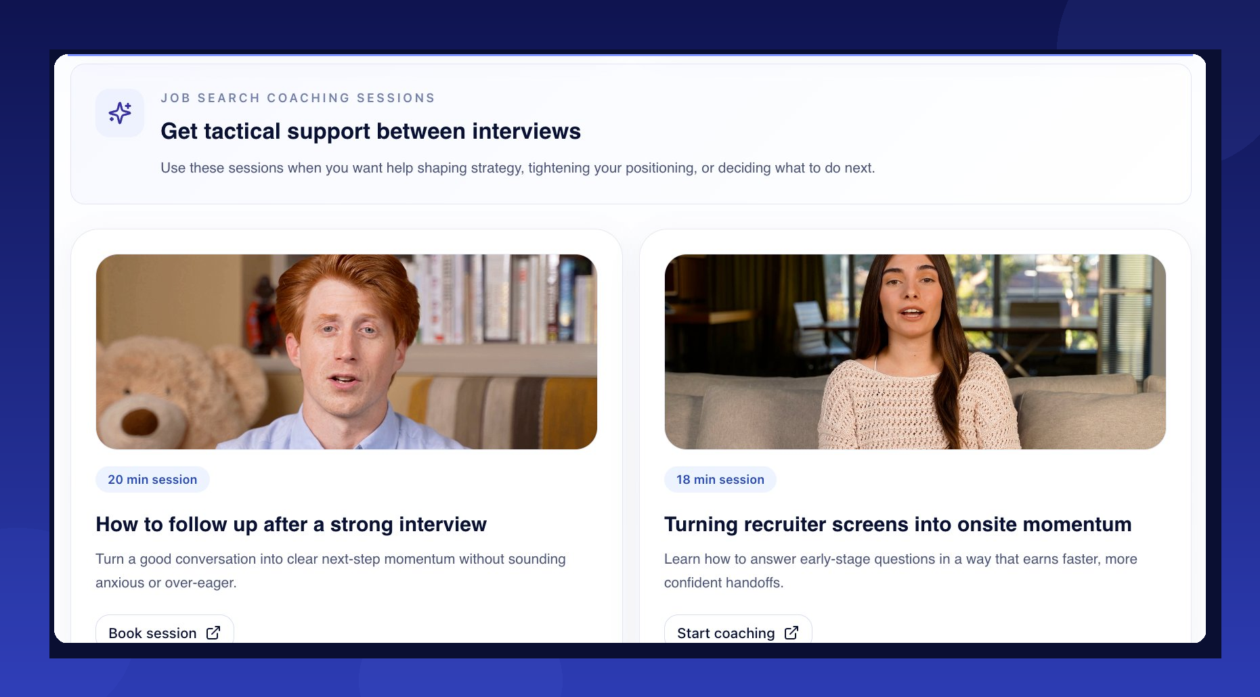

Humanly raises $25M to put AI to work for job seekers, not just the companies hiring them

The market for recruiting software — tools that help companies find and screen candidates — is worth $14 billion. The market for actually placing people in jobs is worth $500 billion.

Humanly just raised $25 million to chase the bigger number.

The Bellevue, Wash.-based company, which makes AI-powered interviewing tools for employers, is using its new Series B funding to reposition itself as what CEO Prem Kumar calls a “service-as-a-software” company — one that doesn’t just give recruiters tools to find candidates, but delivers pre-vetted, ready-to-hire job seekers on demand. It’s less recruiting software, more staffing agency replacement.

The round included participation from SEEK Investments, Drive Capital, Zeal Capital Partners, Converge and others. Humanly has raised $52 million to date.

“I wouldn’t call it pivoting, but we’re reinventing ourselves,” Kumar said. “Instead of a tool to go out and find profiles of job seekers, we’re just giving you the candidate themselves.”

The goal is to build a continuously refreshed database of pre-interviewed job seekers, essentially like LinkedIn’s profile network, but everyone in it has already been vetted and is ready to place.

Founded in 2018, Humanly uses automation software to help companies screen job candidates, schedule interviews, automate initial communication, run reference checks, and more. It targets customers with high volume hiring needs.

Humanly is also launching a job seeker-facing product, offering AI-powered coaching on interview preparation, resume writing, and salary negotiation — giving the company a direct relationship with candidates rather than relying solely on employer clients to funnel people into its system.

The timing may be working in Humanly’s favor. A difficult job market means more applicants chasing fewer openings, which Kumar says only amplifies the problem his company is trying to solve. AI-powered application tools have made it easier than ever for candidates to blast out applications en masse, turning virtually every open role into a high-volume hiring event. That puts more pressure on employers to filter smarter — and faster.

To build out its candidate database at scale, Humanly is striking partnerships that give it access to job marketplaces where job seekers go to find work and access training. Humanly is currently conducting around 9,000 interviews per day and could gain access to an estimated 20 million job seekers over the next 12 months.

Humanly is also working with Microsoft on its neurodiversity hiring program, using AI to help neurodiverse candidates practice for technical and behavioral interviews in a structured, low-pressure environment. The partnership addresses a gap Kumar says traditional hiring processes often miss — that interview performance frequently measures communication under pressure rather than actual capability.

Through the program, candidates use Humanly’s AI avatar coach to practice explaining their thinking, walking through trade-offs, and building confidence before facing a real interviewer. Kumar has ADHD and his son was recently diagnosed, and he said the tool may also help reduce a subtler problem.

“We have a lot of data around some of the bias in human interviews,” Kumar said. “We feel an AI interviewer, interviewing someone neurodiverse, might bias against them less than humans in some cases.”

Humanly, which is No. 152 on the GeekWire 200 ranked index of the Pacific Northwest’s top startups, counts Microsoft, Domino’s, Massage Envy, Worldwide Flight Services, and MGM Resorts and Casinos among its more than 120 customers. Kumar said the company’s revenue has grown 3.9 times over the past seven months and the startup now employs about 50 people.

Overall, Kumar sees Humanly’s shift as bigger than just its own reinvention — it’s a fundamental change in what enterprise software can now deliver.

“You no longer need to hire a big team to run a bunch of tools to get the outcome,” he said. “The tools can begin to do that themselves.”

Tech

Particles Seen Emerging From Empty Space For First Time

Longtime Slashdot reader fahrbot-bot shares a report from NewScientist: According to quantum chromodynamics (QCD) — widely considered to be our best theory for describing the strong force, which binds quarks inside protons and neutrons — even a perfect vacuum isn’t truly empty. Instead, it is filled with short-lived disturbances in the underlying energy of space that flicker in and out of existence, known as virtual particles. Among them are quark-antiquark pairs. Under normal conditions, these fleeting pairs vanish almost as soon as they appear. But if enough energy is injected into a vacuum, QCD predicts they can be promoted into real, detectable particles with measurable mass. Now, the STAR collaboration — an international team of physicists working at the Relativistic Heavy Ion Collider in Brookhaven National Laboratory in New York state — has observed this process for the first time.

The team smashed together high-energy protons in a vacuum, producing a spray of particles. Some of these particles should be quark-antiquark pairs pulled directly from the vacuum itself, but quarks can never exist alone and immediately combine into composite particles. Quarks and antiquarks are born with their spins correlated — a shared quantum alignment inherited from the vacuum. The researchers found that this link persists even after the quarks and antiquarks become part of larger particles called hyperons, which decay in less than a tenth of a billionth of a second. Spotting these spin-aligned hyperons in the aftermath of the proton collisions allowed the researchers to confirm that the quarks within them came from the vacuum. The findings have been published in the journal Nature.

Tech

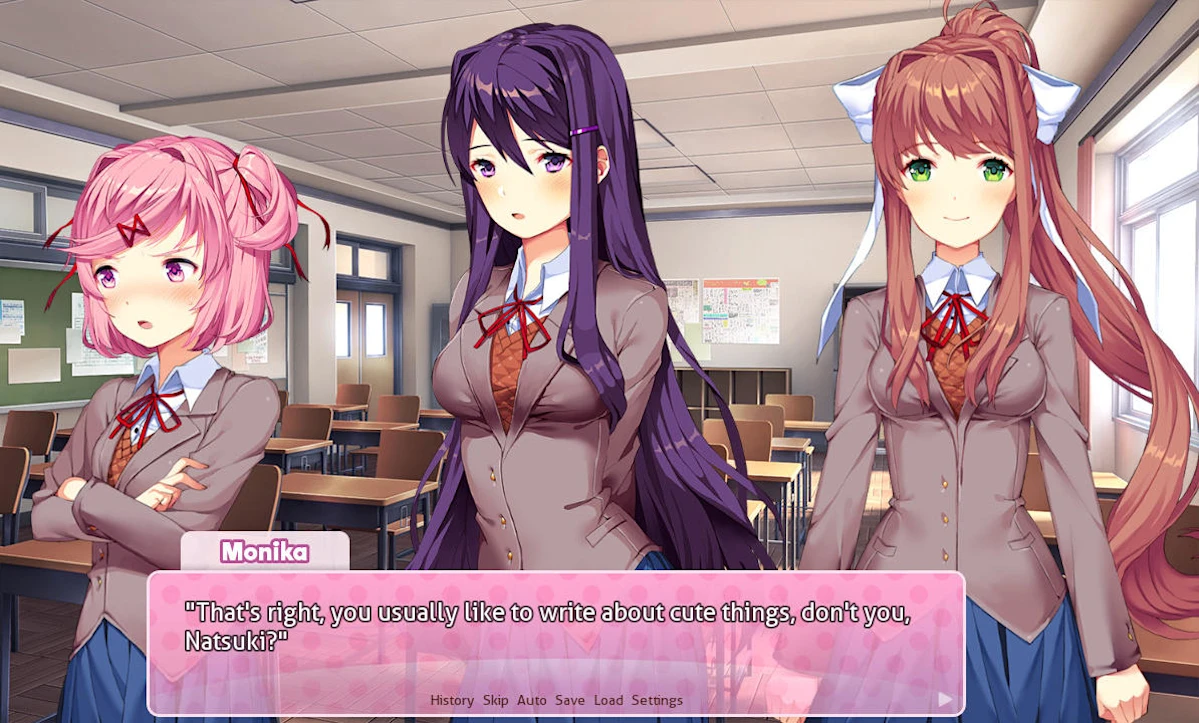

Google removes Doki Doki Literature Club! from the Play Store

Google has removed popular psychological horror game Doki Doki Literature Club! from the Play Store. According to Dan Salvato, who led its development team, and publisher Serenity Forge, Google told them the visual novel was removed because it violated its Terms of Service in its depiction of sensitive themes. The game is “widely celebrated for portraying mental health in a way that meaningfully connects deeply with players around the world,” they said in their announcement. Its free version, which came out first, has been downloaded at least 30 million times, while the paid “Plus” version has had at least one million downloads. The visual novel has repeatedly made Engadget’s lists of favorite games over the years.

Doki Doki Literature Club! has the drawing style and the makings of a typical dating sim, but players find themselves confronted with serious themes, including depression and suicide, soon after starting. Its Play listing was appropriately marked as “Mature 17+,” which means that children won’t be able to download it if their devices have parental controls. In addition, the developers clearly communicate that the game tackles serious issues. “This game is not suitable for children or those who are easily disturbed” is the first line of the game. “In-game content warnings for such material can be enabled in the Settings menu at any time,” it also warns players. In settings, there’s a link to a page that lists content warnings that apply to the visual novel.

We’ve asked Google for a statement on why the game was removed, and we’ll update this post when we hear back. Salvato and Serenity Forge said they’re doing everything they can to “find a path forward for getting DDLC reinstated on the Google Play Store.” They’re also looking at other methods of distribution for Android devices. At the moment, the game’s Play listing shows that it’s still not available, but it’s still out on Steam, PlayStation, Switch eShop and iOS.

Tech

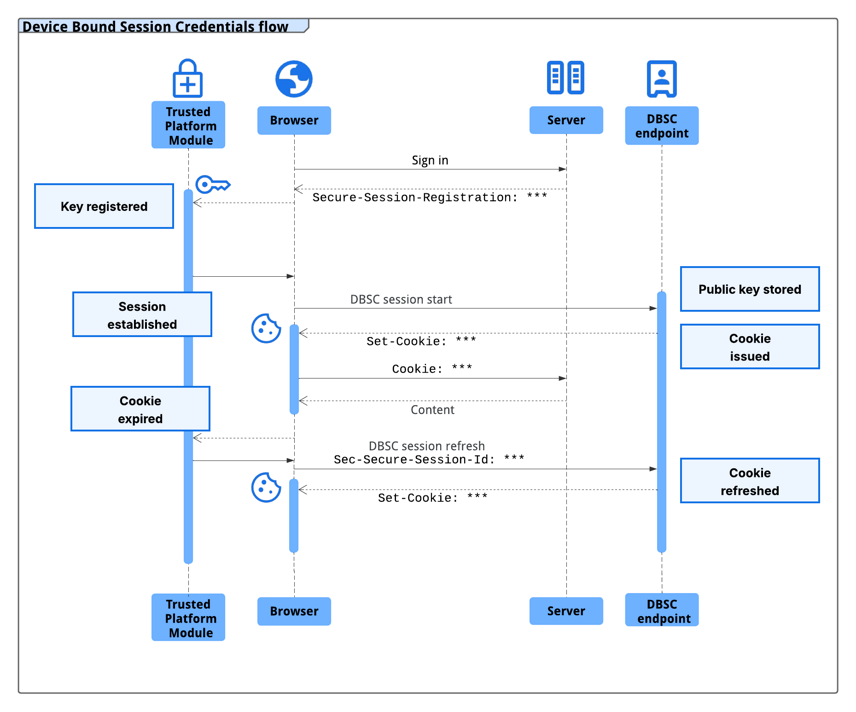

Google Chrome adds infostealer protection against session cookie theft

Google has rolled out Device Bound Session Credentials (DBSC) protection in Chrome 146 for Windows, designed to block info-stealing malware from harvesting session cookies.

macOS users will benefit from this security feature in a future Chrome release that has yet to be announced.

The new protection was announced in 2024, and it works by cryptographically linking a user’s session to their specific hardware, such as a computer’s security chip – the Trusted Platform Module (TPM) on Windows and the Secure Enclave on macOS.

Since the unique public/private keys for encrypting and decrypting sensitive data are generated by the security chip, they cannot be exported from the machine.

This prevents an attacker from using stolen session data on another device, because the private key protecting it never leaves the original machine.

“The issuance of new short-lived session cookies is contingent upon Chrome proving possession of the corresponding private key to the server,” Google says in an announcement today.

Without this key, any exfiltrated session cookie expires and becomes useless to an attacker almost immediately.

A session cookie acts as an authentication token, typically with a longer validity time, and is created server-side based on your username and password.

The server uses the session cookie for identification and sends it to the browser, which presents it when you access the online service.

Because session cookies allow authenticating to a server without providing credentials, threat actors use specialized malware called infostealers to collect them.

Google says that multiple infostealer malware families, like LummaC2, “have become increasingly sophisticated at harvesting these credentials,” allowing hackers to gain access to users’ accounts.

“Crucially, once sophisticated malware has gained access to a machine, it can read the local files and memory where browsers store authentication cookies. As a result, there is no reliable way to prevent cookie exfiltration using software alone on any operating system” – Google

The DBSC protocol was built to be private by design, with each session being backed by a distinct key. This prevents websites from correlating user activity across multiple sessions or sites on the same device.

Additionally, the protocol enables minimal information exchange that requires only the per-session public key necessary to certify proof of possession, and does not leak device identifiers.

In a year of testing an early version of DBSC in partnership with multiple web platforms, including Okta, Google observed a notable decline in session theft events.

Google partnered with Microsoft to develop the DBSC protocol as an open web standard and received input “from many in the industry that are responsible for web security.”

Websites can upgrade to the more secure, hardware-bound sessions by adding dedicated registration and refresh endpoints to their backends without sacrificing compatibility with the existing frontend.
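To make the proof-of-possession idea concrete, here is a minimal Python sketch of a registration step followed by a challenge-signed refresh. It is an illustration under stated assumptions, not the DBSC wire protocol: the `Device` and `Server` classes and the `register`, `new_challenge`, and `refresh` methods are hypothetical names, and a toy textbook-RSA key built from two known primes stands in for the hardware-backed key that a TPM or Secure Enclave would hold. The toy key is not secure and should never be used outside a demonstration.

```python
import hashlib
import secrets

# Toy textbook-RSA parameters built from two known Mersenne primes.
# NOT secure: this only stands in for a hardware-backed key pair.
P, Q = 2**61 - 1, 2**89 - 1
N, E = P * Q, 65537


def digest(message: bytes) -> int:
    """Hash a message to an integer smaller than the modulus."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % N


class Device:
    """Hypothetical client; the private exponent never leaves this object."""

    def __init__(self) -> None:
        self._d = pow(E, -1, (P - 1) * (Q - 1))  # private exponent
        self.public_key = (N, E)                 # safe to share

    def sign(self, challenge: bytes) -> int:
        return pow(digest(challenge), self._d, N)


class Server:
    """Hypothetical backend with registration and refresh steps."""

    def __init__(self) -> None:
        self.session_keys = {}  # session id -> public key
        self.challenges = {}    # session id -> outstanding challenge

    def register(self, session_id: str, public_key: tuple) -> None:
        self.session_keys[session_id] = public_key

    def new_challenge(self, session_id: str) -> bytes:
        challenge = secrets.token_bytes(16)
        self.challenges[session_id] = challenge
        return challenge

    def refresh(self, session_id: str, signature: int):
        """Return a fresh short-lived cookie, or None if the proof fails."""
        challenge = self.challenges.pop(session_id, None)  # single use
        if challenge is None:
            return None
        n, e = self.session_keys[session_id]
        if pow(signature, e, n) != digest(challenge):
            return None
        return secrets.token_hex(16)


device, server = Device(), Server()
server.register("session-1", device.public_key)

# The legitimate device can sign the challenge and obtain a new cookie.
challenge = server.new_challenge("session-1")
good_signature = device.sign(challenge)
fresh_cookie = server.refresh("session-1", good_signature)

# Malware that copied an old cookie but not the key cannot pass a new challenge.
server.new_challenge("session-1")
stolen_attempt = server.refresh("session-1", 12345)

# Replaying an old signature against a new challenge also fails.
server.new_challenge("session-1")
replay_attempt = server.refresh("session-1", good_signature)
```

In this sketch the server only ever stores a public key per session, which mirrors the article's point that a stolen cookie is of little use once it expires: renewing it requires a signature that only the original device can produce.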

Web developers can turn to Google’s guide for DBSC implementation details. Specifications are available on the World Wide Web Consortium (W3C) website, while an explainer can be found on GitHub.

Tech

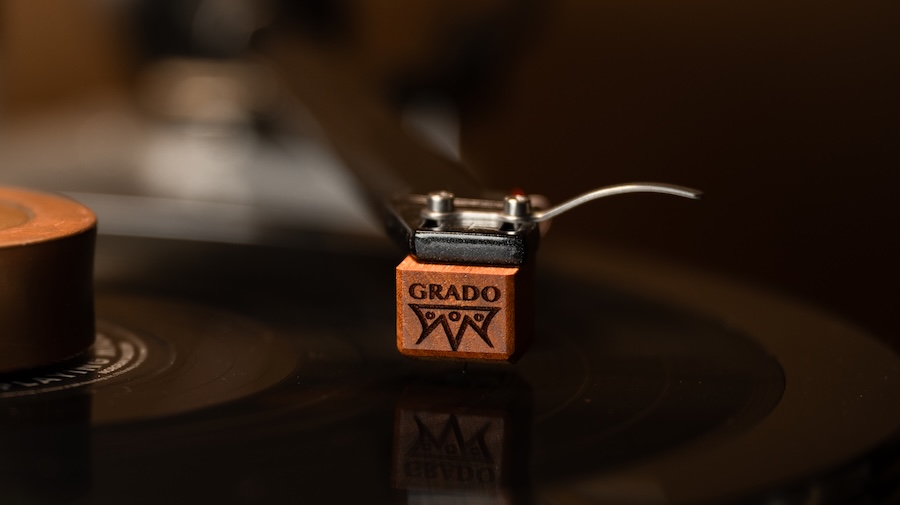

Grado updates its 2026 phono cartridge lineup across Lineage, Timbre, and Prestige series: Brooklyn Built For Your Turntable

Grado didn’t exactly drop this out of nowhere. When we spoke with the team at CanJam NYC 2026, there were enough hints to read between the lines, but nobody was about to say it out loud. Loose lips and all that. So we kept it quiet. Nobody wanted to end up in the East River. Now it’s official.

Grado Labs is rolling out an updated phono cartridge lineup across its Lineage, Timbre, and Prestige Series, built around targeted refinements to the stylus assembly, coil composition, and housing geometry. No reinvention, no marketing circus, just a clear effort to improve how these cartridges track, resolve detail, and behave in real-world setups.

The timing isn’t accidental. Vinyl’s resurgence has been very good to Grado’s cartridge business, but it’s also brought a flood of competition from legacy brands tightening their game to newer players looking to grab market share. Standing still isn’t an option when Ortofon, Audio-Technica, Denon, Hana, and Dynavector keep rolling out new cartridge models with every product cycle.

That’s what makes this update matter. They’re going back to the core elements that define cartridge performance and refining them across the board—better materials, tighter tolerances, and more consistency from model to model.

And let’s be clear—John Grado and Rich Grado didn’t build this brand by coasting. This is what staying relevant looks like when you’ve been doing it since 1953.

In other words, the vinyl boom may have kept the lights on, but this is Grado making sure nobody else walks in and starts rearranging the furniture.

What’s Actually Changed: Stylus, Coils, and Housing Get Real Upgrades

The stylus assembly has been refined across the lineup, with diamond profiles and cantilever materials more carefully matched to each specific model. That matters. You’re not getting a one-size-fits-all approach anymore. Some models step up to nude Shibata diamonds, which offer better groove contact and improved tracking compared to the elliptical profiles used throughout much of the previous generation—but not every cartridge gets that upgrade, and Grado isn’t pretending otherwise.

Coils have been updated across the board with OCC copper, with purity levels scaled depending on the model. The goal is pretty straightforward: cleaner signal transmission, better channel balance, and fewer inconsistencies from unit to unit.

On the mechanical side, the wood-bodied cartridges see revised housing geometry. This isn’t just cosmetic. The updated shapes are designed to improve stability during playback and make setup less of a headache—something anyone who has wrestled with cartridge alignment will appreciate.

Lineage Series: Grado’s Top Shelf, No Apologies

The Lineage Series sits at the top of Grado’s cartridge lineup and uses the company’s low-output moving iron, flux-bridger architecture across all three models. All three get Brazilian Ebony wood bodies, nude Shibata styli, and stereo/mono options, but the cantilever material, frequency range, resistance, and weight are not identical across the range. That’s where the pecking order starts to show.

Grado Epoch4 — $9,995

The Epoch4 is the flagship. It uses a Brazilian Ebony wood body, sapphire cantilever with Shibata diamond, 1.0mV output at 5 CMV (45 degrees), 5 Hz to 75 kHz controlled frequency response, average 35 dB channel separation from 10-30 kHz, 10-47k ohm input load, 8mH inductance, 95 ohms resistance, 10.5 gram cartridge weight, 1.6-1.9 gram tracking force, and 20 μm/mN compliance. Grado also says the internal signal path uses ultra-high purity OCC copper with gold plating, and that the cartridge undergoes cryogenic treatment during component prep and final assembly.

Grado Aeon4 — $4,995

The Aeon4 keeps the Brazilian Ebony body and sapphire/Shibata combo, with the same 1.0mV output, 10-47k ohm input load, 8mH inductance, 10.5 gram weight, 1.6-1.9 gram tracking force, and 20 μm/mN compliance. Where it differs from the Epoch4 is the controlled frequency response, which is listed at 5 Hz to 70 kHz, and resistance, which drops to 74 ohms. Grado specifies ultra-high purity 7N OCC copper here. In other words, still serious, just not wearing the full tux.

Grado Statement4 — $3,500

The Statement4 is the entry point into the Lineage family, but it is not a stripped-down tourist model. It uses a Brazilian Ebony wood body and swaps to a machined boron cantilever with Shibata diamond. Specs include 1.0mV output at 5 CMV (45 degrees), 5 Hz to 65 kHz controlled frequency response, average 35 dB channel separation from 10-30 kHz, 10-47k ohm input load, 8mH inductance, 74 ohms resistance, 10 gram cartridge weight, 1.6-1.9 gram tracking force, and 20 μm/mN compliance. Like the Aeon4, it uses ultra-high purity 7N OCC copper and cryogenic treatment.

Timbre Series: Where Grado Dials It In for the Real World

The Timbre Series is where Grado Labs hits the balance point—high-end analog performance without drifting into Lineage-level pricing. This is the middle of the lineup, but it’s not a compromise. It’s a deliberate tuning exercise.

Across the range, Grado sticks with elliptical diamond styli and its moving iron, flux-bridger design. The emphasis here isn’t on any single upgrade—it’s on how everything works together. Stylus profile, cantilever material, coil composition, and housing are treated as a system, not a checklist. The result is a presentation that leans into tonal balance, coherence, and musical flow rather than hyper-detail for its own sake.

Material choices define the hierarchy. The Reference4 and Master4 use American Osage wood bodies with boron cantilevers for greater control and resolution, while the Sonata4 and Platinum4 move to Mediterranean Olive wood paired with aluminum cantilevers. The Opus4, built from American Maple, rounds things out with a simpler aluminum cantilever configuration. Same core design philosophy throughout, just scaled in execution.

Grado Reference4 — $1,500

The Reference4 sits at the top of the Timbre Series. It uses an American Osage wood body, machined boron cantilever with Shibata diamond, 4.0mV high output or 1.0mV low output at 5 CMV (45 degrees), 10 Hz to 60 kHz controlled frequency response, average 30 dB channel separation from 10-30 kHz, 10k-47k ohm input load, 55mH inductance in high output and 6mH in low output, 660 ohms resistance in high output and 70 ohms in low output, 9.6 gram cartridge weight, 1.6-1.9 gram tracking force, and 20 μm/mN compliance. Grado also specifies ultra high purity 6N OCC copper in the internal signal path, along with cryogenic treatment and internal damping.

Grado Master4 — $1,000

The Master4 uses an American Osage wood body, machined boron cantilever with elliptical diamond, 4.0mV high output or 1.0mV low output at 5 CMV (45 degrees), 10 Hz to 60 kHz controlled frequency response, average 30 dB channel separation from 10-30 kHz, 10k-47k ohm input load, 55mH inductance in high output and 6mH in low output, 660 ohms resistance in high output and 70 ohms in low output, 9.6 gram cartridge weight, 1.6-1.9 gram tracking force, and 20 μm/mN compliance. It is offered in high output, low output, and mono versions.

Grado Sonata4 — $600

The Sonata4 uses a Mediterranean Olive wood body, special aluminum cantilever with elliptical diamond, 4.0mV high output or 1.0mV low output at 5 CMV (45 degrees), 10 Hz to 60 kHz controlled frequency response, average 30 dB channel separation from 10-30 kHz, 10k-47k ohm input load, 55mH inductance in high output and 6mH in low output, 660 ohms resistance in high output and 70 ohms in low output, 9.4 gram cartridge weight, 1.6-1.9 gram tracking force, and 20 μm/mN compliance. It is also offered in high output, low output, and mono versions.

Grado Platinum4 — $400

The Platinum4 uses a Mediterranean Olive wood body, aluminum cantilever with elliptical diamond, 4.0mV high output or 1.0mV low output at 5 CMV (45 degrees), 10 Hz to 60 kHz controlled frequency response, average 30 dB channel separation from 10-30 kHz, 10k-47k ohm input load, 55mH inductance in high output and 6mH in low output, 660 ohms resistance in high output and 70 ohms in low output, 9.4 gram cartridge weight, 1.6-1.9 gram tracking force, and 20 μm/mN compliance. It is available in high output, low output, and mono versions.

Grado Opus4 — $300

The Opus4 is the entry point into the Timbre Series. It uses an American Maple wood body, aluminum cantilever with elliptical diamond, 4.0mV high output or 1.0mV low output at 5 CMV (45 degrees), 10 Hz to 60 kHz controlled frequency response, average 30 dB channel separation from 10-30 kHz, 10k-47k ohm input load, 55mH inductance in high output and 6mH in low output, 660 ohms resistance in high output and 70 ohms in low output, 8.3 gram cartridge weight, 1.6-1.9 gram tracking force, and 20 μm/mN compliance. Grado says the internal signal path uses ultra high purity 5N OCC copper, with cryogenic treatment and internal damping as part of the build process.

Prestige Series: Where Grado Keeps It Simple and Affordable

The Prestige Series is the foundation of what Grado Labs has been doing for decades and it hasn’t survived this long by accident. This is the entry point into the lineup, but it’s built on a design that’s been refined over more than fifty years, not reinvented every product cycle. Those paying attention will notice that the lineup has been trimmed down.

Across the range, Grado sticks with elliptical diamond styli, aluminum cantilevers, and its moving iron, flux-bridger design. The goal here isn’t to chase ultimate resolution—it’s consistency. Strong tracking, a balanced tonal presentation, and performance that doesn’t drift over time. These are cartridges designed to work, not impress on spec sheets.

One of the biggest advantages in the Prestige Series is the user-replaceable stylus system. When the stylus wears out, you don’t toss the cartridge, you swap the stylus and keep going. It’s practical, cost-effective, and a big part of why these have remained popular with both newcomers and long-time vinyl listeners.

No exotic wood bodies here, no sapphire cantilevers, just a straightforward design that prioritizes reliability and ease of use without abandoning the Grado house sound.

Grado Prestige Gold4 — $260

The Prestige Gold4 sits at the top of the current Prestige Series. It uses a four piece OTL cantilever with a Grado specific elliptical diamond stylus mounted on a brass bushing, 4 mV output at 5 CMV (45 degrees), 10 Hz to 55 kHz frequency response, average 25 dB channel separation from 10-30 kHz, 10k-47k ohm input load, 50 mH inductance, 660 ohms DC resistance, 6 gram cartridge weight, 1.6-1.9 gram tracking force, and 20 μm/mN compliance. Grado also says the Gold4 uses a machined turned generator for lower distortion and greater transparency, along with ultra high purity copper wire and its twin magnet / Flux-Bridger moving iron design.

Grado Prestige Red4 — $190

The Prestige Red4 uses a bonded elliptical diamond mounted to an aluminum cantilever, 4 mV output at 5 CMV (45 degrees), 10 Hz to 55 kHz frequency response, average 25 dB channel separation from 10-30 kHz, 10k-47k ohm input load, 50 mH inductance, 660 ohms DC resistance, 6 gram cartridge weight, 1.6-1.9 gram tracking force, and 20 μm/mN compliance. Grado describes it as a high output moving iron cartridge and notes that the stylus assembly is user-replaceable, with Prestige Series styli interchangeable across models.

Grado Prestige Green4 — $140

The Prestige Green4 uses a bonded elliptical diamond mounted to an aluminum cantilever, 4 mV output at 5 CMV (45 degrees), 10 Hz to 55 kHz frequency response, average 25 dB channel separation from 10-30 kHz, 10k-47k ohm input load, 50 mH inductance, 660 ohms DC resistance, 6 gram cartridge weight, 1.6-1.9 gram tracking force, and 20 μm/mN compliance. Grado also describes it as a high output moving iron cartridge with a user-replaceable stylus assembly, available in both standard mount and P-mount versions.

Trade-In Program: Grado’s Answer to Cartridge Burnout

Grado takes a different approach to long-term ownership, and it’s one that actually makes sense if you’ve been around analog long enough to know how this usually goes.

For its wood-bodied models, Grado Labs offers a cartridge trade-in program that lets you send back your existing cartridge, no matter how worn, and apply it toward a new one at a reduced cost. No drama, no “must be in mint condition” nonsense.

The idea is simple: keep people in the ecosystem without forcing them to start from scratch every time their stylus wears down or their system evolves. Instead of treating cartridges as disposable, Grado treats them like part of a longer-term upgrade path.

That flexibility cuts both ways. You can move up the range if you’re chasing more performance, or step sideways or even downward if your system changes or priorities shift. Either way, you’re getting a current production model with the latest refinements baked in. You won’t get that from Denon, Hana, or Clearaudio.

The Bottom Line

Grado didn’t reinvent anything—they refined the parts that actually matter. Across Lineage, Timbre, and Prestige, the updates focus on improved stylus assemblies, higher-purity OCC copper coils, and revised housing geometry, all aimed at better tracking, consistency, and easier setup.

On paper, the lineup is clearly tiered: Lineage pushes materials and resolution at the top, Timbre balances performance and design choices in the middle, and Prestige continues as the accessible, user-friendly foundation with its replaceable stylus system. Each range sticks to the same moving iron DNA, just executed at different levels.

Who should pay attention? Anyone with a vinyl setup who hasn’t looked at Grado in a while—and especially those watching how established brands respond to a more competitive cartridge market.

Reviews land in May and June.

For more information: gradolabs.com


Tech

2026 M5 Pro 16-inch MacBook Pro with 48GB RAM plunges to best $2,899

Pick up Apple’s brand-new M5 Pro 16-inch MacBook Pro with an upgrade to 48GB RAM for $2,899, the lowest price on record, thanks to a $200 cash discount.

M5 Pro 16-inch MacBook Pro with 48GB RAM has dropped to record low $2,899 – Image credit: Apple

Released in March 2026, the M5 Pro 16-inch MacBook Pro features higher unified memory bandwidth and is equipped with Apple’s N1 chip for Wi-Fi 7 and Bluetooth 6 support. And today, Amazon is slashing $200 off an upgraded configuration with a bump up to 48GB of RAM.

Save $200 on 16″ MacBook Pro 48GB RAM

Continue Reading on AppleInsider

Tech

Best VPN Apps for Android in 2026 (Tested and Ranked)

A VPN is one of the simplest and highest-impact privacy tools you can add to your Android phone. It encrypts all traffic leaving your device, masks your IP address, and prevents your mobile carrier, network administrators, and anyone sniffing public Wi-Fi from reading what you send and receive. The problem is that the market is saturated with hundreds of options — and some of them are actively worse than using no VPN at all.

This guide covers five vetted options for Android in 2026, chosen based on independent speed testing, verified no-log audit status, and real-world usability on Android. Pricing is accurate as of publication; use the links below to confirm current rates before subscribing.

Quick Take:

Best overall: NordVPN — fast, independently audited, feature-complete Android app

Best for privacy purists: Mullvad — no email, no account details, flat pricing

Best free option: ProtonVPN Free — no data cap, no ads, no data selling

Best for streaming: ExpressVPN — fastest tested speeds, reliable geo-unblocking

Best for households: Surfshark — unlimited simultaneous devices on one subscription

What to Look for Before You Choose

The VPN market has two distinct quality tiers, and the difference isn’t always visible from app store screenshots. Before picking any option — paid or free — apply these four filters:

- Independent no-log audit: Any VPN can claim it doesn’t log your traffic. Only a handful have had that claim verified by an external cybersecurity firm with access to their infrastructure. Prioritise providers that have passed at least one named, published audit.

- Protocol quality: WireGuard is the current standard for speed and security. OpenVPN is battle-tested but slower. Proprietary protocols (NordLynx, Lightway) are acceptable when built on WireGuard or audited independently. Avoid providers that use only outdated PPTP or L2TP.

- Kill switch: If the VPN connection drops, a kill switch halts all internet traffic until the tunnel is restored. Without it, your real IP and unencrypted traffic briefly expose themselves every time the connection interrupts — which happens more on mobile than on desktop.

- Business model transparency: If the product is free and there is no visible paid tier, advertising revenue, or clear funding source — your data is the product. This is not speculation; it is documented by independent research.
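As a rough illustration, the four filters above can be written down as a small checklist function. This is only a sketch: the `Provider` record, its field names, and the `ACCEPTABLE_PROTOCOLS` set are hypothetical, and the values would have to be filled in by hand from each provider's published audit and app settings.

```python
from dataclasses import dataclass
from typing import FrozenSet, List

# Protocols the list above treats as acceptable (hypothetical constant name).
ACCEPTABLE_PROTOCOLS = {"WireGuard", "OpenVPN", "NordLynx", "Lightway"}


@dataclass
class Provider:
    """Hypothetical record of what you have verified about one VPN."""
    name: str
    has_published_audit: bool   # named, published no-log audit
    protocols: FrozenSet[str]   # protocol names offered in the Android app
    has_kill_switch: bool
    has_clear_funding: bool     # paid tier, ads, or another visible funding source


def failed_filters(provider: Provider) -> List[str]:
    """Return the filters this provider fails; an empty list means it passes all four."""
    failures = []
    if not provider.has_published_audit:
        failures.append("independent no-log audit")
    # Fails when nothing acceptable is offered, e.g. only PPTP or L2TP.
    if not (set(provider.protocols) & ACCEPTABLE_PROTOCOLS):
        failures.append("protocol quality")
    if not provider.has_kill_switch:
        failures.append("kill switch")
    if not provider.has_clear_funding:
        failures.append("business model transparency")
    return failures
```

A provider that returns an empty list has cleared the four filters; anything else names the reason to look elsewhere.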

1. NordVPN — Best Overall for Android

NordVPN is the most balanced option across speed, privacy verification, and Android-specific features. Its NordLynx protocol — built on WireGuard — delivered less than 20% speed reduction on a 250 Mbps connection in Security.org’s independently conducted Android VPN speed tests in early 2026. That puts it consistently above average for mobile use.

On the privacy side, NordVPN completed its sixth independent no-logs assurance engagement in February 2026, with auditors given full access to servers, employee interviews, and infrastructure configurations. The result confirmed NordVPN stores no connection logs, IP addresses, traffic logs, or browsing activity.

The Android app includes a built-in ad and tracker blocker (Threat Protection Lite), split tunneling, and an automatic kill switch. It supports up to 10 simultaneous devices per subscription.

- Server count: 6,000+ in 111 countries

- Protocol: NordLynx (WireGuard-based), OpenVPN

- No-log audit: Yes — 6 completed, most recent Feb 2026

- Starting price: From approximately $3.09–$4.39/month (2-year plan)

- Free tier: No — 30-day money-back guarantee only

- Google Play rating: 4.3/5

Best for: Users who want a one-app solution that handles speed, privacy, ad blocking, and streaming without configuration.

2. ProtonVPN — Best for Privacy-First Users (and the Best Free Option)

ProtonVPN occupies a unique position: it is the only free VPN recommended in this guide, and it earns that position by having a completely different business model from other free offerings. The free tier is funded by paid subscribers, not by data collection or advertising. There is no data cap on the free tier — an extremely rare offering in this market.

ProtonVPN passed its fourth consecutive independent no-logs audit in 2025, conducted by Securitum. The company also publishes a transparency report documenting every legal request for user data — and because it logs nothing, it has nothing to hand over. Its apps are fully open source, meaning the code is publicly inspectable by any security researcher at any time.

Speed is near the top tier. Independent testing in 2026 showed ProtonVPN slowing download speeds by roughly 8% — compared to NordVPN’s 6% — a difference that is imperceptible in real-world use. The paid VPN Accelerator feature reportedly improves speeds on distant servers by 40–50%.

- Server count: 9,500+ in 112 countries (paid); limited server selection on free

- Protocol: WireGuard, OpenVPN, Stealth (obfuscated)

- No-log audit: Yes — 4 completed, most recent 2025

- Starting price: Free (no data cap) | Paid from approximately $2.99/month

- Free tier: Yes — unlimited data, 3 server locations, 1 device

- Open source: Yes — full client source code publicly available

Best for: Users who want the strongest privacy credentials, anyone on a budget who needs a genuinely trustworthy free tier, and anyone who wants to verify the code before trusting it.

Free tier limitation to know: The free tier restricts access to three server locations (US, Netherlands, Japan) and one device. For most basic privacy needs — securing public Wi-Fi, hiding traffic from your carrier — this is sufficient. For streaming geo-restricted content, you will need a paid plan.

3. ExpressVPN — Best for Speed and Streaming

ExpressVPN has held its position as a top-tier speed performer for several years, and that remains true in 2026. Its proprietary Lightway protocol delivered an average of 214 Mbps download and 207 Mbps upload in Android-specific testing — among the fastest recorded for any mobile VPN. CNET’s 2026 best Android VPN evaluation named it their top pick, citing outstanding streaming performance, geo-unblocking reliability, and ease of use.

The Android app is polished and simple — a single tap connects to the recommended server. It includes a kill switch, split tunneling, and threat manager (blocks known malicious domains). ExpressVPN operates from the British Virgin Islands, outside the EU and Five Eyes data-sharing arrangements.

- Server count: 3,000+ in 105 countries

- Protocol: Lightway (proprietary, audited), OpenVPN, IKEv2

- No-log audit: Yes — multiple completed

- Starting price: From approximately £1.99/month (promotional) | Regular from $6.67/month

- Free tier: No — 30-day money-back guarantee

- Google Play rating: 4.1/5

Best for: Users whose primary use case is streaming geo-restricted content, travelling users who need reliable connections across regions, and anyone who values a fast, no-configuration mobile experience.

One trade-off: ExpressVPN is among the more expensive options at its standard rate. The promotional price requires a long-term commitment; monthly plans cost significantly more. Factor in the full cost if you prefer flexibility.

4. Mullvad — Best for Maximum Anonymity

Mullvad is the most privacy-radical mainstream VPN available. It requires no email address and no personal information to create an account — you are assigned a 16-digit account number and that is your entire identity on the platform. Payment is accepted via cash by post, cryptocurrency, and card. No name, no email, no phone number is ever stored.

As Engadget’s 2026 budget VPN guide notes, Mullvad’s pricing has not changed since 2009: €5 per month, with no long-term contracts and no promotional pricing. What you see is what you pay. It supports up to five simultaneous devices.

- Server count: 900+ in 46 countries

- Protocol: WireGuard, OpenVPN

- No-log audit: Yes — independently audited

- Starting price: €5/month (flat — no annual discount)

- Free tier: No — 30-day refund policy

- Account signup: No email required

Best for: Journalists, activists, lawyers handling confidential cases, or anyone for whom account anonymity matters as much as traffic privacy. Also ideal for technically-minded users who dislike email-based accounts and marketing relationships with software vendors.

When not to use Mullvad: If your primary need is streaming, Mullvad’s smaller server network offers less geo-unblocking coverage than NordVPN or ExpressVPN. It is a privacy tool first, a convenience tool second.

5. Surfshark — Best Value for Multiple Devices

Surfshark’s defining advantage is its device policy: unlimited simultaneous connections on one subscription. Every other VPN in this guide caps simultaneous devices at between 5 and 10. If you need to cover a phone, tablet, family member’s device, laptop, and smart TV simultaneously, Surfshark removes that constraint entirely.

Speed and privacy credentials are solid — it uses WireGuard, maintains a no-log policy, and its Android app includes ad and malware blocking (CleanWeb), a kill switch, and split tunneling. It consistently appears in multi-product roundups from PCMag, TechRadar, and RTINGS as a strong second-tier option.

- Server count: 3,200+ in 100 countries

- Protocol: WireGuard, OpenVPN, IKEv2

- No-log audit: Yes — independently audited

- Starting price: From approximately $2.19/month (2-year plan)

- Free tier: No — 30-day money-back guarantee

- Device limit: Unlimited

Best for: Users covering a whole household, multi-device power users, or anyone who wants a capable VPN at the lowest per-month price point without compromising on verified privacy credentials.

How These VPNs Compare

| VPN | Best For | No-Log Audit | Free Tier | Starting Price | Devices |
|---|---|---|---|---|---|
| NordVPN | Overall best | Yes (6 audits) | No | ~$3.09/mo | 10 |
| ProtonVPN | Privacy + free tier | Yes (4 audits) | Yes (unlimited data) | Free / ~$2.99/mo | 10 (paid) |
| ExpressVPN | Speed & streaming | Yes | No | From ~£1.99/mo | 8 |
| Mullvad | Maximum anonymity | Yes | No | €5/mo (flat) | 5 |
| Surfshark | Multi-device value | Yes | No | ~$2.19/mo | Unlimited |
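To narrow the table down by your own requirements, the device and free-tier columns can be treated as data. The sketch below copies those two columns from the table and the ProtonVPN free-tier device limit from its section above; the `VPNS` list and the `shortlist` function are illustrative names, and prices are left out because the table mixes currencies.

```python
import math

# Device limits and free-tier status copied from this guide.
# math.inf stands in for "Unlimited"; free_devices is 0 where no free tier exists.
VPNS = [
    {"name": "NordVPN", "devices": 10, "free_devices": 0},
    {"name": "ProtonVPN", "devices": 10, "free_devices": 1},
    {"name": "ExpressVPN", "devices": 8, "free_devices": 0},
    {"name": "Mullvad", "devices": 5, "free_devices": 0},
    {"name": "Surfshark", "devices": math.inf, "free_devices": 0},
]


def shortlist(min_devices=1, need_free_tier=False):
    """Return the names of VPNs that cover min_devices, optionally on a free tier."""
    # Compare against the free-tier limit when a free plan is required,
    # otherwise against the paid-plan limit.
    key = "free_devices" if need_free_tier else "devices"
    return [vpn["name"] for vpn in VPNS if vpn[key] >= min_devices]
```

For example, `shortlist(min_devices=12)` leaves only Surfshark, while `shortlist(need_free_tier=True)` leaves only ProtonVPN, and only for a single device.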

Free VPNs — A Risk Most People Underestimate

Warning: The majority of free VPN apps on the Google Play Store are not privacy tools. Many are data collection tools wearing a VPN’s interface.

A Zimperium zLabs study of more than 800 free VPN apps — published in October 2025 — found that nearly two-thirds relied on vulnerable code, leaked personal data, or provided no meaningful privacy protection. Separately, Tom’s Guide reported research projecting that by 2025, 80% of free VPN apps would embed tracking features, with data sales to third parties affecting up to 60% of the category.

The mechanism is straightforward: a free VPN app has no paid revenue. The cost of operating VPN infrastructure — servers, bandwidth, maintenance — is real. Something funds it. In many cases, that something is selling aggregated user traffic data to advertising networks and data brokers. Installing such an app to “protect your privacy” achieves the opposite.

The exception is ProtonVPN Free, listed above. It is funded by paid subscribers and has an independently verified no-log policy. Outside of that and a small number of other verified providers, treat free VPNs as a significant risk rather than a safe default.

VPN Protocols — What the Labels Mean

You will encounter protocol names in VPN settings and marketing. Here is what they mean in plain terms:

- WireGuard: The current speed and security standard. Lean codebase (~4,000 lines vs OpenVPN’s ~400,000), fast handshakes, and strong cryptography. If available, use this.

- NordLynx (NordVPN): NordVPN’s implementation of WireGuard, with an additional privacy layer to resolve WireGuard’s default IP assignment behaviour. Functionally WireGuard with an extra step.

- Lightway (ExpressVPN): ExpressVPN’s proprietary protocol, designed for fast connection and reconnection on mobile networks. Independently audited. Performs comparably to WireGuard in speed tests.

- OpenVPN: The long-standing standard. Battle-tested and extensively audited over many years. Slower than WireGuard on modern hardware but universally supported. Use it as a fallback if WireGuard is unavailable.

- IKEv2/IPSec: Good for mobile use specifically because it handles network switches well (e.g. moving from Wi-Fi to mobile data). Reconnects faster than OpenVPN. Standard feature on many VPNs.

- Stealth / Obfuscated protocols: Designed to disguise VPN traffic as regular HTTPS traffic to bypass VPN blocks — relevant in countries with active censorship or on networks that block VPN connections.
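One way to read the list above is as a preference order for whatever protocols an app happens to offer. The sketch below encodes that reading. The `PREFERENCE` list and `pick_protocol` function are hypothetical names, and placing IKEv2/IPSec after OpenVPN is an assumption, since the text does not rank the two against each other.

```python
from typing import Iterable, Optional

# WireGuard first, then the vendor protocols the text treats as comparable,
# then OpenVPN as the stated fallback. IKEv2/IPSec last is an assumption.
# PPTP and L2TP are deliberately absent, so they are never selected.
PREFERENCE = ["WireGuard", "NordLynx", "Lightway", "OpenVPN", "IKEv2/IPSec"]


def pick_protocol(available: Iterable[str]) -> Optional[str]:
    """Return the most preferred protocol the app offers, or None if none qualifies."""
    offered = set(available)
    for name in PREFERENCE:
        if name in offered:
            return name
    return None
```

A result of `None` means the app offers nothing on the list, which is itself a reason to avoid that provider.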

Before You Subscribe — Checklist

- ☐ Confirm the VPN has a published, independent no-log audit — not just a self-declared policy

- ☐ Check that the Android app includes a kill switch (not all apps enable it by default)

- ☐ Verify the app is downloaded directly from Google Play Store — not a third-party APK

- ☐ Enable the kill switch after installation before your first connection

- ☐ Test your real IP before and after connection using a browser-based IP checker

- ☐ For free VPNs: verify the provider has a paid tier and a published no-log audit before trusting it

- ☐ Enable auto-reconnect to restore VPN after network switches (Wi-Fi to mobile data)
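The IP check in the list above comes down to comparing two addresses: the one an IP checker shows before you connect and the one it shows after. A minimal sketch, assuming you copy both values by hand from the checker page; the function name `vpn_is_masking` is made up for illustration, and the sample addresses below come from ranges reserved for documentation.

```python
import ipaddress


def vpn_is_masking(ip_before: str, ip_after: str) -> bool:
    """Return True when the public IP seen with the VPN on differs from the one seen with it off."""
    # Parsing first means equivalent spellings of the same address
    # (stray spaces, expanded IPv6 forms) are not mistaken for a change.
    before = ipaddress.ip_address(ip_before.strip())
    after = ipaddress.ip_address(ip_after.strip())
    return before != after
```

With the VPN off and on, a checker might show `203.0.113.7` and then `198.51.100.42`, for which the function returns `True`. If both readings are the same address, the tunnel is not hiding your IP and the connection should be rechecked.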

Frequently Asked Questions

Does a VPN make my Android phone completely private?

No. A VPN encrypts the connection between your phone and the VPN server and hides your IP address from the sites you visit. It does not anonymise you at the app level — apps with your account information still know who you are. It also does not protect against malware already on your device. Think of it as one layer in a wider phone data security strategy — not a complete solution on its own.

Will a VPN slow down my Android phone?

Yes, to a measurable but usually minor degree. NordVPN’s NordLynx protocol showed less than 20% speed reduction on a 250 Mbps connection in independent testing. On a typical mobile connection of 50–100 Mbps, the real-world impact is rarely noticeable for browsing, messaging, or video calls. Streaming in 4K on a congested VPN server is where speed reduction becomes visible.
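To turn a percentage reduction into an expected speed, multiply the baseline by the share that remains. The helper below is a sketch with a made-up name, `effective_speed`; because the test result quoted above is "less than 20%", plugging in 20 gives a floor rather than an exact figure.

```python
def effective_speed(baseline_mbps: float, reduction_pct: float) -> float:
    """Return the speed left after a VPN slowdown of reduction_pct percent."""
    if not 0 <= reduction_pct <= 100:
        raise ValueError("reduction_pct must be between 0 and 100")
    # Multiply before dividing so whole-number inputs stay exact.
    return baseline_mbps * (100 - reduction_pct) / 100
```

On the 250 Mbps test connection, a 20% reduction leaves 200 Mbps. On a 50 Mbps mobile connection the same percentage leaves 40 Mbps, which is still well above what browsing, messaging, or video calls need.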

Is it safe to use a VPN on public Wi-Fi?

Using a VPN on public Wi-Fi is safer than not using one. Public Wi-Fi creates opportunities for man-in-the-middle attacks, traffic sniffing, and rogue hotspot impersonation. A VPN neutralises the first two. It does not protect against a rogue hotspot at the DNS level unless your VPN provides its own DNS servers, which most reputable providers do. Enable the VPN before connecting to public Wi-Fi, not after.

Can a free VPN be trusted?

Very few can. The Zimperium study of 800+ free VPN apps found nearly two-thirds were unsafe in measurable, documented ways. The only free VPN recommended in this guide is ProtonVPN Free — it has an independently audited no-log policy, no data cap, and a business model funded by paid subscribers rather than data collection.

Does a VPN protect me from hackers?

A VPN protects against network-level interception — eavesdropping on your traffic, recording your browsing activity from a network position, and revealing your IP address. It does not protect against phishing, malware, social engineering, or account compromise through password reuse. For those threats, you need a separate set of measures covered in our Android data security guide.

Do I need a VPN if I only use mobile data (not public Wi-Fi)?

On mobile data, your carrier can see your DNS queries, general traffic metadata, and browsing patterns. Many carriers sell anonymised (but not always reliably anonymised) data to third parties. A VPN prevents that. It also prevents your carrier from throttling specific services like video streaming based on traffic inspection. Whether that risk profile matters to you depends on your threat model; it is not an emergency for most users, but it is a real trade-off.

Which VPN should I choose if I just want something that works without setup?

NordVPN or ExpressVPN. Both have polished Android apps with single-tap connect, work reliably across all common Android versions, and handle network switches without manual reconnection. NordVPN is the better all-round value; ExpressVPN is the better streaming option.

Is it legal to use a VPN in the UK?

Yes — VPNs are legal in the United Kingdom and across the European Union. Using a VPN to access content that is itself illegal remains illegal regardless of VPN usage. Using a VPN to access geo-restricted streaming content (e.g., a US Netflix library from the UK) may violate streaming platform terms of service, though it is not a criminal matter.

Tech

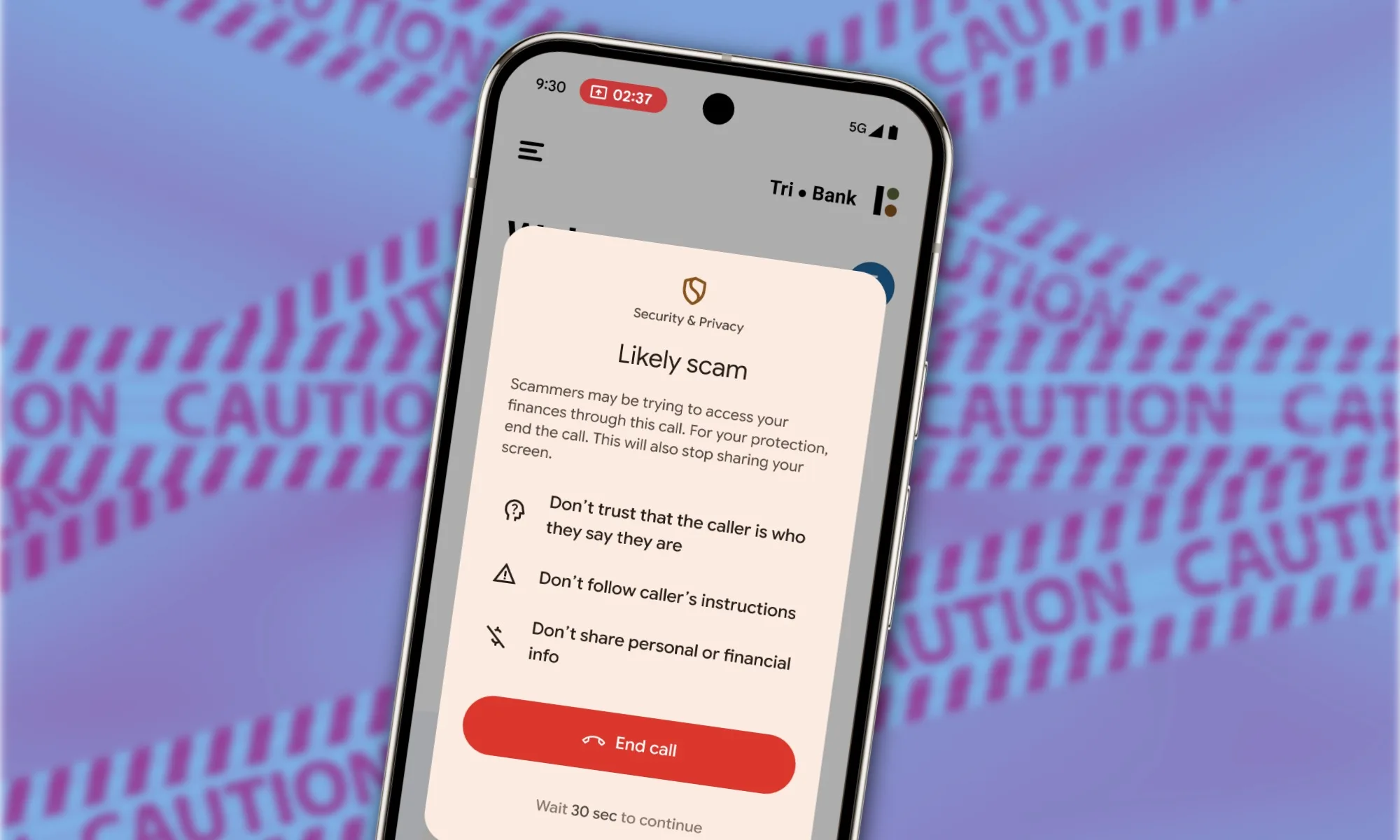

Samsung’s next-gen foldable phones will inherit anti-scam call superpowers

Scam calls are evolving. Your phone is about to do the same. Samsung’s upcoming foldables are shaping up to get an intelligence upgrade, with Google’s Gemini-powered Scam Detection expected to expand to devices like the Galaxy Z Fold 8, Galaxy Z Flip 8, and even a new Wide Fold variant. And yes, this time your phone may finally be better at spotting fraudsters than your patience at 7 PM after the fifth unknown call of the day.

Samsung joins the scam detection club

Google has been steadily building Scam Detection into its AI ecosystem, using Gemini to analyze live phone conversations and flag suspicious behavior as it happens. So, if a caller starts sounding like they’re scripting a heist movie, your phone gently steps in and says, “Maybe don’t trust this one.” On Pixel devices, this feature runs directly on-device, so it doesn’t send your calls to the cloud for analysis. That keeps things private while still letting AI do the heavy lifting of spotting patterns that usually scream scam.

Earlier this year, Samsung teamed up with Google to bring this capability to its own Phone app, starting with the Galaxy S26 series. That meant users didn’t have to rely on Google’s default dialer anymore to get scam protection baked in. There was a catch, though. The rollout has so far been limited to English-speaking users in the US, leaving many global users still answering unknown calls the old-fashioned way. Now, that seems to be changing.

Recent findings from the Phone by Google app suggest that Scam Detection is being prepared for Samsung’s next-generation foldables. The feature appears linked to several model families, including the Galaxy Z Fold 8, the Galaxy Z Flip 8, and a new Wide Fold device. These appear alongside a wide range of regional variants, suggesting a global rollout strategy. In short, Samsung isn’t just testing the waters here. It looks like it’s preparing to scale the feature across markets from day one.

Beyond the US-only limitation

Google’s Scam Detection already works in multiple regions on newer Pixel devices, including the Pixel 9 and Pixel 10 series. That suggests Samsung’s eventual rollout may not remain as geographically restricted as it is today. If anything, the inclusion of multiple regional variants in the code points to a broader ambition: making scam protection a standard feature rather than a market-specific perk. And honestly, it’s about time.

Samsung is expected to unveil its next foldables at its usual Galaxy Unpacked event around July 2026. While new hinges, displays, and processors will likely take the spotlight, this AI-powered call protection adds something more practical to the mix. And if Samsung and Google get this right, your next foldable might just be the smartest thing you use before you even unlock it.
