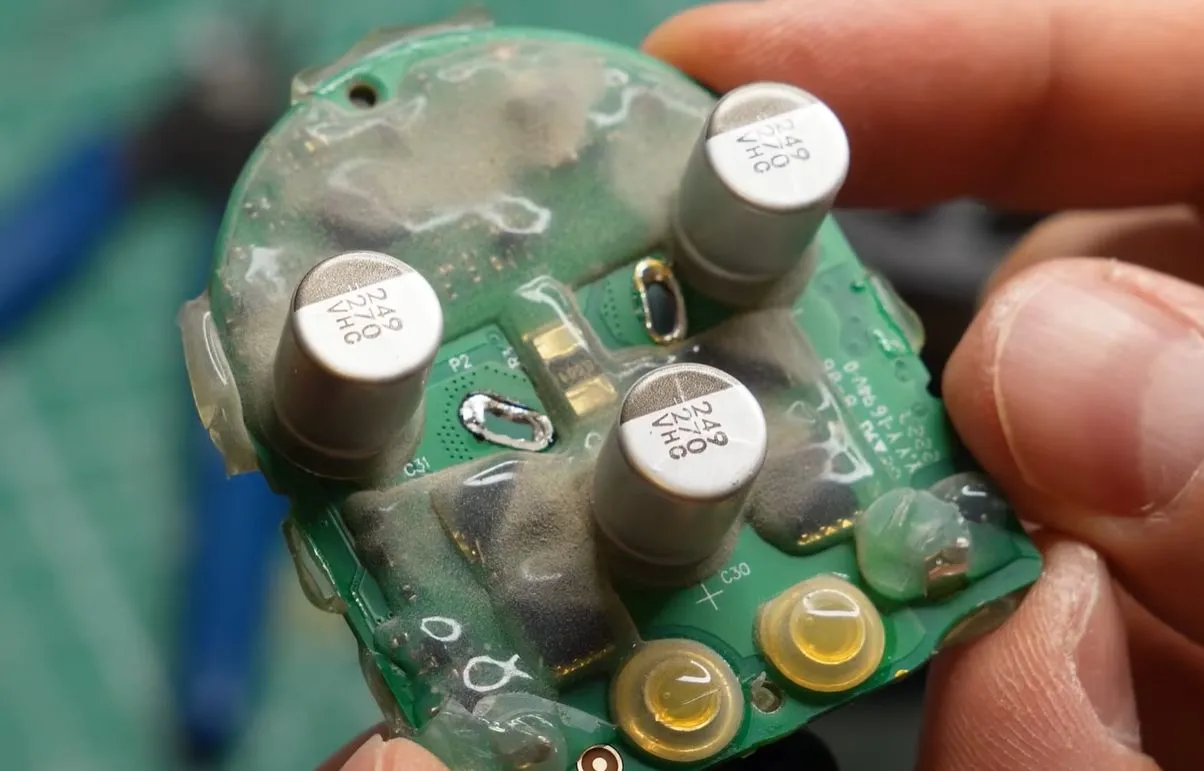

The company also launched the latest iteration of its TPUs.

Google has made a series of new enterprise-focused launches, including a new platform to build and manage AI agents and the latest generation of its AI-specific Tensor Processing Units (TPU), as competition between tech giants targeting the lucrative enterprise sector continues to intensify.

The announcements were made yesterday (22 April) at the company’s annual Cloud Next conference in Las Vegas, which drew around 32,000 attendees.

There is no shortage of companies offering agentic AI services, including OpenAI, Anthropic, Microsoft and China’s Alibaba, among others, and Google is the latest to join the enterprise AI race.

Parent company Alphabet plans to spend up to $185bn this year as it attempts to capitalise on the AI market.

To bolster its position, Google launched a new Gemini Enterprise Agent platform to build, scale, govern and optimise agents.

Users can manage their agents and deliver them through the company’s existing Gemini Enterprise platform, which saw 40pc quarter-on-quarter growth in paid monthly active users in Q1.

The new launch is Google’s answer to Amazon’s Bedrock AgentCore and Microsoft Foundry.

The agent platform provides access to Gemini 3.1 Pro – Google’s most advanced model yet – the viral Nano Banana 2, the audio model Lyria 3 and leading models from Anthropic, including Claude Opus 4.7, Sonnet and Haiku.

The platform also includes a central monitoring unit that lets users oversee and guide all of their agents from one place.

“The agentic enterprise is real – and deployed at a scale the world has never before seen,” said Thomas Kurian, Google Cloud’s CEO.

In a blog post on the company’s site, CEO Sundar Pichai noted: “The conversation has gone from ‘Can we build an agent?’ to ‘How do we manage thousands of them?’”

Alongside this, Google is adding to its vertical stack offerings with a new cybersecurity platform that combines Google’s Threat Intelligence and Security Operations with Wiz’s cloud and AI security platform to detect and respond to threats.

The company also launched the latest iteration of its TPUs, this time splitting them into two distinct processors. Both chips will become available later this year.

TPU 8t is designed for “accelerated” training, while TPU 8i is designed for “near-zero latency” inference, the company said.

These new systems are key components of Google Cloud’s AI Hypercomputer, an integrated supercomputing architecture that combines hardware, software and networking to power the full AI life cycle, Google said.

