Tech

Amphibious StabiX 250UC Opens Remote Shores That Stay Out of Reach for Most Campers

Drive along a rough coastal road, and the StabiX 250UC camper just keeps rolling, right to the water’s edge. Rather than stopping or scrambling to load supplies into a second boat, the driver simply keeps going, tires gripping the wet beach and driving straight into the waves. The movable legs then lift the wheels clear, the main outboard takes over, and you’re gliding along.

The 250UC, built in New Zealand, begins as a 25-foot boat hull, to which engineers add a dedicated land drive system for getting it onto the beach or along a private access road. It rides on four enormous 26-inch tires mounted on movable legs and powered by a 40-horsepower Briggs & Stratton V-twin engine. A drive-by-wire system at the helm simplifies things: you can travel at up to 5.6 miles per hour on land, and the technology is primarily designed to get you from the dirt to the water without the need for a trailer or ramp.

Once afloat, the 250UC is powered by a conventional 300-horsepower Mercury or Yamaha outboard engine, or another outboard of your choice. Top speed on the water reaches the low 40s in miles per hour. A 300-liter fuel tank is enough for full days of cruising, and the hull handles waves nicely, making for an easy fishing trip or a quick journey to an island with no suitable docks.

If you’re just traveling, the 8.5-foot-wide cabin can accommodate five or seven people. At night, it converts into sleeping accommodations for three or four people, with a V-berth in the front and dinette seating that folds flat into another bed. The cabin also has a galley area with a two-burner diesel cooktop, sink, and compact drawer fridge, as well as an electric toilet. You can install diesel heating to keep the space warm regardless of the weather. Roof vents provide fresh air, and you can add solar panels or extra navigation screens as needed.

It’s relatively modest at 25 feet long overall, but the base price is on the high side, at around 467,500 New Zealand dollars ($271,615). Add an expanded roof, canvas side walls, a roof rack, and a full galley setup, and the price can reach about 525,000 New Zealand dollars. That is not a bad price for a high-end boat, and with only around 25 built each year, you can be certain that each one is heavily customized in terms of paint colors, upholstery patterns, and so on.

Tech

Rockstar Games hit with ransom demand after third-party data breach

The group responsible, ShinyHunters, says it didn’t breach Rockstar or its data-warehouse provider, Snowflake. Instead, it exploited access from Anodot, a SaaS analytics tool Rockstar uses to track cloud costs and performance. The attackers allegedly stole authentication tokens from Anodot’s systems and used them to gain unauthorized access to Rockstar’s…

Tech

Apple's future smart glasses plan is just part of a larger computer vision play

Apple Glass will be a direct competitor to Meta’s Ray-Ban smart glasses, but it will be only a part of a larger three-pronged AI wearable strategy for the company. Here’s what’s coming.

Optimistic renders of what Apple Glass could look like – Image Credit: AppleInsider

Apple has long been working on its smart glasses, known as Apple Glass. What is anticipated to actually launch will be quite close to what the existing Meta Ray-Bans can already do.

In Sunday’s “Power On” newsletter for Bloomberg, Mark Gurman writes that the Apple Glass will be easily able to handle everyday uses, including photographs and video capture, dealing with phone calls, handling notifications from an iPhone, and music playback.

Rumor Score: 🤔 Possible

Tech

Why data quality matters when working with data at scale

Data quality has always been an afterthought. Teams spend months instrumenting a feature, building pipelines, and standing up dashboards, and only when a stakeholder flags a suspicious number does anyone ask whether the underlying data is actually correct. By that point, the cost of fixing it has multiplied several times over.

This is not a niche problem. It plays out across engineering organizations of every size, and the consequences range from wasted compute cycles to leadership losing trust in the data team entirely. Most of these failures are preventable if you treat data quality as a first-class concern from day one rather than a cleanup task for later.

How a typical data project unfolds

Before diagnosing the problem, it helps to walk through how most data engineering projects get started. It usually begins with a cross-functional discussion around a new feature being launched and what metrics stakeholders want to track. The data team works with data scientists and analysts to define the key metrics. Engineering figures out what can actually be instrumented and where the constraints are. A data engineer then translates all of this into a logging specification that describes exactly what events to capture, what fields to include, and why each one matters.

That logging spec becomes the contract everyone references. Downstream consumers rely on it. When it works as intended, the whole system hums along well.

Before data reaches production, there is typically a validation phase in dev and staging environments. Engineers walk through key interaction flows, confirm the right events are firing with the right fields, fix what is broken, and repeat the cycle until everything checks out. It is time-consuming, but it is supposed to be the safety net.

The problem is what happens after that.

The gap between staging and production reality

Once data goes live and the ETL pipelines are running, most teams operate under an implicit assumption that the data contract agreed upon during instrumentation will hold. It rarely does, at least not permanently.

Here is a common scenario. Your pipeline expects an event to fire when a user completes a specific action. Months later, a server-side change alters the timing so the event now fires at an earlier stage in the flow, with a different value in a key field. No one flags it as a data-impacting change. The pipeline keeps running and the numbers keep flowing into dashboards.

Weeks or months pass before anyone notices the metrics look flat. A data scientist digs in, traces it back, and confirms the root cause. Now the team is looking at a full remediation effort: updating ETL logic, backfilling affected partitions across aggregate tables and reporting layers, and having an uncomfortable conversation with stakeholders about how long the numbers have been off.

The compounding cost of that single missed change includes engineering time on analysis, effort on codebase updates, compute resources for backfills, and most damagingly, eroded trust in the data team. Once stakeholders have been burned by bad numbers a couple of times, they start questioning everything. That loss of confidence is hard to rebuild.

This pattern is especially common in large systems with many independent microservices, each evolving on its own release cycle. There is no single point of failure, just a slow drift between what the pipeline expects and what the data actually contains.
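The slow drift described above can be caught by comparing the distribution of a key field between a trusted baseline window and the most recent partition. The following is only a minimal sketch: the event values, the function names, and the 0.2 threshold are illustrative assumptions and do not come from any particular tool.

```python
from collections import Counter


def value_shares(values):
    """Return each distinct value's share of the total as a dict."""
    counts = Counter(values)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {value: count / total for value, count in counts.items()}


def total_variation_distance(baseline, current):
    """Half the sum of absolute share differences (0 = identical, 1 = disjoint)."""
    keys = set(baseline) | set(current)
    return 0.5 * sum(abs(baseline.get(k, 0.0) - current.get(k, 0.0)) for k in keys)


def has_drifted(baseline_values, current_values, threshold=0.2):
    """Flag drift when the two distributions differ by more than the threshold.

    The default threshold is an assumed value; tune it per field in practice.
    """
    distance = total_variation_distance(
        value_shares(baseline_values), value_shares(current_values)
    )
    return distance > threshold


# Hypothetical example: the event used to fire mostly at completion,
# but after a server-side change it fires mostly at an earlier step.
baseline = ["checkout_complete"] * 90 + ["checkout_started"] * 10
current = ["checkout_complete"] * 40 + ["checkout_started"] * 60
drifted = has_drifted(baseline, current)
```

A check like this will not explain why a field changed, but it can shorten the time between an upstream change and the first alert.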

Why validation cannot stop at staging

The core issue is that data validation is treated as a one-time gate rather than an ongoing process. Staging validation is important but it only verifies the state of the system at a single point in time. Production is a moving target.

What is needed is data quality enforcement at every layer of the pipeline, from the point data is produced, through transport, and all the way into the processed tables your consumers depend on. The modern data tooling ecosystem has matured enough to make this practical.

Enforcing quality at the source

The first line of defense is the data contract at the producer level. When a strict schema is enforced at the point of emission with typed fields and defined structure, a breaking change fails immediately rather than silently propagating downstream. Schema registries, commonly used with streaming platforms like Apache Kafka, serialize data against a schema before it is transported and validate it again on deserialization. Forward and backward compatibility checks ensure that schema evolution does not silently break consuming pipelines.
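To illustrate what "fails immediately" means at the point of emission, here is a small pure-Python sketch of a producer that validates each event against a typed contract before sending it. It is a stand-in for a real schema registry client, not one: the contract, field names, and the `emit` helper are all hypothetical.

```python
# Hypothetical contract for a "checkout_completed" event:
# field name -> (expected type, required?).
CHECKOUT_COMPLETED_CONTRACT = {
    "user_id": (str, True),
    "order_total_cents": (int, True),
    "currency": (str, True),
    "coupon_code": (str, False),
}


class ContractViolation(ValueError):
    """Raised when an event does not match its contract."""


def validate_event(event, contract):
    """Raise ContractViolation on missing, mistyped, or undeclared fields."""
    problems = []
    for name, (expected_type, required) in contract.items():
        if name not in event or event[name] is None:
            if required:
                problems.append(f"missing required field: {name}")
            continue
        value = event[name]
        # bool is a subclass of int in Python, so reject it for non-bool fields.
        wrong_type = not isinstance(value, expected_type) or (
            isinstance(value, bool) and expected_type is not bool
        )
        if wrong_type:
            problems.append(
                f"wrong type for {name}: expected {expected_type.__name__}, "
                f"got {type(value).__name__}"
            )
    for name in event:
        if name not in contract:
            problems.append(f"undeclared field: {name}")
    if problems:
        raise ContractViolation("; ".join(problems))
    return event


def emit(event, contract, send):
    """Validate first, then hand the event to the transport callable."""
    send(validate_event(event, contract))


sent = []  # stands in for the real transport
emit(
    {"user_id": "u1", "order_total_cents": 1999, "currency": "USD"},
    CHECKOUT_COMPLETED_CONTRACT,
    sent.append,
)
```

An event that carries `order_total_cents` as the string `"19.99"` would raise `ContractViolation` in the producer and never reach the transport.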

Avro-formatted schemas stored in a schema registry are a widely adopted pattern for exactly this reason. They create an explicit, versioned contract between producers and consumers that is enforced at runtime, not just documented in a spec file that may or may not be read.
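A backward compatibility check asks whether a consumer using the new schema can still read records written with the old one. The sketch below is a deliberately simplified version of that idea: schemas are plain dicts rather than real Avro definitions, the field names are hypothetical, and any type change is treated as breaking even though Avro itself permits some type promotions.

```python
def backward_compatibility_problems(old_fields, new_fields):
    """List reasons the new schema could not read records written with the old one.

    Each schema is a dict: field name -> {"type": str, optional "default": value}.
    Fields removed in the new schema are allowed, since a reader can ignore them.
    """
    problems = []
    for name, spec in new_fields.items():
        if name not in old_fields:
            # Old records lack this field, so the reader needs a default to fill in.
            if "default" not in spec:
                problems.append(f"new field '{name}' has no default")
        elif spec["type"] != old_fields[name]["type"]:
            problems.append(
                f"field '{name}' changed type from "
                f"{old_fields[name]['type']} to {spec['type']}"
            )
    return problems


# Hypothetical schema versions for illustration.
v1 = {"user_id": {"type": "string"}, "step": {"type": "string"}}
v2_ok = {**v1, "coupon_code": {"type": "string", "default": ""}}
v2_bad = {
    "user_id": {"type": "string"},
    "step": {"type": "int"},       # type changed
    "region": {"type": "string"},  # added without a default
}

ok_problems = backward_compatibility_problems(v1, v2_ok)
bad_problems = backward_compatibility_problems(v1, v2_bad)
```

Running a check of this kind when a schema change is proposed, rather than after deployment, is what keeps an incompatible change from reaching consumers.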

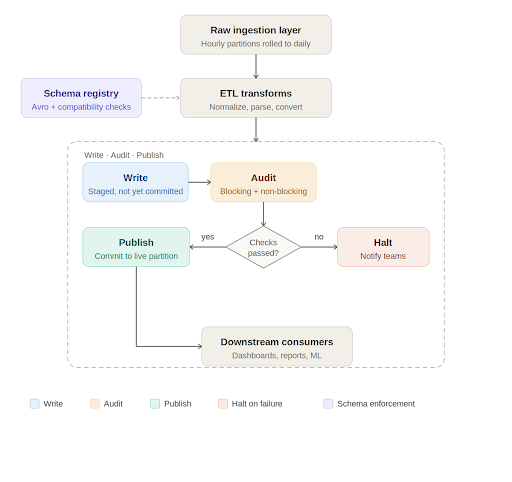

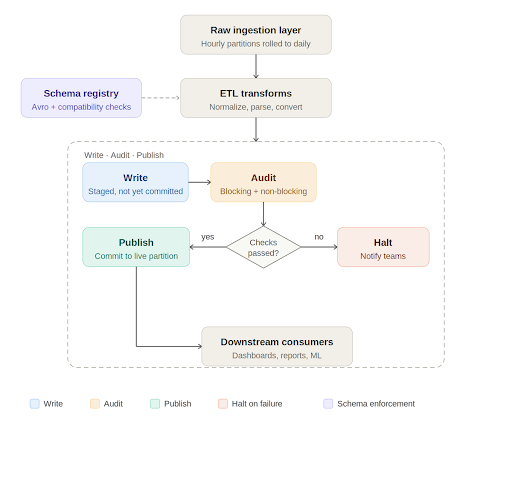

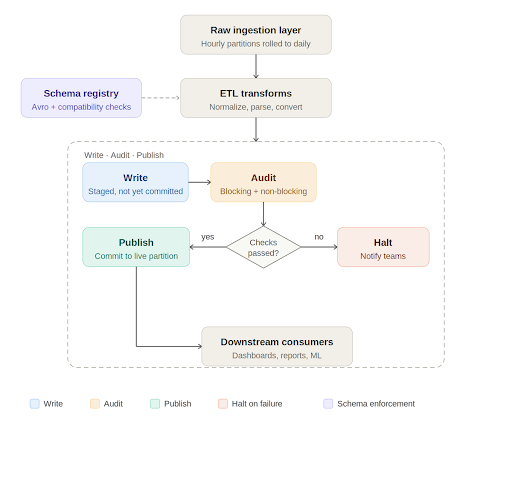

Write, audit, publish: A quality gate in the pipeline

At the processing layer, Apache Iceberg has introduced a useful pattern for data quality enforcement called Write-Audit-Publish, or WAP. Iceberg operates on a file metadata model where every write is tracked as a commit. The WAP workflow takes advantage of this to introduce an audit step before data is declared production ready.

In practice, the daily pipeline works like this. Raw data lands in an ingestion layer, typically rolled up from smaller time window partitions into a full daily partition. The ETL job picks up this data, runs transformations such as normalizations, timezone conversions, and default value handling, and writes to an Iceberg table. If WAP is enabled on that table, the write is staged with its own commit identifier rather than being immediately committed to the live partition.

At this point, automated data quality checks run against the staged data. These checks fall into two categories. Blocking checks are critical validations such as missing required columns, null values in non-nullable fields, and enum values outside expected ranges. If a blocking check fails, the pipeline halts, the relevant teams are notified, and downstream consumers are informed that the data for that partition is not yet available. Non-blocking checks catch issues that are meaningful but not severe enough to stop the pipeline. They generate alerts for the engineering team to investigate and may trigger targeted backfills for a small number of recent partitions.
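The split between blocking and non-blocking checks can be expressed as a small registry in which each check carries a severity flag. This is a sketch under assumed names: the columns, the allowed platform values, and the row-count rule are invented for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Row = Dict[str, object]


@dataclass
class Check:
    name: str
    blocking: bool                       # True: halt the pipeline on failure
    passes: Callable[[List[Row]], bool]  # returns True when the data is fine


def run_checks(rows, checks):
    """Return (blocking_failures, warnings) as lists of check names."""
    blocking_failures, warnings = [], []
    for check in checks:
        if check.passes(rows):
            continue
        (blocking_failures if check.blocking else warnings).append(check.name)
    return blocking_failures, warnings


# Hypothetical rules for an illustrative events table.
REQUIRED_COLUMNS = {"user_id", "platform", "event_ts"}
ALLOWED_PLATFORMS = {"ios", "android", "web"}

CHECKS = [
    Check("required_columns_present", True,
          lambda rows: all(REQUIRED_COLUMNS.issubset(row) for row in rows)),
    Check("user_id_not_null", True,
          lambda rows: all(row.get("user_id") is not None for row in rows)),
    Check("platform_in_enum", True,
          lambda rows: all(row.get("platform") in ALLOWED_PLATFORMS for row in rows)),
    # Non-blocking: a suspiciously small partition is worth an alert, not a halt.
    Check("row_count_at_least_3", False, lambda rows: len(rows) >= 3),
]

good_rows = [
    {"user_id": "u1", "platform": "ios", "event_ts": 1},
    {"user_id": "u2", "platform": "web", "event_ts": 2},
    {"user_id": "u3", "platform": "android", "event_ts": 3},
]
bad_rows = [{"user_id": "u4", "platform": "tv", "event_ts": 4}]

good_result = run_checks(good_rows, CHECKS)
bad_result = run_checks(bad_rows, CHECKS)
```

Keeping severity as data rather than as control flow makes it easy to promote a warning to a blocking check once the team trusts it.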

Only when all checks pass does the pipeline commit the data to the live table and mark the job as successful. Consumers get data that has been explicitly validated, not just processed.
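The overall write, audit, publish sequence can be sketched with a toy in-memory table. This does not use Iceberg's actual API; `StagedTable`, its methods, and the sample audit are hypothetical stand-ins meant only to show the ordering of the steps and the fact that consumers never see unaudited data.

```python
import uuid


class StagedTable:
    """Toy in-memory stand-in for a table with staged and published data."""

    def __init__(self):
        self.live = {}     # partition -> rows visible to consumers
        self._staged = {}  # commit id -> (partition, rows), not yet visible

    def write_staged(self, partition, rows):
        """Stage a write under its own commit id without touching live data."""
        commit_id = uuid.uuid4().hex
        self._staged[commit_id] = (partition, list(rows))
        return commit_id

    def read_staged(self, commit_id):
        return self._staged[commit_id][1]

    def publish(self, commit_id):
        """Make a staged commit visible to consumers."""
        partition, rows = self._staged.pop(commit_id)
        self.live[partition] = rows


def write_audit_publish(table, partition, rows, audit):
    """Stage the write, audit the staged rows, and publish only on success.

    `audit` returns a list of failure messages; an empty list means success.
    On failure the commit stays staged so engineers can inspect it.
    """
    commit_id = table.write_staged(partition, rows)
    failures = audit(table.read_staged(commit_id))
    if failures:
        return False, failures
    table.publish(commit_id)
    return True, []


def no_null_user_ids(rows):
    """Sample blocking audit: every row must carry a user_id."""
    return ["null user_id"] if any(r.get("user_id") is None for r in rows) else []


table = StagedTable()
ok_result = write_audit_publish(table, "2026-01-01", [{"user_id": "u1"}], no_null_user_ids)
failed_result = write_audit_publish(table, "2026-01-02", [{"user_id": None}], no_null_user_ids)
```

After these two calls, only the first partition is visible in `table.live`; the second remains staged and hidden from consumers.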

Data quality as engineering practice, not a cleanup project

There is a broader point embedded in all of this. Data quality cannot be something the team circles back to after the pipeline is built. It needs to be designed into the system from the start and treated with the same discipline as any other part of the engineering stack.

With modern code generation tools making it cheaper than ever to stand up a new pipeline, it is tempting to move fast and validate later. But the maintenance burden of an untested pipeline, especially one feeding dashboards used by product, business, and leadership teams, is significant. A pipeline that runs every day and silently produces wrong numbers is worse than one that fails loudly.

The goal is for data engineers to be producers of trustworthy, well-documented data artifacts. That means enforcing contracts at the source, validating at every stage of transport and transformation, and treating quality checks as a permanent part of the pipeline rather than a one-time gate at launch.

When stakeholders ask whether the numbers are right, the answer should not be that we think so. It should be backed by an auditable, automated process that catches problems before anyone outside the data team ever sees them.

Tech

Greg Kroah-Hartman Tests New ‘Clanker T1000’ Fuzzing Tool for Linux Patches

The word clanker — a disparaging term for AI and robots — “has made its way into the Linux kernel,” reports the blog It’s FOSS “thanks to Greg Kroah-Hartman, the Linux stable kernel maintainer and the closest thing the project has to a second-in-command.”

He’s been quietly running what looks like an AI-assisted fuzzing tool on the kernel that lives in a branch called “clanker” on his working kernel tree. It began with the ksmbd and SMB code. Kroah-Hartman filed a three-patch series after running his new tooling against it, describing the motivation quite simply. [“They pass my very limited testing here,” he wrote, “but please don’t trust them at all and verify that I’m not just making this all up before accepting them.”] Kroah-Hartman picked that code because it was easy to set up and test locally with virtual machines.

“Beyond those initial SMB/KSMBD patches, there have been a flow of other Linux kernel patches touching USB, HID, F2FS, LoongArch, WiFi, LEDs, and more,” Phoronix wrote Tuesday, “that were done by Greg Kroah-Hartman in the past 48 hours….

Those patches in the “Clanker” branch all note as part of the Git tag: “Assisted-by: gregkh_clanker_t1000”

The T1000 is presumably a reference to the Terminator T-1000.

It’s FOSS emphasizes that “What Kroah-Hartman appears to be doing here is not having AI write kernel code. The fuzzer surfaces potential bugs; a human with decades of kernel experience reviews them, writes the actual fixes, and takes responsibility for what gets submitted.”

Linus has been thinking about this too. Speaking at Open Source Summit Japan last year, Linus Torvalds said the upcoming Linux Kernel Maintainer Summit will address “expanding our tooling and our policies when it comes to using AI for tooling.”

He also mentioned running an internal AI experiment where the tool reviewed a merge he had objected to. The AI not only agreed with his objections but found additional issues to fix. Linus called that a good sign, while asserting that he is “much less interested in AI for writing code” and more interested in AI as a tool for maintenance, patch checking, and code review.

Tech

DNA-Level Encryption Developed by Researchers to Protect the Secrets of Bioengineered Cells

The biotech industry’s engineered cells could become an $8 trillion market by 2035, notes Phys.org. But how do you keep them from being stolen? Their article notes “an uptick in the theft and smuggling of high-value biological materials, including specially engineered cells.”

In Science Advances, a team of U.S. researchers present a new approach to genetically securing precious biological material. They created a genetic combination lock in which the locking or encryption process scrambled the DNA of a cell so that its important instructions were non-functional and couldn’t be easily read or used. The unlocking, or decryption, process involves adding a series of chemicals in a precise order over time — like entering a password — to activate recombinases, which then unscramble the DNA to their original, functional form…

They created a biological keypad with nine distinct chemicals, each acting as a one-digit input. By using the same chemicals in pairs to form two-digit inputs, where two chemicals must be present simultaneously to activate a sensor, they expanded the keypad to 45 possible chemical inputs without introducing any new chemicals. They also added safety penalties — if someone tampers with the system, toxins are released — making it extremely unlikely for an unauthorized person to access the cells.
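The jump from nine chemicals to 45 inputs is consistent with counting each chemical on its own plus every unordered pair of two different chemicals. That reading is an assumption based on the description above, not a detail confirmed by the paper. A quick check of the arithmetic:

```python
from math import comb

chemicals = 9
single_inputs = chemicals           # one chemical at a time
paired_inputs = comb(chemicals, 2)  # unordered pairs of two different chemicals
total_inputs = single_inputs + paired_inputs

print(single_inputs, paired_inputs, total_inputs)  # prints: 9 36 45
```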

“The researchers conducted an ethical hacking exercise on the test lock and found that random guessing yielded a 0.2% success rate, remarkably close to the theoretical target of 0.1%.”

Tech

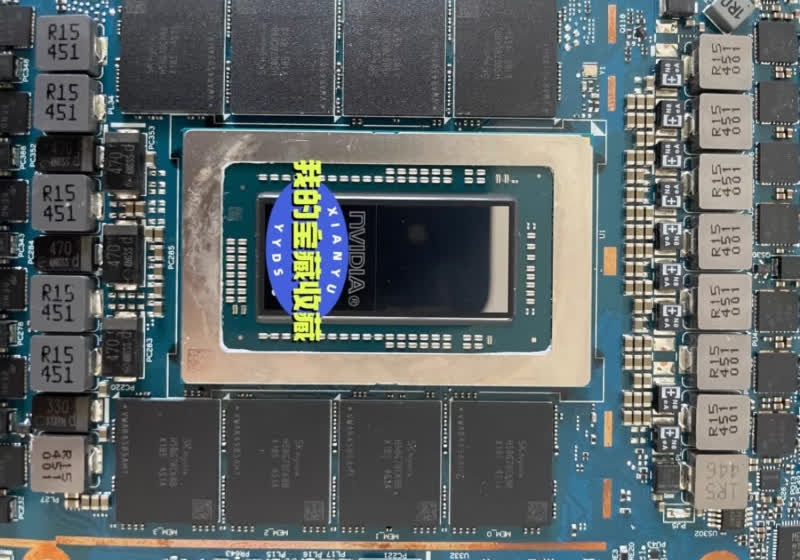

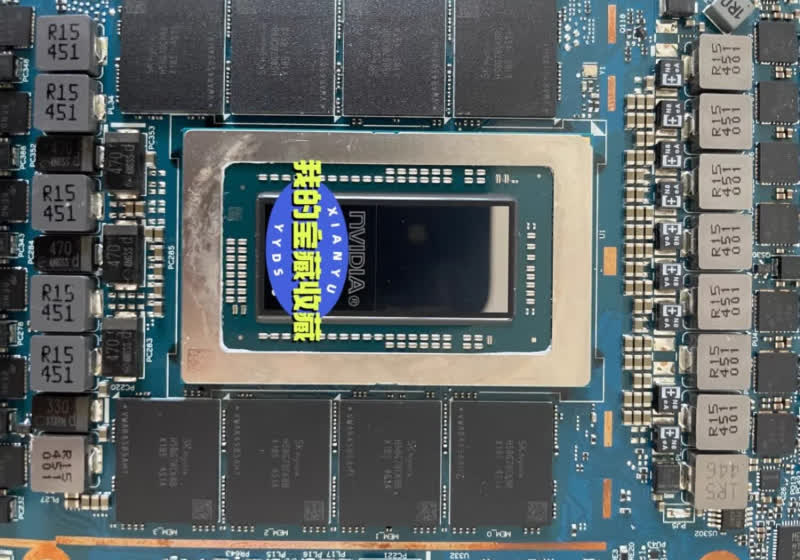

Nvidia's mythical N1 SoC surfaces on a real motherboard, and it's packing 128GB of LPDDR5X

The long-rumored Nvidia N1 chip has been circulating in leaks and rumors for what feels like an eternity. But with a fresh leak, we may finally be getting our first proper look at it – and this time, it includes actual, high-quality images. From these, the product appears closer to…

Tech

Blu-ray lives on as Verbatim and I-O Data pledge support with new drives and discs

In an official announcement translated by Automaton West, the two firms recently confirmed plans to strengthen their partnership to maintain the supply of Blu-ray discs and players in Japan. Verbatim and I-O Data acknowledged that, despite the rise of digital distribution, individuals and businesses still use optical discs for recording,…

Tech

Amphion Argon7LX at AXPONA 2026 Proves Finland Still Builds Speakers That Shame the Rest of Us (Quietly, of Course)

Finland usually exports two things with authority: hockey players like Teemu Selänne and beverages that feel like a dare. High-end loudspeakers? Not so much — at least that was the assumption before Amphion Loudspeakers decided to quietly ruin that narrative.

First unveiled at High End Munich 2025, the new Argon X-Series, which includes the Argon3X, Argon3LX, and Argon7LX, finally made its way to AXPONA 2026, giving us our first real chance to hear what all the quiet confidence was about.

No, Amphion doesn’t offer the same overwhelming breadth of models as the Danes who practically carpet-bombed this show with options, but that’s not really the point. What Amphion brings is focus: cleaner execution, refined engineering, and a sound that leans toward honesty over theatrics. With expanded U.S. distribution through Playback Distribution, these Finnish imports are no longer a niche curiosity.

Finnish Precision Meets Studio Credibility

For more than 25 years, Amphion Loudspeakers has taken a more restrained approach to speaker design. Instead of boosting bass or adding extra sparkle up top to grab attention in a quick demo, their speakers are built to play it straight. What you hear is closer to what was actually recorded, which means better recordings sound great and bad ones have nowhere to hide.

That same approach has carried into the pro audio world over the past decade, where engineers working with Billie Eilish, Beck, and Kendrick Lamar rely on Amphion studio monitors for mixing. Film composers such as Ali Shaheed Muhammad and Jussi Tegelman have adopted them as well, where consistency and accuracy matter more than sounding impressive for five minutes.

Amphion Argon7LX: What It Is and What Actually Changed

The Argon7LX is a floorstanding loudspeaker from Amphion Loudspeakers that sticks to a fairly straightforward concept on paper but executes it with a level of precision that’s anything but casual. It’s a two-way design using a passive radiator system, built around a newly developed 1-inch titanium tweeter and dual 6.5-inch aluminum woofers. That configuration is meant to deliver full-range sound without relying on a traditional port, which helps keep the bass tighter and more controlled, especially in real rooms where things can get messy fast.

The biggest update here is the tweeter, and it’s not a cosmetic change. Amphion revised it to improve low level detail and clean up the top end without pushing things into fatigue. There’s more information, but it’s presented in a controlled way. The crossover has also been reworked and sits at 1600 Hz, which is relatively low, helping create a smoother transition between the tweeter and woofers. The result is better integration, so the sound doesn’t feel segmented across frequencies.

That carries into the soundstage. Imaging is stable, placement is precise, and nothing shifts around when the material gets more complex. The bass remains controlled, but the more noticeable change is how it connects with the midrange and treble. The overall presentation is more cohesive and consistent.

For the demo, Amphion Loudspeakers used two compact TEAC AP-507 power amplifiers, also distributed in the U.S. by Playback Distribution. Each amplifier delivers 170 watts per channel into 4 ohms and can be configured for stereo, bi-amp, or bridged operation, with higher output available in BTL mode. The pairing had no issue driving the Argon7LX to normal listening levels with control and stability, which is notable given the size of the amplifiers.

On the practical side, the Argon7LX is a 4 ohm speaker with a sensitivity rating of 91 dB, which means it’s not especially hard to drive but will benefit from an amplifier with solid current delivery. Amphion recommends anywhere from 50 to 300 watts, which gives you some flexibility depending on your setup.

Frequency response is rated from 28 Hz to 55 kHz at minus 6 dB, so it reaches low enough for most music without needing a subwoofer, while also extending well beyond the limits of human hearing on the top end.

Physically, it’s a substantial speaker without being ridiculous. Just over 45 inches tall, under 10 inches wide, and weighing about 60 pounds each, it’s designed to fit into real living spaces without dominating them.

So how did it sound? Calm, controlled… and slightly judging you

I walked into the room expecting at least a small crowd and… nothing. A few seats open, plenty of space, almost suspiciously calm. This system had no business being that overlooked. My host didn’t rush anything, just handed me the reins. When I asked for electronic music, he cracked a slight smile and queued up a few tracks he clearly had ready. Finns get it. They’ll dismantle your penalty kill and still have time to argue about synth textures.

Right off the bat, the neutrality hits. No extra flavor, no “look what I can do” tuning. Just fast, clean, open sound that moves with real intent. Propulsive fits. The music had momentum, not just presence. It filled the room without feeling pushed, and there was an ease to it that made you stop thinking about the system and just let it run. Detail was there, but it didn’t feel dissected. More like everything was just… available.

The bass? Not trying to win any Texas BBQ competitions. This isn’t brisket dripping onto your plate. More like a perfectly trimmed filet—tight, controlled, and cooked exactly how it should be. You might want a little more heft if that’s your thing, but it never felt thin or out of place. There was even a hint of that club-like scale, just without the kind of low end that rearranges your organs and your plans for the next morning. Don’t forget to bring some protection.

For more information: amphion.fi

Tech

xAI sues Colorado over AI law, calling it a threat to free speech

The company frames the dispute not as a question of safety or bias mitigation, but as a First Amendment issue over who controls the information that large-scale AI systems generate.

Tech

Slate Auto: Everything you need to know about the Bezos-backed EV startup

In April 2025, a new company called Slate Auto came out of stealth and shocked the car industry. Not only was this startup focused on making an ultra-cheap, customizable electric pickup truck with funding from Jeff Bezos, but it had also been operating in secret for three years in Troy, Michigan — the backyard of major automakers like Ford and General Motors.

TechCrunch was first to the story, reporting in early April about the company’s existence, its involvement with the Amazon founder, and its curious and unique business model. The weeks between our report and Slate’s official coming out party in late April provided a whirlwind of news, with prototypes of the startup’s truck popping up around California.

Slate is an aberration in the U.S. EV sector, where bankruptcies, failed product launches, and pivots have become commonplace. And while its current backers, executive lineup, first product, and business model provide a compelling path forward, the road is still riddled with potential hurdles as it pushes toward production in late 2026.

Here’s a timeline that charts out everything you need to know about Slate Auto, from its origin story and backers to its product, business model, and production plans.

Inside the EV startup secretly backed by Jeff Bezos

April 8 – After a year-long investigation, TechCrunch published a story revealing that a secretive EV startup called Slate Auto had been operating for three years with the financial backing of Jeff Bezos and LA Dodgers owner Mark Walter.

Unlike other EV startups, Slate had been working on developing an extremely low-cost electric pickup truck that would start at around $25,000. This truck would be deeply customizable, leveraging the experience of many former employees from Harley-Davidson and Chrysler, two companies that have extensive accessories and aftermarket parts businesses.

Slate Auto’s pickup truck spotted in the wild

April 10 – One day later, a photo of a nondescript electric truck started circulating on the r/whatisthiscar subreddit, with Redditors speculating it could be Slate’s mystery EV.

TechCrunch was able to confirm the photo was, in fact, of a prototype of Slate’s truck parked outside the company’s Long Beach, California design center.

An EV that can change like a ‘Transformer’

April 21 – Slate began putting concept versions of the Slate EV on public streets to generate marketing buzz ahead of its planned launch event on April 24. Curiously, some of them appeared to be styled more like SUVs or hatchbacks, not just pickup trucks.

TechCrunch was able to confirm the company had developed the EV to have “Transformer-like” modular capabilities, and that this stunt was a way to tease this customization.

The analog EV pickup truck that is decidedly anti-Tesla

April 24 – Slate made its debut at a launch event in Long Beach, California, where it revealed its customizable electric pickup truck. Slate also announced the truck would be available for under $20,000 — with the $7,500 federal EV tax credit.

The base version of the truck was revealed to be very bare-bones, with just 150 miles of range, no power windows, no main infotainment screen, and not even any paint. Slate promised essentially everything about the truck would be customizable, even down to the number of seats and the overall silhouette.

A former Indiana printing plant eyed for EV truck production

April 25 – TechCrunch reported that Slate had identified a former printing plant in Warsaw, Indiana as the location for its truck factory. The 1.4 million-square-foot facility was built in 1958 and had been dormant for around two years.

Slate Auto crosses 100,000 refundable reservations in two weeks

May 12 – Slate confirmed to TechCrunch it had already surpassed 100,000 refundable $50 reservations for its affordable EV truck. It was evidence that the company’s ideas had caught on with a wide audience, despite no one knowing about Slate just two months prior.

Slate Auto drops ‘under $20,000’ pricing after Trump administration ends federal EV tax credit

July 3 – The Trump administration pushed through a massive tax-cut bill that, among many other actions, set a September end-date for the $7,500 federal EV tax credit. That means Slate’s truck will no longer be able to lean on that credit to reach the “under $20,000” starting price the startup was touting. As such, Slate pulled that language from its website before the bill was even signed into law.

Why this LA-based VC firm was an early investor in Slate Auto

July 8 – Slate’s 2023 funding round included at least 16 investors — one of them being Bezos. While most of those investors have still not been identified, Los Angeles-based Slauson & Co. spoke to TechCrunch about why it threw in with the EV startup in that initial funding round, as well as Slate’s Series B.

Slate Auto appears on the TechCrunch Disrupt main stage

October 30 – Slate Auto CEO Chris Barman sat down for an interview on the main stage at TechCrunch Disrupt 2025, where she talked about Jeff Bezos’ involvement, the challenge of building an automaker from scratch, and how the company plans to make a marketplace for customization.

Slate passes 150,000 reservations

December 16 – Despite EV growth cooling off in the U.S., Slate Auto crosses 150,000 refundable reservations for its truck and SUV, showing there is still serious interest in the vehicle despite the loss of the federal tax credit. And with fewer EVs set to come to the U.S., it appears that the startup will have very little competition at the low end of the market.

2026

A surprise CEO swap

March 9 – Slate pulls a surprise and swaps in a new CEO: former Amazon Marketplace VP Peter Faricy. Former CEO (and Slate’s first hire) Chris Barman is staying with the company though, shifting over to a “President of Vehicles” role. Slate tapped Faricy to get the startup ready for its end-of-year commercial launch – starting with converting the reservation list into as many full orders as possible.
