Many of the world’s most advanced electronic systems, including Internet routers, wireless base stations, medical imaging scanners, and some artificial intelligence tools, depend on field-programmable gate arrays. FPGAs are computer chips whose internal hardware circuits can be reconfigured after manufacturing.

On 12 March, an IEEE Milestone plaque recognizing the first FPGA was dedicated at the Advanced Micro Devices campus in San Jose, Calif., the former Xilinx headquarters and the birthplace of the technology.

The FPGA earned the Milestone designation because it introduced iteration to semiconductor design. Engineers could redesign hardware repeatedly without fabricating a new chip, dramatically reducing development risk and enabling faster innovation at a time when semiconductor costs were rising rapidly.

The ceremony, organized by the IEEE Santa Clara Valley Section, brought together IEEE leaders and professionals from across the semiconductor industry. Speakers included Stephen Trimberger, an IEEE and ACM Fellow whose technical contributions helped shape modern FPGA architecture. Trimberger reflected on how the invention enabled software-programmable hardware.

Solving computing’s flexibility-performance tradeoff

FPGAs emerged in the 1980s to address a core limitation in computing. A microprocessor executes software instructions sequentially, making it flexible but sometimes too slow for workloads requiring many operations at once.

At the other extreme, application-specific integrated circuits are chips designed to do only one task. ASICs achieve high efficiency but require lengthy development cycles and nonrecurring engineering costs, which are large, upfront investments. Expenses include designing the chip and preparing it for manufacturing—a process that involves creating detailed layouts, building masks for the fabrication machines, and setting up production lines to handle the tiny circuits.

“ASICs can deliver the best performance, but the development cycle is long and the nonrecurring engineering cost can be very high,” says Jason Cong, an IEEE Fellow and professor of computer science at the University of California, Los Angeles. “FPGAs provide a sweet spot between processors and custom silicon.”

Cong’s foundational work in FPGA design automation and high-level synthesis transformed how reconfigurable systems are programmed. He developed synthesis tools that translate C/C++ into hardware designs, for example.

At the heart of his work is an underlying principle first espoused by electrical engineer Ross Freeman: By configuring hardware using programmable memory embedded inside the chip, FPGAs combine hardware-level speed with the adaptability traditionally associated with software.

The FPGA architecture originated in the mid-1980s at Xilinx, a Silicon Valley company founded in 1984. The invention is widely credited to Freeman, a Xilinx cofounder and the startup’s CTO. He envisioned a chip with circuitry that could be configured after fabrication rather than fixed permanently during creation.

Articles about the history of the FPGA emphasize that Freeman saw it as a deliberate break from conventional chip design.

At the time, semiconductor engineers treated transistors as scarce resources. Custom chips were carefully optimized so that nearly every transistor served a specific purpose.

Freeman proposed a different approach. He figured Moore’s Law would soon change chip economics. The principle holds that transistor counts roughly double every two years, making computing cheaper and more powerful. Freeman posited that as transistors became abundant, flexibility would matter more than perfect efficiency.

He envisioned a device composed of programmable logic blocks connected through configurable routing—a chip filled with what he described as “open gates,” ready to be defined by users after manufacturing. Instead of fixing hardware in silicon permanently, engineers could configure and reconfigure circuits as requirements evolved.

Freeman sometimes compared the concept to a blank cassette tape: Manufacturers would supply the medium, while engineers determined its function. The analogy captured a profound change in who controls the technology: Hardware design flexibility moved from chip fabrication facilities to the system designers themselves.

In 1985 Xilinx introduced the first FPGA for commercial sale: the XC2064. The device contained 64 configurable logic blocks—small digital circuits capable of performing logical operations—arranged in an 8-by-8 grid. Programmable routing channels allowed engineers to define how signals moved between blocks, effectively wiring a custom circuit with software.

Fabricated using a 2-micrometer process (meaning that 2 µm was the minimum size of the features that could be patterned onto silicon using photolithography), the XC2064 implemented a few thousand logic gates. Modern FPGAs can contain hundreds of millions of gates, enabling vastly more complex designs. Yet the XC2064 established a design workflow still used today, according to AMD: Engineers describe the hardware behavior digitally and then “compile the design,” a process that automatically translates the plans into the instructions the FPGA needs to set its logic blocks and wiring. Engineers then load that configuration onto the chip.

The breakthrough: hardware defined by memory

Earlier programmable logic devices, such as those based on erasable programmable read-only memory, or EPROM, allowed limited customization but relied on largely fixed wiring structures that did not scale well as circuits grew more complex, Cong says.

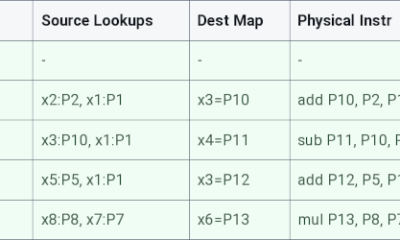

FPGAs introduced programmable interconnects—networks of electronic switches controlled by memory cells distributed across the chip. When powered on, the device loads a bitstream configuration file that determines how its internal circuits behave.
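To make the idea concrete, here is a minimal Python sketch of a toy fabric in which a flat bitstream sets both the logic and the wiring. It is an illustration only, not the XC2064's actual bitstream format: the function names, the two-input blocks, the field layout, and the feed-forward-only routing are all assumptions chosen for brevity.

```python
def parse_bitstream(bits, n_blocks=2, n_inputs=2, n_sources=4):
    """Split a flat bit string into (truth table, routing selects) per block.

    Hypothetical layout per block: 2**n_inputs table bits, followed by one
    fixed-width source selector for each block input.
    """
    lut_size = 2 ** n_inputs
    sel_width = (n_sources - 1).bit_length()  # bits needed to name one source
    per_block = lut_size + n_inputs * sel_width
    if len(bits) != n_blocks * per_block:
        raise ValueError("bitstream length does not match the fabric size")
    blocks = []
    for i in range(n_blocks):
        chunk = bits[i * per_block:(i + 1) * per_block]
        table = [int(c) for c in chunk[:lut_size]]
        selects = [
            int(chunk[lut_size + j * sel_width:lut_size + (j + 1) * sel_width], 2)
            for j in range(n_inputs)
        ]
        blocks.append((table, selects))
    return blocks


def run_fabric(blocks, pins):
    """Evaluate the blocks in order and return the last block's output.

    Sources 0..len(pins)-1 are the chip's input pins; each block's output
    becomes the next source. A block may only read sources that already
    exist (feed-forward only), a simplification of real routing.
    """
    signals = list(pins)
    for table, selects in blocks:
        index = 0
        for s in selects:
            index = (index << 1) | signals[s]
        signals.append(table[index])
    return signals[-1]


# Same "hardware," two different bitstreams (three input pins: a, b, c).
AND_THEN_XOR = "0001" "00" "01" + "0110" "11" "10"  # (a AND b) XOR c
OR_THEN_AND = "0111" "00" "01" + "0001" "11" "10"   # (a OR b) AND c

circuit = parse_bitstream(AND_THEN_XOR)
result = run_fabric(circuit, (1, 1, 0))
```

Loading `OR_THEN_AND` instead changes what the same two blocks compute without touching the functions that model the silicon, which is the point of defining hardware through memory.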

“As process technology improved and transistor counts increased, the cost of programmability became much less significant,” Cong says.

From “glue logic” to essential infrastructure

“Initially, FPGAs were used as what engineers called glue logic,” Cong says.

Glue logic refers to simple circuits that connect processors, memory, and peripheral devices so the system works reliably, according to PC Magazine. In other words, it “glues” different components together, especially when interfaces change frequently.

Early adopters recognized the advantage of hardware that could adapt as standards evolved. In “The History, Status, and Future of FPGAs,” published in Communications of the ACM, engineers at Xilinx and organizations such as Bell Labs, Fairchild Semiconductor, IBM, and Sun Microsystems said the earliest uses of FPGAs were for prototyping ASICs. They also used the devices to validate complex systems by running their software before fabrication, and the chips allowed the companies to deploy specialized products manufactured in modest volumes.

Those uses revealed a broader shift: Hardware no longer needed to remain fixed once deployed.

Attendees at the Milestone plaque dedication ceremony included (seated L to R) 2025 IEEE President Kathleen Kramer, 2024 IEEE President Tom Coughlin, and Santa Clara Valley Section Milestones Chair Brian Berg. Douglas Peck/AMD

Semiconductor economics changed the equation

The rise of FPGAs closely followed changes in semiconductor economics, Cong says.

Developing a custom chip requires a large upfront investment before production begins. As fabrication costs increased, products had to ship in large quantities to make ASIC development economically viable, according to a post published by AnySilicon.

FPGAs allowed designers to move forward without that larger monetary commitment.
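The volume argument can be expressed as simple break-even arithmetic. The sketch below is a simplification that treats FPGA upfront cost as zero and ignores schedule and risk; the function name and all dollar figures are hypothetical and do not come from Cong or AnySilicon.

```python
import math


def break_even_volume(asic_nre, asic_unit_cost, fpga_unit_cost):
    """Smallest unit count at which an ASIC's total cost is no higher than an FPGA's.

    Assumes total ASIC cost = NRE + units * asic_unit_cost and
    total FPGA cost = units * fpga_unit_cost (no upfront FPGA cost).
    Returns None if the ASIC never catches up.
    """
    saving_per_unit = fpga_unit_cost - asic_unit_cost
    if saving_per_unit <= 0:
        return None
    return math.ceil(asic_nre / saving_per_unit)


# Hypothetical figures: $2 million NRE, $5 per ASIC, $45 per FPGA.
units_needed = break_even_volume(2_000_000, 5, 45)  # 50000
```

Under these made-up numbers, a product expected to sell fewer than 50,000 units would cost less to build with FPGAs, and every increase in NRE pushes that threshold higher.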

ASIC development typically requires 18 to 24 months from conception to silicon, while FPGA implementations often can be completed within three to six months using modern design tools, Cong says. The shorter cycle and the ability to reconfigure the hardware enabled startups, universities, and equipment manufacturers to experiment with advanced architectures that were previously accessible mainly to large chip companies.

Lookup tables and the rise of reconfigurable computing

A popular technique for implementing mathematical functions in hardware is the lookup table (LUT). A LUT is a small memory element that stores the results of logical operations, according to “LUT-LLM: Efficient Large Language Model Inference with Memory-based Computations on FPGAs,” a paper selected for presentation next month at the 34th IEEE International Symposium on Field-Programmable Custom Computing Machines (FCCM).

Instead of repeatedly recalculating outcomes, the chip retrieves answers directly from memory. Cong compares the approach to consulting multiplication tables rather than recomputing the arithmetic each time.
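A minimal Python sketch of the idea follows, assuming a four-input LUT like the ones named on the Milestone plaque. The helper names `make_lut` and `lut_read` are illustrative, not part of any vendor tool.

```python
from itertools import product


def make_lut(func, n_inputs=4):
    """Precompute func for every input combination and store one bit per entry."""
    return [int(bool(func(*bits))) for bits in product((0, 1), repeat=n_inputs)]


def lut_read(table, *bits):
    """Evaluate the stored function by indexing memory; nothing is recomputed."""
    index = 0
    for b in bits:
        index = (index << 1) | (b & 1)  # first input is the most significant bit
    return table[index]


# "Program" the LUT once with an arbitrary four-input Boolean function...
and_or = make_lut(lambda a, b, c, d: (a and b) or (c and d))

# ...then every evaluation is a single memory read.
out = lut_read(and_or, 1, 1, 0, 0)
```

Reprogramming the block means writing 16 different bits into `table`; the read path stays the same, which is why one physical structure can stand in for any four-input logic function.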

Research led by Cong and others helped develop efficient methods for mapping digital circuits onto LUT-based architectures, shaping routing and layout strategies used in modern devices.

As transistor budgets expanded, FPGA vendors integrated memory blocks, digital signal-processing units, high-speed communication interfaces, cryptographic engines, and embedded processors, transforming the devices into versatile computing platforms.

Why the gate arrays are distinct from CPUs, GPUs, and ASICs

FPGAs coexist with other kinds of chips because each is optimized for different priorities. Central processing units excel at general computing. Graphics processing units, designed to perform many calculations simultaneously, dominate large parallel workloads such as AI training. ASICs provide maximum efficiency when designs remain stable and production volumes are high.

“FPGAs are not replacements for CPUs or GPUs,” Cong says. “They complement those processors in heterogeneous computing systems.”

Modern computing platforms increasingly combine multiple types of processors to balance flexibility, performance, and energy efficiency.

A Milestone for an idea, not just a device

This IEEE Milestone recognizes more than a successful semiconductor product. It also acknowledges a shift in how engineers innovate.

Reconfigurable hardware allows designers to test ideas quickly, refine architectures, and deploy systems while standards and markets evolve.

“Without FPGAs,” Cong says, “the pace of hardware innovation would likely be much slower.”

Four decades after the first FPGA appeared, the technology’s enduring legacy reflects Freeman’s insight: Hardware did not need to remain fixed. By accepting a small amount of unused silicon in exchange for adaptability, engineers transformed chips from static products into platforms for continuous experimentation—turning silicon itself into a medium engineers could rewrite.

Among those who attended the Milestone ceremony were 2025 IEEE President Kathleen Kramer; 2024 IEEE President Tom Coughlin; Avery Lu, chair of the IEEE Santa Clara Valley Section; and Brian Berg, history and milestones chair of IEEE Region 6. They joined AMD’s chief executive, Lisa Su, and Salil Raje, senior vice president and general manager of adaptive and embedded computing at AMD.

The IEEE Milestone plaque honoring the field-programmable gate array reads:

“The FPGA is an integrated circuit with user-programmable Boolean logic functions and interconnects. FPGA inventor Ross Freeman cofounded Xilinx to productize his 1984 invention, and in 1985 the XC2064 was introduced with 64 programmable 4-input logic functions. Xilinx’s FPGAs helped accelerate a dramatic industry shift wherein ‘fabless’ companies could use software tools to design hardware while engaging ‘foundry’ companies to handle the capital-intensive task of manufacturing the software-defined hardware.”

Administered by the IEEE History Center and supported by donors, the IEEE Milestone program recognizes outstanding technical developments worldwide that are at least 25 years old.

Check out Spectrum’s History of Technology channel to read more stories about key engineering achievements.
